PocketFlow介绍

PocketFlow是我最近在探索的一个LLM 框架,我觉得很有意思,因此推荐给大家。

这个框架最大的特点就是:"Pocket Flow,一个仅用 100 行代码实现的 LLM 框架"。

我很好奇,一个框架只有100行代码是怎么做到的,它又有什么魅力呢?

正如作者所言现在的LLM框架过于臃肿了!

在使用各种框架的过程中,你可能会有如下的感觉:

- 臃肿的抽象:正如 Octomind 的工程团队所解释的:"LangChain 在最初对我们简单的功能需求与它的使用假设相匹配时很有帮助。但其高级抽象很快使我们的代码更难以理解并令人沮丧地难以维护。"这些框架通过不必要的复杂性隐藏了简单功能。

- 实现噩梦:除了抽象之外,这些框架还给开发者带来了依赖项臃肿、版本冲突和不断变化的接口的负担。开发者经常抱怨:"它不稳定,接口不断变化,文档经常过时。"另一个开发者开玩笑说:"在读这句话的时间内,LangChain 已经弃用了 4 个类而没有更新文档。"

PocketFlow作者开始思考这个问题:"我们真的需要这么多的包装器吗?如果我们去掉一切会怎样?什么是真正最小且可行的?"

PocketFlow作者在过去一年从零开始构建 LLM 应用程序后,有了一个顿悟:在所有复杂性之下,LLM 系统本质上只是简单的有向图。通过去除不必要的层,他创建了 Pocket Flow------一个没有任何冗余、没有任何依赖、没有任何供应商锁定的框架,全部代码仅 100 行。

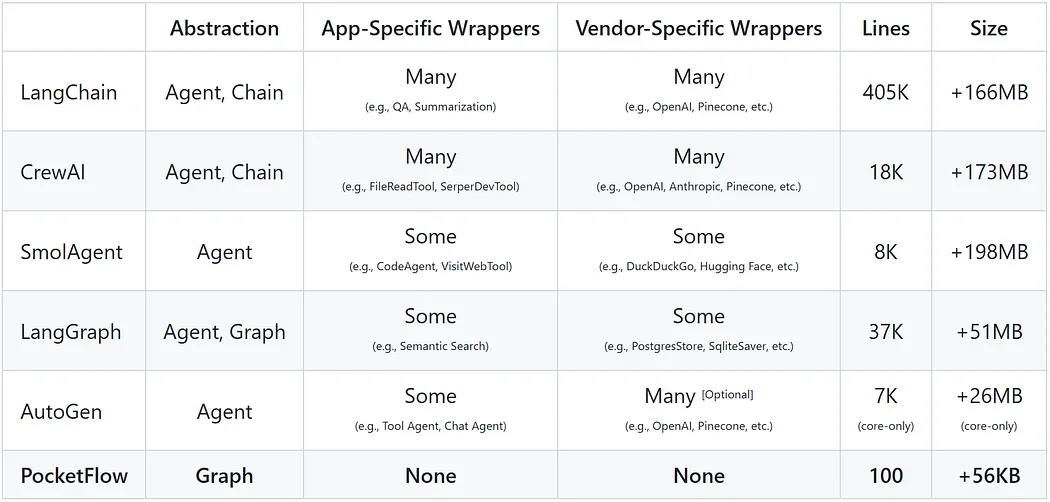

AI 系统框架的抽象、应用特定封装、供应商特定封装、代码行数和大小的比较。

来源:https://pocketflow.substack.com/p/i-built-an-llm-framework-in-just

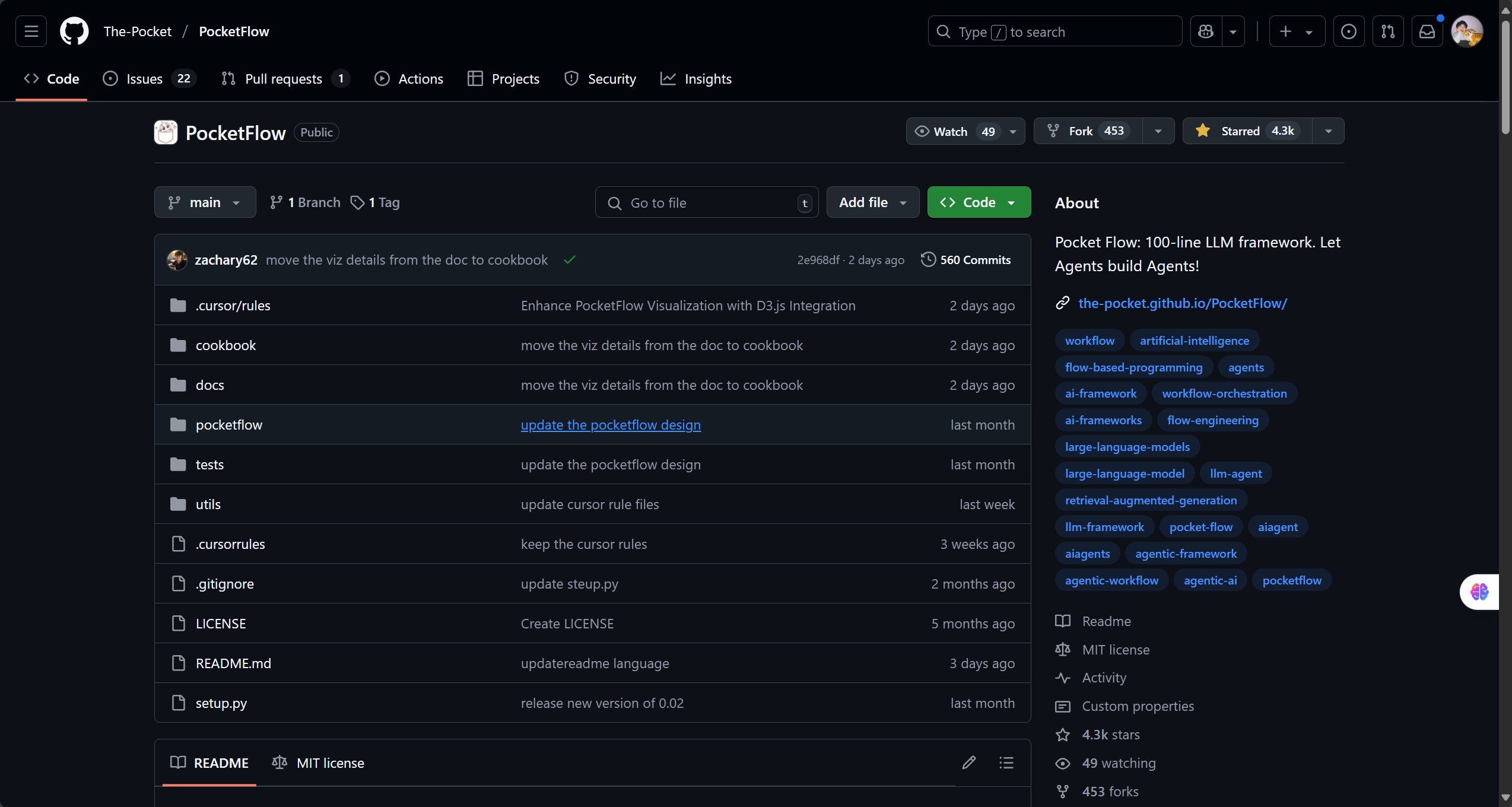

GitHub地址:https://github.com/The-Pocket/PocketFlow

PocketFlow的构建块

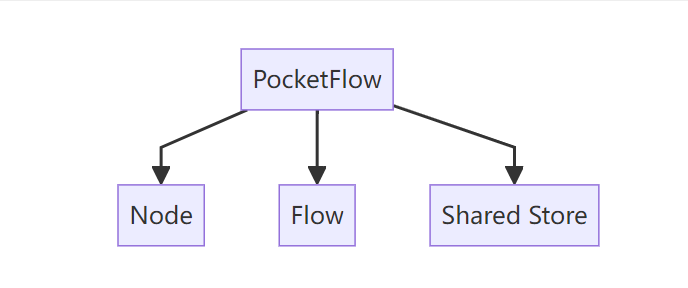

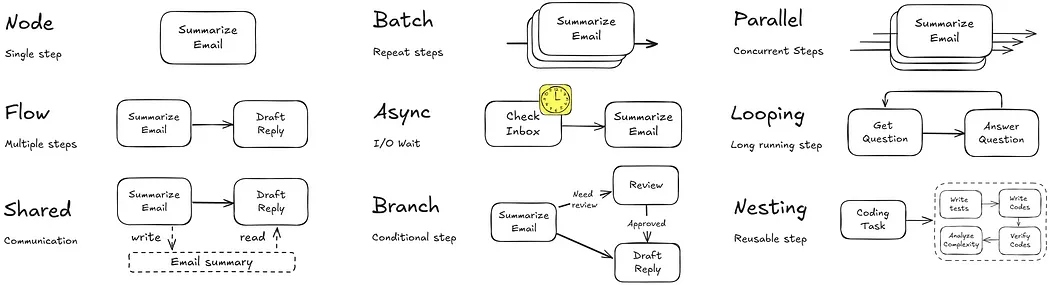

flowchart TD id1[PocketFlow] -->b[Node] & c[Flow] & D[Shared Store]

理解PocketFlow需要理解Node、Flow与Shared Store这三个基本的概念。

想象 Pocket Flow 就像一个井然有序的厨房:

- Node就像烹饪站(切菜、烹饪、摆盘)

- Flow就像食谱,指示下一步访问哪个站台。

- Shared Store是所有工作站都能看到原料的台面。

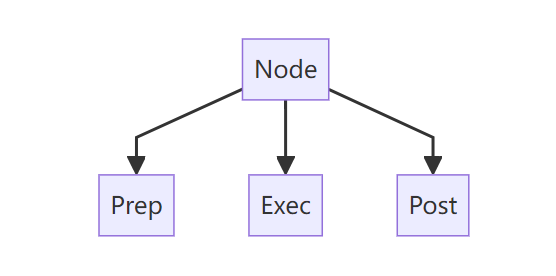

在我们的厨房(代理系统),每个站点(Node)执行三个简单的操作:

- Prep: 从共享存储中获取你需要的东西(收集原料)

- Exec: 执行你的专门任务(烹饪原料)

- Post: 将结果返回到共享存储并确定下一步行动(上菜并决定下一步做什么)

flowchart TD id1[Node] -->b[Prep] & c[Exec] & D[Post]

食谱 (Flow) 依据条件 (Orch) 指导执行:

- "如果蔬菜被切碎,前往烹饪站"

- "如果饭菜做好了,移到装盘站"

PocketFlow还支持批处理、异步执行和并行处理,适用于节点和流程。就是这样!这就是构建LLM应用程序所需的一切。没有不必要的抽象,没有复杂的架构------只有简单的构建块,可以组合成强大的系统。

Pocket Flow 核心图抽象

来源:https://pocketflow.substack.com/p/i-built-an-llm-framework-in-just

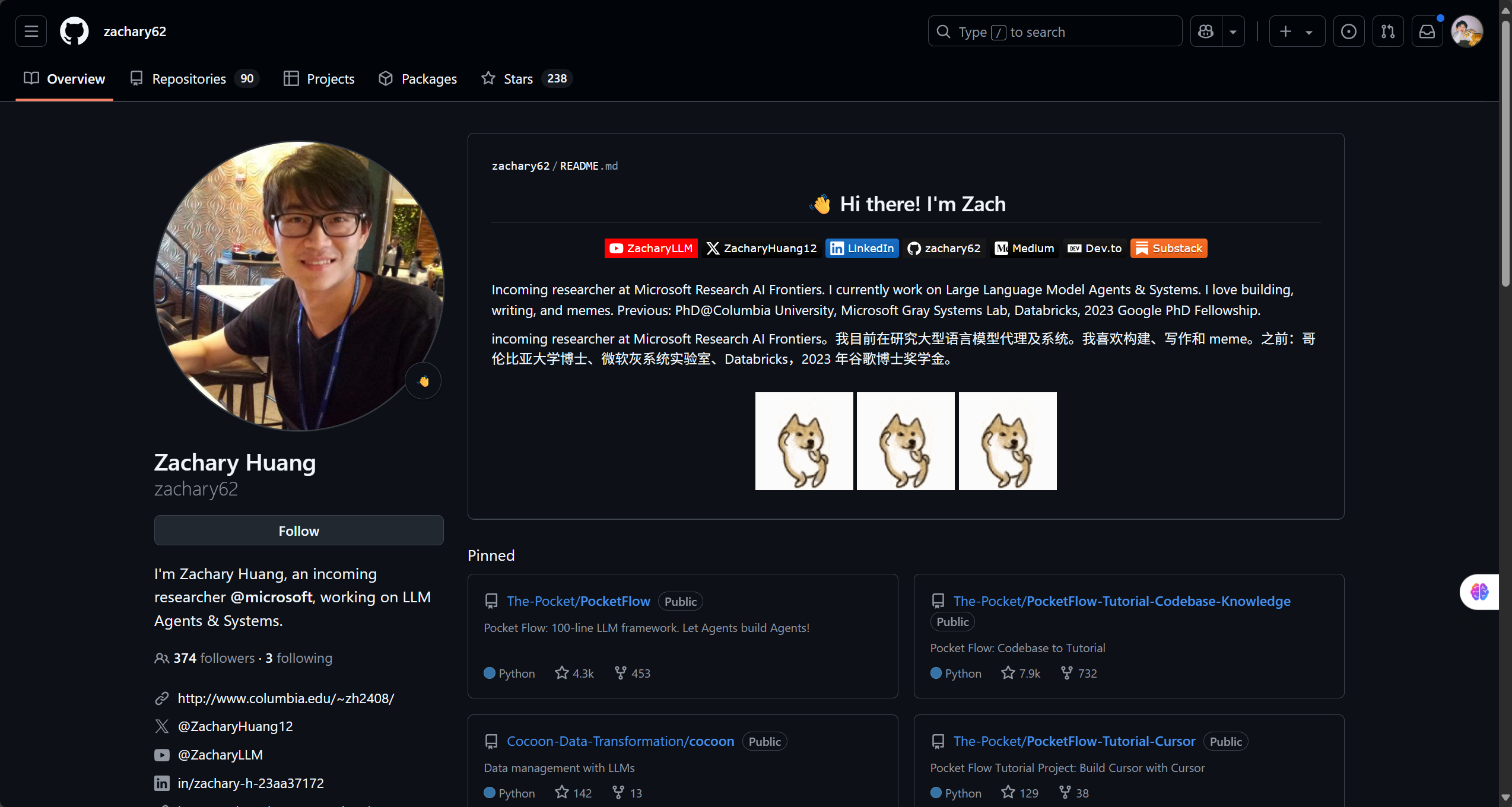

PocketFlow作者介绍

Zachary Huang:即将加入微软研究院AI前沿研究。目前从事大规模语言模型代理和系统的研究。喜欢构建、写作和制作梗图。之前经历:哥伦比亚大学博士,微软Gray Systems Lab,Databricks,2023年谷歌博士奖学金。

大佬不仅代码厉害还喜欢写通俗易懂的文章,最近看完了大佬的所有文章,感谢大佬的贡献,感兴趣的朋友也可以去看看。

地址:https://pocketflow.substack.com

PocketFlow实践

直接上手学习大佬提供的cookbook即可。

这里就演示一下几个入门的demo。

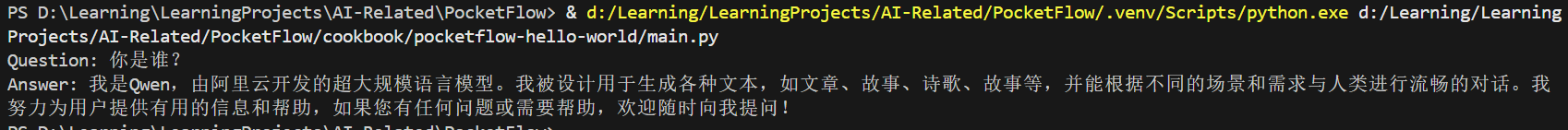

pocketflow-hello-world

定义Node与Flow:

python

from pocketflow import Node, Flow

from utils.call_llm import call_llm

# An example node and flow

# Please replace this with your own node and flow

class AnswerNode(Node):

def prep(self, shared):

# Read question from shared

return shared["question"]

def exec(self, question):

return call_llm(question)

def post(self, shared, prep_res, exec_res):

# Store the answer in shared

shared["answer"] = exec_res

answer_node = AnswerNode()

qa_flow = Flow(start=answer_node)主脚本写了Shared Store:

python

from flow import qa_flow

# Example main function

# Please replace this with your own main function

def main():

shared = {

"question": "你是谁?",

"answer": None

}

qa_flow.run(shared)

print("Question:", shared["question"])

print("Answer:", shared["answer"])

if __name__ == "__main__":

main()call_llm可以改成这样:

python

from openai import OpenAI

import os

def call_llm(prompt):

client = OpenAI(api_key="your api key",

base_url="https://api.siliconflow.cn/v1")

response = client.chat.completions.create(

model="Qwen/Qwen2.5-72B-Instruct",

messages=[{"role": "user", "content": prompt}],

temperature=0.7

)

return response.choices[0].message.content

if __name__ == "__main__":

# Test the LLM call

messages = [{"role": "user", "content": "In a few words, what's the meaning of life?"}]

response = call_llm(messages)

print(f"Prompt: {messages[0]['content']}")

print(f"Response: {response}")效果:

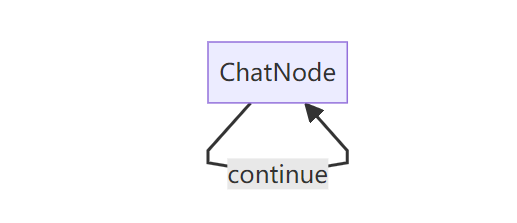

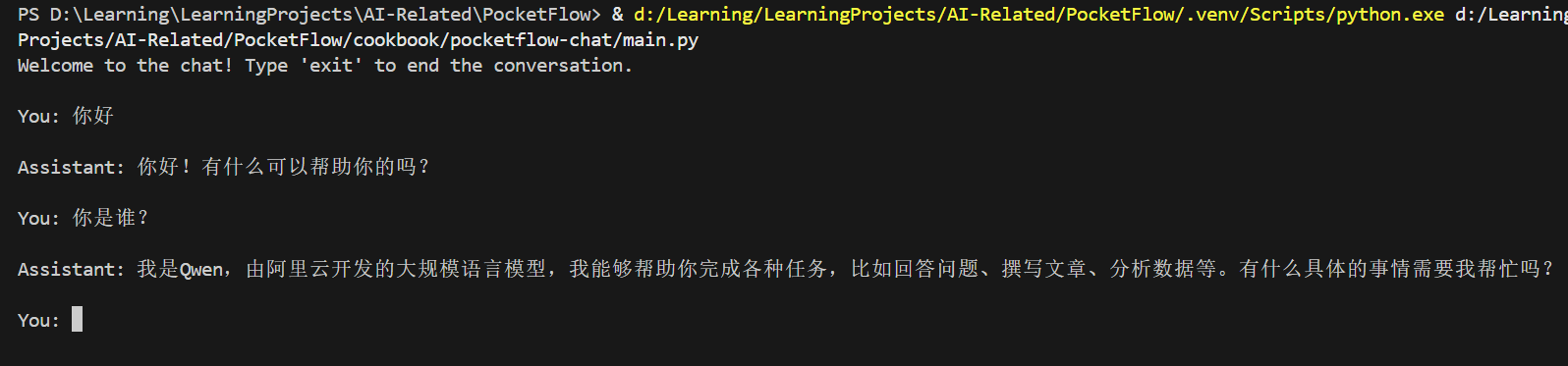

pocketflow-chat

flowchart LR chat[ChatNode] -->|continue| chat

python

from pocketflow import Node, Flow

from utils import call_llm

class ChatNode(Node):

def prep(self, shared):

# Initialize messages if this is the first run

if "messages" not in shared:

shared["messages"] = []

print("Welcome to the chat! Type 'exit' to end the conversation.")

# Get user input

user_input = input("\nYou: ")

# Check if user wants to exit

if user_input.lower() == 'exit':

return None

# Add user message to history

shared["messages"].append({"role": "user", "content": user_input})

# Return all messages for the LLM

return shared["messages"]

def exec(self, messages):

if messages is None:

return None

# Call LLM with the entire conversation history

response = call_llm(messages)

return response

def post(self, shared, prep_res, exec_res):

if prep_res is None or exec_res is None:

print("\nGoodbye!")

return None # End the conversation

# Print the assistant's response

print(f"\nAssistant: {exec_res}")

# Add assistant message to history

shared["messages"].append({"role": "assistant", "content": exec_res})

# Loop back to continue the conversation

return "continue"

# Create the flow with self-loop

chat_node = ChatNode()

chat_node - "continue" >> chat_node # Loop back to continue conversation

flow = Flow(start=chat_node)

# Start the chat

if __name__ == "__main__":

shared = {}

flow.run(shared)效果:

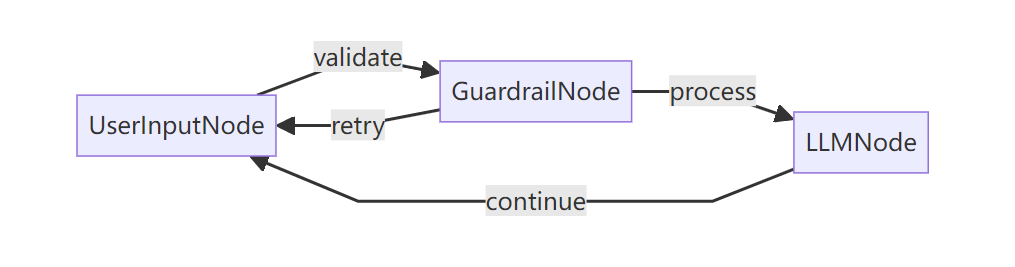

pocketflow-chat-guardrail

flowchart LR user[UserInputNode] -->|validate| guardrail[GuardrailNode] guardrail -->|retry| user guardrail -->|process| llm[LLMNode] llm -->|continue| user

- 一个

UserInputNode,其exec方法收集用户输入 - 一个

GuardrailNode,用于验证查询是否与旅行相关,使用:- 基本验证检查(空输入、过短)

- 基于 LLM 的验证,以确定查询是否与旅行相关

- 一个

LLMNode,使用带旅行顾问系统提示的LLM处理有效的旅行查询 - 流连接,在处理之前通过验证路由输入,并处理与旅行无关查询的重复尝试

python

from pocketflow import Node, Flow

from utils import call_llm

class UserInputNode(Node):

def prep(self, shared):

# Initialize messages if this is the first run

if "messages" not in shared:

shared["messages"] = []

print("Welcome to the Travel Advisor Chat! Type 'exit' to end the conversation.")

return None

def exec(self, _):

# Get user input

user_input = input("\nYou: ")

return user_input

def post(self, shared, prep_res, exec_res):

user_input = exec_res

# Check if user wants to exit

if user_input and user_input.lower() == 'exit':

print("\nGoodbye! Safe travels!")

return None # End the conversation

# Store user input in shared

shared["user_input"] = user_input

# Move to guardrail validation

return "validate"

class GuardrailNode(Node):

def prep(self, shared):

# Get the user input from shared data

user_input = shared.get("user_input", "")

return user_input

def exec(self, user_input):

# Basic validation checks

if not user_input or user_input.strip() == "":

return False, "Your query is empty. Please provide a travel-related question."

if len(user_input.strip()) < 3:

return False, "Your query is too short. Please provide more details about your travel question."

# LLM-based validation for travel topics

prompt = f"""

Evaluate if the following user query is related to travel advice, destinations, planning, or other travel topics.

The chat should ONLY answer travel-related questions and reject any off-topic, harmful, or inappropriate queries.

User query: {user_input}

Return your evaluation in YAML format:

```yaml

valid: true/false

reason: [Explain why the query is valid or invalid]

```"""

# Call LLM with the validation prompt

messages = [{"role": "user", "content": prompt}]

response = call_llm(messages)

# Extract YAML content

yaml_content = response.split("```yaml")[1].split("```")[0].strip() if "```yaml" in response else response

import yaml

result = yaml.safe_load(yaml_content)

assert result is not None, "Error: Invalid YAML format"

assert "valid" in result and "reason" in result, "Error: Invalid YAML format"

is_valid = result.get("valid", False)

reason = result.get("reason", "Missing reason in YAML response")

return is_valid, reason

def post(self, shared, prep_res, exec_res):

is_valid, message = exec_res

if not is_valid:

# Display error message to user

print(f"\nTravel Advisor: {message}")

# Skip LLM call and go back to user input

return "retry"

# Valid input, add to message history

shared["messages"].append({"role": "user", "content": shared["user_input"]})

# Proceed to LLM processing

return "process"

class LLMNode(Node):

def prep(self, shared):

# Add system message if not present

if not any(msg.get("role") == "system" for msg in shared["messages"]):

shared["messages"].insert(0, {

"role": "system",

"content": "You are a helpful travel advisor that provides information about destinations, travel planning, accommodations, transportation, activities, and other travel-related topics. Only respond to travel-related queries and keep responses informative and friendly. Your response are concise in 100 words."

})

# Return all messages for the LLM

return shared["messages"]

def exec(self, messages):

# Call LLM with the entire conversation history

response = call_llm(messages)

return response

def post(self, shared, prep_res, exec_res):

# Print the assistant's response

print(f"\nTravel Advisor: {exec_res}")

# Add assistant message to history

shared["messages"].append({"role": "assistant", "content": exec_res})

# Loop back to continue the conversation

return "continue"

# Create the flow with nodes and connections

user_input_node = UserInputNode()

guardrail_node = GuardrailNode()

llm_node = LLMNode()

# Create flow connections

user_input_node - "validate" >> guardrail_node

guardrail_node - "retry" >> user_input_node # Loop back if input is invalid

guardrail_node - "process" >> llm_node

llm_node - "continue" >> user_input_node # Continue conversation

flow = Flow(start=user_input_node)

# Start the chat

if __name__ == "__main__":

shared = {}

flow.run(shared)效果:

最后

PocketFlow还有很多有趣的例子,感兴趣的朋友可以自己去试试!!

但是说实话PocketFlow的"易用性"还是不足的,没法像很多框架那样开箱即用,还是需要自己写很多代码的,但也就是它的小巧给了它很大的灵活性,开发者可以根据自己的想法灵活地去写程序。