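Contents

- 1. System environment

- 2. Preface

- 3. Overview of secure container isolation

- 4. Introduction to gVisor

- 5. Introduction to container runtimes

- 6. Weaknesses of Docker containers

- 7. Configuring Docker to use gVisor as its runtime

- [7.1 Installing Docker](#7.1 Installing Docker)

- [7.2 Upgrading the system kernel](#7.2 Upgrading the system kernel)

- [7.3 Installing gVisor](#7.3 Installing gVisor)

- [7.4 Making gVisor the default Docker runtime](#7.4 Making gVisor the default Docker runtime)

- [7.5 Creating a container in Docker with gVisor as the runtime](#7.5 Creating a container in Docker with gVisor as the runtime)

- 8. Configuring containerd to use gVisor as its runtime

- [8.1 Installing containerd](#8.1 Installing containerd)

- [8.2 Installing gVisor](#8.2 Installing gVisor)

- [8.3 Configuring containerd to support gVisor](#8.3 Configuring containerd to support gVisor)

- [8.4 Creating a container in containerd with gVisor as the runtime](#8.4 Creating a container in containerd with gVisor as the runtime)

- 9. Using containerd as the high-level runtime and gVisor as the low-level runtime in Kubernetes

- [9.1 Joining the ubuntuk8sclient node to the Kubernetes cluster](#9.1 Joining the ubuntuk8sclient node to the Kubernetes cluster)

- [9.2 Configuring the kubelet to support gVisor](#9.2 Configuring the kubelet to support gVisor)

- [9.3 Creating a RuntimeClass](#9.3 Creating a RuntimeClass)

- [9.4 Creating a pod with gVisor](#9.4 Creating a pod with gVisor)

- 10. Summary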

1. System environment

This article is based on Kubernetes 1.22.2 and Ubuntu 18.04.

| Server OS | Docker version | Kubernetes (k8s) cluster version | gVisor version | containerd version | CPU architecture |
|---|---|---|---|---|---|
| Ubuntu 18.04.5 LTS | Docker version 20.10.14 | v1.22.2 | release-20220510.0 (spec 1.0.2-dev) | 1.6.4 | x86_64 |

Kubernetes cluster layout: k8scludes1 is the master node; k8scludes2 and k8scludes3 are worker nodes.

| Server | OS version | CPU architecture | Processes | Role |
|---|---|---|---|---|
| k8scludes1/192.168.110.128 | Ubuntu 18.04.5 LTS | x86_64 | docker,kube-apiserver,etcd,kube-scheduler,kube-controller-manager,kubelet,kube-proxy,coredns,calico | k8s master node |
| k8scludes2/192.168.110.129 | Ubuntu 18.04.5 LTS | x86_64 | docker,kubelet,kube-proxy,calico | k8s worker node |
| k8scludes3/192.168.110.130 | Ubuntu 18.04.5 LTS | x86_64 | docker,kubelet,kube-proxy,calico | k8s worker node |

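2. Preface

Container technology has greatly improved the efficiency of development and deployment, but container security remains an issue that cannot be ignored. Traditional Docker containers are convenient and fast, yet their isolation has weaknesses. This article introduces a more secure container runtime: gVisor.

Running containers in a sandbox on Kubernetes requires a working Kubernetes cluster. For installing and deploying a Kubernetes (k8s) cluster, see the blog post 《Ubuntu 安装部署Kubernetes(k8s)集群》 at https://www.cnblogs.com/renshengdezheli/p/17632858.html.

3. Overview of secure container isolation

A secure container is a runtime technology that gives a containerized application a complete operating system execution environment while isolating the application's execution from the host operating system. The application cannot access host resources directly, which provides extra protection between a container and its host and between containers.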

四.Gvisor简介

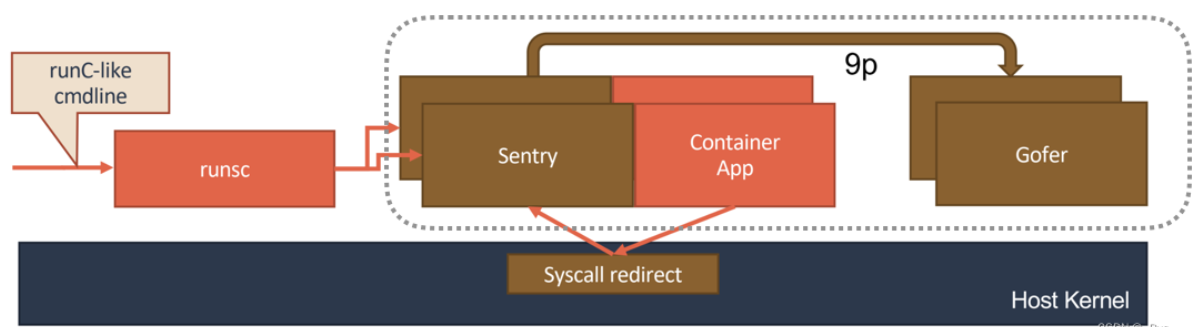

gVisor是由Google开发的一种轻量级的容器隔离技术。它通过在容器与主机操作系统之间插入一个虚拟化层来实现隔离。gVisor提供了一个类似于Linux内核的API,使得容器可以在一个更加受控的环境中运行。它使用了一种称为"Sandbox"的机制,将容器的系统调用转换为对gVisor的API调用,然后再由gVisor转发给宿主操作系统。这种方式可以有效地隔离容器与主机操作系统之间的资源访问,提高了容器的安全性。

gVisor的虚拟化层引入了一定的性能开销,但是相对于传统的虚拟机来说,它的性能损失较小。根据Google的测试数据,gVisor的性能损失在10%左右。这主要是因为gVisor使用了一些优化技术,如JIT编译器和缓存机制,来减少虚拟化层的开销。gVisor还支持多核并发,可以在多核系统上实现更好的性能。

gVisor 工作的核心,在于它为应用进程、也就是用户容器,启动了一个名叫 Sentry 的进程。 而 Sentry 进程的主要职责,就是提供一个传统的操作系统内核的能力,即:运行用户程序,执行系统调用。所以说,Sentry 并不是使用 Go 语言重新实现了一个完整的 Linux 内核,而只是一个对应用进程"冒充"内核的系统组件。

在这种设计思想下,我们就不难理解,Sentry 其实需要自己实现一个完整的 Linux 内核网络栈,以便处理应用进程的通信请求。然后,把封装好的二层帧直接发送给 Kubernetes 设置的 Pod 的 Network Namespace 即可。

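5. Introduction to container runtimes

In container technology, a runtime is the software that manages the container life cycle. Depending on how much functionality they provide, container runtimes are divided into low-level runtimes and high-level runtimes.

A low-level runtime is a container runtime tool that interacts directly with the operating system kernel. It is responsible for loading container images, creating, starting, and stopping containers, and managing the processes inside them. Low-level runtimes mainly provide:

- Container image management: downloading, storing, and updating container images.

- Container life cycle management: creating, running, pausing, resuming, stopping, and deleting containers.

- Process and resource isolation: isolating and allocating resources through the operating system's control groups (cgroups) and namespaces.

- Network configuration: giving containers network interfaces and IP addresses, and a way to communicate with each other.

Low-level runtimes include runc, lxc, gVisor, Kata, and others.

A high-level runtime is a container management tool that sits on top of a low-level runtime and offers higher-level abstractions and more management features. These typically include:

- Container orchestration: automating the deployment, scaling, and management of containers.

- Service discovery and load balancing: automatically configuring discovery between services and distributing traffic.

- Storage orchestration: managing persistent data and storage volumes for containers.

- Resource monitoring and log management: collecting monitoring data and logs from running containers for analysis.

High-level runtimes include Docker, containerd, Podman, rkt, and CRI-O. A high-level runtime calls a low-level runtime.

Kubernetes does not manage containers itself; it calls a high-level runtime to do so, and it reaches each high-level runtime through a shim: dockershim for Docker, a containerd shim for containerd, and so on. dockershim was built into the kubelet and was removed in Kubernetes 1.24.

In practice, low-level and high-level runtimes work together. The low-level runtime handles the underlying container management, and the high-level runtime builds more complex logic and automated management on top of it.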

6. Weaknesses of Docker containers

Check Docker's default runtime; it is currently runc.

shell

root@k8scludes1:~# docker info | grep Runtime

Runtimes: runc io.containerd.runc.v2 io.containerd.runtime.v1.linux

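Default Runtime: runc

At this point there is no nginx process on the host. Consider the following question: if an nginx container is started with runc on a host that runs no nginx process of its own, can the nginx process inside the container be seen from the host?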

shell

root@k8scludes1:~# ps -ef | grep nginx | grep -v grep

An nginx image is already available locally.

shell

root@k8scludes1:~# docker images | grep nginx

nginx latest 605c77e624dd 5 months ago 141MB

Create a container from the nginx image. For details on creating containers, see the blog post 《一文搞懂docker容器基础:docker镜像管理,docker容器管理》.

shell

root@k8scludes1:~# docker run -dit --name=nginxrongqi --restart=always nginx

7844b98cf01cc1b6ba05c575d284146c47cb3fb66e1fa61d6eeac696f0dbc1c3

root@k8scludes1:~# docker ps | grep nginx

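7844b98cf01c nginx "/docker-entrypoint...." 8 seconds ago Up 6 seconds 80/tcp nginxrongqi

Check for nginx processes on the host again: they are now visible from the host.

Docker's default runtime is runc. Containers created by runc share the host kernel, and their processes are ordinary host processes that are exposed to the host. If a container has a vulnerability, an attacker may be able to exploit it to compromise the host.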

shell

root@k8scludes1:~# ps -ef | grep nginx

root 45384 45337 0 15:33 pts/0 00:00:00 nginx: master process nginx -g daemon off;

systemd+ 45465 45384 0 15:33 pts/0 00:00:00 nginx: worker process

systemd+ 45466 45384 0 15:33 pts/0 00:00:00 nginx: worker process

systemd+ 45467 45384 0 15:33 pts/0 00:00:00 nginx: worker process

systemd+ 45468 45384 0 15:33 pts/0 00:00:00 nginx: worker process

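root 46215 6612 0 15:34 pts/0 00:00:00 grep --color=auto nginx

When a container runs in a sandbox, its processes are no longer visible on the host. runc does not support sandboxed execution, so the high-level runtime has to be configured to call a different low-level runtime in order to run containers in a sandbox.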
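The host-side check shown above can be scripted. The following is a minimal sketch rather than part of the original setup: `count_named_procs` is a hypothetical helper that reads `ps -ef` output on standard input and counts the processes whose command line contains a given name, skipping the `grep` process itself. It assumes the default `ps -ef` column layout, in which the command starts at the eighth field.

```shell
#!/bin/sh
# count_named_procs NAME
# Hypothetical helper: reads `ps -ef` output on stdin and prints how many
# processes have NAME in their command line, ignoring any `grep` process.
count_named_procs() {
  awk -v name="$1" '
    {
      # Rebuild the command line from field 8 onwards (the CMD column).
      cmd = ""
      for (i = 8; i <= NF; i++) cmd = cmd (i > 8 ? " " : "") $i
    }
    $8 != "grep" && index(cmd, name) > 0 { n++ }
    END { print n + 0 }'
}

# Example use on a real host:
#   ps -ef | count_named_procs nginx
```

With runc the count is non-zero while the container is running; a count of 0 is what the sandboxed case looks like from the host.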

7. Configuring Docker to use gVisor as its runtime

7.1 Installing Docker

Docker is installed on the client machine etcd2 (CentOS).

shell

[root@etcd2 ~]# yum -y install docker-ce

Enable Docker at boot and start it now.

shell

[root@etcd2 ~]# systemctl enable docker --now

Created symlink from /etc/systemd/system/multi-user.target.wants/docker.service to /usr/lib/systemd/system/docker.service.

[root@etcd2 ~]# systemctl status docker

● docker.service - Docker Application Container Engine

Loaded: loaded (/usr/lib/systemd/system/docker.service; enabled; vendor preset: disabled)

Active: active (running) since 二 2022-06-07 11:07:18 CST; 7s ago

Docs: https://docs.docker.com

Main PID: 1231 (dockerd)

Memory: 36.9M

CGroup: /system.slice/docker.service

└─1231 /usr/bin/dockerd -H fd:// --containerd=/run/containerd/containerd.sock

Check the Docker version.

shell

[root@etcd2 ~]# docker --version

Docker version 20.10.12, build e91ed57

Configure a Docker registry mirror.

shell

[root@etcd2 ~]# vim /etc/docker/daemon.json

[root@etcd2 ~]# cat /etc/docker/daemon.json

{

"registry-mirrors": ["https://frz7i079.mirror.aliyuncs.com"]

}

Restart Docker.

shell

[root@etcd2 ~]# systemctl restart docker

Make bridged traffic pass through iptables and enable IP forwarding.

shell

[root@etcd2 ~]# vim /etc/sysctl.d/k8s.conf

[root@etcd2 ~]# cat /etc/sysctl.d/k8s.conf

net.bridge.bridge-nf-call-ip6tables = 1

net.bridge.bridge-nf-call-iptables = 1

net.ipv4.ip_forward = 1

Apply the settings.

shell

[root@etcd2 ~]# sysctl -p /etc/sysctl.d/k8s.conf

net.bridge.bridge-nf-call-ip6tables = 1

net.bridge.bridge-nf-call-iptables = 1

net.ipv4.ip_forward = 1

Docker's default runtime is currently runc.

shell

[root@etcd2 ~]# docker info | grep -i runtime

Runtimes: runc io.containerd.runc.v2 io.containerd.runtime.v1.linux

Default Runtime: runc

The following sections configure Docker to use gVisor as its runtime.

7.2 Upgrading the system kernel

Check the operating system version.

shell

[root@etcd2 ~]# cat /etc/redhat-release

CentOS Linux release 7.4.1708 (Core)

Check the kernel version.

shell

[root@etcd2 ~]# uname -r

3.10.0-693.el7.x86_64

gVisor supports x86_64 and ARM64 and requires Linux 4.14.77 or later. The current kernel is only 3.10.0, so it has to be upgraded. The kernel can be upgraded offline or online; both methods are described in detail in the blog post 《centos7 离线升级/在线升级操作系统内核》.
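The version requirement above can be checked before going any further. This is a minimal sketch under stated assumptions: `kernel_at_least` is a hypothetical helper name, and the comparison relies on `sort -V` (version sort) from GNU coreutils being available.

```shell
#!/bin/sh
# kernel_at_least VERSION MINIMUM
# Hypothetical helper: succeeds when VERSION (as printed by `uname -r`)
# is greater than or equal to MINIMUM. Assumes GNU `sort -V`.
kernel_at_least() {
  ver=${1%%-*}   # drop the distribution suffix, e.g. "-693.el7.x86_64"
  min=$2
  # After a version sort, MINIMUM must be the smaller (or equal) of the two.
  lowest=$(printf '%s\n%s\n' "$min" "$ver" | sort -V | head -n 1)
  [ "$lowest" = "$min" ]
}

# Example use on a real host:
#   kernel_at_least "$(uname -r)" 4.14.77 && echo "kernel is new enough for gVisor"
```

For the two machines in this article, 3.10.0-693.el7.x86_64 fails the check and 4.15.0-112-generic passes it.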

This article uses the offline method.

First update the installed packages.

shell

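[root@etcd2 ~]# yum -y update

Enable the ELRepo repository. ELRepo is a community repository for Enterprise Linux that supports Red Hat Enterprise Linux (RHEL) and other RHEL-based distributions (CentOS, Scientific Linux, Fedora, and so on). It focuses on hardware-related packages, including file system drivers, graphics drivers, network drivers, sound drivers, and webcam drivers.

Import the ELRepo public key.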

shell

[root@etcd2 ~]# rpm --import https://www.elrepo.org/RPM-GPG-KEY-elrepo.org

Install the ELRepo yum repository.

shell

[root@etcd2 ~]# rpm -Uvh http://www.elrepo.org/elrepo-release-7.0-3.el7.elrepo.noarch.rpm

Download the kernel package from ELRepo, once the public key has been imported and the repository installed as above.

shell

[root@etcd2 ~]# wget https://elrepo.org/linux/kernel/el7/x86_64/RPMS/kernel-lt-5.4.160-1.el7.elrepo.x86_64.rpm

The package can also be downloaded directly from the Tsinghua mirror.

shell

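[root@etcd2 ~]# wget https://mirrors.tuna.tsinghua.edu.cn/elrepo/kernel/el7/x86_64/RPMS/kernel-lt-5.4.197-1.el7.elrepo.x86_64.rpm --no-check-certificate

The kernel package is now downloaded.

kernel-ml is the mainline build and always tracks the latest mainline kernel. kernel-lt is the long-term support build and has a longer support period. Choose the ml package for the newest version, or the lt package for stability and longer support.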

shell

[root@etcd2 ~]# ll -h kernel-lt-5.4.197-1.el7.elrepo.x86_64.rpm*

-rw-r--r-- 1 root root 51M 6月 5 19:47 kernel-lt-5.4.197-1.el7.elrepo.x86_64.rpm

Install the kernel package.

shell

[root@etcd2 ~]# rpm -ivh kernel-lt-5.4.197-1.el7.elrepo.x86_64.rpm

警告:kernel-lt-5.4.197-1.el7.elrepo.x86_64.rpm: 头V4 DSA/SHA256 Signature, 密钥 ID baadae52: NOKEY

准备中... ################################# [100%]

正在升级/安装...

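1:kernel-lt-5.4.197-1.el7.elrepo ################################# [100%]

After the new kernel is installed, the default boot entry has to be changed. A newly installed kernel is normally inserted at the top of the GRUB menu, at index 0, so set GRUB_DEFAULT=0, which makes the first entry on the GRUB menu the default kernel.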
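Because the index depends on how the menu happens to be ordered, it can be worth looking up the entry for a specific kernel instead of assuming it is 0; in the menu listing shown later in this section, entry 0 is a rescue entry and the regular 5.4.197 entry is at index 1. The sketch below is one way to do that lookup. `grub_entry_index` is a hypothetical helper, and it assumes the `menuentry '<title>'` line format that the listing command in this section also relies on.

```shell
#!/bin/sh
# grub_entry_index GRUB_CFG KERNEL_VERSION
# Hypothetical helper: prints the zero-based index of the first non-rescue
# menuentry in GRUB_CFG whose title contains KERNEL_VERSION.
# Exits with status 1 if no such entry exists.
grub_entry_index() {
  awk -F"'" -v ver="$2" '
    $1 == "menuentry " {
      if (index($2, ver) > 0 && tolower($2) !~ /rescue/) {
        print i + 0
        found = 1
        exit
      }
      i++
    }
    END { if (!found) exit 1 }' "$1"
}

# Example use on a real host (read-only):
#   grub_entry_index /boot/grub2/grub.cfg 5.4.197
```

The helper only reads the file; whether to pass the resulting index to GRUB_DEFAULT or grub2-set-default is left to the reader.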

The stock /etc/default/grub has GRUB_DEFAULT=saved.

shell

[root@etcd2 ~]# cat /etc/default/grub

GRUB_TIMEOUT=5

GRUB_DISTRIBUTOR="$(sed 's, release .*$,,g' /etc/system-release)"

GRUB_DEFAULT=saved

GRUB_DISABLE_SUBMENU=true

GRUB_TERMINAL_OUTPUT="gfxterm"

GRUB_CMDLINE_LINUX="rhgb quiet nomodeset"

GRUB_DISABLE_RECOVERY="true"

Change it to GRUB_DEFAULT=0.

shell

[root@etcd2 ~]# vim /etc/default/grub

[root@etcd2 ~]# cat /etc/default/grub

GRUB_TIMEOUT=5

GRUB_DISTRIBUTOR="$(sed 's, release .*$,,g' /etc/system-release)"

GRUB_DEFAULT=0

GRUB_DISABLE_SUBMENU=true

GRUB_TERMINAL_OUTPUT="gfxterm"

GRUB_CMDLINE_LINUX="rhgb quiet nomodeset"

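GRUB_DISABLE_RECOVERY="true"

Set the default boot entry. grub2-set-default 0 has the same intent as GRUB_DEFAULT=0 in /etc/default/grub; note that it records a saved entry, which GRUB only honors when GRUB_DEFAULT=saved.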

shell

[root@etcd2 ~]# grub2-set-default 0

List all boot entries.

shell

[root@etcd2 ~]# awk -F\' '$1=="menuentry " {print i++ " : " $2}' /boot/grub2/grub.cfg

0 : CentOS Linux 7 Rescue 12667e2174a8483e915fd89a3bc359fc (5.4.197-1.el7.elrepo.x86_64)

1 : CentOS Linux (5.4.197-1.el7.elrepo.x86_64) 7 (Core)

2 : CentOS Linux (3.10.0-693.el7.x86_64) 7 (Core)

3 : CentOS Linux (0-rescue-80c608ceab5342779ba1adc2ac29c213) 7 (Core)

Regenerate the GRUB configuration file.

shell

[root@etcd2 ~]# vim /boot/grub2/grub.cfg

[root@etcd2 ~]# grub2-mkconfig -o /boot/grub2/grub.cfg

Generating grub configuration file ...

Found linux image: /boot/vmlinuz-5.4.197-1.el7.elrepo.x86_64

Found initrd image: /boot/initramfs-5.4.197-1.el7.elrepo.x86_64.img

Found linux image: /boot/vmlinuz-3.10.0-693.el7.x86_64

Found initrd image: /boot/initramfs-3.10.0-693.el7.x86_64.img

Found linux image: /boot/vmlinuz-0-rescue-12667e2174a8483e915fd89a3bc359fc

Found initrd image: /boot/initramfs-0-rescue-12667e2174a8483e915fd89a3bc359fc.img

Found linux image: /boot/vmlinuz-0-rescue-80c608ceab5342779ba1adc2ac29c213

Found initrd image: /boot/initramfs-0-rescue-80c608ceab5342779ba1adc2ac29c213.img

done

Reboot, then check the kernel version.

shell

[root@etcd2 ~]# reboot

After the reboot, the kernel has been upgraded successfully.

shell

[root@etcd2 ~]# uname -r

5.4.197-1.el7.elrepo.x86_64

[root@etcd2 ~]# uname -rs

Linux 5.4.197-1.el7.elrepo.x86_64

7.3 Installing gVisor

Check the CPU architecture.

shell

[root@etcd2 ~]# uname -m

x86_64

Download runsc and containerd-shim-runsc-v1 together with their checksum files, runsc.sha512 and containerd-shim-runsc-v1.sha512.

shell

[root@etcd2 ~]# wget https://storage.googleapis.com/gvisor/releases/release/latest/x86_64/runsc https://storage.googleapis.com/gvisor/releases/release/latest/x86_64/runsc.sha512 https://storage.googleapis.com/gvisor/releases/release/latest/x86_64/containerd-shim-runsc-v1 https://storage.googleapis.com/gvisor/releases/release/latest/x86_64/containerd-shim-runsc-v1.sha512

[root@etcd2 ~]# ll -h runsc* containerd-shim*

-rw-r--r-- 1 root root 25M 5月 17 00:22 containerd-shim-runsc-v1

-rw-r--r-- 1 root root 155 5月 17 00:22 containerd-shim-runsc-v1.sha512

-rw-r--r-- 1 root root 38M 5月 17 00:22 runsc

-rw-r--r-- 1 root root 136 5月 17 00:22 runsc.sha512

Use sha512sum to verify that the files are intact.

shell

[root@etcd2 ~]# sha512sum -c runsc.sha512 -c containerd-shim-runsc-v1.sha512

runsc: 确定

containerd-shim-runsc-v1: 确定

[root@etcd2 ~]# cat *sha512

f24834bbd4d14d0d0827e31276ff74a1e08b7ab366c4a30fe9c30d656c1ec5cbfc2544fb06698b4749791e0c6f80e6d16ec746963ff6ecebc246dc6e5b2f34ba containerd-shim-runsc-v1

e5bc1c46d021246a69174aae71be93ff49661ff08eb6a957f7855f36076b44193765c966608d11a99f14542612438634329536d88fccb4b12bdd9bf2af20557f runsc

Make the binaries executable.

shell

[root@etcd2 ~]# chmod a+rx runsc containerd-shim-runsc-v1

Move the files to /usr/local/bin.

shell

[root@etcd2 ~]# mv runsc containerd-shim-runsc-v1 /usr/local/bin

Install gVisor as a Docker runtime.

shell

[root@etcd2 ~]# /usr/local/bin/runsc install

2022/06/07 13:04:16 Added runtime "runsc" with arguments [] to "/etc/docker/daemon.json".

After this step, /etc/docker/daemon.json contains a new entry under "runtimes" named runsc, with "path": "/usr/local/bin/runsc".

Note: the runtime name runsc under "runtimes" in /etc/docker/daemon.json can be changed to another name, for example gvisor.

json

[root@etcd2 ~]# cat /etc/docker/daemon.json

{

"registry-mirrors": [

"https://frz7i079.mirror.aliyuncs.com"

],

"runtimes": {

"runsc": {

"path": "/usr/local/bin/runsc"

}

}

}

Reload the configuration and restart Docker.

shell

[root@etcd2 ~]# systemctl daemon-reload ;systemctl restart docker

Check the runtimes. Runtimes now includes runsc, which means Docker supports gVisor as a runtime.

shell

[root@etcd2 ~]# docker info | grep -i runtime

Runtimes: io.containerd.runc.v2 io.containerd.runtime.v1.linux runc runsc

Default Runtime: runc

Check the runsc version.

shell

[root@etcd2 ~]# runsc --version

runsc version release-20220510.0

spec: 1.0.2-dev

7.4 Making gVisor the default Docker runtime

Check the Docker service status.

shell

[root@etcd2 ~]# systemctl status docker

● docker.service - Docker Application Container Engine

Loaded: loaded (/usr/lib/systemd/system/docker.service; enabled; vendor preset: disabled)

Active: active (running) since 二 2022-06-07 17:02:18 CST; 12min ago

Docs: https://docs.docker.com

Main PID: 1109 (dockerd)

Memory: 130.7M

CGroup: /system.slice/docker.service

└─1109 /usr/bin/dockerd -H fd:// --containerd=/run/containerd/containerd.sock

Docker is started with the following arguments:

shell

[root@etcd2 ~]# cat /usr/lib/systemd/system/docker.service | grep ExecStart

ExecStart=/usr/bin/dockerd -H fd:// --containerd=/run/containerd/containerd.sock

According to the dockerd help, --default-runtime sets Docker's Default Runtime.

shell

[root@etcd2 ~]# dockerd --help | grep default-runtime

--default-runtime string Default OCI runtime for containers (default "runc")

Now change the ExecStart line so that Docker uses runsc as its default runtime.

shell

[root@etcd2 ~]# vim /usr/lib/systemd/system/docker.service

#--default-runtime runsc sets Docker's Default Runtime to gVisor

[root@etcd2 ~]# cat /usr/lib/systemd/system/docker.service | grep ExecStart

ExecStart=/usr/bin/dockerd --default-runtime runsc -H fd:// --containerd=/run/containerd/containerd.sock

Reload the configuration and restart Docker.

shell

[root@etcd2 ~]# systemctl daemon-reload ; systemctl restart docker

Docker's Default Runtime is now gVisor.

shell

[root@etcd2 ~]# docker info | grep -i runtime

Runtimes: io.containerd.runc.v2 io.containerd.runtime.v1.linux runc runsc

Default Runtime: runsc

7.5 Creating a container in Docker with gVisor as the runtime

Pull the nginx image.

shell

[root@etcd2 ~]# docker pull hub.c.163.com/library/nginx:latest

latest: Pulling from library/nginx

5de4b4d551f8: Pull complete

d4b36a5e9443: Pull complete

0af1f0713557: Pull complete

Digest: sha256:f84932f738583e0169f94af9b2d5201be2dbacc1578de73b09a6dfaaa07801d6

Status: Downloaded newer image for hub.c.163.com/library/nginx:latest

hub.c.163.com/library/nginx:latest

[root@etcd2 ~]# docker images

REPOSITORY TAG IMAGE ID CREATED SIZE

hub.c.163.com/library/nginx latest 46102226f2fd 5 years ago 109MB

Create a container from the nginx image. With the configuration above it is created with gVisor (runsc) by default.

If gVisor is installed but Docker's Default Runtime is still runc, pass --runtime=runsc to create the container with gVisor, that is: docker run -dit --runtime=runsc --name=nginxweb --restart=always hub.c.163.com/library/nginx:latest.
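The choice between the two forms can be derived from `docker info`. The sketch below is illustrative only: `runsc_flag` is a hypothetical helper that reads `docker info` output on standard input, fails if runsc is not registered, and prints `--runtime=runsc` only when runsc is not already the default. It assumes the `Runtimes:` and `Default Runtime:` lines have the format shown in this article.

```shell
#!/bin/sh
# runsc_flag
# Hypothetical helper: reads `docker info` output on stdin.
#   - exits 1 if runsc is not listed under "Runtimes:"
#   - prints "--runtime=runsc" if the default runtime is not runsc
#   - prints nothing if runsc is already the default
runsc_flag() {
  awk '
    /^[[:space:]]*Runtimes:/ {
      for (i = 2; i <= NF; i++) if ($i == "runsc") have = 1
    }
    /^[[:space:]]*Default Runtime:/ { def = $3 }
    END {
      if (!have) exit 1
      if (def != "runsc") print "--runtime=runsc"
    }'
}

# Example use on a real host:
#   flag=$(docker info 2>/dev/null | runsc_flag) &&
#     docker run -dit $flag --name=nginxweb --restart=always hub.c.163.com/library/nginx:latest
```

The flag is left unquoted in the example on purpose, so that an empty result adds no argument to docker run.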

shell

[root@etcd2 ~]# docker run -dit --name=nginxweb --restart=always hub.c.163.com/library/nginx:latest

9a7b9091d0d07052ae972b480687e7a345ae22e0e4968e91133b1ad6ac1d5b3a

List the containers.

shell

[root@etcd2 ~]# docker ps

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

9a7b9091d0d0 hub.c.163.com/library/nginx:latest "nginx -g 'daemon of..." 3 minutes ago Up 3 minutes 80/tcp nginxweb

bc99f286802f quay.io/calico/node:v2.6.12 "start_runit" 3 months ago Up 19 seconds calico-node

gVisor runs the container in a sandbox, so the processes inside the container are not visible on the host.

shell

[root@etcd2 ~]# ps -ef | grep nginx

root 9031 2916 0 17:54 pts/1 00:00:00 grep --color=auto nginx

Remove the container.

shell

[root@etcd2 ~]# docker rm -f nginxweb

nginxweb

[root@etcd2 ~]# docker ps

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

bc99f286802f quay.io/calico/node:v2.6.12 "start_runit" 3 months ago Up 7 seconds calico-node

8. Configuring containerd to use gVisor as its runtime

8.1 Installing containerd

If you know Docker but are new to containerd, see the blog post 《在centos下使用containerd管理容器:5分钟从docker转型到containerd》, which covers it in detail.

containerd is installed on the client machine ubuntuk8sclient (Ubuntu).

Update the package index.

shell

root@ubuntuk8sclient:~# apt-get update

Install containerd.

shell

root@ubuntuk8sclient:~# apt-get -y install containerd.io cri-tools

Enable containerd at boot and start it now.

shell

root@ubuntuk8sclient:~# systemctl enable containerd --now

Check the containerd status.

shell

root@ubuntuk8sclient:~# systemctl is-active containerd

active

root@ubuntuk8sclient:~# systemctl status containerd

● containerd.service - containerd container runtime

Loaded: loaded (/lib/systemd/system/containerd.service; enabled; vendor preset: enabled)

Active: active (running) since Sat 2022-06-04 15:54:08 CST; 58min ago

Docs: https://containerd.io

Main PID: 722 (containerd)

Tasks: 8

CGroup: /system.slice/containerd.service

└─722 /usr/bin/containerd

The containerd configuration file is /etc/containerd/config.toml.

shell

root@ubuntuk8sclient:~# ll -h /etc/containerd/config.toml

-rw-r--r-- 1 root root 886 May 4 17:04 /etc/containerd/config.toml

The /etc/containerd/config.toml shipped with the package looks like this:

toml

root@ubuntuk8sclient:~# cat /etc/containerd/config.toml

# Copyright 2018-2022 Docker Inc.

# Licensed under the Apache License, Version 2.0 (the "License");

# you may not use this file except in compliance with the License.

# You may obtain a copy of the License at

# http://www.apache.org/licenses/LICENSE-2.0

# Unless required by applicable law or agreed to in writing, software

# distributed under the License is distributed on an "AS IS" BASIS,

# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

# See the License for the specific language governing permissions and

# limitations under the License.

disabled_plugins = ["cri"]

#root = "/var/lib/containerd"

#state = "/run/containerd"

#subreaper = true

#oom_score = 0

#[grpc]

# address = "/run/containerd/containerd.sock"

# uid = 0

# gid = 0

#[debug]

# address = "/run/containerd/debug.sock"

# uid = 0

# gid = 0

# level = "info"

A full default configuration file can be generated with containerd config default > /etc/containerd/config.toml; the generated file is considerably longer.

shell

root@ubuntuk8sclient:~# containerd config default > /etc/containerd/config.toml

root@ubuntuk8sclient:~# vim /etc/containerd/config.toml

containerd config dump prints the current configuration.

toml

root@ubuntuk8sclient:~# containerd config dump

disabled_plugins = []

imports = ["/etc/containerd/config.toml"]

oom_score = 0

plugin_dir = ""

required_plugins = []

root = "/var/lib/containerd"

......

......

address = ""

gid = 0

uid = 0

Check the containerd version.

shell

root@ubuntuk8sclient:~# containerd --version

containerd containerd.io 1.6.4 212e8b6fa2f44b9c21b2798135fc6fb7c53efc16

root@ubuntuk8sclient:~# containerd -v

containerd containerd.io 1.6.4 212e8b6fa2f44b9c21b2798135fc6fb7c53efc16

Edit the configuration file to add the Alibaba Cloud registry mirror.

toml

root@ubuntuk8sclient:~# vim /etc/containerd/config.toml

root@ubuntuk8sclient:~# grep endpoint /etc/containerd/config.toml

endpoint = "https://frz7i079.mirror.aliyuncs.com"

Change SystemdCgroup = false to SystemdCgroup = true.

toml

root@ubuntuk8sclient:~# vim /etc/containerd/config.toml

root@ubuntuk8sclient:~# grep SystemdCgroup -B 11 /etc/containerd/config.toml

[plugins."io.containerd.grpc.v1.cri".containerd.runtimes.runc.options]

BinaryName = ""

CriuImagePath = ""

CriuPath = ""

CriuWorkPath = ""

IoGid = 0

IoUid = 0

NoNewKeyring = false

NoPivotRoot = false

Root = ""

ShimCgroup = ""

SystemdCgroup = true

The default sandbox image, k8s.gcr.io/pause:3.6, cannot be reached from this environment.

shell

root@ubuntuk8sclient:~# grep sandbox_image /etc/containerd/config.toml

sandbox_image = "k8s.gcr.io/pause:3.6"

Change the sandbox image to a reachable Alibaba Cloud image.

shell

root@ubuntuk8sclient:~# vim /etc/containerd/config.toml

root@ubuntuk8sclient:~# grep sandbox_image /etc/containerd/config.toml

sandbox_image = "registry.aliyuncs.com/google_containers/pause:3.6"

Reload the configuration and restart the containerd service.

shell

root@ubuntuk8sclient:~# systemctl daemon-reload ; systemctl restart containerd

containerd has two client tools, ctr and crictl. Before using crictl, run crictl config runtime-endpoint unix:///var/run/containerd/containerd.sock; otherwise crictl prints warnings.

shell

root@ubuntuk8sclient:~# crictl config runtime-endpoint unix:///var/run/containerd/containerd.sock

View the containerd information.

json

root@ubuntuk8sclient:~# crictl info

{

"status": {

"conditions": [

{

"type": "RuntimeReady",

"status": true,

"reason": "",

"message": ""

},

......

"enableUnprivilegedPorts": false,

"enableUnprivilegedICMP": false,

"containerdRootDir": "/var/lib/containerd",

"containerdEndpoint": "/run/containerd/containerd.sock",

"rootDir": "/var/lib/containerd/io.containerd.grpc.v1.cri",

"stateDir": "/run/containerd/io.containerd.grpc.v1.cri"

},

"golang": "go1.17.9",

"lastCNILoadStatus": "cni config load failed: no network config found in /etc/cni/net.d: cni plugin not initialized: failed to load cni config",

"lastCNILoadStatus.default": "cni config load failed: no network config found in /etc/cni/net.d: cni plugin not initialized: failed to load cni config"

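}

containerd has the concept of namespaces, which Docker does not. In containerd, images pulled and containers created in the default namespace are not visible from other namespaces. When a containerd node joins a Kubernetes cluster, Kubernetes uses the k8s.io namespace by default.

List the namespaces.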

shell

root@ubuntuk8sclient:~# ctr ns list

NAME LABELS

moby

plugins.moby

List the images.

shell

root@ubuntuk8sclient:~# ctr i list

REF TYPE DIGEST SIZE PLATFORMS LABELS

root@ubuntuk8sclient:~# crictl images

IMAGE TAG IMAGE ID SIZE

Pull an image with crictl.

shell

root@ubuntuk8sclient:~# crictl pull nginx

Image is up to date for sha256:0e901e68141fd02f237cf63eb842529f8a9500636a9419e3cf4fb986b8fe3d5d

root@ubuntuk8sclient:~# crictl images

IMAGE TAG IMAGE ID SIZE

docker.io/library/nginx latest 0e901e68141fd 56.7MB

For more on the ctr and crictl commands, see the blog post 《在centos下使用containerd管理容器:5分钟从docker转型到containerd》.

The ctr and crictl client tools are not very convenient, so nerdctl is recommended instead. nerdctl is a command-line client for containerd that is largely compatible with the Docker CLI and is used much like the docker command.

nerdctl needs two packages: nerdctl-0.20.0-linux-amd64.tar.gz and cni-plugins-linux-amd64-v1.1.1.tgz.

nerdctl-0.20.0-linux-amd64.tar.gz can be downloaded from https://github.com/containerd/nerdctl/releases .

The CNI plugins package cni-plugins-linux-amd64-v1.1.1.tgz can be downloaded from https://github.com/containernetworking/plugins/releases .
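For scripted downloads, the direct asset URLs can be built from the version numbers. The sketch below is an assumption rather than something stated in this article: it relies on the usual GitHub release layout (`/releases/download/v<version>/<file>`), and `nerdctl_url` and `cni_plugins_url` are hypothetical helper names. Check the release pages above if a URL does not resolve.

```shell
#!/bin/sh
# Hypothetical helpers that print the assumed download URL for a given version.
# The URL pattern follows the common GitHub release asset layout.

nerdctl_url() {
  printf 'https://github.com/containerd/nerdctl/releases/download/v%s/nerdctl-%s-linux-amd64.tar.gz\n' "$1" "$1"
}

cni_plugins_url() {
  printf 'https://github.com/containernetworking/plugins/releases/download/v%s/cni-plugins-linux-amd64-v%s.tgz\n' "$1" "$1"
}

# Example use (requires network access):
#   wget "$(nerdctl_url 0.20.0)" "$(cni_plugins_url 1.1.1)"
```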

shell

root@ubuntuk8sclient:~# ll -h cni-plugins-linux-amd64-v1.1.1.tgz nerdctl-0.20.0-linux-amd64.tar.gz

-rw-r--r-- 1 root root 35M Jun 5 12:19 cni-plugins-linux-amd64-v1.1.1.tgz

-rw-r--r-- 1 root root 9.8M Jun 5 12:15 nerdctl-0.20.0-linux-amd64.tar.gz

Extract both archives.

shell

root@ubuntuk8sclient:~# tar xf nerdctl-0.20.0-linux-amd64.tar.gz -C /usr/local/bin/

root@ubuntuk8sclient:~# ls /usr/local/bin/

containerd-rootless-setuptool.sh containerd-rootless.sh nerdctl

root@ubuntuk8sclient:~# mkdir -p /opt/cni/bin

root@ubuntuk8sclient:~# tar xf cni-plugins-linux-amd64-v1.1.1.tgz -C /opt/cni/bin/

root@ubuntuk8sclient:~# ls /opt/cni/bin/

bandwidth bridge dhcp firewall host-device host-local ipvlan loopback macvlan portmap ptp sbr static tuning vlan vrf

Set up tab completion for nerdctl by adding source <(nerdctl completion bash) to /etc/profile.

shell

root@ubuntuk8sclient:~# vim /etc/profile

root@ubuntuk8sclient:~# cat /etc/profile | head -3

# /etc/profile: system-wide .profile file for the Bourne shell (sh(1))

# and Bourne compatible shells (bash(1), ksh(1), ash(1), ...).

source <(nerdctl completion bash)

root@ubuntuk8sclient:~# nerdctl completion bash

Apply the changes in /etc/profile.

shell

root@ubuntuk8sclient:~# source /etc/profile

List the images.

shell

root@ubuntuk8sclient:~# nerdctl images

REPOSITORY TAG IMAGE ID CREATED PLATFORM SIZE BLOB SIZE

List the namespaces.

shell

root@ubuntuk8sclient:~# nerdctl ns list

NAME CONTAINERS IMAGES VOLUMES LABELS

default 0 0 0

k8s.io 0 4 0

moby 0 0 0

plugins.moby 0 0 0

nerdctl commands are very similar to docker commands: replacing docker with nerdctl in a docker command works in most cases.

Pull an image.

shell

root@ubuntuk8sclient:~# nerdctl pull hub.c.163.com/library/nginx:latest

root@ubuntuk8sclient:~# nerdctl images

REPOSITORY TAG IMAGE ID CREATED PLATFORM SIZE BLOB SIZE

hub.c.163.com/library/nginx latest 8eeb06742b41 22 seconds ago linux/amd64 115.5 MiB 41.2 MiB

View the containerd information.

shell

root@ubuntuk8sclient:~# nerdctl info

8.2 Installing gVisor

Note: gVisor supports x86_64 and ARM64 and requires Linux 4.14.77 or later. This machine runs kernel 4.15.0-112-generic, so no kernel upgrade is needed. If an upgrade is needed, see the blog post 《centos7 离线升级/在线升级操作系统内核》.

shell

root@ubuntuk8sclient:~# uname -r

4.15.0-112-generic

Download the gVisor binaries.

shell

root@ubuntuk8sclient:~# wget https://storage.googleapis.com/gvisor/releases/release/latest/x86_64/runsc https://storage.googleapis.com/gvisor/releases/release/latest/x86_64/runsc.sha512 https://storage.googleapis.com/gvisor/releases/release/latest/x86_64/containerd-shim-runsc-v1 https://storage.googleapis.com/gvisor/releases/release/latest/x86_64/containerd-shim-runsc-v1.sha512

root@ubuntuk8sclient:~# ll -h runsc* containerd-shim*

-rwxr-xr-x 1 root root 25M Jun 7 18:24 containerd-shim-runsc-v1*

-rw-r--r-- 1 root root 155 Jun 7 18:24 containerd-shim-runsc-v1.sha512

-rwxr-xr-x 1 root root 38M Jun 7 18:24 runsc*

-rw-r--r-- 1 root root 136 Jun 7 18:24 runsc.sha512

Verify the files.

shell

root@ubuntuk8sclient:~# sha512sum -c runsc.sha512 -c containerd-shim-runsc-v1.sha512

runsc: OK

containerd-shim-runsc-v1: OK

root@ubuntuk8sclient:~# cat *sha512

f24834bbd4d14d0d0827e31276ff74a1e08b7ab366c4a30fe9c30d656c1ec5cbfc2544fb06698b4749791e0c6f80e6d16ec746963ff6ecebc246dc6e5b2f34ba containerd-shim-runsc-v1

e5bc1c46d021246a69174aae71be93ff49661ff08eb6a957f7855f36076b44193765c966608d11a99f14542612438634329536d88fccb4b12bdd9bf2af20557f runsc

Make the binaries executable and move them to /usr/local/bin.

shell

root@ubuntuk8sclient:~# chmod a+rx runsc containerd-shim-runsc-v1

root@ubuntuk8sclient:~# mv runsc containerd-shim-runsc-v1 /usr/local/bin

At this point containerd supports only one runtime, runc.

shell

root@ubuntuk8sclient:~# crictl info | grep -A10 runtimes

"runtimes": {

"runc": {

"runtimeType": "io.containerd.runc.v2",

"runtimePath": "",

"runtimeEngine": "",

"PodAnnotations": [],

"ContainerAnnotations": [],

"runtimeRoot": "",

"options": {

"BinaryName": "",

"CriuImagePath": "",

8.3 Configuring containerd to support gVisor

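First edit the configuration file so that containerd supports more than one runtime.

The file originally contains only plugins."io.containerd.grpc.v1.cri".containerd.runtimes.runc. Add a plugins."io.containerd.grpc.v1.cri".containerd.runtimes.runsc section so that containerd supports gVisor. We first set runtime_type = "containerd-shim-runsc-v1", which is the name of the containerd-shim-runsc-v1 file downloaded earlier.

Later testing showed that runtime_type = "containerd-shim-runsc-v1" works for creating containers directly in containerd but causes problems in Kubernetes. The correct value is runtime_type = "io.containerd.runsc.v1".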
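Since the wrong value only shows up as a problem later, in Kubernetes, it can help to read the value back from the file after editing. The following is a minimal sketch: `runtime_type_of` is a hypothetical helper that scans a containerd config.toml with awk, and it assumes the section header and `runtime_type = "..."` line formats shown in this article rather than parsing TOML in general.

```shell
#!/bin/sh
# runtime_type_of CONFIG_TOML RUNTIME_NAME
# Hypothetical helper: prints the runtime_type configured for RUNTIME_NAME
# in the CRI plugin section of CONFIG_TOML. Exits 1 if it is not found.
runtime_type_of() {
  awk -v want="containerd.runtimes.$2]" '
    /^[[:space:]]*\[/ {
      # A new table header starts: check whether it ends with the wanted name.
      line = $0
      sub(/[[:space:]]+$/, "", line)
      in_sec = (substr(line, length(line) - length(want) + 1) == want)
    }
    in_sec && $1 == "runtime_type" {
      gsub(/"/, "", $3)
      print $3
      found = 1
      exit
    }
    END { if (!found) exit 1 }' "$1"
}

# Example use on a real host (read-only):
#   runtime_type_of /etc/containerd/config.toml runsc
```

For the configuration in this section the expected result for runsc is io.containerd.runsc.v1.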

toml

root@ubuntuk8sclient:~# vim /etc/containerd/config.toml

root@ubuntuk8sclient:~# cat /etc/containerd/config.toml | grep -A27 "containerd.runtimes.runc"

[plugins."io.containerd.grpc.v1.cri".containerd.runtimes.runc]

base_runtime_spec = ""

cni_conf_dir = ""

cni_max_conf_num = 0

container_annotations = []

pod_annotations = []

privileged_without_host_devices = false

runtime_engine = ""

runtime_path = ""

runtime_root = ""

runtime_type = "io.containerd.runc.v2"

[plugins."io.containerd.grpc.v1.cri".containerd.runtimes.runc.options]

BinaryName = ""

CriuImagePath = ""

CriuPath = ""

CriuWorkPath = ""

IoGid = 0

IoUid = 0

NoNewKeyring = false

NoPivotRoot = false

Root = ""

ShimCgroup = ""

SystemdCgroup = true

[plugins."io.containerd.grpc.v1.cri".containerd.runtimes.runsc]

base_runtime_spec = ""

cni_conf_dir = ""

cni_max_conf_num = 0

container_annotations = []

pod_annotations = []

privileged_without_host_devices = false

runtime_engine = ""

runtime_path = ""

runtime_root = ""

runtime_type = "io.containerd.runsc.v1"

Reload the configuration and restart containerd.

shell

root@ubuntuk8sclient:~# systemctl daemon-reload ;systemctl restart containerd

containerd now supports two runtimes: runc and runsc.

shell

root@ubuntuk8sclient:~# crictl info | grep -A36 runtimes

"runtimes": {

"runc": {

"runtimeType": "io.containerd.runc.v2",

"runtimePath": "",

"runtimeEngine": "",

"PodAnnotations": [],

"ContainerAnnotations": [],

"runtimeRoot": "",

"options": {

"BinaryName": "",

"CriuImagePath": "",

"CriuPath": "",

"CriuWorkPath": "",

"IoGid": 0,

"IoUid": 0,

"NoNewKeyring": false,

"NoPivotRoot": false,

"Root": "",

"ShimCgroup": "",

"SystemdCgroup": true

},

"privileged_without_host_devices": false,

"baseRuntimeSpec": "",

"cniConfDir": "",

"cniMaxConfNum": 0

},

"runsc": {

"runtimeType": "containerd-shim-runsc-v1",

"runtimePath": "",

"runtimeEngine": "",

"PodAnnotations": [],

"ContainerAnnotations": [],

"runtimeRoot": "",

"options": null,

"privileged_without_host_devices": false,

"baseRuntimeSpec": "",

"cniConfDir": "",

List the containers.

shell

root@ubuntuk8sclient:~# nerdctl ps

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

List the images.

shell

root@ubuntuk8sclient:~# nerdctl images

REPOSITORY TAG IMAGE ID CREATED PLATFORM SIZE BLOB SIZE

hub.c.163.com/library/nginx latest 8eeb06742b41 2 days ago linux/amd64 115.5 MiB 41.2 MiB

sha256 e5bc191dff1f971254305a0dbc58c4145c783e34090bbd4360a36d7447fe3ef2 8eeb06742b41 2 days ago linux/amd64 115.5 MiB 41.2 MiB

Create a container from the nginx image; runc is used as the runtime by default.

shell

root@ubuntuk8sclient:~# nerdctl run -d --name=nginxweb --restart=always hub.c.163.com/library/nginx:latest

bdef5e3fa6e6fb7c08f4df19810a42c81b7bc1bf7a16b3beaca53508ac4cedab

List the containers.

shell

root@ubuntuk8sclient:~# nerdctl ps

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

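bdef5e3fa6e6 hub.c.163.com/library/nginx:latest "nginx -g daemon off;" 4 seconds ago Up nginxweb

Containers that containerd creates with its default runtime, runc, share the host kernel, and their processes are exposed to the host. If a container has a vulnerability, an attacker may be able to exploit it to compromise the host.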

shell

root@ubuntuk8sclient:~# ps -ef | grep nginx

root 6540 6505 0 21:36 ? 00:00:00 nginx: master process nginx -g daemon off;

systemd+ 6625 6540 0 21:36 ? 00:00:00 nginx: worker process

root 6634 6251 0 21:36 pts/1 00:00:00 grep --color=auto nginx

Remove the container.

shell

root@ubuntuk8sclient:~# nerdctl rm -f nginxweb

nginxweb

Once the container is removed, the nginx processes are gone from the host.

shell

root@ubuntuk8sclient:~# nerdctl ps

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

root@ubuntuk8sclient:~# ps -ef | grep nginx

root 6726 6251 0 21:38 pts/1 00:00:00 grep --color=auto nginx

8.4 Creating a container in containerd with gVisor as the runtime

Create a container; --runtime=runsc tells containerd to use gVisor as the runtime.

shell

root@ubuntuk8sclient:~# nerdctl run -d --runtime=runsc --name=nginxweb --restart=always hub.c.163.com/library/nginx:latest

8ea86e8936374efbb626d11f79a9cb79fb32d9a44fafd71c02556a5ae842cac7

With gVisor as the runtime, containerd runs the container in a sandbox, so the processes inside the container are not visible on the host.

shell

root@ubuntuk8sclient:~# nerdctl ps

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

8ea86e893637 hub.c.163.com/library/nginx:latest "nginx -g daemon off;" 51 seconds ago Up nginxweb

root@ubuntuk8sclient:~# ps -ef | grep nginx

root 7153 6251 0 21:41 pts/1 00:00:00 grep --color=auto nginx

The running container cannot be removed directly, even with nerdctl rm -f.

shell

root@ubuntuk8sclient:~# nerdctl rm -f nginxweb

WARN[0000] failed to delete task 8ea86e8936374efbb626d11f79a9cb79fb32d9a44fafd71c02556a5ae842cac7 error="unknown error after kill: runsc did not terminate successfully: exit status 128: sandbox is not running\n: unknown"

WARN[0000] failed to remove container "8ea86e8936374efbb626d11f79a9cb79fb32d9a44fafd71c02556a5ae842cac7" error="cannot delete running task 8ea86e8936374efbb626d11f79a9cb79fb32d9a44fafd71c02556a5ae842cac7: failed precondition"

WARN[0000] failed to remove container "8ea86e8936374efbb626d11f79a9cb79fb32d9a44fafd71c02556a5ae842cac7" error="cannot delete running task 8ea86e8936374efbb626d11f79a9cb79fb32d9a44fafd71c02556a5ae842cac7: failed precondition"

WARN[0000] failed to release name store for container "8ea86e8936374efbb626d11f79a9cb79fb32d9a44fafd71c02556a5ae842cac7" error="cannot delete running task 8ea86e8936374efbb626d11f79a9cb79fb32d9a44fafd71c02556a5ae842cac7: failed precondition"

FATA[0000] cannot delete running task 8ea86e8936374efbb626d11f79a9cb79fb32d9a44fafd71c02556a5ae842cac7: failed precondition

Stop the container first, then remove it.

shell

root@ubuntuk8sclient:~# nerdctl stop nginxweb

nginxweb

root@ubuntuk8sclient:~# nerdctl rm nginxweb

root@ubuntuk8sclient:~# nerdctl ps

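CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

9. In a k8s environment, configure containerd as the high-level runtime and gVisor as containerd's low-level runtime

9.1 Joining the ubuntuk8sclient node to the k8s cluster

Note: when Docker is the high-level runtime of k8s, gVisor is not supported as Docker's low-level runtime. gVisor can serve as Docker's low-level runtime only on a standalone Docker host.

The current environment is a k8s cluster with one master and two workers, and all three machines use Docker as the high-level runtime. A new worker node is now added that uses containerd as the high-level runtime, with gVisor as containerd's low-level runtime.

The ubuntuk8sclient machine is now joined to the k8s cluster. Its CONTAINER-RUNTIME is containerd.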
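To confirm which high-level runtime each node reports, the NAME and CONTAINER-RUNTIME columns can be pulled out of the kubectl get nodes -o wide listing. The sketch below is only an illustration: node_runtimes and nodes_with_runtime are hypothetical helper names, and the code assumes that CONTAINER-RUNTIME is the last column, as it is in the listings in this article.

```shell
# Print "<node> <runtime>" for every node row of `kubectl get nodes -o wide`.
# Input is read from stdin, so the function can be tried on saved output.
node_runtimes() {
  # NAME is the first column and CONTAINER-RUNTIME the last one;
  # the header row (NR == 1) is skipped.
  awk 'NR > 1 && NF > 1 { print $1, $NF }'
}

# Print only the nodes whose runtime string starts with the given prefix,
# for example "containerd" or "docker".
nodes_with_runtime() {
  local prefix="$1"
  node_runtimes | awk -v p="$prefix" 'index($2, p) == 1 { print $1 }'
}
```

On the master node this could be used as kubectl get nodes -o wide | nodes_with_runtime containerd.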

List the cluster nodes.

shell

root@k8scludes1:~# kubectl get nodes -o wide

NAME STATUS ROLES AGE VERSION INTERNAL-IP EXTERNAL-IP OS-IMAGE KERNEL-VERSION CONTAINER-RUNTIME

k8scludes1 Ready control-plane,master 55d v1.22.2 192.168.110.128 <none> Ubuntu 18.04.5 LTS 4.15.0-112-generic docker://20.10.14

k8scludes2 Ready <none> 55d v1.22.2 192.168.110.129 <none> Ubuntu 18.04.5 LTS 4.15.0-112-generic docker://20.10.14

k8scludes3 Ready <none> 55d v1.22.2 192.168.110.130 <none> Ubuntu 18.04.5 LTS 4.15.0-112-generic docker://20.10.14

First configure the IP-to-hostname mappings on every machine (ubuntuk8sclient is shown as the example).

shell

root@ubuntuk8sclient:~# vim /etc/hosts

root@ubuntuk8sclient:~# cat /etc/hosts

127.0.0.1 localhost

127.0.1.1 tom

192.168.110.139 ubuntuk8sclient

192.168.110.128 k8scludes1

192.168.110.129 k8scludes2

192.168.110.130 k8scludes3

# The following lines are desirable for IPv6 capable hosts

::1 localhost ip6-localhost ip6-loopback

ff02::1 ip6-allnodes

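ff02::2 ip6-allrouters

Configure the software sources as shown below. The last three lines add the Kubernetes source and the Docker CE source (the Docker deb-src entry is commented out).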

shell

root@ubuntuk8sclient:~# cat /etc/apt/sources.list

deb http://mirrors.aliyun.com/ubuntu/ bionic main restricted universe multiverse

deb-src http://mirrors.aliyun.com/ubuntu/ bionic main restricted universe multiverse

deb http://mirrors.aliyun.com/ubuntu/ bionic-security main restricted universe multiverse

deb-src http://mirrors.aliyun.com/ubuntu/ bionic-security main restricted universe multiverse

deb http://mirrors.aliyun.com/ubuntu/ bionic-updates main restricted universe multiverse

deb-src http://mirrors.aliyun.com/ubuntu/ bionic-updates main restricted universe multiverse

deb http://mirrors.aliyun.com/ubuntu/ bionic-proposed main restricted universe multiverse

deb-src http://mirrors.aliyun.com/ubuntu/ bionic-proposed main restricted universe multiverse

deb http://mirrors.aliyun.com/ubuntu/ bionic-backports main restricted universe multiverse

deb-src http://mirrors.aliyun.com/ubuntu/ bionic-backports main restricted universe multiverse

deb https://mirrors.aliyun.com/kubernetes/apt/ kubernetes-xenial main

deb [arch=amd64] https://mirrors.aliyun.com/docker-ce/linux/ubuntu bionic stable

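# deb-src [arch=amd64] https://mirrors.aliyun.com/docker-ce/linux/ubuntu bionic stable

apt-key.gpg is the public key of the k8s deb source. It is loaded with apt-key add apt-key.gpg.

The key would normally be downloaded and loaded in one step: curl -s https://packages.cloud.google.com/apt/doc/apt-key.gpg | sudo apt-key add - ; apt-get update. Because the Google URL is not reachable from this environment, download the apt-key.gpg file from another source and then load it as the public key of the k8s deb source.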

shell

root@ubuntuk8sclient:~# cat apt-key.gpg | apt-key add -

OK

Update the package index.

shell

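root@ubuntuk8sclient:~# apt-get update

The Linux swapoff command disables the system swap area. If swap is left on, kubeadm reports an error when it initializes Kubernetes: "[ERROR Swap]: running with swap on is not supported. Please disable swap". Removing the swap entry from /etc/fstab keeps swap off after a reboot.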
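Whether swap is really off can be verified before running kubeadm. The following is a minimal sketch that assumes the Linux /proc/swaps layout, which is one header line followed by one line per active swap area. swap_is_off is a hypothetical helper name, and its file argument is an assumption added so that it can be tried on sample files.

```shell
# Return 0 when no swap area is active, judged from a file in /proc/swaps
# format (default: /proc/swaps). Return 2 if the file cannot be read.
swap_is_off() {
  local swaps_file="${1:-/proc/swaps}" lines
  lines=$(wc -l < "$swaps_file") || return 2
  # Only the header line (or nothing at all) means that swap is off.
  [ "$lines" -le 1 ]
}
```

A call such as swap_is_off || echo "swap is still on" can be placed in front of the kubeadm join step.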

shell

root@ubuntuk8sclient:~# swapoff -a ;sed -i '/swap/d' /etc/fstab

root@ubuntuk8sclient:~# cat /etc/fstab

# /etc/fstab: static file system information.

#

# Use 'blkid' to print the universally unique identifier for a

# device; this may be used with UUID= as a more robust way to name devices

# that works even if disks are added and removed. See fstab(5).

#

# <file system> <mount point> <type> <options> <dump> <pass>

/dev/mapper/tom--vg-root / ext4 errors=remount-ro 0 1

Check the containerd version.

shell

root@ubuntuk8sclient:~# containerd -v

containerd containerd.io 1.6.4 212e8b6fa2f44b9c21b2798135fc6fb7c53efc16

The registry.aliyuncs.com/google_containers/pause:3.6 image must be pulled in advance, because the containerd configuration uses it as the sandbox image.

shell

root@ubuntuk8sclient:~# cat /etc/containerd/config.toml | grep pause

pause_threshold = 0.02

sandbox_image = "registry.aliyuncs.com/google_containers/pause:3.6"

Pull the image.

shell

root@ubuntuk8sclient:~# nerdctl pull registry.aliyuncs.com/google_containers/pause:3.6

List the image.

shell

root@ubuntuk8sclient:~# nerdctl images | grep pause

registry.aliyuncs.com/google_containers/pause 3.6 3d380ca88645 3 days ago linux/amd64 672.0 KiB 294.7 KiB

root@ubuntuk8sclient:~# crictl images | grep pause

registry.aliyuncs.com/google_containers/pause 3.6 6270bb605e12e 302kB

shell

root@ubuntuk8sclient:~# cat /etc/nerdctl/nerdctl.toml | head -3

namespace = "k8s.io"

Load the overlay and br_netfilter kernel modules.

shell

root@ubuntuk8sclient:~# cat > /etc/modules-load.d/containerd.conf <<EOF

> overlay

> br_netfilter

> EOF

root@ubuntuk8sclient:~# cat /etc/modules-load.d/containerd.conf

overlay

br_netfilter

root@ubuntuk8sclient:~# modprobe overlay

root@ubuntuk8sclient:~# modprobe br_netfilter

Make bridged traffic visible to iptables and ip6tables, and enable IP forwarding.

shell

root@ubuntuk8sclient:~# cat <<EOF> /etc/sysctl.d/k8s.conf

> net.bridge.bridge-nf-call-ip6tables = 1

> net.bridge.bridge-nf-call-iptables = 1

> net.ipv4.ip_forward = 1

> EOF

Apply the settings.

shell

root@ubuntuk8sclient:~# sysctl -p /etc/sysctl.d/k8s.conf

net.bridge.bridge-nf-call-ip6tables = 1

net.bridge.bridge-nf-call-iptables = 1

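net.ipv4.ip_forward = 1

Communication between k8s nodes requires a CNI network plugin. Pull the calico images in advance. Their version must match the calico version running on the other three k8s nodes.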

shell

root@ubuntuk8sclient:~# nerdctl pull docker.io/calico/cni:v3.22.2

root@ubuntuk8sclient:~# nerdctl pull docker.io/calico/pod2daemon-flexvol:v3.22.2

root@ubuntuk8sclient:~# nerdctl pull docker.io/calico/node:v3.22.2

root@ubuntuk8sclient:~# nerdctl pull docker.io/calico/kube-controllers:v3.22.2

root@ubuntuk8sclient:~# nerdctl images | grep calico

calico/cni v3.22.2 757d06fe361c 4 minutes ago linux/amd64 227.1 MiB 76.8 MiB

calico/kube-controllers v3.22.2 751f1a8ba0af 20 seconds ago linux/amd64 128.1 MiB 52.4 MiB

calico/node v3.22.2 41aac6d0a440 2 minutes ago linux/amd64 194.2 MiB 66.5 MiB

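calico/pod2daemon-flexvol v3.22.2 413c5ebad6a5 3 minutes ago linux/amd64 19.0 MiB 8.0 MiB

Install kubelet, kubeadm, and kubectl.

- kubelet is the agent component of Kubernetes and runs on every node;

- kubeadm is a tool for quickly setting up Kubernetes (k8s). It provides the kubeadm init and kubeadm join commands for creating a cluster, and it performs the actions needed to get a minimum viable cluster up and running;

- kubectl is the command-line tool for Kubernetes clusters. It is used to manage the cluster itself and to install and deploy containerized applications on it.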

shell

root@ubuntuk8sclient:~# apt-get -y install kubelet=1.22.2-00 kubeadm=1.22.2-00 kubectl=1.22.2-00

Enable kubelet at boot and start it now.

shell

root@ubuntuk8sclient:~# systemctl enable kubelet --now

On the k8s master node, create a token and print the command that worker nodes use to join the cluster.

shell

root@k8scludes1:~# kubeadm token create --print-join-command

kubeadm join 192.168.110.128:6443 --token rwau00.plx8xdksa8zdnfrn --discovery-token-ca-cert-hash sha256:3f401b6187ed44ff8f4b50aa6453cf3eacc3b86d6a72e3bf2caba02556cb918e

Join the ubuntuk8sclient node to the k8s cluster.

shell

root@ubuntuk8sclient:~# kubeadm join 192.168.110.128:6443 --token rwau00.plx8xdksa8zdnfrn --discovery-token-ca-cert-hash sha256:3f401b6187ed44ff8f4b50aa6453cf3eacc3b86d6a72e3bf2caba02556cb918e

[preflight] Running pre-flight checks

[preflight] Reading configuration from the cluster...

[preflight] FYI: You can look at this config file with 'kubectl -n kube-system get cm kubeadm-config -o yaml'

[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"

[kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"

[kubelet-start] Starting the kubelet

[kubelet-start] Waiting for the kubelet to perform the TLS Bootstrap...

This node has joined the cluster:

* Certificate signing request was sent to apiserver and a response was received.

* The Kubelet was informed of the new secure connection details.

Run 'kubectl get nodes' on the control-plane to see this node join the cluster.

On the k8s master node, check whether the node has joined. ubuntuk8sclient has joined the cluster successfully, and its CONTAINER-RUNTIME is containerd://1.6.4.

shell

root@k8scludes1:~# kubectl get node -o wide

NAME STATUS ROLES AGE VERSION INTERNAL-IP EXTERNAL-IP OS-IMAGE KERNEL-VERSION CONTAINER-RUNTIME

k8scludes1 Ready control-plane,master 55d v1.22.2 192.168.110.128 <none> Ubuntu 18.04.5 LTS 4.15.0-112-generic docker://20.10.14

k8scludes2 Ready <none> 55d v1.22.2 192.168.110.129 <none> Ubuntu 18.04.5 LTS 4.15.0-112-generic docker://20.10.14

k8scludes3 Ready <none> 55d v1.22.2 192.168.110.130 <none> Ubuntu 18.04.5 LTS 4.15.0-112-generic docker://20.10.14

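ubuntuk8sclient Ready <none> 87s v1.22.2 192.168.110.139 <none> Ubuntu 18.04.5 LTS 4.15.0-112-generic containerd://1.6.4

Next, configure containerd to support multiple runtimes so that it can use gVisor.

The file originally contains only plugins."io.containerd.grpc.v1.cri".containerd.runtimes.runc. Add plugins."io.containerd.grpc.v1.cri".containerd.runtimes.runsc so that containerd supports gVisor. Here runtime_type = "containerd-shim-runsc-v1" is set to the name of the containerd-shim-runsc-v1 file downloaded earlier. Section 9.4 shows that containerd rejects this value and that it has to be changed.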

toml

root@ubuntuk8sclient:~# cat /etc/containerd/config.toml | grep -A27 "containerd.runtimes.runc"

[plugins."io.containerd.grpc.v1.cri".containerd.runtimes.runc]

base_runtime_spec = ""

cni_conf_dir = ""

cni_max_conf_num = 0

container_annotations = []

pod_annotations = []

privileged_without_host_devices = false

runtime_engine = ""

runtime_path = ""

runtime_root = ""

runtime_type = "io.containerd.runc.v2"

[plugins."io.containerd.grpc.v1.cri".containerd.runtimes.runc.options]

BinaryName = ""

CriuImagePath = ""

CriuPath = ""

CriuWorkPath = ""

IoGid = 0

IoUid = 0

NoNewKeyring = false

NoPivotRoot = false

Root = ""

ShimCgroup = ""

SystemdCgroup = true

[plugins."io.containerd.grpc.v1.cri".containerd.runtimes.runsc]

base_runtime_spec = ""

cni_conf_dir = ""

cni_max_conf_num = 0

container_annotations = []

pod_annotations = []

privileged_without_host_devices = false

runtime_engine = ""

runtime_path = ""

runtime_root = ""

runtime_type = "containerd-shim-runsc-v1"

Reload the configuration and restart containerd.

shell

root@ubuntuk8sclient:~# systemctl daemon-reload ;systemctl restart containerd

containerd now reports two runtimes: runc and runsc.

json

root@ubuntuk8sclient:~# crictl info | grep -A36 runtimes

"runtimes": {

"runc": {

"runtimeType": "io.containerd.runc.v2",

"runtimePath": "",

"runtimeEngine": "",

"PodAnnotations": [],

"ContainerAnnotations": [],

"runtimeRoot": "",

"options": {

"BinaryName": "",

"CriuImagePath": "",

"CriuPath": "",

"CriuWorkPath": "",

"IoGid": 0,

"IoUid": 0,

"NoNewKeyring": false,

"NoPivotRoot": false,

"Root": "",

"ShimCgroup": "",

"SystemdCgroup": true

},

"privileged_without_host_devices": false,

"baseRuntimeSpec": "",

"cniConfDir": "",

"cniMaxConfNum": 0

},

"runsc": {

"runtimeType": "containerd-shim-runsc-v1",

"runtimePath": "",

"runtimeEngine": "",

"PodAnnotations": [],

"ContainerAnnotations": [],

"runtimeRoot": "",

"options": null,

"privileged_without_host_devices": false,

"baseRuntimeSpec": "",

"cniConfDir": "",

9.2 Configuring kubelet to support gVisor

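Configure kubelet so that gVisor can be used as containerd's low-level runtime. This is done by changing the kubelet arguments so that kubelet talks to containerd through its socket and can therefore use runsc as a runtime.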

shell

root@ubuntuk8sclient:~# cat > /etc/systemd/system/kubelet.service.d/0-cri-containerd.conf <<EOF

> [Service]

> Environment="KUBELET_EXTRA_ARGS=--container-runtime=remote --runtime-request-timeout=15m --container-runtime-endpoint=unix:///run/containerd/containerd.sock"

> EOF

root@ubuntuk8sclient:~# cat /etc/systemd/system/kubelet.service.d/0-cri-containerd.conf

[Service]

Environment="KUBELET_EXTRA_ARGS=--container-runtime=remote --runtime-request-timeout=15m --container-runtime-endpoint=unix:///run/containerd/containerd.sock"

Reload the configuration and restart kubelet.

shell

root@ubuntuk8sclient:~# systemctl daemon-reload ; systemctl restart kubelet

root@ubuntuk8sclient:~# systemctl status kubelet

● kubelet.service - kubelet: The Kubernetes Node Agent

Loaded: loaded (/lib/systemd/system/kubelet.service; enabled; vendor preset: enabled)

Drop-In: /etc/systemd/system/kubelet.service.d

└─0-cri-containerd.conf, 10-kubeadm.conf

Active: active (running) since Sat 2022-06-11 18:00:31 CST; 14s ago

Docs: https://kubernetes.io/docs/home/

Main PID: 31685 (kubelet)

Tasks: 13 (limit: 1404)

CGroup: /system.slice/kubelet.service

└─31685 /usr/bin/kubelet --bootstrap-kubeconfig=/etc/kubernetes/bootstrap-kubelet.conf --kubeconfig=/etc/kubernetes/kubelet.conf --config=/var/lib/kubelet/config.yaml --container-runtime=remote --con

Everything is ready, so a pod can now be created.

Add the label con=gvisor to the ubuntuk8sclient node.

shell

root@k8scludes1:~# kubectl label nodes ubuntuk8sclient con=gvisor

node/ubuntuk8sclient labeled

root@k8scludes1:~# kubectl get node -l con=gvisor

NAME STATUS ROLES AGE VERSION

ubuntuk8sclient Ready <none> 29m v1.22.2

Create a directory to hold the files.

shell

root@k8scludes1:~# mkdir containerd-gvisor

root@k8scludes1:~# cd containerd-gvisor/

Edit the pod manifest. The nodeSelector with con: gvisor schedules the pod onto the ubuntuk8sclient node, and the pod is created from the nginx image.

yaml

root@k8scludes1:~/containerd-gvisor# vim pod.yaml

root@k8scludes1:~/containerd-gvisor# cat pod.yaml

apiVersion: v1
kind: Pod
metadata:
  creationTimestamp: null
  labels:
    run: podtest
  name: podtest
spec:
  # When the container has to be stopped, kill it immediately instead of waiting for the default 30-second graceful shutdown period.
  terminationGracePeriodSeconds: 0
  # nodeSelector with con: gvisor schedules the pod onto the ubuntuk8sclient node
  nodeSelector:
    con: gvisor
  containers:
  - image: hub.c.163.com/library/nginx:latest
    # imagePullPolicy: IfNotPresent means the image is not pulled again if it already exists locally; otherwise it is pulled from the remote registry
    imagePullPolicy: IfNotPresent
    name: podtest
    resources: {}
  dnsPolicy: ClusterFirst
  restartPolicy: Always
status: {}

Create the pod.

shell

root@k8scludes1:~/containerd-gvisor# kubectl apply -f pod.yaml

pod/podtest created

root@k8scludes1:~/containerd-gvisor# kubectl get pod -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

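podtest 1/1 Running 0 16s 10.244.228.1 ubuntuk8sclient <none> <none>

After the pod is created, check on ubuntuk8sclient whether the host can see the nginx process inside the container. The host does see the pod's nginx processes, which shows that the pod was created with runc, the default low-level runtime.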

shell

root@ubuntuk8sclient:~# ps -ef | grep nginx

root 38308 38227 0 18:15 ? 00:00:00 nginx: master process nginx -g daemon off;

systemd+ 38335 38308 0 18:15 ? 00:00:00 nginx: worker process

root 39009 27377 0 18:17 pts/1 00:00:00 grep --color=auto nginx

Delete the pod.

shell

root@k8scludes1:~/containerd-gvisor# kubectl delete pod podtest

pod "podtest" deleted

After the pod is deleted, the nginx processes are gone from the host as well.

shell

root@ubuntuk8sclient:~# ps -ef | grep nginx

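root 40044 27377 0 18:20 pts/1 00:00:00 grep --color=auto nginx

9.3 Creating a RuntimeClass

Creating a pod with gVisor in k8s requires a RuntimeClass.

RuntimeClass is a feature for selecting the container runtime configuration. The container runtime configuration is used to run a Pod's containers. You can set a different RuntimeClass for different Pods to balance performance against security. For example, if part of your workload needs a high level of information security assurance, you might choose to schedule those Pods so that they run in a container runtime that uses hardware virtualization. You then benefit from the extra isolation of the alternative runtime, at the cost of some additional overhead.

You can also use RuntimeClass to run different Pods with the same container runtime but with different settings.

Note that RuntimeClass is cluster-scoped and is not restricted by namespaces.

List the existing RuntimeClasses.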

shell

root@k8scludes1:~/containerd-gvisor# kubectl get runtimeclass

No resources found

Edit the RuntimeClass manifest. The handler field takes the name of the runtime. To use gVisor, write runsc.

yaml

root@k8scludes1:~/containerd-gvisor# vim myruntimeclass.yaml

# Create a RuntimeClass that uses runsc

root@k8scludes1:~/containerd-gvisor# cat myruntimeclass.yaml

# RuntimeClass is defined in the node.k8s.io API group
apiVersion: node.k8s.io/v1
kind: RuntimeClass
metadata:
  # The name used to reference this RuntimeClass
  # RuntimeClass is a cluster-scoped resource
  name: myruntimeclass
# The name of the corresponding CRI configuration
#handler: myconfiguration
# Note: handler takes the name of the runtime; to use gVisor, write runsc
handler: runsc

Create the RuntimeClass.

shell

root@k8scludes1:~/containerd-gvisor# kubectl apply -f myruntimeclass.yaml

runtimeclass.node.k8s.io/myruntimeclass created

root@k8scludes1:~/containerd-gvisor# kubectl get runtimeclass

NAME HANDLER AGE

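myruntimeclass runsc 20s

9.4 Creating a pod with gVisor

Once RuntimeClasses are configured for the cluster, you can specify a runtimeClassName in the Pod spec to use one.

The runtimeClassName setting tells kubelet to use the named RuntimeClass to run the pod. If the named RuntimeClass does not exist, or the CRI cannot run the corresponding handler, the pod enters the Failed terminal phase. Look at the corresponding events for the error message. If no runtimeClassName is specified, the default RuntimeHandler is used, which is equivalent to the behavior when the RuntimeClass feature is disabled.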
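Because the handler has to exist in the CRI configuration of the node, it can help to list the handlers that containerd declares before creating the pod. The sketch below is an assumption-laden illustration: it only recognizes table headers written as plugins."io.containerd.grpc.v1.cri".containerd.runtimes.<name>, the form used in the config.toml of this article, and the helper names containerd_runtime_handlers and containerd_has_handler are hypothetical.

```shell
# List the CRI runtime handler names declared in a containerd config.toml.
# Only table headers of the form
#   [plugins."io.containerd.grpc.v1.cri".containerd.runtimes.<name>]
# are matched; nested tables such as <name>.options are skipped.
containerd_runtime_handlers() {
  sed -n 's/^[[:space:]]*\[plugins\."io\.containerd\.grpc\.v1\.cri"\.containerd\.runtimes\.\([A-Za-z0-9_-]*\)][[:space:]]*$/\1/p' "$1"
}

# Return 0 if the handler named in $2 is declared in the config file $1.
containerd_has_handler() {
  containerd_runtime_handlers "$1" | grep -qxF -- "$2"
}
```

On the node, containerd_has_handler /etc/containerd/config.toml runsc checks that the handler referenced by the RuntimeClass is declared. It does not check that the runtime_type of that handler is valid.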

Edit the pod manifest. runtimeClassName: myruntimeclass specifies that the pod runs with runsc, the handler defined in myruntimeclass.

yaml

root@k8scludes1:~/containerd-gvisor# vim pod.yaml

root@k8scludes1:~/containerd-gvisor# cat pod.yaml

apiVersion: v1
kind: Pod
metadata:
  creationTimestamp: null
  labels:
    run: podtest
  name: podtest
spec:
  # When the container has to be stopped, kill it immediately instead of waiting for the default 30-second graceful shutdown period.
  terminationGracePeriodSeconds: 0
  runtimeClassName: myruntimeclass
  nodeSelector:
    con: gvisor
  containers:
  - image: hub.c.163.com/library/nginx:latest
    # imagePullPolicy: IfNotPresent means the image is not pulled again if it already exists locally; otherwise it is pulled from the remote registry
    imagePullPolicy: IfNotPresent
    name: podtest
    resources: {}
  dnsPolicy: ClusterFirst
  restartPolicy: Always
status: {}

Create the pod.

shell

root@k8scludes1:~/containerd-gvisor# kubectl apply -f pod.yaml

pod/podtest created

Check the pod. It stays in ContainerCreating, so creation has not succeeded.

shell

root@k8scludes1:~/containerd-gvisor# kubectl get pod -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

podtest 0/1 ContainerCreating 0 24s <none> ubuntuk8sclient <none> <none>

Describe the pod. The event message "invalid runtime name containerd-shim-runsc-v1, correct runtime name should be either format like io.containerd.runc.v1 or a full path to the binary: unknown" says that containerd-shim-runsc-v1 is not a valid runtime name format.

yaml

root@k8scludes1:~/containerd-gvisor# kubectl describe pod podtest

Name: podtest

Namespace: minsvcbug

Priority: 0

Node: ubuntuk8sclient/192.168.110.139

DownwardAPI: true

QoS Class: BestEffort

Node-Selectors: con=gvisor

Tolerations: node.kubernetes.io/not-ready:NoExecute op=Exists for 300s

node.kubernetes.io/unreachable:NoExecute op=Exists for 300s

Events:

Type Reason Age From Message

---- ------ ---- ---- -------

Normal Scheduled 49s default-scheduler Successfully assigned minsvcbug/podtest to ubuntuk8sclient

Warning FailedCreatePodSandBox 22s (x25 over 47s) kubelet Failed to create pod sandbox: rpc error: code = Unknown desc = failed to create containerd task: failed to start shim: failed to resolve runtime path: invalid runtime name containerd-shim-runsc-v1, correct runtime name should be either format like `io.containerd.runc.v1` or a full path to the binary: unknown

Delete the pod.

shell

root@k8scludes1:~/containerd-gvisor# kubectl delete pod podtest

pod "podtest" deleted

Back on ubuntuk8sclient, edit the containerd configuration file. The runtime_type for runsc must not be containerd-shim-runsc-v1. It should be runtime_type = "io.containerd.runsc.v1".
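The error message suggests how containerd resolves a runtime_type into a shim binary. The sketch below mirrors that rule as I understand it, which is an assumption rather than something stated in this article: an absolute path is used unchanged, and a dotted name such as io.containerd.runsc.v1 maps to a binary named after its last two components. shim_binary_name is a hypothetical helper for illustration, not a containerd command.

```shell
# Hypothetical helper: print the shim binary that a runtime_type would map to.
# Assumption: absolute paths are used unchanged, and dotted names map to
# containerd-shim-<name>-<version>, built from the last two dot-separated parts.
shim_binary_name() {
  local runtime="$1" version rest name
  case "$runtime" in
    /*)  printf '%s\n' "$runtime"; return 0 ;;  # absolute path: used as-is
    .*)  return 1 ;;                             # empty first component
    *.*) ;;                                      # dotted name: handled below
    *)   return 1 ;;                             # no dot at all: rejected
  esac
  version="${runtime##*.}"  # text after the last dot, e.g. v1
  rest="${runtime%.*}"      # everything before the last dot
  name="${rest##*.}"        # last component of the remainder, e.g. runsc
  printf 'containerd-shim-%s-%s\n' "$name" "$version"
}
```

Under this reading, io.containerd.runsc.v1 leads to the containerd-shim-runsc-v1 binary, while the bare string containerd-shim-runsc-v1 contains no dots and is rejected as a runtime name.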

shell

root@ubuntuk8sclient:~# vim /etc/containerd/config.toml

root@ubuntuk8sclient:~# grep runtime_type /etc/containerd/config.toml

runtime_type = ""

runtime_type = "io.containerd.runc.v2"

runtime_type = "io.containerd.runsc.v1"

runtime_type = ""

Reload the configuration and restart containerd.

shell

root@ubuntuk8sclient:~# systemctl daemon-reload ;systemctl restart containerd

Create the pod again.

shell

root@k8scludes1:~/containerd-gvisor# kubectl apply -f pod.yaml

pod/podtest created

The pod is created successfully this time.

shell

root@k8scludes1:~/containerd-gvisor# kubectl get pod -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

podtest 1/1 Running 0 10s 10.244.228.27 ubuntuk8sclient <none> <none>

Look at the nginx container on the host.

shell

root@ubuntuk8sclient:~# nerdctl ps | grep podtest

d4604b2b8b39 registry.aliyuncs.com/google_containers/pause:3.6 "/pause" 46 seconds ago Up k8s://minsvcbug/podtest

dcb76b70a98e hub.c.163.com/library/nginx:latest "nginx -g daemon off;" 45 seconds ago Up k8s://minsvcbug/podtest/podtest

gVisor runs the container in a sandbox, so the processes inside the container are not visible on the host.

shell

root@ubuntuk8sclient:~# ps -ef | grep nginx

root 111683 27377 0 02:36 pts/1 00:00:00 grep --color=auto nginx

Delete the pod.

shell

root@k8scludes1:~/containerd-gvisor# kubectl delete pod podtest

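pod "podtest" deleted

10. Summary

As a secure container runtime, gVisor runs containers in a sandbox, which gives fine-grained control over container processes and improves container security. Compared with traditional container technology, gVisor may add some performance overhead, but for workloads that need stronger isolation the security gain can outweigh that cost.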