Contents

[2. Kubernetes persistent storage: emptyDir](#二. k8s持久化存储:emptyDir)

[3. Kubernetes persistent storage: hostPath](#三. k8s持久化存储:hostPath)

[4. Kubernetes persistent storage: nfs](#四. k8s持久化存储:nfs)

[5. Kubernetes persistent storage: PVC](#五. k8s持久化存储: PVC)

[5.1 What is a PV?](#5.1 k8s PV是什么?)

[5.2 What is a PVC?](#5.2 k8s PVC是什么?)

[5.3 How PVCs and PVs work together](#5.3 k8s PVC和PV工作原理)

[5.4 Create a pod that uses a PVC as its persistent volume](#5.4 创建pod,使用pvc作为持久化存储卷)

[6. Kubernetes storage classes: StorageClass](#六. k8s存储类:storageclass)

[6.1 Overview](#6.1 概述)

[6.2 Install the NFS provisioner for dynamic PV provisioning through a storage class](#6.2 安装nfs provisioner,用于配合存储类动态生成pv)

[6.3 Create a StorageClass to provision PVs dynamically](#6.3 创建storageclass,动态供给pv)

[6.4 Create a PVC and let the StorageClass generate the PV](#6.4 创建pvc,通过storageclass动态生成pv)

[6.5 Create a pod that mounts the dynamically provisioned PVC storage-pvc](#6.5 创建pod,挂载storageclass动态生成的pvc:storage-pvc)

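1. Background

This section covers persistent storage in Kubernetes, one of the more important parts of running a cluster.

So why does Kubernetes need persistent storage at all?

Applications deployed in Kubernetes run as containers inside pods. Suppose we deploy a database such as MySQL or Redis: the data it produces has to be preserved. A pod has a lifecycle, and if it mounts no data volume, whatever it wrote is gone once the pod is deleted or recreated. Keeping that data long-term requires persistent storage for the pod.

2. Kubernetes persistent storage: emptyDir

List the volume types Kubernetes supports: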

[root@k8s-master ~]# kubectl explain pods.spec.volumes

KIND: Pod

VERSION: v1

FIELD: volumes <[]Volume>

DESCRIPTION:

List of volumes that can be mounted by containers belonging to the pod. More

info: https://kubernetes.io/docs/concepts/storage/volumes

Volume represents a named volume in a pod that may be accessed by any

container in the pod.

FIELDS:

awsElasticBlockStore <AWSElasticBlockStoreVolumeSource>

awsElasticBlockStore represents an AWS Disk resource that is attached to a

kubelet's host machine and then exposed to the pod. More info:

https://kubernetes.io/docs/concepts/storage/volumes#awselasticblockstore

azureDisk <AzureDiskVolumeSource>

azureDisk represents an Azure Data Disk mount on the host and bind mount to

the pod.

azureFile <AzureFileVolumeSource>

azureFile represents an Azure File Service mount on the host and bind mount

to the pod.

cephfs <CephFSVolumeSource>

cephFS represents a Ceph FS mount on the host that shares a pod's lifetime

cinder <CinderVolumeSource>

cinder represents a cinder volume attached and mounted on kubelets host

machine. More info: https://examples.k8s.io/mysql-cinder-pd/README.md

configMap <ConfigMapVolumeSource>

configMap represents a configMap that should populate this volume

csi <CSIVolumeSource>

csi (Container Storage Interface) represents ephemeral storage that is

handled by certain external CSI drivers (Beta feature).

downwardAPI <DownwardAPIVolumeSource>

downwardAPI represents downward API about the pod that should populate this

volume

emptyDir <EmptyDirVolumeSource>

emptyDir represents a temporary directory that shares a pod's lifetime. More

info: https://kubernetes.io/docs/concepts/storage/volumes#emptydir

ephemeral <EphemeralVolumeSource>

ephemeral represents a volume that is handled by a cluster storage driver.

The volume's lifecycle is tied to the pod that defines it - it will be

created before the pod starts, and deleted when the pod is removed.

Use this if: a) the volume is only needed while the pod runs, b) features of

normal volumes like restoring from snapshot or capacity

tracking are needed,

c) the storage driver is specified through a storage class, and d) the

storage driver supports dynamic volume provisioning through

a PersistentVolumeClaim (see EphemeralVolumeSource for more

information on the connection between this volume type

and PersistentVolumeClaim).

Use PersistentVolumeClaim or one of the vendor-specific APIs for volumes

that persist for longer than the lifecycle of an individual pod.

Use CSI for light-weight local ephemeral volumes if the CSI driver is meant

to be used that way - see the documentation of the driver for more

information.

A pod can use both types of ephemeral volumes and persistent volumes at the

same time.

fc <FCVolumeSource>

fc represents a Fibre Channel resource that is attached to a kubelet's host

machine and then exposed to the pod.

flexVolume <FlexVolumeSource>

flexVolume represents a generic volume resource that is provisioned/attached

using an exec based plugin.

flocker <FlockerVolumeSource>

flocker represents a Flocker volume attached to a kubelet's host machine.

This depends on the Flocker control service being running

gcePersistentDisk <GCEPersistentDiskVolumeSource>

gcePersistentDisk represents a GCE Disk resource that is attached to a

kubelet's host machine and then exposed to the pod. More info:

https://kubernetes.io/docs/concepts/storage/volumes#gcepersistentdisk

gitRepo <GitRepoVolumeSource>

gitRepo represents a git repository at a particular revision. DEPRECATED:

GitRepo is deprecated. To provision a container with a git repo, mount an

EmptyDir into an InitContainer that clones the repo using git, then mount

the EmptyDir into the Pod's container.

glusterfs <GlusterfsVolumeSource>

glusterfs represents a Glusterfs mount on the host that shares a pod's

lifetime. More info: https://examples.k8s.io/volumes/glusterfs/README.md

hostPath <HostPathVolumeSource>

hostPath represents a pre-existing file or directory on the host machine

that is directly exposed to the container. This is generally used for system

agents or other privileged things that are allowed to see the host machine.

Most containers will NOT need this. More info:

https://kubernetes.io/docs/concepts/storage/volumes#hostpath

iscsi <ISCSIVolumeSource>

iscsi represents an ISCSI Disk resource that is attached to a kubelet's host

machine and then exposed to the pod. More info:

https://examples.k8s.io/volumes/iscsi/README.md

name <string> -required-

name of the volume. Must be a DNS_LABEL and unique within the pod. More

info:

https://kubernetes.io/docs/concepts/overview/working-with-objects/names/#names

nfs <NFSVolumeSource>

nfs represents an NFS mount on the host that shares a pod's lifetime More

info: https://kubernetes.io/docs/concepts/storage/volumes#nfs

persistentVolumeClaim <PersistentVolumeClaimVolumeSource>

persistentVolumeClaimVolumeSource represents a reference to a

PersistentVolumeClaim in the same namespace. More info:

https://kubernetes.io/docs/concepts/storage/persistent-volumes#persistentvolumeclaims

photonPersistentDisk <PhotonPersistentDiskVolumeSource>

photonPersistentDisk represents a PhotonController persistent disk attached

and mounted on kubelets host machine

portworxVolume <PortworxVolumeSource>

portworxVolume represents a portworx volume attached and mounted on kubelets

host machine

projected <ProjectedVolumeSource>

projected items for all in one resources secrets, configmaps, and downward

API

quobyte <QuobyteVolumeSource>

quobyte represents a Quobyte mount on the host that shares a pod's lifetime

rbd <RBDVolumeSource>

rbd represents a Rados Block Device mount on the host that shares a pod's

lifetime. More info: https://examples.k8s.io/volumes/rbd/README.md

scaleIO <ScaleIOVolumeSource>

scaleIO represents a ScaleIO persistent volume attached and mounted on

Kubernetes nodes.

secret <SecretVolumeSource>

secret represents a secret that should populate this volume. More info:

https://kubernetes.io/docs/concepts/storage/volumes#secret

storageos <StorageOSVolumeSource>

storageOS represents a StorageOS volume attached and mounted on Kubernetes

nodes.

vsphereVolume <VsphereVirtualDiskVolumeSource>

vsphereVolume represents a vSphere volume attached and mounted on kubelets

host machine

The most commonly used types are:

emptyDir

hostPath

nfs

persistentVolumeClaim

glusterfs

cephfs

configMap

secret

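Using a volume takes two steps:

1. Define a volume in the pod's volumes field, which states which storage backend it maps to.

2. Mount that volume in the container through volumeMounts.

Only after both steps can the container actually use the volume.

An emptyDir volume is created when the pod is assigned to a node. Kubernetes allocates a directory on that node automatically, so there is no need to specify a host directory. The directory starts out empty, and when the pod is removed from the node the data in the emptyDir is deleted permanently. emptyDir is mainly used for scratch directories that an application does not need to keep, and for directories shared between several containers in the same pod.

Create a pod that mounts an emptyDir scratch directory: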

apiVersion: v1
kind: Pod
metadata:
  name: pod-empty
spec:
  containers:
  - name: container-empty
    image: nginx
    imagePullPolicy: IfNotPresent
    volumeMounts:
    - mountPath: /cache
      name: cache-volume  # must match the name under volumes
  volumes:
  - emptyDir: {}
    name: cache-volume

Apply the manifest.

查看本机临时目录存在的位置,可用如下方法:

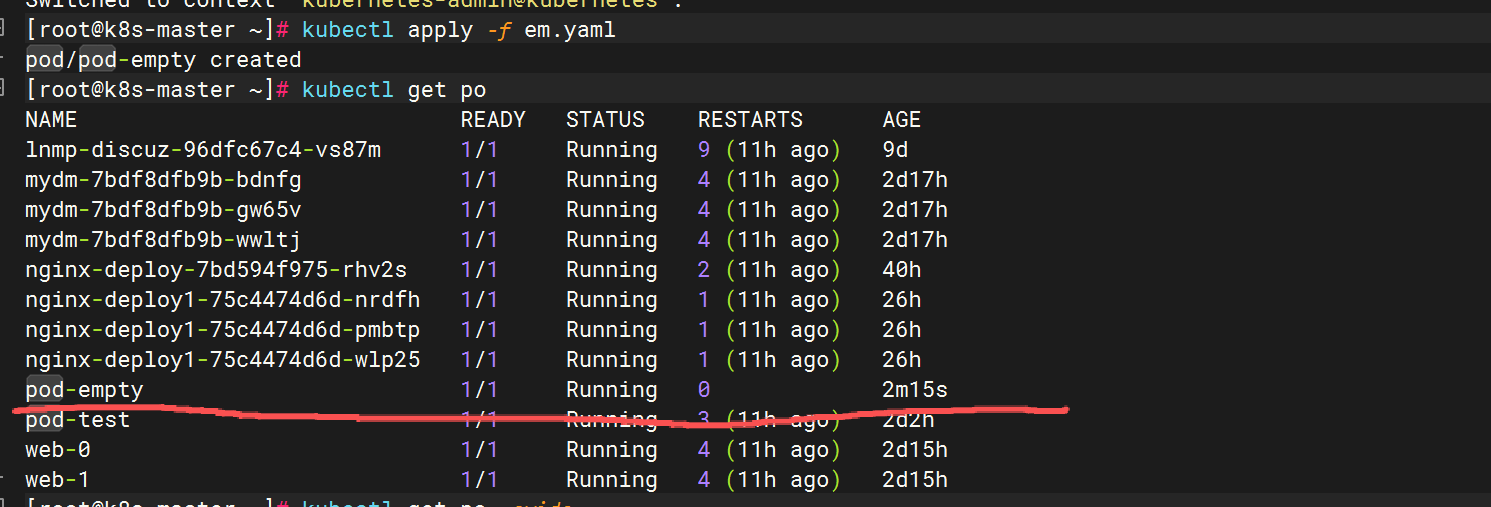

查看pod调度到哪个节点

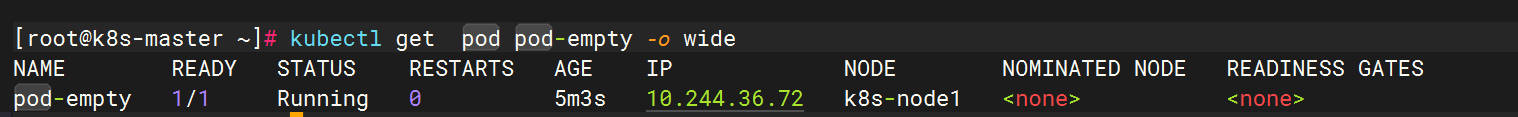

kubectl get pod pod-empty -o wide

查看pod的uid

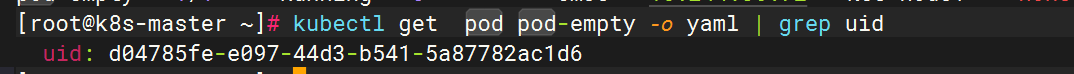

kubectl get pod pod-empty -o yaml | grep uid

登录到k8s-node1上

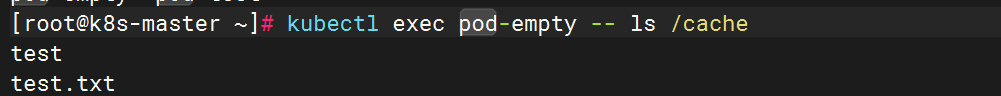

测试

成功挂载
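The shared-directory use case mentioned above can be sketched as a pod with two containers on one emptyDir. This is only an illustration: the pod name, container names, busybox image, file name, and the 100Mi size limit are assumptions and do not come from the original setup.

```yaml
# Hypothetical example: two containers sharing one emptyDir volume.
apiVersion: v1
kind: Pod
metadata:
  name: pod-empty-shared        # illustrative name
spec:
  containers:
  - name: writer
    image: busybox
    imagePullPolicy: IfNotPresent
    # Append a timestamp to a file in the shared directory every 5 seconds.
    command: ["sh", "-c", "while true; do date >> /cache/out.log; sleep 5; done"]
    volumeMounts:
    - name: cache-volume
      mountPath: /cache
  - name: reader
    image: busybox
    imagePullPolicy: IfNotPresent
    # Follow the file written by the other container; -F keeps retrying until it exists.
    command: ["sh", "-c", "tail -F /data/out.log"]
    volumeMounts:
    - name: cache-volume
      mountPath: /data          # same volume, different path inside this container
      readOnly: true
  volumes:
  - name: cache-volume
    emptyDir:
      sizeLimit: 100Mi          # optional cap on how much the volume may hold
```

Both containers see the same files because they mount the same volume name; the data still disappears when the pod is removed from the node.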

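3. Kubernetes persistent storage: hostPath

A hostPath volume mounts a file or directory from the host node into the pod, so the container stores data on the node's filesystem. It is node-level storage: when the pod is deleted, the volume's data stays on the node. As long as the recreated pod is scheduled back onto the same node, the data is still there.

Check how the hostPath volume is used: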

[root@k8s-master ~]# kubectl explain pods.spec.volumes.hostPath

KIND: Pod

VERSION: v1

FIELD: hostPath <HostPathVolumeSource>

DESCRIPTION:

hostPath represents a pre-existing file or directory on the host machine

that is directly exposed to the container. This is generally used for system

agents or other privileged things that are allowed to see the host machine.

Most containers will NOT need this. More info:

https://kubernetes.io/docs/concepts/storage/volumes#hostpath

Represents a host path mapped into a pod. Host path volumes do not support

ownership management or SELinux relabeling.

FIELDS:

path <string> -required-

path of the directory on the host. If the path is a symlink, it will follow

the link to the real path. More info:

https://kubernetes.io/docs/concepts/storage/volumes#hostpath

type <string>

type for HostPath Volume Defaults to "" More info:

https://kubernetes.io/docs/concepts/storage/volumes#hostpath

Possible enum values:

- `""` For backwards compatible, leave it empty if unset

- `"BlockDevice"` A block device must exist at the given path

- `"CharDevice"` A character device must exist at the given path

- `"Directory"` A directory must exist at the given path

- `"DirectoryOrCreate"` If nothing exists at the given path, an empty

directory will be created there as needed with file mode 0755, having the

same group and ownership with Kubelet.

- `"File"` A file must exist at the given path

- `"FileOrCreate"` If nothing exists at the given path, an empty file will

be created there as needed with file mode 0644, having the same group and

ownership with Kubelet.

- `"Socket"` A UNIX socket must exist at the given path

Create a pod that mounts a hostPath volume:

apiVersion: v1
kind: Pod
metadata:
  name: test-hostpath
spec:
  containers:
  - image: nginx
    imagePullPolicy: IfNotPresent
    name: test-nginx
    volumeMounts:
    - mountPath: /usr/share/nginx/html
      name: test-volume
  volumes:
  - name: test-volume
    hostPath:
      path: /data
      type: DirectoryOrCreate

Note:

DirectoryOrCreate means that if /data already exists on the node it is used as-is, and if it does not exist it is created automatically on the node the pod is scheduled to.
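For contrast, here is a sketch of the stricter Directory type from the field list above, combined with a read-only mount. The pod name is hypothetical. The assumption is that /data must already exist on the node; if it does not, the volume check fails and the pod should not start, rather than the directory being created silently.

```yaml
# Hypothetical variant: require an existing directory and mount it read-only.
apiVersion: v1
kind: Pod
metadata:
  name: test-hostpath-strict    # illustrative name
spec:
  containers:
  - name: test-nginx
    image: nginx
    imagePullPolicy: IfNotPresent
    volumeMounts:
    - name: test-volume
      mountPath: /usr/share/nginx/html
      readOnly: true            # the container cannot modify files on the host
  volumes:
  - name: test-volume
    hostPath:
      path: /data
      type: Directory           # a directory must already exist at this path
```

This form suits cases where a missing host directory is a mistake that should surface early.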

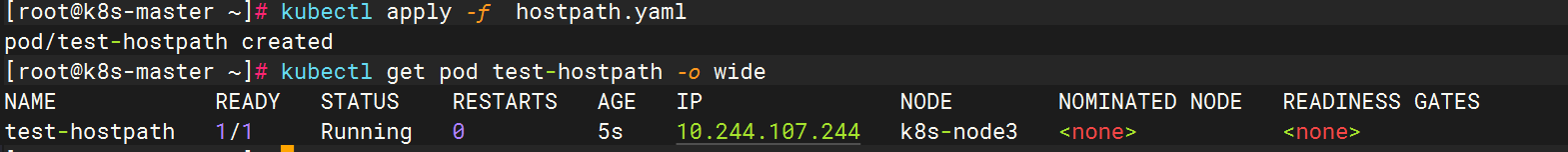

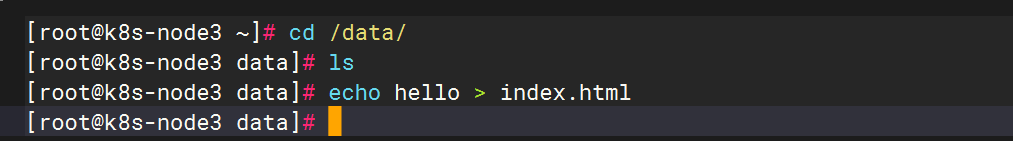

更新资源清单文件,并查看pod调度到了哪个物理节点

测试

没有首页

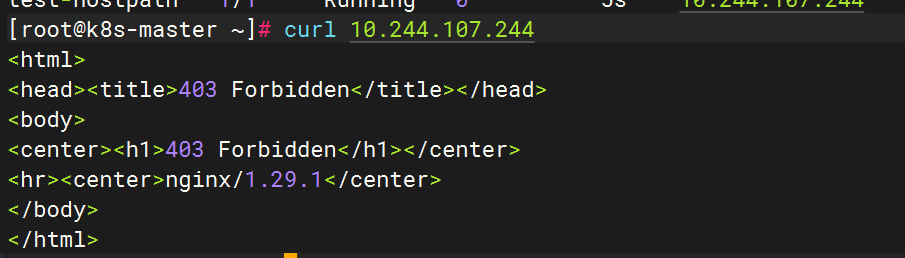

生成首页

hostpath存储卷缺点

单节点,pod删除之后重新创建必须调度到同一个node节点,数据才不会丢失

如何调度到同一个nodeName呢 ? 需要我们再yaml文件中进行指定就可以

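apiVersion: v1
kind: Pod
metadata:
  name: test-hostpath
spec:
  nodeName: k8s-node3
  containers:
  - image: nginx
    name: test-nginx
    volumeMounts:
    - mountPath: /usr/share/nginx/html
      name: test-volume
  volumes:
  - name: test-volume
    hostPath:
      path: /data
      type: DirectoryOrCreate

4. Kubernetes persistent storage: nfs

The hostPath storage described above is tied to one node: a pod using hostPath only sees its data if it is scheduled onto the same node, and that node is a single point of failure. NFS can be used as persistent storage instead.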

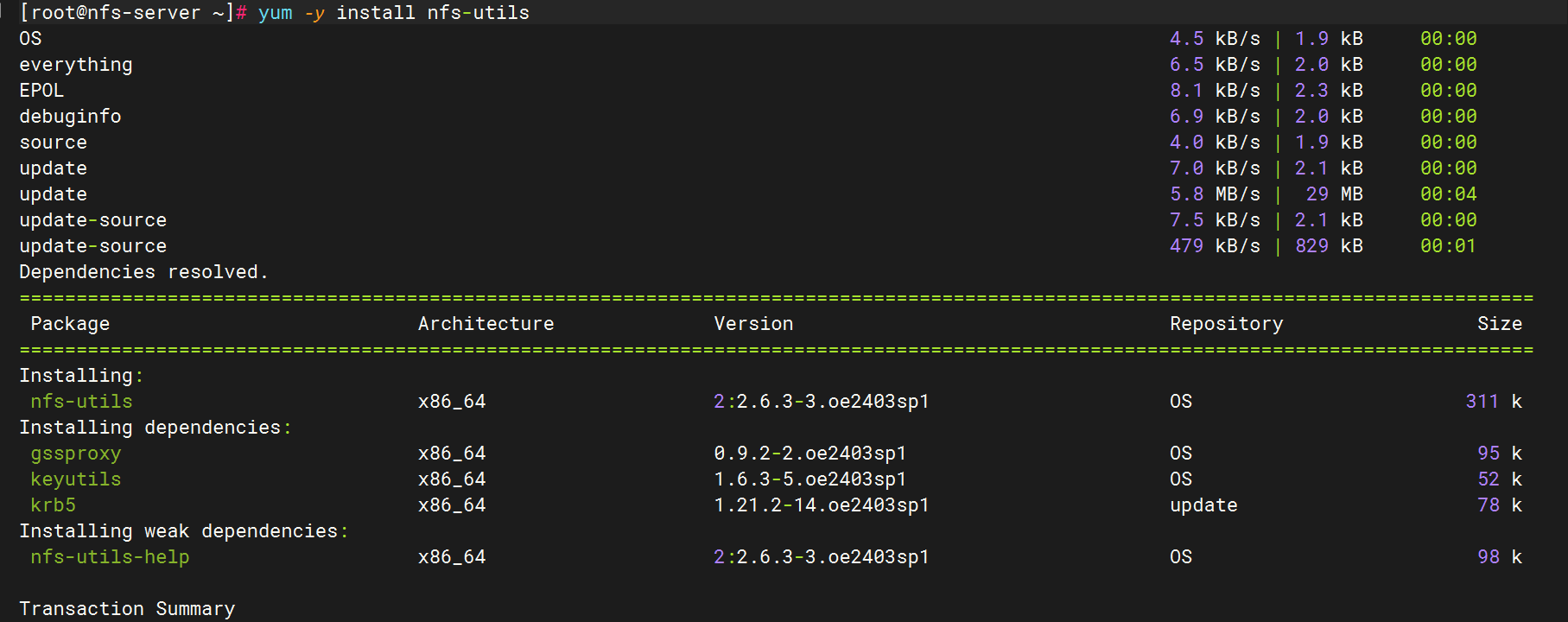

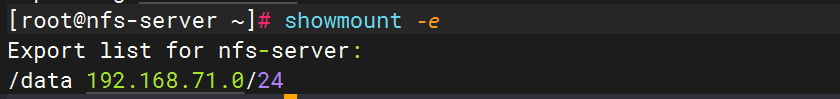

搭建nfs服务

以使用第五台机器作为NFS服务端,每一台节点以及master都要安装

yum install -y nfs-utils

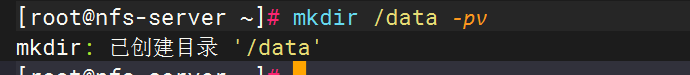

在宿主机(第五台机)创建NFS需要的共享目录

mkdir /data -pv

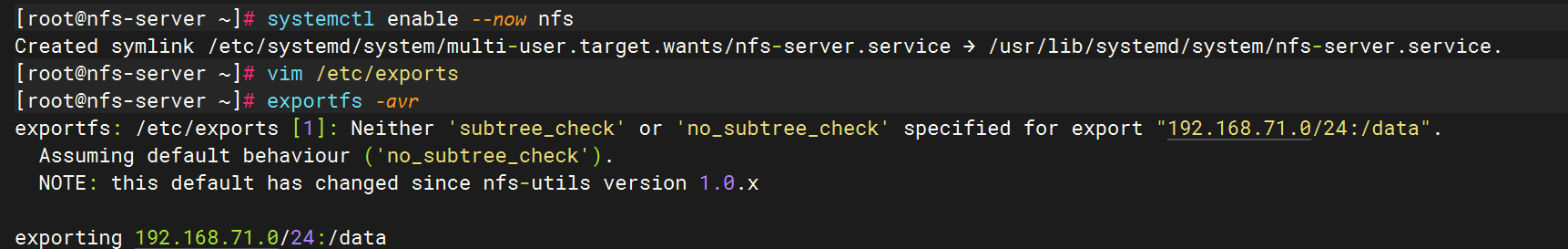

配置nfs共享服务器上的/data目录

systemctl enable --now nfs

vim /etc/exports

/data 192.168.71.0/24(rw,sync,no_root_squash)

使NFS配置生效

exportfs -avr

所有的worker节点安装nfs-utils

yum install nfs-utils -y

systemctl enable --now nfs

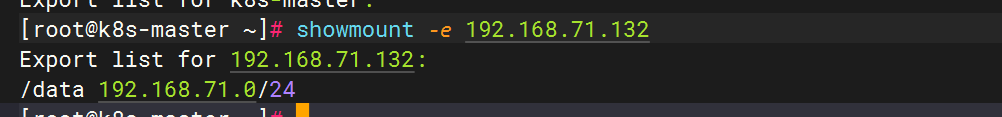

在k8s-node1和k8s-node2上手动挂载试试:

mount 192.168.71.132:/data /mnt

df -Th | grep nfs

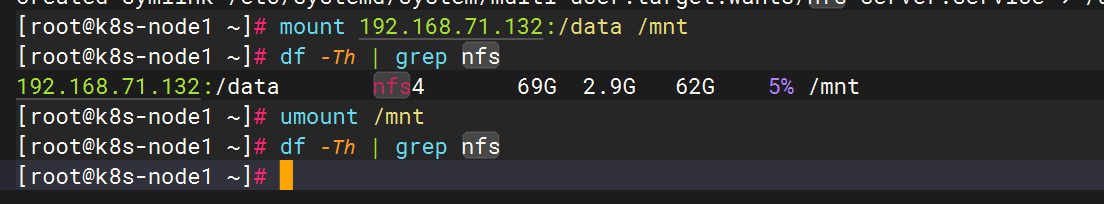

创建Pod,挂载NFS共享出来的目录

apiVersion: v1
kind: Pod
metadata:
  name: test-nfs
spec:
  containers:
  - name: test-nfs
    image: nginx
    imagePullPolicy: IfNotPresent
    ports:
    - containerPort: 80
      protocol: TCP
    volumeMounts:
    - name: nfs-volumes
      mountPath: /usr/share/nginx/html
  volumes:
  - name: nfs-volumes
    nfs:
      path: /data  # shared directory
      server: 192.168.71.132  # NFS server address

Apply the manifest.

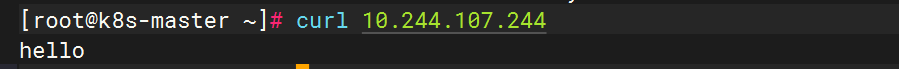

测试

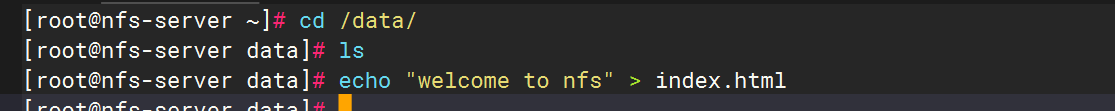

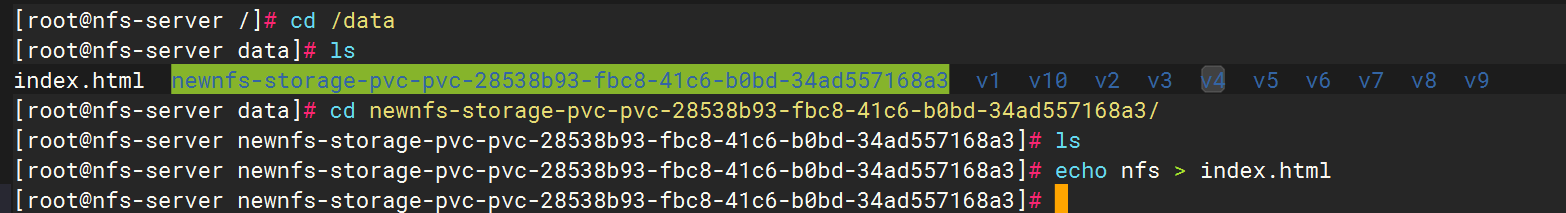

登录到nfs服务器,在共享目录创建一个index.html

cd /data/

echo nfs > index.html

curl 10.244.36.86

上面说明挂载nfs存储卷成功了,nfs支持多个客户端挂载,可以创建多个pod,挂载同一个nfs服务器共享出来的目录;但是nfs如果宕机了,数据也就丢失了,所以需要使用分布式存储,常见的分布式存储有glusterfs和cephfs
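The point that several pods can mount the same export can be sketched with a Deployment of two replicas. The Deployment name and the app label are hypothetical; the server address and path are the ones used in this section.

```yaml
# Hypothetical example: two nginx replicas serving the same NFS directory.
apiVersion: apps/v1
kind: Deployment
metadata:
  name: test-nfs-shared         # illustrative name
spec:
  replicas: 2
  selector:
    matchLabels:
      app: test-nfs-shared
  template:
    metadata:
      labels:
        app: test-nfs-shared
    spec:
      containers:
      - name: nginx
        image: nginx
        imagePullPolicy: IfNotPresent
        ports:
        - containerPort: 80
        volumeMounts:
        - name: nfs-volumes
          mountPath: /usr/share/nginx/html
      volumes:
      - name: nfs-volumes
        nfs:
          server: 192.168.71.132  # NFS server from this section
          path: /data             # exported directory from this section
```

Whichever node each replica lands on, both should serve the same index.html, provided that node has nfs-utils installed and can reach the server.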

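5. Kubernetes persistent storage: PVC

5.1 What is a PV?

A PersistentVolume (PV) is a piece of storage in the cluster, provisioned by an administrator or dynamically provisioned through a storage class. It is a cluster resource, just as a node is a cluster resource. PVs are volume plugins like Volumes, but their lifecycle is independent of any individual pod that uses the PV.

5.2 What is a PVC?

A PersistentVolumeClaim (PVC) is a user's request for storage. It is similar to a pod: pods consume node resources, and PVCs consume PV resources. A pod can request specific levels of resources (CPU and memory); a PVC can request a specific size and access mode (for example ReadWriteOnce or ReadOnlyMany).

5.3 How PVCs and PVs work together

PVs are resources in the cluster; PVCs are requests for those resources.

The interaction between PVs and PVCs follows this lifecycle:

(1) Provisioning

PVs can be provisioned in two ways: statically or dynamically.

Static:

The cluster administrator creates a number of PVs. They carry the details of the real storage available to cluster users, exist in the Kubernetes API, and are ready for consumption.

Dynamic:

When none of the static PVs created by the administrator matches a user's PersistentVolumeClaim, the cluster may try to provision a volume dynamically for that PVC. This is based on StorageClasses: the PVC must request a storage class, and the administrator must have created and configured that class.

(2) Binding

The user creates a PVC that states the required capacity and access modes. Until a matching PV is found, the PVC stays unbound.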

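(3) Using

- Start with a storage server and carve it into several storage spaces;

- the Kubernetes administrator defines those spaces as PVs;

- before a pod can use a PVC-type volume, the PVC must be created; it states the required size and access mode, and a suitable PV is found for it;

- once the PVC exists it can be used as a volume, and the pod definition references it;

- a PVC and a PV are bound one-to-one: a PV bound to one PVC cannot be used by another PVC;

- when creating a PVC, make sure a suitable PV exists; if none does, the PVC stays in the Pending state.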

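(4) Reclaim policy

When a pod uses a PVC as a volume, the PVC is bound to a PV. Deleting the pod does not break that binding; the binding is released when the PVC itself is deleted. The reclaim policy decides what then happens to the PV and its data. A volume can be retained, recycled (deprecated), or deleted:

1. Retain

After the PVC is deleted the PV still exists, in the Released state. It cannot be bound by another PVC as it is, and its data is kept, so the data is still there when the storage is put back into use. This is the default policy for manually created PVs.

2. Delete

Deleting the PVC removes the PV from Kubernetes and also deletes the associated storage asset in the external infrastructure. This is the default for dynamically provisioned PVs.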
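The reclaim policy is set per PV through the persistentVolumeReclaimPolicy field. A minimal sketch follows, assuming the NFS server from section 4; the PV name is hypothetical.

```yaml
# Hypothetical example: a PV that states its reclaim policy explicitly.
apiVersion: v1
kind: PersistentVolume
metadata:
  name: pv-retain-example       # illustrative name
spec:
  capacity:
    storage: 1Gi
  accessModes: ["ReadWriteMany"]
  persistentVolumeReclaimPolicy: Retain   # keep the PV and its data after the claim is deleted
  nfs:
    server: 192.168.71.132      # NFS server from section 4
    path: /data
```

Writing the field out, even when it matches the default, makes the intended behavior visible to whoever later deletes the claim.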

5.4 Create a pod that uses a PVC as its persistent volume

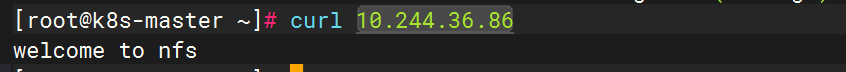

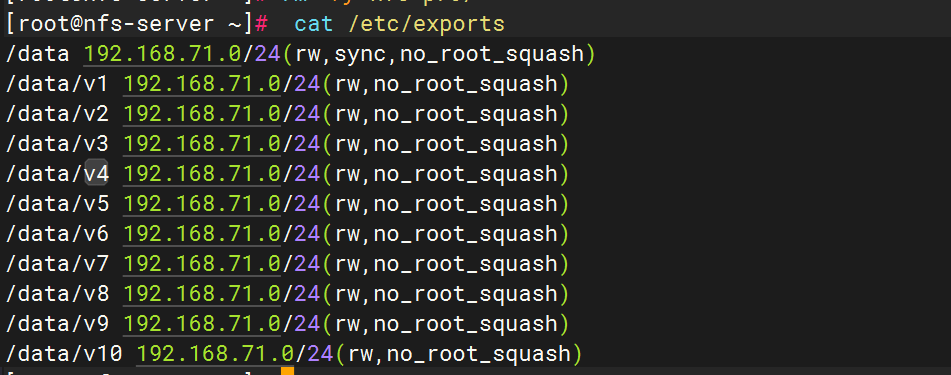

1. Create the NFS shared directories

On the NFS server, create the directories to share:

mkdir /data/v{1..10} -p

Export /data/v1 through /data/v10 by adding them to /etc/exports:

/data 192.168.71.0/24(rw,sync,no_root_squash)

/data/v1 192.168.71.0/24(rw,no_root_squash)

/data/v2 192.168.71.0/24(rw,no_root_squash)

/data/v3 192.168.71.0/24(rw,no_root_squash)

/data/v4 192.168.71.0/24(rw,no_root_squash)

/data/v5 192.168.71.0/24(rw,no_root_squash)

/data/v6 192.168.71.0/24(rw,no_root_squash)

/data/v7 192.168.71.0/24(rw,no_root_squash)

/data/v8 192.168.71.0/24(rw,no_root_squash)

/data/v9 192.168.71.0/24(rw,no_root_squash)

/data/v10 192.168.71.0/24(rw,no_root_squash)

Reload the configuration so that it takes effect:

exportfs -arv

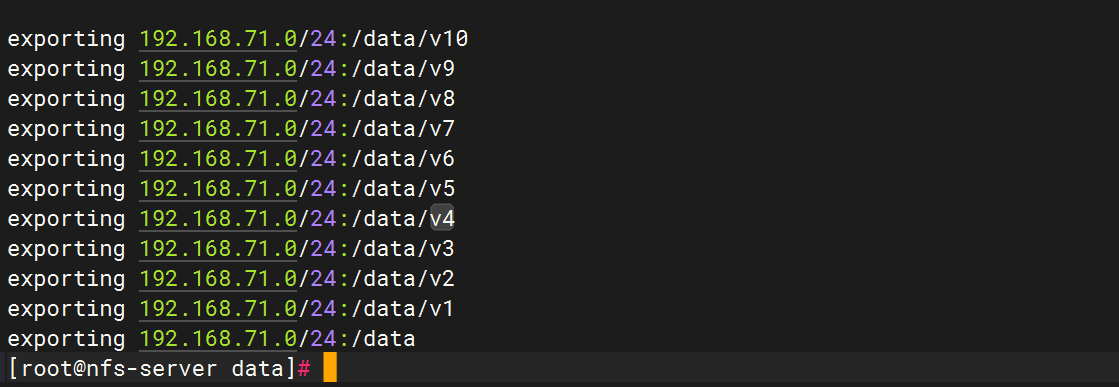

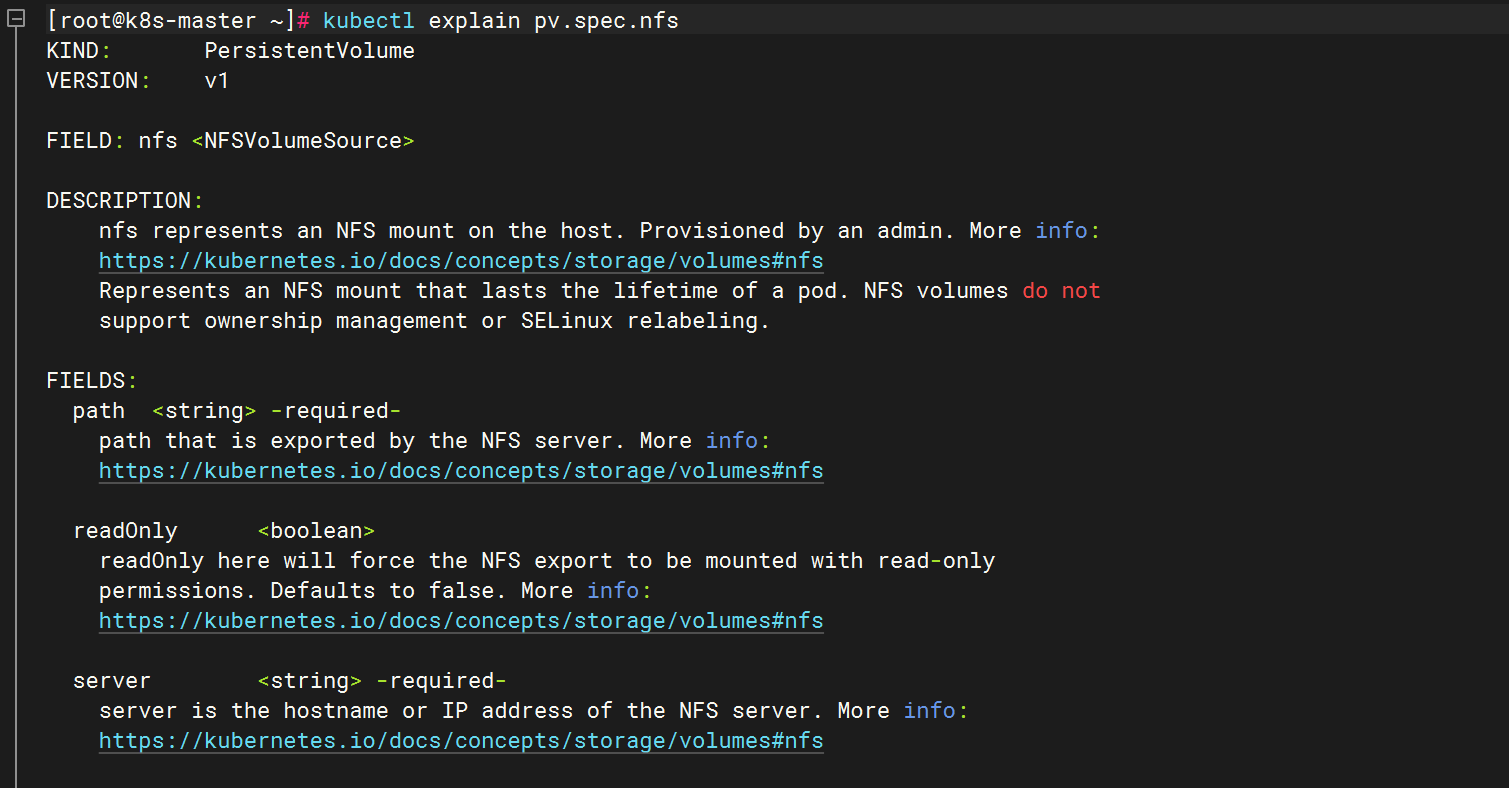

2、如何编写pv的资源清单文件

查看定义pv需要的字段

查看定义nfs类型的pv需要的字段

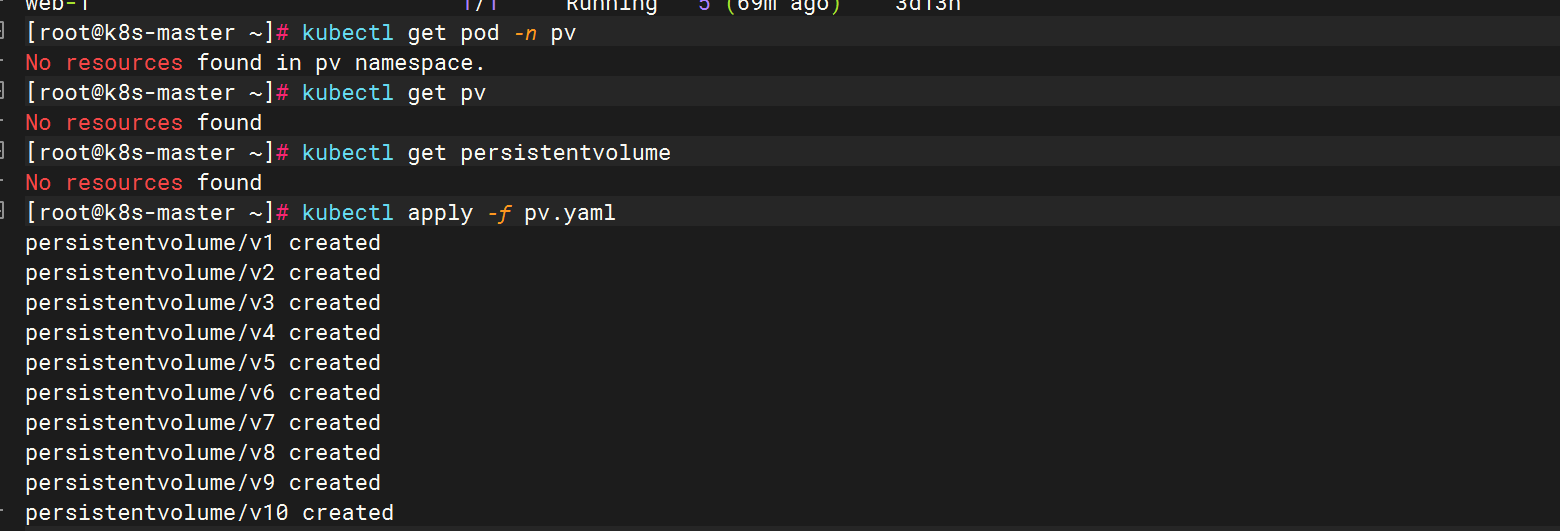

3、创建pv

apiVersion: v1
kind: PersistentVolume
metadata:
  name: v1
spec:
  capacity:
    storage: 1Gi  # storage capacity of the PV
  accessModes: ["ReadWriteOnce"]
  nfs:
    path: /data/v1  # the NFS directory exposed as this PV
    server: 192.168.71.132  # NFS server address
---
apiVersion: v1
kind: PersistentVolume
metadata:
  name: v2
spec:
  capacity:
    storage: 2Gi
  accessModes: ["ReadWriteMany"]
  nfs:
    path: /data/v2
    server: 192.168.71.132
---
apiVersion: v1
kind: PersistentVolume
metadata:
  name: v3
spec:
  capacity:
    storage: 3Gi
  accessModes: ["ReadOnlyMany"]
  nfs:
    path: /data/v3
    server: 192.168.71.132
---
apiVersion: v1
kind: PersistentVolume
metadata:
  name: v4
spec:
  capacity:
    storage: 4Gi
  accessModes: ["ReadWriteOnce","ReadWriteMany"]
  nfs:
    path: /data/v4
    server: 192.168.71.132
---
apiVersion: v1
kind: PersistentVolume
metadata:
  name: v5
spec:
  capacity:
    storage: 5Gi
  accessModes: ["ReadWriteOnce","ReadWriteMany"]
  nfs:
    path: /data/v5
    server: 192.168.71.132
---
apiVersion: v1
kind: PersistentVolume
metadata:
  name: v6
spec:
  capacity:
    storage: 6Gi
  accessModes: ["ReadWriteOnce","ReadWriteMany"]
  nfs:
    path: /data/v6
    server: 192.168.71.132
---
apiVersion: v1
kind: PersistentVolume
metadata:
  name: v7
spec:
  capacity:
    storage: 7Gi
  accessModes: ["ReadWriteOnce","ReadWriteMany"]
  nfs:
    path: /data/v7
    server: 192.168.71.132
---
apiVersion: v1
kind: PersistentVolume
metadata:
  name: v8
spec:
  capacity:
    storage: 8Gi
  accessModes: ["ReadWriteOnce","ReadWriteMany"]
  nfs:
    path: /data/v8
    server: 192.168.71.132
---
apiVersion: v1
kind: PersistentVolume
metadata:
  name: v9
spec:
  capacity:
    storage: 9Gi
  accessModes: ["ReadWriteOnce","ReadWriteMany"]
  nfs:
    path: /data/v9
    server: 192.168.71.132
---
apiVersion: v1
kind: PersistentVolume
metadata:
  name: v10
spec:
  capacity:
    storage: 10Gi
  accessModes: ["ReadWriteOnce","ReadWriteMany"]
  nfs:
    path: /data/v10
    server: 192.168.71.132

Apply the manifest.

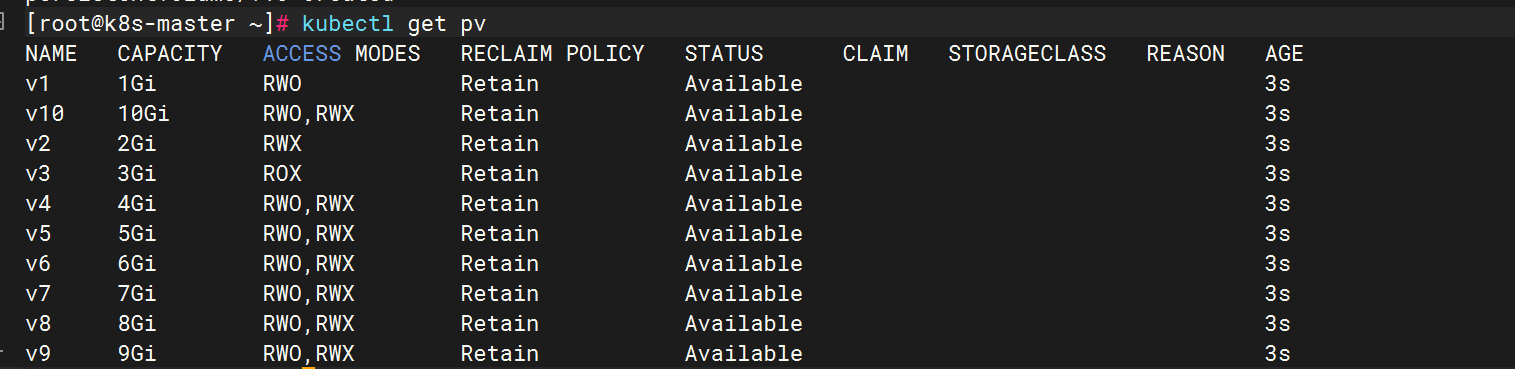

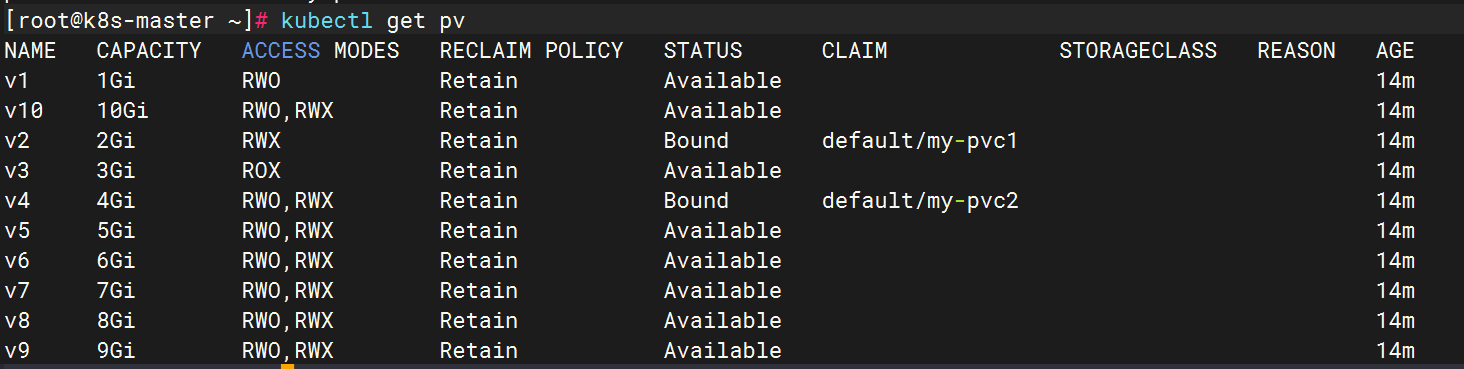

查看pv资源

#STATUS是Available,表示pv是可用的

NAME(名称)

通常用于标识某个特定的对象,在这里可能是指存储相关的实体(比如存储卷等)的具体名字,方便对其进行区分和引用。

CAPACITY(容量)

代表该对象所具备的存储容量大小,一般会以特定的存储单位(如字节、KB、MB、GB、TB 等)来衡量,表示其能够容纳的数据量多少。

ACCESS MODES(访问模式)

指的是允许对相应存储资源进行访问的方式,例如可以是只读、读写、可追加等不同模式,决定了用户或系统对其存储内容操作的权限范围。

RECLAIM POLICY(回收策略)

关乎当存储资源不再被使用或者释放时,对其所占用空间等资源的处理方式,常见的策略比如立即回收、延迟回收、按特定条件回收等,确保资源能合理地被再次利用。

STATUS(状态)

描述该存储相关对象当前所处的情况,例如可能是可用、不可用、正在初始化、已损坏等不同状态,便于了解其是否能正常工作。

CLAIM(声明)

在存储语境中,往往涉及对存储资源的一种请求或者占用声明,表明某个主体对相应存储资源有着相关权益或者正在使用它等情况。

STORAGECLASS(存储类别)

用于区分不同特性的存储分类,比如可以根据存储的性能(高速、低速)、存储介质(磁盘、磁带等)、存储成本(昂贵、廉价)等因素划分出不同的存储类,以满足不同场景的需求。

VOLUMEATTRIBUTESCLASS(卷属性类别)

主要是针对存储卷这一特定存储对象而言,涵盖了该卷在诸如容量属性、性能属性、安全属性等多方面的类别划分,体现其具备的各类特性。

REASON(原因)

如果存储对象处于某种特定状态(比如异常状态等),此处用于说明造成该状态的具体缘由,方便排查问题、分析情况。

AGE(时长)

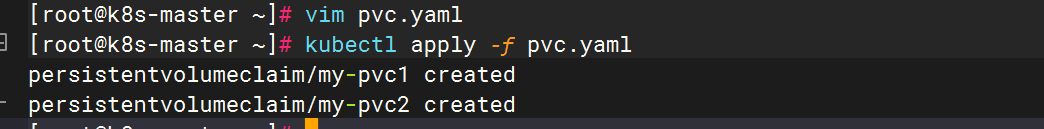

一般是指从该存储对象创建开始到当前所经历的时间长度,可用于衡量其存在的时间阶段,辅助判断其使用情况等。4、创建pvc,和符合条件的pv绑定

apiVersion: v1

kind: PersistentVolumeClaim

metadata:

name: my-pvc1

spec:

accessModes: ["ReadWriteMany"]

resources:

requests:

storage: 2Gi

---

apiVersion: v1

kind: PersistentVolumeClaim

metadata:

name: my-pvc2

spec:

accessModes: ["ReadWriteMany"]

resources:

requests:

storage: 1Gi更新资源清单文件

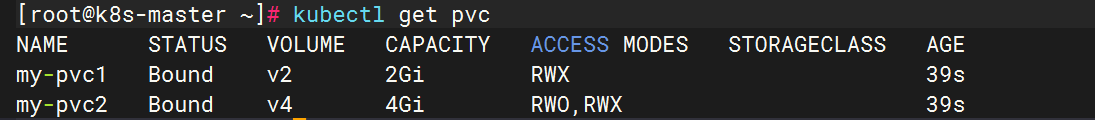

查看pv和pvc,STATUS是Bound,表示这个pv已经被my-pvc绑定了

之所以第二个申请到v4,是因为v1是RWO不符合,然后v2被pvc1使用了,v3是ROX也不符合,所以申请到的是v4,虽然1Gi不符合,但是两个条件是or的关系,符合一个即可

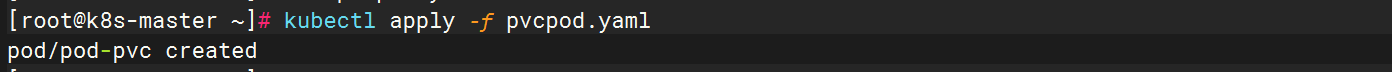
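When the automatic choice is not the one wanted, a claim can name its PV directly through volumeName. The sketch below pins a claim to v5 from the PV list above; the claim name is hypothetical, and the empty storageClassName is an assumption made so that a default storage class, if the cluster has one, is not applied to the claim.

```yaml
# Hypothetical example: bind a claim to one specific pre-created PV.
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: my-pvc-pinned           # illustrative name
spec:
  storageClassName: ""          # match PVs that have no storage class
  volumeName: v5                # the PV to bind to
  accessModes: ["ReadWriteMany"]
  resources:
    requests:
      storage: 5Gi              # must not exceed the PV's capacity
```

The access mode and size still have to be compatible with the named PV; otherwise the claim stays unbound.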

5. Create a pod that mounts the PVC

apiVersion: v1
kind: Pod
metadata:
  name: pod-pvc
spec:
  containers:
  - name: nginx
    image: nginx
    imagePullPolicy: IfNotPresent
    volumeMounts:
    - name: nginx-html
      mountPath: /usr/share/nginx/html
  volumes:
  - name: nginx-html
    persistentVolumeClaim:
      claimName: my-pvc1

Apply the manifest.

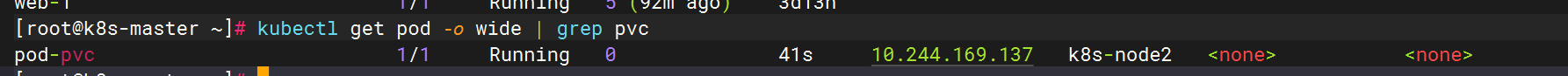

查看pod状态

通过上面可以看到pod处于running状态,正常运行

测试

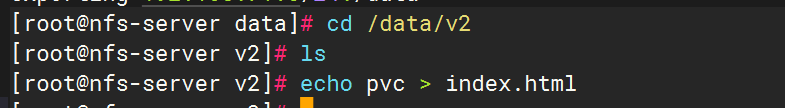

在nfs服务器端写入index.html

cd /data/v2

echo pvc > index.html

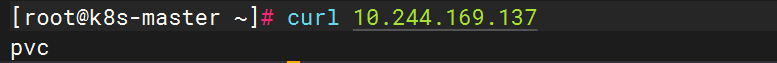

curl 10.244.169.137

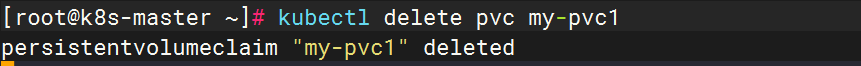

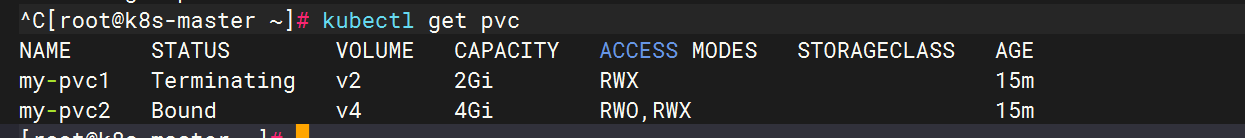

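Delete the PVC:

kubectl delete pvc my-pvc1

Looking in the NFS shared directory afterwards, the index.html we created is still there.

Notes on using PVCs and PVs

1. Every PVC needs a PV that was carved out beforehand, which can be inconvenient. The alternative is a storage class, which creates the PV dynamically when the PVC is created, so no PV has to exist in advance.

2. With the Retain reclaim policy, which is the default for manually created PVs, the PV goes to the Released state after its PVC is deleted. To use it again, delete the PV object with kubectl delete pv pv_name and create it again. Deleting the PV object does not delete the data on the backing storage, so a new PVC that binds to the recreated PV sees the original data.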

六. k8s存储类:storageclass

6.1 概述

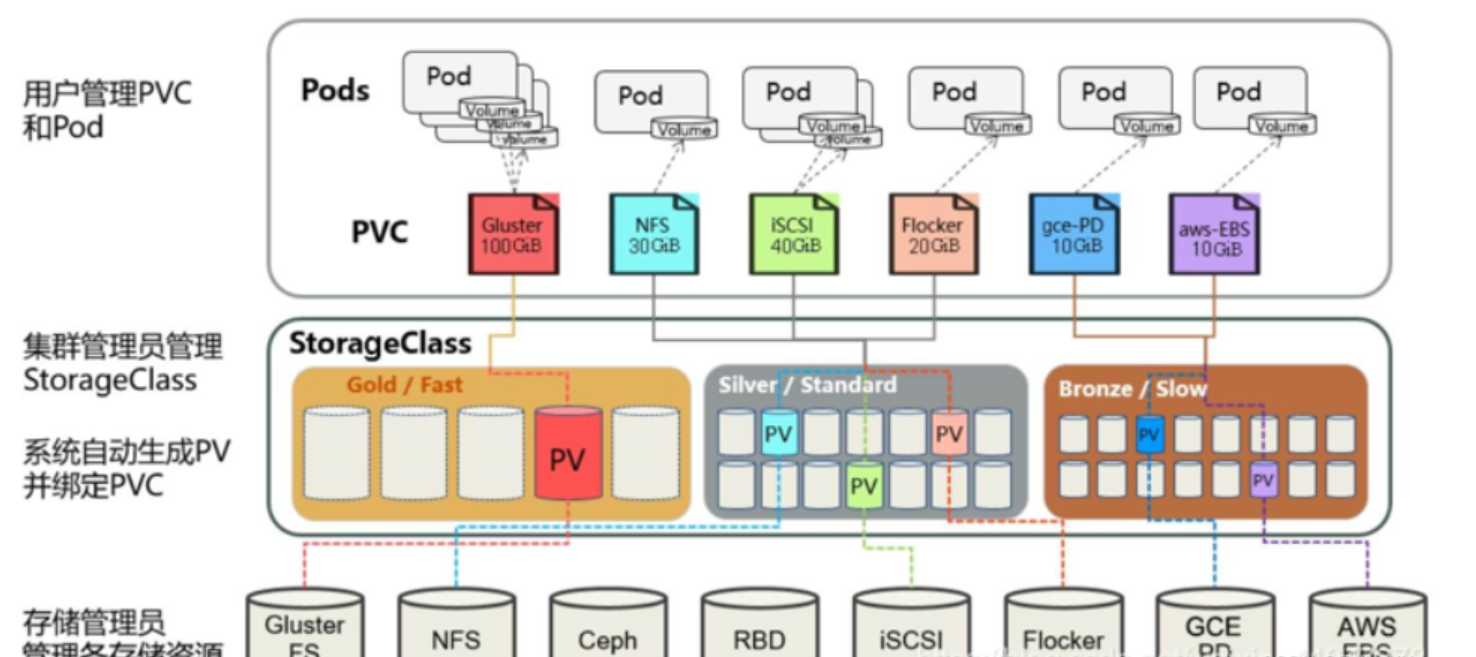

上面介绍的PV和PVC模式都是需要先创建好PV,然后定义好PVC和pv进行一对一的Bond,但是如果PVC请求成千上万,那么就需要创建成千上万的PV,对于运维人员来说维护成本很高,Kubernetes提供一种自动创建PV的机制,叫StorageClass,它的作用就是创建PV的模板。k8s集群管理员通过创建storageclass可以动态生成一个存储卷pv供k8s pvc使用。

每个StorageClass都包含字段provisioner,parameters和reclaimPolicy。

具体来说,StorageClass会定义以下两部分:

1、PV的属性 ,比如存储的大小、类型等;

2、创建这种PV需要使用到的存储插件,比如Ceph、NFS等

有了这两部分信息,Kubernetes就能够根据用户提交的PVC,找到对应的StorageClass,然后Kubernetes就会调用 StorageClass声明的存储插件,创建出需要的PV。

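How StorageClass works

- volumeClaimTemplates automates PVC creation, and StorageClass automates PV creation.

- Every StorageClass contains the provisioner, parameters, and reclaimPolicy fields, which are used when the StorageClass has to provision a PersistentVolume dynamically.

- The name of a StorageClass object matters: users request a particular class by that name. The administrator sets the name and other parameters when creating the object, and parameters such as the provisioner cannot be changed once the object exists.

- The administrator can designate a default StorageClass for PVCs that do not request a particular class.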
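To illustrate the first point, here is a sketch of a StatefulSet whose volumeClaimTemplates creates one PVC per replica, each satisfied by a storage class. All names are hypothetical, and the sketch assumes a StorageClass called nfs-storage already exists, such as the one created in section 6.3.

```yaml
# Hypothetical example: one PVC per replica, generated from a template.
apiVersion: v1
kind: Service
metadata:
  name: web-headless            # headless Service required by the StatefulSet
spec:
  clusterIP: None
  selector:
    app: web
  ports:
  - port: 80
---
apiVersion: apps/v1
kind: StatefulSet
metadata:
  name: web
spec:
  serviceName: web-headless
  replicas: 2
  selector:
    matchLabels:
      app: web
  template:
    metadata:
      labels:
        app: web
    spec:
      containers:
      - name: nginx
        image: nginx
        imagePullPolicy: IfNotPresent
        volumeMounts:
        - name: html
          mountPath: /usr/share/nginx/html
  volumeClaimTemplates:
  - metadata:
      name: html                # PVCs are named html-web-0, html-web-1
    spec:
      storageClassName: nfs-storage   # assumed to exist already
      accessModes: ["ReadWriteOnce"]
      resources:
        requests:
          storage: 1Gi
```

Nobody writes the PVCs or PVs by hand here: the StatefulSet controller creates the claims and the storage class's provisioner creates the volumes behind them.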

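To use a StorageClass we need to install the matching provisioner. The storage backend here is NFS, so we need an nfs-client provisioner. This program uses the NFS server we have already set up to create persistent volumes automatically, that is, it creates the PVs for us.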

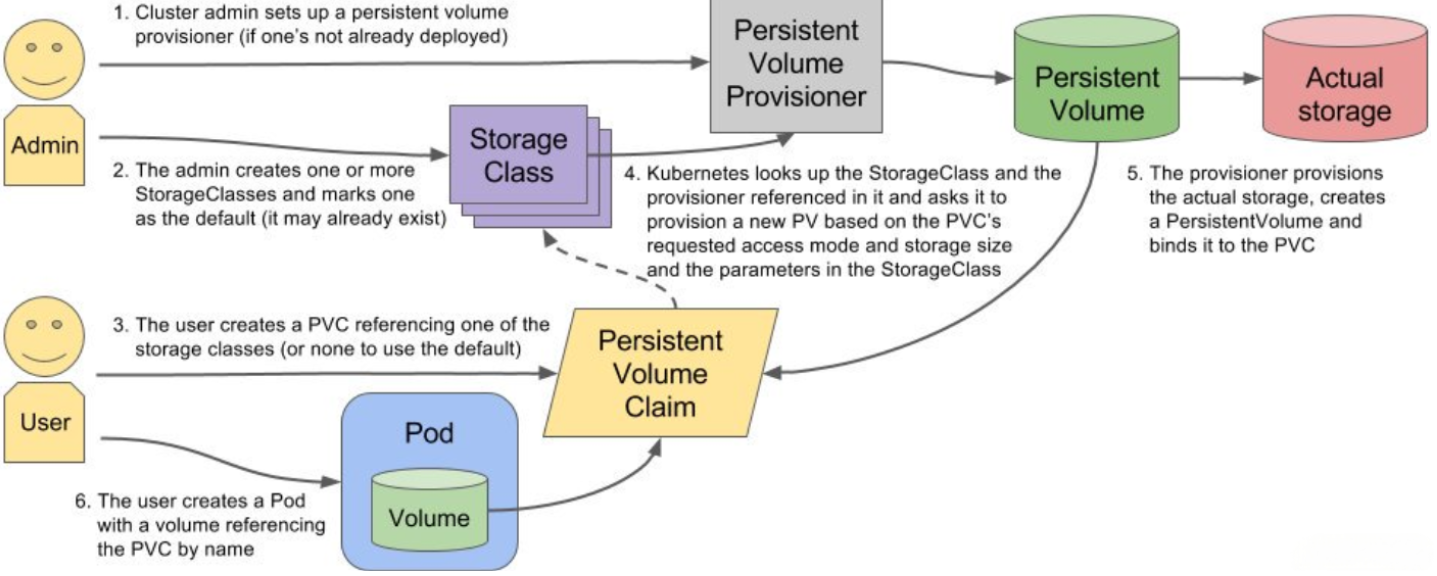

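Setting up StorageClass with NFS takes roughly these steps:

1. Create a working NFS server (the space where files are actually stored).

2. Create a ServiceAccount, which controls the permissions the NFS provisioner runs with in the cluster.

3. Create a StorageClass, which handles PVCs by calling the NFS provisioner to do the provisioning and associates the resulting PV with the PVC.

4. Create the NFS provisioner.

1) Every StorageClass has a provisioner that decides which volume plugin is used to provision PVs. This field is required.

2) The NFS provisioner has two main jobs:

One is to create a mount point (volume) under the NFS shared directory.

The other is to create the PV and associate it with that NFS mount point.

StorageClass fields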

[root@k8s-master ~]# kubectl explain storageclass

GROUP: storage.k8s.io

KIND: StorageClass

VERSION: v1

DESCRIPTION:

StorageClass describes the parameters for a class of storage for which

PersistentVolumes can be dynamically provisioned.

StorageClasses are non-namespaced; the name of the storage class according

to etcd is in ObjectMeta.Name.

FIELDS:

allowVolumeExpansion <boolean>

allowVolumeExpansion shows whether the storage class allow volume expand.

allowedTopologies <[]TopologySelectorTerm>

allowedTopologies restrict the node topologies where volumes can be

dynamically provisioned. Each volume plugin defines its own supported

topology specifications. An empty TopologySelectorTerm list means there is

no topology restriction. This field is only honored by servers that enable

the VolumeScheduling feature.

apiVersion <string>

APIVersion defines the versioned schema of this representation of an object.

Servers should convert recognized schemas to the latest internal value, and

may reject unrecognized values. More info:

https://git.k8s.io/community/contributors/devel/sig-architecture/api-conventions.md#resources

kind <string>

Kind is a string value representing the REST resource this object

represents. Servers may infer this from the endpoint the client submits

requests to. Cannot be updated. In CamelCase. More info:

https://git.k8s.io/community/contributors/devel/sig-architecture/api-conventions.md#types-kinds

metadata <ObjectMeta>

Standard object's metadata. More info:

https://git.k8s.io/community/contributors/devel/sig-architecture/api-conventions.md#metadata

mountOptions <[]string>

mountOptions controls the mountOptions for dynamically provisioned

PersistentVolumes of this storage class. e.g. ["ro", "soft"]. Not validated

- mount of the PVs will simply fail if one is invalid.

parameters <map[string]string>

parameters holds the parameters for the provisioner that should create

volumes of this storage class.

provisioner <string> -required-

provisioner indicates the type of the provisioner.

reclaimPolicy <string>

reclaimPolicy controls the reclaimPolicy for dynamically provisioned

PersistentVolumes of this storage class. Defaults to Delete.

Possible enum values:

- `"Delete"` means the volume will be deleted from Kubernetes on release

from its claim. The volume plugin must support Deletion.

- `"Recycle"` means the volume will be recycled back into the pool of

unbound persistent volumes on release from its claim. The volume plugin must

support Recycling.

- `"Retain"` means the volume will be left in its current phase (Released)

for manual reclamation by the administrator. The default policy is Retain.

volumeBindingMode <string>

volumeBindingMode indicates how PersistentVolumeClaims should be provisioned

and bound. When unset, VolumeBindingImmediate is used. This field is only

honored by servers that enable the VolumeScheduling feature.

Possible enum values:

- `"Immediate"` indicates that PersistentVolumeClaims should be immediately

provisioned and bound. This is the default mode.

- `"WaitForFirstConsumer"` indicates that PersistentVolumeClaims should not

be provisioned and bound until the first Pod is created that references the

PeristentVolumeClaim. The volume provisioning and binding will occur during

Pod scheduing.**provisioner:**供应商(也称作制备器),storageclass需要有一个供应者,用来确定我们使用什么样的存储来创建pv

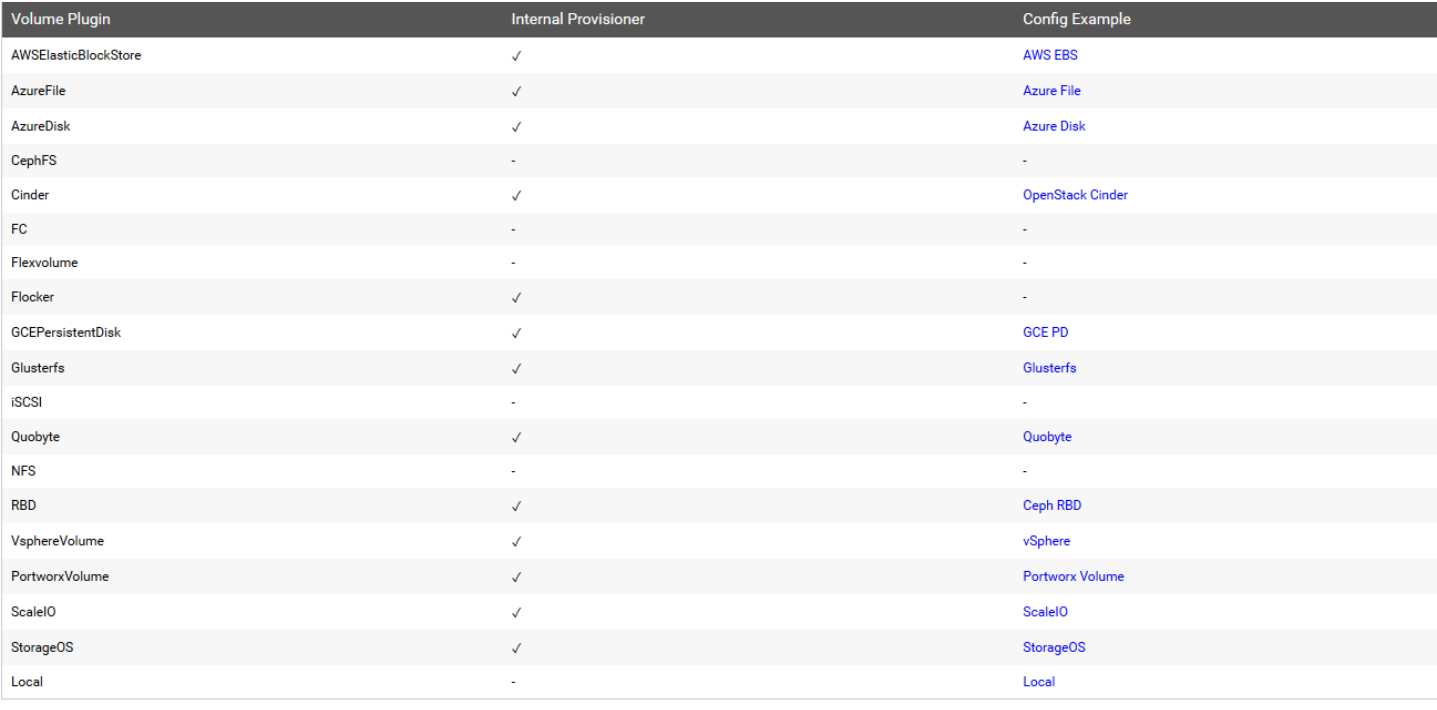

常见的provisioner

provisioner既可以由内部供应商提供,也可以由外部供应商提供,如果是外部供应商可以参考https://github.com/kubernetes-incubator/external-storage/下提供的方法创建。

https://github.com/kubernetes-sigs/sig-storage-lib-external-provisioner

以NFS为例,要想使用NFS,我们需要一个nfs-client的自动装载程序,称之为provisioner,这个程序会使我们已经配置好的NFS服务器自动创建持久卷,也就是自动帮我们创建PV。

allowVolumeExpansion:允许卷扩展,PersistentVolume 可以配置成可扩展。将此功能设置为true时,允许用户通过编辑相应的 PVC 对象来调整卷大小。当基础存储类的allowVolumeExpansion字段设置为 true 时,以下类型的卷支持卷扩展。

注意:此功能仅用于扩容卷,不能用于缩小卷。

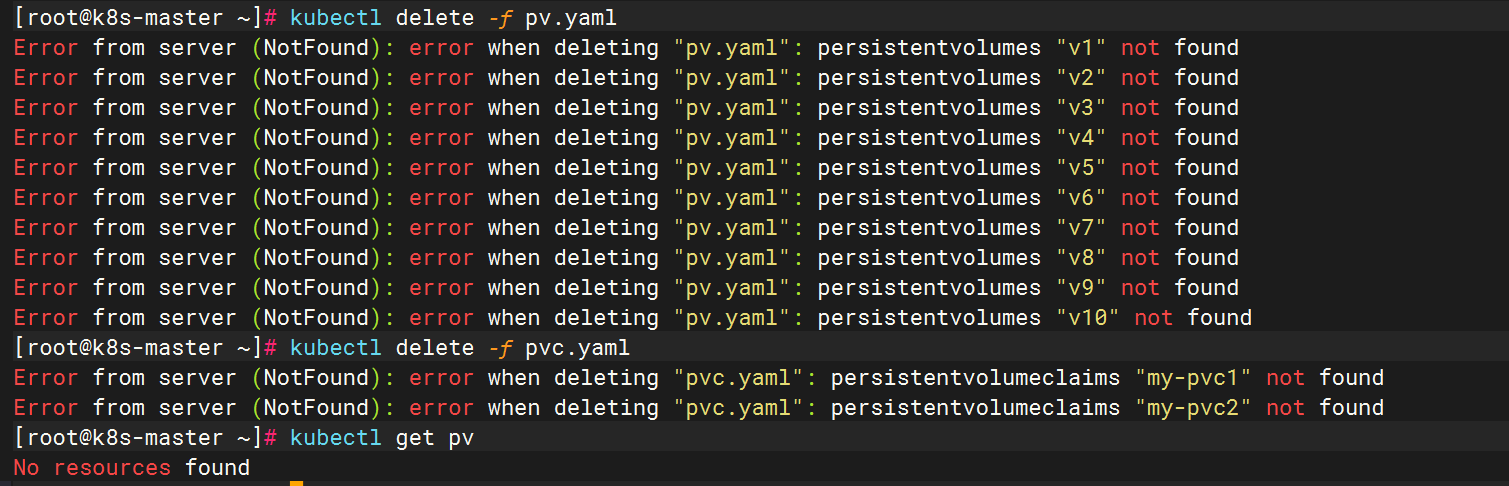

先删除之前手动创建的pv

6.2 安装nfs provisioner,用于配合存储类动态生成pv

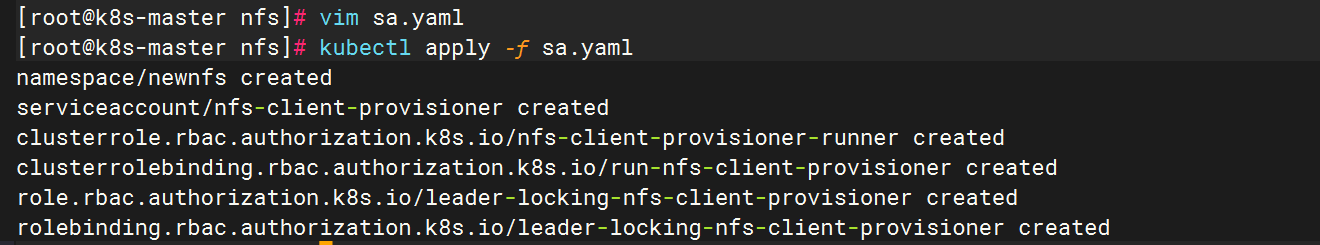

1、创建运行nfs-provisioner需要的sa账号

apiVersion: v1
kind: Namespace
metadata:
  name: newnfs
---
apiVersion: v1
kind: ServiceAccount
metadata:
  name: nfs-client-provisioner
  namespace: newnfs
---
kind: ClusterRole
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: nfs-client-provisioner-runner
rules:
- apiGroups: [""]
  resources: ["persistentvolumes"]
  verbs: ["get", "list", "watch", "create", "delete"]
- apiGroups: [""]
  resources: ["persistentvolumeclaims"]
  verbs: ["get", "list", "watch", "update"]
- apiGroups: ["storage.k8s.io"]
  resources: ["storageclasses"]
  verbs: ["get", "list", "watch"]
- apiGroups: [""]
  resources: ["events"]
  verbs: ["create", "update", "patch"]
---
kind: ClusterRoleBinding
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: run-nfs-client-provisioner
subjects:
- kind: ServiceAccount
  name: nfs-client-provisioner
  namespace: newnfs
roleRef:
  kind: ClusterRole
  name: nfs-client-provisioner-runner
  apiGroup: rbac.authorization.k8s.io
---
kind: Role
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: leader-locking-nfs-client-provisioner
  namespace: newnfs
rules:
- apiGroups: [""]
  resources: ["endpoints"]
  verbs: ["get", "list", "watch", "create", "update", "patch"]
---
kind: RoleBinding
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: leader-locking-nfs-client-provisioner
  namespace: newnfs
subjects:
- kind: ServiceAccount
  name: nfs-client-provisioner
  namespace: newnfs
roleRef:
  kind: Role
  name: leader-locking-nfs-client-provisioner
  apiGroup: rbac.authorization.k8s.io

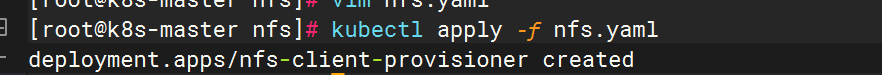

2、安装nfs-provisioner程序

kind: Deployment

apiVersion: apps/v1

metadata:

name: nfs-client-provisioner

namespace: newnfs

spec:

replicas: 1

selector:

matchLabels:

app: nfs-client-provisioner

strategy:

type: Recreate #设置升级策略为删除再创建(默认为滚动更新)

template:

metadata:

labels:

app: nfs-client-provisioner

spec:

serviceAccountName: nfs-client-provisioner #上一步创建的ServiceAccount名称

containers:

- name: nfs-client-provisioner

image: registry.cn-beijing.aliyuncs.com/mydlq/nfs-subdir-external-provisioner:v4.0.0

imagePullPolicy: IfNotPresent

volumeMounts:

- name: nfs-client-root

mountPath: /persistentvolumes

env:

- name: PROVISIONER_NAME # Provisioner的名称,以后设置的storageclass要和这个保持一致

value: storage-nfs

- name: NFS_SERVER # NFS服务器地址,需和valumes参数中配置的保持一致

value: 192.168.71.132

- name: NFS_PATH # NFS服务器数据存储目录,需和volumes参数中配置的保持一致

value: /data

- name: ENABLE_LEADER_ELECTION

value: "true"

volumes:

- name: nfs-client-root

nfs:

server: 192.168.71.132 # NFS服务器地址

path: /data # NFS共享目录更新资源清单文件

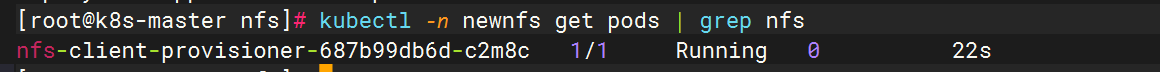

查看nfs-provisioner是否正常运行

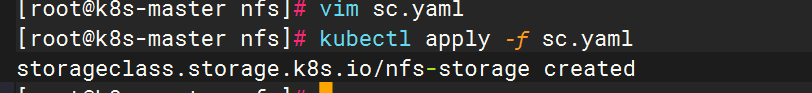

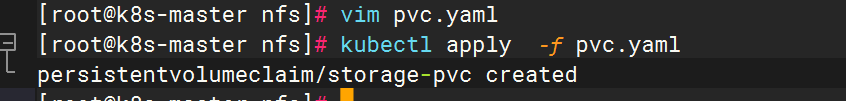

6.3 Create a StorageClass to provision PVs dynamically

apiVersion: storage.k8s.io/v1
kind: StorageClass
metadata:
  name: nfs-storage  # StorageClass is cluster-scoped, so no namespace is set
  annotations:
    storageclass.kubernetes.io/is-default-class: "false"  # whether this is the default StorageClass
provisioner: storage-nfs  # must equal the PROVISIONER_NAME environment variable of the Deployment above
parameters:
  archiveOnDelete: "true"  # "false": data is not kept when the PVC is deleted; "true": data is kept
mountOptions:
- hard  # use a hard mount
- nfsvers=4  # NFS version; set it according to the NFS server version

Apply the manifest.

Check that the StorageClass was created.

If the output looks like the above, the StorageClass was created successfully.

Note: the provisioner value (storage-nfs here) must equal the value of PROVISIONER_NAME in the env section used when installing the NFS provisioner:

env:
- name: PROVISIONER_NAME
  value: storage-nfs

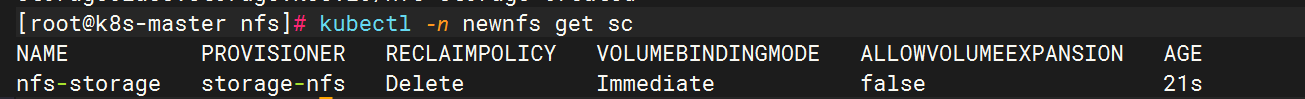

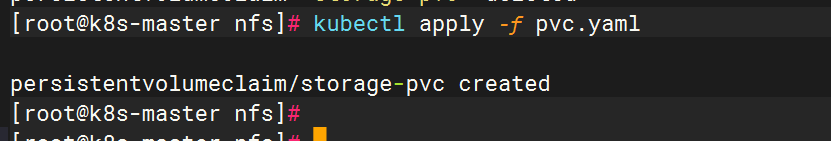
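As a sketch of the default-class idea from section 6.1: if the annotation is set to "true", a PVC that names no class should be served by that class. The class name and claim name below are hypothetical; the provisioner value is the one used in this article, and the sketch assumes no other default class exists in the cluster.

```yaml
# Hypothetical example: a default StorageClass and a PVC that relies on it.
apiVersion: storage.k8s.io/v1
kind: StorageClass
metadata:
  name: nfs-storage-default     # illustrative name
  annotations:
    storageclass.kubernetes.io/is-default-class: "true"
provisioner: storage-nfs        # same PROVISIONER_NAME as the Deployment in 6.2
---
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: default-class-pvc       # illustrative name
  namespace: newnfs
spec:
  # No storageClassName here: the default class is expected to be filled in.
  accessModes: ["ReadWriteOnce"]
  resources:
    requests:
      storage: 1Mi
```

Keeping a single default class avoids ambiguity about which class such claims receive.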

6.4 Create a PVC and let the StorageClass generate the PV

kind: PersistentVolumeClaim
apiVersion: v1
metadata:
  name: storage-pvc
  namespace: newnfs
spec:
  storageClassName: nfs-storage  # must match the name of the StorageClass created above
  accessModes:
  - ReadWriteOnce
  resources:
    requests:
      storage: 1Mi

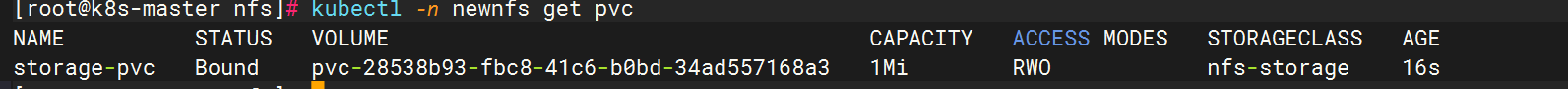

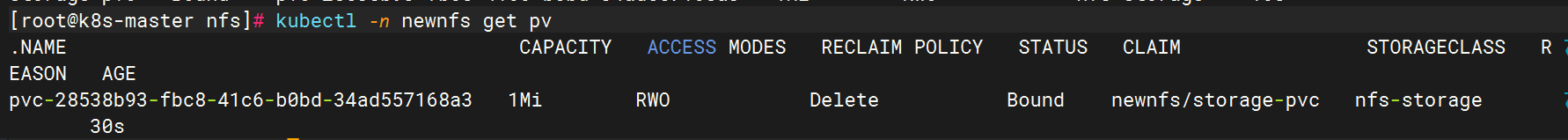

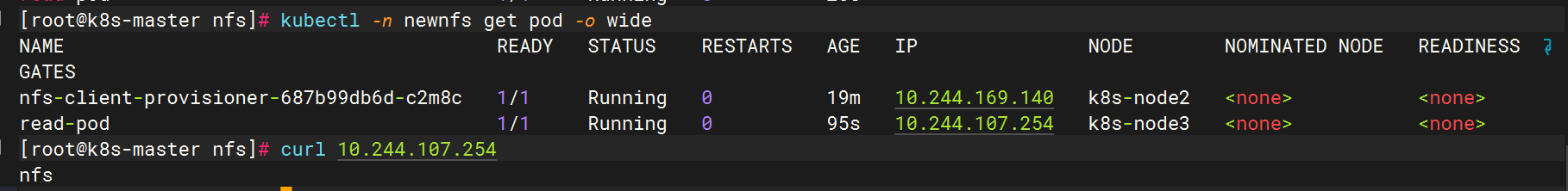

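Check that a PV was generated dynamically and that the PVC was created and bound to it.

The output above shows that the PVC storage-pvc was created successfully and is bound to the PV pvc-28538b93-fbc8-41c6-b0bd-34ad557168a3, which was generated automatically by the NFS provisioner named in the StorageClass.

Summary of the steps:

1. Provisioner: deploy an NFS provisioner.

2. Create a StorageClass that names that provisioner.

3. Create a PVC that names the StorageClass.

6.5 Create a pod that mounts the dynamically provisioned PVC storage-pvc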

kind: Pod

apiVersion: v1

metadata:

name: read-pod

namespace: newnfs

spec:

containers:

- name: read-pod

image: nginx

imagePullPolicy: IfNotPresent

volumeMounts:

- name: nfs-pvc

mountPath: /usr/share/nginx/html

restartPolicy: "Never"

volumes:

- name: nfs-pvc

persistentVolumeClaim:

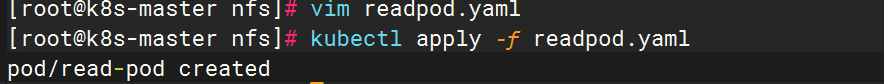

claimName: storage-pvc更新资源清单文件

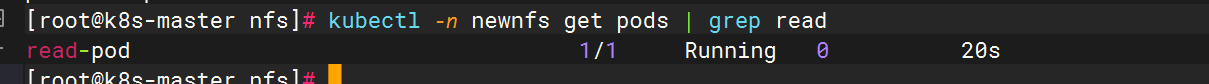

查看pod是否创建成功

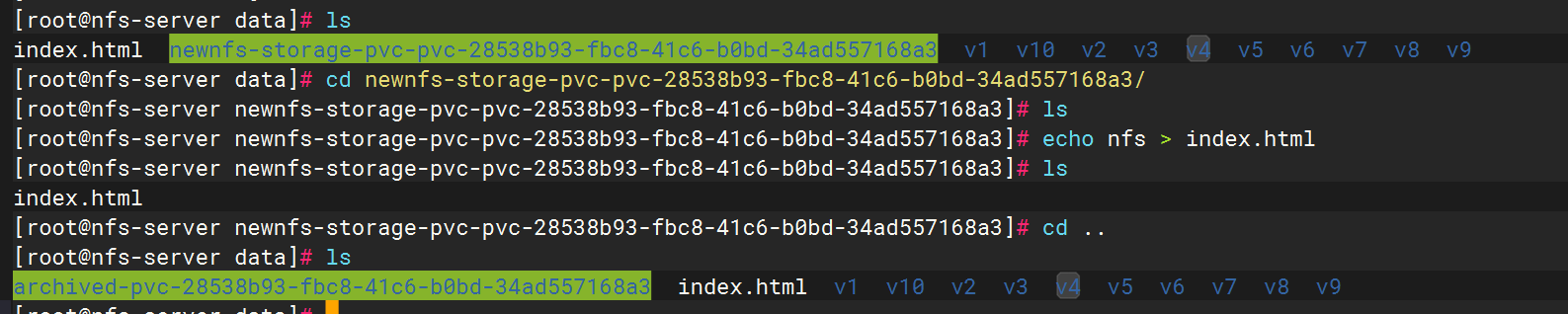

在NFS服务端写入首页

测试访问

查看index.html的物理位置

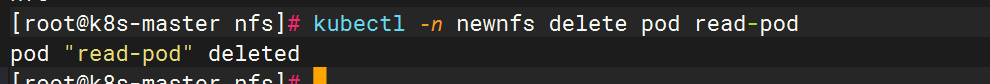

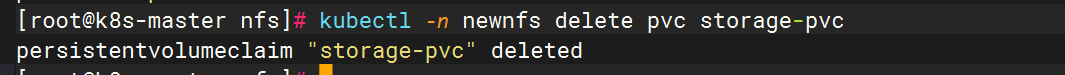

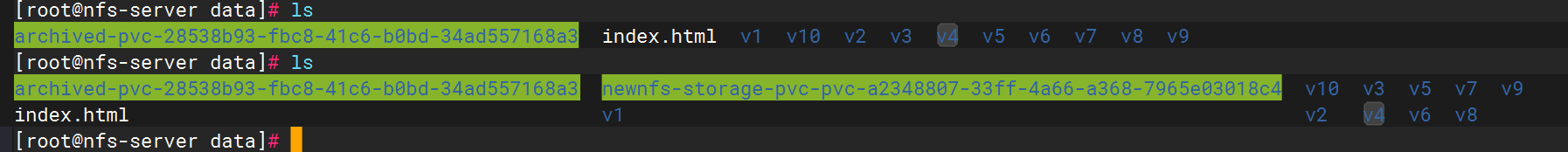

删除read-pod ,删除pvc 会自动删除pv ,所以默认的回收策略是delete是可以验证出来的

重新更新pvc文件,会发现物理nfs中多了一个目录。
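If the automatic deletion seen above is not wanted, the class can request Retain instead. A minimal sketch, assuming the same provisioner; the class name is hypothetical.

```yaml
# Hypothetical example: a class whose dynamically provisioned PVs are retained.
apiVersion: storage.k8s.io/v1
kind: StorageClass
metadata:
  name: nfs-storage-retain      # illustrative name
provisioner: storage-nfs        # same PROVISIONER_NAME as the Deployment in 6.2
reclaimPolicy: Retain           # PVs stay in the Released state after their PVC is deleted
mountOptions:
- hard
- nfsvers=4
```

PVs created through such a class have to be cleaned up by hand once they are no longer needed, which is the trade-off for not losing data when a claim is deleted by mistake.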

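7. Summary

Persistent storage is what lets data in Kubernetes outlive the pod that wrote it. This article walked through several storage options: the temporary emptyDir volume, the node-level hostPath volume, shared network storage with NFS, and the higher-level PersistentVolume (PV) and PersistentVolumeClaim (PVC) mechanism. It then explained how a StorageClass provisions PVs dynamically and demonstrated the automated PV creation with an NFS provisioner. Each option fits a different situation: emptyDir for temporary data, hostPath for data tied to one node, NFS for shared storage, and PVC plus StorageClass for dynamic allocation and automated management of storage resources.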