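I have recently been building an AI customer-service chat widget that needed voice input. My first instinct was to use the browser's native window.SpeechRecognition: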

js

const recognition = new webkitSpeechRecognition()

recognition.lang = 'zh-CN'

recognition.onresult = (e) => console.log(e.results[0][0].transcript)

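recognition.start()

The code is simple and worked fine in local testing. After going live, though, it turned out to be completely unusable in mainland China.

Only after adding full logging did the cause become clear:

js

recognition.onstart = () => console.log('started') // ✅ fires
recognition.onspeechstart = () => console.log('speech detected') // ❌ never fires
recognition.onresult = (e) => console.log('result', e) // ❌ never fires
recognition.onerror = (e) => console.log('error', e.error) // sometimes fires with "network"
recognition.onend = () => console.log('ended') // ✅ fires (ends silently)

Root cause: Chrome's SpeechRecognition calls www.google.com/speech-api under the hood, and that domain is blocked by the GFW in mainland China. The symptom is that onstart fires, the recognizer waits silently, and onend eventually fires without any recognition result.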
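The event pattern in that log can be turned into a rough runtime check, for example to decide when to switch to an offline engine. The sketch below is only an illustration: `classifySession` and `watchSession` are hypothetical helpers, not part of any browser API, and a session in which nobody speaks can produce a similar pattern, so the verdict is a hint rather than proof.

```javascript
// Hypothetical helper: summarize one recognition session from the names of
// the events that fired, in order (for example ['start', 'end']).
function classifySession(events) {
  const seen = new Set(events)
  if (seen.has('result')) return 'ok'
  if (seen.has('error')) return 'error'
  // Started and ended with neither a result nor an error: the silent failure.
  if (seen.has('start') && seen.has('end')) return 'silent-failure'
  return 'incomplete'
}

// Hypothetical helper: record events on a recognition-like object and report
// the verdict through onDone once the session ends.
function watchSession(recognition, onDone) {
  const events = []
  recognition.onstart = () => events.push('start')
  recognition.onspeechstart = () => events.push('speechstart')
  recognition.onresult = () => events.push('result')
  recognition.onerror = () => events.push('error')
  recognition.onend = () => {
    events.push('end')
    onDone(classifySession(events))
  }
}
```

In a page, `watchSession(recognition, (verdict) => { ... })` would be called before `recognition.start()`, and a `'silent-failure'` or `'error'` verdict could trigger the fallback path.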

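Solution: offline recognition with Vosk-Browser

Vosk is an open-source offline speech recognition engine. @lichess-org/vosk-browser is a maintained fork of its WebAssembly browser build (the original vosk-browser is no longer maintained).

Key advantages:

- Fully offline, with no dependency on any external service, so it works in mainland China

- Supports Chinese; the small model is about 40 MB

- Built on WebWorker + WASM, so recognition does not block the main thread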
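Because the engine relies on WebWorker and WASM, and the examples in this post capture audio with getUserMedia and AudioContext, it can be worth checking for those features before downloading a 40 MB model. The following is a minimal sketch under assumptions: `canRunOfflineRecognition` and its `env` parameter are made-up names, and the feature list is inferred from the APIs used in this post, not taken from the library's documentation. Note that `navigator.mediaDevices` is only exposed in secure contexts (HTTPS or localhost).

```javascript
// Hypothetical capability check. `env` defaults to the global object and is a
// parameter only so the function can be exercised with a stub.
function canRunOfflineRecognition(env = globalThis) {
  const missing = []
  if (typeof env.WebAssembly !== 'object') missing.push('WebAssembly')
  if (typeof env.Worker !== 'function') missing.push('Worker')
  const media = env.navigator && env.navigator.mediaDevices
  if (!media || typeof media.getUserMedia !== 'function') missing.push('getUserMedia')
  const AudioCtx = env.AudioContext || env.webkitAudioContext
  if (typeof AudioCtx !== 'function') missing.push('AudioContext')
  return { ok: missing.length === 0, missing }
}
```

A page could call it once at startup and hide the microphone button when `ok` is false, showing the `missing` list in a log for diagnosis.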

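Preparation

1. Install the dependency

bash

npm install @lichess-org/vosk-browser

2. Copy the static files into the public directory

bash

cp node_modules/@lichess-org/vosk-browser/dist/vosk.wasm.js public/
cp node_modules/@lichess-org/vosk-browser/dist/vosk.worker.js public/
cp node_modules/@lichess-org/vosk-browser/dist/vosk.wasm public/

The Worker and WASM files must sit in a static directory that can be reached directly by URL; they must not go through the webpack bundle.

3. Download the Chinese model

https://alphacephei.com/vosk/models/vosk-model-small-cn-0.22.zip

After downloading, put it in the public/ directory. Serve it from your own server rather than relying on a CDN, which can be unreliable from mainland China.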

Core API

js

import { createVoskClient } from '@lichess-org/vosk-browser'

// 1. Load the model (only once; this starts a WebWorker internally)
const client = await createVoskClient({
  workerUrl: '/vosk.worker.js',
  wasmUrl: '/vosk.wasm',
  modelUrl: '/vosk-model-small-cn-0.22.zip'
})

// 2. Create a recognizer (a new one for each recording)
const recognizer = new client.KaldiRecognizer(16000) // sample rate must be 16000

// 3. Listen for results (fires once at the end of each utterance)
recognizer.on('result', (msg) => {
  console.log(msg.result.text) // the recognized text
})

// 4. Feed audio data (streamed in real time from a ScriptProcessor)
recognizer.acceptWaveformFloat(float32Array, 16000)

// 5. On stop, retrieve the last result that has not been committed yet
recognizer.retrieveFinalResult()

// 6. Destroy the recognizer
recognizer.remove()

Implementation notes

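Point 1: preload the model while the page is idle

The first load has to unpack the model inside the WASM runtime, which takes a while. Use requestIdleCallback to load it quietly while the page is idle, so that it is ready by the time the user clicks record:

js

// Shared state (the full demo keeps more fields on this object)
const vosk = { client: null, loading: false }

async function preloadVosk() {
  if (vosk.client || vosk.loading) return
  vosk.loading = true
  try {
    vosk.client = await Promise.race([
      createVoskClient({
        workerUrl: '/vosk.worker.js',
        wasmUrl: '/vosk.wasm',
        modelUrl: '/vosk-model-small-cn-0.22.zip'
      }),
      // Timeout guard so a missing model file cannot leave the load hanging forever
      new Promise((_, reject) => setTimeout(() => reject(new Error('model load timed out')), 60000))
    ])
  } catch (e) {
    vosk.client = null
    console.warn('Vosk preload failed:', e)
  } finally {
    vosk.loading = false
  }
}

// Preload while the page is idle
if (window.requestIdleCallback) {
  requestIdleCallback(() => preloadVosk(), { timeout: 3000 })
} else {
  // Fallback for browsers without requestIdleCallback
  setTimeout(preloadVosk, 2000)
}

Point 2: feed audio with AudioContext + ScriptProcessor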

Vosk needs a 16000 Hz PCM Float32 audio stream:

js

const sampleRate = 16000
const stream = await navigator.mediaDevices.getUserMedia({ audio: true })
const audioCtx = new AudioContext({ sampleRate })
const source = audioCtx.createMediaStreamSource(stream)
// ScriptProcessorNode is deprecated in favor of AudioWorklet but is still widely supported
const processor = audioCtx.createScriptProcessor(4096, 1, 1)

source.connect(processor)
// Connected to the destination so that onaudioprocess keeps firing
processor.connect(audioCtx.destination)

processor.onaudioprocess = (e) => {
  // Feed microphone samples to Vosk in real time
  recognizer.acceptWaveformFloat(e.inputBuffer.getChannelData(0), sampleRate)
}

Point 3: avoid duplicated text

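While recording, the result event keeps firing (once at the end of each utterance), and on stop you still have to call retrieveFinalResult() to fetch the last segment.

If startVoice and stopVoice each bind a result listener that appends text, the last segment is counted twice.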
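To make the double counting concrete, here is a small simulation. It is a sketch only: `createFakeRecognizer` is a stand-in written for this example and is not the Vosk API; it merely mimics `on('result', ...)` and lets the example emit results by hand.

```javascript
// Minimal stand-in for a recognizer: supports on('result') and manual emits.
function createFakeRecognizer() {
  const listeners = []
  return {
    on(event, fn) { if (event === 'result') listeners.push(fn) },
    emitResult(text) { listeners.forEach((fn) => fn({ result: { text } })) }
  }
}

// Variant A: listeners bound at start and at stop both append text.
function runWithTwoAppenders(segments) {
  const rec = createFakeRecognizer()
  let out = ''
  rec.on('result', (msg) => { out += msg.result.text }) // bound when recording starts
  segments.slice(0, -1).forEach((s) => rec.emitResult(s))
  rec.on('result', (msg) => { out += msg.result.text }) // bound again when stopping
  rec.emitResult(segments[segments.length - 1])         // the final segment
  return out
}

// Variant B: a single listener owns the text; a flag marks the final segment.
function runWithFlag(segments) {
  const rec = createFakeRecognizer()
  let out = ''
  let pending = ''
  let finalizing = false
  rec.on('result', (msg) => {
    const text = msg.result.text
    if (finalizing) { out += pending + text; pending = '' }
    else pending += text
  })
  segments.slice(0, -1).forEach((s) => rec.emitResult(s))
  finalizing = true
  rec.emitResult(segments[segments.length - 1])
  return out
}

console.log(runWithTwoAppenders(['你好', '世界'])) // 你好世界世界
console.log(runWithFlag(['你好', '世界']))         // 你好世界
```

The second variant keeps all text handling in one place, which is the idea behind a flag-based approach.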

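Use a finalizing flag to tell the two cases apart:

js

// Bind the listener when recording starts and accumulate text while recording
recognizer.on('result', (msg) => {
  // Strip whitespace, which is not wanted between Chinese words
  const text = msg?.result?.text?.replace(/\s+/g, '') || ''
  if (vosk.finalizing) {
    // Last segment, triggered by retrieveFinalResult(); flush even if it is empty
    inputBox.value += vosk.partialText + text
    vosk.partialText = ''
  } else if (text) {
    // Intermediate result: accumulate for now
    vosk.partialText += text
  }
})

// Stop recording
function stopVoice() {
  // ... stop the audio stream ...
  vosk.finalizing = true
  // This second listener only cleans up; it never touches the text
  recognizer.on('result', () => {
    recognizer.remove()
    vosk.finalizing = false
  })
  recognizer.retrieveFinalResult()
}

Point 4: in Vue 2, do not store state under a _ prefix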

Vue 2 不会代理 _ 或 $ 开头的 data 属性,直接赋值会是 undefined:

js

// ❌ 错误:this._voskClient 永远是 undefined

data() {

return { _voskClient: null }

}

// ✅ 正确:在 mounted 里直接挂到实例上(非响应式)

mounted() {

this.$vosk = {

client: null, loading: false,

recognizer: null, stream: null,

audioCtx: null, processor: null,

partialText: '', finalizing: false

}

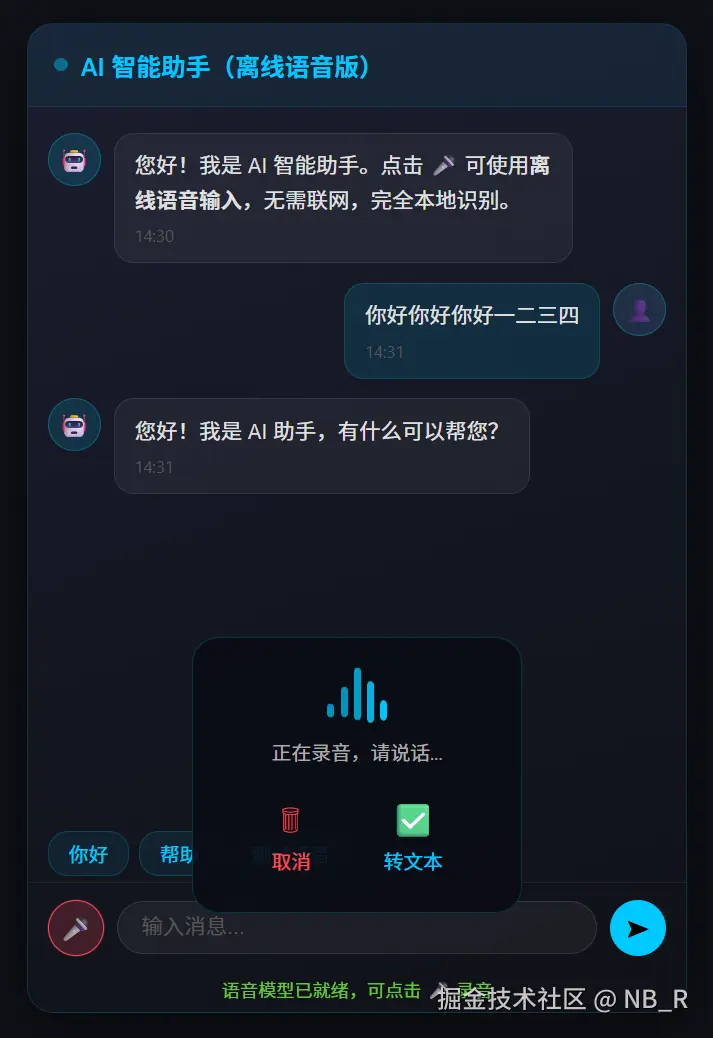

}完整 H5 Demo(纯离线版)

Below is a complete example that can be run as is. Every dependency is served locally and no external requests are made:

html

<!DOCTYPE html>

<html lang="zh-CN">

<head>

<meta charset="UTF-8" />

<meta name="viewport" content="width=device-width, initial-scale=1.0" />

<title>Offline Speech-to-Text Demo</title>

<style>

* { box-sizing: border-box; margin: 0; padding: 0; }

body {

font-family: -apple-system, BlinkMacSystemFont, sans-serif;

background: #0f1117; color: #e0e0e0;

display: flex; justify-content: center; align-items: center; min-height: 100vh;

}

.chat-box {

width: 400px; height: 600px;

background: linear-gradient(160deg, #1a1d2e 0%, #0f1117 100%);

border-radius: 16px; border: 1px solid rgba(0,200,255,0.15);

box-shadow: 0 8px 40px rgba(0,0,0,0.6);

display: flex; flex-direction: column; overflow: hidden; position: relative;

}

.chat-header {

padding: 14px 16px; background: rgba(0,200,255,0.06);

border-bottom: 1px solid rgba(0,200,255,0.1);

display: flex; align-items: center; gap: 8px;

font-size: 15px; font-weight: 600; color: #00c8ff;

}

.dot { width: 8px; height: 8px; border-radius: 50%; background: #00c8ff; animation: blink 1.4s infinite; }

@keyframes blink { 0%,100%{opacity:1} 50%{opacity:0.3} }

.chat-content {

flex: 1; overflow-y: auto; padding: 16px 12px;

display: flex; flex-direction: column; gap: 12px;

}

.chat-content::-webkit-scrollbar { width: 4px; }

.chat-content::-webkit-scrollbar-thumb { background: rgba(0,200,255,0.2); border-radius: 2px; }

.msg { display: flex; gap: 8px; max-width: 85%; }

.msg.user { align-self: flex-end; flex-direction: row-reverse; }

.msg-avatar {

width: 32px; height: 32px; border-radius: 50%;

background: rgba(0,200,255,0.15); border: 1px solid rgba(0,200,255,0.3);

display: flex; align-items: center; justify-content: center; font-size: 14px; flex-shrink: 0;

}

.msg.user .msg-avatar { background: rgba(100,180,255,0.15); }

.msg-bubble {

background: rgba(255,255,255,0.05); border: 1px solid rgba(255,255,255,0.08);

border-radius: 12px; padding: 8px 12px; font-size: 13px; line-height: 1.6;

}

.msg.user .msg-bubble { background: rgba(0,200,255,0.12); border-color: rgba(0,200,255,0.2); }

.msg-time { font-size: 10px; color: #555; margin-top: 4px; }

.quick-cmds { display: flex; flex-wrap: wrap; gap: 6px; padding: 8px 12px 4px; }

.quick-cmd {

padding: 4px 12px; border-radius: 12px;

background: rgba(0,200,255,0.08); border: 1px solid rgba(0,200,255,0.2);

font-size: 12px; color: #00c8ff; cursor: pointer;

}

.quick-cmd:hover { background: rgba(0,200,255,0.18); }

.chat-input {

display: flex; align-items: center; gap: 8px;

padding: 10px 12px; border-top: 1px solid rgba(255,255,255,0.06);

}

.input-box {

flex: 1; background: rgba(255,255,255,0.05); border: 1px solid rgba(255,255,255,0.1);

border-radius: 20px; padding: 8px 14px; color: #e0e0e0; font-size: 13px; outline: none;

}

.input-box:focus { border-color: rgba(0,200,255,0.4); }

.input-box::placeholder { color: #555; }

.btn-send, .btn-mic {

width: 34px; height: 34px; border-radius: 50%; border: none;

cursor: pointer; display: flex; align-items: center; justify-content: center;

font-size: 15px; flex-shrink: 0;

}

.btn-send { background: #00c8ff; color: #000; }

.btn-mic { background: rgba(0,200,255,0.1); border: 1px solid rgba(0,200,255,0.3); color: #00c8ff; }

.btn-mic.recording { background: rgba(255,71,87,0.2); border-color: #ff4757; color: #ff4757; animation: pulse-red 1s infinite; }

@keyframes pulse-red { 0%,100%{box-shadow:0 0 0 0 rgba(255,71,87,0.4)} 50%{box-shadow:0 0 0 6px rgba(255,71,87,0)} }

.voice-overlay {

position: absolute; bottom: 60px; left: 50%; transform: translateX(-50%);

background: rgba(10,12,20,0.92); border: 1px solid rgba(0,200,255,0.2);

border-radius: 16px; padding: 16px 24px;

display: none; flex-direction: column; align-items: center; gap: 12px;

z-index: 20; min-width: 200px;

}

.voice-overlay.show { display: flex; }

.voice-wave { display: flex; align-items: flex-end; gap: 4px; height: 32px; }

.voice-wave span { width: 4px; border-radius: 2px; background: #00c8ff; animation: wave-bar 0.8s ease-in-out infinite; }

.voice-wave span:nth-child(1){height:8px;animation-delay:0s}

.voice-wave span:nth-child(2){height:18px;animation-delay:0.1s}

.voice-wave span:nth-child(3){height:28px;animation-delay:0.2s}

.voice-wave span:nth-child(4){height:18px;animation-delay:0.3s}

.voice-wave span:nth-child(5){height:8px;animation-delay:0.4s}

@keyframes wave-bar { 0%,100%{transform:scaleY(1);opacity:0.7} 50%{transform:scaleY(1.6);opacity:1} }

.voice-tip { font-size: 12px; color: #aaa; }

.voice-actions { display: flex; gap: 24px; }

.voice-action {

display: flex; flex-direction: column; align-items: center; gap: 4px;

cursor: pointer; font-size: 12px; color: #aaa; padding: 6px 12px;

border-radius: 8px; transition: all 0.2s;

}

.voice-action .icon { font-size: 20px; }

.voice-action.cancel:hover { color: #ff4757; background: rgba(255,71,87,0.1); }

.voice-action.confirm:hover { color: #00c8ff; background: rgba(0,200,255,0.1); }

.status-bar { padding: 4px 12px 8px; font-size: 11px; color: #555; text-align: center; }

.status-bar.ready { color: #67c23a; }

.status-bar.error { color: #f56c6c; }

</style>

</head>

<body>

<div class="chat-box">
  <div class="chat-header">
    <div class="dot"></div>
    AI Assistant (offline voice edition)
  </div>
  <div class="chat-content" id="chatContent"></div>
  <!-- Quick commands stay in Chinese to match the Chinese model -->
  <div class="quick-cmds">
    <span class="quick-cmd" onclick="sendText('你好')">你好</span>
    <span class="quick-cmd" onclick="sendText('帮助')">帮助</span>
    <span class="quick-cmd" onclick="sendText('测试语音')">测试语音</span>
  </div>
  <!-- Recording overlay -->
  <div class="voice-overlay" id="voiceOverlay">
    <div class="voice-wave">
      <span></span><span></span><span></span><span></span><span></span>
    </div>
    <div class="voice-tip">Recording, please speak...</div>
    <div class="voice-actions">
      <div class="voice-action cancel" onclick="cancelVoice()">
        <span class="icon">🗑</span><span>Cancel</span>
      </div>
      <div class="voice-action confirm" onclick="stopVoice()">
        <span class="icon">✅</span><span>To text</span>
      </div>
    </div>
  </div>
  <div class="chat-input">
    <button class="btn-mic" id="btnMic" onclick="startVoice()" title="Click to record">🎤</button>
    <input class="input-box" id="inputBox" placeholder="Type a message..." onkeydown="if(event.key==='Enter')sendMessage()" />
    <button class="btn-send" onclick="sendMessage()">➤</button>
  </div>
  <div class="status-bar" id="statusBar">Loading speech model...</div>
</div>

<script type="module">
// Everything is served locally; there are no external dependencies
import { createVoskClient } from '/vosk.wasm.js'

const vosk = {
  client: null, loading: false,
  recognizer: null, stream: null,
  audioCtx: null, processor: null,
  partialText: '', finalizing: false
}
let isRecording = false

const chatContent = document.getElementById('chatContent')
const inputBox = document.getElementById('inputBox')
const btnMic = document.getElementById('btnMic')
const voiceOverlay = document.getElementById('voiceOverlay')
const statusBar = document.getElementById('statusBar')

// ── Preload the model ───────────────────────────────────────
async function preloadVosk() {
  if (vosk.client || vosk.loading) return
  vosk.loading = true
  setStatus('Loading speech model...', '')
  try {
    vosk.client = await Promise.race([
      createVoskClient({
        workerUrl: '/vosk.worker.js',
        wasmUrl: '/vosk.wasm',
        modelUrl: '/vosk-model-small-cn-0.22.zip'
      }),
      // Timeout guard so a missing model file does not hang forever
      new Promise((_, r) => setTimeout(() => r(new Error('load timed out; check that the model file is in place')), 60000))
    ])
    setStatus('Speech model ready; click 🎤 to record', 'ready')
  } catch (e) {
    vosk.client = null
    setStatus('Model failed to load: ' + e.message, 'error')
  } finally {
    vosk.loading = false
  }
}

// ── Start recording ─────────────────────────────────────────
window.startVoice = async function () {
  if (isRecording) return
  if (!vosk.client) {
    if (vosk.loading) { alert('The model is still loading, please wait...'); return }
    await preloadVosk()
    if (!vosk.client) return
  }
  try {
    const sampleRate = 16000
    vosk.recognizer = new vosk.client.KaldiRecognizer(sampleRate)
    vosk.partialText = ''
    vosk.finalizing = false

    vosk.recognizer.on('result', (msg) => {
      // Strip whitespace, which is not wanted between Chinese words
      const text = msg?.result?.text?.replace(/\s+/g, '') || ''
      if (vosk.finalizing) {
        // Final segment: flush everything, even when the last segment is empty
        inputBox.value += vosk.partialText + text
        vosk.partialText = ''
      } else if (text) {
        vosk.partialText += text
      }
    })

    vosk.stream = await navigator.mediaDevices.getUserMedia({ audio: true })
    vosk.audioCtx = new AudioContext({ sampleRate })
    const source = vosk.audioCtx.createMediaStreamSource(vosk.stream)
    vosk.processor = vosk.audioCtx.createScriptProcessor(4096, 1, 1)
    source.connect(vosk.processor)
    vosk.processor.connect(vosk.audioCtx.destination)
    vosk.processor.onaudioprocess = (e) => {
      if (vosk.recognizer) vosk.recognizer.acceptWaveformFloat(e.inputBuffer.getChannelData(0), sampleRate)
    }

    isRecording = true
    btnMic.classList.add('recording')
    voiceOverlay.classList.add('show')
  } catch (err) {
    // Release the recognizer and any audio resources created before the failure
    window.cancelVoice()
    alert(err.name === 'NotAllowedError' ? 'Microphone permission was denied' : 'Failed to start recording: ' + err.message)
  }
}

// ── Stop recording (convert to text) ────────────────────────
window.stopVoice = function () {
  if (!isRecording) return
  isRecording = false
  btnMic.classList.remove('recording')
  voiceOverlay.classList.remove('show')
  if (vosk.processor) { vosk.processor.disconnect(); vosk.processor = null }
  if (vosk.audioCtx) { vosk.audioCtx.close(); vosk.audioCtx = null }
  if (vosk.stream) { vosk.stream.getTracks().forEach(t => t.stop()); vosk.stream = null }
  if (vosk.recognizer) {
    // Keep a local reference so a recording started later is not removed by mistake
    const rec = vosk.recognizer
    vosk.finalizing = true
    // Cleanup-only listener; the text is handled by the listener bound in startVoice
    rec.on('result', () => {
      rec.remove()
      if (vosk.recognizer === rec) vosk.recognizer = null
      vosk.finalizing = false
    })
    rec.retrieveFinalResult()
  }
}

// ── Cancel recording ────────────────────────────────────────
window.cancelVoice = function () {
  isRecording = false
  btnMic.classList.remove('recording')
  voiceOverlay.classList.remove('show')
  if (vosk.processor) { vosk.processor.disconnect(); vosk.processor = null }
  if (vosk.audioCtx) { vosk.audioCtx.close(); vosk.audioCtx = null }
  if (vosk.stream) { vosk.stream.getTracks().forEach(t => t.stop()); vosk.stream = null }
  if (vosk.recognizer) { vosk.recognizer.remove(); vosk.recognizer = null }
  vosk.partialText = ''
}

// ── Sending and receiving messages ──────────────────────────
// Escape user-provided text because appendMsg writes through innerHTML
function escapeHtml(s) {
  return s.replace(/[&<>"']/g, (c) => ({ '&': '&amp;', '<': '&lt;', '>': '&gt;', '"': '&quot;', "'": '&#39;' }[c]))
}

window.sendMessage = function () {
  const text = inputBox.value.trim()
  if (!text) return
  const safe = escapeHtml(text)
  appendMsg('user', safe)
  inputBox.value = ''
  setTimeout(() => appendMsg('ai', getReply(safe)), 600)
}

window.sendText = function (text) {
  inputBox.value = text
  sendMessage()
}

function appendMsg(type, content) {
  const div = document.createElement('div')
  div.className = 'msg ' + type
  const now = new Date()
  const time = `${now.getHours().toString().padStart(2,'0')}:${now.getMinutes().toString().padStart(2,'0')}`
  div.innerHTML = `
    <div class="msg-avatar">${type === 'user' ? '👤' : '🤖'}</div>
    <div class="msg-bubble">
      <div>${content}</div>
      <div class="msg-time">${time}</div>
    </div>`
  chatContent.appendChild(div)
  chatContent.scrollTop = chatContent.scrollHeight
}

function getReply(text) {
  // Keys stay in Chinese so they match text recognized by the Chinese model
  const map = {
    '你好': 'Hello! I am the AI assistant. How can I help?',
    '帮助': 'You can type a question or click 🎤 to use voice input.',
    '测试语音': 'Speech recognition works! This is a test reply.',
  }
  for (const k in map) { if (text.includes(k)) return map[k] }
  return `You said: "${text}". Got it.`
}

function setStatus(msg, type) {
  statusBar.textContent = msg
  statusBar.className = 'status-bar ' + (type || '')
}

// Preload the model while the page is idle
if (window.requestIdleCallback) requestIdleCallback(() => preloadVosk(), { timeout: 3000 })
else setTimeout(preloadVosk, 2000)

appendMsg('ai', 'Hello! I am the AI assistant. Click 🎤 to use <b>offline voice input</b>: no network needed, everything is recognized locally.')
</script>
</body>
</html>

Before running, make sure the following files are all in the public root directory:

- vosk.wasm.js
- vosk.worker.js
- vosk.wasm
- vosk-model-small-cn-0.22.zip
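A quick way to confirm that checklist from the browser console is to request each file and report the ones that do not respond. This is a rough sketch under assumptions: `findMissingAssets` is a hypothetical helper, the paths assume the files sit at the site root as in the demo, and a dev server with an SPA fallback may answer 200 for any path, so a clean result is not a guarantee.

```javascript
// Hypothetical pre-flight check. `fetchFn` defaults to the global fetch and is
// a parameter only so the function can be tried out with a stub.
async function findMissingAssets(urls, fetchFn = fetch) {
  const missing = []
  for (const url of urls) {
    try {
      // HEAD avoids downloading the whole model just to check that it exists
      const res = await fetchFn(url, { method: 'HEAD' })
      if (!res.ok) missing.push(url)
    } catch (err) {
      missing.push(url) // a network error also counts as missing
    }
  }
  return missing
}
```

For the demo this could be called as `findMissingAssets(['/vosk.wasm.js', '/vosk.worker.js', '/vosk.wasm', '/vosk-model-small-cn-0.22.zip'])`, logging the returned array once the promise resolves.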