Contents

[2. Clone two virtual machines](#2. Clone two virtual machines)

[3 Configure the network interface and hostname](#3 Configure the network interface and hostname)

[3.1 controller](#3.1 controller)

[3.2 compute](#3.2 compute)

[4. Disable the firewall, SELinux, and NetworkManager](#4. Disable the firewall, SELinux, and NetworkManager)

[4.1 controller](#4.1 controller)

[4.2 compute](#4.2 compute)

[5.1 controller](#5.1 controller)

[5.2 compute](#5.2 compute)

[6. NTP clock synchronization](#6. NTP clock synchronization)

[6.1. controller](#6.1. controller)

[6.2 compute](#6.2 compute)

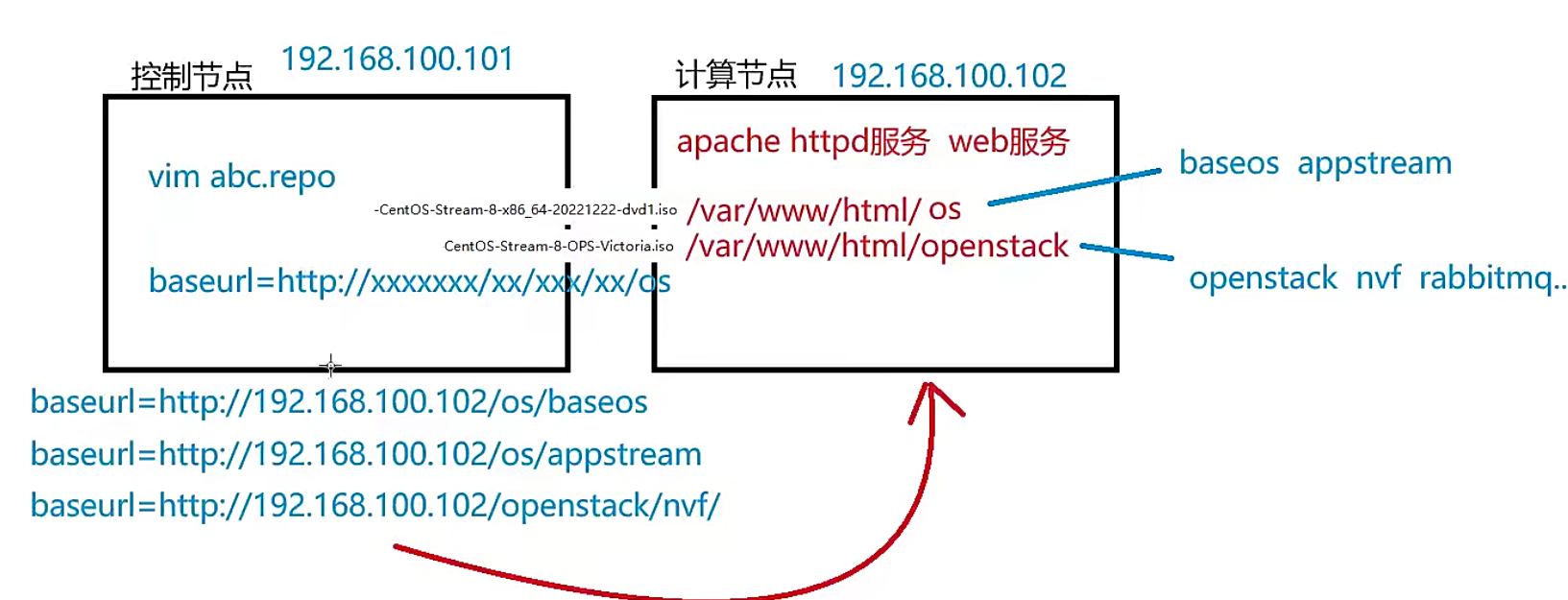

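[7. Configure the httpd service](#7. Configure the httpd service)

[8. Configure the yum repositories](#8. Configure the yum repositories)

[8.1 Control node](#8.1 Control node)

[8.2 Compute node](#8.2 Compute node)

[9 Install the packstack tool](#9 Install the packstack tool)

[10. Notes](#10. Notes)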

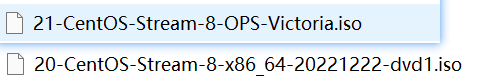

The local repository is a hand-built local ISO file that matches the OpenStack Victoria (V) release.

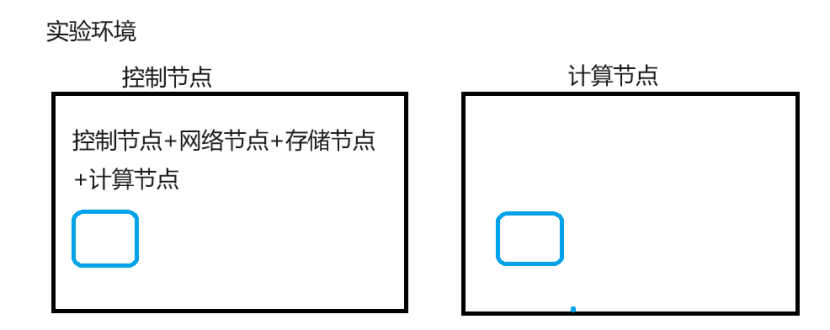

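1. Resource planning

Important: the memory allocated to all virtual machines combined must be less than the host's physical memory.

For example, on a host with 16 GB of RAM, giving each of the two VMs 8 GB does not work, because nothing would be left for the host itself.

Clone two Linux virtual machines from the template:

controller (control node): 192.168.153.200

compute (compute node): 192.168.153.202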
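The memory rule above can be checked with a few lines of shell before creating the VMs. This is only a sketch: the function name `fits_in_host` and the whole-gigabyte inputs are assumptions made for illustration, not part of the original procedure.

```bash
#!/usr/bin/env bash
# fits_in_host HOST_GB VM_GB...
# Succeeds only if the VM allocations add up to strictly less than the host RAM.
fits_in_host() {
    local host_gb="$1"
    shift
    local total=0 vm
    for vm in "$@"; do
        total=$((total + vm))
    done
    [ "$total" -lt "$host_gb" ]
}

# The example from the text: a 16 GB host with two 8 GB VMs.
if fits_in_host 16 8 8; then
    echo "16 GB host, 2 x 8 GB VMs: fits"
else
    echo "16 GB host, 2 x 8 GB VMs: too much, reduce the VM memory"
fi
```

The comparison is strict on purpose, so an allocation that exactly equals the host memory is rejected as well.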

2. 克隆两台虚拟机

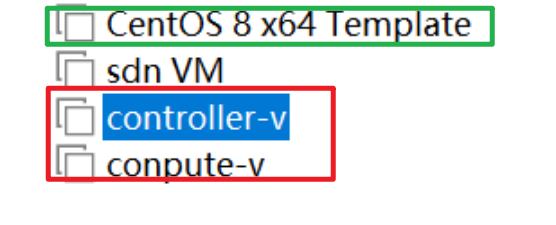

根据模板克隆两台虚拟机

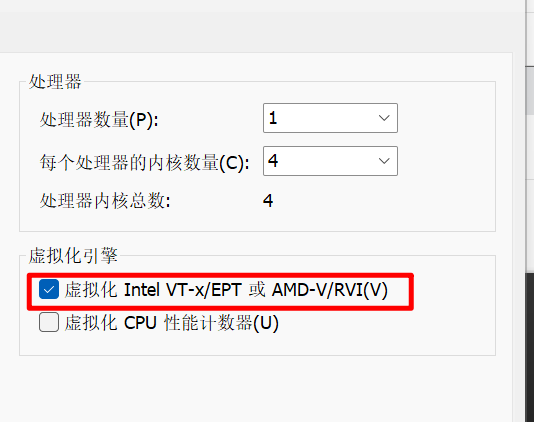

控制节点和计算节点都要开启cpu虚拟化
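Whether CPU virtualization actually reached the guest can be checked from inside each VM. The sketch below counts the logical CPUs that expose the Intel `vmx` or AMD `svm` flag in `/proc/cpuinfo`; the helper name `count_virt_cpus` is hypothetical, and the check assumes an x86_64 guest.

```bash
#!/usr/bin/env bash
# count_virt_cpus [CPUINFO_FILE]
# Prints how many logical CPUs list the vmx (Intel) or svm (AMD) flag.
count_virt_cpus() {
    local cpuinfo="${1:-/proc/cpuinfo}"
    # Only the "flags" lines are inspected, one per logical CPU.
    grep -c -E '^flags.*[[:space:]](vmx|svm)([[:space:]]|$)' "$cpuinfo"
}

n=$(count_virt_cpus 2>/dev/null || true)
if [ "${n:-0}" -gt 0 ]; then
    echo "hardware virtualization is visible on $n logical CPU(s)"
else
    echo "no vmx/svm flag found: enable CPU virtualization in the VM settings"
fi
```

A count of zero on either node usually means the option was not enabled in the hypervisor settings for that VM.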

3 Configure the network interface and hostname

3.1 controller

bash

[root@template ~]# cd /etc/sysconfig/network-scripts/

[root@template network-scripts]# ls

ifcfg-ens160

[root@template network-scripts]# vim ifcfg-ens160

[root@template network-scripts]# cat ifcfg-ens160

TYPE=Ethernet

BOOTPROTO=none

NAME=ens160

DEVICE=ens160

ONBOOT=yes

IPADDR=192.168.153.200

NETMASK=255.255.255.0

GATEWAY=192.168.153.2

DNS1=192.168.153.2

Set the hostname of the control node:

[root@template network-scripts]# hostnamectl set-hostname controller

3.2 compute

bash

[root@template ~]# cd /etc/sysconfig/network-scripts/

[root@template network-scripts]# ls

ifcfg-ens160

[root@template network-scripts]# cat ifcfg-ens160

TYPE=Ethernet

BOOTPROTO=dhcp

NAME=ens160

DEVICE=ens160

ONBOOT=yes

[root@template network-scripts]# vim ifcfg-ens160

[root@template network-scripts]# cat ifcfg-ens160

TYPE=Ethernet

BOOTPROTO=none

NAME=ens160

DEVICE=ens160

ONBOOT=yes

IPADDR=192.168.153.202

NETMASK=255.255.255.0

GATEWAY=192.168.153.2

DNS1=192.168.153.2

Set the hostname of the compute node:

[root@template network-scripts]# hostnamectl set-hostname compute

Reboot both hosts after these settings have been applied.
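The two ifcfg files above differ only in the IP address, so they can be rendered from one template. This is a sketch rather than part of the original steps; `render_ifcfg` is a hypothetical helper, and it assumes the /24 netmask and the gateway-as-DNS choice shown in the files above.

```bash
#!/usr/bin/env bash
# render_ifcfg DEVICE IPADDR GATEWAY
# Prints a static ifcfg file like the ones edited by hand above.
render_ifcfg() {
    local dev="$1" ip="$2" gateway="$3"
    cat <<EOF
TYPE=Ethernet
BOOTPROTO=none
NAME=$dev
DEVICE=$dev
ONBOOT=yes
IPADDR=$ip
NETMASK=255.255.255.0
GATEWAY=$gateway
DNS1=$gateway
EOF
}

# Preview the compute node's file; redirect the output to
# /etc/sysconfig/network-scripts/ifcfg-ens160 to apply it.
render_ifcfg ens160 192.168.153.202 192.168.153.2
```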

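4. Disable the firewall, SELinux, and NetworkManager

Note: on version 8 of the distribution, NetworkManager is the component that manages networking, including IP addresses. If it is stopped and disabled ahead of time, the node cannot bring up its IP address automatically after a reboot.

If it is left running, however, it conflicts with neutron, the OpenStack networking service.

The approach used here: leave NetworkManager alone for now, and once the whole environment is installed, stop and disable it by hand and use the network service in its place.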
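The final swap described above could look like the sketch below. It is an assumption, not a step recorded in the original: it presumes the legacy `network` service is available (on CentOS Stream 8 it ships in the `network-scripts` package), and it only prints the commands unless `DRY_RUN=0` is set.

```bash
#!/usr/bin/env bash
# Sketch: replace NetworkManager with the legacy "network" service
# after the deployment has finished.
# By default the commands are only printed; run with DRY_RUN=0 to execute them.
DRY_RUN="${DRY_RUN:-1}"

run() {
    if [ "$DRY_RUN" = "1" ]; then
        echo "+ $*"
    else
        "$@"
    fi
}

switch_to_network_service() {
    run systemctl stop NetworkManager
    run systemctl disable NetworkManager
    run systemctl enable network
    run systemctl start network
}

switch_to_network_service
```

Running it once in dry-run mode first makes it easy to review the commands on a console session, since a mistake here can cut off SSH access.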

Disable the firewall and SELinux on each node:

4.1 controller

bash

[root@controller ~]# systemctl stop firewalld

[root@controller ~]# systemctl disable firewalld

Removed /etc/systemd/system/multi-user.target.wants/firewalld.service.

Removed /etc/systemd/system/dbus-org.fedoraproject.FirewallD1.service.

[root@controller ~]# setenforce 0

[root@controller ~]# sed -i 's/SELINUX=enforcing/SELINUX=disabled/g' /etc/selinux/config

[root@controller ~]# systemctl stop NetworkManager

[root@controller ~]# systemctl disable NetworkManager

Removed /etc/systemd/system/multi-user.target.wants/NetworkManager.service.

Removed /etc/systemd/system/dbus-org.freedesktop.nm-dispatcher.service.

Removed /etc/systemd/system/network-online.target.wants/NetworkManager-wait-online.service.

4.2 compute

bash

[root@compute ~]# systemctl stop firewalld

[root@compute ~]# systemctl disable firewalld

Removed /etc/systemd/system/multi-user.target.wants/firewalld.service.

Removed /etc/systemd/system/dbus-org.fedoraproject.FirewallD1.service.

[root@compute ~]# setenforce 0

[root@compute ~]# sed -i 's/SELINUX=enforcing/SELINUX=disabled/g' /etc/selinux/config

[root@compute ~]# systemctl stop NetworkManager

[root@compute ~]# systemctl disable NetworkManager

Removed /etc/systemd/system/multi-user.target.wants/NetworkManager.service.

Removed /etc/systemd/system/dbus-org.freedesktop.nm-dispatcher.service.

Removed /etc/systemd/system/network-online.target.wants/NetworkManager-wait-online.service.

5. Hostname mapping, yum repositories, and base packages

5.1 controller

bash

[root@controller ~]# echo '192.168.153.200 controller' >> /etc/hosts

[root@controller ~]# echo '192.168.153.202 compute' >> /etc/hosts

[root@controller ~]# cat /etc/hosts

127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4

::1 localhost localhost.localdomain localhost6 localhost6.localdomain6

192.168.153.200 controller

192.168.153.202 compute

Method 2 is recommended for this setup.
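The `echo ... >> /etc/hosts` commands above append a line every time they are run, so repeating the step leaves duplicate entries. A minimal sketch of an idempotent variant follows; `add_host_entry` is a hypothetical helper, and the demo writes to a scratch file instead of `/etc/hosts`.

```bash
#!/usr/bin/env bash
# add_host_entry IP NAME [HOSTS_FILE]
# Appends "IP NAME" only if that exact line is not already present.
add_host_entry() {
    local ip="$1" name="$2" file="${3:-/etc/hosts}"
    # -q quiet, -x match the whole line, -F treat the pattern as a fixed string
    grep -qxF "$ip $name" "$file" 2>/dev/null || echo "$ip $name" >> "$file"
}

# Demo against a scratch file; drop the third argument to edit /etc/hosts.
scratch=$(mktemp)
add_host_entry 192.168.153.200 controller "$scratch"
add_host_entry 192.168.153.202 compute "$scratch"
add_host_entry 192.168.153.202 compute "$scratch"   # second call is a no-op
cat "$scratch"
rm -f "$scratch"
```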

Method 1: configure online repositories

bash

[root@controller ~]# mkdir /etc/yum.repos.d/bak

[root@controller ~]# mv /etc/yum.repos.d/*.repo /etc/yum.repos.d/bak/

[root@controller ~]# cat <<EOF > /etc/yum.repos.d/cloudcs.repo

>

> [highavailability]

> name=CentOS Stream 8 - HighAvailability

> baseurl=https://mirrors.aliyun.com/centos-vault/8-stream/HighAvailability/x86_64/os/

> gpgcheck=0

>

> [nfv]

> name=CentOS Stream 8 - NFV

> baseurl=https://mirrors.aliyun.com/centos-vault/8-stream/NFV/x86_64/os/

> gpgcheck=0

>

> [rt]

> name=CentOS Stream 8 - RT

> baseurl=https://mirrors.aliyun.com/centos-vault/8-stream/RT/x86_64/os/

> gpgcheck=0

>

> [resilientstorage]

> name=CentOS Stream 8 - ResilientStorage

> baseurl=https://mirrors.aliyun.com/centos-vault/8-stream/ResilientStorage/x86_64/os/

> gpgcheck=0

>

> [extras-common]

> name=CentOS Stream 8 - Extras packages

> baseurl=https://mirrors.aliyun.com/centos-vault/8-stream/extras/x86_64/extras-common/

> gpgcheck=0

>

> [extras]

> name=CentOS Stream $releasever - Extras

> baseurl=https://mirrors.aliyun.com/centos-vault/8-stream/extras/x86_64/os/

> gpgcheck=0

>

> [centos-ceph-pacific]

> name=CentOS - Ceph Pacific

> baseurl=https://mirrors.aliyun.com/centos-vault/8-stream/storage/x86_64/ceph-pacific/

> gpgcheck=0

>

> [centos-rabbitmq-38]

> name=CentOS-8 - RabbitMQ 38

> baseurl=https://mirrors.aliyun.com/centos-vault/8-stream/messaging/x86_64/rabbitmq-38/

> gpgcheck=0

>

> [centos-nfv-openvswitch]

> name=CentOS Stream 8 - NFV OpenvSwitch

> baseurl=https://mirrors.aliyun.com/centos-vault/8-stream/nfv/x86_64/openvswitch-2/

> gpgcheck=0

>

> [baseos]

> name=CentOS Stream 8 - BaseOS

> baseurl=https://mirrors.aliyun.com/centos-vault/8-stream/BaseOS/x86_64/os/

> gpgcheck=0

>

> [appstream]

> name=CentOS Stream 8 - AppStream

> baseurl=https://mirrors.aliyun.com/centos-vault/8-stream/AppStream/x86_64/os/

> gpgcheck=0

>

> [centos-openstack-victoria]

> name=CentOS 8 - OpenStack victoria

> baseurl=https://mirrors.aliyun.com/centos-vault/8-stream/cloud/x86_64/openstack-victoria/

> gpgcheck=0

>

> [powertools]

> name=CentOS Stream 8 - PowerTools

> baseurl=https://mirrors.aliyun.com/centos-vault/8-stream/PowerTools/x86_64/os/

> gpgcheck=0

> EOF

[root@controller ~]# ls /etc/yum.repos.d/

bak cloudcs.repo

[root@controller ~]# yum clean all

0 files removed

[root@controller ~]# yum repolist all

repo id repo name status

appstream CentOS Stream 8 - AppStream enabled

baseos CentOS Stream 8 - BaseOS enabled

centos-ceph-pacific CentOS - Ceph Pacific enabled

centos-nfv-openvswitch CentOS Stream 8 - NFV OpenvSwitch enabled

centos-openstack-victoria CentOS 8 - OpenStack victoria enabled

centos-rabbitmq-38 CentOS-8 - RabbitMQ 38 enabled

extras CentOS Stream - Extras enabled

extras-common CentOS Stream 8 - Extras packages enabled

highavailability CentOS Stream 8 - HighAvailability enabled

nfv CentOS Stream 8 - NFV enabled

powertools CentOS Stream 8 - PowerTools enabled

resilientstorage CentOS Stream 8 - ResilientStorage enabled

rt CentOS Stream 8 - RT enabled

bash

[root@controller ~]# yum install -y vim net-tools bash-completion chrony.x86_64 centos-release-openstack-victoria.noarch

CentOS Stream 8 - HighAvailability 754 kB/s | 1.3 MB 00:01

CentOS Stream 8 - NFV 1.6 MB/s | 4.4 MB 00:02

CentOS Stream 8 - RT 3.3 MB/s | 4.3 MB 00:01

CentOS Stream 8 - ResilientStorage 833 kB/s | 1.3 MB 00:01

CentOS Stream 8 - Extras packages 10 kB/s | 8.0 kB 00:00

CentOS Stream - Extras 18 kB/s | 18 kB 00:00

...

...

...

Upgraded:

chrony-4.5-1.el8.x86_64

Installed:

centos-release-advanced-virtualization-1.0-4.el8.noarch

centos-release-ceph-nautilus-1.3-2.el8.noarch

centos-release-messaging-1-3.el8.noarch

centos-release-nfv-common-1-3.el8.noarch

centos-release-nfv-openvswitch-1-3.el8.noarch

centos-release-openstack-victoria-1-3.el8.noarch

centos-release-rabbitmq-38-1-3.el8.noarch

centos-release-storage-common-2-2.el8.noarch

centos-release-virt-common-1-2.el8.noarch

Complete!

Method 2: configure a local repository

The steps are the same on the control node and the compute node.
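The transcript that follows edits `abc.repo` with vi but does not show the file. Judging from the `os` and `app` repository IDs in its `yum repolist` output and the `BaseOS` and `AppStream` directories on the mounted disc, the file probably resembles the sketch below. The mapping of IDs to directories and the helper name `write_local_repo` are assumptions.

```bash
#!/usr/bin/env bash
# write_local_repo MOUNT_DIR REPO_FILE
# Writes a two-repository definition that points at a locally mounted ISO.
write_local_repo() {
    local mount_dir="$1" repo_file="$2"
    cat > "$repo_file" <<EOF
[os]
name=os
baseurl=file://$mount_dir/BaseOS
enabled=1
gpgcheck=0

[app]
name=app
baseurl=file://$mount_dir/AppStream
enabled=1
gpgcheck=0
EOF
}

# Usage on a node with the disc mounted on /mnt:
#   write_local_repo /mnt /etc/yum.repos.d/abc.repo
#   yum clean all && yum repolist all
```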

bash

[root@compute ~]# mount /dev/cdrom /mnt

mount: /mnt: WARNING: device write-protected, mounted read-only.

[root@compute ~]# ls /mnt

AppStream BaseOS EFI images isolinux LICENSE media.repo TRANS.TBL

[root@compute ~]# cd /etc/yum.repos.d/

[root@compute yum.repos.d]# ls

bak CentOS-NFV-OpenvSwitch.repo

CentOS-Advanced-Virtualization.repo CentOS-OpenStack-victoria.repo

CentOS-Ceph-Nautilus.repo CentOS-Storage-common.repo

CentOS-Messaging-rabbitmq.repo cloudcs.repo

[root@compute yum.repos.d]# mkdir bak

mkdir: cannot create directory 'bak': File exists

[root@compute yum.repos.d]# mv *.repo bak/

[root@compute yum.repos.d]# ls

bak

[root@compute yum.repos.d]# vi abc.repo

[root@compute yum.repos.d]# yum clean all

74 files removed

[root@compute yum.repos.d]# yum repolist all

repo id repo name status

app app enabled

os os enabled

[root@compute yum.repos.d]# yum install -y vim net-tools bash-completion chrony.x86_64

os 77 MB/s | 2.7 MB 00:00

app 90 MB/s | 7.8 MB 00:00

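Last metadata expiration check: 0:00:01 ago on Tue 21 Apr 2026 17:09:14.

Package vim-enhanced-2:8.0.1763-19.el8.4.x86_64 is already installed.

Package net-tools-2.0-0.52.20160912git.el8.x86_64 is already installed.

Package bash-completion-1:2.7-5.el8.noarch is already installed.

Package chrony-4.5-1.el8.x86_64 is already installed.

Dependencies resolved.

Nothing to do.

Complete!

5.2 compute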

bash

[root@compute ~]# cat /etc/hosts

127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4

::1 localhost localhost.localdomain localhost6 localhost6.localdomain6

[root@compute ~]# echo '192.168.153.200 controller' >> /etc/hosts

[root@compute ~]# echo '192.168.153.202 compute' >> /etc/hosts

[root@compute ~]# cat /etc/hosts

127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4

::1 localhost localhost.localdomain localhost6 localhost6.localdomain6

192.168.153.200 controller

192.168.153.202 compute

Method 2 is recommended for this setup.

Method 1: configure online repositories

bash

[root@compute ~]# mkdir /etc/yum.repos.d/bak

[root@compute ~]# mv /etc/yum.repos.d/*.repo /etc/yum.repos.d/bak/

[root@compute ~]# scp controller:/etc/yum.repos.d/cloudcs.repo /etc/yum.repos.d/

The authenticity of host 'controller (192.168.153.200)' can't be established.

ECDSA key fingerprint is SHA256:JARhA2ie+ws2734m3GAbn1Sbo4Wj5HEoY3LLlQiOGk8.

Are you sure you want to continue connecting (yes/no/[fingerprint])? yes

Warning: Permanently added 'controller,192.168.153.200' (ECDSA) to the list of known hosts.

root@controller's password:

cloudcs.repo 100% 1839 1.5MB/s 00:00

[root@compute ~]# ls /etc/yum.repos.d/

bak cloudcs.repo

[root@compute ~]# yum clean all

0 files removed

[root@compute ~]# yum repolist all

repo id repo name status

appstream CentOS Stream 8 - AppStream enabled

baseos CentOS Stream 8 - BaseOS enabled

centos-ceph-pacific CentOS - Ceph Pacific enabled

centos-nfv-openvswitch CentOS Stream 8 - NFV OpenvSwitch enabled

centos-openstack-victoria CentOS 8 - OpenStack victoria enabled

centos-rabbitmq-38 CentOS-8 - RabbitMQ 38 enabled

extras CentOS Stream - Extras enabled

extras-common CentOS Stream 8 - Extras packages enabled

highavailability CentOS Stream 8 - HighAvailability enabled

nfv CentOS Stream 8 - NFV enabled

powertools CentOS Stream 8 - PowerTools enabled

resilientstorage CentOS Stream 8 - ResilientStorage enabled

rt CentOS Stream 8 - RT enabled

bash

[root@compute ~]# yum install -y vim net-tools bash-completion chrony.x86_64 centos-release-openstack-victoria.noarch

CentOS Stream 8 - HighAvailability 754 kB/s | 1.3 MB 00:01

CentOS Stream 8 - NFV 1.6 MB/s | 4.4 MB 00:02

CentOS Stream 8 - RT 3.3 MB/s | 4.3 MB 00:01

CentOS Stream 8 - ResilientStorage 833 kB/s | 1.3 MB 00:01

CentOS Stream 8 - Extras packages 10 kB/s | 8.0 kB 00:00

CentOS Stream - Extras 18 kB/s | 18 kB 00:00

...

...

...

Upgraded:

chrony-4.5-1.el8.x86_64

Installed:

centos-release-advanced-virtualization-1.0-4.el8.noarch

centos-release-ceph-nautilus-1.3-2.el8.noarch

centos-release-messaging-1-3.el8.noarch

centos-release-nfv-common-1-3.el8.noarch

centos-release-nfv-openvswitch-1-3.el8.noarch

centos-release-openstack-victoria-1-3.el8.noarch

centos-release-rabbitmq-38-1-3.el8.noarch

centos-release-storage-common-2-2.el8.noarch

centos-release-virt-common-1-2.el8.noarch

Complete!

Method 2: local yum repository

The commands and output are identical to Method 2 in section 5.1, which was recorded on the compute node: mount /dev/cdrom on /mnt, move the existing .repo files into bak/, create abc.repo, then run yum clean all, yum repolist all, and install vim, net-tools, bash-completion, and chrony.

6. NTP clock synchronization

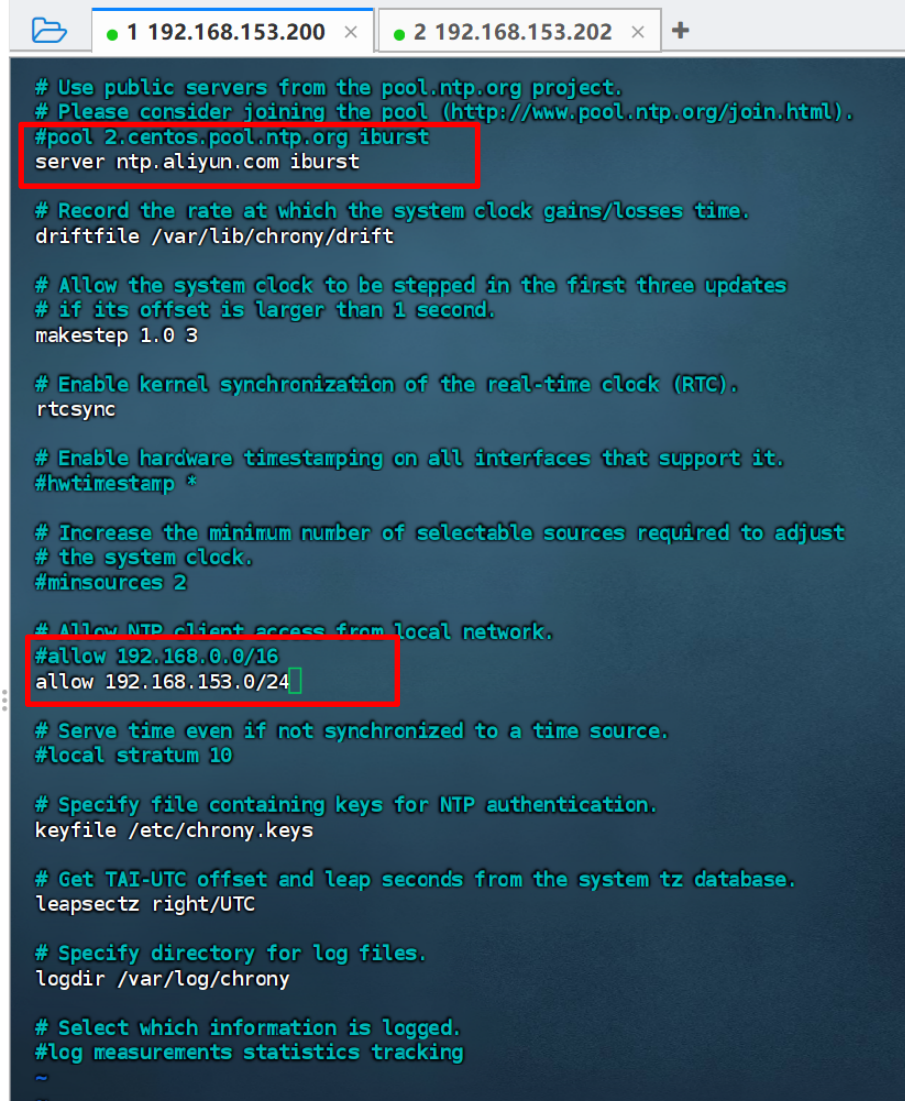

6.1. controller

bash

[root@controller yum.repos.d]# vim /etc/chrony.conf

[root@controller yum.repos.d]# systemctl start chronyd.service

[root@controller yum.repos.d]# systemctl enable chronyd.service

Created symlink /etc/systemd/system/multi-user.target.wants/chronyd.service → /usr/lib/systemd/system/chronyd.service.

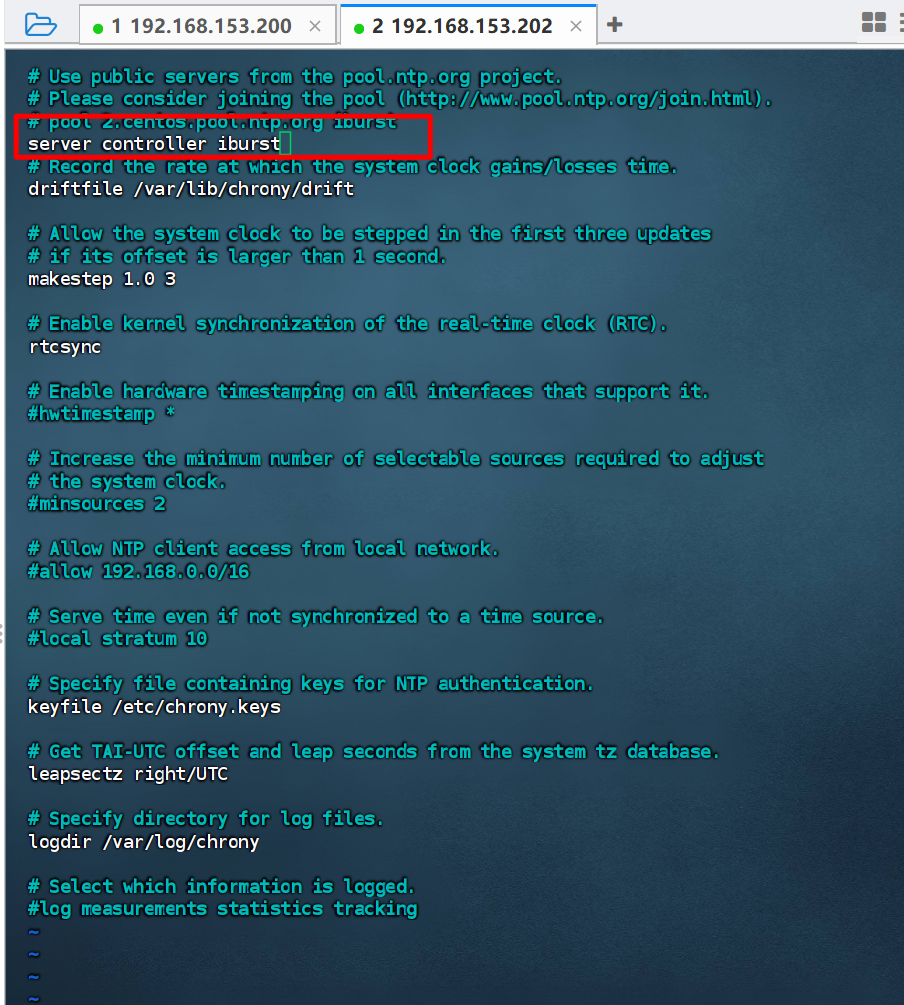

6.2 compute

bash

[root@compute yum.repos.d]# vim /etc/chrony.conf

[root@compute yum.repos.d]# systemctl start chronyd.service

[root@compute yum.repos.d]# systemctl enable chronyd.service

Created symlink /etc/systemd/system/multi-user.target.wants/chronyd.service → /usr/lib/systemd/system/chronyd.service.

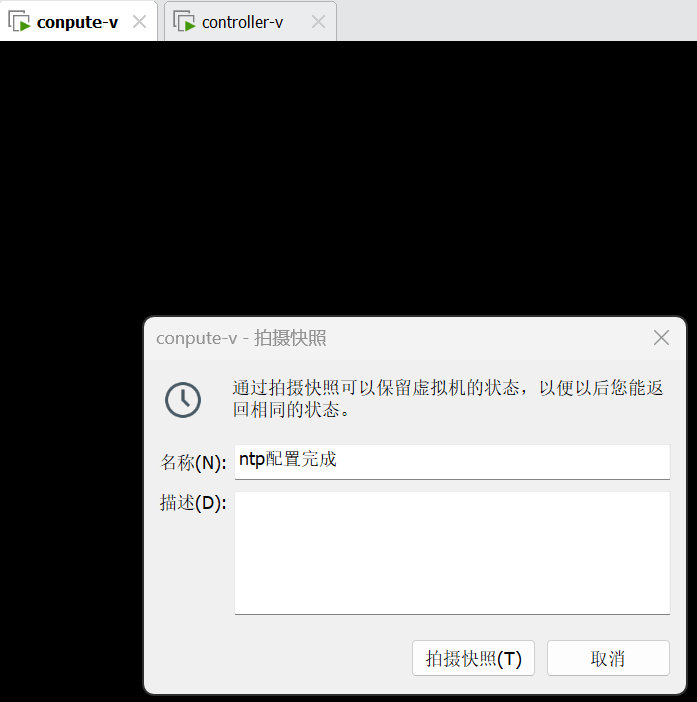
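The edits made to `/etc/chrony.conf` are only visible in the screenshots, so the exact lines used in this setup are not reproduced here. A common arrangement for a two-node lab is sketched below as an assumption: the controller allows the lab subnet and serves its local clock, and the compute node uses the controller as its time source. The helper names and the `192.168.153.0/24` subnet are illustrative.

```bash
#!/usr/bin/env bash
# Lines a time-serving controller would add to /etc/chrony.conf.
chrony_server_lines() {
    local subnet="$1"
    # allow: accept NTP clients from this subnet
    # local stratum 10: keep serving time even without an upstream source
    printf 'allow %s\nlocal stratum 10\n' "$subnet"
}

# Line a client (the compute node) would add to /etc/chrony.conf.
chrony_client_lines() {
    local server="$1"
    # iburst: speed up the initial synchronization
    printf 'server %s iburst\n' "$server"
}

echo "# controller:"
chrony_server_lines 192.168.153.0/24
echo "# compute:"
chrony_client_lines controller
```

After restarting chronyd, `chronyc sources` on the compute node shows whether the controller has been picked up as a source.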

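Take a snapshot of both virtual machines.

After taking the snapshots, start the virtual machines. Because NetworkManager was disabled earlier, the network interface is not brought up automatically and the node has no IP address. To work around this, start the NetworkManager service by hand and then stop it again: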

systemctl start NetworkManager

systemctl stop NetworkManager

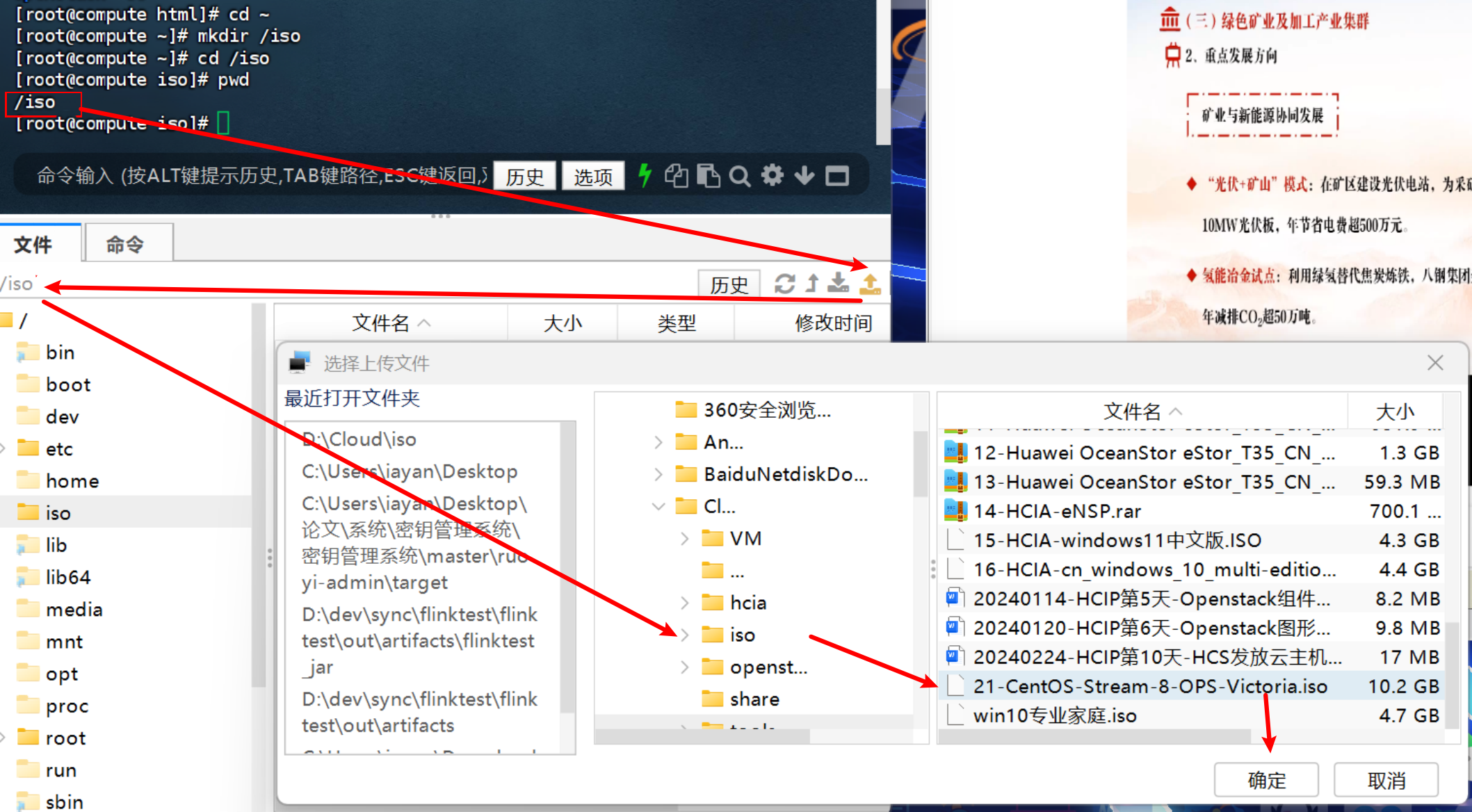

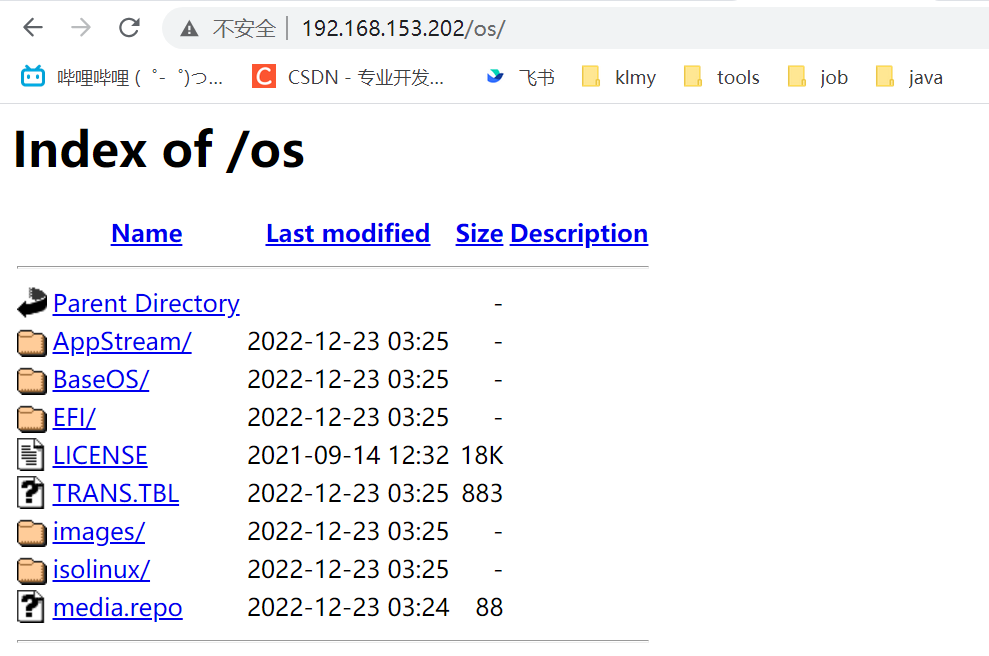

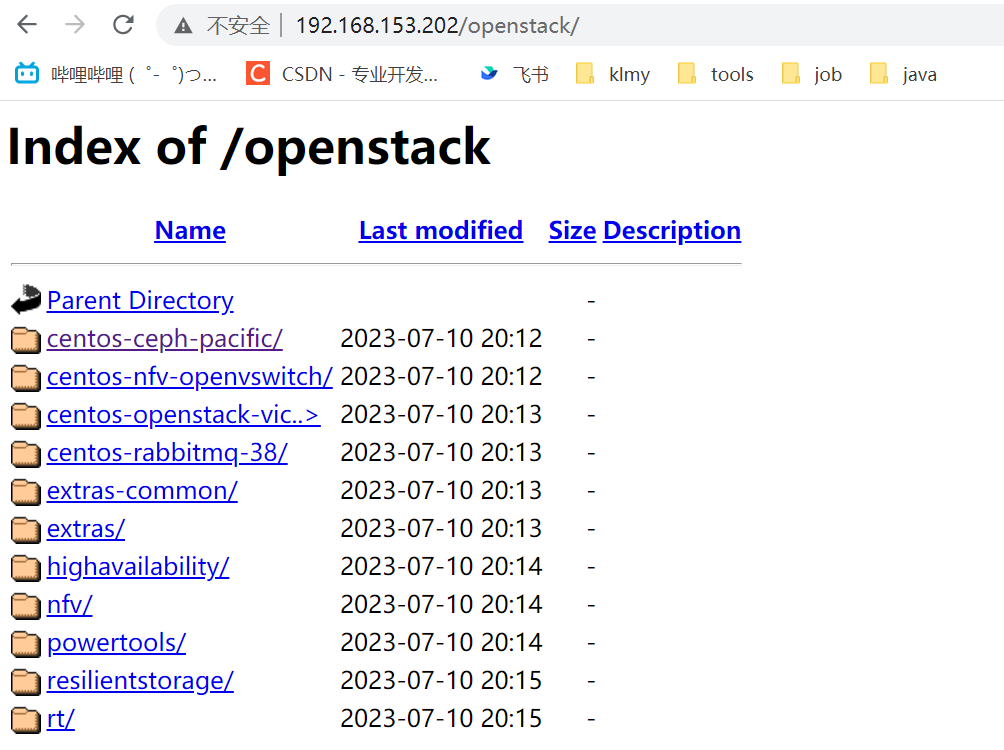

7. Configure the httpd service

Note: this is configured on the compute node only.

The reboot unmounted the installation disc, so mount it again:

bash

[root@compute ~]# mount /dev/cdrom /mnt

mount: /mnt: WARNING: device write-protected, mounted read-only.

[root@compute ~]# yum install -y httpd

Last metadata expiration check: 0:29:27 ago on Tue 21 Apr 2026 17:09:14.

Dependencies resolved.

==================================================================================

Package Architecture Version Repository Size

==================================================================================

Installing:

httpd

mod_http2-1.15.7-5.module_el8.6.0+1111+ce6f4ceb.x86_64

Complete!

[root@compute ~]# systemctl start httpd

[root@compute ~]# systemctl enable httpd

Created symlink /etc/systemd/system/multi-user.target.wants/httpd.service → /usr/lib/systemd/system/httpd.service.

[root@compute ~]# cd /var/www/html

[root@compute html]# mkdir os openstack

[root@compute html]# ls

openstack os

[root@compute html]# cd ~

[root@compute ~]# mkdir /iso

[root@compute ~]# cd /iso

Upload the two ISO image files to /iso:

bash

[root@compute iso]# ls

20-CentOS-Stream-8-x86_64-20221222-dvd1.iso 21-CentOS-Stream-8-OPS-Victoria.iso

[root@compute iso]# mount /iso/20-CentOS-Stream-8-x86_64-20221222-dvd1.iso /var/www/html/os/

mount: /var/www/html/os: WARNING: device write-protected, mounted read-only.

[root@compute iso]# mount /iso/21-CentOS-Stream-8-OPS-Victoria.iso /var/www/html/openstack/

mount: /var/www/html/openstack: WARNING: device write-protected, mounted read-only.

Test the result:

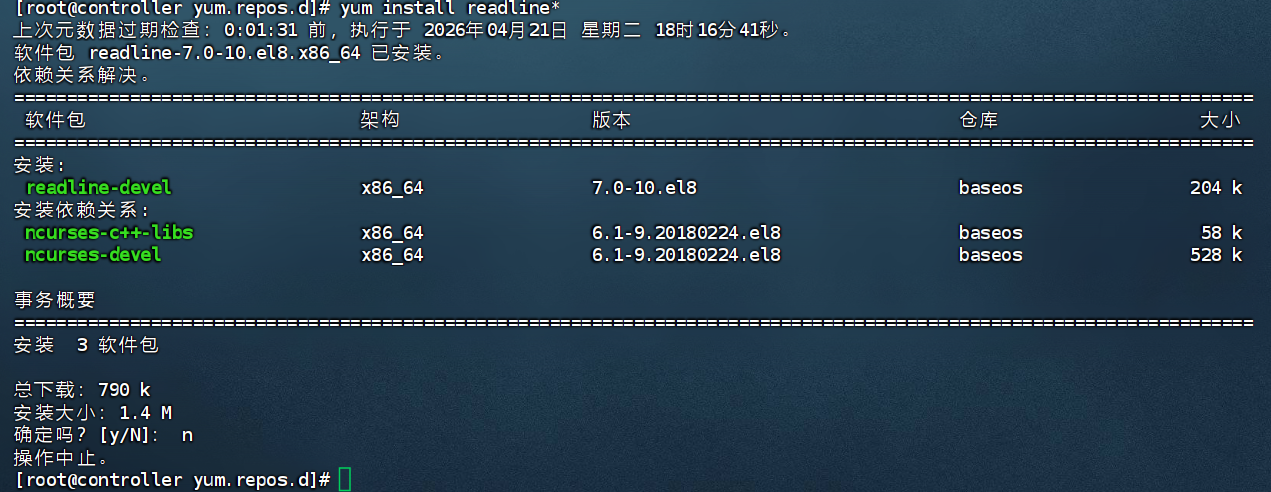

8. Configure the yum repositories

8.1 Control node

bash

[root@controller ~]# cd /etc/yum.repos.d/

[root@controller yum.repos.d]# ls

abc.repo bak

[root@controller yum.repos.d]# rm -rf *

[root@controller yum.repos.d]# ls

[root@controller yum.repos.d]# yum clean all

13 files removed

[root@controller yum.repos.d]# yum repolist all

No repositories available

[root@controller yum.repos.d]# ls

[root@controller yum.repos.d]# yum install gcc*

Error: There are no enabled repositories in "/etc/yum.repos.d", "/etc/yum/repos.d", "/etc/distro.repos.d".

[root@controller yum.repos.d]# vim phdvb.repo

[root@controller yum.repos.d]# cat phdvb.repo

[highavailability]

name=highavailability

baseurl=http://192.168.153.202/openstack/highavailability/

gpgcheck=0

[resilientstorage]

name=CentOS Stream 8 - ResilientStorage

baseurl=http://192.168.153.202/openstack/resilientstorage/

gpgcheck=0

[extras-common]

name=CentOS Stream 8 - Extras packages

baseurl=http://192.168.153.202/openstack/extras-common/

gpgcheck=0

[extras]

name=CentOS Stream $releasever - Extras

baseurl=http://192.168.153.202/openstack/extras/

gpgcheck=0

[centos-ceph-pacific]

name=CentOS - Ceph Pacific

baseurl=http://192.168.153.202/openstack/centos-ceph-pacific/

gpgcheck=0

[centos-rabbitmq-38]

name=CentOS-8 - RabbitMQ 38

baseurl=http://192.168.153.202/openstack/centos-rabbitmq-38/

gpgcheck=0

[centos-nfv-openvswitch]

name=CentOS Stream 8 - NFV OpenvSwitch

baseurl=http://192.168.153.202/openstack/centos-nfv-openvswitch/

gpgcheck=0

[baseos]

name=CentOS Stream 8 - BaseOS

baseurl=http://192.168.153.202/os/BaseOS/

gpgcheck=0

[appstream]

name=CentOS Stream 8 - AppStream

baseurl=http://192.168.153.202/os/AppStream/

gpgcheck=0

[centos-openstack-victoria]

name=CentOS 8 - OpenStack victoria

baseurl=http://192.168.153.202/openstack/centos-openstack-victoria/

gpgcheck=0

[powertools]

name=CentOS Stream 8 - PowerTools

baseurl=http://192.168.153.202/openstack/powertools/

gpgcheck=0

[nfv]

name=CentOS Stream 8 - nvf

baseurl=http://192.168.153.202/openstack/nfv/

gpgcheck=0

[rt]

name=CentOS Stream 8 - rt

baseurl=http://192.168.153.202/openstack/rt/

gpgcheck=0
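Every stanza in phdvb.repo follows the same four-line pattern, so the file can also be generated instead of typed by hand. The sketch below is an illustration only: `repo_stanza` is a hypothetical helper, and it prints just two of the stanzas listed above.

```bash
#!/usr/bin/env bash
# repo_stanza ID NAME BASEURL
# Prints one repository section with GPG checking disabled, as in phdvb.repo.
repo_stanza() {
    local id="$1" name="$2" baseurl="$3"
    printf '[%s]\nname=%s\nbaseurl=%s\ngpgcheck=0\n\n' "$id" "$name" "$baseurl"
}

# The compute node serves both ISOs over HTTP.
server="http://192.168.153.202"

repo_stanza baseos "CentOS Stream 8 - BaseOS" "$server/os/BaseOS/"
repo_stanza centos-openstack-victoria "CentOS 8 - OpenStack victoria" \
    "$server/openstack/centos-openstack-victoria/"
```

Redirecting the combined output to `/etc/yum.repos.d/phdvb.repo` gives a file in the same format; a missing `[id]` header line is a common reason for yum to reject a hand-written repo file.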

8.2 Compute node

bash

[root@compute iso]# cd /etc/yum.repos.d

[root@compute yum.repos.d]# ls

abc.repo bak

[root@compute yum.repos.d]# rm -rf *

[root@compute yum.repos.d]# ls

[root@compute yum.repos.d]# scp controller:/etc/yum.repos.d/phdvb.repo .

root@controller's password:

phdvb.repo 100% 1552 608.1KB/s 00:00

[root@compute yum.repos.d]# ls

phdvb.repo

[root@compute yum.repos.d]# yum clean all

13 files removed

[root@compute yum.repos.d]# yum repolist all

repo id repo name status

appstream CentOS Stream 8 - AppStream enabled

baseos CentOS Stream 8 - BaseOS enabled

centos-ceph-pacific CentOS - Ceph Pacific enabled

centos-nfv-openvswitch CentOS Stream 8 - NFV OpenvSwitch enabled

centos-openstack-victoria CentOS 8 - OpenStack victoria enabled

centos-rabbitmq-38 CentOS-8 - RabbitMQ 38 enabled

extras CentOS Stream 8 - Extras enabled

extras-common CentOS Stream 8 - Extras packages enabled

highavailability highavailability enabled

nfv CentOS Stream 8 - nvf enabled

powertools CentOS Stream 8 - PowerTools enabled

resilientstorage CentOS Stream 8 - ResilientStorage enabled

rt CentOS Stream 8 - rt enabled

Then test the repositories.

9 Install the packstack tool

Install it on the control node only.

bash

[root@controller ~]# yum install -y openstack-packstack

Last metadata expiration check: 0:20:18 ago on Tue 21 Apr 2026 18:16:41.

Dependencies resolved.

======================================================================================================================

Package Architecture Version Repository Size

======================================================================================================================

Installing:

openstack-packstack

Reboot both nodes.

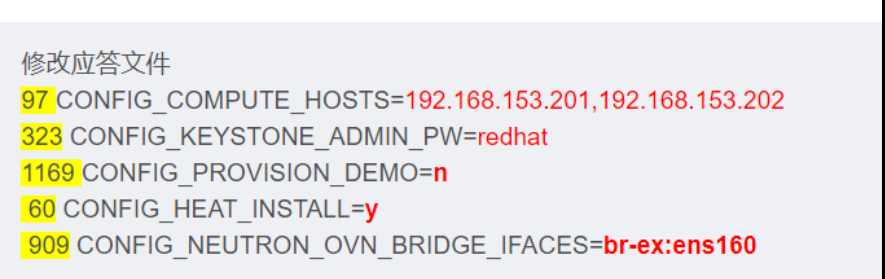

Generate the answer file and edit it:

packstack --gen-answer-file=phdvb.txt

bash

[root@controller ~]# packstack --gen-answer-file=phdvb.txt

Packstack changed given value to required value /root/.ssh/id_rsa.pub

Additional information:

* Parameter CONFIG_NEUTRON_L2_AGENT: You have chosen OVN Neutron backend. Note that this backend does not support the VPNaaS plugin. Geneve will be used as the encapsulation method for tenant networks

[root@controller ~]# vim phdvb.txt
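The changes made to phdvb.txt in vim are not listed in the text. For a two-node layout like this one, the keys that usually need attention include `CONFIG_CONTROLLER_HOST`, `CONFIG_COMPUTE_HOSTS`, and `CONFIG_NETWORK_HOSTS`. The sketch below shows one way to set such keys non-interactively; `set_answer` is a hypothetical helper, and the values in the usage comments are assumptions derived from the IP plan in section 1, not the author's confirmed settings.

```bash
#!/usr/bin/env bash
# set_answer FILE KEY VALUE
# Rewrites the "KEY=..." line of a packstack answer file in place.
set_answer() {
    local file="$1" key="$2" value="$3"
    # '|' is used as the sed delimiter so values may contain '/'.
    sed "s|^${key}=.*|${key}=${value}|" "$file" > "$file.tmp" &&
        mv "$file.tmp" "$file"
}

# Possible usage (values are assumptions; review the whole file before installing):
#   set_answer phdvb.txt CONFIG_CONTROLLER_HOST 192.168.153.200
#   set_answer phdvb.txt CONFIG_COMPUTE_HOSTS   192.168.153.202
#   set_answer phdvb.txt CONFIG_NETWORK_HOSTS   192.168.153.200
```

Keys that do not already exist in the file are left untouched, so a typo in a key name changes nothing; `grep '^CONFIG_COMPUTE_HOSTS=' phdvb.txt` confirms an edit took effect.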

Remount the ISO images on the compute node:

[root@compute iso]# ls

20-CentOS-Stream-8-x86_64-20221222-dvd1.iso 21-CentOS-Stream-8-OPS-Victoria.iso

[root@compute iso]# mount /iso/20-CentOS-Stream-8-x86_64-20221222-dvd1.iso /var/www/html/os/

mount: /var/www/html/os: WARNING: device write-protected, mounted read-only.

[root@compute iso]# mount /iso/21-CentOS-Stream-8-OPS-Victoria.iso /var/www/html/openstack/

mount: /var/www/html/openstack: WARNING: device write-protected, mounted read-only.
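Because the ISO mounts disappear on every reboot, they have to be repeated by hand as above. One alternative, not used in the original procedure, is to add loop-mount entries to `/etc/fstab` on the compute node. The sketch below only prints candidate lines; `fstab_line` is a hypothetical helper.

```bash
#!/usr/bin/env bash
# fstab_line ISO_PATH MOUNT_POINT
# Prints an /etc/fstab entry that loop-mounts an ISO read-only at boot.
fstab_line() {
    local iso="$1" mount_point="$2"
    printf '%s %s iso9660 loop,ro 0 0\n' "$iso" "$mount_point"
}

fstab_line /iso/20-CentOS-Stream-8-x86_64-20221222-dvd1.iso /var/www/html/os
fstab_line /iso/21-CentOS-Stream-8-OPS-Victoria.iso /var/www/html/openstack
```

After appending the lines to `/etc/fstab`, running `mount -a` is a quick way to confirm they parse and mount correctly before relying on them at the next reboot.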

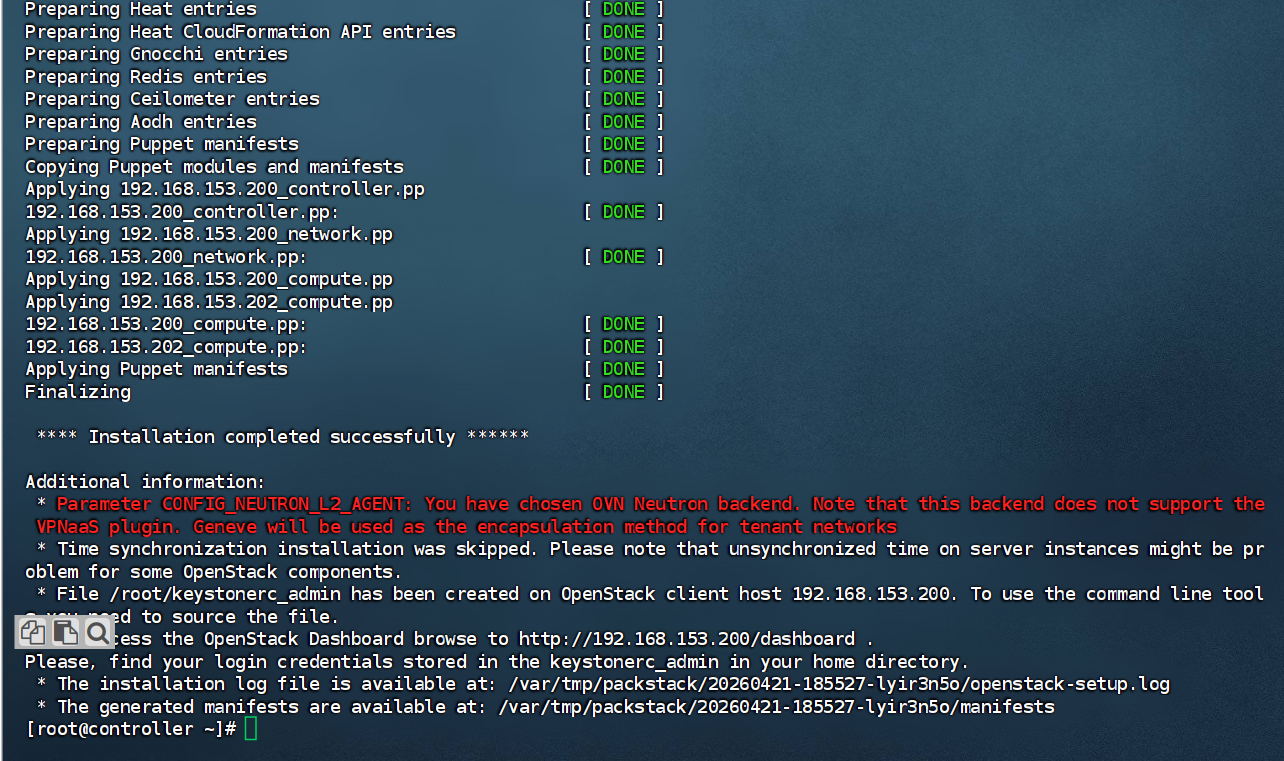

Run the installation: packstack --answer-file=phdvb.txt

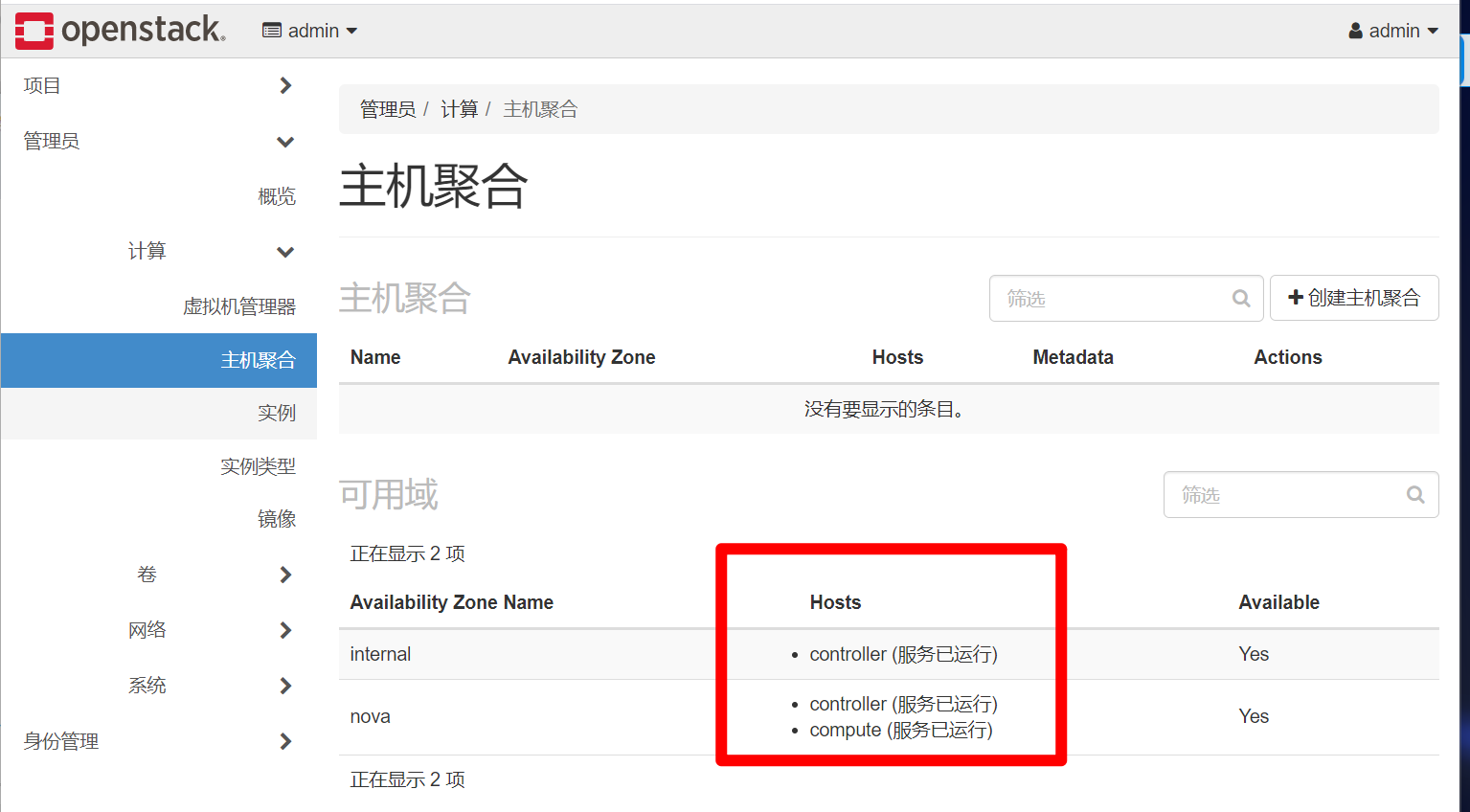

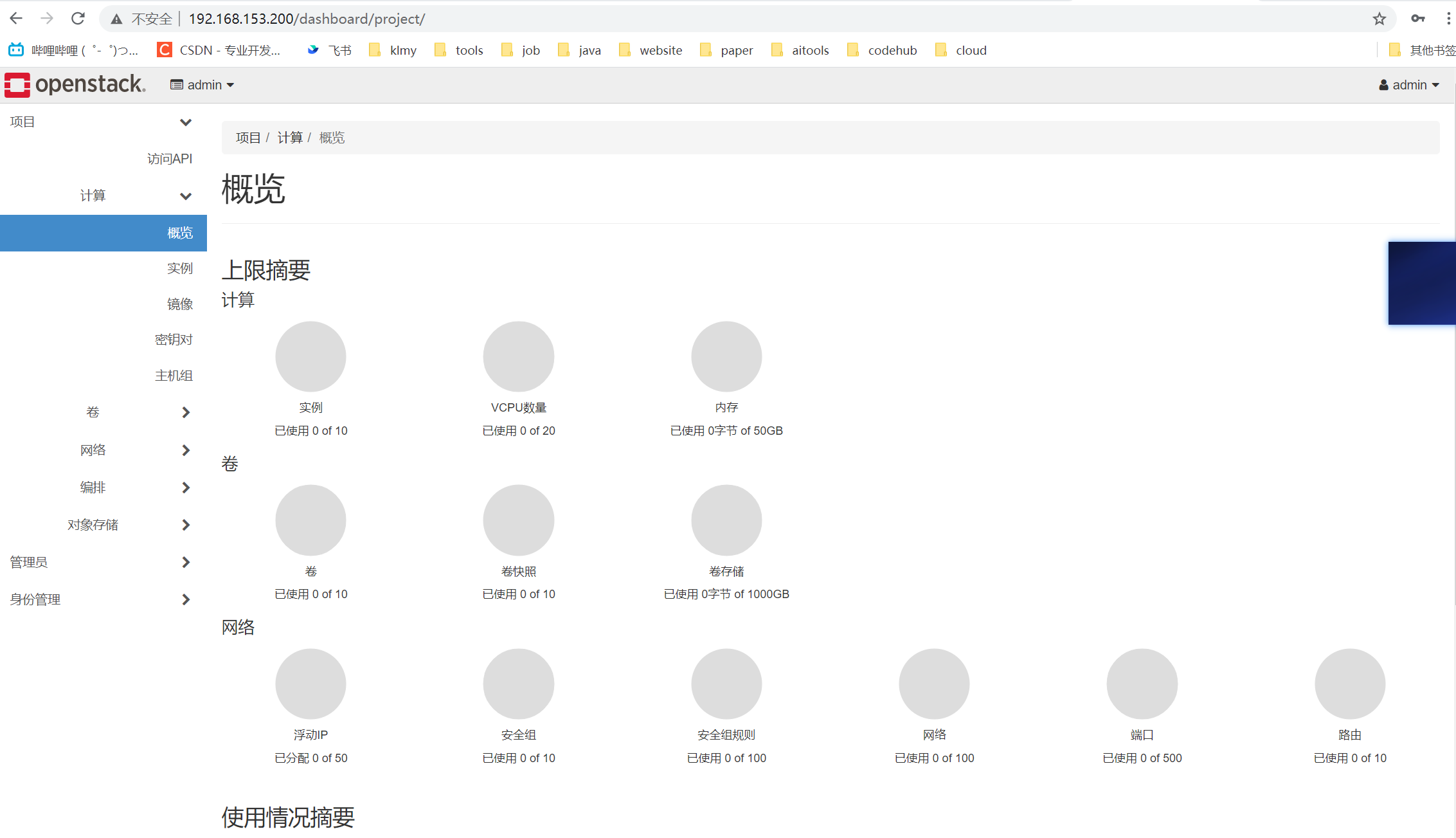

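Log in to the dashboard.

10. Notes

Some environments give the virtual machines fairly limited resources, so certain components may fail to come up on first boot, especially on the control node. Pay particular attention to the rabbitmq component.

If the services in the screenshot below are shown as down, check whether the rabbitmq service has started.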

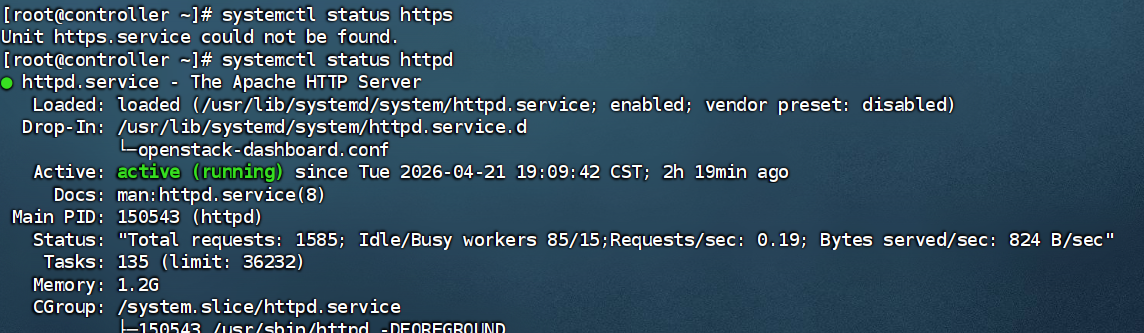

bash

[root@controller ~]# systemctl status rabbitmq-server.service

● rabbitmq-server.service - RabbitMQ broker

Loaded: loaded (/usr/lib/systemd/system/rabbitmq-server.service; enabled; vendor preset: disabled)

Drop-In: /etc/systemd/system/rabbitmq-server.service.d

└─90-limits.conf

Active: active (running) since Tue 2026-04-21 19:08:58 CST; 2h 18min ago

Main PID: 144598 (beam.smp)

Status: "Initialized"

Tasks: 91 (limit: 36232)

Memory: 132.2M

CGroup: /system.slice/rabbitmq-server.service

├─144598 /usr/lib64/erlang/erts-10.7.2.1/bin/beam.smp -W w -A 64 -MBas ageffcbf -MHas ageffcbf -MBlmbcs 51>

├─144698 /usr/lib64/erlang/erts-10.7.2.1/bin/epmd -daemon

├─144914 erl_child_setup 16384

├─145379 inet_gethost 4

└─145380 inet_gethost 4

Apr 21 19:08:58 controller rabbitmq-server[144598]: ########## Licensed under the MPL 1.1. Website: https://rabbit>

Apr 21 19:08:58 controller rabbitmq-server[144598]: Doc guides: https://rabbitmq.com/documentation.html

Apr 21 19:08:58 controller rabbitmq-server[144598]: Support: https://rabbitmq.com/contact.html

Apr 21 19:08:58 controller rabbitmq-server[144598]: Tutorials: https://rabbitmq.com/getstarted.html

Apr 21 19:08:58 controller rabbitmq-server[144598]: Monitoring: https://rabbitmq.com/monitoring.html

Apr 21 19:08:58 controller rabbitmq-server[144598]: Logs: /var/log/rabbitmq/rabbit@controller.log

Apr 21 19:08:58 controller rabbitmq-server[144598]: /var/log/rabbitmq/rabbit@controller_upgrade.log

Apr 21 19:08:58 controller rabbitmq-server[144598]: Config file(s): /etc/rabbitmq/rabbitmq.config

Apr 21 19:08:58 controller systemd[1]: Started RabbitMQ broker.

Apr 21 19:08:59 controller rabbitmq-server[144598]: Starting broker... completed with 0 plugins.

If the service is not running, start it manually:

systemctl start rabbitmq-server.service

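Once the environment is up, whether you can log in through the web interface depends on one more service: httpd (Apache). The dashboard is served through httpd, so check that httpd is running as well.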
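Both checks can be combined into one small helper for the control node. This is a sketch: `check_services` is a hypothetical function name, and it relies only on `systemctl is-active --quiet`, which returns success when a unit is running.

```bash
#!/usr/bin/env bash
# check_services UNIT...
# Reports each unit as active or not; returns non-zero if any unit is down.
check_services() {
    local svc failed=0
    for svc in "$@"; do
        if systemctl is-active --quiet "$svc"; then
            echo "$svc: active"
        else
            echo "$svc: NOT active (try: systemctl start $svc)"
            failed=1
        fi
    done
    return "$failed"
}

# Usage on the control node after a reboot:
#   check_services rabbitmq-server httpd
```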