Deploying an LNMP-Based ECShop E-Commerce Site on K8s

Project requirements

Deploy the LNMP-based ECShop e-commerce site on K8s.

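- Deploy an NFS server that exports the shared directory /ecshop.
- Deploy NFS dynamic volume provisioning with a StorageClass named ecshop.
- Deploy all e-commerce resources in the ecshop namespace.
- Set a ResourceQuota on the ecshop namespace:
  - cpu: "4"
  - memory: 8G
  - requests.cpu: "4"
  - requests.memory: 8G
  - requests.storage: 500G
- Set a LimitRange on the ecshop namespace:
  - requests.cpu: default 200m, min 100m
  - requests.memory: default 256Mi, min 256Mi
  - cpu (limit): default 500m, max 1
  - memory (limit): default 512Mi, max 2048Mi
- Deploy the mysql application:
  - Use a StatefulSet based on the mysql:5.7 image, with 1 replica
  - Storage is provided by NFS dynamic provisioning
  - Use a Secret to store the MySQL root password and the ECShop application credentials: user ecshop, password shizhan@123, database ecshop
  - Configure suitable probes for health monitoring
  - Configure an additional Service to expose the mysql application
- Deploy the php application:
  - Use a Deployment based on the php:7.2-fpm-ecshop image, with 1 replica
  - Configure suitable probes for health monitoring
  - Expose it with a ClusterIP Service
- Deploy the nginx application:
  - Use a Deployment based on the nginx:1.24 image, with 1 replica
  - Storage is provided by NFS dynamic provisioning
  - Store the nginx configuration file in a ConfigMap
  - Configure suitable probes for health monitoring
  - Configure a Service to expose the ecshop application
- Deploy the network components:
  - Deploy MetalLB
  - Deploy ingress-nginx, and provide the ingress-nginx controller with a self-signed certificate and private key for the applications' HTTPS traffic
- Configure an Ingress rule so the shop is reachable at https://shop.shizhan.cloud.
- Deploy Metrics Server and configure HPA rules for the php and nginx applications: minimum 2 Pods, maximum 5 Pods.
- Deploy Kubernetes Dashboard to monitor and manage the Kubernetes cluster, and configure an Ingress rule so the Dashboard is reachable at https://dashboard.shizhan.cloud.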

Site architecture

The architecture diagram groups the components as follows:

- Storage: NFS server 10.1.8.30 (/ecshop) → PV dynamic provisioning → PVC-mysql and PVC-ecshop
- Configuration: ConfigMap nginx-config; Secret with the MySQL accounts and passwords; Secret nginx-tls (shared TLS certificate)
- MySQL_GROUP: StatefulSet mysql, Service mysql (headless), Service mysql (ClusterIP)
- PHP_GROUP: Deployment php, Service php (ClusterIP)
- Nginx_GROUP: Deployment nginx, Service nginx (ClusterIP)
- Dashboard_GROUP: Deployment kubernetes-dashboard, Service kubernetes-dashboard (ClusterIP)
- Ingress path: MetalLB load balancer (assigns the external LB VIP) → Ingress-Nginx Controller (cluster entry gateway) → Ingress rules for shop.shizhan.cloud and dashboard.shizhan.cloud

Step 1: Deploy storage

1.1 Deploy the NFS service

On the NFS server:
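The export entry in the commands below is added with `echo ... >> /etc/exports`, which writes a duplicate line every time the command is rerun. Here is a minimal idempotent alternative, written as a sketch. The `add_export` helper is hypothetical, and it assumes bash and grep are available. The demo runs against a scratch file so it never touches the real /etc/exports:

```bash
#!/usr/bin/env bash
# add_export FILE LINE: append LINE to FILE unless the exact line is already there.
# (Hypothetical helper, not part of nfs-kernel-server.)
add_export() {
  local file=$1 line=$2
  touch "$file"
  # -q: quiet, -x: whole-line match, -F: fixed string (no regex), --: end of options
  if ! grep -qxF -- "$line" "$file"; then
    printf '%s\n' "$line" >> "$file"
  fi
}

# Demo on a scratch file: two calls still leave exactly one entry.
demo_file=$(mktemp)
add_export "$demo_file" "/ecshop *(rw,sync,no_root_squash,no_all_squash)"
add_export "$demo_file" "/ecshop *(rw,sync,no_root_squash,no_all_squash)"
cat "$demo_file"
```

On the real server, call `add_export /etc/exports "/ecshop *(rw,sync,no_root_squash,no_all_squash)"` and then reload the export table with `exportfs -ra`.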

```bash
# Install the NFS server
[root@master30 ~]# apt install -y nfs-kernel-server

# Create the shared directory
[root@master30 ~]# mkdir -p -m 777 /ecshop

# Configure the NFS export (the * client spec lets every node mount it)
[root@master30 ~]# echo "/ecshop *(rw,sync,no_root_squash,no_all_squash)" >> /etc/exports

# Start the service
[root@master30 ~]# systemctl restart nfs-server
```

On the NFS clients (worker31 and worker32):

```bash
## Install the NFS client
[root@worker31-32 ~]# apt install -y nfs-common

## Verify the export
[root@worker31-32 ~]# showmount -e master30
Export list for master30:
/ecshop *
```

1.2 Deploy the NFS provisioner

Kubernetes has no built-in dynamic provisioner for NFS, so we deploy an external one (nfs-subdir-external-provisioner).
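By default, nfs-subdir-external-provisioner creates one subdirectory per PersistentVolume under the exported path, named `<namespace>-<pvc-name>-<pv-name>`. Step 5.1 relies on this naming when it copies the ECShop code into `/ecshop/ecshop-ecshop-pvc-...`. A small helper that builds the path follows; the function name is illustrative:

```bash
#!/usr/bin/env bash
# pv_dir EXPORT NAMESPACE PVC PV: print the directory that
# nfs-subdir-external-provisioner uses by default for a volume.
pv_dir() {
  local export_root=$1 ns=$2 pvc=$3 pv=$4
  printf '%s/%s-%s-%s\n' "$export_root" "$ns" "$pvc" "$pv"
}

pv_dir /ecshop ecshop ecshop pvc-54472f60-0c65-4f41-bc38-e54a775f9c61
# -> /ecshop/ecshop-ecshop-pvc-54472f60-0c65-4f41-bc38-e54a775f9c61
```

Against the cluster, the PV name comes from the claim: `pv_dir /ecshop ecshop ecshop "$(kubectl -n ecshop get pvc ecshop -o jsonpath='{.spec.volumeName}')"`.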

```bash
# Deploy the NFS provisioner into the kube-storage namespace
[root@master30 ~]# kubectl create ns kube-storage
```

Create nfs-rbac.yaml, which grants the provisioner permission to manage PVs and PVCs:

```bash
[root@master30 ~]# cat > nfs-rbac.yaml <<'EOF'
apiVersion: v1
kind: ServiceAccount
metadata:
  name: nfs-client-provisioner
  namespace: kube-storage
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  name: nfs-client-provisioner-runner
rules:
  - apiGroups: [""]
    resources: ["persistentvolumes"]
    verbs: ["get", "list", "watch", "create", "delete"]
  - apiGroups: [""]
    resources: ["persistentvolumeclaims"]
    verbs: ["get", "list", "watch", "update"]
  - apiGroups: ["storage.k8s.io"]
    resources: ["storageclasses"]
    verbs: ["get", "list", "watch"]
  - apiGroups: [""]
    resources: ["events"]
    verbs: ["create", "update", "patch"]
  - apiGroups: [""]
    resources: ["endpoints"]
    verbs: ["get", "list", "watch", "create", "update", "patch"]
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: run-nfs-client-provisioner
subjects:
  - kind: ServiceAccount
    name: nfs-client-provisioner
    namespace: kube-storage
roleRef:
  kind: ClusterRole
  name: nfs-client-provisioner-runner
  apiGroup: rbac.authorization.k8s.io
EOF
```

```bash
[root@master30 ~]# kubectl apply -f nfs-rbac.yaml
```

Deploy the NFS provisioner application:

```bash
[root@master30 ~]# cat > nfs-provisioner.yaml <<'EOF'
apiVersion: apps/v1
kind: Deployment
metadata:
  name: nfs-client-provisioner
  namespace: kube-storage
spec:
  replicas: 1
  strategy:
    type: Recreate
  selector:
    matchLabels:
      app: nfs-client-provisioner
  template:
    metadata:
      labels:
        app: nfs-client-provisioner
    spec:
      serviceAccountName: nfs-client-provisioner
      volumes:
        - name: nfs-client-root
          nfs:
            server: 10.1.8.30   # NFS server IP (same as NFS_SERVER below)
            path: /ecshop       # the exported directory /ecshop
      containers:
        - name: nfs-client-provisioner
          image: registry.k8s.io/sig-storage/nfs-subdir-external-provisioner:v4.0.2
          volumeMounts:
            - name: nfs-client-root
              mountPath: /persistentvolumes
          env:
            - name: PROVISIONER_NAME
              value: fuseim.pri/ifs   # referenced by the StorageClass "provisioner" field
            - name: NFS_SERVER
              value: 10.1.8.30        # replace with your NFS server IP
            - name: NFS_PATH
              value: /ecshop          # the exported path /ecshop
EOF
```

```bash
# Deploy the NFS provisioner
[root@master30 ~]# kubectl apply -f nfs-provisioner.yaml

# Check the NFS provisioner
[root@master30 ~]# kubectl get deployments.apps -n kube-storage
NAME                     READY   UP-TO-DATE   AVAILABLE   AGE
nfs-client-provisioner   1/1     1            1           1m
```

Create the NFS StorageClass:

```bash
[root@master30 ~]# cat > ecshop-StorageClass.yaml <<'EOF'
apiVersion: storage.k8s.io/v1
kind: StorageClass
metadata:
  name: ecshop                # StorageClass is cluster-scoped, so it has no namespace
provisioner: fuseim.pri/ifs   # must match the provisioner's PROVISIONER_NAME
parameters:
  onDelete: retain            # keep the NFS directory when the PVC is deleted
  archiveOnDelete: "false"    # ignored when onDelete is set
# Choose the reclaim policy as needed
#reclaimPolicy: Retain
reclaimPolicy: Delete
allowVolumeExpansion: true
volumeBindingMode: Immediate
EOF
```

```bash
# Create the StorageClass
[root@master30 ~]# kubectl apply -f ecshop-StorageClass.yaml
[root@master30 ~]# kubectl get sc ecshop
NAME     PROVISIONER      RECLAIMPOLICY   VOLUMEBINDINGMODE   ALLOWVOLUMEEXPANSION   AGE
ecshop   fuseim.pri/ifs   Delete          Immediate           true                   11s
```

Step 2: Configure the namespace

2.1 Create the namespace

```bash
[root@master30 ~]# kubectl create ns ecshop
# Make ecshop the default namespace for the following kubectl commands
[root@master30 ~]# kubectl config set-context --current --namespace ecshop
```

2.2 Configure the ResourceQuota

```bash
[root@master30 ~]# kubectl create quota ecshop \
  --hard=cpu=4,memory=8G,requests.cpu=4,requests.memory=8G,requests.storage=500G
[root@master30 ~]# kubectl describe quota ecshop
Name:             ecshop
Namespace:        ecshop
Resource          Used  Hard
--------          ----  ----
cpu               0     4
memory            0     8G
requests.cpu      0     4
requests.memory   0     8G
requests.storage  0     500G
```

2.3 Configure the LimitRange
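A LimitRange does two things for each container. It fills in the default request and limit when the container omits them, and it rejects values outside min/max. The CPU rules of the LimitRange below can be sketched as follows, with values in millicores. The function is illustrative only. It skips the memory rules and the case where only a limit is set (Kubernetes then copies the limit into the request):

```bash
#!/usr/bin/env bash
# Sketch of the CPU rules in the ecshop LimitRange (all values in millicores).
DEFAULT_REQUEST=200   # defaultRequest.cpu: 200m
DEFAULT_LIMIT=500     # default.cpu: 500m
MIN=100               # min.cpu: 100m
MAX=1000              # max.cpu: 1

# effective_cpu [REQUEST] [LIMIT]: print "request limit" after defaulting,
# or print why the values would be rejected and return 1.
effective_cpu() {
  local req=${1:-$DEFAULT_REQUEST} lim=${2:-$DEFAULT_LIMIT}
  if (( req < MIN )); then echo "rejected: request ${req}m < min ${MIN}m"; return 1; fi
  if (( lim > MAX )); then echo "rejected: limit ${lim}m > max ${MAX}m"; return 1; fi
  if (( req > lim )); then echo "rejected: request ${req}m > limit ${lim}m"; return 1; fi
  echo "${req}m ${lim}m"
}

effective_cpu                      # no resources set -> 200m 500m
effective_cpu 50 || true           # -> rejected: request 50m < min 100m
effective_cpu 300 1500 || true     # -> rejected: limit 1500m > max 1000m
```

None of the manifests in this walkthrough set `resources`, so every container ends up with the first case: a 200m request and a 500m limit.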

```bash
[root@master30 ~]# cat > ecshop-LimitRange.yaml <<'EOF'
apiVersion: v1
kind: LimitRange
metadata:
  name: ecshop
  namespace: ecshop
spec:
  limits:
    - type: Container
      max:
        memory: 2048Mi
        cpu: 1
      min:
        memory: 256Mi
        cpu: 100m
      default:          # default limits
        memory: 512Mi
        cpu: 500m
      defaultRequest:   # default requests
        memory: 256Mi
        cpu: 200m
EOF
```

```bash
[root@master30 ~]# kubectl apply -f ecshop-LimitRange.yaml
[root@master30 ~]# kubectl describe limitranges ecshop
Name:       ecshop
Namespace:  ecshop
Type        Resource  Min    Max  Default Request  Default Limit  Max Limit/Request Ratio
----        --------  ---    ---  ---------------  -------------  -----------------------
Container   cpu       100m   1    200m             500m           -
Container   memory    256Mi  2Gi  256Mi            512Mi          -
```

Step 3: Deploy MySQL

3.1 Create the Secret

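Create a Secret that stores the MySQL root password and the ECShop database user and password, so the credentials do not appear in plain text in the manifests. The database name itself is passed to the container directly in the StatefulSet (MYSQL_DATABASE).

Values used here:

- MySQL root password: Root@123 (change as needed)
- ECShop database user: ecshop
- ECShop user password: ECShop@123 (change as needed; the project requirements specify shizhan@123)
- ECShop database: ecshop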
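Keep in mind that a Secret only base64-encodes its values and does not encrypt them. Anyone allowed to read the Secret can decode them. Here is the round trip for the ECShop password:

```bash
#!/usr/bin/env bash
# Secret data is stored base64-encoded; printf avoids encoding a trailing newline.
encoded=$(printf '%s' 'ECShop@123' | base64)
echo "$encoded"                      # -> RUNTaG9wQDEyMw==
printf '%s' "$encoded" | base64 -d   # -> ECShop@123
echo
```

Against the cluster, the same decoding reads a value back out of the Secret created below: `kubectl -n ecshop get secret mysql -o jsonpath='{.data.ecshop-db-password}' | base64 -d`.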

Create the Secret:

```bash
# Create a Secret holding the database credentials
[root@master30 ~]# kubectl create secret generic mysql \
  --namespace ecshop \
  --from-literal=mysql-root-password=Root@123 \
  --from-literal=ecshop-db-user=ecshop \
  --from-literal=ecshop-db-password=ECShop@123

# Verify the Secret
[root@master30 ~]# kubectl get secrets
NAME    TYPE     DATA   AGE
mysql   Opaque   3      10s
```

3.2 Create the Services

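Create mysql-service.yaml with two Services:

- mysql-headless: a headless Service that gives the Pods managed by the StatefulSet stable DNS names
- mysql: a regular ClusterIP Service that load-balances across the StatefulSet's Pods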
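When the headless Service is the StatefulSet's `serviceName`, each Pod gets a stable DNS record of the form `<pod>.<service>.<namespace>.svc.<cluster-domain>`. The regular Service instead resolves to a single ClusterIP. The sketch below prints both names and assumes the default cluster domain `cluster.local`:

```bash
#!/usr/bin/env bash
CLUSTER_DOMAIN=cluster.local   # assumption: the default cluster domain

# StatefulSet Pods are named <statefulset>-<ordinal>.
pod_fqdn() {   # pod_fqdn STATEFULSET ORDINAL SERVICE NAMESPACE
  printf '%s-%s.%s.%s.svc.%s\n' "$1" "$2" "$3" "$4" "$CLUSTER_DOMAIN"
}
svc_fqdn() {   # svc_fqdn SERVICE NAMESPACE
  printf '%s.%s.svc.%s\n' "$1" "$2" "$CLUSTER_DOMAIN"
}

pod_fqdn mysql 0 mysql-headless ecshop   # -> mysql-0.mysql-headless.ecshop.svc.cluster.local
svc_fqdn mysql ecshop                    # -> mysql.ecshop.svc.cluster.local
```

Inside the ecshop namespace the short name `mysql` also resolves, and the PHP test page in step 5.1 relies on that.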

```bash
[root@master30 ~]# cat > mysql-service.yaml <<'EOF'
apiVersion: v1
kind: Service
metadata:
  name: mysql-headless
  namespace: ecshop
spec:
  type: ClusterIP
  selector:
    app: mysql            ## matches the labels of the StatefulSet's Pods
  clusterIP: None         ## headless Service: ClusterIP set to None
  ports:
    - port: 3306
      targetPort: 3306
      name: mysql-port    ## named port for readability
---
apiVersion: v1
kind: Service
metadata:
  name: mysql
  namespace: ecshop
spec:
  type: ClusterIP
  selector:
    app: mysql            ## matches the labels of the StatefulSet's Pods
  ports:
    - port: 3306
      targetPort: 3306
      name: mysql-port    ## named port for readability
EOF

# Create the Services
[root@master30 ~]# kubectl apply -f mysql-service.yaml

# mysql-headless has no CLUSTER-IP
[root@master30 ~]# kubectl get svc
NAME             TYPE        CLUSTER-IP       EXTERNAL-IP   PORT(S)    AGE
mysql            ClusterIP   10.100.246.245   <none>        3306/TCP   6s
mysql-headless   ClusterIP   None             <none>        3306/TCP   6s
```

3.3 Deploy MySQL

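```bash
# Load the images on every worker node in advance. "-n k8s.io" puts them in the
# containerd namespace the kubelet uses (nerdctl defaults to "default" unless configured otherwise).
[root@worker31 ~]# nerdctl -n k8s.io load -i docker.io_library_mysql_5.7.tar
[root@worker31 ~]# nerdctl -n k8s.io load -i docker.io_library_nginx-1.24.tar
[root@worker31 ~]# nerdctl -n k8s.io load -i php-7.2-fpm-ecshop.tar
[root@worker32 ~]# nerdctl -n k8s.io load -i docker.io_library_mysql_5.7.tar
[root@worker32 ~]# nerdctl -n k8s.io load -i docker.io_library_nginx-1.24.tar
[root@worker32 ~]# nerdctl -n k8s.io load -i php-7.2-fpm-ecshop.tar
```

Create mysql-statefulset.yaml with a single replica (both Services above remain ClusterIP):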

```bash
[root@master30 ~]# cat > mysql-statefulset.yaml <<'EOF'
apiVersion: apps/v1
kind: StatefulSet
metadata:
  name: mysql
  namespace: ecshop
spec:
  serviceName: mysql-headless
  replicas: 1
  selector:
    matchLabels:
      app: mysql
  template:
    metadata:
      labels:
        app: mysql
    spec:
      containers:
        - name: mysql
          image: mysql:5.7
          ports:
            - containerPort: 3306
              name: mysql
          env:
            - name: MYSQL_ROOT_PASSWORD
              valueFrom:
                secretKeyRef:
                  name: mysql
                  key: mysql-root-password
            - name: MYSQL_USER
              valueFrom:
                secretKeyRef:
                  name: mysql
                  key: ecshop-db-user
            - name: MYSQL_PASSWORD
              valueFrom:
                secretKeyRef:
                  name: mysql
                  key: ecshop-db-password
            - name: MYSQL_DATABASE
              value: "ecshop"
          volumeMounts:
            - name: mysql-data            ## must match the volumeClaimTemplates name
              mountPath: /var/lib/mysql   ## MySQL data directory
          # 1. Startup probe: wait until MySQL has fully started (covers initialization time)
          startupProbe:
            exec:
              command:
                - sh
                - -c
                - "mysql -uroot -p${MYSQL_ROOT_PASSWORD} -e 'SELECT 1;'"
            initialDelaySeconds: 10   # first check 10 s after the container starts
            periodSeconds: 5          # check every 5 s
            failureThreshold: 30      # up to 30 retries (about 10 + 30 x 5 = 160 s in total)
            timeoutSeconds: 3         # 3 s timeout per check
          # 2. Liveness probe: restart the container if MySQL stops responding
          livenessProbe:
            exec:
              command:
                - sh
                - -c
                - "mysqladmin ping -uroot -p${MYSQL_ROOT_PASSWORD}"
            initialDelaySeconds: 30   # first check 30 s after start (skip initialization)
            periodSeconds: 10         # check every 10 s
            timeoutSeconds: 5         # 5 s timeout per check
            failureThreshold: 3       # unhealthy after 3 failures
          # 3. Readiness probe: check that MySQL can serve queries
          readinessProbe:
            exec:
              command:
                - sh
                - -c
                - "mysql -uroot -p${MYSQL_ROOT_PASSWORD} -e 'SHOW DATABASES;'"
            initialDelaySeconds: 20   # starts before the liveness probe
            periodSeconds: 5          # check every 5 s
            timeoutSeconds: 2         # 2 s timeout per check
            successThreshold: 1       # ready after 1 success
            failureThreshold: 2       # removed from Service endpoints after 2 failures
  volumeClaimTemplates:               ## PVC template: one PVC is created per Pod
    - metadata:
        name: mysql-data
      spec:
        accessModes: [ "ReadWriteOnce" ]
        storageClassName: "ecshop"    ## must match the StorageClass name
        resources:
          requests:
            storage: 10Gi
EOF
```
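The startupProbe above retries `SELECT 1` every 5 s, up to 30 times. When you script the next steps, you can use the same retry pattern on the client side, for example to wait until the database accepts connections before continuing. The sketch below is generic, and `retry` is a hypothetical helper, not a kubectl feature:

```bash
#!/usr/bin/env bash
# retry PERIOD ATTEMPTS CMD...: run CMD until it succeeds, sleeping PERIOD
# seconds between attempts; give up after ATTEMPTS failures (like failureThreshold).
retry() {
  local period=$1 attempts=$2 i
  shift 2
  for (( i = 1; i <= attempts; i++ )); do
    if "$@"; then
      return 0
    fi
    (( i < attempts )) && sleep "$period"
  done
  echo "gave up after ${attempts} attempts: $*" >&2
  return 1
}

retry 0 3 true && echo "ready"
```

For example, `retry 5 30 mysql -uecshop -pECShop@123 -h 10.100.246.245 -e 'SELECT 1;'` waits for the database through the mysql Service. `kubectl wait --for=condition=Ready pod/mysql-0 --timeout=180s` waits on the Pod's Ready condition instead, which is driven by the readinessProbe.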

```bash
[root@master30 ~]# kubectl apply -f mysql-statefulset.yaml
[root@master30 ~]# kubectl get statefulsets.apps
NAME    READY   AGE
mysql   1/1     52s
[root@master30 ~]# kubectl get pods
NAME      READY   STATUS    RESTARTS   AGE
mysql-0   1/1     Running   0          78s
[root@master30 ~]# kubectl get pvc
NAME                 STATUS   VOLUME                                     CAPACITY   ACCESS MODES   STORAGECLASS   VOLUMEATTRIBUTESCLASS   AGE
mysql-data-mysql-0   Bound    pvc-c40fcf08-0f68-481f-b266-29b03eca925b   10Gi       RWO            ecshop         <unset>                 3m2s
```

Verify the database through the ClusterIP of the mysql Service:

```bash
[root@master30 ~]# apt install -y mysql-client
[root@master30 ~]# mysql -uecshop -pECShop@123 -h 10.100.246.245 -e 'show databases;'
mysql: [Warning] Using a password on the command line interface can be insecure.
+--------------------+
| Database           |
+--------------------+
| information_schema |
| ecshop             |
+--------------------+
```

Step 4: Deploy PHP

4.1 Create the PVC

PHP and Nginx share the same storage, so the PVC uses ReadWriteMany. Create ecshop-pvc.yaml:

```bash
[root@master30 ~]# cat > ecshop-pvc.yaml <<'EOF'
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: ecshop
  namespace: ecshop
spec:
  accessModes:
    - ReadWriteMany
  resources:
    requests:
      storage: 10Gi
  storageClassName: ecshop
EOF
```

Apply it:

```bash
[root@master30 ~]# kubectl apply -f ecshop-pvc.yaml
[root@master30 ~]# kubectl get pvc ecshop
NAME     STATUS   VOLUME                                     CAPACITY   ACCESS MODES   STORAGECLASS   VOLUMEATTRIBUTESCLASS   AGE
ecshop   Bound    pvc-54472f60-0c65-4f41-bc38-e54a775f9c61   10Gi       RWX            ecshop         <unset>                 4s
```

4.2 Deploy PHP

Create php-deploy.yaml:

```bash
[root@master30 ~]# cat > php-deploy.yaml <<'EOF'
apiVersion: apps/v1
kind: Deployment
metadata:
  name: php
  namespace: ecshop
spec:
  replicas: 1
  selector:
    matchLabels:
      app: php
  template:
    metadata:
      labels:
        app: php
    spec:
      containers:
        - name: php
          image: php:7.2-fpm-ecshop
          ports:
            - containerPort: 9000
          # 1. Liveness probe: check that the FPM process is alive (essential)
          livenessProbe:
            tcpSocket:
              port: 9000
            initialDelaySeconds: 10   # first check 10 s after start (skip initialization)
            periodSeconds: 10         # check every 10 s
            timeoutSeconds: 5         # 5 s timeout per check
            failureThreshold: 3       # restart the container after 3 failures
          # 2. Readiness probe: check that FPM accepts connections
          #    (probing the FPM status page would be more precise)
          readinessProbe:
            tcpSocket:
              port: 9000
            initialDelaySeconds: 5    # starts before the liveness probe
            periodSeconds: 5          # check every 5 s
            timeoutSeconds: 3         # 3 s timeout per check
            successThreshold: 1       # ready after 1 success
            failureThreshold: 2       # removed from Service endpoints after 2 failures
          volumeMounts:
            ## PHP web root; must be the same path as the nginx root directory
            - name: ecshop-data
              mountPath: /usr/share/nginx/html
      volumes:
        ## PHP web root
        - name: ecshop-data
          persistentVolumeClaim:
            claimName: ecshop
EOF
```
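The `tcpSocket` probes above only check that something accepts a TCP connection on port 9000; they do not prove that PHP-FPM can execute a script. A roughly equivalent manual check uses bash's `/dev/tcp` redirection. The sketch assumes bash and coreutils `timeout` exist wherever you run it; `tcp_check` is an illustrative name:

```bash
#!/usr/bin/env bash
# tcp_check HOST PORT [TIMEOUT]: succeed if a TCP connection can be opened,
# roughly what a tcpSocket probe does. /dev/tcp is a bash feature, not a real file.
tcp_check() {
  local host=$1 port=$2 secs=${3:-3}
  timeout "$secs" bash -c "exec 3<>/dev/tcp/${host}/${port}" 2>/dev/null
}

if tcp_check 127.0.0.1 1 1; then echo "port open"; else echo "port closed"; fi
```

For example, from a shell in an nginx Pod (the nginx:1.24 image is Debian-based and ships bash), `tcp_check php 9000` tests the same thing the probes do, but through the php Service.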

```bash
[root@master30 ~]# kubectl apply -f php-deploy.yaml
[root@master30 ~]# kubectl get deploy php
NAME   READY   UP-TO-DATE   AVAILABLE   AGE
php    1/1     1            1           6s
```

4.3 Create the Service

Create php-service.yaml:

```bash
[root@master30 ~]# cat > php-service.yaml <<'EOF'
---
apiVersion: v1
kind: Service
metadata:
  name: php
  namespace: ecshop
spec:
  type: ClusterIP
  selector:
    app: php
  ports:
    - port: 9000
      targetPort: 9000
EOF
```

```bash
[root@master30 ~]# kubectl apply -f php-service.yaml
[root@master30 ~]# kubectl get svc php
NAME   TYPE        CLUSTER-IP      EXTERNAL-IP   PORT(S)    AGE
php    ClusterIP   10.111.26.134   <none>        9000/TCP   5s
```

Step 5: Deploy Nginx

5.1 Deploy the ECShop code

```bash
## On the NFS server (IP 10.1.8.30), unpack the ECShop source code
[root@master30 ~]# unzip ECShop_V4.1.20_UTF8.zip

# Look up the PV bound to the ecshop PVC; its data directory on NFS is named after it
[root@master30 ~]# kubectl get pvc ecshop
NAME     STATUS   VOLUME                                     CAPACITY   ACCESS MODES   STORAGECLASS   VOLUMEATTRIBUTESCLASS   AGE
ecshop   Bound    pvc-54472f60-0c65-4f41-bc38-e54a775f9c61   10Gi       RWX            ecshop         <unset>                 10m
[root@master30 ~]# cp -a ECShop_V4.1.20_UTF8_release20250416/source/ecshop/* /ecshop/ecshop-ecshop-pvc-54472f60-0c65-4f41-bc38-e54a775f9c61/
[root@master30 ~]# chmod 777 -R /ecshop/ecshop-ecshop-pvc-54472f60-0c65-4f41-bc38-e54a775f9c61/

# Create test pages on the storage server
[root@master30 ~]# echo Hello World From Nginx > /ecshop/ecshop-ecshop-pvc-54472f60-0c65-4f41-bc38-e54a775f9c61/index.html
[root@master30 ~]# echo "<?php phpinfo(); ?>" > /ecshop/ecshop-ecshop-pvc-54472f60-0c65-4f41-bc38-e54a775f9c61/phpinfo.php
[root@master30 ~]# cat > /ecshop/ecshop-ecshop-pvc-54472f60-0c65-4f41-bc38-e54a775f9c61/php-mysql.php <<'EOF'
<?php
// Connect through the mysql Service with the ECShop user from the Secret
$link = mysqli_connect('mysql', 'ecshop', 'ECShop@123');
if ($link) {
    echo "Connect Mysql Success !\n";
    mysqli_close($link);  // close only a connection that was actually opened
} else {
    echo "Connect Mysql Failed !\n";
}
?>
EOF
```

5.2 Create the Nginx configuration

```bash
[root@master30 ~]# cat > nginx-configmap.yaml <<'EOF'
apiVersion: v1
kind: ConfigMap
metadata:
  name: nginx
  namespace: ecshop
data:
  nginx.conf: |
    worker_processes auto;
    events {
        worker_connections 1024;
    }
    http {
        include /etc/nginx/mime.types;
        default_type application/octet-stream;
        sendfile on;
        keepalive_timeout 65;

        # ECShop site
        server {
            listen 80;
            server_name shop.shizhan.cloud;

            # Web root
            root /usr/share/nginx/html;
            index index.php index.html;

            # Forward PHP requests to the php Service in the ecshop namespace.
            # FQDN format: <service>.<namespace>.svc.cluster.local; because php runs in
            # the same namespace as nginx, the short name "php" would also resolve.
            location ~ \.php$ {
                fastcgi_pass php.ecshop.svc.cluster.local:9000;
                fastcgi_index index.php;
                fastcgi_param SCRIPT_FILENAME $document_root$fastcgi_script_name;
                include fastcgi_params;
                fastcgi_param HTTPS on;
            }

            # Cache static assets
            location ~* \.(jpg|jpeg|png|gif|css|js)$ {
                expires 30d;
                add_header Cache-Control "public, max-age=2592000";
            }
        }
    }
EOF
```
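nginx checks regex locations in the order they appear. A URI ending in `.php` therefore goes to PHP-FPM, while image, CSS, and JS files are served directly with a 30-day cache header. Because nginx sets `SCRIPT_FILENAME` to `$document_root$fastcgi_script_name`, PHP-FPM opens that exact path inside its own container. That is why the php Deployment mounts the PVC at the same /usr/share/nginx/html path. The bash sketch below models this routing. It is a simplification that ignores the `index` directive and other location types, and /images/logo.PNG is a hypothetical path:

```bash
#!/usr/bin/env bash
# Sketch of how the server block routes a request URI.
# document_root mirrors the "root" directive in the ConfigMap.
document_root=/usr/share/nginx/html
php_re='\.php$'                            # location ~ \.php$   (case-sensitive)
static_re='\.(jpg|jpeg|png|gif|css|js)$'   # location ~* ...     (case-insensitive)

route() {
  local uri=$1
  if [[ $uri =~ $php_re ]]; then
    # Forwarded to PHP-FPM, which opens SCRIPT_FILENAME inside the php container
    echo "fastcgi ${document_root}${uri}"
  elif [[ ${uri,,} =~ $static_re ]]; then
    # ${uri,,} lowercases the URI to mimic the case-insensitive ~* match
    echo "static+expires ${document_root}${uri}"
  else
    echo "static ${document_root}${uri}"
  fi
}

route /phpinfo.php       # -> fastcgi /usr/share/nginx/html/phpinfo.php
route /images/logo.PNG   # -> static+expires /usr/share/nginx/html/images/logo.PNG
route /index.html        # -> static /usr/share/nginx/html/index.html
```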

```bash
[root@master30 ~]# kubectl apply -f nginx-configmap.yaml
[root@master30 ~]# kubectl get cm nginx
NAME    DATA   AGE
nginx   1      27s
```

5.4 Deploy Nginx

Create nginx-deploy.yaml:

```bash
[root@master30 ~]# cat > nginx-deploy.yaml <<'EOF'
apiVersion: apps/v1
kind: Deployment
metadata:
  name: nginx
  namespace: ecshop
spec:
  replicas: 1
  selector:
    matchLabels:
      app: nginx
  template:
    metadata:
      labels:
        app: nginx
    spec:
      containers:
        - name: nginx
          image: nginx:1.24
          ports:
            - containerPort: 80
            - containerPort: 443
          # 1. Startup probe (optional): allow time for the first start
          startupProbe:
            tcpSocket:
              port: 80
            initialDelaySeconds: 3
            periodSeconds: 2
            failureThreshold: 10      # about 3 + 10 x 2 = 23 s before giving up
          # 2. Liveness probe: check that the core Nginx service is alive
          livenessProbe:
            exec:
              # Fetch the test page index.html (created in 5.1); HTTP 200 means healthy
              command:
                - sh
                - -c
                - "curl -s -o /dev/null -w '%{http_code}' http://127.0.0.1/index.html | grep '200'"
            initialDelaySeconds: 10   # first check 10 s after start
            periodSeconds: 10         # check every 10 s
            timeoutSeconds: 5         # 5 s timeout per check
            failureThreshold: 3       # restart the container after 3 failures
          # 3. Readiness probe: check that Nginx can serve external requests
          readinessProbe:
            httpGet:
              path: /                 # application root (replace with your actual path)
              port: 80
              scheme: HTTP
            initialDelaySeconds: 5    # starts before the liveness probe
            periodSeconds: 5          # check every 5 s
            timeoutSeconds: 2         # 2 s timeout per check
            successThreshold: 1       # ready after 1 success
            failureThreshold: 2       # removed from Service endpoints after 2 failures
          volumeMounts:
            - name: nginx
              mountPath: /etc/nginx/nginx.conf   ## mount the Nginx configuration
              subPath: nginx.conf
            - name: ecshop-data
              mountPath: /usr/share/nginx/html   ## mount the ECShop code directory
      volumes:
        - name: nginx
          configMap:
            name: nginx
        - name: ecshop-data
          persistentVolumeClaim:
            claimName: ecshop
EOF
```

```bash
[root@master30 ~]# kubectl apply -f nginx-deploy.yaml
[root@master30 ~]# kubectl get deploy nginx
NAME    READY   UP-TO-DATE   AVAILABLE   AGE
nginx   1/1     1            1           21s
```

5.5 Create the Service

Create nginx-service.yaml:

```bash
[root@master30 ~]# cat > nginx-service.yaml <<'EOF'
---
apiVersion: v1
kind: Service
metadata:
  name: nginx
  namespace: ecshop
spec:
  type: ClusterIP
  selector:
    app: nginx
  ports:
    - port: 80
      targetPort: 80
      name: http
EOF
```

```bash
[root@master30 ~]# kubectl apply -f nginx-service.yaml
[root@master30 ~]# kubectl get svc nginx
NAME    TYPE        CLUSTER-IP       EXTERNAL-IP   PORT(S)   AGE
nginx   ClusterIP   10.109.217.173   <none>        80/TCP    5s
```

5.6 Test Nginx

```bash
# Test through the ClusterIP of the nginx Service shown in 5.5 (10.109.217.173)
# Welcome page
[root@master30 ~]# curl http://10.109.217.173/index.html
Hello World From Nginx

# PHP page
[root@master30 ~]# curl http://10.109.217.173/phpinfo.php -s | grep -o 'PHP Version 7.2'
PHP Version 7.2

# MySQL connection
[root@master30 ~]# curl http://10.109.217.173/php-mysql.php
Connect Mysql Success !
```

Step 6: Deploy the LoadBalancer (MetalLB)
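The MetalLB address pool configured below, 10.1.8.50-10.1.8.80, must not overlap addresses already in use on the node network, such as 10.1.8.30 (master30, the NFS server). The sketch below counts the addresses in the range and checks whether an address falls inside it. The helper names are illustrative:

```bash
#!/usr/bin/env bash
# ip2int A.B.C.D: convert a dotted IPv4 address to an integer.
ip2int() {
  local IFS=. a b c d
  read -r a b c d <<< "$1"
  echo $(( (a << 24) | (b << 16) | (c << 8) | d ))
}

# in_range IP START END: succeed if START <= IP <= END.
in_range() {
  local ip start end
  ip=$(ip2int "$1"); start=$(ip2int "$2"); end=$(ip2int "$3")
  (( ip >= start && ip <= end ))
}

start=10.1.8.50 end=10.1.8.80
echo "pool size: $(( $(ip2int "$end") - $(ip2int "$start") + 1 ))"   # -> pool size: 31
in_range 10.1.8.30 "$start" "$end" || echo "10.1.8.30 is outside the pool"
in_range 10.1.8.50 "$start" "$end" && echo "10.1.8.50 is in the pool"
```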

```bash
[root@master30 ~]# tar -xf metallb-0.14.8.tar.gz

# See which images MetalLB uses
[root@master30 ~]# grep image: metallb-0.14.8/config/manifests/metallb-native.yaml
image: quay.io/metallb/controller:v0.14.8
image: quay.io/metallb/speaker:v0.14.8

# Load controller:v0.14.8 and speaker:v0.14.8 from metallb-images-v0.14.8.zip
# (unpack the archive the same way on worker32 and master30; master30 only loads the speaker image)
[root@worker31 ~]# unzip metallb-images-v0.14.8.zip
[root@worker31 ~]# cd metallb-images-v0.14.8/
[root@worker31 metallb-images-v0.14.8]# nerdctl -n k8s.io load -i metallb-controller-v0.14.8.tar
[root@worker31 metallb-images-v0.14.8]# nerdctl -n k8s.io load -i metallb-speaker-v0.14.8.tar
[root@worker32 metallb-images-v0.14.8]# nerdctl -n k8s.io load -i metallb-controller-v0.14.8.tar
[root@worker32 metallb-images-v0.14.8]# nerdctl -n k8s.io load -i metallb-speaker-v0.14.8.tar
[root@master30 metallb-images-v0.14.8]# nerdctl -n k8s.io load -i metallb-speaker-v0.14.8.tar

# Deploy MetalLB
[root@master30 ~]# kubectl apply -f metallb-0.14.8/config/manifests/metallb-native.yaml

# Wait until all Pods are running before continuing
[root@master30 ~]# kubectl get pods -n metallb-system
NAME                              READY   STATUS    RESTARTS   AGE
pod/controller-786f9df989-98bjh   1/1     Running   0          85s
pod/speaker-gthhx                 1/1     Running   0          85s
pod/speaker-jwj25                 1/1     Running   0          85s
pod/speaker-s5zvq                 1/1     Running   0          85s

# Configure the address pool
[root@master30 ~]# cat << 'EOF' > ippool.yaml
apiVersion: metallb.io/v1beta1
kind: IPAddressPool
metadata:
  name: first-pool
  namespace: metallb-system
spec:
  addresses:
    - 10.1.8.50-10.1.8.80
EOF
[root@master30 ~]# kubectl apply -f ippool.yaml

# Configure Layer 2 advertisement (with no pools listed, it announces all IPAddressPools)
[root@master30 ~]# cat << 'EOF' > L2.yaml
apiVersion: metallb.io/v1beta1
kind: L2Advertisement
metadata:
  name: example
  namespace: metallb-system
EOF
[root@master30 ~]# kubectl apply -f L2.yaml
```

Step 7: Deploy Ingress

```bash
# On the worker nodes, load the controller:v1.11.2 and kube-webhook-certgen:v1.4.3 images
[root@worker31 ~]# nerdctl -n k8s.io load -i ingress-nginx-controller-v1.11.2.tar
[root@worker31 ~]# nerdctl -n k8s.io load -i ingress-nginx-kube-webhook-certgen-v1.4.3.tar
[root@worker32 ~]# nerdctl -n k8s.io load -i ingress-nginx-controller-v1.11.2.tar
[root@worker32 ~]# nerdctl -n k8s.io load -i ingress-nginx-kube-webhook-certgen-v1.4.3.tar
[root@master30 ~]# tar -xf ingress-nginx-controller-v1.11.2.tar.gz
[root@master30 ~]# kubectl apply -f ingress-nginx-controller-v1.11.2/deploy/static/provider/cloud/deploy.yaml
# MetalLB assigns the controller's LoadBalancer Service an address from the pool (here 10.1.8.50)
[root@master30 ~]# kubectl get svc -n ingress-nginx
NAME                                 TYPE           CLUSTER-IP     EXTERNAL-IP   PORT(S)                      AGE
ingress-nginx-controller             LoadBalancer   10.108.53.60   10.1.8.50     80:31003/TCP,443:31797/TCP   5s
ingress-nginx-controller-admission   ClusterIP      10.100.8.74    <none>        443/TCP                      5s
```

Prepare the certificate
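The command below sets only the certificate's CN. Modern browsers such as Chrome match host names against the subjectAltName extension rather than the CN. Adding a SAN therefore makes the certificate cover shop.shizhan.cloud and dashboard.shizhan.cloud, although the browser still warns because the certificate is self-signed. The variant below assumes OpenSSL 1.1.1 or later, which is required for `-addext`:

```bash
#!/usr/bin/env bash
set -euo pipefail
# Self-signed wildcard certificate that also carries a subjectAltName extension.
openssl req -x509 -nodes -days 3650 -newkey rsa:2048 \
  -keyout tls.key -out tls.crt \
  -subj "/CN=*.shizhan.cloud" \
  -addext "subjectAltName=DNS:*.shizhan.cloud,DNS:shizhan.cloud"

# Show the SAN entries that browsers will check.
openssl x509 -in tls.crt -noout -text | grep -A1 'Subject Alternative Name'
```

The resulting tls.crt and tls.key go into `kubectl create secret tls` exactly as before.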

```bash
## Generate a self-signed certificate
[root@master30 ~]# openssl req -x509 -nodes -days 3650 -newkey rsa:2048 \
  -keyout tls.key -out tls.crt \
  -subj "/CN=*.shizhan.cloud"

## Store the certificate in a TLS Secret
[root@master30 ~]# kubectl create secret tls ingress-nginx-tls \
  --namespace ecshop \
  --cert=tls.crt \
  --key=tls.key
[root@master30 ~]# kubectl get secrets ingress-nginx-tls
NAME                TYPE                DATA   AGE
ingress-nginx-tls   kubernetes.io/tls   2      28s
```

Step 8: Initialize the site

8.1 Configure the Ingress rule

```bash
[root@master30 ~]# cat > shop-ingress.yaml <<'EOF'
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: tls-ingress-shop
  namespace: ecshop   # same namespace as the ingress-nginx-tls Secret
  annotations:
    nginx.ingress.kubernetes.io/ssl-redirect: "true"
    nginx.ingress.kubernetes.io/force-ssl-redirect: "true"
spec:
  ingressClassName: nginx
  tls:
    - hosts:
        - shop.shizhan.cloud
      secretName: ingress-nginx-tls
  rules:
    - host: shop.shizhan.cloud
      http:
        paths:
          - path: /
            pathType: Prefix
            backend:
              service:
                name: nginx
                port:
                  number: 80
EOF
```

```bash
[root@master30 ~]# kubectl apply -f shop-ingress.yaml
[root@master30 ~]# kubectl describe ingress tls-ingress-shop
Name:             tls-ingress-shop
Labels:           <none>
Namespace:        ecshop
Address:          10.1.8.50
Ingress Class:    nginx
Default backend:  <default>
TLS:
  ingress-nginx-tls terminates shop.shizhan.cloud
Rules:
  Host                Path  Backends
  ----                ----  --------
  shop.shizhan.cloud
                      /   nginx:80 (10.224.103.70:80)
Annotations:          nginx.ingress.kubernetes.io/force-ssl-redirect: true
                      nginx.ingress.kubernetes.io/ssl-redirect: true
Events:               <none>
```

8.2 Access the site

To access the site from Windows, first configure name resolution by adding this line to C:\Windows\System32\drivers\etc\hosts:

```
10.1.8.50 shop.shizhan.cloud
```

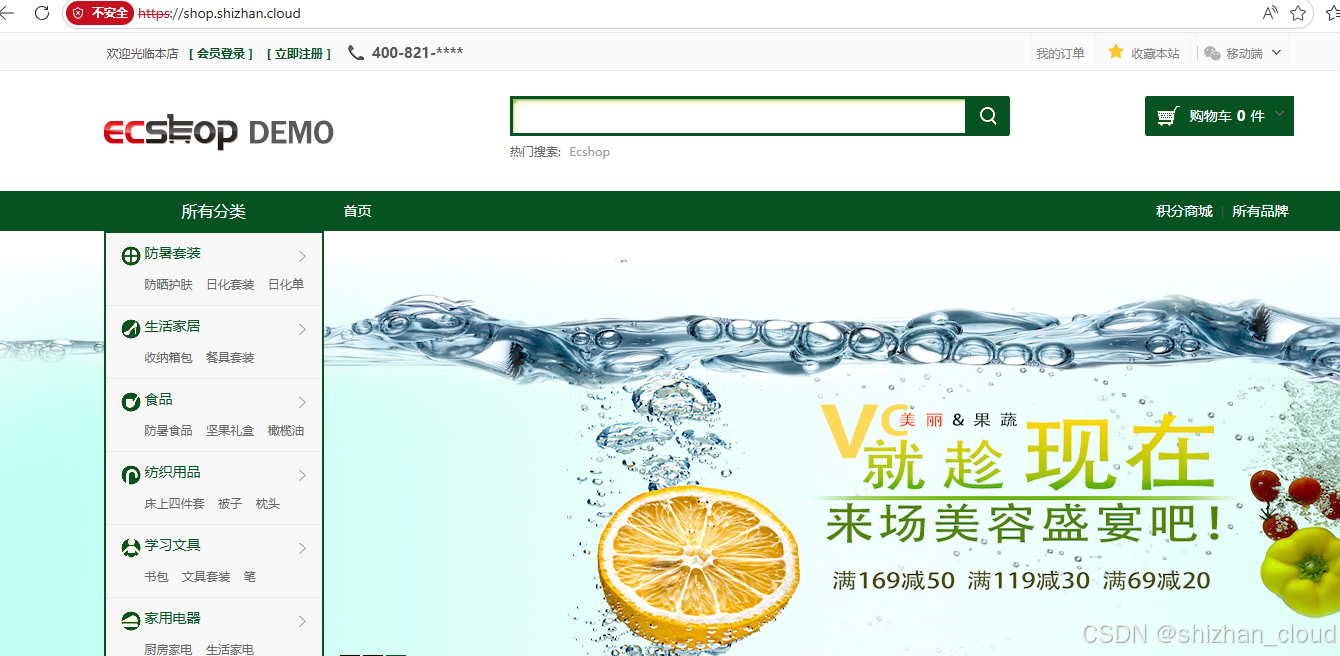

Open https://shop.shizhan.cloud. Because the certificate is self-signed, the browser shows a warning: click Advanced, then click Continue to shop.shizhan.cloud (unsafe).

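8.3 Initialize the site

On the ECShop welcome page, check "I have read and agree to all of the above terms" (我已仔细阅读,并同意上述条款中的所有内容) and click "Next: Configure installation environment" (下一步:配置安装环境).

Click "Next: Configure system" (下一步:配置系统).

Fill in the fields as prompted, then click "Install now" (立即安装). For the database, use the values from step 3.1: host mysql (the MySQL Service name), user ecshop, password ECShop@123, database ecshop.

When the installation finishes, open the home page at https://shop.shizhan.cloud/.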

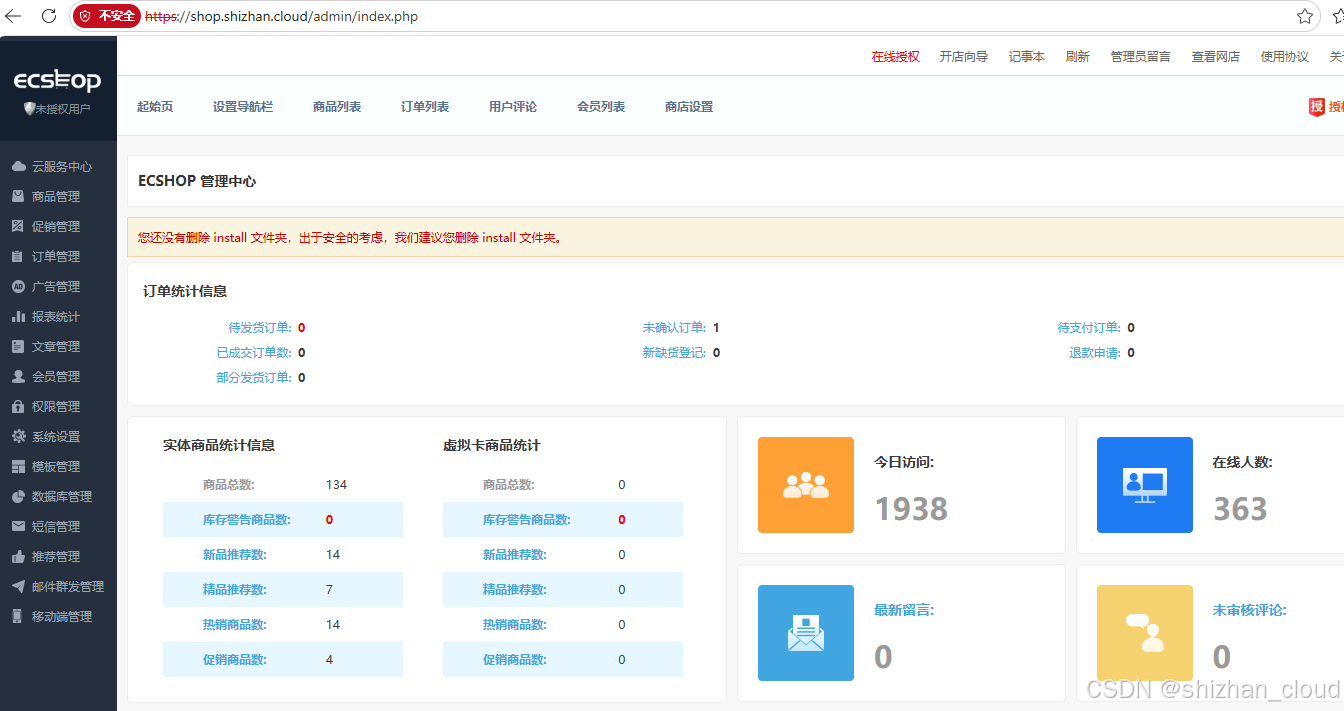

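Open the admin panel at https://shop.shizhan.cloud/admin and log in with the ECShop administrator account: enter the user name and password, then click Log in.

The login succeeds.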

Step 9: Deploy Metrics Server
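Metrics Server supplies the CPU usage that the HPAs in step 10 compare with each Pod's CPU request. Utilization can be computed here only because every container has a request, which the LimitRange default provides. The core rule documented for the HPA is desiredReplicas = ceil(currentReplicas × currentUtilization / targetUtilization), clamped to the minimum and maximum replica counts. The bash sketch below applies that rule with the settings used later: an 80% target and 2 to 5 replicas. It leaves out the controller's tolerance band and scale-down stabilization window:

```bash
#!/usr/bin/env bash
MIN_REPLICAS=2
MAX_REPLICAS=5
TARGET=80        # --cpu-percent=80, i.e. 80% of the CPU request
REQUEST_M=200    # LimitRange default CPU request, in millicores

# desired_replicas CURRENT_REPLICAS AVG_USAGE_MILLICORES (average per Pod)
desired_replicas() {
  local current=$1 usage_m=$2 util desired
  util=$(( usage_m * 100 / REQUEST_M ))                   # utilization in %
  desired=$(( (current * util + TARGET - 1) / TARGET ))   # integer ceil()
  (( desired < MIN_REPLICAS )) && desired=$MIN_REPLICAS
  (( desired > MAX_REPLICAS )) && desired=$MAX_REPLICAS
  echo "$desired"
}

desired_replicas 2 320   # 160% -> ceil(2 * 160 / 80) = 4
desired_replicas 2 600   # 300% -> ceil(7.5) = 8, capped at 5
desired_replicas 2 40    # 20%  -> ceil(0.5) = 1, raised to 2
```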

```bash
# Download the Metrics Server manifest
[root@master30 ~]# wget https://github.com/kubernetes-sigs/metrics-server/releases/download/v0.7.1/components.yaml

# Edit the manifest so Metrics Server does not verify the kubelets' TLS certificates
[root@master30 ~]# vim components.yaml
```

```yaml
......
---
apiVersion: apps/v1
kind: Deployment
metadata:
  labels:
    k8s-app: metrics-server
  name: metrics-server
  namespace: kube-system
spec:
  selector:
    matchLabels:
      k8s-app: metrics-server
  strategy:
    rollingUpdate:
      maxUnavailable: 0
  template:
    metadata:
      labels:
        k8s-app: metrics-server
    spec:
      containers:
      - args:
        - --cert-dir=/tmp
        - --secure-port=10250
        - --kubelet-preferred-address-types=InternalIP,ExternalIP,Hostname
        - --kubelet-use-node-status-port
        - --metric-resolution=15s
        # Add the --kubelet-insecure-tls flag
        - --kubelet-insecure-tls
......
```

```bash
[root@master30 ~]# kubectl apply -f components.yaml
[root@master30 ~]# kubectl top nodes
NAME                     CPU(cores)   CPU%   MEMORY(bytes)   MEMORY%
master30.shizhan.cloud   70m          3%     1709Mi          45%
worker31.shizhan.cloud   22m          1%     1166Mi          30%
worker32.shizhan.cloud   24m          1%     1069Mi          28%
```

Step 10: Configure HPA
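None of the manifests set resources, so every container gets the LimitRange default requests of 200m CPU and 256Mi memory. That makes the quota usage predictable. At the HPA minimums there are 5 Pods (1 mysql + 2 php + 2 nginx), and the two 10Gi PVCs count toward requests.storage. In a ResourceQuota, `cpu` and `memory` are shorthand for `requests.cpu` and `requests.memory`, which is why both pairs of rows show the same values. The arithmetic behind the `kubectl describe quota` output below:

```bash
#!/usr/bin/env bash
MI=$(( 1024 * 1024 ))          # bytes per Mi
GI=$(( 1024 * 1024 * 1024 ))   # bytes per Gi

pods=$(( 1 + 2 + 2 ))          # mysql + php (HPA min) + nginx (HPA min)
cpu_m=$(( pods * 200 ))        # 200m default request per container
mem=$(( pods * 256 * MI ))     # 256Mi default request per container
storage=$(( 2 * 10 * GI ))     # mysql-data-mysql-0 + ecshop, 10Gi each

echo "requests.cpu:     ${cpu_m}m"    # -> 1000m (shown as 1)
echo "requests.memory:  ${mem}"       # -> 1342177280
echo "requests.storage: ${storage}"   # -> 21474836480

# Worst case with both HPAs at their maximum: 1 + 5 + 5 Pods
max_pods=$(( 1 + 5 + 5 ))
echo "max requests.cpu: $(( max_pods * 200 ))m of 4000m"   # -> 2200m of 4000m
```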

bash

# 扩容

[root@master30 ~]# kubectl autoscale deployment php --min=2 --max=5 --cpu-percent=80

[root@master30 ~]# kubectl get deployments.apps php

NAME READY UP-TO-DATE AVAILABLE AGE

php 2/2 2 2 3h33m

[root@master30 ~]# kubectl autoscale deployment nginx --min=2 --max=5 --cpu-percent=80

[root@master30 ~]# kubectl get deployments.apps nginx

NAME READY UP-TO-DATE AVAILABLE AGE

nginx 2/2 2 2 3h27m

[root@master30 ~]# kubectl describe quota ecshop

Name: ecshop

Namespace: ecshop

Resource Used Hard

-------- ---- ----

cpu 1 4

memory 1342177280 8G

requests.cpu 1 4

requests.memory 1342177280 8G

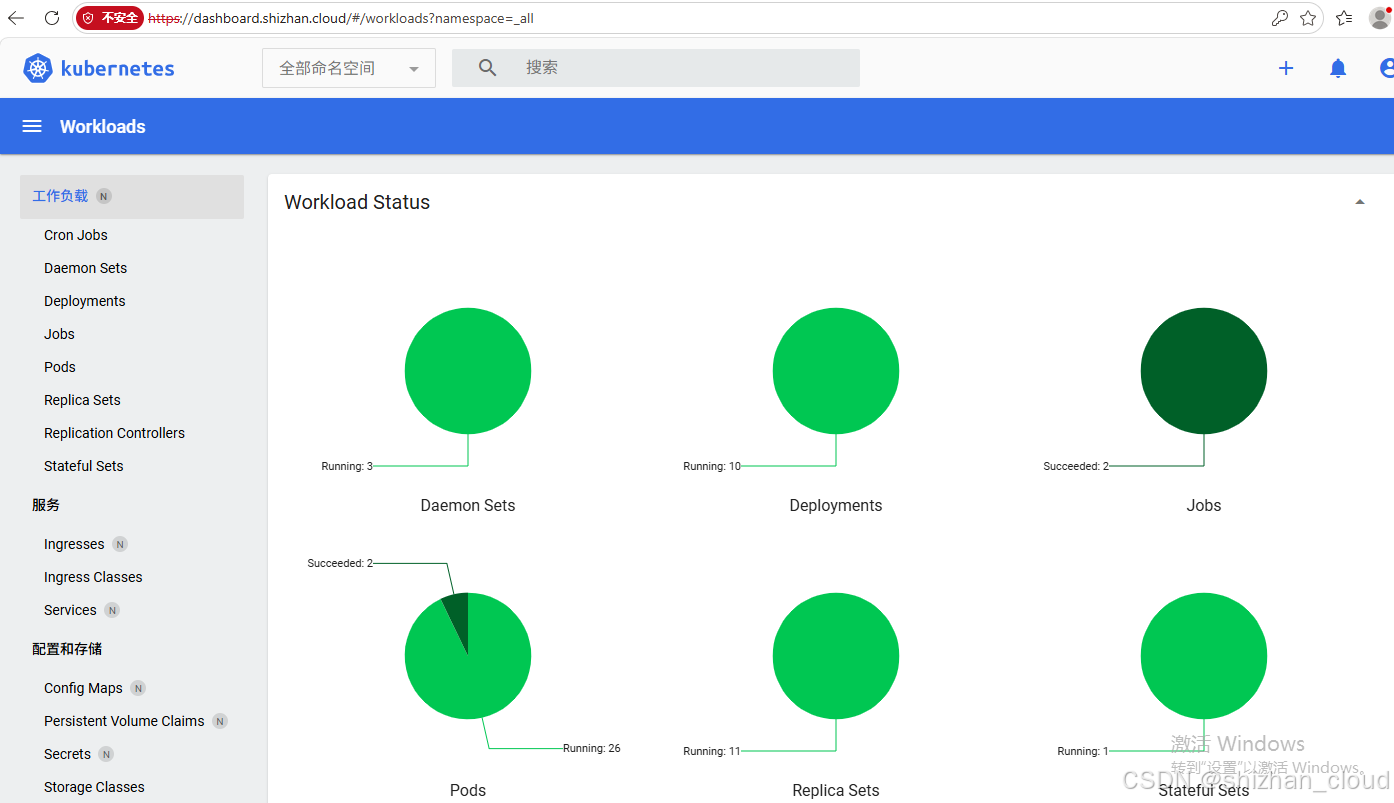

requests.storage 21474836480 500G步骤 11:部署 Dashboard

11.1 Deploy the Dashboard

```bash
# Download the manifest
[root@master30 ~]# wget https://raw.githubusercontent.com/kubernetes/dashboard/v2.6.1/aio/deploy/recommended.yaml

# List the images it uses
[root@master30 ~]# grep image: recommended.yaml
image: kubernetesui/dashboard:v2.6.1
image: kubernetesui/metrics-scraper:v1.0.8

# Load kubernetesui_dashboard_v2.6.1.tar and kubernetesui_metrics-scraper_v1.0.8.tar
[root@worker31 ~]# nerdctl -n k8s.io load -i kubernetesui_dashboard_v2.6.1.tar
[root@worker31 ~]# nerdctl -n k8s.io load -i kubernetesui_metrics-scraper_v1.0.8.tar
[root@worker32 ~]# nerdctl -n k8s.io load -i kubernetesui_dashboard_v2.6.1.tar
[root@worker32 ~]# nerdctl -n k8s.io load -i kubernetesui_metrics-scraper_v1.0.8.tar

# Deploy
[root@master30 ~]# kubectl apply -f recommended.yaml
```

11.2 Configure the Ingress rule

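```bash
# An Ingress can only use a TLS Secret from its own namespace,
# so create a copy of ingress-nginx-tls in kubernetes-dashboard
[root@master30 ~]# kubectl create secret tls ingress-nginx-tls \
  --namespace kubernetes-dashboard \
  --cert=tls.crt \
  --key=tls.key

[root@master30 ~]# cat > dashborad-ingress.yaml <<'EOF'
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: tls-ingress-dashborad
  namespace: kubernetes-dashboard
  annotations:
    nginx.ingress.kubernetes.io/ssl-redirect: "true"
    nginx.ingress.kubernetes.io/backend-protocol: "HTTPS"   # the Dashboard itself serves HTTPS
    nginx.ingress.kubernetes.io/force-ssl-redirect: "true"
spec:
  ingressClassName: nginx
  tls:
    - hosts:
        - dashboard.shizhan.cloud
      secretName: ingress-nginx-tls
  rules:
    - host: dashboard.shizhan.cloud
      http:
        paths:
          - path: /
            pathType: Prefix
            backend:
              service:
                name: kubernetes-dashboard
                port:
                  number: 443
EOF
```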

```bash
[root@master30 ~]# kubectl apply -f dashborad-ingress.yaml
[root@master30 ~]# kubectl describe ingress -n kubernetes-dashboard tls-ingress-dashborad
Name:             tls-ingress-dashborad
Labels:           <none>
Namespace:        kubernetes-dashboard
Address:          10.1.8.50
Ingress Class:    nginx
Default backend:  <default>
TLS:
  ingress-nginx-tls terminates dashboard.shizhan.cloud
Rules:
  Host                     Path  Backends
  ----                     ----  --------
  dashboard.shizhan.cloud
                           /   kubernetes-dashboard:443 (10.224.88.201:8443)
Annotations:               nginx.ingress.kubernetes.io/backend-protocol: HTTPS
                           nginx.ingress.kubernetes.io/force-ssl-redirect: true
                           nginx.ingress.kubernetes.io/ssl-redirect: true
Events:                    <none>

[root@master30 ~]# kubectl get service -n kubernetes-dashboard
NAME                        TYPE        CLUSTER-IP       EXTERNAL-IP   PORT(S)    AGE
dashboard-metrics-scraper   ClusterIP   10.111.171.224   <none>        8000/TCP   7m48s
kubernetes-dashboard        ClusterIP   10.107.0.186     <none>        443/TCP    7m48s

# Change service/kubernetes-dashboard to type NodePort so it can also be reached from
# outside through a node port (the Ingress itself works with ClusterIP).
# -n is required because the current context's default namespace is ecshop.
[root@master30 ~]# kubectl -n kubernetes-dashboard edit svc kubernetes-dashboard
spec:
  ......
  type: NodePort
[root@master30 ~]# kubectl get svc kubernetes-dashboard -n kubernetes-dashboard
NAME                   TYPE       CLUSTER-IP     EXTERNAL-IP   PORT(S)         AGE
kubernetes-dashboard   NodePort   10.107.0.186   <none>        443:32065/TCP   12m
```

On Windows, add the host name to C:\Windows\System32\drivers\etc\hosts:

```
10.1.8.50 dashboard.shizhan.cloud
```

11.3 Access the Dashboard

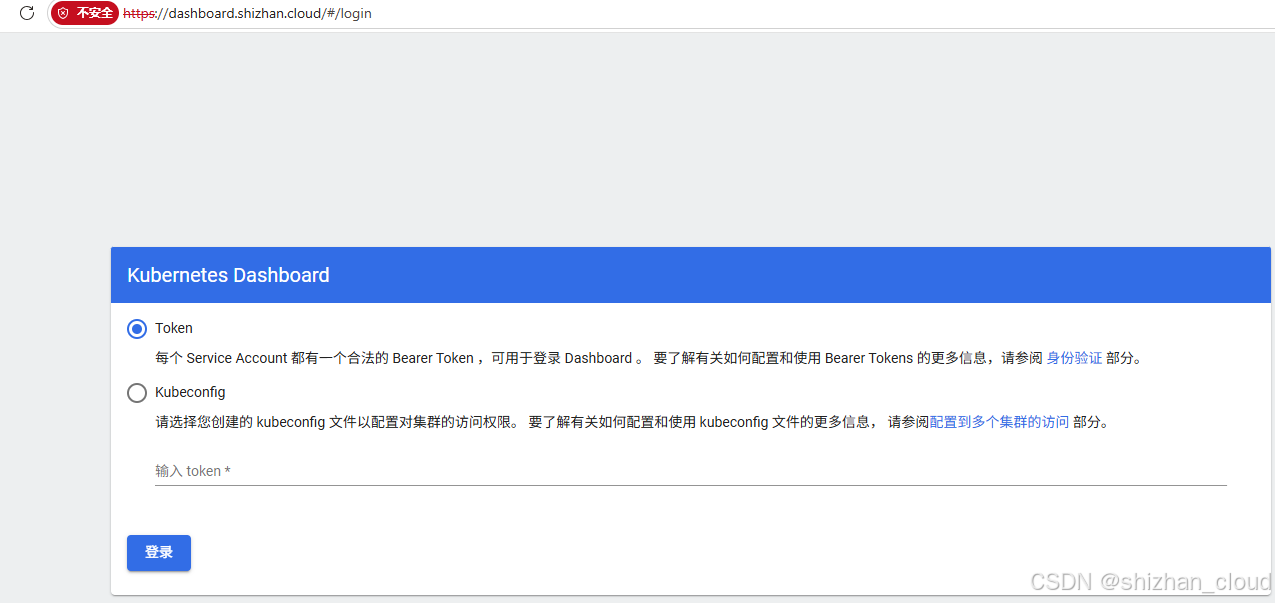

Open https://dashboard.shizhan.cloud.

To log in at https://dashboard.shizhan.cloud/, create a ServiceAccount named admin-user, then create a ClusterRoleBinding that binds it to cluster-admin, the ClusterRole that Kubernetes creates by default:

```bash
[root@master30 ~]# cat > kubernetes-dashboard-admin-user.yaml <<'EOF'
apiVersion: v1
kind: ServiceAccount
metadata:
  name: admin-user
  namespace: kubernetes-dashboard
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: admin-user
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: cluster-admin
subjects:
  - kind: ServiceAccount
    name: admin-user
    namespace: kubernetes-dashboard
EOF
```

```bash
[root@master30 ~]# kubectl apply -f kubernetes-dashboard-admin-user.yaml

# Create a bearer token
[root@master30 ~]# kubectl -n kubernetes-dashboard create token admin-user
eyJhbGciOiJSUzI1NiIsImtpZCI6IkszbHV2VVhzZGptc2VqREJ5aUhWRUdNRWwzVFUtQkxOM0VYTzdfckYxWEEifQ.eyJhdWQiOlsiaHR0cHM6Ly9rdWJlcm5ldGVzLmRlZmF1bHQuc3ZjLmNsdXN0ZXIubG9jYWwiXSwiZXhwIjoxNzc4MjMzNzU3LCJpYXQiOjE3NzgyMzAxNTcsImlzcyI6Imh0dHBzOi8va3ViZXJuZXRlcy5kZWZhdWx0LnN2Yy5jbHVzdGVyLmxvY2FsIiwianRpIjoiZWM2NzZjZGEtYTgxYy00YWE1LWI3OTYtMTA4ODY2ODNjMGJkIiwia3ViZXJuZXRlcy5pbyI6eyJuYW1lc3BhY2UiOiJrdWJlcm5ldGVzLWRhc2hib2FyZCIsInNlcnZpY2VhY2NvdW50Ijp7Im5hbWUiOiJhZG1pbi11c2VyIiwidWlkIjoiNmIzZDIwNjAtZDMzZC00ZTIyLWFmMzctZTg4MmEyMDliYjY2In19LCJuYmYiOjE3NzgyMzAxNTcsInN1YiI6InN5c3RlbTpzZXJ2aWNlYWNjb3VudDprdWJlcm5ldGVzLWRhc2hib2FyZDphZG1pbi11c2VyIn0.RrS_S0PYN2JPuK7PsCJWT4RhltuM3eVOwTJFKAisCwXFaGQdHJ47ZI6CuhLN3WdI1bccnVo2Sk5xTkWRVXXUQferkOtrtVrYpuSq6mEf4Xz1uIbsKdbfrnuvjSf6b-jDLrvIb2LwYG46ENuyETN6vaWY-AfRkGxNvWWeIlai6l_JNuD7K_tNFPxI-uQIWg38plmvq44gg-HrKPLpBHMqnL82HHoY4qGdrUj24rLYlJG2D08OtW_lDJnqpMnJYBw0nPye3uNdMxtOSyXHc9l-NaNGLoejoi9VmdCPW97wXzEreoYzwWVDswavQT5c1Q8m53enahDhtlO7Yve89KIgRA
```

Note: by default the token is valid for 1 hour. It is not stored in the cluster and simply stops working when it expires, so generate a new token for the next login. You can request a different lifetime with `kubectl create token --duration`.
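You can read the lifetime from the token itself. The middle segment of a JWT is base64url-encoded JSON that contains the `iat` (issued at) and `exp` (expiry) Unix timestamps. For the token above, exp − iat = 1778233757 − 1778230157 = 3600 s. Below is a small bash sketch for reading them. It does not verify the signature, it assumes the payload JSON is compact (no spaces after colons, as in Kubernetes tokens), and the helper names are illustrative:

```bash
#!/usr/bin/env bash
# jwt_payload TOKEN: print the decoded JSON payload (the middle segment).
jwt_payload() {
  local seg
  # base64url -> base64: '-' becomes '+', '_' becomes '/'
  seg=$(printf '%s' "$1" | cut -d. -f2 | tr -- '-_' '+/')
  # restore the '=' padding that base64url omits
  case $(( ${#seg} % 4 )) in
    2) seg="${seg}==" ;;
    3) seg="${seg}=" ;;
  esac
  printf '%s' "$seg" | base64 -d
}

# jwt_lifetime TOKEN: print exp - iat in seconds.
jwt_lifetime() {
  local json exp iat
  json=$(jwt_payload "$1")
  exp=$(printf '%s' "$json" | grep -o '"exp":[0-9]*' | cut -d: -f2)
  iat=$(printf '%s' "$json" | grep -o '"iat":[0-9]*' | cut -d: -f2)
  echo $(( exp - iat ))
}

# Demo with a hand-built, unsigned token carrying the same timestamps.
payload=$(printf '%s' '{"exp":1778233757,"iat":1778230157}' | base64 | tr -d '=\n' | tr -- '+/' '-_')
jwt_lifetime "header.${payload}.signature"   # -> 3600
```

With a live cluster: `jwt_lifetime "$(kubectl -n kubernetes-dashboard create token admin-user)"`.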

The kubernetes-dashboard Pod runs as the ServiceAccount kubernetes-dashboard, which has limited permissions.

Grant that ServiceAccount the highest-privilege ClusterRole, cluster-admin:

```bash
[root@master30 ~]# kubectl create clusterrolebinding kubernetes-dashboard-cluster-admin --clusterrole=cluster-admin --serviceaccount=kubernetes-dashboard:kubernetes-dashboard
```

Refresh the page again to check the result.