Deploying a Basic Kubernetes (k8s) Environment

##### I. Environment Preparation

###### 1. Prepare the hosts

k8s-master (192.168.2.90), k8s-node01 (192.168.2.91), k8s-node02 (192.168.2.92)

###### 2. Disable firewalld, SELinux and NetworkManager

\[root@k8s-master \~\]# systemctl stop firewalld

\[root@k8s-master \~\]# systemctl disable firewalld

Removed symlink /etc/systemd/system/multi-user.target.wants/firewalld.service.

Removed symlink /etc/systemd/system/dbus-org.fedoraproject.FirewallD1.service.

\[root@k8s-master \~\]# setenforce 0

\[root@k8s-master \~\]# sed -i 's#SELINUX=enforcing#SELINUX=disabled#g' /etc/sysconfig/selinux

\[root@k8s-master \~\]# sed -i 's#SELINUX=enforcing#SELINUX=disabled#g' /etc/selinux/config
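The commands of this step have to be repeated on all three hosts, so they can be wrapped in small functions. This is a sketch under assumptions: systemd-based CentOS 7 hosts and a root shell; `run`, `DRY_RUN`, `disable_services` and `disable_selinux_config` are hypothetical names. The heading also lists NetworkManager, which the commands above do not touch; disable it only if the host's interfaces are managed by the legacy `network` service, otherwise the host can lose its network configuration.

```shell
# Hypothetical helper: print the command when DRY_RUN is set, run it otherwise.
run() {
  if [ -n "${DRY_RUN:-}" ]; then echo "$*"; else "$@"; fi
}

# Stop the services now and keep them from starting at boot.
# Assumption: NetworkManager is not needed for this host's networking.
disable_services() {
  for svc in firewalld NetworkManager; do
    run systemctl disable --now "$svc"
  done
}

# Set SELINUX=disabled in the given file (default /etc/selinux/config),
# whatever the previous value was (enforcing or permissive).
# SELINUXTYPE= lines are untouched because the pattern needs "SELINUX=".
disable_selinux_config() {
  sed -i 's/^SELINUX=.*/SELINUX=disabled/' "${1:-/etc/selinux/config}"
}
```

`DRY_RUN=1 disable_services` only prints the commands; `disable_services && setenforce 0 && disable_selinux_config` applies them. Editing `/etc/selinux/config` is enough, because `/etc/sysconfig/selinux` is normally a symlink to it.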

###### 3. Map host names in /etc/hosts

\[root@k8s-master \~\]# vim /etc/hosts

192.168.2.90 k8s-master

192.168.2.91 k8s-node01

192.168.2.92 k8s-node02

\[root@k8s-master \~\]# scp /etc/hosts root@192.168.2.91:/etc/hosts

\[root@k8s-master \~\]# scp /etc/hosts root@192.168.2.92:/etc/hosts

\[root@k8s-master \~\]# ping k8s-node01

PING k8s-node01 (192.168.2.91) 56(84) bytes of data.

64 bytes from k8s-node01 (192.168.2.91): icmp_seq=1 ttl=64 time=0.346 ms

64 bytes from k8s-node01 (192.168.2.91): icmp_seq=2 ttl=64 time=0.265 ms
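Editing `/etc/hosts` in vim is fine once, but appending the lines again on a second run would duplicate them. A minimal sketch of an idempotent version, assuming the three addresses above; `add_cluster_hosts` is a hypothetical helper that adds a mapping only when the host name is not yet present in the file.

```shell
# Hypothetical helper: append the cluster's name mappings to a hosts file
# (default /etc/hosts), skipping names that are already mapped.
add_cluster_hosts() {
  hosts_file="${1:-/etc/hosts}"
  while read -r ip name; do
    # Match the name as a whole field so k8s-node01 does not match k8s-node011.
    if ! grep -qE "[[:space:]]${name}([[:space:]]|\$)" "$hosts_file"; then
      echo "$ip $name" >> "$hosts_file"
    fi
  done <<'EOF'
192.168.2.90 k8s-master
192.168.2.91 k8s-node01
192.168.2.92 k8s-node02
EOF
}
```

Run `add_cluster_hosts` on the master and copy the file with scp as above, or run it on every node; `getent hosts k8s-node01` then shows whether the name resolves.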

###### 4. Set up passwordless SSH between hosts

\[root@k8s-master \~\]# ssh-keygen

Generating public/private rsa key pair.

Enter file in which to save the key (/root/.ssh/id_rsa):

Enter passphrase (empty for no passphrase):

Enter same passphrase again:

Your identification has been saved in /root/.ssh/id_rsa.

Your public key has been saved in /root/.ssh/id_rsa.pub.

The key fingerprint is:

SHA256:pJNP7Nx9pi00P7w8nBNECxdAyHyKPnc6UNaLdXYs6b8 root@k8s-master

The key's randomart image is:

+---\[RSA 2048\]----+

\| o oo... \|

\| + o o \|

\| .. + + + \|

\| =. + o B o\|

\| +.So o \* o \|

\| \*+.o.= o \|

\| ++.+.=o+ \|

\| o .\*O .\|

\| ...+E.\|

+----\[SHA256\]-----+

\[root@k8s-master \~\]# ssh-copy-id root@192.168.2.91

/usr/bin/ssh-copy-id: INFO: Source of key(s) to be installed: "/root/.ssh/id_rsa.pub"

/usr/bin/ssh-copy-id: INFO: attempting to log in with the new key(s), to filter out any that are already installed

/usr/bin/ssh-copy-id: INFO: 1 key(s) remain to be installed -- if you are prompted now it is to install the new keys

root@192.168.2.91's password:

Number of key(s) added: 1

Now try logging into the machine, with: "ssh 'root@192.168.2.91'"

and check to make sure that only the key(s) you wanted were added.

\[root@k8s-master \~\]# ssh-copy-id root@192.168.2.92
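Generating the key and copying it to every worker can also be scripted. A sketch under assumptions: the key path `/root/.ssh/id_rsa` shown in the output above and the node names from `/etc/hosts`; `run`, `DRY_RUN`, `SSH_KEY` and `setup_ssh_trust` are hypothetical. `ssh-copy-id` still asks once for each node's root password.

```shell
# Hypothetical dry-run wrapper: print instead of execute when DRY_RUN is set.
run() {
  if [ -n "${DRY_RUN:-}" ]; then echo "$*"; else "$@"; fi
}

setup_ssh_trust() {
  key="${SSH_KEY:-/root/.ssh/id_rsa}"
  # -N '' = empty passphrase, -f = key file; skipped if the key already exists
  [ -f "$key" ] || run ssh-keygen -t rsa -N '' -f "$key"
  for node in k8s-node01 k8s-node02; do
    run ssh-copy-id -i "${key}.pub" "root@${node}"
  done
}
```

Afterwards `ssh k8s-node01 hostname` should print the node's name without asking for a password.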

###### 5. Configure the yum repositories

\[root@k8s-master \~\]# cd /etc/yum.repos.d/

# Docker CE repository

\[root@k8s-master yum.repos.d\]# vim docker-ce.repo

\[docker-ce-stable\]

name=Docker CE Stable - $basearch

baseurl=https://mirrors.aliyun.com/docker-ce/linux/centos/$releasever/$basearch/stable

enabled=1

gpgcheck=1

gpgkey=https://mirrors.aliyun.com/docker-ce/linux/centos/gpg

\[docker-ce-stable-debuginfo\]

name=Docker CE Stable - Debuginfo $basearch

baseurl=https://mirrors.aliyun.com/docker-ce/linux/centos/$releasever/debug-$basearch/stable

enabled=0

gpgcheck=1

gpgkey=https://mirrors.aliyun.com/docker-ce/linux/centos/gpg

\[docker-ce-stable-source\]

name=Docker CE Stable - Sources

baseurl=https://mirrors.aliyun.com/docker-ce/linux/centos/$releasever/source/stable

enabled=0

gpgcheck=1

gpgkey=https://mirrors.aliyun.com/docker-ce/linux/centos/gpg

\[docker-ce-test\]

name=Docker CE Test - $basearch

baseurl=https://mirrors.aliyun.com/docker-ce/linux/centos/$releasever/$basearch/test

enabled=0

gpgcheck=1

gpgkey=https://mirrors.aliyun.com/docker-ce/linux/centos/gpg

\[docker-ce-test-debuginfo\]

name=Docker CE Test - Debuginfo $basearch

baseurl=https://mirrors.aliyun.com/docker-ce/linux/centos/$releasever/debug-$basearch/test

enabled=0

gpgcheck=1

gpgkey=https://mirrors.aliyun.com/docker-ce/linux/centos/gpg

\[docker-ce-test-source\]

name=Docker CE Test - Sources

baseurl=https://mirrors.aliyun.com/docker-ce/linux/centos/$releasever/source/test

enabled=0

gpgcheck=1

gpgkey=https://mirrors.aliyun.com/docker-ce/linux/centos/gpg

\[docker-ce-nightly\]

name=Docker CE Nightly - $basearch

baseurl=https://mirrors.aliyun.com/docker-ce/linux/centos/$releasever/$basearch/nightly

enabled=0

gpgcheck=1

gpgkey=https://mirrors.aliyun.com/docker-ce/linux/centos/gpg

\[docker-ce-nightly-debuginfo\]

name=Docker CE Nightly - Debuginfo $basearch

baseurl=https://mirrors.aliyun.com/docker-ce/linux/centos/$releasever/debug-$basearch/nightly

enabled=0

gpgcheck=1

gpgkey=https://mirrors.aliyun.com/docker-ce/linux/centos/gpg

\[docker-ce-nightly-source\]

name=Docker CE Nightly - Sources

baseurl=https://mirrors.aliyun.com/docker-ce/linux/centos/$releasever/source/nightly

enabled=0

gpgcheck=1

gpgkey=https://mirrors.aliyun.com/docker-ce/linux/centos/gpg

# Kubernetes repository

\[root@k8s-master yum.repos.d\]# vim kubernetes.repo

\[kubernetes\]

name=Kubernetes

baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64/

enabled=1

gpgcheck=0

repo_gpgcheck=0

gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg

\[root@k8s-master yum.repos.d\]# yum clean all \&\& yum makecache

\[root@k8s-master yum.repos.d\]# scp docker-ce.repo root@192.168.2.91:/etc/yum.repos.d/

docker-ce.repo 100% 2073 1.9MB/s 00:00

\[root@k8s-master yum.repos.d\]# scp kubernetes.repo root@192.168.2.91:/etc/yum.repos.d/

kubernetes.repo 100% 211 281.2KB/s 00:00

\[root@k8s-master yum.repos.d\]# scp docker-ce.repo root@192.168.2.92:/etc/yum.repos.d/

docker-ce.repo 100% 2073 1.9MB/s 00:00

\[root@k8s-master yum.repos.d\]# scp kubernetes.repo root@192.168.2.92:/etc/yum.repos.d/

kubernetes.repo 100% 211 281.2KB/s 00:00
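With more workers the copy step is easier as a loop, which can also rebuild each node's yum cache. A sketch assuming the passwordless SSH from step 4 and the node names from `/etc/hosts`; `run`, `push_repos` and the `DRY_RUN` switch are hypothetical.

```shell
# Hypothetical dry-run wrapper: print instead of execute when DRY_RUN is set.
run() {
  if [ -n "${DRY_RUN:-}" ]; then echo "$*"; else "$@"; fi
}

# Copy both repo files to every worker, then rebuild the yum cache there.
push_repos() {
  for node in k8s-node01 k8s-node02; do
    run scp /etc/yum.repos.d/docker-ce.repo /etc/yum.repos.d/kubernetes.repo \
      "root@${node}:/etc/yum.repos.d/"
    run ssh "root@${node}" "yum clean all && yum makecache"
  done
}
```

`DRY_RUN=1 push_repos` previews the four commands before anything is copied.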

###### 6. Install the required tools

\[root@k8s-master \~\]# yum install wget jq psmisc vim net-tools telnet yum-utils device-mapper-persistent-data lvm2 git -y

###### 7. Disable the swap partition

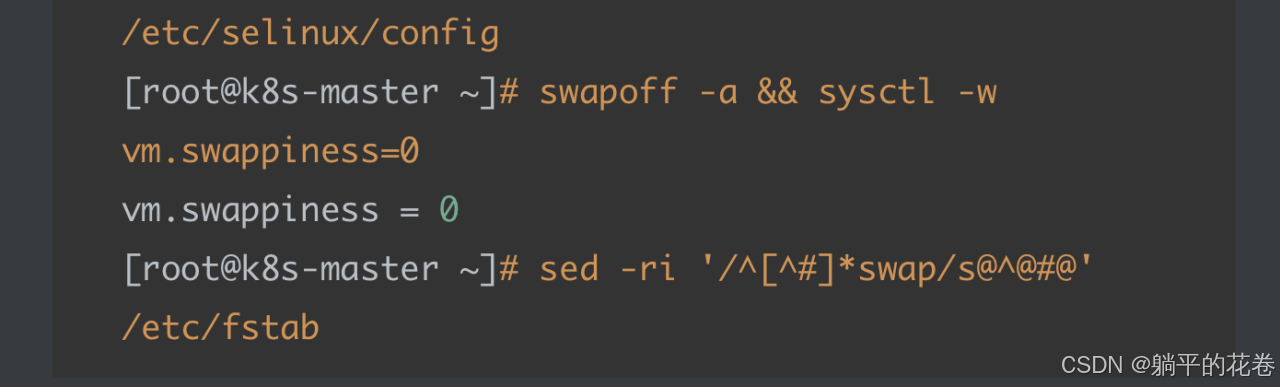

\[root@k8s-master \~\]# swapoff -a \&\& sysctl -w vm.swappiness=0

vm.swappiness = 0

\[root@k8s-master \~\]# sed -ri '/\^\[\^#\]\*swap/s@\^@#@' /etc/fstab
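Two quick checks confirm that swap is off now and will stay off after a reboot. A minimal sketch: `swap_active` reads `/proc/swaps`, whose first line is always a header, and `fstab_swap_entries` lists swap lines in `/etc/fstab` that the sed above did not comment out; both function names are hypothetical.

```shell
# Succeeds if any swap area is active: /proc/swaps lists one per line after the header.
swap_active() {
  [ "$(grep -vc '^Filename' "${1:-/proc/swaps}")" -gt 0 ]
}

# Prints fstab swap entries that are NOT commented out (empty output = OK).
fstab_swap_entries() {
  grep -E '^[^#]*[[:space:]]swap[[:space:]]' "${1:-/etc/fstab}"
}
```

After `swapoff -a` and the sed, `swap_active || echo 'swap is off'` should print `swap is off`, and `fstab_swap_entries` should print nothing.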

###### 8. Synchronize the time

\[root@k8s-master \~\]# yum -y install ntpdate

\[root@k8s-master \~\]# ntpdate time2.aliyun.com

4 Sep 10:08:59 ntpdate\[1897\]: adjust time server 203.107.6.88 offset 0.007780 sec

\[root@k8s-master \~\]# which ntpdate

/usr/sbin/ntpdate

\[root@k8s-master \~\]# crontab -e

# Synchronize the clock every 5 minutes

\*/5 \* \* \* \* /usr/sbin/ntpdate time2.aliyun.com
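`crontab -e` opens an editor; the same job can be installed from a script and kept to a single copy if the step is repeated. A sketch assuming the every-five-minutes schedule `*/5 * * * *`; `NTP_JOB` and `with_ntp_job` are hypothetical, the latter a filter that reads a crontab on stdin and prints it with the job appended only when it is missing.

```shell
NTP_JOB='*/5 * * * * /usr/sbin/ntpdate time2.aliyun.com'

# Filter: crontab text in, same text plus NTP_JOB (if not yet present) out.
with_ntp_job() {
  current=$(cat)
  if [ -n "$current" ]; then
    printf '%s\n' "$current"
  fi
  if ! printf '%s\n' "$current" | grep -qxF -e "$NTP_JOB"; then
    printf '%s\n' "$NTP_JOB"
  fi
}
```

`crontab -l 2>/dev/null | with_ntp_job | crontab -` installs it; `crontab -l` fails harmlessly when there is no crontab yet.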

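###### 9. Configure resource limits

# Raise the soft and hard limits on open files to 65535 for the current shell

\[root@k8s-master \~\]# ulimit -SHn 65535

\[root@k8s-master \~\]# vim /etc/security/limits.conf

# Append the following at the end of the file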

\* soft nofile 65536

\* hard nofile 131072

\* soft nproc 65535

\* hard nproc 655350

\* soft memlock unlimited

\* hard memlock unlimited
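Two details decide whether these limits take effect. limits.conf is applied by PAM at login, so only new login sessions see it, while services started by systemd (containerd, kubelet) take their limits from their unit files (`LimitNOFILE=`) or systemd's defaults. Also, according to limits.conf(5), the `*` wildcard is not applied to root; root needs its own lines such as `root soft nofile 65536`. A small sketch for checking what a running process actually got, reading `/proc/<pid>/limits`; the function name is hypothetical.

```shell
# Print the soft and hard "Max open files" limits of a process
# (default: the current shell). Values are numbers or "unlimited".
open_file_limits() {
  awk '/^Max open files/ { print $4, $5 }' "/proc/${1:-$$}/limits"
}
```

`open_file_limits` in a fresh login shell prints the soft and hard values, and `open_file_limits "$(pidof containerd)"` shows the limits containerd runs with.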

###### 10. Clone the k8s-ha-install Git repository and reboot

# Clone the k8s-ha-install Git repository into /root/

\[root@k8s-master \~\]# git clone https://gitee.com/dukuan/k8s-ha-install.git

Cloning into 'k8s-ha-install'...

remote: Enumerating objects: 920, done.

remote: Counting objects: 100% (8/8), done.

remote: Compressing objects: 100% (6/6), done.

remote: Total 920 (delta 1), reused 0 (delta 0), pack-reused 912

Receiving objects: 100% (920/920), 19.74 MiB \| 1.51 MiB/s, done.

Resolving deltas: 100% (388/388), done.

\[root@k8s-master \~\]# cd k8s-ha-install/

\[root@k8s-master k8s-ha-install\]# ls

calico.yaml krm.yaml LICENSE metrics-server-0.3.7 metrics-server-3.6.1 README.md

\[root@k8s-master k8s-ha-install\]# reboot

##### II. Configure Kernel Modules

###### 1. Configure the IPVS modules

\[root@k8s-master \~\]# yum install ipvsadm ipset sysstat conntrack libseccomp -y

# Load the core IPVS module with modprobe

\[root@k8s-master \~\]# modprobe -- ip_vs

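# IPVS round-robin (rr) scheduling algorithm

\[root@k8s-master \~\]# modprobe -- ip_vs_rr

# IPVS weighted round-robin (wrr) scheduling algorithm

\[root@k8s-master \~\]# modprobe -- ip_vs_wrr

# IPVS source-hashing (sh) scheduling algorithm

\[root@k8s-master \~\]# modprobe -- ip_vs_sh

# Connection tracking, used to track and filter network traffic

\[root@k8s-master \~\]# modprobe -- nf_conntrack

# Load the following IPVS and related modules at boot (systemd-modules-load only reads files whose names end in .conf)

\[root@k8s-master \~\]# find / -name "ipvs.conf"

\[root@k8s-master \~\]# vim /etc/modules-load.d/ipvs.conf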

ip_vs

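# Core IPVS load-balancing module

ip_vs_lc

# Least-connection (LC) scheduling algorithm

ip_vs_wlc

# Weighted least-connection (WLC) scheduling algorithm

ip_vs_rr

# Round-robin (RR) scheduling algorithm

ip_vs_wrr

# Weighted round-robin (WRR) scheduling algorithm

ip_vs_lblc

# Locality-based least-connection (LBLC) scheduling algorithm

ip_vs_lblcr

# Locality-based least-connection with replication (LBLCR) scheduling algorithm

ip_vs_dh

# Destination-hashing (DH) scheduling algorithm

ip_vs_sh

# Source-hashing (SH) scheduling algorithm

ip_vs_fo

# Weighted failover (FO) scheduling algorithm

ip_vs_nq

# Never-queue (NQ) scheduling algorithm

ip_vs_sed

# Shortest-expected-delay (SED) scheduling algorithm

ip_vs_ftp

# FTP protocol helper for IPVS load balancing

nf_conntrack

# Tracks the state of network connections

ip_tables

# iptables packet-filtering framework (firewall rules)

ip_set

# Creates and manages IP sets

xt_set

# iptables match/target extension for IP sets, the interface between IP sets and tools such as iptables

ipt_set

# Older name for the iptables IP-set extension (an alias of xt_set on current kernels)

ipt_rpfilter

# Reverse-path filtering match for iptables

ipt_REJECT

# iptables REJECT target: rejects packets that match a rule and replies with an error

ipip

# IP-in-IP tunneling, which carries traffic between two networks (used by Calico's IPIP mode)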

\[root@k8s-master \~\]# sysctl --system

\* Applying /usr/lib/sysctl.d/00-system.conf ...

\* Applying /usr/lib/sysctl.d/10-default-yama-scope.conf ...

kernel.yama.ptrace_scope = 0

\* Applying /usr/lib/sysctl.d/50-default.conf ...

kernel.sysrq = 16

kernel.core_uses_pid = 1

net.ipv4.conf.default.rp_filter = 1

net.ipv4.conf.all.rp_filter = 1

net.ipv4.conf.default.accept_source_route = 0

net.ipv4.conf.all.accept_source_route = 0

net.ipv4.conf.default.promote_secondaries = 1

net.ipv4.conf.all.promote_secondaries = 1

fs.protected_hardlinks = 1

fs.protected_symlinks = 1

\* Applying /etc/sysctl.d/99-sysctl.conf ...

\* Applying /etc/sysctl.conf ...

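# systemd-modules-load is the systemd service that loads the modules listed in /etc/modules-load.d/*.conf at boot; it has already run once, so restart it to read the new file now

\[root@k8s-master \~\]# systemctl enable systemd-modules-load.service

\[root@k8s-master \~\]# systemctl restart systemd-modules-load.service

# Check which modules are loaded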

\[root@k8s-master \~\]# lsmod \| grep -e ip_vs -e nf_conntrack

ip_vs_sh 12688 0

ip_vs_wrr 12697 0

ip_vs_rr 12600 0

ip_vs 141432 6 ip_vs_rr,ip_vs_sh,ip_vs_wrr

nf_conntrack 133053 1 ip_vs

libcrc32c 12644 3 xfs,ip_vs,nf_conntrack
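The module list can be written and loaded without an editor. A sketch assuming the file name `ipvs.conf` (systemd-modules-load only reads files ending in `.conf`) and the 21 modules listed above; some of them, for example `ip_vs_fo`, may not exist on older kernels, so a failed `modprobe` only prints a warning. `IPVS_MODULES`, `write_ipvs_conf` and `load_ipvs_modules` are hypothetical names.

```shell
IPVS_MODULES="ip_vs ip_vs_lc ip_vs_wlc ip_vs_rr ip_vs_wrr ip_vs_lblc ip_vs_lblcr
ip_vs_dh ip_vs_sh ip_vs_fo ip_vs_nq ip_vs_sed ip_vs_ftp nf_conntrack ip_tables
ip_set xt_set ipt_set ipt_rpfilter ipt_REJECT ipip"

# One module name per line, the format modules-load.d expects.
write_ipvs_conf() {
  printf '%s\n' $IPVS_MODULES > "${1:-/etc/modules-load.d/ipvs.conf}"
}

# Load every module now; a missing module is reported but does not stop the loop.
load_ipvs_modules() {
  for m in $IPVS_MODULES; do
    modprobe -- "$m" || echo "warning: could not load $m" >&2
  done
}
```

`write_ipvs_conf && load_ipvs_modules`, then `lsmod | grep -e ip_vs -e nf_conntrack` should list the newly loaded modules.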

###### 2. Configure the Kubernetes kernel parameters

# Write the kernel parameters Kubernetes needs

\[root@k8s-master \~\]# vim /etc/sysctl.d/k8s.conf

net.bridge.bridge-nf-call-iptables = 1

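# Pass bridged IPv4 traffic through iptables (needed by kube-proxy and most network plugins)

net.bridge.bridge-nf-call-ip6tables = 1

# Same for bridged IPv6 traffic and ip6tables; 1 = enabled

fs.may_detach_mounts = 1

# Allow mount points to be detached even while they are in use in other mount namespaces; 1 = allow (an option of the RHEL/CentOS 7 kernel)

net.ipv4.conf.all.route_localnet = 1

# Allow 127.0.0.0/8 (localhost) addresses to be routed; 1 = allow, 0 = deny

vm.overcommit_memory=1

# Memory overcommit policy: 1 = always allow overcommitting memory, 0 = heuristic overcommit (the default)

vm.panic_on_oom=0

# Whether the kernel panics when it runs out of memory (OOM): 0 = no panic (the OOM killer runs instead), 1 = panic

fs.inotify.max_user_watches=89100

# Maximum number of files and directories one user can watch with inotify

fs.file-max=52706963

# System-wide maximum number of open file handles

fs.nr_open=52706963

# Maximum number of file descriptors a single process can open

net.netfilter.nf_conntrack_max=2310720

# Maximum number of entries in the connection tracking table

net.ipv4.tcp_keepalive_time = 600

# Idle time (seconds) before TCP keepalive sends its first probe

net.ipv4.tcp_keepalive_probes = 3

# Number of unanswered keepalive probes before the connection is dropped

net.ipv4.tcp_keepalive_intvl =15

# Interval (seconds) between keepalive probes

net.ipv4.tcp_max_tw_buckets = 36000

# Maximum number of sockets in the TIME_WAIT state

net.ipv4.tcp_tw_reuse = 1

# Allow TIME_WAIT sockets to be reused for new outgoing connections; 1 = allow, 0 = deny

net.ipv4.tcp_max_orphans = 327680

# Maximum number of orphaned TCP sockets (sockets no longer attached to any process)

net.ipv4.tcp_orphan_retries = 3

# Number of retries before an orphaned connection is dropped

net.ipv4.tcp_syncookies = 1

# Protect against SYN flood attacks; 1 = enable SYN cookies, 0 = disable

net.ipv4.tcp_max_syn_backlog = 16384

# Maximum length of the queue of pending (SYN_RECV) connection requests

net.ipv4.ip_conntrack_max = 65536

# Maximum size of the IP connection tracking table (legacy name; newer kernels may report this key as unknown)

net.ipv4.tcp_timestamps = 0

# Disable the TCP timestamps option

net.core.somaxconn = 16384

# Maximum length of a socket's listen (accept) queue

Save the file, then reboot all nodes and check that the modules are still loaded after the reboot: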

\[root@k8s-master \~\]# lsmod \| grep --color=auto -e ip_vs -e nf_conntrack

ip_vs_sh 12688 0

ip_vs_wrr 12697 0

ip_vs_rr 12600 0

ip_vs 141432 6 ip_vs_rr,ip_vs_sh,ip_vs_wrr

nf_conntrack 133053 1 ip_vs

libcrc32c 12644 3 xfs,ip_vs,nf_conntrack
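After `sysctl --system` or the reboot, each value can be compared with what the kernel actually uses. A sketch: it reads a sysctl.d-style file (default `/etc/sysctl.d/k8s.conf`), maps each key to its `/proc/sys` path (dots become slashes), and reports keys whose live value differs or which the running kernel does not have. The function name is hypothetical.

```shell
# Compare "key = value" lines of a sysctl.d file with the live /proc/sys values.
# Prints one line per problem; returns non-zero if any key differs or is missing.
check_sysctl_file() {
  rc=0
  while IFS='=' read -r key val; do
    key=$(printf '%s' "$key" | tr -d '[:space:]')
    case "$key" in ''|'#'*|';'*) continue ;; esac
    # Normalize whitespace so values separated by tabs compare correctly too.
    want=$(printf '%s' "$val" | tr -s '[:space:]' ' ' | sed 's/^ //; s/ $//')
    path="/proc/sys/$(printf '%s' "$key" | tr . /)"
    if [ ! -r "$path" ]; then
      echo "MISSING $key"
      rc=1
      continue
    fi
    have=$(tr -s '[:space:]' ' ' < "$path" | sed 's/^ //; s/ $//')
    if [ "$have" != "$want" ]; then
      echo "DIFF $key: want '$want', have '$have'"
      rc=1
    fi
  done < "${1:-/etc/sysctl.d/k8s.conf}"
  return "$rc"
}
```

`sysctl --system && check_sysctl_file /etc/sysctl.d/k8s.conf` prints nothing when every value is active. The `net.bridge.*` keys exist only while `br_netfilter` is loaded (done in the containerd step below), so before that they show up as MISSING.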

##### III. Install the Basic Components

###### 1. Install containerd

> 1) Install Docker

>

> \[root@k8s-master \~\]# yum remove -y podman runc containerd # remove any previously installed podman, runc and containerd

>

> \[root@k8s-master \~\]# yum install containerd.io docker-ce docker-ce-cli -y

>

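> 2) Configure the kernel modules containerd needs

>

> \[root@k8s-master \~\]# cat \<\<EOF \> /etc/modules-load.d/containerd.conf

> \> overlay

>

> \> br_netfilter

>

> \> EOF

>

> overlay: the OverlayFS module, which stacks a writable layer on top of existing (read-only) filesystem layers; containerd uses it to assemble container filesystems.

>

> br_netfilter: makes traffic crossing a Linux bridge visible to netfilter (iptables), so traffic to and from containers can be filtered and managed.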

>

> \[root@k8s-master \~\]# cat /etc/modules-load.d/containerd.conf

>

> overlay

>

> br_netfilter

>

> \[root@k8s-master \~\]# modprobe -- overlay

>

> \[root@k8s-master \~\]# modprobe -- br_netfilter

>

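> 3) Configure the kernel parameters containerd needs

>

> \[root@k8s-master \~\]# vim /etc/sysctl.d/99-kubernetes-cri.conf

>

> net.bridge.bridge-nf-call-iptables = 1

>

> # Pass bridged traffic through iptables for filtering and forwarding (keep comments on their own lines: sysctl.d files do not support comments after a value)

>

> net.ipv4.ip_forward = 1

>

> # Enable IP forwarding (routing); 1 = on

>

> net.bridge.bridge-nf-call-ip6tables = 1

>

> # Pass bridged IPv6 traffic through ip6tables for filtering and forwarding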

>

> \[root@k8s-master \~\]# sysctl --system
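Steps 2) and 3) write two small files; a sketch that writes both non-interactively, so the same function can be run on every node. The optional first argument is a hypothetical root-directory prefix (empty for the real system), which makes the function easy to try out in a scratch directory; `write_cri_prereqs` is a hypothetical name.

```shell
# Write the containerd module list and the CRI sysctl file under an optional
# root prefix ("" = the real /etc). Comments stay on their own lines because
# sysctl.d does not treat a trailing "# ..." as a comment.
write_cri_prereqs() {
  root="${1:-}"
  mkdir -p "$root/etc/modules-load.d" "$root/etc/sysctl.d"
  printf '%s\n' overlay br_netfilter > "$root/etc/modules-load.d/containerd.conf"
  cat > "$root/etc/sysctl.d/99-kubernetes-cri.conf" <<'EOF'
# pass bridged IPv4/IPv6 traffic through iptables/ip6tables
net.bridge.bridge-nf-call-iptables = 1
net.bridge.bridge-nf-call-ip6tables = 1
# enable IP forwarding
net.ipv4.ip_forward = 1
EOF
}
```

`write_cri_prereqs && modprobe -- overlay && modprobe -- br_netfilter && sysctl --system` applies both files on a node.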

>

> 4) containerd configuration file

>

> \[root@k8s-master \~\]# mkdir -p /etc/containerd

>

> # Dump containerd's default configuration and save it to /etc/containerd/config.toml

>

> \[root@k8s-master \~\]# containerd config default \| tee /etc/containerd/config.toml

>

> \[root@k8s-master \~\]# vim /etc/containerd/config.toml

>

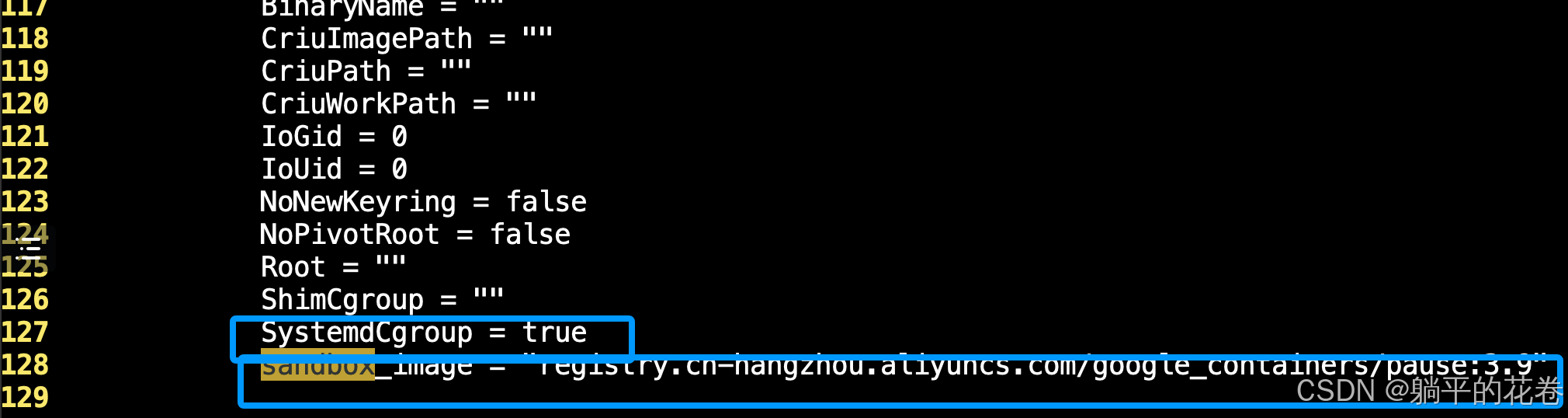

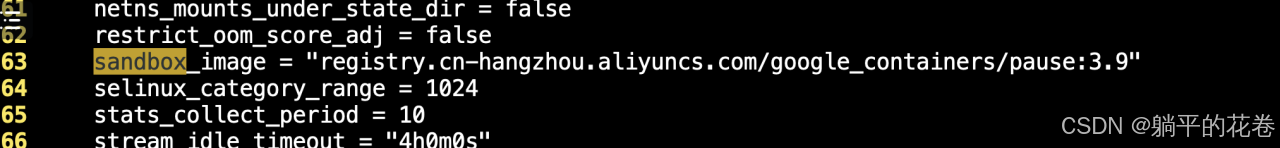

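> # In the \[plugins."io.containerd.grpc.v1.cri"\] section (around line 63), set sandbox_image = "registry.cn-hangzhou.aliyuncs.com/google_containers/pause:3.9"; one sandbox_image line is enough

>

> # In the containerd.runtimes.runc.options section (around line 127), set SystemdCgroup = true, adding the line if it does not exist, so that containerd uses the systemd cgroup driver that kubeadm configures for the kubelet by default

>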
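Both edits can be made with sed instead of vim. A sketch, assuming a file freshly generated by `containerd config default` that contains a single `sandbox_image = ...` line and `SystemdCgroup = false`; check the result with grep, because line numbers and defaults differ between containerd versions. The function name is hypothetical.

```shell
patch_containerd_config() {
  cfg="${1:-/etc/containerd/config.toml}"
  # Keep the original indentation: only the text from the key onwards is replaced.
  sed -i \
    -e 's#sandbox_image = .*#sandbox_image = "registry.cn-hangzhou.aliyuncs.com/google_containers/pause:3.9"#' \
    -e 's#SystemdCgroup = false#SystemdCgroup = true#' \
    "$cfg"
  # Show the two settings for a quick visual check.
  grep -nE 'sandbox_image|SystemdCgroup' "$cfg"
}
```

After `patch_containerd_config`, restart containerd with `systemctl restart containerd` so the new settings take effect.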

> # Reload the systemd unit files

>

> \[root@k8s-master \~\]# systemctl daemon-reload

>

> # Start containerd and enable it at boot

>

> \[root@k8s-master \~\]# systemctl start containerd.service

>

> \[root@k8s-master \~\]# systemctl enable containerd.service

>

> Created symlink from /etc/systemd/system/multi-user.target.wants/containerd.service to /usr/lib/systemd/system/containerd.service.

>

> Note: if kubelet does not start, turn off the swap partition; by default kubelet refuses to start while swap is enabled.

>

>

>

> 5) Tell the crictl client where the container runtime listens

>

> # Write the crictl configuration file /etc/crictl.yaml

>

> \[root@k8s-master \~\]# cat \<\<EOF \> /etc/crictl.yaml

> runtime-endpoint: unix:///run/containerd/containerd.sock

>

> image-endpoint: unix:///run/containerd/containerd.sock

>

> timeout: 10

>

> debug: false

>

> EOF

>

> # runtime-endpoint: socket of the container runtime (CRI runtime service)

>

> # image-endpoint: socket of the image service (also containerd)

>

> # timeout: request timeout of 10 seconds

>

> # debug: false disables debug output

###### 2. Install the Kubernetes components

> # Install kubeadm, kubelet and kubectl

>

> # List the available kubeadm versions

>

> \[root@k8s-master \~\]# yum list kubeadm.x86_64 --showduplicates \| sort -r

>

> # Install the latest 1.28 release of kubeadm, kubelet and kubectl

>

> \[root@k8s-master \~\]# yum install kubeadm-1.28\* kubelet-1.28\* kubectl-1.28\* -y

>

> \[root@k8s-master \~\]# systemctl daemon-reload

>

> # Enable kubelet at boot and start it now

>

> \[root@k8s-master \~\]# systemctl enable --now kubelet

>

> # Show the installed kubeadm version

>

> \[root@k8s-master \~\]# kubeadm version

>

> kubeadm version: \&version.Info{Major:"1", Minor:"28", GitVersion:"v1.28.2", GitCommit:"89a4ea3e1e4ddd7f7572286090359983e0387b2f", GitTreeState:"clean", BuildDate:"2023-09-13T09:34:32Z", GoVersion:"go1.20.8", Compiler:"gc", Platform:"linux/amd64"}

>

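> Problem: kubelet fails to start

>

> # Check the log

>

> \[root@k8s-master \~\]# vim /var/log/messages

>

> Solution: the kubelet configuration file had not been generated, so kubelet was reinstalled. Note that until kubeadm init or kubeadm join writes /var/lib/kubelet/config.yaml, kubelet restarting every few seconds is expected, so check the log before reinstalling.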

>

> \[root@k8s-master \~\]# yum -y remove kubelet

>

> \[root@k8s-master \~\]# yum -y install kubelet-1.28\*

>

> \[root@k8s-master \~\]# systemctl start kubelet

>

> \[root@k8s-master \~\]# systemctl status kubelet

>

> Active: active (running) since Wed 2024-09-11 14:25:57 CST; 3s ago

>

> # kubeadm depends on kubelet, so removing kubelet also removed kubeadm; install kubeadm again

>

> \[root@k8s-master \~\]# yum -y install kubeadm-1.28\*

>

> # Check that kubelet is listening on its ports

>

> \[root@k8s-master \~\]# netstat -lntup \| grep kube

>

> tcp 0 0 127.0.0.1:10248 0.0.0.0:\* LISTEN 2392/kubelet

>

> tcp6 0 0 :::10250 :::\* LISTEN 2392/kubelet

>

> tcp6 0 0 :::10255 :::\* LISTEN 2392/kubelet

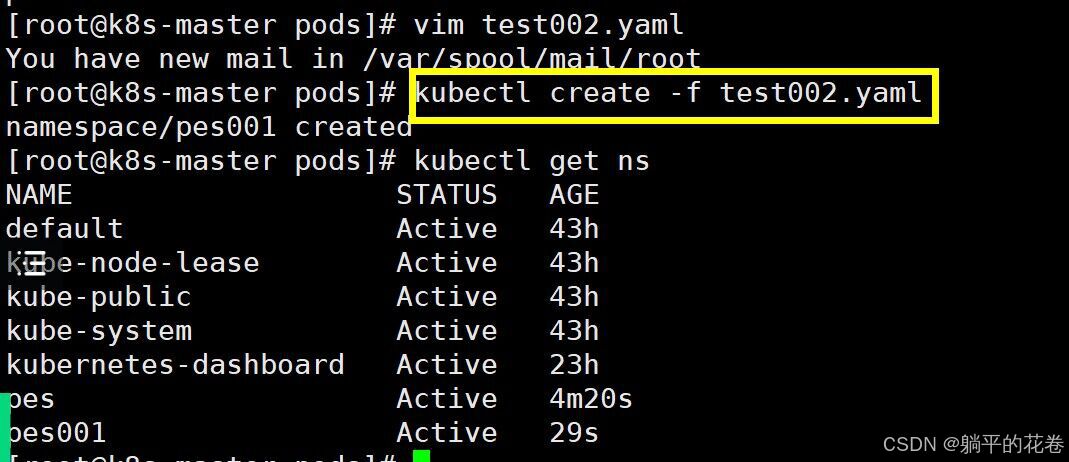
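Before `kubeadm init` or `kubeadm join` has written `/var/lib/kubelet/config.yaml`, kubelet exits and is restarted by systemd every few seconds; that loop is expected and not a failure. A hypothetical helper that tells this case apart from a real failure, assuming the default kubeadm path of the kubelet configuration file.

```shell
# Report "waiting-for-kubeadm", "running" or "failed" for the local kubelet.
kubelet_state() {
  cfg="${1:-/var/lib/kubelet/config.yaml}"
  if [ ! -f "$cfg" ]; then
    echo "waiting-for-kubeadm"  # normal before kubeadm init/join
  elif systemctl is-active --quiet kubelet; then
    echo "running"
  else
    echo "failed"               # config exists but kubelet is down: see journalctl -u kubelet
  fi
}
```

On a freshly installed node `kubelet_state` prints `waiting-for-kubeadm`, which is the state in which `kubeadm init` or `kubeadm join` should be run.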

###### 3. Initialize the Kubernetes cluster

> 1) kubeadm configuration file

>

> # Edit the kubeadm configuration file

>

> \[root@k8s-master \~\]# vim kubeadm-config.yaml

>     # kubeadm configuration API version
>     apiVersion: kubeadm.k8s.io/v1beta3
>     # bootstrap tokens authenticate nodes while they join the cluster
>     bootstrapTokens:
>     - groups:                          # groups associated with this token
>       - system:bootstrappers:kubeadm:default-node-token
>       token: 7t2weq.bjbawausm0jaxury   # the bootstrap token value
>       ttl: 24h0m0s                     # token lifetime: 24 hours
>       usages:                          # what the token may be used for
>       - signing                        # digital signing
>       - authentication                 # authentication
>     kind: InitConfiguration            # this document is an initialization configuration
>     localAPIEndpoint:                  # address and port of the local API server
>       advertiseAddress: 192.168.15.11  # replace with the master's IP
>       bindPort: 6443
>     nodeRegistration:                  # settings used when registering this node
>       criSocket: unix:///var/run/containerd/containerd.sock  # container runtime (CRI) socket
>       name: k8s-master                 # node name
>       taints:
>       - effect: NoSchedule             # do not schedule ordinary Pods here
>         key: node-role.kubernetes.io/control-plane  # marks a control-plane node
>     ---
>     apiServer:                         # API server settings
>       certSANs:                        # extra Subject Alternative Names for the API server certificate; IPs are enough
>       - 192.168.15.11
>       timeoutForControlPlane: 4m0s     # control-plane timeout: 4 minutes
>     apiVersion: kubeadm.k8s.io/v1beta3 # kubeadm configuration API version
>     certificatesDir: /etc/kubernetes/pki  # where the certificates are stored
>     clusterName: kubernetes            # cluster name
>     controlPlaneEndpoint: 192.168.15.11:6443  # address and port of the control plane
>     controllerManager: {}              # empty = default controller-manager settings
>     etcd:
>       local:                           # local etcd instance
>         dataDir: /var/lib/etcd         # data directory
>     imageRepository: registry.cn-hangzhou.aliyuncs.com/google_containers  # Aliyun image mirror
>     kind: ClusterConfiguration         # this document is a cluster configuration
>     kubernetesVersion: v1.28.2         # Kubernetes version
>     networking:                        # cluster network settings
>       dnsDomain: cluster.local         # cluster DNS domain
>       podSubnet: 172.16.0.0/16         # Pod subnet
>       serviceSubnet: 10.96.0.0/16      # Service subnet
>     scheduler: {}                      # default scheduler behaviour

>

> # Convert the old kubeadm configuration file to the current format

>

> \[root@k8s-master \~\]# kubeadm config migrate --old-config kubeadm-config.yaml --new-config new.yaml

>

> \[root@k8s-master \~\]# vim new.yaml # change the IP addresses on lines 12, 24 and 29 to this host's IP
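Instead of editing lines 12, 24 and 29 by hand, the sample address can be replaced everywhere with sed. A sketch assuming that 192.168.15.11 occurs in new.yaml only where the master's address belongs (advertiseAddress, certSANs and controlPlaneEndpoint) and that 192.168.2.90 is the master; `set_master_ip` is hypothetical.

```shell
# Replace the sample master address in a kubeadm config file and show the result.
set_master_ip() {
  file="$1"
  new_ip="$2"
  sed -i "s/192\.168\.15\.11/${new_ip}/g" "$file"
  grep -nF "$new_ip" "$file"
}
```

`set_master_ip /root/new.yaml 192.168.2.90` prints the changed lines; `kubeadm config images list --config /root/new.yaml` then lists the images the next step will pull.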

>

> 2) Pull the component images

>

> # Pull the Kubernetes component images from the Aliyun registry specified in new.yaml

>

> \[root@k8s-master \~\]# kubeadm config images pull --config /root/new.yaml

>

> \[config/images\] Pulled registry.cn-hangzhou.aliyuncs.com/google_containers/kube-apiserver:v1.28.2

>

> \[config/images\] Pulled registry.cn-hangzhou.aliyuncs.com/google_containers/kube-controller-manager:v1.28.2

>

> \[config/images\] Pulled registry.cn-hangzhou.aliyuncs.com/google_containers/kube-scheduler:v1.28.2

>

> \[config/images\] Pulled registry.cn-hangzhou.aliyuncs.com/google_containers/kube-proxy:v1.28.2

>

> \[config/images\] Pulled registry.cn-hangzhou.aliyuncs.com/google_containers/pause:3.9

>

> \[config/images\] Pulled registry.cn-hangzhou.aliyuncs.com/google_containers/etcd:3.5.9-0

>

> \[config/images\] Pulled registry.cn-hangzhou.aliyuncs.com/google_containers/coredns:v1.10.1

>

> 3) Initialize the cluster

>

> \[root@k8s-master \~\]# kubeadm init --config /root/new.yaml --upload-certs

>

> # When initialization finishes, save the join commands it prints

>

> # To add a worker node later, just copy and run this command

>

> \[root@k8s-master \~\]# vim token.txt

>

> kubeadm join 10.0.0.200:6443 --token 7t2weq.bjbawausm0jaxury --discovery-token-ca-cert-hash sha256:92191cb8741805ac561c5781d936f60a44a3233740209abf6e64738bfecd4c5e

>

> # To add another master for a highly available control plane, append the following options to the command above

>

> --control-plane --certificate-key f9984be15f98141b212efa176c7a49fcda982888f8869b7cc668e661982cbcc0

>

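> Problem 1: kubeadm init reports errors

>

> \[root@k8s-master \~\]# kubeadm init --config /root/new.yaml --upload-certs

>

> # The port is already in use by kubelet (kubelet listens on 10250). kubeadm init starts kubelet itself, so stop the kubelet service manually first

>

> \[root@k8s-master \~\]# systemctl stop kubelet

>

> Problem 2: the error message says that /proc/sys/net/ipv4/ip_forward must be set to 1

>

> \[root@k8s-master \~\]# echo 1 \> /proc/sys/net/ipv4/ip_forward

>

> # This takes effect immediately but does not survive a reboot; the net.ipv4.ip_forward = 1 line in /etc/sysctl.d/99-kubernetes-cri.conf keeps it enabled once sysctl --system has been run

>

> \[root@k8s-master \~\]# kubeadm init --config /root/new.yaml --upload-certs

>

> Problem 3: the error message says the host has too little memory and too few CPUs (kubeadm requires at least 2 CPUs and about 1.7 GB of RAM). Shut the host down, raise its memory to 4 GB and its CPU count to 4, then start it again.

>

> # Check that kubelet is running

>

> \[root@k8s-master \~\]# systemctl status kubelet

>

> Active: active (running) since Fri 2024-09-06 17:33:30 CST; 5min ago

>

> # If init times out, the IP addresses in the configuration file have probably not been changed, so the host cannot be reached

>

> \[root@k8s-master \~\]# vim new.yaml

>

> # Change the IP addresses on lines 12, 24 and 29 to this host's IP, then reset the failed initialization

>

> \[root@k8s-master \~\]# kubeadm reset -f ; ipvsadm --clear ; rm -rf \~/.kube

>

> \[root@k8s-master \~\]# kubeadm init --config /root/new.yaml --upload-certs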

>

> 4) Load the environment variable

>

> \[root@k8s-master \~\]# vim /root/.bashrc

>

> export KUBECONFIG=/etc/kubernetes/admin.conf

>

> \[root@k8s-master \~\]# source /root/.bashrc
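Appending the export by hand adds a duplicate line every time the step is repeated. A sketch of an idempotent version; `KUBECONFIG_LINE` and `add_kubeconfig_export` are hypothetical and default to root's `.bashrc`. For a non-root user, kubeadm's own suggestion is to copy `admin.conf` to `$HOME/.kube/config` instead.

```shell
KUBECONFIG_LINE='export KUBECONFIG=/etc/kubernetes/admin.conf'

# Append the export to a shell rc file (default /root/.bashrc) unless it is already there.
add_kubeconfig_export() {
  rc_file="${1:-/root/.bashrc}"
  if ! grep -qxF -e "$KUBECONFIG_LINE" "$rc_file" 2>/dev/null; then
    echo "$KUBECONFIG_LINE" >> "$rc_file"
  fi
}
```

`add_kubeconfig_export && . /root/.bashrc` makes `kubectl` usable in the current shell.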

>

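> 5) Check the status of the component containers

>

> Because the cluster was installed with kubeadm init, all system components run as containers in the kube-system namespace, and their Pod (container group) status can be checked now. Common states:

>

> Pending: the Pod is not running yet (for example, it has not been scheduled)

>

> Running: the Pod is running normally

>

> ContainerCreating: the Pod's containers are being created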

>

> \[root@k8s-master \~\]# kubectl get po -n kube-system

>

> NAME READY STATUS RESTARTS AGE

>

> coredns-6554b8b87f-2jslr 0/1 Pending 0 10m

>

> coredns-6554b8b87f-mmgbd 0/1 Pending 0 10m

>

> etcd-k8s-master 1/1 Running 0 10m

>

> kube-apiserver-k8s-master 1/1 Running 0 10m

>

> kube-controller-manager-k8s-master 1/1 Running 3 10m

>

> kube-proxy-tvk64 1/1 Running 0 10m

>

> kube-scheduler-k8s-master 1/1 Running 3 10m

>

> # kubectl: the Kubernetes command-line tool

>

> # get: list resources

>

> # po: short for pod

>

> # -n: specify the namespace

>

> # kube-system: the namespace

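###### 4. Handling an expired token

Note: the following steps are needed only if the token generated by the initialization command above has expired (its ttl is 24 hours). If it has not expired, simply run join.

Generate a new token after the old one has expired: kubeadm token create --print-join-command

Generate a new --certificate-key on the master (needed only for joining another master): kubeadm init phase upload-certs --upload-certs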
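For an additional master, the two commands above can be combined into one complete join command. A sketch, assuming that `kubeadm init phase upload-certs --upload-certs` prints the certificate key on its last line (check that output once before relying on it); `control_plane_join_cmd` is hypothetical.

```shell
# Print a join command for an additional control-plane node.
# Assumption: the certificate key is the last line printed by upload-certs.
control_plane_join_cmd() {
  join=$(kubeadm token create --print-join-command)
  key=$(kubeadm init phase upload-certs --upload-certs | tail -n 1)
  echo "$join --control-plane --certificate-key $key"
}
```

Run it on an existing master and execute the printed command on the new master; worker nodes only need the output of `kubeadm token create --print-join-command`.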

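###### 5. Configure the worker nodes

> 1) Overview: worker nodes mainly run the company's business applications. In production, the Master node should not run Pods other than the system components; in test environments the Master node may be allowed to run Pods to save resources.

>

> 2) Join the cluster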

>

> \[root@k8s-node01 \~\]# kubeadm join 10.0.0.66:6443 --token 7t2weq.bjbawausm0jaxury \\

>

> \> --discovery-token-ca-cert-hash sha256:f3ac431e03dae7f972728eb71eef1828264d42ec20a163893c812a2a0289cf99

>

> Problem: what to do if joining the cluster fails

>

> # The port is already in use: stop kubelet manually; kubeadm join starts it again automatically

>

> \[root@k8s-node01 \~\]# systemctl stop kubelet

>

> Warning: kubelet.service changed on disk. Run 'systemctl daemon-reload' to reload units.

>

> # Enable IP forwarding by writing 1 to ip_forward

>

> \[root@k8s-node01 \~\]# echo 1 \> /proc/sys/net/ipv4/ip_forward

>

> \[root@k8s-node01 \~\]# kubeadm join 10.0.0.66:6443 --token 7t2weq.bjbawausm0jaxury --discovery-token-ca-cert-hash sha256:f3ac431e03dae7f972728eb71eef1828264d42ec20a163893c812a2a0289cf99

>

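> \[root@k8s-master \~\]# kubectl get node # list all nodes

>

> NAME STATUS ROLES AGE VERSION

>

> k8s-master NotReady control-plane 24h v1.28.2

>

> node01 NotReady \<none\> 68s v1.28.2

>

> node02 NotReady \<none\> 57s v1.28.2

>

> NotReady is expected at this point: the nodes (and the Pending coredns Pods) become ready once a network plugin such as Calico is running.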

>

> \[root@k8s-master \~\]# kubectl get po -A

>

> NAMESPACE NAME READY STATUS RESTARTS AGE

>

> kube-system coredns-6554b8b87f-2v4tx 0/1 Pending 0 52m

>

> kube-system coredns-6554b8b87f-zfqlb 0/1 Pending 0 52m

>

> kube-system etcd-k8s-master 1/1 Running 0 52m

>

> kube-system kube-apiserver-k8s-master 1/1 Running 0 52m

>

> kube-system kube-controller-manager-k8s-master 1/1 Running 0 52m

>

> kube-system kube-proxy-9r6st 1/1 Running 0 52m

>

> kube-system kube-proxy-lx5wz 1/1 Running 0 22m

>

> kube-system kube-proxy-xmk6s 1/1 Running 0 25m

>

> kube-system kube-scheduler-k8s-master 1/1 Running 0 52m

>

> \[root@k8s-master \~\]# kubectl get po -Aowide

>

> NAMESPACE NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

>

> kube-system coredns-6554b8b87f-2v4tx 0/1 Pending 0 53m \<none\> \<none\> \<none\> \<none\>

>

> kube-system coredns-6554b8b87f-zfqlb 0/1 Pending 0 53m \<none\> \<none\> \<none\> \<none\>

>

> kube-system etcd-k8s-master 1/1 Running 0 54m 10.0.0.66 k8s-master \<none\> \<none\>

>

> kube-system kube-apiserver-k8s-master 1/1 Running 0 54m 10.0.0.66 k8s-master \<none\> \<none\>

>

> kube-system kube-controller-manager-k8s-master 1/1 Running 0 54m 10.0.0.66 k8s-master \<none\> \<none\>

>

> kube-system kube-proxy-9r6st 1/1 Running 0 53m 10.0.0.66 k8s-master \<none\> \<none\>

>

> kube-system kube-proxy-lx5wz 1/1 Running 0 23m 10.0.0.88 k8s-node02 \<none\> \<none\>

>

> kube-system kube-proxy-xmk6s 1/1 Running 0 26m 10.0.0.77 k8s-node01 \<none\> \<none\>

>

> kube-system kube-scheduler-k8s-master 1/1 Running 0 54m 10.0.0.66 k8s-master \<none\> \<none\>

###### 6. Install the Calico component

(1) Switch the git branch

\[root@k8s-master \~\]# cd k8s-ha-install/

\[root@k8s-master k8s-ha-install\]# ls

calico.yaml krm.yaml LICENSE metrics-server-0.3.7 metrics-server-3.6.1 README.md

\[root@k8s-master k8s-ha-install\]# git checkout manual-installation-v1.28.x

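Branch 'manual-installation-v1.28.x' set up to track remote branch 'manual-installation-v1.28.x' from 'origin'.

Switched to a new branch 'manual-installation-v1.28.x'

(2) Set the Pod network range

\[root@k8s-master k8s-ha-install\]# cd calico/

# Get the Pod CIDR already defined for the cluster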

\[root@k8s-master calico\]# POD_SUBNET=\`cat /etc/kubernetes/manifests/kube-controller-manager.yaml \| grep cluster-cidr= \| awk -F= '{print $NF}'\`

\[root@k8s-master calico\]# echo $POD_SUBNET

172.16.0.0/16

# Replace the POD_CIDR placeholder in calico.yaml with the cluster's Pod CIDR

\[root@k8s-master calico\]# sed -i "s#POD_CIDR#${POD_SUBNET}#g" calico.yaml

# Create the Calico Pods

\[root@k8s-master calico\]# kubectl apply -f calico.yaml

(3) Check the Pod and node status

\[root@k8s-master calico\]# kubectl get po -n kube-system

\[root@k8s-master calico\]# kubectl get node

NAME STATUS ROLES AGE VERSION

k8s-master NotReady control-plane 24h v1.28.2

node01 NotReady \<none\> 20m v1.28.2

\[root@k8s-master calico\]# kubectl describe po -n kube-system calico

(4) Deploy the Calico Pods

> # Go to the repository that contains the Calico manifest

>

> \[root@k8s-master \~\]# cd k8s-ha-install/

>

> # Switch the git branch

>

> \[root@k8s-master k8s-ha-install\]# git checkout manual-installation-v1.28.x

>

> Branch 'manual-installation-v1.28.x' set up to track remote branch 'manual-installation-v1.28.x' from 'origin'.

>

> Switched to a new branch 'manual-installation-v1.28.x'

>

> # Set the Pod network range

>

> \[root@k8s-master k8s-ha-install\]# ls

>

> bootstrap CoreDNS dashboard metrics-server README.md

>

> calico csi-hostpath kubeadm-metrics-server pki snapshotter

>

> \[root@k8s-master k8s-ha-install\]# cd calico/

>

> \[root@k8s-master calico\]# ls

>

> calico.yaml

>

> \[root@k8s-master calico\]# vim /etc/kubernetes/manifests/kube-controller-manager.yaml

>

> # Get the Pod CIDR already defined for the cluster

>

> \[root@k8s-master calico\]# POD_SUBNET=\`cat /etc/kubernetes/manifests/kube-controller-manager.yaml \| grep cluster-cidr= \| awk -F= '{print $NF}'\`

>

> \[root@k8s-master calico\]# echo $POD_SUBNET

>

> 172.16.0.0/16

>

> # Edit the manifest: replace POD_CIDR in the file with 172.16.0.0/16

>

> \[root@k8s-master calico\]# sed -i "s#POD_CIDR#${POD_SUBNET}#g" calico.yaml

>

> # Create the Pods

>

> \[root@k8s-master calico\]# kubectl apply -f calico.yaml

(5) Check the Pod status

> \[root@k8s-master calico\]# kubectl get po -A

>

> NAMESPACE NAME READY STATUS RESTARTS AGE

>

> kube-system calico-kube-controllers-6d48795585-v5d7x 0/1 Pending 0 69s

>

> kube-system calico-node-747k8 0/1 Init:0/3 0 69s

>

> kube-system calico-node-7klq9 0/1 Init:0/3 0 69s

>

> kube-system calico-node-j9b44 0/1 Init:0/3 0 69s

>

> kube-system coredns-6554b8b87f-2v4tx 0/1 Pending 0 104m

>

> kube-system coredns-6554b8b87f-zfqlb 0/1 Pending 0 104m

>

> kube-system etcd-k8s-master 1/1 Running 0 104m

>

> kube-system kube-apiserver-k8s-master 1/1 Running 0 104m

>

> kube-system kube-controller-manager-k8s-master 1/1 Running 1 (7m42s ago) 7m27s

>

> kube-system kube-proxy-9r6st 1/1 Running 0 104m

>

> kube-system kube-proxy-lx5wz 1/1 Running 0 74m

>

> kube-system kube-proxy-xmk6s 1/1 Running 0 77m

>

> kube-system kube-scheduler-k8s-master 1/1 Running 0 104m
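Rather than scanning the table by eye, the output of `kubectl get po -A` can be filtered for Pods that are not yet Running or not fully ready. A sketch assuming the default column layout shown above (NAMESPACE NAME READY STATUS ...); `not_running` is a hypothetical helper, and empty output means every Pod is Running with all containers ready.

```shell
# Filter "kubectl get po -A" output (stdin): print namespace/name, READY and
# STATUS of every Pod that is not Running or not fully ready.
not_running() {
  awk 'NR > 1 {
         split($3, ready, "/")
         if ($4 != "Running" || ready[1] != ready[2])
           print $1 "/" $2, $3, $4
       }'
}
```

`kubectl get po -A | not_running` prints nothing once Calico and coredns are up; `kubectl wait --for=condition=Ready node --all --timeout=300s` waits until all nodes report Ready.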