若该文为原创文章,转载请注明原文出处

本文章博客地址:https://blog.csdn.net/qq21497936/article/details/147714800

长沙红胖子Qt(长沙创微智科)博文大全:开发技术集合(包含Qt实用技术、树莓派、三维、OpenCV、OpenGL、ffmpeg、OSG、单片机、软硬结合等等)持续更新中...

FFmpeg、SDL和流媒体开发专栏

上一篇:《GStreamer开发笔记(二):GStreamer在ubnutn平台部署安装,测试gstreamer/cheese/ffmpeg/fmplayer打摄像头延迟和内存》

下一篇:持续补充中...

前言

前面测试了多种技术路线,本篇补全剩下的2种主流技术,v4l2+sdl2(偏底层),v4l2+QtOpengl(应用),v4l2+ffmpeg+QtQImage(Image的方式转图低于1ms,但是从yuv格式转到rgb格式需要ffmpeg进行转码耗时)。

Demo

注意

存在色彩空间不准确,不进行细究。

延迟和内存对比

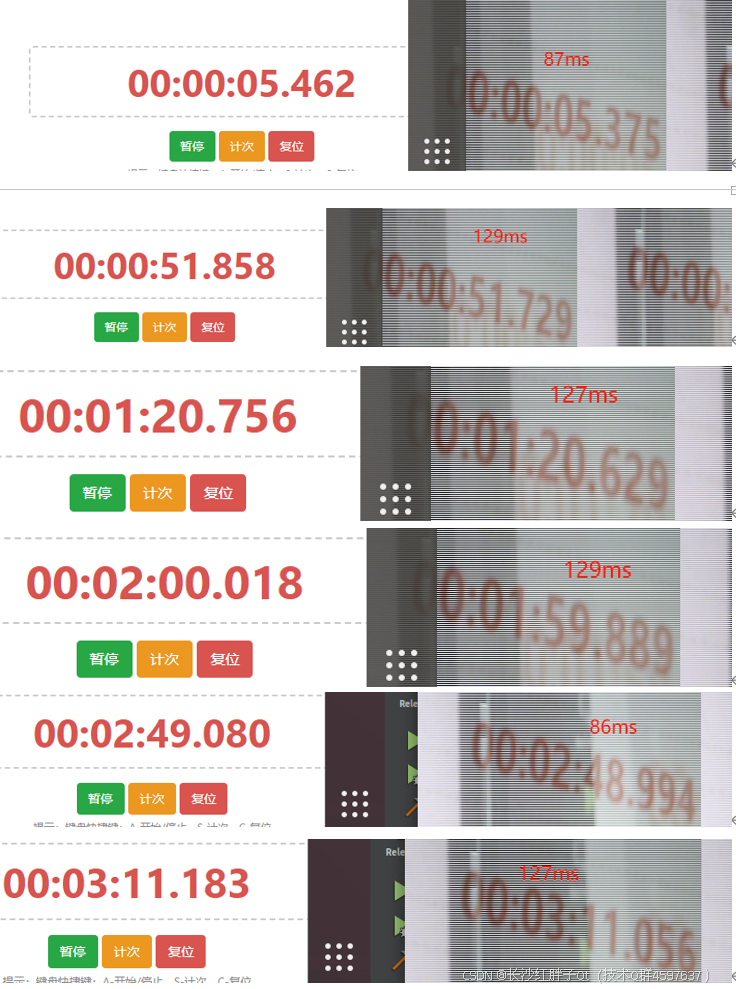

步骤一:v4l2代码测试延迟和内存

没有找到命令行,只找到了v4l2-ctl可以查看和控制摄像头的参数。

看gsteamer的源头就是v4l2src,随手写个代码使用v4l2打开摄像头查看延迟,其中v4l2是个框架负责操作和捕获,无法直接进行渲染显示,本次使用了SDL进行显示。

注意:这里不对v4l2介绍,会有专门的专栏去讲解v4l2的多媒体开发,但是这里使用v4l2的代码写个简单的程序来打开。

shell

sudo apt-get install libsdl2-dev libsdl2-2.0-0然后写代码,代码贴在Demo里面

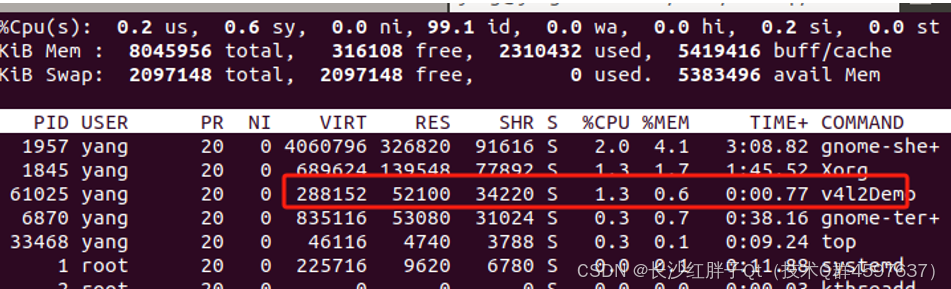

步骤二:v4l2+QtOpenGL+memcpy复制一次

查看内存:

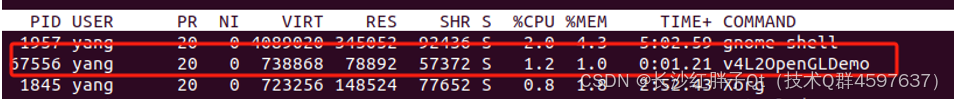

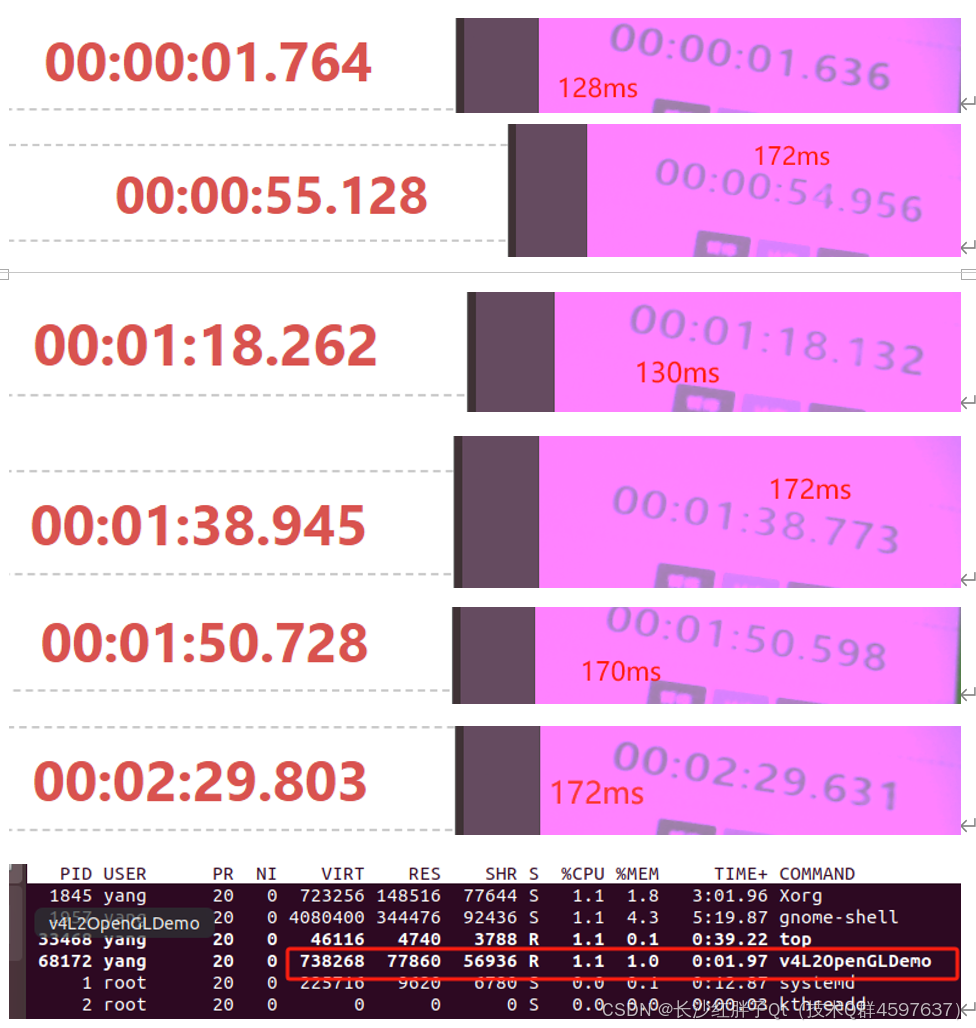

步骤三:v4l2+QtOpenGL+共享内存

最终总结

到这里,我们得出结论,gstreamer基本是最优秀的框架之一了,初步测试不是特别严谨,但是基本能反应情况(比如ffmpeg得fmplay本轮测试是最差,但是ffmpeg写代码可以进行ffmpeg源码和编程代码的优化,达到150ms左右,诸如这类情况不考虑)。

V4l2+SDL优于gstreamer优于ffmplayer优于v4l2+QtOpenGL优于cheese优于ffmpeg。

其中v4l2+SDL、gstreamer、fmplayer在内存占用上有点区别,延迟差不多130ms左右。Cheese和v4l2+QtOpenGL延迟差不多

到170ms。Ffmpeg的播放器延迟到500ms左右。

扩展

这里要注意,大部分低延迟内窥镜笔者接触的都是buffer叠显存的方式,少数厂家使用v4l2+QtOpenGL的方式,经过测试慢了一帧左右。

Demo:V4l2+SDL

cpp

#include <stdio.h>

#include <stdlib.h>

#include <string.h>

#include <fcntl.h>

#include <unistd.h>

#include <sys/ioctl.h>

#include <sys/mman.h>

#include <linux/videodev2.h>

#include <errno.h>

#include <SDL2/SDL.h>

#include <SDL2/SDL_pixels.h>

#define WIDTH 640

#define HEIGHT 480

int main() {

setbuf(stdout, NULL);

int fd;

struct v4l2_format fmt;

struct v4l2_requestbuffers req;

struct v4l2_buffer buf;

void *buffer_start;

unsigned int buffer_length;

// 打开摄像头设备

fd = open("/dev/video0", O_RDWR);

if (fd == -1) {

perror("打开摄像头设备失败");

return EXIT_FAILURE;

}

// 设置视频格式

memset(&fmt, 0, sizeof(fmt));

fmt.type = V4L2_BUF_TYPE_VIDEO_CAPTURE;

fmt.fmt.pix.width = WIDTH;

fmt.fmt.pix.height = HEIGHT;

fmt.fmt.pix.pixelformat = V4L2_PIX_FMT_YUYV;

fmt.fmt.pix.field = V4L2_FIELD_INTERLACED;

if (ioctl(fd, VIDIOC_S_FMT, &fmt) == -1) {

perror("设置视频格式失败");

close(fd);

return EXIT_FAILURE;

}

// 请求缓冲区

memset(&req, 0, sizeof(req));

req.count = 1;

req.type = V4L2_BUF_TYPE_VIDEO_CAPTURE;

req.memory = V4L2_MEMORY_MMAP;

if (ioctl(fd, VIDIOC_REQBUFS, &req) == -1) {

perror("请求缓冲区失败");

close(fd);

return EXIT_FAILURE;

}

// 映射缓冲区

memset(&buf, 0, sizeof(buf));

buf.type = V4L2_BUF_TYPE_VIDEO_CAPTURE;

buf.memory = V4L2_MEMORY_MMAP;

buf.index = 0;

if (ioctl(fd, VIDIOC_QUERYBUF, &buf) == -1) {

perror("查询缓冲区失败");

close(fd);

return EXIT_FAILURE;

}

buffer_length = buf.length;

buffer_start = mmap(NULL, buffer_length, PROT_READ | PROT_WRITE, MAP_SHARED, fd, buf.m.offset);

if (buffer_start == MAP_FAILED) {

perror("映射缓冲区失败");

close(fd);

return EXIT_FAILURE;

}

// 将缓冲区放入队列

if (ioctl(fd, VIDIOC_QBUF, &buf) == -1) {

perror("缓冲区入队失败");

munmap(buffer_start, buffer_length);

close(fd);

return EXIT_FAILURE;

}

// 开始视频捕获

enum v4l2_buf_type type = V4L2_BUF_TYPE_VIDEO_CAPTURE;

if (ioctl(fd, VIDIOC_STREAMON, &type) == -1) {

perror("开始视频捕获失败");

munmap(buffer_start, buffer_length);

close(fd);

return EXIT_FAILURE;

}

// 初始化 SDL

if (SDL_Init(SDL_INIT_VIDEO) < 0) {

fprintf(stderr, "SDL 初始化失败: %s\n", SDL_GetError());

munmap(buffer_start, buffer_length);

close(fd);

return EXIT_FAILURE;

}

SDL_Window *window = SDL_CreateWindow("V4L2 Camera", SDL_WINDOWPOS_UNDEFINED, SDL_WINDOWPOS_UNDEFINED, WIDTH, HEIGHT, 0);

if (!window) {

fprintf(stderr, "创建 SDL 窗口失败: %s\n", SDL_GetError());

SDL_Quit();

munmap(buffer_start, buffer_length);

close(fd);

return EXIT_FAILURE;

}

SDL_Renderer *renderer = SDL_CreateRenderer(window, -1, 0);

// SDL_PIXELFORMAT_YV12 = /**< Planar mode: Y + V + U (3 planes) */

// SDL_PIXELFORMAT_IYUV = /**< Planar mode: Y + U + V (3 planes) */

// SDL_PIXELFORMAT_YUY2 = /**< Packed mode: Y0+U0+Y1+V0 (1 plane) */

// SDL_PIXELFORMAT_UYVY = /**< Packed mode: U0+Y0+V0+Y1 (1 plane) */

// SDL_PIXELFORMAT_YVYU = /**< Packed mode: Y0+V0+Y1+U0 (1 plane) */

// SDL_Texture *texture = SDL_CreateTexture(renderer, SDL_PIXELFORMAT_YV12, SDL_TEXTUREACCESS_STREAMING, WIDTH, HEIGHT);

// SDL_Texture *texture = SDL_CreateTexture(renderer, SDL_PIXELFORMAT_IYUV, SDL_TEXTUREACCESS_STREAMING, WIDTH, HEIGHT);

SDL_Texture *texture = SDL_CreateTexture(renderer, SDL_PIXELFORMAT_YUY2, SDL_TEXTUREACCESS_STREAMING, WIDTH, HEIGHT);

// SDL_Texture *texture = SDL_CreateTexture(renderer, SDL_PIXELFORMAT_UYVY, SDL_TEXTUREACCESS_STREAMING, WIDTH, HEIGHT);

// SDL_Texture *texture = SDL_CreateTexture(renderer, SDL_PIXELFORMAT_YVYU, SDL_TEXTUREACCESS_STREAMING, WIDTH, HEIGHT);

int running = 1;

SDL_Event event;

while (running) {

// 处理事件

while (SDL_PollEvent(&event)) {

if (event.type == SDL_QUIT) {

running = 0;

}

}

// 捕获帧

memset(&buf, 0, sizeof(buf));

buf.type = V4L2_BUF_TYPE_VIDEO_CAPTURE;

buf.memory = V4L2_MEMORY_MMAP;

if (ioctl(fd, VIDIOC_DQBUF, &buf) == -1) {

perror("出队缓冲区失败");

break;

}

// 更新 SDL 纹理

SDL_UpdateTexture(texture, NULL, buffer_start, WIDTH);

// 渲染纹理

SDL_RenderClear(renderer);

SDL_RenderCopy(renderer, texture, NULL, NULL);

SDL_RenderPresent(renderer);

// 将缓冲区重新入队

if (ioctl(fd, VIDIOC_QBUF, &buf) == -1) {

perror("缓冲区入队失败");

break;

}

}

// 清理资源

SDL_DestroyTexture(texture);

SDL_DestroyRenderer(renderer);

SDL_DestroyWindow(window);

SDL_Quit();

munmap(buffer_start, buffer_length);

close(fd);

return EXIT_SUCCESS;

}Demo:V4l2+QtOpenGL+共享内存

cpp

#include <stdio.h>

#include <stdlib.h>

#include <string.h>

#include <fcntl.h>

#include <unistd.h>

#include <sys/ioctl.h>

#include <sys/mman.h>

#include <linux/videodev2.h>

#include <errno.h>

#include "DisplayOpenGLWidget.h"

#include <QApplication>

#include <QElapsedTimer>

#define WIDTH 640

#define HEIGHT 480

#include <QDebug>

#include <QDateTime>

//#define LOG qDebug()<<__FILE__<<__LINE__

//#define LOG qDebug()<<__FILE__<<__LINE__<<__FUNCTION__

//#define LOG qDebug()<<__FILE__<<__LINE__<<QThread()::currentThread()

//#define LOG qDebug()<<__FILE__<<__LINE__<<QDateTime::currentDateTime().toString("yyyy-MM-dd")

#define LOG qDebug()<<__FILE__<<__LINE__<<QDateTime::currentDateTime().toString("yyyy-MM-dd hh:mm:ss:zzz")

int main(int argc, char *argv[])

{

QApplication a(argc, argv);

DisplayOpenGLWidget displayOpenGLWidget;

displayOpenGLWidget.show();

setbuf(stdout, NULL);

int fd;

struct v4l2_format fmt;

struct v4l2_requestbuffers req;

struct v4l2_buffer buf;

void *buffer_start;

unsigned int buffer_length;

// 打开摄像头设备

fd = open("/dev/video0", O_RDWR);

if (fd == -1) {

perror("打开摄像头设备失败");

return EXIT_FAILURE;

}

// 设置视频格式

memset(&fmt, 0, sizeof(fmt));

fmt.type = V4L2_BUF_TYPE_VIDEO_CAPTURE;

fmt.fmt.pix.width = WIDTH;

fmt.fmt.pix.height = HEIGHT;

fmt.fmt.pix.pixelformat = V4L2_PIX_FMT_YUYV;

fmt.fmt.pix.field = V4L2_FIELD_INTERLACED;

if (ioctl(fd, VIDIOC_S_FMT, &fmt) == -1) {

perror("设置视频格式失败");

close(fd);

return EXIT_FAILURE;

}

// 请求缓冲区

memset(&req, 0, sizeof(req));

req.count = 1;

req.type = V4L2_BUF_TYPE_VIDEO_CAPTURE;

req.memory = V4L2_MEMORY_MMAP;

if (ioctl(fd, VIDIOC_REQBUFS, &req) == -1) {

perror("请求缓冲区失败");

close(fd);

return EXIT_FAILURE;

}

// 映射缓冲区

memset(&buf, 0, sizeof(buf));

buf.type = V4L2_BUF_TYPE_VIDEO_CAPTURE;

buf.memory = V4L2_MEMORY_MMAP;

buf.index = 0;

if (ioctl(fd, VIDIOC_QUERYBUF, &buf) == -1) {

perror("查询缓冲区失败");

close(fd);

return EXIT_FAILURE;

}

buffer_length = buf.length;

buffer_start = mmap(NULL, buffer_length, PROT_READ | PROT_WRITE, MAP_SHARED, fd, buf.m.offset);

if (buffer_start == MAP_FAILED) {

perror("映射缓冲区失败");

close(fd);

return EXIT_FAILURE;

}

displayOpenGLWidget.initDrawBuffer(WIDTH, HEIGHT, true, (char *)buffer_start);

// 将缓冲区放入队列

if (ioctl(fd, VIDIOC_QBUF, &buf) == -1) {

perror("缓冲区入队失败");

munmap(buffer_start, buffer_length);

close(fd);

return EXIT_FAILURE;

}

// 开始视频捕获

enum v4l2_buf_type type = V4L2_BUF_TYPE_VIDEO_CAPTURE;

if (ioctl(fd, VIDIOC_STREAMON, &type) == -1) {

perror("开始视频捕获失败");

munmap(buffer_start, buffer_length);

close(fd);

return EXIT_FAILURE;

}

int running = 1;

while (running) {

// 捕获帧

memset(&buf, 0, sizeof(buf));

buf.type = V4L2_BUF_TYPE_VIDEO_CAPTURE;

buf.memory = V4L2_MEMORY_MMAP;

if (ioctl(fd, VIDIOC_DQBUF, &buf) == -1) {

perror("出队缓冲区失败");

break;

}

// 渲染

// memcpy(drawBuffer, buffer_start, buffer_length);

displayOpenGLWidget.displayVideoFrame();

QApplication::processEvents();

QApplication::processEvents();

QApplication::processEvents();

// 将缓冲区重新入队

if (ioctl(fd, VIDIOC_QBUF, &buf) == -1) {

perror("缓冲区入队失败");

break;

}

}

// 清理资源

munmap(buffer_start, buffer_length);

close(fd);

return EXIT_SUCCESS;

}入坑

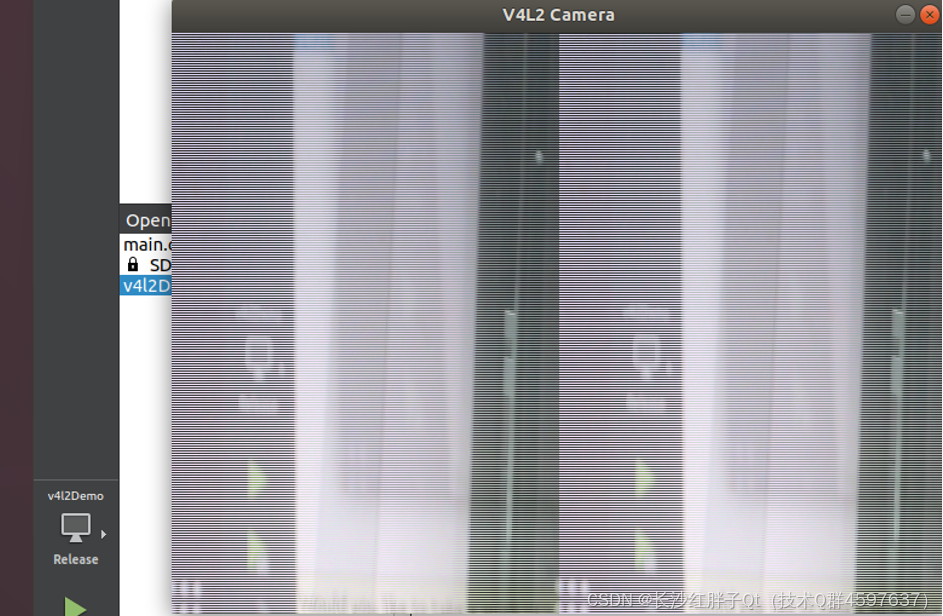

入坑一:v4l2打开视频代码不对

问题

V4l2打开视频代码数据错位

原因

纹理格式不同,但是笔者测试了SDL所有支持的,都不行,不钻了,是需要进行色彩空间转换下才可以(会额外消耗一定延迟,预估10ms以内),我们选个可以的,测试延迟内存即可。

cpp

// SDL_PIXELFORMAT_YV12 = /**< Planar mode: Y + V + U (3 planes) */

// SDL_PIXELFORMAT_IYUV = /**< Planar mode: Y + U + V (3 planes) */

// SDL_PIXELFORMAT_YUY2 = /**< Packed mode: Y0+U0+Y1+V0 (1 plane) */

// SDL_PIXELFORMAT_UYVY = /**< Packed mode: U0+Y0+V0+Y1 (1 plane) */

// SDL_PIXELFORMAT_YVYU = /**< Packed mode: Y0+V0+Y1+U0 (1 plane) */

// SDL_Texture *texture = SDL_CreateTexture(renderer, SDL_PIXELFORMAT_YV12, SDL_TEXTUREACCESS_STREAMING, WIDTH, HEIGHT);

// SDL_Texture *texture = SDL_CreateTexture(renderer, SDL_PIXELFORMAT_IYUV, SDL_TEXTUREACCESS_STREAMING, WIDTH, HEIGHT);

SDL_Texture *texture = SDL_CreateTexture(renderer, SDL_PIXELFORMAT_YUY2, SDL_TEXTUREACCESS_STREAMING, WIDTH, HEIGHT);

// SDL_Texture *texture = SDL_CreateTexture(renderer, SDL_PIXELFORMAT_UYVY, SDL_TEXTUREACCESS_STREAMING, WIDTH, HEIGHT);

// SDL_Texture *texture = SDL_CreateTexture(renderer, SDL_PIXELFORMAT_YVYU, SDL_TEXTUREACCESS_STREAMING, WIDTH, HEIGHT);解决

不解决,选择能看清楚的就好了。

上一篇:《GStreamer开发笔记(二):GStreamer在ubnutn平台部署安装,测试gstreamer/cheese/ffmpeg/fmplayer打摄像头延迟和内存》

下一篇:持续补充中...

本文章博客地址:https://blog.csdn.net/qq21497936/article/details/147714800