Code Overview

python

%matplotlib inline

import math

import torch

from torch import nn

from torch.nn import functional as F

from d2l import torch as d2l

python

batch_size, num_steps = 32, 35

train_iter, vocab = d2l.load_data_time_machine(batch_size, num_steps)

python

# One-hot encoding

python

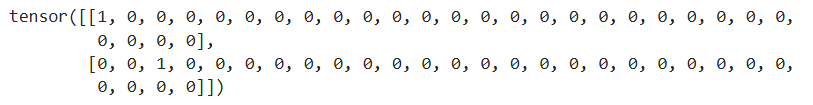

F.one_hot(torch.tensor([0, 2]), len(vocab))

python

# A minibatch is a 2-D tensor of shape (batch size, number of time steps)

python

X = torch.arange(10).reshape((2, 5))

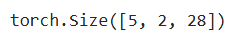

F.one_hot(X.T, 28).shape

python

# Initialize model parameters

python

def get_params(vocab_size, num_hiddens, device):
    num_inputs = num_outputs = vocab_size

    def normal(shape):
        return torch.randn(size=shape, device=device) * 0.01

    # Hidden-layer parameters
    W_xh = normal((num_inputs, num_hiddens))
    W_hh = normal((num_hiddens, num_hiddens))  # Without this line, this is just a single-hidden-layer MLP
    b_h = torch.zeros(num_hiddens, device=device)
    # Output-layer parameters
    W_hq = normal((num_hiddens, num_outputs))
    b_q = torch.zeros(num_outputs, device=device)
    # Attach gradients
    params = [W_xh, W_hh, b_h, W_hq, b_q]
    for param in params:
        param.requires_grad_(True)
    return params

python

# The init_rnn_state function returns the hidden state at initialization

python

def init_rnn_state(batch_size, num_hiddens, device):
    return (torch.zeros((batch_size, num_hiddens), device=device), )

python

# The rnn function below defines how to compute the hidden state and output at each time step

python

def rnn(inputs, state, params):
    # Shape of inputs: (number of time steps, batch size, vocab size)
    W_xh, W_hh, b_h, W_hq, b_q = params
    H, = state
    outputs = []
    # Shape of X: (batch size, vocab size)
    for X in inputs:
        H = torch.tanh(torch.mm(X, W_xh) + torch.mm(H, W_hh) + b_h)
        Y = torch.mm(H, W_hq) + b_q
        outputs.append(Y)
    return torch.cat(outputs, dim=0), (H,)

python

# Create a class that wraps these functions and stores the parameters of the from-scratch RNN model

python

class RNNModelScratch:
    """A recurrent neural network model implemented from scratch."""

    def __init__(self, vocab_size, num_hiddens, device,
                 get_params, init_state, forward_fn):
        self.vocab_size, self.num_hiddens = vocab_size, num_hiddens
        self.params = get_params(vocab_size, num_hiddens, device)
        self.init_state, self.forward_fn = init_state, forward_fn

    def __call__(self, X, state):
        X = F.one_hot(X.T, self.vocab_size).type(torch.float32)
        return self.forward_fn(X, state, self.params)

    def begin_state(self, batch_size, device):
        return self.init_state(batch_size, self.num_hiddens, device)

python

# Check that the outputs have the correct shapes

python

num_hiddens = 512
net = RNNModelScratch(len(vocab), num_hiddens, d2l.try_gpu(), get_params,
                      init_rnn_state, rnn)
state = net.begin_state(X.shape[0], d2l.try_gpu())

python

Y, new_state = net(X.to(d2l.try_gpu()), state)

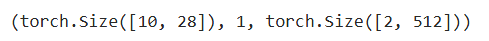

Y.shape, len(new_state), new_state[0].shape

python

# First, define the prediction function that generates new characters after the prefix

python

def predict_ch8(prefix, num_preds, net, vocab, device):
    """Generate new characters following the prefix."""
    state = net.begin_state(batch_size=1, device=device)
    outputs = [vocab[prefix[0]]]
    get_input = lambda: torch.tensor([outputs[-1]], device=device).reshape((1, 1))
    for y in prefix[1:]:  # Warm-up period
        _, state = net(get_input(), state)
        outputs.append(vocab[y])
    for _ in range(num_preds):  # Predict num_preds steps
        y, state = net(get_input(), state)
        outputs.append(int(y.argmax(dim=1).reshape(1)))
    return ''.join([vocab.idx_to_token[i] for i in outputs])

python

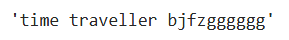

predict_ch8('time traveller ', 10, net, vocab, d2l.try_gpu())

python

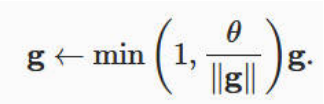

# Gradient clipping

python

def grad_clipping(net, theta):
    """Clip the gradients."""
    if isinstance(net, nn.Module):
        params = [p for p in net.parameters() if p.requires_grad]
    else:
        params = net.params
    norm = torch.sqrt(sum(torch.sum((p.grad ** 2)) for p in params))
    if norm > theta:
        for param in params:
            param.grad[:] *= theta / norm

python

# Define a function that trains the model for one epoch

python

def train_epoch_ch8(net, train_iter, loss, updater, device, use_random_iter):
    """Train the network for one epoch (defined in Chapter 8)."""
    state, timer = None, d2l.Timer()
    metric = d2l.Accumulator(2)  # Sum of training loss, number of tokens
    for X, Y in train_iter:
        if state is None or use_random_iter:
            # Initialize state on the first iteration or when using random sampling
            state = net.begin_state(batch_size=X.shape[0], device=device)
        else:
            if isinstance(net, nn.Module) and not isinstance(state, tuple):
                # For nn.GRU, state is a single tensor
                state.detach_()
            else:
                # For nn.LSTM and for the from-scratch model, state is a tuple of tensors
                for s in state:
                    s.detach_()
        y = Y.T.reshape(-1)
        X, y = X.to(device), y.to(device)
        y_hat, state = net(X, state)
        l = loss(y_hat, y.long()).mean()
        if isinstance(updater, torch.optim.Optimizer):
            updater.zero_grad()
            l.backward()
            grad_clipping(net, 1)
            updater.step()
        else:
            l.backward()
            grad_clipping(net, 1)
            # The loss is already a mean, so use batch_size=1
            updater(batch_size=1)
        metric.add(l * y.numel(), y.numel())
    return math.exp(metric[0] / metric[1]), metric[1] / timer.stop()

python

# The training function supports both the from-scratch model and models built with the high-level API

python

def train_ch8(net, train_iter, vocab, lr, num_epochs, device,
              use_random_iter=False):
    """Train the model (defined in Chapter 8)."""
    loss = nn.CrossEntropyLoss()
    animator = d2l.Animator(xlabel='epoch', ylabel='perplexity',
                            legend=['train'], xlim=[10, num_epochs])
    # Initialize the updater
    if isinstance(net, nn.Module):
        updater = torch.optim.SGD(net.parameters(), lr)
    else:
        updater = lambda batch_size: d2l.sgd(net.params, lr, batch_size)
    predict = lambda prefix: predict_ch8(prefix, 50, net, vocab, device)
    # Train and predict
    for epoch in range(num_epochs):
        ppl, speed = train_epoch_ch8(
            net, train_iter, loss, updater, device, use_random_iter)
        if (epoch + 1) % 10 == 0:
            print(predict('time traveller'))
            animator.add(epoch + 1, [ppl])
    print(f'perplexity {ppl:.1f}, {speed:.1f} tokens/sec on {str(device)}')
    print(predict('time traveller'))
    print(predict('traveller'))

python

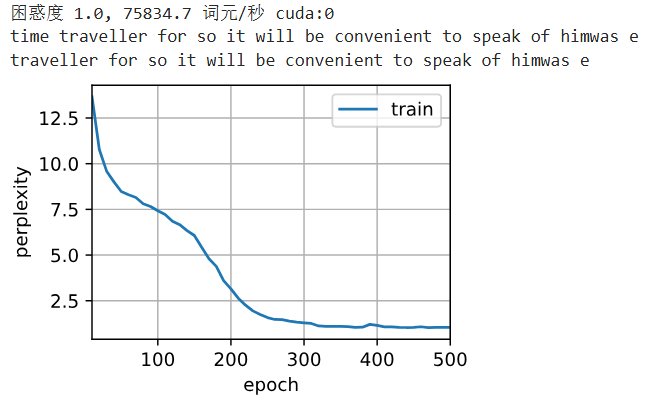

# Now train the RNN model

python

num_epochs, lr = 500, 1

python

train_ch8(net, train_iter, vocab, lr, num_epochs, d2l.try_gpu())

python

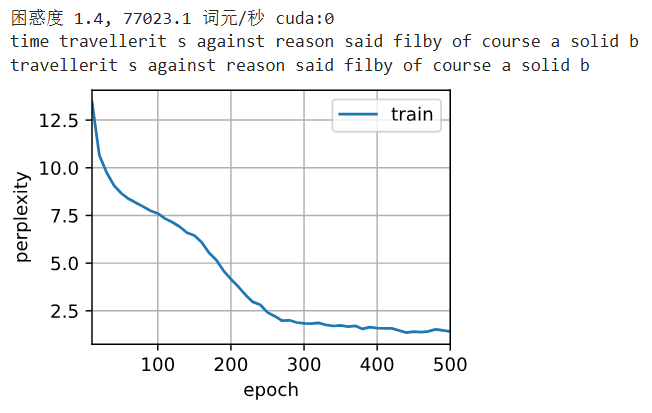

# Finally, check the results of using random sampling

python

net = RNNModelScratch(len(vocab), num_hiddens, d2l.try_gpu(), get_params, init_rnn_state, rnn)

python

train_ch8(net, train_iter, vocab, lr, num_epochs, d2l.try_gpu(), use_random_iter=True)

Code Explanation

1. Initial Setup and Data Preparation

python

%matplotlib inline

import math

import torch

from torch import nn

from torch.nn import functional as F

from d2l import torch as d2l

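What it does:

- %matplotlib inline: renders matplotlib figures inline in a Jupyter Notebook
- import math: imports the standard math module
- import torch: imports the PyTorch deep learning framework
- from torch import nn: imports PyTorch's neural network module
- from torch.nn import functional as F: imports PyTorch's functional API (used here for F.one_hot)
- from d2l import torch as d2l: imports the companion utility library for Dive into Deep Learning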

python

batch_size, num_steps = 32, 35

train_iter, vocab = d2l.load_data_time_machine(batch_size, num_steps)

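What it does:

- Sets the batch size to 32 and the number of time steps to 35
- Loads the Time Machine dataset:
  - d2l.load_data_time_machine() loads and preprocesses the data
  - It returns a data iterator (train_iter) and a vocabulary (vocab)
  - Vocabulary size: 28 tokens (the 26 lowercase letters, the space character, and the <unk> token; punctuation is removed during preprocessing)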

2. Data Preprocessing and Representation

python

# One-hot encoding
F.one_hot(torch.tensor([0, 2]), len(vocab))

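What it does:

- Demonstrates how integer indices are converted to one-hot vectors
- Input: [0, 2] (the indices of two tokens)
- Output: a tensor of shape (2, 28), with one one-hot row per index
- For example: index 0 → [1, 0, 0, ...], index 2 → [0, 0, 1, 0, ...]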

python

# A minibatch is a 2-D tensor of shape (batch size, number of time steps)
X = torch.arange(10).reshape((2, 5))
F.one_hot(X.T, 28).shape

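What it does:

- Creates sample data: 2 sequences of 5 time steps each
- Transposes the data: shape (2, 5) becomes (5, 2)
- Applies one-hot encoding, giving shape (5, 2, 28)
- That is: 5 time steps, 2 sequences, and a 28-dimensional one-hot vector per token. Putting the time dimension first lets the forward pass iterate over time steps with a plain for loop.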
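To make the shape bookkeeping concrete, here is a minimal plain-Python sketch of what the transpose followed by one-hot encoding produces. The helpers one_hot and transpose are illustrative names written for this example; they are not PyTorch or d2l functions.

```python
def one_hot(index, num_classes):
    """Return a list with a 1 at position `index` and 0 elsewhere."""
    return [1 if i == index else 0 for i in range(num_classes)]

def transpose(rows):
    """Swap the two axes of a list of equal-length lists."""
    return [list(col) for col in zip(*rows)]

# Same sample data as torch.arange(10).reshape((2, 5))
X = [[0, 1, 2, 3, 4], [5, 6, 7, 8, 9]]   # (batch size 2, time steps 5)
X_T = transpose(X)                        # (time steps 5, batch size 2)
encoded = [[one_hot(tok, 28) for tok in step] for step in X_T]

shape = (len(encoded), len(encoded[0]), len(encoded[0][0]))
print(shape)    # (5, 2, 28)
print(X_T[0])   # [0, 5]: the first token of each sequence
```

Each entry of encoded corresponds to one time step and holds one one-hot row per sequence in the batch.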

3. Model Parameter Initialization

python

# Initialize model parameters
def get_params(vocab_size, num_hiddens, device):
    num_inputs = num_outputs = vocab_size

    def normal(shape):
        return torch.randn(size=shape, device=device) * 0.01

    # Hidden-layer parameters
    W_xh = normal((num_inputs, num_hiddens))
    W_hh = normal((num_hiddens, num_hiddens))  # Without this line, this is just a single-hidden-layer MLP
    b_h = torch.zeros(num_hiddens, device=device)
    # Output-layer parameters
    W_hq = normal((num_hiddens, num_outputs))
    b_q = torch.zeros(num_outputs, device=device)
    # Attach gradients
    params = [W_xh, W_hh, b_h, W_hq, b_q]
    for param in params:
        param.requires_grad_(True)
    return params

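What it does:

- Initializes the five RNN parameters (shapes shown for vocab_size = 28 and num_hiddens = 512):
  - W_xh: input-to-hidden weights (28×512)
  - W_hh: hidden-to-hidden weights (512×512); this recurrent term is what makes the model an RNN
  - b_h: hidden-layer bias (512,)
  - W_hq: hidden-to-output weights (512×28)
  - b_q: output-layer bias (28,)
- Weights are drawn from a normal distribution with standard deviation 0.01
- Biases are initialized to zero
- Gradient tracking is enabled on every parameter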
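As a quick worked check of these shapes, the following sketch counts the parameters for vocab_size = 28 and num_hiddens = 512. The helper numel is defined here for illustration only.

```python
vocab_size, num_hiddens = 28, 512

shapes = {
    "W_xh": (vocab_size, num_hiddens),
    "W_hh": (num_hiddens, num_hiddens),
    "b_h": (num_hiddens,),
    "W_hq": (num_hiddens, vocab_size),
    "b_q": (vocab_size,),
}

def numel(shape):
    """Number of scalar entries in a tensor of the given shape."""
    n = 1
    for d in shape:
        n *= d
    return n

counts = {name: numel(shape) for name, shape in shapes.items()}
total = sum(counts.values())
print(counts["W_hh"])  # 262144
print(total)           # 291356
```

With these sizes the recurrent matrix W_hh accounts for roughly 90% of all parameters.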

4. Hidden State Initialization

python

# The init_rnn_state function returns the hidden state at initialization
def init_rnn_state(batch_size, num_hiddens, device):
    return (torch.zeros((batch_size, num_hiddens), device=device), )

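What it does:

- Creates the initial hidden state H0
- Shape: (batch_size, num_hiddens), which is (32, 512) for the training minibatches
- All entries are initialized to zero
- Returns a one-element tuple, so the same interface also works for models whose state has several parts, such as LSTM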

5. RNN Forward Pass

python

# The rnn function below defines how to compute the hidden state and output at each time step
def rnn(inputs, state, params):
    # Shape of inputs: (number of time steps, batch size, vocab size)
    W_xh, W_hh, b_h, W_hq, b_q = params
    H, = state
    outputs = []
    # Shape of X: (batch size, vocab size)
    for X in inputs:
        H = torch.tanh(torch.mm(X, W_xh) + torch.mm(H, W_hh) + b_h)
        Y = torch.mm(H, W_hq) + b_q
        outputs.append(Y)
    return torch.cat(outputs, dim=0), (H,)

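What it does:

- Implements the core RNN computation
- For each time step:
  - Computes the new hidden state: H = tanh(X·W_xh + H·W_hh + b_h)
  - Computes the current output: Y = H·W_hq + b_q
- Concatenates the outputs of all time steps along dimension 0
- Returns the output sequence and the final hidden state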
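The recurrence can also be traced by hand on a tiny example. The sketch below reimplements one time step for a single sequence in plain Python; the sizes (3 tokens, 2 hidden units), the weight values, and the helper names matvec and rnn_step are all made up for illustration.

```python
import math

def matvec(x, W):
    """Row vector x (length n) times matrix W (n x m), giving a list of length m."""
    return [sum(x[i] * W[i][j] for i in range(len(x))) for j in range(len(W[0]))]

def rnn_step(x, h, W_xh, W_hh, b_h, W_hq, b_q):
    """One time step: H = tanh(x W_xh + h W_hh + b_h), Y = H W_hq + b_q."""
    pre = [a + b + c for a, b, c in zip(matvec(x, W_xh), matvec(h, W_hh), b_h)]
    h_new = [math.tanh(v) for v in pre]
    y = [a + b for a, b in zip(matvec(h_new, W_hq), b_q)]
    return h_new, y

# Toy parameters (assumed values, chosen only to keep the arithmetic readable)
W_xh = [[0.1, 0.0], [0.0, 0.1], [0.1, 0.1]]
W_hh = [[0.5, 0.0], [0.0, 0.5]]
b_h = [0.0, 0.0]
W_hq = [[1.0, 0.0, -1.0], [0.0, 1.0, -1.0]]
b_q = [0.0, 0.0, 0.0]

inputs = [[1, 0, 0], [0, 1, 0], [0, 0, 1]]  # three one-hot time steps
h = [0.0, 0.0]                              # initial hidden state
outputs = []
for x in inputs:
    h, y = rnn_step(x, h, W_xh, W_hh, b_h, W_hq, b_q)
    outputs.append(y)

print(len(outputs), len(outputs[0]))  # 3 3
print(h)                              # final hidden state after three steps
```

Because h is carried from one iteration to the next, each output depends on all earlier inputs, which is the difference from a feed-forward MLP.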

6. Wrapping the RNN Model

python

# Create a class that wraps these functions and stores the parameters of the from-scratch RNN model
class RNNModelScratch:
    """A recurrent neural network model implemented from scratch."""

    def __init__(self, vocab_size, num_hiddens, device,
                 get_params, init_state, forward_fn):
        self.vocab_size, self.num_hiddens = vocab_size, num_hiddens
        self.params = get_params(vocab_size, num_hiddens, device)
        self.init_state, self.forward_fn = init_state, forward_fn

    def __call__(self, X, state):
        X = F.one_hot(X.T, self.vocab_size).type(torch.float32)
        return self.forward_fn(X, state, self.params)

    def begin_state(self, batch_size, device):
        return self.init_state(batch_size, self.num_hiddens, device)

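What it does:

- Wraps the RNN model in a callable class
- __init__: stores the sizes, creates the parameters, and keeps the state-initialization and forward functions
- __call__:
  - One-hot encodes the transposed input
  - Calls the forward function
- begin_state: creates the initial hidden state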

7. Model Check and Text Generation

python

# Check that the outputs have the correct shapes
num_hiddens = 512
net = RNNModelScratch(len(vocab), num_hiddens, d2l.try_gpu(), get_params,
                      init_rnn_state, rnn)
state = net.begin_state(X.shape[0], d2l.try_gpu())

What it does:

- Instantiates the RNN model
- Creates the initial hidden state for the sample batch (X.shape[0] = 2)

python

Y, new_state = net(X.to(d2l.try_gpu()), state)

Y.shape, len(new_state), new_state[0].shape

What it does:

- Runs a forward pass
- Checks the output shape: (time steps × batch size, vocab size) = (10, 28)
- Checks the hidden-state shape: (batch size, hidden units) = (2, 512)
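The first output dimension is time steps × batch size because rnn concatenates the per-step outputs along dimension 0. The sketch below, in plain Python with illustrative variable names, shows the resulting row order and why flattening the transposed labels (as Y.T.reshape(-1) does in the training code) lines up with it.

```python
batch_size, num_steps = 2, 5
# Labels laid out like a minibatch: (batch size, time steps)
Y = [[0, 1, 2, 3, 4], [5, 6, 7, 8, 9]]

# Row order after concatenating per-step outputs along dimension 0:
# step 0 for every sequence, then step 1 for every sequence, and so on
row_order = [(t, b) for t in range(num_steps) for b in range(batch_size)]

# Transposing and then flattening the labels gives the same ordering
flat_labels = [Y[b][t] for t in range(num_steps) for b in range(batch_size)]

print(row_order[:4])  # [(0, 0), (0, 1), (1, 0), (1, 1)]
print(flat_labels)    # [0, 5, 1, 6, 2, 7, 3, 8, 4, 9]
```

Flattening the labels without the transpose would pair each output row with the wrong target.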

python

# First, define the prediction function that generates new characters after the prefix
def predict_ch8(prefix, num_preds, net, vocab, device):
    """Generate new characters following the prefix."""
    state = net.begin_state(batch_size=1, device=device)
    outputs = [vocab[prefix[0]]]
    get_input = lambda: torch.tensor([outputs[-1]], device=device).reshape((1, 1))
    for y in prefix[1:]:  # Warm-up period
        _, state = net(get_input(), state)
        outputs.append(vocab[y])
    for _ in range(num_preds):  # Predict num_preds steps
        y, state = net(get_input(), state)
        outputs.append(int(y.argmax(dim=1).reshape(1)))
    return ''.join([vocab.idx_to_token[i] for i in outputs])

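What it does:

- Initializes the hidden state for a batch of size 1
- Warm-up: feeds the prefix characters through the model to build up the hidden state; the model's outputs are discarded
- Prediction: repeatedly feeds the last character back in and takes the argmax of the output as the next character
- Converts the collected indices back into a string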
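The warm-up and prediction phases can be illustrated without a trained network. In the sketch below, toy_step is a hypothetical stand-in for the model: it always scores the next letter of "abc" highest and simply records what it has seen as its state.

```python
def toy_step(ch, state):
    """Hypothetical stand-in for net(...): returns (scores, new_state)."""
    alphabet = "abc"
    nxt = alphabet[(alphabet.index(ch) + 1) % len(alphabet)]
    scores = {c: (1.0 if c == nxt else 0.0) for c in alphabet}
    return scores, state + ch  # the state just records the inputs seen so far

def generate(prefix, num_preds):
    state = ""
    outputs = [prefix[0]]
    for ch in prefix[1:]:       # warm-up: update the state, discard the scores
        _, state = toy_step(outputs[-1], state)
        outputs.append(ch)
    for _ in range(num_preds):  # prediction: feed back the highest-scoring character
        scores, state = toy_step(outputs[-1], state)
        outputs.append(max(scores, key=scores.get))
    return "".join(outputs), state

text, state = generate("ab", 4)
print(text)   # abcabc
print(state)  # abcab
```

As in predict_ch8, the input at every step is the last element of outputs, whether it came from the prefix or from the previous prediction.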

8. Training Preparation: Gradient Clipping

python

# Gradient clipping
def grad_clipping(net, theta):
    """Clip the gradients."""
    if isinstance(net, nn.Module):
        params = [p for p in net.parameters() if p.requires_grad]
    else:
        params = net.params
    norm = torch.sqrt(sum(torch.sum((p.grad ** 2)) for p in params))
    if norm > theta:
        for param in params:
            param.grad[:] *= theta / norm

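What it does:

- Guards against exploding gradients
- Computes the L2 norm over the gradients of all parameters taken together
- If the norm exceeds the threshold theta (1 in the training loop), rescales every gradient by theta / norm, so the direction is kept and the norm becomes theta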
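A small numeric example makes the rescaling concrete. The sketch below mirrors the same rule in plain Python on lists of numbers; clip_gradients is an illustrative helper, not part of d2l or PyTorch.

```python
import math

def clip_gradients(grads, theta):
    """Rescale a list of gradient vectors so their joint L2 norm is at most theta."""
    norm = math.sqrt(sum(g * g for vec in grads for g in vec))
    if norm > theta:
        scale = theta / norm
        grads = [[g * scale for g in vec] for vec in grads]
    return grads, norm

# Two "parameters" whose gradients have joint norm sqrt(9 + 16) = 5
grads = [[3.0], [0.0, 4.0]]
clipped, norm_before = clip_gradients(grads, theta=1.0)
norm_after = math.sqrt(sum(g * g for vec in clipped for g in vec))

print(norm_before)  # 5.0
print(clipped)      # approximately [[0.6], [0.0, 0.8]]
print(norm_after)   # approximately 1.0
```

Gradients whose joint norm is already below theta pass through unchanged.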

9. Training Loop Implementation

python

# Define a function that trains the model for one epoch
def train_epoch_ch8(net, train_iter, loss, updater, device, use_random_iter):
    """Train the network for one epoch (defined in Chapter 8)."""
    state, timer = None, d2l.Timer()
    metric = d2l.Accumulator(2)  # Sum of training loss, number of tokens
    for X, Y in train_iter:
        if state is None or use_random_iter:
            # Initialize state on the first iteration or when using random sampling
            state = net.begin_state(batch_size=X.shape[0], device=device)
        else:
            if isinstance(net, nn.Module) and not isinstance(state, tuple):
                # For nn.GRU, state is a single tensor
                state.detach_()
            else:
                # For nn.LSTM and for the from-scratch model, state is a tuple of tensors
                for s in state:
                    s.detach_()
        y = Y.T.reshape(-1)
        X, y = X.to(device), y.to(device)
        y_hat, state = net(X, state)
        l = loss(y_hat, y.long()).mean()
        if isinstance(updater, torch.optim.Optimizer):
            updater.zero_grad()
            l.backward()
            grad_clipping(net, 1)
            updater.step()
        else:
            l.backward()
            grad_clipping(net, 1)
            # The loss is already a mean, so use batch_size=1
            updater(batch_size=1)
        metric.add(l * y.numel(), y.numel())
    return math.exp(metric[0] / metric[1]), metric[1] / timer.stop()

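What it does:

- Manages the hidden state: initializes it, or detaches it from the previous minibatch's computation graph
- Prepares the data (flattens the labels and moves tensors to the device)
- Runs the forward pass
- Computes the cross-entropy loss
- Runs the backward pass
- Clips the gradients
- Updates the parameters
- Returns the perplexity (the exponential of the average per-token loss) and the training speed in tokens per second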
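Perplexity is the exponential of the average per-token cross-entropy, which is what math.exp(metric[0] / metric[1]) computes. The plain-Python sketch below checks two reference points; the function perplexity and its input format are assumptions made for this example.

```python
import math

def perplexity(probs_of_correct_token):
    """exp of the mean negative log-likelihood over all tokens."""
    nll = [-math.log(p) for p in probs_of_correct_token]
    return math.exp(sum(nll) / len(nll))

uniform = perplexity([1 / 28] * 100)  # model guesses uniformly over 28 tokens
perfect = perplexity([1.0] * 100)     # model always assigns probability 1 to the right token
print(round(uniform, 6))  # 28.0
print(perfect)            # 1.0
```

A freshly initialized model should therefore start near a perplexity of 28 on this vocabulary, and training aims to push it toward 1.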

python

# The training function supports both the from-scratch model and models built with the high-level API
def train_ch8(net, train_iter, vocab, lr, num_epochs, device,
              use_random_iter=False):
    """Train the model (defined in Chapter 8)."""
    loss = nn.CrossEntropyLoss()
    animator = d2l.Animator(xlabel='epoch', ylabel='perplexity',
                            legend=['train'], xlim=[10, num_epochs])
    # Initialize the updater
    if isinstance(net, nn.Module):
        updater = torch.optim.SGD(net.parameters(), lr)
    else:
        updater = lambda batch_size: d2l.sgd(net.params, lr, batch_size)
    predict = lambda prefix: predict_ch8(prefix, 50, net, vocab, device)
    # Train and predict
    for epoch in range(num_epochs):
        ppl, speed = train_epoch_ch8(
            net, train_iter, loss, updater, device, use_random_iter)
        if (epoch + 1) % 10 == 0:
            print(predict('time traveller'))
            animator.add(epoch + 1, [ppl])
    print(f'perplexity {ppl:.1f}, {speed:.1f} tokens/sec on {str(device)}')
    print(predict('time traveller'))
    print(predict('traveller'))

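What it does:

- Sets up the loss function and the plot
- Initializes the updater: torch.optim.SGD for an nn.Module, d2l.sgd for the from-scratch model
- Every 10 epochs, generates sample text and adds a point to the perplexity curve
- Prints the final perplexity, training speed, and two sample predictions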
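The training loop calls updater(batch_size=1) because the loss has already been averaged. The sketch below shows why, using a plain-Python update rule p ← p − lr · g / batch_size, which is how d2l.sgd is understood to work here; sgd_step and the numbers are illustrative assumptions.

```python
def sgd_step(params, grads, lr, batch_size):
    """Minibatch SGD update: p <- p - lr * g / batch_size."""
    return [p - lr * g / batch_size for p, g in zip(params, grads)]

params = [1.0, -2.0]
summed_grads = [8.0, 4.0]  # gradient of a loss summed over 4 examples
mean_grads = [2.0, 1.0]    # gradient of the same loss averaged over 4 examples

from_sum = sgd_step(params, summed_grads, lr=0.1, batch_size=4)
from_mean = sgd_step(params, mean_grads, lr=0.1, batch_size=1)
print(from_sum)
print(from_mean)
```

Both calls give the same update, so dividing by the real batch size again after taking the mean would shrink the step by that factor.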

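10. Running the Training

python

# Train the RNN model
num_epochs, lr = 500, 1

What it does: sets the number of epochs (500) and the learning rate (1).

python

train_ch8(net, train_iter, vocab, lr, num_epochs, d2l.try_gpu())

What it does: runs training with sequential partitioning (the default, use_random_iter=False).

python

# Finally, check the results of using random sampling
net = RNNModelScratch(len(vocab), num_hiddens, d2l.try_gpu(), get_params, init_rnn_state, rnn)

What it does: re-initializes the model so that the comparison is fair.

python

train_ch8(net, train_iter, vocab, lr, num_epochs, d2l.try_gpu(), use_random_iter=True)

What it does: runs training in random-sampling mode, where the hidden state is re-initialized for every minibatch. Note that this call reuses the train_iter loaded earlier; the flag itself only changes how the hidden state is handled between minibatches.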

Summary of the Key Execution Flows

1. Data flow

- Text → character indices → one-hot encoding
- Input shape: (batch size, time steps) → (time steps, batch size, vocab size)
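The first two arrows of the data flow can be sketched in plain Python. The vocabulary built below is a hypothetical one made from a single phrase, with <unk> at index 0 and the remaining characters sorted by frequency; the real d2l vocabulary is built from the whole corpus, so its indices will differ.

```python
text = "time traveller"

# Hypothetical vocabulary: <unk> first, then characters by descending frequency
counts = {}
for ch in text:
    counts[ch] = counts.get(ch, 0) + 1
idx_to_token = ["<unk>"] + sorted(counts, key=lambda c: (-counts[c], c))
token_to_idx = {tok: i for i, tok in enumerate(idx_to_token)}

# Text -> character indices (unknown characters map to index 0)
indices = [token_to_idx.get(ch, 0) for ch in text]

# Character indices -> one-hot vectors
vocab_size = len(idx_to_token)
one_hot = [[1 if i == idx else 0 for i in range(vocab_size)] for idx in indices]

print(vocab_size)                   # 10
print(len(one_hot), len(one_hot[0]))  # 14 10
```

Mapping the indices back through idx_to_token recovers the original text, which is the step predict_ch8 performs at the end of generation.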

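2. Model flow

text

Input X → one-hot encoding → RNN cell → hidden state H → output Y
                                ↑             ↓
                                └─────[H]─────┘

3. Training flow

text

for epoch in range(500):
    initialize the hidden state
    for batch in data iterator:
        forward pass → compute loss → backward pass → clip gradients → update parameters
    every 10 epochs: generate text and plot the perplexity

4. Text generation flow

text

Given a prefix → warm up the state → generate characters in a loop → join the result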