Water benefits all things and does not contend; it settles in the places people disdain, and so it is close to the Tao 💦

Reading MySQL changes with Flink CDC through the DataStream API and printing them to the console

Contents

- 1. Add the dependencies
- 2. Write the code
- 3. Start the job
- Related errors
  - 1. JsonDebeziumDeserializationSchema type conversion error
  - 2. Method getStaticJavaEncodingForMysqlCharset(Ljava/lang/String;)Ljava/lang/String not found

1. Add the dependencies

```xml
<properties>
    <maven.compiler.source>8</maven.compiler.source>
    <maven.compiler.target>8</maven.compiler.target>
    <flink-version>1.18.0</flink-version>
    <project.build.sourceEncoding>UTF-8</project.build.sourceEncoding>
</properties>

<dependencies>
    <dependency>
        <groupId>org.apache.flink</groupId>
        <artifactId>flink-java</artifactId>
        <version>${flink-version}</version>
    </dependency>
    <dependency>
        <groupId>org.apache.flink</groupId>
        <artifactId>flink-streaming-java</artifactId>
        <version>${flink-version}</version>
    </dependency>
    <dependency>
        <groupId>org.apache.flink</groupId>
        <artifactId>flink-clients</artifactId>
        <version>${flink-version}</version>
    </dependency>
    <dependency>
        <groupId>org.apache.flink</groupId>
        <artifactId>flink-table-planner_2.12</artifactId>
        <version>${flink-version}</version>
    </dependency>
    <dependency>
        <groupId>org.apache.flink</groupId>
        <artifactId>flink-table-runtime</artifactId>
        <version>${flink-version}</version>
    </dependency>
    <dependency>
        <groupId>org.apache.flink</groupId>
        <artifactId>flink-table-api-java-bridge</artifactId>
        <version>${flink-version}</version>
    </dependency>
    <dependency>
        <groupId>org.apache.flink</groupId>
        <artifactId>flink-connector-base</artifactId>
        <version>${flink-version}</version>
    </dependency>
    <dependency>
        <groupId>com.ververica</groupId>
        <artifactId>flink-connector-mysql-cdc</artifactId>
        <version>3.0.0</version>
    </dependency>
    <dependency>
        <groupId>mysql</groupId>
        <artifactId>mysql-connector-java</artifactId>
        <version>8.0.31</version>
    </dependency>
    <dependency>
        <groupId>com.ververica</groupId>
        <artifactId>flink-connector-debezium</artifactId>
        <version>3.0.0</version>
    </dependency>
</dependencies>

<build>
    <plugins>
        <plugin>
            <groupId>org.apache.maven.plugins</groupId>
            <artifactId>maven-assembly-plugin</artifactId>
            <version>3.0.0</version>
            <configuration>
                <descriptorRefs>
                    <descriptorRef>jar-with-dependencies</descriptorRef>
                </descriptorRefs>
            </configuration>
            <executions>
                <execution>
                    <id>make-assembly</id>
                    <phase>package</phase>
                    <goals>
                        <goal>single</goal>
                    </goals>
                </execution>
            </executions>
        </plugin>
    </plugins>
</build>
```

2. Write the code

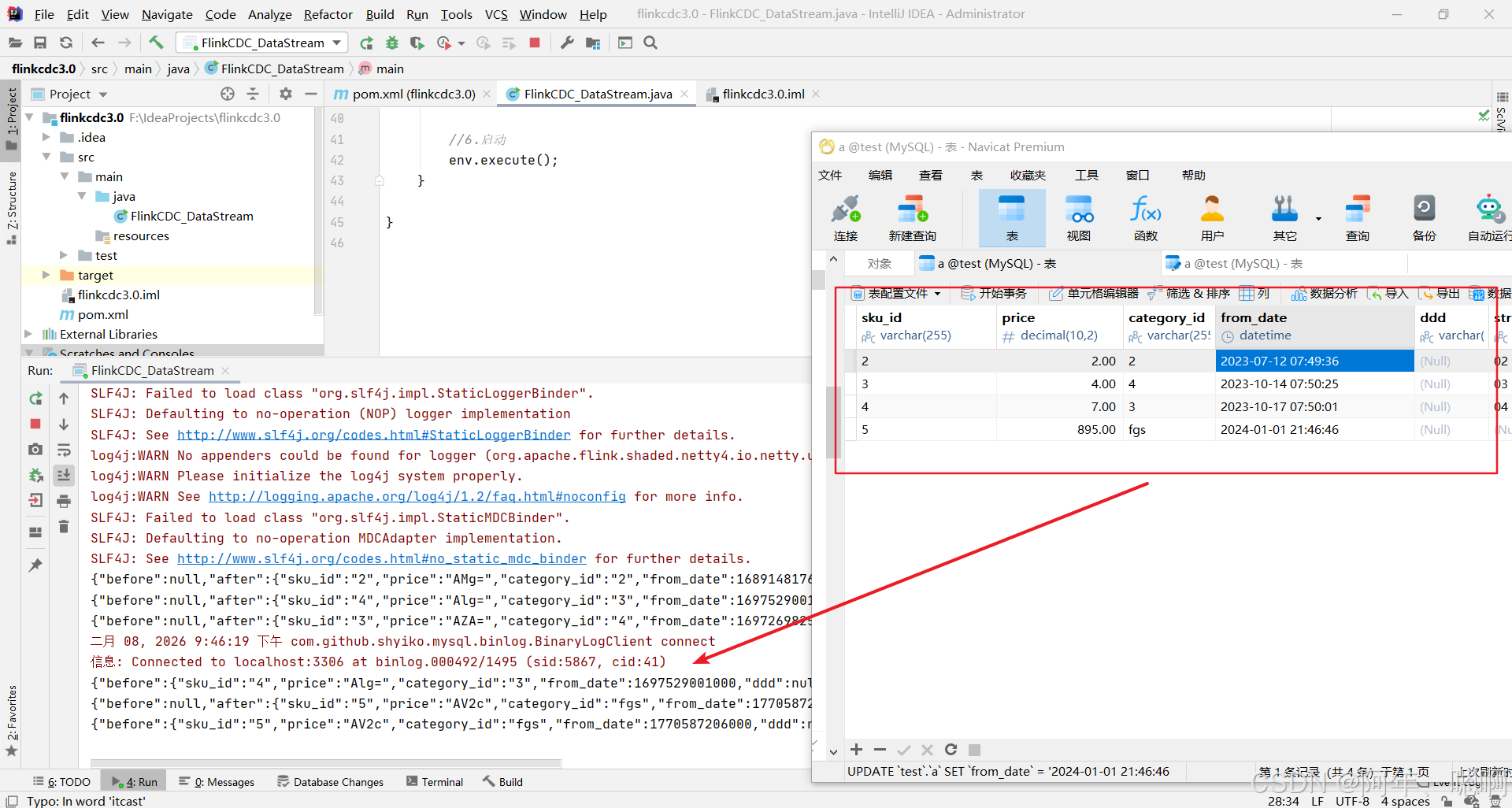

Print the captured CDC change events to the console.

```java
import com.ververica.cdc.connectors.mysql.source.MySqlSource;
import com.ververica.cdc.connectors.mysql.table.StartupOptions;
import com.ververica.cdc.debezium.JsonDebeziumDeserializationSchema;
import org.apache.flink.api.common.eventtime.WatermarkStrategy;
import org.apache.flink.streaming.api.datastream.DataStreamSource;
import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;

/**
 * Author: Pepsi
 * Date: 2026/2/8
 * Desc: Read MySQL change events with Flink CDC (DataStream API) and print them
 */
public class FlinkCDC_DataStream {
    public static void main(String[] args) throws Exception {
        // 1. Get the Flink execution environment
        StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();
        env.setParallelism(1);

        // 2. Enable checkpointing (omitted in this example)

        // 3. Build the MySqlSource with Flink CDC
        MySqlSource<String> mySqlSource = MySqlSource.<String>builder()
                .hostname("localhost")
                .port(3306)
                .username("root")
                .password("xxxxxx")
                .databaseList("test")
                // Table names must be prefixed with the database name.
                // If tableList is omitted, every table in the listed databases is monitored.
                .tableList("test.a")
                .startupOptions(StartupOptions.initial())
                // Emits each change record as a JSON String
                .deserializer(new JsonDebeziumDeserializationSchema())
                .build();

        // 4. Read the data
        DataStreamSource<String> mysqlDS =
                env.fromSource(mySqlSource, WatermarkStrategy.noWatermarks(), "mysql-source");

        // 5. Print
        mysqlDS.print();

        // 6. Start the job
        env.execute();
    }
}
```

3. Start the job
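Once the job is running, every change is printed as one JSON string in the Debezium envelope format, with fields such as `before`, `after`, `source`, and `op`. The following is a minimal sketch, using only the JDK, of how the `op` code could be pulled out of such a line and turned into a readable label. The class name `ChangeEventOp`, its helper methods, and the sample record are hypothetical and not part of the original post; the regular expression is a shortcut that could misfire if a table has a column literally named `op`, so a real job would more likely use a JSON library.

```java
import java.util.regex.Matcher;
import java.util.regex.Pattern;

public class ChangeEventOp {

    // Matches an "op" field holding a single-letter code, e.g. "op":"c".
    private static final Pattern OP_FIELD = Pattern.compile("\"op\"\\s*:\\s*\"([a-z])\"");

    /** Returns the raw op code ("r", "c", "u", "d"), or null if no op field is found. */
    static String extractOp(String json) {
        Matcher m = OP_FIELD.matcher(json);
        return m.find() ? m.group(1) : null;
    }

    /** Maps a Debezium op code to a human-readable label. */
    static String describe(String op) {
        if (op == null) {
            return "unknown";
        }
        switch (op) {
            case "r": return "snapshot read";
            case "c": return "insert";
            case "u": return "update";
            case "d": return "delete";
            default:  return "unknown";
        }
    }

    public static void main(String[] args) {
        // Hypothetical, shortened record; real output also carries a "source" block.
        String sample = "{\"before\":null,\"after\":{\"id\":1,\"name\":\"x\"},\"op\":\"c\",\"ts_ms\":0}";
        System.out.println(describe(extractOp(sample))); // prints "insert"
    }
}
```

With `StartupOptions.initial()`, the rows that already exist in the table are expected to show up first with the `r` code, followed by `c`, `u`, and `d` events as the table changes.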

Related errors

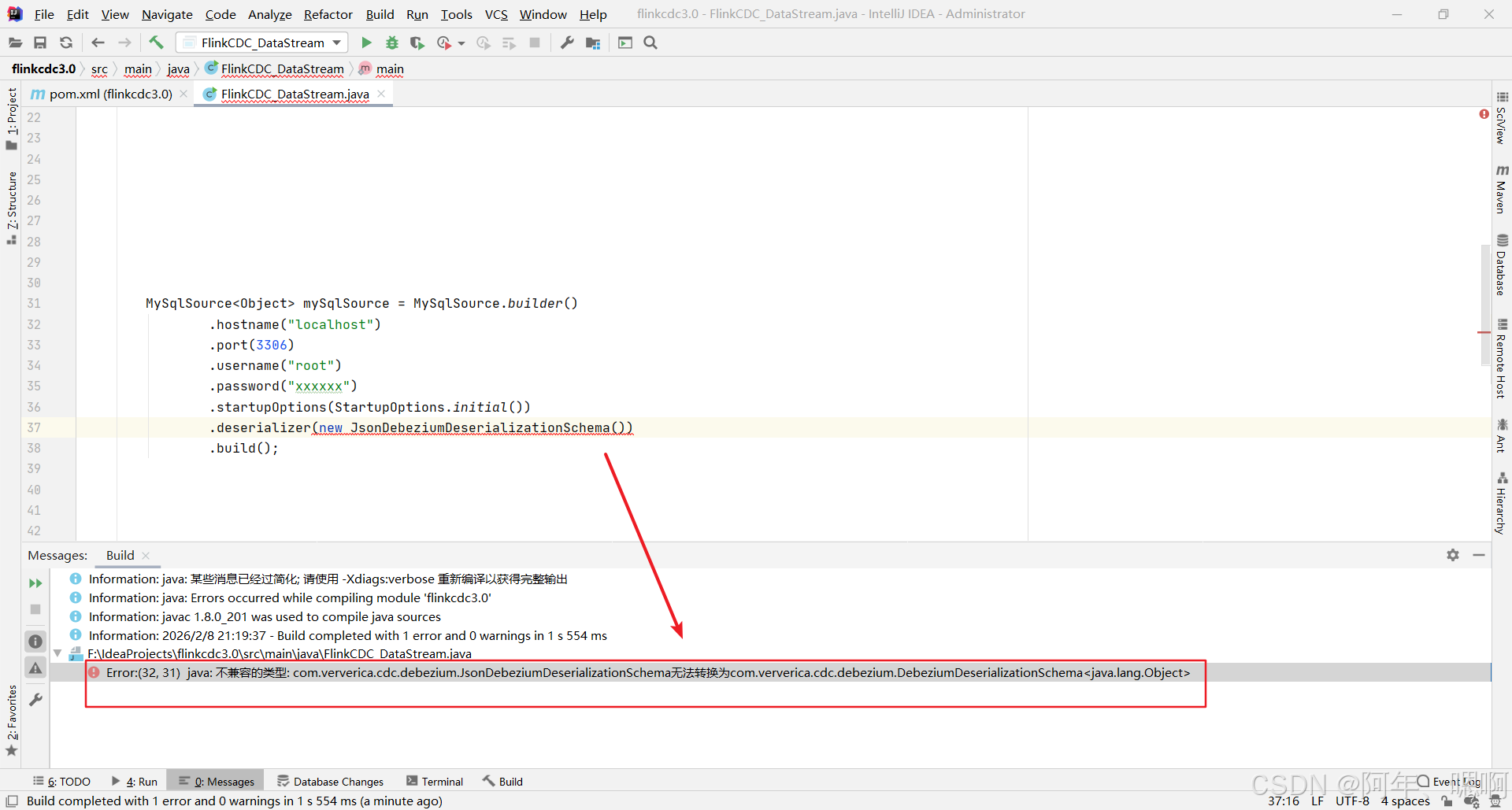

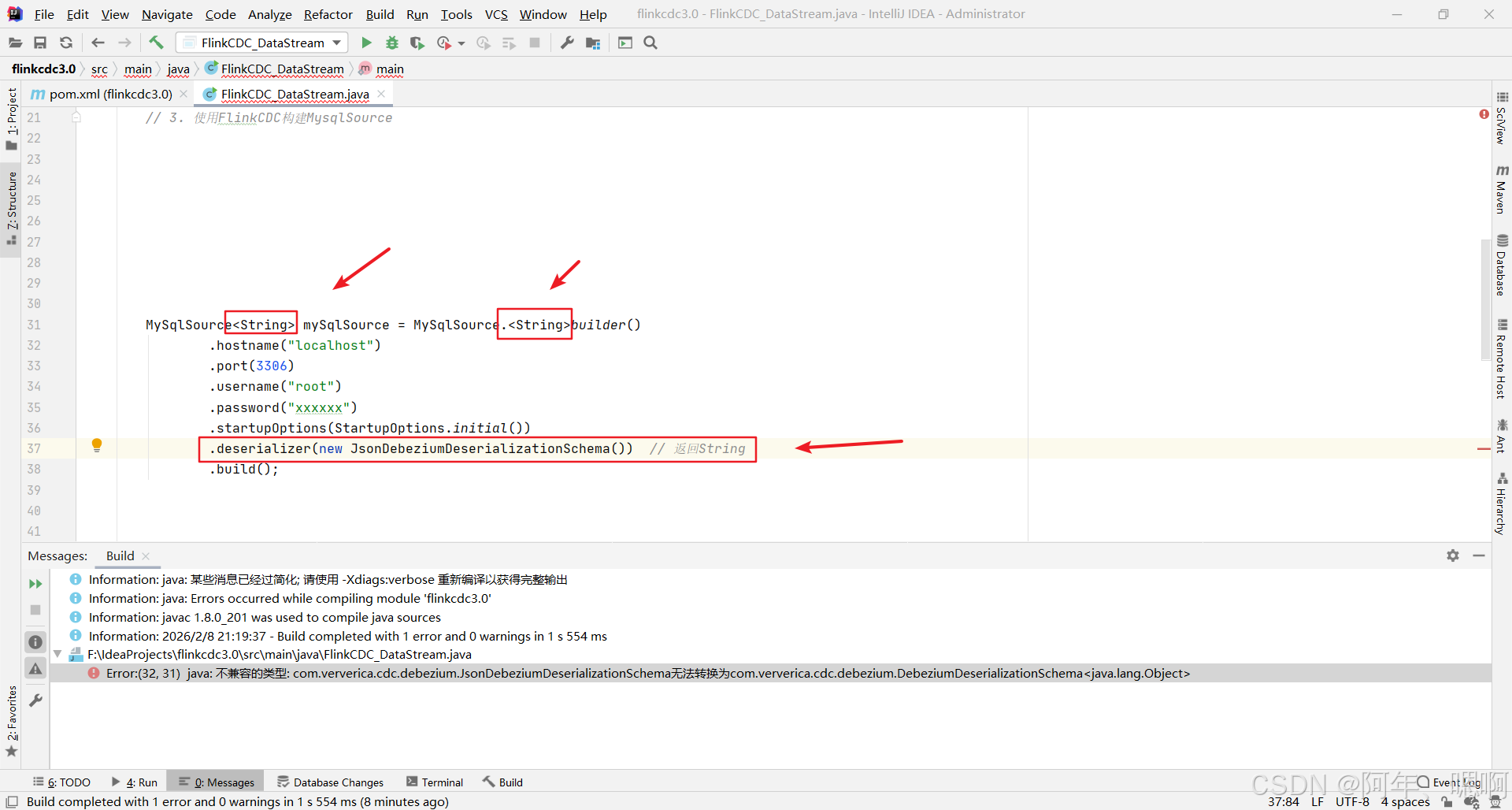

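1. JsonDebeziumDeserializationSchema type conversion error

Error:(32, 31) java: incompatible types: com.ververica.cdc.debezium.JsonDebeziumDeserializationSchema cannot be converted to com.ververica.cdc.debezium.DebeziumDeserializationSchema

The fix is to set the generic type to String: declare the source as MySqlSource<String> and build it with MySqlSource.<String>builder().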
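The sketch below illustrates one way this kind of "incompatible types" error can arise and why a String type argument resolves it. It does not use Flink CDC at all: `Deserializer`, `JsonDeserializer`, and `Source` are hypothetical stand-ins for `DebeziumDeserializationSchema`, `JsonDebeziumDeserializationSchema`, and `MySqlSource`, written only to mirror the shape of the builder call.

```java
public class GenericSourceDemo {

    // Hypothetical stand-in for DebeziumDeserializationSchema<T>.
    interface Deserializer<T> {
        T deserialize(String rawRecord);
    }

    // Hypothetical stand-in for JsonDebeziumDeserializationSchema: always produces String.
    static class JsonDeserializer implements Deserializer<String> {
        @Override
        public String deserialize(String rawRecord) {
            return "{\"value\":\"" + rawRecord + "\"}";
        }
    }

    // Hypothetical stand-in for MySqlSource<T> and its builder.
    static class Source<T> {
        private final Deserializer<T> deserializer;

        private Source(Deserializer<T> deserializer) {
            this.deserializer = deserializer;
        }

        T read(String rawRecord) {
            return deserializer.deserialize(rawRecord);
        }

        static <T> Builder<T> builder() {
            return new Builder<>();
        }

        static class Builder<T> {
            private Deserializer<T> deserializer;

            Builder<T> deserializer(Deserializer<T> deserializer) {
                this.deserializer = deserializer;
                return this;
            }

            Source<T> build() {
                return new Source<>(deserializer);
            }
        }
    }

    public static void main(String[] args) {
        // Compiles: the builder's type argument matches Deserializer<String>.
        Source<String> ok = Source.<String>builder()
                .deserializer(new JsonDeserializer())
                .build();
        System.out.println(ok.read("row")); // prints {"value":"row"}

        // Does not compile ("incompatible types"), because JsonDeserializer
        // is a Deserializer<String>, not a Deserializer<Integer>:
        // Source<Integer> bad = Source.<Integer>builder()
        //         .deserializer(new JsonDeserializer())
        //         .build();
    }
}
```

The point of the design is that the type argument on the source, the builder, and the deserializer must all agree; since the JSON deserializer is fixed to String, the other two have to be String as well.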

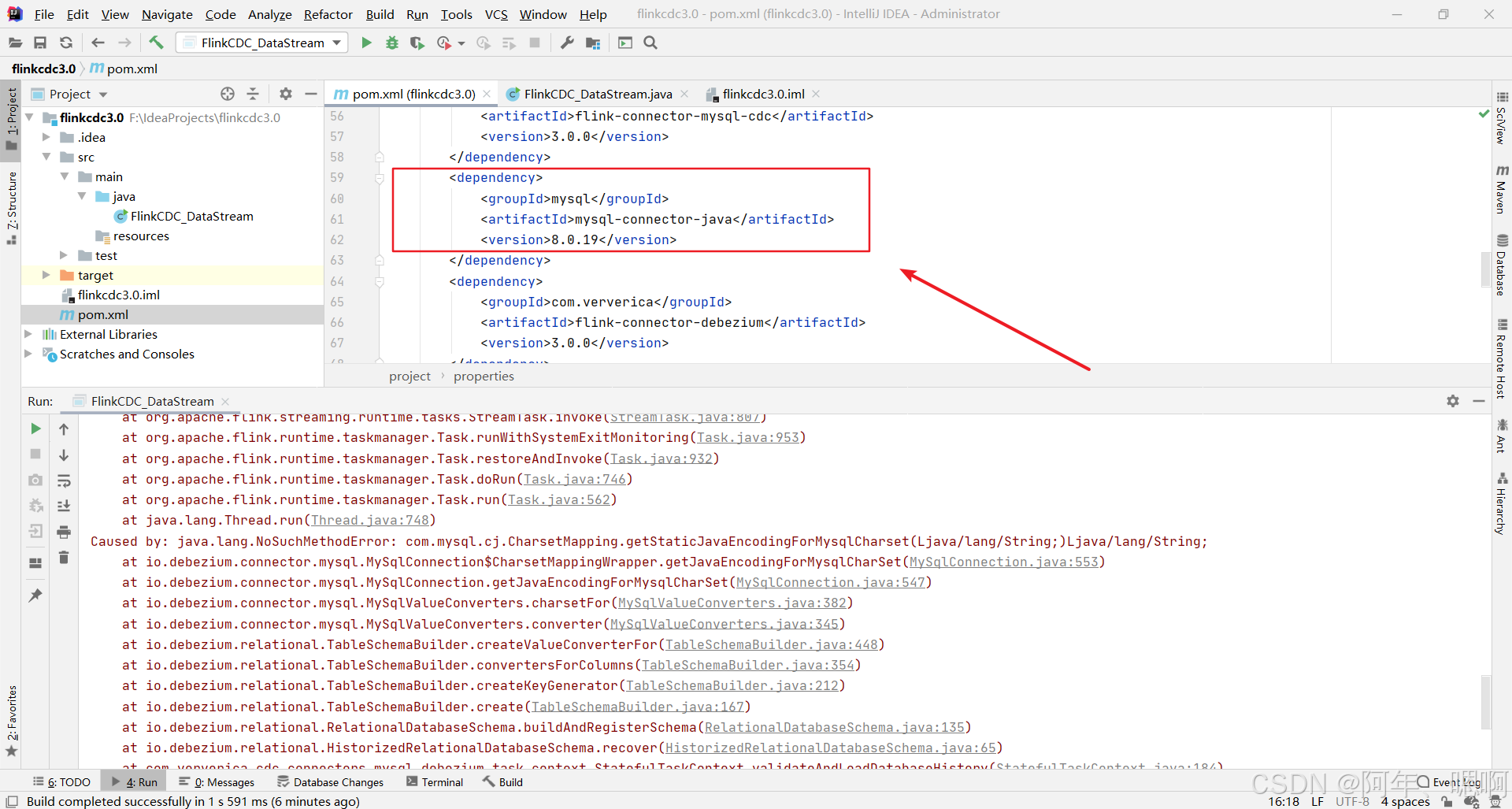

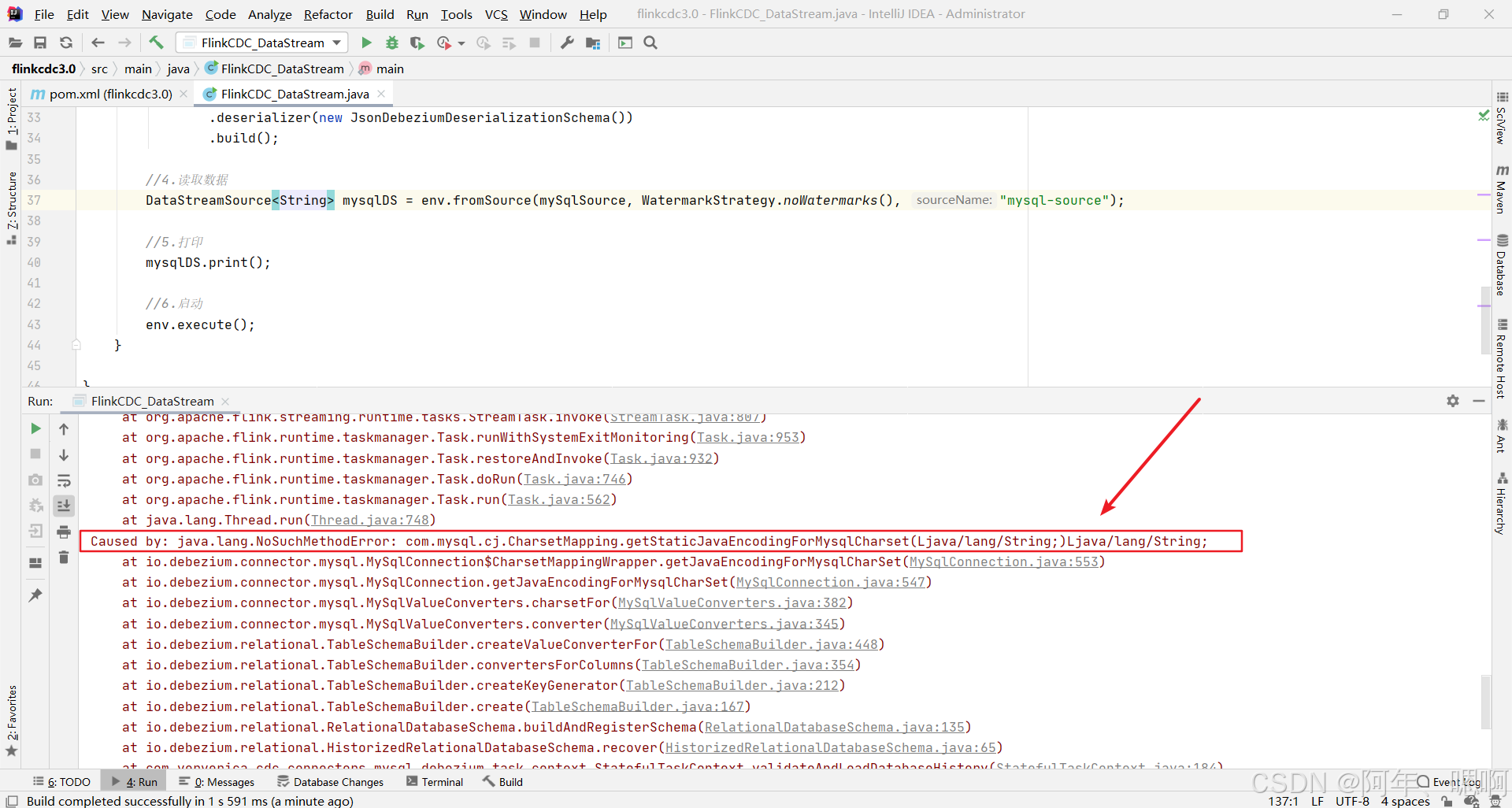

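2. Method getStaticJavaEncodingForMysqlCharset(Ljava/lang/String;)Ljava/lang/String not found

The MySQL connector JAR (mysql-connector-java) on the classpath is too old and does not contain this method. Upgrading it to 8.0.31 resolves the error.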
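To confirm which connector version actually ends up on the classpath, a small reflection check can help. The sketch below is a hedged example: the helper class `ConnectorMethodCheck` is hypothetical, and the owning class name `com.mysql.cj.CharsetMapping` is an assumption that the original post does not state, so adjust it to whatever class your stack trace names.

```java
import java.lang.reflect.Method;

public class ConnectorMethodCheck {

    /** Reports whether className declares a public method methodName(String). */
    static String check(String className, String methodName) {
        try {
            Class<?> clazz = Class.forName(className);
            Method m = clazz.getMethod(methodName, String.class);
            return "found: " + m;
        } catch (ClassNotFoundException e) {
            return "class not on classpath: " + className;
        } catch (NoSuchMethodException e) {
            return "method missing: " + methodName;
        }
    }

    public static void main(String[] args) {
        // Assumption: the method lives in com.mysql.cj.CharsetMapping (not stated in the post).
        System.out.println(check("com.mysql.cj.CharsetMapping",
                "getStaticJavaEncodingForMysqlCharset"));
    }
}
```

Run it with the same classpath as the Flink job. A "method missing" result suggests that an older connector JAR is still being picked up, for example through a transitive dependency.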