Contents

- Related links

- Environment setup:

- Key functions:

- Complete RK3588 model inference module:

- RK3588 model conversion:

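Related links

CPython wrapper around a C++ API inference module for the Rockchip RV1126 (more than 5x faster than the python-api)

[YOLOv5] Inference module based on the Rockchip RV1126 python-api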

Environment setup:

Hardware: Rockchip RK3588

OS: Ubuntu 20.04

Language: Python 3.8

Third-party dependencies: rknn_toolkit_lite2, rknn_toolkit2

Architecture: aarch64

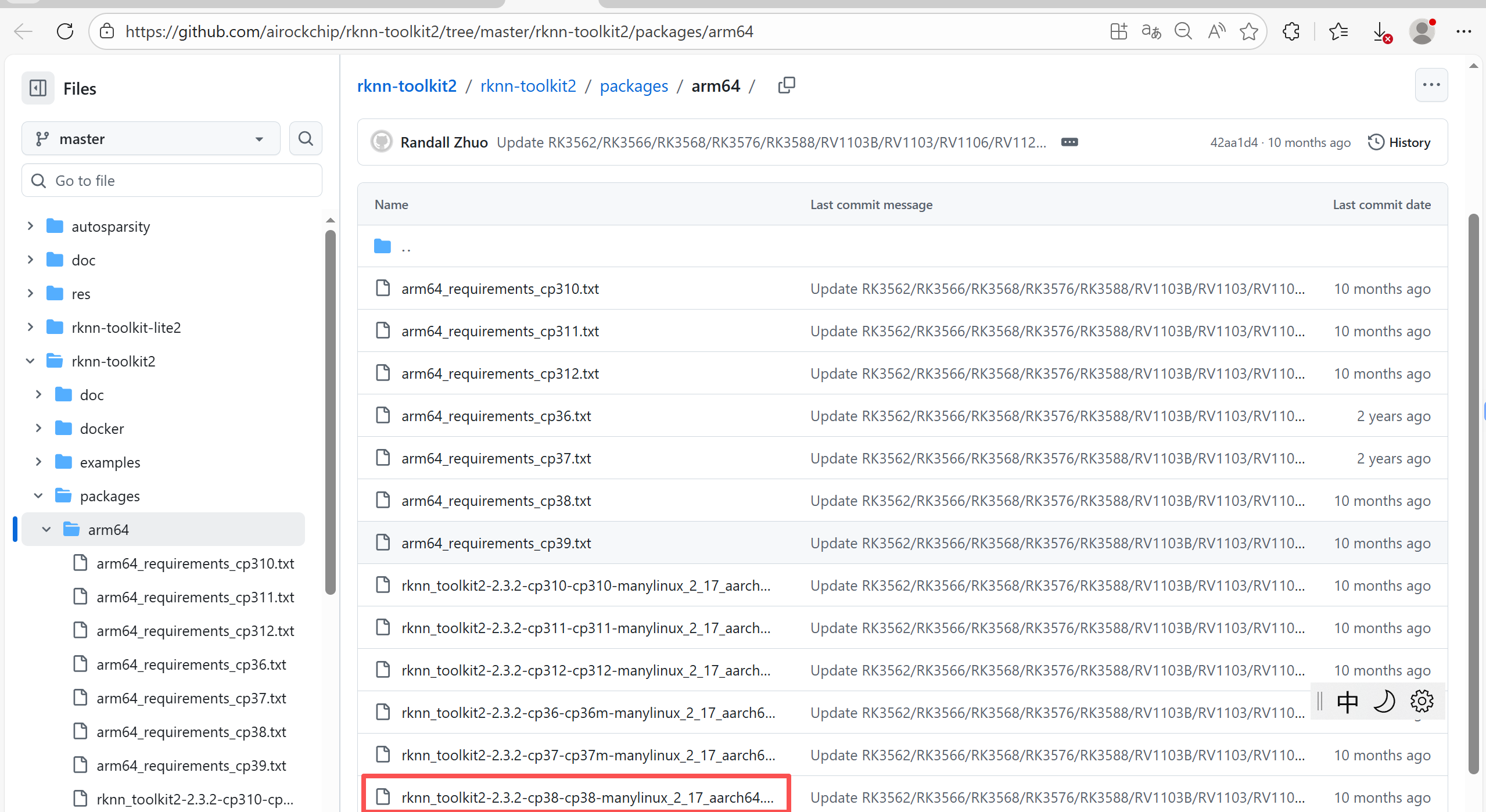

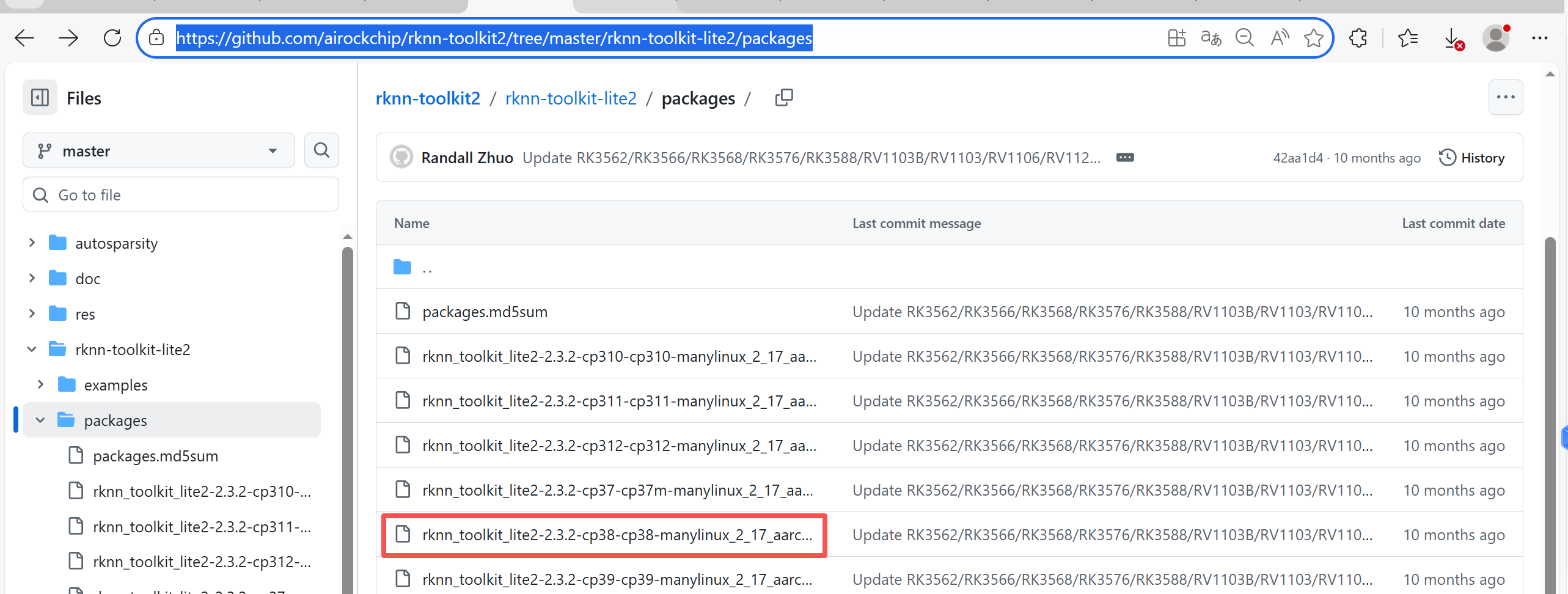

Dependency download links:

Choose the package that matches your Python version and architecture (x86/arm64):

https://github.com/airockchip/rknn-toolkit2/tree/master/rknn-toolkit2/packages/arm64

https://github.com/airockchip/rknn-toolkit2/tree/master/rknn-toolkit-lite2/packages
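To pick the right wheel from the two directories above, it helps to know the interpreter tag and CPU architecture of the machine you are installing on. The sketch below prints both using only the standard library. The helper name `wheel_tags` is made up for illustration, and it assumes the wheel filenames carry a `cpXY` tag and an architecture suffix.

```python
import platform
import sys


def wheel_tags():
    """Return the (python_tag, arch) pair a matching wheel filename should contain."""
    python_tag = f"cp{sys.version_info.major}{sys.version_info.minor}"
    arch = platform.machine().lower()
    # Normalize common spellings: Linux reports aarch64, some tools say arm64
    if arch in ("aarch64", "arm64"):
        arch = "aarch64"
    elif arch in ("x86_64", "amd64"):
        arch = "x86_64"
    return python_tag, arch


if __name__ == "__main__":
    tag, arch = wheel_tags()
    print(f"Look for a wheel whose filename contains '{tag}' and '{arch}'")
```

On the setup described in this post (Python 3.8 on an RK3588 board), this would point at the `cp38` / `aarch64` packages.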

Key functions:

Import the dependency:

```python
from rknn.api import RKNN
```

RKNN initialization:

```python
rknn = RKNN()

# Load the RKNN model
model_path = "xxxx/yyyy/zzz.rknn"
rknn.load_rknn(model_path)

# Initialize the runtime; an NPU target can optionally be specified
target = None     # e.g. 'rk3588'
device_id = None  # only used when a target is given
if target is None:
    ret = rknn.init_runtime()
else:
    ret = rknn.init_runtime(target=target, device_id=device_id)
    # if device_id == 0:
    #     ret = rknn.init_runtime(target=target, core_mask=rknn.NPU_CORE_0)
    # elif device_id == 1:
    #     ret = rknn.init_runtime(target=target, core_mask=rknn.NPU_CORE_1)
    # elif device_id == 2:
    #     ret = rknn.init_runtime(target=target, core_mask=rknn.NPU_CORE_2)
    # else:
    #     ret = rknn.init_runtime(target=target, core_mask=rknn.NPU_CORE_0_1_2)
if ret != 0:
    print('Init runtime environment failed')
    exit(ret)
print('done')
```

RKNN inference:

```python
import cv2

# Read a local image as a stand-in for a live frame
img_path = "aaa/bbb.jpg"
img = cv2.imread(img_path)

# Letterbox-resize img to the model input size (omitted here)

# BGR to RGB
inputs = cv2.cvtColor(img, cv2.COLOR_BGR2RGB)

# The input images must be passed as a list or tuple
if not isinstance(inputs, (list, tuple)):
    inputs = [inputs]

# Run inference with the rknn model
result = rknn.inference(inputs=inputs)
```

Complete RK3588 model inference module:

```python
from rknn.api import RKNN
import logging
import os
import threading

import cv2
import numpy as np
import yaml

from coco_utils import COCO_test_helper

logger = logging.getLogger(__name__)


def calculate_iou(boxA, boxB):
    # Corners of the intersection rectangle
    xA = max(boxA[0], boxB[0])
    yA = max(boxA[1], boxB[1])
    xB = min(boxA[2], boxB[2])
    yB = min(boxA[3], boxB[3])
    # Intersection area (inclusive pixel coordinates, hence the +1)
    intersection_area = max(0, xB - xA + 1) * max(0, yB - yA + 1)
    # Area of each box
    boxAArea = (boxA[2] - boxA[0] + 1) * (boxA[3] - boxA[1] + 1)
    boxBArea = (boxB[2] - boxB[0] + 1) * (boxB[3] - boxB[1] + 1)
    # IoU
    iou = intersection_area / float(boxAArea + boxBArea - intersection_area)
    return iou


class Yolov5RK3588():
    def __init__(self, model_path, device_id=None, target=None):
        # Created first so close()/__del__ stay safe if initialization fails part-way
        self._modelLock = threading.Lock()
        self._is_closed = True
        self.model = None

        rknn = RKNN()
        # Direct Load RKNN Model
        rknn.load_rknn(model_path)
        print('--> Init runtime environment')
        if target is None:
            ret = rknn.init_runtime()
        else:
            ret = rknn.init_runtime(target=target, device_id=device_id)
            # if device_id == 0:
            #     ret = rknn.init_runtime(target=target, core_mask=rknn.NPU_CORE_0)
            # elif device_id == 1:
            #     ret = rknn.init_runtime(target=target, core_mask=rknn.NPU_CORE_1)
            # elif device_id == 2:
            #     ret = rknn.init_runtime(target=target, core_mask=rknn.NPU_CORE_2)
            # else:
            #     ret = rknn.init_runtime(target=target, core_mask=rknn.NPU_CORE_0_1_2)
        if ret != 0:
            print('Init runtime environment failed')
            exit(ret)
        print('done')
        self.model = rknn
        self._is_closed = False
        self.co_helper = COCO_test_helper(enable_letter_box=True)

        # Load parameters
        with open("model_rknn.yaml") as f:
            self.cfg_yolov5 = yaml.load(f, Loader=yaml.FullLoader)
        # Assumption: the thresholds live in the same YAML file. The key names and
        # fallback values are illustrative; the original code never assigns them.
        self.conf_thres = self.cfg_yolov5.get('conf_thres', 0.25)
        self.iou_thres = self.cfg_yolov5.get('iou_thres', 0.45)
        # Checks
        assert 0 <= self.conf_thres <= 1, f'Invalid Confidence threshold {self.conf_thres}, valid values are between 0.0 and 1.0'
        assert 0 <= self.iou_thres <= 1, f'Invalid IoU {self.iou_thres}, valid values are between 0.0 and 1.0'
        self.img_size = self.cfg_yolov5['img_size']  # [640, 384]
        anchors = self.cfg_yolov5["anchors"]
        self.anchors = np.array(anchors).reshape(3, -1, 2).tolist()

    def __call__(self, inputs):
        with self._modelLock:
            if self.model is None:
                print("ERROR: rknn has been released")
                return []
            if not isinstance(inputs, (list, tuple)):
                inputs = [inputs]
            result = self.model.inference(inputs=inputs)
            return result

    def close(self):
        """Explicitly release NPU/GPU resources."""
        if getattr(self, '_is_closed', True):
            return
        with self._modelLock:  # keep the release thread-safe
            if self.model is not None and hasattr(self.model, 'release'):
                self.model.release()
            self.model = None
            self._is_closed = True
            logger.info("RKNN model resources released.")

    def __del__(self):
        """Destructor (fallback cleanup)."""
        if not getattr(self, '_is_closed', True):
            logger.info("Resource cleanup via __del__. Call close() explicitly instead!")
            self.close()

    def work(self, cv2_image):
        # Preprocess
        img = self.preprocess(cv2_image)
        # Inference (goes through __call__, which takes the lock)
        result = self(img)
        if not result:
            return []
        # Postprocess
        boxes, classes, scores = self.postprocess(result, self.anchors)
        # Map the boxes back onto the original image
        pred_result = []
        if boxes is not None:
            boxes = self.co_helper.get_real_box(boxes)
            for nbox, nclasses, nscore in zip(boxes.tolist(), classes.tolist(), scores.tolist()):
                pred_result.append([int(nbox[0]), int(nbox[1]), int(nbox[2]), int(nbox[3]), nscore, int(nclasses)])
        return pred_result

    # Image preprocessing
    def preprocess(self, im0):
        # Due to rga init with (0,0,0), we using pad_color (0,0,0) instead of (114, 114, 114)
        pad_color = (0, 0, 0)
        im0_copy = im0.copy()
        img = self.co_helper.letter_box(im=im0_copy, new_shape=(self.img_size[1], self.img_size[0]), pad_color=pad_color)
        img = cv2.cvtColor(img, cv2.COLOR_BGR2RGB)
        del im0_copy
        return img

    def postprocess(self, input_data, anchors):
        boxes, scores, classes_conf = [], [], []
        # 1*255*h*w -> 3*85*h*w
        input_data = [_in.reshape([len(anchors[0]), -1] + list(_in.shape[-2:])) for _in in input_data]
        for i in range(len(input_data)):
            boxes.append(self.box_process(input_data[i][:, :4, :, :], anchors[i]))
            scores.append(input_data[i][:, 4:5, :, :])
            classes_conf.append(input_data[i][:, 5:, :, :])

        def sp_flatten(_in):
            ch = _in.shape[1]
            _in = _in.transpose(0, 2, 3, 1)
            return _in.reshape(-1, ch)

        boxes = [sp_flatten(_v) for _v in boxes]
        classes_conf = [sp_flatten(_v) for _v in classes_conf]
        scores = [sp_flatten(_v) for _v in scores]

        boxes = np.concatenate(boxes)
        classes_conf = np.concatenate(classes_conf)
        scores = np.concatenate(scores)

        # filter according to threshold
        boxes, classes, scores = self.filter_boxes(boxes, scores, classes_conf)

        # nms
        nboxes, nclasses, nscores = [], [], []
        for c in set(classes):
            inds = np.where(classes == c)
            b = boxes[inds]
            c = classes[inds]
            s = scores[inds]
            keep = self.nms_boxes(b, s)
            if len(keep) != 0:
                nboxes.append(b[keep])
                nclasses.append(c[keep])
                nscores.append(s[keep])

        if not nclasses and not nscores:
            return None, None, None

        # Concatenate the per-class results
        boxes = np.concatenate(nboxes)
        classes = np.concatenate(nclasses)
        scores = np.concatenate(nscores)
        return boxes, classes, scores

    def filter_boxes(self, boxes, box_confidences, box_class_probs):
        """Filter boxes with object threshold.
        """
        box_confidences = box_confidences.reshape(-1)
        class_max_score = np.max(box_class_probs, axis=-1)
        classes = np.argmax(box_class_probs, axis=-1)

        _class_pos = np.where(class_max_score * box_confidences >= self.conf_thres)
        scores = (class_max_score * box_confidences)[_class_pos]

        boxes = boxes[_class_pos]
        classes = classes[_class_pos]
        return boxes, classes, scores

    def nms_boxes(self, boxes, scores):
        """Suppress non-maximal boxes.
        # Returns
            keep: ndarray, index of effective boxes.
        """
        x = boxes[:, 0]
        y = boxes[:, 1]
        w = boxes[:, 2] - boxes[:, 0]
        h = boxes[:, 3] - boxes[:, 1]

        areas = w * h
        order = scores.argsort()[::-1]

        keep = []
        while order.size > 0:
            i = order[0]
            keep.append(i)

            xx1 = np.maximum(x[i], x[order[1:]])
            yy1 = np.maximum(y[i], y[order[1:]])
            xx2 = np.minimum(x[i] + w[i], x[order[1:]] + w[order[1:]])
            yy2 = np.minimum(y[i] + h[i], y[order[1:]] + h[order[1:]])

            w1 = np.maximum(0.0, xx2 - xx1 + 0.00001)
            h1 = np.maximum(0.0, yy2 - yy1 + 0.00001)
            inter = w1 * h1

            ovr = inter / (areas[i] + areas[order[1:]] - inter)
            inds = np.where(ovr <= self.iou_thres)[0]
            order = order[inds + 1]
        keep = np.array(keep)
        return keep

    def box_process(self, position, anchors):
        grid_h, grid_w = position.shape[2:4]
        col, row = np.meshgrid(np.arange(0, grid_w), np.arange(0, grid_h))
        col = col.reshape(1, 1, grid_h, grid_w)
        row = row.reshape(1, 1, grid_h, grid_w)
        grid = np.concatenate((col, row), axis=1)
        stride = np.array([self.img_size[1] // grid_h, self.img_size[0] // grid_w]).reshape(1, 2, 1, 1)

        col = col.repeat(len(anchors), axis=0)
        row = row.repeat(len(anchors), axis=0)
        anchors = np.array(anchors)
        anchors = anchors.reshape(*anchors.shape, 1, 1)

        box_xy = position[:, :2, :, :] * 2 - 0.5
        box_wh = pow(position[:, 2:4, :, :] * 2, 2) * anchors

        box_xy += grid
        box_xy *= stride
        box = np.concatenate((box_xy, box_wh), axis=1)

        # Convert [c_x, c_y, w, h] to [x1, y1, x2, y2]
        xyxy = np.copy(box)
        xyxy[:, 0, :, :] = box[:, 0, :, :] - box[:, 2, :, :] / 2  # top left x
        xyxy[:, 1, :, :] = box[:, 1, :, :] - box[:, 3, :, :] / 2  # top left y
        xyxy[:, 2, :, :] = box[:, 0, :, :] + box[:, 2, :, :] / 2  # bottom right x
        xyxy[:, 3, :, :] = box[:, 1, :, :] + box[:, 3, :, :] / 2  # bottom right y

        return xyxy
```
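The module above reads `model_rknn.yaml`, which the post does not show. The sketch below is one guess at what the file might contain, written as the dict that the YAML loader would return. Only `img_size` and `anchors` are keys the code actually reads; `conf_thres` and `iou_thres` are assumed names. The anchor values are the stock YOLOv5 COCO anchors and the strides 8/16/32 are the usual YOLOv5 ones, so adjust all of them to your exported model. The helpers `group_anchors` and `grid_sizes` are illustrative only and assume the anchors are stored as one flat list.

```python
# Hypothetical contents of model_rknn.yaml, shown as the parsed dict.
cfg = {
    "img_size": [640, 384],   # [width, height], matching the comment in __init__
    "conf_thres": 0.25,       # assumed key name and value
    "iou_thres": 0.45,        # assumed key name and value
    "anchors": [10, 13, 16, 30, 33, 23,
                30, 61, 62, 45, 59, 119,
                116, 90, 156, 198, 373, 326],
}


def group_anchors(flat, levels=3):
    """Pure-Python equivalent of np.array(flat).reshape(levels, -1, 2).tolist()."""
    pairs = [[flat[i], flat[i + 1]] for i in range(0, len(flat), 2)]
    per_level = len(pairs) // levels
    return [pairs[i * per_level:(i + 1) * per_level] for i in range(levels)]


def grid_sizes(img_size, strides=(8, 16, 32)):
    """Feature-map (height, width) per output level for a [width, height] input."""
    w, h = img_size
    return [(h // s, w // s) for s in strides]


if __name__ == "__main__":
    for level, (anchors, grid) in enumerate(
            zip(group_anchors(cfg["anchors"]), grid_sizes(cfg["img_size"]))):
        print(f"level {level}: grid {grid}, anchors {anchors}")
```

Each output level pairs one group of three anchors with one feature-map grid, which is the layout `postprocess` and `box_process` expect.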

The dependent Python file coco_utils.py, which provides COCO_test_helper:

```python
from copy import copy
import os
import cv2
import numpy as np
import json


class Letter_Box_Info():
    def __init__(self, shape, new_shape, w_ratio, h_ratio, dw, dh, pad_color) -> None:
        self.origin_shape = shape
        self.new_shape = new_shape
        self.w_ratio = w_ratio
        self.h_ratio = h_ratio
        self.dw = dw
        self.dh = dh
        self.pad_color = pad_color


def coco_eval_with_json(anno_json, pred_json):
    from pycocotools.coco import COCO
    from pycocotools.cocoeval import COCOeval
    anno = COCO(anno_json)
    pred = anno.loadRes(pred_json)
    eval = COCOeval(anno, pred, 'bbox')
    # eval.params.useCats = 0
    # eval.params.maxDets = list((100, 300, 1000))
    # a = np.array(list(range(50, 96, 1)))/100
    # eval.params.iouThrs = a
    eval.evaluate()
    eval.accumulate()
    eval.summarize()
    map, map50 = eval.stats[:2]  # update results (mAP@0.5:0.95, mAP@0.5)

    print('map  --> ', map)
    print('map50--> ', map50)
    print('map75--> ', eval.stats[2])
    print('map85--> ', eval.stats[-2])
    print('map95--> ', eval.stats[-1])


class COCO_test_helper():
    def __init__(self, enable_letter_box = False) -> None:
        self.record_list = []
        self.enable_ltter_box = enable_letter_box
        if self.enable_ltter_box is True:
            self.letter_box_info_list = []
        else:
            self.letter_box_info_list = None

    def letter_box(self, im, new_shape, pad_color=(0,0,0), info_need=False):
        # Resize and pad image while meeting stride-multiple constraints
        shape = im.shape[:2]  # current shape [height, width]
        if isinstance(new_shape, int):
            new_shape = (new_shape, new_shape)

        # Scale ratio
        r = min(new_shape[0] / shape[0], new_shape[1] / shape[1])

        # Compute padding
        ratio = r  # width, height ratios
        new_unpad = int(round(shape[1] * r)), int(round(shape[0] * r))
        dw, dh = new_shape[1] - new_unpad[0], new_shape[0] - new_unpad[1]  # wh padding

        dw /= 2  # divide padding into 2 sides
        dh /= 2

        if shape[::-1] != new_unpad:  # resize
            im = cv2.resize(im, new_unpad, interpolation=cv2.INTER_LINEAR)
        top, bottom = int(round(dh - 0.1)), int(round(dh + 0.1))
        left, right = int(round(dw - 0.1)), int(round(dw + 0.1))
        im = cv2.copyMakeBorder(im, top, bottom, left, right, cv2.BORDER_CONSTANT, value=pad_color)  # add border

        if self.enable_ltter_box is True:
            self.letter_box_info_list.append(Letter_Box_Info(shape, new_shape, ratio, ratio, dw, dh, pad_color))
        if info_need is True:
            return im, ratio, (dw, dh)
        else:
            return im

    def direct_resize(self, im, new_shape, info_need=False):
        shape = im.shape[:2]
        h_ratio = new_shape[0] / shape[0]
        w_ratio = new_shape[1] / shape[1]
        if self.enable_ltter_box is True:
            self.letter_box_info_list.append(Letter_Box_Info(shape, new_shape, w_ratio, h_ratio, 0, 0, (0,0,0)))
        im = cv2.resize(im, (new_shape[1], new_shape[0]))
        return im

    def get_real_box(self, box, in_format='xyxy'):
        bbox = copy(box)
        if self.enable_ltter_box == True:
            # unletter_box result
            if in_format=='xyxy':
                bbox[:,0] -= self.letter_box_info_list[-1].dw
                bbox[:,0] /= self.letter_box_info_list[-1].w_ratio
                bbox[:,0] = np.clip(bbox[:,0], 0, self.letter_box_info_list[-1].origin_shape[1])

                bbox[:,1] -= self.letter_box_info_list[-1].dh
                bbox[:,1] /= self.letter_box_info_list[-1].h_ratio
                bbox[:,1] = np.clip(bbox[:,1], 0, self.letter_box_info_list[-1].origin_shape[0])

                bbox[:,2] -= self.letter_box_info_list[-1].dw
                bbox[:,2] /= self.letter_box_info_list[-1].w_ratio
                bbox[:,2] = np.clip(bbox[:,2], 0, self.letter_box_info_list[-1].origin_shape[1])

                bbox[:,3] -= self.letter_box_info_list[-1].dh
                bbox[:,3] /= self.letter_box_info_list[-1].h_ratio
                bbox[:,3] = np.clip(bbox[:,3], 0, self.letter_box_info_list[-1].origin_shape[0])
        return bbox

    def get_real_seg(self, seg):
        #! fix side effect
        dh = int(self.letter_box_info_list[-1].dh)
        dw = int(self.letter_box_info_list[-1].dw)
        origin_shape = self.letter_box_info_list[-1].origin_shape
        new_shape = self.letter_box_info_list[-1].new_shape
        if (dh == 0) and (dw == 0) and origin_shape == new_shape:
            return seg
        elif dh == 0 and dw != 0:
            seg = seg[:, :, dw:-dw]  # a[0:-0] = []
        elif dw == 0 and dh != 0:
            seg = seg[:, dh:-dh, :]
        seg = np.where(seg, 1, 0).astype(np.uint8).transpose(1,2,0)
        seg = cv2.resize(seg, (origin_shape[1], origin_shape[0]), interpolation=cv2.INTER_LINEAR)
        if len(seg.shape) < 3:
            return seg[None,:,:]
        else:
            return seg.transpose(2,0,1)

    def add_single_record(self, image_id, category_id, bbox, score, in_format='xyxy', pred_masks = None):
        if self.enable_ltter_box == True:
            # unletter_box result
            if in_format=='xyxy':
                bbox[0] -= self.letter_box_info_list[-1].dw
                bbox[0] /= self.letter_box_info_list[-1].w_ratio

                bbox[1] -= self.letter_box_info_list[-1].dh
                bbox[1] /= self.letter_box_info_list[-1].h_ratio

                bbox[2] -= self.letter_box_info_list[-1].dw
                bbox[2] /= self.letter_box_info_list[-1].w_ratio

                bbox[3] -= self.letter_box_info_list[-1].dh
                bbox[3] /= self.letter_box_info_list[-1].h_ratio
                # bbox = [value/self.letter_box_info_list[-1].ratio for value in bbox]

        if in_format=='xyxy':
            # change xyxy to xywh
            bbox[2] = bbox[2] - bbox[0]
            bbox[3] = bbox[3] - bbox[1]
        else:
            assert False, "now only support xyxy format, please add code to support others format"

        def single_encode(x):
            from pycocotools.mask import encode
            rle = encode(np.asarray(x[:, :, None], order="F", dtype="uint8"))[0]
            rle["counts"] = rle["counts"].decode("utf-8")
            return rle

        if pred_masks is None:
            self.record_list.append({"image_id": image_id,
                                     "category_id": category_id,
                                     "bbox": [round(x, 3) for x in bbox],
                                     'score': round(score, 5),
                                     })
        else:
            rles = single_encode(pred_masks)
            self.record_list.append({"image_id": image_id,
                                     "category_id": category_id,
                                     "bbox": [round(x, 3) for x in bbox],
                                     'score': round(score, 5),
                                     'segmentation': rles,
                                     })

    def export_to_json(self, path):
        with open(path, 'w') as f:
            json.dump(self.record_list, f)
```

RK3588 model conversion:

See the official GitHub project:

https://github.com/airockchip/rknn_model_zoo/tree/main/examples

Pick the example for your network model (yolov5)

and run its convert.py to perform the conversion.

The usual conversion path is pt -> onnx -> rknn.
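The onnx -> rknn step can be sketched with the rknn-toolkit2 Python API as below. This is a minimal illustration, not the official convert.py. The function name `convert_onnx_to_rknn`, its parameters, and the choice to quantize only when a calibration list is supplied are all assumptions made here. The mean/std values assume the model expects inputs scaled to 0..1.

```python
def convert_onnx_to_rknn(onnx_path, rknn_path, dataset_txt=None, target_platform="rk3588"):
    """Convert an ONNX model to an RKNN model and return the output path."""
    # Imported lazily so this file can be loaded on machines without rknn-toolkit2
    from rknn.api import RKNN

    rknn = RKNN(verbose=False)
    try:
        # Fold input normalization into the model: pixel / 255
        rknn.config(mean_values=[[0, 0, 0]], std_values=[[255, 255, 255]],
                    target_platform=target_platform)
        if rknn.load_onnx(model=onnx_path) != 0:
            raise RuntimeError("load_onnx failed")
        # Quantize only when a calibration image list is provided (assumed policy)
        do_quant = dataset_txt is not None
        if rknn.build(do_quantization=do_quant, dataset=dataset_txt) != 0:
            raise RuntimeError("build failed")
        if rknn.export_rknn(rknn_path) != 0:
            raise RuntimeError("export_rknn failed")
    finally:
        rknn.release()
    return rknn_path
```

A call such as `convert_onnx_to_rknn("yolov5s.onnx", "yolov5s.rknn")` (placeholder file names) would run on the machine where rknn-toolkit2 is installed; the resulting .rknn file is what `load_rknn` consumes on the board.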

In relatively recent versions of yolov5, export.py has a --rknpu argument:

For example:

python

# YOLOv5 🚀 by Ultralytics, GPL-3.0 license

"""

Export a YOLOv5 PyTorch model to other formats. TensorFlow exports authored by https://github.com/zldrobit

Format | `export.py --include` | Model

--- | --- | ---

PyTorch | - | yolov5s.pt

TorchScript | `torchscript` | yolov5s.torchscript

ONNX | `onnx` | yolov5s.onnx

OpenVINO | `openvino` | yolov5s_openvino_model/

TensorRT | `engine` | yolov5s.engine

CoreML | `coreml` | yolov5s.mlmodel

TensorFlow SavedModel | `saved_model` | yolov5s_saved_model/

TensorFlow GraphDef | `pb` | yolov5s.pb

TensorFlow Lite | `tflite` | yolov5s.tflite

TensorFlow Edge TPU | `edgetpu` | yolov5s_edgetpu.tflite

TensorFlow.js | `tfjs` | yolov5s_web_model/

PaddlePaddle | `paddle` | yolov5s_paddle_model/

Requirements:

$ pip install -r requirements.txt coremltools onnx onnx-simplifier onnxruntime openvino-dev tensorflow-cpu # CPU

$ pip install -r requirements.txt coremltools onnx onnx-simplifier onnxruntime-gpu openvino-dev tensorflow # GPU

Usage:

$ python export.py --weights yolov5s.pt --include torchscript onnx openvino engine coreml tflite ...

Inference:

$ python detect.py --weights yolov5s.pt # PyTorch

yolov5s.torchscript # TorchScript

yolov5s.onnx # ONNX Runtime or OpenCV DNN with --dnn

yolov5s_openvino_model # OpenVINO

yolov5s.engine # TensorRT

yolov5s.mlmodel # CoreML (macOS-only)

yolov5s_saved_model # TensorFlow SavedModel

yolov5s.pb # TensorFlow GraphDef

yolov5s.tflite # TensorFlow Lite

yolov5s_edgetpu.tflite # TensorFlow Edge TPU

yolov5s_paddle_model # PaddlePaddle

TensorFlow.js:

$ cd .. && git clone https://github.com/zldrobit/tfjs-yolov5-example.git && cd tfjs-yolov5-example

$ npm install

$ ln -s ../../yolov5/yolov5s_web_model public/yolov5s_web_model

$ npm start

"""

import argparse

import contextlib

import json

import os

import platform

import re

import subprocess

import sys

import time

import warnings

from pathlib import Path

# activate rknn hack

if '--rknpu' in sys.argv:

os.environ['RKNN_model_hack'] = "1"

import pandas as pd

import torch

from torch.utils.mobile_optimizer import optimize_for_mobile

FILE = Path(__file__).resolve()

ROOT = FILE.parents[0] # YOLOv5 root directory

if str(ROOT) not in sys.path:

sys.path.append(str(ROOT)) # add ROOT to PATH

if platform.system() != 'Windows':

ROOT = Path(os.path.relpath(ROOT, Path.cwd())) # relative

from models.experimental import attempt_load

from models.yolo import ClassificationModel, Detect, DetectionModel, SegmentationModel, Segment

from utils.dataloaders import LoadImages

from utils.general import (LOGGER, Profile, check_dataset, check_img_size, check_requirements, check_version,

check_yaml, colorstr, file_size, get_default_args, print_args, url2file, yaml_save)

from utils.torch_utils import select_device, smart_inference_mode

MACOS = platform.system() == 'Darwin' # macOS environment

def export_formats():

# YOLOv5 export formats

x = [

['PyTorch', '-', '.pt', True, True],

['TorchScript', 'torchscript', '.torchscript', True, True],

['ONNX', 'onnx', '.onnx', True, True],

['OpenVINO', 'openvino', '_openvino_model', True, False],

['TensorRT', 'engine', '.engine', False, True],

['CoreML', 'coreml', '.mlmodel', True, False],

['TensorFlow SavedModel', 'saved_model', '_saved_model', True, True],

['TensorFlow GraphDef', 'pb', '.pb', True, True],

['TensorFlow Lite', 'tflite', '.tflite', True, False],

['TensorFlow Edge TPU', 'edgetpu', '_edgetpu.tflite', False, False],

['TensorFlow.js', 'tfjs', '_web_model', False, False],

['PaddlePaddle', 'paddle', '_paddle_model', True, True],]

return pd.DataFrame(x, columns=['Format', 'Argument', 'Suffix', 'CPU', 'GPU'])

def try_export(inner_func):

# YOLOv5 export decorator, i.e. @try_export

inner_args = get_default_args(inner_func)

def outer_func(*args, **kwargs):

prefix = inner_args['prefix']

try:

with Profile() as dt:

f, model = inner_func(*args, **kwargs)

LOGGER.info(f'{prefix} export success ✅ {dt.t:.1f}s, saved as {f} ({file_size(f):.1f} MB)')

return f, model

except Exception as e:

LOGGER.info(f'{prefix} export failure ❌ {dt.t:.1f}s: {e}')

return None, None

return outer_func

@try_export

def export_torchscript(model, im, file, optimize, prefix=colorstr('TorchScript:')):

# YOLOv5 TorchScript model export

LOGGER.info(f'\n{prefix} starting export with torch {torch.__version__}...')

f = file.with_suffix('.torchscript')

ts = torch.jit.trace(model, im, strict=False)

d = {"shape": im.shape, "stride": int(max(model.stride)), "names": model.names}

extra_files = {'config.txt': json.dumps(d)} # torch._C.ExtraFilesMap()

if optimize: # https://pytorch.org/tutorials/recipes/mobile_interpreter.html

optimize_for_mobile(ts)._save_for_lite_interpreter(str(f), _extra_files=extra_files)

else:

ts.save(str(f), _extra_files=extra_files)

return f, None

@try_export

def export_onnx(model, im, file, opset, dynamic, simplify, prefix=colorstr('ONNX:')):

# YOLOv5 ONNX export

check_requirements('onnx')

import onnx

LOGGER.info(f'\n{prefix} starting export with onnx {onnx.__version__}...')

f = file.with_suffix('.onnx')

output_names = ['output0', 'output1'] if isinstance(model, SegmentationModel) else ['output0']

if dynamic:

dynamic = {'images': {0: 'batch', 2: 'height', 3: 'width'}} # shape(1,3,640,640)

if isinstance(model, SegmentationModel):

dynamic['output0'] = {0: 'batch', 1: 'anchors'} # shape(1,25200,85)

dynamic['output1'] = {0: 'batch', 2: 'mask_height', 3: 'mask_width'} # shape(1,32,160,160)

elif isinstance(model, DetectionModel):

dynamic['output0'] = {0: 'batch', 1: 'anchors'} # shape(1,25200,85)

torch.onnx.export(

model.cpu() if dynamic else model, # --dynamic only compatible with cpu

im.cpu() if dynamic else im,

f,

verbose=False,

opset_version=opset,

do_constant_folding=True,

input_names=['images'],

output_names=output_names,

dynamic_axes=dynamic or None)

# Checks

model_onnx = onnx.load(f) # load onnx model

onnx.checker.check_model(model_onnx) # check onnx model

# Metadata

d = {'stride': int(max(model.stride)), 'names': model.names}

for k, v in d.items():

meta = model_onnx.metadata_props.add()

meta.key, meta.value = k, str(v)

onnx.save(model_onnx, f)

# Simplify

if simplify:

try:

cuda = torch.cuda.is_available()

check_requirements(('onnxruntime-gpu' if cuda else 'onnxruntime', 'onnx-simplifier>=0.4.1'))

import onnxsim

LOGGER.info(f'{prefix} simplifying with onnx-simplifier {onnxsim.__version__}...')

model_onnx, check = onnxsim.simplify(model_onnx)

assert check, 'assert check failed'

onnx.save(model_onnx, f)

except Exception as e:

LOGGER.info(f'{prefix} simplifier failure: {e}')

return f, model_onnx

@try_export

def export_openvino(file, metadata, half, prefix=colorstr('OpenVINO:')):

# YOLOv5 OpenVINO export

check_requirements('openvino-dev') # requires openvino-dev: https://pypi.org/project/openvino-dev/

import openvino.inference_engine as ie

LOGGER.info(f'\n{prefix} starting export with openvino {ie.__version__}...')

f = str(file).replace('.pt', f'_openvino_model{os.sep}')

cmd = f"mo --input_model {file.with_suffix('.onnx')} --output_dir {f} --data_type {'FP16' if half else 'FP32'}"

subprocess.run(cmd.split(), check=True, env=os.environ) # export

yaml_save(Path(f) / file.with_suffix('.yaml').name, metadata) # add metadata.yaml

return f, None

@try_export

def export_paddle(model, im, file, metadata, prefix=colorstr('PaddlePaddle:')):

# YOLOv5 Paddle export

check_requirements(('paddlepaddle', 'x2paddle'))

import x2paddle

from x2paddle.convert import pytorch2paddle

LOGGER.info(f'\n{prefix} starting export with X2Paddle {x2paddle.__version__}...')

f = str(file).replace('.pt', f'_paddle_model{os.sep}')

pytorch2paddle(module=model, save_dir=f, jit_type='trace', input_examples=[im]) # export

yaml_save(Path(f) / file.with_suffix('.yaml').name, metadata) # add metadata.yaml

return f, None

@try_export

def export_coreml(model, im, file, int8, half, prefix=colorstr('CoreML:')):

# YOLOv5 CoreML export

check_requirements('coremltools')

import coremltools as ct

LOGGER.info(f'\n{prefix} starting export with coremltools {ct.__version__}...')

f = file.with_suffix('.mlmodel')

ts = torch.jit.trace(model, im, strict=False) # TorchScript model

ct_model = ct.convert(ts, inputs=[ct.ImageType('image', shape=im.shape, scale=1 / 255, bias=[0, 0, 0])])

bits, mode = (8, 'kmeans_lut') if int8 else (16, 'linear') if half else (32, None)

if bits < 32:

if MACOS: # quantization only supported on macOS

with warnings.catch_warnings():

warnings.filterwarnings("ignore", category=DeprecationWarning) # suppress numpy==1.20 float warning

ct_model = ct.models.neural_network.quantization_utils.quantize_weights(ct_model, bits, mode)

else:

print(f'{prefix} quantization only supported on macOS, skipping...')

ct_model.save(f)

return f, ct_model

@try_export

def export_engine(model, im, file, half, dynamic, simplify, workspace=4, verbose=False, prefix=colorstr('TensorRT:')):

# YOLOv5 TensorRT export https://developer.nvidia.com/tensorrt

assert im.device.type != 'cpu', 'export running on CPU but must be on GPU, i.e. `python export.py --device 0`'

try:

import tensorrt as trt

except Exception:

if platform.system() == 'Linux':

check_requirements('nvidia-tensorrt', cmds='-U --index-url https://pypi.ngc.nvidia.com')

import tensorrt as trt

if trt.__version__[0] == '7': # TensorRT 7 handling https://github.com/ultralytics/yolov5/issues/6012

grid = model.model[-1].anchor_grid

model.model[-1].anchor_grid = [a[..., :1, :1, :] for a in grid]

export_onnx(model, im, file, 12, dynamic, simplify) # opset 12

model.model[-1].anchor_grid = grid

else: # TensorRT >= 8

check_version(trt.__version__, '8.0.0', hard=True) # require tensorrt>=8.0.0

export_onnx(model, im, file, 12, dynamic, simplify) # opset 12

onnx = file.with_suffix('.onnx')

LOGGER.info(f'\n{prefix} starting export with TensorRT {trt.__version__}...')

assert onnx.exists(), f'failed to export ONNX file: {onnx}'

f = file.with_suffix('.engine') # TensorRT engine file

logger = trt.Logger(trt.Logger.INFO)

if verbose:

logger.min_severity = trt.Logger.Severity.VERBOSE

builder = trt.Builder(logger)

config = builder.create_builder_config()

config.max_workspace_size = workspace * 1 << 30

# config.set_memory_pool_limit(trt.MemoryPoolType.WORKSPACE, workspace << 30) # fix TRT 8.4 deprecation notice

flag = (1 << int(trt.NetworkDefinitionCreationFlag.EXPLICIT_BATCH))

network = builder.create_network(flag)

parser = trt.OnnxParser(network, logger)

if not parser.parse_from_file(str(onnx)):

raise RuntimeError(f'failed to load ONNX file: {onnx}')

inputs = [network.get_input(i) for i in range(network.num_inputs)]

outputs = [network.get_output(i) for i in range(network.num_outputs)]

for inp in inputs:

LOGGER.info(f'{prefix} input "{inp.name}" with shape{inp.shape} {inp.dtype}')

for out in outputs:

LOGGER.info(f'{prefix} output "{out.name}" with shape{out.shape} {out.dtype}')

if dynamic:

if im.shape[0] <= 1:

LOGGER.warning(f"{prefix} WARNING ⚠️ --dynamic model requires maximum --batch-size argument")

profile = builder.create_optimization_profile()

for inp in inputs:

profile.set_shape(inp.name, (1, *im.shape[1:]), (max(1, im.shape[0] // 2), *im.shape[1:]), im.shape)

config.add_optimization_profile(profile)

LOGGER.info(f'{prefix} building FP{16 if builder.platform_has_fast_fp16 and half else 32} engine as {f}')

if builder.platform_has_fast_fp16 and half:

config.set_flag(trt.BuilderFlag.FP16)

with builder.build_engine(network, config) as engine, open(f, 'wb') as t:

t.write(engine.serialize())

return f, None

@try_export

def export_saved_model(model,

im,

file,

dynamic,

tf_nms=False,

agnostic_nms=False,

topk_per_class=100,

topk_all=100,

iou_thres=0.45,

conf_thres=0.25,

keras=False,

prefix=colorstr('TensorFlow SavedModel:')):

# YOLOv5 TensorFlow SavedModel export

try:

import tensorflow as tf

except Exception:

check_requirements(f"tensorflow{'' if torch.cuda.is_available() else '-macos' if MACOS else '-cpu'}")

import tensorflow as tf

from tensorflow.python.framework.convert_to_constants import convert_variables_to_constants_v2

from models.tf import TFModel

LOGGER.info(f'\n{prefix} starting export with tensorflow {tf.__version__}...')

f = str(file).replace('.pt', '_saved_model')

batch_size, ch, *imgsz = list(im.shape) # BCHW

tf_model = TFModel(cfg=model.yaml, model=model, nc=model.nc, imgsz=imgsz)

im = tf.zeros((batch_size, *imgsz, ch)) # BHWC order for TensorFlow

_ = tf_model.predict(im, tf_nms, agnostic_nms, topk_per_class, topk_all, iou_thres, conf_thres)

inputs = tf.keras.Input(shape=(*imgsz, ch), batch_size=None if dynamic else batch_size)

outputs = tf_model.predict(inputs, tf_nms, agnostic_nms, topk_per_class, topk_all, iou_thres, conf_thres)

keras_model = tf.keras.Model(inputs=inputs, outputs=outputs)

keras_model.trainable = False

keras_model.summary()

if keras:

keras_model.save(f, save_format='tf')

else:

spec = tf.TensorSpec(keras_model.inputs[0].shape, keras_model.inputs[0].dtype)

m = tf.function(lambda x: keras_model(x)) # full model

m = m.get_concrete_function(spec)

frozen_func = convert_variables_to_constants_v2(m)

tfm = tf.Module()

tfm.__call__ = tf.function(lambda x: frozen_func(x)[:4] if tf_nms else frozen_func(x), [spec])

tfm.__call__(im)

tf.saved_model.save(tfm,

f,

options=tf.saved_model.SaveOptions(experimental_custom_gradients=False) if check_version(

tf.__version__, '2.6') else tf.saved_model.SaveOptions())

return f, keras_model

@try_export

def export_pb(keras_model, file, prefix=colorstr('TensorFlow GraphDef:')):

# YOLOv5 TensorFlow GraphDef *.pb export https://github.com/leimao/Frozen_Graph_TensorFlow

import tensorflow as tf

from tensorflow.python.framework.convert_to_constants import convert_variables_to_constants_v2

LOGGER.info(f'\n{prefix} starting export with tensorflow {tf.__version__}...')

f = file.with_suffix('.pb')

m = tf.function(lambda x: keras_model(x)) # full model

m = m.get_concrete_function(tf.TensorSpec(keras_model.inputs[0].shape, keras_model.inputs[0].dtype))

frozen_func = convert_variables_to_constants_v2(m)

frozen_func.graph.as_graph_def()

tf.io.write_graph(graph_or_graph_def=frozen_func.graph, logdir=str(f.parent), name=f.name, as_text=False)

return f, None

@try_export

def export_tflite(keras_model, im, file, int8, data, nms, agnostic_nms, prefix=colorstr('TensorFlow Lite:')):

# YOLOv5 TensorFlow Lite export

import tensorflow as tf

LOGGER.info(f'\n{prefix} starting export with tensorflow {tf.__version__}...')

batch_size, ch, *imgsz = list(im.shape) # BCHW

f = str(file).replace('.pt', '-fp16.tflite')

converter = tf.lite.TFLiteConverter.from_keras_model(keras_model)

converter.target_spec.supported_ops = [tf.lite.OpsSet.TFLITE_BUILTINS]

converter.target_spec.supported_types = [tf.float16]

converter.optimizations = [tf.lite.Optimize.DEFAULT]

if int8:

from models.tf import representative_dataset_gen

dataset = LoadImages(check_dataset(check_yaml(data))['train'], img_size=imgsz, auto=False)

converter.representative_dataset = lambda: representative_dataset_gen(dataset, ncalib=100)

converter.target_spec.supported_ops = [tf.lite.OpsSet.TFLITE_BUILTINS_INT8]

converter.target_spec.supported_types = []

converter.inference_input_type = tf.uint8 # or tf.int8

converter.inference_output_type = tf.uint8 # or tf.int8

converter.experimental_new_quantizer = True

f = str(file).replace('.pt', '-int8.tflite')

if nms or agnostic_nms:

converter.target_spec.supported_ops.append(tf.lite.OpsSet.SELECT_TF_OPS)

tflite_model = converter.convert()

open(f, "wb").write(tflite_model)

return f, None

@try_export
def export_edgetpu(file, prefix=colorstr('Edge TPU:')):
    # YOLOv5 Edge TPU export https://coral.ai/docs/edgetpu/models-intro/
    cmd = 'edgetpu_compiler --version'
    help_url = 'https://coral.ai/docs/edgetpu/compiler/'
    assert platform.system() == 'Linux', f'export only supported on Linux. See {help_url}'
    if subprocess.run(f'{cmd} >/dev/null', shell=True).returncode != 0:
        LOGGER.info(f'\n{prefix} export requires Edge TPU compiler. Attempting install from {help_url}')
        sudo = subprocess.run('sudo --version >/dev/null', shell=True).returncode == 0  # sudo installed on system
        for c in (
                'curl https://packages.cloud.google.com/apt/doc/apt-key.gpg | sudo apt-key add -',
                'echo "deb https://packages.cloud.google.com/apt coral-edgetpu-stable main" | sudo tee /etc/apt/sources.list.d/coral-edgetpu.list',
                'sudo apt-get update', 'sudo apt-get install edgetpu-compiler'):
            subprocess.run(c if sudo else c.replace('sudo ', ''), shell=True, check=True)
    ver = subprocess.run(cmd, shell=True, capture_output=True, check=True).stdout.decode().split()[-1]

    LOGGER.info(f'\n{prefix} starting export with Edge TPU compiler {ver}...')
    f = str(file).replace('.pt', '-int8_edgetpu.tflite')  # Edge TPU model
    f_tfl = str(file).replace('.pt', '-int8.tflite')  # TFLite model

    cmd = f"edgetpu_compiler -s -d -k 10 --out_dir {file.parent} {f_tfl}"
    subprocess.run(cmd.split(), check=True)
    return f, None

@try_export
def export_tfjs(file, prefix=colorstr('TensorFlow.js:')):
    # YOLOv5 TensorFlow.js export
    check_requirements('tensorflowjs')
    import tensorflowjs as tfjs

    LOGGER.info(f'\n{prefix} starting export with tensorflowjs {tfjs.__version__}...')
    f = str(file).replace('.pt', '_web_model')  # js dir
    f_pb = file.with_suffix('.pb')  # *.pb path
    f_json = f'{f}/model.json'  # *.json path

    cmd = f'tensorflowjs_converter --input_format=tf_frozen_model ' \
          f'--output_node_names=Identity,Identity_1,Identity_2,Identity_3 {f_pb} {f}'
    subprocess.run(cmd.split())

    json = Path(f_json).read_text()
    with open(f_json, 'w') as j:  # sort JSON Identity_* in ascending order
        subst = re.sub(
            r'{"outputs": {"Identity.?.?": {"name": "Identity.?.?"}, '
            r'"Identity.?.?": {"name": "Identity.?.?"}, '
            r'"Identity.?.?": {"name": "Identity.?.?"}, '
            r'"Identity.?.?": {"name": "Identity.?.?"}}}', r'{"outputs": {"Identity": {"name": "Identity"}, '
            r'"Identity_1": {"name": "Identity_1"}, '
            r'"Identity_2": {"name": "Identity_2"}, '
            r'"Identity_3": {"name": "Identity_3"}}}', json)
        j.write(subst)
    return f, None

def add_tflite_metadata(file, metadata, num_outputs):
    # Add metadata to *.tflite models per https://www.tensorflow.org/lite/models/convert/metadata
    with contextlib.suppress(ImportError):
        # check_requirements('tflite_support')
        from tflite_support import flatbuffers
        from tflite_support import metadata as _metadata
        from tflite_support import metadata_schema_py_generated as _metadata_fb

        tmp_file = Path('/tmp/meta.txt')
        with open(tmp_file, 'w') as meta_f:
            meta_f.write(str(metadata))

        model_meta = _metadata_fb.ModelMetadataT()
        label_file = _metadata_fb.AssociatedFileT()
        label_file.name = tmp_file.name
        model_meta.associatedFiles = [label_file]

        subgraph = _metadata_fb.SubGraphMetadataT()
        subgraph.inputTensorMetadata = [_metadata_fb.TensorMetadataT()]
        subgraph.outputTensorMetadata = [_metadata_fb.TensorMetadataT()] * num_outputs
        model_meta.subgraphMetadata = [subgraph]

        b = flatbuffers.Builder(0)
        b.Finish(model_meta.Pack(b), _metadata.MetadataPopulator.METADATA_FILE_IDENTIFIER)
        metadata_buf = b.Output()

        populator = _metadata.MetadataPopulator.with_model_file(file)
        populator.load_metadata_buffer(metadata_buf)
        populator.load_associated_files([str(tmp_file)])
        populator.populate()
        tmp_file.unlink()

@smart_inference_mode()
def run(
        data=ROOT / 'data/coco128.yaml',  # 'dataset.yaml path'
        weights=ROOT / 'yolov5s.pt',  # weights path
        imgsz=(640, 640),  # image (height, width)
        batch_size=1,  # batch size
        device='cpu',  # cuda device, i.e. 0 or 0,1,2,3 or cpu
        include=('torchscript', 'onnx'),  # include formats
        half=False,  # FP16 half-precision export
        inplace=False,  # set YOLOv5 Detect() inplace=True
        keras=False,  # use Keras
        optimize=False,  # TorchScript: optimize for mobile
        int8=False,  # CoreML/TF INT8 quantization
        dynamic=False,  # ONNX/TF/TensorRT: dynamic axes
        simplify=False,  # ONNX: simplify model
        opset=12,  # ONNX: opset version
        verbose=False,  # TensorRT: verbose log
        workspace=4,  # TensorRT: workspace size (GB)
        nms=False,  # TF: add NMS to model
        agnostic_nms=False,  # TF: add agnostic NMS to model
        topk_per_class=100,  # TF.js NMS: topk per class to keep
        topk_all=100,  # TF.js NMS: topk for all classes to keep
        iou_thres=0.45,  # TF.js NMS: IoU threshold
        conf_thres=0.25,  # TF.js NMS: confidence threshold
):

    t = time.time()
    include = [x.lower() for x in include]  # to lowercase
    fmts = tuple(export_formats()['Argument'][1:])  # --include arguments
    flags = [x in include for x in fmts]
    assert sum(flags) == len(include), f'ERROR: Invalid --include {include}, valid --include arguments are {fmts}'
    jit, onnx, xml, engine, coreml, saved_model, pb, tflite, edgetpu, tfjs, paddle = flags  # export booleans
    file = Path(url2file(weights) if str(weights).startswith(('http:/', 'https:/')) else weights)  # PyTorch weights

    # Load PyTorch model
    device = select_device(device)
    if half:
        assert device.type != 'cpu' or coreml, '--half only compatible with GPU export, i.e. use --device 0'
        assert not dynamic, '--half not compatible with --dynamic, i.e. use either --half or --dynamic but not both'
    model = attempt_load(weights, device=device, inplace=True, fuse=True)  # load FP32 model

    # Checks
    imgsz *= 2 if len(imgsz) == 1 else 1  # expand
    if optimize:
        assert device.type == 'cpu', '--optimize not compatible with cuda devices, i.e. use --device cpu'

    # Input
    gs = int(max(model.stride))  # grid size (max stride)
    imgsz = [check_img_size(x, gs) for x in imgsz]  # verify img_size are gs-multiples
    im = torch.zeros(batch_size, 3, *imgsz).to(device)  # image size(1,3,320,192) BCHW iDetection

    # Update model
    model.eval()
    for k, m in model.named_modules():
        if isinstance(m, Detect):
            m.inplace = inplace
            m.dynamic = dynamic
            m.export = True

    if os.getenv('RKNN_model_hack', '0') in ['1']:
        from models.common import Focus
        from models.common import Conv
        from models.common_rk_plug_in import surrogate_focus
        if isinstance(model.model[0], Focus):
            # For yolo v5 version
            surrogate_focous = surrogate_focus(int(model.model[0].conv.conv.weight.shape[1]/4),
                                               model.model[0].conv.conv.weight.shape[0],
                                               k=tuple(model.model[0].conv.conv.weight.shape[2:4]),
                                               s=model.model[0].conv.conv.stride,
                                               p=model.model[0].conv.conv.padding,
                                               g=model.model[0].conv.conv.groups,
                                               act=True)
            surrogate_focous.conv.conv.weight = model.model[0].conv.conv.weight
            surrogate_focous.conv.conv.bias = model.model[0].conv.conv.bias
            surrogate_focous.conv.act = model.model[0].conv.act
            temp_i = model.model[0].i
            temp_f = model.model[0].f

            model.model[0] = surrogate_focous
            model.model[0].i = temp_i
            model.model[0].f = temp_f
            model.model[0].eval()
        elif isinstance(model.model[0], Conv) and model.model[0].conv.kernel_size == (6, 6):
            # For yolo v6 version
            surrogate_focous = surrogate_focus(model.model[0].conv.weight.shape[1],
                                               model.model[0].conv.weight.shape[0],
                                               k=(3,3),  # 6/2, 6/2
                                               s=1,
                                               p=(1,1),  # 2/2, 2/2
                                               g=model.model[0].conv.groups,
                                               act=hasattr(model.model[0], 'act'))
            surrogate_focous.conv.conv.weight[:,:3,:,:] = model.model[0].conv.weight[:,:,::2,::2]
            surrogate_focous.conv.conv.weight[:,3:6,:,:] = model.model[0].conv.weight[:,:,1::2,::2]
            surrogate_focous.conv.conv.weight[:,6:9,:,:] = model.model[0].conv.weight[:,:,::2,1::2]
            surrogate_focous.conv.conv.weight[:,9:,:,:] = model.model[0].conv.weight[:,:,1::2,1::2]
            surrogate_focous.conv.conv.bias = model.model[0].conv.bias
            surrogate_focous.conv.act = model.model[0].act
            temp_i = model.model[0].i
            temp_f = model.model[0].f

            model.model[0] = surrogate_focous
            model.model[0].i = temp_i
            model.model[0].f = temp_f
            model.model[0].eval()

    if os.getenv('RKNN_model_hack', '0') in ['1']:
        if isinstance(model.model[-1], Detect):
            # save anchors
            print('---> save anchors for RKNN')
            RK_anchors = model.model[-1].stride.reshape(3,1).repeat(1,3).reshape(-1,1)* model.model[-1].anchors.reshape(9,2)
            with open('RK_anchors.txt', 'w') as anf:
                # anf.write(str(model.model[-1].na)+'\n')
                for _v in RK_anchors.numpy().flatten():
                    anf.write(str(_v)+'\n')
            RK_anchors = RK_anchors.tolist()
            print(RK_anchors)

        if isinstance(model.model[-1], Segment):
            print("export segment model for RKNPU")
            model.model[-1]._register_seg_seperate(True)
        else:
            print("export detect model for RKNPU")
            model.model[-1]._register_detect_seperate(True)

    for _ in range(2):
        y = model(im)  # dry runs
    if half and not coreml:
        im, model = im.half(), model.half()  # to FP16
    shape = tuple((y[0] if (isinstance(y, tuple) or (isinstance(y, list))) else y).shape)  # model output shape
    metadata = {'stride': int(max(model.stride)), 'names': model.names}  # model metadata
    LOGGER.info(f"\n{colorstr('PyTorch:')} starting from {file} with output shape {shape} ({file_size(file):.1f} MB)")

    # Exports
    f = [''] * len(fmts)  # exported filenames
    warnings.filterwarnings(action='ignore', category=torch.jit.TracerWarning)  # suppress TracerWarning
    if jit:  # TorchScript
        f[0], _ = export_torchscript(model, im, file, optimize)
    if engine:  # TensorRT required before ONNX
        f[1], _ = export_engine(model, im, file, half, dynamic, simplify, workspace, verbose)
    if onnx or xml:  # OpenVINO requires ONNX
        f[2], _ = export_onnx(model, im, file, opset, dynamic, simplify)
    if xml:  # OpenVINO
        f[3], _ = export_openvino(file, metadata, half)
    if coreml:  # CoreML
        f[4], _ = export_coreml(model, im, file, int8, half)
    if any((saved_model, pb, tflite, edgetpu, tfjs)):  # TensorFlow formats
        assert not tflite or not tfjs, 'TFLite and TF.js models must be exported separately, please pass only one type.'
        assert not isinstance(model, ClassificationModel), 'ClassificationModel export to TF formats not yet supported.'
        f[5], s_model = export_saved_model(model.cpu(),
                                           im,
                                           file,
                                           dynamic,
                                           tf_nms=nms or agnostic_nms or tfjs,
                                           agnostic_nms=agnostic_nms or tfjs,
                                           topk_per_class=topk_per_class,
                                           topk_all=topk_all,
                                           iou_thres=iou_thres,
                                           conf_thres=conf_thres,
                                           keras=keras)
        if pb or tfjs:  # pb prerequisite to tfjs
            f[6], _ = export_pb(s_model, file)
        if tflite or edgetpu:
            f[7], _ = export_tflite(s_model, im, file, int8 or edgetpu, data=data, nms=nms, agnostic_nms=agnostic_nms)
            if edgetpu:
                f[8], _ = export_edgetpu(file)
            add_tflite_metadata(f[8] or f[7], metadata, num_outputs=len(s_model.outputs))
        if tfjs:
            f[9], _ = export_tfjs(file)
    if paddle:  # PaddlePaddle
        f[10], _ = export_paddle(model, im, file, metadata)

    # Finish
    f = [str(x) for x in f if x]  # filter out '' and None
    if any(f):
        cls, det, seg = (isinstance(model, x) for x in (ClassificationModel, DetectionModel, SegmentationModel))  # type
        dir = Path('segment' if seg else 'classify' if cls else '')
        h = '--half' if half else ''  # --half FP16 inference arg
        s = "# WARNING ⚠️ ClassificationModel not yet supported for PyTorch Hub AutoShape inference" if cls else \
            "# WARNING ⚠️ SegmentationModel not yet supported for PyTorch Hub AutoShape inference" if seg else ''
        LOGGER.info(f'\nExport complete ({time.time() - t:.1f}s)'
                    f"\nResults saved to {colorstr('bold', file.parent.resolve())}"
                    f"\nDetect: python {dir / ('detect.py' if det else 'predict.py')} --weights {f[-1]} {h}"
                    f"\nValidate: python {dir / 'val.py'} --weights {f[-1]} {h}"
                    f"\nPyTorch Hub: model = torch.hub.load('ultralytics/yolov5', 'custom', '{f[-1]}') {s}"
                    f"\nVisualize: https://netron.app")
    return f  # return list of exported files/dirs

def parse_opt():
    parser = argparse.ArgumentParser()
    parser.add_argument('--data', type=str, default=ROOT / 'data/coco128.yaml', help='dataset.yaml path')
    parser.add_argument('--weights', nargs='+', type=str, default=ROOT / 'yolov5s.pt', help='model.pt path(s)')
    parser.add_argument('--imgsz', '--img', '--img-size', nargs='+', type=int, default=[640, 640], help='image (h, w)')
    parser.add_argument('--batch-size', type=int, default=1, help='batch size')
    parser.add_argument('--device', default='cpu', help='cuda device, i.e. 0 or 0,1,2,3 or cpu')
    parser.add_argument('--half', action='store_true', help='FP16 half-precision export')
    parser.add_argument('--inplace', action='store_true', help='set YOLOv5 Detect() inplace=True')
    parser.add_argument('--keras', action='store_true', help='TF: use Keras')
    parser.add_argument('--optimize', action='store_true', help='TorchScript: optimize for mobile')
    parser.add_argument('--int8', action='store_true', help='CoreML/TF INT8 quantization')
    parser.add_argument('--dynamic', action='store_true', help='ONNX/TF/TensorRT: dynamic axes')
    parser.add_argument('--simplify', action='store_true', help='ONNX: simplify model')
    parser.add_argument('--opset', type=int, default=12, help='ONNX: opset version')
    parser.add_argument('--verbose', action='store_true', help='TensorRT: verbose log')
    parser.add_argument('--workspace', type=int, default=4, help='TensorRT: workspace size (GB)')
    parser.add_argument('--nms', action='store_true', help='TF: add NMS to model')
    parser.add_argument('--agnostic-nms', action='store_true', help='TF: add agnostic NMS to model')
    parser.add_argument('--topk-per-class', type=int, default=100, help='TF.js NMS: topk per class to keep')
    parser.add_argument('--topk-all', type=int, default=100, help='TF.js NMS: topk for all classes to keep')
    parser.add_argument('--iou-thres', type=float, default=0.45, help='TF.js NMS: IoU threshold')
    parser.add_argument('--conf-thres', type=float, default=0.25, help='TF.js NMS: confidence threshold')
    parser.add_argument('--include',
                        nargs='+',
                        default=['onnx'],
                        help='torchscript, onnx, openvino, engine, coreml, saved_model, pb, tflite, edgetpu, tfjs')
    parser.add_argument('--rknpu', action='store_true', help='RKNN npu platform')
    opt = parser.parse_args()
    print_args(vars(opt))
    return opt

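def main(opt):
    for opt.weights in (opt.weights if isinstance(opt.weights, list) else [opt.weights]):
        run(**vars(opt))


if __name__ == "__main__":
    opt = parse_opt()
    del opt.rknpu  # run() has no rknpu parameter, so drop it before forwarding the options
    main(opt)

Then run the following command to convert the .pt model trained with YOLOv5 into an ONNX model: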

python

python export.py --rknpu --weight /YOLOv5_demo.pt --img 384 640

The two values after --img are the input height and width, in that order. Set them to match your actual use case.
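The export script verifies that each --img value is a multiple of the model's largest stride (the `gs` variable in `run()`). The sketch below illustrates that kind of rounding. It assumes a maximum stride of 32, which is typical for YOLOv5 but should be read from your own model, and `round_up_to_stride` is a hypothetical helper name, not the function YOLOv5 itself uses.

```python
import math


def round_up_to_stride(size: int, stride: int = 32) -> int:
    """Round `size` up to the nearest multiple of `stride` (hypothetical helper)."""
    return max(math.ceil(size / stride) * stride, stride)


# Height and width as passed to --img in the command above.
height, width = 384, 640
print(round_up_to_stride(height), round_up_to_stride(width))  # 384 640, already multiples of 32
print(round_up_to_stride(400))  # 416, the next multiple of 32
```

Choosing sizes that are already stride multiples, such as 384 and 640, keeps the exported ONNX input shape identical to what you typed on the command line.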

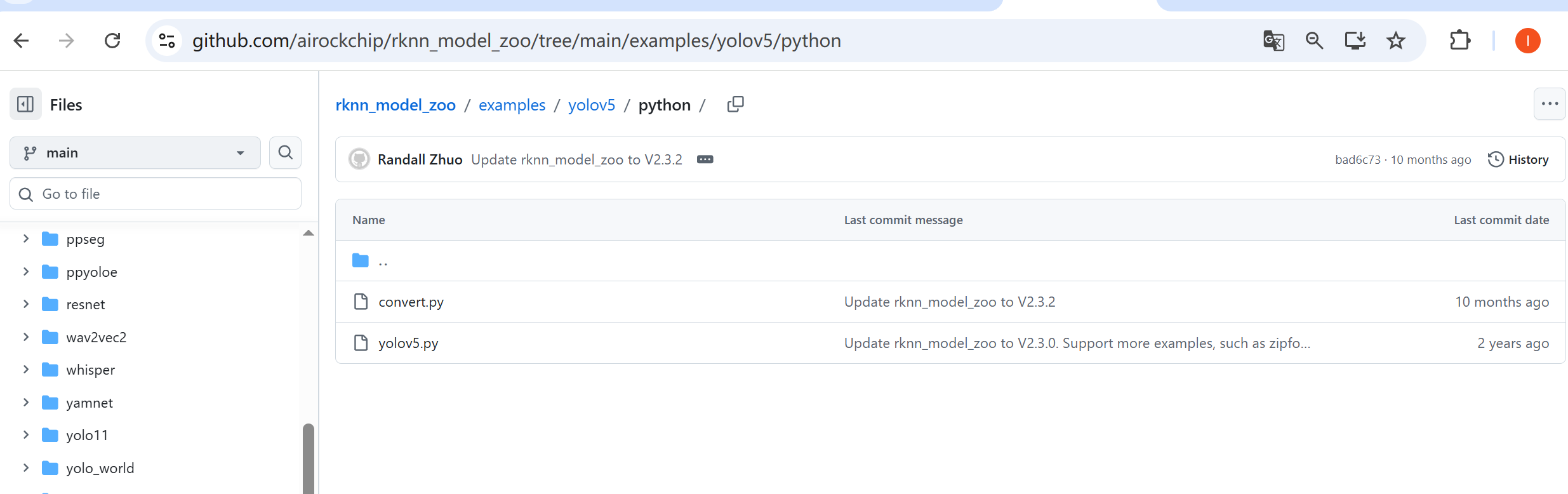

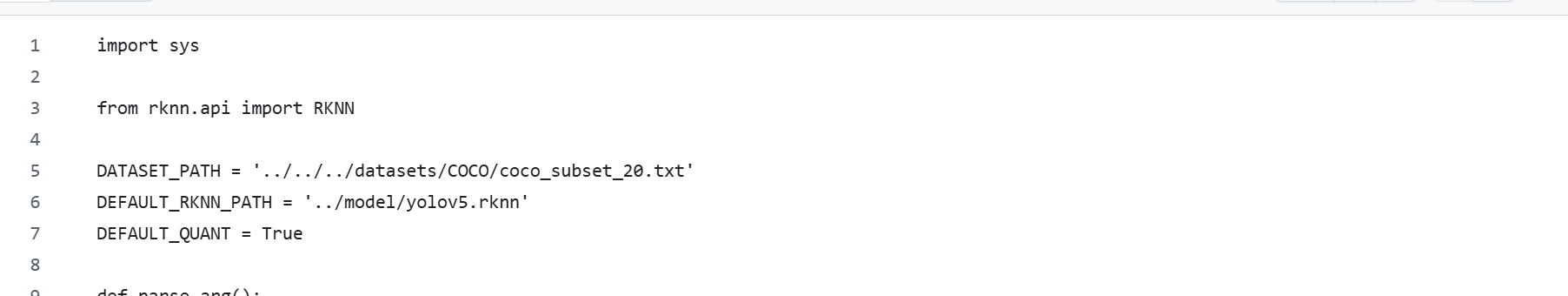

Next, go to the rknn_model_zoo/examples/yolov5/python/ subdirectory of the rknn_model_zoo project:

python

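vim convert.py

In convert.py, change the path where the RKNN model is saved and the path of the txt index file for the quantization dataset.

Quantization dataset index file (txt): upload the quantization images to the convert_rknn_demo/yolov5 directory, then write the relative path of every quantization image into the txt index file.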
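Writing the index file by hand is error-prone when there are many images, so it can be generated. The sketch below is one possible way to do it. It assumes the index lists one image per line and that paths are relative to the directory containing the txt file; `write_dataset_index` and the suffix list are hypothetical choices for illustration.

```python
import os
from pathlib import Path

# Assumed set of image extensions; adjust to your dataset.
IMAGE_SUFFIXES = {".jpg", ".jpeg", ".png", ".bmp"}


def write_dataset_index(image_dir, index_path):
    """Write one image path per line, relative to the index file's directory."""
    image_dir = Path(image_dir)
    index_path = Path(index_path)
    base = index_path.parent.resolve()
    images = sorted(
        p for p in image_dir.rglob("*")
        if p.is_file() and p.suffix.lower() in IMAGE_SUFFIXES
    )
    lines = [os.path.relpath(p.resolve(), base) for p in images]
    index_path.write_text("\n".join(lines) + ("\n" if lines else ""))
    return len(lines)


# Example call (hypothetical paths):
# write_dataset_index("convert_rknn_demo/yolov5/quant_images",
#                     "convert_rknn_demo/yolov5/dataset.txt")
```

The function returns the number of images written, which is a quick check that the directory was not empty.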

Then run the model conversion command:

python

python convert.py ./YOLOv5_demo.onnx rk3588
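For orientation, the following is a rough sketch of the kind of steps such a conversion script performs with the rknn_toolkit2 Python API (`config`, `load_onnx`, `build`, `export_rknn`). It is not the actual convert.py from rknn_model_zoo. The default output path, the dataset path, the normalization values, and the helper names `parse_convert_args` and `convert` are all assumptions made for illustration.

```python
def parse_convert_args(argv):
    """Split `convert.py <onnx_path> <platform>` style arguments (hypothetical helper)."""
    if len(argv) < 3:
        raise SystemExit("usage: python convert.py <onnx_model_path> <platform>")
    return argv[1], argv[2]


def convert(onnx_path, platform, rknn_path="yolov5.rknn", dataset="dataset.txt"):
    """Quantize an ONNX model and export it as .rknn (assumed defaults, see lead-in)."""
    # Imported lazily so parse_convert_args works without rknn_toolkit2 installed.
    from rknn.api import RKNN

    rknn = RKNN(verbose=False)
    try:
        # Assumed preprocessing: 0-255 RGB input scaled to the 0-1 range.
        rknn.config(mean_values=[[0, 0, 0]], std_values=[[255, 255, 255]],
                    target_platform=platform)
        if rknn.load_onnx(model=onnx_path) != 0:
            raise RuntimeError("load_onnx failed")
        # The dataset txt index drives INT8 quantization calibration.
        if rknn.build(do_quantization=True, dataset=dataset) != 0:
            raise RuntimeError("build failed")
        if rknn.export_rknn(rknn_path) != 0:
            raise RuntimeError("export_rknn failed")
    finally:
        rknn.release()
    return rknn_path


# Example call matching the command above:
# convert(*parse_convert_args(["convert.py", "./YOLOv5_demo.onnx", "rk3588"]))
```

The mean and std values must match the preprocessing used at inference time, so check them against the real convert.py before relying on this sketch.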