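I. LVS

1.1 LVS Overview

LVS is the benchmark for kernel-level Layer 4 load balancing on Linux. Its core strengths are very high performance, low latency and high stability, which make it suitable for large volumes of TCP/UDP traffic (for example database access or high-concurrency entry points). In production, DR mode is usually the first choice, paired with Keepalived for high availability, and combined with Nginx when needed to build a complete "Layer 4 + Layer 7" load-balancing architecture.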

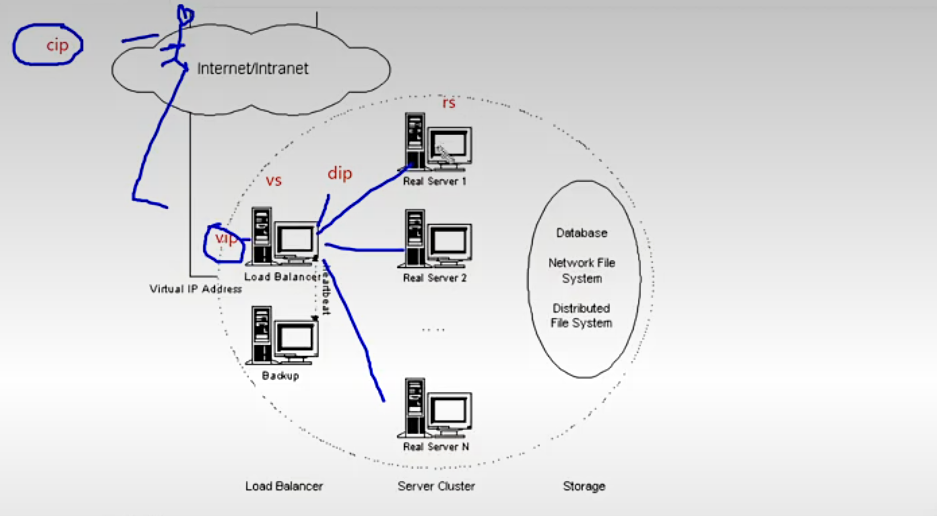

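1.2 LVS Concepts

VS: Virtual Server (the director/scheduler)

RS: Real Server (the host that actually runs the business service)

CIP: Client IP (the IP address of the client host)

VIP: Virtual Server IP, the VS's external IP (the address exposed to clients)

DIP: Director IP, the VS's internal IP (the address the director uses to reach the internal network)

RIP: Real Server IP (the IP address of the real server)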

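1.2 LVS cluster types

lvs-nat: rewrites the destination IP of the request packet (multi-target DNAT)

lvs-dr: re-encapsulates the frame with a new destination MAC address

lvs-tun: adds a new outer IP header around the original request IP packet

lvs-fullnat: rewrites both the source and the destination IP of the request packet (requires an out-of-tree kernel patch; not part of mainline Linux)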

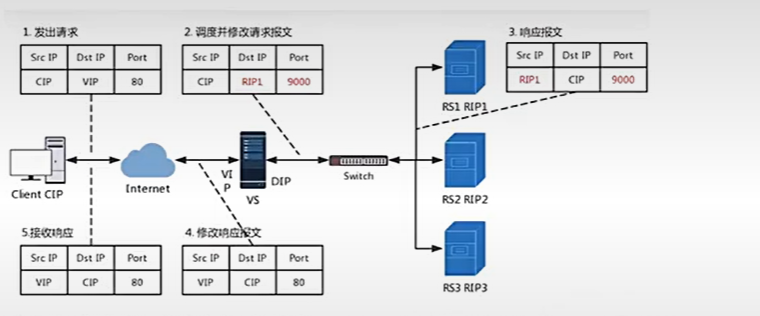

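1.2.1 NAT mode

lvs-nat:

It is essentially multi-target DNAT: the director forwards a request by rewriting its destination address and port to the RIP and PORT of the selected RS.

The RIP and DIP should be on the same IP network and should use private addresses; the RS gateway must point to the DIP.

Both request and response packets must pass through the director, so the director can easily become the bottleneck.

Port mapping is supported: the destination PORT of the request can be rewritten.

The VS must be a Linux system; the RS can run any OS.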

LVS NAT mode (Network Address Translation) is the simplest of the three classic modes to deploy, but its performance is comparatively limited. It forwards traffic by rewriting IP addresses.

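Core principle

Request path: the client accesses the VIP (virtual service IP). The director receives the request, rewrites the destination IP from the VIP to the RIP of the selected real server, and forwards the packet to that backend.

Response path: after the real server handles the request, the response has source IP = RIP and destination IP = client IP. The real server's default gateway must point to the director, so the response goes back to the director first. The director rewrites the source IP from the RIP back to the VIP and then sends the response to the client.

Key characteristic: traffic in both directions passes through the director, which is both the request entry point and the response exit point.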
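The two rewrites described above can be traced with a small bash sketch. It only manipulates strings to show how the (source, destination) pair changes at the director. No real packets are involved, the function names `nat_request` and `nat_reply` are hypothetical, and the addresses are only examples.

```bash
#!/usr/bin/env bash
# Hypothetical illustration of LVS-NAT address rewriting (strings only, no packets).
# A packet is written as "SRC_IP:PORT>DST_IP:PORT".

# Request path: the director rewrites the destination VIP:port to the chosen RIP:port (DNAT).
# The new port may differ from the original one (port mapping).
nat_request() {
  local pkt=$1 rip_port=$2
  local src=${pkt%%>*}          # keep everything before ">"
  echo "${src}>${rip_port}"
}

# Response path: the director rewrites the source RIP:port back to VIP:port.
nat_reply() {
  local pkt=$1 vip_port=$2
  local dst=${pkt#*>}           # keep everything after ">"
  echo "${vip_port}>${dst}"
}

req="172.25.254.99:42420>172.25.254.100:80"       # client -> VIP
echo "request forwarded: $(nat_request "$req" "192.168.0.10:80")"

resp="192.168.0.10:80>172.25.254.99:42420"        # RS1 -> client, routed via the director
echo "reply sent:        $(nat_reply "$resp" "172.25.254.100:80")"
```

Only the destination changes on the way in and only the source on the way out. This is why the RS must route its replies through the director.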

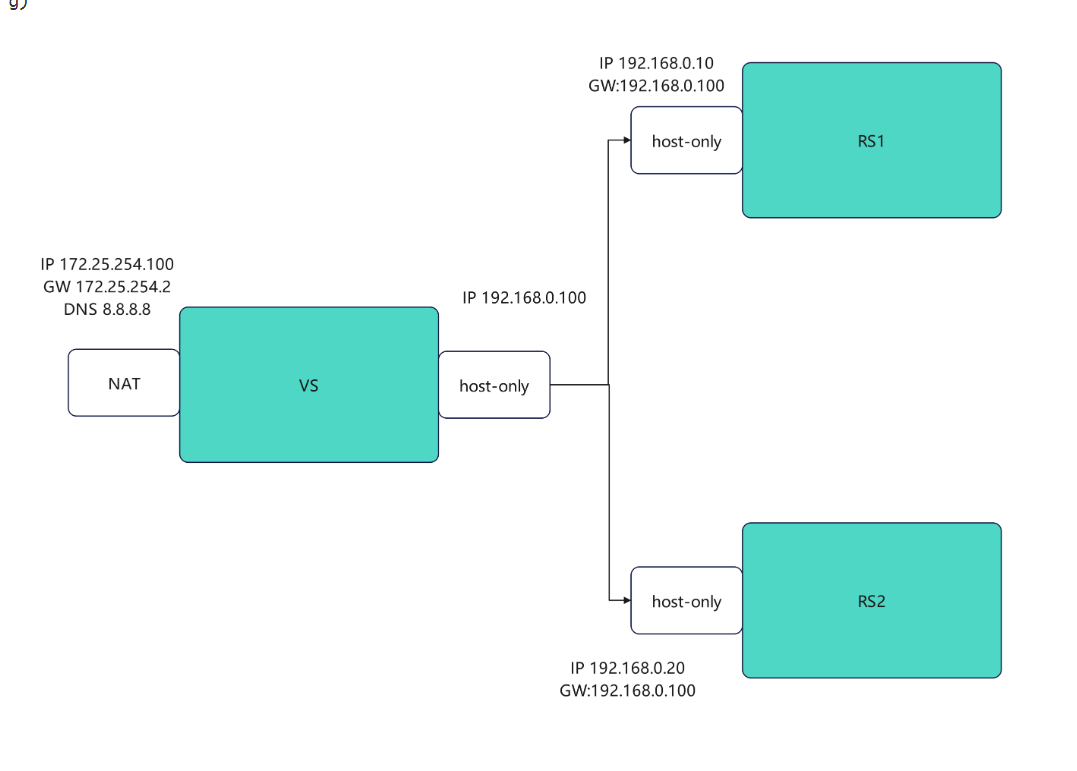

1.2.2 LVS lab (NAT mode)

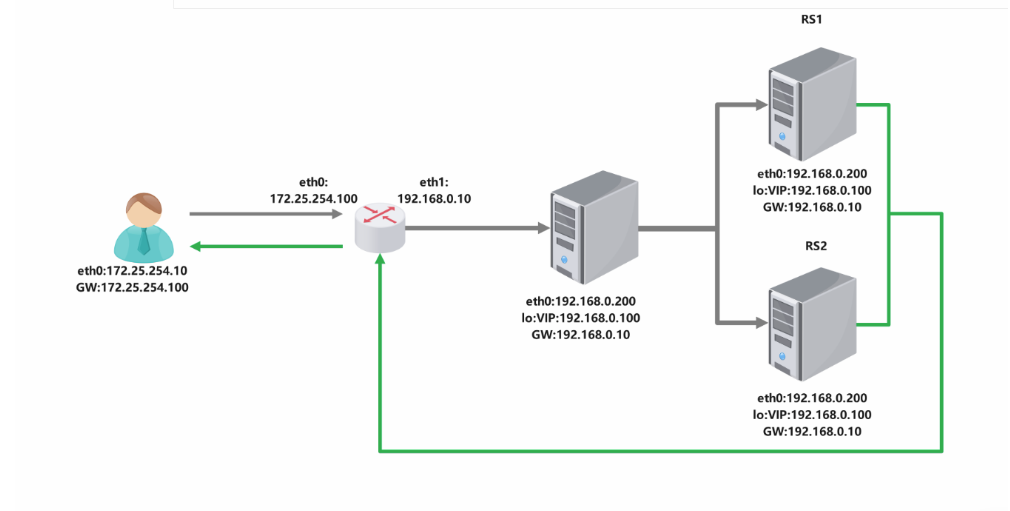

Lab topology

RS1

bash

# Configure the network and use the DIP as the default gateway

[root@RS1 ~]# vmset.sh eth0 192.168.0.10 RS1 noroute

[root@RS1 ~]# nmcli connection modify eth0 ipv4.gateway 192.168.0.100

[root@RS1 ~]# nmcli connection reload

[root@RS1 ~]# nmcli connection up eth0

[root@RS1 ~]# route -n

Kernel IP routing table

Destination Gateway Genmask Flags Metric Ref Use Iface

0.0.0.0 192.168.0.100 0.0.0.0 UG 100 0 0 eth0

192.168.0.0 0.0.0.0 255.255.255.0 U 100 0 0 eth0

[root@RS1 ~]# dnf install httpd -y

[root@RS1 ~]# systemctl enable --now httpd

[root@RS1 ~]# echo RS1 - 192.168.0.10 > /var/www/html/index.html

RS2

bash

# Configure the network and use the DIP as the default gateway

[root@RS2 ~]# vmset.sh eth0 192.168.0.20 RS2 noroute

[root@RS2 ~]# nmcli connection modify eth0 ipv4.gateway 192.168.0.100

[root@RS2 ~]# nmcli connection reload

[root@RS2 ~]# nmcli connection up eth0

[root@RS2 ~]# route -n

Kernel IP routing table

Destination Gateway Genmask Flags Metric Ref Use Iface

0.0.0.0 192.168.0.100 0.0.0.0 UG 100 0 0 eth0

192.168.0.0 0.0.0.0 255.255.255.0 U 100 0 0 eth0

[root@RS2 ~]# dnf install httpd -y

[root@RS2 ~]# systemctl enable --now httpd

[root@RS2 ~]# echo RS2 - 192.168.0.20 > /var/www/html/index.html

VSnode

bash

# 1. Enable kernel IP forwarding and install ipvsadm

[root@vsnode ~]# dnf install ipvsadm.x86_64

[root@vsnode ~]# echo net.ipv4.ip_forward=1 >> /etc/sysctl.conf

[root@vsnode ~]# sysctl -p

net.ipv4.ip_forward = 1

# 2. Write the rules

[root@vsnode ~]# ipvsadm -C

[root@vsnode ~]# ipvsadm -A -t 172.25.254.100:80 -s wrr

[root@vsnode ~]# ipvsadm -a -t 172.25.254.100:80 -r 192.168.0.10:80 -m -w 1

[root@vsnode ~]# ipvsadm -a -t 172.25.254.100:80 -r 192.168.0.20:80 -m -w 1

[root@vsnode ~]# ipvsadm -Ln

IP Virtual Server version 1.2.1 (size=4096)

Prot LocalAddress:Port Scheduler Flags

-> RemoteAddress:Port Forward Weight ActiveConn InActConn

TCP 172.25.254.100:80 wrr

-> 192.168.0.10:80 Masq 1 0 0

-> 192.168.0.20:80 Masq 1 0 0

# Test

[root@vsnode ~]# for i in {1..10};do curl 172.25.254.100;done

RS2 - 192.168.0.20

RS1 - 192.168.0.10

RS2 - 192.168.0.20

RS1 - 192.168.0.10

RS2 - 192.168.0.20

RS1 - 192.168.0.10

RS2 - 192.168.0.20

RS1 - 192.168.0.10

RS2 - 192.168.0.20

RS1 - 192.168.0.10

# Change the weight of RS1 to 2

[root@vsnode ~]# ipvsadm -e -t 172.25.254.100:80 -r 192.168.0.10:80 -m -w 2

[root@vsnode ~]# ipvsadm -Ln

IP Virtual Server version 1.2.1 (size=4096)

Prot LocalAddress:Port Scheduler Flags

-> RemoteAddress:Port Forward Weight ActiveConn InActConn

TCP 172.25.254.100:80 wrr

-> 192.168.0.10:80 Masq 2 0 5

-> 192.168.0.20:80 Masq 1 0 5

# Test

[root@vsnode ~]# for i in {1..10};do curl 172.25.254.100;done

RS2 - 192.168.0.20

RS1 - 192.168.0.10

RS1 - 192.168.0.10

RS2 - 192.168.0.20

RS1 - 192.168.0.10

RS1 - 192.168.0.10

RS2 - 192.168.0.20

RS1 - 192.168.0.10

RS1 - 192.168.0.10

RS2 - 192.168.0.20

# Monitor

[root@vsnode ~]# watch -n 1 ipvsadm -Ln

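# Persist the rules: save them, clear the running table, then let ipvsadm.service reload them from /etc/sysconfig/ipvsadm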

[root@vsnode ~]# ipvsadm-save -n > /etc/sysconfig/ipvsadm

[root@vsnode ~]# ipvsadm -C

[root@vsnode ~]# systemctl enable --now ipvsadm.service

Created symlink /etc/systemd/system/multi-user.target.wants/ipvsadm.service → /usr/lib/systemd/system/ipvsadm.service.

1.3 ipvsadm command reference

bash

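Managing cluster services (VS)

ipvsadm -A|E -t|u|f service-address [-s scheduler] [-p [timeout]]

ipvsadm -A -t 172.25.254.100:80 -s wrr

-A add a virtual service

-E edit a virtual service

-t TCP service

-u UDP service

-s scheduling algorithm

-p persistent connection timeout

-f firewall mark (fwmark), a number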
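The labs type the same -A/-a sequence repeatedly. A small dry-run helper can print that sequence for a given virtual service, scheduler, forwarding method and list of real servers. `lvs_rules` is a hypothetical convenience function, not part of ipvsadm, and it only echoes commands. It uses only options that appear in this section and in the labs (-A, -s, -a, -r, -g/-i/-m, -w). Review the output before piping it to `bash`.

```bash
#!/usr/bin/env bash
# Hypothetical dry-run helper: prints ipvsadm commands, does not run them.
# Usage: lvs_rules VIP:PORT SCHEDULER MODE RIP[:PORT]=WEIGHT ...
#   MODE is one of: nat (-m), dr (-g), tun (-i)
lvs_rules() {
  local vs=$1 sched=$2 mode=$3; shift 3
  local flag
  case $mode in
    nat) flag=-m ;;
    dr)  flag=-g ;;
    tun) flag=-i ;;
    *)   echo "unknown mode: $mode" >&2; return 1 ;;
  esac
  echo "ipvsadm -A -t $vs -s $sched"
  local rs
  for rs in "$@"; do
    [[ $rs == *=* ]] || { echo "missing weight in: $rs" >&2; return 1; }
    # "192.168.0.10:80=2" -> address "192.168.0.10:80", weight "2"
    echo "ipvsadm -a -t $vs -r ${rs%%=*} $flag -w ${rs##*=}"
  done
}

lvs_rules 172.25.254.100:80 wrr nat 192.168.0.10:80=1 192.168.0.20:80=1
```

For the DR lab, for example: `lvs_rules 192.168.0.200:80 rr dr 192.168.0.10=1 192.168.0.20=1 | bash`.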

Managing real servers in a cluster service

ipvsadm -a|e -t|u|f service-address -r realserver-address [-g|i|m] [-w weight]

ipvsadm -a -t 172.25.254.100:80 -r 192.168.0.10:80 -m -w 1

-a: add a real server

-e: edit a real server

-t: TCP service

-u: UDP service

-f: firewall mark (fwmark)

-r: real server address

-g: direct routing (DR) mode

-i: IPIP tunnel (TUN) mode

-m: NAT (masquerading) mode

-w: weight

-Z: zero the counters

-C: clear all LVS rules

-L: list the LVS rules

-n: numeric output (show IPs and ports, no name resolution)

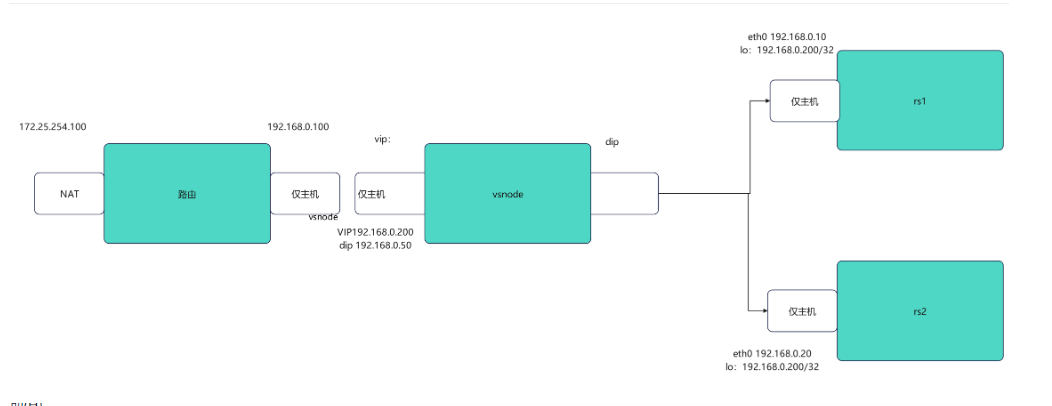

--rate: show rate statistics

1.4 DR mode

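LVS DR mode (Direct Routing) is the best-performing and most widely used mode in production. It forwards by rewriting MAC addresses, which keeps the director out of the response path so it does not become a bottleneck for response traffic.

Core principle

Request path: the client accesses the VIP. The director only rewrites the destination MAC address of the frame to the MAC of the selected real server; the destination IP stays the VIP. The frame is then forwarded to that real server on the same Layer 2 network.

Response path: the real server sees that the destination IP is the VIP configured on its lo interface, so it handles the request itself. The response has source IP = VIP and destination IP = client IP. It goes back to the client through the real server's own physical NIC, without passing through the director.

Key characteristic: the director handles only inbound requests while responses go straight to the client, which greatly reduces the director's load. Every real server also holds the VIP, so the real servers must not answer or announce ARP for it. Otherwise the router could send client traffic directly to an RS and bypass the director.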
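The lab below disables ARP for the VIP by echoing values into /proc, and those settings are lost on reboot. The sketch below instead writes the same four values (arp_ignore=1, arp_announce=2 for `all` and `lo`) to a sysctl drop-in file. The default path `/etc/sysctl.d/90-lvs-dr.conf` is an assumption. The function takes the path as an argument so it can be tried on a temporary file first. On a real RS, `sysctl -p FILE` (or `sysctl --system`) would then load the file.

```bash
#!/usr/bin/env bash
# Sketch: write the DR real-server ARP settings to a sysctl drop-in file.
# arp_ignore=1   -> reply to ARP only for addresses configured on the receiving interface
#                   (eth0 therefore does not answer for the VIP that lives on lo)
# arp_announce=2 -> use the best local address of the outgoing interface as the ARP source,
#                   so the VIP is never announced
write_dr_arp_conf() {
  local file=${1:-/etc/sysctl.d/90-lvs-dr.conf}   # default path is an assumption
  cat > "$file" <<'EOF'
net.ipv4.conf.all.arp_ignore = 1
net.ipv4.conf.lo.arp_ignore = 1
net.ipv4.conf.all.arp_announce = 2
net.ipv4.conf.lo.arp_announce = 2
EOF
}

tmp=$(mktemp)
write_dr_arp_conf "$tmp"
cat "$tmp"
# On a real RS, as root: write_dr_arp_conf && sysctl -p /etc/sysctl.d/90-lvs-dr.conf
```

Loading these settings before the VIP is brought up on lo avoids a short window in which the RS could still answer ARP for it.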

Lab

Router

bash

[root@router ~]# vmset.sh eth0 172.25.254.100 router

[root@router ~]# vmset.sh eth1 192.168.0.100 router noroute

# Enable kernel IP forwarding

[root@router ~]# echo net.ipv4.ip_forward=1 >> /etc/sysctl.conf

[root@router ~]# sysctl -p

net.ipv4.ip_forward = 1

# Forwarding rules: masquerade outgoing traffic as 192.168.0.100 (out of eth1) or 172.25.254.100 (out of eth0)

[root@router ~]# iptables -t nat -A POSTROUTING -o eth1 -j SNAT --to-source 192.168.0.100

[root@router ~]# iptables -t nat -A POSTROUTING -o eth0 -j SNAT --to-source 172.25.254.100

VSnode

bash

# vsnode (the director)

[root@vsnode ~]# vmset.sh eth0 192.168.0.50 vsnode norouter

[root@vsnode ~]# vim /etc/NetworkManager/system-connections/eth0.nmconnection

[connection]

id=eth0

type=ethernet

interface-name=eth0

[ipv4]

method=manual

# DIP, with the router 192.168.0.100 as gateway

address1=192.168.0.50/24,192.168.0.100

[root@vsnode ~]# cd /etc/NetworkManager/system-connections/

[root@vsnode system-connections]# cp -p eth0.nmconnection lo.nmconnection

[root@vsnode system-connections]# vim lo.nmconnection

[connection]

id=lo

type=loopback

interface-name=lo

[ipv4]

method=manual

address1=127.0.0.1/8

# VIP

address2=192.168.0.200/32

[root@vsnode system-connections]# nmcli connection reload

[root@vsnode system-connections]# nmcli connection up eth0

Connection successfully activated (D-Bus active path: /org/freedesktop/NetworkManager/ActiveConnection/7)

[root@vsnode system-connections]# nmcli connection up lo

Connection successfully activated (D-Bus active path: /org/freedesktop/NetworkManager/ActiveConnection/8)

# Check

[root@vsnode system-connections]# route -n

Kernel IP routing table

Destination Gateway Genmask Flags Metric Ref Use Iface

0.0.0.0 192.168.0.100 0.0.0.0 UG 100 0 0 eth0

192.168.0.0 0.0.0.0 255.255.255.0 U 100 0 0 eth0

192.168.0.0 0.0.0.0 255.255.255.0 U 100 0 0 eth0

[root@vsnode system-connections]# ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet 192.168.0.200/32 brd 192.168.0.255 scope global noprefixroute eth0

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc mq state UP group default qlen 1000

link/ether 00:0c:29:41:e5:8b brd ff:ff:ff:ff:ff:ff

altname enp3s0

altname ens160

inet 192.168.0.50/24 brd 192.168.0.255 scope global secondary noprefixroute eth0

valid_lft forever preferred_lft forever

inet6 fe80::e40:8975:6b9:fea8/64 scope link noprefixroute

valid_lft forever preferred_lft forever

Round-robin scheduling (DR mode)

[root@vsnode ~]# dnf install ipvsadm.x86_64

[root@vsnode ~]# ipvsadm -C

# DR mode forwards with -g (gatewaying); -m would select NAT mode instead

[root@vsnode ~]# ipvsadm -A -t 192.168.0.200:80 -s rr

[root@vsnode ~]# ipvsadm -a -t 192.168.0.200:80 -r 192.168.0.10:80 -g -w 1

[root@vsnode ~]# ipvsadm -a -t 192.168.0.200:80 -r 192.168.0.20:80 -g -w 1

[root@vsnode ~]# ipvsadm -Ln

IP Virtual Server version 1.2.1 (size=4096)

Prot LocalAddress:Port Scheduler Flags

-> RemoteAddress:Port Forward Weight ActiveConn InActConn

TCP 192.168.0.200:80 rr

-> 192.168.0.10:80 Route 1 0 0

-> 192.168.0.20:80 Route 1 0 0

[root@vsnode ~]# ipvsadm-save -n > /etc/sysconfig/ipvsadm

[root@vsnode ~]# systemctl enable --now ipvsadm.service

Client

bash

# Client

[root@client ~]# vmset.sh eth0 172.25.254.99 client norouter

Connection successfully activated (D-Bus active path: /org/freedesktop/NetworkManager/ActiveConnection/4)

2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc mq state UP group default qlen 1000

link/ether 00:0c:29:e5:75:af brd ff:ff:ff:ff:ff:ff

altname enp3s0

altname ens160

inet 172.25.254.99/24 brd 172.25.254.255 scope global noprefixroute eth0

valid_lft forever preferred_lft forever

inet6 fe80::20c:29ff:fee5:75af/64 scope link tentative noprefixroute

valid_lft forever preferred_lft forever

client

[root@client ~]# vim /etc/NetworkManager/system-connections/eth0.nmconnection

[connection]

id=eth0

type=ethernet

interface-name=eth0

[ipv4]

method=manual

address1=172.25.254.99/24,172.25.254.100

dns=8.8.8.8;

[root@client ~]# nmcli connection reload

[root@client ~]# nmcli connection up eth0

Connection successfully activated (D-Bus active path: /org/freedesktop/NetworkManager/ActiveConnection/5)

[root@client ~]# route -n

Kernel IP routing table

Destination Gateway Genmask Flags Metric Ref Use Iface

0.0.0.0 172.25.254.100 0.0.0.0 UG 100 0 0 eth0

172.25.254.0 0.0.0.0 255.255.255.0 U 100 0 0 eth0

# Check that the VIP is reachable

[root@client ~]# ping 192.168.0.200

PING 192.168.0.200 (192.168.0.200) 56(84) bytes of data.

64 bytes from 192.168.0.200: icmp_seq=1 ttl=128 time=1.08 ms

RS1

bash

#RS1

[root@RS1 ~]# vmset.sh eth0 192.168.0.10 RS1 noroute

[root@RS1 ~]# nmcli connection modify eth0 ipv4.gateway 192.168.0.100 # the gateway is the router's internal interface

[root@RS1 ~]# nmcli connection reload

[root@RS1 ~]# nmcli connection up eth0

[root@RS1 ~]# route -n

Kernel IP routing table

Destination Gateway Genmask Flags Metric Ref Use Iface

0.0.0.0 192.168.0.100 0.0.0.0 UG 100 0 0 eth0

192.168.0.0 0.0.0.0 255.255.255.0 U 100 0 0 eth0

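# Configure the VIP on lo so that the RS accepts packets addressed to the VIP and replies with the VIP as source address directly, without going back through the director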

[root@RS1 ~]# cd /etc/NetworkManager/system-connections/

[root@RS1 system-connections]# cp -p eth0.nmconnection lo.nmconnection

[root@RS1 system-connections]# vim lo.nmconnection

[connection]

id=lo

type=loopback

interface-name=lo

[ethernet]

[ipv4]

address1=127.0.0.1/8

address2=192.168.0.200/32

method=manual

[root@RS1 system-connections]# nmcli connection reload

[root@RS1 system-connections]# nmcli connection up lo

Connection successfully activated (D-Bus active path: /org/freedesktop/NetworkManager/ActiveConnection/6)

[root@RS1 system-connections]# ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet 192.168.0.200/32 scope global lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

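# Keep the RS from answering or announcing ARP for the VIP (these /proc settings are lost on reboot; ideally apply them before the VIP is brought up on lo)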

[root@rs1 ~]# echo 1 > /proc/sys/net/ipv4/conf/all/arp_ignore

[root@rs1 ~]# echo 1 > /proc/sys/net/ipv4/conf/lo/arp_ignore

[root@rs1 ~]# echo 2 > /proc/sys/net/ipv4/conf/lo/arp_announce

[root@rs1 ~]# echo 2 > /proc/sys/net/ipv4/conf/all/arp_announce

RS2

bash

#RS2

[root@RS2 ~]# vmset.sh eth0 192.168.0.20 RS2 noroute

[root@RS2 ~]# nmcli connection modify eth0 ipv4.gateway 192.168.0.100

[root@RS2 ~]# nmcli connection reload

[root@RS2 ~]# nmcli connection up eth0

[root@RS2 ~]# route -n

Kernel IP routing table

Destination Gateway Genmask Flags Metric Ref Use Iface

0.0.0.0 192.168.0.100 0.0.0.0 UG 100 0 0 eth0

192.168.0.0 0.0.0.0 255.255.255.0 U 100 0 0 eth0

# Configure the VIP on lo

[root@RS2 ~]# cd /etc/NetworkManager/system-connections/

[root@RS2 system-connections]# cp -p eth0.nmconnection lo.nmconnection

[root@RS2 system-connections]# vim lo.nmconnection

[connection]

id=lo

type=loopback

interface-name=lo

[ethernet]

[ipv4]

address1=127.0.0.1/8

address2=192.168.0.200/32

method=manual

[root@RS2 system-connections]# nmcli connection reload

[root@RS2 system-connections]# nmcli connection up lo

Connection successfully activated (D-Bus active path: /org/freedesktop/NetworkManager/ActiveConnection/6)

[root@RS2 system-connections]# ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet 192.168.0.200/32 scope global lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

# Keep the RS from answering or announcing ARP for the VIP

[root@rs2 ~]# echo 1 > /proc/sys/net/ipv4/conf/all/arp_ignore

[root@rs2 ~]# echo 1 > /proc/sys/net/ipv4/conf/lo/arp_ignore

[root@rs2 ~]# echo 2 > /proc/sys/net/ipv4/conf/lo/arp_announce

[root@rs2 ~]# echo 2 > /proc/sys/net/ipv4/conf/all/arp_announce

1.5.1 Static scheduling algorithms

- Round Robin (RR)

Requests are sent to RS1 and RS2 in turn, with no weighting: a 1:1 distribution.

bash

ipvsadm -C && ipvsadm -Z

# Add the VIP virtual service with the rr scheduler

ipvsadm -A -t 192.168.0.200:80 -s rr

# Add RS1/RS2 in DR mode (-g); the weight defaults to 1, so -w 1 can be omitted

ipvsadm -a -t 192.168.0.200:80 -r 192.168.0.10 -g

ipvsadm -a -t 192.168.0.200:80 -r 192.168.0.20 -g

# List the rules to verify

ipvsadm -Ln

- Weighted Round Robin (WRR)

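Requests are distributed according to preset weights: the higher the weight, the more requests a server receives. For example, with RS1 at weight 2 and RS2 at weight 1, the ratio is 2:1.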
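The 2:1 ratio comes from interleaving, not from sending requests in bursts. The bash sketch below follows the classic interleaved WRR loop described in the LVS project's scheduling documentation. On each pass the current weight is lowered by the gcd of all weights, and any server whose weight reaches it is picked. `wrr_sequence` is a hypothetical helper. The kernel's starting position may differ, so compare only the pattern with live `curl` output, not the first pick. All weights are assumed to be non-negative integers.

```bash
#!/usr/bin/env bash
# Sketch of interleaved weighted round robin (the classic LVS wrr loop).
# Usage (hypothetical helper): wrr_sequence COUNT name:weight [name:weight ...]
gcd() { local a=$1 b=$2 t; while (( b )); do t=$(( a % b )); a=$b; b=$t; done; echo "$a"; }

wrr_sequence() {
  local count=$1; shift
  local -a names=() weights=()
  local item w
  for item in "$@"; do
    names+=("${item%%:*}")
    weights+=("${item##*:}")
  done
  local n=${#names[@]} max=0 g=0
  for w in "${weights[@]}"; do
    (( w > max )) && max=$w
    g=$(gcd "$g" "$w")
  done
  (( max > 0 )) || { echo "no server with positive weight" >&2; return 1; }
  local i=-1 cw=0 picked=0 out=()
  while (( picked < count )); do
    i=$(( (i + 1) % n ))
    if (( i == 0 )); then             # a full pass: lower the current weight
      cw=$(( cw - g ))
      (( cw <= 0 )) && cw=$max
    fi
    if (( weights[i] >= cw )); then   # weight-0 servers never qualify
      out+=("${names[i]}")
      picked=$(( picked + 1 ))
    fi
  done
  echo "${out[*]}"
}

wrr_sequence 6 RS1:2 RS2:1   # prints: RS1 RS1 RS2 RS1 RS1 RS2
```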

bash

ipvsadm -C && ipvsadm -Z

ipvsadm -A -t 192.168.0.200:80 -s wrr

# RS1 weight 2 (-w 2), RS2 weight 1 (-w 1), DR mode (-g)

ipvsadm -a -t 192.168.0.200:80 -r 192.168.0.10 -g -w 2

ipvsadm -a -t 192.168.0.200:80 -r 192.168.0.20 -g -w 1

ipvsadm -Ln

- Source Hashing (SH)

The client's source IP is hashed, so all requests from the same client always go to the same RS. This gives session stickiness.
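Stickiness follows from the choice being a pure function of the client address. The sketch below uses a deliberately simple hash: fold the 32-bit address and take it modulo the number of servers. This hash is an assumption for illustration only. It is not the hash used by the kernel's ip_vs_sh module, which maps addresses through a 256-bucket table. DH works the same way, with the destination IP as the key. The helper names `ip_to_int` and `sh_pick` are hypothetical.

```bash
#!/usr/bin/env bash
# Illustration only: a simplified source-hash pick (NOT the kernel's exact hash).
ip_to_int() {
  local IFS=.
  local a b c d
  read -r a b c d <<< "$1"
  echo $(( (a << 24) | (b << 16) | (c << 8) | d ))
}

# sh_pick CLIENT_IP RS...   -> prints the RS chosen for that client
sh_pick() {
  local ip=$1; shift
  local servers=("$@")
  local key
  key=$(ip_to_int "$ip")
  local h=$(( (key ^ (key >> 16)) % ${#servers[@]} ))
  echo "${servers[h]}"
}

# The same client always maps to the same RS.
for client in 172.25.254.99 172.25.254.99 172.25.254.1; do
  echo "$client -> $(sh_pick "$client" 192.168.0.10 192.168.0.20)"
done
```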

bash

ipvsadm -C && ipvsadm -Z

ipvsadm -A -t 192.168.0.200:80 -s sh

ipvsadm -a -t 192.168.0.200:80 -r 192.168.0.10 -g

ipvsadm -a -t 192.168.0.200:80 -r 192.168.0.20 -g

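ipvsadm -Ln

- Destination Hashing (DH)

Core principle

The destination IP of the request is hashed, so requests for the same destination IP always go to the same RS. This suits cache clusters. In this lab every request targets the VIP 192.168.0.200, so all requests land on the same RS.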

bash

ipvsadm -C && ipvsadm -Z

ipvsadm -A -t 192.168.0.200:80 -s dh

ipvsadm -a -t 192.168.0.200:80 -r 192.168.0.10 -g

ipvsadm -a -t 192.168.0.200:80 -r 192.168.0.20 -g

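ipvsadm -Ln

1.5.2 Dynamic scheduling algorithms

- Least Connections (LC)

Core principle

New requests go to the RS with the fewest connections, without considering weight. In the kernel, the connection count is an overhead value: active connections × 256 + inactive connections.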

bash

ipvsadm -C && ipvsadm -Z

ipvsadm -A -t 192.168.0.200:80 -s lc

ipvsadm -a -t 192.168.0.200:80 -r 192.168.0.10 -g

ipvsadm -a -t 192.168.0.200:80 -r 192.168.0.20 -g

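ipvsadm -Ln

- Weighted Least Connections (WLC)

Core principle

This is the default LVS algorithm. It combines connection count and weight: each RS's load is overhead / weight, where overhead = active connections × 256 + inactive connections. The RS with the smallest value is preferred, which balances current load against server capacity.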
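The kernel avoids division by cross-multiplying when it compares candidates: RS A beats RS B when overhead_A × weight_B < overhead_B × weight_A. The bash sketch below mirrors that comparison, using an overhead of active × 256 + inactive. `wlc_pick` and its `name:active:inactive:weight` input format are hypothetical. Setting every weight to 1 turns it into plain LC.

```bash
#!/usr/bin/env bash
# Sketch of the WLC choice: smallest (active*256 + inactive) / weight wins.
# Input format (hypothetical): name:active:inactive:weight
wlc_pick() {
  local best="" best_oh=0 best_w=0 item name act inact w oh
  for item in "$@"; do
    IFS=: read -r name act inact w <<< "$item"
    (( w > 0 )) || continue                  # weight 0 = quiesced, never chosen
    oh=$(( act * 256 + inact ))
    # oh/w < best_oh/best_w  <=>  best_oh*w > oh*best_w  (ties keep the earlier RS)
    if [[ -z $best ]] || (( best_oh * w > oh * best_w )); then
      best=$name best_oh=$oh best_w=$w
    fi
  done
  echo "$best"
}

wlc_pick RS1:2:0:3 RS2:1:0:1   # 512/3 ~ 171 vs 256/1 -> RS1
wlc_pick RS1:4:0:3 RS2:1:0:1   # 1024/3 ~ 341 vs 256/1 -> RS2
```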

bash

ipvsadm -C && ipvsadm -Z

ipvsadm -A -t 192.168.0.200:80 -s wlc

# Give the more powerful RS1 weight 3 and the weaker RS2 weight 1 (RS2 receives fewer connections even when it is lightly loaded)

ipvsadm -a -t 192.168.0.200:80 -r 192.168.0.10 -g -w 3

ipvsadm -a -t 192.168.0.200:80 -r 192.168.0.20 -g -w 1

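ipvsadm -Ln

- Shortest Expected Delay (SED)

Core principle

An improvement on WLC that uses the formula (active connections + 1) / weight. It favors RS with higher weights and reduces overall request latency, so high-weight servers handle requests first.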

bash

ipvsadm -C && ipvsadm -Z

ipvsadm -A -t 192.168.0.200:80 -s sed

ipvsadm -a -t 192.168.0.200:80 -r 192.168.0.10 -g -w 3

ipvsadm -a -t 192.168.0.200:80 -r 192.168.0.20 -g -w 1

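ipvsadm -Ln

- Never Queue (NQ)

Core principle

A greedy algorithm: if some RS has zero active connections, the request goes straight to it without any calculation. When no RS is idle, NQ falls back to SED. It makes full use of idle servers.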
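SED and NQ count only active connections, and their cost is (active + 1) / weight. NQ additionally returns the first idle RS (active = 0) in list order straight away. The hypothetical `sed_nq_pick` below reproduces both rules in bash. Take two idle servers with weights 1 and 3, with the weight-1 server listed first. SED prefers the heavier server, while NQ simply takes the first idle one.

```bash
#!/usr/bin/env bash
# Sketch of SED and NQ selection. Input (hypothetical): name:active:weight
# SED: smallest (active + 1) / weight wins.
# NQ : if some RS has active == 0, take the first such RS; otherwise behave like SED.
sed_nq_pick() {
  local mode=$1; shift                       # "sed" or "nq"
  local best="" best_oh=0 best_w=0 item name act w oh
  for item in "$@"; do
    IFS=: read -r name act w <<< "$item"
    (( w > 0 )) || continue
    if [[ $mode == nq ]] && (( act == 0 )); then
      echo "$name"; return 0                 # idle server: no calculation needed
    fi
    oh=$(( act + 1 ))
    # compare oh/w with best_oh/best_w without dividing
    if [[ -z $best ]] || (( best_oh * w > oh * best_w )); then
      best=$name best_oh=$oh best_w=$w
    fi
  done
  echo "$best"
}

sed_nq_pick sed RS2:0:1 RS1:0:3   # 1/1 vs 1/3 -> RS1
sed_nq_pick nq  RS2:0:1 RS1:0:3   # RS2 is idle and listed first -> RS2
```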

bash

ipvsadm -C && ipvsadm -Z

ipvsadm -A -t 192.168.0.200:80 -s nq

ipvsadm -a -t 192.168.0.200:80 -r 192.168.0.10 -g -w 3

ipvsadm -a -t 192.168.0.200:80 -r 192.168.0.20 -g -w 1

ipvsadm -Ln

1.6 Using firewall marks to fix round-robin across services

1. Enable both HTTP and HTTPS on the RS hosts

# Enable HTTPS on RS1 and RS2

[root@RS1+RS2 ~]# dnf install mod_ssl -y

[root@RS1+RS2 ~]# systemctl restart httpd

2. Add a round-robin service for HTTPS on vsnode

bash

[root@vsnode boot]# ipvsadm -A -t 192.168.0.200:80 -s rr

[root@vsnode boot]# ipvsadm -a -t 192.168.0.200:80 -r 192.168.0.20 -g

[root@vsnode boot]# ipvsadm -a -t 192.168.0.200:80 -r 192.168.0.10 -g

[root@vsnode boot]# ipvsadm -A -t 192.168.0.200:443 -s rr

[root@vsnode boot]# ipvsadm -a -t 192.168.0.200:443 -r 192.168.0.10:443 -g

[root@vsnode boot]# ipvsadm -a -t 192.168.0.200:443 -r 192.168.0.20:443 -g

[root@vsnode boot]# ipvsadm -Ln

IP Virtual Server version 1.2.1 (size=4096)

Prot LocalAddress:Port Scheduler Flags

-> RemoteAddress:Port Forward Weight ActiveConn InActConn

TCP 192.168.0.200:80 rr

-> 192.168.0.10:80 Route 1 0 0

-> 192.168.0.20:80 Route 1 0 0

TCP 192.168.0.200:443 rr

-> 192.168.0.10:443 Route 1 0 0

-> 192.168.0.20:443 Route 1 0 0

3. Demonstrating the round-robin problem

Bash

[root@client ~]# curl 192.168.0.200;curl -k https://192.168.0.200

RS2 - 192.168.0.20

RS2 - 192.168.0.20

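# With the setup above, HTTP and HTTPS are two independent virtual services, each with its own round-robin state, so consecutive HTTP and HTTPS requests can land on the same RS

# Fix: use a firewall mark to tag all packets to VIP ports 80 and 443 (mark 6666), then load-balance on that mark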

[root@vsnode boot]# iptables -t mangle -A PREROUTING -d 192.168.0.200 -p tcp -m multiport --dports 80,443 -j MARK --set-mark 6666

[root@vsnode boot]# ipvsadm -A -f 6666 -s rr

[root@vsnode boot]# ipvsadm -a -f 6666 -r 192.168.0.10 -g

[root@vsnode boot]# ipvsadm -a -f 6666 -r 192.168.0.20 -g
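The mark 6666 is printed in different notations depending on the tool. iptables shows marks in hex (6666 = 0x1a0a). For a fwmark service, the persistence template in `ipvsadm -Lnc` appears to carry the mark in its virtual-address column, which would explain a value like 0.0.26.10 there (26 × 256 + 10 = 6666). The converter below is a hypothetical helper that only does this arithmetic.

```bash
#!/usr/bin/env bash
# Show a firewall mark in decimal, hex, and as a dotted 32-bit value.
fwmark_forms() {
  local m=$1
  printf '%d 0x%x %d.%d.%d.%d\n' "$m" "$m" \
    $(( (m >> 24) & 255 )) $(( (m >> 16) & 255 )) $(( (m >> 8) & 255 )) $(( m & 255 ))
}

fwmark_forms 6666   # prints: 6666 0x1a0a 0.0.26.10
```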

# Test from the client

[root@client ~]# curl 192.168.0.200;curl -k https://192.168.0.200

RS2 - 192.168.0.20

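RS1 - 192.168.0.10

1.7 Session stickiness with persistent connections

1. Configure the IPVS scheduling policy

# If fwmark service 6666 from 1.6 still exists, use -E instead of -A to modify it

[root@vsnode ~]# ipvsadm -A -f 6666 -s rr -p 1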

[root@vsnode ~]# ipvsadm -Ln

IP Virtual Server version 1.2.1 (size=4096)

Prot LocalAddress:Port Scheduler Flags

-> RemoteAddress:Port Forward Weight ActiveConn InActConn

FWM 6666 rr persistent 1

-> 192.168.0.10:0 Route 1 0 0

-> 192.168.0.20:0 Route 1 0 0

2. Test:

[root@client ~]# curl 192.168.0.200

RS1 - 192.168.0.10

[root@client ~]# curl 192.168.0.200

RS1 - 192.168.0.10

3. Observe

[root@vsnode ~]# watch -n 1 ipvsadm -Lnc

IPVS connection entries

pro expire state source virtual destination

TCP 01:56 FIN_WAIT 172.25.254.99:42420 192.168.0.200:80 192.168.0.20:80

IP 00:57 ASSURED 172.25.254.99:0 0.0.26.10:0 192.168.0.20:0

TCP 01:54 FIN_WAIT 172.25.254.99:46216 192.168.0.200:80 192.168.0.20:80

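TCP 01:55 FIN_WAIT 172.25.254.99:46222 192.168.0.200:80 192.168.0.20:80

II. HAProxy

2.1 HAProxy Overview

HAProxy (High Availability Proxy) is an open-source Layer 4 + Layer 7 load balancer and high-availability proxy designed for high-concurrency, high-reliability scenarios. Its core job is to distribute client requests across multiple backend servers, sharing the load and automatically isolating failed nodes. It also provides health checks, session persistence, SSL offloading and other practical features, which makes it a standard building block for enterprise service clusters.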

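- Support for two layers, covering most scenarios

Layer 4 load balancing: for TCP-based protocols (such as MySQL, Redis, SSH, MQ), forwarding by "IP + port". Configuration is simple and performance is high, although as a user-space proxy it generally trails kernel-level LVS. The open-source edition does not load-balance generic UDP traffic.

Layer 7 load balancing: for HTTP/HTTPS/HTTP2, HAProxy parses application-layer information (URL, host name, request headers, cookies). This enables fine-grained routing by path, static/dynamic content separation, host-based forwarding and so on.

One configuration handles load balancing for different protocols and services, with no need to deploy several separate tools.

- High performance, low resource usage, handles high concurrency

HAProxy uses an event-driven model (based on epoll/kqueue) with a single process and multiple threads, which avoids process-switching overhead. Memory usage is low, typically tens of MB in normal operation. On suitable hardware a single server can handle on the order of a hundred thousand concurrent connections and very high request rates. This keeps it stable in flash-sale, live-streaming and similar high-concurrency scenarios, with better resource efficiency than traditional proxies.

- Intelligent health checks for high availability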

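Backend crashes and service failures are common in distributed architectures. HAProxy offers both active and passive health checks:

Active checks: HAProxy periodically probes a backend's port, HTTP status code or a custom URL. Once failures reach the threshold, the node is removed automatically, and it is brought back when it recovers.

Passive checks: HAProxy observes the outcome of real requests (for example 502/503 errors or timeouts) to detect unhealthy servers and shift traffic away quickly.

Failures are isolated automatically without manual intervention, keeping the service available.

- Lightweight, flexible, easy to configure and maintain

The configuration file is plain text with a simple syntax. A basic setup takes only a few lines, so beginners can get started quickly.

Hot reload is supported: after a configuration change the service does not need a full restart, so traffic is not interrupted.

It has few dependencies, a small package and simple deployment, and runs on Linux, FreeBSD and other Unix-like systems, so it fits all kinds of production environments.

HAProxy also supports session persistence (source IP or cookie binding), SSL/TLS offloading (taking encryption and decryption off the backends), rate limiting, client IP pass-through and more, covering a wide range of enterprise needs.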

2.2 Haproxy实验

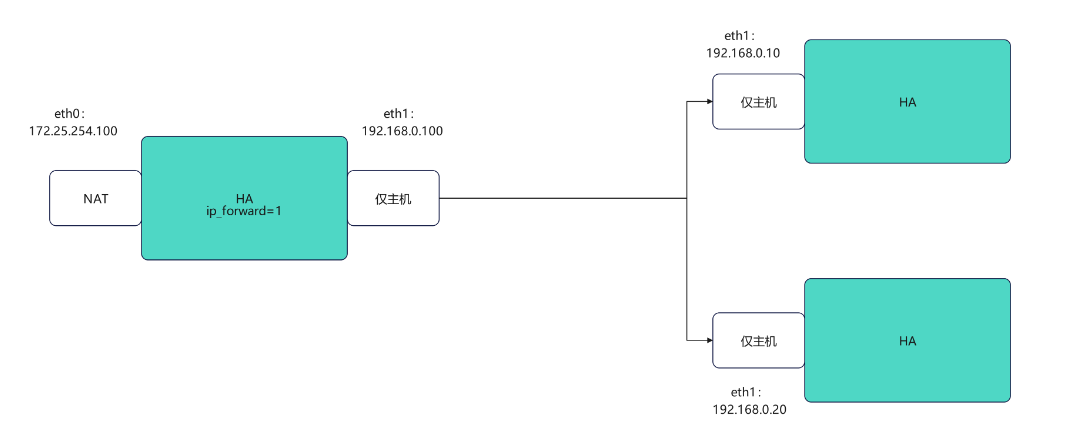

2.2.1实验环境

Haproxy主配置文件 /etc/haproxy/haproxy.cfg。

Haproxy

bash

# Configure the network interfaces

[root@haproxy ~]# vmset.sh eth0 172.25.254.100 haproxy

Connection successfully activated (D-Bus active path: /org/freedesktop/NetworkManager/ActiveConnection/7)

2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc mq state UP group default qlen 1000

link/ether 00:0c:29:0c:6f:ee brd ff:ff:ff:ff:ff:ff

altname enp3s0

altname ens160

inet 172.25.254.100/24 brd 172.25.254.255 scope global noprefixroute eth0

valid_lft forever preferred_lft forever

inet6 fe80::20c:29ff:fe0c:6fee/64 scope link tentative noprefixroute

valid_lft forever preferred_lft forever

haproxy

[root@haproxy ~]# vmset.sh eth1 192.168.0.100 haproxy norouter

Connection successfully activated (D-Bus active path: /org/freedesktop/NetworkManager/ActiveConnection/8)

3: eth1: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc mq state UP group default qlen 1000

link/ether 00:0c:29:0c:6f:f8 brd ff:ff:ff:ff:ff:ff

altname enp19s0

altname ens224

inet 192.168.0.100/24 brd 192.168.0.255 scope global noprefixroute eth1

valid_lft forever preferred_lft forever

inet6 fe80::4ca7:8cde:1244:8df/64 scope link tentative noprefixroute

valid_lft forever preferred_lft forever

haproxy

# Enable kernel IP forwarding (append with >>; a single > would overwrite /etc/sysctl.conf)

[root@haproxy ~]# echo net.ipv4.ip_forward=1 >> /etc/sysctl.conf

[root@haproxy ~]# sysctl -p

net.ipv4.ip_forward = 1

[root@haproxy ~]# dnf install haproxy.x86_64 -y

[root@haproxy ~]# systemctl enable --now haproxy

web1

bash

[root@webserver1 ~]# vmset.sh eth0 192.168.0.10 webserver1 noroute

Connection successfully activated (D-Bus active path: /org/freedesktop/NetworkManager/ActiveConnection/4)

2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc mq state UP group default qlen 1000

link/ether 00:0c:29:8c:96:72 brd ff:ff:ff:ff:ff:ff

altname enp3s0

altname ens160

inet 192.168.0.10/24 brd 192.168.0.255 scope global noprefixroute eth0

valid_lft forever preferred_lft forever

inet6 fe80::20c:29ff:fe8c:9672/64 scope link tentative noprefixroute

valid_lft forever preferred_lft forever

webserver1

[root@webserver1 ~]# dnf install httpd -y

[root@webserver1 ~]# echo webserver1 - 192.168.0.10 > /var/www/html/index.html

[root@webserver1 ~]# systemctl enable --now httpd

Created symlink /etc/systemd/system/multi-user.target.wants/httpd.service → /usr/lib/systemd/system/httpd.service.

web2

bash

[root@webserver2 ~]# vmset.sh eth0 192.168.0.20 webserver2 noroute

Connection successfully activated (D-Bus active path: /org/freedesktop/NetworkManager/ActiveConnection/4)

2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc mq state UP group default qlen 1000

link/ether 00:0c:29:8c:96:72 brd ff:ff:ff:ff:ff:ff

altname enp3s0

altname ens160

inet 192.168.0.20/24 brd 192.168.0.255 scope global noprefixroute eth0

valid_lft forever preferred_lft forever

inet6 fe80::20c:29ff:fe8c:9672/64 scope link tentative noprefixroute

valid_lft forever preferred_lft forever

webserver2

[root@webserver2 ~]# dnf install httpd -y

[root@webserver2 ~]# echo webserver2 - 192.168.0.20 > /var/www/html/index.html

[root@webserver2 ~]# systemctl enable --now httpd

Created symlink /etc/systemd/system/multi-user.target.wants/httpd.service → /usr/lib/systemd/system/httpd.service.

Verification

bash

# Access the web servers directly from the haproxy host

[root@haproxy ~]# curl 192.168.0.10

webserver1 - 192.168.0.10

[root@haproxy ~]# curl 192.168.0.20

webserver2 - 192.168.0.20

HAProxy basic load balancing

bash

# In vim, set the tab width (ts) to 4 and enable auto-indent (ai)

[root@haproxy ~]# vim ~/.vimrc

set ts=4 ai

# Frontend and backend defined separately

[root@haproxy ~]# vim /etc/haproxy/haproxy.cfg

frontend webcluster

bind *:80

mode http

use_backend webserver-80

backend webserver-80

server web1 192.168.0.10:80 check inter 3s fall 3 rise 5

server web2 192.168.0.20:80 check inter 3s fall 3 rise 5

[root@haproxy ~]# systemctl restart haproxy.service

# Test

[root@haproxy ~]# curl 172.25.254.100

webserver2 - 192.168.0.20

[root@haproxy ~]# curl 172.25.254.100

webserver1 - 192.168.0.10

# The same load balancing written as a single listen section

[root@haproxy ~]# vim /etc/haproxy/haproxy.cfg

listen webcluster

bind *:80

mode http

server haha 192.168.0.10:80 check inter 3s fall 3 rise 5

server hehe 192.168.0.20:80 check inter 3s fall 3 rise 5

[root@haproxy ~]# systemctl restart haproxy.service
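When the server list grows, the `listen` block can be generated instead of typed. The sketch below is a hypothetical `gen_listen` helper that prints a block in the same shape as the one above. It keeps the same `check inter 3s fall 3 rise 5` options: a server is marked down after 3 consecutive failed checks (about 9 s) and up again after 5 successful ones (about 15 s). It writes to stdout only. Validating the result with `haproxy -c -f FILE` before restarting is advisable.

```bash
#!/usr/bin/env bash
# Hypothetical generator for a simple HAProxy "listen" section.
# Usage: gen_listen NAME BIND_PORT BALANCE name=ip:port ...
gen_listen() {
  local name=$1 port=$2 balance=$3; shift 3
  local opts="check inter 3s fall 3 rise 5"
  printf 'listen %s\n' "$name"
  printf '    bind *:%s\n' "$port"
  printf '    mode http\n'
  printf '    balance %s\n' "$balance"
  local s
  for s in "$@"; do
    # "haha=192.168.0.10:80" -> server name "haha", address "192.168.0.10:80"
    printf '    server %s %s %s\n' "${s%%=*}" "${s#*=}" "$opts"
  done
}

gen_listen webcluster 80 roundrobin haha=192.168.0.10:80 hehe=192.168.0.20:80
# For example: gen_listen ... > /tmp/lb.cfg && haproxy -c -f /tmp/lb.cfg
```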

# Test

[root@haproxy ~]# curl 172.25.254.100

webserver2 - 192.168.0.20

[root@haproxy ~]# curl 172.25.254.100

webserver1 - 192.168.0.10

2.2.2 Sending logs to a remote host (here 192.168.0.10)

bash

# On 192.168.0.10, open the UDP port that receives syslog messages

[root@webserver1 ~]# vim /etc/rsyslog.conf

module(load="imudp") # needs to be done just once

input(type="imudp" port="514")

[root@webserver1 ~]# systemctl restart rsyslog.service

# Check that the log port is listening

[root@webserver1 ~]# netstat -antlupe | grep rsyslog

udp 0 0 0.0.0.0:514 0.0.0.0:* 0 74140 30965/rsyslogd

udp6 0 0 :::514 :::* 0 74141 30965/rsyslogd

# On the haproxy host, set where logs are sent (the log line in the global section)

[root@haproxy haproxy]# vim /etc/haproxy/haproxy.cfg

log 192.168.0.10 local2

[root@haproxy haproxy]# systemctl restart haproxy.service

# Verify

[Administrator.DESKTOP-VJ307M3] ➤ curl 172.25.254.100

webserver1 - 192.168.0.10

[Administrator.DESKTOP-VJ307M3] ➤ curl 172.25.254.100

webserver2 - 192.168.0.20

[root@webserver1 ~]# cat /var/log/messages

Jan 23 15:19:06 192.168.0.100 haproxy[31310]: 172.25.254.1:9514 [23/Jan/2026:15:19:06.320] webcluster webcluster/haha 0/0/0/1/1 200 273 - - ---- 1/1/0/0/0 0/0 "GET / HTTP/1.1"

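Jan 23 15:19:10 192.168.0.100 haproxy[31310]: 172.25.254.1:9519 [23/Jan/2026:15:19:10.095] webcluster webcluster/hehe 0/0/0/0/0 200 273 - - ---- 1/1/0/0/0 0/0 "GET / HTTP/1.1"

2.2.3 HAProxy multi-process mode

bash

# By default HAProxy runs a single worker process (nbproc was deprecated in HAProxy 2.3 and removed in 2.5, so these steps apply to older versions)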

[root@haproxy ~]# pstree -p | grep haproxy

|-haproxy(31439)---haproxy(31441)-+-{haproxy}(31442)

[root@haproxy ~]# vim /etc/haproxy/haproxy.cfg

global

log 127.0.0.1 local2

chroot /var/lib/haproxy

pidfile /var/run/haproxy.pid

maxconn 100000

user haproxy

group haproxy

daemon

nbproc 2 # add it here, in the global section

[root@haproxy ~]# systemctl restart haproxy.service

#验证

[root@haproxy ~]# pstree -p | grep haproxy

|-haproxy(31549)-+-haproxy(31551)

| `-haproxy(31552)

# Pin each process to a CPU core

[root@haproxy ~]# vim /etc/haproxy/haproxy.cfg

nbproc 2

cpu-map 1 0 # process 1 runs on CPU core 0

cpu-map 2 1 # process 2 runs on CPU core 1

[root@haproxy ~]# systemctl restart haproxy.service

# Give each process its own stats socket

[root@haproxy ~]# systemctl stop haproxy.service

[root@haproxy ~]# rm -fr /var/lib/haproxy/stats

[root@haproxy ~]# vim /etc/haproxy/haproxy.cfg

#stats socket /var/lib/haproxy/stats

stats socket /var/lib/haproxy/haproxy1 mode 600 level admin process 1

stats socket /var/lib/haproxy/haporxy2 mode 600 level admin process 2

[root@haproxy ~]# systemctl restart haproxy.service

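# Result

[root@haproxy ~]# ll /var/lib/haproxy/

total 0

srw------- 1 root root 0 Jan 23 15:41 haporxy2

srw------- 1 root root 0 Jan 23 15:41 haproxy1

Note: multi-threading and multi-process mode cannot be enabled together; in multi-process mode each worker process runs only one thread.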

2.2.4 HAProxy multi-threading

bash

# Show the current haproxy processes

[root@haproxy ~]# pstree -p | grep haproxy

|-haproxy(31742)-+-haproxy(31744)

| `-haproxy(31745)

# Show the thread count of a haproxy child process

[root@haproxy ~]# cat /proc/31744/status | grep Threads

Threads: 1

# Enable multi-threading (comment out nbproc and cpu-map, and go back to a single stats socket)

[root@haproxy ~]# vim /etc/haproxy/haproxy.cfg

#nbproc 2

#cpu-map 1 0

#cpu-map 2 1

nbthread 2

# turn on stats unix socket

stats socket /var/lib/haproxy/stats

#stats socket /var/lib/haproxy/haproxy1 mode 600 level admin process 1

#stats socket /var/lib/haproxy/haporxy2 mode 660 level admin process 1

[root@haproxy ~]# systemctl restart haproxy.service

# Result

[root@haproxy ~]# pstree -p | grep haproxy

|-haproxy(31858)---haproxy(31860)---{haproxy}(31861)

[root@haproxy ~]# cat /proc/31860/status | grep Threads

Threads: 2

2.2.5 Client IP pass-through

bash

# Enable client IP pass-through (X-Forwarded-For)

[root@haproxy ~]# vim /etc/haproxy/haproxy.cfg

... (omitted) ...

defaults

mode http

log global

option httplog

option dontlognull

option http-server-close

option forwardfor except 127.0.0.0/8 # add an X-Forwarded-For header carrying the client IP

option redispatch

retries 3

timeout http-request 10s

timeout queue 1m

timeout connect 10s

timeout client 1m

timeout server 1m

timeout http-keep-alive 10s

timeout check 10s

maxconn 3000

# On the RS, log the forwarded client IP: add \"%{X-Forwarded-For}i\" to the combined LogFormat

[root@webserver2 ~]# vim /etc/httpd/conf/httpd.conf

LogFormat "%h %l %u %t \"%r\" %>s %b \"%{X-Forwarded-For}i\" \"%{Referer}i\" \"%{User-Agent}i\"" combined

[root@webserver2 ~]# systemctl restart httpd
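With `%{X-Forwarded-For}i` as the fourth double-quoted field of the log format above, the real client addresses can be tallied from access_log. The awk-based function below is a sketch that assumes exactly that LogFormat. It splits on double quotes, so a different format would need a different field number. The sample lines are taken from this lab's access_log output, and the helper name `xff_count` is hypothetical.

```bash
#!/usr/bin/env bash
# Count requests per real client IP, taken from the X-Forwarded-For field.
# Assumes the LogFormat above: splitting on '"' makes the XFF value field 4.
xff_count() {
  awk -F'"' '{ n[$4]++ } END { for (ip in n) print ip, n[ip] }'
}

xff_count <<'EOF'
192.168.0.100 - - [26/Jan/2026:10:10:29 +0800] "GET / HTTP/1.1" 200 26 "172.25.254.1" "-" "curl/7.65.0"
192.168.0.100 - - [26/Jan/2026:10:10:30 +0800] "GET / HTTP/1.1" 200 26 "172.25.254.1" "-" "curl/7.65.0"
EOF
# On the RS: xff_count < /etc/httpd/logs/access_log
```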

# Check the result

[root@webserver2 ~]# cat /etc/httpd/logs/access_log

192.168.0.100 - - [26/Jan/2026:10:10:29 +0800] "GET / HTTP/1.1" 200 26 "172.25.254.1" "-" "curl/7.65.0"

192.168.0.100 - - [26/Jan/2026:10:10:30 +0800] "GET / HTTP/1.1" 200 26 "172.25.254.1" "-" "curl/7.65.0"

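192.168.0.100 - - [26/Jan/2026:10:10:30 +0800] "GET / HTTP/1.1" 200 26 "172.25.254.1" "-" "curl/7.65.0"

2.2.6 socat: a hot-update tool

Hot update

Hot update means changing software or a service while it keeps running, with no downtime. A familiar hardware analogy is USB: devices can be plugged in and unplugged while the computer keeps working, which is why they are called hot-plug devices.

bash

# Install socat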

[root@haproxy ~]# dnf install socat -y

[root@haproxy ~]# socat -h

# Grant admin level on the stats socket

[root@haproxy ~]# vim /etc/haproxy/haproxy.cfg

stats socket /var/lib/haproxy/stats mode 600 level admin

[root@haproxy ~]# rm -rf /var/lib/haproxy/*

[root@haproxy ~]# systemctl restart haproxy.service

[root@haproxy ~]# ll /var/lib/haproxy/

total 0

srw------- 1 root root 0 Jan 25 10:04 stats

[root@haproxy ~]# echo "show servers state" | socat stdio /var/lib/haproxy/stats

1

# be_id be_name srv_id srv_name srv_addr srv_op_state srv_admin_state srv_uweight srv_iweight srv_time_since_last_change srv_check_status srv_check_result srv_check_health srv_check_state srv_agent_state bk_f_forced_id srv_f_forced_id srv_fqdn srv_port srvrecord srv_use_ssl srv_check_port srv_check_addr srv_agent_addr srv_agent_port

2 webcluster 1 haha 192.168.0.10 2 0 1 1 275 6 3 7 6 0 0 0 - 80 - 0 0 - - 0

2 webcluster 2 hehe 192.168.0.20 2 0 1 1 275 6 3 7 6 0 0 0 - 80 - 0 0 - - 0
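The `show servers state` output is positional, and the header line names the columns. A small awk filter can pick out the fields most often needed during hot updates; the name `srv_summary` is hypothetical. It assumes the column order printed above: be_name = 2, srv_name = 4, srv_addr = 5, srv_op_state = 6, srv_uweight = 8. In HAProxy's server-state format an op_state of 2 should correspond to a running server.

```bash
#!/usr/bin/env bash
# Summarize "show servers state" output: backend/server, address, weight, op state.
# Skips the format-version line ("1") and the "#" header line.
srv_summary() {
  awk 'NF > 8 && $1 !~ /^#/ { printf "%s/%s %s weight=%s op_state=%s\n", $2, $4, $5, $8, $6 }'
}

srv_summary <<'EOF'
1
# be_id be_name srv_id srv_name srv_addr srv_op_state srv_admin_state srv_uweight srv_iweight srv_time_since_last_change srv_check_status srv_check_result srv_check_health srv_check_state srv_agent_state bk_f_forced_id srv_f_forced_id srv_fqdn srv_port srvrecord srv_use_ssl srv_check_port srv_check_addr srv_agent_addr srv_agent_port
2 webcluster 1 haha 192.168.0.10 2 0 1 1 275 6 3 7 6 0 0 0 - 80 - 0 0 - - 0
2 webcluster 2 hehe 192.168.0.20 2 0 1 1 275 6 3 7 6 0 0 0 - 80 - 0 0 - - 0
EOF
# Live: echo "show servers state" | socat stdio /var/lib/haproxy/stats | srv_summary
```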

[root@haproxy ~]# echo "get weight webcluster/hehe" | socat stdio /var/lib/haproxy/stats

1 (initial 1)

[root@haproxy ~]# echo "set weight webcluster/hehe 4 " | socat stdio /var/lib/haproxy/stats

[root@haproxy ~]# echo "get weight webcluster/hehe" | socat stdio /var/lib/haproxy/stats

4 (initial 1)

# Test

[Administrator.DESKTOP-VJ307M3] ➤ for i in {1..10}; do curl 172.25.254.100; done

webserver2 - 192.168.0.20

webserver2 - 192.168.0.20

webserver1 - 192.168.0.10

webserver2 - 192.168.0.20

webserver2 - 192.168.0.20

webserver2 - 192.168.0.20

webserver2 - 192.168.0.20

webserver1 - 192.168.0.10

webserver2 - 192.168.0.20

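webserver2 - 192.168.0.20

2.2.7 Static algorithms

1. static-rr

Weighted round-robin scheduling.

Weights cannot be adjusted at runtime with socat: only 0% and 100% (off or full weight) are accepted, no other values.

Backend server slow start is not supported.

There is no limit on the number of backend servers; it is equivalent to wrr in LVS.

Slow start means that a server that has just come up is not immediately given its full share of traffic. It receives part of the load first, and more once it has proven healthy.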

bash

[root@haproxy ~]# vim /etc/haproxy/haproxy.cfg

listen webcluster

bind *:80

balance static-rr

server haha 192.168.0.10:80 check inter 3s fall 3 rise 5 weight 2

server hehe 192.168.0.20:80 check inter 3s fall 3 rise 5 weight 1

[root@haproxy ~]# systemctl restart haproxy.service

# Test

[Administrator.DESKTOP-VJ307M3] ➤ for i in {1..10}; do curl 172.25.254.100; done

webserver1 - 192.168.0.10

webserver1 - 192.168.0.10

webserver2 - 192.168.0.20

webserver1 - 192.168.0.10

webserver1 - 192.168.0.10

webserver2 - 192.168.0.20

webserver1 - 192.168.0.10

webserver1 - 192.168.0.10

webserver2 - 192.168.0.20

webserver1 - 192.168.0.10

# Check whether hot update is supported

[root@haproxy ~]# echo "get weight webcluster/haha" | socat stdio /var/lib/haproxy/stats

2 (initial 2)

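[root@haproxy ~]# echo "set weight webcluster/haha 1 " | socat stdio /var/lib/haproxy/stats

Backend is using a static LB algorithm and only accepts weights '0%' and '100%'

2. first

Servers are used top-down, in the order they appear in the list.

A new request goes to the next server only once the first server has reached its connection limit (maxconn).

Server weights are ignored.

Dynamic weight changes through socat are effectively unsupported: 0 and 1 can be set, and other values are accepted but have no effect.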
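The selection rule of `first` can be sketched as follows: walk the servers in configuration order and take the first one whose current connection count is below its maxconn. The bash function below is hypothetical (`first_pick`, input `name:current:maxconn`, where maxconn 0 means no limit). Like the algorithm, it ignores weights. It does not model the queue HAProxy uses when every server is full.

```bash
#!/usr/bin/env bash
# Sketch of HAProxy "first": the first server (in id/position order) with a free slot wins.
# Input (hypothetical): name:current_conns:maxconn   (maxconn 0 = no limit)
first_pick() {
  local item name cur max
  for item in "$@"; do
    IFS=: read -r name cur max <<< "$item"
    if (( max == 0 || cur < max )); then
      echo "$name"; return 0
    fi
  done
  echo "all servers full (request would be queued)"; return 1
}

first_pick haha:0:1 hehe:0:0   # haha has a free slot -> haha
first_pick haha:1:1 hehe:3:0   # haha is at maxconn 1 -> hehe
```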

bash

[root@haproxy ~]# vim /etc/haproxy/haproxy.cfg

listen webcluster

bind *:80

balance first

server haha 192.168.0.10:80 maxconn 1 check inter 3s fall 3 rise 5 weight 2

server hehe 192.168.0.20:80 check inter 3s fall 3 rise 5 weight 1

[root@haproxy ~]# systemctl restart haproxy.service

# Test: send requests continuously from one shell

[Administrator.DESKTOP-VJ307M3] ➤ while true; do curl 172.25.254.100; done

webserver2 - 192.168.0.20

webserver2 - 192.168.0.20

webserver2 - 192.168.0.20

webserver2 - 192.168.0.20

webserver2 - 192.168.0.20

webserver2 - 192.168.0.20

webserver2 - 192.168.0.20

webserver2 - 192.168.0.20

webserver2 - 192.168.0.20

webserver2 - 192.168.0.20

webserver2 - 192.168.0.20

webserver2 - 192.168.0.20

webserver2 - 192.168.0.20

webserver2 - 192.168.0.20

webserver2 - 192.168.0.20

webserver2 - 192.168.0.20

webserver2 - 192.168.0.20

.... .....

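# Afterwards, swap the order of the two server lines and observe again

2.2.8 Dynamic algorithms

1. roundrobin

A weighted, dynamic round-robin algorithm:

- Unlike rr in LVS, it takes weights into account, and real server weights can be adjusted at runtime.

- HAProxy's roundrobin supports slow start (a newly added server gradually receives more traffic).

- Each backend supports at most 4095 active real servers.

- roundrobin is the default algorithm and is widely used.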

bash

[root@haproxy ~]# vim /etc/haproxy/haproxy.cfg

listen webcluster

bind *:80

balance roundrobin

server haha 192.168.0.10:80 check inter 3s fall 3 rise 5 weight 2

server hehe 192.168.0.20:80 check inter 3s fall 3 rise 5 weight 1

[root@haproxy ~]# systemctl restart haproxy.service

# Test

[Administrator.DESKTOP-VJ307M3] ➤ for i in {1..10}; do curl 172.25.254.100; done

webserver1 - 192.168.0.10

webserver1 - 192.168.0.10

webserver2 - 192.168.0.20

webserver1 - 192.168.0.10

webserver1 - 192.168.0.10

webserver2 - 192.168.0.20

webserver1 - 192.168.0.10

webserver1 - 192.168.0.10

webserver2 - 192.168.0.20

webserver1 - 192.168.0.10

# Change the weight at runtime

[root@haproxy ~]# echo "get weight webcluster/haha" | socat stdio /var/lib/haproxy/stats

2 (initial 2)

[root@haproxy ~]# echo "set weight webcluster/haha 1 " | socat stdio /var/lib/haproxy/stats

[root@haproxy ~]# echo "get weight webcluster/haha" | socat stdio /var/lib/haproxy/stats

1 (initial 2)

# Result

[Administrator.DESKTOP-VJ307M3] ➤ for i in {1..10}; do curl 172.25.254.100; done

webserver2 - 192.168.0.20

webserver1 - 192.168.0.10

webserver2 - 192.168.0.20

webserver1 - 192.168.0.10

webserver2 - 192.168.0.20

webserver1 - 192.168.0.10

webserver2 - 192.168.0.20

webserver1 - 192.168.0.10

webserver2 - 192.168.0.20

webserver1 - 192.168.0.10

2.leastconn

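leastconn: weighted least connections, a dynamic algorithm.

It supports runtime weight adjustment and slow start. New client connections are dispatched preferentially to the backend server with the fewest current connections (scaled by its weight), rather than by weight alone.

It is best suited to long-lived connections, such as MySQL.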
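The weighted least-connections choice can be sketched as follows. Assumption: a server's load is its active connections divided by its weight. HAProxy's internal formula may differ in detail. `lc_pick` and its NAME:CONNECTIONS:WEIGHT arguments are hypothetical.

```shell
# Hypothetical helper: picks the server with the lowest CONNS/WEIGHT.
# Ratios are compared by cross-multiplication to stay in integer arithmetic.
lc_pick() {
  best=""; best_c=0; best_w=1
  for spec in "$@"; do
    name=${spec%%:*}
    rest=${spec#*:}
    c=${rest%%:*}
    w=${rest#*:}
    [ "$w" -gt 0 ] || continue   # weight 0: the server takes no new traffic
    # c/w < best_c/best_w  <=>  c*best_w < best_c*w
    if [ -z "$best" ] || [ $((c * best_w)) -lt $((best_c * w)) ]; then
      best=$name; best_c=$c; best_w=$w
    fi
  done
  [ -n "$best" ] && echo "$best"
}

lc_pick haha:3:2 hehe:1:1   # haha 3/2 = 1.5 vs hehe 1/1 = 1, so hehe wins
```

In this sketch, ties go to the first server. HAProxy instead rotates among equally loaded servers. That rotation is consistent with the 1:1 alternation in the test below: each sequential curl request finishes before the next one starts, so both servers always show zero connections.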

bash

[root@haproxy ~]# vim /etc/haproxy/haproxy.cfg

listen webcluster

bind *:80

balance leastconn

server haha 192.168.0.10:80 check inter 3s fall 3 rise 5 weight 2

server hehe 192.168.0.20:80 check inter 3s fall 3 rise 5 weight 1

[root@haproxy ~]# systemctl restart haproxy.service

[Administrator.DESKTOP-VJ307M3] ➤ for i in {1..10}; do curl 172.25.254.100; done

webserver1 - 192.168.0.10

webserver2 - 192.168.0.20

webserver1 - 192.168.0.10

webserver2 - 192.168.0.20

webserver1 - 192.168.0.10

webserver2 - 192.168.0.20

webserver1 - 192.168.0.10

webserver2 - 192.168.0.20

webserver1 - 192.168.0.10

webserver2 - 192.168.0.20

2.2.9 Hybrid algorithms

1.source

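Source-address hashing: the client's source IP address is hashed and the request is forwarded to a backend server. Later requests from the same source address are forwarded to the same backend web server.

When the number of backend servers changes, many users' requests are forwarded to a different backend server. The algorithm is static (map-based) by default, but this can be changed with the options supported by hash-type.

This algorithm is generally used in TCP mode, where no cookie can be inserted. It also provides the best session stickiness for clients that refuse session cookies, and it suits scenarios that need session persistence but cannot use cookies or caching.

There are two ways to compute the target server from the source address: modulo (map-based) and consistent hashing.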
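The modulo (map-based) method can be sketched as follows. Assumptions: `cksum` (CRC-32) stands in for HAProxy's own hash function, each server occupies WEIGHT consecutive slots, and `src_pick` is a hypothetical helper. The loop at the end shows why adding a server moves many clients.

```shell
# Hypothetical helper: map-based source hashing, hash(ip) mod total weight.
# Arguments after the IP are NAME:WEIGHT in config-file order.
src_pick() {
  ip=$1; shift
  total=0
  for spec in "$@"; do total=$((total + ${spec#*:})); done
  [ "$total" -gt 0 ] || return 1
  hv=$(printf '%s' "$ip" | cksum | cut -d' ' -f1)   # stand-in hash
  slot=$((hv % total))
  # Walk the slots: a server with weight W owns W consecutive slots
  for spec in "$@"; do
    w=${spec#*:}
    if [ "$slot" -lt "$w" ]; then echo "${spec%%:*}"; return 0; fi
    slot=$((slot - w))
  done
}

# Same IP, same server list -> same server, every time
src_pick 172.25.254.1 haha:2 hehe:1

# Adding one server changes the modulus, so many clients move
moved=0
for n in 1 2 3 4 5 6 7 8 9 10; do
  a=$(src_pick "172.25.254.$n" haha:2 hehe:1)
  b=$(src_pick "172.25.254.$n" haha:2 hehe:1 new:1)
  [ "$a" = "$b" ] || moved=$((moved + 1))
done
echo "$moved of 10 clients changed server"
```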

bash

# Default: static (map-based) hashing

[root@haproxy ~]# vim /etc/haproxy/haproxy.cfg

listen webcluster

bind *:80

balance source

server haha 192.168.0.10:80 check inter 3s fall 3 rise 5 weight 2

server hehe 192.168.0.20:80 check inter 3s fall 3 rise 5 weight 1

[root@haproxy ~]# systemctl restart haproxy.service

# Test:

[Administrator.DESKTOP-VJ307M3] ➤ for i in {1..10}; do curl 172.25.254.100; done

webserver2 - 192.168.0.20

webserver2 - 192.168.0.20

webserver2 - 192.168.0.20

webserver2 - 192.168.0.20

webserver2 - 192.168.0.20

webserver2 - 192.168.0.20

webserver2 - 192.168.0.20

webserver2 - 192.168.0.20

webserver2 - 192.168.0.20

webserver2 - 192.168.0.20

# source with dynamic (consistent) hashing

[root@haproxy ~]# vim /etc/haproxy/haproxy.cfg

listen webcluster

bind *:80

balance source

hash-type consistent

server haha 192.168.0.10:80 check inter 3s fall 3 rise 5 weight 2

server hehe 192.168.0.20:80 check inter 3s fall 3 rise 5 weight 1

[root@haproxy ~]# systemctl restart haproxy.service

# Test:

[Administrator.DESKTOP-VJ307M3] ➤ for i in {1..10}; do curl 172.25.254.100; done

webserver2 - 192.168.0.20

webserver2 - 192.168.0.20

webserver2 - 192.168.0.20

webserver2 - 192.168.0.20

webserver2 - 192.168.0.20

webserver2 - 192.168.0.20

webserver2 - 192.168.0.20

webserver2 - 192.168.0.20

webserver2 - 192.168.0.20

webserver2 - 192.168.0.20

2.uri

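Hashes either the left part of the request URI (the part before the question mark) or the whole URI, divides the hash by the total weight of the servers (with the default map-based hash-type), and forwards the request to the backend server selected by the result.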
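By default, `balance uri` hashes only the part of the URI before the question mark; HAProxy's `whole` option includes the query string. The hypothetical helper `uri_key` below shows which key that is.

```shell
# Hypothetical helper: prints the default "balance uri" hash key,
# i.e. the URI with everything from the first "?" removed.
uri_key() {
  printf '%s\n' "${1%%\?*}"
}

uri_key '/index2.html'           # -> /index2.html
uri_key '/index.html?name=lee'   # -> /index.html
```

As a result, `/index.html?name=lee` and `/index.html?name=redhat` hash to the same server under `balance uri`, while `/index1.html` and `/index2.html` can land on different servers.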

bash

# Prepare the test environment

[root@webserver1 ~]# echo RS1 - 192.168.0.10 > /var/www/html/index1.html

[root@webserver1 ~]# echo RS1 - 192.168.0.10 > /var/www/html/index2.html

[root@webserver2 ~]# echo RS2 - 192.168.0.20 > /var/www/html/index1.html

[root@webserver2 ~]# echo RS2 - 192.168.0.20 > /var/www/html/index2.html

# Configure the uri algorithm

[root@haproxy ~]# vim /etc/haproxy/haproxy.cfg

listen webcluster

bind *:80

balance uri

hash-type consistent

server haha 192.168.0.10:80 check inter 3s fall 3 rise 5 weight 2

server hehe 192.168.0.20:80 check inter 3s fall 3 rise 5 weight 1

[root@haproxy ~]# systemctl restart haproxy.service

# Test:

[Administrator.DESKTOP-VJ307M3] ➤ for i in {1..10}; do curl 172.25.254.100/index.html; done

webserver1 - 192.168.0.10

webserver1 - 192.168.0.10

webserver1 - 192.168.0.10

webserver1 - 192.168.0.10

webserver1 - 192.168.0.10

webserver1 - 192.168.0.10

webserver1 - 192.168.0.10

webserver1 - 192.168.0.10

webserver1 - 192.168.0.10

webserver1 - 192.168.0.10

[Administrator.DESKTOP-VJ307M3] ➤ for i in {1..10}; do curl 172.25.254.100/index2.html; done

RS2 - 172.168.0.20

RS2 - 172.168.0.20

RS2 - 172.168.0.20

RS2 - 172.168.0.20

RS2 - 172.168.0.20

RS2 - 172.168.0.20

RS2 - 172.168.0.20

RS2 - 172.168.0.20

RS2 - 172.168.0.20

RS2 - 172.168.0.20

3.url_param

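url_param hashes the value of one key in the parameter (query string) part of the request URL, divides the result by the total server weight, and dispatches the request to the selected server. Searches for the same data are therefore scheduled to the same server. It is commonly used in e-commerce to track users, ensuring that requests from the same user are always sent to the same real server.

If the key is not present, the roundrobin algorithm is used.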
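The parameter lookup can be sketched as below. An empty result corresponds to the missing-key case that falls back to round-robin. `url_param_value` is a hypothetical helper, not part of HAProxy.

```shell
# Hypothetical helper: prints the value of query parameter NAME in URI,
# or nothing when the URI has no query string or no such key.
url_param_value() {
  name=$1
  query=${2#*\?}
  [ "$query" = "$2" ] && return 0   # no "?" in the URI at all
  # Split the query string on "&" and print the first NAME=VALUE match
  printf '%s\n' "$query" | tr '&' '\n' | while IFS= read -r pair; do
    case $pair in
      "$name="*) printf '%s\n' "${pair#*=}"; break ;;
    esac
  done
}

url_param_value name '/index.html?name=lee'
url_param_value name '/index.html?a=1&name=redhat&b=2'
```

In the test below, `name=lee` and `name=redhat` are two different keys. They can therefore map to different servers, while each key stays on its own server.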

bash

# Prepare the test environment

[root@webserver1 ~]# echo RS1 - 192.168.0.10 > /var/www/html/index1.html

[root@webserver1 ~]# echo RS1 - 192.168.0.10 > /var/www/html/index2.html

[root@webserver2 ~]# echo RS2 - 192.168.0.20 > /var/www/html/index1.html

[root@webserver2 ~]# echo RS2 - 192.168.0.20 > /var/www/html/index2.html

# Configure the url_param algorithm

[root@haproxy ~]# vim /etc/haproxy/haproxy.cfg

listen webcluster

bind *:80

balance url_param name

hash-type consistent

server haha 192.168.0.10:80 check inter 3s fall 3 rise 5 weight 2

server hehe 192.168.0.20:80 check inter 3s fall 3 rise 5 weight 1

[root@haproxy ~]# systemctl restart haproxy.service

# Test:

[Administrator.DESKTOP-VJ307M3] ➤ for i in {1..10}; do curl 172.25.254.100/index.html?name=lee; done

webserver2 - 192.168.0.20

webserver2 - 192.168.0.20

webserver2 - 192.168.0.20

webserver2 - 192.168.0.20

webserver2 - 192.168.0.20

webserver2 - 192.168.0.20

webserver2 - 192.168.0.20

webserver2 - 192.168.0.20

webserver2 - 192.168.0.20

webserver2 - 192.168.0.20

[Administrator.DESKTOP-VJ307M3] ➤ for i in {1..10}; do curl 172.25.254.100/index.html?name=redhat; done

webserver1 - 192.168.0.10

webserver1 - 192.168.0.10

webserver1 - 192.168.0.10

webserver1 - 192.168.0.10

webserver1 - 192.168.0.10

webserver1 - 192.168.0.10

webserver1 - 192.168.0.10

webserver1 - 192.168.0.10

webserver1 - 192.168.0.10

webserver1 - 192.168.0.10

4.hdr

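Hashes a specified HTTP request header. The header named in hdr(<name>) (User-Agent here) is extracted and hashed, the result is taken modulo the total server weight, and the request is dispatched to the selected server. If the header has no valid value, the default round-robin scheduling is used.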
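The `hdr(User-Agent)` configuration below uses `hash-type consistent`. A minimal consistent-hashing ring shows the property that matters: removing a server only moves the keys that server owned. This is a sketch under stated assumptions: `cksum` replaces HAProxy's hash function, each weight unit gets 4 points on the ring (an arbitrary choice), and `ring_hash` and `ring_pick` are hypothetical helpers.

```shell
# Stand-in hash (CRC-32 via POSIX cksum); HAProxy uses its own function.
ring_hash() { printf '%s' "$1" | cksum | cut -d' ' -f1; }

# Hypothetical helper: owner of KEY on a ring built from NAME:WEIGHT args.
ring_pick() {
  key=$1; shift
  kh=$(ring_hash "$key")
  best=""; best_h=""; first=""; first_h=""
  for spec in "$@"; do
    name=${spec%%:*}; w=${spec#*:}
    i=0
    while [ "$i" -lt $((w * 4)) ]; do   # 4 ring points per weight unit
      ph=$(ring_hash "$name#$i")
      # Lowest point overall, used when the key hashes past the last point
      if [ -z "$first_h" ] || [ "$ph" -lt "$first_h" ]; then
        first_h=$ph; first=$name
      fi
      # Lowest point at or after the key's position owns the key
      if [ "$ph" -ge "$kh" ] && { [ -z "$best_h" ] || [ "$ph" -lt "$best_h" ]; }; then
        best_h=$ph; best=$name
      fi
      i=$((i + 1))
    done
  done
  if [ -n "$best" ]; then echo "$best"; else echo "$first"; fi
}

ring_pick lee haha:2 hehe:1
ring_pick timing haha:2 hehe:1
```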

bash

[root@haproxy ~]# vim /etc/haproxy/haproxy.cfg

listen webcluster

bind *:80

balance hdr(User-Agent)

hash-type consistent

server haha 192.168.0.10:80 check inter 3s fall 3 rise 5 weight 2

server hehe 192.168.0.20:80 check inter 3s fall 3 rise 5 weight 1

[root@haproxy ~]# systemctl restart haproxy.service

# Test:

[Administrator.DESKTOP-VJ307M3] ➤ curl -A "lee" 172.25.254.100

webserver2 - 192.168.0.20

[Administrator.DESKTOP-VJ307M3] ➤ curl -A "lee" 172.25.254.100

webserver2 - 192.168.0.20

[Administrator.DESKTOP-VJ307M3] ➤ curl -A "timinglee" 172.25.254.100

webserver2 - 192.168.0.20

[Administrator.DESKTOP-VJ307M3] ➤ curl -A "timing" 172.25.254.100

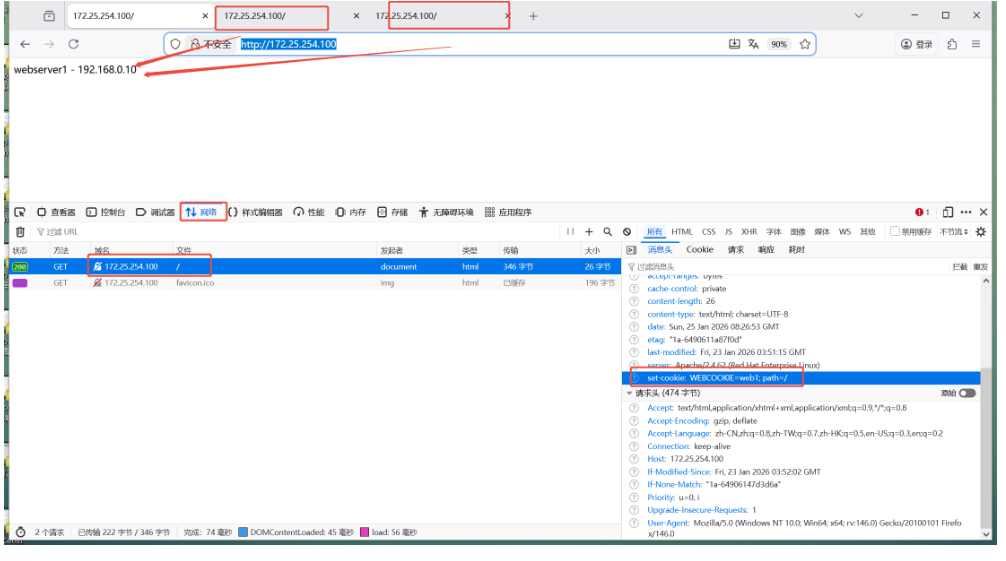

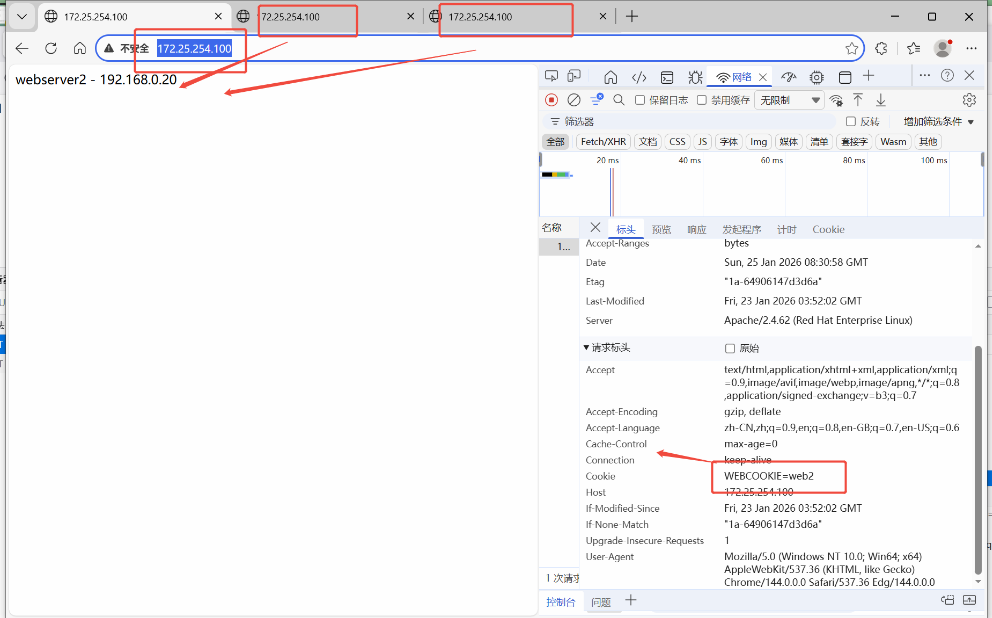

webserver1 - 192.168.0.10

2.2.10 Cookie-based session persistence

Keeps requests from the same browser on the same client going to the same server.
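The routing decision made with `cookie WEBCOOKIE insert nocache indirect` can be sketched as follows. A WEBCOOKIE value of web1 or web2 pins the client to that server. A missing or unknown value falls back to the balance algorithm, after which HAProxy inserts the cookie into the response. `cookie_route` is a hypothetical helper that takes the raw Cookie request header.

```shell
# Hypothetical helper: maps a Cookie request header to a backend,
# using the cookie values web1/web2 from the server lines below.
cookie_route() {
  # Split "a=1; WEBCOOKIE=web2" on ";" and keep the last WEBCOOKIE value
  val=$(printf '%s\n' "$1" | tr ';' '\n' | sed -n 's/^ *WEBCOOKIE=//p' | tail -n 1)
  case $val in
    web1) echo "haha 192.168.0.10:80" ;;
    web2) echo "hehe 192.168.0.20:80" ;;
    *)    echo "roundrobin" ;;   # no valid cookie: use the balance algorithm
  esac
}

cookie_route 'WEBCOOKIE=web1'
cookie_route 'lang=en; WEBCOOKIE=web2'
cookie_route ''
```

Each browser keeps its own cookie jar, which is why two different browsers on the same machine can stick to different servers.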

bash

# Configure cookie-based session persistence

[root@haproxy ~]# vim /etc/haproxy/haproxy.cfg

listen webcluster

bind *:80

balance roundrobin

hash-type consistent

cookie WEBCOOKIE insert nocache indirect

server haha 192.168.0.10:80 cookie web1 check inter 3s fall 3 rise 5 weight 2

server hehe 192.168.0.20:80 cookie web2 check inter 3s fall 3 rise 5 weight 1

[root@haproxy ~]# systemctl restart haproxy.service

Firefox

Edge

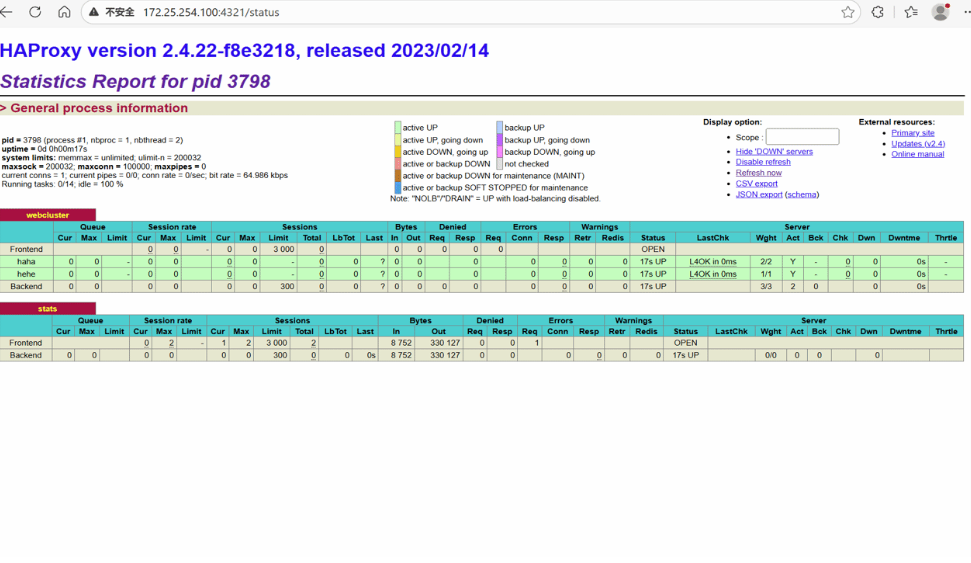

2.2.11 The HAProxy status page

bash

[root@haproxy ~]# vim /etc/haproxy/haproxy.cfg

listen stats

mode http

bind 0.0.0.0:4321

stats enable

log global

# stats refresh

stats uri /status

stats auth lee:lee

[root@haproxy ~]# systemctl restart haproxy.service

bash

# Enable automatic refresh (the page reloads every second)

[root@haproxy ~]# vim /etc/haproxy/haproxy.cfg

listen stats

mode http

bind 0.0.0.0:4321

stats enable

log global

stats refresh 1

stats uri /status

stats auth lee:lee

[root@haproxy ~]# systemctl restart haproxy.service
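Besides the web page, the same counters are available as CSV, either by appending `;csv` to the stats URI or with `show stat` on the runtime socket. The sketch below prints each server's status and locates columns by their header names, so it does not depend on their exact positions. `stat_status` is a hypothetical helper.

```shell
# Hypothetical helper: reads HAProxy CSV stats on stdin and prints
# "PROXY/SERVER STATUS" for every server row (FRONTEND/BACKEND rows skipped).
stat_status() {
  awk -F, '
    NR == 1 {
      sub(/^# */, "")                     # the header line starts with "# "
      for (i = 1; i <= NF; i++) col[$i] = i
      next
    }
    NF > 1 && $(col["svname"]) != "FRONTEND" && $(col["svname"]) != "BACKEND" {
      print $(col["pxname"]) "/" $(col["svname"]), $(col["status"])
    }'
}

# Possible inputs in this lab (not run here):
#   echo "show stat" | socat stdio /var/lib/haproxy/stats | stat_status
#   curl -s -u lee:lee 'http://172.25.254.100:4321/status;csv' | stat_status
```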

2.2.12 Layer-4 client IP pass-through

bash

# Environment setup

# Stop Apache on the RSs

[root@webserver1 ~]# systemctl disable --now httpd

[root@webserver2 ~]# systemctl disable --now httpd

# Deploy nginx

[root@webserver1 ~]# dnf install nginx -y

[root@webserver2 ~]# dnf install nginx -y

[root@webserver1 ~]# echo RS1 - 192.168.0.10 > /usr/share/nginx/html/index.html

[root@webserver2 ~]# echo RS2 - 192.168.0.20 > /usr/share/nginx/html/index.html

[root@webserver1 ~]# systemctl enable --now nginx

[root@webserver2 ~]# systemctl enable --now nginx

# Test the environment

[Administrator.DESKTOP-VJ307M3] ➤ for i in {1..5}; do curl 172.25.254.100; done

RS1 - 192.168.0.10

RS2 - 192.168.0.20

RS1 - 192.168.0.10

RS2 - 192.168.0.20

RS1 - 192.168.0.10

# Make nginx expect the PROXY protocol on port 80

[root@webserver1 ~]# vim /etc/nginx/nginx.conf

server {

listen 80 proxy_protocol; # expect a PROXY protocol header on every connection

listen [::]:80;

server_name _;

root /usr/share/nginx/html;

# Load configuration files for the default server block.

include /etc/nginx/default.d/*.conf;

error_page 404 /404.html;

location = /404.html {

}

[root@webserver2 ~]# vim /etc/nginx/nginx.conf

server {

listen 80 proxy_protocol; # expect a PROXY protocol header on every connection

listen [::]:80;

server_name _;

root /usr/share/nginx/html;

# Load configuration files for the default server block.

include /etc/nginx/default.d/*.conf;

error_page 404 /404.html;

location = /404.html {

}

[root@webserver1 ~]# systemctl restart nginx.service

[root@webserver2 ~]# systemctl restart nginx.service

# Test

[Administrator.DESKTOP-VJ307M3] ➤ for i in {1..5}; do curl 172.25.254.100; done

<html><body><h1>502 Bad Gateway</h1>

The server returned an invalid or incomplete response.

</body></html>

<html><body><h1>502 Bad Gateway</h1>

The server returned an invalid or incomplete response.

</body></html>

<html><body><h1>502 Bad Gateway</h1>

The server returned an invalid or incomplete response.

</body></html>

<html><body><h1>502 Bad Gateway</h1>

The server returned an invalid or incomplete response.

</body></html>

<html><body><h1>502 Bad Gateway</h1>

The server returned an invalid or incomplete response.

</body></html>

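These errors show that nginx now requires a PROXY protocol header on port 80. HAProxy is still sending plain HTTP, so nginx rejects the connections and HAProxy returns 502.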
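For reference, `send-proxy` makes HAProxy prepend one text line in PROXY protocol version 1 format before the client's bytes, and that line is the header nginx is now waiting for. The sketch below builds such a line. The function name `proxy_v1` and the example addresses and port are illustrative assumptions, not values captured from this lab.

```shell
# Hypothetical helper: prints a PROXY protocol v1 header line:
#   PROXY TCP4 <source ip> <destination ip> <source port> <destination port>\r\n
proxy_v1() {
  printf 'PROXY TCP4 %s %s %s %s\r\n' "$1" "$2" "$3" "$4"
}

# Client 172.25.254.1 (example port 51234) connecting to the VIP on port 80
proxy_v1 172.25.254.1 172.25.254.100 51234 80
```

Assuming `nc` is installed, nginx should accept a connection that sends this line followed by a plain HTTP request, for example `{ proxy_v1 172.25.254.1 172.25.254.100 51234 80; printf 'GET / HTTP/1.0\r\n\r\n'; } | nc 192.168.0.10 80`. That is a quick way to test the nginx side without HAProxy.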

# Switch HAProxy to layer-4 (TCP) mode and send the PROXY header (send-proxy)

[root@haproxy ~]# vim /etc/haproxy/haproxy.cfg

listen webcluster

bind *:80

mode tcp # layer-4 proxying

balance roundrobin

server haha 192.168.0.10:80 send-proxy check inter 3s fall 3 rise 5 weight 1

server hehe 192.168.0.20:80 send-proxy check inter 3s fall 3 rise 5 weight 1

[root@haproxy ~]# systemctl restart haproxy.service

# Test layer-4 access

[Administrator.DESKTOP-VJ307M3] ➤ for i in {1..5}; do curl 172.25.254.100; done

RS1 - 192.168.0.10

RS2 - 192.168.0.20

RS1 - 192.168.0.10

RS2 - 192.168.0.20

RS1 - 192.168.0.10

# Log the client IP passed through by the PROXY protocol

[root@webserver1&2 ~]# vim /etc/nginx/nginx.conf

log_format main '$remote_addr - $remote_user [$time_local] "$request" '

'"$proxy_protocol_addr"' # client IP taken from the PROXY header

'$status $body_bytes_sent "$http_referer" '

'"$http_user_agent" "$http_x_forwarded_for"';

[root@webserver1&2 ~]# systemctl restart nginx.service

# Test

[Administrator.DESKTOP-VJ307M3] ➤ for i in {1..5}; do curl 172.25.254.100; done

RS2 - 192.168.0.20

RS1 - 192.168.0.10

RS2 - 192.168.0.20

RS1 - 192.168.0.10

RS2 - 192.168.0.20

[root@webserver1 ~]# cat /var/log/nginx/access.log

192.168.0.100 - - [26/Jan/2026:10:52:40 +0800] "GET / HTTP/1.1" "172.25.254.1"200 19 "-" "curl/7.65.0" "-"

192.168.0.100 - - [26/Jan/2026:10:53:49 +0800] "GET / HTTP/1.1" "172.25.254.1"200 19 "-" "curl/7.65.0" "-"

192.168.0.100 - - [26/Jan/2026:10:53:50 +0800] "GET / HTTP/1.1" "172.25.254.1"200 19 "-" "curl/7.65.0" "-"

192.168.0.100 - - [26/Jan/2026:10:53:50 +0800] "GET / HTTP/1.1" "172.25.254.1"200 19 "-" "curl/7.65.0" "-"

2.2.13 HAProxy layer-4 load balancing

RS

bash

# Deploy the MariaDB database

[root@webserver1+2 ~]# dnf install mariadb-server mariadb -y

[root@webserver1+2 ~]# vim /etc/my.cnf.d/mariadb-server.cnf

[mysqld]

server_id=10 # ID of this database host; set it to 20 on 192.168.0.20

datadir=/var/lib/mysql

socket=/var/lib/mysql/mysql.sock

log-error=/var/log/mariadb/mariadb.log

pid-file=/run/mariadb/mariadb.pid

# Create a remote-login user and grant privileges

[root@webserver1+2 ~]# mysql

Welcome to the MariaDB monitor. Commands end with ; or \g.

Your MariaDB connection id is 3

Server version: 10.5.27-MariaDB MariaDB Server

Copyright (c) 2000, 2018, Oracle, MariaDB Corporation Ab and others.

Type 'help;' or '\h' for help. Type '\c' to clear the current input statement.

MariaDB [(none)]> CREATE USER 'lee'@'%' identified by 'lee'; # create the user

Query OK, 0 rows affected (0.001 sec)

MariaDB [(none)]> GRANT ALL ON *.* TO 'lee'@'%'; # grant privileges

Query OK, 0 rows affected (0.000 sec)

# Test

[root@webserver2 ~]# mysql -ulee -plee -h 192.168.0.20 # to test 192.168.0.10, just change the IP

Welcome to the MariaDB monitor. Commands end with ; or \g.

Your MariaDB connection id is 4

Server version: 10.5.27-MariaDB MariaDB Server

Copyright (c) 2000, 2018, Oracle, MariaDB Corporation Ab and others.

Type 'help;' or '\h' for help. Type '\c' to clear the current input statement.

MariaDB [(none)]>

haproxy

bash

[root@haproxy ~]# vim /etc/haproxy/haproxy.cfg

listen mariadbcluster

bind *:6663

mode tcp # MySQL traffic requires TCP mode

balance roundrobin

server haha 192.168.0.10:3306 check inter 3s fall 3 rise 5 weight 1

server hehe 192.168.0.20:3306 check inter 3s fall 3 rise 5 weight 1

[root@haproxy ~]# systemctl restart haproxy.service

# Check the listening ports

[root@haproxy ~]# netstat -antlupe | grep haproxy

tcp 0 0 0.0.0.0:6663 0.0.0.0:* LISTEN 0 44430 2136/haproxy

tcp 0 0 0.0.0.0:80 0.0.0.0:* LISTEN 0 44429 2136/haproxy

tcp 0 0 0.0.0.0:4321 0.0.0.0:* LISTEN 0 44431 2136/haproxy

# Test:

[Administrator.DESKTOP-VJ307M3] ➤ mysql -ulee -plee -h172.25.254.100 -P 6663 # log in through HAProxy

Welcome to the MariaDB monitor. Commands end with ; or \g.

Your MariaDB connection id is 7

Server version: 10.5.27-MariaDB MariaDB Server

Copyright (c) 2000, 2017, Oracle, MariaDB Corporation Ab and others.

Type 'help;' or '\h' for help. Type '\c' to clear the current input statement.

MariaDB [(none)]> SELECT @@server_id; # which backend database answered?

+-------------+

| @@server_id |

+-------------+

| 20 |

+-------------+

1 row in set (0.00 sec)

MariaDB [(none)]> quit

Bye

[Administrator.DESKTOP-VJ307M3] ➤ mysql -ulee -plee -h172.25.254.100 -P 6663

Welcome to the MariaDB monitor. Commands end with ; or \g.

Your MariaDB connection id is 8

Server version: 10.5.27-MariaDB MariaDB Server

Copyright (c) 2000, 2017, Oracle, MariaDB Corporation Ab and others.

Type 'help;' or '\h' for help. Type '\c' to clear the current input statement.

MariaDB [(none)]> SELECT @@server_id; # which backend database answered?

+-------------+

| @@server_id |

+-------------+

| 10 |

+-------------+

1 row in set (0.00 sec)

MariaDB [(none)]>

2.2.14 The backup parameter

Marks a backend server as a backup: it serves requests only when all non-backup servers are down.
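How long the failover takes follows from the health-check parameters on each server line. The sketch below is a back-of-the-envelope estimate. It assumes each check runs every `inter` seconds and that `fastinter`/`downinter` are unset, so they default to `inter`. `check_window` is a hypothetical helper.

```shell
# Hypothetical helper: rough time for HAProxy to change a server's state.
# Ignores where in the check cycle the failure happens and "timeout check".
check_window() {
  inter=$1 fall=$2 rise=$3
  echo "down after ~$((inter * fall))s, back up after ~$((inter * rise))s"
}

check_window 3 3 5   # values from "check inter 3s fall 3 rise 5"
```

This explains why the first connection attempt after MariaDB is stopped on 192.168.0.10 can still fail. Until about three failed checks have been recorded, HAProxy keeps sending connections to the stopped server.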

bash

[root@haproxy ~]# vim /etc/haproxy/haproxy.cfg

listen mariadbcluster

bind *:6663

mode tcp

balance roundrobin

server haha 192.168.0.10:3306 check inter 3s fall 3 rise 5 weight 1

server hehe 192.168.0.20:3306 check inter 3s fall 3 rise 5 weight 1 backup

[root@haproxy ~]# systemctl restart haproxy.service

# Test

[Administrator.DESKTOP-VJ307M3] ➤ mysql -ulee -plee -h172.25.254.100 -P 6663

Welcome to the MariaDB monitor. Commands end with ; or \g.

Your MariaDB connection id is 4

Server version: 10.5.27-MariaDB MariaDB Server

Copyright (c) 2000, 2017, Oracle, MariaDB Corporation Ab and others.

Type 'help;' or '\h' for help. Type '\c' to clear the current input statement.

MariaDB [(none)]> SELECT @@server_id;

+-------------+

| @@server_id |

+-------------+

| 10 |

+-------------+

1 row in set (0.00 sec)

MariaDB [(none)]> quit

Bye

[Administrator.DESKTOP-VJ307M3] ➤ mysql -ulee -plee -h172.25.254.100 -P 6663

Welcome to the MariaDB monitor. Commands end with ; or \g.

Your MariaDB connection id is 4

Server version: 10.5.27-MariaDB MariaDB Server

Copyright (c) 2000, 2017, Oracle, MariaDB Corporation Ab and others.

Type 'help;' or '\h' for help. Type '\c' to clear the current input statement.

MariaDB [(none)]> SELECT @@server_id;

+-------------+

| @@server_id |

+-------------+

| 10 |

+-------------+

1 row in set (0.00 sec)

MariaDB [(none)]> quit

Bye

# Stop MariaDB on 192.168.0.10 and wait about a minute

[root@webserver1 ~]# systemctl stop mariadb

[Administrator.DESKTOP-VJ307M3] ➤ mysql -ulee -plee -h172.25.254.100 -P 6663

ERROR 2013 (HY000): Lost connection to MySQL server at 'reading initial communication packet', system error: 1 "Operation not permitted" # HAProxy has not finished failing over yet; wait and retry

[Administrator.DESKTOP-VJ307M3] ➤ mysql -ulee -plee -h172.25.254.100 -P 6663

Welcome to the MariaDB monitor. Commands end with ; or \g.

Your MariaDB connection id is 11

Server version: 10.5.27-MariaDB MariaDB Server

Copyright (c) 2000, 2017, Oracle, MariaDB Corporation Ab and others.

Type 'help;' or '\h' for help. Type '\c' to clear the current input statement.

MariaDB [(none)]> SELECT @@server_id;

+-------------+

| @@server_id |

+-------------+

| 20 |

+-------------+

1 row in set (0.00 sec)

MariaDB [(none)]>

# Restore the failed host and wait a moment

[root@webserver1 ~]# systemctl start mariadb

[Administrator.DESKTOP-VJ307M3] ➤ mysql -ulee -plee -h172.25.254.100 -P 6663

Welcome to the MariaDB monitor. Commands end with ; or \g.

Your MariaDB connection id is 4

Server version: 10.5.27-MariaDB MariaDB Server

Copyright (c) 2000, 2017, Oracle, MariaDB Corporation Ab and others.

Type 'help;' or '\h' for help. Type '\c' to clear the current input statement.

MariaDB [(none)]> SELECT @@server_id;

+-------------+

| @@server_id |

+-------------+

| 10 |

+-------------+

1 row in set (0.00 sec)

MariaDB [(none)]>

2.2.15 HAProxy custom error pages

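1. Configure a sorry server

If all normal servers go down, clients are directed to a designated host. This host, which is accessed temporarily while the business hosts are failing, is called a sorry server.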

bash

# Install Apache on a separate host (the HAProxy host can be used instead)

[root@haproxy ~]# dnf install httpd -y

[root@haproxy ~]# vim /etc/httpd/conf/httpd.conf

# around line 47: listen on 8080, because HAProxy already binds port 80 on this host
Listen 8080

[root@haproxy ~]# systemctl enable --now httpd

Created symlink /etc/systemd/system/multi-user.target.wants/httpd.service → /usr/lib/systemd/system/httpd.service.

[root@haproxy ~]# echo "错误" > /var/www/html/index.html

# Bring the sorry server online

[root@haproxy ~]# vim /etc/haproxy/haproxy.cfg

listen webcluster

bind *:80

mode tcp

balance roundrobin

server haha 192.168.0.10:80 check inter 3s fall 3 rise 5 weight 1

server hehe 192.168.0.20:80 check inter 3s fall 3 rise 5 weight 1

server wuwu 192.168.0.100:8080 backup # sorry server: used only when haha and hehe are both down

[root@haproxy ~]# systemctl restart haproxy.service

# Test

[Administrator.DESKTOP-VJ307M3] ➤ curl 172.25.254.100

webserver1 - 192.168.0.10

[Administrator.DESKTOP-VJ307M3] ➤ curl 172.25.254.100

webserver2 - 192.168.0.20

# Stop both normal business hosts

[root@webserver1+2 ~]# systemctl stop httpd

[Administrator.DESKTOP-VJ307M3] ➤ curl 172.25.254.100

错误

2. Custom error pages

When all hosts, including the sorry server, are down, HAProxy returns a default error page that depends on the error code. This page can be customized.
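An errorfile is a complete raw HTTP response: a status line, headers, an empty line, then the body. HAProxy's documentation recommends ending header lines with CRLF, which is easy to miss when typing the file in vim. The sketch below writes such a file with explicit `\r\n`. `write_errorfile` is a hypothetical helper, and the demo writes to a temporary file rather than /errorpage/html/503.http.

```shell
# Hypothetical helper: write_errorfile FILE CODE REASON BODY
# Writes a raw HTTP/1.0 response with CRLF-terminated header lines.
write_errorfile() {
  file=$1 code=$2 reason=$3 body=$4
  printf 'HTTP/1.0 %s %s\r\nCache-Control: no-cache\r\nConnection: close\r\nContent-Type: text/html;charset=UTF-8\r\n\r\n%s\n' \
    "$code" "$reason" "$body" > "$file"
}

tmp=$(mktemp)
write_errorfile "$tmp" 503 "Service Unavailable" '<html><body><h1>503</h1></body></html>'
head -n 1 "$tmp"   # the status line (ends in a CR)
rm -f "$tmp"
```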

bash

# Reproduce the default error page

[root@webserver1+2 ~]# systemctl stop httpd

[root@haproxy ~]# systemctl stop httpd

# All backend web services are down

[Administrator.DESKTOP-VJ307M3] ➤ curl 172.25.254.100

<html><body><h1>503 Service Unavailable</h1>

No server is available to handle this request.

</body></html>

[root@haproxy ~]# mkdir /errorpage/html/ -p

[root@haproxy ~]# vim /errorpage/html/503.http

HTTP/1.0 503 Service Unavailable

Cache-Control: no-cache

Connection: close

Content-Type: text/html;charset=UTF-8

<html><body><h1>什么动物生气最安静</h1>

大猩猩!!

</body></html>

[root@haproxy ~]# vim /etc/haproxy/haproxy.cfg

defaults

mode http

log global

option httplog

option dontlognull

option http-server-close

option forwardfor except 127.0.0.0/8

option redispatch

retries 3

timeout http-request 10s

timeout queue 1m

timeout connect 10s

timeout client 1m

timeout server 1m

timeout http-keep-alive 10s

timeout check 10s

maxconn 3000

errorfile 503 /errorpage/html/503.http # custom 503 error page

[root@haproxy ~]# systemctl restart haproxy.service

# Test

[Administrator.DESKTOP-VJ307M3] ➤ curl 172.25.254.100

<html><body><h1>什么动物生气最安静</h1>

大猩猩!!

</body></html>

3. Redirect errors to a specific website

bash

[root@haproxy ~]# vim /etc/haproxy/haproxy.cfg

defaults

mode http

log global

option httplog

option dontlognull

option http-server-close

option forwardfor except 127.0.0.0/8

option redispatch

retries 3

timeout http-request 10s

timeout queue 1m

timeout connect 10s

timeout client 1m

timeout server 1m

timeout http-keep-alive 10s

timeout check 10s

maxconn 3000

errorloc 503 http://www.baidu.com # answer 503 errors with a 302 redirect to this URL

[root@haproxy ~]# systemctl restart haproxy.service

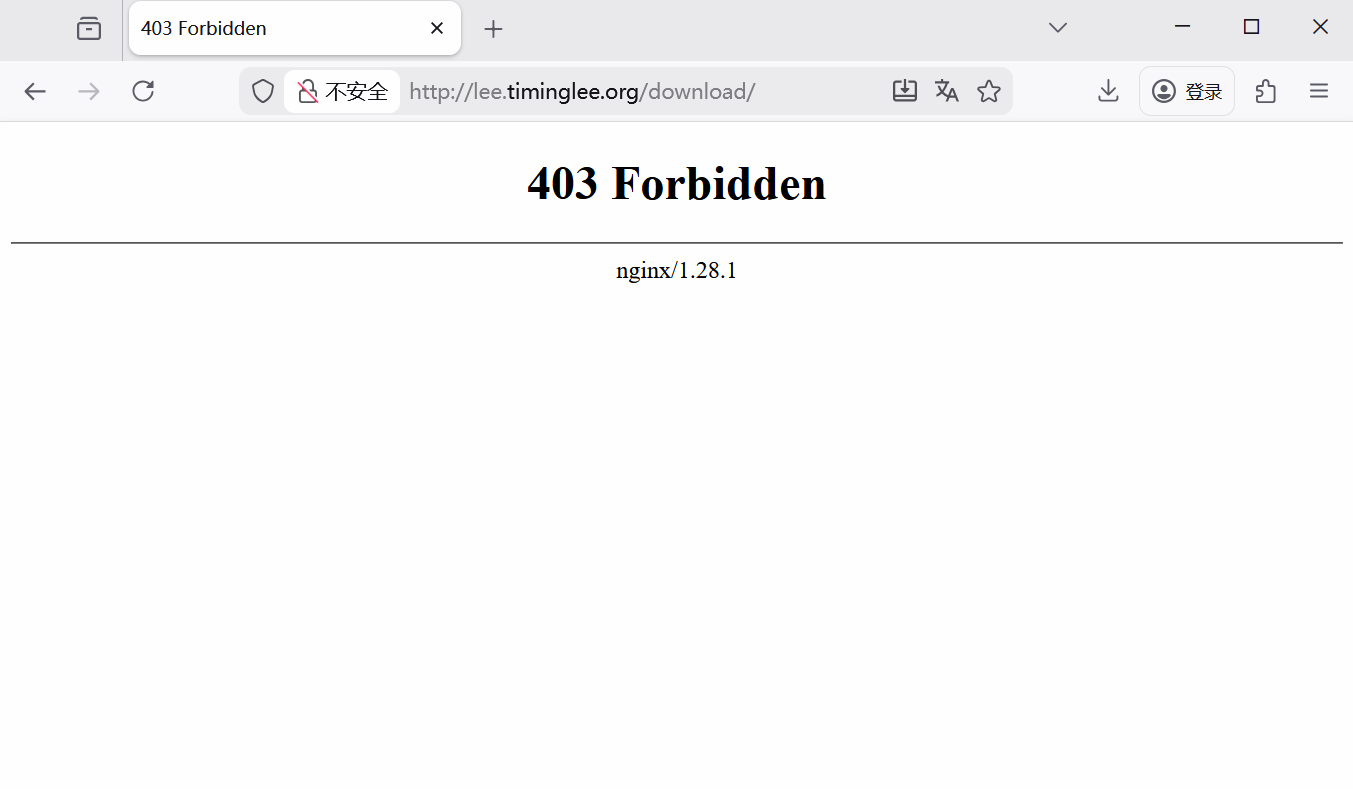

# Open the site in a browser

2.2.16 HAProxy ACL access control

1. Set up local name resolution

bash

# Set up local name resolution on the host that runs the browser or curl

# On Windows, add the same entry to the hosts file

# On Linux:

[Administrator.DESKTOP-VJ307M3] ➤ vim /etc/hosts

172.25.254.100 www.timinglee.org bbs.timinglee.org news.timinglee.org login.timinglee.org www.lee.org www.lee.com

# Test

ping bbs.timinglee.org

2. Basic ACL examples

bash

# Requests whose host name ends in .com go to 192.168.0.10; all others go to 192.168.0.20

[root@haproxy ~]# vim /etc/haproxy/haproxy.cfg

frontend webcluster

bind *:80

mode http

acl test hdr_end(host) -i .com # define the ACL

use_backend webserver-80-web1 if test # backend used when the ACL matches

default_backend webserver-80-web2 # backend used when no ACL matches

backend webserver-80-web1

server web1 192.168.0.10:80 check inter 3s fall 3 rise 5

backend webserver-80-web2

server web2 192.168.0.20:80 check inter 3s fall 3 rise 5

# Test
[Administrator.DESKTOP-VJ307M3] ➤ curl www.lee.com

webserver1 - 192.168.0.10

[Administrator.DESKTOP-VJ307M3] ➤ curl www.lee.org

webserver2 - 192.168.0.20
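Both header ACLs used in this section are case-insensitive (`-i`) string tests on the Host header value: `hdr_end` is a suffix match and `hdr_beg` is a prefix match. The sketch below mirrors that logic. `lower`, `hdr_end_i`, `hdr_beg_i` and `route` are hypothetical helpers. One caveat: the Host value includes `:port` when the client sends a non-default port, so `www.lee.com:8080` does not end in `.com`.

```shell
# Hypothetical helpers mirroring "hdr_end(host) -i" and "hdr_beg(host) -i"
lower() { printf '%s' "$1" | tr '[:upper:]' '[:lower:]'; }

hdr_end_i() {   # hdr_end_i HOST SUFFIX -> success if HOST ends with SUFFIX
  case $(lower "$1") in *"$(lower "$2")") return 0 ;; esac
  return 1
}

hdr_beg_i() {   # hdr_beg_i HOST PREFIX -> success if HOST starts with PREFIX
  case $(lower "$1") in "$(lower "$2")"*) return 0 ;; esac
  return 1
}

route() {
  # Mirrors the first frontend: .com hosts -> web1, everything else -> web2
  if hdr_end_i "$1" .com; then echo webserver-80-web1; else echo webserver-80-web2; fi
}

route www.lee.com
route www.lee.org
```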

# Match on the beginning of the Host header

acl head hdr_beg(host) -i bbs.

use_backend webserver-80-web1 if head

[root@haproxy ~]# vim /etc/haproxy/haproxy.cfg

frontend webcluster

bind *:80

mode http

acl test hdr_end(host) -i .com # defined but not used in this variant

acl head hdr_beg(host) -i bbs.

use_backend webserver-80-web1 if head

default_backend webserver-80-web2

backend webserver-80-web1

server web1 192.168.0.10:80 check inter 3s fall 3 rise 5

backend webserver-80-web2

server web2 192.168.0.20:80 check inter 3s fall 3 rise 5

# Test the result

# ACL on base (Host header + path)

[root@haproxy ~]# vim /etc/haproxy/haproxy.cfg

frontend webcluster

bind *:80

mode http

acl pathdir base_dir -i /lee

use_backend webserver-80-web1 if pathdir

default_backend webserver-80-web2 # backend used when no ACL matches

backend webserver-80-web1

server web1 192.168.0.10:80 check inter 3s fall 3 rise 5

backend webserver-80-web2

server web2 192.168.0.20:80 check inter 3s fall 3 rise 5

[root@webserver1+2 ~]# mkdir -p /var/www/html/lee/

[root@webserver1+2 ~]# mkdir -p /var/www/html/lee/test/

[root@webserver1 ~]# echo lee - 192.168.0.10 > /var/www/html/lee/index.html

[root@webserver1 ~]# echo lee/test - 192.168.0.10 > /var/www/html/lee/test/index.html

[root@webserver2 ~]# echo lee - 192.168.0.20 > /var/www/html/lee/index.html

[root@webserver2 ~]# echo lee/test - 192.168.0.20 > /var/www/html/lee/test/index.html

# Test
[Administrator.DESKTOP-VJ307M3] ➤ curl 172.25.254.100/lee/

lee - 192.168.0.10

[Administrator.DESKTOP-VJ307M3] ➤ curl 172.25.254.100/lee/test/

lee/test - 192.168.0.10

[Administrator.DESKTOP-VJ307M3] ➤ curl 172.25.254.100/index.html

webserver2 - 192.168.0.20

# ACL blacklist: deny a specific source IP

[root@haproxy ~]# vim /etc/haproxy/haproxy.cfg

frontend webcluster

bind *:80

mode http

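acl invalid_src src 172.25.254.1 # client IP to block

http-request deny if invalid_src # http-request rules run before use_backend anyway; listing them first avoids a config warning

acl test hdr_end(host) -i .com # define the ACL

use_backend webserver-80-web1 if test # backend used when the ACL matches

default_backend webserver-80-web2 # backend used when no ACL matches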

backend webserver-80-web1

server web1 192.168.0.10:80 check inter 3s fall 3 rise 5

backend webserver-80-web2

server web2 192.168.0.20:80 check inter 3s fall 3 rise 5

#测试:

[Administrator.DESKTOP-VJ307M3] ➤ curl 172.25.254.100

<html><body><h1>403 Forbidden</h1>

Request forbidden by administrative rules.

</body></html>

# ACL whitelist: allow only a specific source IP

[root@haproxy ~]# vim /etc/haproxy/haproxy.cfg

frontend webcluster

bind *:80

mode http

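acl invalid_src src 172.25.254.1 # the only client IP allowed

http-request deny if ! invalid_src # deny every client except this IP

acl test hdr_end(host) -i .com # define the ACL

use_backend webserver-80-web1 if test # backend used when the ACL matches

default_backend webserver-80-web2 # backend used when no ACL matches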

backend webserver-80-web1

server web1 192.168.0.10:80 check inter 3s fall 3 rise 5

backend webserver-80-web2

server web2 192.168.0.20:80 check inter 3s fall 3 rise 5

# Test:

[Administrator.DESKTOP-VJ307M3] ➤ curl 172.25.254.100

webserver2 - 192.168.0.20

[root@haproxy ~]# curl 172.25.254.100

<html><body><h1>403 Forbidden</h1>

Request forbidden by administrative rules.

</body></html>

2.2.17 HAProxy site-wide HTTPS

1. Create the certificate first

bash

[root@haproxy ~]# mkdir /etc/haproxy/certs/

[root@haproxy ~]# openssl req -newkey rsa:2048 -nodes -sha256 -keyout /etc/haproxy/certs/timinglee.org.key -x509 -days 365 -out /etc/haproxy/certs/timinglee.org.crt

You are about to be asked to enter information that will be incorporated

into your certificate request.

What you are about to enter is what is called a Distinguished Name or a DN.

There are quite a few fields but you can leave some blank

For some fields there will be a default value,

If you enter '.', the field will be left blank.

-----

Country Name (2 letter code) [XX]:CN

State or Province Name (full name) []:Shaanxi

Locality Name (eg, city) [Default City]:Xi'an

Organization Name (eg, company) [Default Company Ltd]:timinglee

Organizational Unit Name (eg, section) []:linux

Common Name (eg, your name or your server's hostname) []:www.timinglee.org

Email Address []:admin@timinglee.org

[root@haproxy ~]# ls /etc/haproxy/certs/

timinglee.org.crt timinglee.org.key

# Combine the key and certificate into the single PEM file that HAProxy loads

[root@haproxy ~]# cat /etc/haproxy/certs/timinglee.org.{key,crt} > /etc/haproxy/certs/timinglee.pem

2. Site-wide encryption

bash

[root@haproxy ~]# vim /etc/haproxy/haproxy.cfg

frontend webcluster-http

bind *:80

redirect scheme https if ! { ssl_fc }

listen webcluster-https

bind *:443 ssl crt /etc/haproxy/certs/timinglee.pem # PEM file holding the certificate and private key

mode http

balance roundrobin

server haha 192.168.0.10:80 check inter 3s fall 3 rise 5 weight 1

server hehe 192.168.0.20:80 check inter 3s fall 3 rise 5 weight 1

[root@haproxy ~]# systemctl restart haproxy.service

# Test:

[Administrator.DESKTOP-VJ307M3] ➤ curl -v -k -L http://172.25.254.100

* Trying 172.25.254.100:80...

* TCP_NODELAY set

* Connected to 172.25.254.100 (172.25.254.100) port 80 (#0)

> GET / HTTP/1.1

> Host: 172.25.254.100

> User-Agent: curl/7.65.0

> Accept: */*

>

* Mark bundle as not supporting multiuse

< HTTP/1.1 302 Found

< content-length: 0

< location: https://172.25.254.100/ # the HTTP-to-HTTPS redirect

< cache-control: no-cache

<

* Connection #0 to host 172.25.254.100 left intact

* Issue another request to this URL: 'https://172.25.254.100/'

* Trying 172.25.254.100:443...

* TCP_NODELAY set

* Connected to 172.25.254.100 (172.25.254.100) port 443 (#1)

* ALPN, offering http/1.1

* Cipher selection: ALL:!EXPORT:!EXPORT40:!EXPORT56:!aNULL:!LOW:!RC4:@STRENGTH

* successfully set certificate verify locations:

* CAfile: /etc/pki/tls/certs/ca-bundle.crt

CApath: none

* TLSv1.2 (OUT), TLS header, Certificate Status (22):

* TLSv1.2 (OUT), TLS handshake, Client hello (1):

* TLSv1.2 (IN), TLS handshake, Server hello (2):

* TLSv1.2 (IN), TLS handshake, Certificate (11):

* TLSv1.2 (IN), TLS handshake, Server key exchange (12):

* TLSv1.2 (IN), TLS handshake, Server finished (14):

* TLSv1.2 (OUT), TLS handshake, Client key exchange (16):

* TLSv1.2 (OUT), TLS change cipher, Change cipher spec (1):

* TLSv1.2 (OUT), TLS handshake, Finished (20):

* TLSv1.2 (IN), TLS change cipher, Change cipher spec (1):

* TLSv1.2 (IN), TLS handshake, Finished (20):

* SSL connection using TLSv1.2 / ECDHE-RSA-AES256-GCM-SHA384

* ALPN, server did not agree to a protocol

* Server certificate:

* subject: C=CN; ST=Shaanxi; L=Xi'an; O=timinglee; OU=linux; CN=www.timinglee.org; emailAddress=admin@timinglee.org

* start date: Jan 26 08:38:57 2026 GMT

* expire date: Jan 26 08:38:57 2027 GMT

* issuer: C=CN; ST=Shaanxi; L=Xi'an; O=timinglee; OU=linux; CN=www.timinglee.org; emailAddress=admin@timinglee.org

* SSL certificate verify result: self signed certificate (18), continuing anyway.

> GET / HTTP/1.1

> Host: 172.25.254.100

> User-Agent: curl/7.65.0

> Accept: */*

>

* Mark bundle as not supporting multiuse

< HTTP/1.1 200 OK

< date: Mon, 26 Jan 2026 08:48:34 GMT

< server: Apache/2.4.62 (Red Hat Enterprise Linux)

< last-modified: Fri, 23 Jan 2026 03:52:02 GMT

< etag: "1a-64906147d3d6a"

< accept-ranges: bytes

< content-length: 26

< content-type: text/html; charset=UTF-8

<

webserver2 - 192.168.0.20

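* Connection #1 to host 172.25.254.100 left intact

3. Keepalived

3.1 Introduction to Keepalived

In backend deployments, the biggest worry is a single point of failure: one server goes down and the whole service goes down with it. Keepalived is a lightweight tool with one core purpose: to eliminate single points of failure by switching services over automatically, without any manual intervention.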

1. What it does

It works like an automatic failover switch and is used with two servers (a master and a backup):

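- Normally the master server does the work and exposes a single, unified access address (the VIP). Clients only need to access this VIP.

- If the master server goes down or its service crashes, Keepalived detects this within a few seconds, and the backup automatically takes over the VIP and keeps serving.

- When the master is repaired, it automatically takes the VIP back (preemption, Keepalived's default behavior) and the backup returns to standby.

In short: a single server failure no longer interrupts client access, and apart from a brief switchover, clients do not notice it.

2. Core logic

At its core, Keepalived combines heartbeat detection with automatic failover, implemented with the VRRP protocol. Three points are enough to remember: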

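- The two servers (master + backup) form a group that shares one VIP, the only address that clients access.

- Every second (advert_int 1), the master sends an "I am alive" signal, called the heartbeat (a VRRP advertisement).

- If the backup receives no heartbeat for about three advertisement intervals (a little over 3 seconds here), it decides the master is down and immediately takes over the VIP.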
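The "a little over 3 seconds" can be made precise. In VRRPv2 (RFC 3768, the version that tcpdump reports in this lab), a backup declares the master dead after Master_Down_Interval = 3 * Advertisement_Interval + Skew_Time, where Skew_Time = (256 - Priority) / 256 seconds. `master_down` is a hypothetical helper that evaluates this formula with awk.

```shell
# Hypothetical helper: master_down ADVERT_INT PRIORITY
# VRRPv2 (RFC 3768) timers:
#   Skew_Time            = (256 - Priority) / 256   seconds
#   Master_Down_Interval = 3 * Advertisement_Interval + Skew_Time
master_down() {
  awk -v adv="$1" -v prio="$2" 'BEGIN { printf "%.4f\n", 3 * adv + (256 - prio) / 256 }'
}

master_down 1 80   # KA2 in this lab: advert_int 1, priority 80 -> 3.6875
```

A higher priority gives a shorter skew, so when several backups exist, the highest-priority backup times out first and claims the VIP.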

3.2 Lab

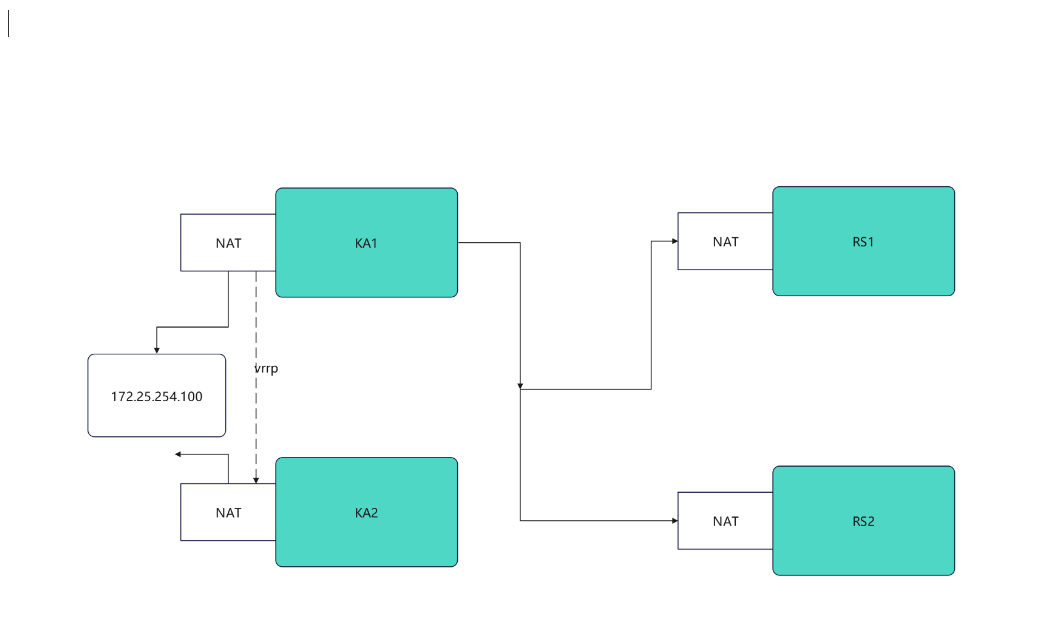

3.2.1 Lab environment and virtual router configuration

VRRP: Virtual Router Redundancy Protocol

bash

# Check the configuration file for syntax errors

keepalived -t -f /etc/keepalived/my_keepalived.conf

KA1, KA2

bash

[root@KA1 ~]# vmset.sh eth0 172.25.254.50 KA1

[root@KA2 ~]# vmset.sh eth0 172.25.254.60 KA2

# Set up local name resolution

[root@KA1 ~]# vim /etc/hosts

127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4

::1 localhost localhost.localdomain localhost6 localhost6.localdomain6

172.25.254.50 KA1

172.25.254.60 KA2

172.25.254.10 rs1

172.25.254.20 rs2

[root@KA1 ~]# for i in 60 10 20

> do

> scp /etc/hosts 172.25.254.$i:/etc/hosts

> done

# Check /etc/hosts on all hosts

# On KA1, enable the chrony time-sync server

[root@KA1 ~]# vim /etc/chrony.conf

allow 0.0.0.0/0

local stratum 10

[root@KA1 ~]# systemctl restart chronyd

[root@KA1 ~]# systemctl enable --now chronyd

# On KA2, use KA1 as the time source

[root@KA2 ~]# vim /etc/chrony.conf

pool 172.25.254.50 iburst

[root@KA2 ~]# systemctl restart chronyd

[root@KA2 ~]# systemctl enable --now chronyd

[root@KA2 ~]# chronyc sources -v

.-- Source mode '^' = server, '=' = peer, '#' = local clock.

/ .- Source state '*' = current best, '+' = combined, '-' = not combined,

| / 'x' = may be in error, '~' = too variable, '?' = unusable.

|| .- xxxx [ yyyy ] +/- zzzz

|| Reachability register (octal) -. | xxxx = adjusted offset,

|| Log2(Polling interval) --. | | yyyy = measured offset,

|| \ | | zzzz = estimated error.

|| | | \

MS Name/IP address Stratum Poll Reach LastRx Last sample

===============================================================================

^* KA1 3 6 17 13 +303ns[+6125ns] +/- 69ms

# Install Keepalived

[root@KA1 ~]# dnf install keepalived.x86_64 -y

[root@KA2 ~]# dnf install keepalived.x86_64 -y

# Configure the virtual router

[root@KA1 ~]# vim /etc/keepalived/keepalived.conf

global_defs {

notification_email {

qq@163.com # address that receives Keepalived alert mail

}

notification_email_from qq@163.com

smtp_server 127.0.0.1 # SMTP server used to send the alerts

smtp_connect_timeout 30

router_id KA1

vrrp_skip_check_adv_addr

#vrrp_strict

vrrp_garp_interval 1

vrrp_gna_interval 1

vrrp_mcast_group4 224.0.0.44 # multicast group for VRRP advertisements (default 224.0.0.18)

}

vrrp_instance WEB_VIP {

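state MASTER # initial role: master

interface eth0 # NIC used for VRRP traffic

virtual_router_id 51 # virtual router ID; must be the same on master and backup

priority 100 # priority; the higher value wins

advert_int 1 # heartbeat (advertisement) interval, in seconds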

authentication {

auth_type PASS

auth_pass 1111

}

virtual_ipaddress { # the VIP managed by this instance

172.25.254.100/24 dev eth0 label eth0:0

}

}

[root@KA1 ~]# systemctl enable --now keepalived.service

Created symlink /etc/systemd/system/multi-user.target.wants/keepalived.service → /usr/lib/systemd/system/keepalived.service.
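The backup's configuration needs only a different state (BACKUP), a lower priority and its own router_id; virtual_router_id, the authentication block and the VIP must stay identical. Under that assumption, KA2's file could be derived from KA1's with sed. make_backup_conf is a hypothetical helper, not part of keepalived:

```bash
#!/usr/bin/env bash
# Hypothetical helper: derive the BACKUP config from the MASTER config.
# Reads the master's keepalived.conf on stdin and rewrites only the lines
# that must differ; virtual_router_id, authentication and the VIP are kept.
#   $1 = router_id for the backup (e.g. KA2)
#   $2 = priority for the backup (must be lower than the master's)
make_backup_conf() {
  local rid=$1 prio=$2
  sed -e "s/^\([[:space:]]*\)router_id .*/\1router_id ${rid}/" \
      -e "s/^\([[:space:]]*\)state MASTER/\1state BACKUP/" \
      -e "s/^\([[:space:]]*\)priority [0-9]\{1,\}/\1priority ${prio}/"
}

printf 'router_id KA1\nstate MASTER\nvirtual_router_id 51\npriority 100\n' \
  | make_backup_conf KA2 80
```

For example, on KA1: make_backup_conf KA2 80 < /etc/keepalived/keepalived.conf > /tmp/ka2.conf, then copy /tmp/ka2.conf to KA2 as /etc/keepalived/keepalived.conf (the /tmp path is only an example).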

# Configure KA2

[root@KA2 ~]# vim /etc/keepalived/keepalived.conf

global_defs {

notification_email {

qq@163.com

}

notification_email_from qq@163.com

smtp_server 127.0.0.1

smtp_connect_timeout 30

router_id KA2 # must be unique per node (KA1 on the master)

vrrp_skip_check_adv_addr

#vrrp_strict

vrrp_garp_interval 1

vrrp_gna_interval 1

vrrp_mcast_group4 224.0.0.44 # must match the master

}

vrrp_instance WEB_VIP {

state BACKUP # initial role: backup

interface eth0

virtual_router_id 51 # must match the master

priority 80 # lower than the master's 100

advert_int 1

authentication {

auth_type PASS

auth_pass 1111

}

virtual_ipaddress { # must match the master

172.25.254.100/24 dev eth0 label eth0:0

}

}

[root@KA2 ~]# systemctl enable --now keepalived.service

Created symlink /etc/systemd/system/multi-user.target.wants/keepalived.service → /usr/lib/systemd/system/keepalived.service.
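If virtual_router_id or the authentication settings differ between KA1 and KA2, the nodes discard each other's advertisements and both can end up as MASTER holding the VIP. A rough consistency check, assuming both configuration files have been copied to one host; vrrp_key_params and same_vrrp_params are hypothetical helpers that only understand the simple layout used in this lab:

```bash
#!/usr/bin/env bash
# Hypothetical helpers for the simple config layout used in this lab.
# vrrp_key_params FILE: print the settings that must match on both nodes
# (virtual_router_id, advert_int, auth_type, auth_pass, VIPs), sorted.
vrrp_key_params() {
  awk '
    { sub("#.*", "") }                         # drop inline comments
    $1 == "virtual_ipaddress" { in_vip = 1; next }
    in_vip && $1 == "}"       { in_vip = 0; next }
    in_vip && NF              { print "vip", $1; next }
    $1 ~ /^(virtual_router_id|advert_int|auth_type|auth_pass)$/ { print $1, $2 }
  ' "$1" | sort
}

# same_vrrp_params FILE1 FILE2: exit 0 if the key settings match,
# otherwise print both sets to stderr and return 1.
same_vrrp_params() {
  local p1 p2
  p1=$(vrrp_key_params "$1")
  p2=$(vrrp_key_params "$2")
  if [ "$p1" != "$p2" ]; then
    printf 'VRRP settings differ:\n--- %s\n%s\n--- %s\n%s\n' "$1" "$p1" "$2" "$p2" >&2
    return 1
  fi
}
```

For example, after scp KA2:/etc/keepalived/keepalived.conf /tmp/ka2.conf on KA1, same_vrrp_params /etc/keepalived/keepalived.conf /tmp/ka2.conf should exit 0.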

# Verify

[root@KA1 ~]# tcpdump -i eth0 -nn host 224.0.0.44

11:38:46.183386 IP 172.25.254.50 > 224.0.0.44: VRRPv2, Advertisement, vrid 51, prio 100, authtype simple, intvl 1s, length 20

11:38:47.184051 IP 172.25.254.50 > 224.0.0.44: VRRPv2, Advertisement, vrid 51, prio 100, authtype simple, intvl 1s, length 20

11:38:48.184610 IP 172.25.254.50 > 224.0.0.44: VRRPv2, Advertisement, vrid 51, prio 100, authtype simple, intvl 1s, length 20

11:38:49.185084 IP 172.25.254.50 > 224.0.0.44: VRRPv2, Advertisement, vrid 51, prio 100, authtype simple, intvl 1s, length 20
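Each captured line is one VRRP advertisement; its source address, vrid and prio show which node is currently master. A hypothetical awk filter that reduces the capture to those three fields makes the switchover easy to spot during the failover test; it assumes the tcpdump VRRPv2 line format shown above:

```bash
#!/usr/bin/env bash
# Hypothetical filter: reduce tcpdump VRRPv2 lines to "<source> <vrid> <prio>".
# Assumes the tcpdump format shown in this lab, e.g.
#   ... IP 172.25.254.50 > 224.0.0.44: VRRPv2, Advertisement, vrid 51, prio 100, ...
vrrp_adverts() {
  awk '/VRRP/ && /Advertisement/ {
    src = $3; vrid = ""; prio = ""
    for (i = 1; i < NF; i++) {
      if ($i == "vrid") vrid = $(i + 1)
      if ($i == "prio") prio = $(i + 1)
    }
    sub(/,$/, "", vrid)     # strip the trailing comma, e.g. 51, -> 51
    sub(/,$/, "", prio)
    print src, vrid, prio
  }'
}

echo '11:38:46.183386 IP 172.25.254.50 > 224.0.0.44: VRRPv2, Advertisement, vrid 51, prio 100, authtype simple, intvl 1s, length 20' \
  | vrrp_adverts    # prints 172.25.254.50 51 100
```

Run it as tcpdump -i eth0 -nn -l host 224.0.0.44 | vrrp_adverts (-l line-buffers tcpdump's output). After keepalived is stopped on KA1, the lines should switch to 172.25.254.60 51 80. Per RFC 3768, a master that shuts down cleanly first sends an advertisement with priority 0, so the backup takes over after only the skew time instead of the full timeout.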

[root@KA1 ~]# ifconfig

eth0: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1500

inet 172.25.254.50 netmask 255.255.255.0 broadcast 172.25.254.255

inet6 fe80::3901:aeea:786a:7227 prefixlen 64 scopeid 0x20<link>

ether 00:0c:29:26:33:d9 txqueuelen 1000 (Ethernet)

RX packets 5847 bytes 563634 (550.4 KiB)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 5224 bytes 698380 (682.0 KiB)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

eth0:0: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1500

inet 172.25.254.100 netmask 255.255.255.0 broadcast 0.0.0.0

ether 00:0c:29:26:33:d9 txqueuelen 1000 (Ethernet)

lo: flags=73<UP,LOOPBACK,RUNNING> mtu 65536

inet 127.0.0.1 netmask 255.0.0.0

inet6 ::1 prefixlen 128 scopeid 0x10<host>

loop txqueuelen 1000 (Local Loopback)

RX packets 42 bytes 3028 (2.9 KiB)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 42 bytes 3028 (2.9 KiB)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

RS1, RS2

bash

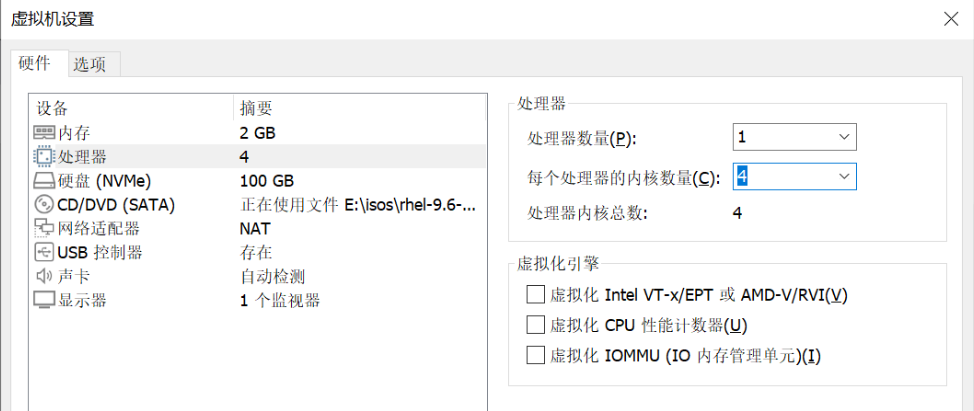

# Deploy rs1 and rs2 (single NIC, NAT network mode)

[root@rs1 ~]# vmset.sh eth0 172.25.254.10 rs1

[root@rs1 ~]# dnf install httpd -y

[root@rs1 ~]# echo RS1 - 172.25.254.10 > /var/www/html/index.html

[root@rs1 ~]# systemctl enable --now httpd

[root@rs2 ~]# vmset.sh eth0 172.25.254.20 rs2

[root@rs2 ~]# dnf install httpd -y

[root@rs2 ~]# echo RS2 - 172.25.254.20 > /var/www/html/index.html

[root@rs2 ~]# systemctl enable --now httpd

# Test:

[Administrator.DESKTOP-VJ307M3] ➤ curl 172.25.254.10

RS1 - 172.25.254.10


[Administrator.DESKTOP-VJ307M3] ➤ curl 172.25.254.20

RS2 - 172.25.254.20
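Before the failover test, it helps to have a quick way to see which node currently holds the VIP. A sketch of a hypothetical has_vip helper that reads ifconfig or ip -4 addr output on stdin and succeeds only if the VIP is configured:

```bash
#!/usr/bin/env bash
# Hypothetical helper: has_vip VIP  (interface listing on stdin)
# Succeeds if VIP appears as an "inet" address; works with ifconfig output
# ("inet 172.25.254.100 netmask ...") and ip output ("inet 172.25.254.100/24 ...").
has_vip() {
  awk -v vip="$1" '
    {
      for (i = 1; i < NF; i++) {
        if ($i == "inet") {
          addr = $(i + 1)
          sub(/\/.*/, "", addr)    # drop a /prefix if present
          if (addr == vip) found = 1
        }
      }
    }
    END { exit !found }
  '
}
```

On each KA node: ifconfig | has_vip 172.25.254.100 && echo "VIP is on $(hostname)". Before the test it should succeed only on KA1, afterwards only on KA2.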

bash

# Test failover

# Run this in a separate shell to watch the VRRP advertisements

[root@KA1 ~]# tcpdump -i eth0 -nn host 224.0.0.44

# Simulate a failure on KA1

[root@KA1 ~]# systemctl stop keepalived.service

# On KA2, check whether the VIP has moved to this host

[root@KA2 ~]# ifconfig

eth0: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1500

inet 172.25.254.60 netmask 255.255.255.0 broadcast 172.25.254.255

inet6 fe80::26df:35e5:539:56bc prefixlen 64 scopeid 0x20<link>

ether 00:0c:29:1e:fd:7a txqueuelen 1000 (Ethernet)

RX packets 2668 bytes 237838 (232.2 KiB)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 2229 bytes 280474 (273.9 KiB)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

eth0:0: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1500

inet 172.25.254.100 netmask 255.255.255.0 broadcast 0.0.0.0

ether 00:0c:29:1e:fd:7a txqueuelen 1000 (Ethernet)

lo: flags=73<UP,LOOPBACK,RUNNING> mtu 65536

inet 127.0.0.1 netmask 255.0.0.0

inet6 ::1 prefixlen 128 scopeid 0x10<host>

loop txqueuelen 1000 (Local Loopback)

RX packets 52 bytes 3528 (3.4 KiB)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 52 bytes 3528 (3.4 KiB)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

3.2.2 Separating keepalived logs into their own file

bash

[root@KA1 ~]# vim /etc/sysconfig/keepalived

# -D: detailed log messages; -S 6: log to syslog facility local6
KEEPALIVED_OPTIONS="-D -S 6"

[root@KA1 ~]# systemctl restart keepalived.service

[root@KA1 ~]# vim /etc/rsyslog.conf

# write everything from facility local6 (keepalived) to its own file
local6.* /var/log/keepalived.log

[root@KA1 ~]# systemctl restart rsyslog.service
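The rsyslog selector must name the same facility that -S selects (-S 6 means local6). A hypothetical helper that derives the rsyslog rule from a KEEPALIVED_OPTIONS value, so the two settings cannot drift apart; it recognises only the -S N, -SN and --log-facility=N forms:

```bash
#!/usr/bin/env bash
# Hypothetical helper: rsyslog_rule_for OPTIONS [LOGFILE]
# Reads the syslog facility number from keepalived options (-S N, -SN or
# --log-facility=N) and prints the matching rsyslog rule for localN.
rsyslog_rule_for() {
  local opts=$1 logfile=${2:-/var/log/keepalived.log} n
  n=$(printf '%s\n' "$opts" | awk '{
    for (i = 1; i <= NF; i++) {
      if ($i == "-S") { print $(i + 1); exit }
      if ($i ~ /^-S[0-7]$/) { print substr($i, 3); exit }
      if ($i ~ /^--log-facility=/) { sub(/^--log-facility=/, "", $i); print $i; exit }
    }
  }')
  case $n in
    [0-7]) printf 'local%s.* %s\n' "$n" "$logfile" ;;
    *) echo "no valid -S/--log-facility option in: $opts" >&2; return 1 ;;
  esac
}

rsyslog_rule_for '-D -S 6'    # prints local6.* /var/log/keepalived.log
```

To check the routing on a real host, logger -p local6.info "keepalived log test" should append a line to /var/log/keepalived.log.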

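# Test

[root@KA1 log]# ls -l keepalived.log

ls: cannot access 'keepalived.log': No such file or directory

# The file appears only once keepalived sends its first local6 message after rsyslog was restarted (for example on its next restart or state change)

[root@KA1 log]# ls keepalived.log

keepalived.log

3.2.3 Standalone sub-configuration files for keepalived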

bash

[root@KA1 ~]# vim /etc/keepalived/keepalived.conf

! Configuration File for keepalived

global_defs {

notification_email {

timinglee_zln@163.com

}

notification_email_from timinglee_zln@163.com

smtp_server 127.0.0.1

smtp_connect_timeout 30

router_id KA1

vrrp_skip_check_adv_addr

#vrrp_strict

vrrp_garp_interval 1

vrrp_gna_interval 1

vrrp_mcast_group4 224.0.0.44

}

include /etc/keepalived/conf.d/*.conf # load the standalone sub-configuration files
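Once vrrp_instance blocks move into conf.d, an unbalanced { or } in one file is easy to miss. keepalived -t, shown at the start of this lab, remains the real syntax check; the following is only a rough, hypothetical pre-check of brace balance per file, which treats everything after # or ! on a line as a comment:

```bash
#!/usr/bin/env bash
# Hypothetical pre-check: report unbalanced { } in one keepalived config file.
# Everything after # or ! on a line is treated as a comment.
check_braces() {
  awk '
    { sub("[#!].*", "") }
    {
      n += gsub(/[{]/, "{")      # gsub returns the number of matches
      n -= gsub(/[}]/, "}")
      if (n < 0) { printf "%s:%d: unexpected }\n", FILENAME, FNR; bad = 1; n = 0 }
    }
    END {
      if (n != 0) { printf "%s: %d unclosed {\n", FILENAME, n; bad = 1 }
      exit bad
    }
  ' "$1"
}
```

For example: for f in /etc/keepalived/conf.d/*.conf; do check_braces "$f"; done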

[root@KA1 ~]# mkdir /etc/keepalived/conf.d -p

[root@KA1 ~]# vim /etc/keepalived/conf.d/webvip.conf

vrrp_instance WEB_VIP {

state MASTER

interface eth0

virtual_router_id 51

priority 100

advert_int 1

authentication {

auth_type PASS

auth_pass 1111

}

virtual_ipaddress {

172.25.254.100/24 dev eth0 label eth0:0

}