I. Overview

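This article describes best practices for collecting in-container logs from Serverless containers with Guance. Collection works by having the Guance CRD and DataKit Operator inject a logfwd sidecar. The main features of the approach are: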

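- Centralized management of collection configuration: the Operator watches the Kubernetes ClusterLoggingConfig CRD and exposes the matching results for logfwd sidecars to poll (by default a sidecar sends an HTTP request to the Operator every 60 seconds; logfwd must be ≥ 1.86.0).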

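- Hot updates and fine-grained matching: changes to the CRD selector (Namespace/Pod/Label/Container) take effect as soon as they are made, with no need to recreate the Workload.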
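The polling behaviour described above can be pictured with a small sketch. This is not logfwd's actual code: the `fetch` and `apply` callables are hypothetical stand-ins for "ask the Operator for the matching configuration" and "reload the collectors", and only the 60-second default interval comes from the text.

```python
import hashlib
import json
import time
from typing import Callable, Optional


def config_digest(config: dict) -> str:
    """Return a stable digest so that configuration changes are cheap to detect."""
    payload = json.dumps(config, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def run_poller(
    fetch: Callable[[], dict],          # hypothetical: fetch the matched config from the Operator
    apply: Callable[[dict], None],      # hypothetical: reload collection with the new config
    interval_seconds: float = 60.0,     # default polling interval mentioned in the text
    rounds: int = 1,
    sleep: Callable[[float], None] = time.sleep,
) -> None:
    """Poll `fetch` a fixed number of times and call `apply` only when the config changed."""
    last_digest: Optional[str] = None
    for i in range(rounds):
        config = fetch()
        digest = config_digest(config)
        if digest != last_digest:
            apply(config)
            last_digest = digest
        if i < rounds - 1:
            sleep(interval_seconds)
```

Comparing digests rather than reloading on every poll is one way to keep the hot update cheap: an unchanged configuration costs one request and no restart of the collection.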

II. Prerequisites

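- Kubernetes cluster version 1.16+

- DataKit is installed with the logfwdserver collector enabled, for example listening on the default port 9533

- The DataKit Service must expose port 9533 so that other Pods can reach datakit-service.datakit.svc:9533

- DataKit-Operator v1.7.0 or later

- Cluster administrator privileges (needed to register the CRD)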
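Before going further, it can help to confirm from inside a business Pod that the logfwdserver port is actually reachable. The helper below is a minimal sketch using only the Python standard library; the function name `can_connect` is made up for illustration, and the host and port to pass in are the ones listed in the prerequisites.

```python
import socket


def can_connect(host: str, port: int, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port can be opened within `timeout` seconds."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers DNS failures, refused connections and timeouts alike.
        return False
```

Run from a Pod in the cluster, `can_connect("datakit-service.datakit.svc", 9533)` returning False would suggest checking the Service port definition or whether the logfwdserver collector is enabled.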

III. Collection Workflow

1. Register the Kubernetes CRD

- Register the ClusterLoggingConfig CRD with the following YAML:

yaml

apiVersion: apiextensions.k8s.io/v1
kind: CustomResourceDefinition
metadata:
  name: clusterloggingconfigs.logging.datakits.io
  labels:
    app: datakit-logging-config
    version: v1alpha1
spec:
  group: logging.datakits.io
  versions:
    - name: v1alpha1
      served: true
      storage: true
      schema:
        openAPIV3Schema:
          type: object
          properties:
            apiVersion:
              type: string
            kind:
              type: string
            metadata:
              type: object
            spec:
              type: object
              required:
                - selector
              properties:
                selector:
                  type: object
                  properties:
                    namespaceRegex:
                      type: string
                    podRegex:
                      type: string
                    podLabelSelector:
                      type: string
                    containerRegex:
                      type: string
                podTargetLabels:
                  type: array
                  items:
                    type: string
                configs:
                  type: array
                  items:
                    type: object
                    required:
                      - source
                      - type
                    properties:
                      source:
                        type: string
                      type:
                        type: string
                      disable:
                        type: boolean
                      path:
                        type: string
                      multiline_match:
                        type: string
                      pipeline:
                        type: string
                      storage_index:
                        type: string
                      tags:
                        type: object
                        additionalProperties:
                          type: string
  scope: Cluster
  names:
    plural: clusterloggingconfigs
    singular: clusterloggingconfig
    kind: ClusterLoggingConfig
    shortNames:
      - logging

创建 CRD 资源,自动应用采集配置

kubectl apply -f clusterloggingconfig-crd.yaml

-

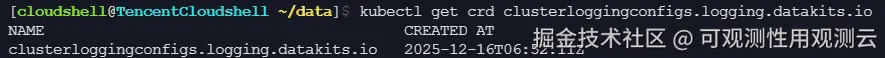

验证 CRD 注册

arduino

kubectl get crd clusterloggingconfigs.logging.datakits.io
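The CRD schema marks only a few fields as required: selector under spec, and source and type on every entry of configs. A small local check can catch a missing field before the resource is applied. The sketch below mirrors just those `required` lists; it is an illustration, not a full OpenAPI validator, and the function name is made up.

```python
from typing import Any, Dict, List


def validate_logging_config(resource: Dict[str, Any]) -> List[str]:
    """Return human-readable errors for required fields missing from a ClusterLoggingConfig."""
    errors: List[str] = []
    spec = resource.get("spec")
    if spec is None:
        # The schema does not list `spec` itself as required, so nothing to check.
        return errors
    if "selector" not in spec:
        errors.append("spec.selector is required")
    for index, config in enumerate(spec.get("configs", [])):
        for field in ("source", "type"):
            if field not in config:
                errors.append(f"spec.configs[{index}].{field} is required")
    return errors
```

An empty list means the resource satisfies the required-field rules; the API server still performs the authoritative validation when the resource is applied.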

2. Install and configure DataKit-Operator

- Install DataKit-Operator v1.7.0 or later. Applying the latest datakit-operator.yaml with kubectl apply -f datakit-operator.yaml brings in the required permissions; alternatively, refer to the minimal example below:

yaml

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRole

metadata:

name: datakit-operator

rules:

- apiGroups: ["logging.datakits.io"]

resources: ["clusterloggingconfigs"]

verbs: ["get", "list", "watch"]

---

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRoleBinding

metadata:

name: datakit-operator

roleRef:

apiGroup: rbac.authorization.k8s.io

kind: ClusterRole

name: datakit-operator

subjects:

- kind: ServiceAccount

name: datakit-operator

namespace: datakit

---

apiVersion: v1

kind: ServiceAccount

metadata:

name: datakit-operator

namespace: datakit

---

apiVersion: apps/v1

kind: Deployment

metadata:

name: datakit-operator

namespace: datakit

labels:

app: datakit-operator

spec:

replicas: 1 # Do not change the ReplicaSet number!

selector:

matchLabels:

app: datakit-operator

template:

metadata:

labels:

app: datakit-operator

spec:

serviceAccountName: datakit-operator

containers:

- name: operator

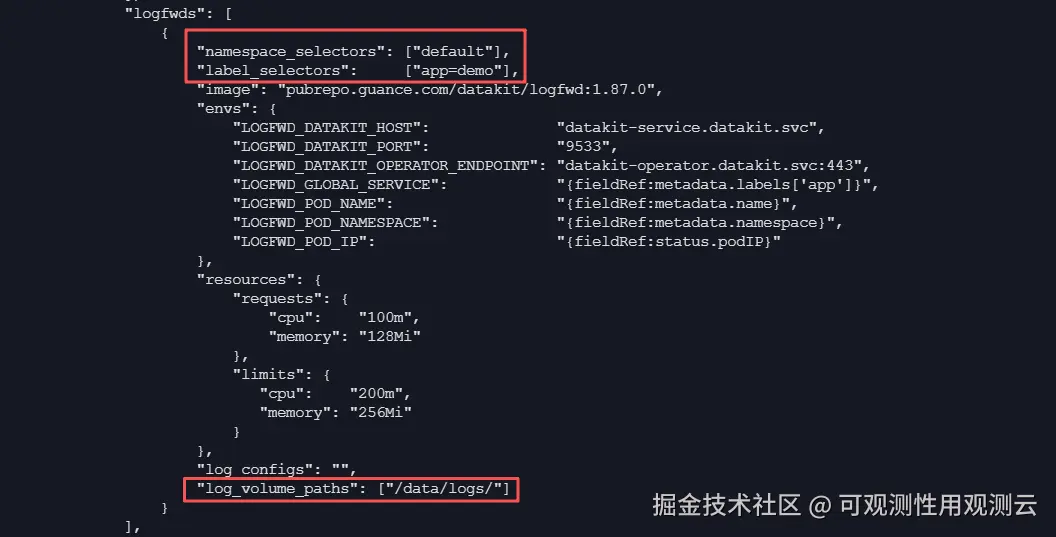

# other..- 如下图,在 DataKit-Operator 配置中设置 logfwds 数组,主要配置 namespace_selectors/label_selectors 匹配规则和 log_volume_paths 挂载目录字段,namespace_selectors 和 label_selectors 为且的关系。
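The AND relationship between namespace_selectors and label_selectors can be illustrated with a short sketch. Only the AND between the two fields comes from the text. The rest is assumed for illustration: that each field is a list, that namespaces are compared by exact name, that label selectors are "key=value" strings, and that any one entry within a list is enough to match. The Operator's real semantics may differ.

```python
from typing import Dict, List


def _label_matches(selector: str, labels: Dict[str, str]) -> bool:
    """Check a single 'key=value' selector against a Pod's labels (assumed format)."""
    key, _, value = selector.partition("=")
    return labels.get(key.strip()) == value.strip()


def should_inject(
    namespace: str,
    labels: Dict[str, str],
    namespace_selectors: List[str],
    label_selectors: List[str],
) -> bool:
    """Inject the sidecar only if the namespace rule AND the label rule both match."""
    namespace_ok = namespace in namespace_selectors
    label_ok = any(_label_matches(sel, labels) for sel in label_selectors)
    return namespace_ok and label_ok
```

Under these assumptions, a Pod labelled app=demo in the default namespace is selected by namespace_selectors ["default"] with label_selectors ["app=demo"], while the same Pod in another namespace is not.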

3. Deploy DataKit as a Deployment

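- Install DataKit as a Deployment in the super node cluster. Pay particular attention to the resource kind, the replica count, the logfwdserver collector switch, and the Deployment update strategy, as shown below: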

yaml

apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  name: datakit
rules:
  - apiGroups: ["rbac.authorization.k8s.io"]
    resources: ["clusterroles"]
    verbs: ["get", "list", "watch"]
  - apiGroups: [""]
    resources: ["nodes", "nodes/stats", "nodes/metrics", "namespaces", "pods", "pods/log", "events", "services", "endpoints", "persistentvolumes", "persistentvolumeclaims", "pods/exec"]
    verbs: ["get", "list", "watch"]
  - apiGroups: ["apps"]
    resources: ["deployments", "daemonsets", "statefulsets", "replicasets"]
    verbs: ["get", "list", "watch"]
  - apiGroups: ["batch"]
    resources: ["jobs", "cronjobs"]
    verbs: ["get", "list", "watch"]
  - apiGroups: ["monitoring.coreos.com"]
    resources: ["podmonitors", "servicemonitors"]
    verbs: ["get", "list", "watch"]
  - apiGroups: ["logging.datakits.io"]
    resources: ["clusterloggingconfigs"]
    verbs: ["get", "list", "watch"]
  - apiGroups: ["metrics.k8s.io"]
    resources: ["pods", "nodes"]
    verbs: ["get", "list"]
  - nonResourceURLs: ["/metrics"]
    verbs: ["get"]
---
apiVersion: v1
kind: ServiceAccount
metadata:
  name: datakit
  namespace: datakit
---
apiVersion: v1
kind: Service
metadata:
  name: datakit-service
  namespace: datakit
spec:
  selector:
    app: daemonset-datakit
  ports:
    - name: svc-http-port
      protocol: TCP # for HTTP apis and some collector(inputs) HTTP server, such as DDTrace
      port: 9529
      targetPort: http-port
    - name: svc-statsd-port
      protocol: UDP
      port: 8125
      targetPort: statsd-port
    - name: svc-otel-grpc-port
      protocol: TCP
      port: 4317
      targetPort: otel-grpc-port
    - name: svc-logfwd-port
      protocol: TCP
      port: 9533
      targetPort: logfwd-port
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: datakit
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: datakit
subjects:
  - kind: ServiceAccount
    name: datakit
    namespace: datakit
---
apiVersion: apps/v1
kind: Deployment
metadata:
  labels:
    app: daemonset-datakit
  name: datakit
  namespace: datakit
spec:
  replicas: 1
  revisionHistoryLimit: 10
  selector:
    matchLabels:
      app: daemonset-datakit
  template:
    metadata:
      labels:
        app: daemonset-datakit
    spec:
      hostNetwork: true
      dnsPolicy: ClusterFirstWithHostNet
      containers:
        - env:
            - name: POD_NAME
              valueFrom:
                fieldRef:
                  fieldPath: metadata.name
            - name: ENV_K8S_NODE_IP
              valueFrom:
                fieldRef:
                  apiVersion: v1
                  fieldPath: status.hostIP
            - name: ENV_K8S_NODE_NAME
              valueFrom:
                fieldRef:
                  apiVersion: v1
                  fieldPath: spec.nodeName
            #- name: ENV_K8S_CLUSTER_NODE_NAME
            #  value: cluster_a_$(ENV_K8S_NODE_NAME)
            - name: ENV_DATAWAY
              value: https://openway.guance.com?token=tkn_3a0052c9f6d3498c8ce9ca0988fd9c82 # Fill your real Dataway server and(or) workspace token
            - name: ENV_CLUSTER_NAME_K8S
              value: lyr-test
            - name: ENV_GLOBAL_HOST_TAGS
              value: host=__datakit_hostname,host_ip=__datakit_ip
            - name: ENV_GLOBAL_ELECTION_TAGS # Default not set
              value: ""
            - name: ENV_DEFAULT_ENABLED_INPUTS
              value: statsd,dk,cpu,disk,diskio,mem,swap,system,hostobject,net,host_processes,container,kubernetesprometheus,logfwdserver,ddtrace
            - name: ENV_ENABLE_ELECTION
              value: enable
            - name: ENV_HTTP_LISTEN
              value: 0.0.0.0:9529
            - name: HOST_PROC
              value: /rootfs/proc
            - name: HOST_SYS
              value: /rootfs/sys
            - name: HOST_ETC
              value: /rootfs/etc
            - name: HOST_VAR
              value: /rootfs/var
            - name: HOST_RUN
              value: /rootfs/run
            - name: HOST_DEV
              value: /rootfs/dev
            - name: HOST_ROOT
              value: /rootfs
          image: pubrepo.guance.com/datakit/datakit:1.86.2
          imagePullPolicy: IfNotPresent
          name: datakit
          ports:
            - containerPort: 9529
              hostPort: 9529
              name: http-port
              protocol: TCP
            - containerPort: 8125
              hostPort: 8125
              name: statsd-port
              protocol: UDP
            - containerPort: 4317
              hostPort: 4317
              name: otel-grpc-port
              protocol: TCP
            - containerPort: 9533
              hostPort: 9533
              name: logfwd-port
              protocol: TCP
          resources:
            requests:
              cpu: "200m"
              memory: "128Mi"
            limits:
              cpu: "2000m"
              memory: "4Gi"
          securityContext:
            privileged: true
          volumeMounts:
            - mountPath: /usr/local/datakit/cache
              name: cache
              readOnly: false
            - mountPath: /rootfs
              name: rootfs
              mountPropagation: HostToContainer
            - mountPath: /var/run
              name: run
              mountPropagation: HostToContainer
            - mountPath: /sys/kernel/debug
              name: debugfs
            - mountPath: /var/lib/containerd/container_logs
              name: container-logs
              mountPropagation: HostToContainer
      hostIPC: true
      hostPID: true
      restartPolicy: Always
      serviceAccount: datakit
      serviceAccountName: datakit
      tolerations:
        - operator: Exists
      volumes:
        - configMap:
            name: datakit-conf
          name: datakit-conf
        # - name: hellopythond
        #   configMap:
        #     name: python-scripts
        - hostPath:
            path: /
          name: rootfs
        - hostPath:
            path: /var/run
          name: run
        - hostPath:
            path: /sys/kernel/debug
          name: debugfs
        - hostPath:
            path: /root/datakit_cache
          name: cache
        - hostPath:
            path: /var/lib/containerd/container_logs
          name: container-logs
        # # ---iploc-start
        #- emptyDir: {}
        #  name: datakit-ipdb
        # # ---iploc-end
  strategy:
    rollingUpdate:
      maxUnavailable: 1
    type: RollingUpdate

- Run the deployment:

kubectl apply -f datakit.yaml
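In the manifest above, the Service refers to container ports by name (for example targetPort: logfwd-port). If a name or protocol does not line up with the container's ports list, the Service port has no backing endpoint and sidecars cannot reach port 9533. The sketch below checks this on plain dictionaries shaped like the manifest sections; the function name is made up, and the dictionaries would have to be loaded from the YAML by other means.

```python
from typing import Any, Dict, List


def unresolved_target_ports(
    service_ports: List[Dict[str, Any]],
    container_ports: List[Dict[str, Any]],
) -> List[str]:
    """Return names of Service ports whose named targetPort matches no container port."""
    # A named targetPort resolves against a container port with the same name and protocol.
    available = {(p.get("name"), p.get("protocol", "TCP")) for p in container_ports}
    missing = []
    for port in service_ports:
        target = port.get("targetPort")
        if isinstance(target, str) and (target, port.get("protocol", "TCP")) not in available:
            missing.append(port["name"])
    return missing
```

For the manifest in this step the result should be an empty list, since every targetPort name (http-port, statsd-port, otel-grpc-port, logfwd-port) has a container port with the same name and protocol.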

4. Create the log collection CRD configuration

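- The collection configuration is shown below. It collects the in-container logs of the demo workload in the default namespace, and the log source is given the custom name demo-file. For more options, see: docs.guance.com/integration...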

yaml

apiVersion: logging.datakits.io/v1alpha1

kind: ClusterLoggingConfig

metadata:

name: demo-logs

spec:

selector:

namespaceRegex: "^(default)$"

podRegex: "^(deploy.*)$"

podLabelSelector: "app=demo"

podTargetLabels:

- app

- version

- enviroment

configs:

- source: "demo-file"

type: "file"

path: "/data/logs/server/server.log"

tags:

log_type: "server"

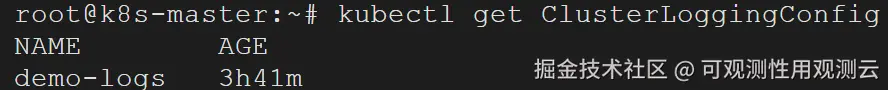

component: "springboot-server"- 应用配置

arduino

kubectl apply -f logging-config.yaml
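To reason about which Pods a selector like the one above picks up, the matching can be approximated locally. The sketch below rests on assumptions rather than on DataKit's implementation: every field that is set must match (AND), unset fields match everything, regexes are evaluated with Python's `re.search`, and podLabelSelector contains only comma-separated key=value terms.

```python
import re
from typing import Dict


def selector_matches(
    selector: Dict[str, str],
    namespace: str,
    pod_name: str,
    pod_labels: Dict[str, str],
    container_name: str,
) -> bool:
    """Return True if a container satisfies every condition present in `selector`."""
    regex_checks = [
        ("namespaceRegex", namespace),
        ("podRegex", pod_name),
        ("containerRegex", container_name),
    ]
    for field, value in regex_checks:
        pattern = selector.get(field)
        if pattern and re.search(pattern, value) is None:
            return False
    # Assumed format: "k1=v1,k2=v2", with all terms required.
    for term in selector.get("podLabelSelector", "").split(","):
        term = term.strip()
        if not term:
            continue
        key, _, expected = term.partition("=")
        if pod_labels.get(key.strip()) != expected.strip():
            return False
    return True
```

Because the example patterns are anchored with ^ and $, "^(deploy.*)$" only matches Pod names that start with deploy; a Pod named demo-deploy-abc would not be selected.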

5. 查看日志上报(首次需重启业务)

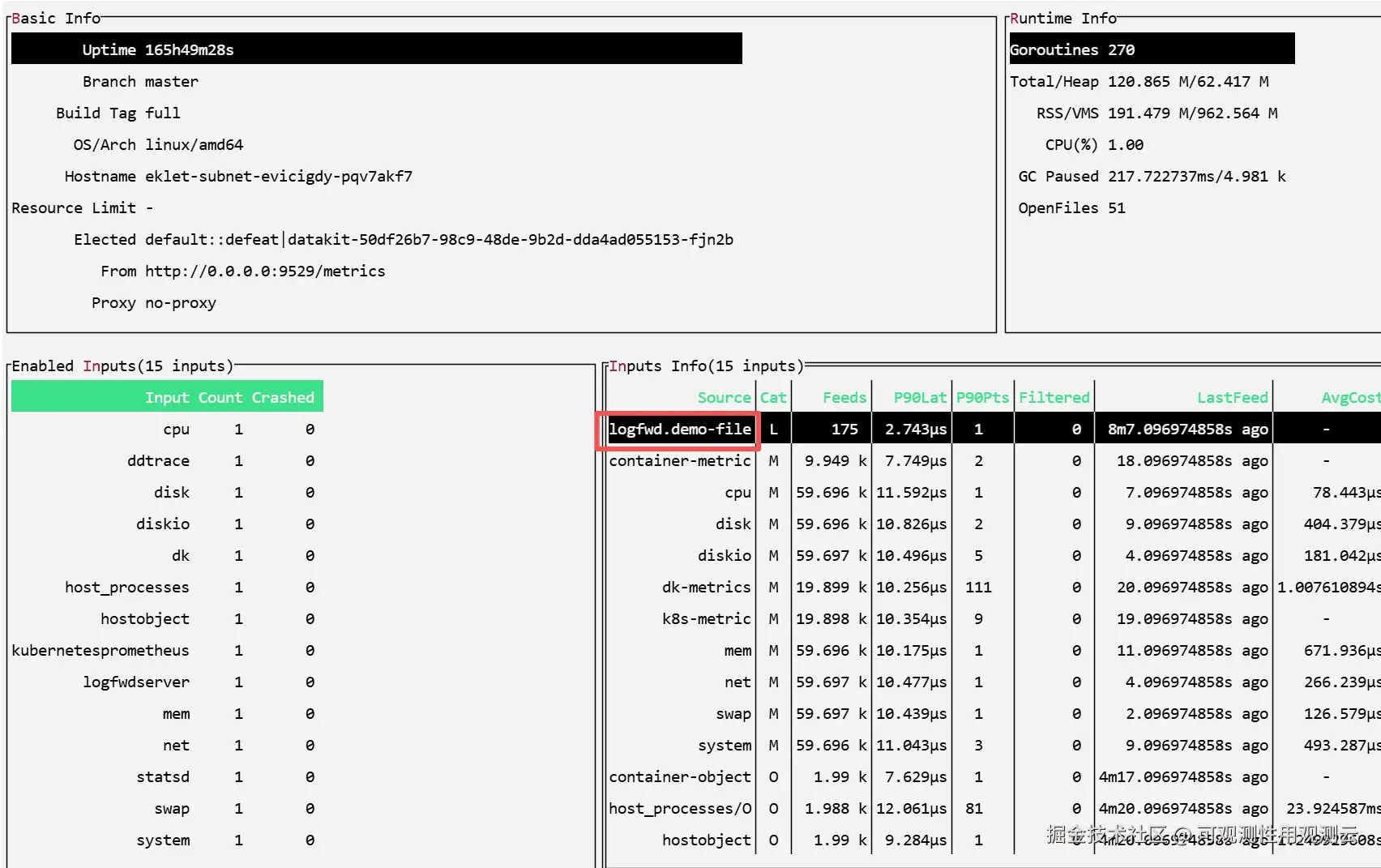

- 在 DataKit 容器内,通过"datakit monitor"命令查看日志上报:

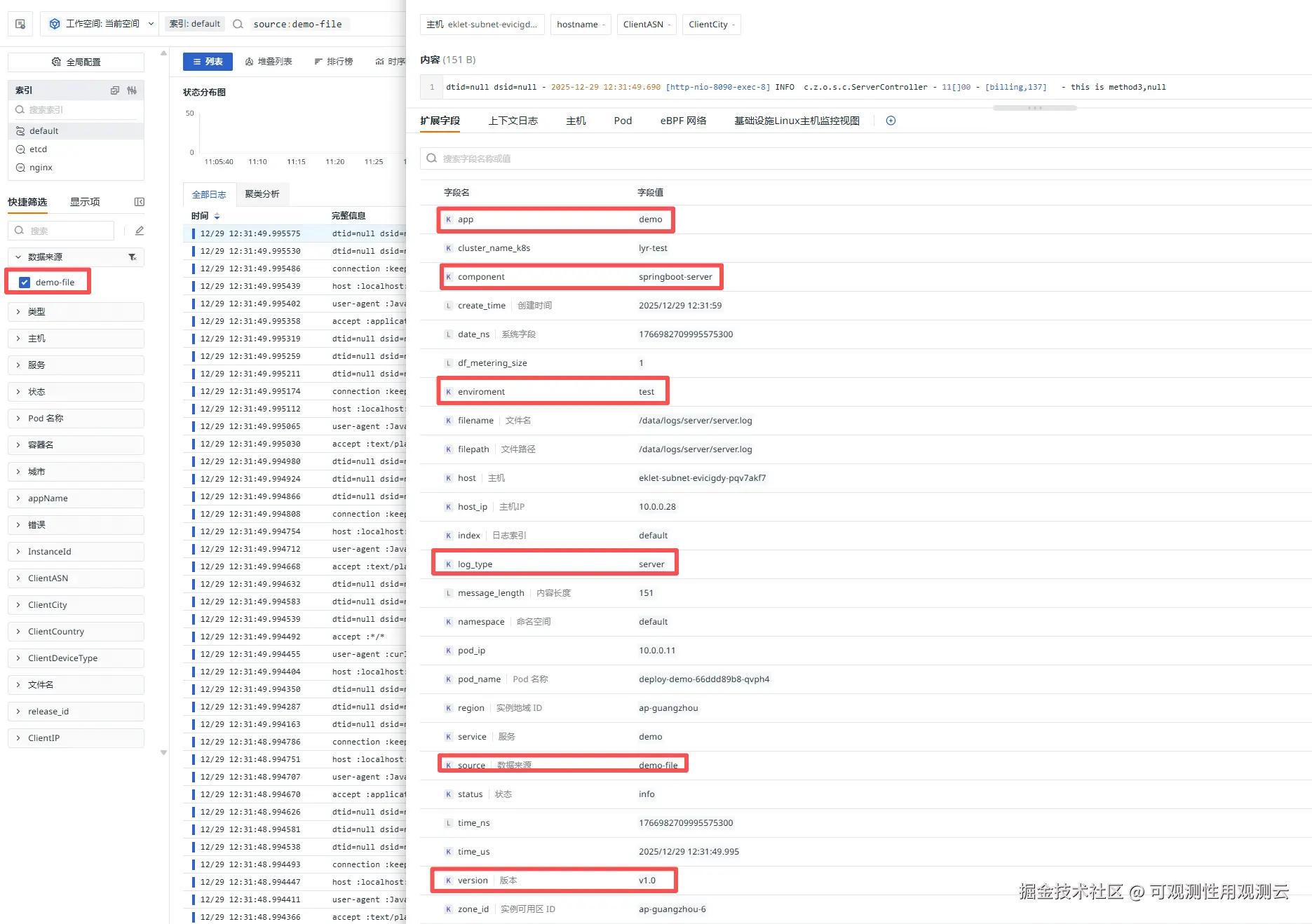

- 容器内日志如下图,数据成功上报到观测云,在观测云控制台筛选相关 source 为"demo-file"即可查看,并可以查看到 CRD 配置的相关字段展示:
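The extra fields visible on each log record come from two places in the CRD: the Pod labels listed in podTargetLabels and the static tags of each config entry. The sketch below shows one plausible way to combine them. It is an assumption for illustration: listed labels that the Pod does not carry are skipped, and static tags win on a name clash. DataKit's actual precedence is not stated in this article.

```python
from typing import Dict, List


def build_tags(
    pod_labels: Dict[str, str],
    pod_target_labels: List[str],
    config_tags: Dict[str, str],
) -> Dict[str, str]:
    """Combine selected Pod labels with the static tags of a config entry."""
    # Copy only the labels named in podTargetLabels that the Pod actually has.
    tags = {name: pod_labels[name] for name in pod_target_labels if name in pod_labels}
    # Assumed precedence: static tags override Pod labels with the same name.
    tags.update(config_tags)
    return tags
```

With Pod labels app=demo and version=v1 and the static tags from the example config, the record would carry app, version, log_type and component under these assumptions.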