Lab Overview

I. Background and Core Objectives

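MySQL Group Replication (MGR) is MySQL's official high-availability clustering solution. It supports multi-primary and single-primary modes and provides data synchronization and automatic failover. MySQL Router is a lightweight routing middleware that provides read/write splitting and load balancing behind a single access point. This lab has two core objectives: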

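- Build a 3-node MySQL Group Replication cluster in multi-primary mode, with data synchronized between nodes in near real time;

- Deploy MySQL Router to provide read/write splitting and load balancing for the cluster and to simplify client access.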

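II. Lab 1: Deploying MySQL Group Replication (MGR)

1. Preparation: Resetting the MySQL Nodes

To start from a clean environment, every MySQL node is first reset and re-initialized. Two methods are provided: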

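- Automated reset with Ansible: Ansible operates on the 3 MySQL nodes (172.25.254.10/20/30) in batch. It stops the MySQL service, removes the data directory, recreates it, and re-initializes MySQL. Passwordless privilege escalation is configured for Ansible to make batch operations more efficient;

- Manual reset: on each node, stop MySQL, clear the data, write a base my.cnf (core parameters such as server-id, GTID, and binlog format), then re-initialize MySQL. This suits small environments or debugging the automation scripts.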
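The only per-host difference in the base my.cnf is server-id. The following is a minimal Python sketch of that idea, assuming (as in this lab) that the last octet of each node's IP doubles as its server-id. The helper name `render_base_cnf` is hypothetical, and only a subset of the lab's settings is rendered.

```python
import ipaddress


def render_base_cnf(ip: str, datadir: str = "/data/mysql") -> str:
    """Render a base [mysqld] section for one node.

    Assumption: server-id is the last octet of the IPv4 address, which
    matches the 10/20/30 convention used in this lab.
    """
    server_id = int(ipaddress.IPv4Address(ip)) & 0xFF  # keep the last octet only
    lines = [
        "[mysqld]",
        f"datadir={datadir}",
        f"socket={datadir}/mysql.sock",
        f"server-id={server_id}",
        "log-bin=mysql-bin",
        "gtid_mode=ON",
        "enforce-gtid-consistency=ON",
        "binlog_format=ROW",
    ]
    return "\n".join(lines) + "\n"


for node_ip in ("172.25.254.10", "172.25.254.20", "172.25.254.30"):
    print(render_base_cnf(node_ip))
```

Deriving the value from the address is just one convention; any scheme works as long as every member ends up with a distinct server-id.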

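2. Core Group Replication Configuration

(1) Base node configuration

Apply the same configuration on all nodes:

- Hostname resolution (/etc/hosts), so the nodes can reach each other by name;

- Edit my.cnf to load the Group Replication plugin and set the group name (group_replication_group_name), the node's communication address (group_replication_local_address), the seed members (group_replication_group_seeds), and multi-primary mode (group_replication_single_primary_mode=OFF).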
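Among the Group Replication settings, only group_replication_local_address differs between nodes; the group name and the seed list must be identical everywhere. A minimal Python sketch of generating the fragment per node follows. The function name `render_gr_cnf` is hypothetical; the option names and values are the ones used in this lab.

```python
def render_gr_cnf(local_ip, member_ips, group_name, gr_port=33061):
    """Render the Group Replication lines appended to my.cnf for one node."""
    if local_ip not in member_ips:
        raise ValueError(f"{local_ip} is not one of the group members")
    # Every node lists all members (including itself) as seeds.
    seeds = ",".join(f"{ip}:{gr_port}" for ip in member_ips)
    lines = [
        "plugin_load_add='group_replication.so'",
        f'group_replication_group_name="{group_name}"',
        "group_replication_start_on_boot=off",
        f'group_replication_local_address="{local_ip}:{gr_port}"',
        f'group_replication_group_seeds="{seeds}"',
        "group_replication_bootstrap_group=off",
        "group_replication_single_primary_mode=OFF",  # multi-primary mode
    ]
    return "\n".join(lines) + "\n"


MEMBERS = ["172.25.254.10", "172.25.254.20", "172.25.254.30"]
GROUP = "aaaaaaaa-aaaa-aaaa-aaaa-aaaaaaaaaaaa"
print(render_gr_cnf("172.25.254.20", MEMBERS, GROUP))
```

For multi-primary deployments the MySQL documentation also describes group_replication_enforce_update_everywhere_checks; it is not set in this lab and is left out of the sketch.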

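(2) Cluster initialization (per node)

- First node (mysql-node1): after initialization, log in to MySQL, create the dedicated replication user rpl_user, and grant it replication, connection-administration, and related privileges; configure the recovery channel credentials (rpl_user); then bootstrap the group (group_replication_bootstrap_group=ON), which creates the group with this node as its first member.

- Remaining nodes (mysql-node2/3): create rpl_user with the same grants, configure the recovery channel, and start Group Replication. If START GROUP_REPLICATION fails with error 3092, running reset master to clear the node's local binary log and GTID history resolved it in this lab (a likely cause is a local transaction, such as the initial ALTER USER, being recorded in the node's GTID set before it joins). Once all 3 nodes report ONLINE, the cluster is complete.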
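The difference between the first node and the joining nodes is only the bootstrap flag around START GROUP_REPLICATION. The sketch below builds the statement sequence for either case as plain strings; it does not connect to a server. The function name `gr_start_statements` is hypothetical, and the default credentials are the lab's values.

```python
def gr_start_statements(bootstrap, user="rpl_user", password="lee"):
    """Return the SQL statements, in order, to start Group Replication on a node.

    bootstrap=True is for the first node only; bootstrapping a second node
    would create a separate group instead of joining the existing one.
    """
    stmts = [
        "CHANGE REPLICATION SOURCE TO "
        f"SOURCE_USER='{user}', SOURCE_PASSWORD='{password}' "
        "FOR CHANNEL 'group_replication_recovery'",
    ]
    if bootstrap:
        stmts.append("SET GLOBAL group_replication_bootstrap_group=ON")
    stmts.append(f"START GROUP_REPLICATION USER='{user}', PASSWORD='{password}'")
    if bootstrap:
        # Switch the flag off again so a later restart does not re-bootstrap.
        stmts.append("SET GLOBAL group_replication_bootstrap_group=OFF")
    stmts.append(
        "SELECT MEMBER_HOST, MEMBER_STATE "
        "FROM performance_schema.replication_group_members"
    )
    return stmts


for stmt in gr_start_statements(bootstrap=True):
    print(stmt + ";")
```

Calling `gr_start_statements(bootstrap=False)` yields the shorter sequence used on mysql-node2 and mysql-node3.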

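3. Cluster Functional Test

Run data operations (create a database, create a table, insert rows) on any node and verify that the other nodes receive the changes:

- On node1, create the timinglee database and the userlist table, and insert user1;

- On node2, confirm user1 is visible, then insert user2; node1 and node3 receive it;

- On node3, insert user3; all nodes return the complete data set, confirming that writes on any member replicate to the others in multi-primary mode.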

III. Lab 2: Deploying and Configuring MySQL Router

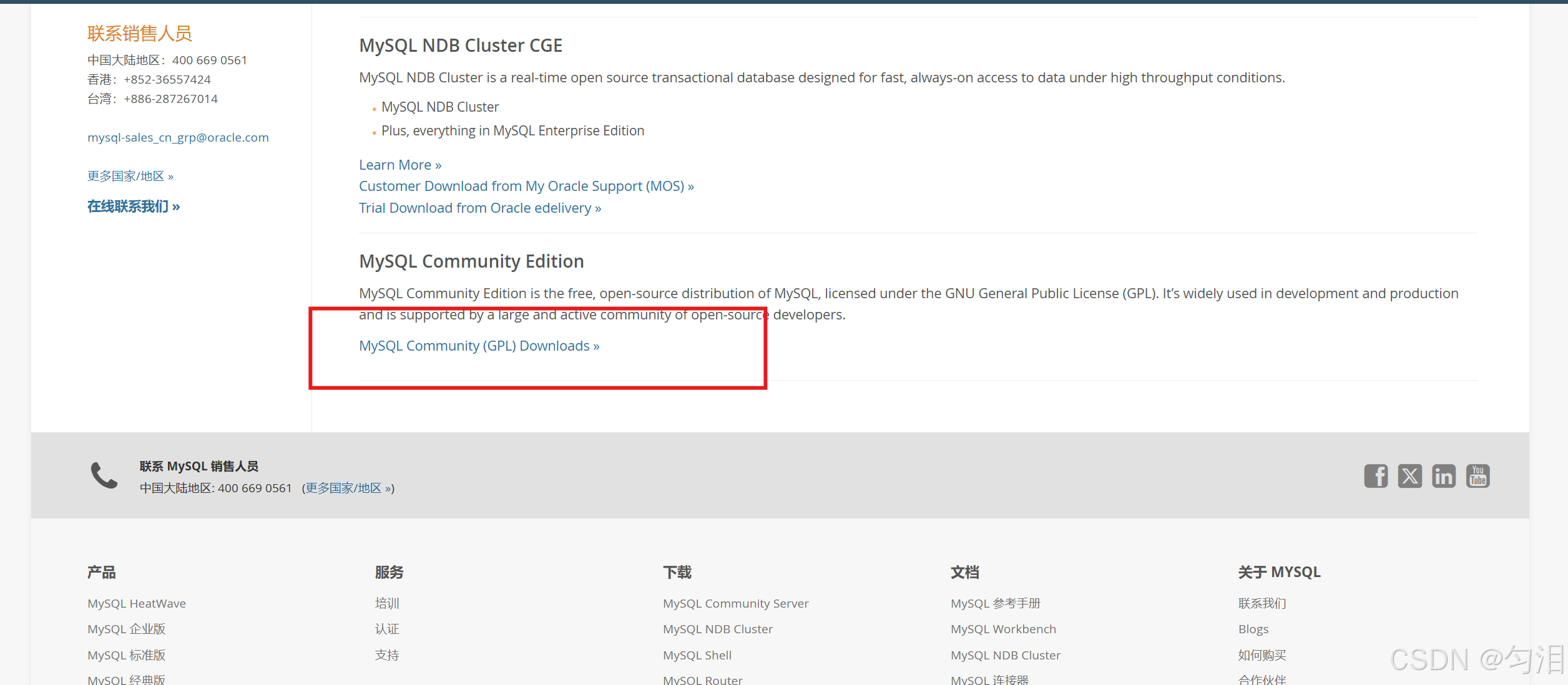

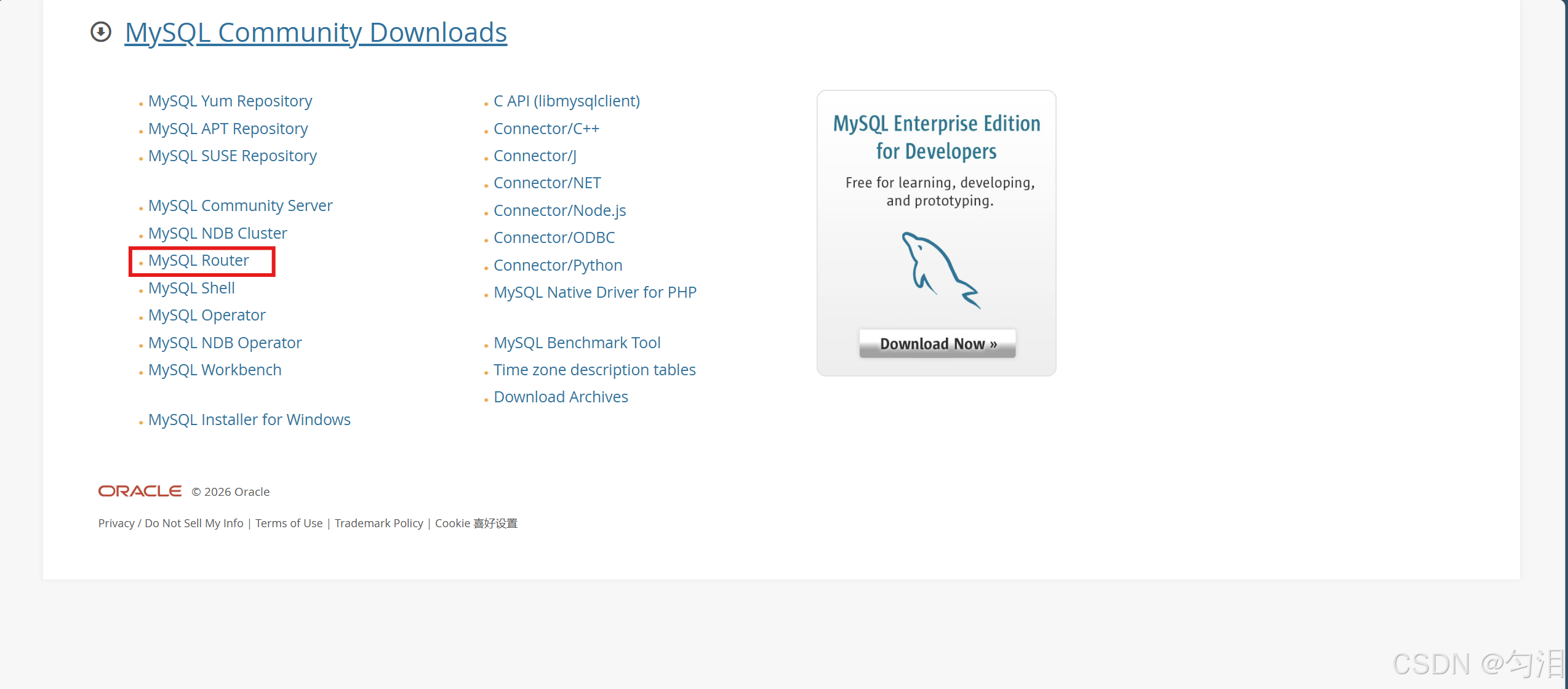

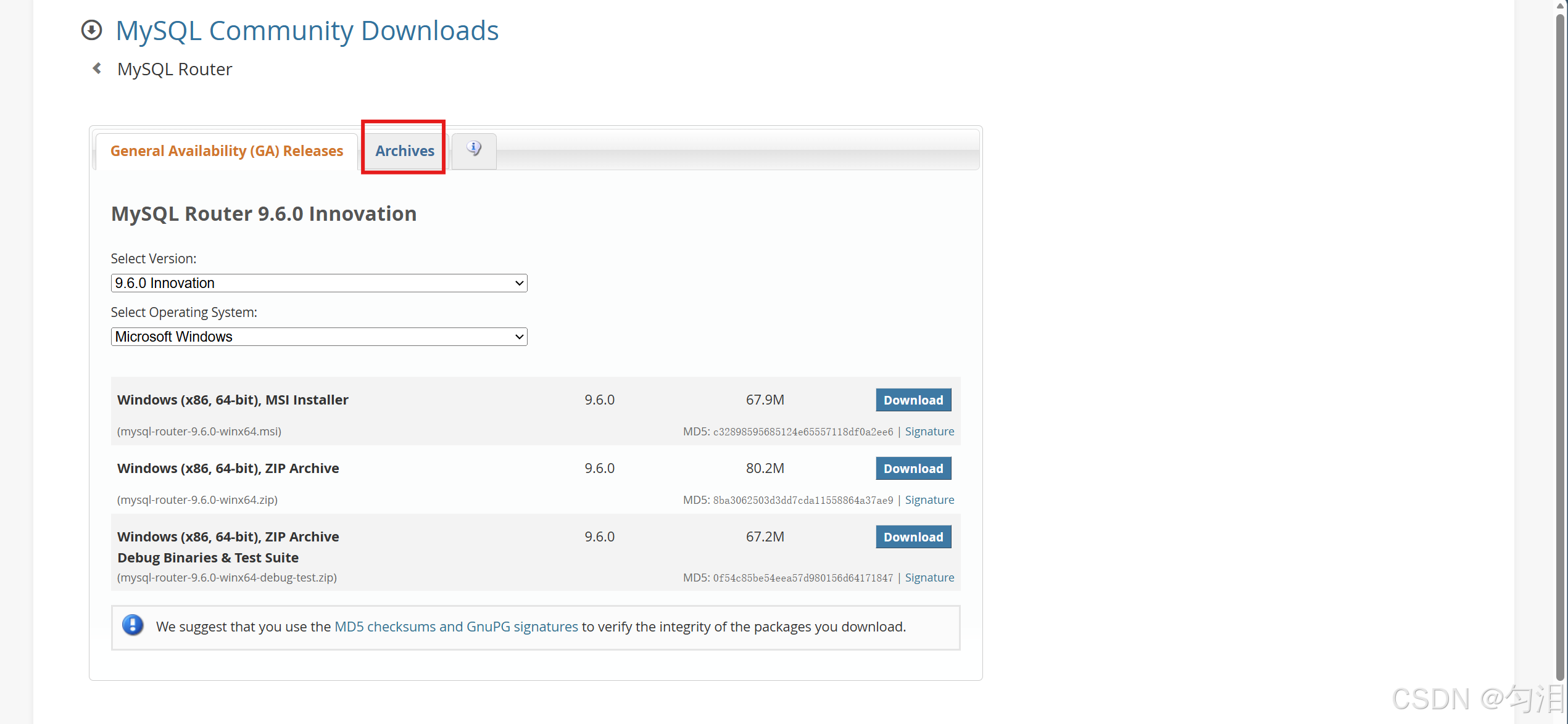

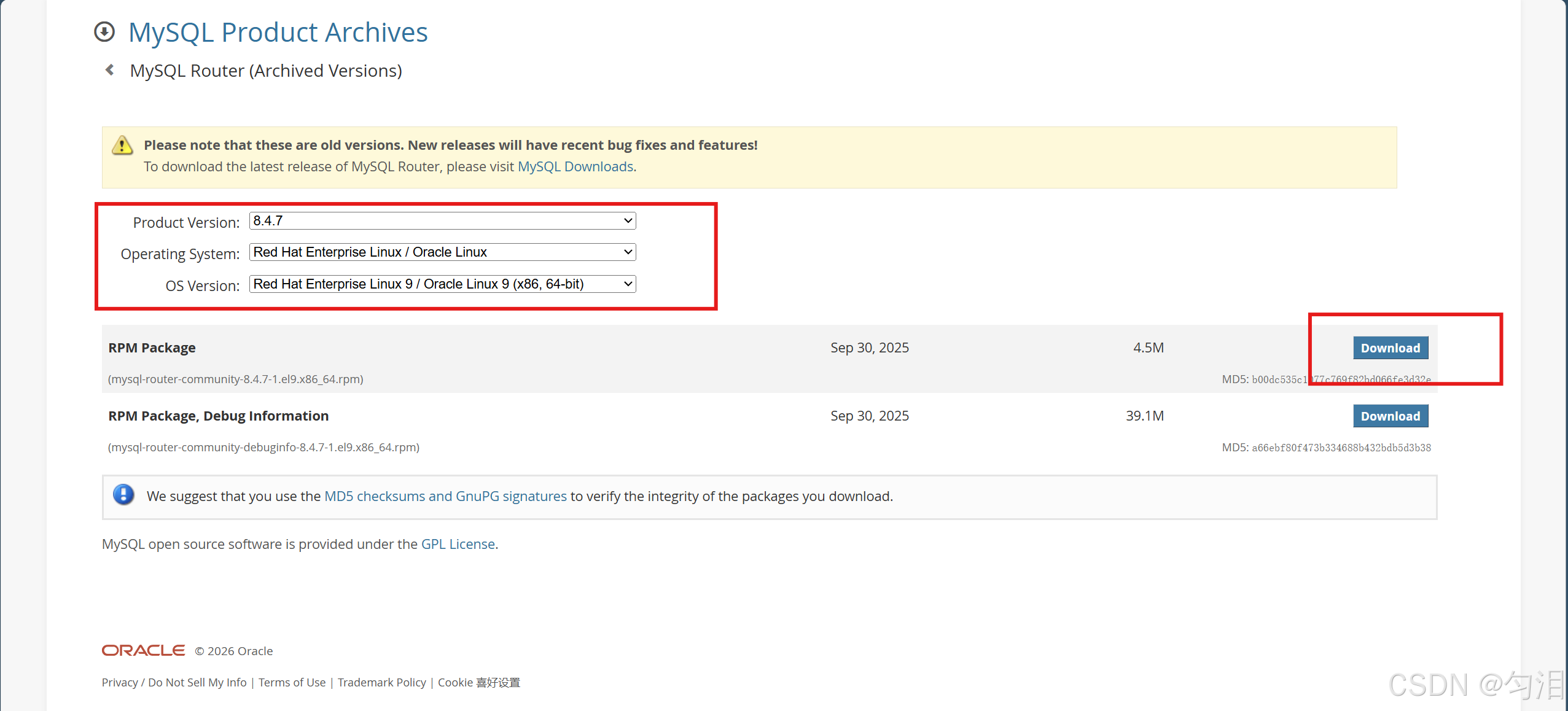

1. Software Deployment

- Download the MySQL Router package for EL9 (mysql-router-community-8.4.7-1.el9.x86_64.rpm);

- Install it with dnf and check the configuration file paths (main configuration file: /etc/mysqlrouter/mysqlrouter.conf).

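2. Core Routing Configuration

The configuration file defines two routing sections to implement read/write splitting:

| Section | Port | Purpose | Strategy | Destinations |
|---|---|---|---|---|
| [routing:ro] | 7001 | Read traffic | round-robin | All 3 MySQL nodes, spreading read load |
| [routing:rw] | 7002 | Write traffic | first-available | All 3 MySQL nodes, keeping writes available |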
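To make the difference between the two strategies concrete, here is a simplified Python model of how each one chooses a destination for a new connection. It is an illustration only, not MySQL Router's implementation; the class names and the `healthy` set are assumptions made for the sketch.

```python
class RoundRobin:
    """Rotate through the destinations, skipping any that are not healthy."""

    def __init__(self, destinations):
        self._destinations = list(destinations)
        self._next = 0

    def pick(self, healthy):
        for _ in range(len(self._destinations)):
            dest = self._destinations[self._next]
            self._next = (self._next + 1) % len(self._destinations)
            if dest in healthy:
                return dest
        return None  # no destination is reachable


class FirstAvailable:
    """Always use the first healthy destination in list order."""

    def __init__(self, destinations):
        self._destinations = list(destinations)

    def pick(self, healthy):
        for dest in self._destinations:
            if dest in healthy:
                return dest
        return None  # no destination is reachable


NODES = ["172.25.254.10:3306", "172.25.254.20:3306", "172.25.254.30:3306"]

ro = RoundRobin(NODES)
rw = FirstAvailable(reversed(NODES))  # the lab lists .30 first for the rw section
up = set(NODES)
print([ro.pick(up) for _ in range(4)])
print(rw.pick(up), rw.pick(up - {"172.25.254.30:3306"}))
```

In this model, read connections on port 7001 spread across all three nodes, while write connections on port 7002 stay on 172.25.254.30 until it becomes unavailable.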

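3. Functional Verification

- Allow root to log in remotely so that connections through Router can reach the cluster;

- Use netstat to confirm that Router is listening on ports 7001 and 7002;

- Connect from a client with mysql -h RouterIP -P7001 or -P7002 to verify round-robin for reads and first-available for writes;

- Run watch lsof -i :3306 on the MySQL nodes to monitor connections on port 3306 and observe how Router distributes them.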
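The listening check can also be done from a client machine without netstat. The following minimal Python sketch tests whether a TCP port accepts connections; the function name `port_is_listening` is hypothetical, and a successful connect only shows that something is listening, not that routing works end to end.

```python
import socket


def port_is_listening(host, port, timeout=1.0):
    """Return True if a TCP connection to host:port can be established."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and unreachable hosts.
        return False
```

For this lab one would call it for ports 7001 and 7002 against the Router host (172.25.254.40 in the final test command), for example `port_is_listening("172.25.254.40", 7001)`.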

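IV. Value and Summary

- High availability: in multi-primary mode every ONLINE member accepts reads and writes and data is synchronized automatically; a 3-node group can lose one member and keep serving traffic;

- Load balancing: MySQL Router separates reads and writes by port, spreading read connections round-robin and sending write connections to the first available node, which helps distribute load across the cluster;

- Ease of use: Router exposes a single entry point, so clients do not need to know the backend nodes;

- Automation: Ansible manages the nodes in batch, which speeds up deployment and maintenance and scales to larger environments.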
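Group Replication needs a majority of members to agree on changes, so the number of failures a group can absorb depends on its size. A minimal sketch of that arithmetic (the function name is hypothetical):

```python
def max_tolerated_failures(members):
    """Failures a group can absorb while a majority of members remains."""
    if members < 1:
        raise ValueError("a group needs at least one member")
    # Majority is members // 2 + 1; whatever is left over may fail.
    return (members - 1) // 2


for n in (1, 2, 3, 5, 7):
    print(n, "members ->", max_tolerated_failures(n), "failure(s) tolerated")
```

This is why three nodes is the usual minimum: one or two members tolerate no failures at all, and adding a fourth does not tolerate more than three do.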

MySQL Group Replication

Ansible

# Restore all nodes with Ansible

[root@mha ~]# cat > /etc/yum.repos.d/epel.repo <<EOF

> [epel]

> name = epel

> baseurl = https://mirrors.aliyun.com/epel-archive/9.6/Everything/x86_64/

> gpgcheck = 0

> EOF

[root@mha ~]# dnf install ansible -y

[root@mha ~]# ansible --version

ansible [core 2.14.18]

config file = /etc/ansible/ansible.cfg

configured module search path = ['/root/.ansible/plugins/modules', '/usr/share/ansible/plugins/modules']

ansible python module location = /usr/lib/python3.9/site-packages/ansible

ansible collection location = /root/.ansible/collections:/usr/share/ansible/collections

executable location = /usr/bin/ansible

python version = 3.9.21 (main, Feb 10 2025, 00:00:00) [GCC 11.5.0 20240719 (Red Hat 11.5.0-5)] (/usr/bin/python3)

jinja version = 3.1.2

libyaml = True

[root@mha ~]# useradd devops

[root@mha ~]# echo lee | passwd --stdin devops

Changing password for user devops.

passwd: all authentication tokens updated successfully.

[root@mha ~]# su - devops

[devops@mha ~]$ mkdir ansible

[devops@mha ~]$ cat >ansible.cfg <<EOF

> [defaults]

> inventory=./inventory

> remote_user=root

> host_key_checking=false

> [privilege_escalation]

> become=False

> EOF

[devops@mha ~]$ vim /home/devops/inventory

[mysql]

172.25.254.10

172.25.254.20

172.25.254.30

[mysql:vars]

ansible_ssh_user=root

ansible_ssh_pass=123456

ansible_ssh_port=22

[devops@mha ~]$ ansible mysql -m shell -a 'echo devops | passwd --stdin devops'

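172.25.254.20 | CHANGED | rc=0 >>

Changing password for user devops.

passwd: all authentication tokens updated successfully.

172.25.254.10 | CHANGED | rc=0 >>

Changing password for user devops.

passwd: all authentication tokens updated successfully.

172.25.254.30 | CHANGED | rc=0 >>

Changing password for user devops.

passwd: all authentication tokens updated successfully.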

[devops@mha ~]$ ansible mysql -m shell -a 'echo "devops ALL=(ALL) NOPASSWD: ALL" >> /etc/sudoers'

172.25.254.30 | CHANGED | rc=0 >>

172.25.254.20 | CHANGED | rc=0 >>

172.25.254.10 | CHANGED | rc=0 >>

[devops@mha ~]$ ansible all -m file -a 'path=/home/devops/.ssh owner=devops group=devops mode="0700" state=directory'

172.25.254.10 | CHANGED => {

"ansible_facts": {

"discovered_interpreter_python": "/usr/bin/python3"

},

"changed": true,

"gid": 1001,

"group": "devops",

"mode": "0700",

"owner": "devops",

"path": "/home/devops/.ssh",

"size": 6,

"state": "directory",

"uid": 1001

}

172.25.254.30 | CHANGED => {

"ansible_facts": {

"discovered_interpreter_python": "/usr/bin/python3"

},

"changed": true,

"gid": 1001,

"group": "devops",

"mode": "0700",

"owner": "devops",

"path": "/home/devops/.ssh",

"size": 6,

"state": "directory",

"uid": 1001

}

172.25.254.20 | CHANGED => {

"ansible_facts": {

"discovered_interpreter_python": "/usr/bin/python3"

},

"changed": true,

"gid": 1001,

"group": "devops",

"mode": "0700",

"owner": "devops",

"path": "/home/devops/.ssh",

"size": 6,

"state": "directory",

"uid": 1001

}

[devops@mha ~]$ ansible all -m copy -a 'src=/home/devops/.ssh/authorized_keys dest=/home/devops/.ssh/authorized_keys owner=devops group=devops mode="0600"'

172.25.254.30 | SUCCESS => {

"ansible_facts": {

"discovered_interpreter_python": "/usr/bin/python3"

},

"changed": false,

"checksum": "8fb882b5c0c16dfc635265d0c8f7e54cc9a792c6",

"dest": "/home/devops/.ssh/authorized_keys",

"gid": 1001,

"group": "devops",

"mode": "0600",

"owner": "devops",

"path": "/home/devops/.ssh/authorized_keys",

"size": 392,

"state": "file",

"uid": 1001

}

172.25.254.10 | SUCCESS => {

"ansible_facts": {

"discovered_interpreter_python": "/usr/bin/python3"

},

"changed": false,

"checksum": "8fb882b5c0c16dfc635265d0c8f7e54cc9a792c6",

"dest": "/home/devops/.ssh/authorized_keys",

"gid": 1001,

"group": "devops",

"mode": "0600",

"owner": "devops",

"path": "/home/devops/.ssh/authorized_keys",

"size": 392,

"state": "file",

"uid": 1001

}

172.25.254.20 | SUCCESS => {

"ansible_facts": {

"discovered_interpreter_python": "/usr/bin/python3"

},

"changed": false,

"checksum": "8fb882b5c0c16dfc635265d0c8f7e54cc9a792c6",

"dest": "/home/devops/.ssh/authorized_keys",

"gid": 1001,

"group": "devops",

"mode": "0600",

"owner": "devops",

"path": "/home/devops/.ssh/authorized_keys",

"size": 392,

"state": "file",

"uid": 1001

}

[devops@mha ~]$ cat >ansible.cfg <<EOF

[defaults]

inventory=./inventory

remote_user=devops

host_key_checking=false

[privilege_escalation]

become=True

become_ask_pass=False

become_method=sudo

become_user=root

EOF

[devops@mha ~]$ ansible all -m shell -a 'whoami'

172.25.254.10 | CHANGED | rc=0 >>

root

172.25.254.20 | CHANGED | rc=0 >>

root

172.25.254.30 | CHANGED | rc=0 >>

root

[devops@mha ~]$ vim clear_mysql.yml

- name: reset mysql
  hosts: mysql
  tasks:
    - name: stop mysql
      shell: '/etc/init.d/mysqld stop'
      ignore_errors: yes

    - name: delete mysql data
      file:
        path: /data/mysql
        state: absent

    - name: create data directory
      file:
        path: /data/mysql
        state: directory
        owner: mysql
        group: mysql

    - name: initialize mysql
      shell: '/usr/local/mysql/bin/mysqld --initialize --user=mysql'

[devops@mha ~]$ ansible-playbook clear_mysql.yml -vv | grep password

Manual restore

# Initialize data on all nodes

[root@mysql-node1 ~]# /etc/init.d/mysqld stop

[root@mysql-node1 ~]# rm -rf /data/mysql/*

[root@mysql-node1 ~]# cat > /etc/my.cnf <<EOF

[mysqld]

datadir=/data/mysql

socket=/data/mysql/mysql.sock

symbolic-links=0

# server-id must be unique per host: 10 on node1, 20 on node2, 30 on node3

server-id=10

log-bin=mysql-bin

gtid_mode=ON

enforce-gtid-consistency=ON

default_authentication_plugin=mysql_native_password

log_slave_updates=ON

binlog_format=ROW

binlog_checksum=NONE

disabled_storage_engines="MyISAM,BLACKHOLE,FEDERATED,ARCHIVE,MEMORY"

EOF

[root@mysql-node1 ~]# mysqld --user=mysql --initialize

Deploying Group Replication

# Configure name resolution on all MySQL nodes

[root@mysql-node1 ~]# cat > /etc/hosts <<EOF

127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4

::1 localhost localhost.localdomain localhost6 localhost6.localdomain6

172.25.254.10 mysql-node1

172.25.254.20 mysql-node2

172.25.254.30 mysql-node3

EOF

[root@mysql-node1 ~]# cat >> /etc/my.cnf <<EOF

plugin_load_add='group_replication.so'

group_replication_group_name="aaaaaaaa-aaaa-aaaa-aaaa-aaaaaaaaaaaa"

group_replication_start_on_boot=off

group_replication_local_address="172.25.254.10:33061" # on the other two hosts, change this to the host's own IP

group_replication_group_seeds="172.25.254.10:33061,172.25.254.20:33061,172.25.254.30:33061"

group_replication_bootstrap_group=off

group_replication_single_primary_mode=OFF

EOF

# Configure Group Replication on the first host

[root@mysql-node1 ~]# /etc/init.d/mysqld start

[root@mysql-node1 ~]# mysql -uroot -p'lsyVh+etR1ht'

mysql> alter user root@localhost identified by 'lee';

Query OK, 0 rows affected (0.04 sec)

mysql> SET SQL_LOG_BIN=0;

Query OK, 0 rows affected (0.00 sec)

mysql> CREATE USER rpl_user@'%' IDENTIFIED BY 'lee';

Query OK, 0 rows affected (0.00 sec)

mysql> GRANT REPLICATION SLAVE ON *.* TO rpl_user@'%';

Query OK, 0 rows affected (0.00 sec)

mysql> GRANT CONNECTION_ADMIN ON *.* TO rpl_user@'%';

Query OK, 0 rows affected (0.00 sec)

mysql> GRANT BACKUP_ADMIN ON *.* TO rpl_user@'%';

Query OK, 0 rows affected (0.00 sec)

mysql> GRANT GROUP_REPLICATION_STREAM ON *.* TO rpl_user@'%';

Query OK, 0 rows affected (0.00 sec)

mysql> FLUSH PRIVILEGES;

Query OK, 0 rows affected (0.00 sec)

mysql> SET SQL_LOG_BIN=1;

Query OK, 0 rows affected (0.00 sec)

mysql> CHANGE REPLICATION SOURCE TO SOURCE_USER='rpl_user', SOURCE_PASSWORD='lee' FOR CHANNEL 'group_replication_recovery';

Query OK, 0 rows affected, 2 warnings (0.01 sec)

mysql> SHOW PLUGINS; # check that the group_replication plugin is ACTIVE

| group_replication | ACTIVE | GROUP REPLICATION | group_replication.so | GPL |

mysql> SET GLOBAL group_replication_bootstrap_group=ON;

Query OK, 0 rows affected (0.00 sec)

mysql> START GROUP_REPLICATION USER='rpl_user', PASSWORD='lee';

Query OK, 0 rows affected (1.10 sec)

mysql> SET GLOBAL group_replication_bootstrap_group=OFF;

Query OK, 0 rows affected (0.00 sec)

mysql> SELECT * FROM performance_schema.replication_group_members;

+---------------------------+--------------------------------------+-------------+-------------+--------------+-------------+----------------+----------------------------+

| CHANNEL_NAME | MEMBER_ID | MEMBER_HOST | MEMBER_PORT | MEMBER_STATE | MEMBER_ROLE | MEMBER_VERSION | MEMBER_COMMUNICATION_STACK |

+---------------------------+--------------------------------------+-------------+-------------+--------------+-------------+----------------+----------------------------+

| group_replication_applier | ac3d6eaf-1a0a-11f1-9efa-000c29f4a60c | mysql-node1 | 3306 | ONLINE | PRIMARY | 8.3.0 | XCom |

+---------------------------+--------------------------------------+-------------+-------------+--------------+-------------+----------------+----------------------------+

1 row in set (0.00 sec)

# Configure Group Replication on the remaining hosts

[root@mysql-node2 ~]# /etc/init.d/mysqld start

[root@mysql-node2 ~]# mysql -uroot -p'XkP<Uaa:9so5'

mysql> alter user root@localhost identified by 'lee';

Query OK, 0 rows affected (0.00 sec)

mysql> SET SQL_LOG_BIN=0;

Query OK, 0 rows affected (0.00 sec)

mysql> CREATE USER rpl_user@'%' IDENTIFIED BY 'lee';

Query OK, 0 rows affected (0.00 sec)

mysql> GRANT REPLICATION SLAVE ON *.* TO rpl_user@'%';

Query OK, 0 rows affected (0.00 sec)

mysql> GRANT CONNECTION_ADMIN ON *.* TO rpl_user@'%';

Query OK, 0 rows affected (0.00 sec)

mysql> GRANT BACKUP_ADMIN ON *.* TO rpl_user@'%';

Query OK, 0 rows affected (0.00 sec)

mysql> GRANT GROUP_REPLICATION_STREAM ON *.* TO rpl_user@'%';

Query OK, 0 rows affected (0.00 sec)

mysql> SET SQL_LOG_BIN=1;

Query OK, 0 rows affected (0.00 sec)

mysql> CHANGE REPLICATION SOURCE TO SOURCE_USER='rpl_user',SOURCE_PASSWORD='lee' FOR CHANNEL 'group_replication_recovery';

Query OK, 0 rows affected, 2 warnings (0.00 sec)

mysql> START GROUP_REPLICATION USER='rpl_user', PASSWORD='lee';

ERROR 3092 (HY000): The server is not configured properly to be an active member of the group. Please see more details on error log. # if this error appears, reset the node's local binary log and GTID state

mysql> reset master; # this command resolved the error above

Query OK, 0 rows affected, 1 warning (0.04 sec)

mysql> START GROUP_REPLICATION USER='rpl_user', PASSWORD='lee';

Query OK, 0 rows affected (7.94 sec)

mysql> SELECT * FROM performance_schema.replication_group_members;

+---------------------------+--------------------------------------+-------------+-------------+--------------+-------------+----------------+----------------------------+

| CHANNEL_NAME | MEMBER_ID | MEMBER_HOST | MEMBER_PORT | MEMBER_STATE | MEMBER_ROLE | MEMBER_VERSION | MEMBER_COMMUNICATION_STACK |

+---------------------------+--------------------------------------+-------------+-------------+--------------+-------------+----------------+----------------------------+

| group_replication_applier | ac3d6eaf-1a0a-11f1-9efa-000c29f4a60c | mysql-node1 | 3306 | ONLINE | PRIMARY | 8.3.0 | XCom |

| group_replication_applier | e0b37b20-1a0b-11f1-a62c-000c29e84b64 | mysql-node2 | 3306 | ONLINE | PRIMARY | 8.3.0 | XCom |

+---------------------------+--------------------------------------+-------------+-------------+--------------+-------------+----------------+----------------------------+

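2 rows in set (0.00 sec) # members shown as ONLINE indicate success

# Repeat the node2 steps on mysql-node3, then check the group membership

[root@mysql-node3 ~]#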

mysql> SELECT * FROM performance_schema.replication_group_members;

+---------------------------+--------------------------------------+-------------+-------------+--------------+-------------+----------------+----------------------------+

| CHANNEL_NAME | MEMBER_ID | MEMBER_HOST | MEMBER_PORT | MEMBER_STATE | MEMBER_ROLE | MEMBER_VERSION | MEMBER_COMMUNICATION_STACK |

+---------------------------+--------------------------------------+-------------+-------------+--------------+-------------+----------------+----------------------------+

| group_replication_applier | 4a17e9b8-1a23-11f1-b9bc-000c295fc08e | mysql-node1 | 3306 | ONLINE | PRIMARY | 8.3.0 | XCom |

| group_replication_applier | 813a67e4-1a23-11f1-b861-000c298c338f | mysql-node2 | 3306 | ONLINE | PRIMARY | 8.3.0 | XCom |

| group_replication_applier | 91be3fa4-1a23-11f1-8120-000c29a83238 | mysql-node3 | 3306 | ONLINE | PRIMARY | 8.3.0 | XCom |

+---------------------------+--------------------------------------+-------------+-------------+--------------+-------------+----------------+----------------------------+

3 rows in set (0.00 sec)

Testing

# Verify that every node can read and write and that data is synchronized

# On node1

mysql> create database timinglee;

Query OK, 1 row affected (0.00 sec)

mysql> CREATE TABLE timinglee.userlist (

-> username VARCHAR(10) PRIMARY KEY NOT NULL,

-> password VARCHAR(50) NOT NULL

-> );

Query OK, 0 rows affected (0.05 sec)

mysql> INSERT INTO timinglee.userlist VALUES ('user1','111');

Query OK, 1 row affected (0.01 sec)

mysql> select * from timinglee.userlist;

+----------+----------+

| username | password |

+----------+----------+

| user1 | 111 |

+----------+----------+

1 row in set (0.00 sec)

# On node2: check the data, then insert a row

mysql> select * from timinglee.userlist;

+----------+----------+

| username | password |

+----------+----------+

| user1 | 111 |

+----------+----------+

1 row in set (0.01 sec)

mysql> insert into timinglee.userlist values ('user2','222');

Query OK, 1 row affected (0.01 sec)

mysql> select * from timinglee.userlist;

+----------+----------+

| username | password |

+----------+----------+

| user1 | 111 |

| user2 | 222 |

+----------+----------+

2 rows in set (0.00 sec)

# On node3: check the data, then insert a row

mysql> select * from timinglee.userlist;

+----------+----------+

| username | password |

+----------+----------+

| user1 | 111 |

| user2 | 222 |

+----------+----------+

2 rows in set (0.00 sec)

mysql> insert into timinglee.userlist values ('user3','333');

Query OK, 1 row affected (0.00 sec)

mysql> select * from timinglee.userlist;

+----------+----------+

| username | password |

+----------+----------+

| user1 | 111 |

| user2 | 222 |

| user3 | 333 |

+----------+----------+

3 rows in set (0.00 sec)

# On node1 and node2: check the data above

mysql> select * from timinglee.userlist;

+----------+----------+

| username | password |

+----------+----------+

| user1 | 111 |

| user2 | 222 |

| user3 | 333 |

+----------+----------+

3 rows in set (0.00 sec)

mysql> select * from timinglee.userlist;

+----------+----------+

| username | password |

+----------+----------+

| user1 | 111 |

| user2 | 222 |

| user3 | 333 |

+----------+----------+

3 rows in set (0.00 sec)

MySQL Router

Download the software

[root@mysql-router ~]# wget https://downloads.mysql.com/archives/get/p/41/file/mysql-router-community-8.4.7-1.el9.x86_64.rpm

--2026-03-08 11:32:05-- https://downloads.mysql.com/archives/get/p/41/file/mysql-router-community-8.4.7-1.el9.x86_64.rpm

Resolving downloads.mysql.com (downloads.mysql.com)... 23.51.143.41, 2600:140e:6:d97::2e31, 2600:140e:6:d94::2e31

Connecting to downloads.mysql.com (downloads.mysql.com)|23.51.143.41|:443... connected.

HTTP request sent, awaiting response... 302 Moved Temporarily

Location: https://cdn.mysql.com/archives/mysql-router/mysql-router-community-8.4.7-1.el9.x86_64.rpm [following]

--2026-03-08 11:32:06-- https://cdn.mysql.com/archives/mysql-router/mysql-router-community-8.4.7-1.el9.x86_64.rpm

Resolving cdn.mysql.com (cdn.mysql.com)... 23.42.125.160, 2600:140e:6:daf::1d68, 2600:140e:6:d9d::1d68

Connecting to cdn.mysql.com (cdn.mysql.com)|23.42.125.160|:443... connected.

HTTP request sent, awaiting response... 200 OK

Length: 4737377 (4.5M) [application/x-redhat-package-manager]

Saving to: "mysql-router-community-8.4.7-1.el9.x86_64.rpm"

mysql-router-communit 100%[=======================>] 4.52M 3.25MB/s in 1.4s

2026-03-08 11:32:09 (3.25 MB/s) - "mysql-router-community-8.4.7-1.el9.x86_64.rpm" saved [4737377/4737377]

Install MySQL Router

[root@mysql-router ~]# dnf install mysql-router-community-8.4.7-1.el9.x86_64.rpm -y

MySQL Router configuration files

[root@mysql-router ~]# rpm -qc mysql-router-community

/etc/logrotate.d/mysqlrouter # log rotation and truncation policy

/etc/mysqlrouter/mysqlrouter.conf # main configuration file

[root@mysql-router ~]# systemctl status mysqlrouter.service # service unit (startup script)

Configuring MySQL Router

[root@mysql-router ~]# vim /etc/mysqlrouter/mysqlrouter.conf

[routing:ro]

bind_address = 0.0.0.0

bind_port = 7001

destinations = 172.25.254.10:3306,172.25.254.20:3306,172.25.254.30:3306

routing_strategy = round-robin

[routing:rw]

bind_address = 0.0.0.0

bind_port = 7002

destinations = 172.25.254.30:3306,172.25.254.20:3306,172.25.254.10:3306

routing_strategy = first-available

[root@mysql-router ~]# systemctl enable --now mysqlrouter.service

Created symlink /etc/systemd/system/multi-user.target.wants/mysqlrouter.service → /usr/lib/systemd/system/mysqlrouter.service.

[root@mysql-router ~]# netstat -antlupe | grep mysql

tcp 0 0 0.0.0.0:7001 0.0.0.0:* LISTEN 991 176991 39587/mysqlrouter

tcp 0 0 0.0.0.0:7002 0.0.0.0:* LISTEN 991 176009 39587/mysqlrouter

Testing

# On any one of the MySQL nodes, allow root to log in remotely

[root@mysql-node1 ~]# mysql -uroot -plee

mysql> CREATE USER root@'%' identified by 'lee';

Query OK, 0 rows affected (0.00 sec)

mysql> GRANT ALL ON *.* TO root@'%';

Query OK, 0 rows affected (0.00 sec)

mysql> quit

Bye

[root@mysql-node1 ~]# mysql -uroot -plee -h172.25.254.10

mysql> quit

Bye

[root@mysql-node1 ~]# mysql -uroot -plee -h172.25.254.20

mysql> quit

Bye

[root@mysql-node1 ~]# mysql -uroot -plee -h172.25.254.30

mysql> quit

# Watch the scheduling effect (run on each of the three MySQL nodes)

[root@mysql-node1 ~]# watch -n1 lsof -i :3306

COMMAND PID USER FD TYPE DEVICE SIZE/OFF NODE NAME

mysqld 9879 mysql 22u IPv6 56697 0t0 TCP *:mysql (LISTEN)

# Test the effect

[root@mysql-node1 ~]# mysql -uroot -plee -h172.25.254.40 -P7002