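llama.cpp does not publish official aarch64 binaries, so it normally has to be compiled from source. Fortunately, Termux already ships a prebuilt package.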

The steps below follow the approach from the article on accelerating LLM inference with Vulkan on an Android phone.

1. Install the llama-cpp package in Termux

~ $ apt install llama-cpp

Reading package lists... Done

Building dependency tree... Done

Reading state information... Done

E: Unable to locate package llama-cpp

~ $ apt update

Get:1 https://mirrors.tuna.tsinghua.edu.cn/termux/apt/termux-main stable InRelease [14.0 kB]

Get:2 https://mirrors.tuna.tsinghua.edu.cn/termux/apt/termux-main stable/main aarch64 Packages [542 kB]

Fetched 556 kB in 1s (425 kB/s)

Reading package lists... Done

Building dependency tree... Done

Reading state information... Done

83 packages can be upgraded. Run 'apt list --upgradable' to see them.

~ $ apt install llama-cpp

Reading package lists... Done

Building dependency tree... Done

Reading state information... Done

The following additional packages will be installed:

libandroid-spawn

Suggested packages:

llama-cpp-backend-vulkan llama-cpp-backend-opencl

The following NEW packages will be installed:

libandroid-spawn llama-cpp

0 upgraded, 2 newly installed, 0 to remove and 83 not upgraded.

Need to get 9927 kB of archives.

After this operation, 99.2 MB of additional disk space will be used.

Do you want to continue? [Y/n]

Get:1 https://mirrors.tuna.tsinghua.edu.cn/termux/apt/termux-main stable/main aarch64 libandroid-spawn aarch64 0.3 [15.2 kB]

Get:2 https://mirrors.tuna.tsinghua.edu.cn/termux/apt/termux-main stable/main aarch64 llama-cpp aarch64 0.0.0-b8184-0 [9911 kB]

Fetched 9927 kB in 2s (4059 kB/s)

Selecting previously unselected package libandroid-spawn.

(Reading database ... 6651 files and directories currently installed.)

Preparing to unpack .../libandroid-spawn_0.3_aarch64.deb ...

Unpacking libandroid-spawn (0.3) ...

Selecting previously unselected package llama-cpp.

Preparing to unpack .../llama-cpp_0.0.0-b8184-0_aarch64.deb ...

Unpacking llama-cpp (0.0.0-b8184-0) ...

Setting up libandroid-spawn (0.3) ...

Setting up llama-cpp (0.0.0-b8184-0) ...

If the package cannot be found, run apt update first to refresh the package index. To keep things simple, skip llama-cpp-backend-vulkan for now and run llama-cpp on the CPU.
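The install step can be wrapped so that it refreshes the index before installing and does nothing when the tool is already present. This is only a minimal sketch: `have_cmd` and `install_llama_cpp` are hypothetical helper names, and the package name is the one shown in the apt output above.

```shell
# have_cmd NAME: succeed if NAME is found on PATH.
have_cmd() {
    command -v "$1" >/dev/null 2>&1
}

# install_llama_cpp: refresh the package index, then install llama-cpp,
# but only when llama-cli is not already available.
install_llama_cpp() {
    if have_cmd llama-cli; then
        echo "llama-cli is already installed"
        return 0
    fi
    apt update && apt install -y llama-cpp
}
```

Calling `install_llama_cpp` in a Termux session should then reproduce the two manual commands from the transcript in one step.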

2. Download the Qwen3.5-0.8B-UD-Q4_K_XL.gguf model

~ $ mkdir model

~ $ cd model

~/model $ wget -c https://hf-mirror.com/unsloth/Qwen3.5-0.8B-GGUF/resolve/main/Qwen3.5-0.8B-UD-Q4_K_XL.gguf

The program wget is not installed. Install it by executing:

pkg install wget

~/model $ curl -LO https://hf-mirror.com/unsloth/Qwen3.5-0.8B-GGUF/resolve/main/Qwen3.5-0.8B-UD-Q4_K_XL.gguf

% Total % Received % Xferd Average Speed Time Time Time Current

Dload Upload Total Spent Left Speed

100 1391 0 1391 0 0 1771 0 --:--:-- --:--:-- --:--:-- 1771

100 532M 100 532M 0 0 4147k 0 0:02:11 0:02:11 --:--:-- 5141k

This model is Q4-quantized: it takes roughly half the disk space of the original while retaining similar capability.
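Since wget was missing in the session above and curl had to be used instead, the download can be written to use whichever tool is present. The sketch below assumes nothing beyond standard wget and curl options; `pick_downloader` and `fetch` are hypothetical helper names.

```shell
# pick_downloader: print the first available download tool, or fail.
pick_downloader() {
    if command -v wget >/dev/null 2>&1; then
        echo wget
    elif command -v curl >/dev/null 2>&1; then
        echo curl
    else
        return 1
    fi
}

# fetch URL: resumable download of URL into the current directory.
fetch() {
    case "$(pick_downloader)" in
        wget) wget -c "$1" ;;              # -c resumes a partial file
        curl) curl -L -C - -O "$1" ;;      # -L follows redirects, -C - resumes
        *)    echo "neither wget nor curl is installed" >&2; return 1 ;;
    esac
}
```

For example, `fetch https://hf-mirror.com/unsloth/Qwen3.5-0.8B-GGUF/resolve/main/Qwen3.5-0.8B-UD-Q4_K_XL.gguf` would fetch the same file as above, and can be re-run to resume an interrupted transfer.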

3. Load the model and chat with it using the llama-cli interactive tool

~/model $ lama-cli -m Qwen3.5-0.8B-UD-Q4_K_XL.gguf --ctx-size 16384 -cnv

No command lama-cli found, did you mean:

Command alass-cli in package alass

Command ani-cli in package ani-cli

~/model $ llama-cli -m Qwen3.5-0.8B-UD-Q4_K_XL.gguf --ctx-size 16384 -cnv

load_backend: loaded CPU backend from /data/data/com.termux/files/usr/bin/../lib/libggml-cpu.so

Loading model...

▄▄ ▄▄

██ ██

██ ██ ▀▀█▄ ███▄███▄ ▀▀█▄ ▄████ ████▄ ████▄

██ ██ ▄█▀██ ██ ██ ██ ▄█▀██ ██ ██ ██ ██ ██

██ ██ ▀█▄██ ██ ██ ██ ▀█▄██ ██ ▀████ ████▀ ████▀

██ ██

▀▀ ▀▀

build : b0-unknown

model : Qwen3.5-0.8B-UD-Q4_K_XL.gguf

modalities : text

available commands:

/exit or Ctrl+C stop or exit

/regen regenerate the last response

/clear clear the chat history

/read add a text file

Solve the equation x^2-x-20=0 by factoring

The equation we need to solve is:

$x^2 - x - 20 = 0$

1. Analyze the structure of the equation

This is a quadratic equation in one variable, and it can be viewed as the inverse operation of completing the square (or of the difference-of-squares formula).

Look at the equation $x^2 - 2x + 9 - x = 0$; this does not look like standard form, but we can try completing the square:

$x^2 - 20 + x = 0$

That is not right either, because the coefficients are not unified. Let us try to use the difference-of-squares formula $a^2 - b^2 = (a-b)(a+b)$ directly.

We need to write the equation in the form $(x - \dots)(x + \dots) = 0$.

Usually this kind of equation (the form in which the linear term and the constant term are opposites of each other) can be transformed by completing the square into:

$(x - \frac{1}{2})^2 - (\frac{1}{2})^2 - 20 = 0$

This would turn $x$ into a quartic equation, which is clearly not what we want.

Let us go back to factoring directly.

Observe that the constant term is $-20$ and the coefficient of the linear term is $1$.

We need to find two numbers whose product is $-20$ and whose sum is $1$.

These two numbers are obviously 4 and $-5$.

So we can rewrite the left-hand side of the equation as:

$(x - 4)(x + 5) = x^2 - 4x + 5x - 20 = x^2 + x - 20$

2. Verify and solve

Let us re-check whether the transformation above is correct:

$(x - 4)(x + 5) = x^2 + 5x - 4x - 20 = x^2 + x - 20$

This matches the original equation exactly.

Therefore, the original equation can be factored as:

$x^2 + x - 20 = 0$

By the Zero Product Property, if the product of two factors is 0, then one of the factors must be 0.

So:

$x - 4 = 0 \quad \text{or} \quad x + 5 = 0$

Solving gives:

$x_1 = 4$

$x_2 = -5$

3. Conclusion

The solutions of the equation are:

$x = 4 \text{ or } x = -5$

Prompt: 45.1 t/s | Generation: 6.6 t/s

/exit

Exiting...

llama_memory_breakdown_print: | memory breakdown [MiB] | total free self model context compute unaccounted |

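llama_memory_breakdown_print: | - Host | 1222 = 522 + 211 + 489 |

Because the model is so small, its reasoning is weak. After a good deal of rambling it lands on x = 4 or x = -5, which looks plausible but actually solves x^2 + x - 20 = 0 rather than the equation that was asked; it got the signs the wrong way round.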
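For comparison, here is a hand check of the factoring the prompt asked for (this is not part of the model output). We need two numbers whose product is $-20$ and whose sum is $-1$, which gives $-5$ and $4$:

```latex
\begin{aligned}
x^2 - x - 20 &= (x - 5)(x + 4) = x^2 + 4x - 5x - 20, \\
(x - 5)(x + 4) = 0 \;&\Longrightarrow\; x = 5 \ \text{or}\ x = -4 .
\end{aligned}
```

Substituting back confirms both roots: $25 - 5 - 20 = 0$ and $16 + 4 - 20 = 0$.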

4. Use the web UI built into llama-server

~/model $ ls -l

total 546220

-rw------- 1 u0_a270 u0_a270 558772480 Mar 8 09:40 Qwen3.5-0.8B-UD-Q4_K_XL.gguf

~/model $ llama-server -m ./Qwen3.5-0.8B-UD-Q4_K_XL.gguf --jinja -c 0 --host 127.0.0.1 --port 8033

load_backend: loaded CPU backend from /data/data/com.termux/files/usr/bin/../lib/libggml-cpu.so

main: n_parallel is set to auto, using n_parallel = 4 and kv_unified = true

build: 0 (unknown) with Clang 21.0.0 for Android aarch64

system info: n_threads = 8, n_threads_batch = 8, total_threads = 8

system_info: n_threads = 8 (n_threads_batch = 8) / 8 | CPU : NEON = 1 | ARM_FMA = 1 | LLAMAFILE = 1 | REPACK = 1 |

Running without SSL

init: using 7 threads for HTTP server

start: binding port with default address family

main: loading model

srv load_model: loading model './Qwen3.5-0.8B-UD-Q4_K_XL.gguf'

common_init_result: fitting params to device memory, for bugs during this step try to reproduce them with -fit off, or provide --verbose logs if the bug only occurs with -fit on

llama_params_fit_impl: no devices with dedicated memory found

llama_params_fit: successfully fit params to free device memory

llama_params_fit: fitting params to free memory took 0.85 seconds

llama_model_loader: loaded meta data with 46 key-value pairs and 320 tensors from ./Qwen3.5-0.8B-UD-Q4_K_XL.gguf (version GGUF V3 (latest))

...

load_tensors: loading model tensors, this can take a while... (mmap = true, direct_io = false)

load_tensors: CPU_Mapped model buffer size = 522.43 MiB

...............................................................

llama_context: CPU output buffer size = 3.79 MiB

llama_kv_cache: CPU KV buffer size = 3072.00 MiB

llama_kv_cache: size = 3072.00 MiB (262144 cells, 6 layers, 4/1 seqs), K (f16): 1536.00 MiB, V (f16): 1536.00 MiB

llama_memory_recurrent: CPU RS buffer size = 77.06 MiB

llama_memory_recurrent: size = 77.06 MiB ( 4 cells, 24 layers, 4 seqs), R (f32): 5.06 MiB, S (f32): 72.00 MiB

sched_reserve: reserving ...

sched_reserve: Flash Attention was auto, set to enabled

sched_reserve: CPU compute buffer size = 786.02 MiB

sched_reserve: graph nodes = 3123 (with bs=512), 1737 (with bs=1)

sched_reserve: graph splits = 1

sched_reserve: reserve took 37.35 ms, sched copies = 1

common_init_from_params: warming up the model with an empty run - please wait ... (--no-warmup to disable)

srv load_model: initializing slots, n_slots = 4

common_speculative_is_compat: the target context does not support partial sequence removal

srv load_model: speculative decoding not supported by this context

slot load_model: id 0 | task -1 | new slot, n_ctx = 262144

slot load_model: id 1 | task -1 | new slot, n_ctx = 262144

slot load_model: id 2 | task -1 | new slot, n_ctx = 262144

slot load_model: id 3 | task -1 | new slot, n_ctx = 262144

srv load_model: prompt cache is enabled, size limit: 8192 MiB

srv load_model: use `--cache-ram 0` to disable the prompt cache

srv load_model: for more info see https://github.com/ggml-org/llama.cpp/pull/16391

init: chat template, example_format: '<|im_start|>system

You are a helpful assistant<|im_end|>

<|im_start|>user

Hello<|im_end|>

<|im_start|>assistant

Hi there<|im_end|>

<|im_start|>user

How are you?<|im_end|>

<|im_start|>assistant

<think>

</think>

'

srv init: init: chat template, thinking = 0

main: model loaded

main: server is listening on http://127.0.0.1:8033

main: starting the main loop...

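srv update_slots: all slots are idle

The server detects 8 CPU threads and uses 7 of them for the HTTP server. After printing a long list of parameters, it waits for a browser to connect to http://127.0.0.1:8033.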
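The 3072 MiB KV cache in the startup log comes from `-c 0`, which takes the context length from the model (262144 tokens here). The sketch below estimates the KV cache size for other context lengths. Its constants are read off the log above rather than taken from any model documentation: 6 layers hold a KV cache, and K takes 1536 MiB / (262144 cells × 6 layers) = 1024 bytes per cell per layer, with the same for V. `kv_cache_mib` is a hypothetical helper name.

```shell
# kv_cache_mib CTX: estimated KV cache size in MiB for a context of CTX tokens.
# Assumption: the constants below are inferred from this particular log
# (6 KV layers, f16 K and V at 1024 bytes each per cell per layer).
kv_cache_mib() {
    ctx=$1
    kv_layers=6
    bytes_per_cell=1024   # per layer, for K alone; V is the same size
    echo $(( ctx * kv_layers * 2 * bytes_per_cell / 1048576 ))
}

kv_cache_mib 262144   # context taken from the model via -c 0  -> 3072
kv_cache_mib 16384    # context used in the llama-cli run      -> 192
```

The 192 MiB estimate, plus roughly 19 MiB of recurrent state for a single sequence, appears consistent with the 211 MiB context figure from the llama-cli run. Passing a smaller value such as `-c 16384` to llama-server should therefore reduce memory use considerably on a phone.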

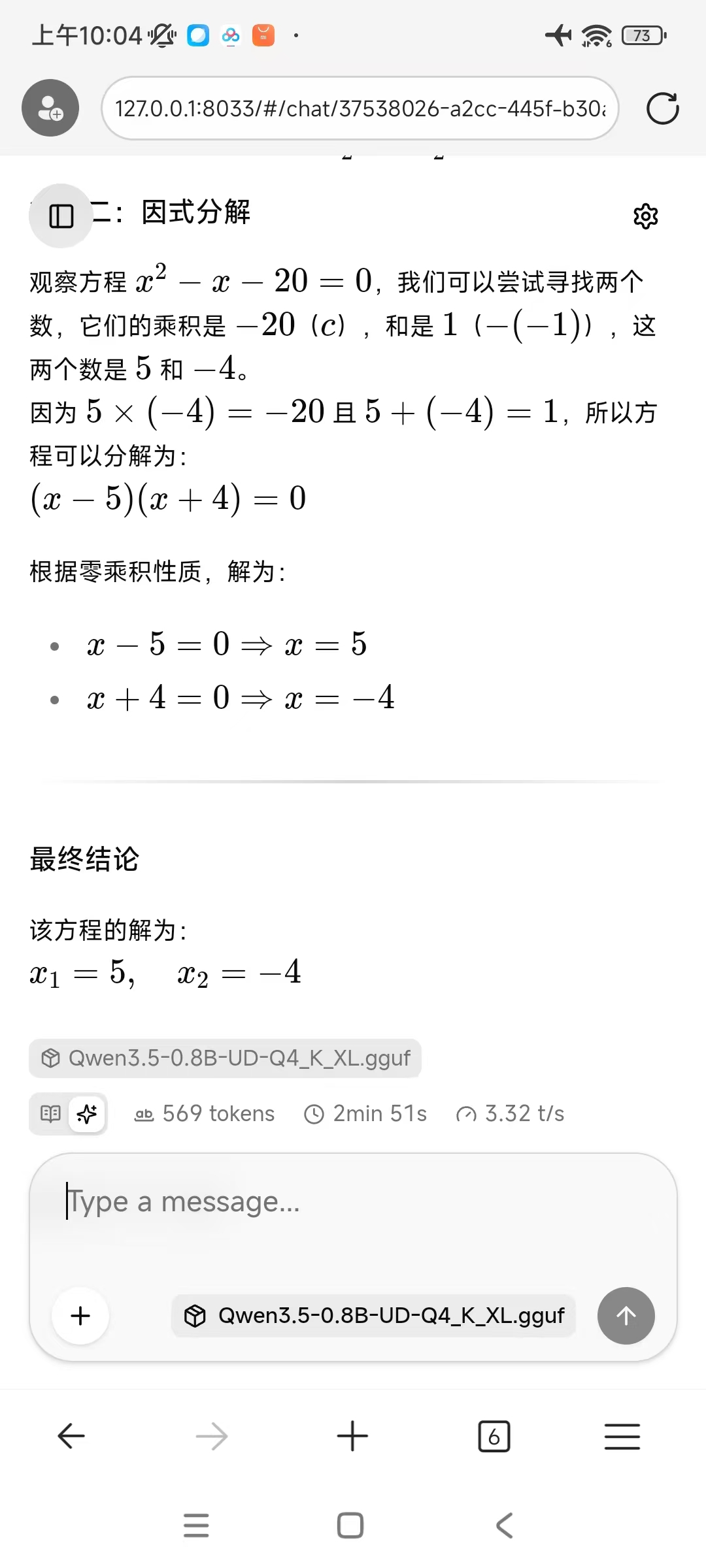

Enter a question in the browser. Output is somewhat slower than on the command line, at about 3 t/s.
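The web UI talks to the server through its OpenAI-compatible `/v1/chat/completions` endpoint, so the same question can be sent without a browser. This is a minimal sketch: `chat_payload` and `ask` are hypothetical helper names, and the payload builder assumes the text contains no double quotes, backslashes, or newlines, because it does no JSON escaping.

```shell
# chat_payload TEXT: build a minimal chat request body.
# Assumption: TEXT needs no JSON escaping (no quotes, backslashes, newlines).
chat_payload() {
    printf '{"messages":[{"role":"user","content":"%s"}]}' "$1"
}

# ask TEXT: send TEXT to the llama-server instance started above.
ask() {
    curl -s http://127.0.0.1:8033/v1/chat/completions \
        -H 'Content-Type: application/json' \
        -d "$(chat_payload "$1")"
}
```

With the server running, `ask 'Solve x^2-x-20=0 by factoring'` should return a JSON response containing the model's reply.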

The server prints the following:

srv log_server_r: done request: GET / 127.0.0.1 200

srv params_from_: Chat format: peg-constructed

slot get_availabl: id 3 | task -1 | selected slot by LRU, t_last = -1

slot launch_slot_: id 3 | task -1 | sampler chain: logits -> ?penalties -> ?dry -> ?top-n-sigma -> top-k -> ?typical -> top-p -> min-p -> ?xtc -> temp-ext -> dist

slot launch_slot_: id 3 | task 0 | processing task, is_child = 0

slot update_slots: id 3 | task 0 | new prompt, n_ctx_slot = 262144, n_keep = 0, task.n_tokens = 23

slot update_slots: id 3 | task 0 | n_tokens = 0, memory_seq_rm [0, end)

srv log_server_r: done request: POST /v1/chat/completions 127.0.0.1 200

slot init_sampler: id 3 | task 0 | init sampler, took 0.01 ms, tokens: text = 23, total = 23

slot update_slots: id 3 | task 0 | prompt processing done, n_tokens = 23, batch.n_tokens = 23

slot print_timing: id 3 | task 0 |

prompt eval time = 1447.31 ms / 23 tokens ( 62.93 ms per token, 15.89 tokens per second)

eval time = 171453.86 ms / 569 tokens ( 301.32 ms per token, 3.32 tokens per second)

total time = 172901.17 ms / 592 tokens

slot release: id 3 | task 0 | stop processing: n_tokens = 591, truncated = 0

srv update_slots: all slots are idle

^Csrv operator(): operator(): cleaning up before exit...

llama_memory_breakdown_print: | memory breakdown [MiB] | total free self model context compute unaccounted |

llama_memory_breakdown_print: | - Host | 4457 = 522 + 3149 + 786 |
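The throughput figures in the `print_timing` lines above follow directly from the token counts and elapsed milliseconds. A small helper (the name `tokens_per_second` is hypothetical) reproduces them:

```shell
# tokens_per_second MS TOKENS: throughput for TOKENS processed in MS milliseconds.
tokens_per_second() {
    awk -v ms="$1" -v n="$2" 'BEGIN { printf "%.2f\n", n / (ms / 1000) }'
}

tokens_per_second 1447.31 23      # prompt eval  -> 15.89
tokens_per_second 171453.86 569   # generation   -> 3.32
```

The generation rate of 3.32 t/s is about half the 6.6 t/s reported by llama-cli earlier.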