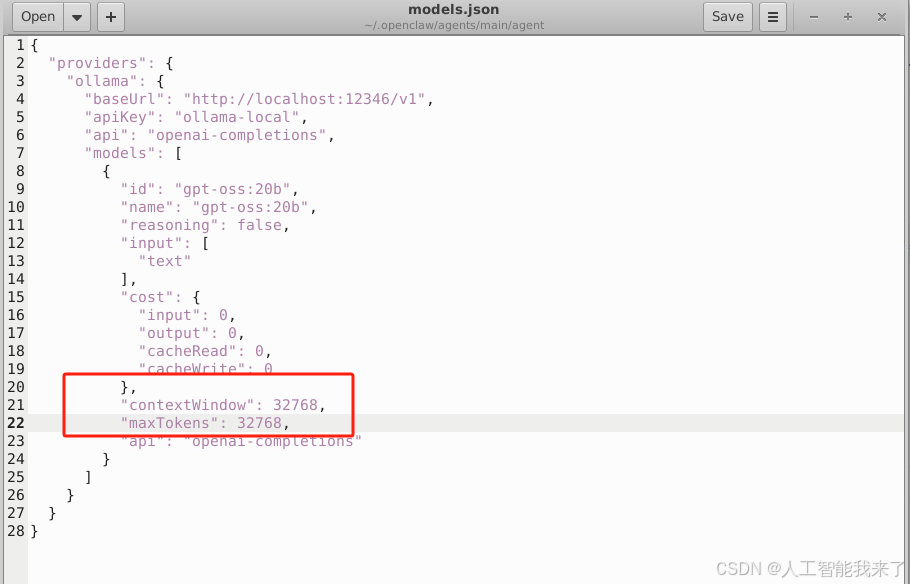

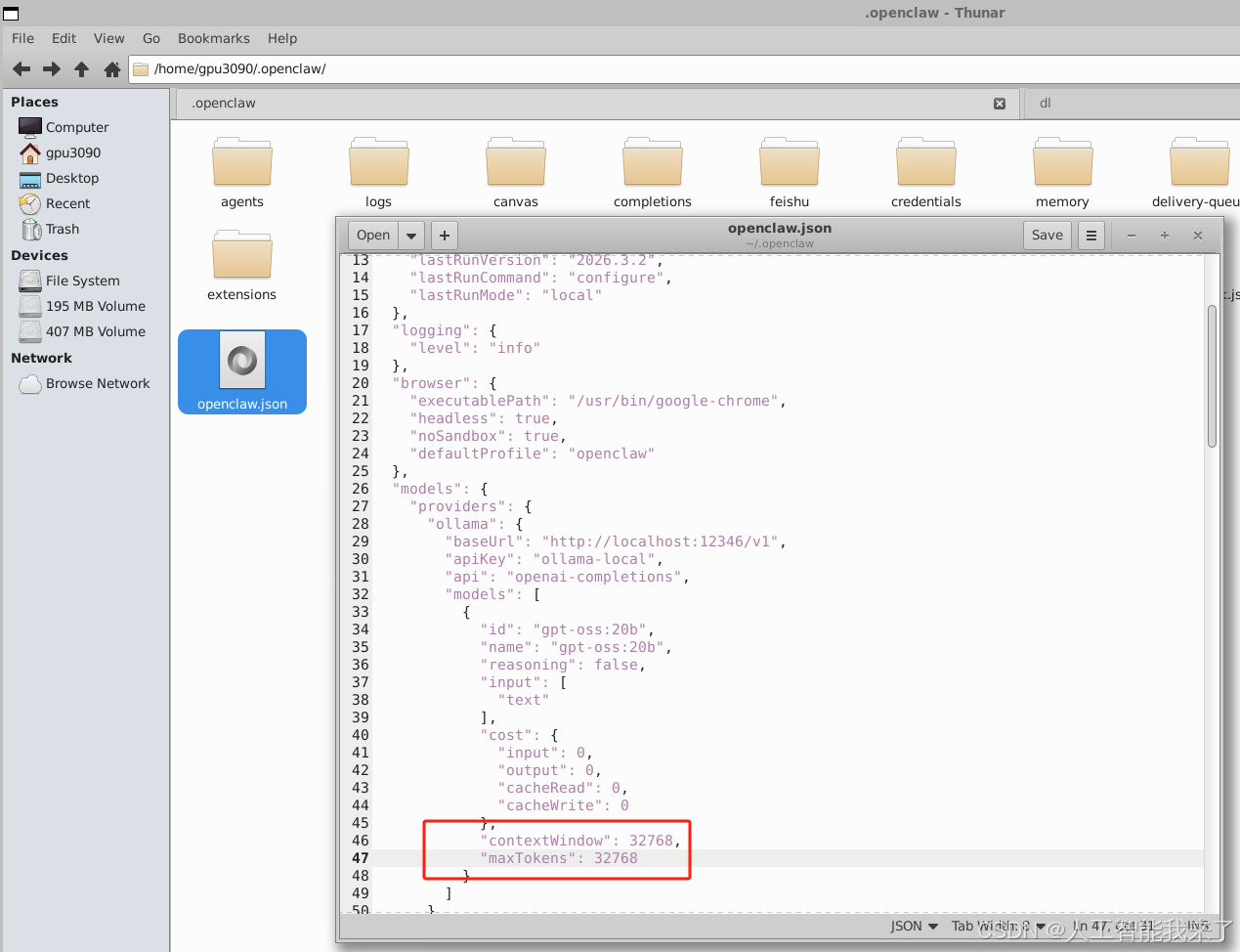

1. Fix the context window problem

Set the context window that OpenClaw reads from the model metadata to 32768, and change the corresponding setting in Ollama to 32768 as well.
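
The note does not show how the Ollama side was set to 32768. One way to check that a 32768-token context is accepted, sketched here under assumptions, is to pass `num_ctx` in the `options` of a request to Ollama's native `/api/generate` endpoint. The helper names `build_generate_request` and `generate` are illustrative, and the port is taken from the `OLLAMA_HOST` value used in the restart step of this note.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:12346"  # assumption: port from the OLLAMA_HOST used in this note
CONTEXT_WINDOW = 32768


def build_generate_request(model: str, prompt: str, num_ctx: int = CONTEXT_WINDOW) -> dict:
    """Build a body for Ollama's native /api/generate with an explicit context size."""
    return {
        "model": model,
        "prompt": prompt,
        "stream": False,  # ask for one JSON object instead of a stream
        "options": {"num_ctx": num_ctx},
    }


def generate(model: str, prompt: str) -> str:
    """Send the request; needs a running Ollama server (not called here)."""
    body = json.dumps(build_generate_request(model, prompt)).encode("utf-8")
    req = urllib.request.Request(
        f"{OLLAMA_URL}/api/generate",
        data=body,
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(req, timeout=120) as resp:
        return json.load(resp)["response"]
```

With the server running, `generate("gpt-oss:20b", "hello")` would return the model's reply. This only exercises the per-request option and does not change any persistent Ollama setting.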

1.1 Edit the configuration files manually

Locate OpenClaw's two JSON configuration files:

~/.openclaw/openclaw.json

~/.openclaw/agents/main/agent/models.json

    {
      "providers": {
        "ollama": {
          "baseUrl": "http://localhost:12346/v1",
          "apiKey": "ollama-local",
          "api": "openai-completions",
          "models": [
            {
              "id": "gpt-oss:20b",
              "name": "gpt-oss:20b",
              "reasoning": false,
              "input": [
                "text"
              ],
              "cost": {
                "input": 0,
                "output": 0,
                "cacheRead": 0,
                "cacheWrite": 0
              },
              "contextWindow": 32768,
              "maxTokens": 32768,
              "api": "openai-completions"
            }
          ]
        }
      }
    }
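
The same edit can be scripted instead of made by hand. The following is a minimal sketch, assuming the file has the `providers.ollama.models` layout shown above; the note does not show the layout of `openclaw.json`, so the lookup path may need adjusting for that file. The function names `set_context_window` and `patch_file` are illustrative and are not part of OpenClaw.

```python
import json
from pathlib import Path

CONTEXT_WINDOW = 32768


def set_context_window(config: dict, model_id: str, size: int = CONTEXT_WINDOW) -> int:
    """Set contextWindow and maxTokens for model_id under providers.ollama.models.

    Returns the number of model entries that were updated.
    """
    # Assumption: the config uses the providers -> ollama -> models layout shown above.
    models = config.get("providers", {}).get("ollama", {}).get("models", [])
    updated = 0
    for model in models:
        if model.get("id") == model_id:
            model["contextWindow"] = size
            model["maxTokens"] = size
            updated += 1
    return updated


def patch_file(path: Path, model_id: str) -> int:
    """Rewrite one JSON file in place, keeping a .bak copy of the original."""
    text = path.read_text(encoding="utf-8")
    config = json.loads(text)
    updated = set_context_window(config, model_id)
    if updated:
        path.with_name(path.name + ".bak").write_text(text, encoding="utf-8")
        path.write_text(json.dumps(config, indent=2) + "\n", encoding="utf-8")
    return updated
```

For example, `patch_file(Path.home() / ".openclaw/agents/main/agent/models.json", "gpt-oss:20b")` would update that file and leave the previous version next to it as `models.json.bak`. A return value of 0 means no matching model entry was found and the file was left untouched.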

1.2 Restart Ollama

    (base) gpu3090@DESKTOP-8IU6393:/mnt/c/Users/Administrator$ sudo killall -9 ollama
    [sudo] password for gpu3090:
    [1]+ Killed OLLAMA_HOST=0.0.0.0:12346 OLLAMA_MODELS=/home/gpu3090/.ollama/models ollama serve
    (base) gpu3090@DESKTOP-8IU6393:/mnt/c/Users/Administrator$ OLLAMA_HOST=0.0.0.0:12346 OLLAMA_MODELS=/home/gpu3090/.ollama/models ollama serve &

1.3 Restart OpenClaw

    openclaw gateway restart
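
After both restarts, it can help to confirm that the endpoint OpenClaw points at is reachable and serving the expected model. The sketch below assumes the `baseUrl` from the configuration in this note and uses Ollama's OpenAI-compatible model listing endpoint; the function names are illustrative. It only checks that the server answers and lists the model, not that the 32768 context window is in effect.

```python
import json
import urllib.request

BASE_URL = "http://localhost:12346/v1"  # assumption: baseUrl from the config in this note


def model_ids(payload: dict) -> list[str]:
    """Extract model ids from an OpenAI-style list response ({"data": [{"id": ...}]})."""
    return [entry["id"] for entry in payload.get("data", []) if "id" in entry]


def list_served_models(base_url: str = BASE_URL) -> list[str]:
    """Query the models endpoint; needs the restarted server (not called here)."""
    with urllib.request.urlopen(f"{base_url}/models", timeout=10) as resp:
        return model_ids(json.load(resp))
```

If the server is up, `"gpt-oss:20b" in list_served_models()` should be true, provided that model has been pulled into the directory given by `OLLAMA_MODELS`.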