Deploying middleware (MySQL, Redis, MinIO, Nacos) on k8s with persistent data

1. Environment

| Hostname | IP | Role | Main function |
|---|---|---|---|
| k8s-master | 192.168.40.61 | master | Runs application Pods + NFS client |
| k8s-node01 | 192.168.40.62 | Worker | Runs application Pods + NFS client |
| k8s-node02 | 192.168.40.63 | Worker | Runs application Pods + NFS client |
| hub,nfs | 192.168.40.64 | NFS server | Provides NFS shared storage; image registry |

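2. Configure NFS

Install the NFS packages on every node. The shared directory and the export rule are configured on the NFS server only.

```shell
# All nodes
yum install -y nfs-utils rpcbind

# NFS server configuration
# Create the shared directory (used to store MySQL data)
mkdir -p /data/nfs/mysql
# Set directory permissions
chmod -R 777 /data/nfs/mysql
chown -R nfsnobody:nfsnobody /data/nfs/mysql

# Configure the export rule:
# edit /etc/exports on the NFS server so that it contains the following line
cat /etc/exports
/data/nfs/mysql 192.168.40.0/24(rw,sync,no_root_squash,no_all_squash)

# rw: read-write access
# sync: writes are committed to disk before the server replies (keeps data consistent)
# no_root_squash: root on the client keeps root privileges (avoids permission problems in K8s Pods)
# no_all_squash: user identities are preserved (not mapped to the anonymous user)

systemctl start rpcbind
systemctl start nfs-server
systemctl enable rpcbind
systemctl enable nfs-server

# The firewall must not block NFS traffic; in this setup it is simply turned off
```

Test from the master node:

```shell
[root@k8s-master ~]# showmount -e 192.168.40.64
Export list for 192.168.40.64:
/data/nfs/mysql 192.168.40.0/24
```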
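To repeat the export check on every node, the `showmount` test could be wrapped in a small helper. This is only a sketch: the function name `check_nfs_export` is hypothetical, and it assumes `showmount` (from nfs-utils) is installed on the node where it runs.

```shell
# Hypothetical helper: succeed only if <server> exports exactly <path>.
check_nfs_export() {
  local server="$1" path="$2"
  # showmount -e prints one export per line: "<path> <allowed clients>"
  showmount -e "$server" |
    awk -v p="$path" '$1 == p { found = 1 } END { exit !found }'
}
```

For example, `check_nfs_export 192.168.40.64 /data/nfs/mysql && echo "export visible"` could be run on each worker before the provisioner is deployed.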

3. Deploy the NFS dynamic provisioner

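The provisioner automatically creates a PV for each PVC and maps it to a subdirectory of the NFS export, which removes the chore of creating PVs by hand.

3.1 Create the manifest nfs-provisioner.yaml

The manifest contains the complete RBAC permissions and the Deployment needed for dynamic NFS provisioning, so that Kubernetes can create NFS-backed PVs (PersistentVolumes) automatically, without any volumes being created in advance.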

```yaml
---
# 1. ServiceAccount: the identity the provisioner uses to access the K8s API
apiVersion: v1
kind: ServiceAccount
metadata:
  name: nfs-provisioner
  namespace: kube-system
---
# 2. ClusterRole: the permissions the provisioner needs
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  name: nfs-provisioner-runner
rules:
  # PersistentVolumes
  - apiGroups: [""]
    resources: ["persistentvolumes"]
    verbs: ["get", "list", "watch", "create", "delete"]
  # PersistentVolumeClaims
  - apiGroups: [""]
    resources: ["persistentvolumeclaims"]
    verbs: ["get", "list", "watch", "update"]
  # StorageClasses
  - apiGroups: ["storage.k8s.io"]
    resources: ["storageclasses"]
    verbs: ["get", "list", "watch"]
  # Events
  - apiGroups: [""]
    resources: ["events"]
    verbs: ["create", "update", "patch"]
  # Services and Endpoints (includes write access to endpoints)
  - apiGroups: [""]
    resources: ["services", "endpoints"]
    verbs: ["get", "list", "watch", "create", "update", "patch"]
  # Deployments
  - apiGroups: ["apps"]
    resources: ["deployments"]
    verbs: ["get", "list", "watch", "create", "delete", "update"]
---
# 3. ClusterRoleBinding: bind the ClusterRole to the ServiceAccount
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: run-nfs-provisioner
subjects:
  - kind: ServiceAccount
    name: nfs-provisioner
    namespace: kube-system
roleRef:
  kind: ClusterRole
  name: nfs-provisioner-runner
  apiGroup: rbac.authorization.k8s.io
---
# 4. Role and RoleBinding for leader election (the key fix)
apiVersion: rbac.authorization.k8s.io/v1
kind: Role
metadata:
  name: leader-locking-nfs-provisioner
  namespace: kube-system
rules:
  # Full access to Endpoints, required for leader election
  - apiGroups: [""]
    resources: ["endpoints"]
    verbs: ["get", "list", "watch", "create", "update", "patch", "delete"]
  # ConfigMaps, which some versions may use
  - apiGroups: [""]
    resources: ["configmaps"]
    verbs: ["get", "list", "watch", "create", "update", "patch", "delete"]
---
apiVersion: rbac.authorization.k8s.io/v1
kind: RoleBinding
metadata:
  name: leader-locking-nfs-provisioner
  namespace: kube-system
subjects:
  - kind: ServiceAccount
    name: nfs-provisioner
    namespace: kube-system
roleRef:
  kind: Role
  name: leader-locking-nfs-provisioner
  apiGroup: rbac.authorization.k8s.io
---
# 5. Deployment that runs the provisioner Pod
apiVersion: apps/v1
kind: Deployment
metadata:
  name: nfs-client-provisioner
  namespace: kube-system
  labels:
    app: nfs-client-provisioner
spec:
  replicas: 1
  selector:
    matchLabels:
      app: nfs-client-provisioner
  strategy:
    type: Recreate
  template:
    metadata:
      labels:
        app: nfs-client-provisioner
    spec:
      serviceAccountName: nfs-provisioner
      containers:
        - name: nfs-client-provisioner
          image: registry.cn-hangzhou.aliyuncs.com/lfy_k8s_images/nfs-subdir-external-provisioner:v4.0.2
          volumeMounts:
            - name: nfs-client-root
              mountPath: /persistentvolumes
          env:
            - name: PROVISIONER_NAME
              value: k8s-sigs.io/nfs-subdir-external-provisioner
            - name: NFS_SERVER
              value: 192.168.40.64
            - name: NFS_PATH
              value: /data/nfs/mysql
            # Leader election: the provisioner reads ENABLE_LEADER_ELECTION
            # (the original used LEADER_ELECTION); it is on by default
            - name: ENABLE_LEADER_ELECTION
              value: "true"
          resources:
            limits:
              cpu: 200m
              memory: 256Mi
            requests:
              cpu: 100m
              memory: 128Mi
      volumes:
        - name: nfs-client-root
          nfs:
            server: 192.168.40.64
            path: /data/nfs/mysql
```

```shell
[root@k8s-master nfs]# kubectl apply -f nfs-provisioner.yaml
serviceaccount/nfs-provisioner created
clusterrole.rbac.authorization.k8s.io/nfs-provisioner-runner created
clusterrolebinding.rbac.authorization.k8s.io/run-nfs-provisioner created
deployment.apps/nfs-client-provisioner created
[root@k8s-master nfs]# kubectl get pod -n kube-system -o wide |grep nfs
nfs-client-provisioner-b5b885d9c-6kprx 1/1 Running 0 2m57s 10.244.2.22 k8s-node01 <none> <none>
```

3.2 Create the StorageClass

The StorageClass defines the rules for dynamic provisioning (reclaim policy, whether expansion is allowed, and so on) and ties them to the NFS provisioner.

storageclass.yaml:

```yaml
apiVersion: storage.k8s.io/v1
kind: StorageClass
metadata:
  name: nfs-storage   # StorageClass name; PVCs reference it
provisioner: k8s-sigs.io/nfs-subdir-external-provisioner   # must match the provisioner's PROVISIONER_NAME
parameters:
  archiveOnDelete: "false"   # whether to archive data when a PVC is deleted (false deletes it, leaving no leftover files)
reclaimPolicy: Delete          # PV reclaim policy (Delete: the PV is removed with the PVC; Retain: the PV is kept for manual handling)
allowVolumeExpansion: true     # allow PVC expansion (by editing the storage request in the PVC)
volumeBindingMode: Immediate   # bind immediately (a PV is assigned as soon as the PVC is created)
```

Apply it with `kubectl apply -f storageclass.yaml`, then check the result:

```shell
[root@k8s-master nfs]# kubectl get sc
NAME PROVISIONER RECLAIMPOLICY VOLUMEBINDINGMODE ALLOWVOLUMEEXPANSION AGE
nfs-storage k8s-sigs.io/nfs-subdir-external-provisioner Delete Immediate true 9s
```
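Before relying on the StorageClass, dynamic provisioning could be checked with a throwaway claim. The sketch below only writes a manifest; the file name `test-pvc.yaml` and the claim name `test-claim` are made up for illustration and are not part of the original setup.

```shell
# Write a small test PVC that references the nfs-storage StorageClass.
cat > test-pvc.yaml <<'EOF'
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: test-claim          # hypothetical name
  namespace: default
spec:
  accessModes:
    - ReadWriteMany
  resources:
    requests:
      storage: 1Mi
  storageClassName: nfs-storage
EOF
```

After `kubectl apply -f test-pvc.yaml`, `kubectl get pvc test-claim` should report `Bound` if the provisioner is working, and a matching subdirectory should appear under `/data/nfs/mysql` on the NFS server. `kubectl delete -f test-pvc.yaml` removes the claim again.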

4. Configure image registry authentication for k8s

```shell
# A pull secret is namespaced: create one per namespace, it cannot be shared across namespaces.
# The target namespace (mysql, created in 5.1) must exist before the secret is applied.
[root@k8s-master docker_hub_secret]# kubectl create secret docker-registry images-secret \
> --docker-server=192.168.40.64:80 \
> --docker-username=admin \
> --docker-password=Harbor12345 \
> --docker-email=zhangfeilong0713@163.com -n mysql --dry-run=client -o yaml >images_secret.yaml
[root@k8s-master docker_hub_secret]# kubectl apply -f images_secret.yaml
secret/images-secret created
[root@k8s-master certs.d]# kubectl get secrets -n mysql
NAME TYPE DATA AGE
images-secret kubernetes.io/dockerconfigjson 1 14s
```

Configure the following on every machine, then restart containerd:

```shell
[root@k8s-master 192.168.40.64:80]# pwd
/etc/containerd/certs.d/192.168.40.64:80
[root@k8s-master 192.168.40.64:80]# cat hosts.toml
server = "http://192.168.40.64:80"

[host."http://192.168.40.64:80"]
  capabilities = ["pull", "resolve"]
  skip_verify = true
```
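Because the pull secret is namespaced, every namespace that pulls from 192.168.40.64:80 needs its own copy; the Redis and MinIO manifests later in this article reference `images-secret` from the `default` namespace. One way to avoid repeating the command is a small helper. This is a sketch that reuses the flags shown above; the function name `create_pull_secret` is hypothetical.

```shell
# Hypothetical helper: create (or update) images-secret in one namespace.
# The namespace must already exist.
create_pull_secret() {
  local ns="$1"
  kubectl create secret docker-registry images-secret \
    --docker-server=192.168.40.64:80 \
    --docker-username=admin \
    --docker-password=Harbor12345 \
    -n "$ns" --dry-run=client -o yaml | kubectl apply -f -
}
```

It could then be called once per namespace, for example `for ns in mysql default; do create_pull_secret "$ns"; done`.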

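5. Deploy MySQL

5.1 Create the MySQL namespace (optional, recommended)

The manifests below and the images-secret from section 4 all use this namespace, so create it before applying them.

```shell
kubectl create namespace mysql
```

5.2 Create persistent storage for MySQL (PVC)

Use the NFS dynamic storage configured above; the PVC obtains its PV automatically.

mysql-pvc.yaml:

```yaml
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: mysql-data-pvc   # PVC name, referenced by the Deployment
  namespace: mysql       # same namespace as the MySQL Deployment
spec:
  accessModes:
    - ReadWriteMany      # NFS supports read-write from multiple nodes (RWX)
  resources:
    requests:
      storage: 10Gi      # request 10Gi (adjust as needed)
  storageClassName: nfs-storage   # the NFS dynamic StorageClass
```

```shell
[root@k8s-master nfs]# kubectl get pvc -n mysql
NAME STATUS VOLUME CAPACITY ACCESS MODES STORAGECLASS AGE
mysql-data-pvc Bound pvc-d89bea02-b665-4543-94b1-baae48876b19 10Gi RWX nfs-storage 7m6s
```

accessModes: ReadWriteMany: allows Pods on several nodes to read and write the volume at the same time. A single-instance MySQL works with it, and it leaves room for a later primary/replica setup, although each MySQL instance still needs its own data directory.

storageClassName: nfs-storage: selects the NFS dynamic storage, so no PV has to be created by hand.

5.3 Create the MySQL configuration file

MySQL settings (character set, connection limit, and so on) are managed through a ConfigMap instead of being baked into the image.

Create the mysql-config.yaml manifest:

```yaml
apiVersion: v1
kind: ConfigMap
metadata:
  name: mysql-config   # ConfigMap name, mounted by the Pod
  namespace: mysql
data:
  # Contents of the main MySQL configuration file
  my.cnf: |
    [mysqld]
    character-set-server=utf8mb4    # character set with emoji support
    collation-server=utf8mb4_unicode_ci
    max_connections=1000            # maximum number of connections
    default-storage-engine=InnoDB   # default storage engine
    skip-name-resolve               # disable DNS lookups (faster connections)
```

```shell
[root@k8s-master mysql]# kubectl apply -f mysql-config.yaml
configmap/mysql-config created
[root@k8s-master mysql]# kubectl get configmaps -n mysql
NAME DATA AGE
kube-root-ca.crt 1 13m
mysql-config 1 14s
```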

5.4 Deploy the mysql-deployment.yaml manifest

```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: mysql   # Deployment name
  namespace: mysql
spec:
  replicas: 1   # single instance
  selector:
    matchLabels:
      app: mysql
  template:
    metadata:
      labels:
        app: mysql
    spec:
      imagePullSecrets:
        - name: images-secret
      containers:
        - name: mysql
          image: 192.168.40.64:80/retec/mysql:5.7
          imagePullPolicy: IfNotPresent   # prefer a locally cached image
          ports:
            - containerPort: 3306         # default MySQL port
          env:
            - name: MYSQL_ROOT_PASSWORD
              value: "111111"             # password written inline
            - name: MYSQL_DATABASE
              value: "yd-config"          # database name written inline
          volumeMounts:
            - name: mysql-data            # persistent storage (data directory)
              mountPath: /var/lib/mysql   # MySQL data path
            - name: mysql-config          # configuration file
              mountPath: /etc/mysql/conf.d   # directory MySQL loads extra config from
          resources:                      # adjust to the node's capacity
            requests:
              cpu: 500m                   # minimum CPU
              memory: 512Mi               # minimum memory
            limits:
              cpu: 1000m                  # CPU limit
              memory: 1Gi                 # memory limit
          livenessProbe:                  # checks that the container is still running
            tcpSocket:
              port: 3306
            initialDelaySeconds: 30       # wait 30s after start before probing
            periodSeconds: 10             # probe every 10s
          readinessProbe:                 # checks that the container can serve traffic
            exec:
              command: ["mysqladmin", "ping", "-h", "localhost", "-u", "root", "-p111111"]
            initialDelaySeconds: 5
            periodSeconds: 5
      volumes:
        - name: mysql-data
          persistentVolumeClaim:
            claimName: mysql-data-pvc     # the PVC created earlier
        - name: mysql-config
          configMap:
            name: mysql-config            # the ConfigMap created earlier
```

5.5 Create mysql-service.yaml

mysql-service.yaml:

```yaml
# Service reachable from outside the cluster
apiVersion: v1
kind: Service
metadata:
  name: mysql-nodeport   # Service name
  namespace: mysql       # same namespace as the MySQL Deployment
spec:
  selector:
    app: mysql           # must match the Pod labels in the Deployment
  ports:
    - port: 3306         # port exposed inside the cluster
      targetPort: 3306   # Pod port (where the MySQL container listens)
      nodePort: 30036    # port exposed on every node (range 30000-32767)
  type: NodePort         # allows external access through <node IP>:<nodePort>
---
apiVersion: v1
kind: Service
metadata:
  name: mysql            # applications reach MySQL through this name
  namespace: mysql
spec:
  selector:
    app: mysql           # matches the Deployment's Pod labels
  ports:
    - port: 3306         # port exposed by the Service
      targetPort: 3306   # Pod port
  type: ClusterIP        # cluster-internal only (the default type)
```

type: ClusterIP: the Service is visible only inside the cluster. Applications reach it at mysql.mysql.svc.cluster.local:3306 (format: service-name.namespace.svc.cluster.local).

```shell
deployment.apps/mysql created
[root@k8s-master mysql]# vim mysql-service.yaml
[root@k8s-master mysql]# kubectl apply -f mysql-service.yaml
[root@k8s-master mysql]# kubectl get svc -n mysql
NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE
mysql ClusterIP 10.100.247.134 <none> 3306/TCP 59s
mysql-nodeport NodePort 10.107.6.144 <none> 3306:30036/TCP 59s
```

The following transcript appears to come from an earlier run, in which a single NodePort Service named mysql was applied:

```shell
[root@k8s-master mysql]# kubectl apply -f mysql-service.yaml
service/mysql created
[root@k8s-master mysql]# kubectl get svc -A |grep mysql
mysql mysql NodePort 10.99.19.190 <none> 3306:30036/TCP 18s
```
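The in-cluster address of a Service follows the pattern service-name.namespace.svc.cluster.local. The helper below simply assembles that string, which can be handy when wiring other components (such as Nacos in section 8) to MySQL. It is a sketch: the function name `svc_fqdn` is hypothetical, and it assumes the default cluster domain `cluster.local`.

```shell
# Hypothetical helper: build the in-cluster DNS name of a Service.
# Assumes the default cluster domain "cluster.local".
svc_fqdn() {
  local service="$1" namespace="$2"
  printf '%s.%s.svc.cluster.local\n' "$service" "$namespace"
}

svc_fqdn mysql mysql   # prints mysql.mysql.svc.cluster.local
```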

5.6 Verify the data

```shell
# On the NFS server
[root@localhost containerd]# cd /data/nfs/
[root@localhost nfs]# ls
mysql
[root@localhost nfs]# cd mysql/
[root@localhost mysql]# ls
mysql-mysql-data-pvc-pvc-d89bea02-b665-4543-94b1-baae48876b19
[root@localhost mysql]# cd mysql-mysql-data-pvc-pvc-d89bea02-b665-4543-94b1-baae48876b19/
[root@localhost mysql-mysql-data-pvc-pvc-d89bea02-b665-4543-94b1-baae48876b19]# ls
auto.cnf ca-key.pem ca.pem client-cert.pem client-key.pem ib_buffer_pool ibdata1 ib_logfile0 ib_logfile1 ibtmp1 mysql mysql.sock performance_schema private_key.pem public_key.pem server-cert.pem server-key.pem sys
```
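The Deployment in 5.4 writes the root password directly into the manifest. A common alternative is to keep it in a Secret. The sketch below only generates such a manifest; the file name `mysql-secret.yaml`, the Secret name `mysql-secret`, and the key `root-password` are assumptions for illustration and are not part of the original setup.

```shell
# Base64-encode the password used in this walkthrough.
# printf avoids the trailing newline that echo would add.
encoded=$(printf '%s' '111111' | base64)

cat > mysql-secret.yaml <<EOF
apiVersion: v1
kind: Secret
metadata:
  name: mysql-secret        # hypothetical name
  namespace: mysql
type: Opaque
data:
  root-password: ${encoded}
EOF
```

The container could then read the value through `valueFrom.secretKeyRef` (name `mysql-secret`, key `root-password`) in place of the literal `value` for `MYSQL_ROOT_PASSWORD`.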

6. Deploy Redis

6.1 Create the PVC

Redis reuses the dynamic storage from above, so its data also lands under the NFS export /data/nfs/mysql/.

redis-pvc.yaml:

```yaml
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: redis-data-pvc   # PVC name, referenced by the Deployment
  namespace: default
spec:
  accessModes:
    - ReadWriteMany      # NFS supports read-write from multiple nodes (RWX)
  resources:
    requests:
      storage: 10Gi      # request 10Gi (adjust as needed)
  storageClassName: nfs-storage   # the NFS dynamic StorageClass
```

```shell
[root@k8s-master redis]# kubectl get pvc
NAME STATUS VOLUME CAPACITY ACCESS MODES STORAGECLASS AGE
redis-data-pvc Bound pvc-0cc8da83-83b0-4ba4-9ea7-2f77a4a9df00 10Gi RWX nfs-storage 12s
```

Check on the NFS server:

```shell
[root@localhost mysql]# ls
default-redis-data-pvc-pvc-0cc8da83-83b0-4ba4-9ea7-2f77a4a9df00 mysql-mysql-data-pvc-pvc-d89bea02-b665-4543-94b1-baae48876b19
```

6.2 Create the Deployment and Services

```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: redis
spec:
  replicas: 1
  selector:
    matchLabels:
      app: redis
  template:
    metadata:
      labels:
        app: redis
    spec:
      imagePullSecrets:
        - name: images-secret   # must exist in this namespace (default)
      containers:
        - name: redis
          image: 192.168.40.64:80/retec/redis:1.0.0
          imagePullPolicy: IfNotPresent
          command:
            - redis-server
          args:
            - --requirepass
            - redis123          # password written inline
            - --appendonly
            - "yes"
          ports:
            - containerPort: 6379
          volumeMounts:
            - name: redis-data
              mountPath: /data
      volumes:
        - name: redis-data
          persistentVolumeClaim:
            claimName: redis-data-pvc   # the PVC created earlier
---
apiVersion: v1
kind: Service
metadata:
  name: redis-nodeport
spec:
  type: NodePort
  selector:
    app: redis
  ports:
    - port: 6379
      targetPort: 6379
      nodePort: 30379
---
apiVersion: v1
kind: Service
metadata:
  name: redis
  namespace: default
  labels:
    app: redis
spec:
  type: ClusterIP
  selector:
    app: redis
  ports:
    - port: 6379
      targetPort: 6379
```

```shell
[root@k8s-master redis]# kubectl get all -A |grep redis
default pod/redis-79d85d9fc-m6ckx 1/1 Running 0 69s
default service/redis ClusterIP 10.104.107.38 <none> 6379/TCP 7m34s
default service/redis-nodeport NodePort 10.103.110.7 <none> 6379:30379/TCP 7m34s
default deployment.apps/redis 1/1 1 1 69s
default replicaset.apps/redis-79d85d9fc 1 1 1 69s
```
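A Running Pod does not yet prove that Redis accepts authenticated commands. A quick check could look like the sketch below. The function name `redis_ping` is hypothetical, and it assumes that `redis-cli` is available inside the image and that the password matches the one in the manifest.

```shell
# Hypothetical helper: send PING to Redis from inside its own Pod.
# Assumes redis-cli exists in the image and the password is redis123.
redis_ping() {
  kubectl exec -n default deploy/redis -- redis-cli -a redis123 ping
}
```

A healthy instance answers `PONG`. With `--appendonly yes`, the append-only data should also show up in the `default-redis-data-pvc-...` directory on the NFS server once some keys have been written.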

7. Deploy MinIO

This time the storage is a manually created PV and PVC. The PV points to the /data/minio directory on k8s-node01, and MinIO is scheduled onto k8s-node01 as well.

minio.yaml:

```yaml
apiVersion: v1
kind: PersistentVolume
metadata:
  name: minio-pv
  labels:
    app: minio
spec:
  capacity:
    storage: 10Gi
  accessModes:
    - ReadWriteOnce
  persistentVolumeReclaimPolicy: Retain
  local:
    path: /data/minio
  nodeAffinity:
    required:   # a local PV uses "required" here, not requiredDuringSchedulingIgnoredDuringExecution
      nodeSelectorTerms:
        - matchExpressions:
            - key: kubernetes.io/hostname
              operator: In
              values:
                - k8s-node01
---
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: minio-pvc
spec:
  accessModes:
    - ReadWriteOnce
  resources:
    requests:
      storage: 10Gi
  selector:
    matchLabels:
      app: minio
---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: minio
  labels:
    app: minio
spec:
  replicas: 1
  selector:
    matchLabels:
      app: minio
  template:
    metadata:
      labels:
        app: minio
    spec:
      nodeSelector:
        kubernetes.io/hostname: k8s-node01
      imagePullSecrets:
        - name: images-secret
      containers:
        - name: minio
          image: 192.168.40.64:80/retec/minio:1.0.0
          imagePullPolicy: IfNotPresent
          args:
            - server
            - /data
            - --console-address
            - ":9001"
          ports:
            - containerPort: 9000
              name: api
            - containerPort: 9001
              name: console
          env:
            - name: MINIO_ROOT_USER
              value: "minioadmin"
            - name: MINIO_ROOT_PASSWORD
              value: "minio123"
          volumeMounts:
            - name: data
              mountPath: /data
      volumes:
        - name: data
          persistentVolumeClaim:
            claimName: minio-pvc
---
apiVersion: v1
kind: Service
metadata:
  name: minio
spec:
  type: NodePort
  selector:
    app: minio
  ports:
    - name: api
      port: 9000
      targetPort: 9000
      nodePort: 30090
    - name: console
      port: 9001
      targetPort: 9001
      nodePort: 30091
```

shell

[root@k8s-master minio]# kubectl apply -f minio.yaml

persistentvolume/minio-pv created

persistentvolumeclaim/minio-pvc created

deployment.apps/minio created

service/minio created

[root@k8s-master minio]# kubectl get pv

NAME CAPACITY ACCESS MODES RECLAIM POLICY STATUS CLAIM STORAGECLASS REASON AGE

minio-pv 10Gi RWO Retain Bound default/minio-pvc 4s

pvc-0cc8da83-83b0-4ba4-9ea7-2f77a4a9df00 10Gi RWX Delete Bound default/redis-data-pvc nfs-storage 21m

pvc-d89bea02-b665-4543-94b1-baae48876b19 10Gi RWX Delete Bound mysql/mysql-data-pvc nfs-storage 96m

[root@k8s-master minio]# kubectl get pvc

NAME STATUS VOLUME CAPACITY ACCESS MODES STORAGECLASS AGE

minio-pvc Bound minio-pv 10Gi RWO 10s

redis-data-pvc Bound pvc-0cc8da83-83b0-4ba4-9ea7-2f77a4a9df00 10Gi RWX nfs-storage 21m

[root@k8s-master minio]# kubectl get svc

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

kubernetes ClusterIP 10.96.0.1 <none> 443/TCP 46h

minio NodePort 10.103.209.88 <none> 9000:30090/TCP,9001:30091/TCP 16s

redis ClusterIP 10.104.107.38 <none> 6379/TCP 15m

redis-nodeport NodePort 10.103.110.7 <none> 6379:30379/TCP 15m

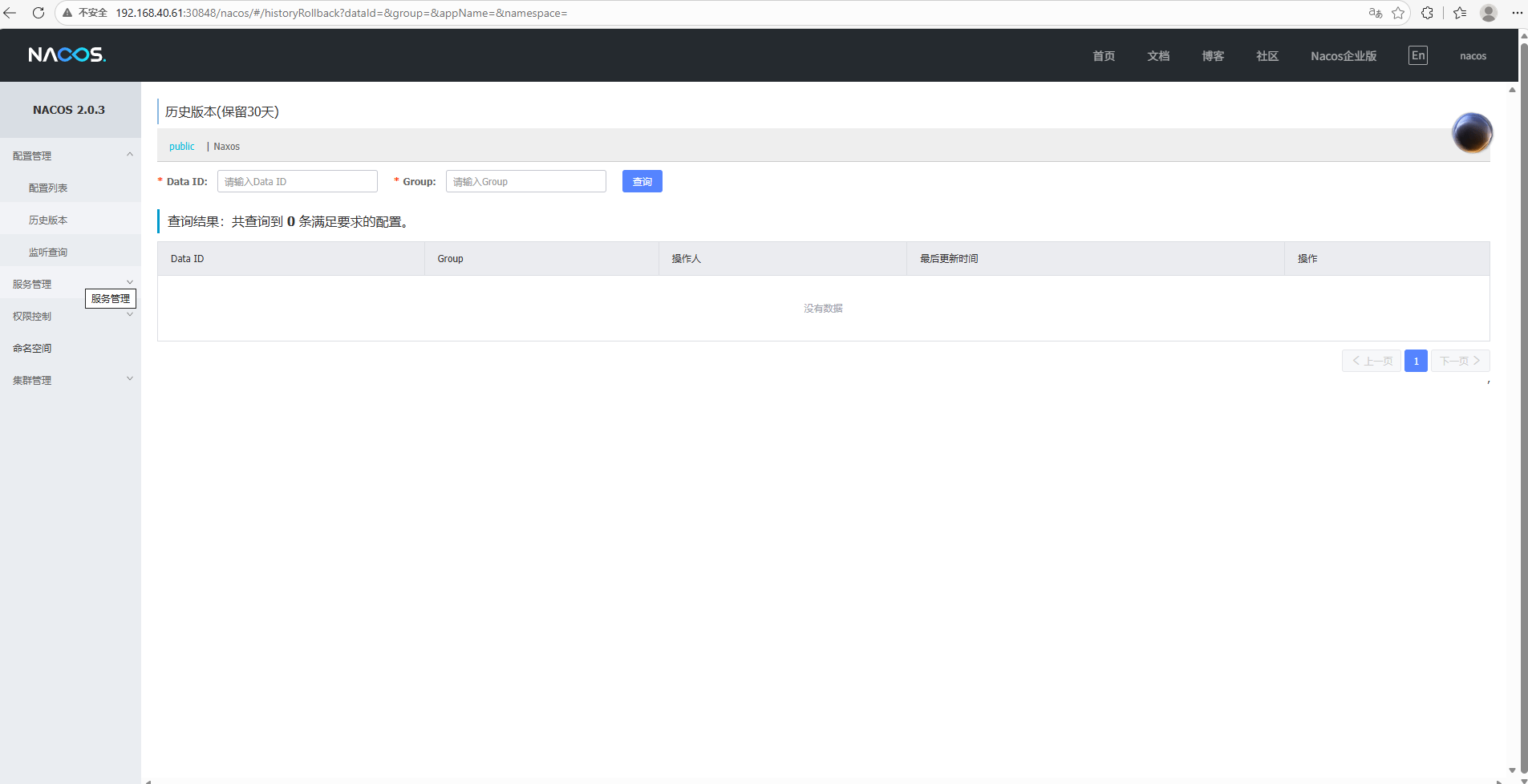
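Unlike the NFS provisioner, a local PV does not create its backing directory: the path has to exist on the node already, otherwise the Pod cannot mount the volume. A minimal sketch of that preparation step, to be run on k8s-node01 before applying the manifest (the function name `prepare_local_pv_dir` is hypothetical):

```shell
# Hypothetical helper: make sure the directory behind a local PV exists.
prepare_local_pv_dir() {
  local dir="$1"
  mkdir -p "$dir"
}
```

For the PV in this section the call would be `prepare_local_pv_dir /data/minio`.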

8. Deploy Nacos

8.1 Create the Nacos PVC

nacos-pvc.yaml:

```yaml
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: nacos-data-pvc   # PVC name, referenced by the Deployment
  namespace: default
spec:
  accessModes:
    - ReadWriteMany      # NFS supports read-write from multiple nodes (RWX)
  resources:
    requests:
      storage: 20Gi      # request 20Gi (adjust as needed)
  storageClassName: nfs-storage   # the NFS dynamic StorageClass
```

8.2 Import the Nacos schema into the MySQL database

```shell
# First import the table schema for the matching Nacos version into the target MySQL database
mysql> show databases;
+--------------------+
| Database           |
+--------------------+
| information_schema |
| mysql              |
| performance_schema |
| sys                |
| yd-config          |
+--------------------+
5 rows in set (0.01 sec)

mysql> use yd-config
Reading table information for completion of table and column names
You can turn off this feature to get a quicker startup with -A

Database changed
mysql> show tables;
+----------------------+
| Tables_in_yd-config  |
+----------------------+
| config_info          |
| config_info_aggr     |
| config_info_beta     |
| config_info_tag      |
| config_tags_relation |
| group_capacity       |
| his_config_info      |
| permissions          |
| roles                |
| tenant_capacity      |
| tenant_info          |
| users                |
+----------------------+
12 rows in set (0.00 sec)
```
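The import command itself is not shown in the transcript. One possible way is to pipe the schema file into the `mysql` client inside the MySQL Pod. The sketch below makes several assumptions: the function name `import_nacos_schema` is hypothetical, the schema file is whatever SQL file ships with your Nacos release (for the 2.0.x distributions this is typically `conf/nacos-mysql.sql`), and the credentials are the ones used in section 5.4.

```shell
# Hypothetical helper: load a Nacos schema file into the yd-config database.
# $1 is the local path to the schema file taken from the Nacos release.
import_nacos_schema() {
  local schema_file="$1"
  [ -r "$schema_file" ] || { echo "cannot read $schema_file" >&2; return 1; }
  # -i keeps stdin open so the file can be streamed into the mysql client
  kubectl exec -i -n mysql deploy/mysql -- \
    mysql -uroot -p111111 yd-config < "$schema_file"
}
```

After `import_nacos_schema conf/nacos-mysql.sql`, `show tables;` should list the tables shown above.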

8.3 Create nacos-deployment.yaml

nacos-deployment.yaml:

```yaml
apiVersion: v1
kind: ConfigMap
metadata:
  name: nacos-cm
data:
  mysql.host: "mysql.mysql.svc.cluster.local"
  mysql.db.name: "yd-config"
  mysql.port: "3306"
  mysql.user: "root"
  mysql.password: "111111"
---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: nacos
  labels:
    app: nacos
spec:
  replicas: 1
  selector:
    matchLabels:
      app: nacos
  template:
    metadata:
      labels:
        app: nacos
    spec:
      containers:
        - name: nacos
          image: nacos/nacos-server:2.0.3   # replace with your private registry image if needed
          imagePullPolicy: IfNotPresent
          ports:
            - containerPort: 8848
              name: client-port
            - containerPort: 9848
              name: client-rpc
            - containerPort: 9849
              name: raft-rpc
          env:
            # Run mode: standalone
            - name: MODE
              value: "standalone"
            # MySQL settings, read from the ConfigMap
            - name: MYSQL_SERVICE_HOST
              valueFrom:
                configMapKeyRef:
                  name: nacos-cm
                  key: mysql.host
            - name: MYSQL_SERVICE_DB_NAME
              valueFrom:
                configMapKeyRef:
                  name: nacos-cm
                  key: mysql.db.name
            - name: MYSQL_SERVICE_PORT
              valueFrom:
                configMapKeyRef:
                  name: nacos-cm
                  key: mysql.port
            - name: MYSQL_SERVICE_USER
              valueFrom:
                configMapKeyRef:
                  name: nacos-cm
                  key: mysql.user
            - name: MYSQL_SERVICE_PASSWORD
              valueFrom:
                configMapKeyRef:
                  name: nacos-cm
                  key: mysql.password
            # MySQL connection parameters
            - name: MYSQL_SERVICE_DB_PARAM
              value: "characterEncoding=utf8&connectTimeout=1000&socketTimeout=3000&autoReconnect=true&useSSL=false&serverTimezone=Asia/Shanghai"
            # Data source platform
            - name: SPRING_DATASOURCE_PLATFORM
              value: "mysql"
            # JVM settings
            - name: JVM_XMS
              value: "1g"
            - name: JVM_XMX
              value: "1g"
            - name: JVM_XMN
              value: "512m"
            # Nacos authentication (optional)
            - name: NACOS_AUTH_ENABLE
              value: "true"
            - name: NACOS_AUTH_TOKEN
              value: "SecretKey012345678901234567890123456789012345678901234567890123456789"
            - name: NACOS_AUTH_IDENTITY_KEY
              value: "nacos"
            - name: NACOS_AUTH_IDENTITY_VALUE
              value: "nacos"
          resources:
            requests:
              memory: "1Gi"
              cpu: "500m"
            limits:
              memory: "2Gi"
              cpu: "1000m"
          volumeMounts:
            - name: data
              mountPath: /home/nacos/data
          livenessProbe:
            tcpSocket:
              port: 8848
            initialDelaySeconds: 60
            periodSeconds: 30
          readinessProbe:
            tcpSocket:
              port: 8848
            initialDelaySeconds: 30
            periodSeconds: 10
      volumes:
        - name: data
          persistentVolumeClaim:
            claimName: nacos-data-pvc
```

8.4 Create the Nacos Service

nacos-service.yaml:

```yaml
apiVersion: v1
kind: Service
metadata:
  name: nacos
  namespace: default
  labels:
    app: nacos
spec:
  type: NodePort           # NodePort serves both in-cluster and external access
  selector:
    app: nacos
  ports:
    - name: http
      port: 8848           # in-cluster port (ClusterIP)
      targetPort: 8848     # Pod port
      nodePort: 30848      # external port
    - name: grpc
      port: 9848           # in-cluster port (ClusterIP)
      targetPort: 9848     # Pod port
      # External port. Note that Nacos 2.x clients derive the gRPC port as
      # the HTTP port + 1000, so a client connecting through 30848 looks for 31848.
      nodePort: 30849
```

```shell
[root@k8s-master nacos]# kubectl get svc,configmaps,pod,pvc |grep nacos
service/nacos NodePort 10.103.16.143 <none> 8848:30848/TCP,9848:30849/TCP 64m
configmap/nacos-cm 5 17m
pod/nacos-555f7b8cdf-znrv7 1/1 Running 4 (3m7s ago) 17m
persistentvolumeclaim/nacos-data-pvc Bound pvc-b5bfafe6-5537-4ee1-8673-11af5fe32029 20Gi RWX nfs-storage 75m
```