https://mp.weixin.qq.com/s/PAp5PPEUySRInqANcJRPgw

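In vLLM, when chunked prefill is disabled, does prefill or decode get priority?

Scheduling priority when chunked prefill is disabled

When chunked prefill is disabled, vLLM has no explicit prefill-versus-decode priority rule. In each step the scheduler first serves requests already in the running queue. It then admits requests from the waiting queue either in arrival order (FCFS) or by priority, depending on the configured policy.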

Scheduler behavior analysis

1. Unified scheduler design

vLLM V1 uses a unified scheduler that does not strictly distinguish between prefill and decode phases: 1

```python
def schedule(self) -> SchedulerOutput:
    # NOTE(woosuk) on the scheduling algorithm:
    # There's no "decoding phase" nor "prefill phase" in the scheduler.
    # Each request just has the num_computed_tokens and
    # num_tokens_with_spec.
```

2. Restrictions when chunked prefill is disabled

When chunked prefill is disabled, the scheduler applies a strict restriction: 2

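```python
if (
    not self.scheduler_config.enable_chunked_prefill
    and num_new_tokens > token_budget
):
    # If chunked_prefill is disabled,
    # we can stop the scheduling here.
    break
```

This means that if a request does not fit entirely into the remaining token budget, scheduling stops at that request instead of splitting it into chunks. Requests queued behind it are not considered in this step either.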
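The minimal Python sketch below makes the `break` concrete. It models the waiting-queue loop under simplifying assumptions:

- `WaitingRequest` and `admit_waiting` are hypothetical names, not vLLM APIs.
- Each request is reduced to the number of prompt tokens it still needs.
- KV-cache allocation, encoder budgets, and preemption are ignored.

The sketch mirrors the two branches quoted above: it caps a request at the remaining budget when chunking is enabled and stops when it is disabled.

```python
from dataclasses import dataclass


@dataclass
class WaitingRequest:
    # Hypothetical, simplified stand-in for a request in the waiting queue.
    req_id: str
    num_new_tokens: int  # prompt tokens still to be computed


def admit_waiting(
    waiting: list[WaitingRequest],
    token_budget: int,
    enable_chunked_prefill: bool,
) -> dict[str, int]:
    """Return {req_id: tokens scheduled this step}, scanning in queue order."""
    scheduled: dict[str, int] = {}
    for req in waiting:
        if token_budget <= 0:
            break
        num_new_tokens = req.num_new_tokens
        if not enable_chunked_prefill and num_new_tokens > token_budget:
            # Mirrors the quoted break: stop here rather than skipping
            # ahead to later (possibly shorter) requests.
            break
        # With chunking enabled, a long prompt gets only what still fits.
        num_new_tokens = min(num_new_tokens, token_budget)
        scheduled[req.req_id] = num_new_tokens
        token_budget -= num_new_tokens
    return scheduled


queue = [WaitingRequest("a", 30), WaitingRequest("b", 200), WaitingRequest("c", 20)]
print(admit_waiting(queue, 100, enable_chunked_prefill=True))
print(admit_waiting(queue, 100, enable_chunked_prefill=False))
```

Example: the budget is 100 tokens and the queued requests need 30, 200, and 20 tokens.

- With chunking, the scheduler takes the first request plus a 70-token chunk of the second.
- Without chunking, only the first request runs. The 20-token request waits even though 70 tokens of budget remain, which is the behavior the vLLM test quoted later asserts.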

3. Batch reordering

Even with chunked prefill disabled, the model runner still reorders each scheduled batch so that decode requests come first: 3

```python
def reorder_batch_to_split_decodes_and_prefills(
    input_batch: "InputBatch",
    scheduler_output: "SchedulerOutput",
    decode_threshold: int = 1,
) -> bool:
    """
    Reorders the batch to split into prefill and decode requests; places all
    requests with <= decode_threshold tokens at the front of the batch.
    """
```

Actual scheduling behavior

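With chunked prefill disabled:

- No chunking: a long prefill request must be processed in full and cannot be split.
- FCFS or priority scheduling: the `policy` setting determines the scheduling order.
- Batch reordering: decode requests are still placed at the front of the batch.
- Possible blocking: a long prefill request can hold up the requests behind it.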

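Compared with chunked prefill enabled:

- Enabled: decodes are explicitly prioritized and prefills are processed in chunks.
- Disabled: there is no explicit prefill/decode priority. The order depends on the scheduling policy, and long prefills can cause blocking.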
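The V1 scheduler handles the running queue (1b below) before it takes anything from the waiting queue (4c). As a result, running decodes consume budget before any new prefill. The sketch below models one such step as an illustration, not vLLM code. Its assumptions:

- `schedule_step` is a hypothetical helper.
- Requests are `(req_id, tokens_needed)` tuples.
- Running requests are assumed to be decodes that need only a few tokens each.
- Preemption and KV-cache limits are ignored.

```python
def schedule_step(running, waiting, token_budget, enable_chunked_prefill):
    """One simplified step: the running queue first, then the waiting queue."""
    scheduled = []
    # Running requests (assumed decodes) take budget before any new prefill.
    for req_id, needed in running:
        if token_budget <= 0:
            break
        num_new = min(needed, token_budget)
        scheduled.append((req_id, num_new))
        token_budget -= num_new
    # Waiting requests are admitted in queue order.
    for req_id, needed in waiting:
        if token_budget <= 0:
            break
        if not enable_chunked_prefill and needed > token_budget:
            break  # the whole prompt does not fit: stop, do not chunk
        num_new = min(needed, token_budget)
        scheduled.append((req_id, num_new))
        token_budget -= num_new
    return scheduled


running = [("d1", 1), ("d2", 1), ("d3", 1)]
waiting = [("p1", 2047), ("p2", 10)]
print(schedule_step(running, waiting, 2048, enable_chunked_prefill=False))
print(schedule_step(running, waiting, 2048, enable_chunked_prefill=True))
```

Example: the budget is 2048 tokens, there are three decodes, and a 2047-token prompt is waiting.

- The decodes run in both modes.
- With chunking disabled, the prompt waits for a later step.
- With chunking enabled, the prompt receives a 2045-token chunk.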

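Notes

- Disabling chunked prefill is mainly for scenarios where long requests must be processed in one piece.
- In this mode, long prefill requests can significantly increase latency.
- Enabling chunked prefill is recommended in production for better performance.
- Setting `policy="priority"` enables priority scheduling, which can reduce latency for the requests marked as higher priority.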
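The difference between the two policies can be sketched with Python's `heapq`. The `Req` class and the helper functions are hypothetical. The sketch assumes that a smaller `priority` value is served earlier and that ties are broken by arrival time. This matches the `max(..., key=lambda r: (r.priority, r.arrival_time))` preemption snippet quoted in 4b below.

```python
import heapq
from dataclasses import dataclass, field


@dataclass(order=True)
class Req:
    # Hypothetical request, compared by (priority, arrival_time) only.
    priority: int
    arrival_time: float
    req_id: str = field(compare=False)


def admission_order(waiting: list[Req], policy: str) -> list[str]:
    """Order in which waiting requests would be admitted under a policy."""
    if policy == "fcfs":
        return [r.req_id for r in sorted(waiting, key=lambda r: r.arrival_time)]
    # "priority": smallest (priority, arrival_time) is popped first.
    heap = list(waiting)
    heapq.heapify(heap)
    return [heapq.heappop(heap).req_id for _ in range(len(heap))]


def pick_preemption_victim(running: list[Req]) -> Req:
    # Same key as the quoted snippet: the largest (priority, arrival_time),
    # i.e. the lowest-priority, most recently arrived request, is preempted.
    return max(running, key=lambda r: (r.priority, r.arrival_time))


reqs = [Req(1, 0.0, "a"), Req(0, 1.0, "b"), Req(1, 2.0, "c"), Req(0, 3.0, "d")]
print(admission_order(reqs, "fcfs"))      # ['a', 'b', 'c', 'd']
print(admission_order(reqs, "priority"))  # ['b', 'd', 'a', 'c']
print(pick_preemption_victim(reqs).req_id)  # c
```

FCFS ignores `priority` entirely. The priority policy reorders admission but still uses arrival time to break ties.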

Wiki pages you might want to explore:

- Configuration and Initialization (vllm-project/vllm)

- Scheduler and Resource Allocation (vllm-project/vllm)


Citations

File: vllm/v1/core/sched/scheduler.py (L341-365)

```python
def schedule(self) -> SchedulerOutput:
    # NOTE(woosuk) on the scheduling algorithm:
    # There's no "decoding phase" nor "prefill phase" in the scheduler.
    # Each request just has the num_computed_tokens and
    # num_tokens_with_spec. num_tokens_with_spec =
    # len(prompt_token_ids) + len(output_token_ids) + len(spec_token_ids).
    # At each step, the scheduler tries to assign tokens to the requests
    # so that each request's num_computed_tokens can catch up its
    # num_tokens_with_spec. This is general enough to cover
    # chunked prefills, prefix caching, speculative decoding,
    # and the "jump decoding" optimization in the future.

    scheduled_new_reqs: list[Request] = []
    scheduled_resumed_reqs: list[Request] = []
    scheduled_running_reqs: list[Request] = []
    preempted_reqs: list[Request] = []

    req_to_new_blocks: dict[str, KVCacheBlocks] = {}
    num_scheduled_tokens: dict[str, int] = {}
    token_budget = self.max_num_scheduled_tokens
    if self._pause_state == PauseState.PAUSED_ALL:
        # Do not schedule any requests when paused.
        token_budget = 0

    # Encoder-related.
```

File: vllm/v1/core/sched/scheduler.py (L665-671)

```python
if (
    not self.scheduler_config.enable_chunked_prefill
    and num_new_tokens > token_budget
):
    # If chunked_prefill is disabled,
    # we can stop the scheduling here.
    break
```

File: vllm/v1/attention/backends/utils.py (L588-665)

```python
def reorder_batch_to_split_decodes_and_prefills(
    input_batch: "InputBatch",
    scheduler_output: "SchedulerOutput",
    decode_threshold: int = 1,
) -> bool:
    """
    Reorders the batch to split into prefill and decode requests; places all
    requests with <= decode_threshold tokens at the front of the batch.

    The batch is reordered into 4 regions:
      decode:       (num_scheduled <= threshold AND is not prefilling)
      short_extend: (num_scheduled <= threshold AND is chunked prefilling)
      long_extend:  (num_scheduled > threshold AND is chunked prefilling)
      prefill:      (num_computed == 0)  # First chunks

    Returns:
        True if the batch was modified, False otherwise.
    """
    num_reqs = len(input_batch.req_ids)
    num_scheduled_tokens = [
        scheduler_output.num_scheduled_tokens[id] for id in input_batch.req_ids
    ]
    num_scheduled_tokens_np = np.array(num_scheduled_tokens)
    num_computed_tokens_np = input_batch.num_computed_tokens_cpu[:num_reqs]
    num_prompt_tokens_np = input_batch.num_prompt_tokens[:num_reqs]

    has_context = num_computed_tokens_np > 0
    is_below_threshold = num_scheduled_tokens_np <= decode_threshold
    done_prefilling = num_computed_tokens_np >= num_prompt_tokens_np

    # Mutually exclusive categories (exactly one True per request):
    # 1. No context yet -> prefill
    # 2. Has context, above threshold -> long_extend
    # 3. Has context, below threshold, still prefilling -> short_extend
    # 4. Has context, below threshold, done prefilling -> decode
    is_pure_prefill = ~has_context
    is_long_extend = has_context & ~is_below_threshold
    is_short_extend = has_context & is_below_threshold & ~done_prefilling
    is_decode = has_context & is_below_threshold & done_prefilling

    # Desired order: decode → short_extend → long_extend → prefill
    req_regions = np.zeros(num_reqs, dtype=np.int32)  # 0 = decode by default
    req_regions[is_short_extend] = 1
    req_regions[is_long_extend] = 2
    req_regions[is_pure_prefill] = 3

    num_decodes = int(is_decode.sum())
    num_short_extends = int(is_short_extend.sum())
    num_long_extends = int(is_long_extend.sum())
    num_prefills = int(is_pure_prefill.sum())

    target_regions = np.repeat(
        [0, 1, 2, 3],
        [num_decodes, num_short_extends, num_long_extends, num_prefills],
    ).astype(np.int32)

    needs_swap = req_regions != target_regions
    if not needs_swap.any():
        return False

    # Extract indices that need swapping and sort by target region
    orig_indices = np.where(needs_swap)[0]
    sorted_order = np.argsort(req_regions[needs_swap], kind="stable")
    src_indices = orig_indices[sorted_order]

    src_dest_map = {int(src): int(dst) for src, dst in zip(src_indices, orig_indices)}

    for src in src_dest_map:
        dst = src_dest_map[src]
        while src != dst:
            input_batch.swap_states(src, dst)
            # Mark dst as done by updating its destination to itself
            next_dst = src_dest_map.get(dst, dst)
            src_dest_map[dst] = dst
            dst = next_dst

    return True
```

Scheduling priority in vLLM when chunked prefill is disabled

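This codemap analyzes how vLLM prioritizes requests when chunked prefill is disabled.

- Tracing the scheduler's core logic [1a-1c] shows that vLLM uses a unified scheduler with no explicit prefill/decode priority.
- The key restriction is at [2a]: with chunking disabled, scheduling stops as soon as the token budget is insufficient.
- The model runner still reorders each batch via [3a-3c] so that decode requests are grouped first.
- The final scheduling order is determined by the policy configuration in [4a-4c], not by request type.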

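1. Unified scheduler design: no explicit phase distinction

vLLM V1 uses a unified scheduler that does not strictly distinguish between prefill and decode phases; all requests are scheduled under the same policy.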
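The "no phase" idea comes down to one difference, quoted in 1c below. The minimal sketch that follows uses a hypothetical `ReqState` class whose fields are named after the quoted snippets; `num_output_placeholders` defaults to 0. It shows that four situations are all just different values of the same formula:

- a fresh prefill
- a prefix-cache hit
- a decode
- a speculative-decoding step

```python
from dataclasses import dataclass


@dataclass
class ReqState:
    # Hypothetical, simplified request state named after the scheduler fields.
    num_prompt_tokens: int
    num_output_tokens: int
    num_computed_tokens: int
    num_spec_tokens: int = 0
    num_output_placeholders: int = 0

    @property
    def num_tokens_with_spec(self) -> int:
        # len(prompt_token_ids) + len(output_token_ids) + len(spec_token_ids)
        return self.num_prompt_tokens + self.num_output_tokens + self.num_spec_tokens


def num_new_tokens(req: ReqState) -> int:
    # Same expression as 1c: how far num_computed_tokens must catch up.
    return (
        req.num_tokens_with_spec
        + req.num_output_placeholders
        - req.num_computed_tokens
    )


print(num_new_tokens(ReqState(100, 0, 0)))   # fresh prefill: 100
print(num_new_tokens(ReqState(100, 0, 64)))  # prefix-cache hit: 36
print(num_new_tokens(ReqState(100, 5, 104)))  # decode: 1
print(num_new_tokens(ReqState(100, 5, 104, num_spec_tokens=3)))  # spec decode: 4
```

Because the scheduler only ever sees this number, a "prefill" is simply a request whose difference is large.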

1a. Unified scheduler entry point (scheduler.py:341)

The scheduler's comment states explicitly that there is no separate prefill or decode phase.

```python
def schedule(self) -> SchedulerOutput:
    # NOTE(woosuk) on the scheduling algorithm:
    # There's no "decoding phase" nor "prefill phase" in the scheduler.
```

1b. Processing running requests in order (scheduler.py:378)

Requests in the running queue are processed one after another, regardless of phase.

```python
while req_index < len(self.running) and token_budget > 0:
    request = self.running[req_index]
```

1c. Computing the required token count uniformly (scheduler.py:397)

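The number of new tokens is the request's total token count (including speculative tokens and output placeholders) minus the tokens already computed.

```python
num_new_tokens = (
    request.num_tokens_with_spec
    + request.num_output_placeholders
    - request.num_computed_tokens
)
```

2. Restriction mechanism when chunked prefill is disabled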

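When chunked prefill is disabled and the token budget is insufficient, scheduling stops instead of splitting the request into chunks.

2a. Stopping the schedule when chunking is disabled (scheduler.py:665)

With chunked prefill disabled and an insufficient budget, scheduling stops immediately.

```python
if (
    not self.scheduler_config.enable_chunked_prefill
    and num_new_tokens > token_budget
):
    # If chunked_prefill is disabled,
    # we can stop the scheduling here.
    break
```

2b. Default value of the chunked prefill option (scheduler.py:83)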

Chunked prefill is enabled by default and can be disabled when needed.

```python
enable_chunked_prefill: bool = True
"""If True, prefill requests can be chunked based
on the remaining `max_num_batched_tokens`.
```

2c. Token budget limit (scheduler.py:673)

When chunking is allowed, the number of new tokens is capped by the remaining budget.

```python
num_new_tokens = min(num_new_tokens, token_budget)
```

3. Batch reordering: decode-first optimization

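Even with chunked prefill disabled, the model runner still reorders each scheduled batch, placing decode requests at the front to optimize performance.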
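The four-region classification in 3b can be reproduced with NumPy. This sketch uses hypothetical helpers, `classify_regions` and `stable_reorder`, built on the same masks as the cited `utils.py`. One difference matters:

- The sketch orders requests with a stable `argsort`, which keeps the original order inside each region.
- vLLM instead performs in-place swaps.

So the region layout matches vLLM's, but the order within a region can differ.

```python
import numpy as np


def classify_regions(num_scheduled, num_computed, num_prompt, decode_threshold=1):
    """Label each request 0=decode, 1=short_extend, 2=long_extend, 3=prefill."""
    num_scheduled = np.asarray(num_scheduled)
    num_computed = np.asarray(num_computed)
    num_prompt = np.asarray(num_prompt)
    has_context = num_computed > 0
    is_below_threshold = num_scheduled <= decode_threshold
    done_prefilling = num_computed >= num_prompt
    # Start as prefill (no context yet), then overwrite the other categories.
    regions = np.full(len(num_scheduled), 3, dtype=np.int32)
    regions[has_context & is_below_threshold & done_prefilling] = 0
    regions[has_context & is_below_threshold & ~done_prefilling] = 1
    regions[has_context & ~is_below_threshold] = 2
    return regions


def stable_reorder(regions):
    """Batch positions in decode -> short_extend -> long_extend -> prefill order."""
    return np.argsort(regions, kind="stable").tolist()


# (num_scheduled, num_computed, num_prompt), as in the "complicated_mixed" test
reqs = [(1, 20, 20), (1, 50, 50), (374, 0, 374), (300, 20, 20), (1, 20, 20),
        (256, 0, 256), (1, 5, 5), (27, 0, 27), (1, 4, 4)]
sched, comp, prompt = zip(*reqs)
regions = classify_regions(sched, comp, prompt)
print(regions.tolist())          # [0, 0, 3, 2, 0, 3, 0, 3, 0]
print(stable_reorder(regions))   # [0, 1, 4, 6, 8, 3, 2, 5, 7]
```

The test file cited later expects `[0, 1, 6, 8, 4, 3, 2, 7, 5]` for this case. It puts the same regions in the same places, but the decodes appear in a different relative order.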

3a. Batch reordering function (utils.py:588)

Reorders the requests in a batch by type.

```python
def reorder_batch_to_split_decodes_and_prefills(
    input_batch: "InputBatch",
    scheduler_output: "SchedulerOutput",
    decode_threshold: int = 1,
) -> bool:
```

3b. Batch partitioning strategy (utils.py:597)

Requests are split into 4 regions, with decode requests placed first.

```text
The batch is reordered into 4 regions:
  decode:       (num_scheduled <= threshold AND is not prefilling)
  short_extend: (num_scheduled <= threshold AND is chunked prefilling)
  long_extend:  (num_scheduled > threshold AND is chunked prefilling)
  prefill:      (num_computed == 0)  # First chunks
```

3c. Definition of the ordering (utils.py:628)

Decode requests come first and prefill requests come last.

```python
# Desired order: decode → short_extend → long_extend → prefill
req_regions = np.zeros(num_reqs, dtype=np.int32)  # 0 = decode by default
```

4. Scheduling policy: FCFS vs PRIORITY

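The actual scheduling priority is determined by the configured policy, not by request type.

4a. Scheduling policy enum (request_queue.py:13)

Two scheduling policies are supported: FCFS and PRIORITY.

```python
class SchedulingPolicy(Enum):
    """Enum for scheduling policies."""

    FCFS = "fcfs"
    PRIORITY = "priority"
```

4b. Priority scheduling policy (scheduler.py:468)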

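Under the PRIORITY policy, the request to preempt is chosen by priority and arrival time. It is the running request with the largest `(priority, arrival_time)`, that is, the lowest-priority and most recently arrived one.

```python
if self.policy == SchedulingPolicy.PRIORITY:
    preempted_req = max(
        self.running,
        key=lambda r: (r.priority, r.arrival_time),
    )
```

4c. Selecting the waiting queue (scheduler.py:564)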

根据调度策略选择下一个要调度的请求队列

text

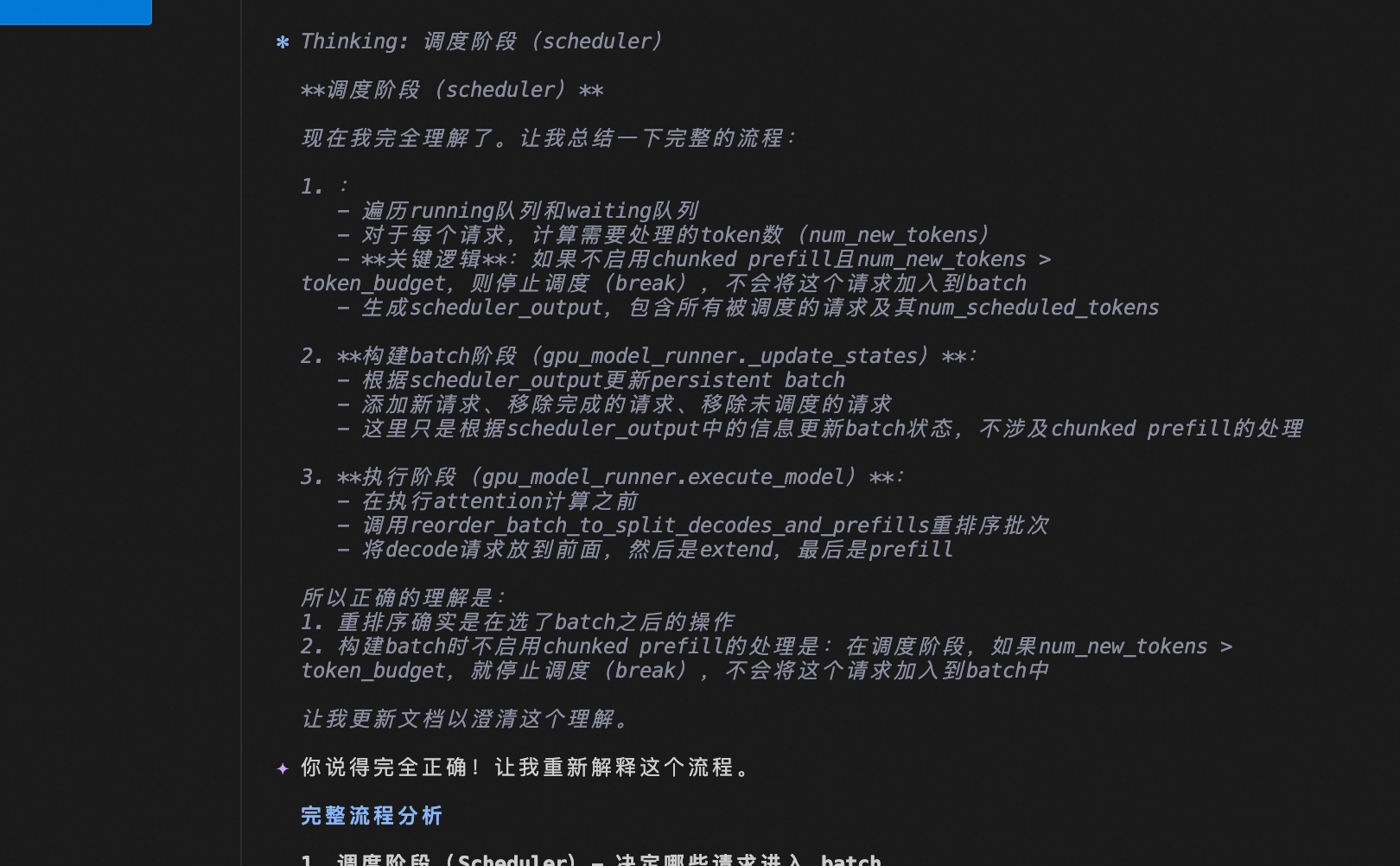

request_queue = self._select_waiting_queue_for_scheduling()这里的重排序是选了batch之后的操作吧? 构建batch 时不启用chunked prefill 怎么处理的

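Reordering timing and batch construction

Reordering happens after the batch is built

Yes, reordering happens after the batch has been built. The flow is:

- The scheduler builds the batch: `scheduler.schedule()` produces a `SchedulerOutput`. 1
- The model runner reorders it: `GPUModelRunner._may_reorder_batch()` calls the reordering function. 2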

```python
def _may_reorder_batch(self, scheduler_output: "SchedulerOutput") -> None:
    if self.reorder_batch_threshold is not None:
        reorder_batch_to_split_decodes_and_prefills(
            self.input_batch,
            scheduler_output,
            decode_threshold=self.reorder_batch_threshold,
        )
```

Batch construction with chunked prefill disabled

When chunked prefill is disabled, the scheduler applies a strict restriction while building the batch:

1. Token budget check

When scheduling requests from the waiting queue, if chunked prefill is disabled and the token budget is insufficient, scheduling stops immediately: 3

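```python
if (
    not self.scheduler_config.enable_chunked_prefill
    and num_new_tokens > token_budget
):
    # If chunked_prefill is disabled,
    # we can stop the scheduling here.
    break
```

2. All-or-nothing requirement

Disabling chunked prefill means:

- All or nothing: in a given step, a request is either processed in full or not at all.
- No chunking: a long request cannot be split into smaller chunks.
- Possible blocking: a long request can use up the token budget. If it does not fit at all, it stops scheduling, so later requests wait even if some budget remains.

3. Test verification

The test code shows the behavior with chunked prefill disabled: 4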

```python
if enable_chunked_prefill:
    # When enable chunked prefill, long requests will be chunked.
    assert len(scheduler_output1.scheduled_new_reqs) == 2
else:
    # When disable chunked prefill, should not skip the long requests,
    # and scheduling subsequent short requests in advance,
    # even though there is still token budgets remaining.
    assert len(scheduler_output1.scheduled_new_reqs) == 1
```

Processing flow comparison

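| Stage | Chunked prefill enabled | Chunked prefill disabled |
|---|---|---|
| Scheduling | Long requests are processed in chunks | A request is scheduled in full; if it does not fit, scheduling stops instead of skipping ahead |
| Token budget | `min(num_new_tokens, token_budget)` | Scheduling stops when the budget is insufficient |
| Batch construction | Requests can be combined flexibly | Strictly in queue order; blocking is possible |
| Reordering | Decodes are grouped at the front | Still reorders, though batches may contain fewer requests |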
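The token-budget row can be turned into arithmetic. The sketch below makes two assumptions:

- Each running decode takes one token of budget before any prefill, as in the running-queue loop of 1b.
- No other prefill competes for the remainder.

Under these assumptions, a hypothetical helper `prefill_steps` estimates how many steps a prompt needs to finish prefilling.

```python
import math


def prefill_steps(prompt_len, max_num_batched_tokens, num_decodes, chunked):
    """Steps needed to prefill one prompt, or None if it cannot start this step."""
    per_step = max_num_batched_tokens - num_decodes  # decodes take budget first
    if per_step <= 0:
        return None
    if chunked:
        return math.ceil(prompt_len / per_step)
    # Without chunking the whole prompt must fit into a single step.
    return 1 if prompt_len <= per_step else None


print(prefill_steps(10_000, 2048, 48, chunked=True))   # 5
print(prefill_steps(10_000, 8192, 48, chunked=True))   # 2
print(prefill_steps(10_000, 2048, 48, chunked=False))  # None
```

Example: a 10,000-token prompt competes with 48 concurrent decodes.

- At `max_num_batched_tokens=2048`, it gets 2,000 prefill tokens per step and needs 5 steps.
- At 8192, it needs only 2 steps. This is the TTFT vs ITL trade-off that vLLM's optimization guide (cited later) describes.
- With chunking disabled, the same prompt cannot start until a single step has room for all of it.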

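Notes

- Reordering happens before model execution and serves to optimize attention computation.
- Disabling chunked prefill is mainly for scenarios where long requests must be processed in one piece.
- This mode can lead to lower GPU utilization and higher latency.
- Enabling chunked prefill is recommended in production for better performance.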


Citations

File: vllm/v1/core/sched/scheduler.py (L341-365)

```python
def schedule(self) -> SchedulerOutput:
    # NOTE(woosuk) on the scheduling algorithm:
    # There's no "decoding phase" nor "prefill phase" in the scheduler.
    # Each request just has the num_computed_tokens and
    # num_tokens_with_spec. num_tokens_with_spec =
    # len(prompt_token_ids) + len(output_token_ids) + len(spec_token_ids).
    # At each step, the scheduler tries to assign tokens to the requests
    # so that each request's num_computed_tokens can catch up its
    # num_tokens_with_spec. This is general enough to cover
    # chunked prefills, prefix caching, speculative decoding,
    # and the "jump decoding" optimization in the future.

    scheduled_new_reqs: list[Request] = []
    scheduled_resumed_reqs: list[Request] = []
    scheduled_running_reqs: list[Request] = []
    preempted_reqs: list[Request] = []

    req_to_new_blocks: dict[str, KVCacheBlocks] = {}
    num_scheduled_tokens: dict[str, int] = {}
    token_budget = self.max_num_scheduled_tokens
    if self._pause_state == PauseState.PAUSED_ALL:
        # Do not schedule any requests when paused.
        token_budget = 0

    # Encoder-related.
```

File: vllm/v1/core/sched/scheduler.py (L665-671)

```python
if (
    not self.scheduler_config.enable_chunked_prefill
    and num_new_tokens > token_budget
):
    # If chunked_prefill is disabled,
    # we can stop the scheduling here.
    break
```

File: vllm/v1/worker/gpu_model_runner.py (L999-1023)

```python
def _may_reorder_batch(self, scheduler_output: "SchedulerOutput") -> None:
    """
    Update the order of requests in the batch based on the attention
    backend's needs. For example, some attention backends (namely MLA) may
    want to separate requests based on if the attention computation will be
    compute-bound or memory-bound.

    Args:
        scheduler_output: The scheduler output.
    """
    # Attention free models have zero kv_cache_groups, however models
    # like Mamba are also attention free but use the kv_cache for
    # keeping its internal state. This is why we check the number
    # of kv_cache groups instead of solely checking
    # for self.model_config.is_attention_free.
    if len(self.kv_cache_config.kv_cache_groups) == 0:
        return

    if self.reorder_batch_threshold is not None:
        reorder_batch_to_split_decodes_and_prefills(
            self.input_batch,
            scheduler_output,
            decode_threshold=self.reorder_batch_threshold,
        )
```

File: tests/v1/core/test_scheduler.py (L679-683)

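```python
else:
    # When disable chunked prefill, should not skip the long requests,
    # and scheduling subsequent short requests in advance,
    # even though there is still token budgets remaining.
    assert len(scheduler_output1.scheduled_new_reqs) == 1
```

So it seems this has nothing to do with whether chunked prefill is disabled: the batch is assembled from requests, and sorting happens only after the batch is assembled.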

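Core goal of reordering

The main goal of reordering in vLLM is to optimize attention computation. Requests in an already scheduled batch are rearranged by type so that the attention backend can process each type efficiently, which improves latency and throughput. Reordering does not change which requests are in the batch.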
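A two-stage sketch makes the division of labor explicit. Both functions are hypothetical simplifications:

- `schedule_batch` decides which requests run and with how many tokens, here with chunked prefill disabled. Candidates are listed in the order the scheduler would visit them.
- `reorder_batch` only permutes that batch. It uses a two-group key (`num_scheduled <= decode_threshold` first) instead of vLLM's four regions.

```python
def schedule_batch(candidates, token_budget):
    """Stage 1 (scheduler): pick requests in order; stop at the first misfit."""
    scheduled = {}
    for req_id, num_tokens in candidates:
        if num_tokens > token_budget:
            break  # chunked prefill disabled: no splitting, no skipping ahead
        scheduled[req_id] = num_tokens
        token_budget -= num_tokens
    return scheduled


def reorder_batch(num_scheduled, decode_threshold=1):
    """Stage 2 (model runner): permute the chosen batch; membership is fixed."""
    # sorted() is stable, so each group keeps its relative order.
    return sorted(num_scheduled, key=lambda r: num_scheduled[r] > decode_threshold)


candidates = [("p1", 500), ("d1", 1), ("p2", 300), ("d2", 1), ("p3", 900)]
batch = schedule_batch(candidates, token_budget=1024)
print(batch)                 # {'p1': 500, 'd1': 1, 'p2': 300, 'd2': 1}
print(reorder_batch(batch))  # ['d1', 'd2', 'p1', 'p2']
```

Whether `p3` runs is settled entirely in stage 1, which is where the chunked-prefill setting matters. Stage 2 only changes positions, which supports the observation in the question.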

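Main goals

1. Place decode requests first

Reordering moves decode requests to the front of the batch. Because the whole batch runs in a single forward pass, this does not shorten how long decodes wait; it groups them for the attention backend: 1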

```python
def reorder_batch_to_split_decodes_and_prefills(
    input_batch: "InputBatch",
    scheduler_output: "SchedulerOutput",
    decode_threshold: int = 1,
) -> bool:
    """
    Reorders the batch to split into prefill and decode requests; places all
    requests with <= decode_threshold tokens at the front of the batch.
    """
```

2. Separate computation types

Requests are split into 4 regions so that the attention backend can optimize each one: 2

```python
# The batch is reordered into 4 regions:
#   decode:       (num_scheduled <= threshold AND is not prefilling)
#   short_extend: (num_scheduled <= threshold AND is chunked prefilling)
#   long_extend:  (num_scheduled > threshold AND is chunked prefilling)
#   prefill:      (num_computed == 0)  # First chunks
```

3. ITL performance

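Inter-token latency (ITL) depends mainly on how many prefill tokens share a step with decodes. The scheduler controls this through `max_num_batched_tokens`, and the cited documentation describes that setting rather than the reordering itself: 3

```text
- Smaller values (e.g., 2048) achieve better inter-token latency (ITL) because there are fewer prefills slowing down decodes.
```

Implementation mechanism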

When it is called

Reordering happens in the model runner, after the batch has been built: 4

```python
def _may_reorder_batch(self, scheduler_output: "SchedulerOutput") -> None:
    """
    Update the order of requests in the batch based on the attention
    backend's needs. For example, some attention backends (namely MLA) may
    want to separate requests based on if the attention computation will be
    compute-bound or memory-bound.
    """
```

Ordering

Reordering arranges requests in the following order: 5

```python
# Desired order: decode → short_extend → long_extend → prefill
req_regions = np.zeros(num_reqs, dtype=np.int32)  # 0 = decode by default
req_regions[is_short_extend] = 1
req_regions[is_long_extend] = 2
req_regions[is_pure_prefill] = 3
```

Performance benefits

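- Kernel specialization: the decode part and the prefill part of the batch can each be handled by an attention path suited to it.
- Compute-bound vs memory-bound work: decodes tend to be memory-bound and prefills compute-bound, so separating them lets the backend treat each appropriately.
- Backend requirements: some attention backends (such as MLA) need requests of different types to be separated.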

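Notes

- Reordering is optional: `_may_reorder_batch` calls it only when the model runner's `reorder_batch_threshold` is not `None`.
- It mainly improves performance for latency-sensitive workloads.
- It works whether chunked prefill is enabled or disabled.
- Test cases verify the reordering behavior in a variety of scenarios 6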
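The swap loop at the end of the cited `reorder_batch_to_split_decodes_and_prefills` can be replayed on a plain Python list. This shows how it reaches the target layout. The sketch makes these assumptions:

- `reorder_by_swaps` is a hypothetical wrapper that takes precomputed region labels (0 = decode, 1 = short_extend, 2 = long_extend, 3 = prefill).
- `input_batch.swap_states(src, dst)` is assumed to be equivalent to exchanging two list positions.

How the loop works:

- Each `src_dest_map` entry says that the request at `src` belongs in slot `dst`.
- After a swap, the request displaced into `src` is sent on to its own destination.
- The inner `while` therefore follows one permutation cycle until it closes.

```python
import numpy as np


def reorder_by_swaps(items: list, regions: list[int]) -> bool:
    """Replay the quoted swap loop on `items` in place; True if modified."""
    regions = np.asarray(regions)
    # Target layout: same counts, grouped as 0s, 1s, 2s, 3s.
    counts = np.bincount(regions, minlength=4)
    target = np.repeat(np.arange(4), counts)
    needs_swap = regions != target
    if not needs_swap.any():
        return False
    orig_indices = np.where(needs_swap)[0]
    sorted_order = np.argsort(regions[needs_swap], kind="stable")
    src_indices = orig_indices[sorted_order]
    # src -> dst: the request currently at `src` belongs in slot `dst`.
    src_dest_map = {int(s): int(d) for s, d in zip(src_indices, orig_indices)}
    for src in src_dest_map:
        dst = src_dest_map[src]
        while src != dst:
            # Stand-in for input_batch.swap_states(src, dst).
            items[src], items[dst] = items[dst], items[src]
            next_dst = src_dest_map.get(dst, dst)
            src_dest_map[dst] = dst  # slot dst now holds its final request
            dst = next_dst
    return True


# Region labels of the "complicated_mixed" test case.
batch = list(range(9))
print(reorder_by_swaps(batch, [0, 0, 3, 2, 0, 3, 0, 3, 0]))  # True
print(batch)  # [0, 1, 6, 8, 4, 3, 2, 7, 5]
```

The resulting orders match `expected_order` for the `complicated_mixed` and `mixed_decodes_long_extends` cases in the test file cited below. Unlike a stable sort, the swaps do not preserve the original order within a region; in exchange, each request moves at most once per cycle step.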


Citations

File: vllm/v1/attention/backends/utils.py (L588-665)

```python
def reorder_batch_to_split_decodes_and_prefills(
    input_batch: "InputBatch",
    scheduler_output: "SchedulerOutput",
    decode_threshold: int = 1,
) -> bool:
    """
    Reorders the batch to split into prefill and decode requests; places all
    requests with <= decode_threshold tokens at the front of the batch.

    The batch is reordered into 4 regions:
      decode:       (num_scheduled <= threshold AND is not prefilling)
      short_extend: (num_scheduled <= threshold AND is chunked prefilling)
      long_extend:  (num_scheduled > threshold AND is chunked prefilling)
      prefill:      (num_computed == 0)  # First chunks

    Returns:
        True if the batch was modified, False otherwise.
    """
    num_reqs = len(input_batch.req_ids)
    num_scheduled_tokens = [
        scheduler_output.num_scheduled_tokens[id] for id in input_batch.req_ids
    ]
    num_scheduled_tokens_np = np.array(num_scheduled_tokens)
    num_computed_tokens_np = input_batch.num_computed_tokens_cpu[:num_reqs]
    num_prompt_tokens_np = input_batch.num_prompt_tokens[:num_reqs]

    has_context = num_computed_tokens_np > 0
    is_below_threshold = num_scheduled_tokens_np <= decode_threshold
    done_prefilling = num_computed_tokens_np >= num_prompt_tokens_np

    # Mutually exclusive categories (exactly one True per request):
    # 1. No context yet -> prefill
    # 2. Has context, above threshold -> long_extend
    # 3. Has context, below threshold, still prefilling -> short_extend
    # 4. Has context, below threshold, done prefilling -> decode
    is_pure_prefill = ~has_context
    is_long_extend = has_context & ~is_below_threshold
    is_short_extend = has_context & is_below_threshold & ~done_prefilling
    is_decode = has_context & is_below_threshold & done_prefilling

    # Desired order: decode → short_extend → long_extend → prefill
    req_regions = np.zeros(num_reqs, dtype=np.int32)  # 0 = decode by default
    req_regions[is_short_extend] = 1
    req_regions[is_long_extend] = 2
    req_regions[is_pure_prefill] = 3

    num_decodes = int(is_decode.sum())
    num_short_extends = int(is_short_extend.sum())
    num_long_extends = int(is_long_extend.sum())
    num_prefills = int(is_pure_prefill.sum())

    target_regions = np.repeat(
        [0, 1, 2, 3],
        [num_decodes, num_short_extends, num_long_extends, num_prefills],
    ).astype(np.int32)

    needs_swap = req_regions != target_regions
    if not needs_swap.any():
        return False

    # Extract indices that need swapping and sort by target region
    orig_indices = np.where(needs_swap)[0]
    sorted_order = np.argsort(req_regions[needs_swap], kind="stable")
    src_indices = orig_indices[sorted_order]

    src_dest_map = {int(src): int(dst) for src, dst in zip(src_indices, orig_indices)}

    for src in src_dest_map:
        dst = src_dest_map[src]
        while src != dst:
            input_batch.swap_states(src, dst)
            # Mark dst as done by updating its destination to itself
            next_dst = src_dest_map.get(dst, dst)
            src_dest_map[dst] = dst
            dst = next_dst

    return True
```

File: docs/configuration/optimization.md (L54-59)

```markdown
You can tune the performance by adjusting `max_num_batched_tokens`:

- Smaller values (e.g., 2048) achieve better inter-token latency (ITL) because there are fewer prefills slowing down decodes.
- Higher values achieve better time to first token (TTFT) as you can process more prefill tokens in a batch.
- For optimal throughput, we recommend setting `max_num_batched_tokens > 8192` especially for smaller models on large GPUs.
- If `max_num_batched_tokens` is the same as `max_model_len`, that's almost the equivalent to the V0 default scheduling policy (except that it still prioritizes decodes).
```

File: vllm/v1/worker/gpu_model_runner.py (L999-1023)

```python
def _may_reorder_batch(self, scheduler_output: "SchedulerOutput") -> None:
    """
    Update the order of requests in the batch based on the attention
    backend's needs. For example, some attention backends (namely MLA) may
    want to separate requests based on if the attention computation will be
    compute-bound or memory-bound.

    Args:
        scheduler_output: The scheduler output.
    """
    # Attention free models have zero kv_cache_groups, however models
    # like Mamba are also attention free but use the kv_cache for
    # keeping its internal state. This is why we check the number
    # of kv_cache groups instead of solely checking
    # for self.model_config.is_attention_free.
    if len(self.kv_cache_config.kv_cache_groups) == 0:
        return

    if self.reorder_batch_threshold is not None:
        reorder_batch_to_split_decodes_and_prefills(
            self.input_batch,
            scheduler_output,
            decode_threshold=self.reorder_batch_threshold,
        )
```

File: tests/v1/attention/test_batch_reordering.py (L44-170)

```python
# Test cases for batch reordering
# Format: (num_scheduled, num_computed, num_prompt)
REORDER_TEST_CASES = {
    "all_decodes": ReorderTestCase(
        requests=[(1, 10, 10), (1, 20, 20), (1, 30, 30)],
        expected_order=[0, 1, 2],
        expected_modified=False,
    ),
    "all_long_extends": ReorderTestCase(
        requests=[(100, 100, 100), (200, 200, 200), (300, 300, 300)],
        expected_order=[0, 1, 2],
        expected_modified=False,
    ),
    "mixed_decodes_long_extends": ReorderTestCase(
        requests=[(100, 100, 100), (1, 10, 10), (200, 200, 200), (1, 20, 20)],
        expected_order=[3, 1, 2, 0],
        expected_modified=True,
    ),
    "already_ordered": ReorderTestCase(
        requests=[(1, 10, 10), (1, 20, 20), (100, 100, 100), (200, 0, 200)],
        expected_order=[0, 1, 2, 3],
        expected_modified=False,
    ),
    "single_request": ReorderTestCase(
        requests=[(1, 10, 10)],
        expected_order=[0],
        expected_modified=False,
    ),
    "higher_threshold": ReorderTestCase(
        requests=[(2, 10, 10), (3, 20, 20), (5, 30, 30), (6, 40, 40)],
        expected_order=[0, 1, 2, 3],
        expected_modified=False,
        decode_threshold=4,
    ),
    "decodes_at_end": ReorderTestCase(
        requests=[(100, 100, 100), (200, 200, 200), (1, 10, 10), (1, 20, 20)],
        expected_order=[2, 3, 0, 1],
        expected_modified=True,
    ),
    "decode_long_extend_prefill": ReorderTestCase(
        requests=[(100, 0, 100), (10, 50, 50), (1, 10, 10)],
        expected_order=[2, 1, 0],
        expected_modified=True,
    ),
    "long_extend_prefill_only": ReorderTestCase(
        requests=[(100, 0, 100), (10, 50, 50), (200, 0, 200), (20, 75, 75)],
        expected_order=[3, 1, 2, 0],
        expected_modified=True,
    ),
    "complicated_mixed": ReorderTestCase(
        requests=[
            (1, 20, 20),  # decode
            (1, 50, 50),  # decode
            (374, 0, 374),  # prefill
            (300, 20, 20),  # long_extend
            (1, 20, 20),  # decode
            (256, 0, 256),  # prefill
            (1, 5, 5),  # decode
            (27, 0, 27),  # prefill
            (1, 4, 4),  # decode
        ],
        expected_order=[0, 1, 6, 8, 4, 3, 2, 7, 5],
        expected_modified=True,
    ),
    "new_request_single_token_prefill": ReorderTestCase(
        requests=[
            (100, 0, 100),  # prefill
            (1, 0, 1),  # prefill (single token, still prefill)
            (50, 100, 100),  # long_extend
            (1, 10, 10),  # decode
        ],
        expected_order=[3, 2, 0, 1],
        expected_modified=True,
    ),
    "multiple_new_requests_single_token_prefill": ReorderTestCase(
        requests=[
            (1, 0, 1),  # prefill
            (1, 0, 1),  # prefill
            (1, 50, 50),  # decode
            (200, 0, 200),  # prefill
        ],
        expected_order=[2, 1, 0, 3],
        expected_modified=True,
    ),
    "four_way_already_ordered": ReorderTestCase(
        requests=[
            (1, 100, 100),  # decode
            (1, 50, 100),  # short_extend
            (10, 50, 100),  # long_extend
            (100, 0, 100),  # prefill
        ],
        expected_order=[0, 1, 2, 3],
        expected_modified=False,
    ),
    "four_way_needs_reorder": ReorderTestCase(
        requests=[
            (100, 0, 100),  # prefill
            (1, 50, 100),  # short_extend
            (1, 100, 100),  # decode
            (10, 50, 100),  # long_extend
        ],
        expected_order=[2, 1, 3, 0],
        expected_modified=True,
    ),
    "four_way_multiple_short_extends": ReorderTestCase(
        requests=[
            (2, 100, 100),  # decode
            (2, 50, 200),  # short_extend
            (2, 75, 150),  # short_extend
            (2, 200, 200),  # decode
        ],
        expected_order=[0, 3, 2, 1],
        expected_modified=True,
        decode_threshold=2,
    ),
    "four_way_spec_decode_threshold": ReorderTestCase(
        requests=[
            (5, 100, 100),  # decode
            (5, 50, 100),  # short_extend
            (5, 0, 100),  # prefill
            (10, 50, 100),  # long_extend
        ],
        expected_order=[0, 1, 3, 2],
        expected_modified=True,
        decode_threshold=5,
    ),
}
```