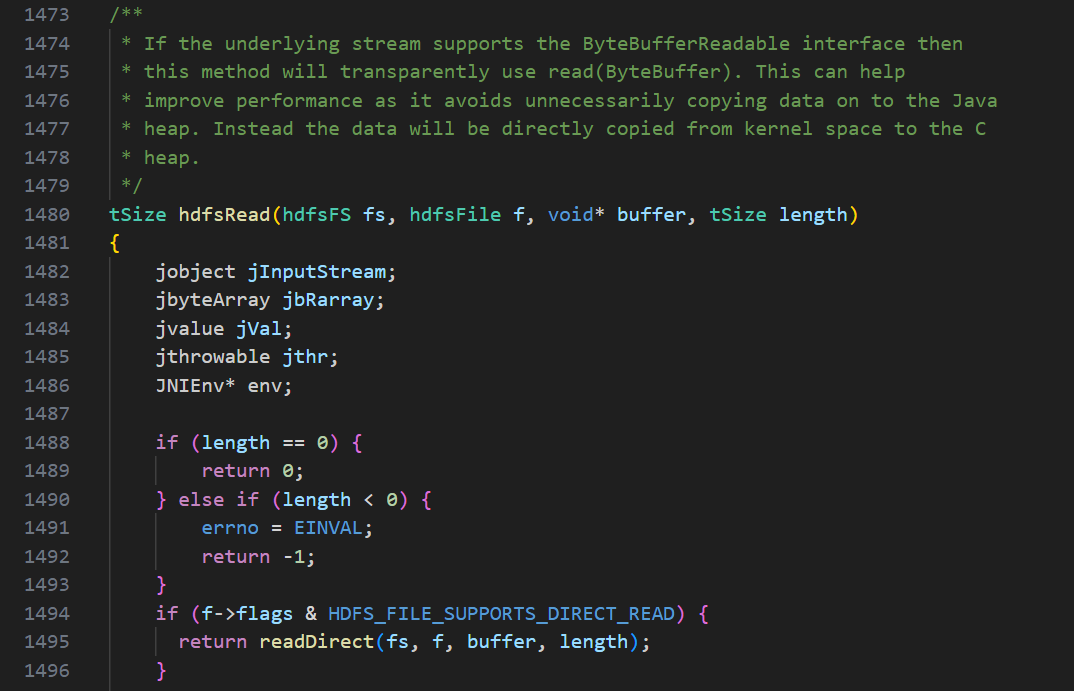

hdfsRead

readDirect

csharp

tSize readDirect(hdfsFS fs, hdfsFile f, void* buffer, tSize length)

{

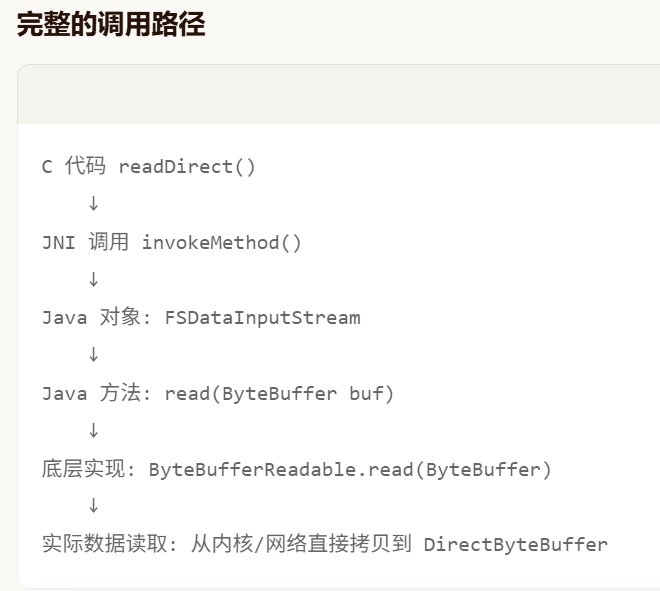

// JAVA EQUIVALENT:

// ByteBuffer buf = ByteBuffer.allocateDirect(length) // wraps C buffer

// fis.read(buf);

jobject jInputStream;

jvalue jVal;

jthrowable jthr;

jobject bb;

//Get the JNIEnv* corresponding to current thread

JNIEnv* env = getJNIEnv();

if (env == NULL) {

errno = EINTERNAL;

return -1;

}

if (readPrepare(env, fs, f, &jInputStream) == -1) {

return -1;

}

//Read the requisite bytes

bb = (*env)->NewDirectByteBuffer(env, buffer, length);

if (bb == NULL) {

errno = printPendingExceptionAndFree(env, PRINT_EXC_ALL,

"readDirect: NewDirectByteBuffer");

return -1;

}

jthr = invokeMethod(env, &jVal, INSTANCE, jInputStream,

JC_FS_DATA_INPUT_STREAM, "read",

"(Ljava/nio/ByteBuffer;)I", bb);

destroyLocalReference(env, bb);

if (jthr) {

errno = printExceptionAndFree(env, jthr, PRINT_EXC_ALL,

"readDirect: FSDataInputStream#read");

return -1;

}

// Reached EOF, return 0

if (jVal.i < 0) {

return 0;

}

// 0 bytes read, return error

if (jVal.i == 0) {

errno = EINTR;

return -1;

}

return jVal.i;

}
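The return convention of `readDirect` above (a positive byte count, 0 at EOF, -1 with `errno` set, and `EINTR` when the Java side read zero bytes) suggests how a caller might loop to fill a buffer. The following is a minimal sketch under assumptions: `read_fn`, `read_fully`, `mock_stream` and `mock_read` are hypothetical names, not libhdfs API, and the in-memory reader only stands in for a real HDFS stream.

```c
#include <errno.h>
#include <stddef.h>
#include <string.h>

/* Hypothetical reader callback: >0 bytes read, 0 at EOF, -1 on error (errno set). */
typedef int (*read_fn)(void *ctx, void *buf, int len);

/* Keep reading until `len` bytes arrive, EOF is hit, or a real error occurs.
 * Returns the number of bytes read, or -1 on error. */
int read_fully(read_fn rd, void *ctx, void *buf, int len)
{
    int total = 0;
    while (total < len) {
        int n = rd(ctx, (char *)buf + total, len - total);
        if (n > 0) {
            total += n;
        } else if (n == 0) {
            break;                 /* EOF */
        } else if (errno == EINTR) {
            continue;              /* zero-byte read reported as EINTR: retry */
        } else {
            return -1;             /* genuine error, errno is left untouched */
        }
    }
    return total;
}

/* In-memory stand-in for a stream; returns at most 3 bytes per call
 * so that the loop above has to iterate. */
struct mock_stream { const char *data; int size; int pos; };

int mock_read(void *ctx, void *buf, int len)
{
    struct mock_stream *s = ctx;
    int left = s->size - s->pos;
    if (left <= 0) return 0;
    int n = len < 3 ? len : 3;
    if (n > left) n = left;
    memcpy(buf, s->data + s->pos, (size_t)n);
    s->pos += n;
    return n;
}
```

With a real file handle, the callback would wrap `hdfsRead`; retrying indefinitely on `EINTR` is a design choice of this sketch and a production caller may prefer a retry limit.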

```
org.apache.hadoop.fs.FSDataInputStream
public int read(java.nio.ByteBuffer buf) throws IOException
```

hadoop-common-project/hadoop-common/src/main/java/org/apache/hadoop/fs/FSDataInputStream.java

```java
@Override
public int read(ByteBuffer buf) throws IOException {
  if (in instanceof ByteBufferReadable) {
    return ((ByteBufferReadable)in).read(buf);
  }

  throw new UnsupportedOperationException("Byte-buffer read unsupported " +
          "by " + in.getClass().getCanonicalName());
}
```

csharp

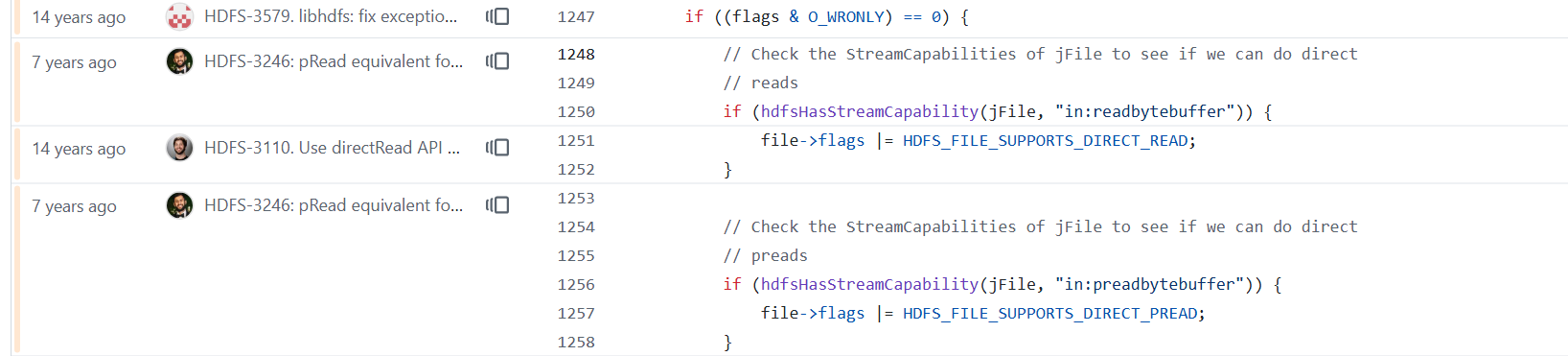

if ((flags & O_WRONLY) == 0) {

// Check the StreamCapabilities of jFile to see if we can do direct

// reads

if (hdfsHasStreamCapability(jFile, "in:readbytebuffer")) {

file->flags |= HDFS_FILE_SUPPORTS_DIRECT_READ;

}

// Check the StreamCapabilities of jFile to see if we can do direct

// preads

if (hdfsHasStreamCapability(jFile, "in:preadbytebuffer")) {

file->flags |= HDFS_FILE_SUPPORTS_DIRECT_PREAD;

}

}

ret = 0;
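The capability flags set above are plain bits in the file handle's `flags` field, so a later read can branch on them cheaply. Below is a minimal sketch of that pattern. Everything in it is assumed for illustration: `demo_file`, `has_capability`, `set_capability_flags`, `pick_read_path` and the `DEMO_*` bit values are hypothetical and are not the actual libhdfs definitions; only the two capability strings come from the snippet above.

```c
#include <stddef.h>
#include <string.h>

/* Illustrative bit values; the real libhdfs constants may differ. */
#define DEMO_SUPPORTS_DIRECT_READ  (1 << 0)
#define DEMO_SUPPORTS_DIRECT_PREAD (1 << 1)

struct demo_file { int flags; };

/* Stand-in for a stream-capability query: `caps` is a NULL-terminated
 * list of capability strings the stream claims to support. */
int has_capability(const char *const *caps, const char *name)
{
    for (; *caps != NULL; caps++) {
        if (strcmp(*caps, name) == 0) return 1;
    }
    return 0;
}

/* Record each supported capability as a bit on the file handle. */
void set_capability_flags(struct demo_file *f, const char *const *caps)
{
    if (has_capability(caps, "in:readbytebuffer"))
        f->flags |= DEMO_SUPPORTS_DIRECT_READ;
    if (has_capability(caps, "in:preadbytebuffer"))
        f->flags |= DEMO_SUPPORTS_DIRECT_PREAD;
}

/* Returns "direct" when the ByteBuffer path may be used, else "copy". */
const char *pick_read_path(const struct demo_file *f)
{
    return (f->flags & DEMO_SUPPORTS_DIRECT_READ) ? "direct" : "copy";
}
```

A stream that does not advertise `in:readbytebuffer` simply leaves the bit clear, and the reader falls back to the copying path without raising an error.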

csharp

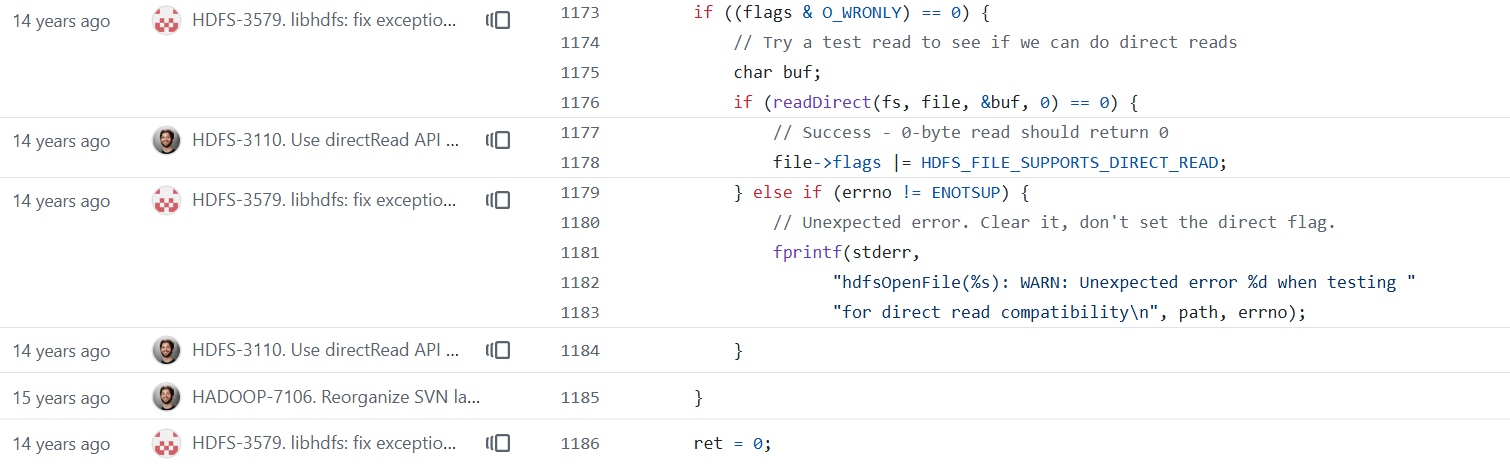

if ((flags & O_WRONLY) == 0) {

// Try a test read to see if we can do direct reads

char buf;

if (readDirect(fs, file, &buf, 0) == 0) {

// Success - 0-byte read should return 0

file->flags |= HDFS_FILE_SUPPORTS_DIRECT_READ;

} else if (errno != ENOTSUP) {

// Unexpected error. Clear it, don't set the direct flag.

fprintf(stderr,

"hdfsOpenFile(%s): WARN: Unexpected error %d when testing "

"for direct read compatibility\n", path, errno);

}

}

ret = 0;
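The check above is a feature probe: it issues a zero-length direct read and treats `ENOTSUP` as "not supported" rather than as a failure worth reporting. A minimal sketch of that probing pattern follows, with mock readers in place of the JNI call. The names `probe_direct_read`, `direct_ok`, `direct_unsupported`, `direct_broken` and the `DEMO_SUPPORTS_DIRECT_READ` value are hypothetical and chosen only for this example.

```c
#include <errno.h>

#define DEMO_SUPPORTS_DIRECT_READ (1 << 0)  /* illustrative value */

/* Hypothetical direct-read callback: returns bytes read, or -1 with errno set. */
typedef int (*direct_read_fn)(void *buf, int len);

/* Probe with a zero-length read. Returns the flag bits to OR into the handle.
 * *unexpected receives the errno value when the failure is not ENOTSUP,
 * and 0 otherwise, so the caller can decide whether to log a warning. */
int probe_direct_read(direct_read_fn rd, int *unexpected)
{
    char buf;
    *unexpected = 0;
    errno = 0;
    if (rd(&buf, 0) == 0) {
        return DEMO_SUPPORTS_DIRECT_READ;   /* 0-byte read succeeded */
    }
    if (errno != ENOTSUP) {
        *unexpected = errno;                /* failed for some other reason */
    }
    return 0;
}

/* Mock readers covering the three outcomes of the probe. */
int direct_ok(void *buf, int len) { (void)buf; return len; }
int direct_unsupported(void *buf, int len) { (void)buf; (void)len; errno = ENOTSUP; return -1; }
int direct_broken(void *buf, int len) { (void)buf; (void)len; errno = EIO; return -1; }
```

Compared with querying a capability string, a probe like this costs one extra call per open and depends on the error code surviving the trip back from the callee.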

```java
/**
 * Stream read(ByteBuffer) capability implemented by
 * {@link ByteBufferReadable#read(java.nio.ByteBuffer)}.
 */
String READBYTEBUFFER = "in:readbytebuffer";

/**
 * Stream read(long, ByteBuffer) capability implemented by
 * {@link ByteBufferPositionedReadable#read(long, java.nio.ByteBuffer)}.
 */
String PREADBYTEBUFFER = "in:preadbytebuffer";
```

Check which shared libraries the process has loaded

```
3747188 Jps
[root@hadoop00 test]#
[root@hadoop00 test]#
[root@hadoop00 test]# lsof -p 3745282 | grep hdfs
java 3745282 root mem REG 8,4 307760 1282588866 /usr/local/hadoop-3.3.3/lib/native/libhdfs.so.0.0.0
java 3745282 root mem REG 8,4 5023516 1623503278 /usr/local/spark-3.1.1-bin-hadoop3.2/jars/hadoop-hdfs-client-3.2.0.jar
java 3745282 root 279r REG 8,4 5023516 1623503278 /usr/local/spark-3.1.1-bin-hadoop3.2/jars/hadoop-hdfs-client-3.2.0.jar
```