OpenStack

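OpenStack Flamingo balances stability and security to meet the needs of a growing user base, as the community celebrates its 15th anniversary.

OpenStack 2025.2 (Flamingo) has been officially released. It is the 32nd release of the world's most widely deployed open source cloud infrastructure software. The milestone arrives as OpenStack is expected to surpass 55 million cores in production worldwide, underscoring its role as a reliable, resilient, and innovative foundation for digital infrastructure.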

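- Several OpenStack components gained new security features:

Nova now supports one-time-use passthrough devices. Such a device is assigned to a single instance, and after the instance is deleted the device stays reserved instead of becoming available again automatically. This lets operators run any required security checks or hardware resets before the device is reused.

Nova also adds support for AMD Secure Encrypted Virtualization-Encrypted State (SEV-ES) with libvirt, extending Nova's confidential computing capabilities to protect both guest memory and CPU register state.

Magnum adds a new API endpoint that rotates credentials in an existing cluster. It can be used to transfer cluster ownership or to recreate credentials that are no longer valid.

Manila now supports bring-your-own encryption keys for accessing encrypted share servers.

Horizon can now display a QR code for setting up a new TOTP in an authenticator app on your phone.

- Another major theme of the Flamingo release is a significant reduction in OpenStack's dependency on Eventlet.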

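Eventlet was introduced early in OpenStack's history to power asynchronous operations. Python 3 later introduced asyncio, a more native way to handle asynchronous operations, and maintenance of Eventlet gradually dwindled.

Migrating OpenStack away from Eventlet is a massive undertaking, but paying back this technical debt is necessary for OpenStack's long-term sustainability. Octavia completed its migration as early as 2017, paving the way for the others.

During the Flamingo development cycle, Ironic, Mistral, Barbican, and Heat all migrated off Eventlet completely! The Neutron API, RPC, agents (metadata, DHCP, L3, and others), workers, and all related code were migrated as well, as were the Nova API, metadata, and scheduler services.

- Other notable improvements introduced in the Flamingo release include: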

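Designate adds support for Service Binding (SVCB) and HTTPS resource records, which significantly reduces handshake latency for connections to new endpoints.

Manila adds support for restoring a share backup to a share other than the source.

Nova, through the libvirt driver, supports QEMU's memory balloon auto-deflate and free page reporting features, which improves memory performance.

Watcher's host maintenance strategy now offers fine-grained control over live and cold migration scenarios, so operators can control workload placement precisely during maintenance.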

Initialize the environment

Single-node deployment

bash

root@huhy:~# hostnamectl

Static hostname: huhy

Icon name: computer-vm

Chassis: vm 🖴

Machine ID: c8a9b7f5efae45c5bc6a260f67e2100d

Boot ID: dba9ff6f5af04b7bad94fbc609934829

Virtualization: vmware

Operating System: Ubuntu 24.04 LTS

Kernel: Linux 6.8.0-31-generic

Architecture: x86-64

Hardware Vendor: VMware, Inc.

Hardware Model: VMware Virtual Platform

Firmware Version: 6.00

Firmware Date: Thu 2020-11-12

Firmware Age: 5y 4month 4w 2d

bash

root@huhy:~# ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host noprefixroute

valid_lft forever preferred_lft forever

2: ens33: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UP group default qlen 1000

link/ether 00:0c:29:64:d5:35 brd ff:ff:ff:ff:ff:ff

altname enp2s1

inet 192.168.200.160/24 brd 192.168.200.255 scope global ens33

valid_lft forever preferred_lft forever

inet6 fe80::20c:29ff:fe64:d535/64 scope link

valid_lft forever preferred_lft forever

3: ens34: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UP group default qlen 1000

link/ether 00:0c:29:64:d5:3f brd ff:ff:ff:ff:ff:ff

altname enp2s2

inet6 fe80::20c:29ff:fe64:d53f/64 scope link

valid_lft forever preferred_lft forever

- Initialize the environment: passwordless SSH, hostnames, and time synchronization

bash

vi init.sh
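The script that follows stores each node as one string of the form "IP hostname user" and pulls the fields out with three separate awk calls. As a minimal sketch, not part of the original script, the same split can be done with the shell's built-in `read`. The function name `parse_node` and the variables `node_ip`, `node_host`, and `node_user` are hypothetical names introduced here for illustration.

```bash
# Hypothetical helper: split one "IP hostname user" entry into three variables.
# Sets the globals node_ip, node_host, and node_user.
parse_node() {
    read -r node_ip node_host node_user <<EOF
$1
EOF
}

# Same entry format as the NODES array in the script
parse_node "192.168.200.160 controller root"
echo "ip=$node_ip host=$node_host user=$node_user"
```

Reading all three fields in one step avoids spawning awk three times per node and keeps the field order in a single place.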

bash

#!/bin/bash

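# Node definitions, one entry per node: "IP hostname user"

NODES=("192.168.200.160 controller root")

# Password of the current node (the same password is assumed across the cluster)

HOST_PASS="000000"

# Node that acts as the time synchronization source

TIME_SERVER=controller

# Address range allowed to synchronize time

TIME_SERVER_IP=192.168.200.0/24

# Welcome banner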

cat > /etc/motd <<EOF

################################

# Welcome to openstack #

################################

EOF

# Set the hostname

for node in "${NODES[@]}"; do

ip=$(echo "$node" | awk '{print $1}')

hostname=$(echo "$node" | awk '{print $2}')

# Get the IP address and hostname of the current node

current_ip=$(hostname -I | awk '{print $1}')

current_hostname=$(hostname)

# Check whether the current node matches the entry being processed

if [[ "$current_ip" == "$ip" && "$current_hostname" != "$hostname" ]]; then

echo "Updating hostname to $hostname on $current_ip..."

hostnamectl set-hostname "$hostname"

if [ $? -eq 0 ]; then

echo "Hostname updated successfully."

else

echo "Failed to update hostname."

fi

break

fi

done

# Loop over the nodes and add each one to the hosts file

for node in "${NODES[@]}"; do

ip=$(echo "$node" | awk '{print $1}')

hostname=$(echo "$node" | awk '{print $2}')

# Check whether the hosts file already contains this entry

if grep -q "$ip $hostname" /etc/hosts; then

echo "Host entry for $hostname already exists in /etc/hosts."

else

# Append the node's name resolution entry to the hosts file

sudo sh -c "echo '$ip $hostname' >> /etc/hosts"

echo "Added host entry for $hostname in /etc/hosts."

fi

done

if [[ ! -s ~/.ssh/id_rsa.pub ]]; then

ssh-keygen -t rsa -N '' -f ~/.ssh/id_rsa -q -b 2048

fi

# Check for the sshpass tool and install it if it is missing

if ! which sshpass &> /dev/null; then

echo "sshpass is not installed; installing sshpass..."

sudo apt-get install -y sshpass

fi

# Loop over all nodes and set up passwordless SSH

for node in "${NODES[@]}"; do

ip=$(echo "$node" | awk '{print $1}')

hostname=$(echo "$node" | awk '{print $2}')

user=$(echo "$node" | awk '{print $3}')

# Supply the password through sshpass and accept the host key automatically

sshpass -p "$HOST_PASS" ssh-copy-id -o StrictHostKeyChecking=no -i /root/.ssh/id_rsa.pub "$user@$hostname"

done

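# Time synchronization

apt install -y chrony

# The node whose hostname matches TIME_SERVER acts as the time source

if [[ "$(hostname)" == "$TIME_SERVER" ]]; then

# Configure the current node as the time source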

sed -i '20,23s/^/#/g' /etc/chrony/chrony.conf

echo "server $TIME_SERVER iburst maxsources 2" >> /etc/chrony/chrony.conf

echo "allow $TIME_SERVER_IP" >> /etc/chrony/chrony.conf

echo "local stratum 10" >> /etc/chrony/chrony.conf

else

# Configure the current node to synchronize with the time source

sed -i '20,23s/^/#/g' /etc/chrony/chrony.conf

echo "pool $TIME_SERVER iburst maxsources 2" >> /etc/chrony/chrony.conf

fi

# Restart and enable the chrony service

systemctl restart chronyd

systemctl enable chrony

echo "###############################################################"

echo "################# Cluster initialization complete #####################"

echo "###############################################################"

Configure environment variables

bash

mkdir /etc/openstack/

bash

cat > /etc/openstack/openrc.sh << eof

#--------------------system Config--------------------##

#Controller Server Manager IP. example:x.x.x.x

HOST_IP=192.168.200.160

#Controller HOST Password. example:000000

HOST_PASS=000000

#Controller Server hostname. example:controller

HOST_NAME=controller

#--------------------Rabbit Config ------------------##

#user for rabbit. example:openstack

RABBIT_USER=openstack

#Password for rabbit user. example:000000

RABBIT_PASS=000000

#--------------------MySQL Config---------------------##

#Password for MySQL root user. example:000000

DB_PASS=000000

#--------------------Keystone Config------------------##

#Password for Keystone admin user. example:000000

DOMAIN_NAME=default

ADMIN_PASS=000000

DEMO_PASS=000000

#Password for MySQL keystone user. example:000000

KEYSTONE_DBPASS=000000

#--------------------Glance Config--------------------##

#Password for MySQL glance user. example:000000

GLANCE_DBPASS=000000

#Password for Keystone glance user. example:000000

GLANCE_PASS=000000

#--------------------Placement Config----------------------##

#Password for MySQL placement user. example:000000

PLACEMENT_DBPASS=000000

#Password for Keystone placement user. example:000000

PLACEMENT_PASS=000000

#--------------------Nova Config----------------------##

#Password for MySQL nova user. example:000000

NOVA_DBPASS=000000

#Password for Keystone nova user. example:000000

NOVA_PASS=000000

#--------------------Neutron Config-------------------##

#Password for MySQL neutron user. example:000000

NEUTRON_DBPASS=000000

#Password for Keystone neutron user. example:000000

NEUTRON_PASS=000000

#metadata secret for neutron. example:000000

METADATA_SECRET=000000

#External Network Interface. example:eth1

INTERFACE_NAME=ens34

#OVS bridge used to create the OVS network

OVS_NAME=br-ens34

#Physical network name for the external network. example:provider

Physical_NAME=provider

#First Vlan ID in VLAN RANGE for VLAN Network. example:101

minvlan=1

#Last Vlan ID in VLAN RANGE for VLAN Network. example:200

maxvlan=1000

eof

Configure the package repositories

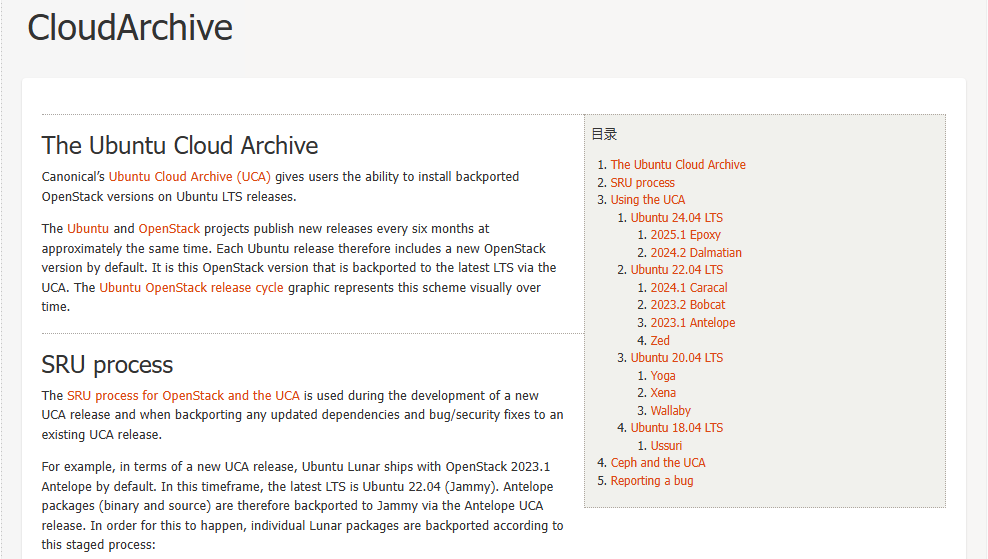

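Ubuntu and the OpenStack project each publish a new release roughly every six months, at about the same time. Each Ubuntu release therefore ships a new OpenStack release by default, and that OpenStack release is also backported to the latest LTS through the Ubuntu Cloud Archive (UCA). The Ubuntu OpenStack release cycle chart shows this process visually.

Ubuntu 24.04 LTS uses the OpenStack C release (Caracal) packages by default, and the D (Dalmatian) or E (Epoxy) archives can be enabled instead as needed. This walkthrough uses the F release, Flamingo, which requires a fix for a dependency problem in the Keystone component.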
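The three repository choices differ only in the archive name passed to `add-apt-repository`. As a small sketch under that assumption, the mapping from release letter to archive name can be kept in one place. The function name `uca_pocket` is a hypothetical helper, and the command is only printed here rather than executed.

```bash
# Hypothetical helper: map a release letter to its Ubuntu Cloud Archive name.
uca_pocket() {
    case "$1" in
        d|D) echo "cloud-archive:dalmatian" ;;
        e|E) echo "cloud-archive:epoxy" ;;
        f|F) echo "cloud-archive:flamingo" ;;
        *)   echo "unknown release: $1" >&2; return 1 ;;
    esac
}

# Print the command instead of running it, so the sketch has no side effects
echo "add-apt-repository $(uca_pocket f)"
```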

- Dalmatian (D) repository

bash

apt update

apt install -y software-properties-common

add-apt-repository cloud-archive:dalmatian

- Epoxy (E) repository

bash

apt update

apt install -y software-properties-common

add-apt-repository cloud-archive:epoxy

- Flamingo (F) repository

bash

apt update

apt install -y software-properties-common

add-apt-repository cloud-archive:flamingo

Install the database, memcached, RabbitMQ, and related services

bash

vi iaas-install-mysql.sh

bash

#!/bin/bash

source /etc/openstack/openrc.sh

apt update

# install package

apt install -y python3-openstackclient

apt install -y mariadb-server python3-pymysql

cat > /etc/mysql/mariadb.conf.d/99-openstack.cnf << EOF

[mysqld]

bind-address = 0.0.0.0

default-storage-engine = innodb

innodb_file_per_table = on

max_connections = 4096

collation-server = utf8_general_ci

character-set-server = utf8

EOF

systemctl enable --now mariadb

mysql -e "ALTER USER 'root'@'localhost' IDENTIFIED BY '$DB_PASS';"

mysql -uroot -p$DB_PASS -e "FLUSH PRIVILEGES"

systemctl restart mariadb

apt install -y rabbitmq-server

rabbitmqctl add_user $RABBIT_USER $RABBIT_PASS

rabbitmqctl set_permissions $RABBIT_USER ".*" ".*" ".*"

systemctl enable --now rabbitmq-server

apt install -y memcached python3-memcache

sed -i 's/-l 127.0.0.1/-l 0.0.0.0/'g /etc/memcached.conf

systemctl enable --now memcached

echo "################# mariadb,rabbitmq,memcached installation completed ####################"
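Every install script in this walkthrough begins by sourcing /etc/openstack/openrc.sh and then uses its variables without checking them. A guard along the following lines could stop a script early when a value is missing. This is a sketch only: `require_vars` is a hypothetical helper, and the three assignments stand in for the values that would normally come from the sourced file.

```bash
# Hypothetical guard: report every listed variable that is unset or empty.
require_vars() {
    missing=0
    for name in "$@"; do
        # Indirect lookup of the variable called $name. The names are fixed in
        # the script, not taken from user input, so eval is acceptable here.
        eval "value=\${$name:-}"
        if [ -z "$value" ]; then
            echo "missing variable: $name" >&2
            missing=1
        fi
    done
    return "$missing"
}

# Stand-in values; a real script would get these from
# "source /etc/openstack/openrc.sh"
DB_PASS=000000
RABBIT_USER=openstack
RABBIT_PASS=000000

require_vars DB_PASS RABBIT_USER RABBIT_PASS && echo "all variables set"
```

Calling the guard right after the `source` line, for example as `require_vars DB_PASS RABBIT_USER RABBIT_PASS || exit 1`, would fail fast instead of creating a database user with an empty password.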

bash

bash iaas-install-mysql.sh

Install the Keystone service

bash

vi iaas-install-keystone.sh

bash

#!/bin/bash

source /etc/openstack/openrc.sh

#keystone mysql

mysql -uroot -p$DB_PASS -e "create database IF NOT EXISTS keystone ;"

mysql -uroot -p$DB_PASS -e "GRANT ALL PRIVILEGES ON keystone.* TO 'keystone'@'localhost' IDENTIFIED BY '$KEYSTONE_DBPASS' ;"

mysql -uroot -p$DB_PASS -e "GRANT ALL PRIVILEGES ON keystone.* TO 'keystone'@'%' IDENTIFIED BY '$KEYSTONE_DBPASS' ;"

apt install -y keystone

cp /etc/keystone/keystone.conf{,.bak}

cat > /etc/keystone/keystone.conf << eof

[DEFAULT]

log_dir = /var/log/keystone

[application_credential]

[assignment]

[auth]

[cache]

[catalog]

[cors]

[credential]

[database]

connection = mysql+pymysql://keystone:$KEYSTONE_DBPASS@$HOST_NAME/keystone

[domain_config]

[endpoint_filter]

[endpoint_policy]

[eventlet_server]

[extra_headers]

Distribution = Ubuntu

[federation]

[fernet_receipts]

[fernet_tokens]

[healthcheck]

[identity]

[identity_mapping]

[jwt_tokens]

[ldap]

[memcache]

[oauth1]

[oslo_messaging_amqp]

[oslo_messaging_kafka]

[oslo_messaging_notifications]

[oslo_messaging_rabbit]

[oslo_middleware]

[oslo_policy]

[policy]

[profiler]

[receipt]

[resource]

[revoke]

[role]

[saml]

[security_compliance]

[shadow_users]

[token]

provider = fernet

[tokenless_auth]

[totp]

[trust]

[unified_limit]

[wsgi]

eof

su -s /bin/sh -c "keystone-manage db_sync" keystone

keystone-manage fernet_setup --keystone-user keystone --keystone-group keystone

keystone-manage credential_setup --keystone-user keystone --keystone-group keystone

keystone-manage bootstrap --bootstrap-password $ADMIN_PASS \

--bootstrap-admin-url http://$HOST_NAME:5000/v3/ \

--bootstrap-internal-url http://$HOST_NAME:5000/v3/ \

--bootstrap-public-url http://$HOST_NAME:5000/v3/ \

--bootstrap-region-id RegionOne

echo "ServerName $HOST_NAME" >> /etc/apache2/apache2.conf

### Fix start ###

mkdir -p /var/www/keystone

# Create the WSGI entry file

cat > /var/www/keystone/wsgi.py << 'EOF'

from keystone.server.flask import core as flask_core

# Create the application object

application = flask_core.initialize_application(

name='public',

config_files=flask_core._get_config_files()

)

EOF

chown -R keystone:keystone /var/www/keystone

chmod 755 /var/www/keystone

chmod 644 /var/www/keystone/wsgi.py

chmod +x /usr/lib/python3/dist-packages/keystone

chmod +x /usr/lib/python3/dist-packages/keystone/server

chmod +x /usr/lib/python3/dist-packages/keystone/server/flask

mkdir -p /var/log/keystone

chown -R keystone:keystone /var/log/keystone

# Back up the existing configuration

cp /etc/apache2/sites-available/keystone.conf /etc/apache2/sites-available/keystone.conf.bak 2>/dev/null

# Write the new configuration file

cat > /etc/apache2/sites-available/keystone.conf << 'EOF'

Listen 5000

<VirtualHost *:5000>

ServerName controller

WSGIDaemonProcess keystone-public processes=5 threads=1 user=keystone group=keystone python-path=/usr/lib/python3/dist-packages:/var/www/keystone

WSGIProcessGroup keystone-public

WSGIScriptAlias / /var/www/keystone/wsgi.py

WSGIApplicationGroup %{GLOBAL}

WSGIPassAuthorization On

LimitRequestBody 114688

ErrorLog /var/log/apache2/keystone.log

CustomLog /var/log/apache2/keystone_access.log combined

<Directory /var/www/keystone>

Require all granted

</Directory>

</VirtualHost>

EOF

systemctl enable --now apache2

### Fix end ###

cat > /etc/keystone/admin-openrc.sh << EOF

export OS_PROJECT_DOMAIN_NAME=Default

export OS_USER_DOMAIN_NAME=Default

export OS_PROJECT_NAME=admin

export OS_USERNAME=admin

export OS_PASSWORD=$ADMIN_PASS

export OS_AUTH_URL=http://$HOST_NAME:5000/v3

export OS_IDENTITY_API_VERSION=3

export OS_IMAGE_API_VERSION=2

EOF

systemctl restart apache2

source /etc/keystone/admin-openrc.sh

openstack project create --domain default --description "Service Project" service

openstack token issue

echo "############################ keystone installation completed ###########################"
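The database setup at the top of the Keystone script (one CREATE DATABASE plus a GRANT for 'localhost' and one for '%') recurs in each later service script with only the names changed. As a sketch, a helper can generate those statements from three arguments. `service_db_sql` is a hypothetical name, and the statements are only printed here so they can be reviewed before being sent to the database.

```bash
# Hypothetical helper: print the SQL that creates a service database and grants
# access from localhost and from any host, mirroring the statements above.
service_db_sql() {
    db=$1 user=$2 pass=$3
    printf 'CREATE DATABASE IF NOT EXISTS %s;\n' "$db"
    for host in localhost %; do
        printf "GRANT ALL PRIVILEGES ON %s.* TO '%s'@'%s' IDENTIFIED BY '%s';\n" \
            "$db" "$user" "$host" "$pass"
    done
}

# Print the statements for Keystone
service_db_sql keystone keystone 000000
```

Once reviewed, the output could be piped into the same `mysql -uroot -p$DB_PASS` client call that the scripts already use.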

bash

bash iaas-install-keystone.sh

- Check the version

bash

root@controller:~# keystone-manage --version

28.0.0

bash

root@controller:~# openstack token issue

+------------+---------------------------------------------------------------------------------------------------------+

| Field | Value |

+------------+---------------------------------------------------------------------------------------------------------+

| expires | 2026-04-13T06:56:17+0000 |

| id | gAAAAABp3IWB8PE4K31LAZzORWJEyDEuC_nLd-e9LRAsiXrX2-wQyvqSbOLBqLTF0aSmQx6GreK7c8-Q8UKtoozd0QPf- |

| | TBlEP2U1rpHHfRXY2G_sXhb7Wx6aPbKs0LvNRiLNTRhw9qp5lB4k3QNHC3GCTKSnejcHvWrnkj7D4rnXnP0BjZFPXA |

| project_id | 4271406a5f574212bf0e66420c94f1e1 |

| user_id | e18635ccb3ac44e4adec58091e480d81 |

+------------+---------------------------------------------------------------------------------------------------------+

Install the Glance service

bash

vi iaas-install-glance.sh

bash

#!/bin/bash

source /etc/openstack/openrc.sh

source /etc/keystone/admin-openrc.sh

#glance mysql

mysql -uroot -p$DB_PASS -e "create database IF NOT EXISTS glance ;"

mysql -uroot -p$DB_PASS -e "GRANT ALL PRIVILEGES ON glance.* TO 'glance'@'localhost' IDENTIFIED BY '$GLANCE_DBPASS' ;"

mysql -uroot -p$DB_PASS -e "GRANT ALL PRIVILEGES ON glance.* TO 'glance'@'%' IDENTIFIED BY '$GLANCE_DBPASS' ;"

openstack user create --domain $DOMAIN_NAME --password $GLANCE_PASS glance

openstack role add --project service --user glance admin

openstack service create --name glance --description "OpenStack Image" image

openstack endpoint create --region RegionOne image public http://$HOST_NAME:9292

openstack endpoint create --region RegionOne image internal http://$HOST_NAME:9292

openstack endpoint create --region RegionOne image admin http://$HOST_NAME:9292

apt install -y glance

cp /etc/glance/glance-api.conf{,.bak}

cat > /etc/glance/glance-api.conf << eof

[DEFAULT]

[barbican]

[barbican_service_user]

[cinder]

[cors]

[database]

connection = mysql+pymysql://glance:$GLANCE_DBPASS@$HOST_NAME/glance

[glance_store]

stores = file,http

default_store = file

filesystem_store_datadir = /var/lib/glance/images/

[image_format]

disk_formats = ami,ari,aki,vhd,vhdx,vmdk,raw,qcow2,vdi,iso,ploop,root-tar

[keystone_authtoken]

www_authenticate_uri = http://$HOST_NAME:5000

auth_url = http://$HOST_NAME:5000

memcached_servers = $HOST_NAME:11211

auth_type = password

project_domain_name = Default

user_domain_name = Default

project_name = service

username = glance

password = $GLANCE_PASS

[paste_deploy]

flavor = keystone

eof

su -s /bin/sh -c "glance-manage db_sync" glance

systemctl enable --now glance-api

systemctl restart glance-api

echo "########################## glance installation completed ###############################"
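The Glance script registers the image service with three `openstack endpoint create` commands that differ only in the interface name, and the later service scripts repeat the pattern with other ports. A dry-run sketch of that loop is shown below. `endpoint_cmds` is a hypothetical helper that prints the commands rather than running them, and the RegionOne default mirrors the region used throughout this walkthrough.

```bash
# Hypothetical helper: print the three "openstack endpoint create" commands
# (public, internal, admin) for one service type and URL.
endpoint_cmds() {
    service_type=$1 url=$2 region=${3:-RegionOne}
    for iface in public internal admin; do
        echo "openstack endpoint create --region $region $service_type $iface $url"
    done
}

# Dry run for the image service
endpoint_cmds image http://controller:9292
```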

bash

bash iaas-install-glance.sh

- Check the version

bash

root@controller:~# glance-manage --version

31.0.0

Install the Placement service

bash

vi iaas-install-placement.sh

bash

#!/bin/bash

source /etc/openstack/openrc.sh

source /etc/keystone/admin-openrc.sh

#placement mysql

mysql -uroot -p$DB_PASS -e "CREATE DATABASE placement;"

mysql -uroot -p$DB_PASS -e "GRANT ALL PRIVILEGES ON placement.* TO 'placement'@'localhost' IDENTIFIED BY '$PLACEMENT_DBPASS';"

mysql -uroot -p$DB_PASS -e "GRANT ALL PRIVILEGES ON placement.* TO 'placement'@'%' IDENTIFIED BY '$PLACEMENT_DBPASS';"

openstack user create --domain $DOMAIN_NAME --password $PLACEMENT_PASS placement

openstack role add --project service --user placement admin

openstack service create --name placement --description "Placement API" placement

openstack endpoint create --region RegionOne placement public http://$HOST_NAME:8778

openstack endpoint create --region RegionOne placement internal http://$HOST_NAME:8778

openstack endpoint create --region RegionOne placement admin http://$HOST_NAME:8778

apt install -y placement-api

cp /etc/placement/placement.conf{,.bak}

cat > /etc/placement/placement.conf << eof

[DEFAULT]

[api]

auth_strategy = keystone

[cors]

[keystone_authtoken]

auth_url = http://$HOST_NAME:5000/v3

memcached_servers = $HOST_NAME:11211

auth_type = password

project_domain_name = Default

user_domain_name = Default

project_name = service

username = placement

password = $PLACEMENT_PASS

[placement_database]

connection = mysql+pymysql://placement:$PLACEMENT_DBPASS@$HOST_NAME/placement

eof

su -s /bin/sh -c "placement-manage db sync" placement

systemctl restart apache2

placement-status upgrade check

echo "############################# placement installation completed #########################"

bash

bash iaas-install-placement.sh

- Check the version

bash

root@controller:~# placement-manage --version

14.0.0

Install the Nova service

bash

vi iaas-install-nova-controller.sh

bash

#!/bin/bash

source /etc/openstack/openrc.sh

source /etc/keystone/admin-openrc.sh

mysql -uroot -p$DB_PASS -e "create database IF NOT EXISTS nova ;"

mysql -uroot -p$DB_PASS -e "create database IF NOT EXISTS nova_api ;"

mysql -uroot -p$DB_PASS -e "create database IF NOT EXISTS nova_cell0 ;"

mysql -uroot -p$DB_PASS -e "GRANT ALL PRIVILEGES ON nova.* TO 'nova'@'localhost' IDENTIFIED BY '$NOVA_DBPASS' ;"

mysql -uroot -p$DB_PASS -e "GRANT ALL PRIVILEGES ON nova.* TO 'nova'@'%' IDENTIFIED BY '$NOVA_DBPASS' ;"

mysql -uroot -p$DB_PASS -e "GRANT ALL PRIVILEGES ON nova_api.* TO 'nova'@'localhost' IDENTIFIED BY '$NOVA_DBPASS' ;"

mysql -uroot -p$DB_PASS -e "GRANT ALL PRIVILEGES ON nova_api.* TO 'nova'@'%' IDENTIFIED BY '$NOVA_DBPASS' ;"

mysql -uroot -p$DB_PASS -e "GRANT ALL PRIVILEGES ON nova_cell0.* TO 'nova'@'localhost' IDENTIFIED BY '$NOVA_DBPASS' ;"

mysql -uroot -p$DB_PASS -e "GRANT ALL PRIVILEGES ON nova_cell0.* TO 'nova'@'%' IDENTIFIED BY '$NOVA_DBPASS' ;"

openstack user create --domain $DOMAIN_NAME --password $NOVA_PASS nova

openstack role add --project service --user nova admin

openstack service create --name nova --description "OpenStack Compute" compute

openstack endpoint create --region RegionOne compute public http://$HOST_NAME:8774/v2.1

openstack endpoint create --region RegionOne compute internal http://$HOST_NAME:8774/v2.1

openstack endpoint create --region RegionOne compute admin http://$HOST_NAME:8774/v2.1

apt install -y nova-api nova-conductor nova-novncproxy nova-scheduler

apt install -y nova-compute

cp /etc/nova/nova.conf{,.bak}

cat > /etc/nova/nova.conf << eof

[DEFAULT]

log_dir = /var/log/nova

lock_path = /var/lock/nova

state_path = /var/lib/nova

transport_url = rabbit://$RABBIT_USER:$RABBIT_PASS@$HOST_NAME

my_ip = $HOST_IP

[api]

auth_strategy = keystone

[api_database]

connection = mysql+pymysql://nova:$NOVA_DBPASS@$HOST_NAME/nova_api

[barbican]

[barbican_service_user]

[cache]

[cinder]

[compute]

[conductor]

[console]

[consoleauth]

[cors]

[cyborg]

[database]

connection = mysql+pymysql://nova:$NOVA_DBPASS@$HOST_NAME/nova

[devices]

[ephemeral_storage_encryption]

[filter_scheduler]

[glance]

api_servers = http://$HOST_NAME:9292

[guestfs]

[healthcheck]

[hyperv]

[image_cache]

[ironic]

[key_manager]

[keystone]

[keystone_authtoken]

www_authenticate_uri = http://$HOST_NAME:5000/

auth_url = http://$HOST_NAME:5000/

memcached_servers = $HOST_NAME:11211

auth_type = password

project_domain_name = Default

user_domain_name = Default

project_name = service

username = nova

password = $NOVA_PASS

[libvirt]

[metrics]

[mks]

[neutron]

[notifications]

[oslo_concurrency]

lock_path = /var/lib/nova/tmp

[oslo_messaging_amqp]

[oslo_messaging_kafka]

[oslo_messaging_notifications]

[oslo_messaging_rabbit]

[oslo_middleware]

[oslo_policy]

[oslo_reports]

[pci]

[placement]

region_name = RegionOne

project_domain_name = Default

project_name = service

auth_type = password

user_domain_name = Default

auth_url = http://$HOST_NAME:5000/v3

username = placement

password = $PLACEMENT_PASS

[powervm]

[privsep]

[profiler]

[quota]

[rdp]

[remote_debug]

[scheduler]

[serial_console]

[service_user]

[spice]

[upgrade_levels]

[vault]

[vendordata_dynamic_auth]

[vmware]

[vnc]

enabled = true

server_listen = $HOST_IP

server_proxyclient_address = $HOST_IP

novncproxy_base_url = http://$HOST_IP:6080/vnc_auto.html

[workarounds]

[wsgi]

[zvm]

[cells]

enable = False

[os_region_name]

openstack =

eof

su -s /bin/sh -c "nova-manage api_db sync" nova

su -s /bin/sh -c "nova-manage cell_v2 map_cell0" nova

su -s /bin/sh -c "nova-manage cell_v2 create_cell --name=cell1 --verbose" nova

su -s /bin/sh -c "nova-manage db sync" nova

su -s /bin/sh -c "nova-manage cell_v2 list_cells" nova

systemctl enable --now nova-scheduler

systemctl enable --now nova-conductor

systemctl enable --now nova-novncproxy

cat > /root/nova-service-restart.sh <<EOF

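#!/bin/bash

# Restart the API service (nova-api runs under Apache)

systemctl restart apache2

# Restart the scheduler service

service nova-scheduler restart

# Restart the conductor service (database access)

service nova-conductor restart

# Restart the VNC proxy service

service nova-novncproxy restart

# Restart the nova-compute service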

service nova-compute restart

EOF

nova-manage cell_v2 discover_hosts

nova-manage cell_v2 map_cell_and_hosts

bash /root/nova-service-restart.sh

echo "############################# nova installation completed ##############################"

bash

bash iaas-install-nova-controller.sh

- Check the version

bash

root@controller:~# nova-manage --version

32.0.0

- Note: at this point the nova-api service runs inside Apache (port 8774) and is not managed as a separate systemd unit

bash

root@controller:~# ls -la /etc/apache2/sites-enabled/ | grep nova

lrwxrwxrwx 1 root root 32 Apr 13 06:19 nova-api.conf -> ../sites-available/nova-api.conf

bash

root@controller:~# ps aux | grep -E "apache|nova" | grep -v grep

www-data 9295 0.0 0.0 3616 1544 ? Ss 06:10 0:00 /usr/bin/htcacheclean -d 120 -p /var/cache/apache2/mod_cache_disk -l 300M -n

root 35392 0.0 0.1 16972 6568 ? Ss 06:22 0:00 /usr/sbin/apache2 -k start

keystone 35394 9.5 3.2 268208 129612 ? Sl 06:22 0:03 /usr/sbin/apache2 -k start

keystone 35395 9.9 3.2 268208 129368 ? Sl 06:22 0:03 /usr/sbin/apache2 -k start

keystone 35396 9.8 3.2 267184 129468 ? Sl 06:22 0:03 /usr/sbin/apache2 -k start

keystone 35397 10.0 3.2 267180 129476 ? Sl 06:22 0:03 /usr/sbin/apache2 -k start

keystone 35398 9.3 3.2 268208 129548 ? Sl 06:22 0:03 /usr/sbin/apache2 -k start

nova 35399 0.0 0.3 110968 13112 ? Sl 06:22 0:00 (wsgi:nova-api) -k start

nova 35400 0.0 0.3 110968 13112 ? Sl 06:22 0:00 (wsgi:nova-api) -k start

nova 35401 0.0 0.3 110968 13112 ? Sl 06:22 0:00 (wsgi:nova-api) -k start

nova 35402 0.0 0.3 110968 13112 ? Sl 06:22 0:00 (wsgi:nova-api) -k start

nova 35403 0.0 0.3 110968 13112 ? Sl 06:22 0:00 (wsgi:nova-api) -k start

www-data 35413 0.0 0.3 1946680 15160 ? Sl 06:22 0:00 /usr/sbin/apache2 -k start

www-data 35414 0.0 0.3 2012288 15416 ? Sl 06:22 0:00 /usr/sbin/apache2 -k start

nova 35525 4.6 3.2 147672 126864 ? Ss 06:22 0:01 /usr/bin/python3 /usr/bin/nova-scheduler --config-file=/etc/nova/nova.conf --log-file=/var/log/nova/nova-scheduler.log

nova 35535 4.7 3.2 147796 127372 ? Ss 06:22 0:01 /usr/bin/python3 /usr/bin/nova-conductor --config-file=/etc/nova/nova.conf --log-file=/var/log/nova/nova-conductor.log

nova 35545 3.8 3.3 167676 133256 ? Ss 06:22 0:01 /usr/bin/python3 /usr/bin/nova-novncproxy --config-file=/etc/nova/nova.conf --log-file=/var/log/nova/nova-novncproxy.log

nova 35555 6.3 4.1 1771560 164980 ? Ssl 06:22 0:02 /usr/bin/python3 /usr/bin/nova-compute --config-file=/etc/nova/nova.conf --config-file=/etc/nova/nova-compute.conf --log-file=/var/log/nova/nova-compute.log

nova 35584 0.1 2.9 149172 114976 ? S 06:22 0:00 /usr/bin/python3 /usr/bin/nova-scheduler --config-file=/etc/nova/nova.conf --log-file=/var/log/nova/nova-scheduler.log

nova 35585 0.1 2.9 149172 115360 ? S 06:22 0:00 /usr/bin/python3 /usr/bin/nova-scheduler --config-file=/etc/nova/nova.conf --log-file=/var/log/nova/nova-scheduler.log

nova 35586 0.1 2.9 149172 114976 ? S 06:22 0:00 /usr/bin/python3 /usr/bin/nova-scheduler --config-file=/etc/nova/nova.conf --log-file=/var/log/nova/nova-scheduler.log

nova 35587 0.1 2.9 149172 115104 ? S 06:22 0:00 /usr/bin/python3 /usr/bin/nova-scheduler --config-file=/etc/nova/nova.conf --log-file=/var/log/nova/nova-scheduler.log

nova 35592 0.4 2.9 150624 116984 ? S 06:22 0:00 /usr/bin/python3 /usr/bin/nova-conductor --config-file=/etc/nova/nova.conf --log-file=/var/log/nova/nova-conductor.log

nova 35593 0.3 2.9 150616 116856 ? S 06:22 0:00 /usr/bin/python3 /usr/bin/nova-conductor --config-file=/etc/nova/nova.conf --log-file=/var/log/nova/nova-conductor.log

nova 35594 0.4 2.9 150752 117240 ? S 06:22 0:00 /usr/bin/python3 /usr/bin/nova-conductor --config-file=/etc/nova/nova.conf --log-file=/var/log/nova/nova-conductor.log

nova 35595 0.3 2.9 150780 117112 ? S 06:22 0:00 /usr/bin/python3 /usr/bin/nova-conductor --config-file=/etc/nova/nova.conf --log-file=/var/log/nova/nova-conductor.log

Install the Neutron service

bash

vi iaas-install-neutron-controller.sh

bash

#!/bin/bash

source /etc/openstack/openrc.sh

source /etc/keystone/admin-openrc.sh

mysql -uroot -p$DB_PASS -e "create database IF NOT EXISTS neutron ;"

mysql -uroot -p$DB_PASS -e "GRANT ALL PRIVILEGES ON neutron.* TO 'neutron'@'localhost' IDENTIFIED BY '$NEUTRON_DBPASS' ;"

mysql -uroot -p$DB_PASS -e "GRANT ALL PRIVILEGES ON neutron.* TO 'neutron'@'%' IDENTIFIED BY '$NEUTRON_DBPASS' ;"

openstack user create --domain $DOMAIN_NAME --password $NEUTRON_PASS neutron

openstack role add --project service --user neutron admin

openstack service create --name neutron --description "OpenStack Networking" network

openstack endpoint create --region RegionOne network public http://$HOST_NAME:9696

openstack endpoint create --region RegionOne network internal http://$HOST_NAME:9696

openstack endpoint create --region RegionOne network admin http://$HOST_NAME:9696

cat >> /etc/sysctl.conf << EOF

# Disable reverse-path validation of packet source addresses

net.ipv4.conf.all.rp_filter=0

net.ipv4.conf.default.rp_filter=0

# Pass bridged (layer 2) traffic through iptables and ip6tables

net.bridge.bridge-nf-call-iptables=1

net.bridge.bridge-nf-call-ip6tables=1

EOF

modprobe br_netfilter

sysctl -p

apt install -y neutron-server neutron-plugin-ml2 neutron-l3-agent neutron-dhcp-agent neutron-metadata-agent neutron-openvswitch-agent

cp /etc/neutron/neutron.conf{,.bak}

cat > /etc/neutron/neutron.conf << eof

[DEFAULT]

core_plugin = ml2

service_plugins = router

allow_overlapping_ips = true

auth_strategy = keystone

state_path = /var/lib/neutron

dhcp_agent_notification = true

notify_nova_on_port_status_changes = true

notify_nova_on_port_data_changes = true

transport_url = rabbit://$RABBIT_USER:$RABBIT_PASS@$HOST_NAME

[agent]

root_helper = "sudo /usr/bin/neutron-rootwrap /etc/neutron/rootwrap.conf"

[database]

connection = mysql+pymysql://neutron:$NEUTRON_DBPASS@$HOST_NAME/neutron

[keystone_authtoken]

www_authenticate_uri = http://$HOST_NAME:5000

auth_url = http://$HOST_NAME:5000

memcached_servers = $HOST_NAME:11211

auth_type = password

project_domain_name = default

user_domain_name = default

project_name = service

username = neutron

password = $NEUTRON_PASS

[nova]

auth_url = http://$HOST_NAME:5000

auth_type = password

project_domain_name = default

user_domain_name = default

region_name = RegionOne

project_name = service

username = nova

password = $NOVA_PASS

[oslo_concurrency]

lock_path = /var/lib/neutron/tmp

eof

cp /etc/neutron/plugins/ml2/ml2_conf.ini{,.bak}

cat > /etc/neutron/plugins/ml2/ml2_conf.ini << eof

[DEFAULT]

[ml2]

type_drivers = flat,vlan,vxlan,gre

tenant_network_types = vxlan

mechanism_drivers = openvswitch,l2population

extension_drivers = port_security

[ml2_type_flat]

flat_networks = $Physical_NAME

[ml2_type_geneve]

[ml2_type_gre]

[ml2_type_vlan]

[ml2_type_vxlan]

vni_ranges = $minvlan:$maxvlan

[ovs_driver]

[securitygroup]

enable_ipset = true

enable_security_group = true

firewall_driver = neutron.agent.linux.iptables_firewall.OVSHybridIptablesFirewallDriver

[sriov_driver]

eof

cp /etc/neutron/plugins/ml2/openvswitch_agent.ini{,.bak}

cat > /etc/neutron/plugins/ml2/openvswitch_agent.ini << eof

[DEFAULT]

[agent]

l2_population = True

tunnel_types = vxlan

prevent_arp_spoofing = True

[dhcp]

[network_log]

[ovs]

local_ip = $HOST_IP

bridge_mappings = $Physical_NAME:$OVS_NAME

[securitygroup]

eof

cp /etc/neutron/l3_agent.ini{,.bak}

cat > /etc/neutron/l3_agent.ini << eof

[DEFAULT]

interface_driver = neutron.agent.linux.interface.OVSInterfaceDriver

external_network_bridge =

[agent]

[network_log]

[ovs]

eof

cp /etc/neutron/dhcp_agent.ini{,.bak}

cat > /etc/neutron/dhcp_agent.ini << eof

[DEFAULT]

interface_driver = neutron.agent.linux.interface.OVSInterfaceDriver

dhcp_driver = neutron.agent.linux.dhcp.Dnsmasq

enable_isolated_metadata = True

[agent]

[ovs]

eof

cp /etc/neutron/metadata_agent.ini{,.bak}

cat > /etc/neutron/metadata_agent.ini << eof

[DEFAULT]

nova_metadata_host = $HOST_NAME

metadata_proxy_shared_secret = $METADATA_SECRET

[agent]

[cache]

eof

sed -i '2s/.*/linuxnet_interface_driver = nova.network.linux_net.LinuxOVSInterfaceDriver\n&/' /etc/nova/nova.conf

sed -i "50s/.*/auth_url = http:\/\/$HOST_NAME:5000\nauth_type = password\nproject_domain_name = default\nuser_domain_name = default\nregion_name = RegionOne\nproject_name = service\nusername = neutron\npassword = $NEUTRON_PASS\nservice_metadata_proxy = true\nmetadata_proxy_shared_secret = $METADATA_SECRET\n&/" /etc/nova/nova.conf

su -s /bin/sh -c "neutron-db-manage --config-file /etc/neutron/neutron.conf --config-file /etc/neutron/plugins/ml2/ml2_conf.ini upgrade head" neutron

systemctl restart apache2

ovs-vsctl add-br $OVS_NAME

ovs-vsctl add-port $OVS_NAME $INTERFACE_NAME

systemctl enable --now neutron-openvswitch-agent

systemctl enable --now neutron-dhcp-agent

systemctl enable --now neutron-metadata-agent

systemctl enable --now neutron-l3-agent

cat > /root/neutron-service-restart.sh <<EOF

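#!/bin/bash

# Restart the Neutron API service (neutron-server runs under Apache)

systemctl restart apache2

# Restart the Open vSwitch agent

service neutron-openvswitch-agent restart

# Restart the DHCP agent

service neutron-dhcp-agent restart

# Restart the metadata agent

service neutron-metadata-agent restart

# Restart the L3 agent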

service neutron-l3-agent restart

EOF

bash /root/neutron-service-restart.sh

echo "######################### neutron installation completed ###############################"
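The Neutron script adds options to /etc/nova/nova.conf by line number (`sed -i '2s/...'` and `sed -i "50s/..."`), which works only while the file has exactly the layout written earlier. A sketch of an alternative that keys on the section header instead is shown below. `ini_insert_after_section` is a hypothetical helper, it prints the result instead of editing in place, and the demonstration runs on a throwaway file rather than on the real configuration.

```bash
# Hypothetical helper: print FILE with LINE inserted directly after the
# "[SECTION]" header, leaving the file itself untouched.
ini_insert_after_section() {
    awk -v section="[$2]" -v line="$3" '
        { print }
        $0 == section { print line }
    ' "$1"
}

# Demonstrate on a temporary file instead of /etc/nova/nova.conf
conf=$(mktemp)
printf '%s\n' '[DEFAULT]' '[neutron]' '[notifications]' > "$conf"
ini_insert_after_section "$conf" neutron 'service_metadata_proxy = true'
rm -f "$conf"
```

Matching on the header keeps the edit correct even if options are later added to or removed from earlier sections.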

bash

bash iaas-install-neutron-controller.sh

- Check the version

bash

root@controller:~# dpkg -l | grep neutron-common

ii neutron-common 2:27.0.0-0ubuntu1~cloud0 all Neutron is a virtual network service for Openstack - common

- The neutron-server service runs inside Apache (port 9696)

bash

root@controller:~# ls -la /etc/apache2/sites-enabled/ | grep neutron

lrwxrwxrwx 1 root root 35 Apr 13 06:25 neutron-api.conf -> ../sites-available/neutron-api.conf

Install the Horizon service

bash

vi iaas-install-horizon.sh

bash

#!/bin/bash

source /etc/openstack/openrc.sh

source /etc/keystone/admin-openrc.sh

apt install -y openstack-dashboard

cp /etc/openstack-dashboard/local_settings.py{,.bak}

sed -i '126s/.*/OPENSTACK_HOST = "'$HOST_NAME'"/' /etc/openstack-dashboard/local_settings.py

sed -i '112s/.*/SESSION_ENGINE = '\''django.contrib.sessions.backends.cache'\''/' /etc/openstack-dashboard/local_settings.py

sed -i '127s#.*#OPENSTACK_KEYSTONE_URL = "http://%s:5000/v3" % OPENSTACK_HOST#' /etc/openstack-dashboard/local_settings.py

echo "OPENSTACK_KEYSTONE_MULTIDOMAIN_SUPPORT = True" >> /etc/openstack-dashboard/local_settings.py

echo "OPENSTACK_API_VERSIONS = {

\"identity\": 3,

\"image\": 2,

\"volume\": 3,

}" >> /etc/openstack-dashboard/local_settings.py

echo "OPENSTACK_KEYSTONE_DEFAULT_DOMAIN = \"Default\"" >> /etc/openstack-dashboard/local_settings.py

echo 'OPENSTACK_KEYSTONE_DEFAULT_ROLE = "user"' >> /etc/openstack-dashboard/local_settings.py

echo "OPENSTACK_CINDER_FEATURES = {

'enable_backup': True,

}" >> /etc/openstack-dashboard/local_settings.py

sed -i '131s/.*/TIME_ZONE = "Asia\/Shanghai"/' /etc/openstack-dashboard/local_settings.py

systemctl reload apache2

echo "######################### horizon installation completed ###############################"
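The Horizon script rewrites local_settings.py by fixed line numbers (112, 126, 127, 131), which can drift between package versions. A sketch of a key-based alternative follows. It assumes each setting sits on its own line in the form `KEY = value`; `set_setting` is a hypothetical helper, and the demonstration uses a temporary file rather than the real settings file.

```bash
# Hypothetical helper: replace the line that assigns KEY in a "KEY = value"
# settings file, or append the assignment if KEY is not present.
# Assumes the value contains no "|", "&", or backslash characters.
set_setting() {
    file=$1 key=$2 value=$3
    if grep -q "^$key = " "$file"; then
        sed "s|^$key = .*|$key = $value|" "$file" > "$file.tmp" && mv "$file.tmp" "$file"
    else
        echo "$key = $value" >> "$file"
    fi
}

# Demonstrate on a temporary file
settings=$(mktemp)
printf '%s\n' 'OPENSTACK_HOST = "127.0.0.1"' 'TIME_ZONE = "UTC"' > "$settings"
set_setting "$settings" OPENSTACK_HOST '"controller"'
set_setting "$settings" TIME_ZONE '"Asia/Shanghai"'
cat "$settings"
rm -f "$settings"
```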

bash

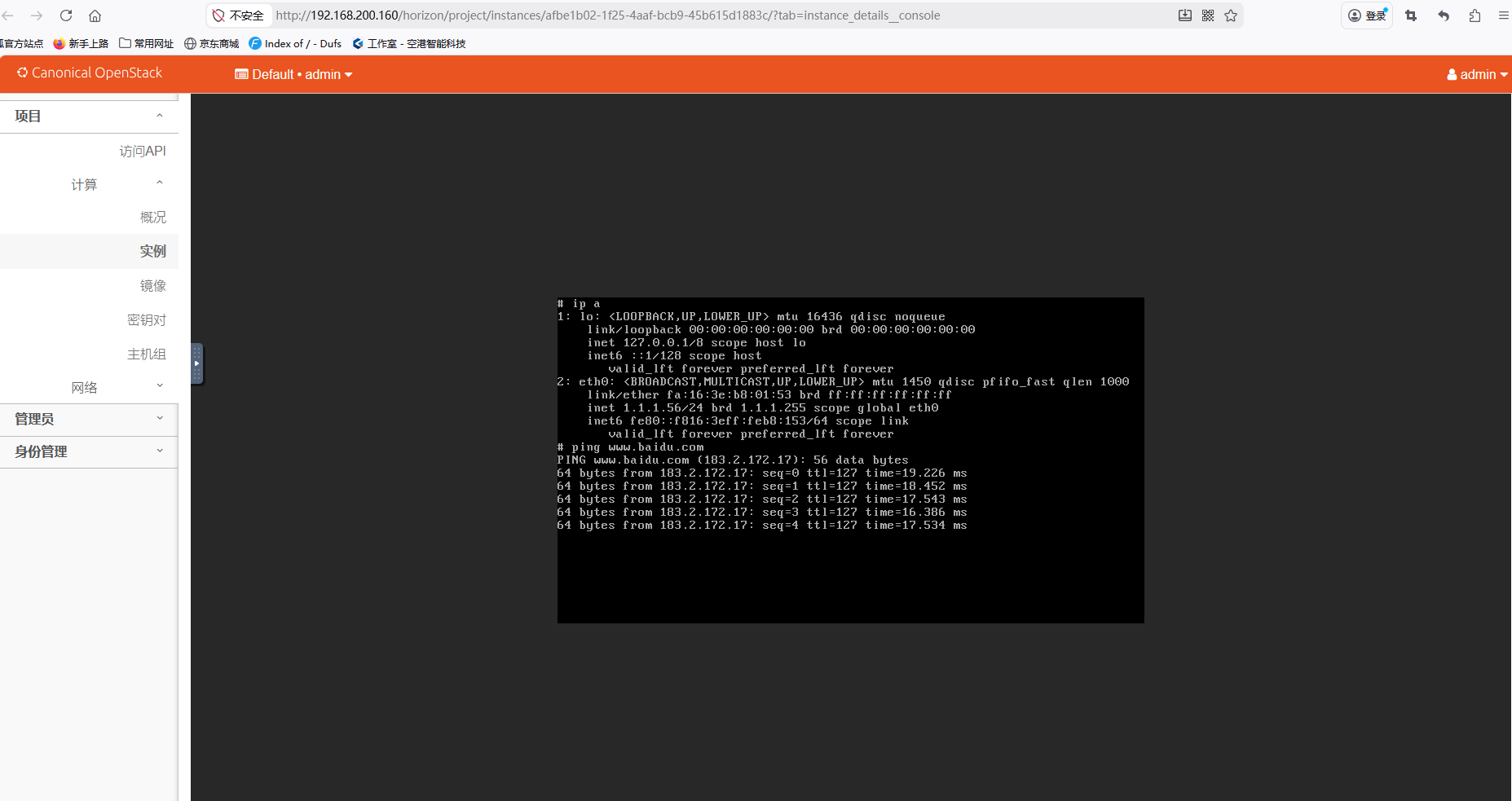

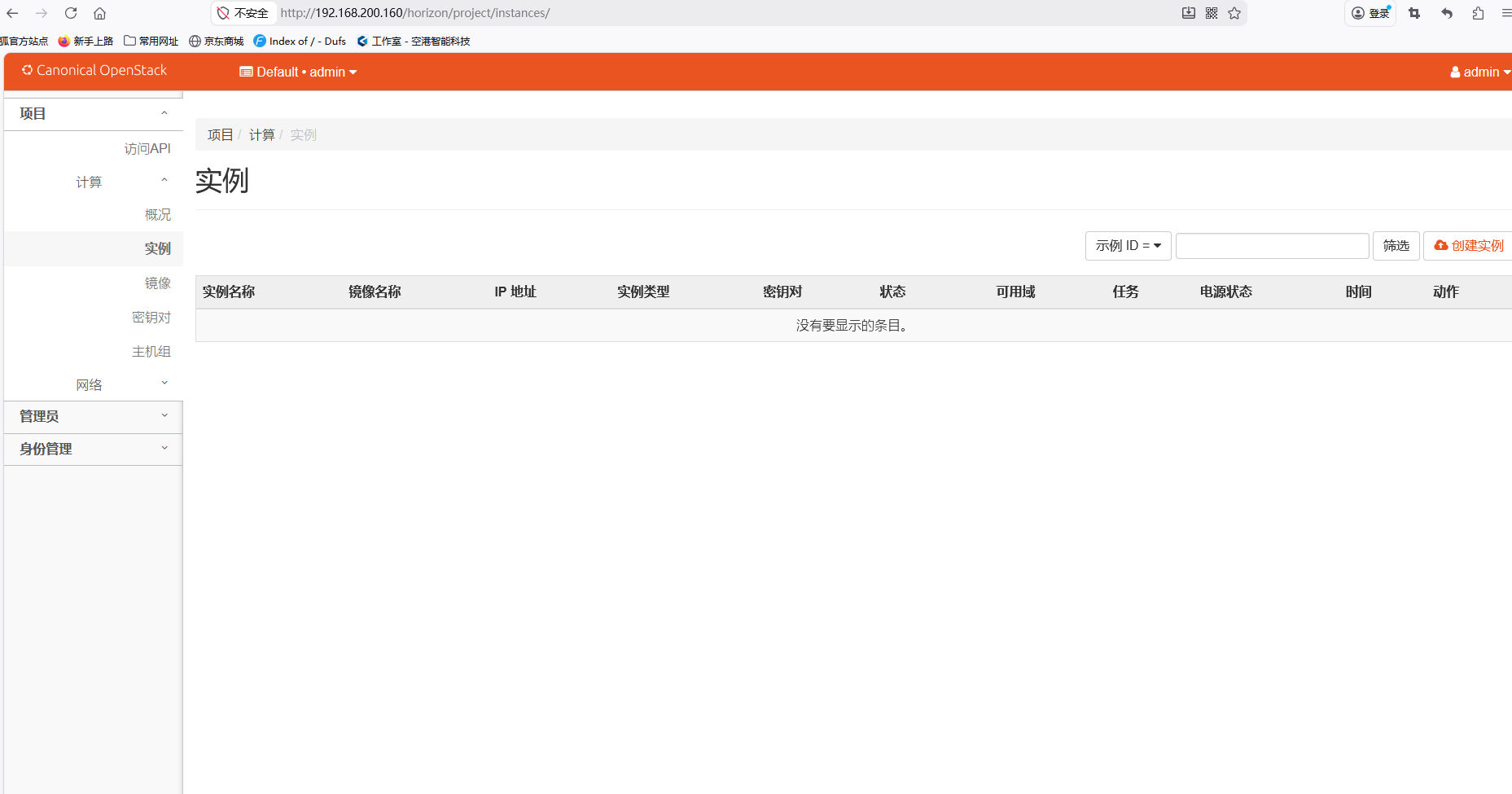

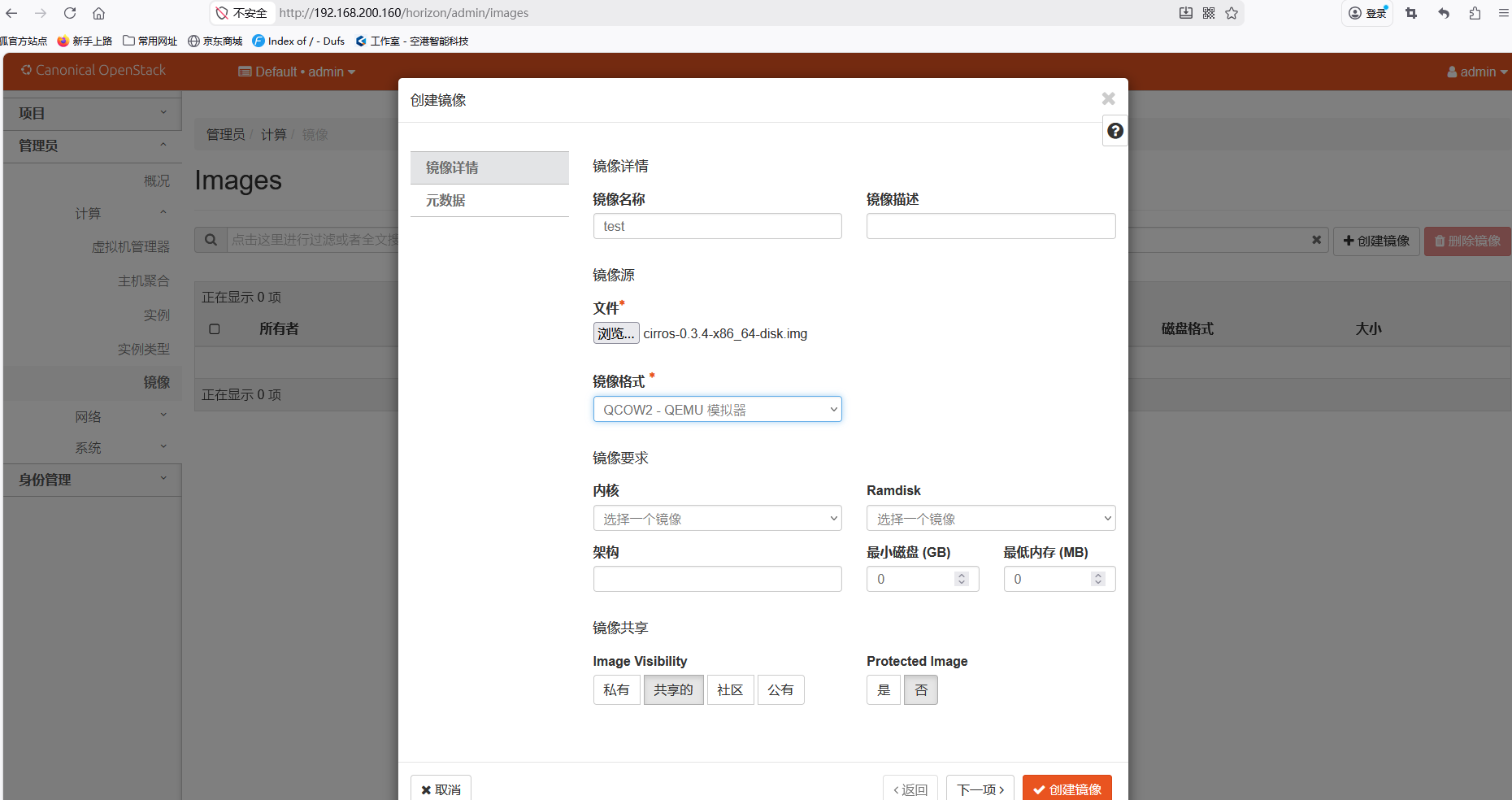

bash iaas-install-horizon.sh配置实例上网

-

浏览器访问web服务:IP/horizon(admin/000000/default)

-

创建测试镜像

-

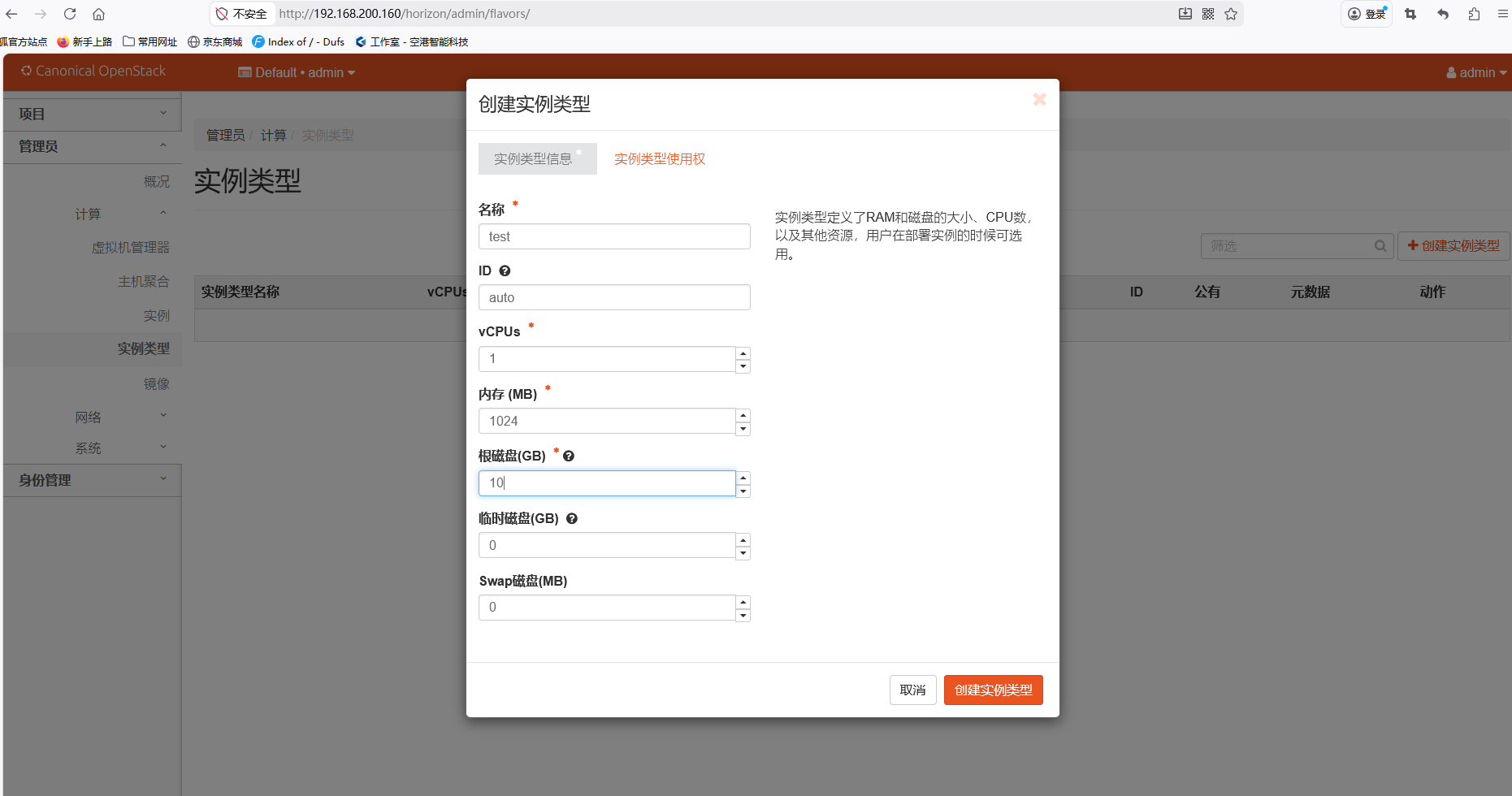

创建测试实例类型

-

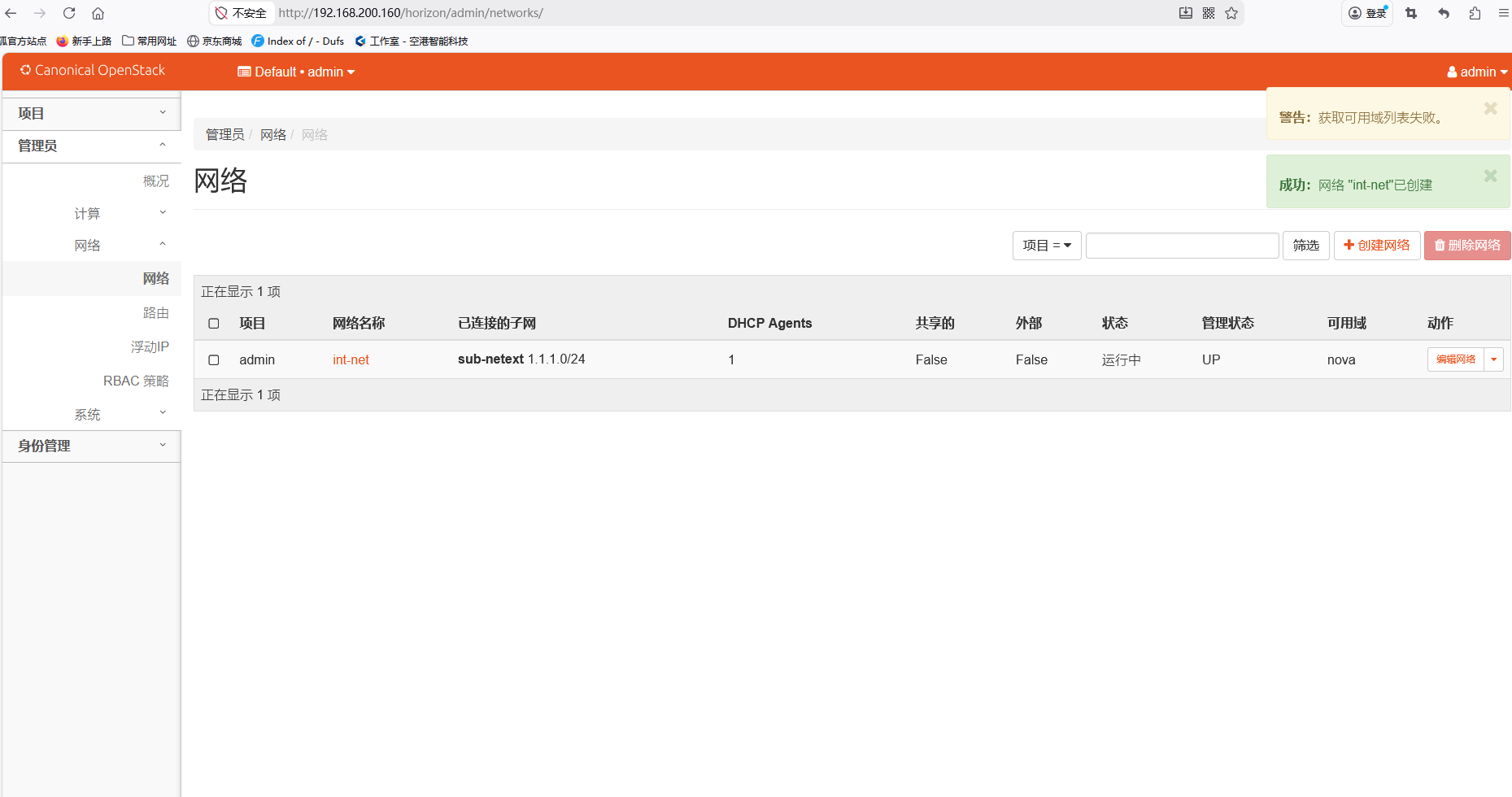

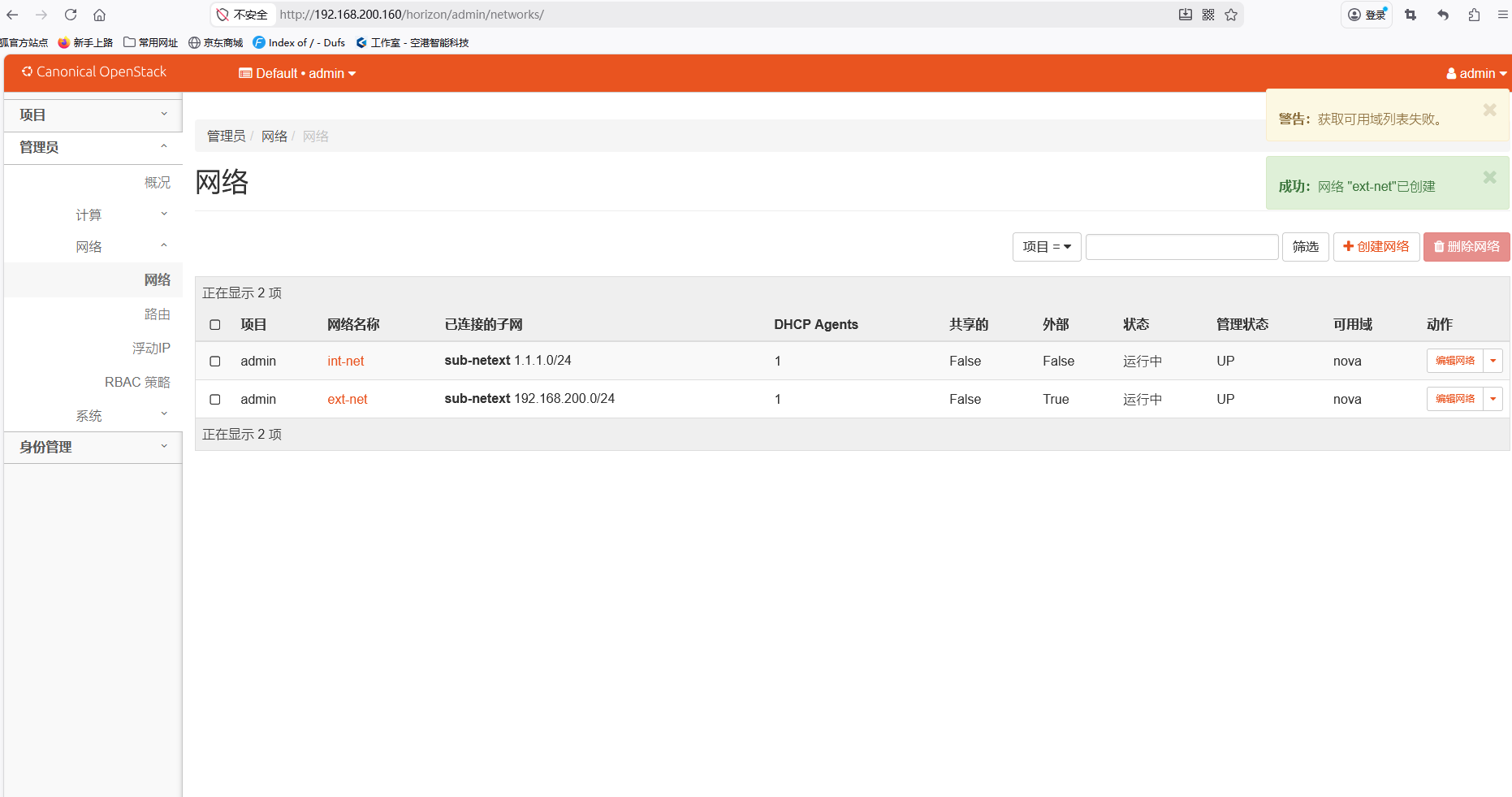

创建测试内部网络(vxlan模式)

-

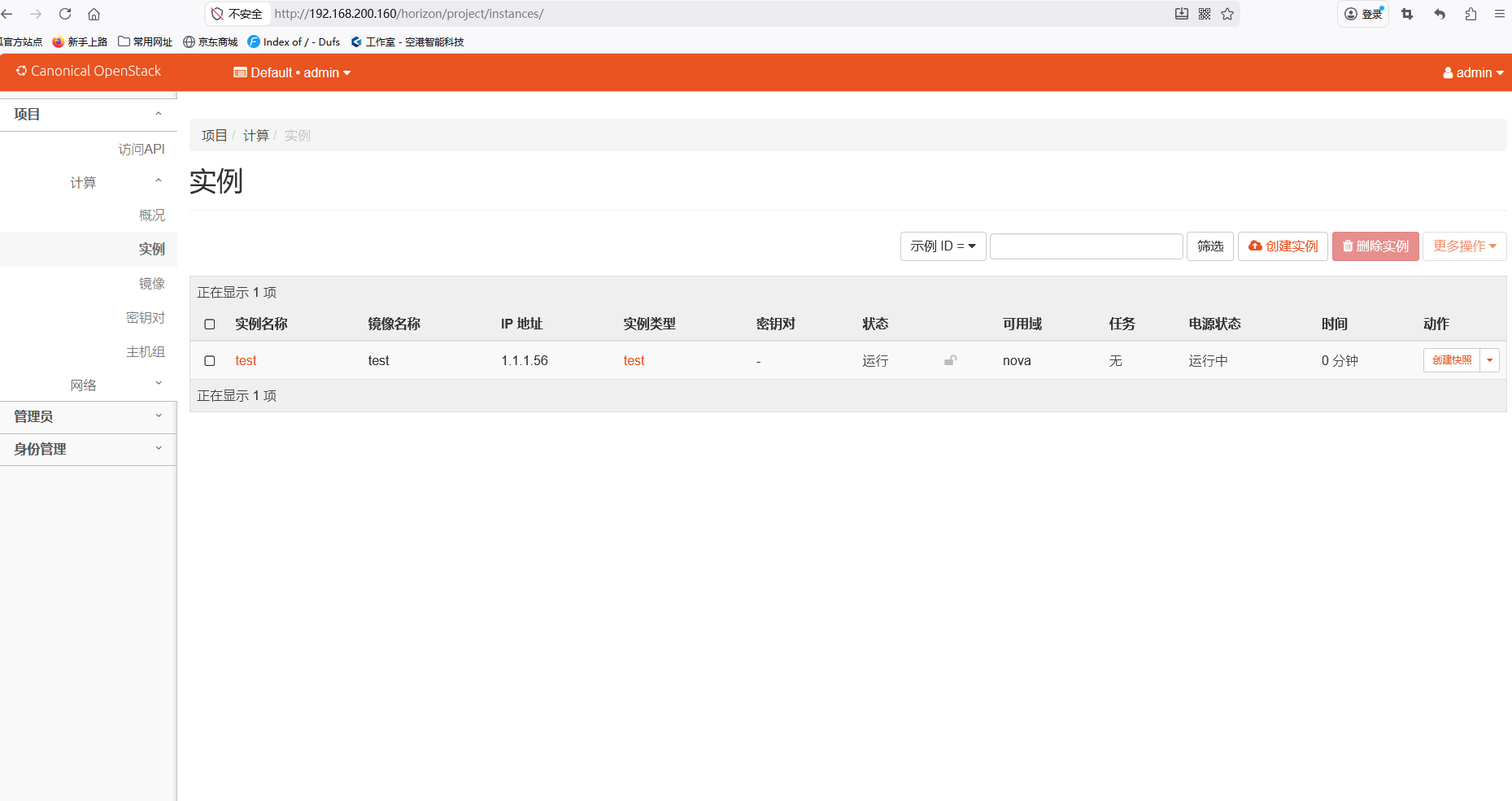

创建实例

-

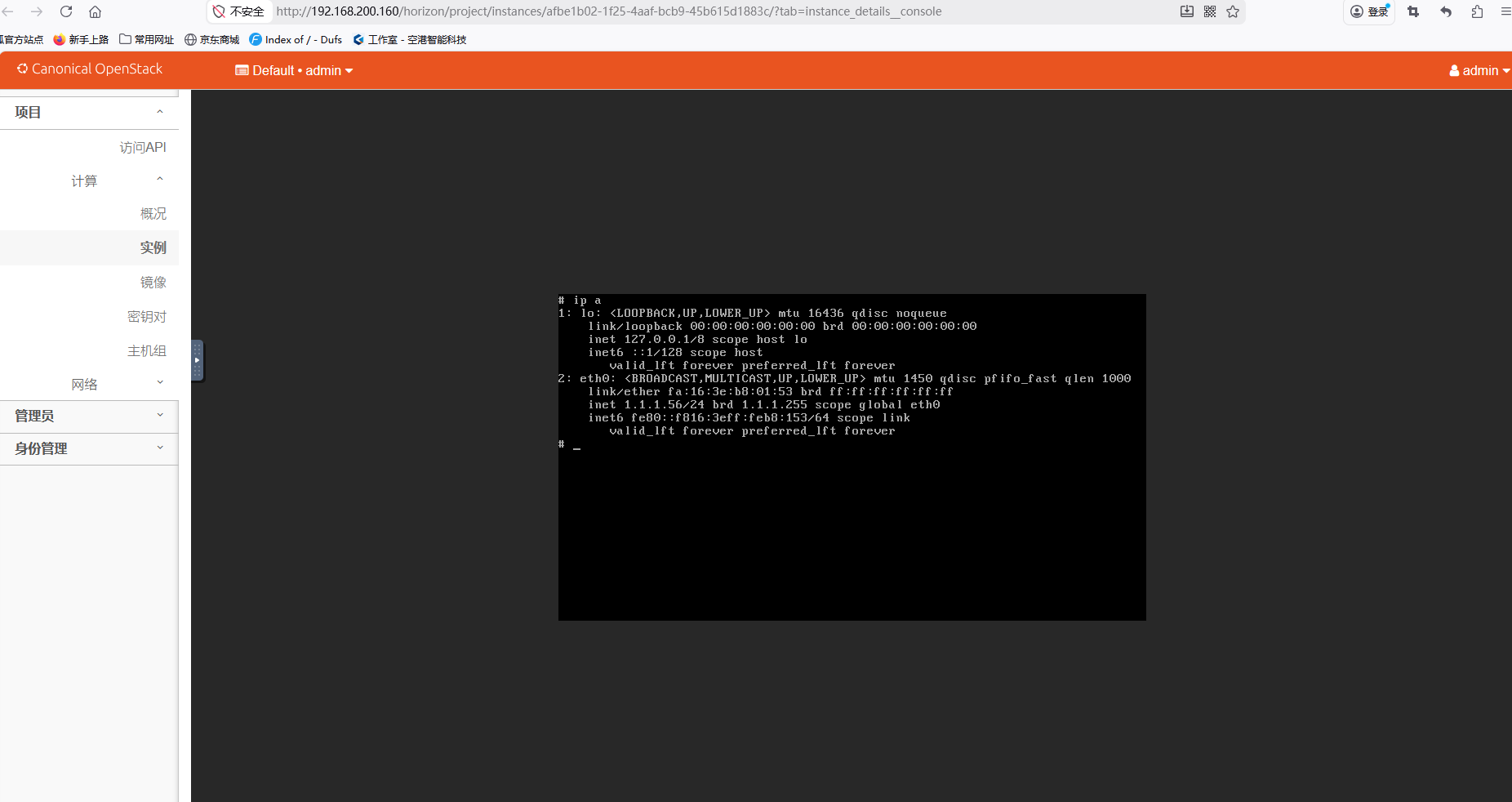

控制台登录实例

-

配置外部网卡,用于实例上网

-

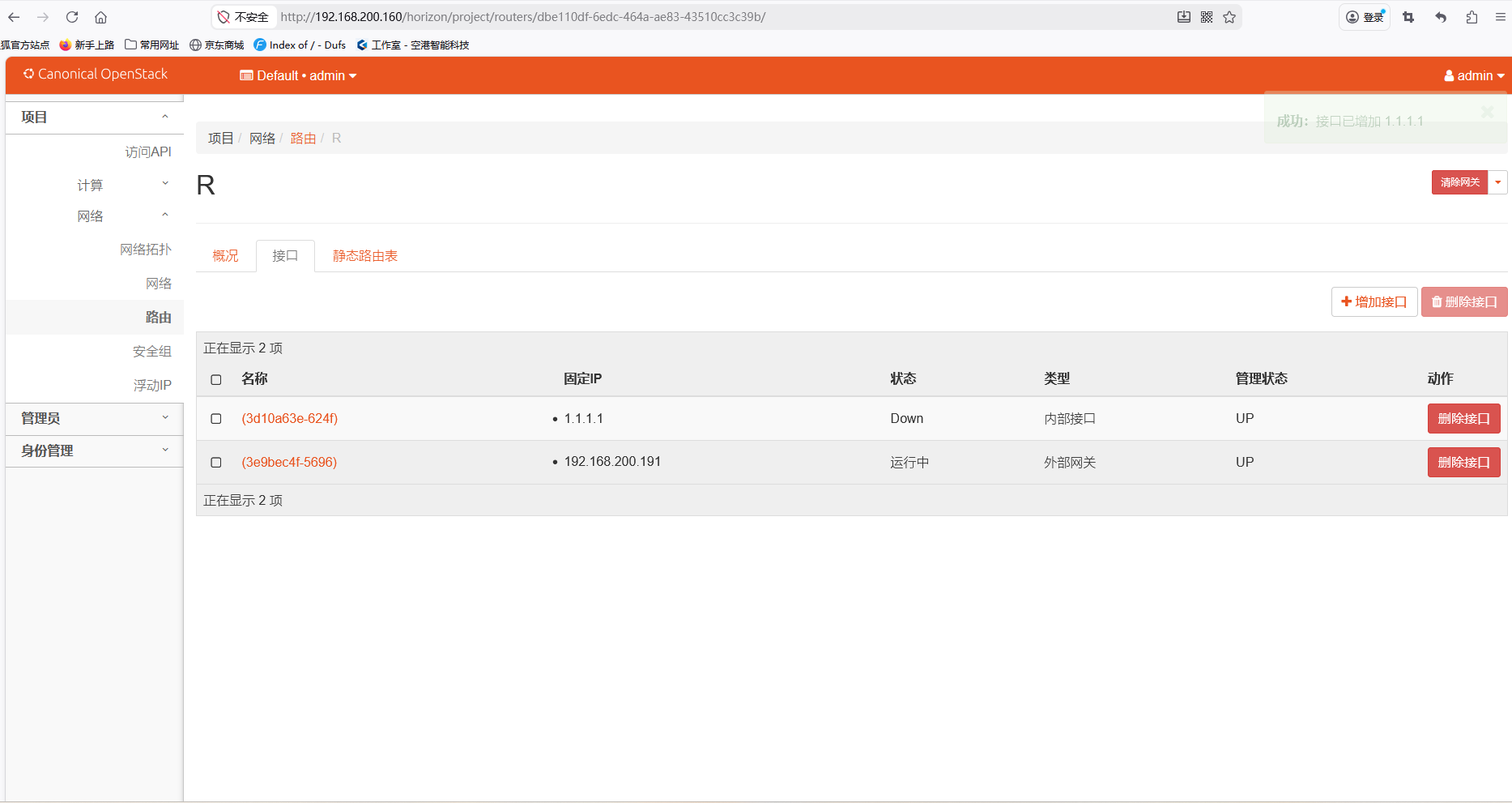

创建路由,选择外网接口,然后添加内网接口

-

此时实例即可上网