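Overview

As a follow-up to the MinIO multipart upload post, this article walks through a complete implementation of multipart upload on Tencent Cloud COS, with code. The Controller-layer endpoints are omitted: they mirror the MinIO ones and are not essential here.

The implementation logic follows.

Initialization

The core Service method: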

```java
import com.qcloud.cos.COSClient;
import com.qcloud.cos.model.GeneratePresignedUrlRequest;
import com.qcloud.cos.model.InitiateMultipartUploadRequest;
import com.qcloud.cos.model.InitiateMultipartUploadResult;

public MultipartUploadInitResponse initMultipartUpload(MultipartUploadInitRequest req) {
    long chunkSize = req.getChunkSize() != null ? req.getChunkSize() : DEFAULT_CHUNK_SIZE;
    int totalParts = (int) Math.ceil((double) req.getFileSize() / chunkSize);
    String filePath = commonService.generateFilePath("", req.getFileName(), req.getBizScene());

    InitiateMultipartUploadResult uploadResult;
    try {
        InitiateMultipartUploadRequest request = new InitiateMultipartUploadRequest(req.getBucketName(), filePath);
        uploadResult = cosClient.initiateMultipartUpload(request);
    } catch (Exception e) {
        log.error("COS initiate multipart upload request failed:", e);
        throw new BackendBizException(GENERATE_MULTIPART_UPLOAD_URL_FAIL);
    }

    // Note: reuse the uploadId generated by COS instead of creating one locally
    String uploadId = uploadResult.getUploadId();
    ObjectStorageDO storage = commonService.buildObjectStorage(req, filePath, uploadId, totalParts);
    Long id = objectStorageService.addAndReturnId(storage);

    List<MultipartUploadDO> parts = new ArrayList<>();
    GeneratePresignedUrlRequest partReq = new GeneratePresignedUrlRequest(req.getBucketName(), filePath);
    for (int i = 1; i <= totalParts; i++) {
        // Only the last part may be smaller than chunkSize
        long partSize = (i == totalParts) ? req.getFileSize() - (i - 1) * chunkSize : chunkSize;
        MultipartUploadDO part = new MultipartUploadDO();
        part.setUploadId(uploadId);
        part.setPartNumber(i);
        part.setPartSize(partSize);
        part.setUploadStatus(false);
        // Null-safe check: pre-signed URLs are only needed for direct (client-side) upload
        if (Boolean.TRUE.equals(req.getEnableDirectUpload())) {
            part.setUploadUrl(generatePresignedUrl(partReq, String.valueOf(i), uploadId));
        }
        part.setExpireTime(OffsetDateTime.now().plusHours(URL_EXPIRE_HOURS));
        part.setCreateTime(OffsetDateTime.now());
        parts.add(part);
    }
    multipartUploadService.batchInsert(parts);

    // Build the response
    MultipartUploadInitResponse resp = new MultipartUploadInitResponse();
    resp.setUploadId(uploadId);
    resp.setFileId(id);
    resp.setTotalParts(totalParts);
    resp.setChunkSize(chunkSize);
    List<MultipartUploadInitResponse.PartInfo> partInfos = parts.stream()
            .map(item -> {
                MultipartUploadInitResponse.PartInfo info = new MultipartUploadInitResponse.PartInfo();
                info.setPartNumber(item.getPartNumber());
                info.setPartSize(item.getPartSize());
                info.setUploaded(false);
                info.setUploadUrl(item.getUploadUrl());
                info.setExpireTime(item.getExpireTime().toInstant().toEpochMilli());
                return info;
            })
            .collect(Collectors.toList());
    resp.setUploadedParts(partInfos);
    log.info("Multipart upload initialized, uploadId: {}, total parts: {}", uploadId, totalParts);
    return resp;
}
```

Commentary: this is much the same as initializing a multipart upload on MinIO. The main difference is that the uploadId generated by COS is reused directly. It looks like 177034658908ec2fa748ffa73ce8d31fbf2096518ea8b898ea93908fa2437a4a541c4db3b0, a 74-character hexadecimal string.
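The part arithmetic used in the method above can be checked in isolation. The following is a minimal JDK-only sketch, not part of the original service: the class PartPlan and its method names are hypothetical, and it uses integer ceiling division in place of Math.ceil, which gives the same result for positive sizes.

```java
/** Hypothetical helper, for illustration only: mirrors the part arithmetic used during initialization. */
final class PartPlan {
    private PartPlan() {}

    /** Number of parts needed to cover fileSize with parts of chunkSize bytes (ceiling division). */
    static int totalParts(long fileSize, long chunkSize) {
        if (fileSize <= 0 || chunkSize <= 0) {
            throw new IllegalArgumentException("fileSize and chunkSize must be positive");
        }
        return (int) ((fileSize + chunkSize - 1) / chunkSize);
    }

    /** Size in bytes of the 1-based part partNumber; only the last part may be shorter. */
    static long partSize(long fileSize, long chunkSize, int partNumber) {
        int total = totalParts(fileSize, chunkSize);
        if (partNumber < 1 || partNumber > total) {
            throw new IllegalArgumentException("partNumber out of range: " + partNumber);
        }
        return partNumber == total ? fileSize - (long) (total - 1) * chunkSize : chunkSize;
    }

    public static void main(String[] args) {
        long fileSize = 12L * 1024 * 1024 + 1; // 12 MiB + 1 byte
        long chunkSize = 5L * 1024 * 1024;     // 5 MiB
        int total = totalParts(fileSize, chunkSize);
        // Prints three parts: 5242880, 5242880 and 2097153 bytes
        for (int i = 1; i <= total; i++) {
            System.out.println("part " + i + ": " + partSize(fileSize, chunkSize, i) + " bytes");
        }
    }
}
```

The sum of all part sizes equals the file size, which is the invariant the size check in the merge step relies on.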

Uploading parts

```java
import com.qcloud.cos.model.UploadPartRequest;
import com.qcloud.cos.model.UploadPartResult;
import org.apache.commons.lang3.tuple.MutableTriple;
import org.springframework.web.multipart.MultipartFile;

public String uploadPart(MultipartFile file, MultipartUploadDTO req) {
    try {
        // Pre-checks; an empty string tells the caller that the part was not uploaded
        MutableTriple<Boolean, MultipartUploadDO, ObjectStorageDO> result = commonService.checkDbInfo(req.getUploadId(), req.getPartNumber(), StorageTypeEnum.COS.name());
        if (!result.getLeft()) {
            return "";
        }
        Boolean check = commonService.checkPartAndHash(file, req);
        if (!check) {
            return "";
        }
        ObjectStorageDO storage = result.getRight();

        // Upload the part to COS
        UploadPartRequest request = new UploadPartRequest();
        request.setBucketName(storage.getBucketName());
        request.setKey(storage.getFilePath());
        request.setUploadId(req.getUploadId());
        request.setPartNumber(req.getPartNumber());
        request.setInputStream(file.getInputStream());
        request.setPartSize(req.getPartSize());
        // Note: Content-MD5 must be the Base64 form of the digest, not the hex string
        request.setMd5Digest(calContentMd5(req.getPartHash()));
        UploadPartResult partRes = cosClient.uploadPart(request);

        boolean updated = multipartUploadService.updatePartStatus(req.getUploadId(), req.getPartNumber(), partRes.getETag(), req.getPartHash());
        if (updated) {
            // Update the completed-part count on the main table
            Integer completed = multipartUploadService.countUploadedParts(req.getUploadId());
            storage.setCompletedParts(completed);
            objectStorageService.update(storage);
            log.info("Part uploaded, uploadId: {}, partNumber: {}, completed: {}/{}", req.getUploadId(), req.getPartNumber(), completed, storage.getTotalParts());
        }
        return partRes.getETag();
    } catch (Exception e) {
        log.error("COS part upload failed: uploadId={}, partNumber={}", req.getUploadId(), req.getPartNumber(), e);
        return "";
    }
}
```

Commentary:

Although the md5Digest parameter is marked as optional in the SDK source, setting it is strongly recommended. In my experience, leaving it unset causes problems later in the merge method.

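The private helper method:

```java
import org.apache.commons.codec.DecoderException;
import org.apache.commons.codec.binary.Base64;
import org.apache.commons.codec.binary.Hex;

private String calContentMd5(String hash) throws DecoderException {
    // Decode the hex string back into the raw digest bytes
    byte[] md5Bytes = Hex.decodeHex(hash);
    // Then encode those bytes as a Base64 string
    return Base64.encodeBase64String(md5Bytes);
}
```

Merging parts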

```java
import com.qcloud.cos.http.HttpMethodName;
import com.qcloud.cos.model.CompleteMultipartUploadRequest;
import com.qcloud.cos.model.ObjectMetadata;
import com.qcloud.cos.model.PartETag;

public FileDTO completeMultipartUpload(MultipartUploadCompleteRequest request) {
    try {
        ObjectStorageDO storage = objectStorageService.findByUploadIdAndPlatform(request.getUploadId(), StorageTypeEnum.COS.name());
        if (storage == null) {
            throw new BackendBizException(UPLOAD_TASK_NOT_EXIST);
        }

        // Check that every part has been uploaded
        Integer completedParts = multipartUploadService.countUploadedParts(request.getUploadId());
        if (!completedParts.equals(storage.getTotalParts())) {
            throw new BackendBizException(CHUNK_NOT_UPLOAD_COMPLETE);
        }

        // Load all part records, sorted defensively by ascending part number
        List<MultipartUploadDO> parts = multipartUploadService.findByUploadId(request.getUploadId()).stream()
                .sorted(Comparator.comparing(MultipartUploadDO::getPartNumber))
                .collect(Collectors.toList());

        // Merge the parts
        CompleteMultipartUploadRequest req = new CompleteMultipartUploadRequest();
        req.setBucketName(storage.getBucketName());
        req.setKey(storage.getFilePath());
        req.setUploadId(request.getUploadId());
        req.setPartETags(parts.stream().map(part -> new PartETag(part.getPartNumber(), part.getEtag())).collect(Collectors.toList()));
        cosClient.completeMultipartUpload(req);

        // Verify the hash of the final file
        try {
            ObjectMetadata metadata = cosClient.getObjectMetadata(storage.getBucketName(), storage.getFilePath());
            List<String> list = parts.stream().map(MultipartUploadDO::getPartHash).collect(Collectors.toList());
            String actualHash = commonService.getFileHash(list);
            // TODO: the COS ETag of a multipart object does not match the file hash, so this check needs rework
            if (!metadata.getETag().equals(storage.getFileHash())) {
                log.error("COS file hash verification failed: uploadId={}, expected={}, actual={}", request.getUploadId(), storage.getFileHash(), actualHash);
                cosClient.deleteObject(storage.getBucketName(), storage.getFilePath());
                throw new BackendBizException(FILE_HASH_VERIFY_FAIL);
            }
            // Verify the file size
            if (!storage.getFileSize().equals(metadata.getContentLength())) {
                log.error("COS file size verification failed: uploadId={}, expected={}, actual={}", request.getUploadId(), storage.getFileSize(), metadata.getContentLength());
                // Delete the incomplete file
                cosClient.deleteObject(storage.getBucketName(), storage.getFilePath());
                throw new BackendBizException(FILE_SIZE_VERIFY_FAIL);
            }
            // Store the final ETag of the file, without the "-N" part-count suffix
            storage.setFileHash(metadata.getETag().contains(DASH) ? metadata.getETag().substring(0, metadata.getETag().indexOf(DASH)) : metadata.getETag());
        } catch (BackendBizException e) {
            // Keep the specific verification error instead of masking it below
            throw e;
        } catch (Exception e) {
            log.error("COS file verification failed: uploadId={} ", request.getUploadId(), e);
            throw new BackendBizException(FILE_VERIFY_FAIL);
        }

        storage.setUploadStatus(UploadStatusEnum.COMPLETED.getCode());
        // OffsetDateTime.now(UTC) yields the real current instant; LocalDateTime.now().atOffset(UTC) only does so on a UTC server
        storage.setModifyTime(OffsetDateTime.now(ZoneOffset.UTC));
        objectStorageService.update(storage);
        multipartUploadService.deleteByUploadId(request.getUploadId());

        // Build the response
        FileDTO dto = new FileDTO();
        dto.setId(storage.getId());
        dto.setName(storage.getFileName());
        dto.setUrl(cosClient.generatePresignedUrl(storage.getBucketName(), storage.getFilePath(), getDate(URL_EXPIRE_HOURS, TimeUnit.HOURS), HttpMethodName.GET).toString());
        log.info("COS multipart upload completed, uploadId: {}, fileId: {}", request.getUploadId(), storage.getId());
        return dto;
    } catch (BackendBizException e) {
        // Business errors raised above keep their own error code
        throw e;
    } catch (Exception e) {
        log.error("COS complete multipart upload failed: uploadId={} ", request.getUploadId(), e);
        throw new BackendBizException(COMPLETE_MULTIPART_UPLOAD_FAIL);
    }
}
```

Commentary:

- If verification fails during the merge, the merged file is deleted, not the part files;

- If verification succeeds, the merged file is kept (obviously). Unlike MinIO, there is no need to delete the part files manually: the COS server removes them automatically;
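On the TODO about the ETag not matching: the merge code strips a "-N" suffix from the ETag, which resembles the composite ETag convention known from S3-style multipart uploads, where the value is the MD5 of the concatenated per-part MD5 digests followed by the part count. Whether COS computes its multipart ETag exactly this way is an assumption that this article does not confirm. The sketch below only illustrates that convention and why such a value cannot equal the plain MD5 of the whole file. The class name CompositeEtag is hypothetical.

```java
import java.io.ByteArrayOutputStream;
import java.security.MessageDigest;
import java.security.NoSuchAlgorithmException;
import java.util.List;

/** Hypothetical illustration of the S3-style composite ETag; not confirmed to be what COS computes. */
final class CompositeEtag {
    private CompositeEtag() {}

    /** Raw 16-byte MD5 digest of data. */
    static byte[] md5(byte[] data) {
        try {
            return MessageDigest.getInstance("MD5").digest(data);
        } catch (NoSuchAlgorithmException e) {
            throw new IllegalStateException("MD5 is required by the JDK specification", e);
        }
    }

    /** Lower-case hex encoding of bytes. */
    static String hex(byte[] bytes) {
        StringBuilder sb = new StringBuilder(bytes.length * 2);
        for (byte b : bytes) {
            sb.append(String.format("%02x", b));
        }
        return sb.toString();
    }

    /** hex(md5(md5(part1) || md5(part2) || ...)) + "-" + number of parts. */
    static String of(List<byte[]> parts) {
        ByteArrayOutputStream joined = new ByteArrayOutputStream();
        for (byte[] part : parts) {
            byte[] digest = md5(part);
            joined.write(digest, 0, digest.length);
        }
        return hex(md5(joined.toByteArray())) + "-" + parts.size();
    }
}
```

Under this convention, a check that compares the object ETag with a whole-file MD5 fails for every multipart object, so the size check and the per-part hashes are the more dependable signals.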

Table definitions

Two CREATE TABLE statements are attached, for reference only. To keep them short, the comments are written inline, which is a simplification and not valid PG syntax:

```sql
create table t_cms_object_storage
(
    id bigint not null primary key,
    platform varchar(64) default 'MINIO'::character varying not null comment 'Storage platform: MINIO (default), COS (Tencent Cloud), OSS (Alibaba Cloud), BOS (Baidu Cloud), S3 (Amazon)',
    file_path varchar(128) not null comment 'Actual access path',
    file_name varchar(255) not null comment 'Original file name',
    file_size bigint default 0 comment 'File size in bytes',
    file_hash varchar(64) not null comment 'File hash, used for data integrity checks',
    hash_algorithm varchar(16) default 'MD5'::character varying comment 'Hash algorithm, MD5 by default',
    bucket_name varchar(63) not null comment 'Bucket name',
    upload_id varchar(128) default ''::character varying comment 'Unique ID of the multipart upload; empty for normal and instant uploads',
    total_parts integer default 0 comment 'Total number of parts in a multipart upload; 0 for normal uploads',
    completed_parts integer default 0 comment 'Number of uploaded parts; 0 for normal uploads',
    upload_type varchar(64) default 'NORMAL'::character varying comment 'Upload type: NORMAL (normal upload), QUICK (instant upload), MULTIPART (multipart upload)',
    upload_status varchar(32) default 'pending'::character varying comment 'Upload status: pending, uploading, completed, failed',
    biz_scene varchar(64) comment 'Business scene: post, avatar, etc.',
    is_deleted smallint default 0,
    creator varchar(50),
    create_time timestamp(6) with time zone default CURRENT_TIMESTAMP,
    modify_time timestamp(6) with time zone default CURRENT_TIMESTAMP,
    modifier varchar(50)
) comment 'Object storage main table, holding file metadata';

create table t_cms_multipart_upload
(
    id bigint not null primary key,
    upload_id varchar(128) not null comment 'Multipart upload task ID, same as upload_id in t_cms_object_storage',
    part_number integer not null comment 'Part number, increasing from 1',
    part_size bigint not null comment 'Part size in bytes',
    etag varchar(255) comment 'Part ETag, used to verify part integrity',
    part_hash varchar(64) comment 'Part hash, used to verify part integrity',
    upload_url varchar(255) comment 'Pre-signed upload URL of the part',
    expire_time timestamp(6) with time zone comment 'Expiry time of the upload URL',
    upload_status boolean default false comment 'Part upload status: false (not uploaded), true (uploaded)',
    is_deleted smallint default 0,
    creator varchar(50),
    create_time timestamp(6) with time zone default CURRENT_TIMESTAMP,
    modify_time timestamp(6) with time zone,
    modifier varchar(50)
) comment 'File part table, holding multipart upload details';

create unique index uq_upload_part
    on t_cms_multipart_upload (upload_id, part_number);
```

Yes, this is me complaining about how unpleasant the PG syntax is: comments have to be written separately from the CREATE TABLE statement, and a column added to an existing table can only go at the end, which drives the perfectionist in me crazy.

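Problems

Problems encountered during the implementation.

The Content-MD5 you specified is not valid

Core error message (stack trace omitted): com.qcloud.cos.exception.CosServiceException: The Content-MD5 you specified is not valid.

Fix: the code used to be request.setMd5Digest(req.getPartHash());, and was changed to request.setMd5Digest(calContentMd5(req.getPartHash()));. The part hash is a hex string, while Content-MD5 expects the Base64 encoding of the raw digest bytes.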
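The same hex-to-Base64 conversion can be reproduced with the JDK alone, which helps when checking a value by hand. This is a minimal sketch: the class ContentMd5 and its methods are hypothetical stand-ins for the Commons Codec based calContentMd5 shown earlier, not the code the service uses.

```java
import java.security.MessageDigest;
import java.security.NoSuchAlgorithmException;
import java.util.Base64;

/** Hypothetical JDK-only equivalent of calContentMd5, for illustration. */
final class ContentMd5 {
    private ContentMd5() {}

    /** MD5 of data as a 32-character lower-case hex string (the usual "part hash" form). */
    static String md5Hex(byte[] data) {
        byte[] digest;
        try {
            digest = MessageDigest.getInstance("MD5").digest(data);
        } catch (NoSuchAlgorithmException e) {
            throw new IllegalStateException("MD5 is required by the JDK specification", e);
        }
        StringBuilder sb = new StringBuilder(digest.length * 2);
        for (byte b : digest) {
            sb.append(String.format("%02x", b));
        }
        return sb.toString();
    }

    /** Decodes a hex string into raw bytes and Base64-encodes them (the Content-MD5 form). */
    static String hexToBase64(String hex) {
        if (hex.length() % 2 != 0) {
            throw new IllegalArgumentException("odd-length hex string");
        }
        byte[] raw = new byte[hex.length() / 2];
        for (int i = 0; i < raw.length; i++) {
            int hi = Character.digit(hex.charAt(2 * i), 16);
            int lo = Character.digit(hex.charAt(2 * i + 1), 16);
            if (hi < 0 || lo < 0) {
                throw new IllegalArgumentException("not a hex string: " + hex);
            }
            raw[i] = (byte) ((hi << 4) | lo);
        }
        return Base64.getEncoder().encodeToString(raw);
    }

    public static void main(String[] args) {
        String hex = md5Hex(new byte[0]);
        System.out.println(hex);              // d41d8cd98f00b204e9800998ecf8427e
        System.out.println(hexToBase64(hex)); // 1B2M2Y8AsgTpgAmY7PhCfg==
    }
}
```

A 32-character hex hash always becomes a 24-character Base64 value, so a Content-MD5 of length 32 is a quick sign that the hex form was sent by mistake.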

The specified multipart upload does not exist

Core error message: com.qcloud.cos.exception.CosServiceException: The specified multipart upload does not exist. The upload ID might be invalid, or the multipart upload might have been aborted or completed.

The problem appeared when merging parts: during development and self-testing I wanted to merge the same file several times, which triggered the error above.

AI analysis:

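MinIO vs. Tencent Cloud COS: logic comparison

| Aspect | COS completeMultipartUpload | MinIO composeObject |
|---|---|---|
| Basis | UploadId (state machine) | SourceObjects (physically present) |
| Result of a second request | Error 404 NoSuchUpload (if the UploadId no longer exists) | Succeeds (as long as the source objects still exist) |
| Cleanup | After a successful merge, the fragments are destroyed automatically by the bucket | The source part files used in the merge must be deleted manually |
| Atomicity | Very strong | Very strong |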
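The "state machine" row is what produces the NoSuchUpload error: the UploadId is consumed by the first successful completion. The toy in-memory model below illustrates only that behavior. It does not call COS, and the class ToyMultipartStore and its methods are hypothetical.

```java
import java.io.ByteArrayOutputStream;
import java.util.HashMap;
import java.util.Map;
import java.util.TreeMap;

/** Toy model of UploadId-based multipart semantics; an illustration, not the COS implementation. */
final class ToyMultipartStore {
    private final Map<String, TreeMap<Integer, byte[]>> pending = new HashMap<>();
    private final Map<String, byte[]> objects = new HashMap<>();
    private int counter = 0;

    /** Opens a new upload session and returns its id. */
    String initiate() {
        String uploadId = "upload-" + (++counter);
        pending.put(uploadId, new TreeMap<>());
        return uploadId;
    }

    /** Stores one part; parts are kept sorted by part number. */
    void uploadPart(String uploadId, int partNumber, byte[] data) {
        session(uploadId).put(partNumber, data.clone());
    }

    /** Concatenates the parts into an object and discards the session, parts included. */
    void complete(String uploadId, String key) {
        TreeMap<Integer, byte[]> parts = session(uploadId);
        ByteArrayOutputStream out = new ByteArrayOutputStream();
        for (byte[] part : parts.values()) {
            out.write(part, 0, part.length);
        }
        objects.put(key, out.toByteArray());
        pending.remove(uploadId); // the UploadId is gone after the first completion
    }

    /** Returns the merged object, or null if it does not exist. */
    byte[] get(String key) {
        return objects.get(key);
    }

    private TreeMap<Integer, byte[]> session(String uploadId) {
        TreeMap<Integer, byte[]> parts = pending.get(uploadId);
        if (parts == null) {
            throw new IllegalStateException("NoSuchUpload: " + uploadId);
        }
        return parts;
    }
}
```

In this model, calling complete twice with the same id fails the second time, just like the error above. One possible way to make a merge endpoint tolerate repeated calls is to check the locally stored upload status first and return the existing result when it is already completed.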

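Further notes

cosbrowser

The part upload succeeded, or at least COSClient reported no error. How can one double-check that the part files really were uploaded? Is it possible to see the uploaded parts, as in the MinIO Web UI?

The obvious first step is to open the Tencent Cloud COS developer console. After logging in with the secretId and secretKey, the part files were not there as expected.

GPT's answer: these split files are not visible in the regular file list of the console or of COS Browser. By design, parts do not count as proper objects in COS until they are merged.

GPT did not tell me to download the client. Only after a fair amount of fumbling did I notice COS Browser.

Just as Alibaba Cloud OSS offers ossbrowser, Tencent Cloud offers the cosbrowser client, which can be downloaded from the official site.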

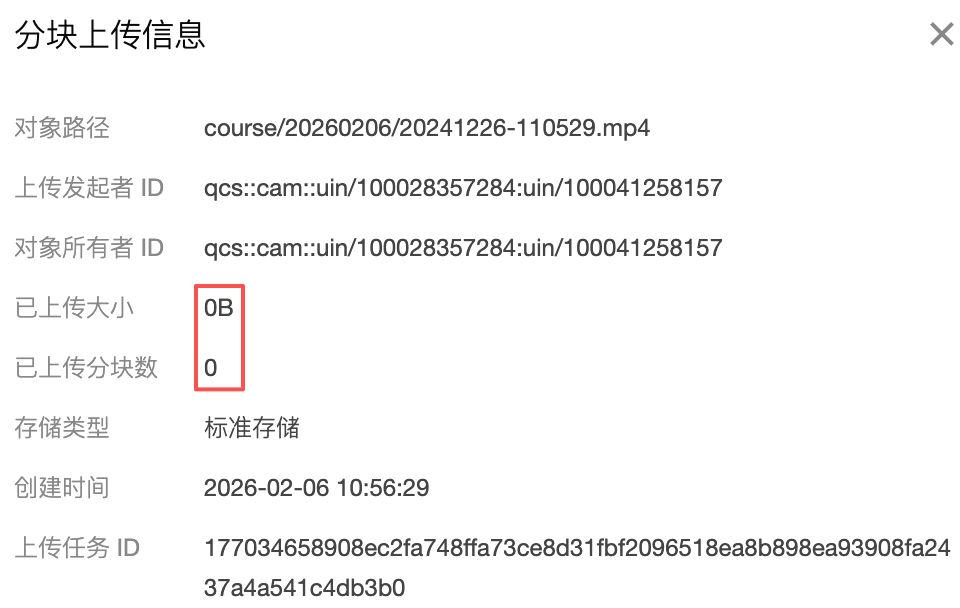

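After opening the client and spending a few minutes getting familiar with it, I finally found the 【碎片文件】 (file fragments) button. Click it.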

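Analysis:

- Calling cosClient.initiateMultipartUpload(req); starts creating the fragment metadata, as shown in the screenshot above;
- As parts keep being uploaded through cosClient.uploadPart(req);, the uploaded size and the number of uploaded parts are updated continuously;
- Calling the merge method cosClient.completeMultipartUpload(req); runs the merge on the server. Once it succeeds, the fragments seen above are deleted automatically by COS.

Notes (bolded by GPT as a reminder):

This is a pitfall that many developers overlook:

- As soon as uploadPart succeeds, the data already occupies physical storage on Tencent Cloud.
- Even if you never merge, Tencent Cloud keeps billing for these fragments.
- If debugging leaves behind many UploadIds that were never merged, it is worth running the cleanup action under fragment management, otherwise the month-end bill will hurt.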
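The cleanup can also be scripted instead of clicked. Below is a JDK-only sketch of the selection step: given the pending uploads of a bucket, pick those initiated longer ago than a threshold. PendingUpload and StaleUploads are hypothetical names. In a real job the list would presumably come from the SDK's multipart upload listing call and each selected entry would be passed to its abort call. Neither is shown here, and their exact signatures should be checked against the SDK documentation.

```java
import java.time.Duration;
import java.time.Instant;
import java.util.ArrayList;
import java.util.List;

/** Hypothetical view of one unfinished multipart upload. */
final class PendingUpload {
    final String key;
    final String uploadId;
    final Instant initiated;

    PendingUpload(String key, String uploadId, Instant initiated) {
        this.key = key;
        this.uploadId = uploadId;
        this.initiated = initiated;
    }
}

/** Hypothetical helper: selects uploads that have been pending for longer than maxAge. */
final class StaleUploads {
    private StaleUploads() {}

    static List<PendingUpload> select(List<PendingUpload> pending, Instant now, Duration maxAge) {
        Instant cutoff = now.minus(maxAge);
        List<PendingUpload> stale = new ArrayList<>();
        for (PendingUpload upload : pending) {
            // Strictly older than the cutoff: recent uploads may still be in progress
            if (upload.initiated.isBefore(cutoff)) {
                stale.add(upload);
            }
        }
        return stale;
    }
}
```

The age threshold keeps uploads that are still in progress from being aborted. One day is a common starting point for debugging buckets, but the right value depends on how long real uploads take.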