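Standard Test Function Comparison (Python Implementation)

1. Background

Intelligent optimization algorithms (metaheuristics) are an important tool for solving complex optimization problems. This article takes five classic algorithms and compares them on five standard test functions, each in 10 dimensions with a budget of 100 iterations, to see how each one performs.

The five algorithms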

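| Algorithm | Abbreviation | Inspiration | Year proposed |
|---|---|---|---|
| Genetic algorithm | GA | Natural selection and genetics | 1975 |
| Particle swarm optimization | PSO | Foraging behavior of bird flocks | 1995 |
| Simulated annealing | SA | Annealing of metals | 1983 |
| Differential evolution | DE | Population evolution | 1995 |
| Ant colony optimization | ACO | Foraging behavior of ants | 1992 |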

The five standard test functions

| Function | Formula | Global minimum | Search range |
|---|---|---|---|
| Sphere | $f(x)=\sum_{i=1}^{n} x_i^2$ | 0 | [-5, 5] |
| Rosenbrock | $f(x)=\sum_{i=1}^{n-1}\left[100(x_{i+1}-x_i^2)^2+(x_i-1)^2\right]$ | 0 | [-2, 2] |
| Rastrigin | $f(x)=10n+\sum_{i=1}^{n}\left[x_i^2-10\cos(2\pi x_i)\right]$ | 0 | [-5, 5] |
| Ackley | $f(x)=-20\exp\left(-0.2\sqrt{\frac{1}{n}\sum_{i=1}^{n} x_i^2}\right)-\exp\left(\frac{1}{n}\sum_{i=1}^{n}\cos(2\pi x_i)\right)+20+e$ | 0 | [-5, 5] |
| Griewank | $f(x)=\frac{1}{4000}\sum_{i=1}^{n} x_i^2-\prod_{i=1}^{n}\cos\left(\frac{x_i}{\sqrt{i}}\right)+1$ | 0 | [-5, 5] |
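As a quick sanity check on the "Global minimum" column, each formula can be evaluated by hand at its known minimizer: $x=\mathbf{0}$ for Sphere, Rastrigin, Ackley and Griewank, and $x=\mathbf{1}=(1,\dots,1)$ for Rosenbrock. The minimizers are standard properties of these benchmarks and are an addition here; the table itself only lists the minimum values.

$$
\begin{aligned}
\text{Sphere:}\quad & f(\mathbf{0}) = \sum_{i=1}^{n} 0^2 = 0 \\
\text{Rosenbrock:}\quad & f(\mathbf{1}) = \sum_{i=1}^{n-1}\left[100(1-1^2)^2+(1-1)^2\right] = 0 \\
\text{Rastrigin:}\quad & f(\mathbf{0}) = 10n + \sum_{i=1}^{n}\left[0^2 - 10\cos 0\right] = 10n - 10n = 0 \\
\text{Ackley:}\quad & f(\mathbf{0}) = -20\exp(0) - \exp(1) + 20 + e = 0 \\
\text{Griewank:}\quad & f(\mathbf{0}) = \frac{0}{4000} - \prod_{i=1}^{n}\cos 0 + 1 = 0
\end{aligned}
$$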

2. Python implementation

2.1 Test function definitions

```python
import numpy as np


def sphere(x):
    # Sum of squares
    return np.sum(x ** 2)


def rosenbrock(x):
    # Couples each coordinate x[i] with its successor x[i+1]
    return np.sum(100 * (x[1:] - x[:-1]**2)**2 + (x[:-1] - 1)**2)


def rastrigin(x):
    n = len(x)
    return 10 * n + np.sum(x**2 - 10 * np.cos(2 * np.pi * x))


def ackley(x):
    n = len(x)
    sum1, sum2 = np.sum(x**2), np.sum(np.cos(2 * np.pi * x))
    return -20 * np.exp(-0.2 * np.sqrt(sum1 / n)) - np.exp(sum2 / n) + 20 + np.e


def griewank(x):
    n = len(x)
    # The product term divides x_i by sqrt(i), with i running from 1 to n
    return np.sum(x**2) / 4000 - np.prod(np.cos(x / np.sqrt(np.arange(1, n+1)))) + 1
```

2.2 Genetic algorithm (GA)

```python
def ga(func, dim=10, bounds=(-5,5), pop_size=50, max_iter=100):
    pop = np.random.uniform(bounds[0], bounds[1], (pop_size, dim))
    best = float('inf')
    # One entry per generation, so max_iter values in total
    best_history = []
    for gen in range(max_iter):
        fitness = np.array([func(p) for p in pop])
        if np.min(fitness) < best:
            best = np.min(fitness)
        best_history.append(best)
        new_pop = np.zeros_like(pop)
        for i in range(pop_size):
            # Tournament selection of size 3, once for each parent
            cand = np.random.choice(pop_size, 3, replace=False)
            p1 = pop[cand[np.argmin(fitness[cand])]]
            cand = np.random.choice(pop_size, 3, replace=False)
            p2 = pop[cand[np.argmin(fitness[cand])]]
            # Uniform crossover: each gene comes from p1 with probability 0.8
            mask = np.random.rand(dim) < 0.8
            child = np.where(mask, p1, p2)
            # Gaussian mutation, applied to each gene with probability 0.1
            mut = np.random.rand(dim) < 0.1
            child[mut] += np.random.normal(0, 0.5, np.sum(mut))
            child = np.clip(child, bounds[0], bounds[1])
            new_pop[i] = child
        pop = new_pop
    return best_history
```

2.3 Particle swarm optimization (PSO)

```python
def pso(func, dim=10, bounds=(-5,5), swarm=50, max_iter=100):
    pos = np.random.uniform(bounds[0], bounds[1], (swarm, dim))
    vel = np.random.uniform(-1, 1, (swarm, dim))
    # Personal bests start at the initial positions
    pbest, pbest_val = pos.copy(), np.array([func(p) for p in pos])
    gbest_idx = np.argmin(pbest_val)
    gbest, gbest_val = pos[gbest_idx].copy(), pbest_val[gbest_idx]
    best_history = [gbest_val]
    # Inertia weight and the cognitive / social coefficients
    w, c1, c2 = 0.7, 1.5, 1.5
    for t in range(max_iter):
        r1, r2 = np.random.rand(swarm, dim), np.random.rand(swarm, dim)
        vel = w * vel + c1 * r1 * (pbest - pos) + c2 * r2 * (gbest - pos)
        pos = np.clip(pos + vel, bounds[0], bounds[1])
        val = np.array([func(p) for p in pos])
        # Update personal bests where the new position is better
        improved = val < pbest_val
        pbest[improved], pbest_val[improved] = pos[improved], val[improved]
        if np.min(val) < gbest_val:
            gbest_idx = np.argmin(val)
            gbest, gbest_val = pos[gbest_idx].copy(), val[gbest_idx]
        best_history.append(gbest_val)
    return best_history
```

2.4 Simulated annealing (SA)

```python
def sa(func, dim=10, bounds=(-5,5), max_iter=100):
    x = np.random.uniform(bounds[0], bounds[1], dim)
    fx = func(x)
    best = fx
    best_history = [best]
    T = 100
    for t in range(max_iter):
        # 10 candidate moves per temperature level
        for _ in range(10):
            x_new = np.clip(x + np.random.normal(0, 1, dim), bounds[0], bounds[1])
            f_new = func(x_new)
            # Metropolis rule: always accept improvements,
            # accept worse moves with probability exp(-(f_new - fx) / T)
            if f_new < fx or np.random.rand() < np.exp(-(f_new - fx) / T):
                x, fx = x_new, f_new
                if fx < best:
                    best = fx
        # Geometric cooling with a floor of 0.01
        T = max(T * 0.95, 0.01)
        best_history.append(best)
    return best_history
```

2.5 Differential evolution (DE)

```python
def de(func, dim=10, bounds=(-5,5), pop_size=50, max_iter=100):
    pop = np.random.uniform(bounds[0], bounds[1], (pop_size, dim))
    fitness = np.array([func(p) for p in pop])
    # F is the differential weight, CR the crossover probability
    best, F, CR = np.min(fitness), 0.5, 0.9
    best_history = [best]
    for t in range(max_iter):
        for i in range(pop_size):
            # Pick three distinct individuals, none of them equal to i
            idxs = [j for j in range(pop_size) if j != i]
            a, b, c = pop[np.random.choice(idxs, 3, replace=False)]
            mutant = np.clip(a + F * (b - c), bounds[0], bounds[1])
            # Binomial crossover between the mutant and the current individual
            trial = np.where(np.random.rand(dim) < CR, mutant, pop[i])
            ft = func(trial)
            # Greedy selection: keep the trial only if it is better
            if ft < fitness[i]:
                pop[i], fitness[i] = trial, ft
        if np.min(fitness) < best:
            best = np.min(fitness)
        best_history.append(best)
    return best_history
```

2.6 Ant colony optimization (ACO, continuous variant)

```python
def aco(func, dim=10, bounds=(-5,5), ants=50, max_iter=100):
    solutions = np.random.uniform(bounds[0], bounds[1], (ants, dim))
    fitness = np.array([func(s) for s in solutions])
    best, tau = np.min(fitness), np.ones((ants, dim))
    best_history = [best]
    for t in range(max_iter):
        for i in range(ants):
            # Each ant moves with probability 0.3; the step is scaled by its pheromone
            if np.random.rand() < 0.3:
                solutions[i] += np.random.normal(0, 0.1, dim) * tau[i]
                solutions[i] = np.clip(solutions[i], bounds[0], bounds[1])
                f_new = func(solutions[i])
                # fitness[i] keeps the best value this ant has seen;
                # the ant stays at the new position even when it is worse
                if f_new < fitness[i]:
                    fitness[i] = f_new
        # Evaporation (factor 0.9) plus a deposit inversely proportional to fitness
        tau = tau * 0.9 + 1.0 / (fitness.reshape(-1, 1) + 1e-10)
        if np.min(fitness) < best:
            best = np.min(fitness)
        best_history.append(best)
    return best_history
```

2.7 Main program

```python
test_funcs = [
    ("Sphere", sphere, (-5, 5)),
    ("Rosenbrock", rosenbrock, (-2, 2)),
    ("Rastrigin", rastrigin, (-5, 5)),
    ("Ackley", ackley, (-5, 5)),
    ("Griewank", griewank, (-5, 5))
]

algorithms = [("GA", ga), ("PSO", pso), ("SA", sa),
              ("DE", de), ("ACO", aco)]

dim, max_iter = 10, 100

# No random seed is set, so the printed values change from run to run
for fname, func, bounds in test_funcs:
    print(f"\n===== {fname} =====")
    for aname, algo in algorithms:
        history = algo(func, dim=dim, bounds=bounds, max_iter=max_iter)
        print(f" {aname}: best={history[-1]:.6f}")
```

3. Results

3.1 Console output

```
===== Sphere =====
 GA: best=0.008459
 PSO: best=0.000010
 SA: best=4.292638
 DE: best=0.000085
 ACO: best=38.025978
```

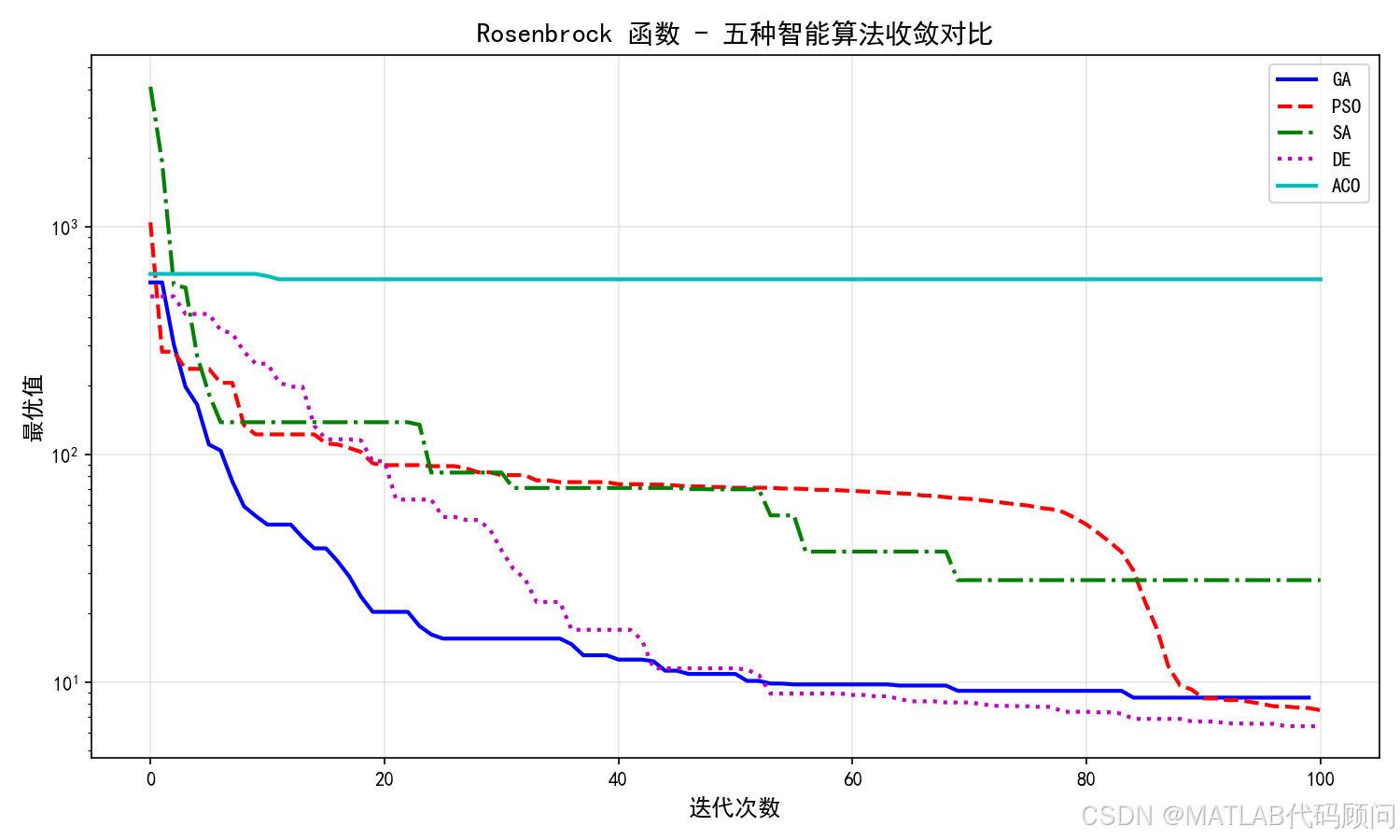

```
===== Rosenbrock =====
 GA: best=8.530541
 PSO: best=7.509120
 SA: best=27.989428
 DE: best=6.385049
 ACO: best=586.418290
```

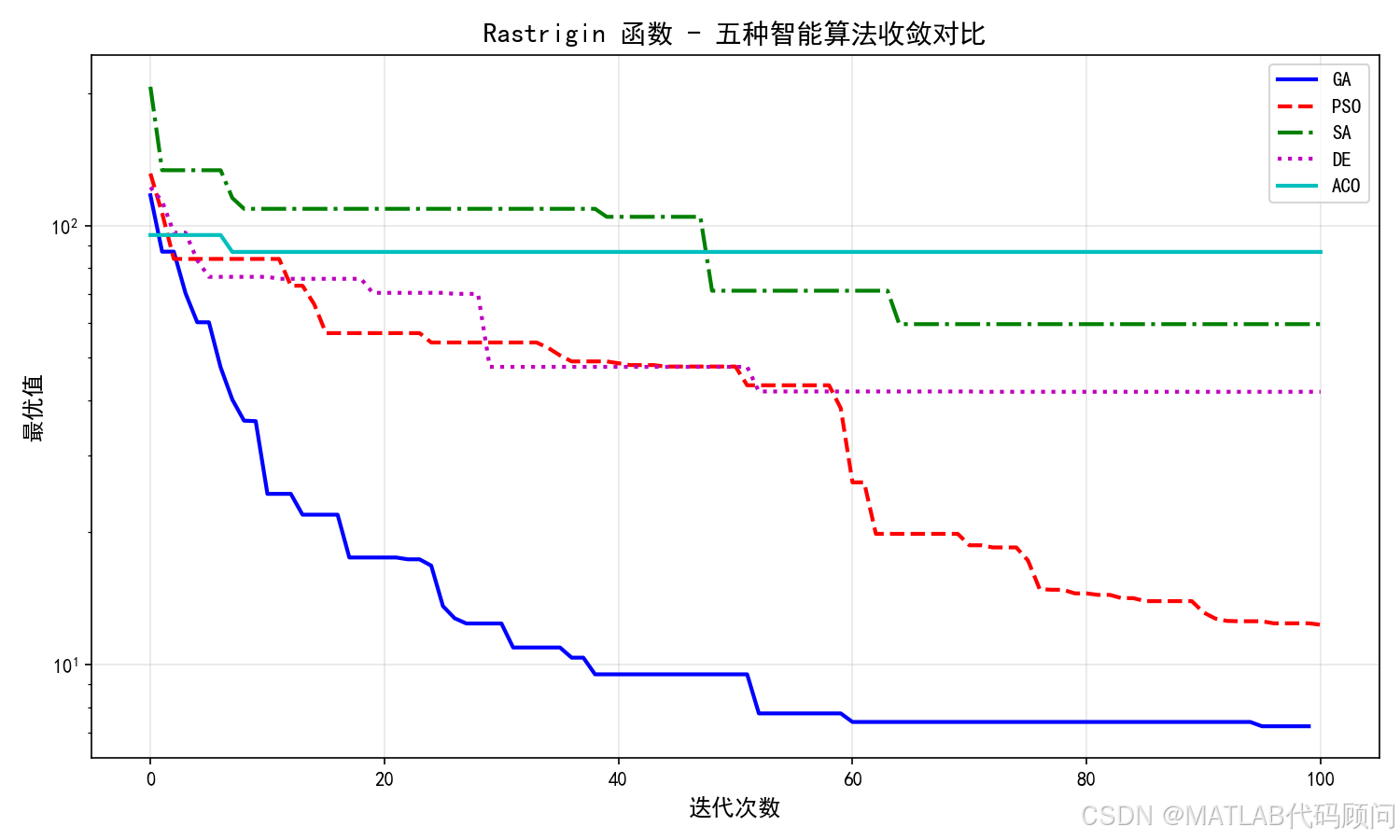

```
===== Rastrigin =====
 GA: best=7.231806
 PSO: best=12.320289
 SA: best=59.652024
 DE: best=41.829613
 ACO: best=87.136393

===== Ackley =====
 GA: best=0.127063
 PSO: best=0.001506
 SA: best=6.422770
 DE: best=0.034487
 ACO: best=7.255058

===== Griewank =====
 GA: best=0.008372
 PSO: best=0.007398
 SA: best=0.838557
 DE: best=0.077606
 ACO: best=0.853547
```

3.2 Results comparison

| Function | GA | PSO | SA | DE | ACO |
|---|---|---|---|---|---|
| Sphere | 8.46e-3 | 1.00e-5 | 4.29 | 8.50e-5 | 38.03 |
| Rosenbrock | 8.53 | 7.51 | 27.99 | 6.39 | 586.42 |
| Rastrigin | 7.23 | 12.32 | 59.65 | 41.83 | 87.14 |
| Ackley | 0.127 | 0.002 | 6.42 | 0.035 | 7.26 |
| Griewank | 0.008 | 0.007 | 0.84 | 0.078 | 0.85 |
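The table can also be summarized programmatically. The sketch below copies the final values from the console output in 3.1 and derives the winner per function and an average rank per algorithm. The helper name `rank_algorithms` and the choice of average rank as a summary are illustrative assumptions, not part of the original experiment.

```python
# Final values copied from the console output in section 3.1.
results = {
    "Sphere":     {"GA": 0.008459, "PSO": 0.000010, "SA": 4.292638, "DE": 0.000085, "ACO": 38.025978},
    "Rosenbrock": {"GA": 8.530541, "PSO": 7.509120, "SA": 27.989428, "DE": 6.385049, "ACO": 586.418290},
    "Rastrigin":  {"GA": 7.231806, "PSO": 12.320289, "SA": 59.652024, "DE": 41.829613, "ACO": 87.136393},
    "Ackley":     {"GA": 0.127063, "PSO": 0.001506, "SA": 6.422770, "DE": 0.034487, "ACO": 7.255058},
    "Griewank":   {"GA": 0.008372, "PSO": 0.007398, "SA": 0.838557, "DE": 0.077606, "ACO": 0.853547},
}


def rank_algorithms(row):
    """Return algorithm names sorted from lowest (best) to highest final value."""
    return sorted(row, key=row.get)


# Best algorithm on each function.
winners = {fname: rank_algorithms(row)[0] for fname, row in results.items()}

# Sum the rank positions (1 = best) over all functions, then average.
rank_sum = {}
for row in results.values():
    for position, name in enumerate(rank_algorithms(row), start=1):
        rank_sum[name] = rank_sum.get(name, 0) + position
avg_rank = {name: total / len(results) for name, total in rank_sum.items()}

for fname, name in winners.items():
    print(f"{fname}: {name}")
for name, rank in sorted(avg_rank.items(), key=lambda kv: kv[1]):
    print(f"{name}: average rank {rank:.1f}")
```

On these numbers PSO wins three functions, while DE and GA win one each. Because every value comes from a single run, the ranking is a description of this run rather than a statistical conclusion.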

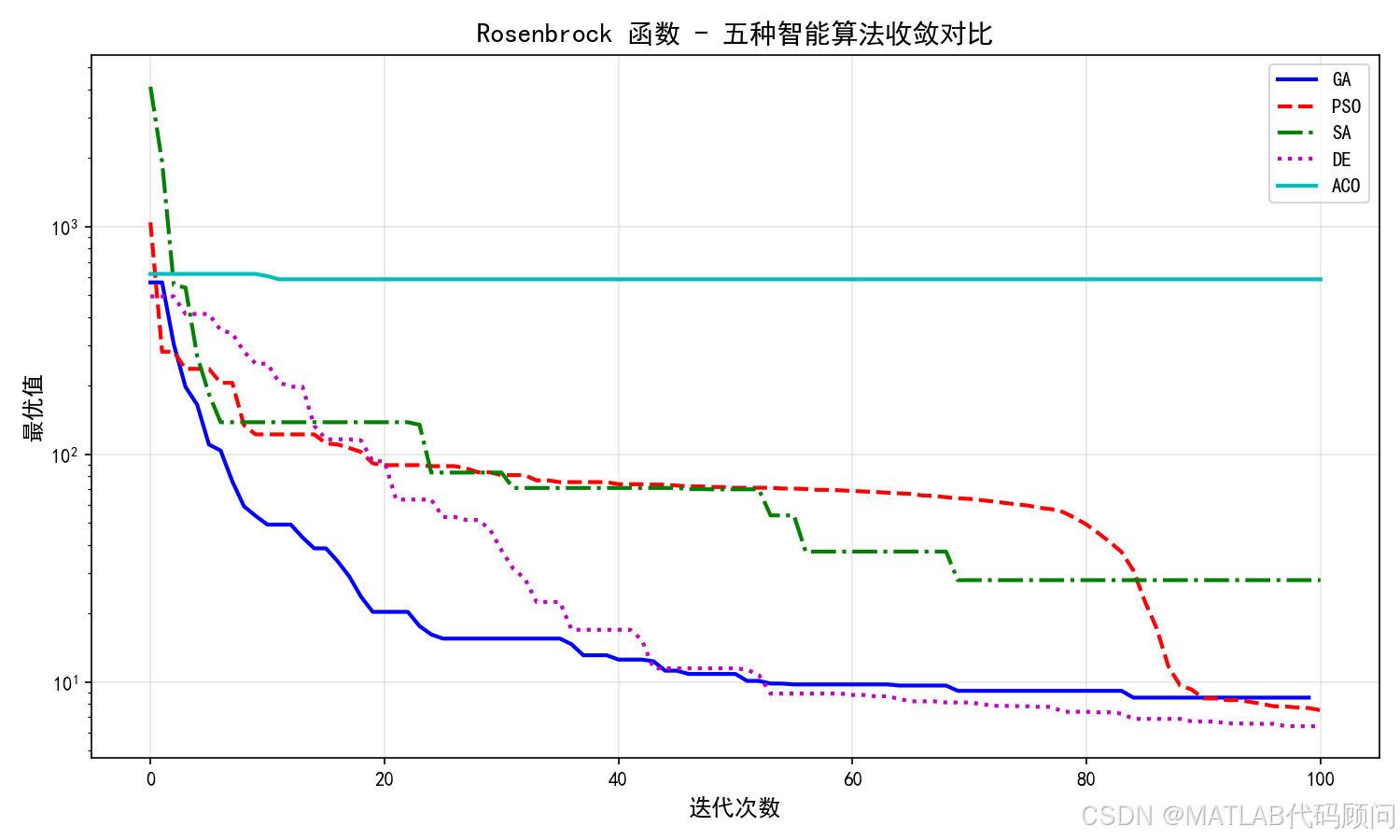

3.3 Convergence curve comparison

Figure 1: Convergence curves of the five algorithms on the five test functions

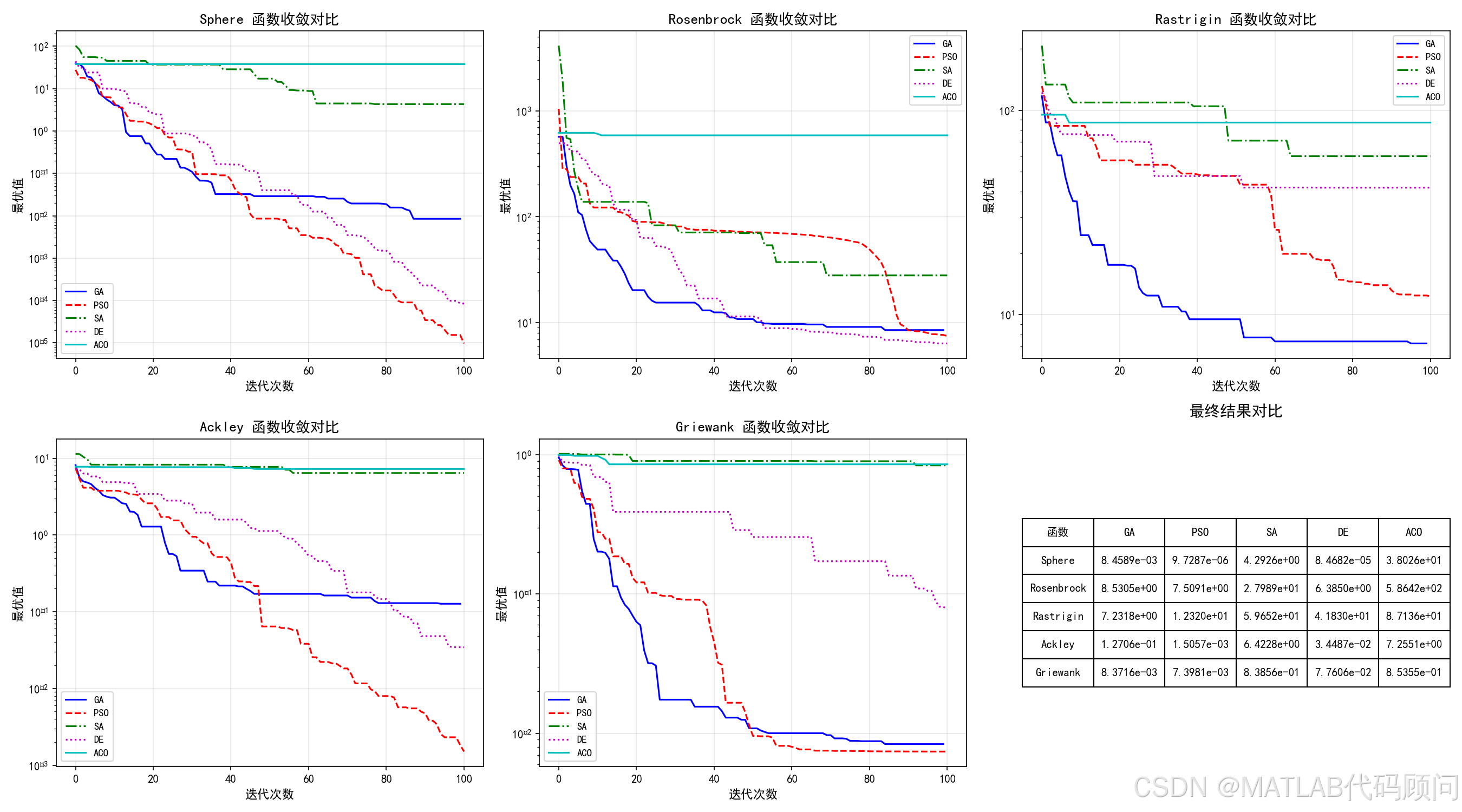

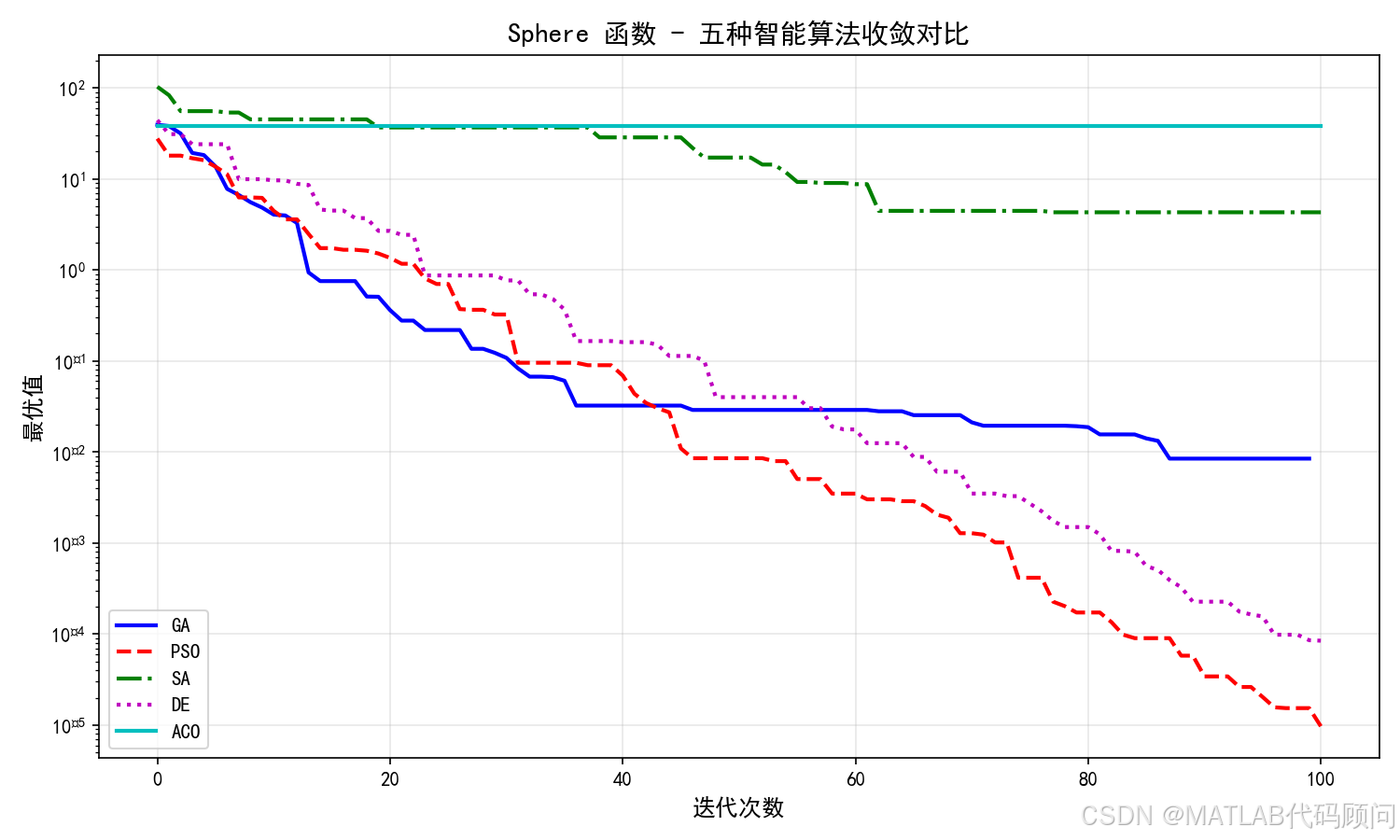

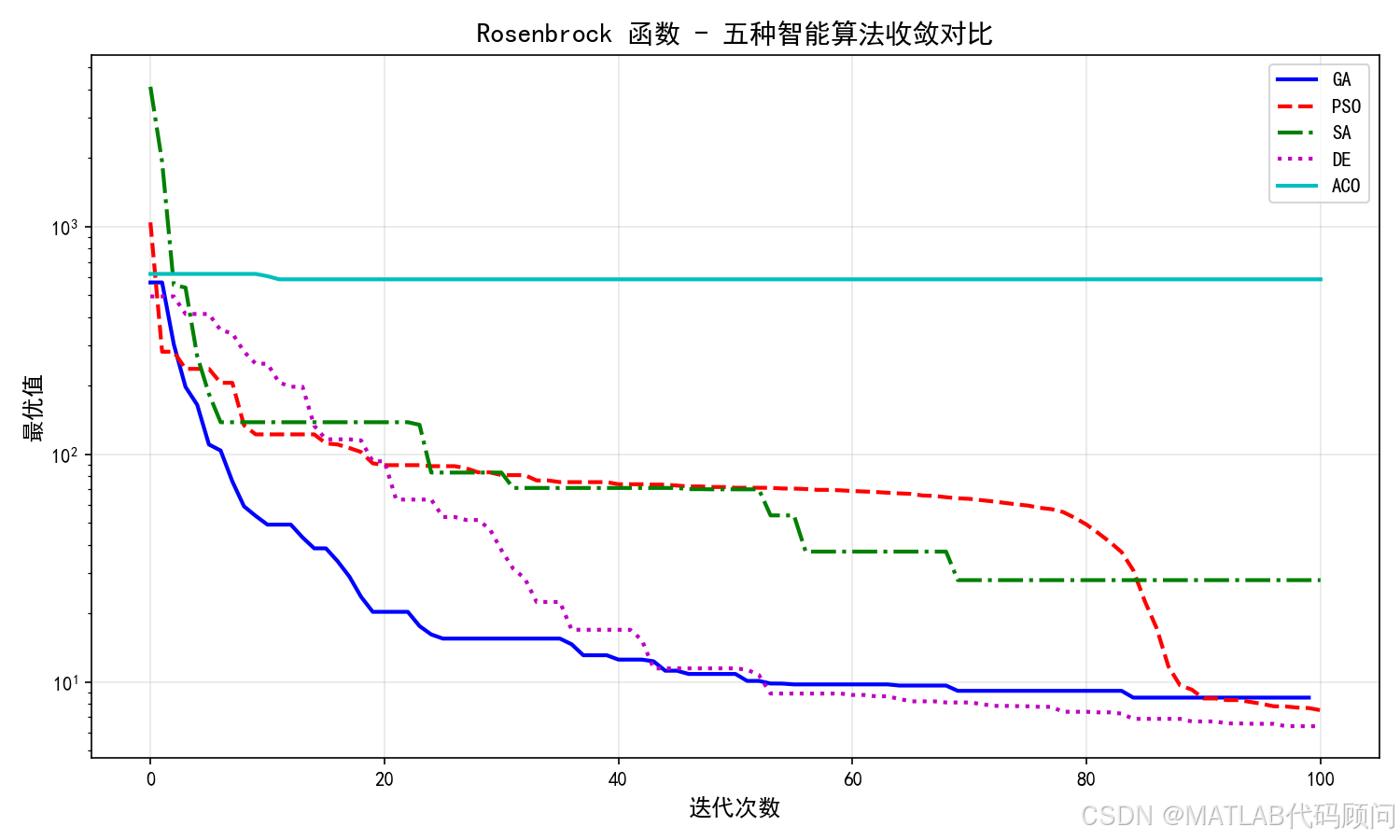

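3.4 Convergence curves per function

Figure 2: Convergence comparison on Sphere

Figure 3: Convergence comparison on Rosenbrock

Figure 4: Convergence comparison on Rastrigin

Figure 5: Convergence comparison on Ackley

Figure 6: Convergence comparison on Griewank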

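4. Analysis

- PSO performed best overall: it reached the lowest value on Sphere (1.0e-5), Ackley (0.0015) and Griewank (0.0074), and it converged quickly.
- DE was best on Rosenbrock (6.39, against 7.51 for PSO): Rosenbrock's minimum lies in a narrow, curved valley, and DE's difference-vector mutation appears to follow it well.
- GA was best on Rastrigin (7.23): its crossover and mutation operators seem to suit the search among Rastrigin's many local minima.
- SA converged slowly: within the limited iteration budget it does not get close to the minimum. With 10 moves per iteration it also makes only about 1,000 function evaluations, against roughly 5,000 for GA, PSO and DE.
- ACO (continuous variant) ranked last on every function, including the simple Sphere: standard ACO is better suited to discrete combinatorial optimization.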
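The observations above rest on one run per algorithm with no fixed seed. A minimal sketch of how the comparison could be repeated over several seeds is shown below. `repeat_runs` and `random_search` are hypothetical names introduced for illustration; `random_search` is only a stand-in with the same call signature as `ga`, `pso`, `sa`, `de` and `aco`, so that the block runs on its own.

```python
import numpy as np


def random_search(func, dim=10, bounds=(-5, 5), max_iter=100):
    """Hypothetical stand-in optimizer with the same signature as the article's algorithms."""
    best = float("inf")
    history = []
    for _ in range(max_iter):
        x = np.random.uniform(bounds[0], bounds[1], dim)
        best = min(best, float(func(x)))
        history.append(best)
    return history


def repeat_runs(algo, func, n_runs=10, base_seed=0, **kwargs):
    """Run algo n_runs times with seeds base_seed, base_seed + 1, ... and summarize final values."""
    finals = []
    for k in range(n_runs):
        # The article's code draws from the global NumPy RNG, so that is what gets seeded.
        np.random.seed(base_seed + k)
        finals.append(algo(func, **kwargs)[-1])
    finals = np.array(finals)
    return {
        "mean": float(finals.mean()),
        "std": float(finals.std(ddof=1)),  # sample standard deviation
        "median": float(np.median(finals)),
        "best": float(finals.min()),
    }


def sphere(x):
    return np.sum(x ** 2)


stats = repeat_runs(random_search, sphere, n_runs=10, dim=10, bounds=(-5, 5), max_iter=100)
print({key: round(value, 4) for key, value in stats.items()})
```

Passing `ga`, `pso`, `sa`, `de` or `aco` in place of `random_search` would give a mean and spread for each algorithm, which makes small gaps such as GA versus PSO on Griewank easier to judge.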

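5. Parameter settings

| Algorithm | Parameters | Values |
|---|---|---|
| GA | Population size / crossover rate / mutation rate | 50 / 0.8 / 0.1 |
| PSO | Swarm size / w / c1 / c2 | 50 / 0.7 / 1.5 / 1.5 |
| SA | Initial temperature / minimum temperature / cooling factor | 100 / 0.01 / 0.95 |
| DE | Population size / F / CR | 50 / 0.5 / 0.9 |
| ACO | Number of ants / evaporation rate | 50 / 0.1 |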
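To see what the SA settings mean in practice, the cooling rule `T = max(T * 0.95, 0.01)` from section 2.4 can be replayed on its own. The sketch below uses two hypothetical helper names, `temperature_after` and `iterations_to_floor`, with the table's values as defaults.

```python
def temperature_after(n_iter, t0=100.0, alpha=0.95, t_min=0.01):
    """Temperature after n_iter updates of T = max(T * alpha, t_min)."""
    T = t0
    for _ in range(n_iter):
        T = max(T * alpha, t_min)
    return T


def iterations_to_floor(t0=100.0, alpha=0.95, t_min=0.01):
    """Number of updates until the temperature first reaches the floor t_min."""
    T, n = t0, 0
    while T > t_min:
        T = max(T * alpha, t_min)
        n += 1
    return n


print(f"T after 100 iterations: {temperature_after(100):.3f}")
print(f"iterations needed to reach the floor: {iterations_to_floor()}")
```

With these settings the temperature is still about 0.592 after 100 iterations, and the floor of 0.01 is only reached after 180 iterations, so the minimum temperature never comes into play in the 100-iteration experiments.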

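6. Summary

This article implemented five classic intelligent optimization algorithms (GA, PSO, SA, DE, ACO) in Python and compared them on five standard test functions (Sphere, Rosenbrock, Rastrigin, Ackley, Griewank). The results show:

- PSO gave the best result on most functions (three of five)
- DE was best on Rosenbrock
- GA was best on Rastrigin
- Different algorithms suit different problems; each figure here comes from a single unseeded run, so small gaps should not be over-read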


The code above can be run as is. Questions and feedback are welcome!