Prerequisites

- NVIDIA Jetson Orin Nano developer kit

- Raspberry Pi Camera Module V2 (standard version)

Bringing up the camera

Connecting the cable

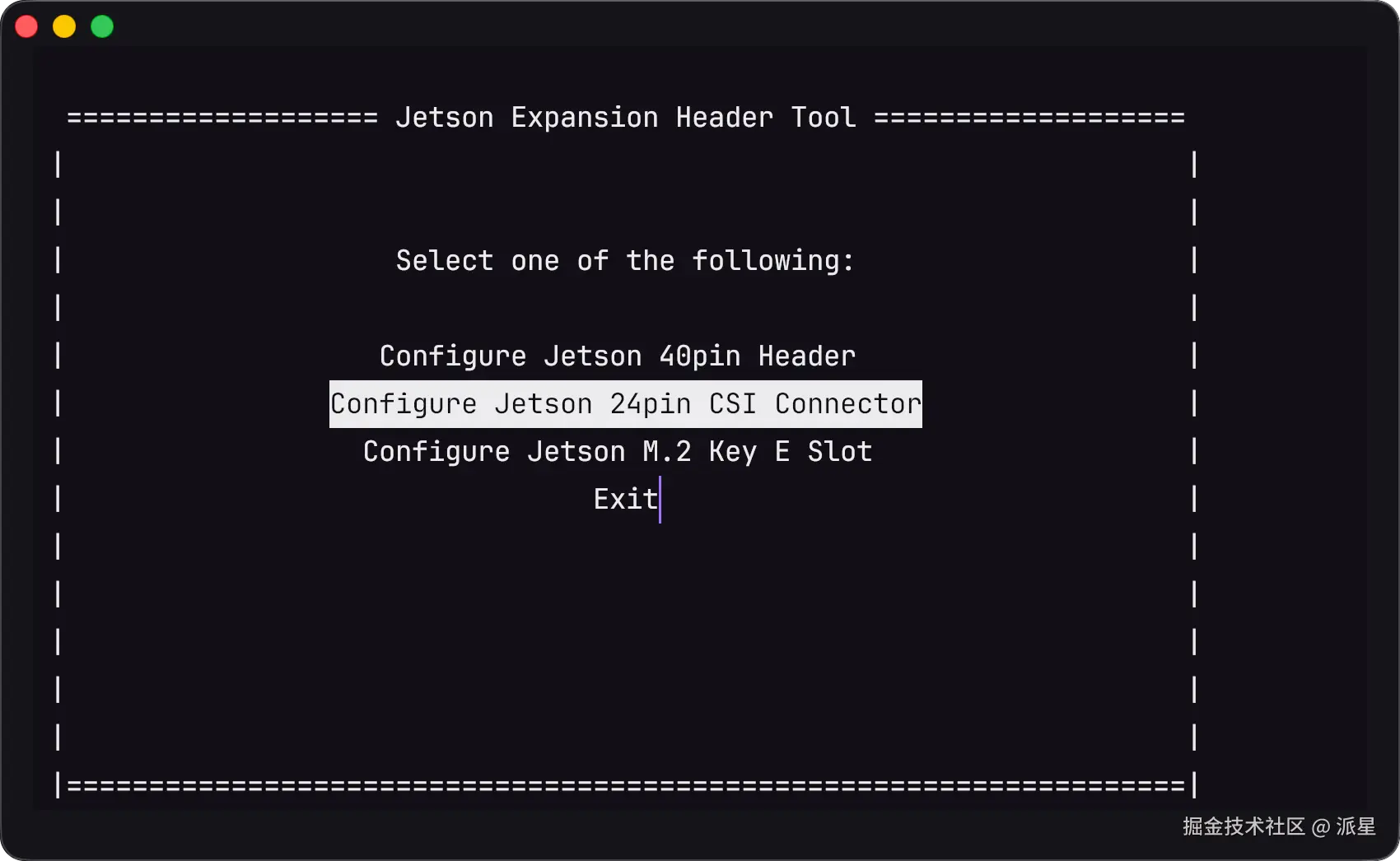

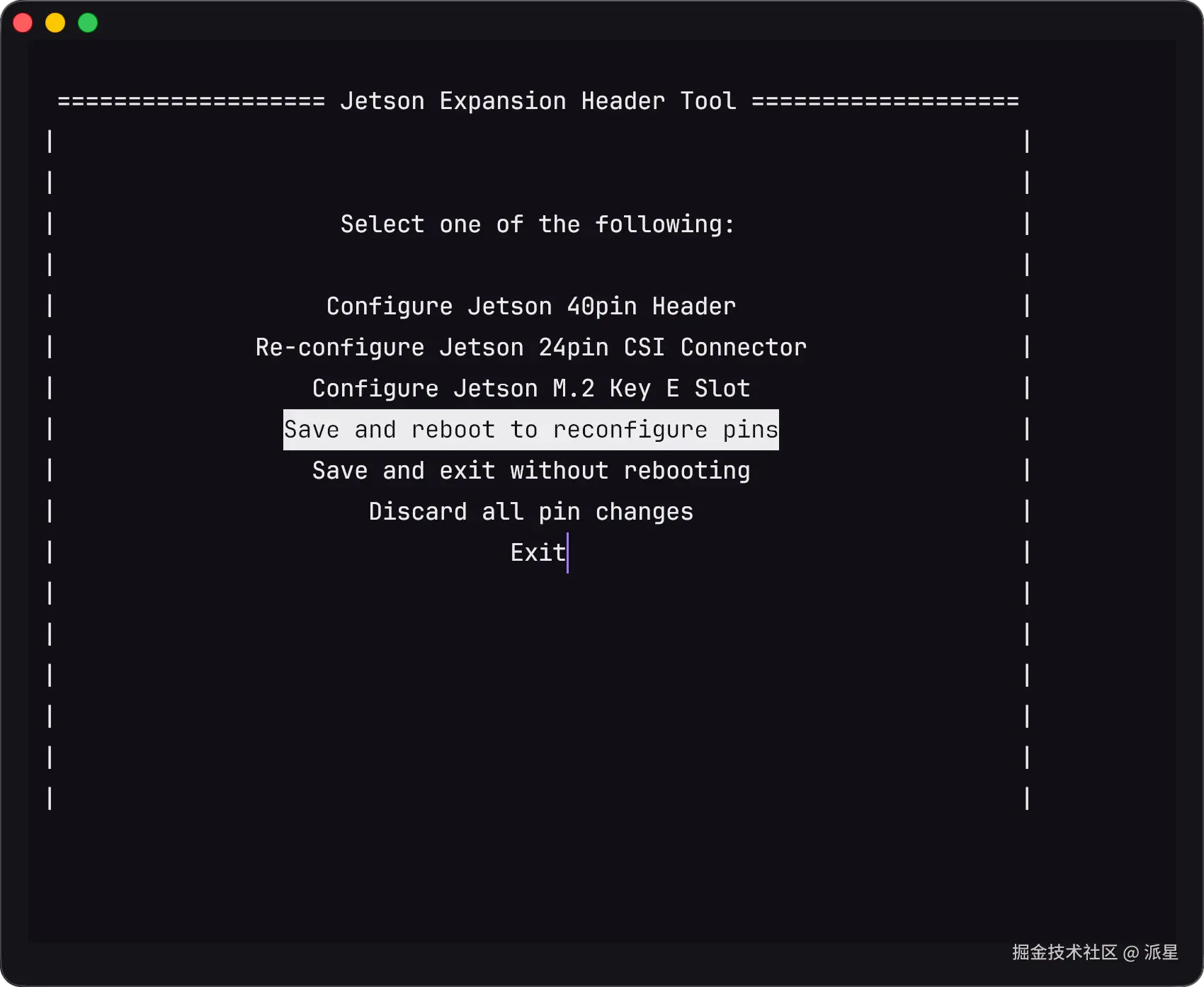

Configuring the pin mode

bash

cd /opt/nvidia/jetson-io

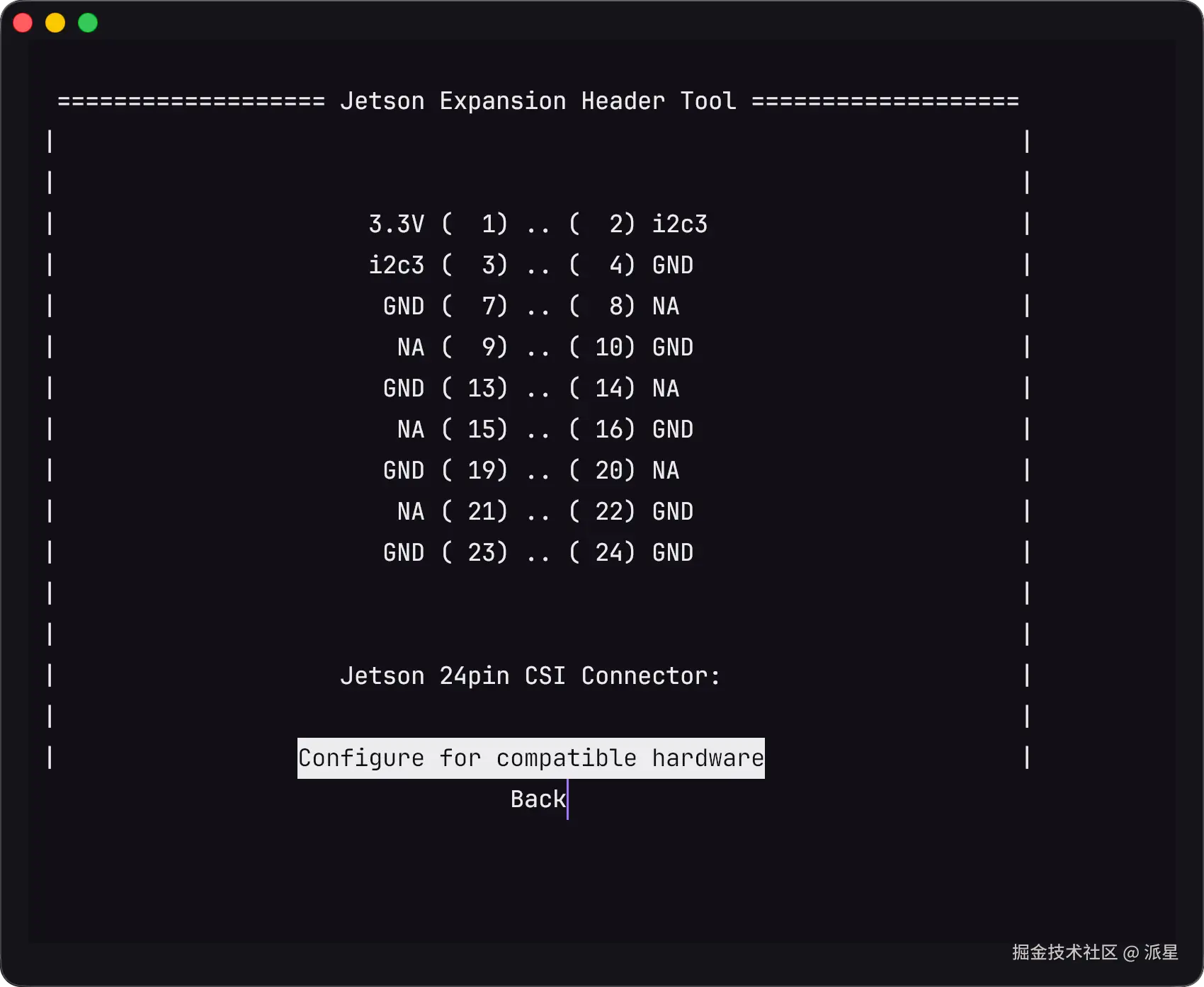

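bash

sudo python jetson-io.py

Once the configuration page opens, pick the options shown in the screenshots below and press Enter to confirm each one.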

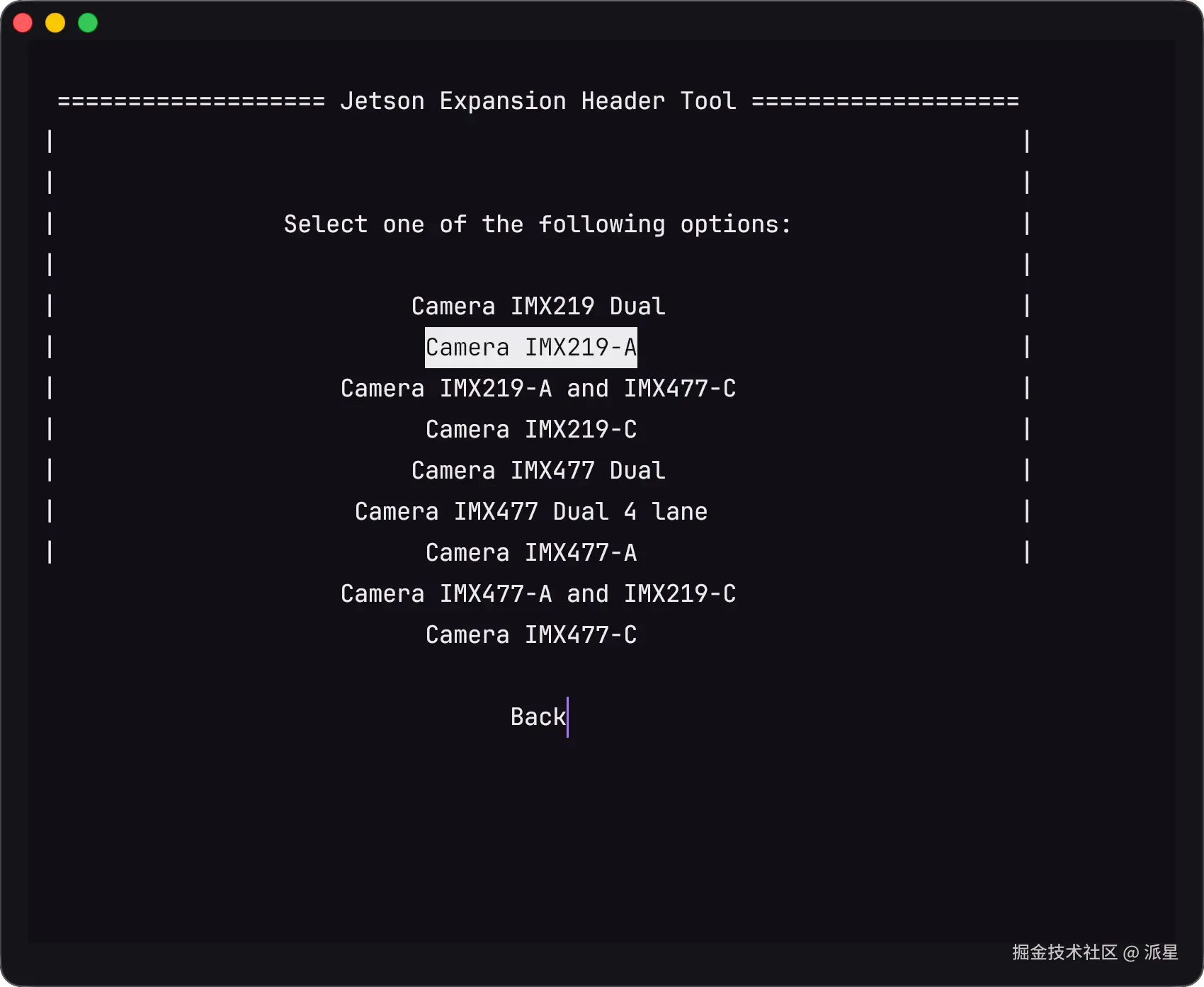

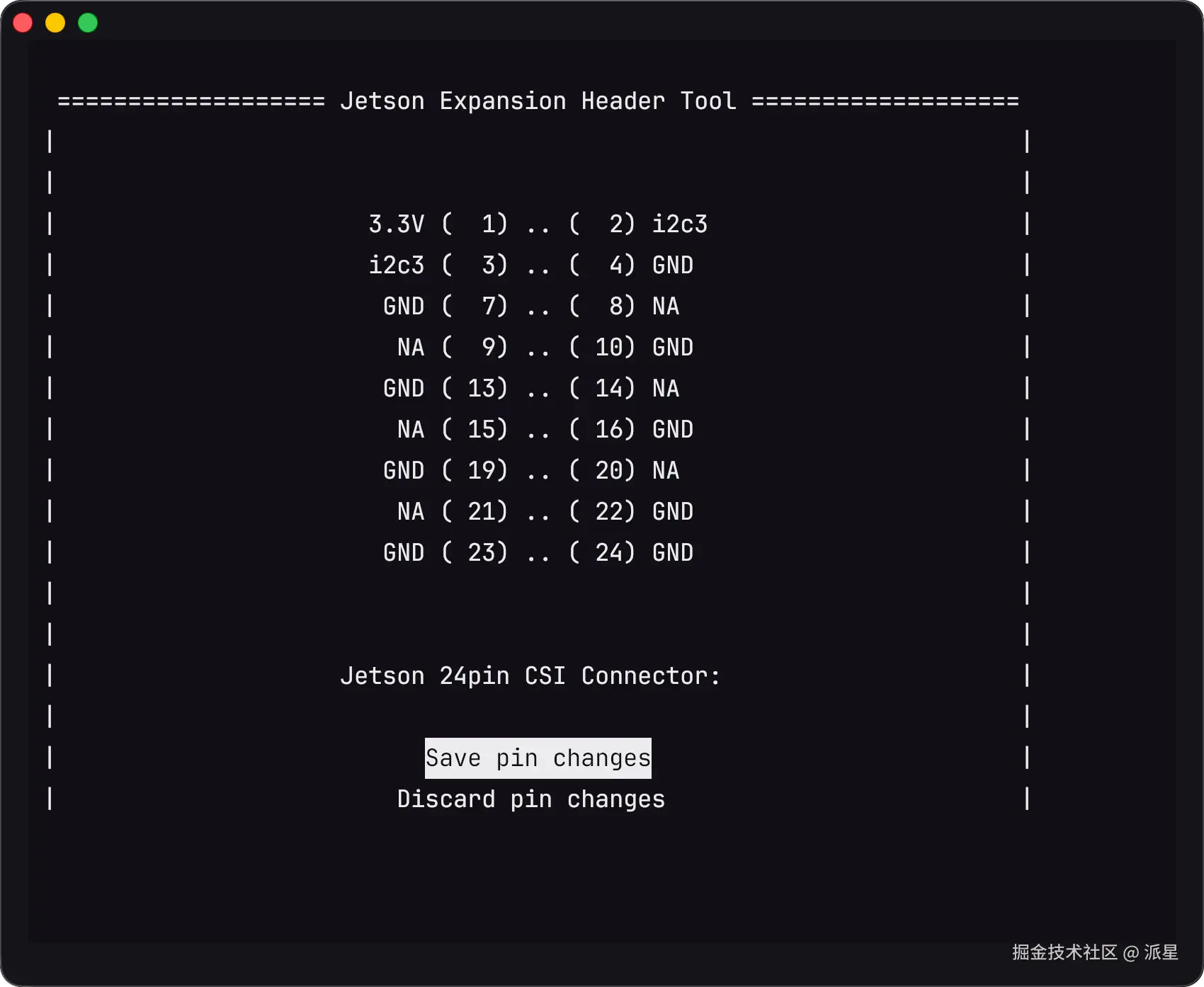

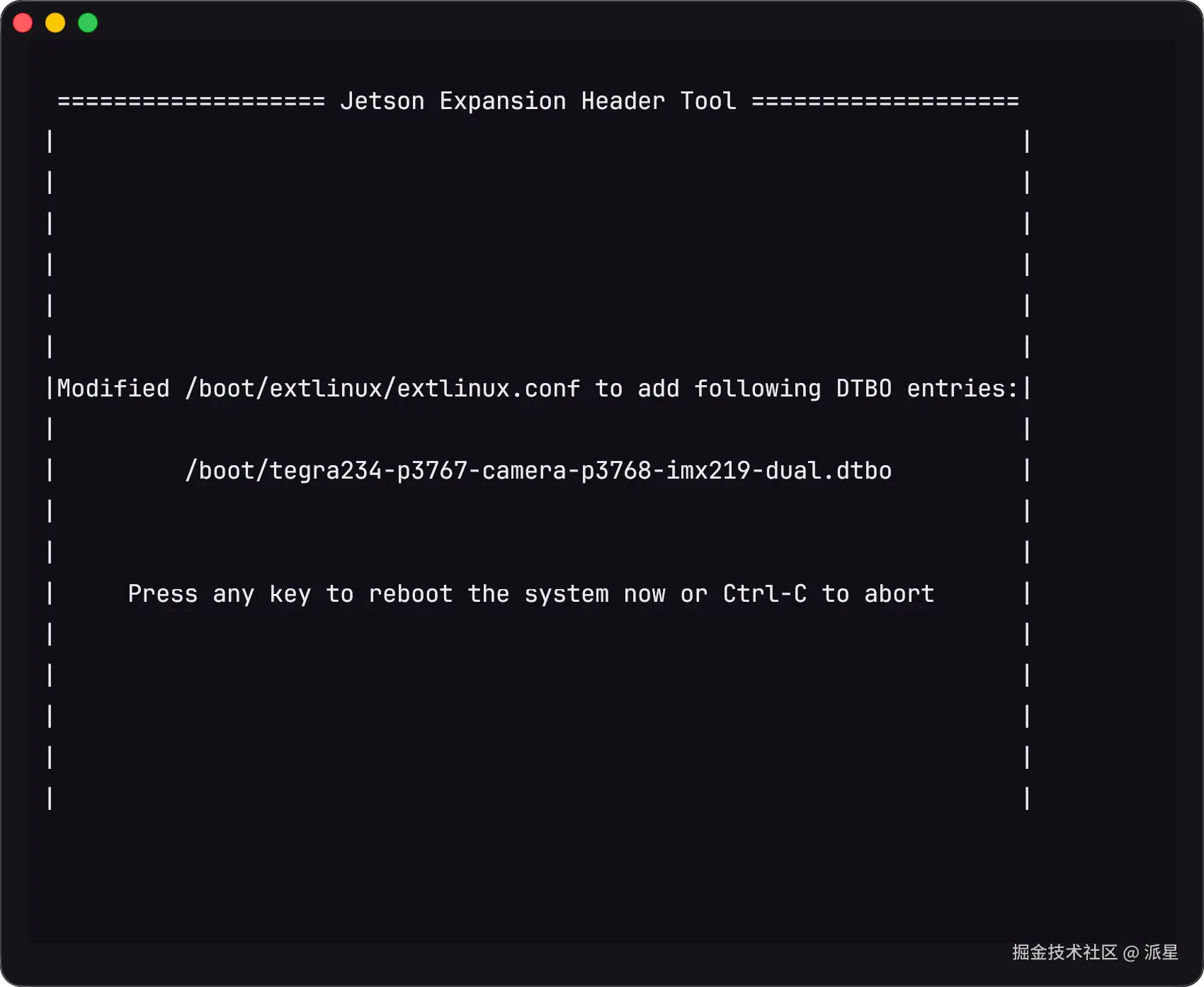

I have a single camera here, so I select IMX219-A.

The device then reboots automatically.

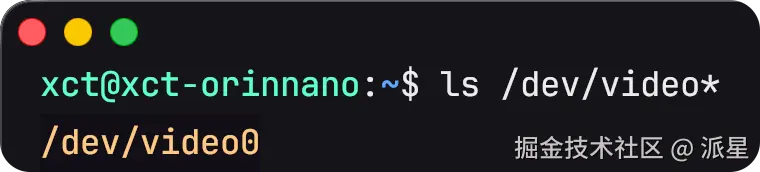

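After the reboot, check whether a camera device node exists:

bash

ls /dev/video*

At least one device node (for example /dev/video0) should be listed.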
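The same check can be scripted, which is handy if a backend should fail early when no camera node exists. This is a minimal sketch; `list_video_devices` is a hypothetical helper name, and it only confirms that device nodes exist, not that the sensor actually delivers frames.

```python
import glob
import re


def list_video_devices(pattern="/dev/video*"):
    """Return device nodes matching the pattern, sorted by numeric index."""
    def index(path):
        # Sort video10 after video2 by comparing the trailing number.
        match = re.search(r"(\d+)$", path)
        return int(match.group(1)) if match else -1

    return sorted(glob.glob(pattern), key=index)


devices = list_video_devices()
print("video devices:", devices or "none found")
```

An empty result after the reboot usually means the pin configuration or the ribbon cable should be checked again before going further.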

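Running the CSI test code

Go to github.com/JetsonHacks..., clone the repository, and then run simple_camera.py

bash

git clone https://github.com/JetsonHacksNano/CSI-Camera.git
cd CSI-Camera
# simple_camera.py reads camera frames through OpenCV and shows them in a window on screen
# Note: run this command on the board's own desktop, not over an SSH session
python simple_camera.py

Once it is running, a video window appears, which shows that the camera is connected and that frames are being transferred successfully.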
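Because the preview window needs a desktop session, a small pre-check can avoid a confusing failure over SSH. The sketch below is a heuristic that assumes an X11 or Wayland session exposes `DISPLAY` or `WAYLAND_DISPLAY`. `has_display` is a hypothetical helper and is not part of the CSI-Camera repository.

```python
import os


def has_display(env=None):
    """Heuristic: a GUI window needs an X11 or Wayland session."""
    env = os.environ if env is None else env
    # Empty strings count as "not set".
    return bool(env.get("DISPLAY") or env.get("WAYLAND_DISPLAY"))


if not has_display():
    print("No DISPLAY or WAYLAND_DISPLAY set; preview windows will likely fail here.")
```

An SSH session with X forwarding also sets `DISPLAY`, so a positive result does not guarantee that the window opens on the board's own screen.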

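Streaming with OpenCV + GStreamer

Note: this guide uses the global Python environment. To stream through GStreamer, install OpenCV with apt rather than pip, because the pip wheels are typically built without GStreamer support (if you already installed it with pip, uninstall it first; see step 1).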
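To see which OpenCV the interpreter would pick up, the module can be located without importing it. This is a rough sketch: the path rule below is an assumption about typical Debian/Ubuntu layouts (apt packages under `/usr/lib/`, pip installs under `/usr/local/` or the home directory), and both helper names are hypothetical.

```python
import importlib.util


def find_module_path(name):
    """Locate a module's file without importing it; None if not installed."""
    spec = importlib.util.find_spec(name)
    return spec.origin if spec else None


def classify_module_origin(path):
    """Guess how a module was installed from its path (heuristic only)."""
    if path is None:
        return "not installed"
    if path.startswith("/usr/lib/"):
        return "system package (apt)"
    return "pip or other"


path = find_module_path("cv2")
print("cv2 path:", path, "->", classify_module_origin(path))
```

If the result is "pip or other", the GStreamer check in step 3 is the authoritative test; the path is only a hint.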

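Goals:

- The CSI camera can capture frames

- OpenCV can read frames through GStreamer

- OpenCV + GStreamer can push an H264 stream over UDP

- The project backend script serve_api.sh serves the live video and inference endpoints correctly

This guide covers only one approach throughout: CSI (nvarguscamerasrc).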

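1. Install the environment (global Python, no .venv)

bash

sudo apt update
sudo apt install -y \
  python3 python3-pip python3-dev \
  python3-opencv python3-numpy \
  gstreamer1.0-tools \
  gstreamer1.0-plugins-base \
  gstreamer1.0-plugins-good \
  gstreamer1.0-plugins-bad \
  gstreamer1.0-plugins-ugly \
  gstreamer1.0-libav \
  gstreamer1.0-alsa \
  gstreamer1.0-gl

gstreamer1.0-plugins-ugly is included because it provides the x264enc element used in step 5.

On Jetson, install or repair the CSI-related components: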

bash

sudo apt install --reinstall -y nvidia-l4t-gstreamer
sudo systemctl restart nvargus-daemon

If you previously installed a pip build of OpenCV, remove it first so that it does not shadow the system OpenCV:

bash

python3 -m pip uninstall -y opencv-python opencv-contrib-python opencv-python-headless

2. Verify CSI and GStreamer first (without OpenCV)

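Check the Argus service and the plugin:

bash

sudo systemctl status nvargus-daemon --no-pager
gst-inspect-1.0 nvarguscamerasrc

Run a minimal capture pipeline (it succeeds if it keeps running steadily; press Ctrl+C to stop):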

bash

gst-launch-1.0 -e nvarguscamerasrc sensor-id=0 ! \
  "video/x-raw(memory:NVMM),width=1280,height=720,framerate=30/1" ! \
  queue ! nvvidconv ! queue ! fakesink

3. Verify that OpenCV can use GStreamer

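bash

python3 - <<'PY'
import re
import cv2

print("OpenCV:", cv2.__version__)
# The build summary pads the value with spaces, so match it with a regex
# instead of the literal string "GStreamer: YES".
has_gst = re.search(r"GStreamer:\s*YES", cv2.getBuildInformation())
print("GStreamer support:", "YES" if has_gst else "NO/UNKNOWN")
PY

The output must show YES; otherwise the later cv2.CAP_GSTREAMER calls will fail.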
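If the backend should perform this check at startup, the parsing can live in a small function that is testable without a camera. The sketch below assumes the usual `Name:   value` layout of OpenCV's build summary. The `sample` text is illustrative, not output captured from a real board, and both function names are hypothetical.

```python
import re


def gstreamer_status(build_info):
    """Return the text after 'GStreamer:' in the build summary, or None."""
    match = re.search(r"^\s*GStreamer:\s*(.+?)\s*$", build_info, re.MULTILINE)
    return match.group(1) if match else None


def has_gstreamer(build_info):
    """True only when the GStreamer entry starts with YES."""
    status = gstreamer_status(build_info)
    return bool(status) and status.upper().startswith("YES")


# Illustrative excerpt in the style of cv2.getBuildInformation()
sample = """
  Video I/O:
    FFMPEG:                      YES
    GStreamer:                   YES (1.20.3)
"""
print("GStreamer entry:", gstreamer_status(sample))
```

On the device, the call would be `has_gstreamer(cv2.getBuildInformation())`.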

4. Read CSI frames with OpenCV (capture pipeline)

bash

python3 - <<'PY'
import cv2

cap_str = (
    "nvarguscamerasrc sensor-id=0 ! "
    "video/x-raw(memory:NVMM),width=1280,height=720,framerate=30/1 ! "
    "nvvidconv ! video/x-raw,format=BGRx ! "
    "videoconvert ! video/x-raw,format=BGR ! "
    "appsink drop=true max-buffers=1"
)

cap = cv2.VideoCapture(cap_str, cv2.CAP_GSTREAMER)
if not cap.isOpened():
    raise SystemExit("OpenCV failed to open the CSI camera")

# Try to read 120 frames and count the successful reads
ok_count = 0
for _ in range(120):
    ok, _frame = cap.read()
    if ok:
        ok_count += 1

cap.release()
print("read ok frames:", ok_count)
PY

5. Stream with OpenCV + GStreamer (UDP/H264)

Run the sender (it streams to port 5600 on the local machine):

bash

python3 - <<'PY'
import cv2

width, height, fps = 1280, 720, 30
host, port = "127.0.0.1", 5600

# Capture: CSI camera -> BGR frames for OpenCV
cap_pipeline = (
    "nvarguscamerasrc sensor-id=0 ! "
    f"video/x-raw(memory:NVMM),width={width},height={height},framerate={fps}/1 ! "
    "nvvidconv ! video/x-raw,format=BGRx ! "
    "videoconvert ! video/x-raw,format=BGR ! "
    "appsink drop=true max-buffers=1"
)

# Push: BGR frames -> H.264 (software x264enc) -> RTP over UDP
push_pipeline = (
    "appsrc ! "
    f"video/x-raw,format=BGR,width={width},height={height},framerate={fps}/1 ! "
    "videoconvert ! "
    "x264enc tune=zerolatency speed-preset=ultrafast bitrate=2000 key-int-max=30 ! "
    "h264parse ! rtph264pay config-interval=1 pt=96 ! "
    f"udpsink host={host} port={port} sync=false async=false"
)

cap = cv2.VideoCapture(cap_pipeline, cv2.CAP_GSTREAMER)
wr = cv2.VideoWriter(push_pipeline, cv2.CAP_GSTREAMER, 0, float(fps), (width, height), True)
assert cap.isOpened(), "cap open failed"
assert wr.isOpened(), "writer open failed"

try:
    while True:
        ok, frame = cap.read()
        if not ok:
            break
        wr.write(frame)
finally:
    # Release both pipelines on exit, including Ctrl+C
    cap.release()
    wr.release()
PY

Open another terminal and receive the stream to verify it (this needs a display, so it fails in a remote SSH terminal):

bash

gst-launch-1.0 -v udpsrc port=5600 \
  caps="application/x-rtp, media=video, encoding-name=H264, payload=96" ! \
  rtph264depay ! avdec_h264 ! videoconvert ! autovideosink sync=false

To check only that the stream arrives (without depending on a local display or OpenGL), use the command below:

bash

gst-launch-1.0 -v udpsrc port=5600 \
  caps="application/x-rtp, media=video, encoding-name=H264, payload=96" ! \
  rtph264depay ! avdec_h264 ! fakesink sync=false

If fakesink works but autovideosink crashes, the problem is usually in the display sink, not in the streaming pipeline.
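The two receiver commands differ only in their final sink, so a script that runs in both desktop and headless sessions can build the pipeline from one template. This is a sketch under that assumption: `receiver_pipeline` is a hypothetical helper, and the caps string is the one from the commands above with the spaces removed.

```python
RTP_CAPS = "application/x-rtp,media=video,encoding-name=H264,payload=96"


def receiver_pipeline(port=5600, show=True):
    """Return a gst-launch-1.0 pipeline description for the H.264 RTP stream."""
    # With a display: convert and render. Headless: decode and discard.
    tail = "videoconvert ! autovideosink sync=false" if show else "fakesink sync=false"
    return (
        f'udpsrc port={port} caps="{RTP_CAPS}" ! '
        f"rtph264depay ! avdec_h264 ! {tail}"
    )


for show in (True, False):
    print("gst-launch-1.0 -v", receiver_pipeline(show=show))
```

Running the headless variant first separates streaming problems from display problems, which is the same diagnosis order described above.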

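6. Connect to the backend

Combine three pieces (the camera capture function, the HTTP endpoints, and the startup script) and you can reuse this CSI approach in your own project.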

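6.1 Suggested minimal backend API

Provide at least two endpoints:

- GET /api/health: service health check

- GET /api/camera/mjpeg: outputs a multipart MJPEG stream (a browser can display it directly with <img src=...>)

Optionally also add:

- GET /api/live-result: captures a fixed number of frames, runs the algorithm once, and returns the result as JSON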
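For the MJPEG endpoint, the response body is a sequence of JPEG images separated by a boundary line, sent with the header `Content-Type: multipart/x-mixed-replace; boundary=frame`. The sketch below shows only that framing in plain Python. The boundary name `frame` and both function names are assumptions for illustration.

```python
def mjpeg_part(jpeg_bytes, boundary="frame"):
    """Wrap one JPEG image as a single part of a multipart/x-mixed-replace body."""
    header = (
        f"--{boundary}\r\n"
        "Content-Type: image/jpeg\r\n"
        f"Content-Length: {len(jpeg_bytes)}\r\n\r\n"
    ).encode("ascii")
    return header + jpeg_bytes + b"\r\n"


def mjpeg_stream(jpeg_frames, boundary="frame"):
    """Yield one framed part per JPEG; suitable as a streaming response body."""
    for jpeg in jpeg_frames:
        yield mjpeg_part(jpeg, boundary)
```

In a FastAPI backend, one option is to pass such a generator to `StreamingResponse` with `media_type="multipart/x-mixed-replace; boundary=frame"`, encoding each frame beforehand with `cv2.imencode(".jpg", frame)`.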

6.2 Core camera capture code on the Python side (reusable as-is)

python

import cv2


def csi_pipeline(sensor_id=0, w=1280, h=720, fps=30):
    """Build the GStreamer capture pipeline string for a CSI camera."""
    return (
        f"nvarguscamerasrc sensor-id={sensor_id} ! "
        f"video/x-raw(memory:NVMM),width={w},height={h},framerate={fps}/1 ! "
        "nvvidconv ! video/x-raw,format=BGRx ! "
        "videoconvert ! video/x-raw,format=BGR ! "
        "appsink drop=true max-buffers=1"
    )


def open_csi():
    """Return an opened capture object; read frames afterwards with cap.read()."""
    cap = cv2.VideoCapture(csi_pipeline(), cv2.CAP_GSTREAMER)
    if not cap.isOpened():
        raise RuntimeError("Cannot open the CSI camera")
    return cap

6.3 serve_api.sh startup script template (works on Jetson)

Save the following as serve_api.sh in your project root:

bash

#!/usr/bin/env bash
set -euo pipefail

SCRIPT_DIR="$(cd "$(dirname "${BASH_SOURCE[0]}")" && pwd)"
cd "$SCRIPT_DIR"

# 1) Basic environment (global Python)
PYTHON="${PYTHON:-python3}"
PORT="${PORT:-8000}"

# 2) Common CUDA / Jetson paths (makes torch / triton more reliable)
# Prepend a directory to a path-like variable unless it is already present.
prepend_env_path() {
  local var_name="$1"
  local val="$2"
  local old="${!var_name:-}"
  case ":$old:" in
    *":$val:"*) ;;
    *) export "${var_name}=${val}${old:+:$old}" ;;
  esac
}

prepend_env_path LD_LIBRARY_PATH "/usr/local/cuda/targets/aarch64-linux/lib"
prepend_env_path PATH "/usr/local/cuda/bin"

if [ -f "/usr/local/cuda/include/cuda.h" ]; then
  export CUDA_HOME="/usr/local/cuda"
  prepend_env_path CPATH "/usr/local/cuda/include"
  prepend_env_path C_INCLUDE_PATH "/usr/local/cuda/include"
fi

if [ -x "/usr/local/cuda/bin/ptxas" ]; then
  export TRITON_PTXAS_PATH="/usr/local/cuda/bin/ptxas"
fi

# 3) CSI parameters (override as needed)
export MOCK_RPPG_CSI_SENSOR_ID="${MOCK_RPPG_CSI_SENSOR_ID:-0}"
export MOCK_RPPG_CSI_CAPTURE_WIDTH="${MOCK_RPPG_CSI_CAPTURE_WIDTH:-1920}"
export MOCK_RPPG_CSI_CAPTURE_HEIGHT="${MOCK_RPPG_CSI_CAPTURE_HEIGHT:-1080}"
export MOCK_RPPG_CSI_DISPLAY_WIDTH="${MOCK_RPPG_CSI_DISPLAY_WIDTH:-960}"
export MOCK_RPPG_CSI_DISPLAY_HEIGHT="${MOCK_RPPG_CSI_DISPLAY_HEIGHT:-540}"
export MOCK_RPPG_CSI_FRAMERATE="${MOCK_RPPG_CSI_FRAMERATE:-30}"

# 4) Start FastAPI (change your_backend.app:app to your own entry point)
exec "$PYTHON" -m uvicorn your_backend.app:app --host 0.0.0.0 --port "$PORT"

Make the script executable and start it:

bash

chmod +x ./serve_api.sh
bash ./serve_api.sh

6.4 Verify that the backend is really connected to CSI

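- Open in a browser: http://127.0.0.1:8000/api/health

- Open in a browser: http://127.0.0.1:8000/api/camera/mjpeg

- Or, from a browser on another computer on the same network segment, access it with IP:port

- If live-result is implemented, also verify the output of the algorithm endpoint

Print the following in the logs:

- The GStreamer pipeline string currently in use

- The result of cap.isOpened()

- The number of frames read per second (FPS)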
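The three log items can be produced with a small helper around the read loop. This is a minimal sketch, not the project's actual logging code: the logger name `csi`, `FpsCounter`, and `log_capture_state` are all hypothetical, and the clock is injectable only so the counter can be tested without a camera.

```python
import logging
import time

log = logging.getLogger("csi")


class FpsCounter:
    """Count frames and report the rate roughly once per interval."""

    def __init__(self, interval=1.0, clock=time.monotonic):
        self.interval = interval
        self.clock = clock
        self.start = clock()
        self.frames = 0

    def tick(self):
        """Record one frame; return FPS when an interval has elapsed, else None."""
        self.frames += 1
        elapsed = self.clock() - self.start
        if elapsed < self.interval:
            return None
        fps = self.frames / elapsed
        # Start a new measurement window.
        self.start, self.frames = self.clock(), 0
        return fps


def log_capture_state(pipeline, opened):
    """Log the pipeline string and whether the capture opened."""
    log.info("GStreamer pipeline: %s", pipeline)
    log.info("cap.isOpened(): %s", opened)
```

In the capture loop, call `tick()` after each successful `cap.read()` and log the value whenever it is not `None`. An FPS figure well below the configured frame rate points at the capture or conversion stage rather than at the HTTP layer.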