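High-Availability Architecture

This article uses kubeadm to build a highly available k8s cluster. High availability here mainly covers apiserver, etcd, controller-manager, and scheduler, and takes the following forms:

- apiserver is load-balanced with haproxy + keepalived, and multiple apiserver nodes serve requests at the same time;

- etcd relies on the high-availability solution provided within k8s; with the three etcd members used here, the cluster tolerates at most one etcd failure;

- controller-manager and scheduler use leader election: when several instances run at once, one is elected leader and does the work while the other two stay on standby.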
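The "at most one failure" figure follows from majority quorum: an etcd cluster of n members needs floor(n/2)+1 of them to agree, so it can lose the remainder. A minimal sketch of that arithmetic, assuming the three-master layout used in this article (the function names are illustrative and not part of any tool):

```shell
#!/bin/sh
# Quorum arithmetic for an etcd cluster (majority voting).
# quorum = floor(n/2) + 1; tolerated failures = n - quorum.

etcd_quorum() {
    echo $(( $1 / 2 + 1 ))
}

etcd_fault_tolerance() {
    echo $(( $1 - ($1 / 2 + 1) ))
}

# Print the numbers for a few common cluster sizes.
for members in 1 3 5; do
    echo "members=$members quorum=$(etcd_quorum "$members") tolerated_failures=$(etcd_fault_tolerance "$members")"
done
```

For three members this gives a quorum of 2 and one tolerated failure, which is why an odd number of masters is the usual choice.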

Cluster Planning

| Hostname | OS version | Host IP | Notes |
|----------------------|------------|---------------|---------------------------|
| k8s-master01 | CentOS 7.6 | 192.168.0.101 | Master01/registry |
| k8s-master02 | CentOS 7.6 | 192.168.0.102 | Master02 |
| k8s-master03 | CentOS 7.6 | 192.168.0.103 | Master03 |
| k8s-node01 | CentOS 7.6 | 192.168.0.111 | Node01 |
| k8s-node02 | CentOS 7.6 | 192.168.0.112 | Node02 |
| k8s-node03 | CentOS 7.6 | 192.168.0.113 | Node03 |
| Apiserver-keepalived | CentOS 7.6 | 192.168.0.100 | HA-apiserver (virtual IP) |

Server Initialization

#Perform these steps on all servers, adjusted to actual needs

Edit the hosts file

192.168.0.101 k8s-master01

192.168.0.102 k8s-master02

192.168.0.103 k8s-master03

192.168.0.111 k8s-node01

192.168.0.112 k8s-node02

192.168.0.113 k8s-node03
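Because the same entries must be added on every server, it can help to append them in a way that is safe to re-run. The following is only a sketch: the `HOSTS_FILE` variable and the `add_host` helper are hypothetical names introduced here, and the script writes to a scratch file unless `HOSTS_FILE` is pointed at `/etc/hosts`.

```shell
#!/bin/sh
# Append the cluster entries to a hosts file, skipping names already present.
# HOSTS_FILE defaults to a scratch file; set HOSTS_FILE=/etc/hosts on a real server.
HOSTS_FILE="${HOSTS_FILE:-$(mktemp)}"

add_host() {
    ip="$1"
    name="$2"
    # -w matches the hostname as a whole word, so an existing entry is not duplicated
    if ! grep -qw -- "$name" "$HOSTS_FILE" 2>/dev/null; then
        printf '%s %s\n' "$ip" "$name" >> "$HOSTS_FILE"
    fi
}

while read -r ip name; do
    add_host "$ip" "$name"
done <<'EOF'
192.168.0.101 k8s-master01
192.168.0.102 k8s-master02
192.168.0.103 k8s-master03
192.168.0.111 k8s-node01
192.168.0.112 k8s-node02
192.168.0.113 k8s-node03
EOF

cat "$HOSTS_FILE"
```

Running the script a second time leaves the file unchanged, which avoids duplicate lines when the initialization steps are repeated.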

Set the hostname

hostnamectl set-hostname k8s-master01

hostname -b k8s-master01

#Change the remaining servers in the same way

Disable the NetworkManager service

[root@k8s-master01 ~]# systemctl stop NetworkManager

[root@k8s-master01 ~]# systemctl disable NetworkManager

Removed symlink /etc/systemd/system/multi-user.target.wants/NetworkManager.service.

Removed symlink /etc/systemd/system/dbus-org.freedesktop.nm-dispatcher.service.

Removed symlink /etc/systemd/system/network-online.target.wants/NetworkManager-wait-online.service.

Set the server's IP address

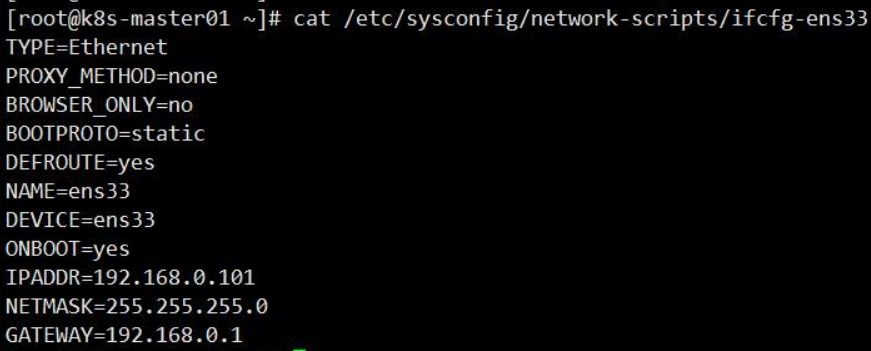

[root@k8s-master01 ~]# sed -i '/IP/d' /etc/sysconfig/network-scripts/ifcfg-ens33

[root@k8s-master01 ~]# sed -i 's/BOOTPROTO=dhcp/BOOTPROTO=static/g' /etc/sysconfig/network-scripts/ifcfg-ens33

[root@k8s-master01 ~]# sed -i 's/ONBOOT=no/ONBOOT=yes/g' /etc/sysconfig/network-scripts/ifcfg-ens33

[root@k8s-master01 ~]# sed -i '/UUID/d' /etc/sysconfig/network-scripts/ifcfg-ens33

[root@k8s-master01 ~]# echo -e "IPADDR=192.168.0.101\nNETMASK=255.255.255.0\nGATEWAY=192.168.0.1" >> /etc/sysconfig/network-scripts/ifcfg-ens33

[root@k8s-master01 ~]# systemctl restart network

Install dependency packages

[root@k8s-master01 ~]# yum install -y conntrack ntpdate ntp ipvsadm ipset jq iptables curl sysstat libseccomp wget vim net-tools git

Configure the firewall and flush its rules

[root@k8s-master01 ~]# systemctl stop firewalld && systemctl disable firewalld

[root@k8s-master01 ~]# yum -y install iptables-services && systemctl start iptables && systemctl enable iptables && iptables -F && service iptables save

Disable swap and SELinux

[root@k8s-master02 ~]# swapoff -a && sed -i '/ swap / s/^\(.*\)$/#\1/g' /etc/fstab
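Since the `sed` expression edits `/etc/fstab` in place, it may be worth trying it on a scratch copy first. The sketch below uses a made-up two-line fstab and a hypothetical helper, `disable_swap_entries`, that only comments out lines not already commented, so running it twice does not stack `#` characters.

```shell
#!/bin/sh
# Try the swap edit on a scratch copy of fstab (sample content is illustrative).
FSTAB_COPY="$(mktemp)"
cat > "$FSTAB_COPY" <<'EOF'
/dev/mapper/centos-root /     xfs  defaults 0 0
/dev/mapper/centos-swap swap  swap defaults 0 0
EOF

# Comment out uncommented lines containing " swap "; commented lines are skipped.
disable_swap_entries() {
    sed '/^[^#].* swap / s/^/#/' "$1" > "$1.new" && mv "$1.new" "$1"
}

disable_swap_entries "$FSTAB_COPY"
disable_swap_entries "$FSTAB_COPY"   # second run is a no-op
cat "$FSTAB_COPY"
```

On a real server the helper would be called with `/etc/fstab`; `swapoff -a` is still needed to turn swap off for the running system.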

[root@k8s-master01 ~]# setenforce 0 && sed -i 's/^SELINUX=.*/SELINUX=disabled/' /etc/selinux/config

Passwordless SSH between servers

#Generate the key pair

[root@k8s-master01 ~]# ssh-keygen -t rsa

#Copy the public key to the other servers

[root@k8s-master01 ~]# ssh-copy-id -i /root/.ssh/id_rsa.pub root@192.168.0.102

Install Docker dependencies

[root@k8s-master01 ~]# yum install -y yum-utils device-mapper-persistent-data lvm2

Add the Docker repository

[root@k8s-master01 ~]# yum-config-manager --add-repo http://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

Install Docker

[root@k8s-master01 ~]# yum update -y && yum install -y docker-ce

Configure the daemon configuration file

[root@k8s-master01 ~]# mkdir -p /etc/docker

[root@k8s-master01 ~]# mkdir -p /etc/systemd/system/docker.service.d

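Pay particular attention to the cgroup driver setting (native.cgroupdriver=systemd) in exec-opts. The insecure-registries entry is for the private image registry used later. JSON does not allow comments, so these notes must not appear inside the file itself.

[root@k8s-master01 ~]# cat > /etc/docker/daemon.json <<EOF

> {

> "exec-opts": ["native.cgroupdriver=systemd"],

> "log-driver": "json-file",

> "log-opts": {

> "max-size": "100m"

> },

> "insecure-registries": ["http://registry.k8s-test.com"]

> }

> EOF

Start docker and enable it at boot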

[root@k8s-master01 ~]# systemctl daemon-reload && systemctl restart docker && systemctl enable docker

Install kubelet

[root@k8s-master01 ~]# cat <<EOF > /etc/yum.repos.d/kubernetes.repo

> [kubernetes]

> name=Kubernetes

> baseurl=http://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64

> enabled=1

> gpgcheck=0

> repo_gpgcheck=0

> gpgkey=http://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg

> http://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg

> EOF

[root@k8s-master01 ~]# yum install -y kubelet-1.16.4 kubeadm-1.16.4 kubectl-1.16.4

Start kubelet and enable it at boot

[root@k8s-master01 ~]# systemctl enable kubelet && systemctl start kubelet

Install keepalived

[root@k8s-master01 ~]# yum -y install keepalived

Edit the configuration file:

The configuration is identical on master01, master02, and master03; only router_id must be changed on each node.
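Because only `router_id` differs between the three masters, the file could be generated from a single template rather than edited by hand on each node. This is a sketch under that assumption; `render_keepalived_conf` is a hypothetical helper, and the interface name, virtual_router_id, priority, password, and virtual IP are the values used in this article.

```shell
#!/bin/sh
# Print a keepalived.conf for the given router_id; everything else is fixed.
render_keepalived_conf() {
    router_id="$1"
    cat <<EOF
! Configuration File for keepalived
global_defs {
    router_id ${router_id}
}
vrrp_instance VI_1 {
    state BACKUP
    interface ens33
    virtual_router_id 5
    priority 90
    advert_int 1
    authentication {
        auth_type PASS
        auth_pass 1111
    }
    virtual_ipaddress {
        192.168.0.100
    }
}
EOF
}

# Show what master02 would receive.
render_keepalived_conf master02
```

On each master the output would be redirected to `/etc/keepalived/keepalived.conf`, for example `render_keepalived_conf master02 > /etc/keepalived/keepalived.conf` on k8s-master02.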

[root@k8s-master01 ~]# more /etc/keepa hived/keepalhived.Conf

! Configuration File for keepalived

global_ defs{

router_id master01

}

vrrp_instance VI_1 {

state BACKUP

interface ens33

virtual_router_id 5

priority 90

advert_int 1

authentication {

auth type PASS

auth pass 1111

}

virtual ipaddress {

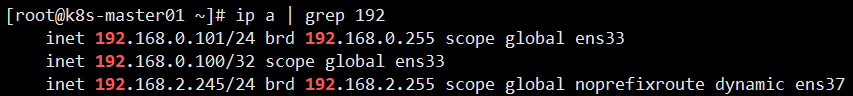

192.168.0.100

}

}启动 keepalived

[root@k8s-master02 ~]# systemctl enable keepalived && systemctl start keepalived

Verify

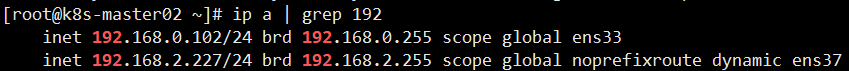

master01

master02

master03
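One way to see which master currently holds the virtual IP is to look for 192.168.0.100 in the address list of the keepalived interface. The sketch below assumes the `ens33` interface from the configuration above; `holds_vip` is a hypothetical helper that reads `ip -4 addr show` style output on standard input, and the sample text is made up for illustration.

```shell
#!/bin/sh
VIP="192.168.0.100"

# Succeeds if the address list on stdin contains the virtual IP.
holds_vip() {
    grep -qF "inet ${VIP}/"
}

# Illustrative sample of what `ip -4 addr show ens33` might print on the active master.
sample='    inet 192.168.0.101/24 brd 192.168.0.255 scope global ens33
    inet 192.168.0.100/32 scope global ens33'

if printf '%s\n' "$sample" | holds_vip; then
    echo "this node holds the VIP"
else
    echo "this node does not hold the VIP"
fi
```

On a real master the check would be `ip -4 addr show ens33 | holds_vip`. Only one of the three masters should report the VIP at any time, and stopping keepalived on that node should move it to another.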

k8s安装

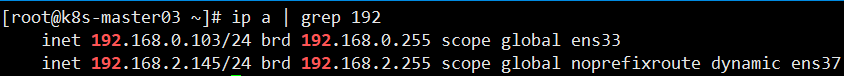

下载镜像

[root@k8s-master01 tools]# sh get_images.sh

部署镜像中心

[root@k8s-master01 images]# docker images | grep k8s-registry.com/registry

[root@k8s-master01 images]# docker run -d -p 80:5000 -v /home/registry:/var/lib/registry --restart=always --name registry k8s-registry.com/registry:1.0将镜像 push 到镜像中心

[root@k8s-master01 images]# docker images | awk '{print $1":"$2}' | xargs -i docker push {}创建 kubeadm-config.yaml 的配置文件

[root@k8s-master01 install-master]# more kubeadm-config.yaml

apiVersion: kubeadm.k8s.io/v1beta2

kind: ClusterConfiguration

kubernetesVersion: v1.16.4

apiServer:

  certSANs:

  - k8s-master01

  - k8s-master02

  - k8s-master03

  - k8s-node01

  - k8s-node02

  - k8s-node03

  - 192.168.0.100

  - 192.168.0.101

  - 192.168.0.102

  - 192.168.0.103

  - 192.168.0.111

  - 192.168.0.112

  - 192.168.0.113

controlPlaneEndpoint: "192.168.0.100:6443"

networking:

  podSubnet: "10.244.0.0/16"

Initialize the master

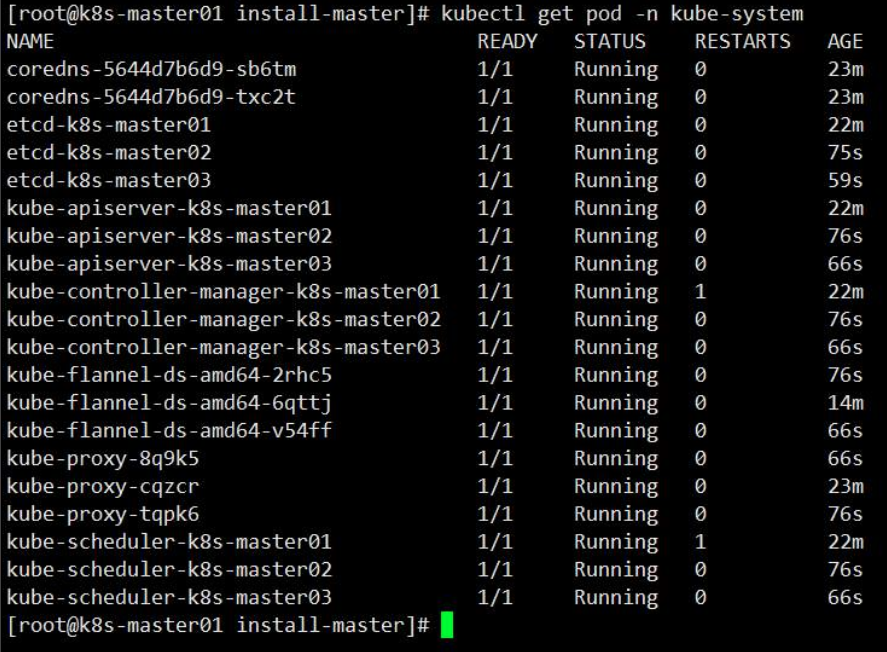

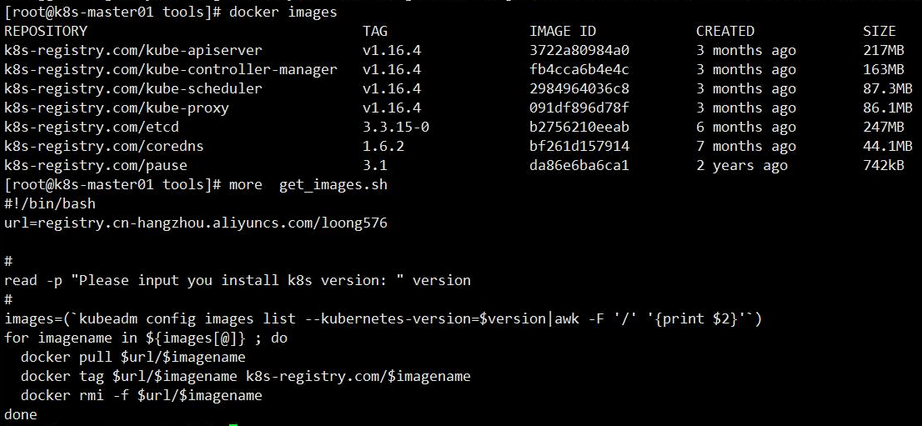

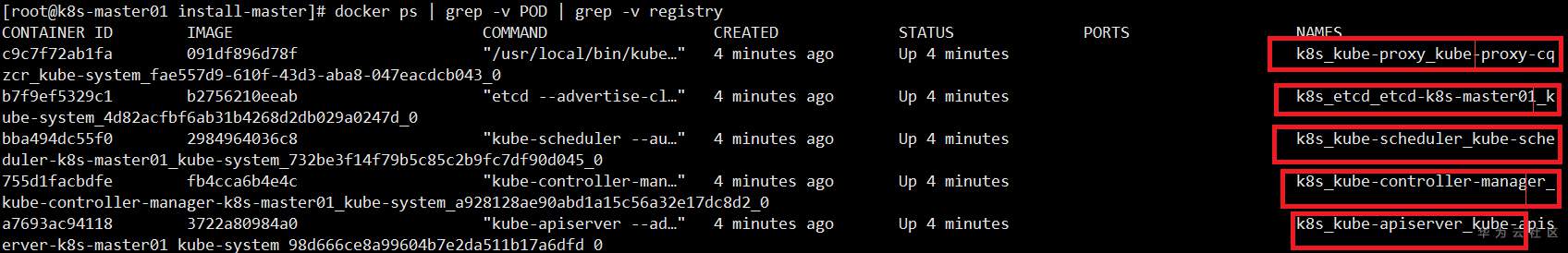

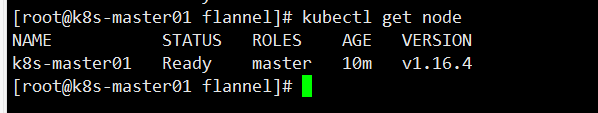

[root@k8s-master01 install-master]# kubeadm init --config=kubeadm-config.yaml

Verify the installation

Create the flannel network

Download the flannel YAML file

[root@k8s-master01 flannel]# wget https://raw.githubusercontent.com/coreos/flannel/2140ac876ef134e0ed5af15c65e414cf26827915/Documentation/kube-flannel.yml

Create the flannel network

[root@k8s-master01 flannel]# kubectl apply -f kube-flannel.yml

Verify the installation
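A simple check after applying the manifest is to confirm that every pod in `kube-system` reaches the Running state. The sketch below filters `kubectl get pods -n kube-system --no-headers` output for pods that are not yet Running; `pods_not_running` is a hypothetical helper, and the pod names in the sample are invented for illustration.

```shell
#!/bin/sh
# Reads `kubectl get pods -n kube-system --no-headers` output on stdin
# (columns: NAME READY STATUS RESTARTS AGE) and prints the names of pods
# whose STATUS is neither Running nor Completed.
pods_not_running() {
    awk '$3 != "Running" && $3 != "Completed" { print $1 }'
}

# Illustrative sample; real pod names will differ.
sample='coredns-example-1          0/1   Pending   0   2m
kube-flannel-ds-example-1  1/1   Running   0   1m'

printf '%s\n' "$sample" | pods_not_running
```

On the master the helper would be fed real data with `kubectl get pods -n kube-system --no-headers | pods_not_running`; empty output suggests that the network plugin and the core components have started.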

Add master nodes to the cluster

Distribute certificates from master01 to the other two masters

[root@k8s-master01 tools]# cat cert-amin-master.sh
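The contents of cert-amin-master.sh are not reproduced here. As a rough idea of what such a script might do, the sketch below lists the files that the kubeadm high-availability documentation names for manual certificate distribution and copies them over SSH. The helper names and the `/root/pki` staging directory are assumptions for illustration and are not taken from the author's script.

```shell
#!/bin/sh
# Files to share with additional control-plane nodes,
# relative to /etc/kubernetes/pki on the first master.
ha_cert_files() {
    cat <<'EOF'
ca.crt
ca.key
sa.key
sa.pub
front-proxy-ca.crt
front-proxy-ca.key
etcd/ca.crt
etcd/ca.key
EOF
}

# Hypothetical copy step: stage the files under /root/pki on the target master.
copy_certs_to() {
    target="$1"
    ssh "root@${target}" "mkdir -p /root/pki/etcd"
    for f in $(ha_cert_files); do
        scp "/etc/kubernetes/pki/${f}" "root@${target}:/root/pki/${f}"
    done
}

# Only list the files here; call copy_certs_to 192.168.0.102 on a real master.
ha_cert_files
```

On the receiving master, the staged files would then need to be moved into `/etc/kubernetes/pki` (keeping the `etcd/` subdirectory) before running `kubeadm join`.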

[root@k8s-master01 tools]# sh cert-amin-master.sh

On the other nodes, place the certificates in the expected directory

[root@k8s-master02 ~]# sh cert-other-master.sh

[root@k8s-master02 ~]# more cert-other-master.sh

Join the cluster

kubeadm join 192.168.0.100:6443 --token lllil4.2wm1u6ocuxmysn7l \

--discovery-token-ca-cert-hash sha256:fa5075ba896b8dbfdaf19125dee28817fdd349b7c4cea9ab243ad4224eb90892 \

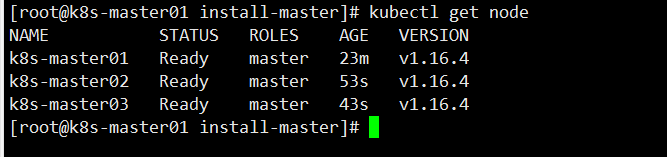

--control-plane查看布置的节点
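After the join completes, `kubectl get nodes` should list all three masters. The sketch below flags any node whose status is not exactly `Ready`; `nodes_not_ready` is a hypothetical helper, and the sample rows are illustrative rather than captured output.

```shell
#!/bin/sh
# Reads `kubectl get nodes --no-headers` output on stdin
# (columns: NAME STATUS ROLES AGE VERSION) and prints nodes whose STATUS
# is not exactly "Ready" (so "Ready,SchedulingDisabled" is also reported).
nodes_not_ready() {
    awk '$2 != "Ready" { print $1 }'
}

# Illustrative sample rows.
sample='k8s-master01   Ready      master   30m   v1.16.4
k8s-master02   NotReady   master   1m    v1.16.4'

printf '%s\n' "$sample" | nodes_not_ready
```

On a master the helper would be used as `kubectl get nodes --no-headers | nodes_not_ready`. A freshly joined node may show NotReady for a short while until its network pod starts.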