parquet发音:美 pɑrˈkeɪ 镶木地板;拼花木地板

理解Parquet文件和Arrow格式:从Hugging Face数据集的角度出发

引言

在机器学习和大数据处理中,数据的存储和传输格式对于性能至关重要。两种广泛使用的格式是 Parquet 和 Arrow。它们在数据存储、传输和处理上都有各自的优势,尤其是在大规模数据集的使用中尤为重要。

在这篇博客中,我们将探讨 Parquet 和 Arrow 格式的基本概念、它们的优势以及它们在Hugging Face(HF)数据集中的应用。我们还将结合实际示例,展示如何使用这些格式,并解释为什么在HF上下载的Parquet格式数据集会变成Arrow文件。

什么是Parquet格式?

Parquet 是一种开源的列式存储格式,专为大数据处理和分析任务设计。它是由Apache软件基金会开发的,并且是Hadoop生态系统的一部分。Parquet格式能够高效地存储结构化和半结构化数据,特别适合大规模数据集的存储和查询。

Parquet格式的特点

- 列式存储:数据以列而不是行的方式存储,这意味着只读取需要的列时,I/O效率大大提高,尤其是对于大数据集。

- 高效压缩:由于数据是按列存储的,类似类型的数据会被压缩在一起,从而减少存储空间。

- 支持复杂数据类型:Parquet能够支持嵌套的数据结构,如数组、映射等。

- 跨语言支持:Parquet支持多种编程语言,如Java、Python、C++等,适用于跨平台的数据处理。

为什么Hugging Face使用Parquet格式?

Hugging Face平台上提供的数据集通常使用Parquet格式进行存储,这是因为:

- 高效存储:Parquet的列式存储特性能够提高数据存储和读取效率。

- 大规模数据支持:Parquet在处理大规模数据时,能够节省大量的存储空间,同时提升数据处理速度。

- 与大数据工具兼容:Parquet文件与许多大数据处理框架(如Apache Spark、Apache Hive)兼容,因此适用于大规模数据处理任务。

什么是Arrow格式?

Arrow 是一个跨语言的数据交换格式,主要用于内存中的数据存储和数据传输。它由Apache Arrow项目开发,旨在优化数据的传输效率,尤其是在不同程序之间交换数据时。Arrow格式的特点包括:

- 列式内存格式:Arrow在内存中采用列式存储,能够提高大数据处理中的查询和计算效率。

- 零拷贝数据共享:Arrow支持零拷贝传输,允许多个进程或系统之间共享数据而无需复制数据,极大提高了性能。

- 跨平台支持:Arrow支持多种编程语言,包括Python、C++、Java等,适用于多种数据处理框架和大数据平台。

- 高效的内存布局:Arrow的内存布局设计优化了数据处理效率,特别是在并行计算和数据分析中具有显著的性能提升。

Arrow和Parquet的关系

- Parquet格式 是一种用于数据持久化存储的格式,而 Arrow格式 是一种高效的内存存储和传输格式。

- Parquet格式的文件通常是基于Arrow格式的数据结构来存储的。因此,Arrow和Parquet格式是密切相关的,尤其是在Hugging Face的

datasets库中,Parquet文件经常会被加载为Arrow格式的内存对象。

为什么在Hugging Face上下载的Parquet文件变成Arrow文件?

在Hugging Face上,你会发现许多数据集以Parquet格式存储。这里为什么会使用Parquet格式呢?我们已经讨论过它的存储优势,但你可能会注意到,当你通过datasets库下载这些数据集时,文件变成了Arrow格式。

这主要是因为 Hugging Face的datasets库 使用了 Arrow格式 作为其数据加载和处理的底层格式。尽管数据集存储为Parquet文件,但当你下载数据集时,datasets库会将这些Parquet文件解码成Arrow格式,并将数据加载到内存中。原因如下:

-

高效的内存操作 :Arrow格式是专为内存操作设计的,它能够在内存中高效地表示和操作数据,特别适合大规模数据处理。通过将数据加载为Arrow格式,

datasets库能够更快地进行数据操作和处理。 -

兼容性和跨平台支持:Arrow支持多种编程语言和数据框架,因此它能够确保Hugging Face平台上的数据集能够跨平台、高效地共享和处理。

-

零拷贝数据访问:Arrow支持零拷贝的数据访问,这意味着在加载数据时,避免了不必要的数据复制,从而加速了数据处理的速度。

示例代码:如何加载和使用Parquet和Arrow格式的数据

1. 使用Hugging Face datasets库加载Parquet格式的数据集

python

from datasets import load_dataset

# 加载一个Hugging Face上的Parquet格式数据集

dataset = load_dataset('your_dataset_name')

# 查看数据集的结构

print(dataset)通过上述代码,datasets库会自动处理文件格式的转换,并将Parquet文件转换为内存中的Arrow格式对象。

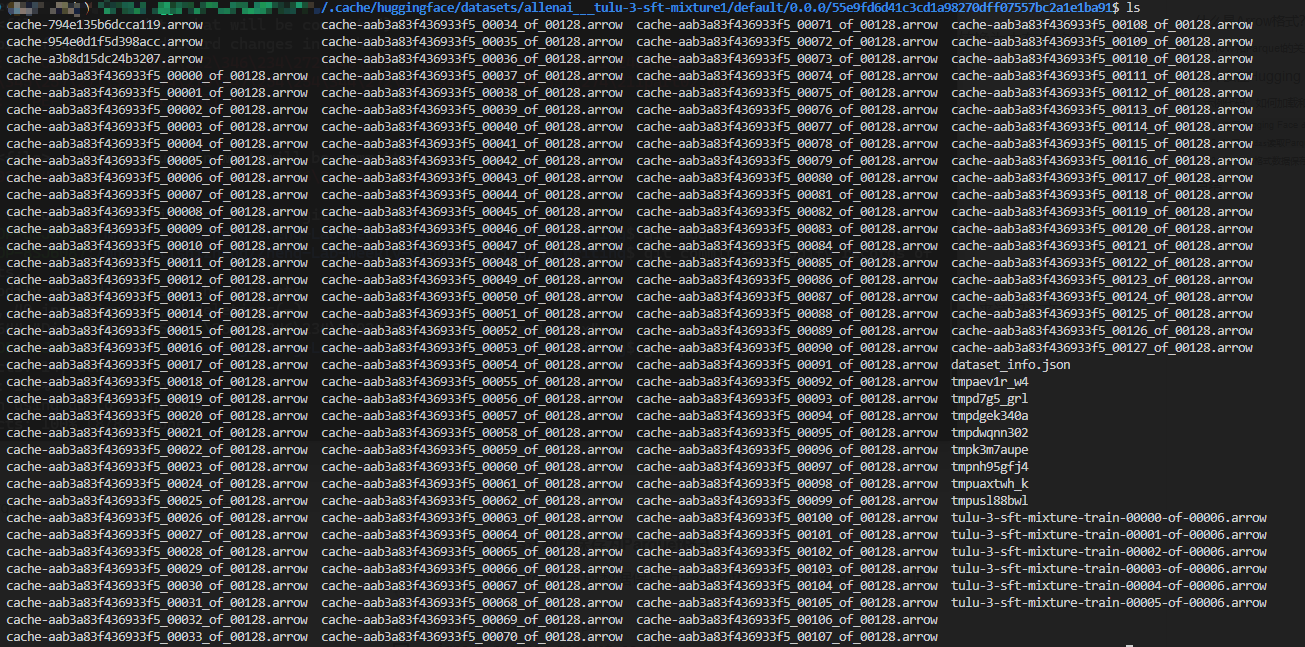

比如执行如下代码:

c

from datasets import Dataset, load_dataset, load_from_disk

# dataset = load_dataset("allenai/tulu-v2-sft-mixture")

dataset = load_dataset("allenai/tulu-3-sft-mixture")

dataset会得到如此多的arrow文件

2. 使用pandas读取Parquet文件

如果你直接从Hugging Face或其他地方下载了Parquet文件,可以使用pandas来读取该文件:

python

import pandas as pd

# 读取本地的Parquet文件

df = pd.read_parquet('your_file.parquet')

# 查看数据

print(df.head())3. 将Arrow格式数据保存为Parquet格式

如果你想将Arrow格式的数据保存为Parquet格式,可以使用pyarrow库:

python

import pyarrow as pa

import pyarrow.parquet as pq

# 假设arrow_table是一个Arrow格式的数据表

arrow_table = pa.Table.from_pandas(df)

# 保存为Parquet格式

pq.write_table(arrow_table, 'your_file.parquet')总结

在本文中,我们讨论了 Parquet格式 和 Arrow格式 的基本概念,并结合Hugging Face的实际应用案例,说明了为什么Hugging Face使用Parquet格式存储数据集,并将其加载为Arrow格式。我们还通过示例代码展示了如何加载、读取和转换这两种格式。

- Parquet格式 适用于高效的数据存储,特别是在大数据处理场景中,而 Arrow格式 则更适合高效的内存操作和跨平台数据交换。

- Hugging Face使用Parquet格式来存储数据集,同时利用Arrow格式来加载和处理数据,以确保高效的内存操作和跨平台兼容性。

通过理解这两种格式的区别和用途,我们可以更好地理解如何处理大规模数据集,以及如何利用Hugging Face平台来进行高效的机器学习和数据处理工作。

Understanding Parquet Files and Arrow Format: A Guide with Hugging Face Datasets

Introduction

In the world of machine learning and big data processing, the format in which data is stored and transferred plays a critical role in performance. Two widely used formats in this context are Parquet and Arrow. Both formats offer significant advantages in terms of data storage, transfer, and processing, especially when dealing with large-scale datasets.

In this blog post, we will explore the basic concepts of Parquet and Arrow formats, their advantages, and their usage in Hugging Face (HF) datasets. We will also provide practical examples, showing how to work with these formats, and explain why Parquet files downloaded from HF are converted into Arrow files.

What is the Parquet Format?

Parquet is an open-source columnar storage format designed for big data processing and analytics. It was developed by the Apache Software Foundation as part of the Hadoop ecosystem. Parquet is efficient for storing both structured and semi-structured data, making it ideal for large-scale datasets.

Key Features of Parquet Format

- Columnar Storage: Data is stored in columns rather than rows. This significantly improves I/O performance when only specific columns are needed, especially for large datasets.

- Efficient Compression: Similar types of data in a column are stored together, enabling better compression rates and reducing storage costs.

- Support for Complex Data Types: Parquet can handle nested data structures like arrays and maps.

- Cross-Language Support: Parquet is supported by various programming languages such as Java, Python, and C++, making it compatible with a wide range of data processing tools.

Why Does Hugging Face Use Parquet Format?

Hugging Face stores many datasets in the Parquet format for several reasons:

- Efficient Storage: Parquet's columnar storage format leads to more efficient storage and faster access to the data, especially when dealing with large datasets.

- Support for Large-Scale Data: Parquet is optimized for storing and querying large volumes of data, making it an ideal choice for machine learning and NLP tasks.

- Compatibility with Big Data Tools: Parquet files are compatible with big data frameworks like Apache Spark and Apache Hive, allowing for seamless integration into large-scale data processing pipelines.

What is Arrow Format?

Arrow is a cross-language data exchange format designed for in-memory data storage and data transfer. It was developed by the Apache Arrow project, aimed at optimizing the performance of data transfer between systems and processing frameworks.

Key Features of Arrow Format

- Columnar In-Memory Format: Similar to Parquet, Arrow uses a columnar storage format but is specifically designed for in-memory operations, enhancing performance in data processing tasks.

- Zero-Copy Data Sharing: Arrow enables zero-copy data sharing, which means that multiple processes or systems can share data without needing to copy it, drastically improving performance.

- Cross-Platform Support: Arrow supports multiple programming languages, including Python, C++, and Java, making it highly versatile across various data processing frameworks.

- Optimized Memory Layout: Arrow's memory layout is designed to optimize data processing, particularly in parallel computation and analytics.

The Relationship Between Arrow and Parquet

- Parquet is a format used for persistent storage of data, while Arrow is used for efficient in-memory storage and data transfer.

- Parquet files are often structured based on Arrow's data model, meaning that when you load a Parquet file into memory (like in Hugging Face), it is typically converted into an Arrow table.

Why Do Hugging Face Datasets Convert Parquet to Arrow?

While many datasets on Hugging Face are stored in Parquet format, when you download these datasets using the datasets library, the files are automatically converted into Arrow format for processing. This happens for several reasons:

-

Efficient Memory Operations: Arrow is designed for in-memory operations, making it much faster for data manipulation and processing than Parquet. Hugging Face uses Arrow for efficient handling of datasets once they are loaded into memory.

-

Cross-Platform and Framework Compatibility: Arrow is designed to be cross-platform and compatible with various data frameworks. This allows Hugging Face datasets to be processed across different environments seamlessly.

-

Zero-Copy Data Access: Arrow's zero-copy data sharing capability allows multiple processes or systems to access the same data without duplication, improving performance and reducing memory usage.

Example Code: How to Work with Parquet and Arrow Files

1. Loading a Parquet Dataset from Hugging Face

python

from datasets import load_dataset

# Load a dataset from Hugging Face (in Parquet format)

dataset = load_dataset('your_dataset_name')

# Check the structure of the dataset

print(dataset)With the datasets library, you can load Parquet datasets, which are internally converted to Arrow format for faster data processing.

2. Reading a Parquet File Using pandas

If you download a Parquet file from Hugging Face or another source, you can read it directly using pandas:

python

import pandas as pd

# Read a Parquet file locally

df = pd.read_parquet('your_file.parquet')

# View the first few rows

print(df.head())3. Saving Arrow Data as Parquet

If you want to save Arrow-format data back as a Parquet file, you can use the pyarrow library:

python

import pyarrow as pa

import pyarrow.parquet as pq

# Assume arrow_table is an Arrow table

arrow_table = pa.Table.from_pandas(df)

# Save as a Parquet file

pq.write_table(arrow_table, 'your_file.parquet')Summary

In this post, we've explored the Parquet and Arrow formats, explained their key features, and discussed why Hugging Face uses them for its datasets.

- Parquet is used for efficient storage of large datasets, especially in big data environments, while Arrow is optimized for high-speed in-memory operations and data transfer.

- Hugging Face stores datasets in Parquet format but uses Arrow for in-memory data manipulation to take advantage of its efficiency and cross-platform capabilities.

By understanding these formats and how they are used, you'll be better equipped to handle large-scale datasets in your own machine learning and data processing workflows.

后记

2024年11月29日12点12分于上海,在GPT4o辅助下完成。