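【YOLOv8 Improvement Series】:

YOLOv8 Improvement Series (1) ---- Replacing the Backbone with EfficientViT (CVPR2023)

YOLOv8 Improvement Series (2) ---- Replacing the Backbone with FasterNet

YOLOv8 Improvement Series (3) ---- Replacing the Backbone with ConvNeXt V2

YOLOv8 Improvement Series (4) ---- Modifying C2f: Replacing the Bottleneck in C2f with FasterNet's FasterBlock

YOLOv8 Improvement Series (5) ---- Replacing the Backbone with EfficientFormerV2

YOLOv8 Improvement Series (6) ---- Replacing the Backbone with VanillaNet

YOLOv8 Improvement Series (7) ---- Replacing the Backbone with LSKNet

YOLOv8 Improvement Series (8) ---- Replacing the Backbone with Swin Transformer

YOLOv8 Improvement Series (9) ---- Replacing the Backbone with RepViT

YOLOv8 Improvement Series (10) ---- Replacing the Backbone with UniRepLKNet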

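Contents

- [1. Introduction](#1. Introduction)
- [2. MobileNetV4 Architecture Design](#2. MobileNetV4 Architecture Design)
  - [1. Universal Inverted Bottleneck (UIB)](#1. Universal Inverted Bottleneck (UIB))
  - [2. Mobile MQA](#2. Mobile MQA)
  - [3. Optimized NAS Recipe](#3. Optimized NAS Recipe)
- [3. Experiments and Results](#3. Experiments and Results)
- [4. Key Conclusions](#4. Key Conclusions)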

💯 I. Introduction to MobileNetV4

- Paper title: "MobileNetV4: Universal Models for the Mobile Ecosystem"

- Paper link: https://arxiv.org/pdf/2404.10518

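1. Introduction

The paper presents MobileNetV4 (MNv4), the latest generation of Google's MobileNets: efficient neural network architectures designed for mobile devices. MNv4 introduces three main components: the Universal Inverted Bottleneck (UIB) search block, the Mobile MQA attention block, and an optimized neural architecture search (NAS) recipe. With these, it runs efficiently across a wide range of mobile hardware, including CPUs, DSPs, GPUs, and dedicated accelerators. MNv4 models keep accuracy high while markedly improving computational efficiency, which makes them usable on many kinds of mobile devices.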

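2. MobileNetV4 Architecture Design

Background

- Neural networks on mobile devices must balance accuracy against efficiency. Mobile devices have limited compute, so networks must be efficient enough to support real-time, interactive experiences. MNv4 targets this balance through new architectural building blocks and optimization techniques.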

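Method

1. Universal Inverted Bottleneck (UIB)

UIB is the core building block of MNv4. It merges four designs into one unified, flexible structure: the Inverted Bottleneck (IB), ConvNext, the Feed-Forward Network (FFN), and a new Extra Depthwise (ExtraDW) variant. UIB contains two optional depthwise convolutions. The first sits before the 1x1 expansion; the second sits between the expansion and the 1x1 projection. Switching each one on or off yields the four variants:

- IB: middle depthwise only.
- ConvNext: start depthwise only.
- ExtraDW: both.
- FFN: neither.

This gives the search freedom in how spatial and channel mixing are combined. It also lets the search enlarge the receptive field where useful, and it improves computational efficiency.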
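The following pure-Python sketch (no PyTorch needed) makes the four instantiations concrete. It maps the two optional depthwise kernel sizes of a UIB block to the variant that block reduces to. It uses the same `[inp, oup, start_dw_kernel_size, middle_dw_kernel_size, middle_dw_downsample, stride, expand_ratio]` layout as the `uib` specs in the MobileNetV4.py code later in this post, where a kernel size of 0 means the conv is skipped. The helper `uib_variant` is hypothetical; it follows the paper's description of UIB and is not an official API.

```python
from collections import Counter


def uib_variant(start_dw_kernel_size: int, middle_dw_kernel_size: int) -> str:
    """Name the UIB instantiation selected by the two optional depthwise convs.

    A kernel size of 0 means the corresponding depthwise conv is omitted.
    start DW: before the 1x1 expansion; middle DW: between expansion and projection.
    """
    has_start = start_dw_kernel_size > 0
    has_middle = middle_dw_kernel_size > 0
    if has_start and has_middle:
        return "ExtraDW"   # DW -> expand -> DW -> project
    if has_middle:
        return "IB"        # expand -> DW -> project (classic inverted bottleneck)
    if has_start:
        return "ConvNext"  # DW -> expand -> project (ConvNext-like)
    return "FFN"           # expand -> project (two 1x1 convs)


# The "layer3" UIB specs of MobileNetV4ConvSmall, copied from the code in step 1
conv_small_layer3 = [
    [64, 96, 5, 5, True, 2, 3],
    [96, 96, 0, 3, True, 1, 2],
    [96, 96, 0, 3, True, 1, 2],
    [96, 96, 0, 3, True, 1, 2],
    [96, 96, 0, 3, True, 1, 2],
    [96, 96, 3, 0, True, 1, 4],
]

counts = Counter(uib_variant(spec[2], spec[3]) for spec in conv_small_layer3)
print(dict(counts))  # {'ExtraDW': 1, 'IB': 4, 'ConvNext': 1}
```

Encoding "absent" as kernel size 0 is what lets one spec table describe all four block types.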

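2. Mobile MQA

Mobile MQA is an attention block optimized for mobile accelerators. Compared with multi-head self-attention (MHSA), it achieves an inference speedup of more than 39% on mobile accelerators. It builds on multi-query attention (MQA), in which all query heads share a single key head and a single value head. Sharing K and V cuts memory-bandwidth requirements and raises operational intensity (computation per byte of memory accessed). The result is higher performance on hardware with high compute throughput.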
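The core idea of MQA fits in a few lines of NumPy. Every query head attends over one shared key/value head, so K and V are projected, and read from memory, once instead of once per head. This is a simplified illustration with random weights. The names `multi_query_attention`, `w_q`, `w_k` and `w_v` are hypothetical, and the sketch leaves out the other details of the paper's Mobile MQA block.

```python
import numpy as np


def softmax(x: np.ndarray, axis: int = -1) -> np.ndarray:
    x = x - x.max(axis=axis, keepdims=True)  # subtract max for numerical stability
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def multi_query_attention(x, w_q, w_k, w_v, num_heads):
    """x: (n, d_model); w_q: (d_model, heads * d_head); w_k, w_v: (d_model, d_head) shared."""
    n = x.shape[0]
    d_head = w_k.shape[1]
    q = (x @ w_q).reshape(n, num_heads, d_head).transpose(1, 0, 2)  # (heads, n, d_head)
    k = x @ w_k  # (n, d_head): a single key head shared by all query heads
    v = x @ w_v  # (n, d_head): a single value head shared by all query heads
    scores = q @ k.T / np.sqrt(d_head)  # (heads, n, n); k.T is broadcast over heads
    out = softmax(scores, axis=-1) @ v  # (heads, n, d_head)
    return out.transpose(1, 0, 2).reshape(n, num_heads * d_head)


rng = np.random.default_rng(0)
n_tokens, d_model, num_heads, d_head = 16, 64, 4, 16
x = rng.standard_normal((n_tokens, d_model))
w_q = rng.standard_normal((d_model, num_heads * d_head))
w_k = rng.standard_normal((d_model, d_head))
w_v = rng.standard_normal((d_model, d_head))
y = multi_query_attention(x, w_q, w_k, w_v, num_heads)

# K/V elements produced per layer: MHSA has one K and one V per head, MQA a single shared pair
kv_elems_mhsa = 2 * num_heads * n_tokens * d_head
kv_elems_mqa = 2 * n_tokens * d_head
print(y.shape, kv_elems_mhsa // kv_elems_mqa)  # (16, 64) 4
```

The K/V traffic shrinks by a factor equal to the number of heads. That smaller memory footprint is what raises operational intensity on accelerators.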

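3. Optimized NAS Recipe

MNv4 uses a two-stage NAS approach. A coarse-grained search first determines the optimal filter sizes. A fine-grained search then optimizes the configuration of UIB's depthwise layers: whether each one is present and which kernel size it uses. This substantially improves search efficiency and makes it possible to produce larger, more efficient models than earlier searches could.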
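A back-of-the-envelope count shows why splitting the search helps. The search space below is hypothetical and for illustration only: candidate widths per stage for the coarse search, and for the fine search each UIB depthwise layer is absent, 3x3 or 5x5. It treats each stage as an exhaustive enumeration, which real NAS does not do. It also does not reproduce the paper's actual search space.

```python
from itertools import product

# Hypothetical, illustration-only search space (not the paper's actual one).
width_options = [64, 96, 128, 160]  # coarse stage: candidate widths for each network stage
num_stages = 3                      # stages whose widths are searched
dw_options = [0, 3, 5]              # fine stage: 0 = depthwise conv omitted, else kernel size
num_blocks = 4                      # UIB blocks whose depthwise layout is searched

# Coarse space: one width per stage
coarse_space = len(width_options) ** num_stages
# Fine space: each UIB block picks a (start DW, middle DW) pair
per_block = list(product(dw_options, repeat=2))
fine_space = len(per_block) ** num_blocks

joint = coarse_space * fine_space      # search both decisions together
two_stage = coarse_space + fine_space  # fix widths first, then search the DW layout

print(len(per_block), coarse_space, fine_space, joint, two_stage)  # 9 64 6561 419904 6625
```

Decoupling turns a product of the two spaces into a sum. This is the intuition behind why the coarse-then-fine recipe is cheaper to search.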

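3. Experiments and Results

Performance Analysis

MNv4 models were benchmarked extensively on a range of hardware platforms: ARM Cortex CPUs, the Qualcomm Hexagon DSP, ARM Mali GPUs, the Apple Neural Engine, and Google EdgeTPU. The results show that MNv4 models are close to Pareto-optimal on all of these platforms. A model is Pareto-optimal when no other model is both faster and more accurate. In other words, MNv4 strikes a good balance between accuracy and latency on each kind of hardware.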
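The notion of Pareto optimality can be made precise with a minimal pure-Python filter. A model is dropped if another model is at least as fast and at least as accurate, and strictly better on one of the two. The model names and numbers below are made up for the example; they are not results from the paper.

```python
from typing import List, Tuple

# (name, latency_ms, top1_accuracy): made-up values, for illustration only
models: List[Tuple[str, float, float]] = [
    ("A", 2.0, 72.0),
    ("B", 3.0, 76.0),
    ("C", 3.5, 75.0),  # slower and less accurate than B, so it is dominated
    ("D", 5.0, 80.0),
]


def dominates(a, b) -> bool:
    """True if a is at least as fast and as accurate as b, and strictly better in one."""
    _, lat_a, acc_a = a
    _, lat_b, acc_b = b
    return lat_a <= lat_b and acc_a >= acc_b and (lat_a < lat_b or acc_a > acc_b)


def pareto_front(points):
    """Keep only the points that no other point dominates."""
    return [p for p in points if not any(dominates(q, p) for q in points if q is not p)]


print([name for name, _, _ in pareto_front(models)])  # ['A', 'B', 'D']
```

Being "close to Pareto-optimal on every platform" means MNv4 models land on or near this front for each device's own latency measurements.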

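ImageNet Classification

On ImageNet-1K classification, MNv4 models were compared with other leading efficient models. MNv4-Conv-S reaches 73.8% top-1 accuracy at 2.4 ms latency on the Pixel 6 CPU. MNv4-Hybrid-L reaches 83.4% top-1 accuracy at 3.8 ms on the Pixel 8 EdgeTPU. A new distillation technique raises MNv4-Hybrid-L's accuracy further to 87%. That is only 0.5% below its teacher model, with 39x fewer MACs.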

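COCO Object Detection

MNv4 models were also evaluated on COCO object detection and compared with state-of-the-art (SOTA) models. The MNv4-Conv-M detector achieves 32.6% AP, comparable to MobileNetMultiAvg and MobileNetV2, while cutting latency on the Pixel 6 CPU by 12% to 23%. The MNv4-Hybrid-M detector improves AP by 1.6% but increases latency by 18%.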

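4. Key Conclusions

MNv4 achieves efficient performance across many types of mobile hardware. It does so through new building blocks, UIB and Mobile MQA, combined with an optimized NAS recipe. The models improve accuracy substantially while keeping latency low, which makes them suitable for a wide range of mobile applications. MNv4 also introduces a new distillation technique that brings MNv4-Hybrid-L to 87% top-1 accuracy on ImageNet-1K, still at low latency. Together, these results set a new benchmark for computer vision on mobile devices. The paper's main innovations and contributions are:

- UIB and Mobile MQA: these two new building blocks give MNv4 flexibility and efficiency, allowing it to adapt to different hardware platforms.

- Optimized NAS recipe: the two-stage NAS approach improves search efficiency and makes it possible to build larger models.

- Distillation technique: mixing datasets built with different augmentations, and adding class-balanced data for the same classes, improves the model's generalization and accuracy.

- Universality: MNv4 is the first model to achieve near-Pareto-optimal performance across many hardware platforms, pointing to a new direction for designing deep learning models for mobile devices.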

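💯 II. How to Add MobileNetV4 to YOLOv8

Step ①: Create the MobileNetV4 backbone file

Step ② imports the backbone with `from ultralytics.nn.backbone.mobilenetv4 import *`. Save the file to match that import: `ultralytics/nn/backbone/mobilenetv4.py`, all lowercase, inside a `backbone` folder under `ultralytics/nn/`. On case-sensitive file systems such as Linux, a file named MobileNetV4.py would not be found by that import. Once the file is created, paste the following code into it: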

```python
from typing import Optional

import torch
import torch.nn as nn

__all__ = ['MobileNetV4ConvSmall', 'MobileNetV4ConvMedium', 'MobileNetV4ConvLarge', 'MobileNetV4HybridMedium', 'MobileNetV4HybridLarge']

# Block spec layouts (one list per block):
#   "convbn":   [inp, oup, kernel_size, stride]
#   "fused_ib": [inp, oup, stride, expand_ratio, act]
#   "uib":      [inp, oup, start_dw_kernel_size, middle_dw_kernel_size, middle_dw_downsample, stride, expand_ratio]
#               (a depthwise kernel size of 0 means that depthwise conv is skipped)
MNV4ConvSmall_BLOCK_SPECS = {
    "conv0": {
        "block_name": "convbn",
        "num_blocks": 1,
        "block_specs": [
            [3, 32, 3, 2]
        ]
    },
    "layer1": {
        "block_name": "convbn",
        "num_blocks": 2,
        "block_specs": [
            [32, 32, 3, 2],
            [32, 32, 1, 1]
        ]
    },
    "layer2": {
        "block_name": "convbn",
        "num_blocks": 2,
        "block_specs": [
            [32, 96, 3, 2],
            [96, 64, 1, 1]
        ]
    },
    "layer3": {
        "block_name": "uib",
        "num_blocks": 6,
        "block_specs": [
            [64, 96, 5, 5, True, 2, 3],
            [96, 96, 0, 3, True, 1, 2],
            [96, 96, 0, 3, True, 1, 2],
            [96, 96, 0, 3, True, 1, 2],
            [96, 96, 0, 3, True, 1, 2],
            [96, 96, 3, 0, True, 1, 4],
        ]
    },
    "layer4": {
        "block_name": "uib",
        "num_blocks": 6,
        "block_specs": [
            [96, 128, 3, 3, True, 2, 6],
            [128, 128, 5, 5, True, 1, 4],
            [128, 128, 0, 5, True, 1, 4],
            [128, 128, 0, 5, True, 1, 3],
            [128, 128, 0, 3, True, 1, 4],
            [128, 128, 0, 3, True, 1, 4],
        ]
    },
    "layer5": {
        "block_name": "convbn",
        "num_blocks": 2,
        "block_specs": [
            [128, 960, 1, 1],
            [960, 1280, 1, 1]
        ]
    }
}

MNV4ConvMedium_BLOCK_SPECS = {
    "conv0": {
        "block_name": "convbn",
        "num_blocks": 1,
        "block_specs": [
            [3, 32, 3, 2]
        ]
    },
    "layer1": {
        "block_name": "fused_ib",
        "num_blocks": 1,
        "block_specs": [
            [32, 48, 2, 4.0, True]
        ]
    },
    "layer2": {
        "block_name": "uib",
        "num_blocks": 2,
        "block_specs": [
            [48, 80, 3, 5, True, 2, 4],
            [80, 80, 3, 3, True, 1, 2]
        ]
    },
    "layer3": {
        "block_name": "uib",
        "num_blocks": 8,
        "block_specs": [
            [80, 160, 3, 5, True, 2, 6],
            [160, 160, 3, 3, True, 1, 4],
            [160, 160, 3, 3, True, 1, 4],
            [160, 160, 3, 5, True, 1, 4],
            [160, 160, 3, 3, True, 1, 4],
            [160, 160, 3, 0, True, 1, 4],
            [160, 160, 0, 0, True, 1, 2],
            [160, 160, 3, 0, True, 1, 4]
        ]
    },
    "layer4": {
        "block_name": "uib",
        "num_blocks": 11,
        "block_specs": [
            [160, 256, 5, 5, True, 2, 6],
            [256, 256, 5, 5, True, 1, 4],
            [256, 256, 3, 5, True, 1, 4],
            [256, 256, 3, 5, True, 1, 4],
            [256, 256, 0, 0, True, 1, 4],
            [256, 256, 3, 0, True, 1, 4],
            [256, 256, 3, 5, True, 1, 2],
            [256, 256, 5, 5, True, 1, 4],
            [256, 256, 0, 0, True, 1, 4],
            [256, 256, 0, 0, True, 1, 4],
            [256, 256, 5, 0, True, 1, 2]
        ]
    },
    "layer5": {
        "block_name": "convbn",
        "num_blocks": 2,
        "block_specs": [
            [256, 960, 1, 1],
            [960, 1280, 1, 1]
        ]
    }
}

MNV4ConvLarge_BLOCK_SPECS = {
    "conv0": {
        "block_name": "convbn",
        "num_blocks": 1,
        "block_specs": [
            [3, 24, 3, 2]
        ]
    },
    "layer1": {
        "block_name": "fused_ib",
        "num_blocks": 1,
        "block_specs": [
            [24, 48, 2, 4.0, True]
        ]
    },
    "layer2": {
        "block_name": "uib",
        "num_blocks": 2,
        "block_specs": [
            [48, 96, 3, 5, True, 2, 4],
            [96, 96, 3, 3, True, 1, 4]
        ]
    },
    "layer3": {
        "block_name": "uib",
        "num_blocks": 11,
        "block_specs": [
            [96, 192, 3, 5, True, 2, 4],
            [192, 192, 3, 3, True, 1, 4],
            [192, 192, 3, 3, True, 1, 4],
            [192, 192, 3, 3, True, 1, 4],
            [192, 192, 3, 5, True, 1, 4],
            [192, 192, 5, 3, True, 1, 4],
            [192, 192, 5, 3, True, 1, 4],
            [192, 192, 5, 3, True, 1, 4],
            [192, 192, 5, 3, True, 1, 4],
            [192, 192, 5, 3, True, 1, 4],
            [192, 192, 3, 0, True, 1, 4]
        ]
    },
    "layer4": {
        "block_name": "uib",
        "num_blocks": 13,
        "block_specs": [
            [192, 512, 5, 5, True, 2, 4],
            [512, 512, 5, 5, True, 1, 4],
            [512, 512, 5, 5, True, 1, 4],
            [512, 512, 5, 5, True, 1, 4],
            [512, 512, 5, 0, True, 1, 4],
            [512, 512, 5, 3, True, 1, 4],
            [512, 512, 5, 0, True, 1, 4],
            [512, 512, 5, 0, True, 1, 4],
            [512, 512, 5, 3, True, 1, 4],
            [512, 512, 5, 5, True, 1, 4],
            [512, 512, 5, 0, True, 1, 4],
            [512, 512, 5, 0, True, 1, 4],
            [512, 512, 5, 0, True, 1, 4]
        ]
    },
    "layer5": {
        "block_name": "convbn",
        "num_blocks": 2,
        "block_specs": [
            [512, 960, 1, 1],
            [960, 1280, 1, 1]
        ]
    }
}

# The hybrid variants (which add Mobile MQA attention blocks) are not implemented in this file:
# their specs are empty, and MobileNetV4.__init__ raises NotImplementedError for them.
MNV4HybridConvMedium_BLOCK_SPECS = {
}

MNV4HybridConvLarge_BLOCK_SPECS = {
}

MODEL_SPECS = {
    "MobileNetV4ConvSmall": MNV4ConvSmall_BLOCK_SPECS,
    "MobileNetV4ConvMedium": MNV4ConvMedium_BLOCK_SPECS,
    "MobileNetV4ConvLarge": MNV4ConvLarge_BLOCK_SPECS,
    "MobileNetV4HybridMedium": MNV4HybridConvMedium_BLOCK_SPECS,
    "MobileNetV4HybridLarge": MNV4HybridConvLarge_BLOCK_SPECS,
}


def make_divisible(
        value: float,
        divisor: int,
        min_value: Optional[float] = None,
        round_down_protect: bool = True,
) -> int:
    """
    This function is copied from here
    "https://github.com/tensorflow/models/blob/master/official/vision/modeling/layers/nn_layers.py"

    This is to ensure that all layers have channels that are divisible by 8.

    Args:
        value: A `float` of original value.
        divisor: An `int` of the divisor that need to be checked upon.
        min_value: A `float` of minimum value threshold.
        round_down_protect: A `bool` indicating whether round down more than 10%
            will be allowed.

    Returns:
        The adjusted value in `int` that is divisible against divisor.
    """
    if min_value is None:
        min_value = divisor
    new_value = max(min_value, int(value + divisor / 2) // divisor * divisor)
    # Make sure that round down does not go down by more than 10%.
    if round_down_protect and new_value < 0.9 * value:
        new_value += divisor
    return int(new_value)


def conv_2d(inp, oup, kernel_size=3, stride=1, groups=1, bias=False, norm=True, act=True):
    """Conv2d (+ BatchNorm2d) (+ ReLU6), padded by (kernel_size - 1) // 2."""
    conv = nn.Sequential()
    padding = (kernel_size - 1) // 2
    conv.add_module('conv', nn.Conv2d(inp, oup, kernel_size, stride, padding, bias=bias, groups=groups))
    if norm:
        conv.add_module('BatchNorm2d', nn.BatchNorm2d(oup))
    if act:
        conv.add_module('Activation', nn.ReLU6())
    return conv


class InvertedResidual(nn.Module):
    """MobileNetV2-style inverted residual; used here for the "fused_ib" layers."""

    def __init__(self, inp, oup, stride, expand_ratio, act=False):
        super(InvertedResidual, self).__init__()
        self.stride = stride
        assert stride in [1, 2]
        hidden_dim = int(round(inp * expand_ratio))
        self.block = nn.Sequential()
        if expand_ratio != 1:
            self.block.add_module('exp_1x1', conv_2d(inp, hidden_dim, kernel_size=1, stride=1))
        self.block.add_module('conv_3x3', conv_2d(hidden_dim, hidden_dim, kernel_size=3, stride=stride, groups=hidden_dim))
        self.block.add_module('red_1x1', conv_2d(hidden_dim, oup, kernel_size=1, stride=1, act=act))
        self.use_res_connect = self.stride == 1 and inp == oup

    def forward(self, x):
        if self.use_res_connect:
            return x + self.block(x)
        else:
            return self.block(x)


class UniversalInvertedBottleneckBlock(nn.Module):
    def __init__(self,
                 inp,
                 oup,
                 start_dw_kernel_size,
                 middle_dw_kernel_size,
                 middle_dw_downsample,
                 stride,
                 expand_ratio
                 ):
        super().__init__()
        # Starting depthwise conv (skipped when start_dw_kernel_size == 0).
        self.start_dw_kernel_size = start_dw_kernel_size
        if self.start_dw_kernel_size:
            # Downsample here only when the middle depthwise conv is not the one that downsamples.
            stride_ = stride if not middle_dw_downsample else 1
            self._start_dw_ = conv_2d(inp, inp, kernel_size=start_dw_kernel_size, stride=stride_, groups=inp, act=False)
        # Expansion with 1x1 convs.
        expand_filters = make_divisible(inp * expand_ratio, 8)
        self._expand_conv = conv_2d(inp, expand_filters, kernel_size=1)
        # Middle depthwise conv (skipped when middle_dw_kernel_size == 0).
        self.middle_dw_kernel_size = middle_dw_kernel_size
        if self.middle_dw_kernel_size:
            stride_ = stride if middle_dw_downsample else 1
            self._middle_dw = conv_2d(expand_filters, expand_filters, kernel_size=middle_dw_kernel_size, stride=stride_, groups=expand_filters)
        # Projection with 1x1 convs.
        self._proj_conv = conv_2d(expand_filters, oup, kernel_size=1, stride=1, act=False)
        # Ending depthwise conv: not used by these specs.

    def forward(self, x):
        if self.start_dw_kernel_size:
            x = self._start_dw_(x)
        x = self._expand_conv(x)
        if self.middle_dw_kernel_size:
            x = self._middle_dw(x)
        x = self._proj_conv(x)
        return x


def build_blocks(layer_spec):
    """Build an nn.Sequential from one layer entry of a *_BLOCK_SPECS dict."""
    if not layer_spec.get('block_name'):
        return nn.Sequential()
    block_names = layer_spec['block_name']
    layers = nn.Sequential()
    if block_names == "convbn":
        schema_ = ['inp', 'oup', 'kernel_size', 'stride']
        for i in range(layer_spec['num_blocks']):
            args = dict(zip(schema_, layer_spec['block_specs'][i]))
            layers.add_module(f"convbn_{i}", conv_2d(**args))
    elif block_names == "uib":
        schema_ = ['inp', 'oup', 'start_dw_kernel_size', 'middle_dw_kernel_size', 'middle_dw_downsample', 'stride', 'expand_ratio']
        for i in range(layer_spec['num_blocks']):
            args = dict(zip(schema_, layer_spec['block_specs'][i]))
            layers.add_module(f"uib_{i}", UniversalInvertedBottleneckBlock(**args))
    elif block_names == "fused_ib":
        schema_ = ['inp', 'oup', 'stride', 'expand_ratio', 'act']
        for i in range(layer_spec['num_blocks']):
            args = dict(zip(schema_, layer_spec['block_specs'][i]))
            layers.add_module(f"fused_ib_{i}", InvertedResidual(**args))
    else:
        raise NotImplementedError
    return layers


class MobileNetV4(nn.Module):
    def __init__(self, model):
        """Params to initiate MobileNetV4.

        Args:
            model: one of the 5 model names in MODEL_SPECS (MobileNetV4ConvSmall, MobileNetV4ConvMedium,
                MobileNetV4ConvLarge, MobileNetV4HybridMedium, MobileNetV4HybridLarge), as indicated in
                "https://github.com/tensorflow/models/blob/master/official/vision/modeling/backbones/mobilenet.py"
        """
        super().__init__()
        assert model in MODEL_SPECS.keys()
        self.model = model
        self.spec = MODEL_SPECS[self.model]
        if not self.spec:
            raise NotImplementedError(f"{model}: block specs for this variant are not provided in this file")
        self.conv0 = build_blocks(self.spec['conv0'])
        self.layer1 = build_blocks(self.spec['layer1'])
        self.layer2 = build_blocks(self.spec['layer2'])
        self.layer3 = build_blocks(self.spec['layer3'])
        self.layer4 = build_blocks(self.spec['layer4'])
        self.layer5 = build_blocks(self.spec['layer5'])
        self.features = nn.ModuleList([self.conv0, self.layer1, self.layer2, self.layer3, self.layer4, self.layer5])
        # One dummy forward pass records the channels of the returned feature maps;
        # parse_model reads this list as the backbone's output channels.
        with torch.no_grad():
            self.channel = [i.size(1) for i in self.forward(torch.randn(1, 3, 640, 640))]

    def forward(self, x):
        input_size = x.size(2)
        scale = [4, 8, 16, 32]
        features = [None, None, None, None]
        for f in self.features:
            x = f(x)
            # Keep the last stage output at each downsampling ratio 4/8/16/32 (P2-P5).
            if input_size // x.size(2) in scale:
                features[scale.index(input_size // x.size(2))] = x
        return features


def MobileNetV4ConvSmall():
    model = MobileNetV4('MobileNetV4ConvSmall')
    return model


def MobileNetV4ConvMedium():
    model = MobileNetV4('MobileNetV4ConvMedium')
    return model


def MobileNetV4ConvLarge():
    model = MobileNetV4('MobileNetV4ConvLarge')
    return model


def MobileNetV4HybridMedium():
    model = MobileNetV4('MobileNetV4HybridMedium')
    return model


def MobileNetV4HybridLarge():
    model = MobileNetV4('MobileNetV4HybridLarge')
    return model


if __name__ == '__main__':
    model = MobileNetV4ConvSmall()
    inputs = torch.randn((1, 3, 640, 640))
    res = model(inputs)
    for i in res:
        print(i.size())
```

Step ②: Modify task.py (`ultralytics/nn/tasks.py`)
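Sub-step (1) below pulls the backbone in with a wildcard import. MobileNetV4.py defines `__all__`, so `import *` only exposes the five factory functions. The file's own `make_divisible` is not exported. It has a different signature and rounding rule from the `make_divisible` that parse_model in task.py already uses, so it cannot shadow that one. The self-contained sketch below demonstrates this Python rule with a throwaway in-memory module. The module name `fake_mobilenetv4` and the stand-in helpers are hypothetical.

```python
import math
import sys
import types

# A throwaway in-memory module standing in for mobilenetv4.py (hypothetical name).
BACKBONE_SOURCE = '''
__all__ = ["MobileNetV4ConvSmall"]

def make_divisible(value, divisor, min_value=None, round_down_protect=True):
    return "backbone helper"

def MobileNetV4ConvSmall():
    return "backbone factory"
'''
fake_backbone = types.ModuleType("fake_mobilenetv4")
exec(BACKBONE_SOURCE, fake_backbone.__dict__)
sys.modules["fake_mobilenetv4"] = fake_backbone


def make_divisible(x, divisor):
    """Simplified stand-in for the helper that task.py already imports."""
    return math.ceil(x / divisor) * divisor


# Emulate task.py's namespace: its own make_divisible first, then the wildcard import.
task_namespace = {"make_divisible": make_divisible}
exec("from fake_mobilenetv4 import *", task_namespace)

print("MobileNetV4ConvSmall" in task_namespace)            # True: listed in __all__
print(task_namespace["make_divisible"] is make_divisible)  # True: not overwritten
```

If `__all__` were removed from the backbone file, the wildcard import would replace task.py's helper with the backbone's version.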

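(1) Import the MobileNetV4 module created in step ①

```python
from ultralytics.nn.backbone.mobilenetv4 import *
```

(2) Modify the _predict_once function

Replace the `_predict_once` method of `BaseModel` with the code below: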

```python
def _predict_once(self, x, profile=False, visualize=False, embed=None):
    """
    Perform a forward pass through the network.

    Args:
        x (torch.Tensor): The input tensor to the model.
        profile (bool): Print the computation time of each layer if True, defaults to False.
        visualize (bool): Save the feature maps of the model if True, defaults to False.
        embed (list, optional): A list of feature vectors/embeddings to return.

    Returns:
        (torch.Tensor): The last output of the model.
    """
    y, dt, embeddings = [], [], []  # outputs
    for idx, m in enumerate(self.model):
        if m.f != -1:  # if not from previous layer
            x = y[m.f] if isinstance(m.f, int) else [x if j == -1 else y[j] for j in m.f]  # from earlier layers
        if profile:
            self._profile_one_layer(m, x, dt)
        if hasattr(m, 'backbone'):
            # The backbone returns a list of feature maps [P2, P3, P4, P5];
            # left-pad it with None so it fills layer slots 0-4 (slot 0 = unused P1).
            x = m(x)
            for _ in range(5 - len(x)):
                x.insert(0, None)
            for i_idx, i in enumerate(x):
                if i_idx in self.save:
                    y.append(i)
                else:
                    y.append(None)
            x = x[-1]  # the next layer consumes the deepest feature map
        else:
            x = m(x)  # run
            y.append(x if m.i in self.save else None)  # save output
        if visualize:
            feature_visualization(x, m.type, m.i, save_dir=visualize)
        if embed and m.i in embed:
            embeddings.append(nn.functional.adaptive_avg_pool2d(x, (1, 1)).squeeze(-1).squeeze(-1))  # flatten
            if m.i == max(embed):
                return torch.unbind(torch.cat(embeddings, 1), dim=0)
    return x
```

(3) Modify the parse_model function

Replace the existing `parse_model` function with the code below:

def parse_model(d, ch, verbose=True): # model_dict, input_channels(3)

"""

Parse a YOLO model.yaml dictionary into a PyTorch model.

Args:

d (dict): Model dictionary.

ch (int): Input channels.

verbose (bool): Whether to print model details.

Returns:

(tuple): Tuple containing the PyTorch model and sorted list of output layers.

"""

import ast

# Args

max_channels = float("inf")

nc, act, scales = (d.get(x) for x in ("nc", "activation", "scales"))

depth, width, kpt_shape = (d.get(x, 1.0) for x in ("depth_multiple", "width_multiple", "kpt_shape"))

if scales:

scale = d.get("scale")

if not scale:

scale = tuple(scales.keys())[0]

LOGGER.warning(f"WARNING ⚠️ no model scale passed. Assuming scale='{scale}'.")

if len(scales[scale]) == 3:

depth, width, max_channels = scales[scale]

elif len(scales[scale]) == 4:

depth, width, max_channels, threshold = scales[scale]

if act:

Conv.default_act = eval(act) # redefine default activation, i.e. Conv.default_act = nn.SiLU()

if verbose:

LOGGER.info(f"{colorstr('activation:')} {act}") # print

if verbose:

LOGGER.info(f"\n{'':>3}{'from':>20}{'n':>3}{'params':>10} {'module':<60}{'arguments':<50}")

ch = [ch]

layers, save, c2 = [], [], ch[-1] # layers, savelist, ch out

is_backbone = False

for i, (f, n, m, args) in enumerate(d["backbone"] + d["head"]): # from, number, module, args

try:

if m == 'node_mode':

m = d[m]

if len(args) > 0:

if args[0] == 'head_channel':

args[0] = int(d[args[0]])

t = m

m = getattr(torch.nn, m[3:]) if 'nn.' in m else globals()[m] # get module

except:

pass

for j, a in enumerate(args):

if isinstance(a, str):

with contextlib.suppress(ValueError):

try:

args[j] = locals()[a] if a in locals() else ast.literal_eval(a)

except:

args[j] = a

n = n_ = max(round(n * depth), 1) if n > 1 else n # depth gain

if m in {

Classify, Conv, ConvTranspose, GhostConv, Bottleneck, GhostBottleneck, SPP, SPPF, DWConv, Focus,

BottleneckCSP, C1, C2, C2f, ELAN1, AConv, SPPELAN, C2fAttn, C3, C3TR,

C3Ghost, nn.Conv2d, nn.ConvTranspose2d, DWConvTranspose2d, C3x, RepC3, PSA, SCDown, C2fCIB

}:

if args[0] == 'head_channel':

args[0] = d[args[0]]

c1, c2 = ch[f], args[0]

if c2 != nc: # if c2 not equal to number of classes (i.e. for Classify() output)

c2 = make_divisible(min(c2, max_channels) * width, 8)

if m is C2fAttn:

args[1] = make_divisible(min(args[1], max_channels // 2) * width, 8) # embed channels

args[2] = int(

max(round(min(args[2], max_channels // 2 // 32)) * width, 1) if args[2] > 1 else args[2]

) # num heads

args = [c1, c2, *args[1:]]

elif m in {AIFI}:

args = [ch[f], *args]

c2 = args[0]

elif m in (HGStem, HGBlock):

c1, cm, c2 = ch[f], args[0], args[1]

if c2 != nc: # if c2 not equal to number of classes (i.e. for Classify() output)

c2 = make_divisible(min(c2, max_channels) * width, 8)

cm = make_divisible(min(cm, max_channels) * width, 8)

args = [c1, cm, c2, *args[2:]]

if m in (HGBlock):

args.insert(4, n) # number of repeats

n = 1

elif m is ResNetLayer:

c2 = args[1] if args[3] else args[1] * 4

elif m is nn.BatchNorm2d:

args = [ch[f]]

elif m is Concat:

c2 = sum(ch[x] for x in f)

elif m in frozenset({Detect, WorldDetect, Segment, Pose, OBB, ImagePoolingAttn, v10Detect}):

args.append([ch[x] for x in f])

elif m is RTDETRDecoder: # special case, channels arg must be passed in index 1

args.insert(1, [ch[x] for x in f])

elif m is CBLinear:

c2 = make_divisible(min(args[0][-1], max_channels) * width, 8)

c1 = ch[f]

args = [c1, [make_divisible(min(c2_, max_channels) * width, 8) for c2_ in args[0]], *args[1:]]

elif m is CBFuse:

c2 = ch[f[-1]]

elif isinstance(m, str):

t = m

if len(args) == 2:

m = timm.create_model(m, pretrained=args[0], pretrained_cfg_overlay={'file': args[1]},

features_only=True)

elif len(args) == 1:

m = timm.create_model(m, pretrained=args[0], features_only=True)

c2 = m.feature_info.channels()

elif m in {MobileNetV4ConvSmall, MobileNetV4ConvMedium, MobileNetV4ConvLarge, MobileNetV4HybridMedium, MobileNetV4HybridLarge

}:

m = m(*args)

c2 = m.channel

else:

c2 = ch[f]

if isinstance(c2, list):

is_backbone = True

m_ = m

m_.backbone = True

else:

m_ = nn.Sequential(*(m(*args) for _ in range(n))) if n > 1 else m(*args) # module

t = str(m)[8:-2].replace('__main__.', '') # module type

m.np = sum(x.numel() for x in m_.parameters()) # number params

m_.i, m_.f, m_.type = i + 4 if is_backbone else i, f, t # attach index, 'from' index, type

if verbose:

LOGGER.info(f"{i:>3}{str(f):>20}{n_:>3}{m.np:10.0f} {t:<60}{str(args):<50}") # print

save.extend(x % (i + 4 if is_backbone else i) for x in ([f] if isinstance(f, int) else f) if

x != -1) # append to savelist

layers.append(m_)

if i == 0:

ch = []

if isinstance(c2, list):

ch.extend(c2)

for _ in range(5 - len(ch)):

ch.insert(0, 0)

else:

ch.append(c2)

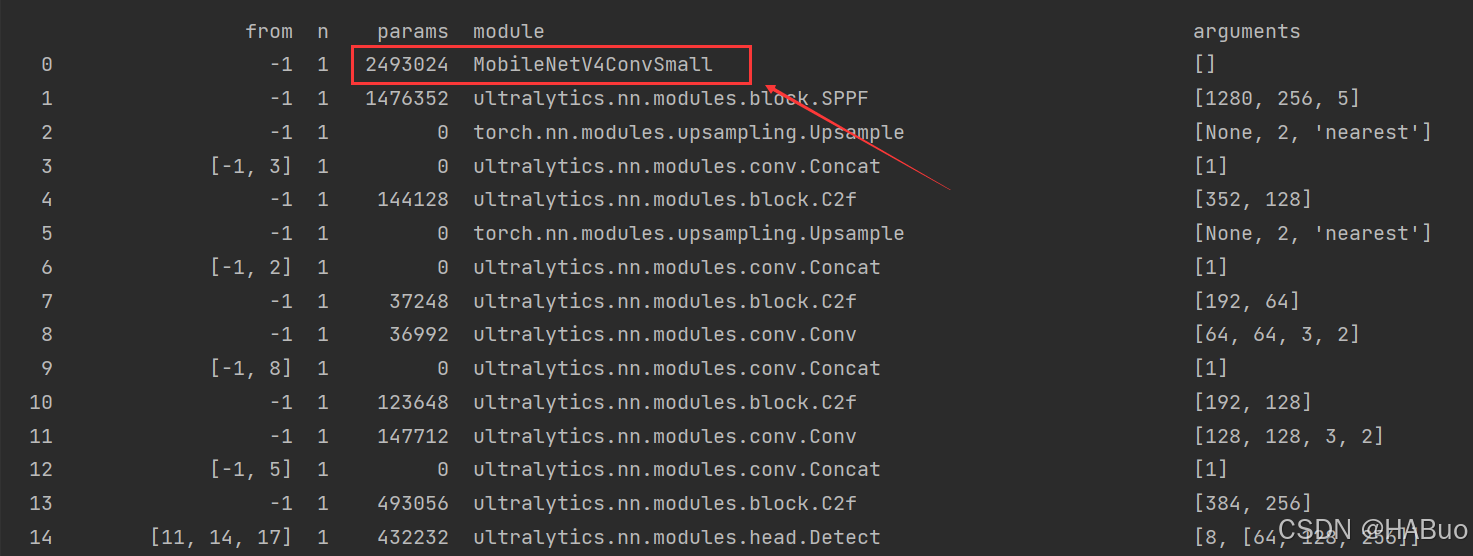

return nn.Sequential(*layers), sorted(save)具体改进差别如下图所示:
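The two task.py changes cooperate through an index shift. A multi-output backbone fills layer slots 0-4: slot 0 is an unused P1 placeholder, and slots 1-4 hold P2-P5. `parse_model` therefore numbers every YAML row `i + 4`, so the backbone row itself gets 4. `_predict_once` pads the backbone's output list to five entries so that `y[k]` lines up with those numbers. The pure-Python sketch below replays that bookkeeping with strings instead of tensors for the YAML in step ③. The channel list `[32, 64, 96, 1280]` is what the MobileNetV4ConvSmall code in step ① reports for a 640x640 input.

```python
# "from" field of each row in the step-3 YAML (row 0 = MobileNetV4ConvSmall, row 1 = SPPF, ...)
froms = [-1, -1, -1, [-1, 3], -1, -1, [-1, 2], -1, -1, [-1, 8], -1, -1, [-1, 5], -1, [11, 14, 17]]

# parse_model: with the backbone at row 0, every row i gets layer index i + 4
layer_ids = [i + 4 for i in range(len(froms))]
save = sorted(x % (i + 4) for i, f in enumerate(froms) for x in ([f] if isinstance(f, int) else f) if x != -1)

# parse_model: channel list after the backbone row, padded to 5 entries (index 0 = P1 placeholder)
ch = [32, 64, 96, 1280]  # MobileNetV4ConvSmall.channel for a 640x640 input
for _ in range(5 - len(ch)):
    ch.insert(0, 0)

# _predict_once: backbone outputs padded the same way; only indices listed in `save` are kept
outputs = ["P2", "P3", "P4", "P5"]  # strings stand in for the four feature maps
for _ in range(5 - len(outputs)):
    outputs.insert(0, None)
y = [o if k in save else None for k, o in enumerate(outputs)]
x = outputs[-1]  # passed straight into SPPF

print(layer_ids[0], layer_ids[1], layer_ids[-1])  # 4 5 18  (backbone, SPPF, Detect)
print(save)                                       # [2, 3, 5, 8, 11, 14, 17]
print(ch)                                         # [0, 32, 64, 96, 1280]
print(y, x)                                       # [None, None, 'P3', 'P4', None] P5
```

This is why the head can reference `3` (P4) and `2` (P3) directly, while SPPF sees 1280 input channels.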

Step ③: Create a modified yolov8.yaml

Create yolov8-mobilenetv4.yaml in the following folder:

```yaml
# Parameters
nc: 80 # number of classes
scales: # model compound scaling constants, i.e. 'model=yolov8n.yaml' will call yolov8.yaml with scale 'n'
  # [depth, width, max_channels]
  # (the summaries below describe the stock YOLOv8 models, not this MobileNetV4 variant)
  n: [0.33, 0.25, 1024] # YOLOv8n summary: 225 layers, 3157200 parameters, 3157184 gradients, 8.9 GFLOPs
  s: [0.33, 0.50, 1024] # YOLOv8s summary: 225 layers, 11166560 parameters, 11166544 gradients, 28.8 GFLOPs
  m: [0.67, 0.75, 768] # YOLOv8m summary: 295 layers, 25902640 parameters, 25902624 gradients, 79.3 GFLOPs
  l: [1.00, 1.00, 512] # YOLOv8l summary: 365 layers, 43691520 parameters, 43691504 gradients, 165.7 GFLOPs
  x: [1.00, 1.25, 512] # YOLOv8x summary: 365 layers, 68229648 parameters, 68229632 gradients, 258.5 GFLOPs

# Layer slots filled by the MobileNetV4 backbone:
# 0-P1/2 (placeholder, unused)
# 1-P2/4
# 2-P3/8
# 3-P4/16
# 4-P5/32

# YOLOv8.0n backbone
backbone:
  # [from, repeats, module, args]
  - [-1, 1, MobileNetV4ConvSmall, []] # 4 (outputs fill slots 0-4)
  - [-1, 1, SPPF, [1024, 5]] # 5

# YOLOv8.0n head
head:
  - [-1, 1, nn.Upsample, [None, 2, 'nearest']] # 6
  - [[-1, 3], 1, Concat, [1]] # 7 cat backbone P4
  - [-1, 3, C2f, [512]] # 8
  - [-1, 1, nn.Upsample, [None, 2, 'nearest']] # 9
  - [[-1, 2], 1, Concat, [1]] # 10 cat backbone P3
  - [-1, 3, C2f, [256]] # 11 (P3/8-small)
  - [-1, 1, Conv, [256, 3, 2]] # 12
  - [[-1, 8], 1, Concat, [1]] # 13 cat head P4
  - [-1, 3, C2f, [512]] # 14 (P4/16-medium)
  - [-1, 1, Conv, [512, 3, 2]] # 15
  - [[-1, 5], 1, Concat, [1]] # 16 cat head P5
  - [-1, 3, C2f, [1024]] # 17 (P5/32-large)
  - [[11, 14, 17], 1, Detect, [nc]] # Detect(P3, P4, P5)
```

Step ④: Verify that the backbone was added successfully

In train.py, change the model config to yolov8-mobilenetv4.yaml and run it.
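You can sanity-check the shapes the backbone should produce before training, and without PyTorch. Every conv in MobileNetV4.py has an odd kernel with padding `(k - 1) // 2`, so its output size is `(H - 1) // stride + 1` whatever the kernel size. The sketch below walks the per-block (output channels, stride) pairs read off `MNV4ConvSmall_BLOCK_SPECS`. It then applies the same stride-4/8/16/32 selection rule as `MobileNetV4.forward`. The helper names are hypothetical. The result should match what the `__main__` block of MobileNetV4.py prints for a 640x640 input.

```python
def conv_out(size: int, kernel: int, stride: int) -> int:
    """Output size of nn.Conv2d with padding (kernel - 1) // 2, as used by conv_2d()."""
    padding = (kernel - 1) // 2
    return (size + 2 * padding - kernel) // stride + 1


# For odd kernels the result does not depend on the kernel size
assert all(conv_out(640, k, 2) == 320 for k in (1, 3, 5))

# (output channels, stride) of each block in MNV4ConvSmall_BLOCK_SPECS
CONV_SMALL = [
    [(32, 2)],                    # conv0
    [(32, 2), (32, 1)],           # layer1
    [(96, 2), (64, 1)],           # layer2
    [(96, 2)] + [(96, 1)] * 5,    # layer3 (UIB)
    [(128, 2)] + [(128, 1)] * 5,  # layer4 (UIB)
    [(960, 1), (1280, 1)],        # layer5
]


def returned_features(stages, input_size=640, scales=(4, 8, 16, 32)):
    """Mimic MobileNetV4.forward: keep the last stage output at each downsampling ratio."""
    size, channels = input_size, 3
    features = [None] * len(scales)
    for stage in stages:
        for out_ch, stride in stage:
            size, channels = conv_out(size, 3, stride), out_ch  # any odd kernel gives the same size
        if input_size // size in scales:
            features[scales.index(input_size // size)] = (channels, size)
    return features


print(returned_features(CONV_SMALL))  # [(32, 160), (64, 80), (96, 40), (1280, 20)]
```

The channel list `[32, 64, 96, 1280]` is what `MobileNetV4ConvSmall().channel` should contain. The 1280 at stride 32 is what the SPPF layer in the YAML receives.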
