✨作者主页 :IT研究室✨

个人简介:曾从事计算机专业培训教学,擅长Java、Python、微信小程序、Golang、安卓Android等项目实战。接项目定制开发、代码讲解、答辩教学、文档编写、降重等。

文章目录

一、前言

系统介绍

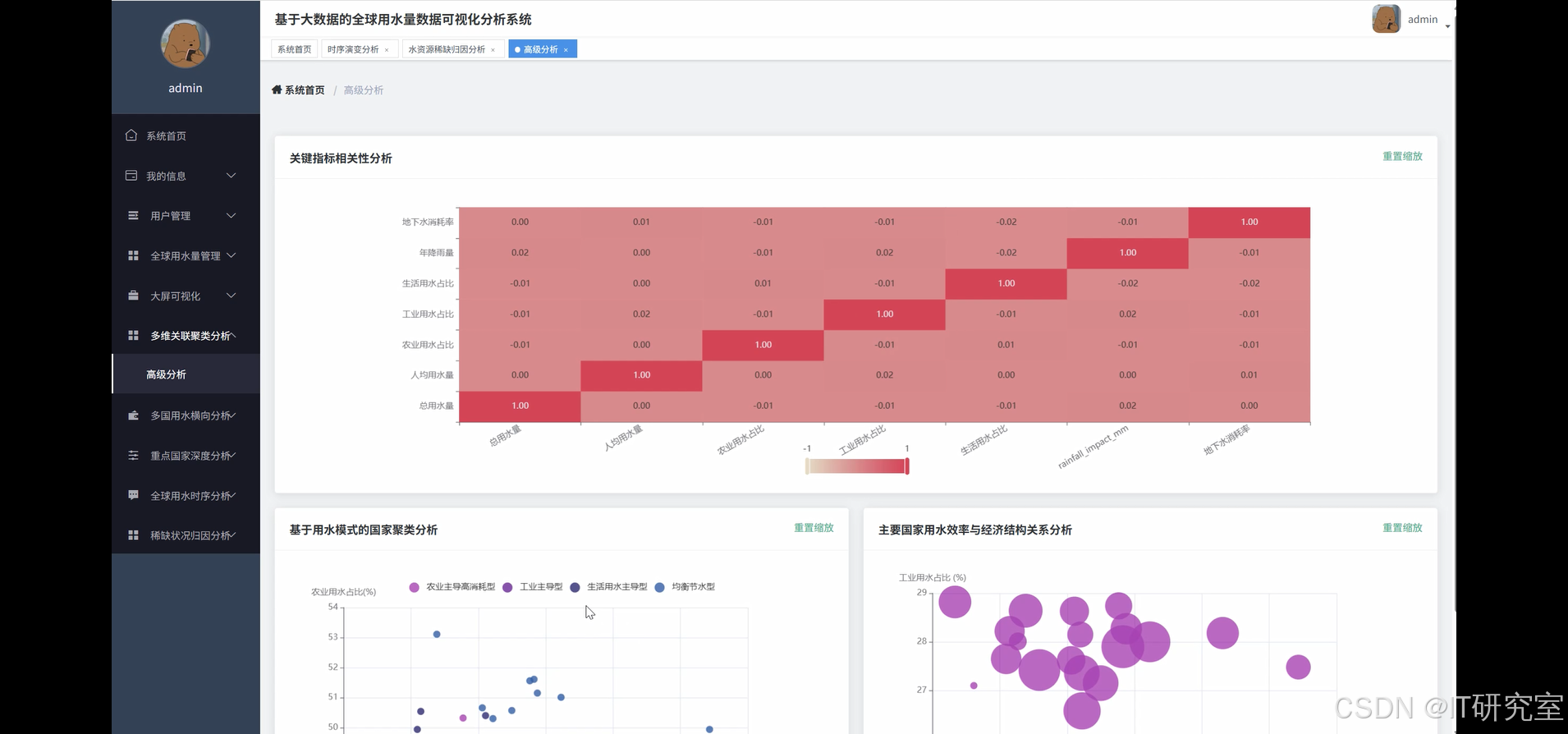

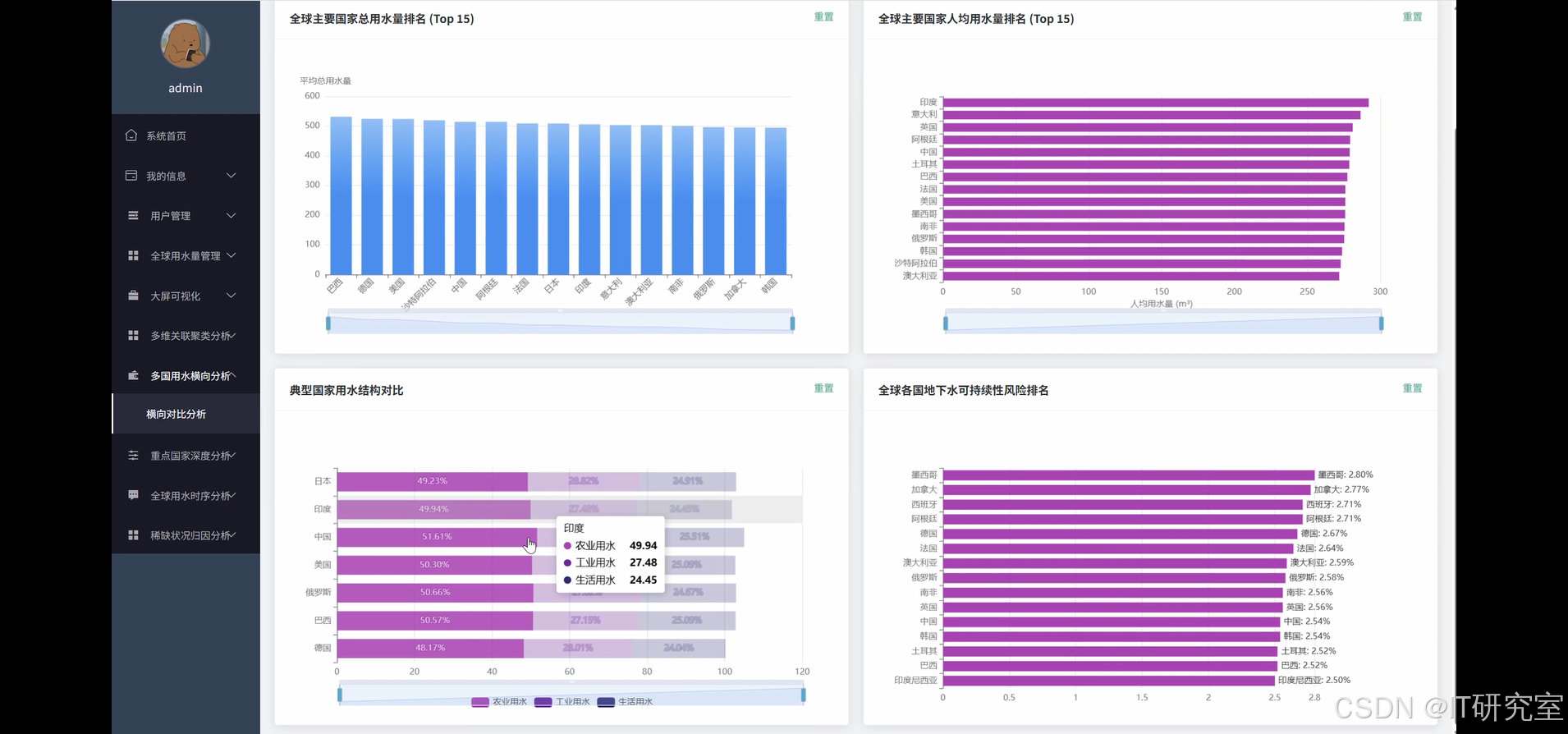

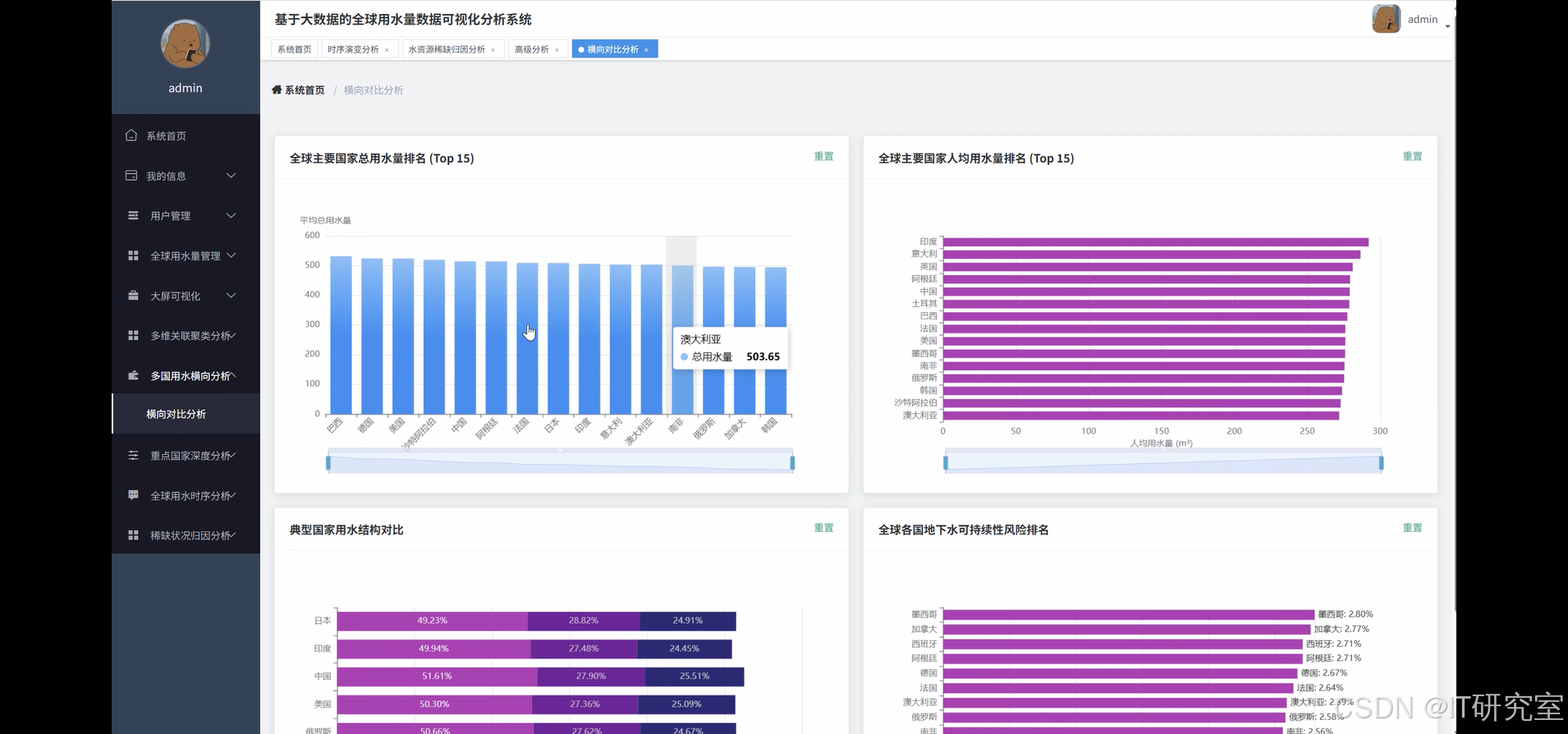

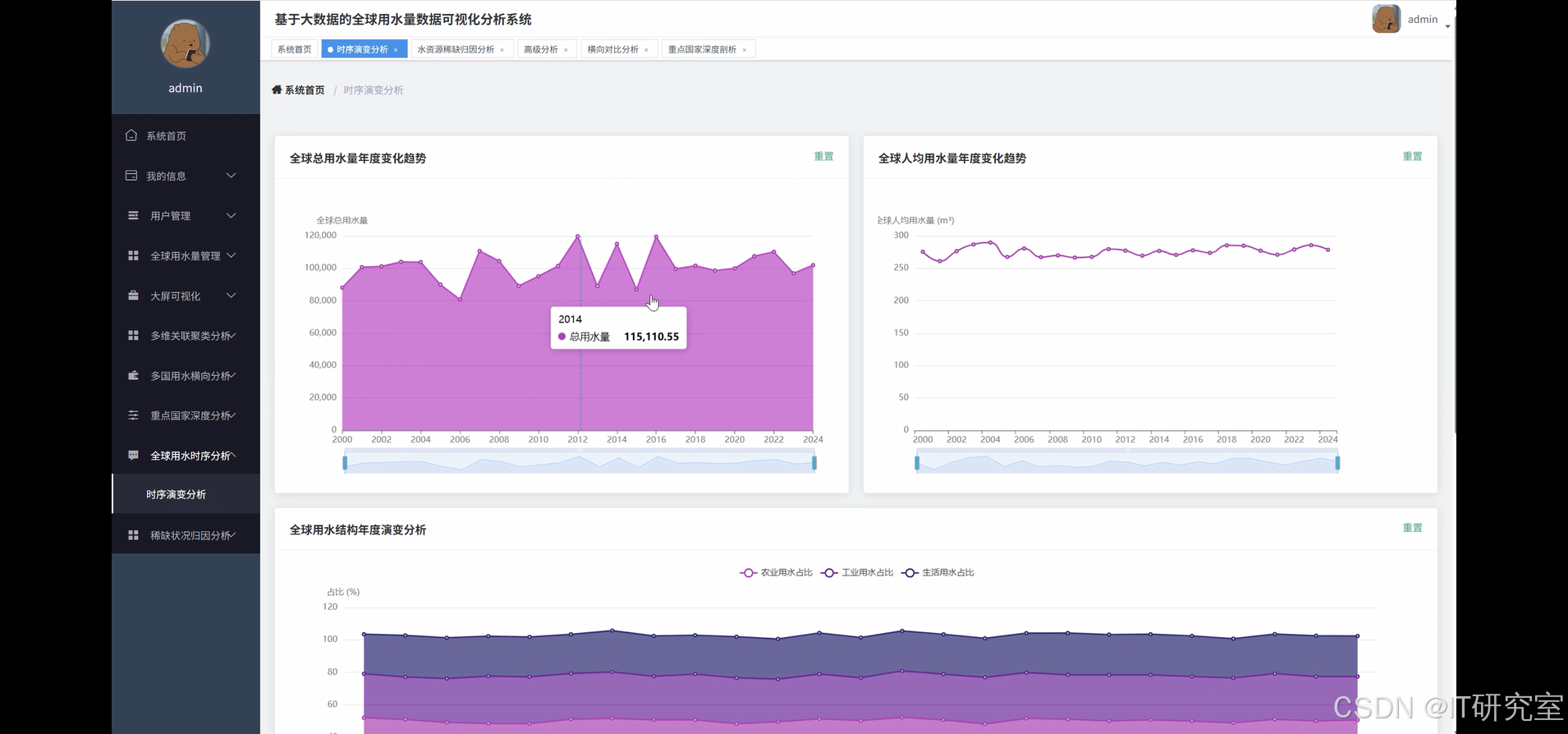

基于大数据的全球用水量数据可视化分析系统是一个集数据采集、存储、处理、分析和可视化展示于一体的综合性水资源管理平台。该系统采用Hadoop+Spark大数据处理框架作为核心技术架构,充分利用HDFS分布式存储和Spark分布式计算的优势,实现对全球海量用水数据的高效处理。系统支持Python/Java语言开发模式,后端采用Django、Spring Boot框架,前端基于Vue+ElementUI构建现代化用户界面,集成Echarts图表库实现丰富的数据可视化效果。通过Spark SQL进行复杂的数据查询分析,结合Pandas和NumPy进行科学计算,系统能够从多个维度对全球用水数据进行深度挖掘,包括多维关联聚类分析、横向对比分析、时序演变分析、水资源稀缺归因分析等功能模块,为用户提供直观清晰的数据洞察和决策支持。

选题背景

当前全球面临着日益严峻的水资源挑战,人口增长、工业发展和气候变化等因素导致各地区用水需求快速增长,水资源供需矛盾日趋突出。传统的水资源管理方式往往依赖于局部数据和经验判断,缺乏全局性的数据支撑和科学分析手段。随着物联网技术的普及和传感器设备的广泛部署,全球各地产生了大量的用水监测数据,这些数据具有体量大、类型多、时效性强等特点,传统的数据处理方法已经无法满足实际需求。同时,水资源管理部门迫切需要一套能够整合多源异构数据、提供实时分析能力的智能化平台,来支撑水资源的科学配置和可持续利用。在这样的背景下,运用大数据技术构建全球用水量数据分析系统,对于提升水资源管理的智能化水平具有重要的现实需求。

选题意义

本系统的建设具有多方面的实际意义,能够为水资源管理领域带来切实的改进和提升。通过构建统一的数据分析平台,系统可以帮助相关部门更好地掌握全球用水格局和变化趋势,为制定合理的水资源政策提供数据参考。在技术层面,系统将大数据处理技术与水资源管理实际需求相结合,验证了Hadoop和Spark等技术在环境科学领域的应用效果,为类似系统的开发提供了技术参考。对于学术研究而言,系统产生的分析结果可以为水资源相关的科研工作提供数据支撑,推动相关理论和方法的发展。虽然作为毕业设计项目,系统的规模和影响范围相对有限,但在实际应用中仍能为小范围的水资源管理实践提供一定的技术支持,特别是在数据可视化和趋势分析方面,能够帮助管理人员更直观地理解复杂的用水数据,提高决策的科学性和准确性。

二、开发环境

- 大数据框架:Hadoop+Spark(本次没用Hive,支持定制)

- 开发语言:Python+Java(两个版本都支持)

- 后端框架:Django+Spring Boot(Spring+SpringMVC+Mybatis)(两个版本都支持)

- 前端:Vue+ElementUI+Echarts+HTML+CSS+JavaScript+jQuery

- 详细技术点:Hadoop、HDFS、Spark、Spark SQL、Pandas、NumPy

- 数据库:MySQL

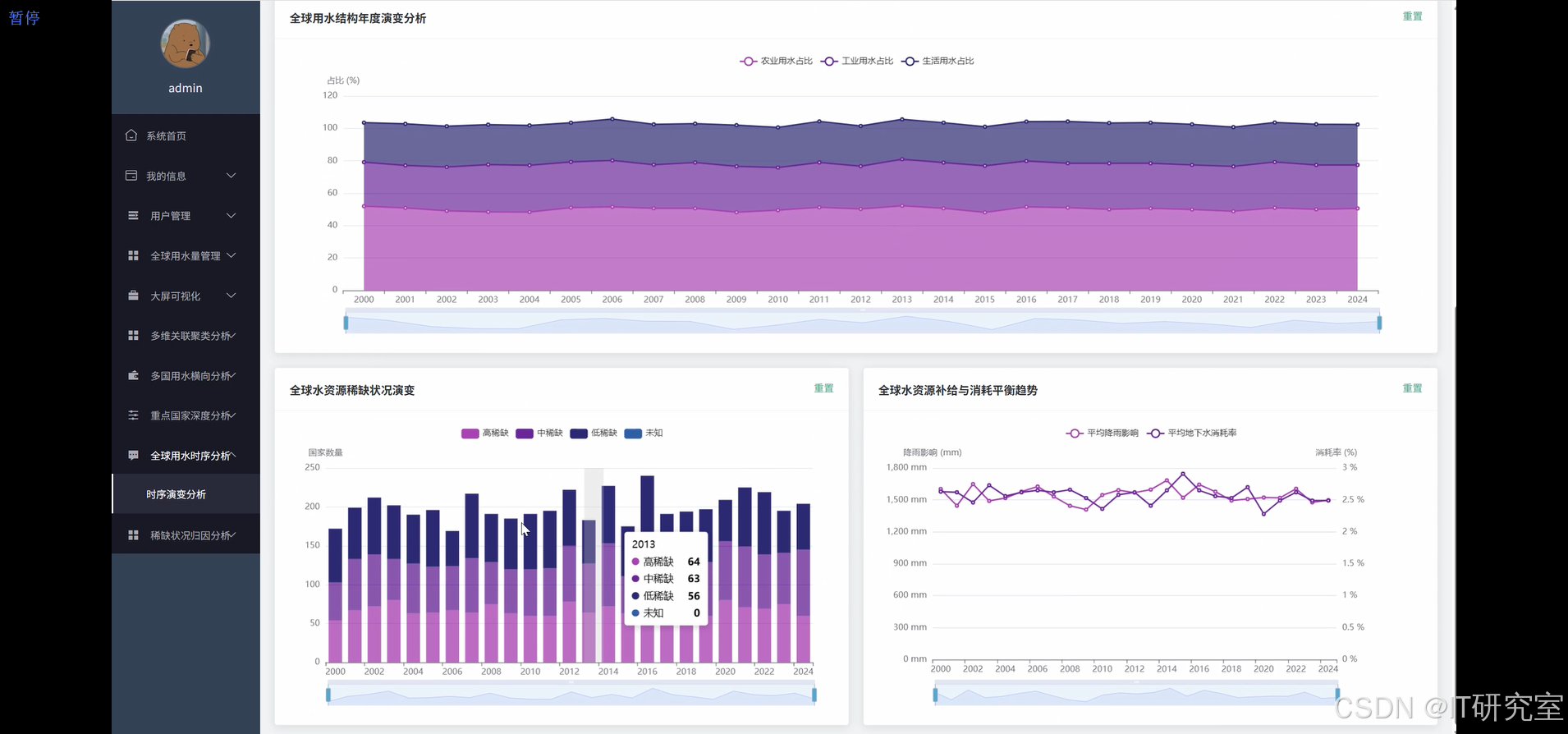

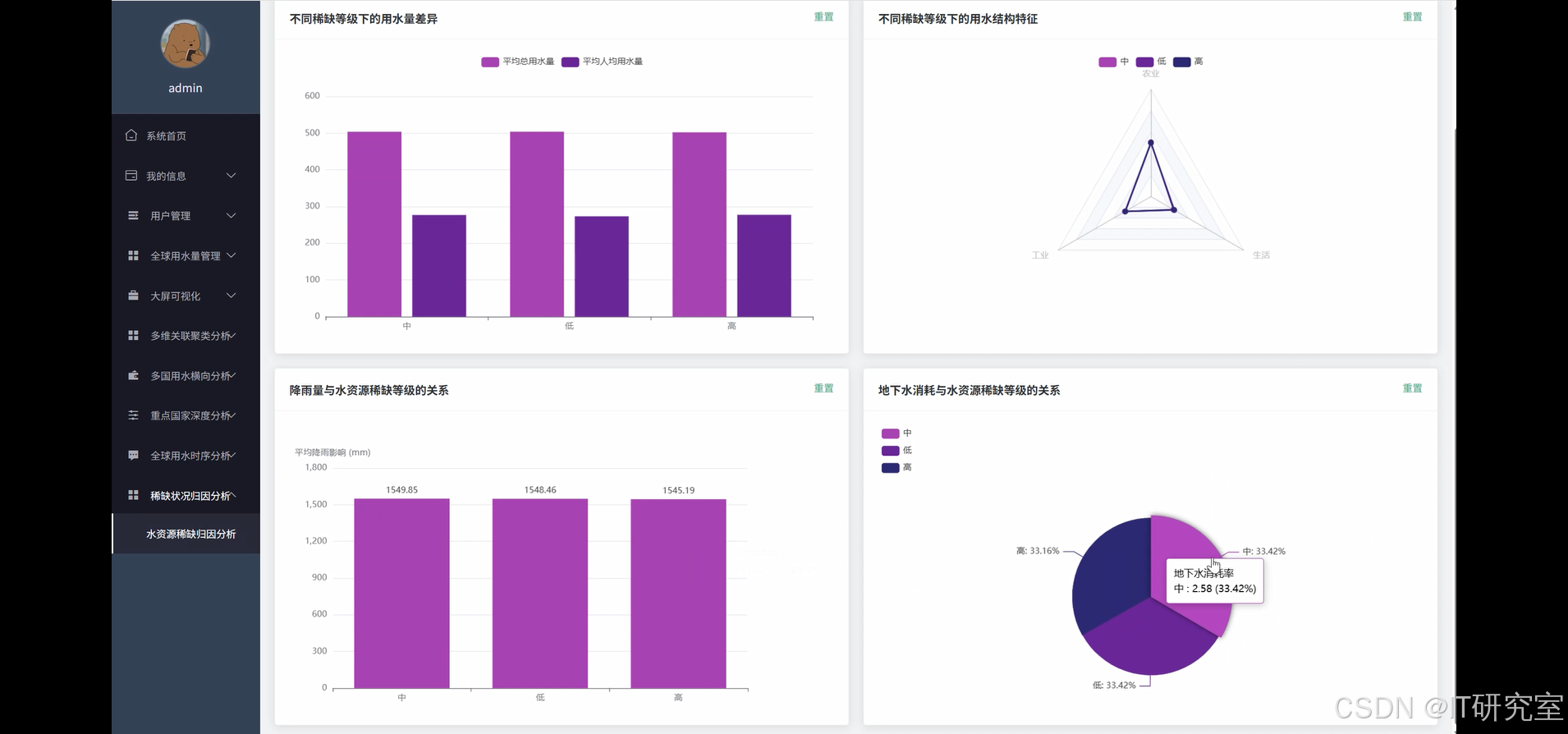

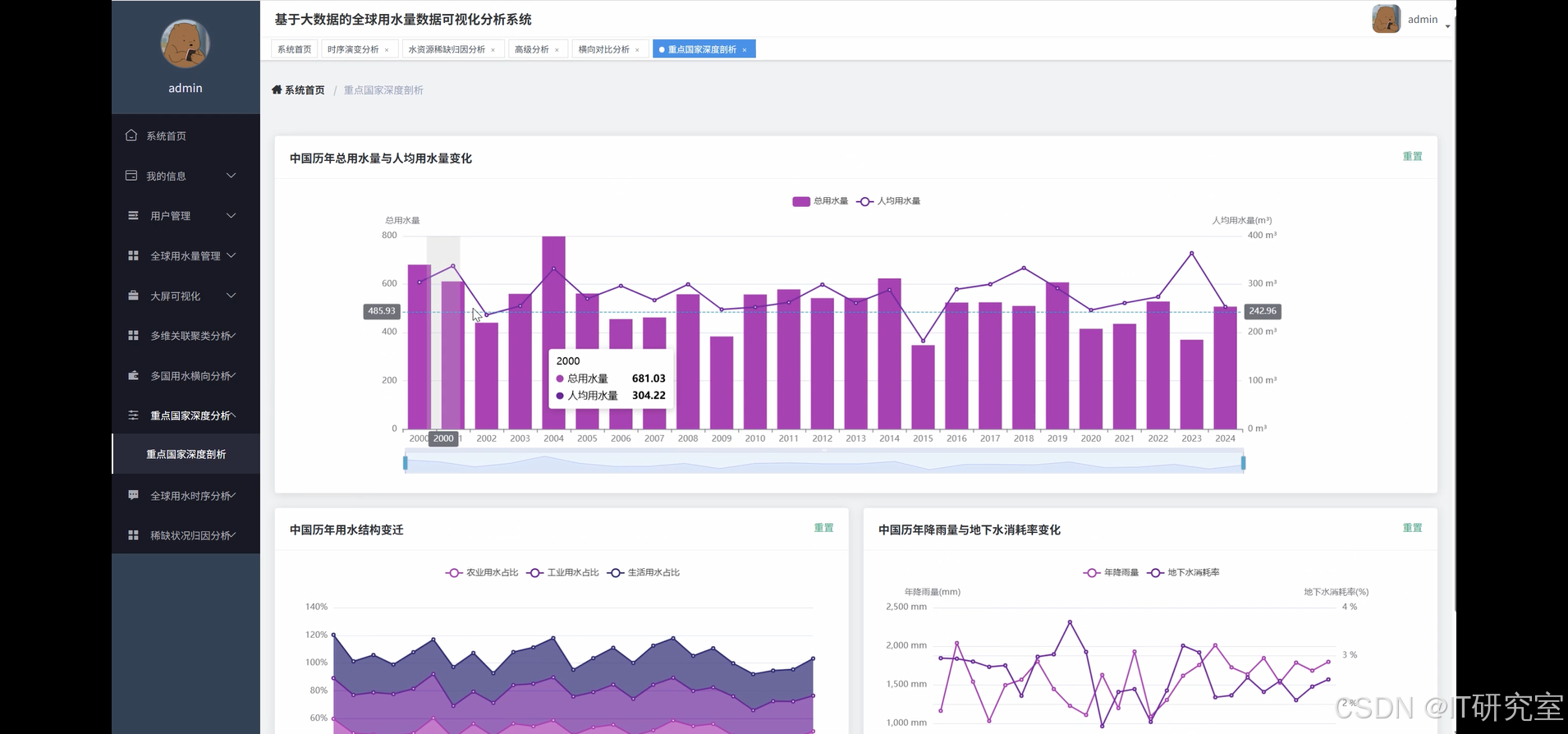

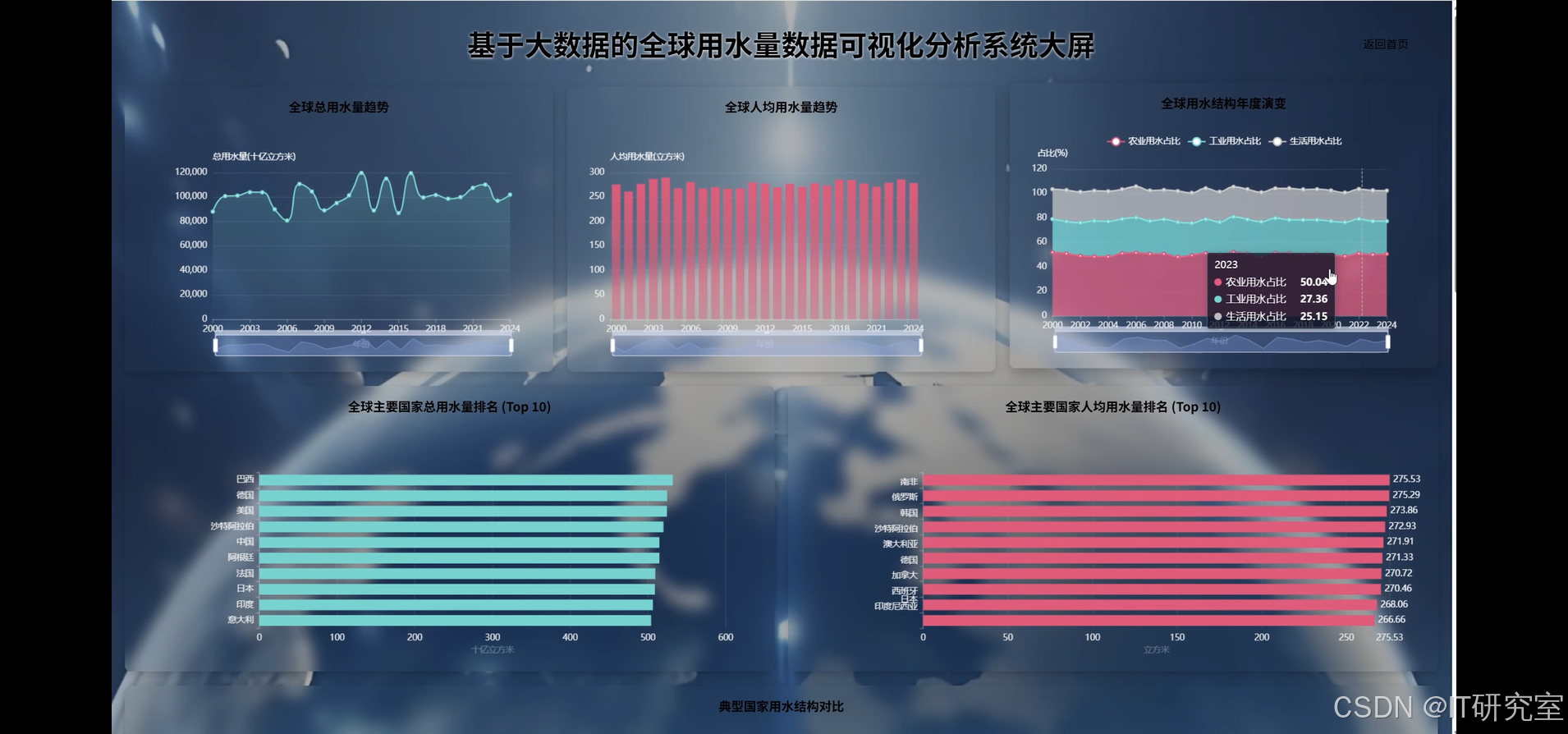

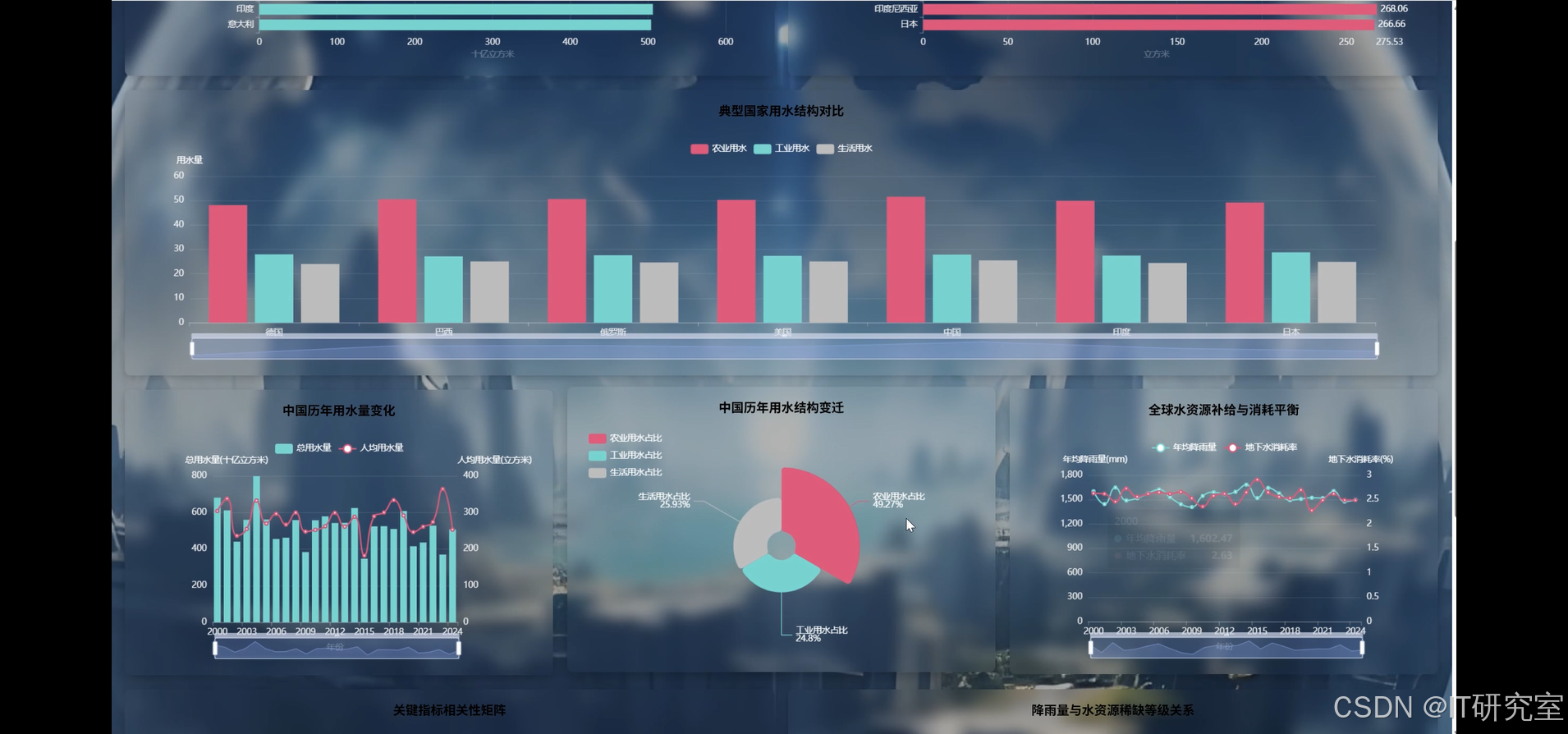

三、系统界面展示

- 基于大数据的全球用水量数据可视化分析系统界面展示:

四、代码参考

- 项目实战代码参考:

java(贴上部分代码)

from pyspark.sql import SparkSession

from pyspark.sql.functions import col, sum, avg, count, when, isnan, isnull, corr, window, lag

from pyspark.sql.types import StructType, StructField, StringType, DoubleType, IntegerType, TimestampType

from pyspark.ml.clustering import KMeans

from pyspark.ml.feature import VectorAssembler, StandardScaler

from pyspark.ml.stat import Correlation

import pandas as pd

import numpy as np

from datetime import datetime, timedelta

import json

spark = SparkSession.builder.appName("GlobalWaterUsageAnalysis").config("spark.sql.adaptive.enabled", "true").config("spark.sql.adaptive.coalescePartitions.enabled", "true").getOrCreate()

def multi_dimensional_clustering_analysis(country_data, usage_data, climate_data):

water_df = spark.read.parquet("hdfs://namenode:9000/water_usage/global_data")

climate_df = spark.read.parquet("hdfs://namenode:9000/climate_data/global_climate")

economic_df = spark.read.parquet("hdfs://namenode:9000/economic_data/gdp_population")

joined_df = water_df.join(climate_df, ["country", "year"], "inner").join(economic_df, ["country", "year"], "inner")

feature_columns = ["total_usage", "agricultural_usage", "industrial_usage", "domestic_usage", "precipitation", "temperature", "gdp_per_capita", "population_density"]

cleaned_df = joined_df.na.fill(0).filter(col("total_usage") > 0)

for column in feature_columns:

Q1 = cleaned_df.approxQuantile(column, [0.25], 0.05)[0]

Q3 = cleaned_df.approxQuantile(column, [0.75], 0.05)[0]

IQR = Q3 - Q1

lower_bound = Q1 - 1.5 * IQR

upper_bound = Q3 + 1.5 * IQR

cleaned_df = cleaned_df.filter((col(column) >= lower_bound) & (col(column) <= upper_bound))

assembler = VectorAssembler(inputCols=feature_columns, outputCol="features")

feature_df = assembler.transform(cleaned_df)

scaler = StandardScaler(inputCol="features", outputCol="scaled_features", withStd=True, withMean=True)

scaler_model = scaler.fit(feature_df)

scaled_df = scaler_model.transform(feature_df)

kmeans = KMeans(k=5, seed=42, featuresCol="scaled_features", predictionCol="cluster")

model = kmeans.fit(scaled_df)

clustered_df = model.transform(scaled_df)

cluster_summary = clustered_df.groupBy("cluster").agg(

count("*").alias("country_count"),

avg("total_usage").alias("avg_total_usage"),

avg("agricultural_usage").alias("avg_agricultural_usage"),

avg("industrial_usage").alias("avg_industrial_usage"),

avg("domestic_usage").alias("avg_domestic_usage"),

avg("precipitation").alias("avg_precipitation"),

avg("temperature").alias("avg_temperature"),

avg("gdp_per_capita").alias("avg_gdp_per_capita")

).orderBy("cluster")

correlation_matrix = Correlation.corr(scaled_df, "scaled_features").head()[0].toArray()

correlation_results = {}

for i, col1 in enumerate(feature_columns):

for j, col2 in enumerate(feature_columns):

if i < j:

correlation_results[f"{col1}_{col2}"] = float(correlation_matrix[i][j])

cluster_countries = clustered_df.select("country", "cluster", "total_usage", "year").groupBy("cluster", "country").agg(avg("total_usage").alias("avg_usage")).orderBy("cluster", col("avg_usage").desc())

return {

"cluster_summary": cluster_summary.toPandas().to_dict("records"),

"correlation_matrix": correlation_results,

"cluster_countries": cluster_countries.toPandas().to_dict("records"),

"model_centers": [center.tolist() for center in model.clusterCenters()]

}

def time_series_evolution_analysis(start_year, end_year, countries_list):

usage_df = spark.read.parquet("hdfs://namenode:9000/water_usage/time_series_data")

filtered_df = usage_df.filter((col("year") >= start_year) & (col("year") <= end_year))

if countries_list:

filtered_df = filtered_df.filter(col("country").isin(countries_list))

global_trend = filtered_df.groupBy("year").agg(

sum("total_usage").alias("global_total_usage"),

sum("agricultural_usage").alias("global_agricultural_usage"),

sum("industrial_usage").alias("global_industrial_usage"),

sum("domestic_usage").alias("global_domestic_usage"),

avg("per_capita_usage").alias("avg_per_capita_usage")

).orderBy("year")

window_spec = Window.partitionBy().orderBy("year")

trend_df = global_trend.withColumn("prev_total_usage", lag("global_total_usage").over(window_spec))

trend_df = trend_df.withColumn("growth_rate",

when(col("prev_total_usage").isNotNull() & (col("prev_total_usage") != 0),

((col("global_total_usage") - col("prev_total_usage")) / col("prev_total_usage") * 100))

.otherwise(0))

seasonal_analysis = filtered_df.groupBy("year", "month").agg(

avg("total_usage").alias("monthly_avg_usage"),

sum("total_usage").alias("monthly_total_usage")

).orderBy("year", "month")

country_trends = filtered_df.groupBy("country", "year").agg(

sum("total_usage").alias("country_total_usage"),

avg("per_capita_usage").alias("country_per_capita_usage")

).orderBy("country", "year")

country_window = Window.partitionBy("country").orderBy("year")

country_trends = country_trends.withColumn("prev_usage", lag("country_total_usage").over(country_window))

country_trends = country_trends.withColumn("country_growth_rate",

when(col("prev_usage").isNotNull() & (col("prev_usage") != 0),

((col("country_total_usage") - col("prev_usage")) / col("prev_usage") * 100))

.otherwise(0))

usage_structure_evolution = filtered_df.groupBy("year").agg(

(sum("agricultural_usage") / sum("total_usage") * 100).alias("agricultural_percentage"),

(sum("industrial_usage") / sum("total_usage") * 100).alias("industrial_percentage"),

(sum("domestic_usage") / sum("total_usage") * 100).alias("domestic_percentage")

).orderBy("year")

volatility_analysis = country_trends.groupBy("country").agg(

avg("country_growth_rate").alias("avg_growth_rate"),

stddev("country_growth_rate").alias("growth_volatility"),

max("country_total_usage").alias("peak_usage"),

min("country_total_usage").alias("min_usage")

).orderBy(col("growth_volatility").desc())

return {

"global_trend": global_trend.toPandas().to_dict("records"),

"trend_with_growth": trend_df.toPandas().to_dict("records"),

"seasonal_patterns": seasonal_analysis.toPandas().to_dict("records"),

"country_trends": country_trends.toPandas().to_dict("records"),

"usage_structure": usage_structure_evolution.toPandas().to_dict("records"),

"volatility_metrics": volatility_analysis.toPandas().to_dict("records")

}

def water_scarcity_attribution_analysis(region_filter, scarcity_threshold):

water_df = spark.read.parquet("hdfs://namenode:9000/water_usage/comprehensive_data")

resource_df = spark.read.parquet("hdfs://namenode:9000/water_resources/availability_data")

infrastructure_df = spark.read.parquet("hdfs://namenode:9000/infrastructure/water_systems")

comprehensive_df = water_df.join(resource_df, ["country", "year"], "inner").join(infrastructure_df, ["country", "year"], "inner")

if region_filter:

comprehensive_df = comprehensive_df.filter(col("region").isin(region_filter))

scarcity_df = comprehensive_df.withColumn("water_stress_index",

col("total_usage") / col("renewable_water_resources"))

scarcity_df = scarcity_df.withColumn("scarcity_level",

when(col("water_stress_index") >= scarcity_threshold, "High")

.when(col("water_stress_index") >= scarcity_threshold * 0.7, "Medium")

.otherwise("Low"))

scarcity_countries = scarcity_df.filter(col("scarcity_level") == "High")

demand_factors = scarcity_countries.withColumn("population_pressure",

col("population_growth_rate") * col("population_density") / 100)

demand_factors = demand_factors.withColumn("economic_pressure",

col("gdp_growth_rate") * col("industrial_water_intensity") / 100)

demand_factors = demand_factors.withColumn("agricultural_pressure",

col("agricultural_land_percentage") * col("irrigation_efficiency_inverse") / 100)

supply_factors = scarcity_countries.withColumn("climate_impact",

when(col("precipitation_change") < -10, 3)

.when(col("precipitation_change") < 0, 2)

.otherwise(1))

supply_factors = supply_factors.withColumn("infrastructure_adequacy",

col("water_storage_capacity") / col("total_demand"))

supply_factors = supply_factors.withColumn("resource_depletion",

when(col("groundwater_depletion_rate") > 5, 3)

.when(col("groundwater_depletion_rate") > 2, 2)

.otherwise(1))

attribution_scores = supply_factors.withColumn("demand_score",

(col("population_pressure") + col("economic_pressure") + col("agricultural_pressure")) / 3)

attribution_scores = attribution_scores.withColumn("supply_score",

(col("climate_impact") + col("infrastructure_adequacy") + col("resource_depletion")) / 3)

attribution_scores = attribution_scores.withColumn("primary_cause",

when(col("demand_score") > col("supply_score"), "Demand-driven")

.otherwise("Supply-constrained"))

regional_attribution = attribution_scores.groupBy("region", "primary_cause").agg(

count("*").alias("country_count"),

avg("water_stress_index").alias("avg_stress_index"),

avg("demand_score").alias("avg_demand_score"),

avg("supply_score").alias("avg_supply_score")

).orderBy("region", "primary_cause")

factor_correlation = attribution_scores.select(

corr("population_pressure", "water_stress_index").alias("population_correlation"),

corr("economic_pressure", "water_stress_index").alias("economic_correlation"),

corr("agricultural_pressure", "water_stress_index").alias("agricultural_correlation"),

corr("climate_impact", "water_stress_index").alias("climate_correlation"),

corr("infrastructure_adequacy", "water_stress_index").alias("infrastructure_correlation")

).collect()[0]

mitigation_priority = attribution_scores.withColumn("mitigation_urgency",

col("water_stress_index") * col("population_density") / 1000)

priority_ranking = mitigation_priority.select(

"country", "water_stress_index", "primary_cause", "mitigation_urgency",

"demand_score", "supply_score"

).orderBy(col("mitigation_urgency").desc())

return {

"scarcity_overview": scarcity_df.groupBy("scarcity_level").count().toPandas().to_dict("records"),

"attribution_analysis": attribution_scores.toPandas().to_dict("records"),

"regional_patterns": regional_attribution.toPandas().to_dict("records"),

"factor_correlations": factor_correlation.asDict(),

"priority_countries": priority_ranking.limit(20).toPandas().to_dict("records")

}五、系统视频

基于大数据的全球用水量数据可视化分析系统项目视频:

大数据毕业设计选题推荐-基于大数据的全球用水量数据可视化分析系统-大数据-Spark-Hadoop-Bigdata

结语

大数据毕业设计选题推荐-基于大数据的全球用水量数据可视化分析系统-大数据-Spark-Hadoop-Bigdata

想看其他类型的计算机毕业设计作品也可以和我说~谢谢大家!

有技术这一块问题大家可以评论区交流或者私我~

大家可以帮忙点赞、收藏、关注、评论啦~

源码获取:⬇⬇⬇