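In parts (30) and (31), we set up DPDK and Hyperscan development environments for building network stream-processing programs. Once such a program has extracted metadata from network traffic, the metadata can be loaded into a database (ClickHouse, for example) and analyzed interactively (OLAP) with DBeaver or Spark. Before that, however, we need a Kafka-to-ClickHouse pipeline. DPDK-based stream processing has strict real-time requirements, whereas ClickHouse ingests best when data arrives in batches of roughly ten thousand rows. Kafka therefore sits in between as a buffer and relay. (A DPDK ring could serve as the buffer too, but it may cause jitter and packet loss in the DPDK processing threads.)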

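It has been almost a year since I last went through this kind of environment setup. That is the downside of free Linux distributions and tools: I had only just moved from CentOS 7 to Stream 8, and now Stream 8 mirrors are hard to find, while Docker can no longer reach Docker Hub even occasionally, as it once could. So this post starts everything from scratch.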

I. Environment Preparation on CentOS Stream 8

1. Change the Mirror Repositories

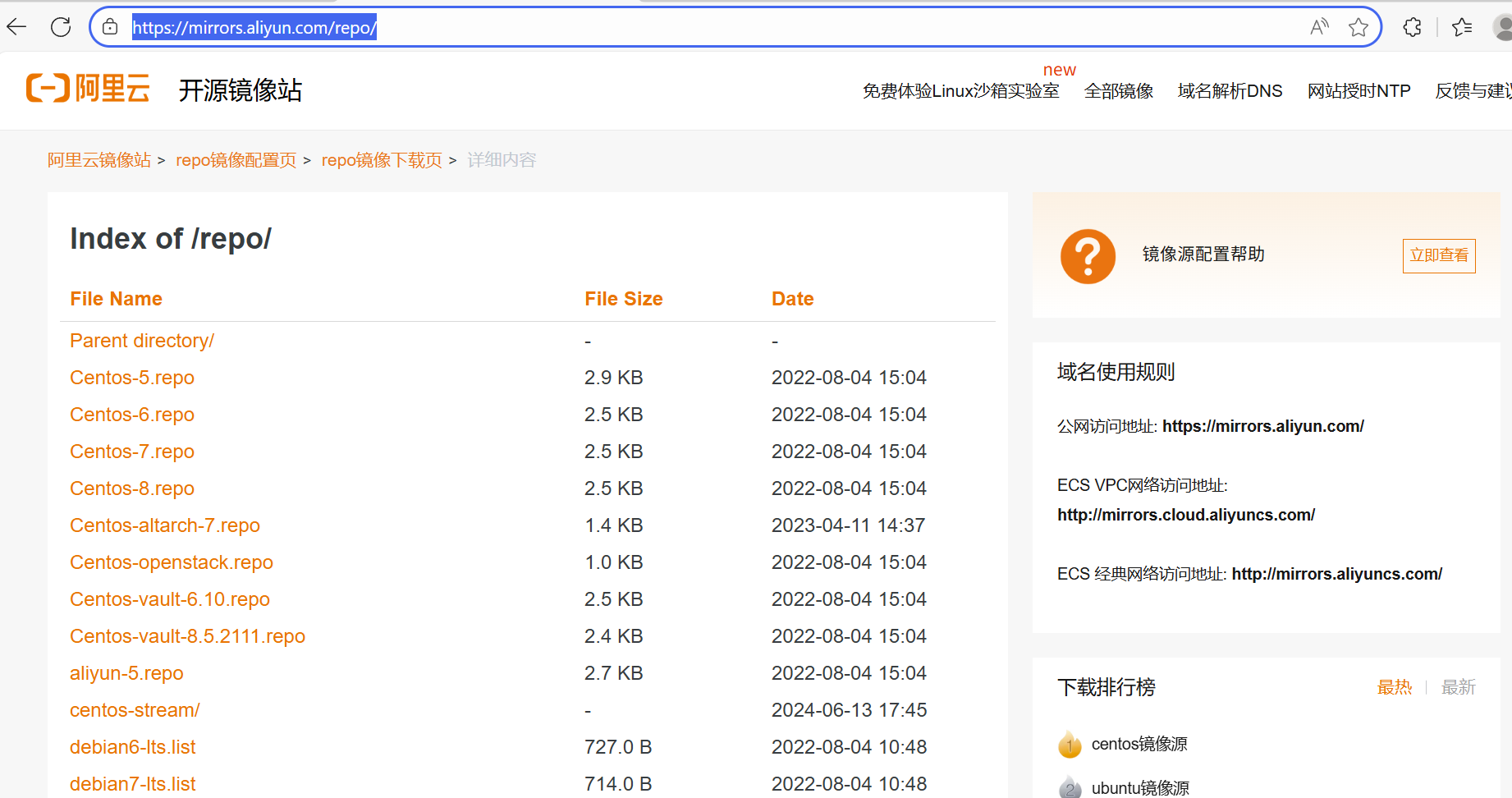

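So far we have mostly used CentOS Stream 8, but it has reached end of life and is no longer maintained by the community, so the mirrors we switched to earlier have all stopped working... I did try a newer release, but I really could not stand that octopus desktop. So I went looking for archived mirrors instead; fortunately, the Alibaba Cloud developer community still keeps a fairly complete set.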

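The page https://developer.aliyun.com/mirror/centos provides links to Alibaba Cloud repo files that replace the system's own repo files in /etc/yum.repos.d.

Delete the official repo files (or back them up first), then go straight to the repo address:

Download all the files into /etc/yum.repos.d: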

cd /etc/yum.repos.d

mkdir bak

mv *.repo bak

wget https://mirrors.aliyun.com/repo/centos-stream/8/CentOS-Stream-AppStream.repo

wget https://mirrors.aliyun.com/repo/centos-stream/8/CentOS-Stream-BaseOS.repo

wget https://mirrors.aliyun.com/repo/centos-stream/8/CentOS-Stream-Debuginfo.repo

wget https://mirrors.aliyun.com/repo/centos-stream/8/CentOS-Stream-Extras-common.repo

wget https://mirrors.aliyun.com/repo/centos-stream/8/CentOS-Stream-Extras.repo

wget https://mirrors.aliyun.com/repo/centos-stream/8/CentOS-Stream-HighAvailability.repo

wget https://mirrors.aliyun.com/repo/centos-stream/8/CentOS-Stream-Media.repo

wget https://mirrors.aliyun.com/repo/centos-stream/8/CentOS-Stream-NFV.repo

wget https://mirrors.aliyun.com/repo/centos-stream/8/CentOS-Stream-PowerTools.repo

wget https://mirrors.aliyun.com/repo/centos-stream/8/CentOS-Stream-RealTime.repo

wget https://mirrors.aliyun.com/repo/centos-stream/8/CentOS-Stream-ResilientStorage.repo

wget https://mirrors.aliyun.com/repo/centos-stream/8/CentOS-Stream-Sources.repo

yum clean all

yum makecache

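yum update

In short: once the repo files are downloaded, running yum clean all && yum makecache is all it takes.

yum update is optional; updating carries some risk of version incompatibilities, although I did not run into any.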
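The twelve wget calls above differ only in the repo name, so a loop can generate them. The sketch below is a hypothetical helper (stream8_repo_urls) that assumes Aliyun keeps the https://mirrors.aliyun.com/repo/centos-stream/8/ layout shown above; it only prints the URLs, so nothing changes until you hand them to wget yourself.

```bash
#!/bin/sh
# Hypothetical helper: print the Aliyun CentOS Stream 8 repo file URLs listed above.
# Assumes the mirror keeps this path layout; nothing is downloaded here.
BASE_URL="https://mirrors.aliyun.com/repo/centos-stream/8"

stream8_repo_urls() {
    for name in AppStream BaseOS Debuginfo Extras-common Extras \
                HighAvailability Media NFV PowerTools RealTime \
                ResilientStorage Sources; do
        printf '%s/CentOS-Stream-%s.repo\n' "$BASE_URL" "$name"
    done
}

# Dry run: list what would be fetched.
stream8_repo_urls
```

To apply it as root, something like cd /etc/yum.repos.d && mkdir -p bak && mv *.repo bak/ && stream8_repo_urls | xargs -n 1 wget -q, followed by yum clean all && yum makecache, should be equivalent to the manual steps.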

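2. Configure the Network

Configuring the network from the command line is mainly groundwork for using containers later. As described in an earlier post, on CentOS 7 you could still set the IP address and related settings by editing the configuration file and running systemctl restart network: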

[root@localhost etc]# cat /etc/sysconfig/network-scripts/ifcfg-ens160

TYPE=Ethernet

PROXY_METHOD=none

BROWSER_ONLY=no

BOOTPROTO=static # change to a static IP

IPADDR=192.168.76.11 # add the IP address

DEFROUTE=yes

IPV4_FAILURE_FATAL=no

IPV6INIT=yes

IPV6_AUTOCONF=yes

IPV6_DEFROUTE=yes

IPV6_FAILURE_FATAL=no

NAME=ens160

DEVICE=ens160

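ONBOOT=yes # bring the interface up at boot

In CentOS Stream 8, however, network services are managed by NetworkManager. Stopping and restarting the NetworkManager service (mind the capitalization; if you get it wrong, tab completion will not find it) toggles the small icon in the upper-right corner of the GUI, which shows whether the network service is up.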

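The official recommendation, however, is to use the nmcli tool:

As shown below, before the network service is brought up, ifconfig shows the interface with no address configured. Activate it with nmcli connection up followed by the interface name, and run nmcli connection modify with the interface name and connection.autoconnect yes so that the connection comes up automatically at boot.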

[root@localhost etc]# ifconfig

ens160: flags=<UP,BROADCAST,RUNNING,MULTICAST> mtu 1500

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 0 bytes 0 (0.0 B)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

...... ......

[root@localhost etc]# nmcli connection up ens160

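Connection successfully activated (D-Bus active path: /org/freedesktop/NetworkManager/ActiveConnection/3)

[root@localhost etc]# nmcli con mod ens160 connection.autoconnect yes

Once NetworkManager is running, the interface has been assigned an IP address. Usually we want a static address instead, to simplify the cluster configuration later. This is again done with nmcli connection modify, by setting the ipv4.addresses property (the command below uses the shorter ipv4.address, which nmcli accepted here).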

[root@localhost etc]# ifconfig

ens160: flags=<UP,BROADCAST,RUNNING,MULTICAST> mtu 1500

inet 192.168.76.128 netmask 255.255.255.0 broadcast 192.168.76.255

RX packets 1049 bytes 78090 (76.2 KiB)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 88 bytes 10624 (10.3 KiB)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

...... ......

[root@localhost network-scripts]# nmcli connection modify ens160 ipv4.address 192.168.76.11

[root@localhost network-scripts]# ifconfig

ens160: flags=<UP,BROADCAST,RUNNING,MULTICAST> mtu 1500

inet 192.168.76.128 netmask 255.255.255.0 broadcast 192.168.76.255

RX packets 352 bytes 51066 (49.8 KiB)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 285 bytes 35771 (34.9 KiB)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

...... ......

[root@localhost network-scripts]# nmcli connection down ens160

Connection 'ens160' successfully deactivated (D-Bus active path: /org/freedesktop/NetworkManager/ActiveConnection/6)

[root@localhost network-scripts]# nmcli connection up ens160

Connection successfully activated (D-Bus active path: /org/freedesktop/NetworkManager/ActiveConnection/7)

ens160: flags=<UP,BROADCAST,RUNNING,MULTICAST> mtu 1500

inet 192.168.76.11 netmask 255.255.255.255 broadcast 0.0.0.0

RX packets 406 bytes 62225 (60.7 KiB)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 340 bytes 42599 (41.6 KiB)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

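...... ......

This change does not seem to apply everywhere, though: the network settings in the GUI still show the old values (presumably the GUI reads them at system startup, and nmcli modify does not sync with it). If they really are out of sync, simply rebooting the whole system is a perfectly acceptable fix. Also note the netmask 255.255.255.255 in the last output: because the address was given without a prefix length, NetworkManager assigned it as a /32, so it is better to write 192.168.76.11/24.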
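A fully static setup with NetworkManager typically sets the prefix length, ipv4.method manual (with the default auto method, static addresses are only added alongside the DHCP lease), a gateway, and DNS. The following dry-run sketch just prints the nmcli commands for review; static_ip_cmds is a hypothetical helper, and 192.168.76.2 as gateway and DNS is an assumption (a common VMware NAT default), not a value taken from this setup.

```bash
#!/bin/sh
# Hypothetical helper: print (not run) nmcli commands for a static IPv4 setup.
# Arguments: connection name, address/prefix, gateway, DNS server.
static_ip_cmds() {
    con=$1 addr=$2 gw=$3 dns=$4
    # ipv4.method manual turns DHCP off; pass the address with an explicit /prefix.
    echo "nmcli connection modify $con ipv4.method manual ipv4.addresses $addr ipv4.gateway $gw ipv4.dns $dns connection.autoconnect yes"
    # Re-activate the connection so the running interface picks up the change.
    echo "nmcli connection down $con"
    echo "nmcli connection up $con"
}

# Assumed values: 192.168.76.2 as gateway and DNS is a guess (VMware NAT default).
static_ip_cmds ens160 192.168.76.11/24 192.168.76.2 192.168.76.2
```

Piping the output into sh would apply it; since the address changes, this is best done from the VM console rather than over SSH.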

3. Set the Hostname and hosts

[root@localhost etc]# hostnamectl set-hostname node1

Editing /etc/hostname directly also works; for example, use sed to replace its first (and only) line:

[root@bogon etc]# cat /etc/hostname

localhost.localdomain

[root@bogon etc]# sed -i '1c node2' /etc/hostname

[root@bogon etc]# cat /etc/hostname

node2

[root@bogon etc]# hostname

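node2

Then add the IP-to-hostname mappings to /etc/hosts on every node. Although there are only 4 nodes, a loop is used here to add all of them, since you never know when you might need to do this on a cluster of hundreds or thousands of machines, where typing each entry by hand would be unbearable.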
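The hosts loop below appends unconditionally, so running it a second time duplicates every entry. A hypothetical idempotent variant, add_host_entries, checks each line with grep -qxF before appending. It keeps the article's 192.168.76.1N / nodeN numbering, which only fits N up to 9, and the demo runs against a temporary file rather than /etc/hosts.

```bash
#!/bin/sh
# Hypothetical helper: append "192.168.76.1N nodeN" for N=1..count to a hosts
# file, skipping entries that are already present, so re-running is harmless.
add_host_entries() {
    file=$1 count=$2 n=1
    while [ "$n" -le "$count" ]; do
        entry="192.168.76.1$n node$n"
        grep -qxF "$entry" "$file" || printf '%s\n' "$entry" >> "$file"
        n=$((n + 1))
    done
}

# Demo on a scratch file instead of the real /etc/hosts.
demo=$(mktemp)
printf '127.0.0.1 localhost\n' > "$demo"
add_host_entries "$demo" 4
add_host_entries "$demo" 4   # second run adds nothing
cat "$demo"
```

On a real node, add_host_entries /etc/hosts 4 (as root) would do the same job as the loop.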

[root@node4 etc]# cat hosts

127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4

::1 localhost localhost.localdomain localhost6 localhost6.localdomain6

[root@node4 etc]# t=1;while(($t<=4));do n=`wc -l hosts|awk -F ' ' '{print $1}'`;sed -i "${n}a 192.168.76.1${t} node${t}" hosts;let "t++";done

[root@node4 etc]# cat hosts

127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4

::1 localhost localhost.localdomain localhost6 localhost6.localdomain6

192.168.76.11 node1

192.168.76.12 node2

192.168.76.13 node3

192.168.76.14 node4

4. Passwordless SSH

The passwordless setup procedure has not changed much.

(1) Generate the key pair

[root@node1 ~]# ssh-keygen -t rsa -P '' -f ~/.ssh/id_rsa

Generating public/private rsa key pair.

Created directory '/root/.ssh'.

Your identification has been saved in /root/.ssh/id_rsa.

Your public key has been saved in /root/.ssh/id_rsa.pub.

The key fingerprint is:

SHA256:root@node1

The key's randomart image is:

+---[RSA 3072]----+

+----[SHA256]-----+

[root@node1 ~]# ls -hl .ssh

total 8.0K

-rw-------. 1 root root 2.6K Nov 8 06:22 id_rsa

-rw-r--r--. 1 root root 564 Nov 8 06:22 id_rsa.pub

(2) Copy the key

[root@node1 ~]# t=1;while(($t<=4));do ssh-copy-id node$t;let "t++";done

The authenticity of host 'node1 (192.168.76.11)' can't be established.

ECDSA key fingerprint is SHA256:

Are you sure you want to continue connecting (yes/no/[fingerprint])? yes

/usr/bin/ssh-copy-id: INFO: attempting to log in with the new key(s), to filter out any that are already installed

/usr/bin/ssh-copy-id: INFO: 1 key(s) remain to be installed -- if you are prompted now it is to install the new keys

root@node1's password:

Number of key(s) added: 1

Now try logging into the machine, with: "ssh 'node1'"

and check to make sure that only the key(s) you wanted were added.

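...... ...... ......

At this point, only passwordless login from node1 to the other nodes works. For passwordless login between any two nodes, the key pair also has to be distributed to every node, and sshpass makes this possible.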
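Instead of sharing one key pair, a full mesh can also be built by letting each node push its own key to every node. A sketch under stated assumptions: the nodes are node1 to node4 with a common root password, sshpass is installed on each, and the password is exported as SSHPASS so that sshpass -e reads it from the environment rather than exposing it on the command line the way -p does. push_key_to_all and the DRY_RUN switch are hypothetical; by default the script only prints commands.

```bash
#!/bin/sh
# Sketch: push this node's key to every node without typing passwords.
# Assumptions: nodes node1..node4 share one root password, exported as SSHPASS;
# sshpass is installed. DRY_RUN=1 (the default) only prints the commands.
NODES="node1 node2 node3 node4"
DRY_RUN=${DRY_RUN:-1}

run() {
    if [ "$DRY_RUN" = 1 ]; then echo "$*"; else "$@"; fi
}

push_key_to_all() {
    # Create a key pair only if this node does not have one yet.
    [ -f "$HOME/.ssh/id_rsa" ] || run ssh-keygen -t rsa -N '' -f "$HOME/.ssh/id_rsa"
    for node in $NODES; do
        # sshpass -e reads the password from $SSHPASS instead of the command line;
        # accept-new trusts a first-seen host key instead of prompting yes/no.
        run sshpass -e ssh-copy-id -o StrictHostKeyChecking=accept-new "root@$node"
    done
}

push_key_to_all
```

For a full mesh, it would be run with DRY_RUN=0 on every node (for instance after copying it over with scp), with SSHPASS exported in that shell.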

(3) Install sshpass

[root@node1 ~]# yum install sshpass -y

Last metadata expiration check: 23:26:36 ago on Fri 07 Nov 2025 07:31:07 AM.

Dependencies resolved.

=======================================================================

............ ............ ............

Installed:

sshpass-1.09-4.el8.x86_64

(4) Copy keys in batch

[root@node1 ~]# t=1;while(($t<=4));do sshpass -p password ssh-copy-id node$t;let "t++";done

/usr/bin/ssh-copy-id: INFO: attempting to log in with the new key(s), to filter out any that are already installed

/usr/bin/ssh-copy-id: INFO: 1 key(s) remain to be installed -- if you are prompted now it is to install the new keys

Number of key(s) added: 1

Now try logging into the machine, with: "ssh 'node1'"

and check to make sure that only the key(s) you wanted were added.

...... ...... ......

/usr/bin/ssh-copy-id: INFO: attempting to log in with the new key(s), to filter out any that are already installed

/usr/bin/ssh-copy-id: INFO: 1 key(s) remain to be installed -- if you are prompted now it is to install the new keys

Number of key(s) added: 1

Now try logging into the machine, with: "ssh 'node4'"

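and check to make sure that only the key(s) you wanted were added.

Compared with this more "official" approach, though, I still find the method I used before, editing the .ssh directory directly and distributing it, to be simpler.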
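The ".ssh directory" approach mentioned above can be sketched as follows: node1 authorizes its own public key, then copies its whole ~/.ssh to the other nodes, so every node holds the same key pair and the same authorized_keys. This assumes steps (1) and (2) have already run on node1 and uses this article's node names; share_ssh_dir and DRY_RUN are hypothetical. The trade-off is that every node ends up with the same private key, which is convenient for a lab cluster but widens the impact if any one node is compromised.

```bash
#!/bin/sh
# Dry-run sketch of distributing node1's ~/.ssh to the other nodes.
# Assumptions: run as root on node1 after steps (1)-(2); node names follow this
# article. DRY_RUN=1 (the default) only prints the commands.
OTHERS="node2 node3 node4"
DRY_RUN=${DRY_RUN:-1}

run() {
    if [ "$DRY_RUN" = 1 ]; then echo "$*"; else "$@"; fi
}

share_ssh_dir() {
    # Authorize node1's own public key (once), so that the shared key pair
    # is accepted by every node that receives this authorized_keys.
    run sh -c 'grep -qxF "$(cat ~/.ssh/id_rsa.pub)" ~/.ssh/authorized_keys 2>/dev/null || cat ~/.ssh/id_rsa.pub >> ~/.ssh/authorized_keys'
    run chmod 600 "$HOME/.ssh/authorized_keys"
    for node in $OTHERS; do
        # -p keeps the restrictive permissions that sshd expects on ~/.ssh.
        run scp -rp "$HOME/.ssh" "root@$node:"
    done
}

share_ssh_dir
```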

5. 安装JAVA环境

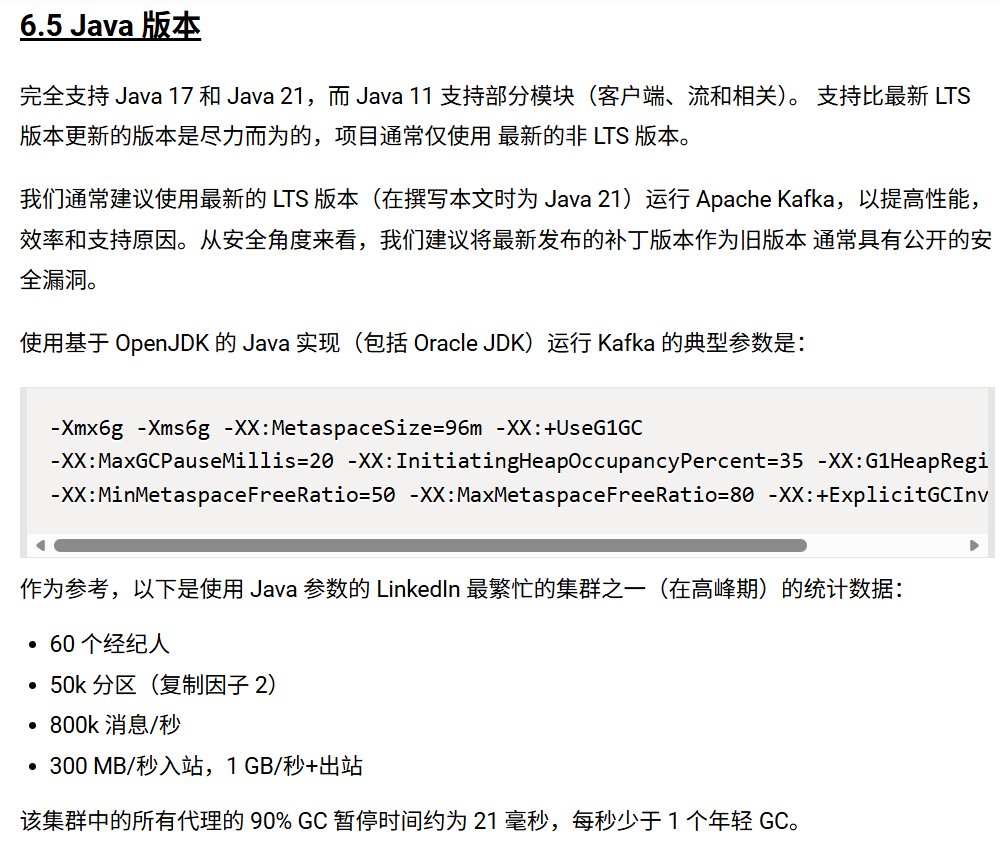

从官网上显示的信息可知,新版本的kafka已经不支持java 8了,需要安装java11以上的版本:

而且似乎使用java21要更保险一点:
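Before installing, it may be worth checking which java is already on PATH. In this sketch, java_major is a hypothetical helper that extracts the major version from both the old 1.8.0_xxx and the newer 21.0.x formats; the check compares it with 17, the broker minimum as of Kafka 4.0.

```bash
#!/bin/sh
# Hypothetical helper: major version from a Java version string,
# handling both the legacy "1.8.0_392" and the modern "21.0.9" styles.
java_major() {
    v=$1
    case $v in
        1.*) v=${v#1.} ;;   # 1.8.0_392 -> 8.0_392
    esac
    echo "${v%%[!0-9]*}"    # keep only the leading digits
}

# Kafka 4.x brokers need Java 17+; report whether the local java qualifies.
if command -v java >/dev/null 2>&1; then
    ver=$(java -version 2>&1 | awk -F'"' '/version/ {print $2; exit}')
    major=$(java_major "$ver")
    if [ "${major:-0}" -ge 17 ]; then
        echo "java $ver is OK for a Kafka 4.x broker"
    else
        echo "java $ver is too old for a Kafka 4.x broker" >&2
    fi
else
    echo "java not found in PATH" >&2
fi
```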

(1) Download JDK 21

Download the rpm package directly from the following link (which, of course, may also stop working someday): https://download.oracle.com/java/21/latest/jdk-21_linux-x64_bin.rpm

[root@node1 ~]# wget https://download.oracle.com/java/21/latest/jdk-21_linux-x64_bin.rpm

--2025-11-08 07:47:43-- https://download.oracle.com/java/21/latest/jdk-21_linux-x64_bin.rpm

Resolving download.oracle.com (download.oracle.com)... 184.25.52.122

Connecting to download.oracle.com (download.oracle.com)|184.25.52.122|:443... connected.

HTTP request sent, awaiting response... 200 OK

Length: 196766553 (188M) [application/x-redhat-package-manager]

Saving to: 'jdk-21_linux-x64_bin.rpm'

jdk-21_linux-x64_bin.rpm 100%[===================================>] 187.65M 5.73MB/s in 29s

2025-11-08 07:48:12 (6.56 MB/s) - 'jdk-21_linux-x64_bin.rpm' saved [196766553/196766553]

(2) Install and verify

[root@node1 ~]# rpm -ivh jdk-21_linux-x64_bin.rpm

Verifying... ################################# [100%]

Preparing... ################################# [100%]

Updating / installing...

1:jdk-21-2000:21.0.9-7 ################################# [100%]

[root@node1 ~]# ls /usr/lib/jvm/jdk-21.0.9-oracle-x64/

bin conf include jmods legal lib LICENSE man README release

[root@node1 ~]# echo 'export JAVA_HOME=/usr/lib/jvm/jdk-21.0.9-oracle-x64' >> ~/.bashrc

[root@node1 ~]# echo 'export PATH=$JAVA_HOME/bin:$PATH' >> ~/.bashrc

[root@node1 ~]# source ~/.bashrc

[root@node1 ~]# vim .bashrc

[root@node1 ~]# java --version

java 21.0.9 2025-10-21 LTS

Java(TM) SE Runtime Environment (build 21.0.9+7-LTS-338)

Java HotSpot(TM) 64-Bit Server VM (build 21.0.9+7-LTS-338, mixed mode, sharing)

[root@node1 ~]#

(3) Batch copy

[root@node1 ~]# i=2;while(($i<=4));do scp jdk-21_linux-x64_bin.rpm root@node$i:/root/.;let "i++";done

jdk-21_linux-x64_bin.rpm 100% 188MB 192.9MB/s 00:00

jdk-21_linux-x64_bin.rpm 100% 188MB 229.6MB/s 00:00

jdk-21_linux-x64_bin.rpm 100% 188MB 215.6MB/s 00:00

(4) Batch install

[root@node1 ~]# i=2;while(($i<=4));do ssh root@node$i "rpm -ivh jdk-21_linux-x64_bin.rpm && echo 'export JAVA_HOME=/usr/lib/jvm/jdk-21.0.9-oracle-x64' >> ~/.bashrc && echo 'export PATH=\$JAVA_HOME/bin:\$PATH' >> ~/.bashrc && source ~/.bashrc";let "i++";done

warning: jdk-21_linux-x64_bin.rpm: Header V3 RSA/SHA256 Signature, key ID 8d8b756f: NOKEY

Verifying... ########################################

Preparing... ########################################

Updating / installing...

...... ...... ......

II. Install Kafka

1. Download and Extract

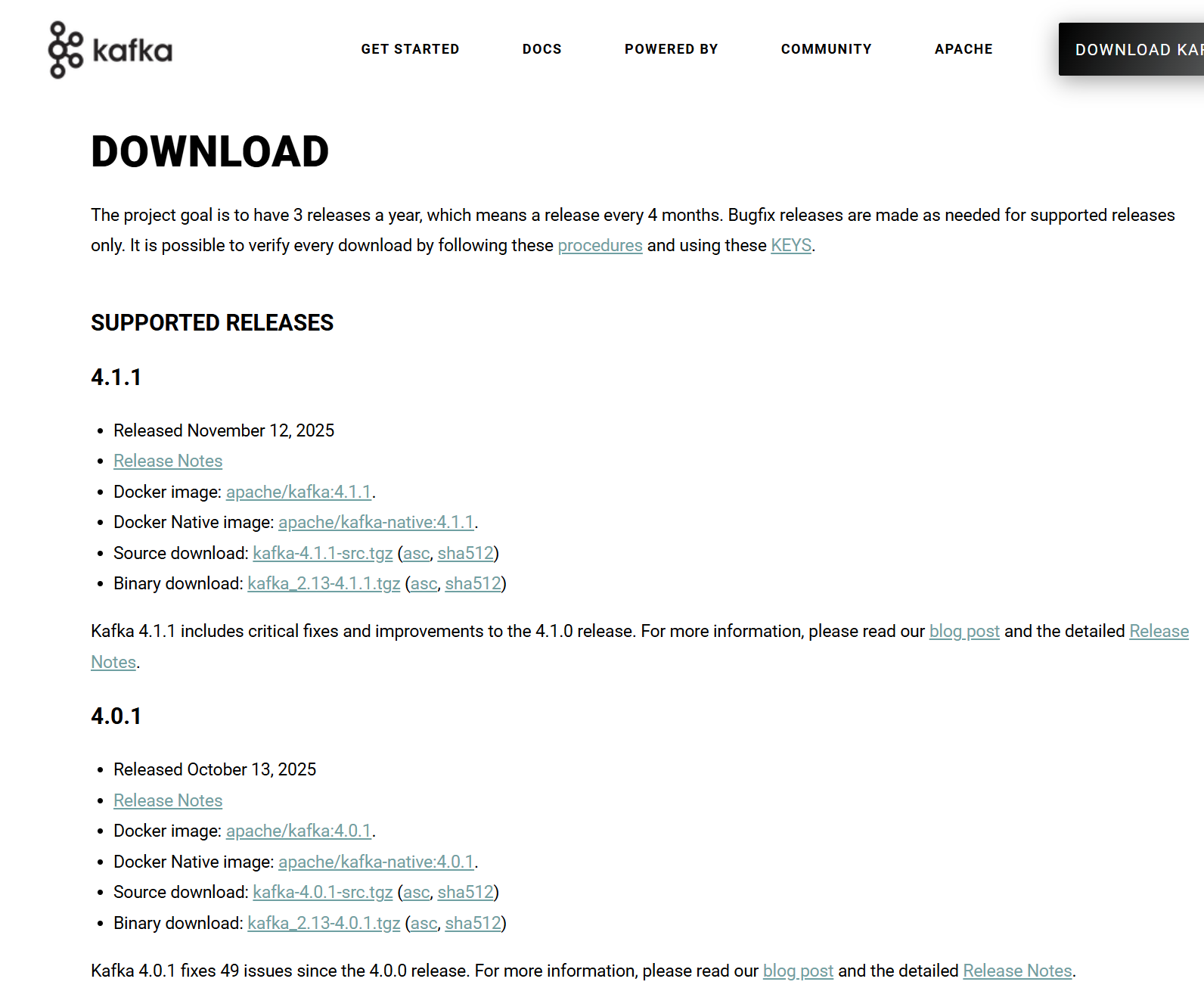

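Find the download link on the official Apache Kafka website.

For example, for the 4.0.1 binary download, right-click to copy the link, download it with wget, then extract it and move it to a suitable directory: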

[root@node1 ~]# wget https://dlcdn.apache.org/kafka/4.0.1/kafka_2.13-4.0.1.tgz

--2025-11-08 23:56:11-- https://dlcdn.apache.org/kafka/4.0.1/kafka_2.13-4.0.1.tgz

Resolving dlcdn.apache.org (dlcdn.apache.org)... 151.101.2.132, 2a04:4e42::644

Connecting to dlcdn.apache.org (dlcdn.apache.org)|151.101.2.132|:443... connected.

HTTP request sent, awaiting response... 200 OK

Length: 132145232 (126M) [application/x-gzip]

Saving to: 'kafka_2.13-4.0.1.tgz'

kafka_2.13-4.0.1.tgz 100%[===================================>] 126.02M 545KB/s in 2m 53s

2025-11-08 23:59:10 (748 KB/s) - 'kafka_2.13-4.0.1.tgz' saved [132145232/132145232]

[root@node1 ~]# tar -xf kafka_2.13-4.0.1.tgz

[root@node1 ~]# ls

公共 模板 视频 图片 文档 下载 音乐 桌面 anaconda-ks.cfg initial-setup-ks.cfg jdk-21_linux-x64_bin.rpm kafka_2.13-4.0.1 kafka_2.13-4.0.1.tgz

[root@node1 ~]# mv kafka_2.13-4.0.1 /opt/kafka

[root@node1 ~]# ls /opt/kafka

bin config libs LICENSE licenses NOTICE site-docs

[root@node1 ~]#

Distribute it to all nodes:

[root@node1 opt]# i=2;while(($i<=4));do scp -r /opt/kafka root@node$i:/opt;let "i++";done

LICENSE 100% 14KB 512.8KB/s 00:00

NOTICE 100% 26KB 20.6MB/s 00:00

kafka-delete-records.sh 100% 880 662.9KB/s 00:00

trogdor.sh 100% 1714 1.4MB/s 00:00

kafka-jmx.sh 100% 867 784.4KB/s 00:00

connect-mirror-maker.sh 100% 1387 1.2MB/s 00:00

kafka-console-consumer.sh 100% 965 803.7KB/s 00:00

kafka-consumer-perf-test.sh 100% 959 502.1KB/s 00:00

kafka-log-dirs.sh 100% 874 739.9KB/s 00:00

kafka-metadata-quorum.sh 100% 881 171.3KB/s 00:00

kafka-verifiable-consumer.sh 100% 958 745.6KB/s 00:00

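...... ...... ......

2. Configure Parameters

Current Kafka versions no longer use ZooKeeper; they use KRaft, the Raft-based metadata quorum built into Kafka itself, instead. Before starting Kafka, its configuration has to be adjusted accordingly. Since we extracted Kafka to /opt/kafka, the configuration files are in /opt/kafka/config: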

[root@node1 ~]# cd /opt/kafka/config

[root@node1 config]# ls

broker.properties connect-distributed.properties connect-log4j2.yaml consumer.properties producer.properties trogdor.

connect-console-sink.properties connect-file-sink.properties connect-mirror-maker.properties controller.properties server.properties

connect-console-source.properties connect-file-source.properties connect-standalone.properties log4j2.yaml tools-log4j2.yaml

There are plenty of configuration templates, but in KRaft mode only server.properties needs attention: each node's role (controller, broker, or both) is set by process.roles, and its identity by node.id.
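The script below performs a replace-or-append on server.properties: sed's c\ command when a key exists, and echo >> otherwise (for controller.quorum.voters only). The same logic can be generalized into a small helper for any key. A sketch assuming GNU sed; set_prop is a hypothetical name, and values must not contain | or &, which are special in the sed replacement used here.

```bash
#!/bin/sh
# Hypothetical helper: set key=value in a .properties file. An existing
# uncommented "key=" line is replaced; otherwise the pair is appended.
# Assumes GNU sed (-i); value must not contain "|" or "&".
set_prop() {
    file=$1 key=$2 value=$3
    # Escape dots so that "node.id" only matches a literal "node.id".
    re=$(printf '%s' "$key" | sed 's/\./\\./g')
    if grep -q "^$re=" "$file"; then
        # "|" as delimiter, since values such as listener URLs contain "/".
        sed -i "s|^$re=.*|$key=$value|" "$file"
    else
        printf '%s=%s\n' "$key" "$value" >> "$file"
    fi
}

# Demo on a scratch file instead of the real server.properties.
props=$(mktemp)
printf 'node.id=1\nlog.dirs=/tmp/kraft-combined-logs\n' > "$props"
set_prop "$props" node.id 2
set_prop "$props" log.dirs /opt/kafka/log
set_prop "$props" controller.quorum.voters 1@node1:9093,2@node2:9093,3@node3:9093
cat "$props"
```

Copying a script containing this helper to each node and calling it there avoids the nested quoting of an ssh "..." one-liner.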

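The script below changes the configuration on all nodes in batch with sed, which also lays the groundwork for building a Docker image later: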

i=1;while(($i<=3));do

ssh root@node$i "mkdir -p /opt/kafka/log &&

cd /opt/kafka/config &&

t=\$(sed -n '/^controller.quorum.voters=/p' server.properties) &&

if [ -z \"\$t\" ] ;

then echo controller.quorum.voters=1@node1:9093,2@node2:9093,3@node3:9093>>server.properties;

else sed -i \"/^controller.quorum.voters=/c\controller.quorum.voters=1@node1:9093,2@node2:9093,3@node3:9093\" server.properties;

fi &&

sed -i \"/^process.roles=/c\process.roles=broker,controller\" server.properties &&

sed -i \"/^node.id=/c\node.id=$i\" server.properties &&

sed -i \"/^listeners=/c\listeners=CONTROLLER://node$i:9093,PLAINTEXT://node$i:9092\" server.properties &&

sed -i \"/^advertised.listeners=/c\advertised.listeners=PLAINTEXT://node$i:9092\" server.properties &&

sed -i \"/^log.dirs=/c\log.dirs=/opt/kafka/log\" server.properties";let "i++";done

As shown, we build the Kafka cluster from 3 nodes, each acting as both controller and broker. The settings to change are:

(1) controller.quorum.voters=1@node1:9093,2@node2:9093,3@node3:9093

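In KRaft mode, controller.quorum.voters lists the connection information of all controller nodes in the cluster. The value above defines a quorum of 3 controllers, each listening on port 9093. In "1@node1:9093", the "1@" prefix means the controller on node1 has ID 1, which must match node.id on that node.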

(2) process.roles=broker,controller

Declares the role(s) of the node that owns this configuration file: broker, controller, or both. In our example, every node plays both roles.

(3) node.id=1

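The node's identifier, used to tell nodes apart; it must be unique across the Kafka cluster. It is a key setting in KRaft (ZooKeeper-less) mode and corresponds to the environment variable KAFKA_NODE_ID.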

(4) listeners=CONTROLLER://node1:9093,PLAINTEXT://node1:9092

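This setting defines the two ports the node listens on, each serving a different kind of communication:

CONTROLLER://node1:9093 - controller port

- Used for internal communication between controller nodes in KRaft mode

- Handles core coordination tasks such as cluster metadata management and leader election

- Port 9093 is dedicated to the controller quorum, which keeps the metadata consistent

PLAINTEXT://node1:9092 - client port

- Receives requests from producers and consumers

- Uses the PLAINTEXT protocol, so traffic is not encrypted

- 9092 is Kafka's default client port

One oddity here: the broker listener is not named "BROKER" but "PLAINTEXT" by default. The broker listener can of course be given a custom name, which is covered later.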

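All of the settings above specify a hostname (or IP), meaning the node is reachable from outside through that hostname or IP. The setting could also be written as:

listeners=CONTROLLER://:9093,PLAINTEXT://:9092 or

listeners=CONTROLLER://:9093,PLAINTEXT://0.0.0.0:9092

Both forms listen on the given ports on all network interfaces, which suits communication among cluster nodes. On a host with several NICs, however, the wrong IP address may end up being used, so it is better to specify a hostname or IP address.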

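Specifying localhost listens only on the loopback address, which is only useful for a single-machine test setup:

listeners=CONTROLLER://localhost:9093,PLAINTEXT://localhost:9092

When parameter (5) is not set, the address the broker publishes for external access is the PLAINTEXT entry of this setting. So if parameter (4) uses a wildcard that listens on any address (://:9093, 0.0.0.0:9093 and the like), parameter (5) must be set; otherwise Kafka does not know which hostname or IP to publish for the broker, and without a definite address, producers and consumers cannot work out how to connect.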

(5) advertised.listeners=PLAINTEXT://node1:9092

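Specifies the address the broker publishes for external access. Here it is node1, so clients reach the broker through node1 or its IP address (which means clients must be able to resolve node1).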

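(6) log.dirs=/opt/kafka/log

Although it looks like a log file path, this is where Kafka physically stores all topic partition data: messages sent by producers end up as log segment files in the directory specified by log.dirs. In KRaft mode, unless metadata.log.dir is set separately, the cluster metadata log is kept here as well.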

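(7) One more setting that usually does not need changing: listener.security.protocol.map

listener.security.protocol.map=CONTROLLER:PLAINTEXT,PLAINTEXT:PLAINTEXT,SSL:SSL,SASL_PLAINTEXT:SASL_PLAINTEXT,SASL_SSL:SASL_SSL

listener.security.protocol.map maps Kafka listener names to security protocols, so that different listeners can use different security mechanisms. The entries related to (4) are:

CONTROLLER:PLAINTEXT: the CONTROLLER listener uses the plaintext protocol and is reserved for internal communication between controllers in KRaft mode

PLAINTEXT:PLAINTEXT: the PLAINTEXT (i.e. broker) listener uses the plaintext protocol; it usually serves client connections and is suitable for test environments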
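Taken together, items (1) to (6) mean that only node.id and the host part of the listeners differ between nodes. As a sanity check, the hypothetical helpers below print the values this article expects for node N and verify that each appears verbatim in a given server.properties; they assume the three-node node1 to node3 layout.

```bash
#!/bin/sh
# Hypothetical helpers: expected KRaft settings for node N in this article's
# 3-node layout (every node is broker + controller), and a checker for them.
kraft_props() {
    id=$1
    echo "process.roles=broker,controller"
    echo "node.id=$id"
    echo "controller.quorum.voters=1@node1:9093,2@node2:9093,3@node3:9093"
    echo "listeners=CONTROLLER://node$id:9093,PLAINTEXT://node$id:9092"
    echo "advertised.listeners=PLAINTEXT://node$id:9092"
    echo "log.dirs=/opt/kafka/log"
}

# Return non-zero (and list the gaps) if any expected line is missing from the file.
check_node() {
    file=$1 id=$2 rc=0
    # Word splitting is safe here: none of the expected lines contains spaces.
    for line in $(kraft_props "$id"); do
        grep -qxF "$line" "$file" || { echo "missing: $line" >&2; rc=1; }
    done
    return $rc
}

# Demo: a scratch file holding exactly node2's expected settings.
f=$(mktemp)
kraft_props 2 > "$f"
check_node "$f" 2 && echo "node2 config OK"
```

For example, scp node2:/opt/kafka/config/server.properties /tmp/n2.properties followed by check_node /tmp/n2.properties 2 would check node2's live file.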


3. Initialization

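After configuration, the storage must be formatted on all controller nodes to initialize the metadata directories. This includes creating a unique metadata identifier for the Kafka cluster and formatting the log directories specified in the configuration file.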
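All controllers must be formatted with the same cluster ID, and kafka-storage.sh format records it as cluster.id in meta.properties inside log.dirs. A quick post-format check is therefore to compare that value across nodes. cluster_id_of and remote_cluster_ids are hypothetical helpers; the remote one assumes the node1 to node3 layout and the /opt/kafka/log path used here, and the demo uses scratch files with a made-up ID.

```bash
#!/bin/sh
# Hypothetical helper: print the cluster.id recorded in a meta.properties file.
cluster_id_of() {
    sed -n 's/^cluster\.id=//p' "$1"
}

# Hypothetical helper for node1: print the distinct cluster IDs of node1..node3.
# Exactly one line of output means all nodes were formatted with the same ID.
remote_cluster_ids() {
    for n in node1 node2 node3; do
        ssh "root@$n" "sed -n 's/^cluster\\.id=//p' /opt/kafka/log/meta.properties"
    done | sort -u
}

# Demo: two scratch files standing in for two nodes (the ID is made up).
a=$(mktemp); b=$(mktemp)
printf 'version=1\nnode.id=1\ncluster.id=abc123\n' > "$a"
printf 'version=1\nnode.id=2\ncluster.id=abc123\n' > "$b"
if [ "$(cluster_id_of "$a")" = "$(cluster_id_of "$b")" ]; then
    echo "cluster.id matches: $(cluster_id_of "$a")"
fi
```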

Generate the unique ID with kafka-storage.sh random-uuid, then use it to run kafka-storage.sh format on every controller node.

[root@node1 kafka]# chmod +x bin/*.sh

[root@node1 kafka]# uuid=$(/opt/kafka/bin/kafka-storage.sh random-uuid)

[root@node1 kafka]# echo $uuid >> /opt/kafka/uuid_record

[root@node1 kafka]# i=1;while(($i<=3));do ssh root@node$i "/opt/kafka/bin/kafka-storage.sh format -t $uuid -c /opt/kafka/config/server.properties";let "i++";done

Formatting metadata directory /opt/kafka/log with metadata.version 4.0-IV3.

Formatting metadata directory /opt/kafka/log with metadata.version 4.0-IV3.

Formatting metadata directory /opt/kafka/log with metadata.version 4.0-IV3.

Afterwards, the log directory we specified in the configuration file on each controller node contains some new files:

[root@node1 ~]# ls /opt/kafka/log

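bootstrap.checkpoint meta.properties

4. Open the Firewall

Add the ports to the firewall whitelist, or simply turn the firewall off with systemctl disable --now firewalld.service (without --now, disable only takes effect at the next boot):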

[root@node1 ~]# i=1;while(($i<=3));do ssh root@node$i "firewall-cmd --permanent --add-port=9092/tcp && firewall-cmd --permanent --add-port=9093/tcp && firewall-cmd --reload";let "i++";done

success

success

success

success

success

success

5. Start the Kafka Service

Start the Kafka service on each node with its configuration file:

i=1;while(($i<=3));do ssh root@node$i "/opt/kafka/bin/kafka-server-start.sh -daemon /opt/kafka/config/server.properties";let "i++";done

Check the status:

[root@node1 ~]# /opt/kafka/bin/kafka-broker-api-versions.sh --bootstrap-server node1:9092

node2:9092 (id: 2 rack: null isFenced: false) -> (

Produce(0): 0 to 12 [usable: 12],

Fetch(1): 4 to 17 [usable: 17],

ListOffsets(2): 1 to 10 [usable: 10],

Metadata(3): 0 to 13 [usable: 13],

OffsetCommit(8): 2 to 9 [usable: 9],

OffsetFetch(9): 1 to 9 [usable: 9],

FindCoordinator(10): 0 to 6 [usable: 6],

JoinGroup(11): 0 to 9 [usable: 9],

Heartbeat(12): 0 to 4 [usable: 4],

LeaveGroup(13): 0 to 5 [usable: 5],

SyncGroup(14): 0 to 5 [usable: 5],

DescribeGroups(15): 0 to 6 [usable: 6],

ListGroups(16): 0 to 5 [usable: 5],

SaslHandshake(17): 0 to 1 [usable: 1],

ApiVersions(18): 0 to 4 [usable: 4],

CreateTopics(19): 2 to 7 [usable: 7],

DeleteTopics(20): 1 to 6 [usable: 6],

DeleteRecords(21): 0 to 2 [usable: 2],

InitProducerId(22): 0 to 5 [usable: 5],

OffsetForLeaderEpoch(23): 2 to 4 [usable: 4],

AddPartitionsToTxn(24): 0 to 5 [usable: 5],

AddOffsetsToTxn(25): 0 to 4 [usable: 4],

EndTxn(26): 0 to 5 [usable: 5],

WriteTxnMarkers(27): 1 [usable: 1],

TxnOffsetCommit(28): 0 to 5 [usable: 5],

DescribeAcls(29): 1 to 3 [usable: 3],

CreateAcls(30): 1 to 3 [usable: 3],

DeleteAcls(31): 1 to 3 [usable: 3],

DescribeConfigs(32): 1 to 4 [usable: 4],

AlterConfigs(33): 0 to 2 [usable: 2],

AlterReplicaLogDirs(34): 1 to 2 [usable: 2],

DescribeLogDirs(35): 1 to 4 [usable: 4],

SaslAuthenticate(36): 0 to 2 [usable: 2],

CreatePartitions(37): 0 to 3 [usable: 3],

CreateDelegationToken(38): 1 to 3 [usable: 3],

RenewDelegationToken(39): 1 to 2 [usable: 2],

...... ...... ......

If the service is not running, the output looks like this:

[2025-11-09 09:38:04,326] WARN [LegacyAdminClient clientId=admin-1] Bootstrap broker node1:9092 (id: -1 rack: null isFenced: false) disconnected (org.apache.kafka.clients.NetworkClient)

Request METADATA failed on brokers [node1:9092 (id: -1 rack: null isFenced: false)]

java.lang.RuntimeException: Request METADATA failed on brokers [node1:9092 (id: -1 rack: null isFenced: false)]

at org.apache.kafka.tools.BrokerApiVersionsCommand$AdminClient.sendAnyNode(BrokerApiVersionsCommand.java:241)

at org.apache.kafka.tools.BrokerApiVersionsCommand$AdminClient.findAllBrokers(BrokerApiVersionsCommand.java:272)

at org.apache.kafka.tools.BrokerApiVersionsCommand$AdminClient.awaitBrokers(BrokerApiVersionsCommand.java:264)

at org.apache.kafka.tools.BrokerApiVersionsCommand.execute(BrokerApiVersionsCommand.java:86)

at org.apache.kafka.tools.BrokerApiVersionsCommand.mainNoExit(BrokerApiVersionsCommand.java:74)

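at org.apache.kafka.tools.BrokerApiVersionsCommand.main(BrokerApiVersionsCommand.java:69)

III. Testing Kafka

1. Check the Service Status

Besides running kafka-broker-api-versions.sh, checking the listening ports gives further evidence that Kafka is active. As shown below, Kafka runs as a Java process and is indeed listening on ports 9092 and 9093: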

[root@node1 config]# netstat -tuanp|grep 90

tcp6 0 0 :::9093 :::* LISTEN 4261/java

tcp6 0 0 :::9092 :::* LISTEN 4261/java

tcp6 0 0 192.168.76.11:42020 192.168.76.12:9092 ESTABLISHED 4261/java

tcp6 0 0 192.168.76.11:9092 192.168.76.12:58894 ESTABLISHED 4261/java

tcp6 0 0 192.168.76.11:38802 192.168.76.12:9093 ESTABLISHED 4261/java

tcp6 0 0 192.168.76.11:38790 192.168.76.12:9093 ESTABLISHED 4261/java

tcp6 0 0 192.168.76.11:9092 192.168.76.13:49056 ESTABLISHED 4261/java

tcp6 0 0 192.168.76.11:9093 192.168.76.12:56142 ESTABLISHED 4261/java

tcp6 0 0 192.168.76.11:56712 192.168.76.13:9092 ESTABLISHED 4261/java

tcp6 0 0 192.168.76.11:37242 192.168.76.13:9093 ESTABLISHED 4261/java

tcp6 0 0 192.168.76.11:38776 192.168.76.12:9093 ESTABLISHED 4261/java

[root@node1 config]# netstat -tuanp|grep java

tcp6 0 0 :::9093 :::* LISTEN 4261/java

tcp6 0 0 :::45557 :::* LISTEN 4261/java

tcp6 0 0 :::9092 :::* LISTEN 4261/java

tcp6 0 0 192.168.76.11:42020 192.168.76.12:9092 ESTABLISHED 4261/java

tcp6 0 0 192.168.76.11:9092 192.168.76.12:58894 ESTABLISHED 4261/java

tcp6 0 0 192.168.76.11:38802 192.168.76.12:9093 ESTABLISHED 4261/java

tcp6 0 0 192.168.76.11:38790 192.168.76.12:9093 ESTABLISHED 4261/java

tcp6 0 0 192.168.76.11:9092 192.168.76.13:49056 ESTABLISHED 4261/java

tcp6 0 0 192.168.76.11:9093 192.168.76.12:56142 ESTABLISHED 4261/java

tcp6 0 0 192.168.76.11:56712 192.168.76.13:9092 ESTABLISHED 4261/java

tcp6 0 0 192.168.76.11:37242 192.168.76.13:9093 ESTABLISHED 4261/java

tcp6 0 0 192.168.76.11:38776 192.168.76.12:9093 ESTABLISHED 4261/java

jps also shows that the Kafka service is running:

[root@node2 ~]# jps -ml

6576 jdk.jcmd/sun.tools.jps.Jps -ml

4012 kafka.Kafka /opt/kafka/config/server.properties

2. Create a Topic

Log in to any node and run kafka-topics.sh to create a test topic:

[root@node1 ~]# /opt/kafka/bin/kafka-topics.sh --create --topic testTopic --bootstrap-server node1:9092 --partitions 3 --replication-factor 3

Created topic testTopic.

3. Start a Producer

For example, start a producer on node1 and send messages to the test topic just created:

[root@node1 ~]# /opt/kafka/bin/kafka-console-producer.sh --topic testTopic --bootstrap-server node1:9092

>test

>this is a hello from node1

4. Start Consumers

Receive the messages on the other two nodes:

[root@node3 config]# /opt/kafka/bin/kafka-console-consumer.sh --topic testTopic --bootstrap-server node3:9092

test

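this is a hello from node1

A consumer started later only receives the messages sent after it started (here, just the second one); to read from the beginning, add --from-beginning: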

[root@node2 ~]# /opt/kafka/bin/kafka-console-consumer.sh --topic testTopic --bootstrap-server node2:9092

this is a hello from node1

5. Stop the Services After Testing

[root@node1 ~]# i=1;while(($i<=3));do ssh root@node$i "/opt/kafka/bin/kafka-server-stop.sh";let "i++";done

[root@node1 ~]#