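Implementing Java SPI follows four conventional steps:

- Define the interface: declare a service interface, usually in a shared package or core library.

- Provide an implementation: a third-party vendor or developer implements that interface.

- Add the configuration file:

  - In the implementing project, create the directory META-INF/services/ under src/main/resources/.

  - In that directory, create a file whose name is exactly the fully qualified name of the interface (package name + interface name).

  - The file's content is the fully qualified name of the implementation class.

- Load and use: load the implementations dynamically with the JDK's built-in java.util.ServiceLoader class.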
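Step 4 can be tried before any provider exists. The following is a minimal sketch, not part of the project described in this article: it asks ServiceLoader which implementations of a service type are visible and returns their class names. ProviderLister, providerNames, and UnregisteredService are names made up for this illustration; ServiceLoader.load and ServiceLoader.stream() (Java 9+) are standard JDK APIs.

```java
import java.util.List;
import java.util.ServiceLoader;
import java.util.stream.Collectors;

// Hypothetical helper for illustration only; not part of the article's project.
final class ProviderLister {

    /** A service type with no registered providers, used to show the empty case. */
    interface UnregisteredService {}

    /** Returns the class names of all providers ServiceLoader can find for the given service. */
    static <S> List<String> providerNames(Class<S> service) {
        return ServiceLoader.load(service)
                .stream()                                   // lazy: providers are not instantiated here
                .map(provider -> provider.type().getName()) // only the implementation class is inspected
                .collect(Collectors.toList());
    }

    public static void main(String[] args) {
        // No META-INF/services entry exists for UnregisteredService, so this prints [].
        System.out.println(providerNames(UnregisteredService.class));
    }
}
```

Once a provider-configuration file for a service is on the class path, the same call should list the implementation classes named in it.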

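III. A Hands-On SPI Example

Suppose we are building a tool that parses the code of a Java Spring Boot project and then has an AI generate an architecture diagram of that project from the AST (abstract syntax tree) the parser produces.

How should we build this, and how do we keep it extensible? What if the system must parse not only Java Spring Boot projects but also Python or Node.js projects? Hard-coding the parsing logic for every language and framework quickly becomes unwieldy, because each new language means changing a lot of existing code.

This is where Java's SPI mechanism helps: it lets us make the feature extensible.

To parse Java code, a commonly used library is javaparser.

Add the Maven dependencies: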

```xml
<!-- Source: https://mvnrepository.com/artifact/com.github.javaparser/javaparser-core -->
<dependency>
    <groupId>com.github.javaparser</groupId>
    <artifactId>javaparser-core</artifactId>
    <version>3.25.8</version>
    <scope>compile</scope>
</dependency>
<dependency>
    <groupId>com.github.javaparser</groupId>
    <artifactId>javaparser-symbol-solver-core</artifactId>
    <version>3.25.8</version>
    <scope>compile</scope>
</dependency>
```

Add both dependencies to your Spring Boot project.

这时候再来回顾一下 SPI 的实现步骤

- 定义接口

- 提供实现

- 配置文件

-

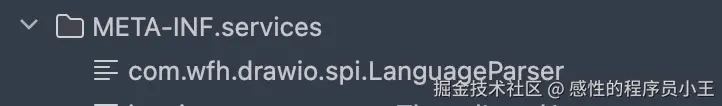

- 通常在项目的 resources 目录下创建一个 META-INF/services 目录

- 创建一个文件名和接口全类名完全一致的文件

- 文件内容是实现类的全类名

- 加载使用,使用 jdk 自带的 ServiceLoader 类加载器去实现类的动态加载

Implementation

First, create an spi package (com.wfh.drawio.spi).

Define the code-parsing interface:

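```java
package com.wfh.drawio.spi;

import com.wfh.drawio.core.model.ProjectAnalysisResult;

/**
 * Core parser interface.
 * Contributors only need to implement this interface to support a new language.
 */
public interface LanguageParser {

    /**
     * 1. What is this parser called?
     * e.g., "Java-Spring", "Python-Django", "MySQL-DDL"
     */
    String getName();

    /**
     * 2. Does this parser support this file/project?
     * @param projectDir the project root directory or file uploaded by the user
     * @return true if supported
     */
    boolean canParse(String projectDir);

    /**
     * 3. Core logic: turn the code into the common model.
     * @param projectDir the project directory or file
     * @return the analysis result (nodes + relationships)
     */
    ProjectAnalysisResult parse(String projectDir);
}
```

This is the common contract for parsing any kind of project or code.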

Next, create a Java parser:

```java
package com.wfh.drawio.spi.parser;

import com.github.javaparser.JavaParser;
import com.github.javaparser.ParserConfiguration;
import com.github.javaparser.ast.CompilationUnit;
import com.github.javaparser.ast.ImportDeclaration;
import com.github.javaparser.ast.body.ClassOrInterfaceDeclaration;
import com.github.javaparser.ast.comments.Comment;
import com.github.javaparser.ast.expr.AnnotationExpr;
import com.github.javaparser.ast.type.ClassOrInterfaceType;
import com.github.javaparser.symbolsolver.JavaSymbolSolver;
import com.github.javaparser.symbolsolver.resolution.typesolvers.CombinedTypeSolver;
import com.github.javaparser.symbolsolver.resolution.typesolvers.JavaParserTypeSolver;
import com.github.javaparser.symbolsolver.resolution.typesolvers.ReflectionTypeSolver;
import com.wfh.drawio.core.model.ArchNode;
import com.wfh.drawio.core.model.ArchRelationship;
import com.wfh.drawio.core.model.ProjectAnalysisResult;
import com.wfh.drawio.spi.LanguageParser;
import lombok.extern.slf4j.Slf4j;
import org.springframework.util.StringUtils;

import java.io.File;
import java.io.IOException;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.Paths;
import java.util.*;
import java.util.stream.Collectors;
import java.util.stream.Stream;

/**
 * @Title: JavaSpringParser
 * @Author wangfenghuan
 * @description: Highly optimized Spring Boot project parser (supports Javadoc extraction)
 */
@Slf4j
public class JavaSpringParser implements LanguageParser {

    private static final Map<String, String> STEREOTYPE_MAPPING = Map.of(
            "RestController", "API Layer",
            "Controller", "API Layer",
            "Service", "Business Layer",
            "Repository", "Data Layer",
            "Mapper", "Data Layer",
            "Component", "Infrastructure",
            "Configuration", "Infrastructure",
            "Entity", "Data Layer",
            "Table", "Data Layer"
    );

    private static final Set<String> IGNORED_SUFFIXES = Set.of(
            "Test", "Tests", "DTO", "VO", "Request", "Response", "Exception", "Constant", "Config", "Utils", "Properties"
    );

    private static final Set<String> IGNORED_PACKAGES = Set.of(
            "java.", "javax.", "jakarta.",
            "org.springframework.", "org.slf4j.", "org.apache.",
            "com.baomidou.", "lombok.", "com.fasterxml."
    );

    @Override
    public String getName() {
        return "Java-Spring-Doc-Enhanced";
    }

    @Override
    public boolean canParse(String projectDir) {
        Path rootPath = Paths.get(projectDir);
        if (!Files.exists(rootPath) || !Files.isDirectory(rootPath)) return false;
        // Look for a Maven or Gradle build file at most two levels deep
        try (Stream<Path> stream = Files.walk(rootPath, 2)) {
            return stream.filter(Files::isRegularFile).anyMatch(p -> {
                String name = p.getFileName().toString();
                return name.equals("pom.xml") || name.equals("build.gradle") || name.equals("build.gradle.kts");
            });
        } catch (IOException e) {
            return false;
        }
    }

    @Override
    public ProjectAnalysisResult parse(String projectDir) {
        log.info("Starting Analysis with Javadoc Extraction: {}", projectDir);
        JavaParser javaParser = initializeSymbolSolver(projectDir);
        List<ArchNode> rawNodes = new ArrayList<>();
        List<ArchRelationship> rawRelationships = new ArrayList<>();
        Set<String> processedClasses = new HashSet<>();

        try {
            List<Path> javaFiles = findJavaFiles(projectDir);
            for (Path javaFile : javaFiles) {
                try {
                    CompilationUnit cu = javaParser.parse(javaFile).getResult().orElse(null);
                    if (cu == null) continue;
                    String packageName = cu.getPackageDeclaration().map(pd -> pd.getNameAsString()).orElse("");
                    cu.findAll(ClassOrInterfaceDeclaration.class).forEach(clazz -> {
                        String className = clazz.getNameAsString();
                        if (isIgnoredClass(className)) return;
                        String fullClassName = getFullyQualifiedName(clazz, packageName);
                        if (processedClasses.contains(fullClassName)) return;
                        ArchNode node = analyzeClass(clazz, fullClassName);
                        if (node != null) rawNodes.add(node);
                        rawRelationships.addAll(analyzeRelationships(clazz, fullClassName, cu));
                        processedClasses.add(fullClassName);
                    });
                } catch (Exception e) {
                    log.warn("Parse error: {}", e.getMessage());
                }
            }
        } catch (IOException e) {
            log.error("IO Error", e);
        }
        return optimizeResult(rawNodes, rawRelationships);
    }

    private ArchNode analyzeClass(ClassOrInterfaceDeclaration clazz, String id) {
        ArchNode node = new ArchNode();
        node.setId(id);
        node.setName(clazz.getNameAsString());

        // 1. Extract the Javadoc comment
        String comment = extractClassComment(clazz);

        String type = "Class";
        String stereotype = "Model";
        if (clazz.isInterface()) type = "Interface";

        List<String> annotations = clazz.getAnnotations().stream().map(a -> a.getNameAsString()).collect(Collectors.toList());
        if (clazz.getNameAsString().endsWith("Mapper") || annotations.contains("Mapper")) {
            type = "Interface";
            stereotype = "Data Layer";
        }
        for (Map.Entry<String, String> entry : STEREOTYPE_MAPPING.entrySet()) {
            if (annotations.stream().anyMatch(a -> a.contains(entry.getKey()))) {
                type = entry.getKey().toUpperCase();
                stereotype = entry.getValue();
                break;
            }
        }
        node.setType(type);
        node.setStereotype(stereotype);

        // 2. Build the description (comment + technical details)
        List<String> descParts = new ArrayList<>();

        // Part A: the class comment
        if (comment != null && !comment.isEmpty()) {
            descParts.add(comment);
        }

        // Part B: technical details (API routes or table name)
        if ("API Layer".equals(stereotype)) {
            List<String> routes = extractApiRoutes(clazz);
            if (!routes.isEmpty()) {
                descParts.add("APIs:\n" + String.join("\n", routes));
            }
        } else if (clazz.getNameAsString().endsWith("Entity") || annotations.contains("TableName")) {
            extractTableName(clazz).ifPresent(t -> descParts.add("Table: " + t));
        }

        if (!descParts.isEmpty()) {
            node.setDescription(String.join("\n\n", descParts));
        }
        node.setFields(Collections.emptyList());
        node.setMethods(Collections.emptyList());
        return node;
    }

    /**
     * Extracts and cleans the class-level Javadoc.
     */
    private String extractClassComment(ClassOrInterfaceDeclaration clazz) {
        return clazz.getComment()
                .map(Comment::getContent)
                .map(this::cleanJavadoc)
                .orElse("");
    }

    /**
     * Cleans Javadoc: strips leading asterisks and drops tags such as @author.
     */
    private String cleanJavadoc(String content) {
        if (content == null) return "";
        String[] lines = content.split("\n");
        StringBuilder sb = new StringBuilder();
        for (String line : lines) {
            // Strip the leading '*' characters and the space after them
            String cleanLine = line.trim().replaceAll("^\\*+\\s?", "").trim();
            // Strategy: keep only the first descriptive paragraph and stop at the
            // first @ tag (e.g. @author, @date); the first paragraph is usually the summary
            if (cleanLine.startsWith("@")) {
                break;
            }
            // Skip empty lines
            if (!cleanLine.isEmpty()) {
                sb.append(cleanLine).append(" "); // merge a multi-line description into one line
            }
        }
        return sb.toString().trim();
    }

    private ProjectAnalysisResult optimizeResult(List<ArchNode> nodes, List<ArchRelationship> relationships) {
        Set<String> validNodeIds = nodes.stream().map(ArchNode::getId).collect(Collectors.toSet());
        // Keep only relationships between known nodes, and drop self-references
        List<ArchRelationship> cleanRelationships = relationships.stream()
                .filter(r -> validNodeIds.contains(r.getSourceId()) && validNodeIds.contains(r.getTargetId()))
                .filter(r -> !r.getSourceId().equals(r.getTargetId()))
                .collect(Collectors.toList());

        Set<String> connectedNodes = new HashSet<>();
        cleanRelationships.forEach(r -> {
            connectedNodes.add(r.getSourceId());
            connectedNodes.add(r.getTargetId());
        });

        // Keep API-layer nodes unconditionally; keep other nodes only if they are connected
        List<ArchNode> cleanNodes = nodes.stream()
                .filter(n -> {
                    if ("API Layer".equals(n.getStereotype())) return true;
                    return connectedNodes.contains(n.getId());
                })
                .collect(Collectors.toList());

        ProjectAnalysisResult result = new ProjectAnalysisResult();
        result.setNodes(cleanNodes);
        result.setRelationships(cleanRelationships);
        return result;
    }

    private List<ArchRelationship> analyzeRelationships(ClassOrInterfaceDeclaration clazz, String sourceId, CompilationUnit cu) {
        List<ArchRelationship> rels = new ArrayList<>();
        clazz.getExtendedTypes().forEach(t -> addRel(rels, sourceId, resolveType(t, cu), "EXTENDS"));
        clazz.getImplementedTypes().forEach(t -> addRel(rels, sourceId, resolveType(t, cu), "IMPLEMENTS"));
        clazz.getFields().forEach(field -> {
            if (field.isAnnotationPresent("Autowired") || field.isAnnotationPresent("Resource") || field.isAnnotationPresent("Inject")) {
                field.getVariables().forEach(v -> addRel(rels, sourceId, resolveType(field.getElementType(), cu), "DEPENDS_ON"));
            }
        });
        clazz.getConstructors().forEach(c -> c.getParameters().forEach(p -> addRel(rels, sourceId, resolveType(p.getType(), cu), "DEPENDS_ON")));
        return rels;
    }

    private boolean isIgnoredClass(String className) {
        return IGNORED_SUFFIXES.stream().anyMatch(className::endsWith);
    }

    private boolean isIgnoredPackage(String typeName) {
        if (typeName == null) return true;
        return IGNORED_PACKAGES.stream().anyMatch(typeName::startsWith);
    }

    private void addRel(List<ArchRelationship> rels, String src, String target, String type) {
        if (target == null || target.equals(src) || isIgnoredPackage(target)) return;
        ArchRelationship rel = new ArchRelationship();
        rel.setSourceId(src);
        rel.setTargetId(target);
        rel.setType(type);
        rels.add(rel);
    }

    private JavaParser initializeSymbolSolver(String projectDir) {
        CombinedTypeSolver combinedSolver = new CombinedTypeSolver();
        combinedSolver.add(new ReflectionTypeSolver());
        Path srcPath = Paths.get(projectDir, "src", "main", "java");
        if (Files.exists(srcPath)) {
            combinedSolver.add(new JavaParserTypeSolver(srcPath));
        } else {
            combinedSolver.add(new JavaParserTypeSolver(new File(projectDir)));
        }
        ParserConfiguration config = new ParserConfiguration();
        config.setSymbolResolver(new JavaSymbolSolver(combinedSolver));
        return new JavaParser(config);
    }

    private String resolveType(ClassOrInterfaceType type, CompilationUnit cu) {
        try {
            return type.resolve().asReferenceType().getQualifiedName();
        } catch (Exception e) {
            // Symbol resolution failed; fall back to the import list
            return inferFullyQualifiedNameFromImports(type.getNameAsString(), cu);
        }
    }

    private String resolveType(com.github.javaparser.ast.type.Type type, CompilationUnit cu) {
        try {
            if (type.isClassOrInterfaceType()) return type.asClassOrInterfaceType().resolve().asReferenceType().getQualifiedName();
            return null;
        } catch (Exception e) {
            return inferFullyQualifiedNameFromImports(type.asString(), cu);
        }
    }

    private String inferFullyQualifiedNameFromImports(String simpleName, CompilationUnit cu) {
        Optional<ImportDeclaration> match = cu.getImports().stream()
                .filter(i -> !i.isAsterisk() && i.getNameAsString().endsWith("." + simpleName))
                .findFirst();
        if (match.isPresent()) return match.get().getNameAsString();
        // No matching import: assume the type lives in the same package
        String packageName = cu.getPackageDeclaration().map(pd -> pd.getNameAsString()).orElse("");
        return packageName.isEmpty() ? simpleName : packageName + "." + simpleName;
    }

    private String getFullyQualifiedName(ClassOrInterfaceDeclaration clazz, String packageName) {
        return packageName.isEmpty() ? clazz.getNameAsString() : packageName + "." + clazz.getNameAsString();
    }

    private List<String> extractApiRoutes(ClassOrInterfaceDeclaration clazz) {
        List<String> routes = new ArrayList<>();
        String basePath = "";
        Optional<AnnotationExpr> classMapping = clazz.getAnnotationByName("RequestMapping");
        if (classMapping.isPresent()) basePath = extractPath(classMapping.get());
        String finalBasePath = basePath;
        clazz.getMethods().forEach(m -> {
            Stream.of("GetMapping", "PostMapping", "PutMapping", "DeleteMapping", "RequestMapping").forEach(methodType -> {
                m.getAnnotationByName(methodType).ifPresent(ann -> {
                    String methodPath = extractPath(ann);
                    String httpMethod = methodType.replace("Mapping", "").toUpperCase();
                    if ("REQUEST".equals(httpMethod)) httpMethod = "ALL";
                    routes.add("[" + httpMethod + "] " + (finalBasePath + methodPath).replaceAll("//", "/"));
                });
            });
        });
        return routes;
    }

    private String extractPath(AnnotationExpr ann) {
        if (ann.isSingleMemberAnnotationExpr()) {
            return ann.asSingleMemberAnnotationExpr().getMemberValue().toString().replace("\"", "");
        } else if (ann.isNormalAnnotationExpr()) {
            return ann.asNormalAnnotationExpr().getPairs().stream()
                    .filter(p -> p.getNameAsString().equals("value") || p.getNameAsString().equals("path"))
                    .findFirst().map(p -> p.getValue().toString().replace("\"", "")).orElse("");
        }
        return "";
    }

    private Optional<String> extractTableName(ClassOrInterfaceDeclaration clazz) {
        return clazz.getAnnotationByName("TableName").map(this::extractPath)
                .or(() -> clazz.getAnnotationByName("Table").map(this::extractPath));
    }

    private List<Path> findJavaFiles(String projectPath) throws IOException {
        try (Stream<Path> paths = Files.walk(Paths.get(projectPath))) {
            return paths.filter(Files::isRegularFile).filter(p -> p.toString().endsWith(".java")).collect(Collectors.toList());
        }
    }
}
```

Next, under the resources directory, create the file META-INF/services/com.wfh.drawio.spi.LanguageParser, named after the interface's fully qualified name.

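The file's content is: com.wfh.drawio.spi.parser.JavaSpringParser

That is the fully qualified name of the Java parser we just wrote.

This is not enough yet. We also need a parser factory because, as mentioned above, the implementations are loaded dynamically through ServiceLoader.

So create a parser factory class: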

```java
package com.wfh.drawio.spi;

import java.util.Optional;
import java.util.ServiceLoader;

/**
 * @Title: ParserFactory
 * @Author wangfenghuan
 * @Package com.wfh.drawio.spi
 * @Date 2026/2/17 19:22
 * @description: Parser factory class
 */
public class ParserFactory {

    public static Optional<LanguageParser> getParser(String projectDir) {
        ServiceLoader<LanguageParser> loader = ServiceLoader.load(LanguageParser.class);
        // Iterate over all registered parsers and return the first one that can parse this project
        for (LanguageParser languageParser : loader) {
            if (languageParser.canParse(projectDir)) {
                return Optional.of(languageParser);
            }
        }
        return Optional.empty();
    }
}
```

At this point, code parsers are loaded dynamically through SPI, much like plugins.
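One thing to keep in mind about the factory above: it returns the first parser whose canParse succeeds. ServiceLoader yields providers in the order their configuration files are found on the class path and, within a file, in the order listed, so once several parsers are registered a project may satisfy more than one of them. The sketch below is one possible variation, not the author's implementation: it collects every matching parser so the caller can decide. To stay self-contained it uses a stand-in Parser interface and an in-memory list instead of ServiceLoader; ParserSelection, Parser, selectAll, and suffixParser are hypothetical names.

```java
import java.util.ArrayList;
import java.util.List;

// Illustrative sketch with stand-in types; all names here are hypothetical.
final class ParserSelection {

    /** Stand-in for the article's LanguageParser, reduced to what selection needs. */
    interface Parser {
        String getName();
        boolean canParse(String projectDir);
    }

    /** Returns every candidate that reports it can parse the given directory, in iteration order. */
    static List<Parser> selectAll(Iterable<Parser> candidates, String projectDir) {
        List<Parser> matches = new ArrayList<>();
        for (Parser candidate : candidates) {
            if (candidate.canParse(projectDir)) {
                matches.add(candidate);
            }
        }
        return matches;
    }

    /** Builds a toy parser that accepts any directory string ending with the given suffix. */
    static Parser suffixParser(String name, String suffix) {
        return new Parser() {
            @Override public String getName() { return name; }
            @Override public boolean canParse(String projectDir) { return projectDir.endsWith(suffix); }
        };
    }

    public static void main(String[] args) {
        List<Parser> registered = List.of(
                suffixParser("A", "-app"),
                suffixParser("B", "app"),
                suffixParser("C", "-lib"));
        // Both A and B accept "shop-app"; C does not.
        for (Parser p : selectAll(registered, "shop-app")) {
            System.out.println(p.getName());
        }
    }
}
```

In the real setup, an equivalent method typed on LanguageParser could receive ServiceLoader.load(LanguageParser.class) directly, since ServiceLoader implements Iterable.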

If more code parsers are needed later, simply write a class that implements the parser interface, then add its fully qualified class name to the interface's file under META-INF/services.
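With more than one parser registered, the provider-configuration file lists one implementation per line. Per the ServiceLoader documentation, the file is UTF-8 text, blank lines are ignored, everything after a # on a line is a comment, and duplicate names are ignored. The sketch below only mimics that format to make it concrete; it is not how the JDK performs the lookup, and ServicesFileFormat and parseProviderNames are hypothetical names. The second entry is the SQL parser introduced in the next section.

```java
import java.util.ArrayList;
import java.util.List;

// Illustration of the provider-configuration file format; not the JDK's own parsing code.
final class ServicesFileFormat {

    /** Extracts provider class names from the text of a META-INF/services file. */
    static List<String> parseProviderNames(String fileContent) {
        List<String> names = new ArrayList<>();
        for (String line : fileContent.split("\\R")) {
            int hash = line.indexOf('#');
            if (hash >= 0) {
                line = line.substring(0, hash);   // drop the comment part of the line
            }
            line = line.trim();
            if (!line.isEmpty() && !names.contains(line)) {
                names.add(line);                  // keep the first occurrence, skip duplicates
            }
        }
        return names;
    }

    public static void main(String[] args) {
        String content = String.join("\n",
                "# META-INF/services/com.wfh.drawio.spi.LanguageParser",
                "com.wfh.drawio.spi.parser.JavaSpringParser",
                "",
                "com.wfh.drawio.spi.parser.SqlParser  # added later");
        // Prints the two provider class names, one per line.
        parseProviderNames(content).forEach(System.out::println);
    }
}
```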

Implementing a SQL parser

Create the parser implementation class:

```java
package com.wfh.drawio.spi.parser;

import com.alibaba.druid.sql.SQLUtils;
import com.alibaba.druid.sql.ast.SQLStatement;
import com.alibaba.druid.sql.ast.statement.*;
import com.alibaba.druid.util.JdbcConstants;
import com.wfh.drawio.core.model.ArchNode;
import com.wfh.drawio.core.model.ArchRelationship;
import com.wfh.drawio.core.model.ProjectAnalysisResult;
import com.wfh.drawio.spi.LanguageParser;
import lombok.extern.slf4j.Slf4j;

import java.io.File;
import java.io.IOException;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.Paths;
import java.util.*;
import java.util.stream.Collectors;
import java.util.stream.Stream;

/**
 * @Title: SqlParser
 * @Author wangfenghuan
 * @description: Optimized SQL parser (variables captured by lambdas are effectively final)
 */
@Slf4j
public class SqlParser implements LanguageParser {

    // Bookkeeping columns that add noise to the diagram
    private static final Set<String> IGNORED_COLUMNS = Set.of(
            "create_time", "update_time", "create_at", "update_at",
            "created_time", "updated_time", "created_at", "updated_at",
            "is_delete", "is_deleted", "version", "revision"
    );

    @Override
    public String getName() {
        return "SQL-DDL-Enhanced";
    }

    @Override
    public boolean canParse(String projectDir) {
        File file = new File(projectDir);
        if (!file.exists()) {
            return false;
        }
        if (file.isFile()) {
            return file.getName().toLowerCase().endsWith(".sql");
        }
        // For a directory, look for .sql files at most three levels deep
        try (Stream<Path> paths = Files.walk(Paths.get(projectDir), 3)) {
            return paths.anyMatch(p -> p.toString().toLowerCase().endsWith(".sql"));
        } catch (IOException e) {
            return false;
        }
    }

    @Override
    public ProjectAnalysisResult parse(String projectDir) {
        log.info("Starting SQL analysis: {}", projectDir);
        ProjectAnalysisResult result = new ProjectAnalysisResult();
        List<ArchNode> nodes = new ArrayList<>();
        List<ArchRelationship> relationships = new ArrayList<>();
        Set<String> tableNames = new HashSet<>();

        try {
            List<Path> sqlFiles = findSqlFiles(projectDir);
            for (Path sqlFile : sqlFiles) {
                String content = Files.readString(sqlFile);
                parseSqlContent(content, nodes, relationships, tableNames);
            }
            inferLogicalRelationships(nodes, relationships, tableNames);
        } catch (Exception e) {
            log.error("Error parsing SQL files", e);
        }

        result.setNodes(nodes);
        result.setRelationships(relationships);
        return result;
    }

    private void parseSqlContent(String content, List<ArchNode> nodes, List<ArchRelationship> relationships, Set<String> tableNames) {
        List<SQLStatement> statements = parseWithFallback(content);
        for (SQLStatement stmt : statements) {
            if (stmt instanceof SQLCreateTableStatement) {
                SQLCreateTableStatement createTable = (SQLCreateTableStatement) stmt;
                ArchNode node = processCreateTable(createTable, relationships);
                if (node != null) {
                    nodes.add(node);
                    tableNames.add(node.getId());
                }
            }
        }
    }

    // Try the MySQL dialect first, then PostgreSQL, then Oracle
    private List<SQLStatement> parseWithFallback(String content) {
        try {
            return SQLUtils.parseStatements(content, JdbcConstants.MYSQL);
        } catch (Exception e1) {
            try {
                return SQLUtils.parseStatements(content, JdbcConstants.POSTGRESQL);
            } catch (Exception e2) {
                try {
                    return SQLUtils.parseStatements(content, JdbcConstants.ORACLE);
                } catch (Exception e3) {
                    return Collections.emptyList();
                }
            }
        }
    }

    private ArchNode processCreateTable(SQLCreateTableStatement createTable, List<ArchRelationship> relationships) {
        String tableName = cleanName(createTable.getTableName());
        ArchNode node = new ArchNode();
        node.setId(tableName);
        node.setName(tableName);
        node.setType("TABLE");
        node.setStereotype("Database Table");
        if (createTable.getComment() != null) {
            node.setDescription(cleanComment(createTable.getComment().toString()));
        }

        List<String> fields = new ArrayList<>();
        for (SQLTableElement element : createTable.getTableElementList()) {
            if (element instanceof SQLColumnDefinition) {
                SQLColumnDefinition column = (SQLColumnDefinition) element;
                String colName = cleanName(column.getName().getSimpleName());
                if (IGNORED_COLUMNS.contains(colName.toLowerCase())) {
                    continue;
                }
                String colType = column.getDataType().getName();
                StringBuilder fieldStr = new StringBuilder(colName).append(": ").append(colType);
                if (column.isPrimaryKey()) {
                    fieldStr.append(" (PK)");
                } else if (createTable.findColumn(colName) != null && isPrimaryKeyInConstraints(createTable, colName)) {
                    fieldStr.append(" (PK)");
                }
                if (column.getComment() != null) {
                    String comment = cleanComment(column.getComment().toString());
                    if (!comment.isEmpty()) {
                        fieldStr.append(" // ").append(comment);
                    }
                }
                fields.add(fieldStr.toString());
            } else if (element instanceof SQLForeignKeyConstraint) {
                SQLForeignKeyConstraint fk = (SQLForeignKeyConstraint) element;
                String targetTable = cleanName(fk.getReferencedTableName().getSimpleName());
                ArchRelationship rel = new ArchRelationship();
                rel.setSourceId(tableName);
                rel.setTargetId(targetTable);
                rel.setType("FOREIGN_KEY");
                String colName = fk.getReferencingColumns().stream()
                        .map(c -> cleanName(c.getSimpleName()))
                        .collect(Collectors.joining(","));
                rel.setLabel("FK: " + colName);
                relationships.add(rel);
            }
        }
        node.setFields(fields);
        node.setMethods(Collections.emptyList());
        return node;
    }

    /**
     * Infers logical relationships from columns named like xxx_id.
     * A separate finalTarget variable is used because a lambda may only
     * capture effectively final local variables.
     */
    private void inferLogicalRelationships(List<ArchNode> nodes, List<ArchRelationship> relationships, Set<String> allTables) {
        for (ArchNode node : nodes) {
            if (node.getFields() == null) continue;
            for (String fieldStr : node.getFields()) {
                String colName = fieldStr.split(":")[0].trim();
                if (colName.toLowerCase().endsWith("_id")) {
                    String potentialTableName = colName.substring(0, colName.length() - 3);
                    String tempTarget = matchTable(potentialTableName, allTables);
                    if (tempTarget == null) {
                        tempTarget = matchTable(potentialTableName + "s", allTables);
                    }
                    // tempTarget may have been reassigned, so copy it into an effectively final variable
                    String finalTarget = tempTarget;
                    if (finalTarget != null && !finalTarget.equals(node.getId())) {
                        // Only finalTarget is used inside the lambda
                        boolean exists = relationships.stream().anyMatch(r ->
                                r.getSourceId().equals(node.getId()) && r.getTargetId().equals(finalTarget)
                        );
                        if (!exists) {
                            ArchRelationship rel = new ArchRelationship();
                            rel.setSourceId(node.getId());
                            rel.setTargetId(finalTarget);
                            rel.setType("LOGICAL_KEY");
                            rel.setLabel("Link: " + colName);
                            relationships.add(rel);
                        }
                    }
                }
            }
        }
    }

    private String matchTable(String guess, Set<String> tables) {
        for (String table : tables) {
            if (table.equalsIgnoreCase(guess)) return table;
        }
        return null;
    }

    private boolean isPrimaryKeyInConstraints(SQLCreateTableStatement table, String colName) {
        if (table.getTableElementList() == null) return false;
        for (SQLTableElement element : table.getTableElementList()) {
            if (element instanceof SQLPrimaryKey) {
                SQLPrimaryKey pk = (SQLPrimaryKey) element;
                return pk.getColumns().stream().anyMatch(c -> cleanName(c.getExpr().toString()).equals(colName));
            }
        }
        return false;
    }

    // Strip backticks and quotes around identifiers
    private String cleanName(String name) {
        if (name == null) return "";
        return name.replace("`", "").replace("\"", "").replace("'", "").trim();
    }

    private String cleanComment(String comment) {
        if (comment == null) return "";
        return comment.replace("'", "").trim();
    }

    private List<Path> findSqlFiles(String projectPath) throws IOException {
        Path startPath = Paths.get(projectPath);
        if (Files.isRegularFile(startPath) && startPath.toString().toLowerCase().endsWith(".sql")) {
            return List.of(startPath);
        }
        try (Stream<Path> paths = Files.walk(startPath)) {
            return paths
                    .filter(Files::isRegularFile)
                    .filter(path -> path.toString().toLowerCase().endsWith(".sql"))
                    .collect(Collectors.toList());
        }
    }
}
```

Then add the parser's fully qualified class name to the same file under META-INF/services:

com.wfh.drawio.spi.parser.SqlParser

That completes the SQL parser.
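The logical-key inference inside SqlParser can be checked in isolation. The sketch below re-implements only that rule under simplified assumptions (plain column names and a set of table names instead of ArchNode fields); LogicalKeyInference and inferTargetTable are hypothetical names. A column ending in _id is matched case-insensitively against the known tables, first as-is and then with a plural s appended.

```java
import java.util.Optional;
import java.util.Set;

// Simplified stand-alone version of the "_id" heuristic; names are hypothetical.
final class LogicalKeyInference {

    /** Guesses which table a column such as "user_id" points to, if any. */
    static Optional<String> inferTargetTable(String columnName, Set<String> tables) {
        if (!columnName.toLowerCase().endsWith("_id")) {
            return Optional.empty();
        }
        String stem = columnName.substring(0, columnName.length() - 3); // drop "_id"
        Optional<String> exact = match(stem, tables);
        return exact.isPresent() ? exact : match(stem + "s", tables);   // plural fallback
    }

    private static Optional<String> match(String guess, Set<String> tables) {
        return tables.stream().filter(t -> t.equalsIgnoreCase(guess)).findFirst();
    }

    public static void main(String[] args) {
        Set<String> tables = Set.of("user", "orders");
        System.out.println(inferTargetTable("user_id", tables));  // Optional[user]
        System.out.println(inferTargetTable("order_id", tables)); // Optional[orders]
        System.out.println(inferTargetTable("remark", tables));   // Optional.empty
    }
}
```

The plural fallback only appends an s, so a column such as category_id would not be linked to a table named categories under this rule.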

The DTO classes used in the code are as follows:

```java
package com.wfh.drawio.core.model;

import lombok.Data;

import java.util.List;

/**
 * 1. A "node" in the architecture diagram (e.g., a class, a table, a microservice)
 * @author fenghuanwang
 */
@Data
public class ArchNode {

    private String id;           // Unique identifier (e.g., "com.example.UserService")
    private String name;         // Display name (e.g., "UserService")
    private String type;         // Type (e.g., "CLASS", "INTERFACE", "TABLE", "SERVICE")
    private String stereotype;   // Stereotype/annotation (e.g., "@Controller", "@Repository")
    private List<String> fields; // Key fields

    // Holds the API route list, SQL table name, or other notes
    private String description;

    // Suggested addition: used for grouping (e.g., group by package name to draw subgraphs)
    private String group;

    private List<String> methods; // Key methods
}
```

```java
package com.wfh.drawio.core.model;

import lombok.Data;

/**
 * 2. An "edge" in the architecture diagram (e.g., inheritance, a call, a foreign key)
 * @author fenghuanwang
 */
@Data
public class ArchRelationship {

    private String sourceId; // Start node
    private String targetId; // End node
    private String type;     // Relationship type (e.g., "DEPENDS_ON", "INHERITS", "CALLS", "FOREIGN_KEY")
    private String label;    // Text on the edge (e.g., "findUserById")
}
```

```java
package com.wfh.drawio.core.model;

import lombok.Data;

import java.util.List;

/**
 * 3. The complete analysis result
 * @author fenghuanwang
 */
@Data
public class ProjectAnalysisResult {

    private List<ArchNode> nodes;
    private List<ArchRelationship> relationships;
}
```