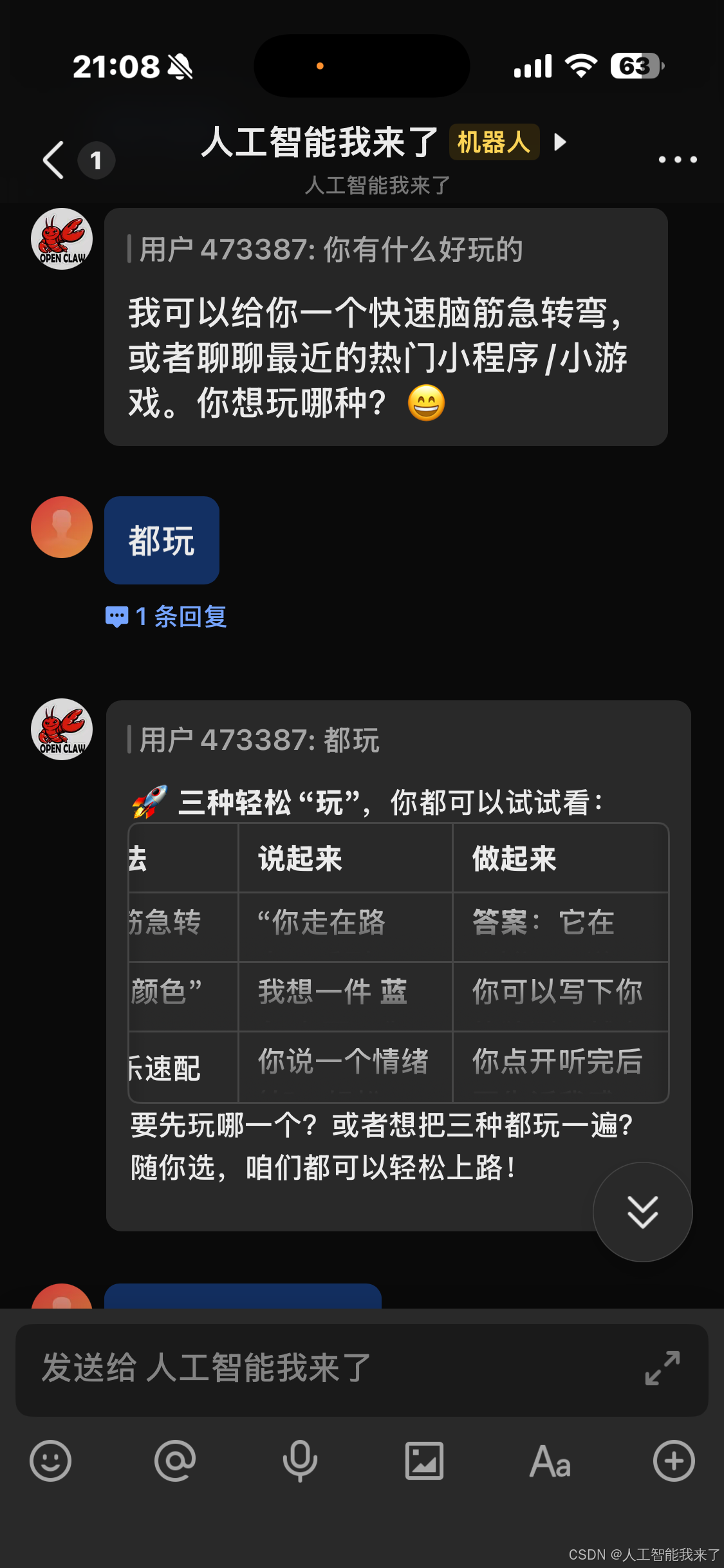

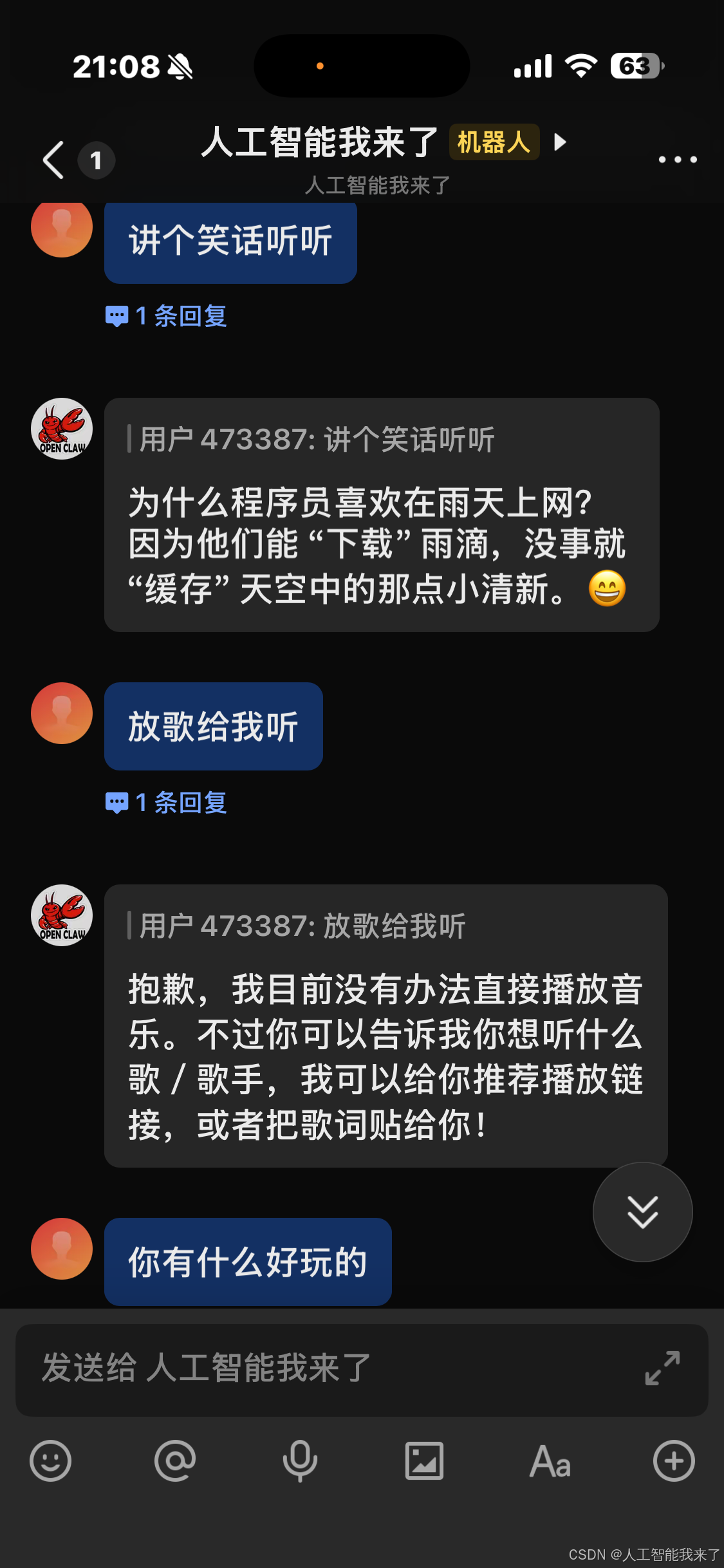

1. Picking up from Episode 3, we can now start building the Feishu bot.

2. The result works well and feels very smooth.

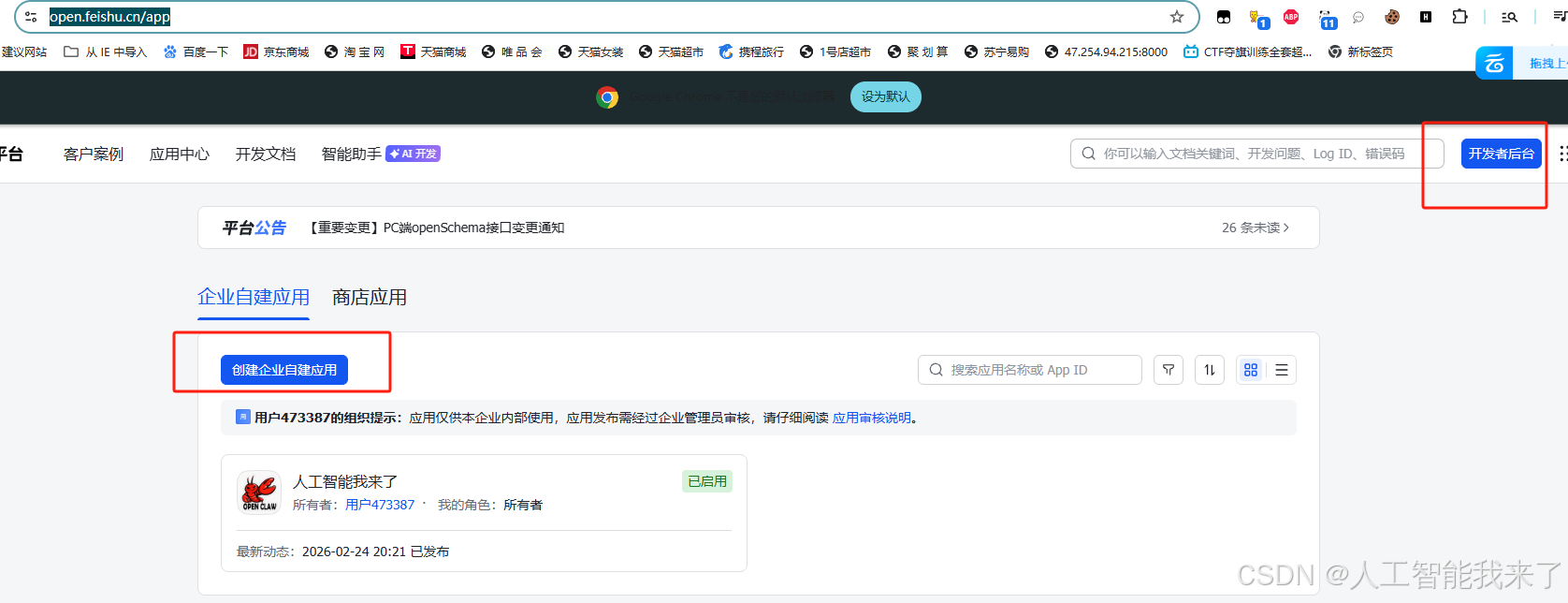

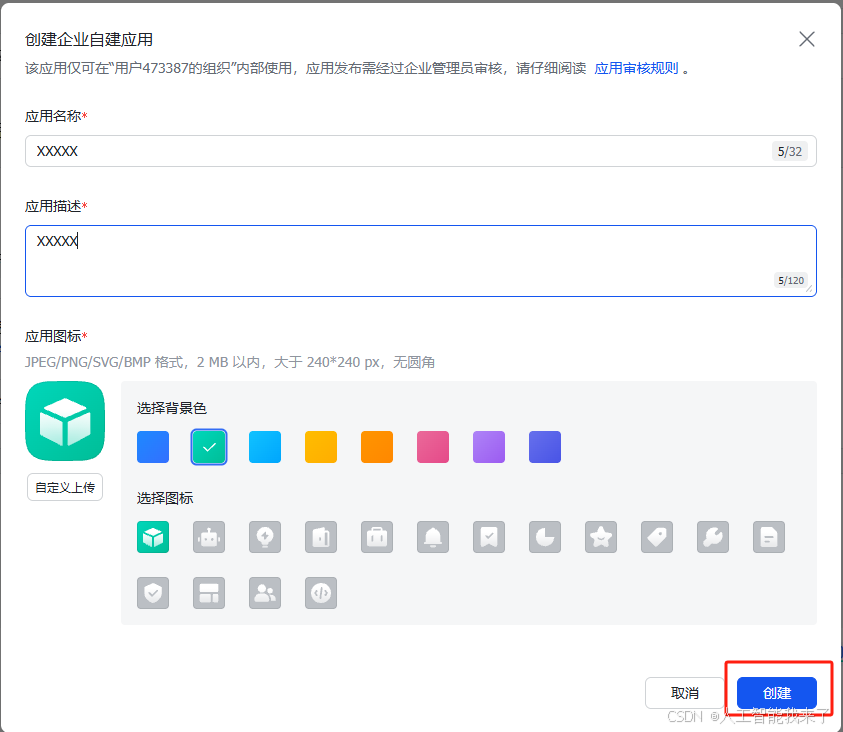

3. Configuration on the Feishu side

3.1 Log in to https://open.feishu.cn/app

3.2

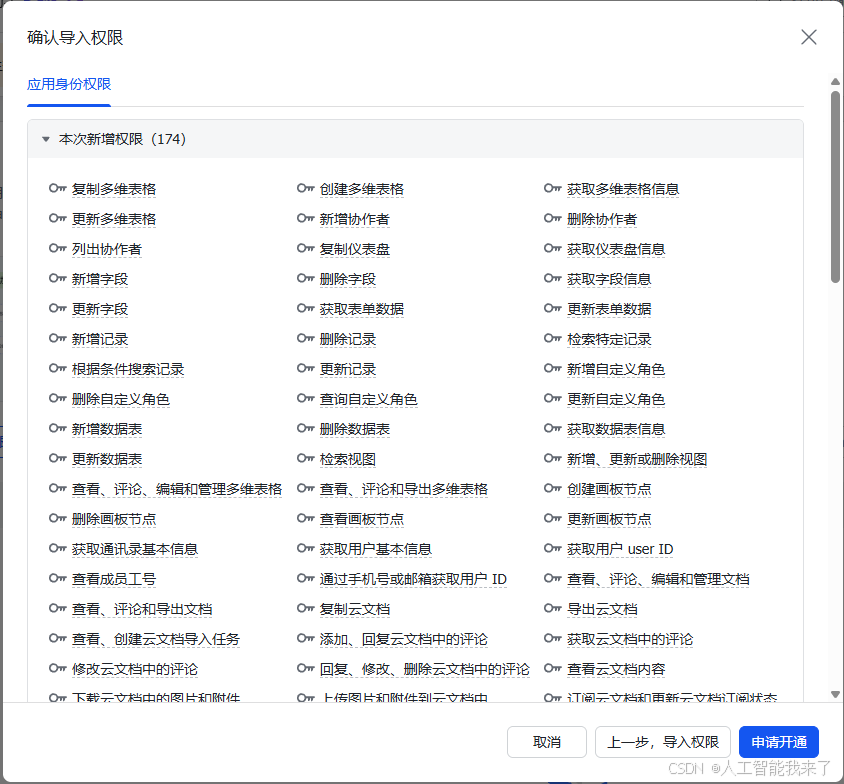

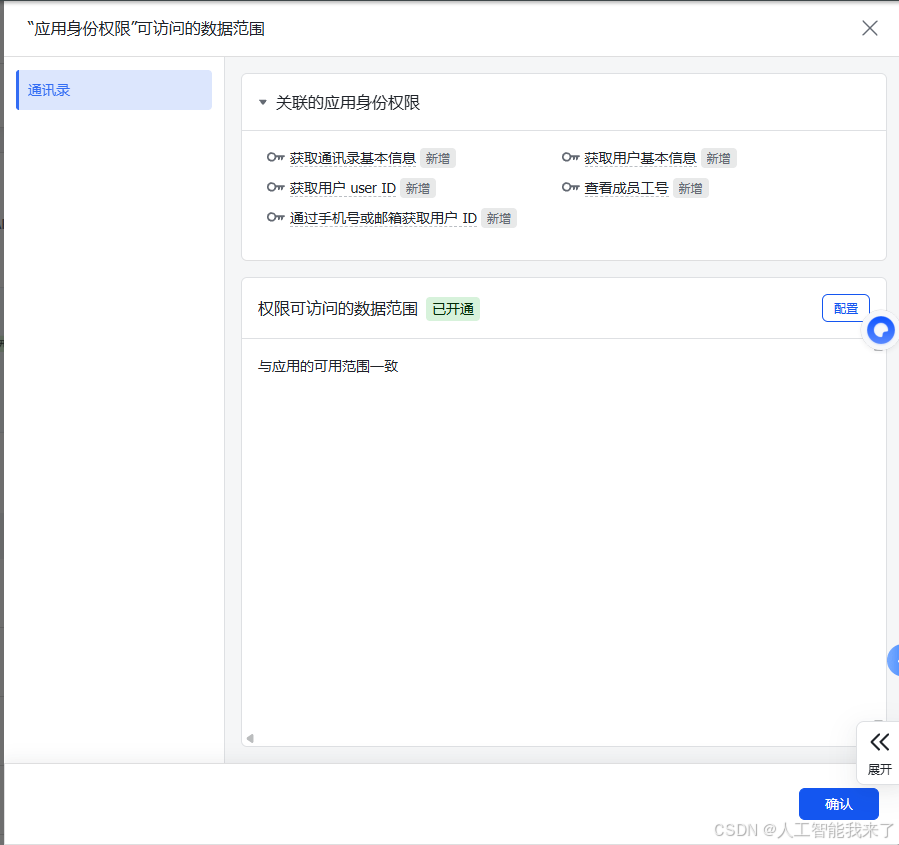

3.3 Feishu permissions

```json
{
  "scopes": {
    "tenant": [
      "base:app:copy",
      "base:app:create",
      "base:app:read",
      "base:app:update",
      "base:collaborator:create",
      "base:collaborator:delete",
      "base:collaborator:read",
      "base:dashboard:copy",
      "base:dashboard:read",
      "base:field:create",
      "base:field:delete",
      "base:field:read",
      "base:field:update",
      "base:form:read",
      "base:form:update",
      "base:record:create",
      "base:record:delete",
      "base:record:read",
      "base:record:retrieve",
      "base:record:update",
      "base:role:create",
      "base:role:delete",
      "base:role:read",
      "base:role:update",
      "base:table:create",
      "base:table:delete",
      "base:table:read",
      "base:table:update",
      "base:view:read",
      "base:view:write_only",
      "bitable:app",
      "bitable:app:readonly",
      "board:whiteboard:node:create",
      "board:whiteboard:node:delete",
      "board:whiteboard:node:read",
      "board:whiteboard:node:update",
      "contact:contact.base:readonly",
      "contact:user.base:readonly",
      "contact:user.employee_id:readonly",
      "contact:user.employee_number:read",
      "contact:user.id:readonly",
      "docs:doc",
      "docs:doc:readonly",
      "docs:document.comment:create",
      "docs:document.comment:read",
      "docs:document.comment:update",
      "docs:document.comment:write_only",
      "docs:document.content:read",
      "docs:document.media:download",
      "docs:document.media:upload",
      "docs:document.subscription",
      "docs:document.subscription:read",
      "docs:document:copy",
      "docs:document:export",
      "docs:document:import",
      "docs:event.document_deleted:read",
      "docs:event.document_edited:read",
      "docs:event.document_opened:read",
      "docs:event:subscribe",
      "docs:permission.member",
      "docs:permission.member:auth",
      "docs:permission.member:create",
      "docs:permission.member:delete",
      "docs:permission.member:readonly",
      "docs:permission.member:retrieve",
      "docs:permission.member:transfer",
      "docs:permission.member:update",
      "docs:permission.setting",
      "docs:permission.setting:read",
      "docs:permission.setting:readonly",
      "docs:permission.setting:write_only",
      "docx:document",
      "docx:document.block:convert",
      "docx:document:create",
      "docx:document:readonly",
      "drive:drive",
      "drive:drive.metadata:readonly",
      "drive:drive.search:readonly",
      "drive:drive:readonly",
      "drive:drive:version",
      "drive:drive:version:readonly",
      "drive:export:readonly",
      "drive:file",
      "drive:file.like:readonly",
      "drive:file.meta.sec_label.read_only",
      "drive:file:download",
      "drive:file:readonly",
      "drive:file:upload",
      "drive:file:view_record:readonly",
      "event:ip_list",
      "im:app_feed_card:write",
      "im:biz_entity_tag_relation:read",
      "im:biz_entity_tag_relation:write",
      "im:chat",
      "im:chat.access_event.bot_p2p_chat:read",
      "im:chat.announcement:read",
      "im:chat.announcement:write_only",
      "im:chat.chat_pins:read",
      "im:chat.chat_pins:write_only",
      "im:chat.collab_plugins:read",
      "im:chat.collab_plugins:write_only",
      "im:chat.managers:write_only",
      "im:chat.members:bot_access",
      "im:chat.members:read",
      "im:chat.members:write_only",
      "im:chat.menu_tree:read",
      "im:chat.menu_tree:write_only",
      "im:chat.moderation:read",
      "im:chat.tabs:read",
      "im:chat.tabs:write_only",
      "im:chat.top_notice:write_only",
      "im:chat.widgets:read",
      "im:chat.widgets:write_only",
      "im:chat:create",
      "im:chat:delete",
      "im:chat:moderation:write_only",
      "im:chat:operate_as_owner",
      "im:chat:read",
      "im:chat:readonly",
      "im:chat:update",
      "im:datasync.feed_card.time_sensitive:write",
      "im:message",
      "im:message.group_at_msg:readonly",
      "im:message.group_msg",
      "im:message.p2p_msg:readonly",
      "im:message.pins:read",
      "im:message.pins:write_only",
      "im:message.reactions:read",
      "im:message.reactions:write_only",
      "im:message.urgent",
      "im:message.urgent.status:write",
      "im:message.urgent:phone",
      "im:message.urgent:sms",
      "im:message:readonly",
      "im:message:recall",
      "im:message:send_as_bot",
      "im:message:send_multi_depts",
      "im:message:send_multi_users",
      "im:message:send_sys_msg",
      "im:message:update",
      "im:resource",
      "im:tag:read",
      "im:tag:write",
      "im:url_preview.update",
      "im:user_agent:read",
      "sheets:spreadsheet",
      "sheets:spreadsheet.meta:read",
      "sheets:spreadsheet.meta:write_only",
      "sheets:spreadsheet:create",
      "sheets:spreadsheet:read",
      "sheets:spreadsheet:readonly",
      "sheets:spreadsheet:write_only",
      "space:document.event:read",
      "space:document:delete",
      "space:document:move",
      "space:document:retrieve",
      "space:document:shortcut",
      "space:folder:create",
      "wiki:member:create",
      "wiki:member:retrieve",
      "wiki:member:update",
      "wiki:node:copy",
      "wiki:node:create",
      "wiki:node:move",
      "wiki:node:read",
      "wiki:node:retrieve",
      "wiki:node:update",
      "wiki:setting:read",
      "wiki:setting:write_only",
      "wiki:space:read",
      "wiki:space:retrieve",
      "wiki:space:write_only",
      "wiki:wiki",
      "wiki:wiki:readonly"
    ]
  }
}
```
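The scope list above is long: 174 tenant scopes across 12 namespaces (`base`, `bitable`, `board`, `contact`, `docs`, `docx`, `drive`, `event`, `im`, `sheets`, `space`, `wiki`). A quick sanity check before applying it to the app can therefore help. The Python sketch below assumes the JSON has been saved to a local file. It counts scopes per namespace, flags any scope listed twice, and reports which scopes from a small messaging set are missing. That set (`MESSAGING_SCOPES`) is a hypothetical minimum for a bot that reads direct messages and @-mentions and replies as a bot. It is an assumption for illustration, not a documented OpenClaw or Feishu requirement.

```python
import json
import sys
from collections import Counter

# Hypothetical minimum for a bot that reads DMs / @-mentions and replies.
# An illustrative assumption, not a documented OpenClaw requirement.
MESSAGING_SCOPES = {
    "im:message",
    "im:message:send_as_bot",
    "im:message.p2p_msg:readonly",
    "im:message.group_at_msg:readonly",
}


def summarize_scopes(doc, required=MESSAGING_SCOPES):
    """Summarize a {"scopes": {"tenant": [...]}} permission document."""
    scopes = doc["scopes"]["tenant"]
    # Namespace = text before the first ":" (e.g. "im" in "im:message").
    by_namespace = Counter(s.split(":", 1)[0] for s in scopes)
    repeated = sorted(s for s, n in Counter(scopes).items() if n > 1)
    missing = sorted(required - set(scopes))
    return {
        "total": len(scopes),
        "by_namespace": dict(by_namespace),
        "duplicates": repeated,
        "missing": missing,
    }


if __name__ == "__main__":
    if len(sys.argv) != 2:
        print("usage: python check_scopes.py scopes.json")
    else:
        with open(sys.argv[1], encoding="utf-8") as fh:
            print(json.dumps(summarize_scopes(json.load(fh)), indent=2))
```

Save the JSON above as `scopes.json` (a hypothetical file name) and run `python check_scopes.py scopes.json`. For the list as shown, `duplicates` and `missing` should both come back empty.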

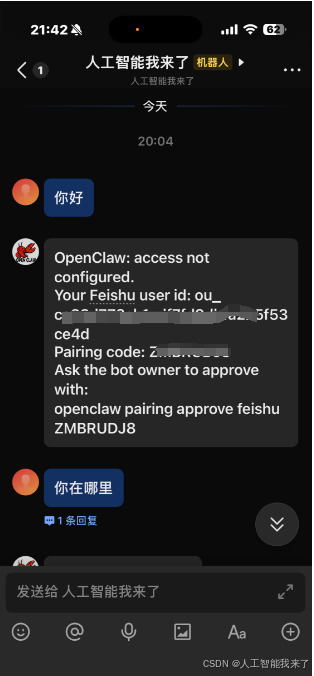

4. Configuration on the WSL side

4.1 Feishu configuration in OpenClaw

```bash
(base) gpu3090@DESKTOP-8IU6393:~/openclaw$ openclaw onboard
Config warnings:\n- plugins.entries.feishu: plugin feishu: duplicate plugin id detected; later plugin may be overridden (/home/gpu3090/.nvm/versions/node/v22.12.0/lib/node_modules/openclaw/extensions/feishu/index.ts)
🦞 OpenClaw 2026.2.23 (b817600) --- Your messages, your servers, your control.
│
◇ Config warnings ────────────────────────────────────────────────────────────────────────────────────╮
│ │
│ - plugins.entries.feishu: plugin feishu: duplicate plugin id detected; later plugin may │
│ be overridden │
│ (/home/gpu3090/.nvm/versions/node/v22.12.0/lib/node_modules/openclaw/extensions/feishu/index.ts) │
│ │
├──────────────────────────────────────────────────────────────────────────────────────────────────────╯
│
◇ Doctor changes ────────────────────────────╮
│ │
│ feishu configured, enabled automatically. │
│ │
├─────────────────────────────────────────────╯
│
◇ Doctor ──────────────────────────────────────────────╮
│ │
│ Run "openclaw doctor --fix" to apply these changes. │
│ │
├───────────────────────────────────────────────────────╯
Config warnings:\n- plugins.entries.feishu: plugin feishu: duplicate plugin id detected; later plugin may be overridden (/home/gpu3090/.nvm/versions/node/v22.12.0/lib/node_modules/openclaw/extensions/feishu/index.ts)
12:00:52 [plugins] plugins.allow is empty; discovered non-bundled plugins may auto-load: feishu (/home/gpu3090/.openclaw/extensions/feishu/index.ts). Set plugins.allow to explicit trusted ids.
Config warnings:\n- plugins.entries.feishu: plugin feishu: duplicate plugin id detected; later plugin may be overridden (/home/gpu3090/.nvm/versions/node/v22.12.0/lib/node_modules/openclaw/extensions/feishu/index.ts)
12:01:01 [plugins] feishu_doc: Registered feishu_doc, feishu_app_scopes
12:01:01 [plugins] feishu_wiki: Registered feishu_wiki tool
12:01:01 [plugins] feishu_drive: Registered feishu_drive tool
12:01:01 [plugins] feishu_bitable: Registered bitable tools
12:01:01 [plugins] feishu: loaded without install/load-path provenance; treat as untracked local code and pin trust via plugins.allow or install records (/home/gpu3090/.openclaw/extensions/feishu/index.ts)
▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄▄
██░▄▄▄░██░▄▄░██░▄▄▄██░▀██░██░▄▄▀██░████░▄▄▀██░███░██
██░███░██░▀▀░██░▄▄▄██░█░█░██░█████░████░▀▀░██░█░█░██
██░▀▀▀░██░█████░▀▀▀██░██▄░██░▀▀▄██░▀▀░█░██░██▄▀▄▀▄██
▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀▀
🦞 OPENCLAW 🦞
┌ OpenClaw onboarding
│
◇ Security ──────────────────────────────────────────────────────────────────────────────╮
│ │
│ Security warning --- please read. │
│ │
│ OpenClaw is a hobby project and still in beta. Expect sharp edges. │
│ This bot can read files and run actions if tools are enabled. │
│ A bad prompt can trick it into doing unsafe things. │
│ │
│ If you're not comfortable with basic security and access control, don't run OpenClaw. │
│ Ask someone experienced to help before enabling tools or exposing it to the internet. │
│ │
│ Recommended baseline: │
│ - Pairing/allowlists + mention gating. │
│ - Sandbox + least-privilege tools. │
│ - Keep secrets out of the agent's reachable filesystem. │
│ - Use the strongest available model for any bot with tools or untrusted inboxes. │
│ │
│ Run regularly: │
│ openclaw security audit --deep │
│ openclaw security audit --fix │
│ │
│ Must read: https://docs.openclaw.ai/gateway/security │
│ │
├─────────────────────────────────────────────────────────────────────────────────────────╯
│
◇ I understand this is powerful and inherently risky. Continue?
│ Yes
│
◇ Onboarding mode
│ QuickStart
│
◇ Existing config detected ─────────╮
│ │
│ workspace: ~/.openclaw/workspace │
│ model: ollama/gpt-oss:20b │
│ gateway.mode: local │
│ gateway.port: 18789 │
│ gateway.bind: loopback │
│ │
├────────────────────────────────────╯
│
◇ Config handling
│ Use existing values
│
◇ QuickStart ─────────────────────────────╮
│ │
│ Keeping your current gateway settings: │
│ Gateway port: 18789 │
│ Gateway bind: Loopback (127.0.0.1) │
│ Gateway auth: Token (default) │
│ Tailscale exposure: Off │
│ Direct to chat channels. │
│ │
├──────────────────────────────────────────╯
Config warnings:\n- plugins.entries.feishu: plugin feishu: duplicate plugin id detected; later plugin may be overridden (/home/gpu3090/.nvm/versions/node/v22.12.0/lib/node_modules/openclaw/extensions/feishu/index.ts)
│
◇ Model/auth provider
│ Skip for now
│
◇ Filter models by provider
│ ollama
│
◇ Default model
│ Keep current (ollama/gpt-oss:20b)
[info]: [ 'client ready' ]
│
◇ Channel status ───────────────────────────────────────────╮
│ │
│ Telegram: needs token │
│ Feishu: connected as ou_eb8c5e1d04bc3f409c348066f53b7aed │
│ WhatsApp: not configured │
│ Discord: not configured │
│ IRC: not configured │
│ Google Chat: not configured │
│ Slack: not configured │
│ Signal: not configured │
│ iMessage: not configured │
│ Google Chat: install plugin to enable │
│ Nostr: install plugin to enable │
│ Microsoft Teams: install plugin to enable │
│ Mattermost: install plugin to enable │
│ Nextcloud Talk: install plugin to enable │
│ Matrix: install plugin to enable │
│ BlueBubbles: install plugin to enable │
│ LINE: install plugin to enable │
│ Zalo: install plugin to enable │
│ Zalo Personal: install plugin to enable │
│ Synology Chat: install plugin to enable │
│ Tlon: install plugin to enable │
│ │
├────────────────────────────────────────────────────────────╯
│
◇ How channels work ───────────────────────────────────────────────────────────────────────╮
│ │
│ DM security: default is pairing; unknown DMs get a pairing code. │
│ Approve with: openclaw pairing approve <channel> <code> │
│ Public DMs require dmPolicy="open" + allowFrom=["*"]. │
│ Multi-user DMs: run: openclaw config set session.dmScope "per-channel-peer" (or │
│ "per-account-channel-peer" for multi-account channels) to isolate sessions. │
│ Docs: channels/pairing │
│ │
│ Telegram: simplest way to get started --- register a bot with @BotFather and get going. │
│ WhatsApp: works with your own number; recommend a separate phone + eSIM. │
│ Discord: very well supported right now. │
│ IRC: classic IRC networks with DM/channel routing and pairing controls. │
│ Google Chat: Google Workspace Chat app with HTTP webhook. │
│ Slack: supported (Socket Mode). │
│ Signal: signal-cli linked device; more setup (David Reagans: "Hop on Discord."). │
│ iMessage: this is still a work in progress. │
│ Feishu: 飞书/Lark enterprise messaging. │
│ Nostr: Decentralized protocol; encrypted DMs via NIP-04. │
│ Microsoft Teams: Bot Framework; enterprise support. │
│ Mattermost: self-hosted Slack-style chat; install the plugin to enable. │
│ Nextcloud Talk: Self-hosted chat via Nextcloud Talk webhook bots. │
│ Matrix: open protocol; install the plugin to enable. │
│ BlueBubbles: iMessage via the BlueBubbles mac app + REST API. │
│ LINE: LINE Messaging API bot for Japan/Taiwan/Thailand markets. │
│ Zalo: Vietnam-focused messaging platform with Bot API. │
│ Zalo Personal: Zalo personal account via QR code login. │
│ Synology Chat: Connect your Synology NAS Chat to OpenClaw with full agent capabilities. │
│ Tlon: decentralized messaging on Urbit; install the plugin to enable. │
│ │
├───────────────────────────────────────────────────────────────────────────────────────────╯
│
◇ Select channel (QuickStart)
│ Feishu/Lark (飞书)
│
◇ Feishu already configured. What do you want to do?
│ Skip (leave as-is)
Config warnings:
- plugins.entries.feishu: plugin feishu: duplicate plugin id detected; later plugin may be overridden (/home/gpu3090/.nvm/versions/node/v22.12.0/lib/node_modules/openclaw/extensions/feishu/index.ts)
Config overwrite: /home/gpu3090/.openclaw/openclaw.json (sha256 383fc01adf5e6d22ff26c8a88e7daab8bdfd4a4380a983c8ec9aef6f9cb67caa -> 3c519fdbdc2ae58df8890e1c8490567bb0287aa70fd50aef0c1ed9d815be14e7, backup=/home/gpu3090/.openclaw/openclaw.json.bak)
Updated ~/.openclaw/openclaw.json
Workspace OK: ~/.openclaw/workspace
Sessions OK: ~/.openclaw/agents/main/sessions
│
◇ Skills status ─────────────╮
│ │
│ Eligible: 10 │
│ Missing requirements: 38 │
│ Unsupported on this OS: 7 │
│ Blocked by allowlist: 0 │
│ │
├─────────────────────────────╯
│
◇ Configure skills now? (recommended)
│ No
│
◇ Hooks ──────────────────────────────────────────────────────────────────╮
│ │
│ Hooks let you automate actions when agent commands are issued. │
│ Example: Save session context to memory when you issue /new or /reset. │
│ │
│ Learn more: https://docs.openclaw.ai/automation/hooks │
│ │
├──────────────────────────────────────────────────────────────────────────╯
│
◇ Enable hooks?
│ Skip for now
Config warnings:
- plugins.entries.feishu: plugin feishu: duplicate plugin id detected; later plugin may be overridden (/home/gpu3090/.nvm/versions/node/v22.12.0/lib/node_modules/openclaw/extensions/feishu/index.ts)
Config overwrite: /home/gpu3090/.openclaw/openclaw.json (sha256 3c519fdbdc2ae58df8890e1c8490567bb0287aa70fd50aef0c1ed9d815be14e7 -> bd6eabd7f470daa88d0ccec4be9099659f7872174a9ee6265f3ca866a5d5adf0, backup=/home/gpu3090/.openclaw/openclaw.json.bak)
│
◇ Systemd ───────────────────────────────────────────────────────────────────────────────╮
│ │
│ Systemd user services are unavailable. Skipping lingering checks and service install. │
│ │
├─────────────────────────────────────────────────────────────────────────────────────────╯
Config warnings:\n- plugins.entries.feishu: plugin feishu: duplicate plugin id detected; later plugin may be overridden (/home/gpu3090/.nvm/versions/node/v22.12.0/lib/node_modules/openclaw/extensions/feishu/index.ts)
│
◇
Feishu: ok
Agents: main (default)
Heartbeat interval: 30m (main)
Session store (main): /home/gpu3090/.openclaw/agents/main/sessions/sessions.json (1 entries)
- agent:main:main (1m ago)
│
◇ Optional apps ────────────────────────╮
│ │
│ Add nodes for extra features: │
│ - macOS app (system + notifications) │
│ - iOS app (camera/canvas) │
│ - Android app (camera/canvas) │
│ │
├────────────────────────────────────────╯
│
◇ Control UI ─────────────────────────────────────────────────────────────────────╮
│ │
│ Web UI: http://127.0.0.1:18789/ │
│ Web UI (with token): │
│ http://127.0.0.1:18789/#token=653d40aee8d790919cd740786a2bc5f6b41b3ef0b20ee905 │
│ Gateway WS: ws://127.0.0.1:18789 │
│ Gateway: reachable │
│ Docs: https://docs.openclaw.ai/web/control-ui │
│ │
├──────────────────────────────────────────────────────────────────────────────────╯
│
◇ Start TUI (best option!) ─────────────────────────────────╮
│ │
│ This is the defining action that makes your agent you. │
│ Please take your time. │
│ The more you tell it, the better the experience will be. │
│ We will send: "Wake up, my friend!" │
│ │
├────────────────────────────────────────────────────────────╯
│
◇ Token ─────────────────────────────────────────────────────────────────────────────────╮
│ │
│ Gateway token: shared auth for the Gateway + Control UI. │
│ Stored in: ~/.openclaw/openclaw.json (gateway.auth.token) or OPENCLAW_GATEWAY_TOKEN. │
│ View token: openclaw config get gateway.auth.token │
│ Generate token: openclaw doctor --generate-gateway-token │
│ Web UI stores a copy in this browser's localStorage (openclaw.control.settings.v1). │
│ Open the dashboard anytime: openclaw dashboard --no-open │
│ If prompted: paste the token into Control UI settings (or use the tokenized dashboard │
│ URL). │
│ │
├─────────────────────────────────────────────────────────────────────────────────────────╯
│
◇ How do you want to hatch your bot?
│ Open the Web UI
│
◇ Dashboard ready ────────────────────────────────────────────────────────────────╮
│ │
│ Dashboard link (with token): │
│ http://127.0.0.1:18789/#token=653d40aee8d790919cd740786a2bc5f6b41b3ef0b20ee905 │
│ Copy/paste this URL in a browser on this machine to control OpenClaw. │
│ No GUI detected. Open from your computer: │
│ ssh -N -L 18789:127.0.0.1:18789 gpu3090@<host> │
│ Then open: │
│ http://localhost:18789/ │
│ http://localhost:18789/#token=653d40aee8d790919cd740786a2bc5f6b41b3ef0b20ee905 │
│ Docs: │
│ https://docs.openclaw.ai/gateway/remote │
│ https://docs.openclaw.ai/web/control-ui │
│ │
├──────────────────────────────────────────────────────────────────────────────────╯
│
◇ Workspace backup ────────────────────────────────────────╮
│ │
│ Back up your agent workspace. │
│ Docs: https://docs.openclaw.ai/concepts/agent-workspace │
│ │
├───────────────────────────────────────────────────────────╯
│
◇ Security ──────────────────────────────────────────────────────╮
│ │
│ Running agents on your computer is risky --- harden your setup: │
│ https://docs.openclaw.ai/security │
│ │
├─────────────────────────────────────────────────────────────────╯
│
◇ Web search (optional) ─────────────────────────────────────────────────────────────────╮
│ │
│ If you want your agent to be able to search the web, you'll need an API key. │
│ │
│ OpenClaw uses Brave Search for the `web_search` tool. Without a Brave Search API key, │
│ web search won't work. │
│ │
│ Set it up interactively: │
│ - Run: openclaw configure --section web │
│ - Enable web_search and paste your Brave Search API key │
│ │
│ Alternative: set BRAVE_API_KEY in the Gateway environment (no config changes). │
│ Docs: https://docs.openclaw.ai/tools/web │
│ │
├─────────────────────────────────────────────────────────────────────────────────────────╯
│
◇ What now ─────────────────────────────────────────────────────────────╮
│ │
│ What now: https://openclaw.ai/showcase ("What People Are Building"). │
│ │
├────────────────────────────────────────────────────────────────────────╯
│
└ Onboarding complete. Use the dashboard link above to control OpenClaw.
```
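The onboarding run repeatedly prints `duplicate plugin id detected` for `feishu`. The plugin is discovered twice:

- once in the copy bundled with the global npm package (`~/.nvm/versions/node/v22.12.0/lib/node_modules/openclaw/extensions/feishu/index.ts`)
- once as a local copy under `~/.openclaw/extensions/feishu/index.ts`

The Python sketch below lists plugin ids that appear under more than one extensions directory, so you can see which copies exist before deciding which one to keep. It assumes, hypothetically, that a plugin's id is the name of the folder holding its `index.ts`. That matches the two paths in the warning but is not taken from OpenClaw's documentation, and the helper names are illustrative only.

```python
from pathlib import Path

# Extension directories taken from the warnings above; adjust the Node
# version (v22.12.0) to match your own nvm install.
EXTENSION_ROOTS = [
    "~/.openclaw/extensions",
    "~/.nvm/versions/node/v22.12.0/lib/node_modules/openclaw/extensions",
]


def find_index_files(roots):
    """Return every <root>/<plugin>/index.ts found under the given roots."""
    files = []
    for root in roots:
        root_path = Path(root).expanduser()
        if root_path.is_dir():  # skip roots that do not exist
            files.extend(sorted(root_path.glob("*/index.ts")))
    return files


def duplicate_ids(index_files):
    """Group index.ts paths by plugin id and keep ids seen more than once.

    Hypothetical assumption: the plugin id is the name of the folder that
    holds index.ts (e.g. .../extensions/feishu/index.ts -> "feishu").
    """
    groups = {}
    for f in index_files:
        path = Path(f)
        groups.setdefault(path.parent.name, []).append(path)
    return {pid: paths for pid, paths in groups.items() if len(paths) > 1}


if __name__ == "__main__":
    dups = duplicate_ids(find_index_files(EXTENSION_ROOTS))
    if not dups:
        print("no duplicate plugin ids found")
    for pid, paths in dups.items():
        print(f"duplicate plugin id {pid!r}:")
        for p in paths:
            print(f"  {p}")
```

Run it with `python find_duplicate_plugins.py` (a hypothetical file name). The same output also contains a separate `plugins.allow is empty` line, which asks for the trusted plugin ids to be listed explicitly in `plugins.allow`.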

```bash
(base) gpu3090@DESKTOP-8IU6393:~/openclaw$ openclaw pairing approve feishu ZMBRUDJ8
Config warnings:\n- plugins.entries.feishu: plugin feishu: duplicate plugin id detected; later plugin may be overridden (/home/gpu3090/.nvm/versions/node/v22.12.0/lib/node_modules/openclaw/extensions/feishu/index.ts)
12:06:02 [plugins] plugins.allow is empty; discovered non-bundled plugins may auto-load: feishu (/home/gpu3090/.openclaw/extensions/feishu/index.ts). Set plugins.allow to explicit trusted ids.
Config warnings:\n- plugins.entries.feishu: plugin feishu: duplicate plugin id detected; later plugin may be overridden (/home/gpu3090/.nvm/versions/node/v22.12.0/lib/node_modules/openclaw/extensions/feishu/index.ts)
12:06:04 [plugins] feishu_doc: Registered feishu_doc, feishu_app_scopes
12:06:04 [plugins] feishu_wiki: Registered feishu_wiki tool
12:06:04 [plugins] feishu_drive: Registered feishu_drive tool
12:06:04 [plugins] feishu_bitable: Registered bitable tools
12:06:04 [plugins] feishu: loaded without install/load-path provenance; treat as untracked local code and pin trust via plugins.allow or install records (/home/gpu3090/.openclaw/extensions/feishu/index.ts)
🦞 OpenClaw 2026.2.23 (b817600) --- End-to-end encrypted, drama-to-drama excluded.
│
◇ Config warnings ────────────────────────────────────────────────────────────────────────────────────╮
│ │
│ - plugins.entries.feishu: plugin feishu: duplicate plugin id detected; later plugin may │
│ be overridden │
│ (/home/gpu3090/.nvm/versions/node/v22.12.0/lib/node_modules/openclaw/extensions/feishu/index.ts) │
│ │
├──────────────────────────────────────────────────────────────────────────────────────────────────────╯
│
◇ Doctor changes ────────────────────────────╮
│ │
│ feishu configured, enabled automatically. │
│ │
├─────────────────────────────────────────────╯
│
◇ Doctor ──────────────────────────────────────────────╮
│ │
│ Run "openclaw doctor --fix" to apply these changes. │
│ │
├───────────────────────────────────────────────────────╯
Approved feishu sender ou_ca33d772eb1cdf7fd9dbfa225f53ce4d.
```

Start the large model in Ollama:

```bash
PS C:\Users\Administrator> wsl -u gpu3090
Now using node v22.12.0 (npm v10.9.0)
(base) gpu3090@DESKTOP-8IU6393:/mnt/c/Users/Administrator$ cd
(base) gpu3090@DESKTOP-8IU6393:~$ OLLAMA_HOST=0.0.0.0:12346 OLLAMA_MODELS=/home/gpu3090/.ollama/models ollama serve &
[1] 15030
(base) gpu3090@DESKTOP-8IU6393:~$ time=2026-02-24T20:10:33.806+08:00 level=INFO source=routes.go:1663 msg="server config" env="map[CUDA_VISIBLE_DEVICES: GGML_VK_VISIBLE_DEVICES: GPU_DEVICE_ORDINAL: HIP_VISIBLE_DEVICES: HSA_OVERRIDE_GFX_VERSION: HTTPS_PROXY:http://127.0.0.1:7897 HTTP_PROXY:http://127.0.0.1:7897 NO_PROXY:172.31.*,172.30.*,172.29.*,172.28.*,172.27.*,172.26.*,172.25.*,172.24.*,172.23.*,172.22.*,172.21.*,172.20.*,172.19.*,172.18.*,172.17.*,172.16.*,10.*,192.168.*,127.*,localhost,<local> OLLAMA_CONTEXT_LENGTH:0 OLLAMA_DEBUG:INFO OLLAMA_EDITOR: OLLAMA_FLASH_ATTENTION:false OLLAMA_GPU_OVERHEAD:0 OLLAMA_HOST:http://0.0.0.0:12346 OLLAMA_KEEP_ALIVE:5m0s OLLAMA_KV_CACHE_TYPE: OLLAMA_LLM_LIBRARY: OLLAMA_LOAD_TIMEOUT:5m0s OLLAMA_MAX_LOADED_MODELS:0 OLLAMA_MAX_QUEUE:512 OLLAMA_MODELS:/home/gpu3090/.ollama/models OLLAMA_MULTIUSER_CACHE:false OLLAMA_NEW_ENGINE:false OLLAMA_NOHISTORY:false OLLAMA_NOPRUNE:false OLLAMA_NO_CLOUD:false OLLAMA_NUM_PARALLEL:1 OLLAMA_ORIGINS:[http://localhost https://localhost http://localhost:* https://localhost:* http://127.0.0.1 https://127.0.0.1 http://127.0.0.1:* https://127.0.0.1:* http://0.0.0.0 https://0.0.0.0 http://0.0.0.0:* https://0.0.0.0:* app://* file://* tauri://* vscode-webview://* vscode-file://*] OLLAMA_REMOTES:[ollama.com] OLLAMA_SCHED_SPREAD:false OLLAMA_VULKAN:false ROCR_VISIBLE_DEVICES: http_proxy:http://127.0.0.1:7897 https_proxy:http://127.0.0.1:7897 no_proxy:172.31.*,172.30.*,172.29.*,172.28.*,172.27.*,172.26.*,172.25.*,172.24.*,172.23.*,172.22.*,172.21.*,172.20.*,172.19.*,172.18.*,172.17.*,172.16.*,10.*,192.168.*,127.*,localhost,<local>]"
time=2026-02-24T20:10:33.810+08:00 level=INFO source=routes.go:1665 msg="Ollama cloud disabled: false"
time=2026-02-24T20:10:34.150+08:00 level=INFO source=images.go:473 msg="total blobs: 53"
time=2026-02-24T20:10:34.313+08:00 level=INFO source=images.go:480 msg="total unused blobs removed: 0"
time=2026-02-24T20:10:34.458+08:00 level=INFO source=routes.go:1718 msg="Listening on [::]:12346 (version 0.16.3)"
time=2026-02-24T20:10:34.473+08:00 level=INFO source=runner.go:67 msg="discovering available GPUs..."
time=2026-02-24T20:10:34.502+08:00 level=INFO source=server.go:431 msg="starting runner" cmd="/usr/local/bin/ollama runner --ollama-engine --port 46037"
time=2026-02-24T20:10:36.614+08:00 level=INFO source=runner.go:106 msg="experimental Vulkan support disabled. To enable, set OLLAMA_VULKAN=1"
time=2026-02-24T20:10:36.615+08:00 level=INFO source=server.go:431 msg="starting runner" cmd="/usr/local/bin/ollama runner --ollama-engine --port 46237"
time=2026-02-24T20:10:38.176+08:00 level=INFO source=server.go:431 msg="starting runner" cmd="/usr/local/bin/ollama runner --ollama-engine --port 46265"
time=2026-02-24T20:10:38.186+08:00 level=INFO source=server.go:431 msg="starting runner" cmd="/usr/local/bin/ollama runner --ollama-engine --port 45607"
time=2026-02-24T20:10:38.416+08:00 level=INFO source=types.go:42 msg="inference compute" id=GPU-67135303-3c02-f35c-3e58-dc2c1b4892fc filter_id="" library=CUDA compute=8.9 name=CUDA0 description="NVIDIA GeForce RTX 4090 D" libdirs=ollama,cuda_v13 driver=13.0 pci_id=0000:07:00.0 type=discrete total="24.0 GiB" available="20.5 GiB"
time=2026-02-24T20:10:38.416+08:00 level=INFO source=routes.go:1768 msg="vram-based default context" total_vram="24.0 GiB" default_num_ctx=32768
time=2026-02-24T20:10:55.284+08:00 level=INFO source=server.go:431 msg="starting runner" cmd="/usr/local/bin/ollama runner --ollama-engine --port 45515"
time=2026-02-24T20:10:55.902+08:00 level=INFO source=server.go:247 msg="enabling flash attention"
time=2026-02-24T20:10:55.903+08:00 level=INFO source=server.go:431 msg="starting runner" cmd="/usr/local/bin/ollama runner --ollama-engine --model /home/gpu3090/.ollama/models/blobs/sha256-e7b273f9636059a689e3ddcab3716e4f65abe0143ac978e46673ad0e52d09efb --port 46267"
time=2026-02-24T20:10:55.903+08:00 level=INFO source=sched.go:491 msg="system memory" total="62.7 GiB" free="60.9 GiB" free_swap="16.0 GiB"
time=2026-02-24T20:10:55.903+08:00 level=INFO source=sched.go:498 msg="gpu memory" id=GPU-67135303-3c02-f35c-3e58-dc2c1b4892fc library=CUDA available="20.1 GiB" free="20.5 GiB" minimum="457.0 MiB" overhead="0 B"
time=2026-02-24T20:10:55.903+08:00 level=INFO source=server.go:757 msg="loading model" "model layers"=25 requested=-1
time=2026-02-24T20:10:55.919+08:00 level=INFO source=runner.go:1411 msg="starting ollama engine"
time=2026-02-24T20:10:55.941+08:00 level=INFO source=runner.go:1446 msg="Server listening on 127.0.0.1:46267"
time=2026-02-24T20:10:55.949+08:00 level=INFO source=runner.go:1284 msg=load request="{Operation:fit LoraPath:[] Parallel:1 BatchSize:512 FlashAttention:Enabled KvSize:32768 KvCacheType: NumThreads:12 GPULayers:25[ID:GPU-67135303-3c02-f35c-3e58-dc2c1b4892fc Layers:25(0..24)] MultiUserCache:false ProjectorPath: MainGPU:0 UseMmap:false}"
time=2026-02-24T20:10:56.075+08:00 level=INFO source=ggml.go:136 msg="" architecture=gptoss file_type=MXFP4 name="" description="" num_tensors=459 num_key_values=32
load_backend: loaded CPU backend from /usr/local/lib/ollama/libggml-cpu-haswell.so
ggml_cuda_init: GGML_CUDA_FORCE_MMQ: no
ggml_cuda_init: GGML_CUDA_FORCE_CUBLAS: no
ggml_cuda_init: found 1 CUDA devices:
Device 0: NVIDIA GeForce RTX 4090 D, compute capability 8.9, VMM: yes, ID: GPU-67135303-3c02-f35c-3e58-dc2c1b4892fc
load_backend: loaded CUDA backend from /usr/local/lib/ollama/cuda_v13/libggml-cuda.so
time=2026-02-24T20:10:56.244+08:00 level=INFO source=ggml.go:104 msg=system CPU.0.SSE3=1 CPU.0.SSSE3=1 CPU.0.AVX=1 CPU.0.AVX2=1 CPU.0.F16C=1 CPU.0.FMA=1 CPU.0.BMI2=1 CPU.0.LLAMAFILE=1 CPU.1.LLAMAFILE=1 CUDA.0.ARCHS=750,800,860,870,890,900,1000,1030,1100,1200,1210 CUDA.0.USE_GRAPHS=1 CUDA.0.PEER_MAX_BATCH_SIZE=128 compiler=cgo(gcc)
time=2026-02-24T20:10:58.623+08:00 level=INFO source=runner.go:1284 msg=load request="{Operation:alloc LoraPath:[] Parallel:1 BatchSize:512 FlashAttention:Enabled KvSize:32768 KvCacheType: NumThreads:12 GPULayers:25[ID:GPU-67135303-3c02-f35c-3e58-dc2c1b4892fc Layers:25(0..24)] MultiUserCache:false ProjectorPath: MainGPU:0 UseMmap:false}"
time=2026-02-24T20:10:59.089+08:00 level=INFO source=runner.go:1284 msg=load request="{Operation:commit LoraPath:[] Parallel:1 BatchSize:512 FlashAttention:Enabled KvSize:32768 KvCacheType: NumThreads:12 GPULayers:25[ID:GPU-67135303-3c02-f35c-3e58-dc2c1b4892fc Layers:25(0..24)] MultiUserCache:false ProjectorPath: MainGPU:0 UseMmap:false}"
time=2026-02-24T20:10:59.089+08:00 level=INFO source=ggml.go:482 msg="offloading 24 repeating layers to GPU"
time=2026-02-24T20:10:59.089+08:00 level=INFO source=ggml.go:489 msg="offloading output layer to GPU"
time=2026-02-24T20:10:59.089+08:00 level=INFO source=ggml.go:494 msg="offloaded 25/25 layers to GPU"
time=2026-02-24T20:10:59.089+08:00 level=INFO source=device.go:240 msg="model weights" device=CUDA0 size="11.8 GiB"
time=2026-02-24T20:10:59.089+08:00 level=INFO source=device.go:245 msg="model weights" device=CPU size="1.1 GiB"
time=2026-02-24T20:10:59.089+08:00 level=INFO source=device.go:251 msg="kv cache" device=CUDA0 size="876.0 MiB"
time=2026-02-24T20:10:59.089+08:00 level=INFO source=device.go:262 msg="compute graph" device=CUDA0 size="202.3 MiB"
time=2026-02-24T20:10:59.089+08:00 level=INFO source=device.go:267 msg="compute graph" device=CPU size="5.6 MiB"
time=2026-02-24T20:10:59.089+08:00 level=INFO source=device.go:272 msg="total memory" size="13.9 GiB"
time=2026-02-24T20:10:59.089+08:00 level=INFO source=sched.go:566 msg="loaded runners" count=1
time=2026-02-24T20:10:59.089+08:00 level=INFO source=server.go:1350 msg="waiting for llama runner to start responding"
time=2026-02-24T20:10:59.090+08:00 level=INFO source=server.go:1384 msg="waiting for server to become available" status="llm server loading model"
time=2026-02-24T20:13:29.638+08:00 level=INFO source=server.go:1388 msg="llama runner started in 150.89 seconds"
[GIN] 2026/02/24 - 20:13:33 | 200 | 2m36s | 127.0.0.1 | POST "/v1/chat/completions"
[GIN] 2026/02/24 - 20:14:13 | 200 | 2.66893432s | 127.0.0.1 | POST "/v1/chat/completions"
[GIN] 2026/02/24 - 20:14:15 | 200 | 1.55337028s | 127.0.0.1 | POST "/v1/chat/completions"
[GIN] 2026/02/24 - 20:14:19 | 200 | 3.807682829s | 127.0.0.1 | POST "/v1/chat/completions"
[GIN] 2026/02/24 - 20:16:44 | 200 | 4.57320368s | 127.0.0.1 | POST "/v1/chat/completions"
ggml_backend_cuda_device_get_memory device GPU-67135303-3c02-f35c-3e58-dc2c1b4892fc utilizing NVML memory reporting free: 7589896192 total: 25757220864
time=2026-02-24T20:21:55.251+08:00 level=INFO source=server.go:431 msg="starting runner" cmd="/usr/local/bin/ollama runner --ollama-engine --port 46347"
time=2026-02-24T20:23:16.264+08:00 level=INFO source=server.go:431 msg="starting runner" cmd="/usr/local/bin/ollama runner --ollama-engine --port 44657"
time=2026-02-24T20:23:16.818+08:00 level=INFO source=server.go:247 msg="enabling flash attention"
time=2026-02-24T20:23:16.819+08:00 level=INFO source=server.go:431 msg="starting runner" cmd="/usr/local/bin/ollama runner --ollama-engine --model /home/gpu3090/.ollama/models/blobs/sha256-e7b273f9636059a689e3ddcab3716e4f65abe0143ac978e46673ad0e52d09efb --port 44781"
time=2026-02-24T20:23:16.820+08:00 level=INFO source=sched.go:491 msg="system memory" total="62.7 GiB" free="60.9 GiB" free_swap="16.0 GiB"
time=2026-02-24T20:23:16.820+08:00 level=INFO source=sched.go:498 msg="gpu memory" id=GPU-67135303-3c02-f35c-3e58-dc2c1b4892fc library=CUDA available="20.0 GiB" free="20.4 GiB" minimum="457.0 MiB" overhead="0 B"
```
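The `[GIN]` lines show requests from 127.0.0.1 to `POST "/v1/chat/completions"`, Ollama's OpenAI-compatible endpoint. These are presumably OpenClaw's calls into the model. To check the model independently of Feishu and OpenClaw, the Python sketch below sends the same kind of request using only the standard library. The base URL and model tag come from this setup (`OLLAMA_HOST=0.0.0.0:12346`, `ollama/gpt-oss:20b`); the helper names are hypothetical.

It also includes a preload call, because of two things visible in the log:

- The first request took 2m36s, since loading the model took 150.89 seconds.
- The model was loaded again at 20:23:16, which suggests it had been unloaded after the default `OLLAMA_KEEP_ALIVE` of 5 minutes.

Ollama's `/api/generate` accepts a request with a model and no prompt to load that model ahead of time. A `keep_alive` of `-1` keeps it in memory.

```python
import json
import sys
import urllib.request

# Taken from this setup: OLLAMA_HOST=0.0.0.0:12346 and OpenClaw's default
# model "ollama/gpt-oss:20b" (the Ollama tag is "gpt-oss:20b").
BASE_URL = "http://127.0.0.1:12346"
MODEL = "gpt-oss:20b"

# The shell exports HTTP_PROXY=http://127.0.0.1:7897. urllib honors that
# variable and may not match "127.*" in NO_PROXY, so use an opener with no
# proxies for this local server.
OPENER = urllib.request.build_opener(urllib.request.ProxyHandler({}))


def json_request(path, payload, base_url=BASE_URL):
    """Build a POST request carrying a JSON body (hypothetical helper)."""
    return urllib.request.Request(
        base_url + path,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def chat_request(prompt, model=MODEL):
    """Same endpoint as the [GIN] lines: POST /v1/chat/completions."""
    return json_request("/v1/chat/completions", {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "stream": False,
    })


def preload_request(model=MODEL, keep_alive=-1):
    """Load the model without a prompt; keep_alive=-1 keeps it in memory."""
    return json_request("/api/generate", {
        "model": model,
        "keep_alive": keep_alive,
        "stream": False,
    })


def send(req, timeout=300):
    """Send a request and decode the JSON reply.

    The timeout is generous because the first load above took ~150 s.
    """
    with OPENER.open(req, timeout=timeout) as resp:
        return json.load(resp)


if __name__ == "__main__":
    # Only talk to the server when a prompt is given on the command line.
    if len(sys.argv) > 1:
        send(preload_request())
        reply = send(chat_request(" ".join(sys.argv[1:])))
        print(reply["choices"][0]["message"]["content"])
```

Run it as `python ollama_check.py "Say hello"` (a hypothetical file name). With no arguments it only defines the helpers. As a server-wide alternative to the per-request `keep_alive`, set `OLLAMA_KEEP_ALIVE` when launching `ollama serve`; the config dump above shows it as `5m0s`.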
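The `device.go` lines in the log show where the 20B model's memory goes once it is loaded with a 32768-token context (`KvSize:32768`). The Python sketch below parses those lines and sums the sizes per device. The regular expression is written against the exact lines shown here and is an assumption about Ollama's log format, which may change between versions.

```python
import re
from collections import defaultdict

# Matches device.go lines such as:
#   msg="model weights" device=CUDA0 size="11.8 GiB"
# The "total memory" line has no device= field, so it is not matched.
LINE_RE = re.compile(
    r'msg="[^"]+" device=(?P<device>\S+) size="(?P<value>[\d.]+) (?P<unit>[KMG]iB)"'
)
TO_GIB = {"KiB": 1 / 1024**2, "MiB": 1 / 1024, "GiB": 1.0}


def memory_by_device(log_text):
    """Sum the per-device allocations (in GiB) reported while loading a model."""
    totals = defaultdict(float)
    for m in LINE_RE.finditer(log_text):
        totals[m["device"]] += float(m["value"]) * TO_GIB[m["unit"]]
    return dict(totals)
```

For the load above, this works out to about 12.85 GiB on `CUDA0` and about 1.11 GiB on the CPU, roughly 13.96 GiB in total. Ollama's own `total memory` line reports 13.9 GiB; the small difference most likely comes from each logged figure being rounded. Either way, the GPU share fits well inside the 20.1 GiB reported as available, which is consistent with `offloaded 25/25 layers to GPU`.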