I've been meaning to learn you for ages, k8s. Here I come! Haha!

- OS: CentOS 7

- K8s version: 1.23.17

- Rancher version: 2.7.9

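1. Machine preparation

Three machines in total:

```shell
192.168.1.102 = master
192.168.1.130 = worker1
192.168.1.133 = worker2
```

All three machines have exactly the same kernel and OS information, shown below: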

```shell
[root@master ~]# uname -a
Linux master 3.10.0-1160.el7.x86_64 #1 SMP Mon Oct 19 16:18:59 UTC 2020 x86_64 x86_64 x86_64 GNU/Linux
[root@master ~]# cat /etc/os-release
NAME="CentOS Linux"
VERSION="7 (Core)"
ID="centos"
ID_LIKE="rhel fedora"
VERSION_ID="7"
PRETTY_NAME="CentOS Linux 7 (Core)"
ANSI_COLOR="0;31"
CPE_NAME="cpe:/o:centos:centos:7"
HOME_URL="https://www.centos.org/"
BUG_REPORT_URL="https://bugs.centos.org/"
CENTOS_MANTISBT_PROJECT="CentOS-7"
CENTOS_MANTISBT_PROJECT_VERSION="7"
REDHAT_SUPPORT_PRODUCT="centos"
REDHAT_SUPPORT_PRODUCT_VERSION="7"
[root@master ~]#
```

2. Writing the one-click k8s install script (version 1.23.17)
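The script in this section assumes CentOS 7 throughout (yum, firewalld, the el7 repos), so a quick pre-flight check before running it can save some confusion. The following is only a sketch: `os_id_version` and `is_centos7` are hypothetical helper names of mine, not part of the install script, and they simply read the same `/etc/os-release` file shown above.

```shell
# Hypothetical helper: print "<ID> <VERSION_ID>" from an os-release style file.
# Defaults to /etc/os-release when no path is given.
os_id_version() {
    file="${1:-/etc/os-release}"
    # Source the file in a subshell so its variables do not leak into the caller.
    ( . "$file" && echo "${ID} ${VERSION_ID}" )
}

# Hypothetical helper: succeed only on the OS this post was written for.
is_centos7() {
    [ "$(os_id_version "$1")" = "centos 7" ]
}
```

A possible use is `is_centos7 || echo "this script was only tried on CentOS 7"` at the top of a wrapper, before calling the installer.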

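If k8s was installed on these machines before, clean it up first so you start from a clean state:

```shell
systemctl stop kubelet
kubeadm reset -f
rm -rf /etc/kubernetes/
rm -rf $HOME/.kube/
yum remove -y kubelet kubeadm kubectl
```

Note: you need to replace the mirror address in the script below (PRIVATE_REGISTRY="xxxx.xuanyuan.run") with your own. I bought one for myself.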
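Since the script writes one containerd `hosts.toml` per upstream registry, all with the same shape, it is easy to forget the placeholder and end up with five broken mirror files. Here is a small sketch of how that file could be generated with a guard against the placeholder. `render_hosts_toml` is a hypothetical function of mine, and the `xxxx.` prefix check is just an assumption based on the placeholder value used in this post; `skip_verify = true` is copied from the script and disables TLS verification for the private mirror.

```shell
# Hypothetical helper: print a containerd hosts.toml that tries a private
# mirror first and then a fallback mirror.
# Arguments: <upstream registry> <private mirror> <fallback mirror>
render_hosts_toml() {
    upstream="$1"; private="$2"; fallback="$3"
    # Refuse to render while the placeholder from this post is still in place.
    case "$private" in
        ""|xxxx.*)
            echo "PRIVATE_REGISTRY is still a placeholder: '$private'" >&2
            return 1
            ;;
    esac
    cat <<EOF
server = "https://${upstream}"

[host."https://${private}"]
  capabilities = ["pull", "resolve"]
  skip_verify = true

[host."https://${fallback}"]
  capabilities = ["pull", "resolve"]
EOF
}
```

For example, `render_hosts_toml docker.io "$PRIVATE_REGISTRY" docker.m.daocloud.io > /etc/containerd/certs.d/docker.io/hosts.toml` would produce the same content as the Docker Hub block in the script.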

bash

#!/bin/bash

set -e

# ================== One-click Kubernetes cluster install script ==================
# Uses China-based package mirrors plus a custom registry mirror throughout
# ===============================================================================

# ------------------------- Configuration -------------------------
declare -A NODES=(
    ["192.168.1.102"]="master"
    ["192.168.1.130"]="worker1"
    ["192.168.1.133"]="worker2"
)

POD_NETWORK_CIDR="10.244.0.0/16"
K8S_VERSION="1.23.17"
IMAGE_REPO="registry.aliyuncs.com/google_containers"
PAUSE_IMAGE="${IMAGE_REPO}/pause:3.6"

# Your private registry mirror address
PRIVATE_REGISTRY="xxxx.xuanyuan.run"

# ------------------------- Colored output -------------------------
RED='\033[0;31m'
GREEN='\033[0;32m'
YELLOW='\033[1;33m'
NC='\033[0m'

log() { echo -e "${GREEN}[INFO] $1${NC}"; }
warn() { echo -e "${YELLOW}[WARN] $1${NC}"; }
error() { echo -e "${RED}[ERROR] $1${NC}"; exit 1; }

# ------------------------- Common functions -------------------------
check_root() {
    [[ $EUID -eq 0 ]] || error "Please run this script as root."
}

# Returns the first non-loopback IPv4 address on this machine.
get_local_ip() {
    ip -4 addr show | grep -oP '(?<=inet\s)\d+(\.\d+){3}' | grep -v '^127\.' | head -n1
}

setup_hosts() {
    log "Configuring /etc/hosts entries..."
    # Remove stale entries for our hostnames and IPs first.
    for ip in "${!NODES[@]}"; do
        hostname=${NODES[$ip]}
        # Match the hostname as a whole field so names that merely end
        # in e.g. "master" are left alone.
        sed -i "/\s${hostname}$/d" /etc/hosts
        sed -i "/^${ip}\s/d" /etc/hosts
    done
    for ip in "${!NODES[@]}"; do
        hostname=${NODES[$ip]}
        echo "$ip $hostname" >> /etc/hosts
    done
}

check_hostname() {
    local_ip=$(get_local_ip)
    expected_name=${NODES[$local_ip]}
    [[ -n "$expected_name" ]] || error "Local IP $local_ip is not defined in the NODES list."
    current_name=$(hostname)
    if [[ "$current_name" != "$expected_name" ]]; then
        warn "Current hostname ($current_name) does not match the expected one ($expected_name); changing it..."
        hostnamectl set-hostname "$expected_name"
        export HOSTNAME="$expected_name"
        log "Hostname changed to $expected_name"
    fi
}

disable_firewall() {
    log "Disabling the firewall..."
    systemctl stop firewalld 2>/dev/null || true
    systemctl disable firewalld 2>/dev/null || true
}

disable_swap() {
    log "Disabling swap..."
    swapoff -a
    # Comment out swap entries; skip lines that are already commented so
    # re-running the script does not keep stacking '#' characters.
    sed -i '/^#/!s/.*\sswap\s.*/#&/' /etc/fstab
}

disable_selinux() {
    log "Disabling SELinux..."
    setenforce 0 2>/dev/null || true
    sed -i 's/^SELINUX=enforcing/SELINUX=disabled/' /etc/selinux/config 2>/dev/null || true
}

load_kernel_modules() {
    log "Loading kernel modules..."
    cat > /etc/modules-load.d/k8s.conf <<EOF
br_netfilter
overlay
EOF
    modprobe br_netfilter 2>/dev/null || true
    modprobe overlay 2>/dev/null || true
}

set_sysctl() {
    log "Configuring kernel parameters..."
    cat > /etc/sysctl.d/k8s.conf <<EOF
net.bridge.bridge-nf-call-ip6tables = 1
net.bridge.bridge-nf-call-iptables = 1
net.ipv4.ip_forward = 1
vm.swappiness = 0
EOF
    sysctl --system >/dev/null
}

install_containerd() {
    log "Installing containerd..."
    yum remove -y containerd.io docker-ce docker-ce-cli 2>/dev/null || true
    yum install -y yum-utils device-mapper-persistent-data lvm2
    yum-config-manager --add-repo https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo
    yum install -y containerd.io

    log "Configuring containerd..."
    mkdir -p /etc/containerd
    containerd config default > /etc/containerd/config.toml
    sed -i 's/SystemdCgroup = false/SystemdCgroup = true/' /etc/containerd/config.toml
    sed -i 's|config_path = ""|config_path = "/etc/containerd/certs.d"|' /etc/containerd/config.toml

    # Configure mirrors for every registry
    mkdir -p /etc/containerd/certs.d/docker.io
    mkdir -p /etc/containerd/certs.d/registry.k8s.io
    mkdir -p /etc/containerd/certs.d/k8s.gcr.io
    mkdir -p /etc/containerd/certs.d/quay.io
    mkdir -p /etc/containerd/certs.d/ghcr.io

    # Docker Hub: use your private registry mirror first
    cat > /etc/containerd/certs.d/docker.io/hosts.toml <<EOF
server = "https://docker.io"

[host."https://${PRIVATE_REGISTRY}"]
  capabilities = ["pull", "resolve"]
  skip_verify = true

[host."https://docker.m.daocloud.io"]
  capabilities = ["pull", "resolve"]
EOF

    # registry.k8s.io
    cat > /etc/containerd/certs.d/registry.k8s.io/hosts.toml <<EOF
server = "https://registry.k8s.io"

[host."https://${PRIVATE_REGISTRY}"]
  capabilities = ["pull", "resolve"]
  skip_verify = true

[host."https://k8s.m.daocloud.io"]
  capabilities = ["pull", "resolve"]
EOF

    # k8s.gcr.io
    cat > /etc/containerd/certs.d/k8s.gcr.io/hosts.toml <<EOF
server = "https://k8s.gcr.io"

[host."https://${PRIVATE_REGISTRY}"]
  capabilities = ["pull", "resolve"]
  skip_verify = true

[host."https://k8s.m.daocloud.io"]
  capabilities = ["pull", "resolve"]
EOF

    # quay.io
    cat > /etc/containerd/certs.d/quay.io/hosts.toml <<EOF
server = "https://quay.io"

[host."https://${PRIVATE_REGISTRY}"]
  capabilities = ["pull", "resolve"]
  skip_verify = true

[host."https://quay.m.daocloud.io"]
  capabilities = ["pull", "resolve"]
EOF

    # ghcr.io
    cat > /etc/containerd/certs.d/ghcr.io/hosts.toml <<EOF
server = "https://ghcr.io"

[host."https://${PRIVATE_REGISTRY}"]
  capabilities = ["pull", "resolve"]
  skip_verify = true

[host."https://ghcr.m.daocloud.io"]
  capabilities = ["pull", "resolve"]
EOF

    systemctl daemon-reload
    systemctl restart containerd
    systemctl enable containerd

    # Configure crictl
    cat > /etc/crictl.yaml <<EOF
runtime-endpoint: unix:///run/containerd/containerd.sock
image-endpoint: unix:///run/containerd/containerd.sock
timeout: 10
debug: false
EOF

    log "containerd installed and configured."
}

install_kubeadm() {
    log "Installing kubeadm / kubelet / kubectl (version ${K8S_VERSION})..."

    # Clean up any previous installation
    if command -v kubeadm &>/dev/null; then
        warn "Existing kubeadm installation detected, cleaning up..."
        kubeadm reset -f 2>/dev/null || true
        yum remove -y kubelet kubeadm kubectl 2>/dev/null || true
        rm -rf /etc/kubernetes /var/lib/etcd /var/lib/kubelet "$HOME/.kube" /etc/cni/net.d/*
        iptables -F && iptables -t nat -F && iptables -t mangle -F && iptables -X 2>/dev/null || true
    fi

    cat > /etc/yum.repos.d/kubernetes.repo <<EOF
[kubernetes]
name=Kubernetes
baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64/
enabled=1
gpgcheck=0
repo_gpgcheck=0
EOF

    yum install -y kubelet-${K8S_VERSION} kubeadm-${K8S_VERSION} kubectl-${K8S_VERSION} --disableexcludes=kubernetes

    mkdir -p /var/lib/kubelet
    echo "KUBELET_KUBEADM_ARGS=\"--pod-infra-container-image=${PAUSE_IMAGE}\"" > /var/lib/kubelet/kubeadm-flags.env
    systemctl enable kubelet
    log "kubeadm installation complete."
}

# ------------------------- Master only -------------------------
setup_master() {
    local_ip=$(get_local_ip)
    log "Initializing the Kubernetes control plane (master IP: $local_ip)..."

    # The YAML below must keep its indentation; only the EOF marker
    # has to stay at the start of the line.
    cat > /tmp/kubeadm-config.yaml <<EOF
apiVersion: kubeadm.k8s.io/v1beta3
kind: InitConfiguration
localAPIEndpoint:
  advertiseAddress: ${local_ip}
  bindPort: 6443
nodeRegistration:
  criSocket: unix:///run/containerd/containerd.sock
  imagePullPolicy: IfNotPresent
  taints: []
---
apiVersion: kubeadm.k8s.io/v1beta3
kind: ClusterConfiguration
kubernetesVersion: v${K8S_VERSION}
imageRepository: ${IMAGE_REPO}
networking:
  podSubnet: ${POD_NETWORK_CIDR}
  serviceSubnet: 10.96.0.0/12
dns:
  imageRepository: ${IMAGE_REPO}
  imageTag: v1.8.6
---
apiVersion: kubelet.config.k8s.io/v1beta1
kind: KubeletConfiguration
cgroupDriver: systemd
EOF

    log "Pulling the images Kubernetes needs..."
    kubeadm config images pull --config /tmp/kubeadm-config.yaml

    log "Initializing the cluster..."
    kubeadm init --config /tmp/kubeadm-config.yaml --upload-certs
    rm -f /tmp/kubeadm-config.yaml

    log "Configuring kubectl..."
    mkdir -p "$HOME/.kube"
    # -f rather than -i: an interactive overwrite prompt would stall the script.
    cp -f /etc/kubernetes/admin.conf "$HOME/.kube/config"
    chown "$(id -u):$(id -g)" "$HOME/.kube/config"

    log "Waiting for the API server to become ready..."
    sleep 10
    until kubectl get nodes &>/dev/null; do
        sleep 3
    done

    # Install Flannel
    install_flannel

    # Generate the join command
    kubeadm token create --print-join-command > /root/kubeadm_join_cmd.txt 2>/dev/null || true
    if [[ ! -s /root/kubeadm_join_cmd.txt ]]; then
        # Fallback: build the join command by hand from a fresh token and the CA hash.
        kubeadm token create
        token=$(kubeadm token list | grep -v TOKEN | head -1 | awk '{print $1}')
        cert_hash=$(openssl x509 -pubkey -in /etc/kubernetes/pki/ca.crt | openssl rsa -pubin -outform der 2>/dev/null | openssl dgst -sha256 -hex | sed 's/^.* //')
        echo "kubeadm join ${local_ip}:6443 --token ${token} --discovery-token-ca-cert-hash sha256:${cert_hash}" > /root/kubeadm_join_cmd.txt
    fi

    echo ""
    echo "=========================================="
    log "Kubernetes cluster initialization complete!"
    echo "=========================================="
    kubectl get nodes -o wide
    echo ""
    echo "Worker join command: /root/kubeadm_join_cmd.txt"
    cat /root/kubeadm_join_cmd.txt
    echo "=========================================="
}

install_flannel() {
    log "Installing the Flannel CNI network plugin..."

    # Pre-pull the Flannel images
    log "Pre-pulling Flannel images..."
    crictl pull ${PRIVATE_REGISTRY}/flannel/flannel:v0.24.3 2>/dev/null || true
    crictl pull ${PRIVATE_REGISTRY}/flannel/flannel-cni-plugin:v1.2.0 2>/dev/null || true

    cat > /tmp/kube-flannel.yml <<'FLANNELEOF'

apiVersion: v1
kind: Namespace
metadata:
  labels:
    k8s-app: flannel
    pod-security.kubernetes.io/enforce: privileged
  name: kube-flannel
---
apiVersion: v1
kind: ServiceAccount
metadata:
  labels:
    k8s-app: flannel
  name: flannel
  namespace: kube-flannel
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  labels:
    k8s-app: flannel
  name: flannel
rules:
- apiGroups:
  - ""
  resources:
  - pods
  verbs:
  - get
- apiGroups:
  - ""
  resources:
  - nodes
  verbs:
  - get
  - list
  - watch
- apiGroups:
  - ""
  resources:
  - nodes/status
  verbs:
  - patch
- apiGroups:
  - networking.k8s.io
  resources:
  - clustercidrs
  verbs:
  - list
  - watch
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  labels:
    k8s-app: flannel
  name: flannel
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: flannel
subjects:
- kind: ServiceAccount
  name: flannel
  namespace: kube-flannel

---

apiVersion: v1
kind: ConfigMap
metadata:
  name: kube-flannel-cfg
  namespace: kube-flannel
  labels:
    tier: node
    k8s-app: flannel
    app: flannel
data:
  cni-conf.json: |
    {
      "name": "cbr0",
      "cniVersion": "0.3.1",
      "plugins": [
        {
          "type": "flannel",
          "delegate": {
            "hairpinMode": true,
            "isDefaultGateway": true
          }
        },
        {
          "type": "portmap",
          "capabilities": {
            "portMappings": true
          }
        }
      ]
    }
  net-conf.json: |
    {
      "Network": "10.244.0.0/16",
      "Backend": {
        "Type": "vxlan"
      }
    }

---

apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: kube-flannel-ds
  namespace: kube-flannel
  labels:
    tier: node
    app: flannel
    k8s-app: flannel
spec:
  selector:
    matchLabels:
      app: flannel
      k8s-app: flannel
  template:
    metadata:
      labels:
        tier: node
        app: flannel
        k8s-app: flannel
    spec:
      affinity:
        nodeAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            nodeSelectorTerms:
            - matchExpressions:
              - key: kubernetes.io/os
                operator: In
                values:
                - linux
      hostNetwork: true
      priorityClassName: system-node-critical
      tolerations:
      - operator: Exists
        effect: NoSchedule
      serviceAccountName: flannel
      initContainers:
      - name: install-cni-plugin
        image: __REGISTRY__/flannel/flannel-cni-plugin:v1.2.0
        command:
        - cp
        args:
        - -f
        - /flannel
        - /opt/cni/bin/flannel
        volumeMounts:
        - name: cni-plugin
          mountPath: /opt/cni/bin
      - name: install-cni
        image: __REGISTRY__/flannel/flannel:v0.24.3
        command:
        - /bin/sh
        - -c
        - |
          set -e
          cp -f /etc/kube-flannel/cni-conf.json /etc/cni/net.d/10-flannel.conflist
        volumeMounts:
        - name: cni
          mountPath: /etc/cni/net.d
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
      containers:
      - name: kube-flannel
        image: __REGISTRY__/flannel/flannel:v0.24.3
        command:
        - /opt/bin/flanneld
        args:
        - --ip-masq
        - --kube-subnet-mgr
        resources:
          requests:
            cpu: "100m"
            memory: "50Mi"
        securityContext:
          privileged: false
          capabilities:
            add: ["NET_ADMIN", "NET_RAW"]
        env:
        - name: POD_NAME
          valueFrom:
            fieldRef:
              fieldPath: metadata.name
        - name: POD_NAMESPACE
          valueFrom:
            fieldRef:
              fieldPath: metadata.namespace
        - name: EVENT_QUEUE_DEPTH
          value: "5000"
        volumeMounts:
        - name: run
          mountPath: /run/flannel
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
        - name: xtables-lock
          mountPath: /run/xtables.lock
      volumes:
      - name: run
        hostPath:
          path: /run/flannel
      - name: cni-plugin
        hostPath:
          path: /opt/cni/bin
      - name: cni
        hostPath:
          path: /etc/cni/net.d
      - name: flannel-cfg
        configMap:
          name: kube-flannel-cfg
      - name: xtables-lock
        hostPath:
          path: /run/xtables.lock
          type: FileOrCreate

FLANNELEOF

    # Substitute the registry address into the manifest
    sed -i "s|__REGISTRY__|${PRIVATE_REGISTRY}|g" /tmp/kube-flannel.yml
    kubectl apply -f /tmp/kube-flannel.yml
    rm -f /tmp/kube-flannel.yml

    log "Waiting for the node to become Ready..."
    sleep 10
    kubectl wait --for=condition=Ready node/${HOSTNAME} --timeout=300s 2>/dev/null || true

    log "Waiting for the Flannel pods to start..."
    kubectl wait --for=condition=Ready pod -l k8s-app=flannel -n kube-flannel --timeout=180s 2>/dev/null || true
}

# ------------------------- Worker only -------------------------
setup_worker() {
    log "Configuring worker node..."
    if [[ -f /root/kubeadm_join_cmd.txt ]]; then
        join_cmd=$(cat /root/kubeadm_join_cmd.txt)
        warn "Using the local join command file..."
    else
        echo "Enter the kubeadm join command obtained from the master node:"
        read -r -p "> " join_cmd
        [[ -n "$join_cmd" ]] || error "No join command entered, exiting."
    fi
    log "Joining the cluster..."
    # Left unquoted on purpose so the command is split into words.
    $join_cmd
    log "Worker node has joined the cluster."
}

# ------------------------- Reset -------------------------
reset_cluster() {
    warn "About to reset the Kubernetes cluster configuration..."
    read -p "Confirm reset? (y/n) " -n 1 -r; echo
    if [[ $REPLY =~ ^[Yy]$ ]]; then
        kubeadm reset -f 2>/dev/null || true
        rm -rf /etc/kubernetes /var/lib/etcd /var/lib/kubelet "$HOME/.kube" /etc/cni/net.d/*
        iptables -F && iptables -t nat -F && iptables -t mangle -F && iptables -X 2>/dev/null || true
        systemctl restart containerd
        log "Reset complete."
    fi
}

# ------------------------- Main logic -------------------------
main() {
    check_root
    local_ip=$(get_local_ip)
    log "Local IP: ${local_ip}"

    case "${1:-}" in
        --reset) reset_cluster; exit 0 ;;
    esac

    setup_hosts
    check_hostname
    disable_firewall
    disable_swap
    disable_selinux
    load_kernel_modules
    set_sysctl
    install_containerd
    install_kubeadm

    # The role comes from the NODES table: "master" or any name starting with "worker".
    case ${NODES[$local_ip]} in
        master) setup_master ;;
        worker*) setup_worker ;;
        *) error "Unknown role, IP: ${local_ip}" ;;
    esac

    log "Script finished."
    kubectl get nodes -o wide 2>/dev/null || true
}

main "$@"

3. Run the one-click install script on each of the 3 machines in turn

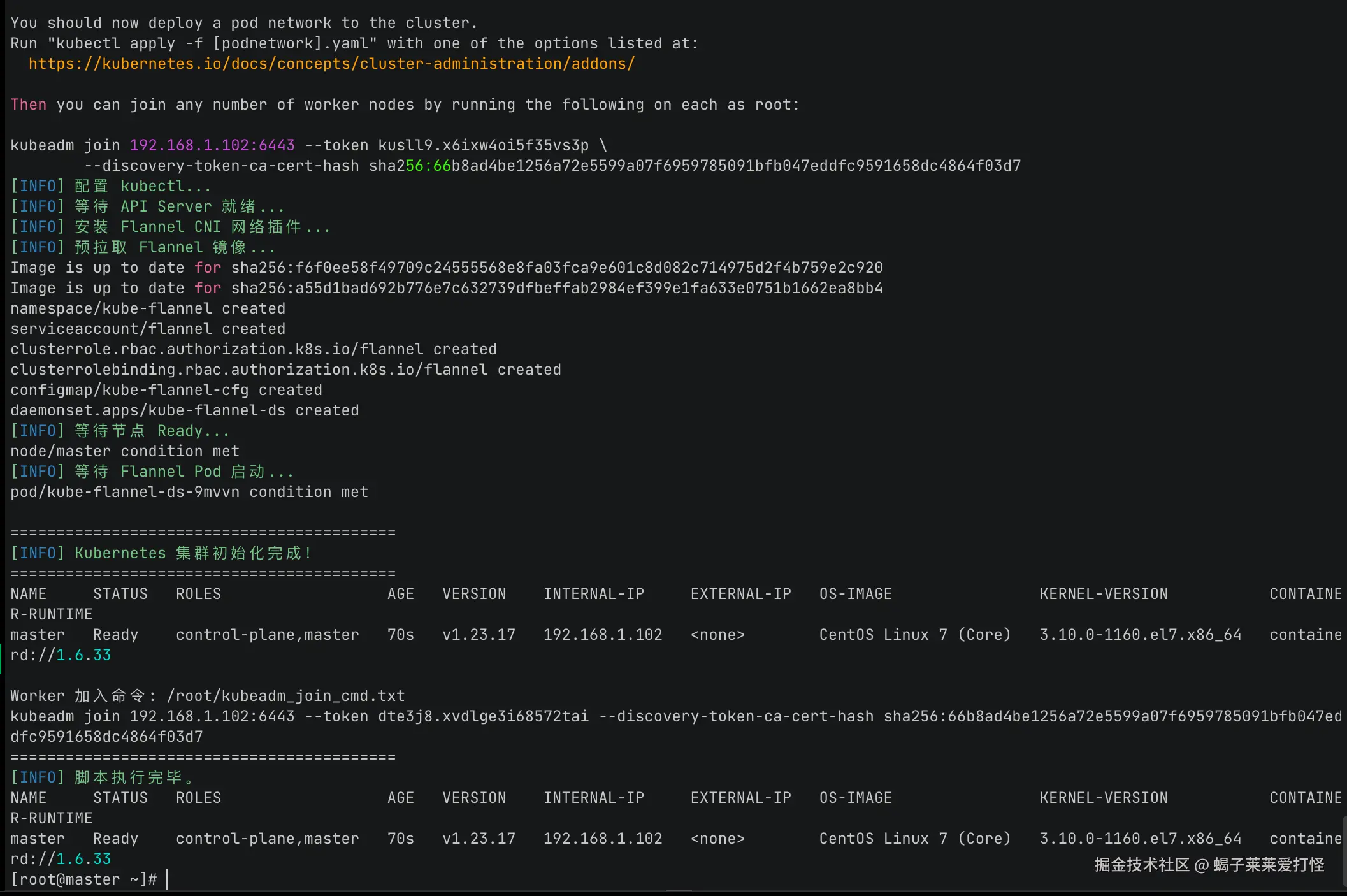
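The same script does different things on each machine because it looks up the local IP and then dispatches on the role name. As a rough illustration of that dispatch, here is a standalone sketch. Both function names are hypothetical, and reading `/etc/hosts` (as written by `setup_hosts`) instead of the `NODES` array is my own substitution so the snippet can run outside the script; the `case` patterns are the ones from `main`.

```shell
# Hypothetical helper: print the hostname mapped to an IP in a hosts file.
# Defaults to /etc/hosts when no file is given.
hosts_name_for_ip() {
    awk -v ip="$1" '$1 == ip { print $2; exit }' "${2:-/etc/hosts}"
}

# Hypothetical helper: print which setup function the script would run
# for a given role name, using the same patterns as the case in main().
action_for_name() {
    case "$1" in
        master)  echo "setup_master" ;;
        worker*) echo "setup_worker" ;;
        *)       echo "unknown" ;;
    esac
}
```

After the script has run once on a machine, `action_for_name "$(hosts_name_for_ip 192.168.1.130)"` should report `setup_worker` for this post's addresses; an `unknown` result would correspond to the script's "unknown role" error.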

Run the one-click install script on the master node (192.168.1.102):

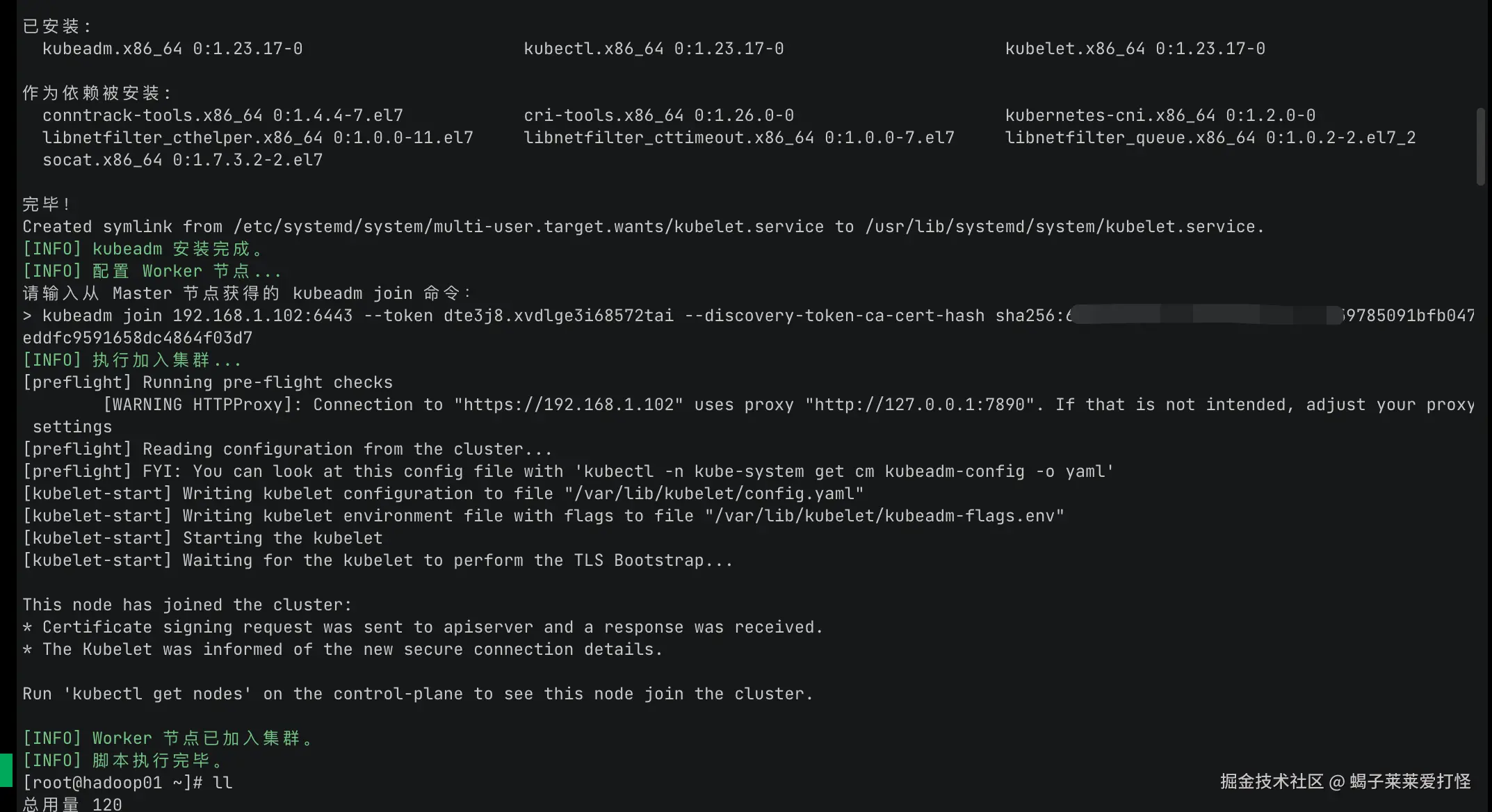

Run the same script on the other two machines, the worker nodes (192.168.1.130 and 192.168.1.133):

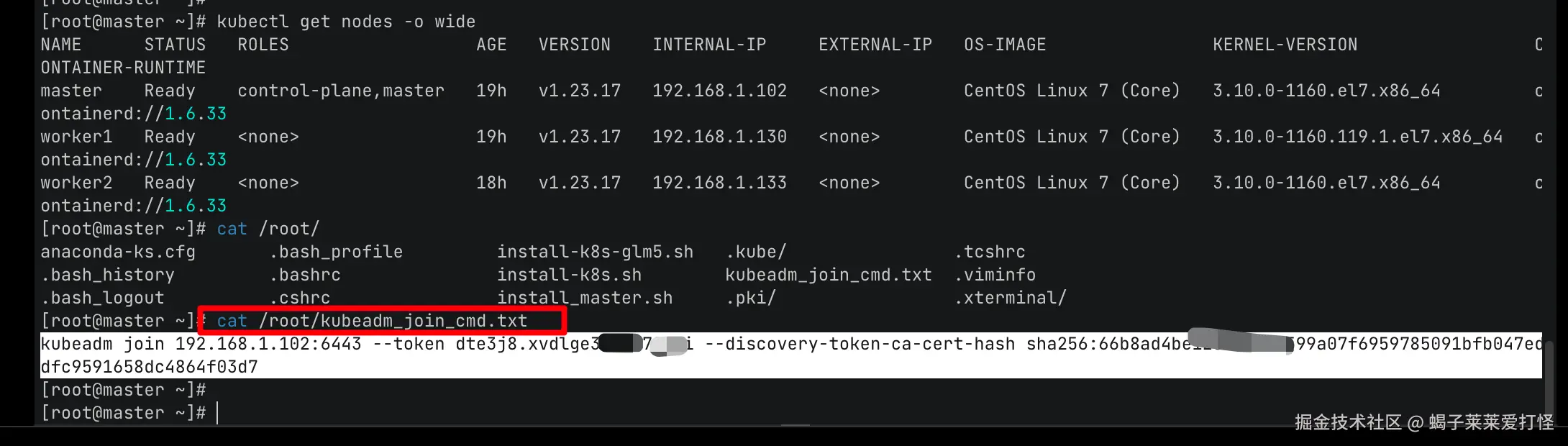

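Note that on a worker node the script prompts for the join command partway through. The join command is saved on the master node in /root/kubeadm_join_cmd.txt.

Copy it from there, paste it at the kubeadm join prompt on the worker node, and press Enter: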

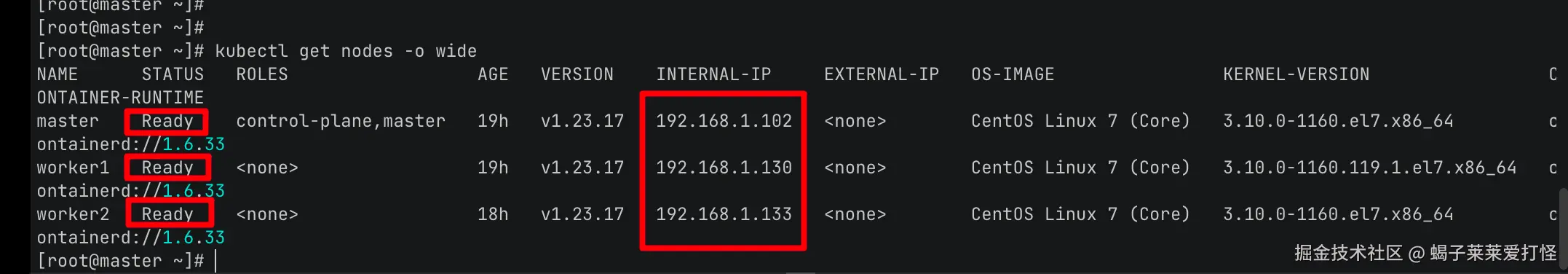
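Because the worker side of the script runs whatever is pasted at that prompt as root, it may be worth checking that the text really has the shape of a join command first. The following is only a sketch: `looks_like_join_cmd` is a hypothetical helper of mine, and the pattern assumes the single-line form that the script saves to /root/kubeadm_join_cmd.txt (address, token, and CA cert hash, in that order).

```shell
# Hypothetical helper: succeed if the argument has the shape
#   kubeadm join <host>:<port> --token <6 chars>.<16 chars> \
#       --discovery-token-ca-cert-hash sha256:<64 hex digits>
# written on a single line, optionally followed by trailing spaces.
looks_like_join_cmd() {
    printf '%s\n' "$1" | grep -Eq \
        '^kubeadm join [^ ]+:[0-9]+ --token [a-z0-9]{6}\.[a-z0-9]{16} --discovery-token-ca-cert-hash sha256:[0-9a-f]{64} *$'
}
```

A possible use on the worker is `looks_like_join_cmd "$join_cmd" || echo "that does not look like a join command"`. If the token has expired in the meantime (kubeadm tokens last 24 hours by default), running `kubeadm token create --print-join-command` on the master prints a fresh command.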

4. Checking the nodes with kubectl

Finally, check from the master node; you can see that the cluster has been set up successfully:
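The script itself ends with `kubectl get nodes -o wide`, and the same command can be run by hand on the master at any time. If you would rather have a yes/no answer than read the table, something like the following sketch could work. `count_not_ready` is a hypothetical helper; it assumes the usual column layout of `kubectl get nodes --no-headers`, where the second column is STATUS.

```shell
# Hypothetical helper: count nodes whose STATUS column is not exactly "Ready".
# Reads the output of `kubectl get nodes --no-headers` on stdin.
# Note: a cordoned node ("Ready,SchedulingDisabled") is counted as not ready.
count_not_ready() {
    awk '$2 != "Ready" { n++ } END { print n + 0 }'
}
```

On the master, `kubectl get nodes --no-headers | count_not_ready` should print 0 once all three nodes have joined and Flannel is running.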

5. Setting up Rancher and importing the k8s cluster

PS: the Rancher version used here is v2.7.9.

Rancher is installed with docker here:

shell

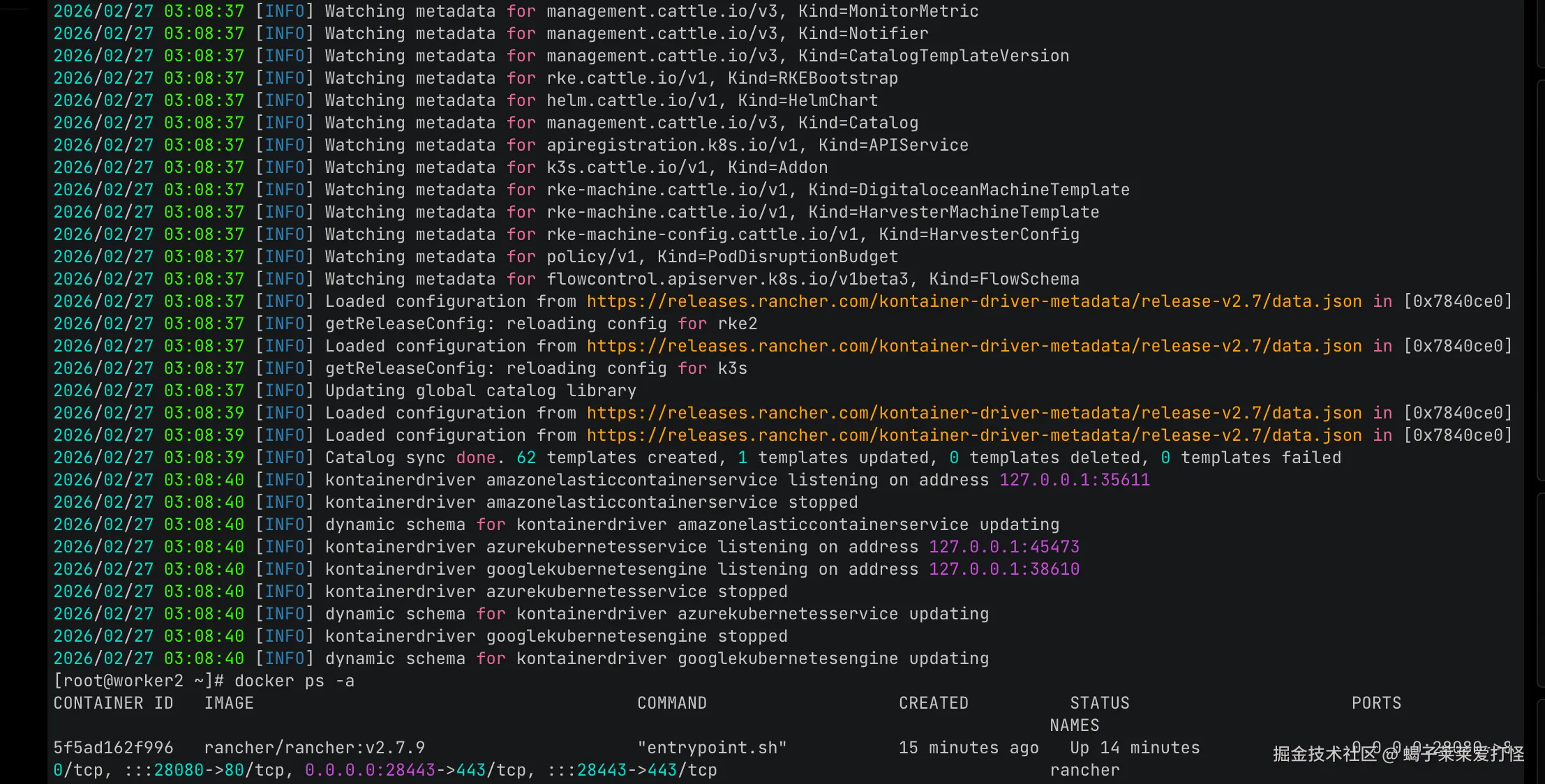

docker run -d --restart=unless-stopped -p 28080:80 -p 28443:443 -v /opt/rancher:/var/lib/rancher --privileged --name rancher rancher/rancher:v2.7.9安装完成后可以看到日志和容器状态:

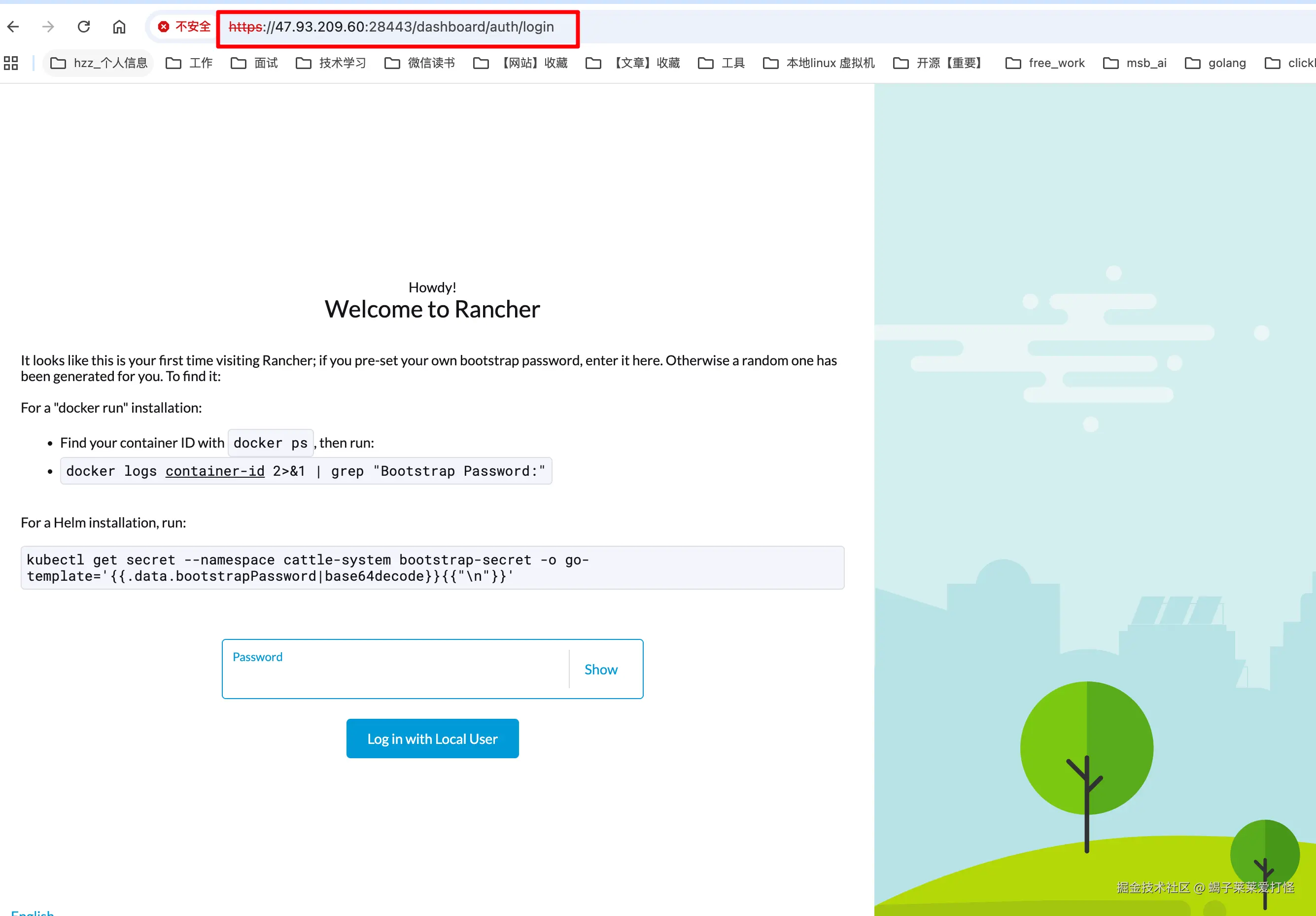

访问rancher(https://47.93.209.60:28443):

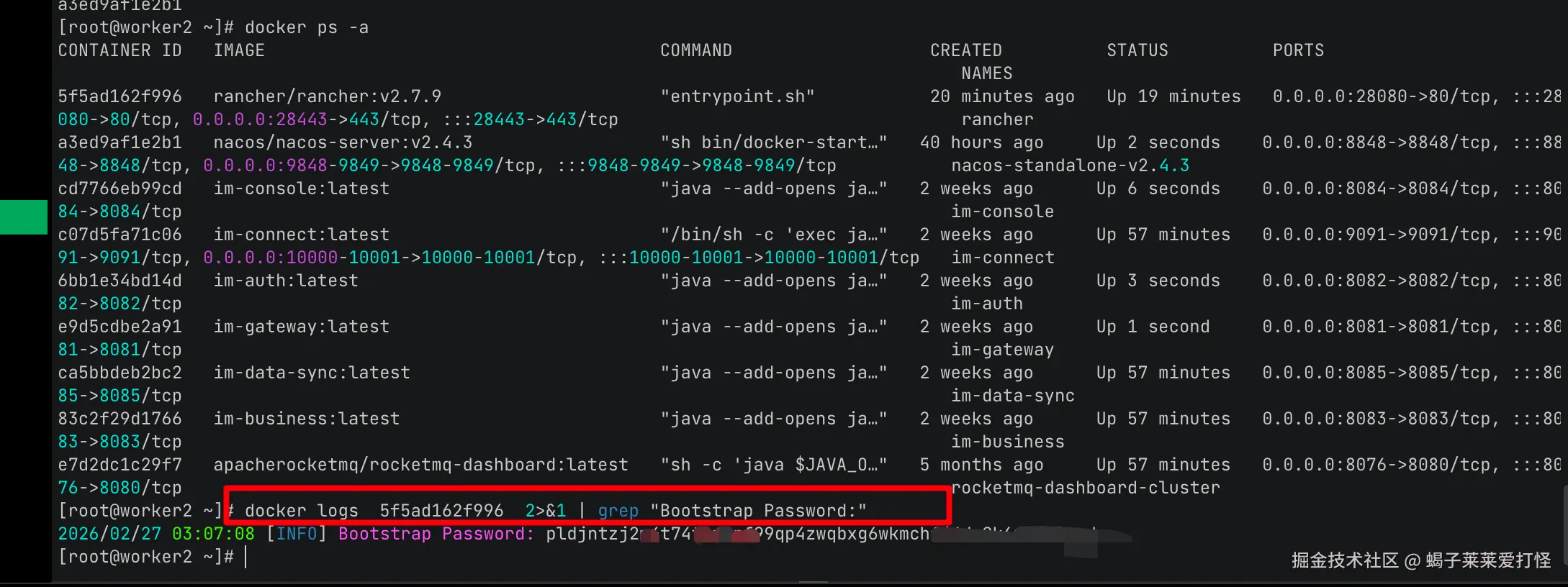

查看黏贴初始密码:

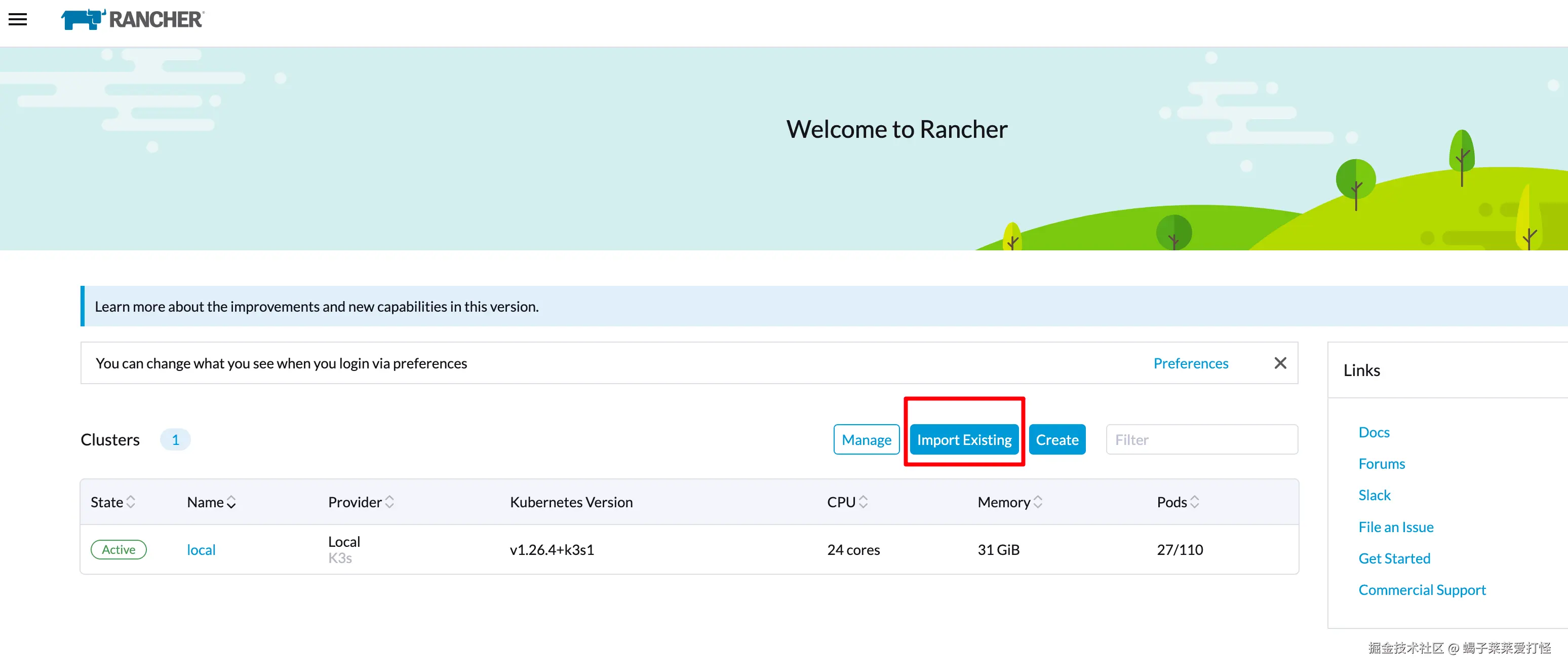

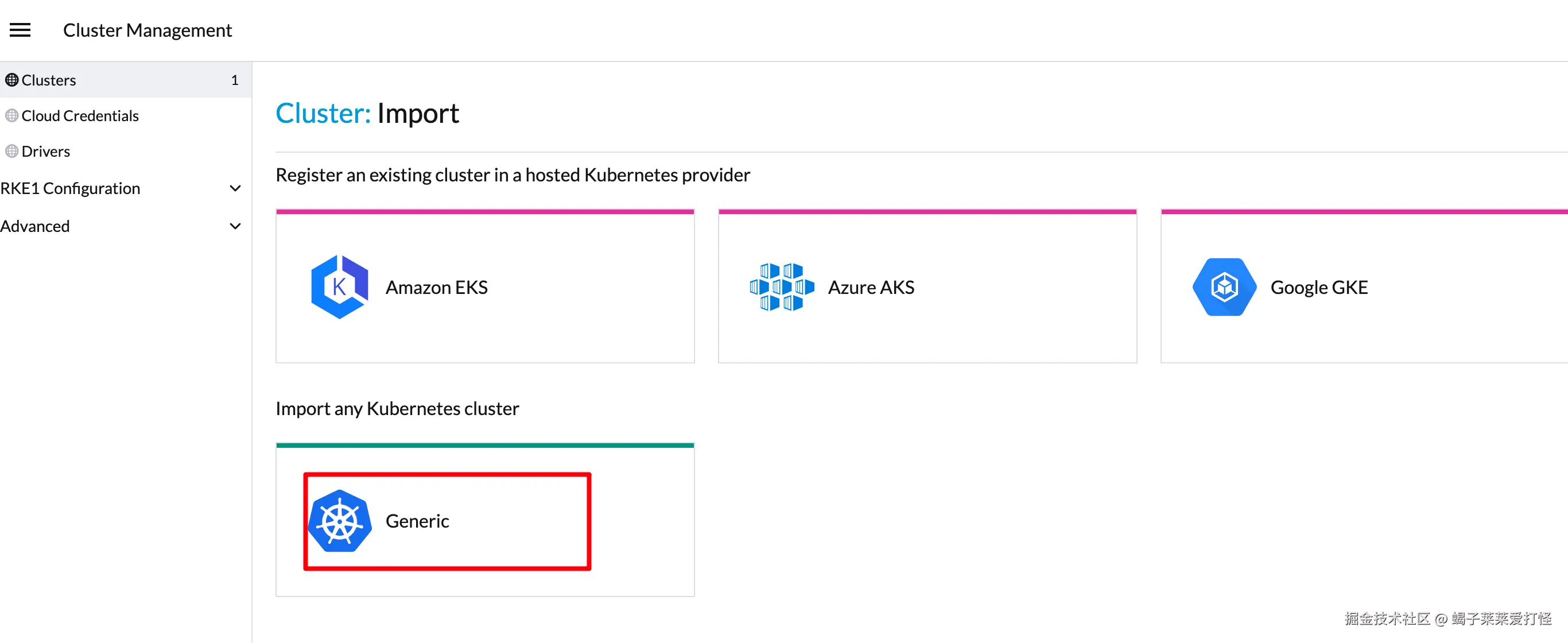
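The initial password is printed in the container log on a line containing `Bootstrap Password:`. As a small convenience, that value can be cut out of the log with a filter like the one below. `extract_bootstrap_password` is a hypothetical helper name; the container name `rancher` in the usage note comes from the `--name rancher` flag in the docker run command above.

```shell
# Hypothetical helper: print the value after "Bootstrap Password:" from
# Rancher log text on stdin (first match only).
extract_bootstrap_password() {
    sed -n 's/.*Bootstrap Password: *//p' | head -n 1
}
```

Usage would be `docker logs rancher 2>&1 | extract_bootstrap_password`. The `2>&1` matters because docker may send the container's log lines to stderr.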

点击导入已存在的k8s集群:

自己搭建的这种k8s一般都选择通用:

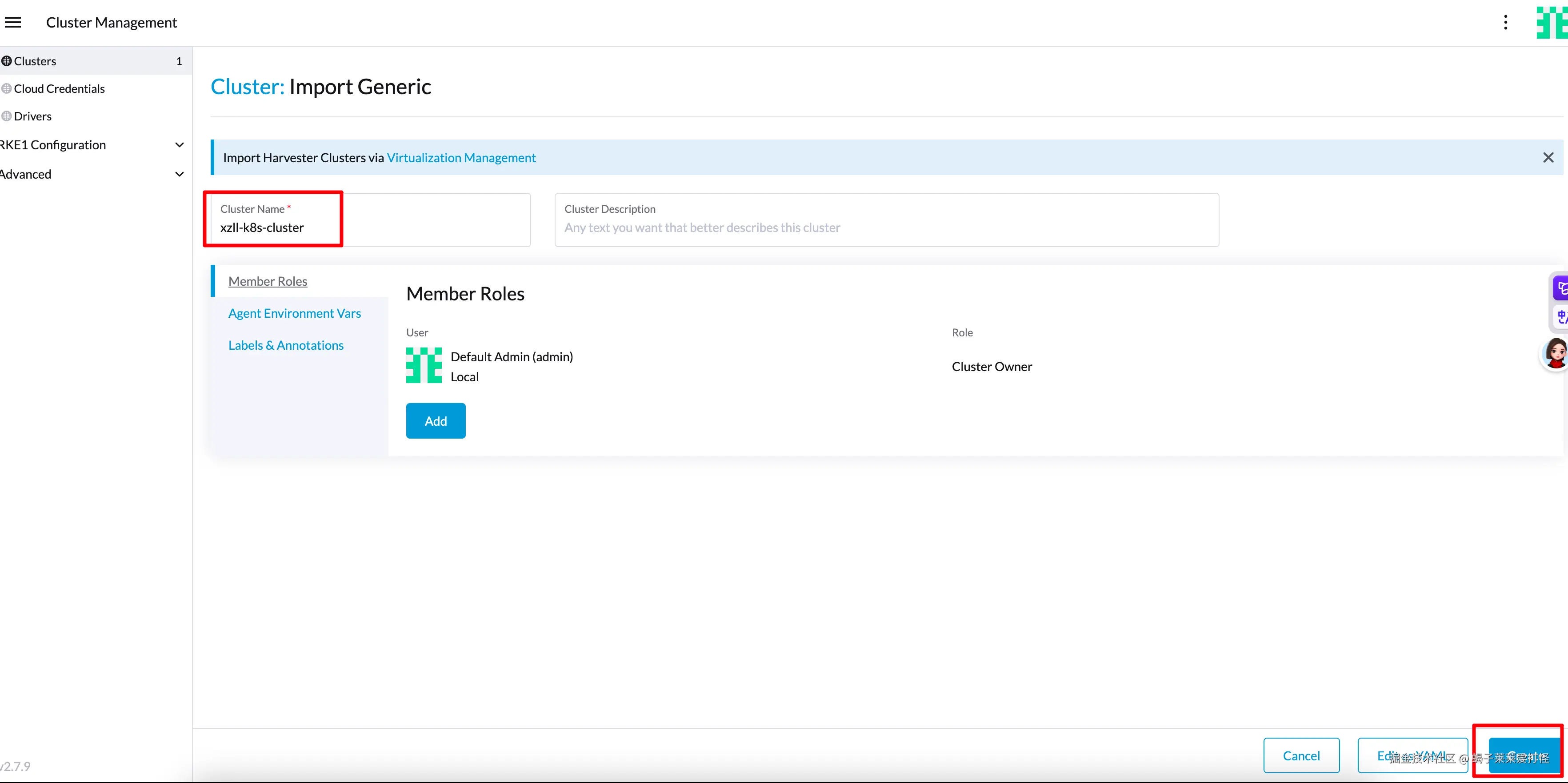

起个名字 然后点击创建:

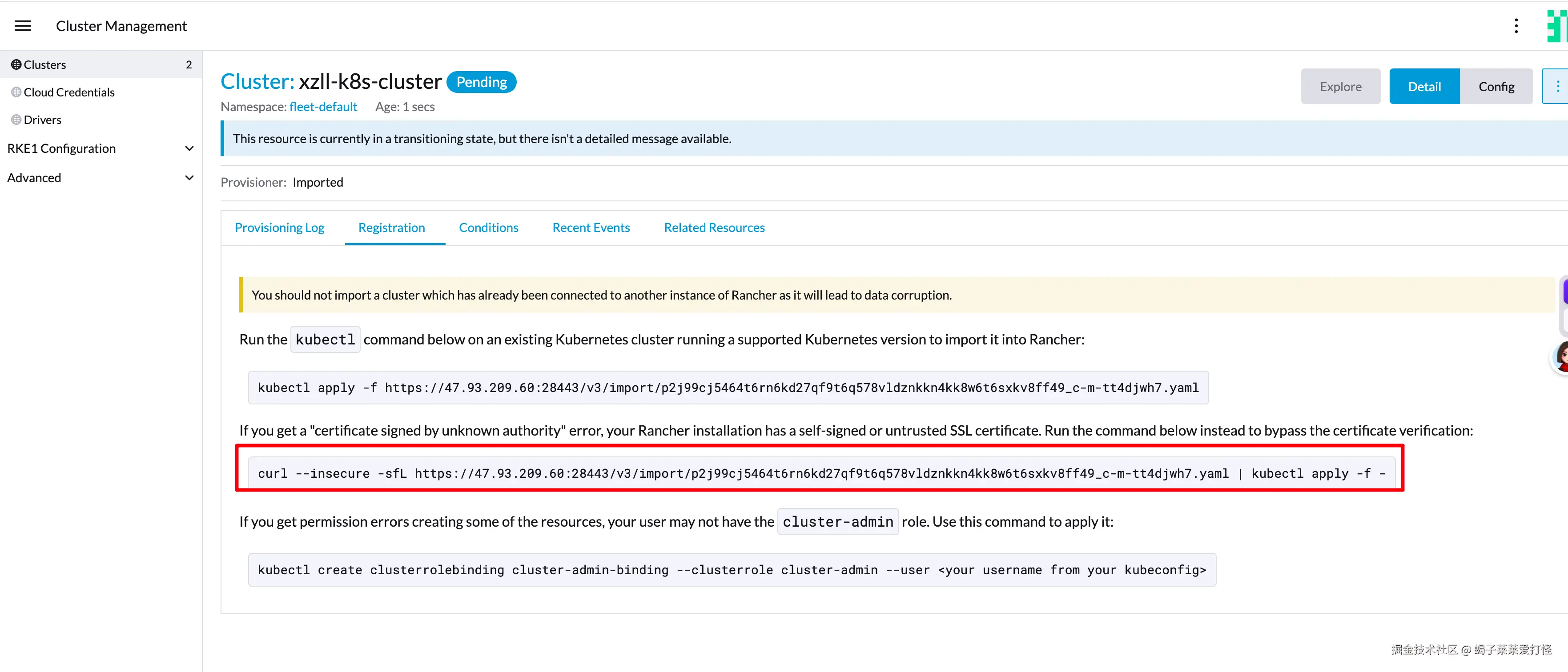

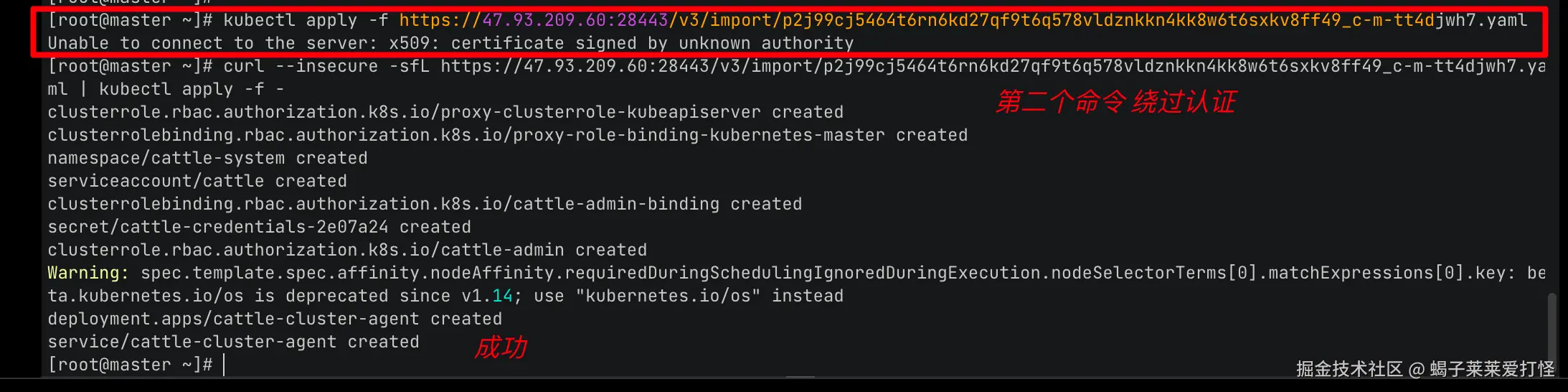

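In my case the first command failed with a certificate error, so I used the second command, which skips certificate verification:

```shell
curl --insecure -sfL https://47.93.209.60:28443/v3/import/p2j99cj5464t6rn6kd27qf9t6q578vldznkkn4kk8w6t6sxkv8ff49_c-m-tt4djwh7.yaml | kubectl apply -f -
```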
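Applying that manifest creates the Rancher agent in the `cattle-system` namespace of the k8s cluster, so progress can also be followed from the master instead of only from the Rancher UI. The filter below is a sketch under that assumption: `agent_running` is a hypothetical helper that expects the output of `kubectl -n cattle-system get pods --no-headers` on stdin, with the pod name in the first column and STATUS in the third.

```shell
# Hypothetical helper: succeed if at least one cattle-cluster-agent pod
# is in the Running state. Reads `kubectl get pods --no-headers` output on stdin.
agent_running() {
    awk '$1 ~ /^cattle-cluster-agent-/ && $3 == "Running" { found = 1 }
         END { exit !found }'
}
```

For example, `kubectl -n cattle-system get pods --no-headers | agent_running && echo "agent is up"` could be repeated until it succeeds.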

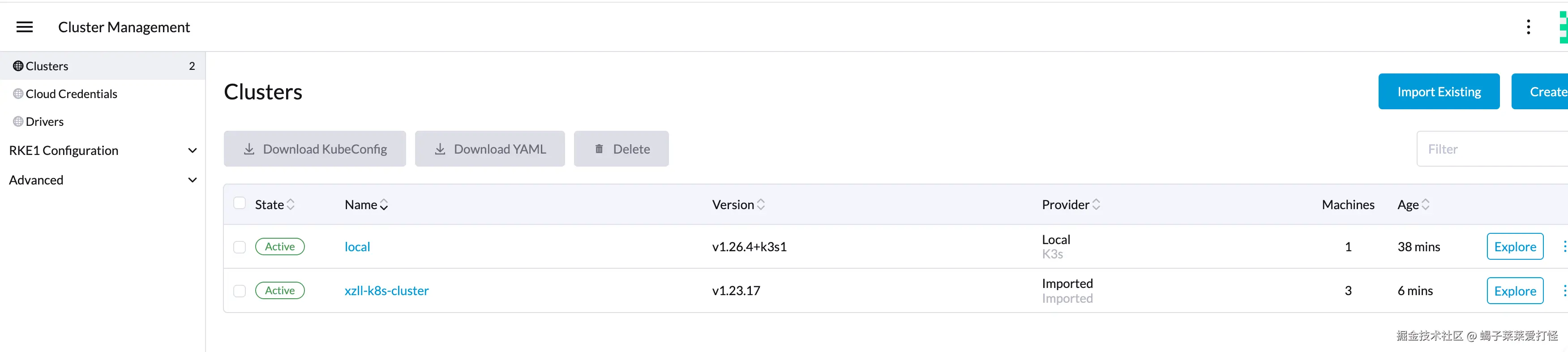

等待1-2分钟,可以看到集群状态正常:  集群节点信息:

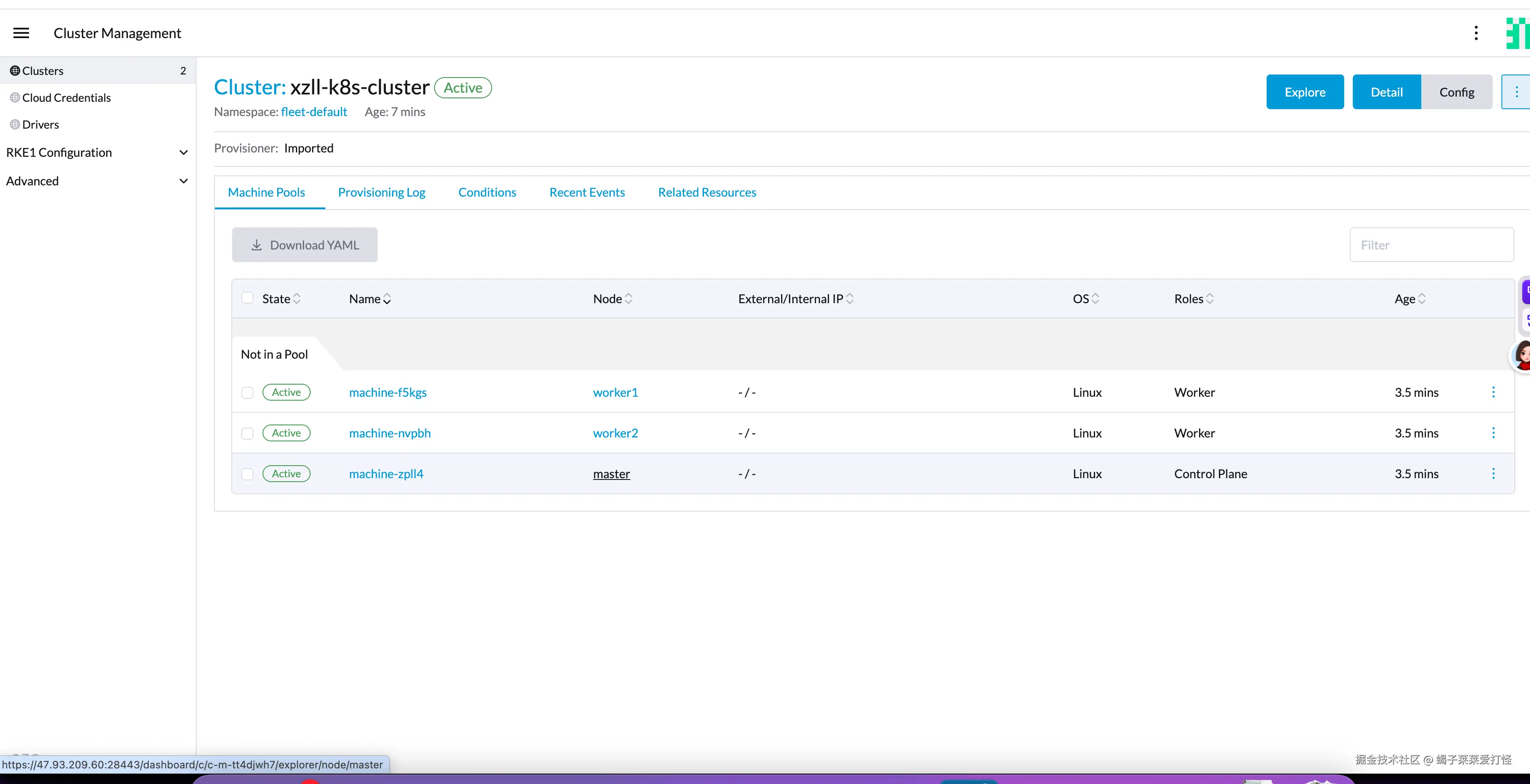

集群节点信息:

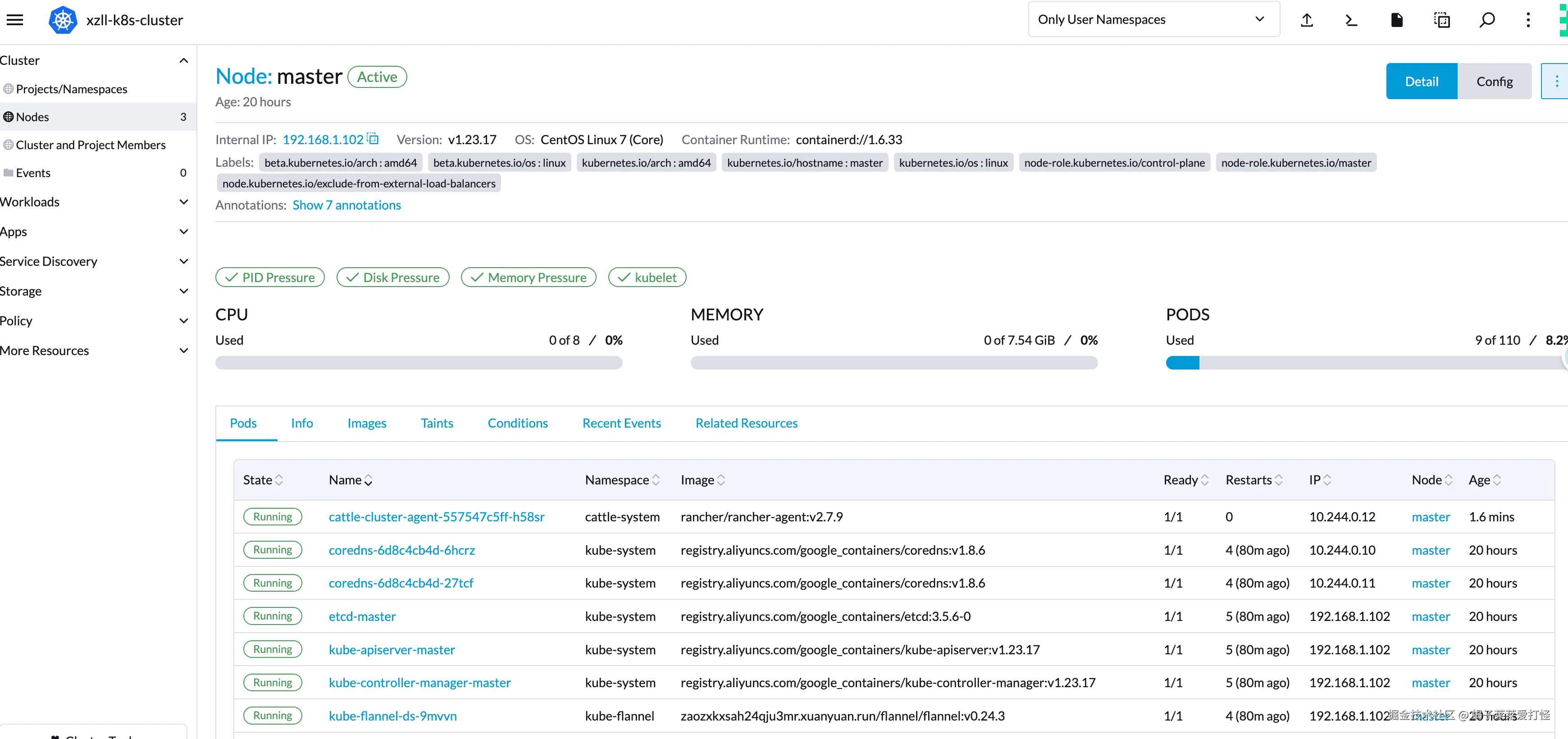

点击进去可以看到某节点详细信息:

当然rancher功能非常丰富,日后使用到再补充到这里。