Integrating Kafka with Spring Boot

Environment setup

The Maven dependencies are listed below; add any other dependencies your application needs.

```xml
<dependency>
    <groupId>org.springframework.kafka</groupId>
    <artifactId>spring-kafka</artifactId>
    <version>3.1.3</version>
    <exclusions>
        <!-- Exclude the transitive client so the version declared below is used -->
        <exclusion>
            <groupId>org.apache.kafka</groupId>
            <artifactId>kafka-clients</artifactId>
        </exclusion>
    </exclusions>
</dependency>
<dependency>
    <groupId>org.apache.kafka</groupId>
    <artifactId>kafka-clients</artifactId>
    <version>4.0.1</version> <!-- match your Kafka broker version -->
</dependency>
<dependency>
    <groupId>org.jspecify</groupId>
    <artifactId>jspecify</artifactId>
    <version>1.0.0</version>
    <scope>compile</scope>
</dependency>
<dependency>
    <groupId>org.springframework</groupId>
    <artifactId>spring-expression</artifactId>
    <version>6.1.4</version> <!-- pinned to the version that corresponds to 3.2.3 -->
    <scope>compile</scope> <!-- force inclusion at compile time and at runtime -->
</dependency>
```

application.yml

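```yaml
spring:
  kafka:
    # bootstrap-servers: localhost:9092,localhost:9094,localhost:9096
    bootstrap-servers: localhost:9092
    # Producer settings
    producer:
      # Key/value serializers (must match the consumer's deserializers)
      key-serializer: org.apache.kafka.common.serialization.StringSerializer
      value-serializer: org.apache.kafka.common.serialization.StringSerializer
      # Acknowledgement mode: all = every in-sync replica must confirm
      # (highest reliability, recommended for production)
      acks: all
      # Retry a failed send up to 3 times
      retries: 3
      # Batch size: 16 KB (batching improves throughput)
      batch-size: 16384
      # Buffer size: 32 MB
      buffer-memory: 33554432
    # Consumer settings
    consumer:
      # Key/value deserializers
      key-deserializer: org.apache.kafka.common.serialization.StringDeserializer
      value-deserializer: org.apache.kafka.common.serialization.StringDeserializer
      # Consumer group ID (required; consumers in the same group share the load)
      group-id: springboot-kafka-group
      # Where to start when no committed offset exists:
      # earliest = from the beginning; latest = only new messages
      auto-offset-reset: earliest
      # Offsets are auto-committed every 1000 ms. Set enable-auto-commit to false
      # if commits should follow successful processing rather than a timer.
      enable-auto-commit: true
      auto-commit-interval: 1000
```

Producer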
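As a quick check on the producer settings in application.yml, the sketch below converts `batch-size` and `buffer-memory` into readable units and shows how many full batches the buffer could hold at once. The class and method names are hypothetical and exist only for this illustration; the two constants are copied from the configuration.

```java
// Illustrative arithmetic only; ProducerBufferMath is a hypothetical helper,
// not part of Spring Kafka or the Kafka client.
final class ProducerBufferMath {
    static final int BATCH_SIZE_BYTES = 16_384;          // spring.kafka.producer.batch-size
    static final long BUFFER_MEMORY_BYTES = 33_554_432L; // spring.kafka.producer.buffer-memory

    /** Converts a byte count to KiB (1 KiB = 1024 bytes). */
    static long toKiB(long bytes) {
        return bytes / 1024;
    }

    /** Upper bound on the number of completely full batches the buffer can hold. */
    static long maxFullBatches(long bufferBytes, int batchBytes) {
        return bufferBytes / batchBytes;
    }

    public static void main(String[] args) {
        System.out.println("batch-size    = " + toKiB(BATCH_SIZE_BYTES) + " KiB");
        System.out.println("buffer-memory = " + (toKiB(BUFFER_MEMORY_BYTES) / 1024) + " MiB");
        System.out.println("full batches  = "
                + maxFullBatches(BUFFER_MEMORY_BYTES, BATCH_SIZE_BYTES));
    }
}
```

Running it prints 16 KiB, 32 MiB and 2048 full batches. The last figure is only an upper bound, since batches are per partition and are often sent before they fill up.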

```java
@Resource
private KafkaTemplate<String, String> kafkaTemplate;

/**
 * Sends a message asynchronously with a key. Records that share a key go to
 * the same partition, which preserves their relative order.
 *
 * @param topic   topic name
 * @param key     message key
 * @param message message payload
 */
public void sendMessageAsyncWithKey(String topic, String key, String message) {
    ProducerRecord<String, String> record = new ProducerRecord<>(topic, key, message);
    CompletableFuture<SendResult<String, String>> future = kafkaTemplate.send(record);
    // Runs once the send completes; handles failure as well as success,
    // so a failed send is no longer silently ignored.
    future.whenCompleteAsync((result, ex) -> {
        if (ex != null) {
            System.err.println("Send failed: " + ex.getMessage());
            return;
        }
        System.out.println(result.getProducerRecord());
        System.out.println(result.getRecordMetadata());
    });
}
```

Consumer
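The `group-id` comment in application.yml notes that consumers in the same group share the load. A minimal sketch of what that means: each partition is owned by exactly one consumer in the group, so a group with more members than partitions leaves some members idle. The class name, consumer names and the simple deal-in-turn strategy are assumptions made for illustration; Kafka's real assignors are more elaborate.

```java
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

// Hypothetical illustration; this is not Kafka's assignor implementation.
final class GroupAssignmentSketch {

    /** Deals partitions 0..partitionCount-1 out to the consumers in turn. */
    static Map<String, List<Integer>> assign(List<String> consumers, int partitionCount) {
        Map<String, List<Integer>> owned = new LinkedHashMap<>();
        for (String consumer : consumers) {
            owned.put(consumer, new ArrayList<>());
        }
        for (int partition = 0; partition < partitionCount; partition++) {
            // Every partition gets exactly one owner within the group.
            String owner = consumers.get(partition % consumers.size());
            owned.get(owner).add(partition);
        }
        return owned;
    }

    public static void main(String[] args) {
        // Two consumers, three partitions: one consumer handles two partitions.
        System.out.println(assign(List.of("consumer-a", "consumer-b"), 3));
        // Four consumers, three partitions: the fourth consumer stays idle.
        System.out.println(assign(
                List.of("consumer-a", "consumer-b", "consumer-c", "consumer-d"), 3));
    }
}
```

The first call prints `{consumer-a=[0, 2], consumer-b=[1]}` and the second prints `{consumer-a=[0], consumer-b=[1], consumer-c=[2], consumer-d=[]}`. In practice this means adding listeners beyond the topic's partition count does not increase throughput.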

java

@KafkaListener( topics = "test-springboot-topic",

groupId = "springboot-kafka-group"

)

public void consumeMessage(ConsumerRecord<String, String> record) {

System.out.println("消费到消息:" + record.value() );

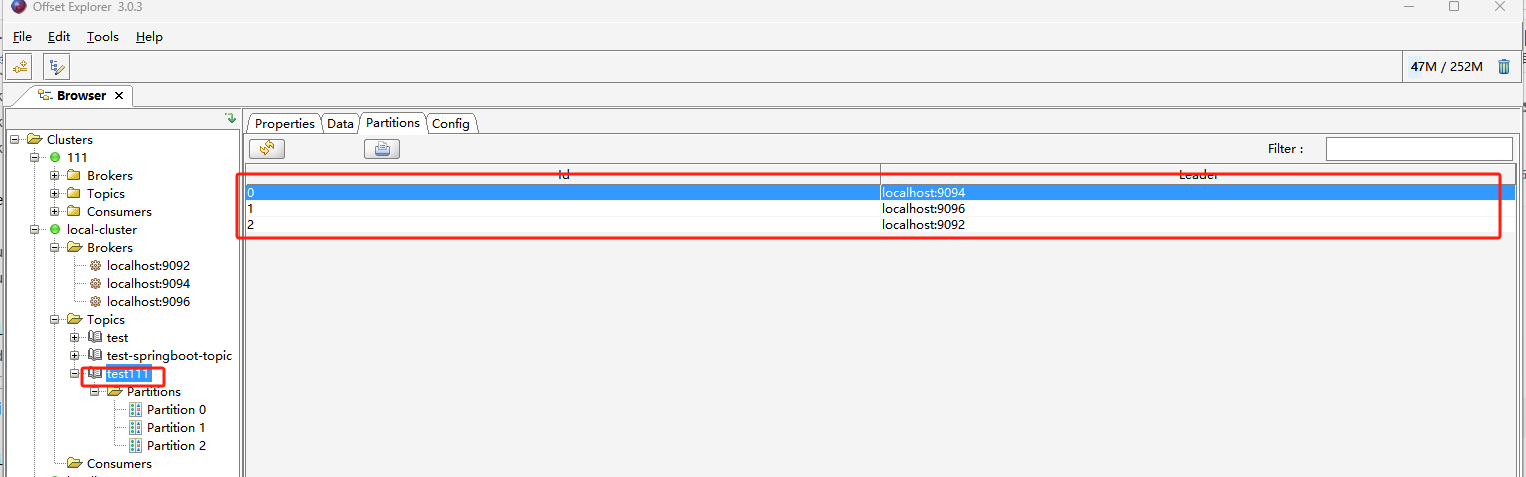

}创建topic

```java
import org.apache.kafka.clients.admin.Admin;
import org.apache.kafka.clients.admin.AdminClientConfig;
import org.apache.kafka.clients.admin.CreateTopicsResult;
import org.apache.kafka.clients.admin.NewTopic;

import java.util.Collections;
import java.util.HashMap;
import java.util.Map;
import java.util.concurrent.ExecutionException;

public class AdminTopicTest {
    public static void main(String[] args) throws ExecutionException, InterruptedException {
        Map<String, Object> configMap = new HashMap<>();
        configMap.put(AdminClientConfig.BOOTSTRAP_SERVERS_CONFIG,
                "127.0.0.1:9092,127.0.0.1:9094,127.0.0.1:9096");
        // try-with-resources closes the client even if topic creation fails
        try (Admin admin = Admin.create(configMap)) {
            // Topic name, partition count, replication factor
            NewTopic topic = new NewTopic("test111", 3, (short) 3);
            CreateTopicsResult result = admin.createTopics(Collections.singleton(topic));
            // createTopics is asynchronous; wait so that any failure surfaces here
            result.all().get();
        }
    }
}
```
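The topic above is created with three partitions, and the producer's Javadoc notes that records sharing a key land in the same partition. The sketch below illustrates why: the partition is derived from a hash of the key modulo the partition count. Kafka's default partitioner uses a murmur2 hash of the serialized key; `Arrays.hashCode` is used here as a stand-in, so the partition numbers will not match what a real producer picks. The class name and sample keys are hypothetical.

```java
import java.nio.charset.StandardCharsets;
import java.util.Arrays;

// Hypothetical illustration of key-based partitioning; the hash function is
// a stand-in (assumption) for the murmur2 hash used by the Kafka client.
final class KeyPartitionSketch {

    /** Maps a key to a partition in the range [0, numPartitions). */
    static int partitionFor(String key, int numPartitions) {
        int hash = Arrays.hashCode(key.getBytes(StandardCharsets.UTF_8));
        // Clear the sign bit so the modulo result is never negative.
        return (hash & 0x7fffffff) % numPartitions;
    }

    public static void main(String[] args) {
        int partitions = 3; // same partition count as the topic created above
        for (String key : new String[] {"order-1", "order-2", "order-1"}) {
            System.out.println(key + " -> partition " + partitionFor(key, partitions));
        }
    }
}
```

Both `order-1` lines print the same partition, which is what keeps records for one key in order. Because the result depends on the partition count, adding partitions to an existing topic can move a key to a different partition.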