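Contents

- [1 Setting Up OpenClaw](#1-setting-up-openclaw)
  - [1.1 Prerequisites](#11-prerequisites)
    - [Model providers and API keys](#model-providers-and-api-keys)
    - [Channel provider](#channel-provider)
    - [System configuration](#system-configuration)
  - [1.2 Installation](#12-installation)
    - [Installing OpenClaw with npm](#installing-openclaw-with-npm)
    - [Allowing cross-origin access from the LAN](#allowing-cross-origin-access-from-the-lan)
    - [Connecting to Feishu](#connecting-to-feishu)
- [2 Model Configuration and Switching](#2-model-configuration-and-switching)
- [3 Common OpenClaw Commands](#3-common-openclaw-commands)
- [4 Error Log](#4-error-log)
  - [API rate limit reached. Please try again later.](#api-rate-limit-reached-please-try-again-later)
- [5 References](#5-references)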

Overview

- Install OpenClaw ("the lobster") on my Orange Pi and ask it to do things for me from Feishu
- Model switching
- End-to-end testing

## 1 Setting Up OpenClaw

### 1.1 Prerequisites

#### Model providers and API keys

| Item / Provider | Bailian (Qwen) | Zhipu | Kimi |
|---|---|---|---|
| Open platform | https://bailian.console.aliyun.com | https://open.bigmodel.cn | https://platform.moonshot.cn |
| Models | qwen-turbo, qwen3.5-plus, ... | glm-5 | kimi-k2.5 |
| Base URL | https://dashscope.aliyuncs.com/compatible-mode/v1 | https://open.bigmodel.cn/api/paas/v4 | https://api.moonshot.cn/v1 |
| API keys | -- | -- | -- |
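Before wiring a key into OpenClaw, it can help to confirm that a base URL, API key and model ID work together. All three base URLs above are OpenAI-compatible (the configuration in section 2 uses `api: 'openai-completions'` for each), so a minimal sketch can POST to `<base URL>/chat/completions` with a Bearer token. The helper names, the `ping` prompt and the key variable names are illustrative assumptions.

```shell
# Hypothetical helpers for a one-off smoke test of an OpenAI-compatible endpoint.

# Joins a base URL (with or without a trailing slash) and the chat completions path.
chat_url() {
  printf '%s/chat/completions' "${1%/}"
}

# Builds a minimal request body for the given model ID.
chat_payload() {
  printf '{"model": "%s", "messages": [{"role": "user", "content": "ping"}], "max_tokens": 16}' "$1"
}

# Sends the request and prints the JSON response.
# curl -f makes HTTP errors (e.g. 401 bad key, 429 rate limit) return a non-zero status.
chat_smoke_test() {
  local base_url="$1" api_key="$2" model="$3"
  curl -fsS -X POST "$(chat_url "$base_url")" \
    -H "Authorization: Bearer ${api_key}" \
    -H "Content-Type: application/json" \
    -d "$(chat_payload "$model")"
}

# Usage (MOONSHOT_API_KEY and ZHIPU_API_KEY are placeholder variables):
#   chat_smoke_test https://api.moonshot.cn/v1 "$MOONSHOT_API_KEY" kimi-k2.5
#   chat_smoke_test https://open.bigmodel.cn/api/paas/v4 "$ZHIPU_API_KEY" glm-5
```

If this call succeeds but OpenClaw still fails with the same provider, the problem is more likely in the configuration file than in the key itself.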

#### Channel provider

| Item / Provider | Feishu |
|---|---|
| Open platform | https://open.feishu.cn |
| App ID | Omitted (usually `cli_xxx`; obtained after creating an app on the platform) |
| App secret | Omitted |
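To check the app ID and secret before handing them to the lobster, you can request a tenant access token from Feishu's `auth/v3/tenant_access_token/internal` endpoint; a response containing `"code": 0` means Feishu accepted the credentials. This is a minimal sketch with illustrative helper names, and the response check is a simple pattern match rather than a real JSON parser.

```shell
# Hypothetical helpers for checking Feishu app credentials (names are illustrative).

# Succeeds if a Feishu open-platform JSON response reports "code": 0.
# Plain pattern match, not a JSON parser.
feishu_response_ok() {
  printf '%s' "$1" | grep -Eq '"code"[[:space:]]*:[[:space:]]*0[[:space:]]*[,}]'
}

# Requests a tenant access token; success means Feishu accepted the app ID and secret.
# Assumes neither value contains double quotes or backslashes (they are pasted into JSON as-is).
feishu_token_check() {
  local app_id="$1" app_secret="$2" resp
  resp=$(curl -sS -X POST "https://open.feishu.cn/open-apis/auth/v3/tenant_access_token/internal" \
    -H "Content-Type: application/json; charset=utf-8" \
    -d "{\"app_id\": \"${app_id}\", \"app_secret\": \"${app_secret}\"}") || return 1
  if feishu_response_ok "$resp"; then
    echo "Feishu accepted the app credentials"
  else
    echo "Feishu rejected the request: $resp" >&2
    return 1
  fi
}

# Usage (FEISHU_APP_SECRET is a placeholder variable):
#   feishu_token_check cli_xxx "$FEISHU_APP_SECRET"
```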

#### System configuration

Orange Pi 5B (8-core CPU, 8 GB RAM, 128 GB TF card), Ubuntu 22.04

### 1.2 Installation

#### Installing OpenClaw with npm

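Note: skip channel setup during installation for now (the installer currently has a small bug that triggers a duplicate-configuration error). Once the system is installed, set up channels from the chat UI, where the lobster can configure them automatically.

Reference: https://docs.openclaw.ai/install#npm-pnpm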

1. Remove any existing Node.js installations (optional but recommended):

```shell
sudo apt purge nodejs npm -y
sudo apt autoremove -y
```

2. Install NVM (Node Version Manager):

```shell
sudo apt update
sudo apt install curl -y
curl -o- https://raw.githubusercontent.com/nvm-sh/nvm/v0.39.7/install.sh | bash
```

3. Activate NVM in your current session:

```shell
export NVM_DIR="$HOME/.nvm"
[ -s "$NVM_DIR/nvm.sh" ] && \. "$NVM_DIR/nvm.sh"  # This loads nvm
[ -s "$NVM_DIR/bash_completion" ] && \. "$NVM_DIR/bash_completion"  # This loads nvm bash_completion
```

4. Install Node.js v22 (or the latest version):

```shell
# Install the latest release
nvm install node

# Or, to install a specific version such as 22:
nvm install 22
```

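5. Install OpenClaw globally, then run onboarding and install the gateway service:

```shell
npm install -g openclaw@latest
openclaw onboard --install-daemon
openclaw gateway install
```

Once installation finishes, the admin console is available at `http://{ip}:18789/#token=xxx`.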
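A quick post-install check, sketched with hypothetical helper names: the first function tests that a `node -v` string reports at least v22 (the version step 4 installs), and the second builds the console's origin from the device's address, assuming the default port 18789 used above. That origin is the value to list under `gateway.controlUi.allowedOrigins` when opening the console from another LAN machine.

```shell
# Hypothetical post-install helpers (names are illustrative, not part of OpenClaw).

# Succeeds if a version string as printed by `node -v` (e.g. v22.11.0)
# has a major version >= the given minimum (default 22).
node_major_at_least() {
  local version="${1#v}" min="${2:-22}"
  local major="${version%%.*}"
  # A non-numeric major makes `[` fail with an error, which is silenced and treated as "no".
  [ "$major" -ge "$min" ] 2>/dev/null
}

# Builds the browser origin for the admin console on a host (default port 18789).
console_origin() {
  printf 'http://%s:%s' "$1" "${2:-18789}"
}

# Usage on the Orange Pi (assumes `hostname -I` lists the LAN address first):
#   node_major_at_least "$(node -v)" 22 || echo "Node.js is older than v22: $(node -v)"
#   console_origin "$(hostname -I | awk '{print $1}')"
```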

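#### Allowing cross-origin access from the LAN

To open the admin console from another machine on the LAN, add the console's origin to `gateway.controlUi` in `~/.openclaw/openclaw.json` (here `172.16.1.20` is the Orange Pi's LAN address):

```json5
"controlUi": {
  "allowedOrigins": [
    "http://172.16.1.20:18789",
    "http://localhost:18789",
    "http://127.0.0.1:18789"
  ],
  "allowInsecureAuth": true,
  "dangerouslyDisableDeviceAuth": true
}
```

#### Connecting to Feishu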

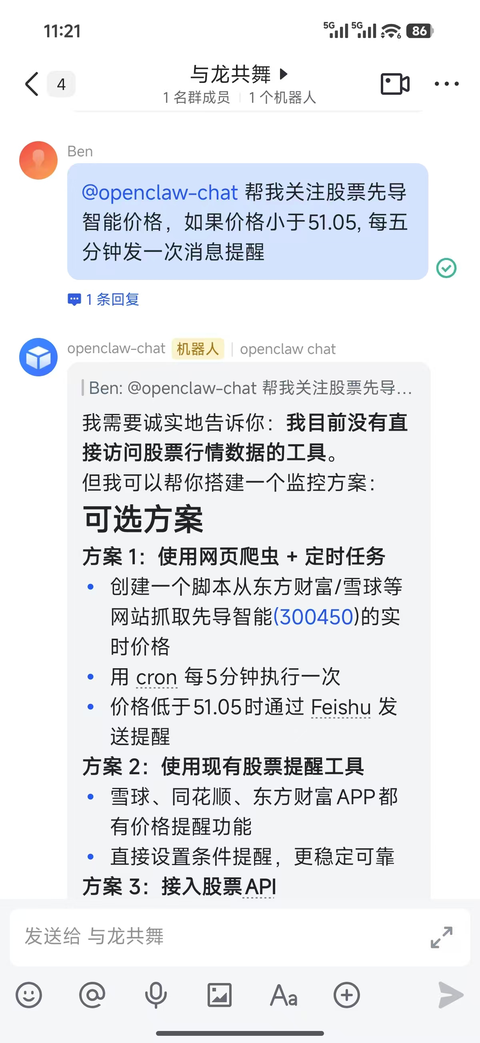

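You can connect the lobster to your Feishu group simply by chatting with it in the admin console's chat page: it installs the Feishu extension automatically and asks you for the app ID and the other details it needs to complete the setup.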

Steps:

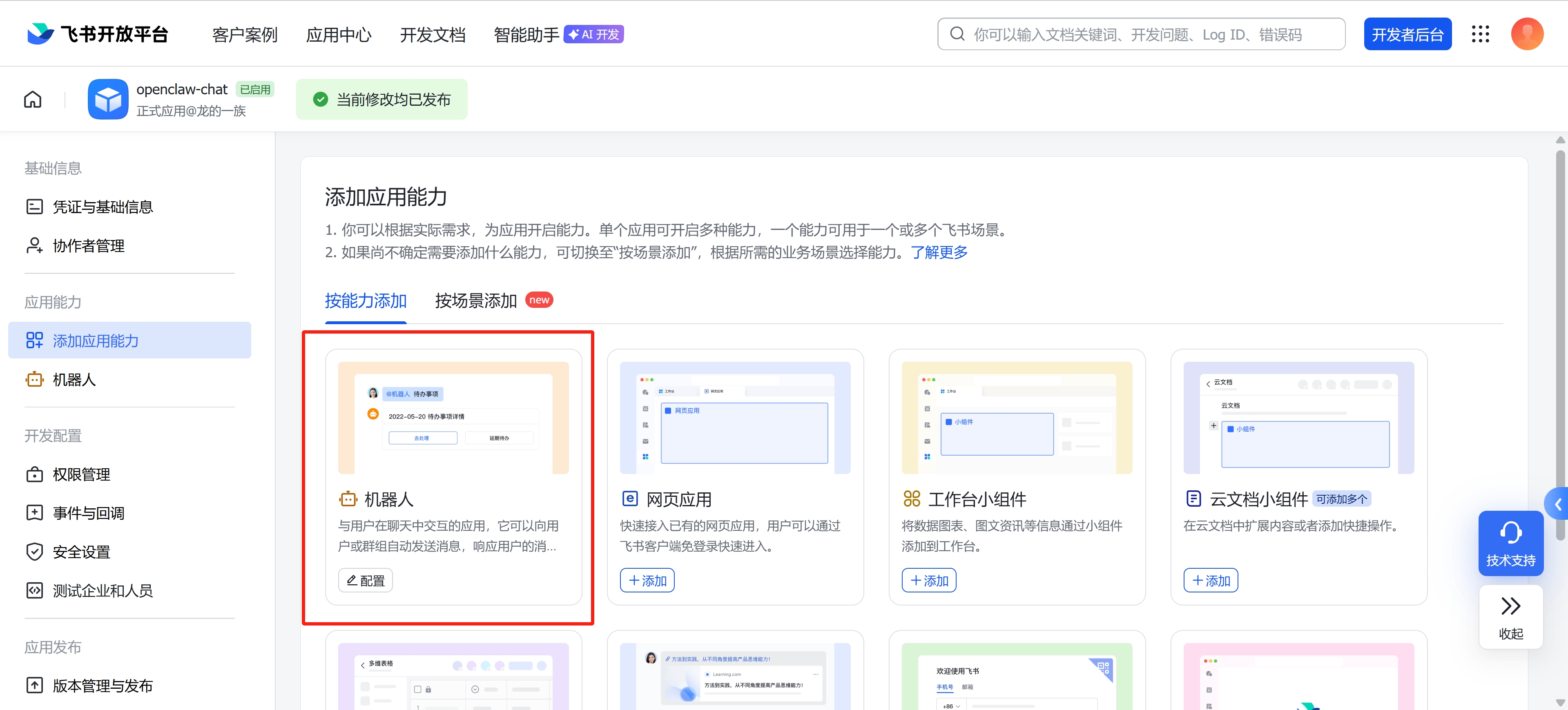

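- First create an app named `openclaw-chat` on the Feishu open platform, add the bot capability, and grant the required permissions.

- For event subscription, choose the long-connection mode (this corresponds to `connectionMode: 'websocket'` in the configuration file) and add the relevant events.

- Create a group in Feishu, then add the `openclaw-chat` bot under group settings > Add group bot.

- In the admin console, ask the lobster to configure Feishu.

- Result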

## 2 Model Configuration and Switching

- Run:

```shell
openclaw models set <model ID>
```

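Note: sometimes existing sessions keep using the old model after this change, even after restarting the gateway. In that case, reboot the machine and check again.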
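The switch-and-restart routine can be wrapped in a small function, sketched under assumptions: the function name is hypothetical, and it assumes `openclaw config get` accepts the `agents.defaults.model.primary` and `agents.list` paths (section 3 only shows `channels.feishu.*` paths). Printing `agents.list` is meant to surface per-agent overrides: in the configuration below, the `main` agent sets its own `model: 'zhipu/glm-5'` while the default primary is `moonshot/kimi-k2.5`. If a per-agent model takes precedence over the default, which is plausible but not confirmed here, that could be one reason a session keeps its old model.

```shell
# Hypothetical helper: switch the default model, restart the gateway, then print
# the default model and the per-agent list so a pinned agent model is easy to spot.
# Assumes `openclaw config get` accepts these dot paths (not verified).
switch_model() {
  local ref="$1"   # model ID, e.g. moonshot/kimi-k2.5
  if [ -z "$ref" ]; then
    echo "usage: switch_model <model ID>" >&2
    return 2
  fi
  openclaw models set "$ref" || return 1
  openclaw gateway restart || return 1
  echo "default primary: $(openclaw config get agents.defaults.model.primary)"
  openclaw config get agents.list
}

# Usage:
#   switch_model moonshot/kimi-k2.5
```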

- The main configuration file is `~/.openclaw/openclaw.json`. My complete configuration, for reference:

```json5
{
  meta: {
    lastTouchedVersion: '2026.3.11',
    lastTouchedAt: '2026-03-19T03:57:31.099Z',
  },
  wizard: {
    lastRunAt: '2026-03-13T02:48:28.037Z',
    lastRunVersion: '2026.3.8',
    lastRunCommand: 'doctor',
    lastRunMode: 'local',
  },
  update: {
    checkOnStart: false,
    auto: {
      enabled: false,
    },
  },
  auth: {
    profiles: {
      'qwen-portal:default': {
        provider: 'qwen-portal',
        mode: 'oauth',
      },
    },
  },
  models: {
    providers: {
      'qwen-portal': {
        baseUrl: 'https://portal.qwen.ai/v1',
        apiKey: 'OPENCLAW_REDACTED',
        api: 'openai-completions',
        models: [
          {
            id: 'coder-model',
            name: 'Qwen Coder',
            reasoning: false,
            input: ['text'],
            cost: { input: 0, output: 0, cacheRead: 0, cacheWrite: 0 },
            contextWindow: 128000,
            maxTokens: 8192,
          },
          {
            id: 'vision-model',
            name: 'Qwen Vision',
            reasoning: false,
            input: ['text', 'image'],
            cost: { input: 0, output: 0, cacheRead: 0, cacheWrite: 0 },
            contextWindow: 128000,
            maxTokens: 8192,
          },
        ],
      },
      bailian: {
        baseUrl: 'https://dashscope.aliyuncs.com/compatible-mode/v1',
        apiKey: 'OPENCLAW_REDACTED',
        api: 'openai-completions',
        models: [
          {
            id: 'qwen-turbo',
            name: 'qwen-turbo',
            api: 'openai-completions',
            reasoning: false,
            input: ['text', 'image'],
            cost: { input: 0, output: 0, cacheRead: 0, cacheWrite: 0 },
            contextWindow: 1000000,
            maxTokens: 65536,
          },
          {
            id: 'qwen3-coder-next',
            name: 'qwen3-coder-next',
            api: 'openai-completions',
            reasoning: false,
            input: ['text'],
            cost: { input: 0, output: 0, cacheRead: 0, cacheWrite: 0 },
            contextWindow: 262144,
            maxTokens: 65536,
          },
        ],
      },
      moonshot: {
        baseUrl: 'https://api.moonshot.cn/v1',
        apiKey: 'OPENCLAW_REDACTED',
        api: 'openai-completions',
        models: [
          {
            id: 'kimi-k2.5',
            name: 'Kimi K2.5',
            reasoning: false,
            input: ['text'],
            cost: { input: 0, output: 0, cacheRead: 0, cacheWrite: 0 },
            contextWindow: 256000,
            maxTokens: 8192,
          },
          {
            id: 'kimi-k2-thinking',
            name: 'Kimi K2 Thinking',
            reasoning: true,
            input: ['text'],
            cost: { input: 0, output: 0, cacheRead: 0, cacheWrite: 0 },
            contextWindow: 256000,
            maxTokens: 8192,
          },
        ],
      },
      zhipu: {
        baseUrl: 'https://open.bigmodel.cn/api/paas/v4',
        apiKey: 'OPENCLAW_REDACTED',
        api: 'openai-completions',
        models: [
          {
            id: 'glm-5',
            name: 'GLM 5.0',
            api: 'openai-completions',
            reasoning: true,
            input: ['text'],
            cost: { input: 0, output: 0, cacheRead: 0, cacheWrite: 0 },
            contextWindow: 128000,
            maxTokens: 8192,
          },
          {
            id: 'glm-5-flash',
            name: 'GLM 5.0 Flash',
            api: 'openai-completions',
            reasoning: false,
            input: ['text'],
            cost: { input: 0, output: 0, cacheRead: 0, cacheWrite: 0 },
            contextWindow: 128000,
            maxTokens: 8192,
          },
        ],
      },
    },
  },
  agents: {
    defaults: {
      model: {
        primary: 'moonshot/kimi-k2.5',
      },
      models: {
        'qwen-portal/coder-model': { alias: 'qwen' },
        'qwen-portal/vision-model': {},
        'bailian/qwen-turbo': {},
        'moonshot/kimi-k2.5': { alias: 'kimi' },
        'moonshot/kimi-k2-thinking': {},
        'zhipu/glm-5': {},
        'zhipu/glm-5-flash': {},
      },
      workspace: '/root/.openclaw/workspace',
      compaction: {
        mode: 'safeguard',
      },
    },
    list: [
      {
        id: 'main',
        model: 'zhipu/glm-5',
      },
    ],
  },
  tools: {
    profile: 'coding',
    web: {
      search: {
        enabled: true,
        provider: 'kimi',
        kimi: {
          apiKey: 'OPENCLAW_REDACTED',
        },
      },
    },
  },
  bindings: [
    {
      agentId: 'main',
      match: {
        channel: 'feishu',
        accountId: 'default',
      },
    },
  ],
  commands: {
    native: 'auto',
    nativeSkills: 'auto',
    restart: true,
    ownerDisplay: 'raw',
  },
  session: {
    dmScope: 'per-channel-peer',
  },
  hooks: {
    internal: {
      enabled: true,
      entries: {
        'command-logger': {
          enabled: true,
        },
        'boot-md': {
          enabled: true,
        },
      },
    },
  },
  channels: {
    feishu: {
      enabled: true,
      appId: 'cli_a93bf33765f81bc0',
      appSecret: 'OPENCLAW_REDACTED',
      connectionMode: 'websocket',
      domain: 'feishu',
      groupPolicy: 'open',
    },
  },
  gateway: {
    port: 18789,
    mode: 'local',
    bind: 'lan',
    controlUi: {
      allowedOrigins: [
        'http://172.16.1.20:18789',
        'http://localhost:18789',
        'http://127.0.0.1:18789',
      ],
      allowInsecureAuth: true,
      dangerouslyDisableDeviceAuth: true,
    },
    auth: {
      mode: 'token',
      token: 'OPENCLAW_REDACTED',
    },
    tailscale: {
      mode: 'off',
      resetOnExit: false,
    },
    remote: {
      url: 'ws://127.0.0.1:18789',
    },
    nodes: {
      denyCommands: [
        'camera.snap',
        'camera.clip',
        'screen.record',
        'contacts.add',
        'calendar.add',
        'reminders.add',
        'sms.send',
      ],
    },
  },
  plugins: {
    entries: {
      'qwen-portal-auth': {
        enabled: true,
      },
      feishu: {
        enabled: true,
      },
    },
  },
}
```
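This file holds the API keys and the gateway token, and it is JSON5-style (unquoted keys, single quotes, trailing commas), so strict JSON tools such as `jq` will not parse it and a typo may only surface when the gateway reloads. Keeping a copy before editing by hand is cheap insurance. A minimal sketch with a hypothetical helper name:

```shell
# Hypothetical helper: copy the OpenClaw config to a timestamped backup and print the backup path.
# Defaults to ~/.openclaw/openclaw.json; pass another path to back up a different file.
backup_openclaw_config() {
  local config="${1:-$HOME/.openclaw/openclaw.json}" backup
  if [ ! -f "$config" ]; then
    echo "config not found: $config" >&2
    return 1
  fi
  backup="${config}.bak.$(date +%Y%m%d%H%M%S)"
  cp -p "$config" "$backup" && printf '%s\n' "$backup"
}

# Usage: back up, edit, then use the commands from section 3 to check and reload.
#   backup_openclaw_config
#   nano ~/.openclaw/openclaw.json
#   openclaw doctor && openclaw gateway restart
```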

## 3 Common OpenClaw Commands

```shell
# Run OpenClaw's doctor (diagnostics)
openclaw doctor

# Configuration entry point (gateway section)
openclaw configure --section gateway

# Restart the gateway
openclaw gateway restart

# Set up model provider authentication
openclaw models auth login --provider qwen-portal

# Change the model
openclaw models set <model ID>

# List sessions
openclaw sessions

# Show models
openclaw models
openclaw models list

# Channel / Feishu
openclaw channels logs  # Watch logs in real time; check whether a "not in groupAllowFrom" error appears
openclaw config get channels.feishu.appId  # Get the Feishu app ID
openclaw config get channels.feishu.appSecret
openclaw config get channels.feishu.groupPolicy
openclaw config get channels.feishu.groupAllowFrom
```

## 4 Error Log

### API rate limit reached. Please try again later.

A: The model provider's API call quota has been used up.
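If the error is a short-term rate limit rather than an exhausted quota, retrying after a growing delay can help when calling a provider directly (for example during a smoke test). An exhausted quota will not recover this way; switching to another provider's model with `openclaw models set` is the alternative. A generic sketch of exponential backoff; the function name and the Moonshot `/v1/models` usage line are illustrative assumptions.

```shell
# Hypothetical helper: run a command, retrying with exponential backoff when it fails.
# Usage: retry_with_backoff <max_attempts> <initial_delay_seconds> <command> [args...]
retry_with_backoff() {
  local max_attempts="$1" delay="$2" attempt=1
  shift 2
  while true; do
    "$@" && return 0
    if [ "$attempt" -ge "$max_attempts" ]; then
      echo "giving up after $attempt attempts: $*" >&2
      return 1
    fi
    echo "attempt $attempt failed; retrying in ${delay}s" >&2
    sleep "$delay"
    attempt=$((attempt + 1))
    delay=$((delay * 2))   # double the wait after each failure
  done
}

# Usage (MOONSHOT_API_KEY is a placeholder): up to 4 attempts, waiting 5s, 10s, then 20s.
#   retry_with_backoff 4 5 curl -fsS https://api.moonshot.cn/v1/models -H "Authorization: Bearer $MOONSHOT_API_KEY"
```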