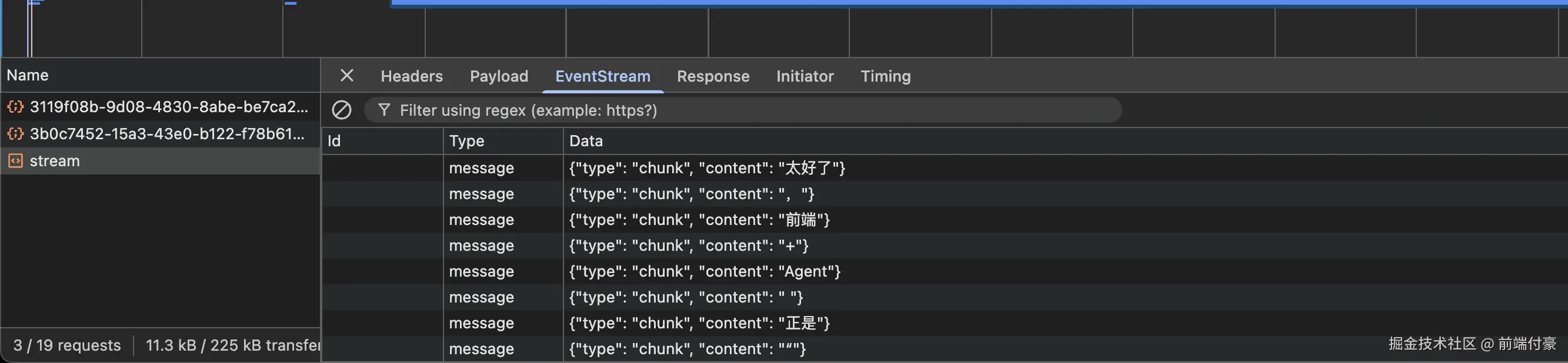

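What's new:

- The AI reply is no longer returned all at once

- The web page displays it chunk by chunk

- The experience is similar to other AI tools we use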

I. server/app.py

1) Add imports

```python
import json

from fastapi.responses import StreamingResponse
```

2) Add a function that builds the final messages

```python
# Needs `from typing import List` if app.py does not already import it.
def build_final_messages(messages: List[dict], session_id: str = ""):
    # Load the memories stored for this session and render them as prompt text.
    session_memories = get_session_memories(session_id or "")
    memory_prompt = build_memory_prompt(session_memories)

    final_messages = []
    for item in messages:
        if item["role"] == "system":
            # Append the memory prompt to the system message.
            final_messages.append({
                "role": "system",
                "content": f'{item["content"]}\n\n{memory_prompt}'.strip()
            })
        else:
            final_messages.append(item)

    return final_messages, session_memories
```

3) Update /api/chat
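Before changing the endpoint, it helps to see what `build_final_messages` returns. The sketch below copies the helper and pairs it with stand-in versions of `get_session_memories` and `build_memory_prompt`. The stubs, the in-memory `FAKE_STORE`, and the prompt wording are assumptions for illustration only, not the project's real implementations.

```python
from typing import Dict, List, Tuple

# Hypothetical in-memory store standing in for the project's real memory storage.
FAKE_STORE: Dict[str, List[str]] = {"s1": ["User is a front-end engineer"]}


def get_session_memories(session_id: str) -> List[str]:
    # Stub: the real helper belongs to the project and may read from elsewhere.
    return FAKE_STORE.get(session_id, [])


def build_memory_prompt(memories: List[str]) -> str:
    # Stub with an assumed format: one memory per line under a short header.
    if not memories:
        return ""
    return "Known facts about the user:\n" + "\n".join(f"- {m}" for m in memories)


def build_final_messages(
    messages: List[dict], session_id: str = ""
) -> Tuple[List[dict], List[str]]:
    session_memories = get_session_memories(session_id or "")
    memory_prompt = build_memory_prompt(session_memories)
    final_messages = []
    for item in messages:
        if item["role"] == "system":
            # Only system messages receive the memory prompt.
            final_messages.append({
                "role": "system",
                "content": f'{item["content"]}\n\n{memory_prompt}'.strip(),
            })
        else:
            final_messages.append(item)
    return final_messages, session_memories


history = [
    {"role": "system", "content": "You are a helpful assistant."},
    {"role": "user", "content": "Hi"},
]
final, memories = build_final_messages(history, "s1")
print(final[0]["content"])
```

Memories are injected only when the list contains a `system` message; every other message passes through unchanged. The `/api/chat` endpoint then only needs to call the helper before sending the messages to the model.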

less

@app.post("/api/chat", response_model=ChatResponse)

def chat(req: ChatRequest):

messages = [m.model_dump() for m in req.messages]

final_messages, session_memories = build_final_messages(messages, req.session_id or "")

print("session_memories:", session_memories)

try:

completion = client.chat.completions.create(

model=MODEL_NAME,

messages=final_messages,

temperature=0.7,

)

reply = completion.choices[0].message.content or ""

except Exception as e:

print("chat error:", e)

reply = "AI服务异常,请稍后再试"

if req.session_id:

latest_user_text = ""

for item in reversed(messages):

if item["role"] == "user":

latest_user_text = item["content"]

break

print("session_id:", req.session_id)

print("latest_user_text:", latest_user_text)

if latest_user_text:

new_memories = extract_user_memories(latest_user_text)

print("new_memories:", new_memories)

add_session_memories(req.session_id, new_memories)

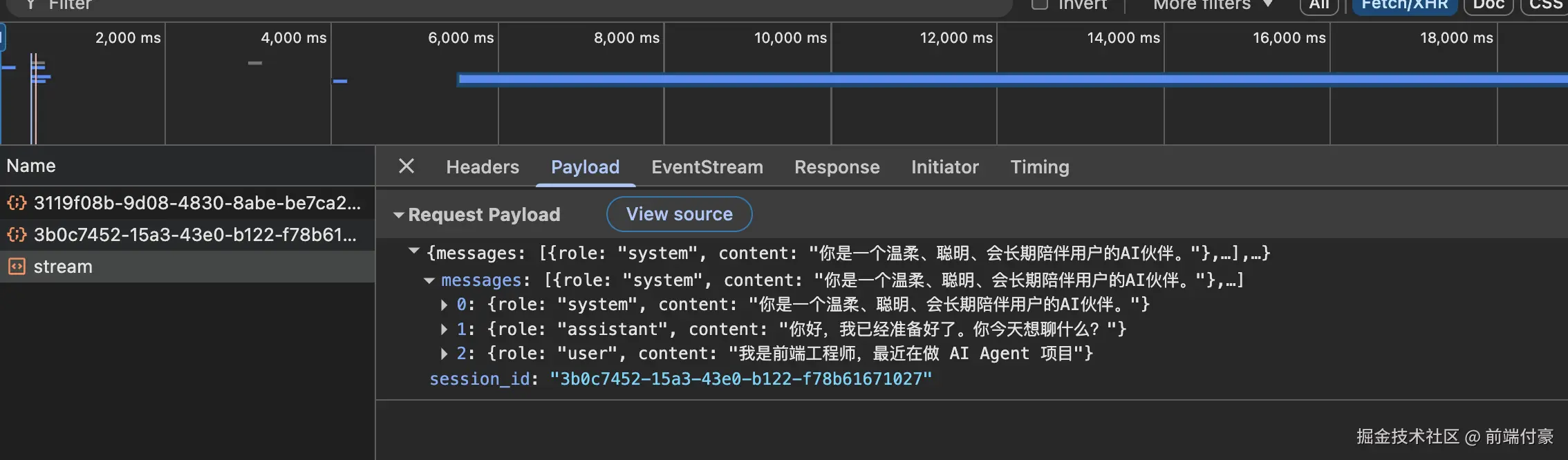

return ChatResponse(reply=reply)4)新增流式接口
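The endpoint in this step is built around a generator that yields one Server-Sent Events frame per piece of text. Stripped of FastAPI and the model client, the pattern looks roughly like the sketch below. `fake_stream` and `sse_frame` are hypothetical names: the first stands in for the deltas of a streaming model call, the second for the inline `json.dumps` formatting.

```python
import json
from typing import Iterable, Iterator


def sse_frame(event_type: str, content: str) -> str:
    # One frame: a "data: " line holding JSON, terminated by a blank line.
    payload = json.dumps({"type": event_type, "content": content}, ensure_ascii=False)
    return f"data: {payload}\n\n"


def fake_stream() -> Iterable[str]:
    # Hypothetical stand-in for the text deltas a streaming model call produces.
    return ["Hel", "lo", "", " there"]


def generate(deltas: Iterable[str]) -> Iterator[str]:
    full_reply = ""
    try:
        for delta in deltas:
            if delta:  # skip empty deltas
                full_reply += delta
                yield sse_frame("chunk", delta)
    except Exception:
        yield sse_frame("error", "The AI service is unavailable. Please try again later.")
        return
    # The final frame carries the whole reply and tells the client the stream ended.
    yield sse_frame("done", full_reply)


frames = list(generate(fake_stream()))
for frame in frames:
    print(repr(frame))
```

In the real endpoint the generator is passed to `StreamingResponse` with `media_type="text/event-stream"`, so each yielded string is sent as it is produced.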

python

@app.post("/api/chat/stream")

def chat_stream(req: ChatRequest):

messages = [m.model_dump() for m in req.messages]

final_messages, session_memories = build_final_messages(messages, req.session_id or "")

print("stream session_memories:", session_memories)

def generate():

full_reply = ""

try:

stream = client.chat.completions.create(

model=MODEL_NAME,

messages=final_messages,

temperature=0.7,

stream=True,

)

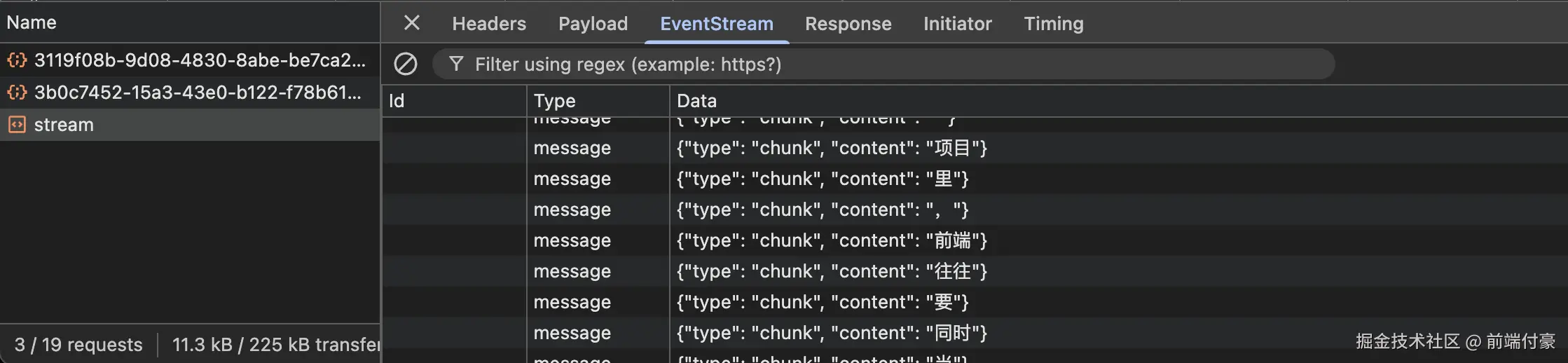

for chunk in stream:

delta = chunk.choices[0].delta.content or ""

if delta:

full_reply += delta

yield f"data: {json.dumps({'type': 'chunk', 'content': delta}, ensure_ascii=False)}\n\n"

except Exception as e:

print("stream chat error:", e)

yield f"data: {json.dumps({'type': 'error', 'content': 'AI服务异常,请稍后再试'}, ensure_ascii=False)}\n\n"

return

if req.session_id:

latest_user_text = ""

for item in reversed(messages):

if item["role"] == "user":

latest_user_text = item["content"]

break

print("stream session_id:", req.session_id)

print("stream latest_user_text:", latest_user_text)

if latest_user_text:

new_memories = extract_user_memories(latest_user_text)

print("stream new_memories:", new_memories)

add_session_memories(req.session_id, new_memories)

yield f"data: {json.dumps({'type': 'done', 'content': full_reply}, ensure_ascii=False)}\n\n"

return StreamingResponse(generate(), media_type="text/event-stream")二、改 web/src/App.vue
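The front end receives the frames in arbitrary network chunks, so a single frame may be split across two reads. The client code in this section copes with that by buffering text, splitting on the blank-line separator, and keeping the unfinished tail for the next read. The same logic is sketched here in Python for illustration; the `SSEBuffer` class is a hypothetical name and not part of the project.

```python
import json
from typing import List


class SSEBuffer:
    """Accumulates text and returns the JSON payloads of complete frames."""

    def __init__(self) -> None:
        self.buffer = ""

    def feed(self, text: str) -> List[dict]:
        self.buffer += text
        parts = self.buffer.split("\n\n")
        # The last element is either "" or an incomplete frame; keep it for later.
        self.buffer = parts.pop()
        payloads = []
        for part in parts:
            line = part.strip()
            if not line.startswith("data: "):
                continue
            payloads.append(json.loads(line[len("data: "):]))
        return payloads


buf = SSEBuffer()
# The second frame is cut in the middle, as can happen between two network reads.
first = buf.feed('data: {"type": "chunk", "content": "Hi"}\n\ndata: {"type": "ch')
second = buf.feed('unk", "content": "!"}\n\n')
print(first, second)
```

The first call returns only the complete frame; the cut-off frame is returned by the second call once its remainder arrives.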

1) Add a piece of state

Add it to the component script:

```js
const abortController = ref(null)
```

2) Add a streaming request function

```js
const sendMessageStream = async messages => {
  abortController.value = new AbortController()

  const res = await fetch('http://127.0.0.1:8000/api/chat/stream', {
    method: 'POST',
    headers: {
      'Content-Type': 'application/json',
    },
    body: JSON.stringify({
      messages,
      session_id: currentSession.value.id,
    }),
    signal: abortController.value.signal,
  })

  if (!res.ok || !res.body) {
    throw new Error('Streaming request failed')
  }

  const reader = res.body.getReader()
  const decoder = new TextDecoder('utf-8')
  let buffer = ''

  // Append an empty assistant message that the incoming chunks will fill in.
  updateCurrentSession(session => ({
    ...session,
    updatedAt: Date.now(),
    messages: [
      ...session.messages,
      {
        role: 'assistant',
        content: '',
      },
    ],
  }))

  while (true) {
    const { done, value } = await reader.read()
    if (done) break

    buffer += decoder.decode(value, { stream: true })

    // Frames are separated by a blank line; the last piece may be incomplete.
    const parts = buffer.split('\n\n')
    buffer = parts.pop() || ''

    for (const part of parts) {
      const line = part.trim()
      if (!line.startsWith('data: ')) continue

      const jsonText = line.slice(6)
      if (!jsonText) continue

      try {
        const payload = JSON.parse(jsonText)

        if (payload.type === 'chunk') {
          // Append the new text to the last assistant message.
          updateCurrentSession(session => {
            const nextMessages = [...session.messages]
            const lastIndex = nextMessages.length - 1
            const lastMessage = nextMessages[lastIndex]

            if (lastMessage?.role === 'assistant') {
              nextMessages[lastIndex] = {
                ...lastMessage,
                content: (lastMessage.content || '') + payload.content,
              }
            }

            return {
              ...session,
              updatedAt: Date.now(),
              messages: nextMessages,
            }
          })
        }

        if (payload.type === 'error') {
          // Replace the last assistant message with the error text.
          updateCurrentSession(session => {
            const nextMessages = [...session.messages]
            const lastIndex = nextMessages.length - 1
            const lastMessage = nextMessages[lastIndex]

            if (lastMessage?.role === 'assistant') {
              nextMessages[lastIndex] = {
                ...lastMessage,
                content: payload.content,
              }
            }

            return {
              ...session,
              updatedAt: Date.now(),
              messages: nextMessages,
            }
          })
        }

        if (payload.type === 'done') {
          // The server has finished saving memories; refresh the panel.
          await fetchMemories()
        }
      } catch (e) {
        console.error('stream parse error:', e)
      }
    }
  }
}
```

3) Update sendMessage

```js
const sendMessage = async () => {
  const text = inputValue.value.trim()
  if (!text || loading.value || !currentSession.value) return

  updateCurrentSession(session => ({
    ...session,
    updatedAt: Date.now(),
    // Use the first user message as the session title.
    title:
      session.messages.filter(i => i.role === 'user').length === 0
        ? text.slice(0, 12)
        : session.title,
    messages: [
      ...session.messages,
      {
        role: 'user',
        content: text,
      },
    ],
  }))

  inputValue.value = ''
  loading.value = true

  try {
    await sendMessageStream(currentSession.value.messages)
  } catch (error) {
    // Clicking "Stop" rejects with an AbortError; keep the partial reply
    // and do not report it as a failure.
    if (error?.name !== 'AbortError') {
      updateCurrentSession(session => ({
        ...session,
        updatedAt: Date.now(),
        messages: [
          ...session.messages,
          {
            role: 'assistant',
            content: 'Request failed. Please check the backend or the API key configuration.',
          },
        ],
      }))
      console.error(error)
    }
  } finally {
    loading.value = false
    abortController.value = null
  }
}
```

4) Add a "Stop generating" button next to the button in the input area

```html
<button :disabled="loading || !inputValue.trim()" @click="sendMessage">
  Send
</button>

<button
  v-if="loading"
  class="stop-btn"
  @click="abortController?.abort()"
>
  Stop
</button>
```

5) Add the button style

在 style 里追加:

css

.stop-btn {

width: 100px;

background: #ef4444;

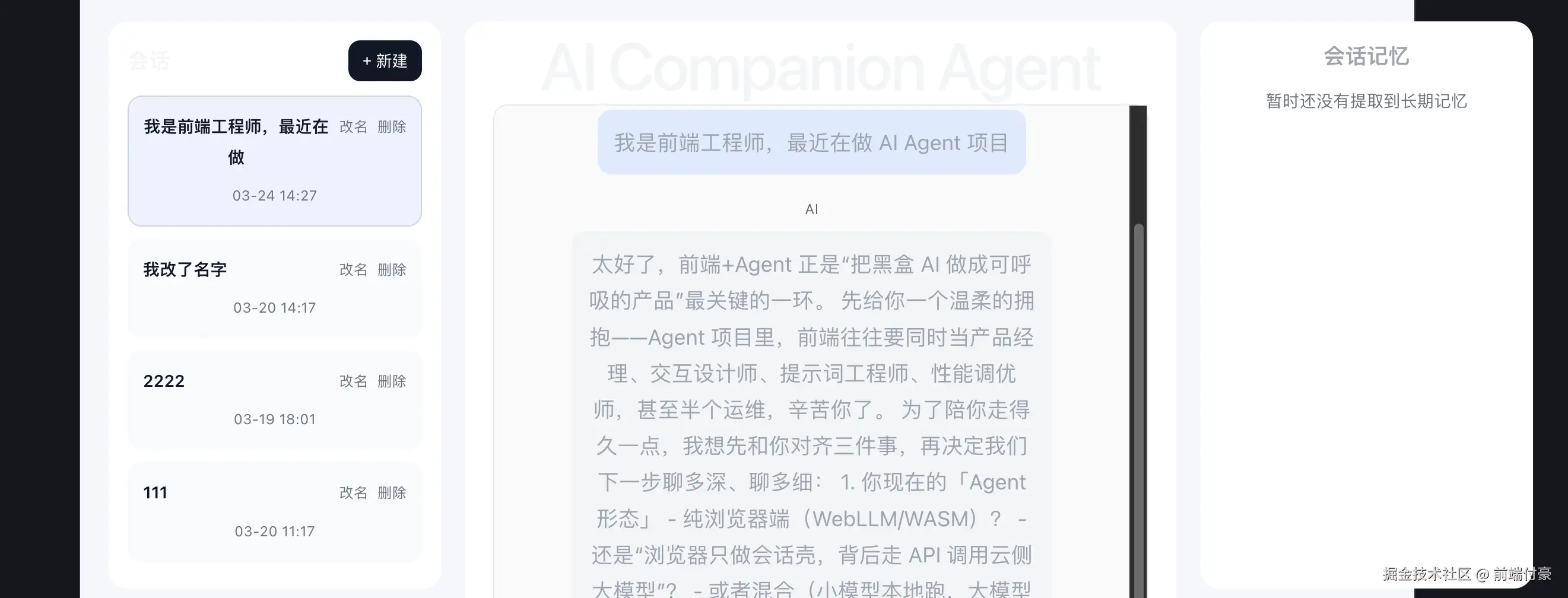

}三、验证

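Send an ordinary message, for example:

I'm a front-end engineer, and I've recently been working on an AI Agent project

You should see:

- The AI reply appears chunk by chunk

- You can click "Stop" while the reply is being generated

- The memory panel on the right refreshes once the reply finishes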

Request

The response is streamed and arrives chunk by chunk.
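The same check can be made without the browser. The sketch below posts to the streaming endpoint using only Python's standard library and prints chunks as they are read. It assumes the server from this walkthrough is running at `http://127.0.0.1:8000` and accepts the same body the front end sends. The function names and the `demo-session` id are placeholders.

```python
import json
import urllib.request

STREAM_URL = "http://127.0.0.1:8000/api/chat/stream"  # same URL the front end calls


def build_request(messages, session_id):
    # Mirror the JSON body sent by the front end: messages plus session_id.
    body = json.dumps({"messages": messages, "session_id": session_id}).encode("utf-8")
    return urllib.request.Request(
        STREAM_URL,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def parse_line(raw: bytes):
    # Return the decoded payload of a "data: ..." line, or None for anything else.
    line = raw.decode("utf-8").strip()
    if not line.startswith("data: "):
        return None
    return json.loads(line[len("data: "):])


def stream_chat(messages, session_id="demo-session"):
    # Needs the backend to be running; the response object yields one line at a time.
    with urllib.request.urlopen(build_request(messages, session_id)) as resp:
        for raw in resp:
            payload = parse_line(raw)
            if payload is None:
                continue
            if payload["type"] == "chunk":
                print(payload["content"], end="", flush=True)
            elif payload["type"] in ("done", "error"):
                print()
                break


# Example call (requires the server):
# stream_chat([{"role": "user", "content": "I'm a front-end engineer"}])
```

If the text shows up in several pieces rather than in one block, the endpoint is streaming as intended.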