Kubernetes deployment layout:

dh-k8s-master1 internal IP: 172.16.3.35

dh-k8s-master2 internal IP: 172.16.3.36

dh-k8s-master3 internal IP: 172.16.3.37

dh-k8s-node1 internal IP: 172.16.3.38

dh-k8s-node2 internal IP: 172.16.3.39

dh-k8s-node3 internal IP: 172.16.3.40

Run the following steps on 172.16.3.35:

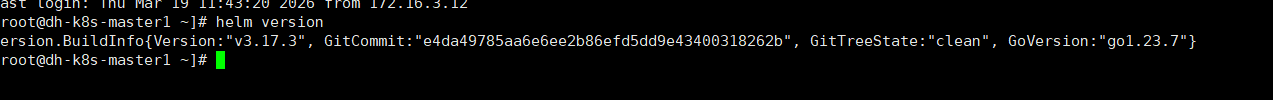

Step 1: Install Helm

bash

cd /usr/local/src

curl -fsSL -o helm.tar.gz https://get.helm.sh/helm-v3.17.3-linux-amd64.tar.gz

tar -zxvf helm.tar.gz

cp linux-amd64/helm /usr/local/bin/helm

chmod +x /usr/local/bin/helm

helm version
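Before copying the binary into /usr/local/bin, it can be worth checking the downloaded archive against the checksum published for the release. The helper below is a minimal sketch: `verify_sha256` is a hypothetical name, and the expected digest has to be copied by hand from the Helm release page for v3.17.3.

```shell
# verify_sha256 FILE EXPECTED_HEX
# Returns 0 when the SHA-256 digest of FILE equals EXPECTED_HEX, 1 otherwise.
verify_sha256() {
  file="$1"
  expected="$2"
  # sha256sum prints "<digest>  <file>"; keep only the digest column.
  actual=$(sha256sum "$file" | awk '{print $1}')
  if [ "$actual" = "$expected" ]; then
    echo "OK: $file"
  else
    echo "MISMATCH: $file (got $actual)" >&2
    return 1
  fi
}
```

Usage would look like `verify_sha256 helm.tar.gz <digest-from-release-page>`, run in /usr/local/src before the `tar` step.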

Step 2: Create the Alloy namespace

bash

kubectl create namespace alloy

kubectl get ns | grep alloy

If the create command reports that the namespace already exists, you can ignore the message.

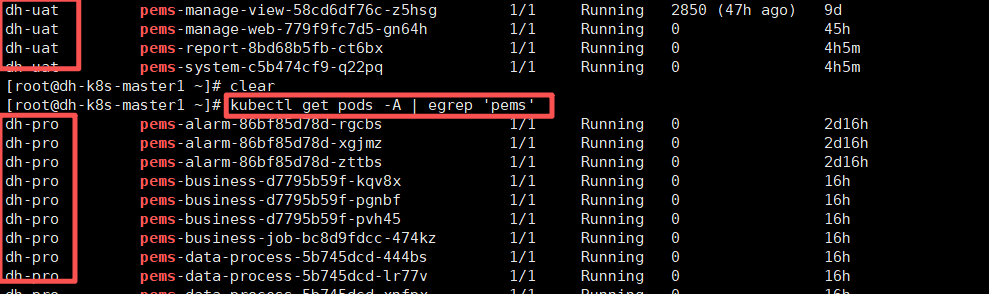

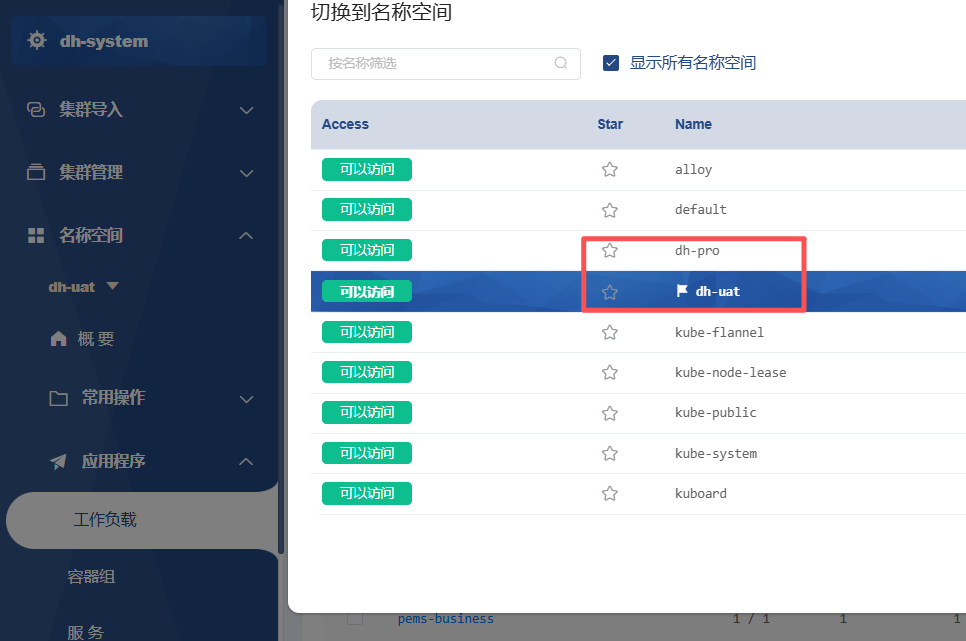

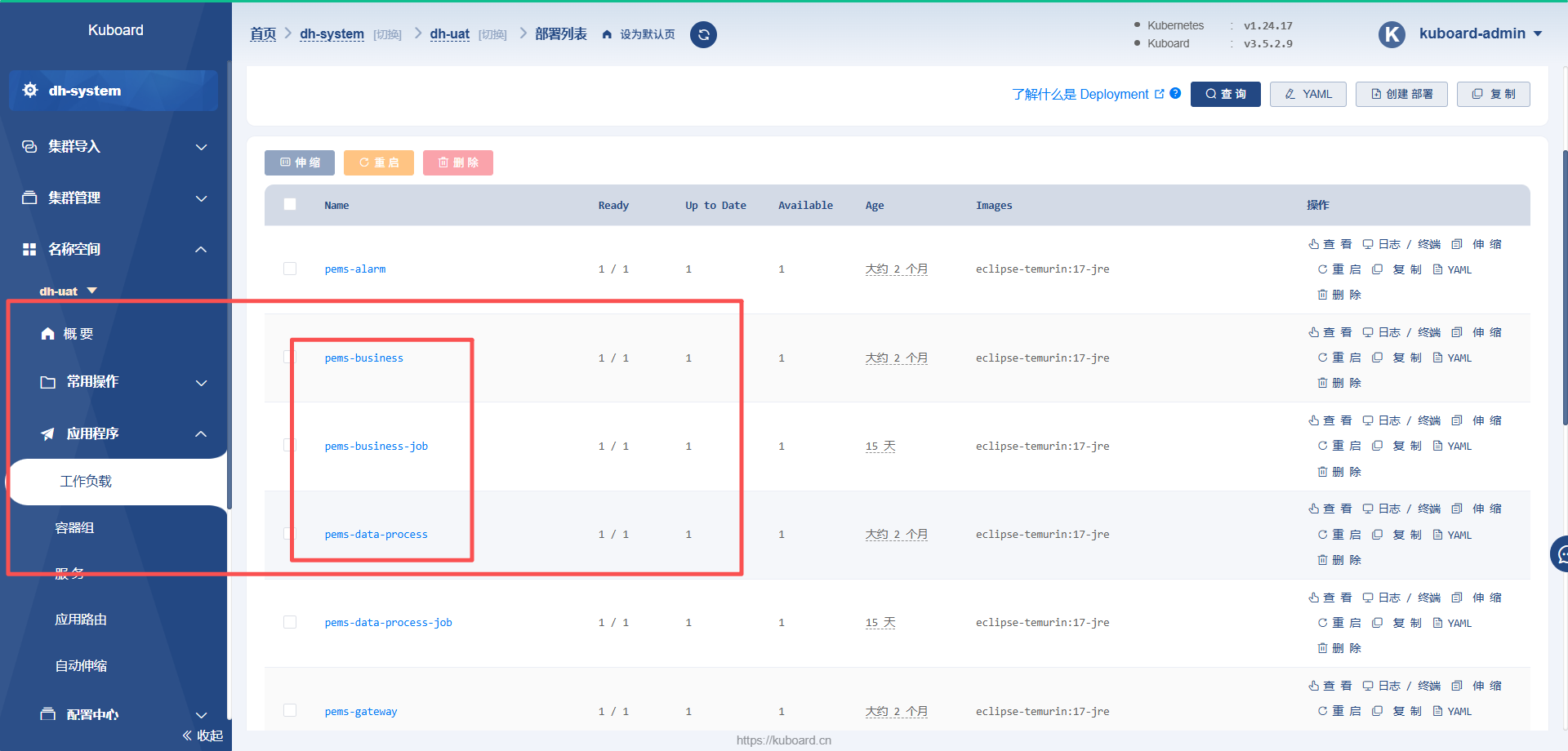
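If this step is likely to be re-run, the "already exists" message can be avoided by checking first. A minimal sketch, where `ensure_namespace` is a hypothetical helper name and only `kubectl get namespace` and `kubectl create namespace` are used:

```shell
# ensure_namespace NAME
# Creates the namespace only when it does not exist yet, so the step can be re-run safely.
ensure_namespace() {
  ns="$1"
  if kubectl get namespace "$ns" >/dev/null 2>&1; then
    echo "namespace $ns already exists"
  else
    kubectl create namespace "$ns"
  fi
}
```

Calling `ensure_namespace alloy` then replaces the two commands above.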

Step 3: Confirm which namespace your services run in

bash

kubectl get pods -A | grep -E '<filter-pattern>'

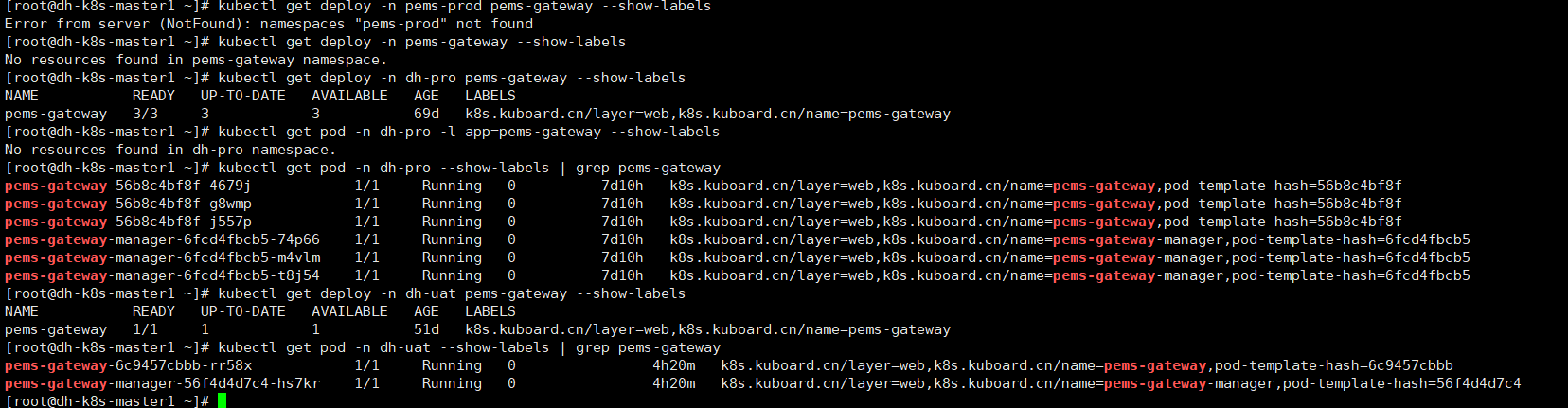
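When the Pod list is long, it can help to reduce it to just the namespaces that contain matching Pods. The function below is a sketch under the assumption that the input is `kubectl get pods -A --no-headers` output (namespace in column 1, Pod name in column 2); `namespaces_with_pods` is a hypothetical name.

```shell
# namespaces_with_pods PATTERN
# Reads `kubectl get pods -A --no-headers` output on stdin and prints each
# namespace that has at least one Pod whose name matches PATTERN (an awk regex).
namespaces_with_pods() {
  awk -v pat="$1" '$2 ~ pat { print $1 }' | sort -u
}

# Example (not executed here):
#   kubectl get pods -A --no-headers | namespaces_with_pods 'pems-'
```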

Step 4: Check whether the services already carry standard labels

bash

kubectl get deploy -n dh-pro pems-gateway --show-labels

kubectl get pod -n dh-pro -l app=pems-gateway --show-labels

kubectl get pod -n dh-pro --show-labels | grep pems-gateway

kubectl get deploy -n dh-uat pems-gateway --show-labels

kubectl get pod -n dh-uat --show-labels | grep pems-gateway
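The LABELS column printed by `--show-labels` is a comma-separated list of `key=value` pairs, so a specific label can be checked without reading the output by eye. A small sketch; `has_label` is a hypothetical helper, and the example label values are placeholders rather than values taken from this cluster:

```shell
# has_label LABELS KEY=VALUE
# LABELS is the comma-separated string from the LABELS column of --show-labels.
# Returns 0 only when the exact KEY=VALUE pair is present.
has_label() {
  case ",$1," in
    *",$2,"*) return 0 ;;
    *) return 1 ;;
  esac
}

# Example (not executed here):
#   labels=$(kubectl get pod -n dh-pro <pod-name> --no-headers --show-labels | awk '{print $NF}')
#   has_label "$labels" 'app=pems-gateway' && echo "label present"
```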

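Step 5: Add the Grafana Helm repository

This is the official Grafana Helm chart repository; the Alloy Helm chart is published in it as well.

https://grafana.com/docs/helm-charts/?utm_source=chatgpt.com

bash

helm repo add grafana https://grafana.github.io/helm-charts

helm repo update

Step 6: Create the Alloy values file

bash

mkdir -p /root/alloy

The Alloy configuration for this version (titled "collect gateway only", although the Pod-name regex below keeps seven pems-* services):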

bash

cat > /root/alloy/values.yaml <<'EOF'

alloy:

configMap:

create: true

content: |-

discovery.kubernetes "pods" {

role = "pod"

}

discovery.relabel "dh_uat_only" {

targets = discovery.kubernetes.pods.targets

rule {

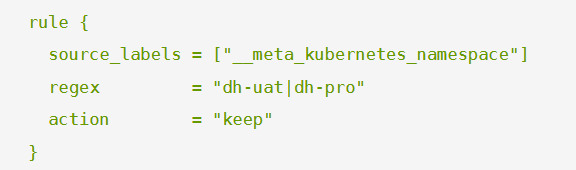

source_labels = ["__meta_kubernetes_namespace"]

regex = "dh-uat|dh-pro"

action = "keep"

}

rule {

source_labels = ["__meta_kubernetes_pod_node_name"]

regex = env("NODE_NAME")

action = "keep"

}

rule {

source_labels = ["__meta_kubernetes_pod_name"]

regex = "pems-system-.*|pems-business-.*|pems-data-process-.*|pems-alarm-.*|pems-gateway-.*|pems-infra-.*|pems-report-.*"

action = "keep"

}

rule {

source_labels = ["__meta_kubernetes_pod_name"]

regex = ".*-job-.*|.*-manager-.*"

action = "drop"

}

rule {

source_labels = ["__meta_kubernetes_namespace"]

target_label = "namespace"

}

rule {

source_labels = ["__meta_kubernetes_pod_container_name"]

target_label = "container"

}

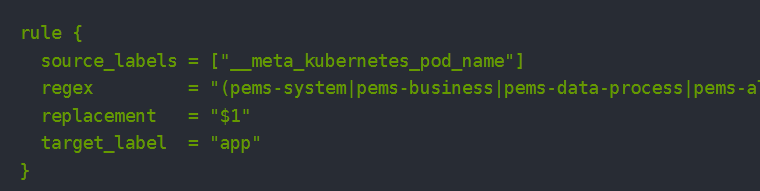

rule {

source_labels = ["__meta_kubernetes_pod_name"]

regex = "(pems-system|pems-business|pems-data-process|pems-alarm|pems-gateway|pems-infra|pems-report)-.*"

replacement = "$1"

target_label = "app"

}

rule {

source_labels = ["__meta_kubernetes_pod_name"]

regex = "(pems-system)-.*"

replacement = "system"

target_label = "component"

}

rule {

source_labels = ["__meta_kubernetes_pod_name"]

regex = "(pems-business)-.*"

replacement = "business"

target_label = "component"

}

rule {

source_labels = ["__meta_kubernetes_pod_name"]

regex = "(pems-data-process)-.*"

replacement = "data-process"

target_label = "component"

}

rule {

source_labels = ["__meta_kubernetes_pod_name"]

regex = "(pems-alarm)-.*"

replacement = "alarm"

target_label = "component"

}

rule {

source_labels = ["__meta_kubernetes_pod_name"]

regex = "(pems-gateway)-.*"

replacement = "gateway"

target_label = "component"

}

rule {

source_labels = ["__meta_kubernetes_pod_name"]

regex = "(pems-infra)-.*"

replacement = "infra"

target_label = "component"

}

rule {

source_labels = ["__meta_kubernetes_pod_name"]

regex = "(pems-report)-.*"

replacement = "report"

target_label = "component"

}

rule {

source_labels = ["__meta_kubernetes_namespace"]

regex = "dh-uat"

replacement = "uat"

target_label = "env"

}

rule {

source_labels = ["__meta_kubernetes_namespace"]

regex = "dh-pro"

replacement = "pro"

target_label = "env"

}

rule {

replacement = "k8s-main"

target_label = "cluster"

}

rule {

replacement = "kubernetes-pods"

target_label = "job"

}

}

loki.source.kubernetes "pods" {

targets = discovery.relabel.dh_uat_only.output

forward_to = [loki.write.default.receiver]

}

loki.write "default" {

endpoint {

url = "http://172.16.3.31:3100/loki/api/v1/push"

}

}

extraEnv:

- name: NODE_NAME

valueFrom:

fieldRef:

fieldPath: spec.nodeName

controller:

type: daemonset

serviceAccount:

create: true

rbac:

create: true

alloy-mounts:

varlog: false

dockercontainers: false

extra:

- name: alloy-tmp

mountPath: /tmp/alloy

type: emptyDir

alloy-extraArgs:

- --stability.level=generally-available

tolerations: []

affinity:

nodeAffinity:

requiredDuringSchedulingIgnoredDuringExecution:

nodeSelectorTerms:

- matchExpressions:

- key: node-role.kubernetes.io/control-plane

operator: DoesNotExist

- key: node-role.kubernetes.io/master

operator: DoesNotExist

EOF说明 :

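1: dh-uat|dh-pro are the two namespaces of the project's own environments: test (dh-uat) and production (dh-pro).

2: This regex selects which services' logs are collected.

3: type: daemonset means every node runs its own Alloy Pod, which collects the logs of the Pods on that node and sends them to Loki.

With type: deployment, only one Alloy instance is deployed. It tails the logs of Pods on every node through the Kubernetes API and sends them all to Loki. The NODE_NAME keep rule has to be removed in that case, otherwise only Pods on the Alloy Pod's own node are collected.

yaml

controller:
  type: deployment
  replicas: 1

The logic of this configuration is:

discovery.kubernetes discovers the Pods.

discovery.relabel keeps only the seven pems services and maps Kubernetes metadata to Loki labels.

loki.source.kubernetes collects the logs of the Pods that remain.

loki.write writes the logs directly to the Loki instance at 172.16.3.31:3100.

This matches how the official Alloy documentation defines each component's role:

https://grafana.com/docs/alloy/latest/reference/components/loki/loki.write/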
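To predict whether a given Pod would survive the keep/drop rules without deploying anything, the same regexes can be evaluated locally. This is only a sketch: `full_match` and `would_keep` are hypothetical helpers, the node-name rule is not modelled, and it assumes relabel regexes are anchored at both ends (as in Prometheus-style relabeling), which is why each pattern is wrapped in `^(...)$`.

```shell
# Patterns copied from the keep/drop rules in values.yaml.
KEEP_NS='dh-uat|dh-pro'
KEEP_POD='pems-system-.*|pems-business-.*|pems-data-process-.*|pems-alarm-.*|pems-gateway-.*|pems-infra-.*|pems-report-.*'
DROP_POD='.*-job-.*|.*-manager-.*'

# full_match REGEX VALUE
# Returns 0 when VALUE matches REGEX as a whole string.
full_match() {
  printf '%s\n' "$2" | grep -Eq "^($1)\$"
}

# would_keep NAMESPACE POD_NAME
# Returns 0 when the namespace and Pod-name rules would let the Pod through.
would_keep() {
  full_match "$KEEP_NS" "$1" || return 1
  full_match "$KEEP_POD" "$2" || return 1
  if full_match "$DROP_POD" "$2"; then
    return 1
  fi
  return 0
}

# Example (not executed here):
#   would_keep dh-pro pems-gateway-7c9d8f-abcde && echo "collected"
```

Note that a Pod named like `pems-gateway-job-12345` passes the keep rule but is then removed by the drop rule, because the rules are applied in order.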

Step 7: Install Alloy

bash

helm repo add grafana https://grafana.github.io/helm-charts

helm repo update

helm upgrade --install alloy grafana/alloy \
  -n alloy \
  -f /root/alloy/values.yaml

The official Grafana documentation describes installing Alloy on Kubernetes with the Helm chart:

https://grafana.com/docs/alloy/latest/set-up/install/kubernetes/?utm_source=chatgpt.com

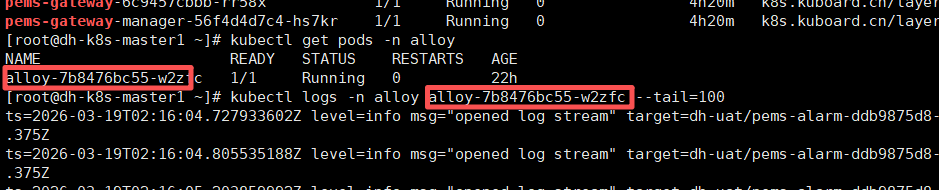
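Rendering the chart locally with `helm template` is one way to see what the values file produces before (or after) installing. The sketch below only summarises the resource kinds in the rendered output; `manifest_kinds` is a hypothetical helper name.

```shell
# manifest_kinds
# Reads rendered Kubernetes manifests on stdin and prints "<count> <kind>"
# for each top-level resource kind, sorted by kind name.
manifest_kinds() {
  grep -E '^kind:' | awk '{print $2}' | sort | uniq -c | awk '{print $1, $2}'
}

# Example (not executed here):
#   helm template alloy grafana/alloy -n alloy -f /root/alloy/values.yaml | manifest_kinds
```

With `controller.type: daemonset` the summary should list a DaemonSet and no Deployment; if it does not, the values file is probably not being parsed as intended.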

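Step 8: Check that the Alloy Pods started correctly

bash

kubectl get pods -n alloy -o wide

Because controller.type is daemonset, you should in principle see one Alloy Pod per node.

With this setup the collector runs close to the workloads on every node. The Kubernetes log collection documentation also describes DaemonSet as a common deployment mode:

https://grafana.com/docs/alloy/latest/collect/logs-in-kubernetes/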
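On a cluster with several nodes, the Pods that are not healthy are easier to spot when the healthy ones are filtered out. A minimal sketch, assuming the standard `kubectl get pods` column order (NAME, READY, STATUS, ...); `not_running` is a hypothetical name.

```shell
# not_running
# Reads `kubectl get pods --no-headers` output on stdin and prints the name and
# status of every Pod whose STATUS column is not "Running".
not_running() {
  awk '$3 != "Running" { print $1, $3 }'
}

# Example (not executed here):
#   kubectl get pods -n alloy --no-headers -o wide | not_running
```

Empty output means every Alloy Pod reports Running.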

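Step 9: Check the Alloy logs to confirm it can reach Loki on 172.16.3.31

First list the Pods:

bash

kubectl get pods -n alloy

Then pick one Pod and view its logs:

bash

kubectl logs -n alloy <alloy-pod-name> --tail=100

Look in particular for errors such as:

connection refused

timeout

no such host

server returned HTTP status 4xx/5xx

If none of these appear, Alloy is most likely able to write logs to Loki on 172.16.3.31.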

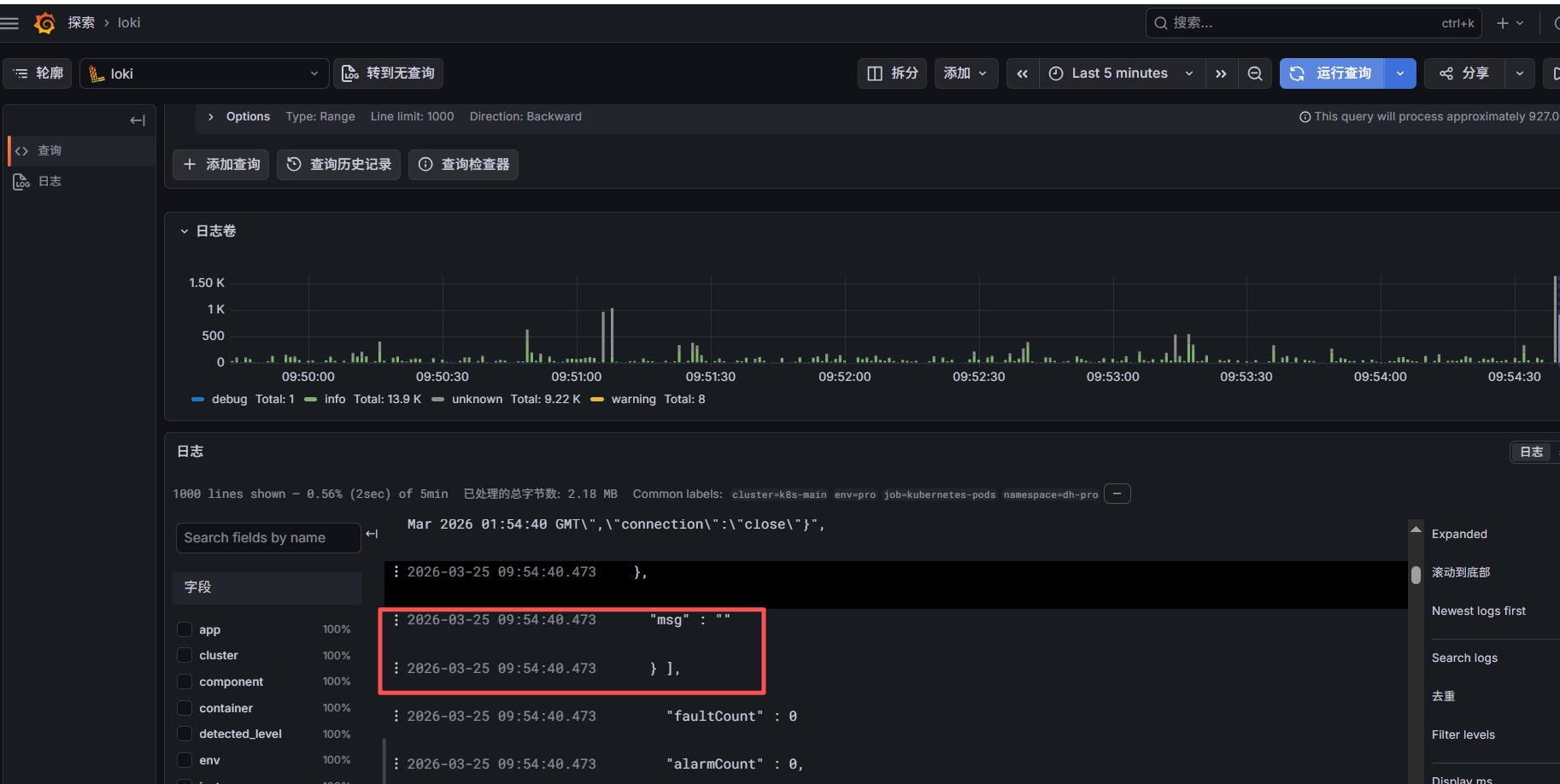
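The error strings listed above can be searched for in one pass instead of reading the log by eye. This is a sketch only: `scan_alloy_errors` is a hypothetical helper, and the patterns are simply the four strings from this step, so a benign line that happens to contain the word "timeout" would also be printed.

```shell
# scan_alloy_errors
# Reads Alloy log lines on stdin and prints only the lines that contain one of
# the error patterns from this step. Exits non-zero when no line matches.
scan_alloy_errors() {
  grep -Ei 'connection refused|timeout|no such host|HTTP status [45][0-9][0-9]'
}

# Example (not executed here):
#   kubectl logs -n alloy <alloy-pod-name> --tail=500 | scan_alloy_errors
```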

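Step 10: Go back to Grafana and verify that logs have arrived

Open Explore in Grafana, select the Loki data source, and run this query:

logql

{job="kubernetes-pods"}

If it returns data, narrow it down by namespace:

logql

{namespace="dh-pro"}

Or by application:

logql

{app="pems-gateway"}

Loki queries work by first narrowing the range with labels and then looking at the log content; the labels set by the relabel rules exist to support exactly these queries.

https://grafana.com/docs/alloy/latest/collect/logs-in-kubernetes/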
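The same label queries can be sent to Loki directly over its HTTP API, which helps separate "Loki has no data" from "Grafana is misconfigured". A minimal sketch using the `query_range` endpoint; `loki_query` and the `LOKI_URL` variable are hypothetical names, and the default address is the Loki host used elsewhere in this guide.

```shell
# Base URL of Loki; override by exporting LOKI_URL before sourcing this snippet.
LOKI_URL="${LOKI_URL:-http://172.16.3.31:3100}"

# loki_query LOGQL [LIMIT]
# Sends a LogQL query to Loki's query_range endpoint and prints the raw JSON
# response. Without start/end parameters Loki applies its default time window.
loki_query() {
  curl -sG "$LOKI_URL/loki/api/v1/query_range" \
    --data-urlencode "query=$1" \
    --data-urlencode "limit=${2:-10}"
}

# Example (not executed here):
#   loki_query '{app="pems-gateway"}' 5
```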

Step 11: If no logs show up, check these four things first

1) Are all Alloy Pods Running?

bash

kubectl get pods -n alloy

2) Can Alloy reach Loki on 172.16.3.31?

Test from inside any Alloy Pod:

bash

kubectl exec -n alloy -it <alloy-pod-name> -- sh

Once inside, run:

bash

wget -qO- http://172.16.3.31:3100/ready

If it returns ready, the network path is fine.

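3) Do the business Pods match the relabel rules?

bash

kubectl get pods -n dh-pro --show-labels | grep pems-gateway

The relabel rules in values.yaml match on namespace and Pod name, not on Pod labels such as app.kubernetes.io/name=pems-gateway. If the Pod is outside dh-uat and dh-pro, or its name does not start with one of the seven pems-* prefixes, it is filtered out.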

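4) Does the application write its logs to stdout?

If the application only writes to files and prints nothing to the console, there are no Kubernetes container logs to collect. Alloy's Kubernetes log collection is built around Pod/container logs:

https://grafana.com/docs/alloy/latest/collect/logs-in-kubernetes/

One last note: queries use the time at which each log line was emitted, so if a server's clock runs fast or slow, the results for a given time range may be inaccurate.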
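Clock drift between machines can be estimated by comparing epoch seconds taken at roughly the same moment. The helper below is a rough sketch: `clock_skew` is a hypothetical name, the 5-second default threshold is an arbitrary assumption, and fetching the remote time over ssh assumes ssh access to the node.

```shell
# clock_skew LOCAL_EPOCH REMOTE_EPOCH [MAX_SECONDS]
# Prints the absolute difference in seconds and returns 1 when it exceeds
# MAX_SECONDS (default 5).
clock_skew() {
  diff=$(( $1 - $2 ))
  if [ "$diff" -lt 0 ]; then
    diff=$(( 0 - diff ))
  fi
  echo "$diff"
  [ "$diff" -le "${3:-5}" ]
}

# Example (not executed here):
#   clock_skew "$(date +%s)" "$(ssh root@172.16.3.38 date +%s)" || echo "clock drift too large"
```

The ssh round trip adds some delay of its own, so small differences of a second or two are not meaningful.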