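iommufd is a new user-space API for controlling the IOMMU subsystem. It manages IO page tables that point to user-space memory. Its goal is to replace the IOMMU-related user API in VFIO and to become the core interface through which new IOMMU features are delivered to the various user-space driver frameworks (VFIO, vDPA, and so on). As a result, open-source projects that are tightly coupled to VFIO, such as QEMU, need to be redesigned to adopt the new iommufd API while staying backward compatible with the legacy VFIO API, for example through an abstraction that supports both the device-centric model (iommufd) and the group-centric model (legacy VFIO, where the /dev/vfio/#groupid device node represents an IOMMU group).

VFIO and user-space DMA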

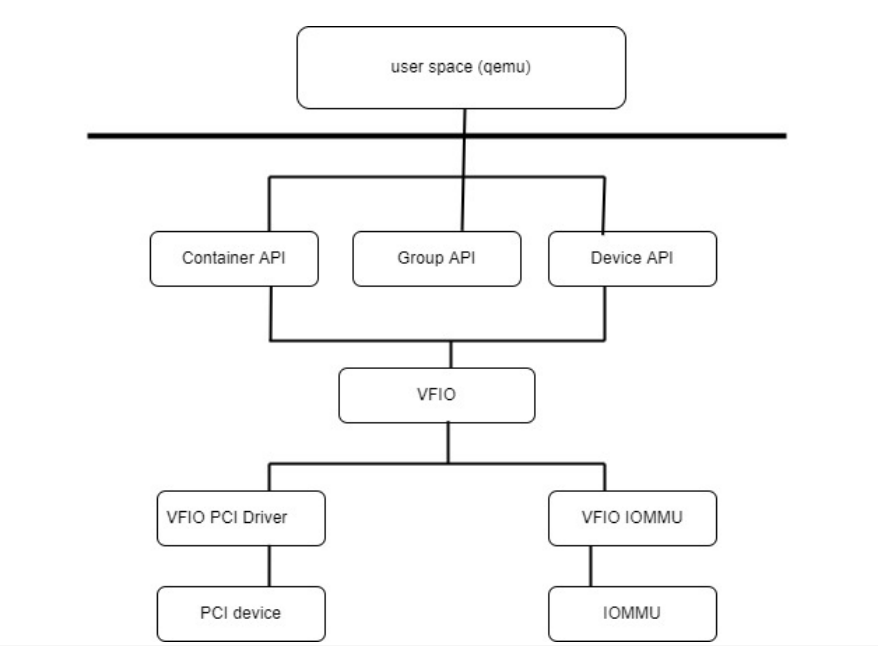

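Through the VFIO interface, user space can directly drive DMA-capable hardware. VFIO keeps the device in an isolated environment so that user-initiated DMA cannot harm other parts of the system. VFIO rests on one key assumption: the system relies on the device protection provided by a physical IOMMU. VFIO is designed around groups. An IOMMU group is the smallest unit the IOMMU can isolate, and it is the basic unit that VFIO manages. Devices that cannot be isolated from one another are placed in the same group. Every device belongs to a group, and the device-to-group relationship can be read from sysfs.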
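As a minimal sketch of the sysfs lookup described above: on Linux, /sys/bus/pci/devices/<BDF>/iommu_group is a symlink whose last path component is the group number. The helper names `iommu_group_from_link` and `iommu_group_of_device` are hypothetical and exist only for this illustration.

```c
#include <limits.h>
#include <stdio.h>
#include <stdlib.h>
#include <string.h>
#include <unistd.h>

/*
 * Parse the group number from a link target such as
 * "../../../kernel/iommu_groups/12".
 * Returns the group number, or -1 if the last component is not a number.
 */
int iommu_group_from_link(const char *target)
{
    const char *slash = strrchr(target, '/');
    const char *name = slash ? slash + 1 : target;
    char *end;
    long id;

    if (*name < '0' || *name > '9')
        return -1;
    id = strtol(name, &end, 10);
    if (*end != '\0' || id > INT_MAX)
        return -1;
    return (int)id;
}

/*
 * Resolve the iommu_group symlink of a PCI device given its BDF,
 * for example "0000:02:00.0". Returns the group number or -1.
 */
int iommu_group_of_device(const char *bdf)
{
    char path[256];
    char target[256];
    ssize_t len;

    snprintf(path, sizeof(path), "/sys/bus/pci/devices/%s/iommu_group", bdf);
    len = readlink(path, target, sizeof(target) - 1);
    if (len < 0)
        return -1;
    target[len] = '\0';
    return iommu_group_from_link(target);
}
```

Calling `iommu_group_of_device("0000:02:00.0")` on a machine with the IOMMU enabled would return the number that also appears as the legacy /dev/vfio/<group> node once the device is bound to vfio-pci.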

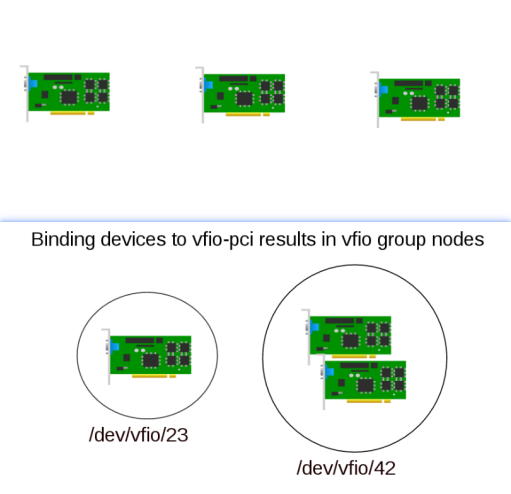

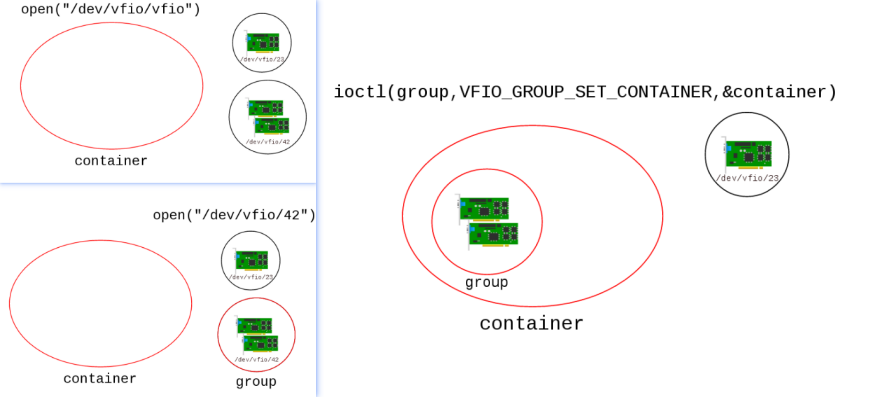

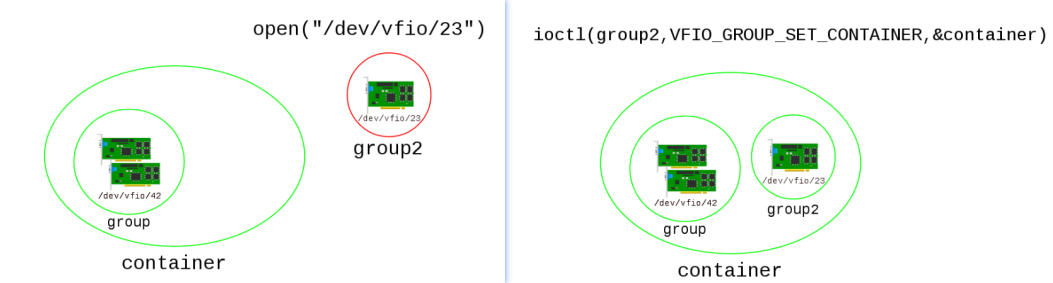

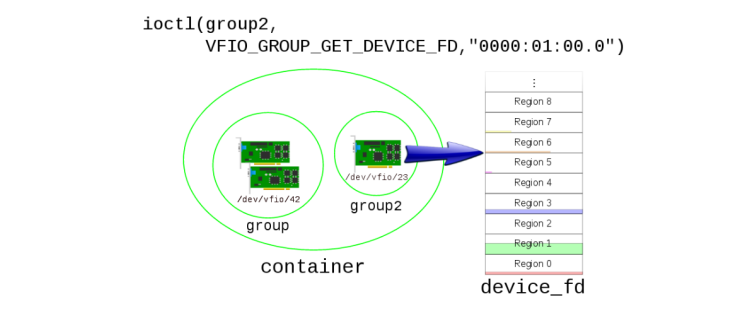

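Binding a device to a VFIO driver creates the corresponding VFIO group. A group is considered viable once every device in it is either bound to a VFIO driver or not bound to any driver. A viable group can then be attached to a VFIO container, which provides the IOMMU address space shared by all groups attached to it. Only after the group has been attached to a container can user space obtain the VFIO device FD and program the device.

Device grouping strongly shapes the call sequence of the vfio-pci driver:

Add the group to the container: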

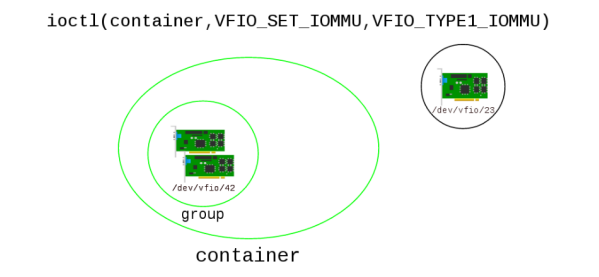

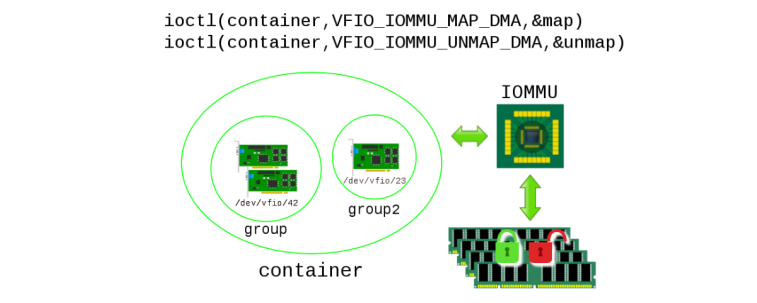

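Tell the container to use the AMD/Intel IOMMU for its IOVA-to-HPA mappings:

Multiple groups can be added to the same container and later passed through to a virtual machine together:

A container corresponds to one set of IOVA-to-HPA DMA mappings. Establish the mapping:

After that, you can obtain the FD of a device in the group and use the device FD to configure the device's MMIO: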

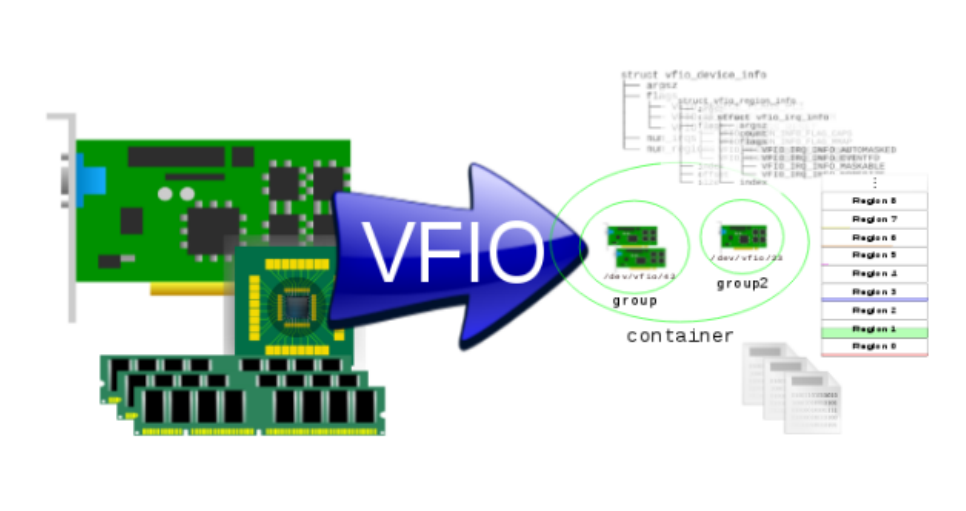
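The legacy sequence described above (open the container, add the group, select the IOMMU type, map DMA, then get the device FD) can be sketched as follows. The ioctls come from linux/vfio.h; the function name `legacy_vfio_open_device` and its parameters are hypothetical, the Type1 IOMMU backend is an assumption, and error handling is reduced to "close everything and return -1".

```c
#include <fcntl.h>
#include <stdint.h>
#include <sys/ioctl.h>
#include <unistd.h>
#include <linux/vfio.h>

/*
 * group_path: for example "/dev/vfio/12"; bdf: for example "0000:02:00.0".
 * buf/size:   page-aligned user buffer to expose to the device at `iova`.
 * Returns the device fd, or -1 on any failure.
 */
int legacy_vfio_open_device(const char *group_path, const char *bdf,
                            void *buf, uint64_t iova, uint64_t size,
                            int *container_out, int *group_out)
{
    struct vfio_group_status status = { .argsz = sizeof(status) };
    struct vfio_iommu_type1_dma_map map = { .argsz = sizeof(map) };
    int container, group, device;

    /* 1. The container: one shared IOVA address space. */
    container = open("/dev/vfio/vfio", O_RDWR);
    if (container < 0)
        return -1;
    if (ioctl(container, VFIO_GET_API_VERSION) != VFIO_API_VERSION ||
        ioctl(container, VFIO_CHECK_EXTENSION, VFIO_TYPE1_IOMMU) <= 0)
        goto err_container;

    /* 2. The group must be viable before it can be used. */
    group = open(group_path, O_RDWR);
    if (group < 0)
        goto err_container;
    if (ioctl(group, VFIO_GROUP_GET_STATUS, &status) ||
        !(status.flags & VFIO_GROUP_FLAGS_VIABLE))
        goto err_group;

    /* 3. Add the group to the container, then pick the IOMMU backend. */
    if (ioctl(group, VFIO_GROUP_SET_CONTAINER, &container))
        goto err_group;
    if (ioctl(container, VFIO_SET_IOMMU, VFIO_TYPE1_IOMMU))
        goto err_group;

    /* 4. Establish the IOVA -> user memory mapping on the container. */
    map.vaddr = (uintptr_t)buf;
    map.iova = iova;
    map.size = size;
    map.flags = VFIO_DMA_MAP_FLAG_READ | VFIO_DMA_MAP_FLAG_WRITE;
    if (ioctl(container, VFIO_IOMMU_MAP_DMA, &map))
        goto err_group;

    /* 5. Only now can the device fd be obtained from the group. */
    device = ioctl(group, VFIO_GROUP_GET_DEVICE_FD, bdf);
    if (device < 0)
        goto err_group;

    *container_out = container;
    *group_out = group;
    return device;

err_group:
    close(group);
err_container:
    close(container);
    return -1;
}
```

The ordering is the point of the sketch: the device FD is the last thing user space receives, after the container and group layers have been set up.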

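A VFIO device is like a nut: the hard shell that VFIO builds around it has to be peeled away layer by layer before the device can be accessed: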

IOMMUFD

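The IOMMUFD framework adds the /dev/iommu character device, which can manage multiple I/O address spaces. Each such address space is called an IOAS, and each IOAS holds a set of mappings between IOVAs and the pinned physical pages that back user memory. These mappings can be realized by hardware page tables, which decouples the way addresses are mapped from any specific hardware page table format. By introducing the IOAS, IOMMUFD provides:

- Multiple address spaces: one iommufd context (one file descriptor for /dev/iommu) can create multiple IOASes. Each IOAS is essentially an independent IOVA mapping table.
- Dynamic mapping: user space uses ioctl commands such as IOMMU_IOAS_MAP to map IOVAs to physical pages (long-term pinned via pin_user_pages) in a given IOAS.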

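Once decoupled, a user-space program such as QEMU no longer needs to care whether the underlying IOMMU is Intel VT-d or ARM SMMU. It only needs to:

- Create an IOAS.
- Call IOMMU_IOAS_MAP to populate the mappings.
- Attach the device (device FD) to the IOAS.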

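The iommufd core in the kernel picks a suitable page table format according to the type of IOMMU domain the device belongs to (for example 4-level or 5-level page tables on x86, or the 64-bit page table format on ARM) and fills in the hardware structures. The mapping itself ("map IOVA A to physical page B") is fully separated from how a hardware page table describes that mapping.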

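In the legacy model, mapping operations act directly on the container, and the container is tied to specific device groups. In iommufd:

- The IOAS exists independently of devices: you can create the IOAS first, set up all mappings (laying out the IOVA space in advance), and only then attach devices.
- Sharing and reuse: one IOAS can be attached to several different devices at the same time.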
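To make the two properties above concrete, here is a toy user-space model, not the kernel implementation: an IOAS is a table of IOVA ranges that can be filled before any device exists, and every device attached later sees the same translations. All names (`toy_ioas`, `toy_ioas_map`, `toy_ioas_attach`, `toy_translate`) are hypothetical, and the overlap check is a simplification chosen for this sketch.

```c
#include <stddef.h>
#include <stdint.h>

#define TOY_MAX_MAPS 8
#define TOY_MAX_DEVS 4

struct toy_map {
    uint64_t iova;    /* start of the IOVA range */
    uint64_t length;  /* size of the range in bytes */
    uint64_t phys;    /* physical address backing iova */
};

struct toy_ioas {
    struct toy_map maps[TOY_MAX_MAPS];
    size_t nmaps;
    int devs[TOY_MAX_DEVS];  /* ids of attached devices */
    size_t ndevs;
};

/* Add a mapping; needs no attached device. Returns -1 on overlap or overflow. */
int toy_ioas_map(struct toy_ioas *ioas, uint64_t iova, uint64_t length,
                 uint64_t phys)
{
    if (length == 0 || ioas->nmaps == TOY_MAX_MAPS)
        return -1;
    for (size_t i = 0; i < ioas->nmaps; i++) {
        const struct toy_map *m = &ioas->maps[i];
        if (iova < m->iova + m->length && m->iova < iova + length)
            return -1;
    }
    ioas->maps[ioas->nmaps++] = (struct toy_map){ iova, length, phys };
    return 0;
}

/* Attach a device id to the IOAS; several devices may share one IOAS. */
int toy_ioas_attach(struct toy_ioas *ioas, int dev_id)
{
    if (ioas->ndevs == TOY_MAX_DEVS)
        return -1;
    ioas->devs[ioas->ndevs++] = dev_id;
    return 0;
}

/* Translate a DMA address issued by dev_id. Returns 0 and fills *phys on a hit. */
int toy_translate(const struct toy_ioas *ioas, int dev_id, uint64_t iova,
                  uint64_t *phys)
{
    size_t d;

    for (d = 0; d < ioas->ndevs; d++)
        if (ioas->devs[d] == dev_id)
            break;
    if (d == ioas->ndevs)
        return -1;  /* device is not attached to this IOAS */

    for (size_t i = 0; i < ioas->nmaps; i++) {
        const struct toy_map *m = &ioas->maps[i];
        if (iova >= m->iova && iova - m->iova < m->length) {
            *phys = m->phys + (iova - m->iova);
            return 0;
        }
    }
    return -1;  /* no mapping covers this IOVA */
}
```

In this model the mapping table never mentions a page table format. In the real framework that translation into hardware structures is the job of the HWPT behind the IOAS.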

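Objects such as the IOAS and the HWPT (iommu_domain) have their lifetimes managed through a resource-pool style architecture.

User-space DMA mapping for an NVIDIA GPU with IOMMUFD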

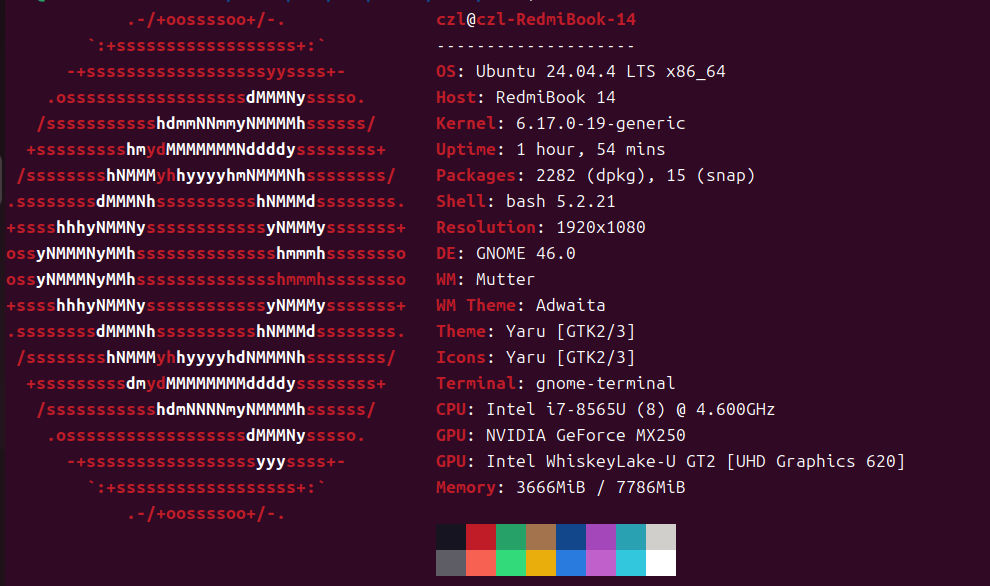

The following test performs a user-space DMA mapping for a discrete NVIDIA GPU on the Ubuntu 24.04.4 kernel. Ubuntu 24.04.4 already supports IOMMUFD, so it can be used for the test:

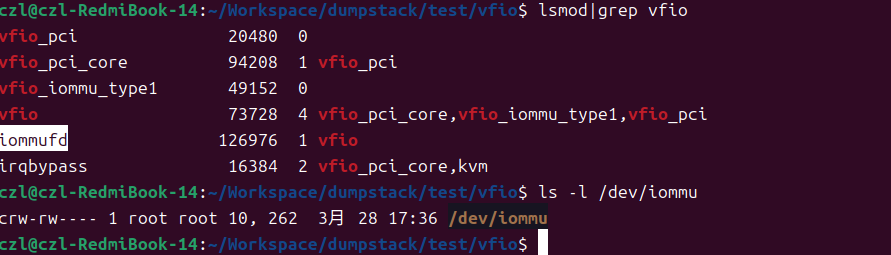

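The iommufd.ko module is not loaded by default, so it has to be loaded manually. Because the VFIO module depends on IOMMUFD, loading the VFIO driver is enough. Afterwards, confirm that the /dev/iommu device node exists.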

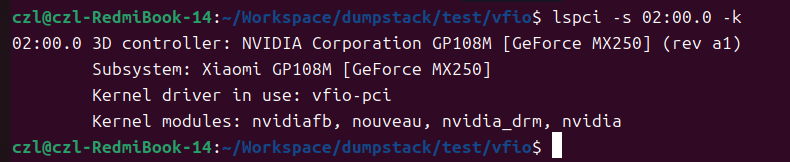

Step 2: hand the NVIDIA device over to vfio-pci. This is straightforward and omitted here.

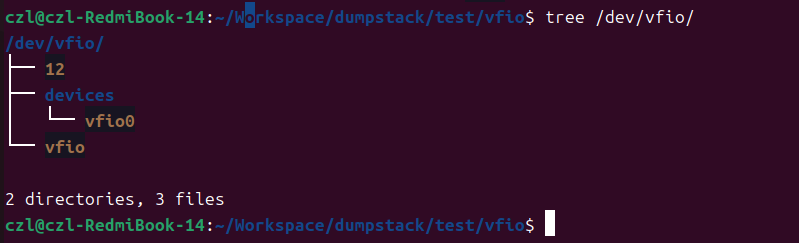

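Afterwards, confirm that the device is bound to VFIO. For compatibility with the legacy mode, the group character device node is still created. Since the goal here is a PCIe passthrough model based on IOMMUFD, /dev/vfio/12 is not used.

Step 3: the test code is as follows: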

/*

* IOMMUFD-based DMA Mapping Test Case

*

* This is a rewritten version of the legacy VFIO test case to work with

* the new IOMMUFD framework. It retains the same core requirements:

* - Open IOMMUFD context

* - Create IO Address Space (IOAS)

* - Bind and attach device

* - Perform DMA mapping

*

* Architecture Comparison:

*

* Legacy VFIO:

* +------------------------+

* | container |

* | +-------+ +------+ |

* | | group0| |group1| |

* | | dev0 | | dev2 | |

* | | dev1 | +------+ |

* | +-------+ |

* +------------------------+

*

* IOMMUFD:

* +------------------------+

* | iommufd_ctx |

* | +-------+ +------+ |

* | | IOAS0 | | IOAS1| |

* | | HWPT | | HWPT | |

* | | dev0 | | dev2 | |

* | +-------+ +------+ |

* +------------------------+

*

* Flow (based on QEMU and kernel analysis):

* 1. Open /dev/iommu

* 2. Create IOAS (IOMMU_IOAS_ALLOC)

* 3. Open VFIO device cdev (/dev/vfio/devices/vfioX)

* 4. Bind device to IOMMUFD (VFIO_DEVICE_BIND_IOMMUFD)

* 5. Attach device to IOAS (VFIO_DEVICE_ATTACH_IOMMUFD_PT)

* 6. Query IOVA ranges (IOMMU_IOAS_IOVA_RANGES) - after attach!

* 7. Map DMA (IOMMU_IOAS_MAP)

*

* Before running this test:

* 1. Ensure IOMMU is enabled in BIOS

* 2. Bind device to vfio-pci driver:

* # echo vfio-pci > /sys/bus/pci/devices/0000:02:00.0/driver_override

* # echo -n 0000:02:00.0 > /sys/bus/pci/drivers/$old_driver/unbind

* # echo -n 0000:02:00.0 > /sys/bus/pci/drivers/vfio-pci/bind

*

* Or add to kernel cmdline:

* intel_iommu=on vfio-pci.ids=VENDOR:DEVICE

*/

#include <stdio.h>

#include <stdlib.h>

#include <sys/stat.h>

#include <fcntl.h>

#include <sys/ioctl.h>

#include <unistd.h>

#include <string.h>

#include <sys/mman.h>

#include <errno.h>

#include <dirent.h>

#include <stdint.h>

#include <libgen.h>

#include <linux/iommufd.h>

#include <linux/vfio.h>

#define IOVA_DMA_MAPSZ (1*1024UL*1024UL)

#define IOVA_START (0xbeefb000UL)

#define VADDR 0x400000000000

/* IOMMUFD test object structure */

typedef struct iommufd_test_struct {

int iommufd_fd; /* /dev/iommu fd */

int device_fd; /* Device fd from VFIO cdev */

__u32 ioas_id; /* IOAS object ID */

__u32 hwpt_id; /* HWPT object ID (returned by attach) */

__u32 dev_id; /* Device object ID (returned by bind) */

unsigned long offset_base;

char *dev_bdf; /* PCI BDF: 0000:02:00.0 */

char *vfio_device; /* VFIO device path: /dev/vfio/devices/vfio0 */

void *mapped_addr; /* Mapped user memory */

unsigned long iova_start;/* Actual IOVA used */

unsigned long iova_size; /* DMA mapping size */

} iommufd_test_struct_t;

/*

* Find VFIO device path by PCI BDF

* Returns: 0 on success, -1 on failure

*/

static int find_vfio_device_by_bdf(const char *bdf, char *path, size_t path_size)

{

DIR *dir;

struct dirent *entry;

char iommu_group_path[512];

char iommu_group[64];

char sysfs_path[512];

char link_target[512];

ssize_t len;

char *group_name;

/* Build sysfs path for the device's iommu_group */

snprintf(sysfs_path, sizeof(sysfs_path),

"/sys/bus/pci/devices/%s/iommu_group", bdf);

/* Read the iommu_group number */

len = readlink(sysfs_path, iommu_group_path, sizeof(iommu_group_path) - 1);

if (len < 0) {

printf("%s line %d, failed to read iommu_group link: %s\n",

__func__, __LINE__, strerror(errno));

return -1;

}

iommu_group_path[len] = '\0';

/* Extract group number from link target */

group_name = basename(iommu_group_path);

strncpy(iommu_group, group_name, sizeof(iommu_group) - 1);

iommu_group[sizeof(iommu_group) - 1] = '\0'; /* strncpy does not terminate on truncation */

printf("%s line %d, device %s is in IOMMU group %s\n",

__func__, __LINE__, bdf, iommu_group);

/* Look for VFIO device file */

dir = opendir("/dev/vfio/devices");

if (!dir) {

printf("%s line %d, failed to open /dev/vfio/devices: %s\n",

__func__, __LINE__, strerror(errno));

return -1;

}

while ((entry = readdir(dir)) != NULL) {

if (entry->d_name[0] == '.')

continue;

char vfio_dev_link[512];

char vfio_sys_path[512];

snprintf(vfio_dev_link, sizeof(vfio_dev_link),

"/dev/vfio/devices/%s", entry->d_name);

/* Read the symlink to get the actual device */

snprintf(vfio_sys_path, sizeof(vfio_sys_path),

"/sys/class/vfio-dev/%s/device", entry->d_name);

len = readlink(vfio_sys_path, link_target, sizeof(link_target) - 1);

if (len < 0)

continue;

link_target[len] = '\0';

/* Check if this VFIO device matches our BDF */

if (strstr(link_target, bdf)) {

strncpy(path, vfio_dev_link, path_size - 1);

path[path_size - 1] = '\0';

printf("%s line %d, found VFIO device: %s\n",

__func__, __LINE__, path);

closedir(dir);

return 0;

}

}

/* Fallback: no cdev matched the BDF, so take the first vfio device found (this may not be the requested device) */

rewinddir(dir);

while ((entry = readdir(dir)) != NULL) {

if (entry->d_name[0] == '.')

continue;

if (strncmp(entry->d_name, "vfio", 4) == 0) {

snprintf(path, path_size, "/dev/vfio/devices/%s", entry->d_name);

printf("%s line %d, using VFIO device: %s\n",

__func__, __LINE__, path);

closedir(dir);

return 0;

}

}

closedir(dir);

return -1;

}

/*

* Create an IO Address Space (IOAS)

* IOAS is the equivalent of the legacy VFIO container's DMA mapping space

*/

static int iommufd_create_ioas(int iommufd_fd, __u32 *ioas_id)

{

struct iommu_ioas_alloc alloc = {

.size = sizeof(alloc),

.flags = 0,

};

if (ioctl(iommufd_fd, IOMMU_IOAS_ALLOC, &alloc)) {

printf("%s line %d, failed to allocate IOAS: %s\n",

__func__, __LINE__, strerror(errno));

return -1;

}

*ioas_id = alloc.out_ioas_id;

printf("%s line %d, created IOAS with id %u\n",

__func__, __LINE__, *ioas_id);

return 0;

}

/*

* Get IOVA ranges available in the IOAS

* IMPORTANT: Must be called AFTER device attach to get valid ranges

* Returns: 0 on success, -1 on failure

*/

static int iommufd_get_iova_ranges(int iommufd_fd, __u32 ioas_id,

unsigned long *iova_start, unsigned long *iova_size)

{

struct iommu_ioas_iova_ranges ranges = {

.size = sizeof(ranges),

.ioas_id = ioas_id,

};

struct iommu_iova_range *iova_ranges = NULL;

int ret;

/* First call with num_iovas == 0: the kernel reports the required count and fails with EMSGSIZE when ranges exist */

if (ioctl(iommufd_fd, IOMMU_IOAS_IOVA_RANGES, &ranges) && errno != EMSGSIZE) {

printf("%s line %d, failed to get IOVA ranges: %s\n",

__func__, __LINE__, strerror(errno));

return -1;

}

printf("%s line %d, IOVA ranges: num_iovas=%u, alignment=0x%llx\n",

__func__, __LINE__, ranges.num_iovas,

(unsigned long long)ranges.out_iova_alignment);

if (ranges.num_iovas == 0) {

printf("%s line %d, no IOVA ranges available\n", __func__, __LINE__);

return 0;

}

/* Allocate buffer for ranges */

iova_ranges = calloc(ranges.num_iovas, sizeof(*iova_ranges));

if (!iova_ranges) {

printf("%s line %d, failed to allocate IOVA ranges buffer\n",

__func__, __LINE__);

return -1;

}

/* Second call to get actual ranges */

ranges.allowed_iovas = (uintptr_t)iova_ranges;

ret = ioctl(iommufd_fd, IOMMU_IOAS_IOVA_RANGES, &ranges);

if (ret) {

printf("%s line %d, failed to get IOVA ranges data: %s\n",

__func__, __LINE__, strerror(errno));

free(iova_ranges);

return -1;

}

/* Print ranges and return first valid range */

for (__u32 i = 0; i < ranges.num_iovas; i++) {

printf(" IOVA range %u: start=0x%llx, last=0x%llx (size=%llu MB)\n",

i, (unsigned long long)iova_ranges[i].start,

(unsigned long long)iova_ranges[i].last,

(unsigned long long)(iova_ranges[i].last - iova_ranges[i].start + 1) / (1024 * 1024));

/* Use first range that can hold our mapping */

if (iova_start && iova_size &&

(iova_ranges[i].last - iova_ranges[i].start + 1) >= IOVA_DMA_MAPSZ) {

*iova_start = iova_ranges[i].start;

*iova_size = iova_ranges[i].last - iova_ranges[i].start + 1;

printf(" Using IOVA range %u for DMA mapping\n", i);

break;

}

}

free(iova_ranges);

return 0;

}

/*

* Map DMA memory in IOAS

* This is equivalent to VFIO_IOMMU_MAP_DMA in legacy VFIO

*/

static int iommufd_dma_map(int iommufd_fd, __u32 ioas_id,

unsigned long iova, unsigned long vaddr,

size_t size)

{

struct iommu_ioas_map map = {

.size = sizeof(map),

.flags = IOMMU_IOAS_MAP_READABLE | IOMMU_IOAS_MAP_WRITEABLE |

IOMMU_IOAS_MAP_FIXED_IOVA,

.ioas_id = ioas_id,

.iova = iova,

.user_va = vaddr,

.length = size,

};

if (ioctl(iommufd_fd, IOMMU_IOAS_MAP, &map)) {

printf("%s line %d, failed to map DMA: %s\n",

__func__, __LINE__, strerror(errno));

return -1;

}

printf("%s line %d, DMA map success: iova=0x%llx, vaddr=0x%lx, size=%zu\n",

__func__, __LINE__, (unsigned long long)map.iova, vaddr, size);

return 0;

}

/*

* Unmap DMA memory from IOAS

*/

static int iommufd_dma_unmap(int iommufd_fd, __u32 ioas_id,

unsigned long iova, size_t size)

{

struct iommu_ioas_unmap unmap = {

.size = sizeof(unmap),

.ioas_id = ioas_id,

.iova = iova,

.length = size,

};

if (ioctl(iommufd_fd, IOMMU_IOAS_UNMAP, &unmap)) {

printf("%s line %d, failed to unmap DMA: %s\n",

__func__, __LINE__, strerror(errno));

return -1;

}

printf("%s line %d, DMA unmap success: iova=0x%llx\n",

__func__, __LINE__, (unsigned long long)iova);

return 0;

}

/*

* Open and initialize IOMMUFD test object

*

* Flow (matches QEMU's iommufd_cdev_attach()):

* 1. Open /dev/iommu

* 2. Create IOAS (IOMMU_IOAS_ALLOC)

* 3. Open VFIO device cdev

* 4. Bind device to IOMMUFD (VFIO_DEVICE_BIND_IOMMUFD)

* 5. Attach device to IOAS (VFIO_DEVICE_ATTACH_IOMMUFD_PT)

* 6. Query IOVA ranges (after attach!)

* 7. Map DMA memory (IOMMU_IOAS_MAP)

*/

static int iommufd_test_open(iommufd_test_struct_t *obj)

{

void *maddr = NULL;

struct vfio_device_info device_info = { .argsz = sizeof(device_info) };

char vfio_device_path[512];

/* Step 1: Open /dev/iommu */

obj->iommufd_fd = open("/dev/iommu", O_RDWR);

if (obj->iommufd_fd < 0) {

printf("%s line %d, failed to open /dev/iommu: %s\n",

__func__, __LINE__, strerror(errno));

return -1;

}

printf("%s line %d, opened /dev/iommu, fd=%d\n",

__func__, __LINE__, obj->iommufd_fd);

/* Step 2: Create IO Address Space (IOAS) */

if (iommufd_create_ioas(obj->iommufd_fd, &obj->ioas_id)) {

close(obj->iommufd_fd);

return -1;

}

/* Step 3: Find and open VFIO device cdev */

if (obj->vfio_device) {

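strncpy(vfio_device_path, obj->vfio_device, sizeof(vfio_device_path) - 1);

vfio_device_path[sizeof(vfio_device_path) - 1] = '\0'; /* strncpy does not terminate on truncation */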

} else if (obj->dev_bdf) {

if (find_vfio_device_by_bdf(obj->dev_bdf, vfio_device_path,

sizeof(vfio_device_path))) {

printf("%s line %d, failed to find VFIO device for %s\n",

__func__, __LINE__, obj->dev_bdf);

close(obj->iommufd_fd);

return -1;

}

} else {

printf("%s line %d, no device specified\n", __func__, __LINE__);

close(obj->iommufd_fd);

return -1;

}

obj->device_fd = open(vfio_device_path, O_RDWR);

if (obj->device_fd < 0) {

printf("%s line %d, failed to open %s: %s\n",

__func__, __LINE__, vfio_device_path, strerror(errno));

close(obj->iommufd_fd);

return -1;

}

printf("%s line %d, opened VFIO device %s, fd=%d\n",

__func__, __LINE__, vfio_device_path, obj->device_fd);

/*

* Step 4: Bind device to IOMMUFD

* This is a VFIO ioctl that binds the device to iommufd context

* Reference: QEMU iommufd_cdev_connect_and_bind()

*/

{

struct vfio_device_bind_iommufd bind = {

.argsz = sizeof(bind),

.flags = 0,

.iommufd = obj->iommufd_fd, /* Pass iommufd FD */

};

if (ioctl(obj->device_fd, VFIO_DEVICE_BIND_IOMMUFD, &bind)) {

printf("%s line %d, failed to bind device to IOMMUFD: %s\n",

__func__, __LINE__, strerror(errno));

printf("Note: This requires kernel 6.6+ with IOMMUFD support\n");

printf(" and the device must be bound to vfio-pci driver\n");

close(obj->device_fd);

close(obj->iommufd_fd);

return -1;

}

obj->dev_id = bind.out_devid;

printf("%s line %d, device bound to IOMMUFD, dev_id=%u\n",

__func__, __LINE__, obj->dev_id);

}

/* Get device info */

if (ioctl(obj->device_fd, VFIO_DEVICE_GET_INFO, &device_info)) {

printf("%s line %d, failed to get device info: %s\n",

__func__, __LINE__, strerror(errno));

close(obj->device_fd);

close(obj->iommufd_fd);

return -1;

}

printf("%s line %d, device info: flags=0x%x, num_regions=%u, num_irqs=%u\n",

__func__, __LINE__, device_info.flags,

device_info.num_regions, device_info.num_irqs);

/* Reset device */

ioctl(obj->device_fd, VFIO_DEVICE_RESET);

/*

* Step 5: Attach device to IOAS

* This associates the device with the IO address space

* Reference: QEMU iommufd_cdev_attach_ioas_hwpt()

*

* Note: Passing IOAS ID causes kernel to create a HWPT automatically

* and returns the HWPT ID in pt_id field.

*/

{

struct vfio_device_attach_iommufd_pt attach = {

.argsz = sizeof(attach),

.flags = 0,

.pt_id = obj->ioas_id, /* Input: IOAS ID */

// .pasid = 0, /* Not using PASID */

};

if (ioctl(obj->device_fd, VFIO_DEVICE_ATTACH_IOMMUFD_PT, &attach)) {

printf("%s line %d, failed to attach device to IOAS: %s\n",

__func__, __LINE__, strerror(errno));

close(obj->device_fd);

close(obj->iommufd_fd);

return -1;

}

/* Attach returns the HWPT ID that was created/used */

obj->hwpt_id = attach.pt_id; /* Output: HWPT ID */

printf("%s line %d, device attached to IOAS, hwpt_id=%u\n",

__func__, __LINE__, obj->hwpt_id);

}

/*

* Step 6: Query IOVA ranges AFTER device attach

* IOVA ranges are determined by the IOMMU hardware capabilities

* Reference: QEMU iommufd_cdev_get_info_iova_range()

*/

{

unsigned long valid_iova_start = 0;

unsigned long valid_iova_size = 0;

if (iommufd_get_iova_ranges(obj->iommufd_fd, obj->ioas_id,

&valid_iova_start, &valid_iova_size) == 0) {

if (valid_iova_size > 0) {

printf("%s line %d, valid IOVA range: 0x%lx - 0x%lx\n",

__func__, __LINE__, valid_iova_start,

valid_iova_start + valid_iova_size - 1);

/* Optionally use the valid range instead of fixed IOVA */

/* obj->iova_start = valid_iova_start; */

}

}

}

/* Step 7: Map DMA memory */

maddr = mmap((void *)(VADDR + obj->offset_base), IOVA_DMA_MAPSZ,

PROT_READ | PROT_WRITE,

MAP_SHARED | MAP_ANONYMOUS | MAP_FIXED, -1, 0);

if (maddr == MAP_FAILED) {

printf("%s line %d, failed to map buffer: %s\n",

__func__, __LINE__, strerror(errno));

close(obj->device_fd);

close(obj->iommufd_fd);

return -1;

}

if ((unsigned long)maddr != (VADDR + obj->offset_base)) {

printf("%s line %d, address mismatch: maddr=%p, expected=0x%lx\n",

__func__, __LINE__, maddr, VADDR + obj->offset_base);

munmap(maddr, IOVA_DMA_MAPSZ);

close(obj->device_fd);

close(obj->iommufd_fd);

return -1;

}

obj->mapped_addr = maddr;

printf("%s line %d, mmap success: vaddr=%p\n", __func__, __LINE__, maddr);

/* Fill buffer with test pattern */

memset(maddr, 0x5a, IOVA_DMA_MAPSZ);

/* Store IOVA for cleanup */

obj->iova_start = IOVA_START + obj->offset_base;

obj->iova_size = IOVA_DMA_MAPSZ;

/* Map DMA in IOAS */

if (iommufd_dma_map(obj->iommufd_fd, obj->ioas_id,

obj->iova_start, (unsigned long)maddr, obj->iova_size)) {

munmap(maddr, IOVA_DMA_MAPSZ);

close(obj->device_fd);

close(obj->iommufd_fd);

return -1;

}

printf("%s line %d, IOMMUFD DMA setup complete!\n", __func__, __LINE__);

printf(" IOVA: 0x%lx -> VADDR: %p (size: %lu bytes)\n",

obj->iova_start, maddr, obj->iova_size);

return 0;

}

/*

* Close and cleanup IOMMUFD test object

*

* Cleanup order (reverse of setup):

* 1. Unmap DMA

* 2. Detach device from IOAS

* 3. Destroy IOAS

* 4. Close device fd (unbind happens automatically)

* 5. Close iommufd fd

*/

static int iommufd_test_close(iommufd_test_struct_t *obj)

{

/* Step 1: Unmap DMA */

if (obj->ioas_id && obj->iommufd_fd >= 0 && obj->iova_size > 0) {

iommufd_dma_unmap(obj->iommufd_fd, obj->ioas_id,

obj->iova_start, obj->iova_size);

}

/* Unmap user memory */

if (obj->mapped_addr) {

munmap(obj->mapped_addr, obj->iova_size);

obj->mapped_addr = NULL;

}

/* Step 2: Detach device from IOAS */

if (obj->device_fd >= 0) {

struct vfio_device_detach_iommufd_pt detach = {

.argsz = sizeof(detach),

.flags = 0,

// .pasid = 0,

};

if (ioctl(obj->device_fd, VFIO_DEVICE_DETACH_IOMMUFD_PT, &detach) == 0) {

printf("%s line %d, detached device from IOMMUFD\n",

__func__, __LINE__);

}

}

/* Step 3: Destroy IOAS */

if (obj->ioas_id && obj->iommufd_fd >= 0) {

struct iommu_destroy destroy = {

.size = sizeof(destroy),

.id = obj->ioas_id,

};

if (ioctl(obj->iommufd_fd, IOMMU_DESTROY, &destroy) == 0) {

printf("%s line %d, destroyed IOAS %u\n",

__func__, __LINE__, obj->ioas_id);

}

obj->ioas_id = 0;

}

/* Step 4 & 5: Close file descriptors */

/* Note: Unbind happens automatically when device FD is closed */

if (obj->device_fd >= 0) {

close(obj->device_fd);

printf("%s line %d, closed device fd\n", __func__, __LINE__);

obj->device_fd = -1;

}

if (obj->iommufd_fd >= 0) {

close(obj->iommufd_fd);

printf("%s line %d, closed iommufd fd\n", __func__, __LINE__);

obj->iommufd_fd = -1;

}

return 0;

}

/*

* Print usage information

*/

static void print_usage(const char *prog)

{

printf("Usage: %s [options]\n", prog);

printf("\nOptions:\n");

printf(" -d <bdf> PCI device BDF (e.g., 0000:02:00.0)\n");

printf(" -v <path> VFIO device path (e.g., /dev/vfio/devices/vfio0)\n");

printf(" -h Show this help\n");

printf("\nExample:\n");

printf(" %s -d 0000:02:00.0\n", prog);

printf(" %s -v /dev/vfio/devices/vfio0\n", prog);

printf("\nBefore running:\n");

printf(" 1. Enable IOMMU in BIOS\n");

printf(" 2. Bind device to vfio-pci:\n");

printf(" echo vfio-pci > /sys/bus/pci/devices/0000:02:00.0/driver_override\n");

printf(" echo -n 0000:02:00.0 > /sys/bus/pci/drivers/vfio-pci/bind\n");

printf(" 3. Verify device appears in /dev/vfio/devices/\n");

}

int main(int argc, char *argv[])

{

iommufd_test_struct_t obj;

int opt;

char *dev_bdf = NULL;

char *vfio_device = NULL;

printf("IOMMUFD DMA Mapping Test\n");

printf("========================\n\n");

/* Parse command line options */

while ((opt = getopt(argc, argv, "d:v:h")) != -1) {

switch (opt) {

case 'd':

dev_bdf = optarg;

break;

case 'v':

vfio_device = optarg;

break;

case 'h':

print_usage(argv[0]);

return 0;

default:

print_usage(argv[0]);

return 1;

}

}

/* Initialize test object */

memset(&obj, 0, sizeof(obj));

obj.dev_bdf = dev_bdf;

obj.vfio_device = vfio_device;

obj.offset_base = 0;

obj.iommufd_fd = -1;

obj.device_fd = -1;

/* Check for required kernel support */

if (access("/dev/iommu", F_OK) != 0) {

printf("Error: /dev/iommu not found.\n");

printf("Please ensure:\n");

printf(" 1. Kernel version 6.6 or later with IOMMUFD support\n");

printf(" 2. IOMMU enabled in BIOS and kernel (intel_iommu=on or amd_iommu=on)\n");

return 1;

}

/* Check for VFIO device directory */

if (access("/dev/vfio/devices", F_OK) != 0) {

printf("Warning: /dev/vfio/devices not found.\n");

printf("This suggests VFIO devices may not be available.\n");

printf("Try: modprobe vfio-pci\n");

}

/* Run the test */

printf("\n--- Starting IOMMUFD Test ---\n\n");

if (iommufd_test_open(&obj) != 0) {

printf("\n--- Test Failed ---\n");

return 1;

}

printf("\n--- Test Running (Ctrl+C to stop) ---\n");

printf("DMA mapping active, device can now access IOVA 0x%lx\n",

obj.iova_start);

#if 0

/* Keep running to allow device access */

while (1) {

sleep(10);

}

#endif

/* Cleanup (runs immediately because the wait loop above is compiled out with #if 0) */

iommufd_test_close(&obj);

return 0;

}

Run the following command:

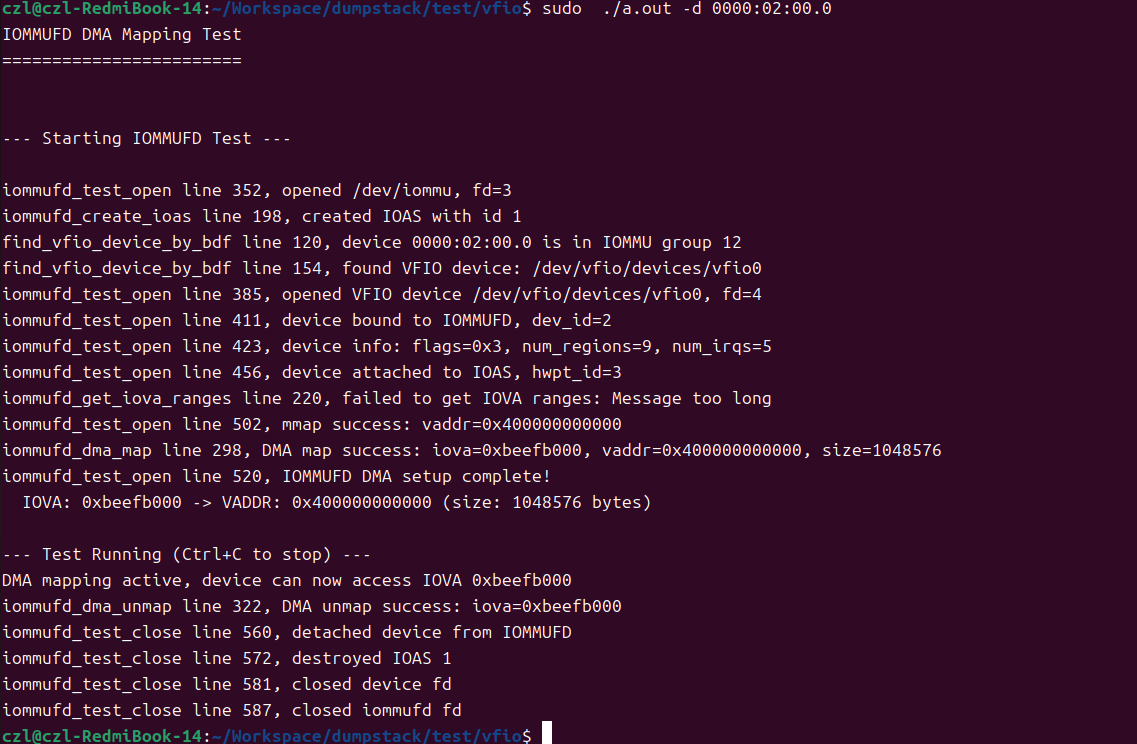

# ./a.out -d 0000:02:00.0

The test runs successfully:

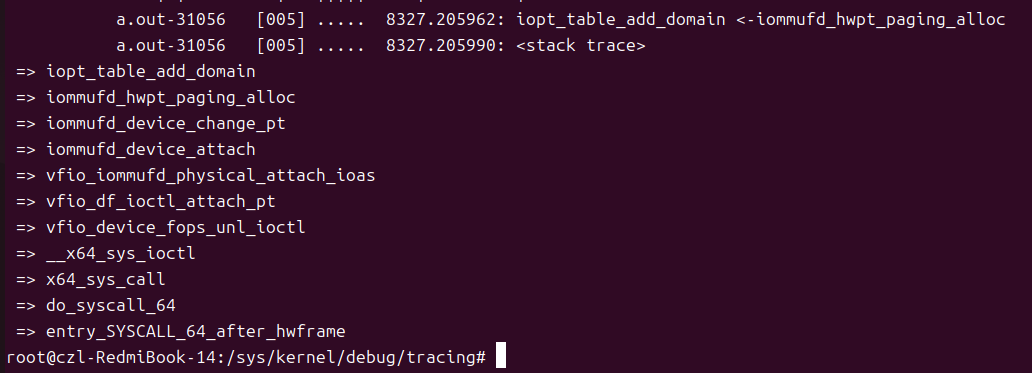

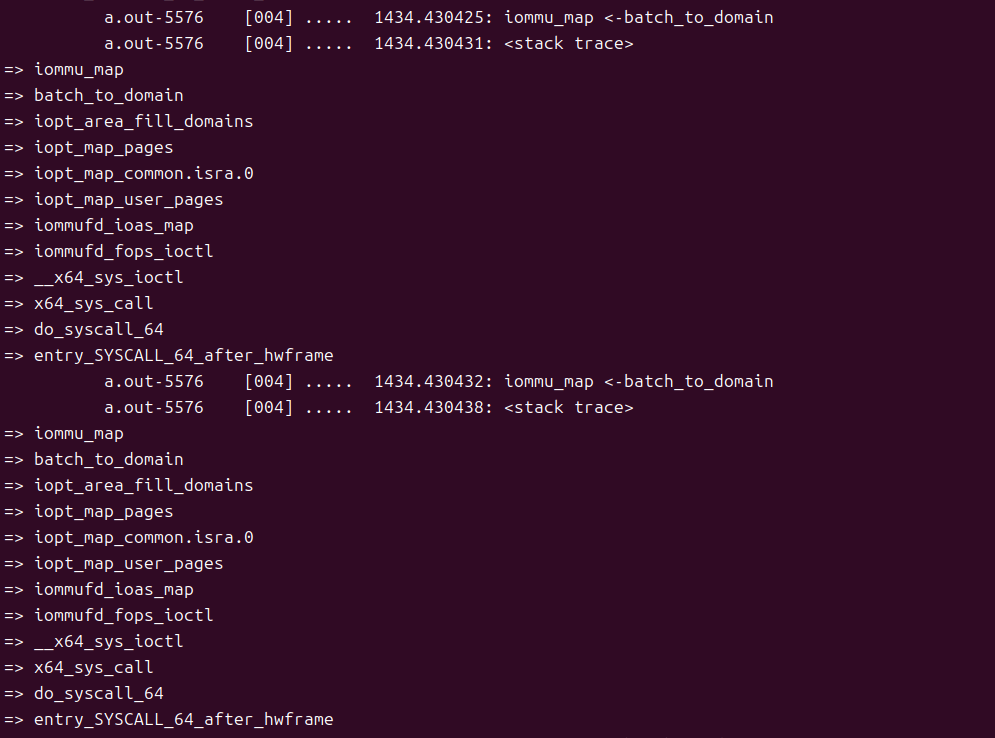
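The test maps at the fixed IOVA_START and leaves the use of the queried range commented out. As a hedged sketch of the alternative, the helper below picks an aligned IOVA from a list of allowed ranges such as the one IOMMU_IOAS_IOVA_RANGES fills in. `struct iova_range` and `pick_iova` are hypothetical names. The struct only mirrors the inclusive `start`/`last` fields of `struct iommu_iova_range`, so the sketch does not need the kernel header.

```c
#include <stddef.h>
#include <stdint.h>

/* Inclusive range, same meaning as start/last in struct iommu_iova_range. */
struct iova_range {
    uint64_t start;
    uint64_t last;
};

/*
 * Find the first range that can hold `length` bytes at an address aligned
 * to `align` (a power of two). Returns 0 and stores the address in *iova,
 * or -1 if no range fits.
 */
int pick_iova(const struct iova_range *r, size_t n, uint64_t length,
              uint64_t align, uint64_t *iova)
{
    if (length == 0 || align == 0 || (align & (align - 1)))
        return -1;

    for (size_t i = 0; i < n; i++) {
        /* Round the range start up to the requested alignment. */
        uint64_t start = (r[i].start + align - 1) & ~(align - 1);

        if (start < r[i].start)  /* rounding wrapped past 2^64 */
            continue;
        if (start > r[i].last)   /* nothing left after alignment */
            continue;
        if (r[i].last - start >= length - 1) {
            *iova = start;
            return 0;
        }
    }
    return -1;
}
```

With such a helper, the value stored in `obj->iova_start` could come from the ranges reported after attach instead of a hard-coded constant, using `out_iova_alignment` as the `align` argument.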

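The figure below covers the first attach of the device to the IOAS (adding the IOMMU domain to the IOAS). The kernel trace also shows the DMA mapping requested from user space: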

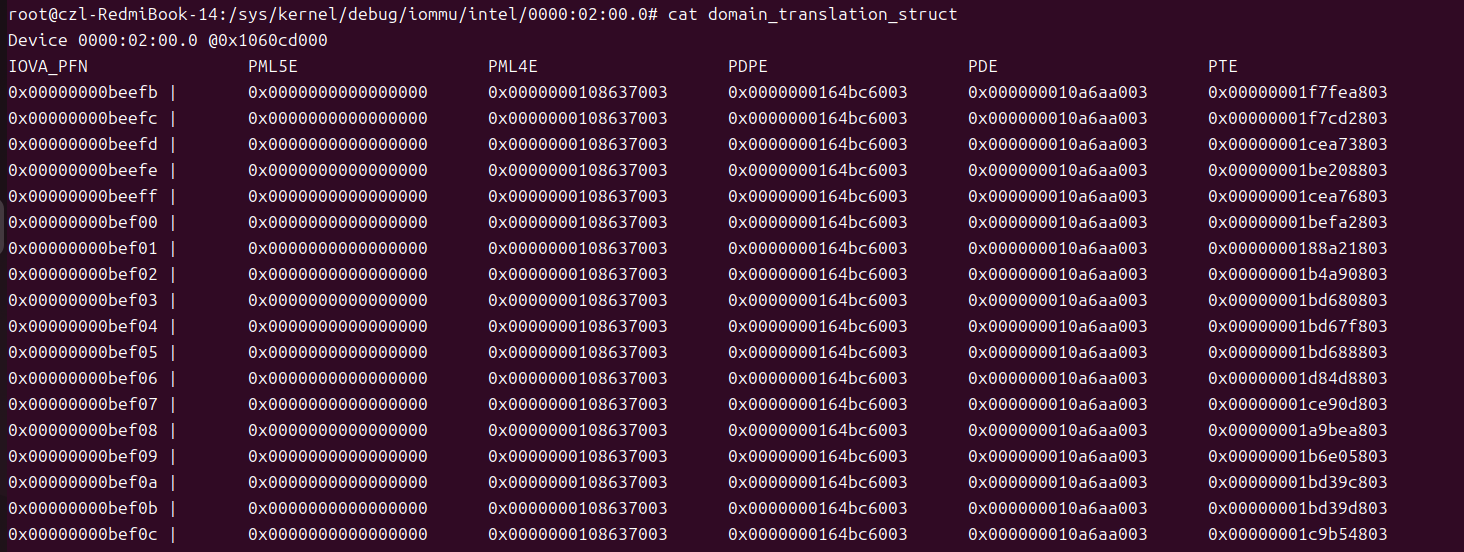

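Whether the user-space mapping succeeded can be checked by dumping the IOMMU page table. The 1 MB that the test mapped starting at page frame 0xbeefb (IOVA 0xbeefb000) is indeed present in the page table:

References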
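When reading such a dump, it helps to know which entry at each level the test IOVA should land in. The sketch below computes those indices, assuming 4 KiB pages and 9 index bits per level as in the x86 4-level layout mentioned earlier. `pt_index` and `print_walk` are hypothetical helper names, and a different page table format would need different constants.

```c
#include <stdint.h>
#include <stdio.h>

#define PAGE_SHIFT 12  /* 4 KiB pages (assumption) */
#define LEVEL_BITS 9   /* 512 entries per table (assumption) */

/* Index into the table at `level`, where level 1 is the leaf page table. */
unsigned pt_index(uint64_t iova, int level)
{
    unsigned shift = PAGE_SHIFT + LEVEL_BITS * (unsigned)(level - 1);

    return (unsigned)((iova >> shift) & ((1u << LEVEL_BITS) - 1));
}

/* Print the index used at each of the four levels for one IOVA. */
void print_walk(uint64_t iova)
{
    for (int level = 4; level >= 1; level--)
        printf("level %d index %u\n", level, pt_index(iova, level));
}
```

For IOVA 0xbeefb000 this gives indices 0, 2, 503 and 251 from level 4 down to level 1. The last page of the 1 MB mapping (IOVA 0xbeffa000) has leaf index 506 under the same level-2 entry, so under these assumptions all 256 pages sit in a single leaf table.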

https://static.sched.com/hosted_files/ossna2022/5d/UIO_VFIO-OSS-NA22.pdf