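Using WorkBuddy's expert mode, a technical documentation engineer agent called ssh-mcp-server and deployed an ELK logging platform from a single prompt.

The deployment succeeded and the platform is accessible.

The generated document follows.

Version: 2.0.0 | Date: 2026-04-08 | Status: ✅ Deployed and verified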

Architecture overview

Data flow:

etcd log (/var/log/etcd/etcd.log) ──► Filebeat ──► Kafka (topic: etcd-logs) ──► Logstash ──► ES index: etcd-logs-YYYY.MM.dd

/var/log/messages ──► Filebeat ──► Kafka (topic: syslog-logs) ──► Logstash ──► ES index: syslog-logs-YYYY.MM.dd

Environment

| Node | IP | Role | Components |
|---|---|---|---|
| Log platform | 192.168.44.135 | Server | Elasticsearch + Kibana + Kafka + Zookeeper + Logstash |
| etcd node 1 | 192.168.44.132 | Collector | Filebeat (yum) |
| etcd node 2 | 192.168.44.133 | Collector | Filebeat (yum) |
| etcd node 3 | 192.168.44.134 | Collector | Filebeat (yum) |

Software versions:

| Component | Version |
|---|---|
| OS | Rocky Linux 9.7 |
| Docker | 29.3.1 |
| Docker Compose | v5.1.1 |
| Elasticsearch | 8.17.4 |
| Kibana | 8.17.4 |
| Logstash | 8.17.4 |
| Filebeat | 8.19.x (latest from yum) |
| Kafka | confluentinc/cp-kafka:7.6.1 |
| Zookeeper | 3.8 |

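Credentials:

| Service | Username | Password | URL |
|---|---|---|---|
| Elasticsearch | elastic | Securitydev2021# | http://192.168.44.135:9200 |
| Kibana | kibana_system | Securitydev2021# | http://192.168.44.135:5601 |
| Kibana administrator | elastic | Securitydev2021# | http://192.168.44.135:5601 |

kibana_system is the account Kibana itself uses to connect to Elasticsearch (see kibana.yml in section 1.3). Log in to the Kibana web UI as elastic.

⚠️ Security note: ES has xpack.security (user authentication) enabled but not TLS/SSL, so all traffic is plain HTTP. For production, enable TLS as described in the appendix.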
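Every command in this document embeds the password literally. As a minimal sketch, assuming a hypothetical environment variable `ES_PASSWORD` that is not part of the original deployment, the helper below builds the `curl -u` argument from the environment instead. The name `es_auth` is illustrative only.

```shell
#!/usr/bin/env bash
# Hypothetical helper: build the curl -u argument from an environment
# variable instead of typing the password into every command.
es_auth() {
    # Fail loudly if the (assumed) ES_PASSWORD variable is missing or empty.
    if [ -z "${ES_PASSWORD:-}" ]; then
        echo "ES_PASSWORD is not set" >&2
        return 1
    fi
    printf 'elastic:%s' "$ES_PASSWORD"
}

# Usage (on 192.168.44.135, after setting ES_PASSWORD in the shell):
#   curl -sf http://localhost:9200/_cluster/health -u "$(es_auth)"
```

This only keeps the password out of scripts and shell history; it does nothing about the plain-HTTP transport mentioned in the security note.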

Part 1: Deploying the 135 server (Docker Compose)

1.1 System pre-configuration

# Set vm.max_map_count (required by ES)
grep -q 'vm.max_map_count' /etc/sysctl.conf || echo 'vm.max_map_count=262144' >> /etc/sysctl.conf
sysctl -w vm.max_map_count=262144

# Verify
sysctl vm.max_map_count
# Output: vm.max_map_count = 262144

1.2 Create the directory structure

mkdir -p /data/elk/{elasticsearch/{data,logs},kibana/{conf,logs},logstash/{conf,logs,data},zookeeper/{data,logs},kafka/data}

# Set the required permissions
chmod 777 /data/elk/elasticsearch/data
chmod 777 /data/elk/elasticsearch/logs
chmod 777 /data/elk/logstash/data
chmod 777 /data/elk/logstash/logs
chmod 777 /data/elk/kibana/logs
chmod 777 /data/elk/zookeeper/data
chmod 777 /data/elk/zookeeper/logs
chmod 777 /data/elk/kafka/data

The resulting directory layout:

/data/elk/
├── docker-compose.yml      # Main deployment file
├── kibana.yml              # Kibana configuration
├── logstash.yml            # Logstash main configuration
├── logstash.conf           # Logstash pipeline configuration
├── elasticsearch/
│   ├── data/               # ES data directory
│   └── logs/               # ES log directory
├── kibana/
│   └── logs/               # Kibana log directory
├── logstash/
│   ├── data/               # Logstash data directory
│   └── logs/               # Logstash log directory
├── zookeeper/
│   ├── data/               # ZK data directory
│   └── logs/               # ZK log directory
└── kafka/
    └── data/               # Kafka data directory

1.3 Kibana configuration file

cat > /data/elk/kibana.yml << 'EOF'
server.host: "0.0.0.0"
server.port: 5601
server.name: "kibana"
elasticsearch.hosts: ["http://elasticsearch:9200"]
elasticsearch.username: "kibana_system"
elasticsearch.password: "Securitydev2021#"
monitoring.ui.container.elasticsearch.enabled: true
i18n.locale: "zh-CN"
logging:
  appenders:
    file:
      type: file
      fileName: /usr/share/kibana/logs/kibana.log
      layout:
        type: json
  root:
    appenders: [default, file]
EOF

1.4 Logstash main configuration

cat > /data/elk/logstash.yml << 'EOF'

node.name: node-1

http.host: "0.0.0.0"

http.port: 9600

EOF

1.5 Logstash pipeline configuration

cat > /data/elk/logstash.conf << 'EOF'
input {
  kafka {
    bootstrap_servers => "kafka:9092"
    topics => ["etcd-logs", "syslog-logs"]
    group_id => "logstash-elk"
    codec => "json"
    consumer_threads => 2
    decorate_events => true
  }
}

filter {
  # Copy the Kafka topic name into a field used for routing
  if [@metadata][kafka][topic] {
    mutate {
      add_field => { "kafka_topic" => "%{[@metadata][kafka][topic]}" }
    }
  }

  # etcd logs (JSON format)
  if [kafka_topic] == "etcd-logs" {
    if [message] {
      json {
        source => "message"
        target => "etcd"
        skip_on_invalid_json => true
      }
    }
    mutate {
      add_field => { "log_source" => "etcd" }
    }
  }

  # syslog logs
  if [kafka_topic] == "syslog-logs" {
    grok {
      match => { "message" => "%{SYSLOGTIMESTAMP:syslog_timestamp} %{SYSLOGHOST:syslog_host} %{DATA:syslog_program}(?:\[%{POSINT:syslog_pid}\])?: %{GREEDYDATA:syslog_message}" }
      overwrite => ["message"]
    }
    mutate {
      add_field => { "log_source" => "syslog" }
    }
  }

  # Common timestamp handling (only applies when syslog_timestamp exists)
  date {
    match => ["syslog_timestamp", "MMM d HH:mm:ss", "MMM dd HH:mm:ss"]
    target => "@timestamp"
  }

  mutate {
    remove_field => ["@version"]
  }
}

output {
  if [kafka_topic] == "etcd-logs" {
    elasticsearch {
      hosts => ["http://elasticsearch:9200"]
      user => "elastic"
      password => "Securitydev2021#"
      index => "etcd-logs-%{+YYYY.MM.dd}"
    }
  } else if [kafka_topic] == "syslog-logs" {
    elasticsearch {
      hosts => ["http://elasticsearch:9200"]
      user => "elastic"
      password => "Securitydev2021#"
      index => "syslog-logs-%{+YYYY.MM.dd}"
    }
  } else {
    elasticsearch {
      hosts => ["http://elasticsearch:9200"]
      user => "elastic"
      password => "Securitydev2021#"
      index => "other-logs-%{+YYYY.MM.dd}"
    }
  }
}
EOF

1.6 docker-compose.yml

cat > /data/elk/docker-compose.yml << 'EOF'
networks:
  elk-net:
    driver: bridge
    ipam:
      config:
        - subnet: 172.25.0.0/16

services:
  # ============================================================
  # Elasticsearch
  # ============================================================
  elasticsearch:
    image: elasticsearch:8.17.4
    container_name: elasticsearch
    hostname: elasticsearch
    restart: unless-stopped
    environment:
      - ES_JAVA_OPTS=-Xms1g -Xmx1g
      - discovery.type=single-node
      - xpack.security.enabled=true
      - xpack.security.http.ssl.enabled=false
      - xpack.security.transport.ssl.enabled=false
      - ELASTIC_PASSWORD=Securitydev2021#
    ports:
      - "9200:9200"
      - "9300:9300"
    volumes:
      - /data/elk/elasticsearch/data:/usr/share/elasticsearch/data
      - /data/elk/elasticsearch/logs:/usr/share/elasticsearch/logs
      - /etc/localtime:/etc/localtime:ro
    networks:
      - elk-net
    healthcheck:
      test: ["CMD-SHELL", "curl -sf http://localhost:9200/_cluster/health -u elastic:Securitydev2021# | grep -q 'status'"]
      interval: 30s
      timeout: 10s
      retries: 10
      start_period: 90s
    ulimits:
      memlock:
        soft: -1
        hard: -1
      nofile:
        soft: 65536
        hard: 65536

  # ============================================================
  # Kibana
  # ============================================================
  kibana:
    image: kibana:8.17.4
    container_name: kibana
    hostname: kibana
    restart: unless-stopped
    ports:
      - "5601:5601"
    volumes:
      - /data/elk/kibana.yml:/usr/share/kibana/config/kibana.yml:ro
      - /data/elk/kibana/logs:/usr/share/kibana/logs
      - /etc/localtime:/etc/localtime:ro
    networks:
      - elk-net
    depends_on:
      elasticsearch:
        condition: service_healthy
    healthcheck:
      test: ["CMD-SHELL", "curl -sf http://localhost:5601/api/status | grep -q 'available' || exit 1"]
      interval: 30s
      timeout: 10s
      retries: 10
      start_period: 120s

  # ============================================================
  # Zookeeper
  # ============================================================
  zookeeper:
    image: zookeeper:3.8
    container_name: zookeeper
    hostname: zookeeper
    restart: unless-stopped
    ports:
      - "2181:2181"
    environment:
      - ZOO_MY_ID=1
      - ZOO_SERVERS=server.1=zookeeper:2888:3888;2181
    volumes:
      - /data/elk/zookeeper/data:/data
      - /data/elk/zookeeper/logs:/datalog
      - /etc/localtime:/etc/localtime:ro
    networks:
      - elk-net
    healthcheck:
      test: ["CMD", "zkServer.sh", "status"]
      interval: 20s
      timeout: 10s
      retries: 5

  # ============================================================
  # Kafka
  # ============================================================
  kafka:
    image: confluentinc/cp-kafka:7.6.1
    container_name: kafka
    hostname: kafka
    restart: unless-stopped
    ports:
      - "9092:9092"
    environment:
      - KAFKA_ZOOKEEPER_CONNECT=zookeeper:2181
      - KAFKA_ADVERTISED_LISTENERS=PLAINTEXT://192.168.44.135:9092
      - KAFKA_LISTENERS=PLAINTEXT://0.0.0.0:9092
      - KAFKA_OFFSETS_TOPIC_REPLICATION_FACTOR=1
      - KAFKA_AUTO_CREATE_TOPICS_ENABLE=true
      - KAFKA_LOG_RETENTION_HOURS=72
      - KAFKA_LOG_SEGMENT_BYTES=104857600
      - KAFKA_LOG_RETENTION_CHECK_INTERVAL_MS=300000
      - KAFKA_NUM_PARTITIONS=3
      - KAFKA_DEFAULT_REPLICATION_FACTOR=1
    volumes:
      - /data/elk/kafka/data:/var/lib/kafka/data
      - /etc/localtime:/etc/localtime:ro
    networks:
      - elk-net
    depends_on:
      zookeeper:
        condition: service_healthy
    healthcheck:
      test: ["CMD", "kafka-topics", "--bootstrap-server", "localhost:9092", "--list"]
      interval: 30s
      timeout: 10s
      retries: 10
      start_period: 60s

  # ============================================================
  # Logstash
  # ============================================================
  logstash:
    image: logstash:8.17.4
    container_name: logstash
    hostname: logstash
    restart: unless-stopped
    ports:
      - "9600:9600"
    volumes:
      - /data/elk/logstash.conf:/usr/share/logstash/pipeline/logstash.conf:ro
      - /data/elk/logstash.yml:/usr/share/logstash/config/logstash.yml:ro
      - /data/elk/logstash/data:/usr/share/logstash/data
      - /data/elk/logstash/logs:/usr/share/logstash/logs
      - /etc/localtime:/etc/localtime:ro
    networks:
      - elk-net
    depends_on:
      elasticsearch:
        condition: service_healthy
      kafka:
        condition: service_healthy
    environment:
      - LS_JAVA_OPTS=-Xms512m -Xmx512m
EOF

1.7 Pull the Docker images

# Option 1: pull directly (needs a stable network connection)
docker pull zookeeper:3.8
docker pull confluentinc/cp-kafka:7.6.1
docker pull elasticsearch:8.17.4
docker pull kibana:8.17.4
docker pull logstash:8.17.4

# Option 2: pull through a systemd service (useful when the SSH session tends to drop)
cat > /etc/systemd/system/docker-pull-elk.service << 'SVC_EOF'
[Unit]
Description=Pull ELK Docker Images
After=docker.service
Requires=docker.service

[Service]
Type=oneshot
RemainAfterExit=no
ExecStart=/bin/bash /tmp/pull_images.sh
TimeoutStartSec=3600
StandardOutput=journal
StandardError=journal

[Install]
WantedBy=multi-user.target
SVC_EOF

cat > /tmp/pull_images.sh << 'PULL_EOF'
#!/bin/bash
for img in zookeeper:3.8 confluentinc/cp-kafka:7.6.1 elasticsearch:8.17.4 kibana:8.17.4 logstash:8.17.4; do
    echo "Pulling $img ..."
    docker pull "$img"
done
echo "ALL DONE"
PULL_EOF

systemctl daemon-reload
systemctl start docker-pull-elk.service

# Follow progress
journalctl -fu docker-pull-elk.service

1.8 Start the ELK stack

cd /data/elk
docker compose up -d

# Check startup status (wait until the containers report healthy)
docker ps --format 'table {{.Names}}\t{{.Status}}\t{{.Ports}}'

Expected output (after about 2 minutes, every container that defines a health check reports healthy; Logstash has none):

NAMES           STATUS                    PORTS
logstash        Up 5 minutes              5044/tcp, 0.0.0.0:9600->9600/tcp
kibana          Up 5 minutes (healthy)    0.0.0.0:5601->5601/tcp
elasticsearch   Up 6 minutes (healthy)    0.0.0.0:9200->9200/tcp, 0.0.0.0:9300->9300/tcp
kafka           Up 14 minutes (healthy)   0.0.0.0:9092->9092/tcp
zookeeper       Up 14 minutes (healthy)   2888/tcp, 3888/tcp, 0.0.0.0:2181->2181/tcp

1.9 Initialize the ES passwords
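The password reset in this section only works once Elasticsearch is answering requests, which can take a minute or two after `docker compose up -d`. A minimal sketch of a retry helper for that wait follows. The function name `retry_until_ok` and its arguments are illustrative and not part of the original deployment.

```shell
#!/usr/bin/env bash
# retry_until_ok ATTEMPTS DELAY_SECONDS COMMAND [ARGS...]
# Runs COMMAND until it succeeds or ATTEMPTS tries have been used up.
retry_until_ok() {
    local attempts=$1 delay=$2 i
    shift 2
    for ((i = 1; i <= attempts; i++)); do
        if "$@"; then
            return 0
        fi
        # Sleep between tries, but not after the last one
        if ((i < attempts)); then
            sleep "$delay"
        fi
    done
    return 1
}

# Usage: wait up to about 2 minutes for Elasticsearch to answer.
#   retry_until_ok 24 5 curl -sf -o /dev/null \
#       -u 'elastic:Securitydev2021#' http://localhost:9200/_cluster/health
```

The helper returns the first success immediately, so on an already healthy cluster it adds no delay.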

# Reset the kibana_system user's password (via the REST API)
curl -X POST 'http://localhost:9200/_security/user/kibana_system/_password' \
  -H 'Content-Type: application/json' \
  -u 'elastic:Securitydev2021#' \
  -d '{"password":"Securitydev2021#"}'

# Verify ES cluster health
curl -sf http://localhost:9200/_cluster/health -u elastic:Securitydev2021# | python3 -m json.tool

1.10 Create the Kafka topics

# Create the etcd log topic (6 partitions)
docker exec kafka kafka-topics \
  --bootstrap-server localhost:9092 \
  --create --topic etcd-logs \
  --partitions 6 \
  --replication-factor 1 \
  --if-not-exists

# Create the syslog topic (6 partitions)
docker exec kafka kafka-topics \
  --bootstrap-server localhost:9092 \
  --create --topic syslog-logs \
  --partitions 6 \
  --replication-factor 1 \
  --if-not-exists

# Verify the topics
docker exec kafka kafka-topics --bootstrap-server localhost:9092 --list
# Output: etcd-logs syslog-logs

Part 2: Deploying Filebeat on the collector nodes (132/133/134)

Run the following steps on each of the three nodes. Only NODE_IP and the matching NODE_TAG differ between nodes.
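The NODE_TAG values used in section 2.3 (node132, node133, node134) match the last octet of each node's IP. Assuming that convention holds, a small helper can derive the tag so that only NODE_IP has to be set by hand. The name `node_tag_from_ip` is illustrative and does not appear in the original scripts.

```shell
#!/usr/bin/env bash
# Derive NODE_TAG from NODE_IP, assuming the tag is "node" + the last octet.
node_tag_from_ip() {
    local ip=$1
    # ${ip##*.} strips everything up to and including the last dot
    printf 'node%s\n' "${ip##*.}"
}

NODE_IP="192.168.44.132"
NODE_TAG="$(node_tag_from_ip "$NODE_IP")"
echo "$NODE_IP -> $NODE_TAG"
```

With the values above this prints `192.168.44.132 -> node132`.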

2.1 Configure the YUM repository

cat > /etc/yum.repos.d/elasticsearch.repo << 'EOF'
[elasticsearch]
name=Elasticsearch repository for 8.x packages
baseurl=https://artifacts.elastic.co/packages/8.x/yum
gpgcheck=1
gpgkey=https://artifacts.elastic.co/GPG-KEY-elasticsearch
enabled=1
autorefresh=1
type=rpm-md
EOF

2.2 Install Filebeat

# Install in the background (avoids session timeouts)
nohup yum install -y filebeat > /tmp/filebeat-install.log 2>&1 &

# Watch installation progress
tail -f /tmp/filebeat-install.log

# Verify the installation
filebeat version

Filebeat can also be downloaded from the official site:

https://www.elastic.co/downloads/past-releases/filebeat-8-19-14

2.3 Configure Filebeat

The configuration below applies to all three nodes. Set NODE_IP and NODE_TAG for the node at hand.

Settings for 192.168.44.132:

NODE_IP="192.168.44.132"
NODE_TAG="node132"

Settings for 192.168.44.133:

NODE_IP="192.168.44.133"
NODE_TAG="node133"

Settings for 192.168.44.134:

NODE_IP="192.168.44.134"
NODE_TAG="node134"

# Set NODE_IP and NODE_TAG as shown above, then run
cat > /etc/filebeat/filebeat.yml << EOF
# ============================================================
# Filebeat configuration - node ${NODE_IP}
# ============================================================
filebeat.inputs:
  # --- etcd log collection ---
  - type: log
    id: etcd-log
    enabled: true
    paths:
      - /var/log/etcd/etcd.log
    fields:
      log_type: etcd
      node_ip: "${NODE_IP}"
      node_role: etcd
    fields_under_root: true
    multiline:
      type: pattern
      pattern: '^{"level"'
      negate: true
      match: after
    ignore_older: 24h
    scan_frequency: 10s
    close_inactive: 5m
    tags: ["etcd", "${NODE_TAG}"]

  # --- /var/log/messages system log collection ---
  - type: log
    id: syslog
    enabled: true
    paths:
      - /var/log/messages
    fields:
      log_type: syslog
      node_ip: "${NODE_IP}"
      node_role: system
    fields_under_root: true
    ignore_older: 24h
    scan_frequency: 10s
    close_inactive: 5m
    tags: ["syslog", "${NODE_TAG}"]

filebeat.config.modules:
  path: \${path.config}/modules.d/*.yml
  reload.enabled: false

setup.template.settings:
  index.number_of_shards: 1

name: "${NODE_IP}"

setup.kibana:
  host: "http://192.168.44.135:5601"

output.kafka:
  hosts: ["192.168.44.135:9092"]
  topic: '%{[log_type]}-logs'
  partition.round_robin:
    reachable_only: false
  required_acks: 1
  compression: gzip
  max_message_bytes: 1000000
  worker: 2
  loadbalance: true

processors:
  - drop_fields:
      fields:
        - agent.ephemeral_id
        - agent.id
        - ecs
      ignore_missing: true
  - add_host_metadata:
      when.not.contains.tags: forwarded

logging.level: info
logging.to_files: true
logging.files:
  path: /var/log/filebeat
  name: filebeat
  keepfiles: 7
  permissions: 0644
EOF

2.4 Verify and start Filebeat

# 验证配置语法

filebeat test config -c /etc/filebeat/filebeat.yml

# 输出: Config OK

# 测试 Kafka 连通性

filebeat test output -c /etc/filebeat/filebeat.yml

# 启动服务

systemctl enable filebeat

systemctl start filebeat

# 查看状态

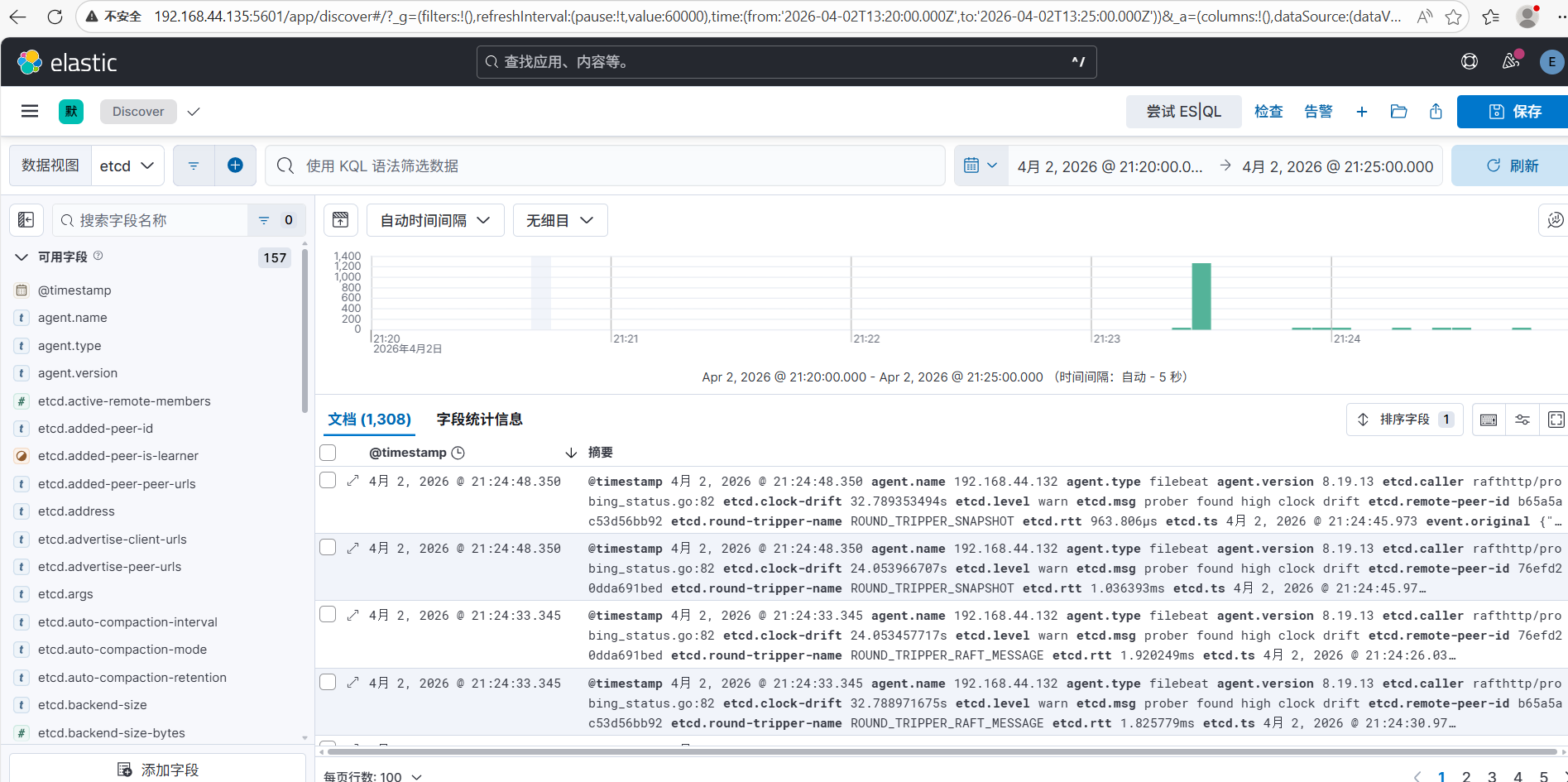

systemctl status filebeat第三部分:验证

3.1 Container health check (run on 135)

docker ps --format 'table {{.Names}}\t{{.Status}}\t{{.Ports}}'

3.2 Elasticsearch verification

# Cluster health
curl -sf http://localhost:9200/_cluster/health -u elastic:Securitydev2021# | python3 -m json.tool

# List indices (they appear once logs have arrived)
curl -sf 'http://localhost:9200/_cat/indices?v&h=index,docs.count,store.size,status' -u elastic:Securitydev2021#

Expected output:

index                  docs.count store.size status
etcd-logs-2026.04.02   1310       3mb        open
syslog-logs-2026.04.02 141        257.3kb    open

3.3 Kafka verification

# List topics
docker exec kafka kafka-topics --bootstrap-server localhost:9092 --list

# Show per-partition end offsets (message counts) for each topic
docker exec kafka kafka-run-class kafka.tools.GetOffsetShell --bootstrap-server localhost:9092 --topic etcd-logs
docker exec kafka kafka-run-class kafka.tools.GetOffsetShell --bootstrap-server localhost:9092 --topic syslog-logs

# List consumer groups
docker exec kafka kafka-consumer-groups --bootstrap-server localhost:9092 --list
# Output: logstash-elk

3.4 Logstash verification

curl -sf 'http://localhost:9600/?pretty' | grep -E 'version|status'
# "status" : "green"

3.5 Filebeat node verification (run on 132/133/134)

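systemctl status filebeat
# Active: active (running)

# View the logs (Filebeat 8.x writes date-stamped files such as filebeat-YYYYMMDD.ndjson)
tail -n 50 /var/log/filebeat/filebeat-*.ndjson

Part 4: Configuring data views in Kibana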

4.1 Access Kibana

Open http://192.168.44.135:5601 in a browser.

- Username: elastic
- Password: Securitydev2021#

4.2 Create data views (index patterns)

- Go to Management → Stack Management → Kibana → Data Views
- Click Create data view
- Create the following data views:

| Name | Index pattern | Time field |
|---|---|---|
| etcd-logs | etcd-logs-* | @timestamp |
| syslog-logs | syslog-logs-* | @timestamp |
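The same two data views can also be created without the UI through Kibana's data views HTTP API (`POST /api/data_views/data_view`). The sketch below is an assumption-laden alternative to the click path, not something the original deployment ran. The function names `data_view_payload` and `create_data_view` are illustrative, and the URL and credentials are the ones listed in this document.

```shell
#!/usr/bin/env bash
# Build the JSON body for Kibana's "create data view" API.
data_view_payload() {
    local name=$1 pattern=$2
    printf '{"data_view":{"name":"%s","title":"%s","timeFieldName":"@timestamp"}}\n' \
        "$name" "$pattern"
}

# POST one data view to Kibana. The kbn-xsrf header is required by Kibana
# for write requests; its value is arbitrary.
create_data_view() {
    curl -sf -X POST 'http://192.168.44.135:5601/api/data_views/data_view' \
        -H 'kbn-xsrf: true' \
        -H 'Content-Type: application/json' \
        -u 'elastic:Securitydev2021#' \
        -d "$(data_view_payload "$1" "$2")"
}

# Usage:
#   create_data_view etcd-logs 'etcd-logs-*'
#   create_data_view syslog-logs 'syslog-logs-*'
```

Quoting the index pattern keeps the shell from expanding the `*` before it reaches the function.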

4.3 View the logs

- Go to Discover
- Select a data view (etcd-logs or syslog-logs)
- Adjust the time range to see live logs

Part 5: Routine operations

5.1 Managing the ELK services

# Run on 192.168.44.135

# Show the status of all containers
docker compose -f /data/elk/docker-compose.yml ps

# Restart a specific service
docker compose -f /data/elk/docker-compose.yml restart elasticsearch
docker compose -f /data/elk/docker-compose.yml restart kibana
docker compose -f /data/elk/docker-compose.yml restart logstash
docker compose -f /data/elk/docker-compose.yml restart kafka

# View container logs
docker logs elasticsearch --tail 50 -f
docker logs kibana --tail 50 -f
docker logs logstash --tail 50 -f
docker logs kafka --tail 50 -f

# Stop the whole ELK stack
docker compose -f /data/elk/docker-compose.yml down

# Start the whole ELK stack
docker compose -f /data/elk/docker-compose.yml up -d

5.2 Managing the Filebeat service

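# Run on each collector node
systemctl status filebeat
systemctl restart filebeat
systemctl stop filebeat
systemctl start filebeat

# Follow the journal in real time
journalctl -fu filebeat

# Follow the Filebeat log files (Filebeat 8.x writes date-stamped .ndjson files)
tail -f /var/log/filebeat/filebeat-*.ndjson

5.3 ES index management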

# List all indices with their sizes
curl -sf 'http://localhost:9200/_cat/indices?v&s=index' -u elastic:Securitydev2021#

# Delete the index for a given date (example)
curl -X DELETE 'http://localhost:9200/etcd-logs-2026.03.01' -u elastic:Securitydev2021#

# Show ES disk usage
curl -sf 'http://localhost:9200/_cat/allocation?v' -u elastic:Securitydev2021#

5.4 Kafka management

# Show topic details
docker exec kafka kafka-topics --bootstrap-server localhost:9092 --describe --topic etcd-logs

# Show consumer group lag
docker exec kafka kafka-consumer-groups --bootstrap-server localhost:9092 --describe --group logstash-elk

# Delete a topic (use with caution)
docker exec kafka kafka-topics --bootstrap-server localhost:9092 --delete --topic etcd-logs

Part 6: Troubleshooting

6.1 ES fails to start

# Check the ES logs
docker logs elasticsearch --tail 100 2>&1 | grep -E 'ERROR|WARN|exception'

# Common cause: vm.max_map_count is too low
sysctl vm.max_map_count

# If it is below 262144, run:
sysctl -w vm.max_map_count=262144
grep -q 'vm.max_map_count' /etc/sysctl.conf || echo 'vm.max_map_count=262144' >> /etc/sysctl.conf

6.2 Logstash cannot consume from Kafka

# Check the Logstash logs
docker logs logstash --tail 50 -f

# Check Kafka consumer lag
docker exec kafka kafka-consumer-groups \
  --bootstrap-server localhost:9092 \
  --describe --group logstash-elk

6.3 Filebeat cannot connect to Kafka

# Check the Kafka port
nc -zv 192.168.44.135 9092

# Check the firewall
firewall-cmd --list-ports

# Restart Filebeat
systemctl restart filebeat
journalctl -fu filebeat --since "5 minutes ago"

6.4 Kibana cannot connect to ES

# Check that the kibana_system user exists and is enabled
curl -sf http://localhost:9200/_security/user/kibana_system -u elastic:Securitydev2021#

# Reset the password
curl -X POST 'http://localhost:9200/_security/user/kibana_system/_password' \
  -H 'Content-Type: application/json' \
  -u 'elastic:Securitydev2021#' \
  -d '{"password":"Securitydev2021#"}'

Port summary

| Service | Port | Protocol | Description |
|---|---|---|---|
| Elasticsearch | 9200 | HTTP | REST API |
| Elasticsearch | 9300 | TCP | Node-to-node communication |
| Kibana | 5601 | HTTP | Web UI |
| Kafka | 9092 | TCP | Message queue |
| Zookeeper | 2181 | TCP | ZK client |
| Logstash | 9600 | HTTP | Monitoring API |
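Of these ports, 9092 has to be reachable from the Filebeat nodes and 5601 from the browser. A minimal sketch of a reachability check, built on the same `nc` probe used in section 6.3, is shown below. The function names `probe` and `check_ports` are illustrative, and the `-w 2` timeout is an assumed value.

```shell
#!/usr/bin/env bash
# probe HOST PORT: succeed if a TCP connection can be opened within 2 seconds.
probe() {
    nc -z -w 2 "$1" "$2" >/dev/null 2>&1
}

# check_ports HOST PORT [PORT...]
# Prints one line per port and returns non-zero if any port is unreachable.
check_ports() {
    local host=$1 port failed=0
    shift
    for port in "$@"; do
        if probe "$host" "$port"; then
            echo "$host:$port open"
        else
            echo "$host:$port CLOSED"
            failed=1
        fi
    done
    return "$failed"
}

# Usage (from a collector node):
#   check_ports 192.168.44.135 9092 5601
```

Keeping the probe in its own function makes it easy to swap in another tool if `nc` is not installed on a node.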

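Appendix: Deployment scripts

The scripts that accompany this document are in the scripts/ directory:

| Script | Purpose |
|---|---|
| 01_setup_135_elk.sh | One-step ELK deployment on the 135 server |
| 02_setup_filebeat_node.sh | Install and configure Filebeat on a node (takes NODE_IP as an argument) |
| 03_init_es_passwords.sh | Initialize the ES user passwords |
| 04_verify_platform.sh | Verify the overall state of the platform |
| 05_start_filebeat_all.sh | Start Filebeat on all nodes in one batch |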

Document last updated: 2026-04-08 | Deployment verified: 2026-04-02 21:30 CST