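The Problem LangChain Solves

Building an LLM-based application by hand means a lot of glue code. The code has to go back and forth with the LLM through many rounds of prompts, parse the LLM's output, and manage context along the way.

LangChain emerged to simplify this repetitive work and encourage well-structured code. It makes it quick to build an LLM application, and it gives the ecosystem of LLM-based development a shared set of conventions.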

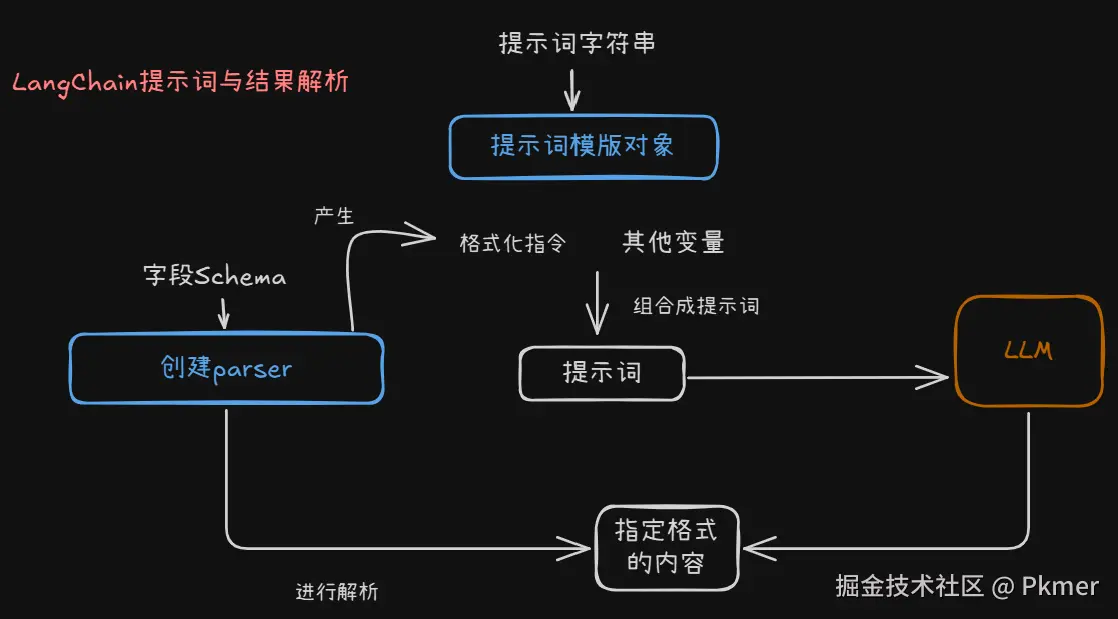

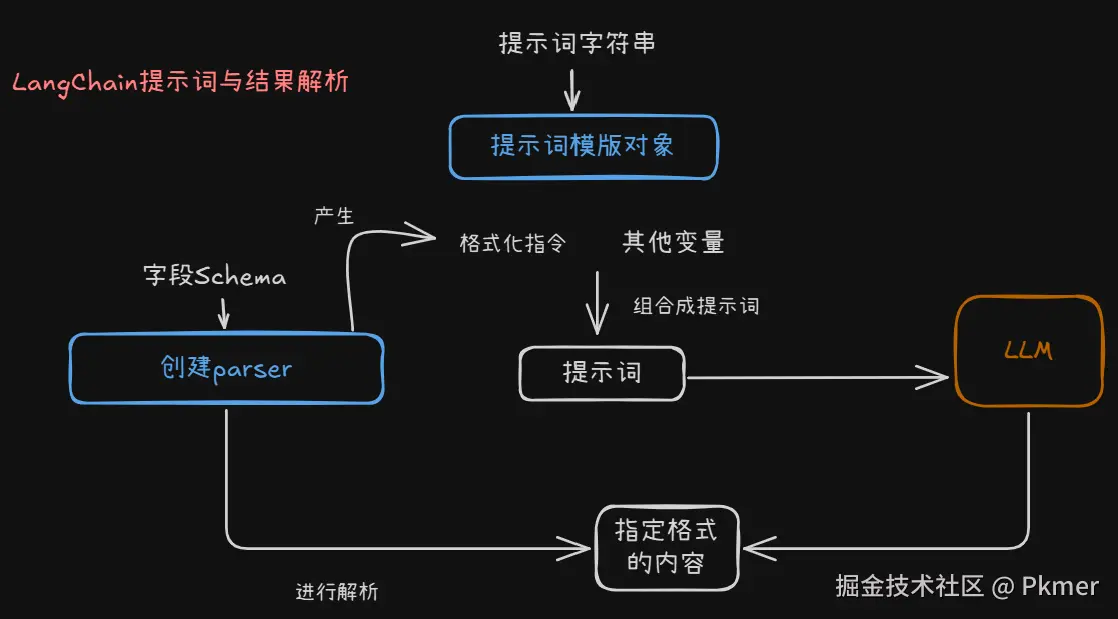

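Talking to the model: prompts and response parsing

Prompts

LangChain turns prompt strings with placeholders into reusable prompt template objects, a familiar kind of abstraction in programming.

The original approach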

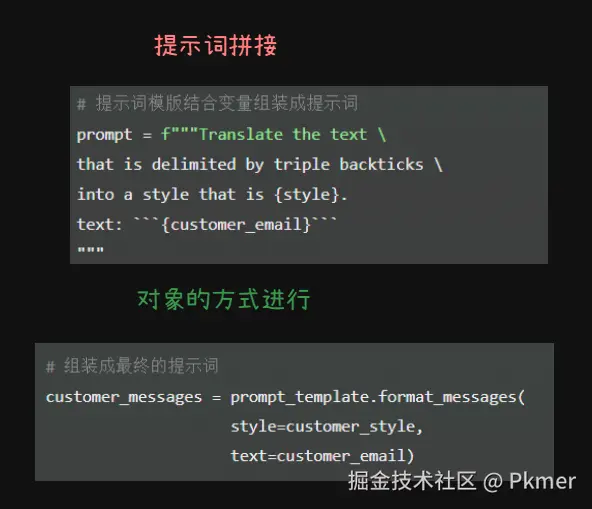

Writing the code by hand: define the prompt template, then fill it in with variables.

```python
# Variable: the customer email to translate
customer_email = """
Arrr, I be fuming that me blender lid \
flew off and splattered me kitchen walls \
with smoothie! And to make matters worse, \
the warranty don't cover the cost of \
cleaning up me kitchen. I need yer help \
right now, matey!
"""

# Variable: the target style
style = """American English \
in a calm and respectful tone"""

# Combine the template and the variables with an f-string to build the prompt
prompt = f"""Translate the text \
that is delimited by triple backticks \
into a style that is {style}.
text: ```{customer_email}```
"""
```

The final prompt sent to the LLM is:

````txt
Translate the text that is delimited by triple backticks into a style that is American English in a calm and respectful tone.
text: ```
Arrr, I be fuming that me blender lid flew off and splattered me kitchen walls with smoothie! And to make matters worse, the warranty don't cover the cost of cleaning up me kitchen. I need yer help right now, matey!
```
````

The LangChain approach

Turning the prompt template into an object

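In this prompt string template, each variable is marked with a `{}` placeholder. It is not an f-string (formatted string literal) like the one used earlier. The string has no `f` prefix, so nothing is substituted when it is defined, and the placeholders stay in place until values are supplied later.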
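The difference between the two can be seen in plain Python, without LangChain. The sketch below only illustrates the idea and is not LangChain's implementation.

- An f-string is filled in the moment it is evaluated.
- An ordinary string with `{}` placeholders can be filled later, any number of times, with `str.format`.
- The standard library's `string.Formatter().parse` recovers the placeholder names, which is roughly the information LangChain reports as `input_variables`.

The helper `input_variables` is a name made up for this example.

```python
from string import Formatter

style = "American English in a calm and respectful tone"

# f-string: {style} is substituted immediately, when this line runs
eager_prompt = f"Translate the text into a style that is {style}."

# Plain string: {style} and {text} stay as placeholders until format() is called
template_string = "Translate the text into a style that is {style}. text: ```{text}```"


def input_variables(template: str) -> list[str]:
    """Hypothetical helper: collect the placeholder names in a str.format-style template."""
    names = {field for _, field, _, _ in Formatter().parse(template) if field}
    return sorted(names)


# The same template can be reused with different values
prompt_a = template_string.format(style=style, text="Arrr, me blender lid flew off!")
prompt_b = template_string.format(style="formal British English", text="Where be me parcel?")

print(input_variables(template_string))  # ['style', 'text']
print(prompt_a)
```

Deferring substitution is what makes the template reusable: the string is written once and filled per request.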

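```python
from langchain.prompts import ChatPromptTemplate

# Prompt template: {style} and {text} are placeholders, not f-string substitutions
template_string = """Translate the text \
that is delimited by triple backticks \
into a style that is {style}. \
text: ```{text}```
"""

# Turn the prompt template into an object
prompt_template = ChatPromptTemplate.from_template(template_string)
```

The prompt template is now a reusable object. Its variables have become the template's input variables. This matches ordinary programming habits and makes for a good development experience: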

```python
prompt_template.messages[0].prompt

"""Output (the exact fields vary between LangChain versions)
PromptTemplate(
    input_variables=['style', 'text'],
    output_parser=None,
    partial_variables={},
    template='Translate the text that is delimited by triple backticks into a style that is {style}. text: ```{text}```\n',
    template_format='f-string',
    validate_template=True
)
"""
```

Fill in the variables to build a complete prompt for the LLM:

```python
from langchain.chat_models import ChatOpenAI

# Variable
customer_style = """American English \
in a calm and respectful tone
"""

# Variable
customer_email = """
Arrr, I be fuming that me blender lid \
flew off and splattered me kitchen walls \
with smoothie! And to make matters worse, \
the warranty don't cover the cost of \
cleaning up me kitchen. I need yer help \
right now, matey!
"""

# Build the final prompt messages
customer_messages = prompt_template.format_messages(
    style=customer_style,
    text=customer_email)

# Send (newer LangChain versions use chat.invoke(customer_messages) instead)
chat = ChatOpenAI()
customer_response = chat(customer_messages)
```

The prompt that is sent:

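```python
print(type(customer_messages[0]))
# <class 'langchain.schema.HumanMessage'>  (the module path differs in newer versions)

print(customer_messages[0])

"""Output (abridged): the message still contains the original, untranslated email;
the translation comes back later in customer_response.content
content="Translate the text that is delimited by triple backticks into a style that is American English in a calm and respectful tone\n. text: ```\nArrr, I be fuming that me blender lid flew off and splattered me kitchen walls with smoothie! And to make matters worse, the warranty don't cover the cost of cleaning up me kitchen. I need yer help right now, matey!\n```\n"
"""
```

Summary

Instead of concatenating strings to build the final prompt, LangChain works with objects: the prompt template is abstracted into a reusable template object.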
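The "template object" idea can be made concrete with a toy stand-in in plain Python. `MiniChatPromptTemplate` and its methods are hypothetical names invented for this sketch. They are loosely modeled on the `from_template` / `format_messages` calls above, but this is not LangChain's API, and it returns plain dicts rather than message objects.

It shows what the abstraction buys:

- the template is parsed once;
- its input variables are known up front;
- a missing variable fails loudly instead of quietly producing a broken prompt.

```python
from dataclasses import dataclass
from string import Formatter


@dataclass(frozen=True)
class MiniChatPromptTemplate:
    """Hypothetical toy template object; not part of LangChain."""
    template: str
    input_variables: tuple[str, ...]

    @classmethod
    def from_template(cls, template: str) -> "MiniChatPromptTemplate":
        # Record the placeholder names once, when the template is created
        names = sorted({f for _, f, _, _ in Formatter().parse(template) if f})
        return cls(template=template, input_variables=tuple(names))

    def format_messages(self, **kwargs: str) -> list[dict[str, str]]:
        # Refuse to build a prompt that still has unfilled placeholders
        missing = [v for v in self.input_variables if v not in kwargs]
        if missing:
            raise KeyError(f"missing input variables: {missing}")
        # A single user message, like the one-message template above
        return [{"role": "user", "content": self.template.format(**kwargs)}]


translate = MiniChatPromptTemplate.from_template(
    "Translate the text into a style that is {style}. text: {text}"
)
messages = translate.format_messages(style="polite English", text="Arrr!")
print(translate.input_variables)  # ('style', 'text')
print(messages[0]["content"])
```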

Parsing the result

When building a complex LLM application, we usually instruct the LLM to produce its output in a specific format.

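ReAct (Reasoning/Thought, Action, Observation) is one example: it runs the model in a reasoning loop. Tool calling happens inside this loop.

1. The LLM decides on its own which tool to call.
2. The application executes that tool call.
3. The result is added to the context, and the next round of reasoning begins.

The loop exits once the task is complete. This only works if every reply follows an agreed format that the application can parse to find the chosen action.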
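The loop described above can be sketched without a real model. Everything here is an assumption made for illustration:

- `fake_llm` replays scripted replies instead of calling an LLM;
- the `Action: tool[input]` / `Final Answer:` reply format is a convention chosen for this sketch;
- the single `calculator` tool is hypothetical.

The point is that the loop can only pick a tool because each reply follows a fixed format the code can parse.

```python
import re
from typing import Callable

# Hypothetical tool registry: tool name -> function taking a string argument
TOOLS: dict[str, Callable[[str], str]] = {
    "calculator": lambda expr: str(sum(int(x) for x in expr.split("+"))),
}

# Scripted replies standing in for a real LLM (assumption for this sketch)
SCRIPT = [
    "Thought: I need to add the numbers.\nAction: calculator[2+3]",
    "Thought: The tool returned the sum.\nFinal Answer: 5",
]

ACTION_RE = re.compile(r"Action:\s*(\w+)\[(.*)\]")
FINAL_RE = re.compile(r"Final Answer:\s*(.*)")


def fake_llm(context: str, step: int) -> str:
    """Return the next scripted reply; a real agent would send `context` to an LLM."""
    return SCRIPT[step]


def react_loop(question: str, max_steps: int = 5) -> str:
    context = f"Question: {question}"
    for step in range(max_steps):
        reply = fake_llm(context, step)
        context += "\n" + reply
        # Parse the reply: it is either a final answer or a tool call
        final = FINAL_RE.search(reply)
        if final:
            return final.group(1).strip()  # task complete: leave the loop
        action = ACTION_RE.search(reply)
        if action is None:
            raise ValueError(f"reply does not follow the expected format: {reply!r}")
        tool_name, tool_input = action.groups()
        observation = TOOLS[tool_name](tool_input)  # execute the tool call
        context += f"\nObservation: {observation}"  # feed the result back into the context
    raise RuntimeError("no final answer within max_steps")


print(react_loop("What is 2 + 3?"))  # 5
```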

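The LLM's reply is a string, and we need to parse it into attributes of a programming object so the result can be handled in code. A common approach is to have the LLM reply with a JSON string. In Python that becomes a dictionary; in Java it becomes the fields of a POJO: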

```json
{
    "gift": false,
    "delivery_days": 5,
    "price_value": "pretty affordable!"
}
```

The string the LLM replies with

Build the prompt the LangChain way shown above and send it to the LLM:

```python
from langchain.prompts import ChatPromptTemplate
from langchain.chat_models import ChatOpenAI

# Model name: an assumption, since the original snippet used llm_model without defining it
llm_model = "gpt-3.5-turbo"

# Variable
customer_review = """
This leaf blower is pretty amazing. It has four settings:
candle blower, gentle breeze, windy city, and tornado.
It arrived in two days, just in time for my wife's
anniversary present.
I think my wife liked it so much she was speechless.
So far I've been the only one using it, and I've been
using it every other morning to clear the leaves on our lawn.
It's slightly more expensive than the other leaf blowers
out there, but I think it's worth it for the extra features.
"""

# String template
review_template = """
For the following text, extract the following information:
gift: Was the item purchased as a gift for someone else?
Answer True if yes, False if not or unknown.
delivery_days: How many days did it take for the product
to arrive? If this information is not found, output -1.
price_value: Extract any sentences about the value or price,
and output them as a comma separated Python list.
Format the output as JSON with the following keys:
gift
delivery_days
price_value
text: {text}
"""

prompt_template = ChatPromptTemplate.from_template(review_template)
messages = prompt_template.format_messages(text=customer_review)

chat = ChatOpenAI(temperature=0.0, model=llm_model)
response = chat(messages)
print(response.content)
```

The result is a JSON-formatted string. Because `response.content` is a `str`, reading a field directly with `response.content.get('gift')` raises an error ❌️ (`AttributeError`: `str` has no `get` method).

```json
{
    "gift": true,
    "delivery_days": 2,
    "price_value": ["It's slightly more expensive than the other leaf blowers out there, but I think it's worth it for the extra features."]
}
```

Turning the formatted string into a programming-language type

The formatted string here is usually JSON returned by the LLM, and LangChain turns it into a Python dictionary.
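Underneath, this conversion is ordinary JSON decoding. Here is a minimal plain-Python sketch, assuming the reply is bare JSON like the output shown above. The `reply` string is typed in by hand here and is not real model output.

- Calling `.get` on the raw string fails, because `str` has no such method.
- `json.loads` turns the string into a `dict`, after which `.get` works.
- JSON spells booleans `true`/`false`, and these become Python's `True`/`False`.

```python
import json

# Hand-written stand-in for response.content (a str, not a dict)
reply = """{
    "gift": true,
    "delivery_days": 2,
    "price_value": ["It's slightly more expensive than the other leaf blowers out there, but I think it's worth it for the extra features."]
}"""

# Calling .get on the raw string fails: str has no get() method
try:
    reply.get("gift")
except AttributeError as err:
    print("AttributeError:", err)

# Decode the JSON text into a Python dict
data = json.loads(reply)
print(type(data).__name__)    # dict
print(data.get("gift"))       # True  (JSON true -> Python True)
print(data["delivery_days"])  # 2
```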

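Define a response schema for each field and build an output parser from those schemas. The parser can generate format instructions, which are added to the prompt to steer the LLM toward the specified format.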

```python
from langchain.output_parsers import ResponseSchema
from langchain.output_parsers import StructuredOutputParser

# Define a schema for each response field
# (adjacent string literals are joined, so each description keeps its spaces)
gift_schema = ResponseSchema(
    name="gift",
    description="Was the item purchased as a gift for someone else? "
                "Answer True if yes, False if not or unknown.")

delivery_days_schema = ResponseSchema(
    name="delivery_days",
    description="How many days did it take for the product to arrive? "
                "If this information is not found, output -1.")

price_value_schema = ResponseSchema(
    name="price_value",
    description="Extract any sentences about the value or price, "
                "and output them as a comma separated Python list.")

# Build the output parser from the schemas
response_schemas = [gift_schema,
                    delivery_days_schema,
                    price_value_schema]
output_parser = StructuredOutputParser.from_response_schemas(response_schemas)
```

The parser can generate format instructions to add to the prompt.

````python
format_instructions = output_parser.get_format_instructions()
print(format_instructions)

"""Generated format instructions
The output should be a markdown code snippet formatted in the following schema, including the leading and trailing "```json" and "```":

```json
{
    "gift": string  // Was the item purchased as a gift for someone else? Answer True if yes, False if not or unknown.
    "delivery_days": string  // How many days did it take for the product to arrive? If this information is not found, output -1.
    "price_value": string  // Extract any sentences about the value or price, and output them as a comma separated Python list.
}
```
"""
````

The complete prompt that is finally passed to the LLM:

```python
# Prompt template: the format instructions are injected through {format_instructions}
review_template_2 = """\
For the following text, extract the following information:

gift: Was the item purchased as a gift for someone else? \
Answer True if yes, False if not or unknown.

delivery_days: How many days did it take for the product \
to arrive? If this information is not found, output -1.

price_value: Extract any sentences about the value or price, \
and output them as a comma separated Python list.

text: {text}

{format_instructions}
"""

# Prompt template object
prompt = ChatPromptTemplate.from_template(template=review_template_2)

# The complete prompt passed to the LLM
messages = prompt.format_messages(text=customer_review,
                                  format_instructions=format_instructions)
print(messages[0].content)

"""Complete prompt (abridged)
For the following text, extract the following information:

gift: Was the item purchased as a gift for someone else? Answer True if yes, False if not or unknown.

delivery_days: How many days did it take for the product to arrive? If this information is not found, output -1.

price_value: Extract any sentences about the value or price, and output them as a comma separated Python list.

text: 
This leaf blower is pretty amazing. It has four settings:
... (rest of the review)

The output should be a markdown code snippet formatted in the following schema, including the leading and trailing "```json" and "```":
... (the schema block printed above)
"""
```

The LLM still replies with a string, but now the string follows the format our prompt specified. With that format fixed in advance, the parser can process the reply.

````python
response = chat(messages)
print(response.content)

"""Content returned by the LLM
```json
{
    "gift": true,
    "delivery_days": 2,
    "price_value": ["It's slightly more expensive than the other leaf blowers out there, but I think it's worth it for the extra features."]
}
```
"""
````

Call the parser to turn it into a Python dictionary:

```python
output_dict = output_parser.parse(response.content)

print(type(output_dict))                 # <class 'dict'>
print(output_dict.get('delivery_days'))  # 2
```

Summary

Prompt templates are usually used together with output parsers.
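The two halves fit together as one round trip, sketched here in plain Python. This is an approximation for illustration, not LangChain's code. The `Field` type and the `format_instructions` / `parse_markdown_json` helpers are hypothetical, and the instruction wording only imitates the text printed earlier.

The round trip works in two steps:

1. The instructions ask for a fenced JSON block.
2. The parser strips the fence and checks that every expected key came back.

```python
import json
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Field:
    """Hypothetical stand-in for a response schema: a key plus its description."""
    name: str
    description: str


FENCE = "`" * 3  # literal triple backtick, built this way so the snippet sits cleanly in a markdown fence


def format_instructions(fields: list[Field]) -> str:
    # Describe the expected JSON object, one key per line, for appending to the prompt
    lines = [f'    "{fld.name}": string  // {fld.description}' for fld in fields]
    body = "{\n" + "\n".join(lines) + "\n}"
    return ("The output should be a markdown code snippet formatted in the "
            f"following schema:\n\n{FENCE}json\n{body}\n{FENCE}")


def parse_markdown_json(text: str, fields: list[Field]) -> dict:
    # Take the JSON between the ```json and ``` fences if present, else the whole reply
    match = re.search(FENCE + r"(?:json)?\s*(.*?)\s*" + FENCE, text, re.DOTALL)
    payload = match.group(1) if match else text
    data = json.loads(payload)
    missing = [fld.name for fld in fields if fld.name not in data]
    if missing:
        raise ValueError(f"reply is missing keys: {missing}")
    return data


fields = [
    Field("gift", "Was the item purchased as a gift for someone else?"),
    Field("delivery_days", "How many days did it take for the product to arrive?"),
]
instructions = format_instructions(fields)  # would be appended to the prompt

# Hand-written reply in the requested format (not real model output)
reply = f'{FENCE}json\n{{\n  "gift": true,\n  "delivery_days": 2\n}}\n{FENCE}'
result = parse_markdown_json(reply, fields)
print(result)  # {'gift': True, 'delivery_days': 2}
```

Checking for missing keys after decoding is a design choice in this sketch. It turns a silently incomplete reply into an explicit error that the caller can handle, for example by re-prompting.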