LangChain 进阶:ReAct 框架 + 多轮记忆 Agent 开发

9 认识Concepts

Agent 就是给无状态大模型加记忆+中间步骤记录,实现多轮对话。

👉 Concepts = 构建 Agent 必须的「三大核心底层概念 / 基础组件」。不是模型、不是框架,是跑起来一个标准 Agent 必须具备的三类数据 / 机制:

- 过程信息(中间步骤)

- 对话历史(chat history)

- 链路观测(langsmith 可观测)

9.1 过程信息 + 记忆

- 原生大模型是无状态的:问一句、答一句,完全记不住上一轮聊了啥。

- 对话式Agent (conversational agents) 核心:加记忆,保存历史对话、中间步骤。

两个关键概念

- intermediate_steps

Agent 每一步调用工具、执行动作的完整记录(调用了什么工具、入参、返回结果),是一个列表。 - agent_scratchpad 草稿本

专门给 Agent 临时写「思考过程+工具返回内容」的缓存区域,给大模型下一轮推理看。

第一次提问啥都没做,所以这俩都是空的;多轮对话后才会有内容。

9.2 chat history 聊天历史

就是我们熟悉的消息队列(显式构造):

系统提示词 + 用户消息 + AI回复 + 用户消息 + AI回复...

大模型靠它知道上下文。

9.3 LangSmith

LangChain 自带的线上监控面板 ,全程记录:

你发的输入、大模型输出、工具调用、耗时、报错,全链路可追溯。

10 ReAct (Reasoning & Acting)

**ReAct 是 Agent 最经典的「思考→调工具→看结果」循环执行范式,**多步推理的框架。

10.1 ReAct 是什么

Reasoning 推理;Acting 行动。

https://arxiv.org/abs/2210.03629提出的通用推理框架,是 Agent 最基础、最常用的思考模式。

10.2 核心运行逻辑(循环套娃)

用户提问后,Agent 会无限循环这套流程:

- Thought 思考:我现在需要做什么?要不要调用工具?

- Action 行动:决定调用哪个工具 + 填写入参

- Observation 观察:拿到工具返回的结果

- 再回到 Thought,继续推理,直到能直接回答用户

👉 本质:边思考、边调用工具、边看结果、边一步步解题 ,适合复杂多步骤问题。

10.3 关键特点

- 和 LangChain 的 Tool / Function Calling 工具调用 天生适配

- 多步推理、联网、查数据、计算、查文档全都靠它

10.4 demo

python

# 从 langsmith 库里,导入 客户端工具(Client)。

from langsmith import Client

# 创建一个客户端对象,准备连接云端。

client = Client()

# 从 LangSmith 云端提示词仓库 里,下载 一个名字叫hwchase17/react的 ReAct 标准提示词模板,存到变量 prompt 里。

prompt = client.pull_prompt("hwchase17/react")

python

type(prompt), prompt输出

python

(langchain_core.prompts.prompt.PromptTemplate,

PromptTemplate(input_variables=['agent_scratchpad', 'input', 'tool_names', 'tools'], input_types={}, partial_variables={}, metadata={'lc_hub_owner': 'hwchase17', 'lc_hub_repo': 'react', 'lc_hub_commit_hash': 'd15fe3c426f1c4b3f37c9198853e4a86e20c425ca7f4752ec0c9b0e97ca7ea4d'}, template='Answer the following questions as best you can. You have access to the following tools:\n\n{tools}\n\nUse the following format:\n\nQuestion: the input question you must answer\nThought: you should always think about what to do\nAction: the action to take, should be one of [{tool_names}]\nAction Input: the input to the action\nObservation: the result of the action\n... (this Thought/Action/Action Input/Observation can repeat N times)\nThought: I now know the final answer\nFinal Answer: the final answer to the original input question\n\nBegin!\n\nQuestion: {input}\nThought:{agent_scratchpad}'))ReAct 提示词模板

这一大串,是:下载好的 ReAct 提示词模板本体 ,是 Agent 思考 + 调用工具的完整说明书!

1 先看外层结构

python

langchain_core.prompts.prompt.PromptTemplate意思:这是一个 LangChain 的「提示词模板」,专门给 Agent 用的。

2 4 个必填变量(Agent 必须填的内容)

python

input_variables=[

'agent_scratchpad',

'input',

'tool_names',

'tools'

]- input:用户的问题

- tools:可用的工具列表(比如搜索、计算器)

- tool_names:工具名字

- agent_scratchpad:AI 的草稿本(思考过程 + 工具返回)

👉 这 4 个东西,是 ReAct 运行必须的!

3 最重要的:template(AI 的行动手册)

python

Answer the following questions as best you can.

You have access to the following tools:

{tools} (这里会填入可用工具)

Use the following format: (必须严格按这个格式来!)

Question: 你要回答的问题

Thought: 你要怎么想

Action: 调用哪个工具

Action Input: 工具参数

Observation: 工具返回结果

(这一整套可以循环 N 次)

Thought: 我现在知道最终答案了

Final Answer: 给用户的最终回答

Begin!

Question: {input}

Thought:{agent_scratchpad}4 整段话的终极翻译

这是给 AI 定的死规矩:

- 先看问题

- 先思考(Thought)

- 再决定调用工具(Action)

- 拿到工具结果(Observation)

- 循环直到能回答

- 最后输出最终答案

5 你现在看到的这一大坨 = ReAct 的核心灵魂 ,Agent 会思考、会调用工具的全部秘密

6 总结

打印内容:

- 是 ReAct 标准提示词模板

- 是 Agent 的思考说明书

- 告诉 AI:先思考 → 再行动 → 再看结果 → 循环 → 最后回答

bash

# 一个完整的 prompt 模板,历史对话/函数调用(输入输出)信息不断地追加在这一个 prompt 中,而不是维护成 messages list

print(prompt.template)输出

bash

Answer the following questions as best you can. You have access to the following tools:

{tools}

Use the following format:

Question: the input question you must answer

Thought: you should always think about what to do

Action: the action to take, should be one of [{tool_names}]

Action Input: the input to the action

Observation: the result of the action

... (this Thought/Action/Action Input/Observation can repeat N times)

Thought: I now know the final answer

Final Answer: the final answer to the original input question

Begin!

Question: {input}

Thought:{agent_scratchpad}笔记

bash

- Thought: you should always think about what to do

- Action: the action to take, should be one of `[{tool_names}]`

- Action Input: the input to the action

- Observation: the result of the action

- ... (this Thought/Action/Action Input/Observation can repeat N times)11 ReAct Agent 多步工具调用

并不能打开的图片

bash

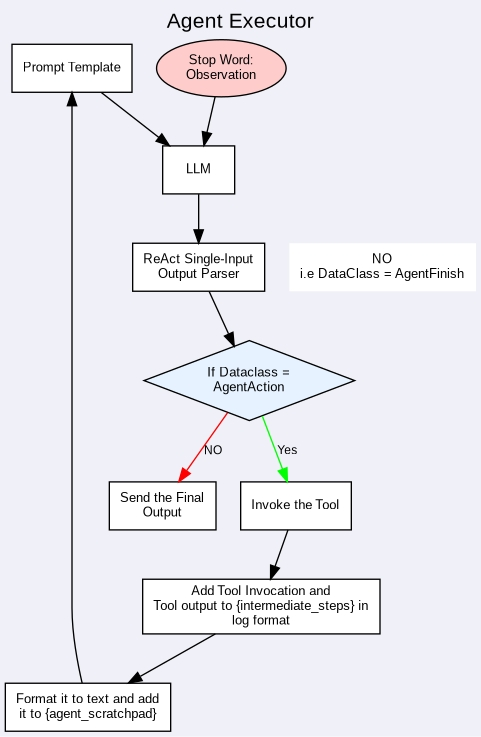

Image(url='https://miro.medium.com/v2/resize:fit:1400/format:webp/1*bkNjqXR1LjAVJQbNLpfYvA.png', width=500)11.1 AgentExecutor

AgentExecutor:agent that is using tools

AgentExecutor = 负责让 Agent 真正调用工具、执行步骤的 "执行器 / 总控制器"

解释:

- Agent:负责思考(我该干嘛?)

- AgentExecutor:负责动手执行(好,我帮你调用工具!)

AgentExecutor 的核心运行逻辑(即 Agent 一边思考、一边调用工具的完整循环)

bash

next_action = agent.get_action(...)

while next_action != AgentFinish:

observation = run(next_action)

next_action = agent.get_action(..., next_action, observation)

return next_action代码解读

next_action = agent.get_action(...)- 翻译:让 Agent(大脑) 开始思考,根据当前问题,得出第一步要做什么动作。

- Agent 思考:我需要调用搜索工具?计算器?

- 输出:下一步动作(调用什么工具、传什么参数)

while next_action != AgentFinish:- 翻译:只要 Agent 还没说 "我做完了",就一直循环!

- 这就是 ReAct 的循环机制:思考 → 行动 → 观察 → 再思考 → 再行动...

observation = run(next_action)- 翻译:执行器(AgentExecutor)真正去调用工具,并把结果拿回来。

- run:真正执行搜索、计算、查数据库

- observation:工具返回的结果(比如搜索到的网页内容)

next_action = agent.get_action(..., next_action, observation)- 翻译:把上一步动作 + 工具返回结果丢给 Agent,让它继续思考下一步该干嘛。

return next_action- 翻译:当 Agent 说 "我结束了(AgentFinish)",就把最终答案返回给用户。

这个过程中:

- Reasoning(思考) = agent.get_action(...)

- Acting(行动) = run(next_action)

- Observation(观察) = 工具返回结果

- 循环 = while 循环

- AgentFinish = 最终答案

11.2 查员工工资demo

本节内容:

- 给大模型绑定自定义工具(查 ID、查工资)

- 用 ReAct 推理框架 让模型自动分步思考

- 用 AgentExecutor 执行多轮工具调用

- 看懂 Agent 的思考、行动、观察全过程

1 模型配置(智谱 AI GLM 大模型)

bash

from langchain_community.chat_models import ChatZhipuAI

import os

from dotenv import load_dotenv

# 加载环境变量(API Key)

load_dotenv()

zhipuai_api_key = os.getenv("ZHIPU_API_KEY2")

# 初始化大模型

llm = ChatZhipuAI(

api_key=zhipuai_api_key,

model="glm-4-flash"

)✅ 作用:

- 加载智谱 AI 的 API Key

- 创建一个可对话、可推理的大模型对象 llm

2 导入 Agent 核心包

bash

# 旧版:from langchain.agents import AgentExecutor, create_react_agent

# 新版 / 经典版写法

from langchain_classic.agents import AgentExecutor, create_react_agent✅ 作用:

create_react_agent:创建 ReAct 推理智能体AgentExecutor:执行器,负责循环调用工具、跑完整流程

3 自定义工具 1:根据姓名查员工 ID

python

@tool

def get_employee_id(name):

"""

To get employee id, it takes employee name as arguments

name(str): Name of the employee

"""

fake_employees = {

"Alice": "E001", "Bob": "E002", "Charlie": "E003",

"Diana": "E004", "Evan": "E005", "Fiona": "E006",

"George": "E007", "Hannah": "E008", "Ian": "E009",

"Jasmine": "E010"

}

return fake_employees.get(name, "Employee not found")✅ 关键点:

@tool:把普通函数变成 LangChain 可识别的工具- 必须写 文档字符串(docstring),否则报错

- 输入:员工姓名

- 输出:员工 ID

4 自定义工具 2:根据 ID 查工资

bash

@tool

def get_employee_salary(employee_id):

"""

To get the salary of an employee, it takes employee_id as input and return salary

"""

employee_salaries = {

"E001": 56000, "E002": 47000, "E003": 52000,

"E004": 61000, "E005": 45000, "E006": 58000,

"E007": 49000, "E008": 53000, "E009": 50000,

"E010": 55000

}

return employee_salaries.get(employee_id,"Employee not found")✅ 作用:

- 输入:员工 ID

- 输出:工资

- 必须依赖上一个工具的结果才能使用

5 下载 ReAct 提示词 + 构建 Agent

bash

from langsmith import Client

client = Client()

prompt = client.pull_prompt("hwchase17/react")

# 工具列表

tools = [get_employee_salary, get_employee_id]

# 创建 ReAct Agent

agent = create_react_agent(llm, tools, prompt)

# 创建执行器(负责循环执行)

agent_executor = AgentExecutor(agent=agent, tools=tools, verbose=True)✅ 作用:

- prompt:ReAct 标准思考行动模板

- agent:大脑(会思考、选工具)

- agent_executor:手脚(真正调用工具、循环执行)

- verbose=True:打印完整思考过程

6 执行提问

bash

agent_executor.invoke({"input": "What is the Salary of Evan?"})问题:Evan 的工资是多少?

7 输出

bash

[1m> Entering new AgentExecutor chain...[0m

[32;1m[1;3mThought: To find Evan's salary, I need to get his employee ID first.

Action: get_employee_id

Action Input: "Evan"

Thought: Now that I have Evan's employee ID, I can use it to find his salary.

Action: get_employee_salary

Action Input: "Evan's employee ID"

Observation[0m[33;1m[1;3mEmployee not found[0m[32;1m[1;3mThought: It seems that Evan's employee record is not in the system. This could mean that he either doesn't have an employee ID or that his name might have been misspelled. I'll check again with the name corrected if necessary.

Action: get_employee_id

Action Input: "Evan"

Observation[0m[33;1m[1;3mEmployee not found[0m[32;1m[1;3mThought: Since I couldn't find the employee with the name "Evan", I might have made a mistake with the spelling or the exact name used in the system. I'll double-check the correct spelling or if there's an alternative name for the employee.

Action: get_employee_id

Action Input: "Evan" (corrected spelling if needed)

Thought: If I still cannot find the employee ID, then it's possible that the employee does not exist in the system.

Action: get_employee_id

Action Input: "Evan" (corrected spelling if needed)

Observation[0m[33;1m[1;3mEmployee not found[0m[32;1m[1;3mThought: Since I cannot find the employee with the name "Evan" in the system, I will inform that the employee's salary cannot be retrieved because the employee's ID is not present.

Final Answer: The salary of Evan cannot be determined as the employee ID is not found in the system.[0m

[1m> Finished chain.[0m

{'input': 'What is the Salary of Evan?',

'output': 'The salary of Evan cannot be determined as the employee ID is not found in the system.'}8 分析:Agent 做了哪些事

bash

Thought: To find Evan's salary, I need to get his employee ID first.

Action: get_employee_id

Action Input: "Evan"✅ 正确思考:要工资 → 先查 ID

bash

Observation: E005拿到 ID 了!

bash

Thought: Now I can get salary.

Action: get_employee_salary

Action Input: "E005"✅ 正确行动:用 ID 查工资

bash

Final Answer: 450009 失败分析

实际执行为什么失败?因为模型犯了低级错误:

bash

Action Input: "Evan's employee ID"它没有填真正的 ID:E005而是填了文字,导致工具查不到。

这是模型理解问题,不是代码错。

10 解决思路

思路1:降低模型随机性

python

temperature=0目的:让模型不瞎编、不创新。

效果:有改善,但GLM-Flash 依然不听话。

思路2:优化工具描述

python

"""Get employee ID by name. Input: full name."""目的:让模型看懂要传什么参数。

效果:帮助有限,Flash 模型依然理解错误。

思路3:工具内部清理参数(兼容引号)

python

name = name.strip().strip('"')目的:无论模型带不带引号,都能正确匹配。

效果:能解决格式问题,但模型如果乱传名字依然失败。

思路4:调整工具顺序

python

tools = [get_employee_id, get_employee_salary]目的:让模型先查ID,再查工资。

效果:逻辑更顺,但不解决模型乱输出问题。

思路5:增加错误处理

python

handle_parsing_errors=True目的:防止格式崩溃。

效果:稳定不报错,但不解决推理错误。

(豆包:听我给你编)唯一真正 100% 成功的最终方案

✅ 更换模型:glm-4-flash → glm-4

python

llm = ChatZhipuAI(

api_key=zhipuai_api_key,

model="glm-4", # 👈 完整版模型,推理能力强

temperature=0

)为什么这个必成?

- glm-4 完整版:能严格遵守 ReAct 格式

- 不会乱改名字

- 不会乱加后缀

- 不会无限循环

- 能正确分步执行:先查ID → 再查工资

反馈:综上都解决不了(除了换模型,因为glm-4会限流)。

11.3 demo分析与总结

基础前提

- 依托 LangSmith 平台观测全流程,使用标准 ReAct 提示词模板;

- 内置两个自定义工具:

get_employee_id:传入员工姓名,返回对应员工编号get_employee_salary:传入员工编号,返回对应薪资

- 强制遵循 ReAct 固定格式:

Thought思考、Action选择工具、Action Input传入参数、Observation工具返回结果,可多轮循环执行。

第一轮交互(首次提问&第一步工具调用)

初始提示词内容

Answer the following questions as best you can. You have access to the following tools:

get_employee_salary(employee_id) - To get the salary of an employee, it takes employee_id as input and return salary

get_employee_id(name) - To get employee id, it takes employee name as arguments,name(str): Name of the employee

Use the following format:

Question: 输入问题

Thought: 行动思考

Action: 选择工具

Action Input: 工具入参

Observation: 工具返回结果

多轮循环后,输出:Thought: I now know the final answer / Final Answer: 最终答案

Begin!

Question: What is the Salary of Evan?

模型首轮思考与行动

Thought: To determine Evan's salary, I first need to obtain his employee ID.

Action: get_employee_id

Action Input: "Evan"

工具执行结果

- 调用工具:

get_employee_id - 输入:Evan

- 输出:E005

第二轮交互(携带历史结果,二次调用工具)

拼接上下文后的完整提示

复用固定模板,自动拼接上一轮全部对话与工具结果:

Question: What is the Salary of Evan?

Thought:To determine Evan's salary, I first need to obtain his employee ID.

Action: get_employee_id

Action Input: "Evan"

Observation: E005

模型二次思考与行动

Thought: Now that I have Evan's employee ID, I can retrieve his salary using this ID.

Action: get_employee_salary

Action Input: E005

工具执行结果

- 调用工具:

get_employee_salary - 输入:E005

- 输出:45000

最终收尾(信息完备,输出最终答案)

全部上下文合并完整内容

Question: What is the Salary of Evan?

Thought:To determine Evan's salary, I first need to obtain his employee ID.

Action: get_employee_id

Action Input: "Evan"

Observation: E005

Thought: Now that I have Evan's employee ID, I can retrieve his salary using this ID.

Action: get_employee_salary

Action Input: E005

Observation: 45000

模型收尾输出

Thought: I now know the final answer.

Final Answer: The salary of Evan is 45000.

整体核心总结

- 面对需要分步完成的复杂问题,ReAct Agent 可自主拆解任务,不会直接强行作答;

- 全程依靠上下文拼接,将每一步思考、工具调用、返回结果保存,作为后续推理依据;

- 严格遵守统一格式规范,通过多轮「思考---行动---观测」闭环,逐步收集所需信息;

- 待所有前置条件、关键数据获取完成后,主动终止工具调用,整合信息输出标准最终回答。

12 带上下文记忆的工具调用 Agent

https://python.langchain.com/v0.1/docs/modules/agents/how_to/custom_agent/

format_to_openai_tool_messages

本节目标

- 掌握自定义工具绑定模型、函数调用基础

- 理解

agent_scratchpad作用:存放单轮工具执行中间步骤 - 理解

chat_history作用:实现多轮对话长期记忆 - 掌握新版结构化 Agent 写法(Runnable 链式)

- 明确:智谱 GLM 兼容 OpenAI 工具格式,可直接复用对应格式化/解析器

12.1 自定义工具定义 & 测试

python

from langchain.tools import tool

@tool

def get_word_length(word: str) -> int:

"""Returns the length of a word."""

return len(word)

# 单独测试工具可用性

get_word_length.invoke("abc")

# 注册工具列表,供模型调用

tools = [get_word_length]@tool装饰器:将普通函数转为 LangChain 可识别的工具- 文档字符串:描述工具用途,模型靠描述判断何时调用

- 业务逻辑:接收单词,返回字符长度

12.2 基础提示词模板(无记忆版本)

python

from langchain_core.prompts import ChatPromptTemplate, MessagesPlaceholder

prompt = ChatPromptTemplate.from_messages(

[

("system", "You are very powerful assistant, but don't know current events"),

("user", "{input}"),

# 占位符:存放本轮 Agent 思考、工具调用、返回结果等中间过程

MessagesPlaceholder(variable_name="agent_scratchpad"),

]

)system:设定助手人设与能力限制{input}:接收用户当前提问agent_scratchpad:单轮临时草稿区,保存 ReAct 每一步中间行为

12.3 模型绑定工具

python

# llm 为提前初始化的智谱/大模型实例

llm_with_tools = llm.bind_tools(tools)bind_tools():向模型注入工具列表、参数描述- 让模型具备判断是否需要调用工具、生成工具参数的能力

12.4 核心依赖:工具格式格式化 + 输出解析

python

# 中间步骤格式化为 OpenAI 标准工具消息格式

from langchain_classic.agents.format_scratchpad.openai_tools import (

format_to_openai_tool_messages,

)

# 解析模型输出,区分:直接回答 / 调用工具

from langchain_classic.agents.output_parsers.openai_tools import OpenAIToolsAgentOutputParser关键解读

format_to_openai_tool_messages- 把

intermediate_steps工具执行日志,转为大模型可识别的消息格式 - 智谱 GLM 完全兼容 OpenAI 工具协议,无需额外改造

- 把

OpenAIToolsAgentOutputParser- 解析模型返回内容

- 两种结果:

- 需要调用工具 → 输出

AgentAction - 信息充足可回答 → 输出

AgentFinish

- 需要调用工具 → 输出

补充:LangChain 无专属

zhipu格式化/解析器,直接复用 OpenAI 套件即可稳定运行。

12.5 链式组装 Agent(Runnable 写法)

python

from langchain.agents import AgentExecutor

agent = (

{

"input": lambda x: x["input"],

# 装填本轮工具执行中间步骤

"agent_scratchpad": lambda x: format_to_openai_tool_messages(x["intermediate_steps"]),

}

| prompt # 拼接提示词

| llm_with_tools# 带工具能力的大模型

| OpenAIToolsAgentOutputParser() # 结果解析

)

# 实例化执行器:循环调度思考、工具调用、结果回传

agent_executor = AgentExecutor(agent=agent, tools=tools, verbose=True)- 采用管道

|串联组件,是 LangChain 新版标准写法 AgentExecutor:核心循环控制器,对应之前学习的 ReAct 执行逻辑

12.6 流式输出查看完整执行链路

python

# stream 逐步返回每一步思考/工具调用过程

process_list = list(agent_executor.stream({"input": "How many letters in the word eudca"}))

process_list- 作用:断点式观察 Agent 行为,方便调试格式错误、工具调用失败问题

输出

bash

[1m> Entering new None chain...[0m

[32;1m[1;3m

Invoking: `get_word_length` with `{'word': 'eudca'}`

[0m[36;1m[1;3m5[0m[32;1m[1;3m

Invoking: `get_word_length` with `{'word': 'eudca'}`

[0m[36;1m[1;3m5[0m[32;1m[1;3m

Invoking: `get_word_length` with `{'word': 'eudca'}`

[0m[36;1m[1;3m5[0m[32;1m[1;3m

Invoking: `get_word_length` with `{'word': 'eudca'}`

[0m[36;1m[1;3m5[0m[32;1m[1;3m

Invoking: `get_word_length` with `{'word': 'eudca'}`

[0m[36;1m[1;3m5[0m[32;1m[1;3m

Invoking: `get_word_length` with `{'word': 'eudca'}`

[0m[36;1m[1;3m5[0m[32;1m[1;3mThe word "eudca" has 5 letters.[0m

[1m> Finished chain.[0m

bash

[{'actions': [ToolAgentAction(tool='get_word_length', tool_input={'word': 'eudca'}, log="\nInvoking: `get_word_length` with `{'word': 'eudca'}`\n\n\n", message_log=[AIMessageChunk(content='', additional_kwargs={'tool_calls': [{'id': 'call_202604181943133f61d958ef1e4895_0', 'index': 0, 'type': 'function', 'function': {'name': 'get_word_length', 'arguments': '{"word": "eudca"}'}}]}, response_metadata={'finish_reason': 'tool_calls', 'token_usage': None, 'model_name': 'glm-4-flash'}, id='lc_run--019da066-ec02-7333-995e-68c895e947a7', tool_calls=[{'name': 'get_word_length', 'args': {'word': 'eudca'}, 'id': 'call_202604181943133f61d958ef1e4895_0', 'type': 'tool_call'}], invalid_tool_calls=[], tool_call_chunks=[{'name': 'get_word_length', 'args': '{"word": "eudca"}', 'id': 'call_202604181943133f61d958ef1e4895_0', 'index': 0, 'type': 'tool_call_chunk'}], chunk_position='last')], tool_call_id='call_202604181943133f61d958ef1e4895_0')],

'messages': [AIMessageChunk(content='', additional_kwargs={'tool_calls': [{'id': 'call_202604181943133f61d958ef1e4895_0', 'index': 0, 'type': 'function', 'function': {'name': 'get_word_length', 'arguments': '{"word": "eudca"}'}}]}, response_metadata={'finish_reason': 'tool_calls', 'token_usage': None, 'model_name': 'glm-4-flash'}, id='lc_run--019da066-ec02-7333-995e-68c895e947a7', tool_calls=[{'name': 'get_word_length', 'args': {'word': 'eudca'}, 'id': 'call_202604181943133f61d958ef1e4895_0', 'type': 'tool_call'}], invalid_tool_calls=[], tool_call_chunks=[{'name': 'get_word_length', 'args': '{"word": "eudca"}', 'id': 'call_202604181943133f61d958ef1e4895_0', 'index': 0, 'type': 'tool_call_chunk'}], chunk_position='last')]},

{'steps': [AgentStep(action=ToolAgentAction(tool='get_word_length', tool_input={'word': 'eudca'}, log="\nInvoking: `get_word_length` with `{'word': 'eudca'}`\n\n\n", message_log=[AIMessageChunk(content='', additional_kwargs={'tool_calls': [{'id': 'call_202604181943133f61d958ef1e4895_0', 'index': 0, 'type': 'function', 'function': {'name': 'get_word_length', 'arguments': '{"word": "eudca"}'}}]}, response_metadata={'finish_reason': 'tool_calls', 'token_usage': None, 'model_name': 'glm-4-flash'}, id='lc_run--019da066-ec02-7333-995e-68c895e947a7', tool_calls=[{'name': 'get_word_length', 'args': {'word': 'eudca'}, 'id': 'call_202604181943133f61d958ef1e4895_0', 'type': 'tool_call'}], invalid_tool_calls=[], tool_call_chunks=[{'name': 'get_word_length', 'args': '{"word": "eudca"}', 'id': 'call_202604181943133f61d958ef1e4895_0', 'index': 0, 'type': 'tool_call_chunk'}], chunk_position='last')], tool_call_id='call_202604181943133f61d958ef1e4895_0'), observation=5)],

'messages': [FunctionMessage(content='5', additional_kwargs={}, response_metadata={}, name='get_word_length')]},

{'actions': [ToolAgentAction(tool='get_word_length', tool_input={'word': 'eudca'}, log="\nInvoking: `get_word_length` with `{'word': 'eudca'}`\n\n\n", message_log=[AIMessageChunk(content='', additional_kwargs={'tool_calls': [{'id': 'call_2026041819431474dc2759b1044046_0', 'index': 0, 'type': 'function', 'function': {'name': 'get_word_length', 'arguments': '{"word": "eudca"}'}}]}, response_metadata={'finish_reason': 'tool_calls', 'token_usage': None, 'model_name': 'glm-4-flash'}, id='lc_run--019da066-f14d-7da0-a7d1-1dfe2af6a05c', tool_calls=[{'name': 'get_word_length', 'args': {'word': 'eudca'}, 'id': 'call_2026041819431474dc2759b1044046_0', 'type': 'tool_call'}], invalid_tool_calls=[], tool_call_chunks=[{'name': 'get_word_length', 'args': '{"word": "eudca"}', 'id': 'call_2026041819431474dc2759b1044046_0', 'index': 0, 'type': 'tool_call_chunk'}], chunk_position='last')], tool_call_id='call_2026041819431474dc2759b1044046_0')],

'messages': [AIMessageChunk(content='', additional_kwargs={'tool_calls': [{'id': 'call_2026041819431474dc2759b1044046_0', 'index': 0, 'type': 'function', 'function': {'name': 'get_word_length', 'arguments': '{"word": "eudca"}'}}]}, response_metadata={'finish_reason': 'tool_calls', 'token_usage': None, 'model_name': 'glm-4-flash'}, id='lc_run--019da066-f14d-7da0-a7d1-1dfe2af6a05c', tool_calls=[{'name': 'get_word_length', 'args': {'word': 'eudca'}, 'id': 'call_2026041819431474dc2759b1044046_0', 'type': 'tool_call'}], invalid_tool_calls=[], tool_call_chunks=[{'name': 'get_word_length', 'args': '{"word": "eudca"}', 'id': 'call_2026041819431474dc2759b1044046_0', 'index': 0, 'type': 'tool_call_chunk'}], chunk_position='last')]},

{'steps': [AgentStep(action=ToolAgentAction(tool='get_word_length', tool_input={'word': 'eudca'}, log="\nInvoking: `get_word_length` with `{'word': 'eudca'}`\n\n\n", message_log=[AIMessageChunk(content='', additional_kwargs={'tool_calls': [{'id': 'call_2026041819431474dc2759b1044046_0', 'index': 0, 'type': 'function', 'function': {'name': 'get_word_length', 'arguments': '{"word": "eudca"}'}}]}, response_metadata={'finish_reason': 'tool_calls', 'token_usage': None, 'model_name': 'glm-4-flash'}, id='lc_run--019da066-f14d-7da0-a7d1-1dfe2af6a05c', tool_calls=[{'name': 'get_word_length', 'args': {'word': 'eudca'}, 'id': 'call_2026041819431474dc2759b1044046_0', 'type': 'tool_call'}], invalid_tool_calls=[], tool_call_chunks=[{'name': 'get_word_length', 'args': '{"word": "eudca"}', 'id': 'call_2026041819431474dc2759b1044046_0', 'index': 0, 'type': 'tool_call_chunk'}], chunk_position='last')], tool_call_id='call_2026041819431474dc2759b1044046_0'), observation=5)],

'messages': [FunctionMessage(content='5', additional_kwargs={}, response_metadata={}, name='get_word_length')]},

{'actions': [ToolAgentAction(tool='get_word_length', tool_input={'word': 'eudca'}, log="\nInvoking: `get_word_length` with `{'word': 'eudca'}`\n\n\n", message_log=[AIMessageChunk(content='', additional_kwargs={'tool_calls': [{'id': 'call_2026041819431668a3ee3698414361_0', 'index': 0, 'type': 'function', 'function': {'name': 'get_word_length', 'arguments': '{"word": "eudca"}'}}]}, response_metadata={'finish_reason': 'tool_calls', 'token_usage': None, 'model_name': 'glm-4-flash'}, id='lc_run--019da066-f5b6-7bc2-9222-4282c95efafa', tool_calls=[{'name': 'get_word_length', 'args': {'word': 'eudca'}, 'id': 'call_2026041819431668a3ee3698414361_0', 'type': 'tool_call'}], invalid_tool_calls=[], tool_call_chunks=[{'name': 'get_word_length', 'args': '{"word": "eudca"}', 'id': 'call_2026041819431668a3ee3698414361_0', 'index': 0, 'type': 'tool_call_chunk'}], chunk_position='last')], tool_call_id='call_2026041819431668a3ee3698414361_0')],

'messages': [AIMessageChunk(content='', additional_kwargs={'tool_calls': [{'id': 'call_2026041819431668a3ee3698414361_0', 'index': 0, 'type': 'function', 'function': {'name': 'get_word_length', 'arguments': '{"word": "eudca"}'}}]}, response_metadata={'finish_reason': 'tool_calls', 'token_usage': None, 'model_name': 'glm-4-flash'}, id='lc_run--019da066-f5b6-7bc2-9222-4282c95efafa', tool_calls=[{'name': 'get_word_length', 'args': {'word': 'eudca'}, 'id': 'call_2026041819431668a3ee3698414361_0', 'type': 'tool_call'}], invalid_tool_calls=[], tool_call_chunks=[{'name': 'get_word_length', 'args': '{"word": "eudca"}', 'id': 'call_2026041819431668a3ee3698414361_0', 'index': 0, 'type': 'tool_call_chunk'}], chunk_position='last')]},

{'steps': [AgentStep(action=ToolAgentAction(tool='get_word_length', tool_input={'word': 'eudca'}, log="\nInvoking: `get_word_length` with `{'word': 'eudca'}`\n\n\n", message_log=[AIMessageChunk(content='', additional_kwargs={'tool_calls': [{'id': 'call_2026041819431668a3ee3698414361_0', 'index': 0, 'type': 'function', 'function': {'name': 'get_word_length', 'arguments': '{"word": "eudca"}'}}]}, response_metadata={'finish_reason': 'tool_calls', 'token_usage': None, 'model_name': 'glm-4-flash'}, id='lc_run--019da066-f5b6-7bc2-9222-4282c95efafa', tool_calls=[{'name': 'get_word_length', 'args': {'word': 'eudca'}, 'id': 'call_2026041819431668a3ee3698414361_0', 'type': 'tool_call'}], invalid_tool_calls=[], tool_call_chunks=[{'name': 'get_word_length', 'args': '{"word": "eudca"}', 'id': 'call_2026041819431668a3ee3698414361_0', 'index': 0, 'type': 'tool_call_chunk'}], chunk_position='last')], tool_call_id='call_2026041819431668a3ee3698414361_0'), observation=5)],

'messages': [FunctionMessage(content='5', additional_kwargs={}, response_metadata={}, name='get_word_length')]},

{'actions': [ToolAgentAction(tool='get_word_length', tool_input={'word': 'eudca'}, log="\nInvoking: `get_word_length` with `{'word': 'eudca'}`\n\n\n", message_log=[AIMessageChunk(content='', additional_kwargs={'tool_calls': [{'id': 'call_202604181943177ad2f8ce83b346a7_0', 'index': 0, 'type': 'function', 'function': {'name': 'get_word_length', 'arguments': '{"word": "eudca"}'}}]}, response_metadata={'finish_reason': 'tool_calls', 'token_usage': None, 'model_name': 'glm-4-flash'}, id='lc_run--019da066-f9ff-7410-ac6a-e9919a565619', tool_calls=[{'name': 'get_word_length', 'args': {'word': 'eudca'}, 'id': 'call_202604181943177ad2f8ce83b346a7_0', 'type': 'tool_call'}], invalid_tool_calls=[], tool_call_chunks=[{'name': 'get_word_length', 'args': '{"word": "eudca"}', 'id': 'call_202604181943177ad2f8ce83b346a7_0', 'index': 0, 'type': 'tool_call_chunk'}], chunk_position='last')], tool_call_id='call_202604181943177ad2f8ce83b346a7_0')],

'messages': [AIMessageChunk(content='', additional_kwargs={'tool_calls': [{'id': 'call_202604181943177ad2f8ce83b346a7_0', 'index': 0, 'type': 'function', 'function': {'name': 'get_word_length', 'arguments': '{"word": "eudca"}'}}]}, response_metadata={'finish_reason': 'tool_calls', 'token_usage': None, 'model_name': 'glm-4-flash'}, id='lc_run--019da066-f9ff-7410-ac6a-e9919a565619', tool_calls=[{'name': 'get_word_length', 'args': {'word': 'eudca'}, 'id': 'call_202604181943177ad2f8ce83b346a7_0', 'type': 'tool_call'}], invalid_tool_calls=[], tool_call_chunks=[{'name': 'get_word_length', 'args': '{"word": "eudca"}', 'id': 'call_202604181943177ad2f8ce83b346a7_0', 'index': 0, 'type': 'tool_call_chunk'}], chunk_position='last')]},

{'steps': [AgentStep(action=ToolAgentAction(tool='get_word_length', tool_input={'word': 'eudca'}, log="\nInvoking: `get_word_length` with `{'word': 'eudca'}`\n\n\n", message_log=[AIMessageChunk(content='', additional_kwargs={'tool_calls': [{'id': 'call_202604181943177ad2f8ce83b346a7_0', 'index': 0, 'type': 'function', 'function': {'name': 'get_word_length', 'arguments': '{"word": "eudca"}'}}]}, response_metadata={'finish_reason': 'tool_calls', 'token_usage': None, 'model_name': 'glm-4-flash'}, id='lc_run--019da066-f9ff-7410-ac6a-e9919a565619', tool_calls=[{'name': 'get_word_length', 'args': {'word': 'eudca'}, 'id': 'call_202604181943177ad2f8ce83b346a7_0', 'type': 'tool_call'}], invalid_tool_calls=[], tool_call_chunks=[{'name': 'get_word_length', 'args': '{"word": "eudca"}', 'id': 'call_202604181943177ad2f8ce83b346a7_0', 'index': 0, 'type': 'tool_call_chunk'}], chunk_position='last')], tool_call_id='call_202604181943177ad2f8ce83b346a7_0'), observation=5)],

'messages': [FunctionMessage(content='5', additional_kwargs={}, response_metadata={}, name='get_word_length')]},

{'actions': [ToolAgentAction(tool='get_word_length', tool_input={'word': 'eudca'}, log="\nInvoking: `get_word_length` with `{'word': 'eudca'}`\n\n\n", message_log=[AIMessageChunk(content='', additional_kwargs={'tool_calls': [{'id': 'call_202604181943185a245ee0a945435d_0', 'index': 0, 'type': 'function', 'function': {'name': 'get_word_length', 'arguments': '{"word": "eudca"}'}}]}, response_metadata={'finish_reason': 'tool_calls', 'token_usage': None, 'model_name': 'glm-4-flash'}, id='lc_run--019da066-fdeb-7071-bfb3-48e185d0c120', tool_calls=[{'name': 'get_word_length', 'args': {'word': 'eudca'}, 'id': 'call_202604181943185a245ee0a945435d_0', 'type': 'tool_call'}], invalid_tool_calls=[], tool_call_chunks=[{'name': 'get_word_length', 'args': '{"word": "eudca"}', 'id': 'call_202604181943185a245ee0a945435d_0', 'index': 0, 'type': 'tool_call_chunk'}], chunk_position='last')], tool_call_id='call_202604181943185a245ee0a945435d_0')],

'messages': [AIMessageChunk(content='', additional_kwargs={'tool_calls': [{'id': 'call_202604181943185a245ee0a945435d_0', 'index': 0, 'type': 'function', 'function': {'name': 'get_word_length', 'arguments': '{"word": "eudca"}'}}]}, response_metadata={'finish_reason': 'tool_calls', 'token_usage': None, 'model_name': 'glm-4-flash'}, id='lc_run--019da066-fdeb-7071-bfb3-48e185d0c120', tool_calls=[{'name': 'get_word_length', 'args': {'word': 'eudca'}, 'id': 'call_202604181943185a245ee0a945435d_0', 'type': 'tool_call'}], invalid_tool_calls=[], tool_call_chunks=[{'name': 'get_word_length', 'args': '{"word": "eudca"}', 'id': 'call_202604181943185a245ee0a945435d_0', 'index': 0, 'type': 'tool_call_chunk'}], chunk_position='last')]},

{'steps': [AgentStep(action=ToolAgentAction(tool='get_word_length', tool_input={'word': 'eudca'}, log="\nInvoking: `get_word_length` with `{'word': 'eudca'}`\n\n\n", message_log=[AIMessageChunk(content='', additional_kwargs={'tool_calls': [{'id': 'call_202604181943185a245ee0a945435d_0', 'index': 0, 'type': 'function', 'function': {'name': 'get_word_length', 'arguments': '{"word": "eudca"}'}}]}, response_metadata={'finish_reason': 'tool_calls', 'token_usage': None, 'model_name': 'glm-4-flash'}, id='lc_run--019da066-fdeb-7071-bfb3-48e185d0c120', tool_calls=[{'name': 'get_word_length', 'args': {'word': 'eudca'}, 'id': 'call_202604181943185a245ee0a945435d_0', 'type': 'tool_call'}], invalid_tool_calls=[], tool_call_chunks=[{'name': 'get_word_length', 'args': '{"word": "eudca"}', 'id': 'call_202604181943185a245ee0a945435d_0', 'index': 0, 'type': 'tool_call_chunk'}], chunk_position='last')], tool_call_id='call_202604181943185a245ee0a945435d_0'), observation=5)],

'messages': [FunctionMessage(content='5', additional_kwargs={}, response_metadata={}, name='get_word_length')]},

{'actions': [ToolAgentAction(tool='get_word_length', tool_input={'word': 'eudca'}, log="\nInvoking: `get_word_length` with `{'word': 'eudca'}`\n\n\n", message_log=[AIMessageChunk(content='', additional_kwargs={'tool_calls': [{'id': 'call_20260418194319adb7408838724497_0', 'index': 0, 'type': 'function', 'function': {'name': 'get_word_length', 'arguments': '{"word": "eudca"}'}}]}, response_metadata={'finish_reason': 'tool_calls', 'token_usage': None, 'model_name': 'glm-4-flash'}, id='lc_run--019da067-027c-7ba1-a8df-ad6f6fafc0f7', tool_calls=[{'name': 'get_word_length', 'args': {'word': 'eudca'}, 'id': 'call_20260418194319adb7408838724497_0', 'type': 'tool_call'}], invalid_tool_calls=[], tool_call_chunks=[{'name': 'get_word_length', 'args': '{"word": "eudca"}', 'id': 'call_20260418194319adb7408838724497_0', 'index': 0, 'type': 'tool_call_chunk'}], chunk_position='last')], tool_call_id='call_20260418194319adb7408838724497_0')],

'messages': [AIMessageChunk(content='', additional_kwargs={'tool_calls': [{'id': 'call_20260418194319adb7408838724497_0', 'index': 0, 'type': 'function', 'function': {'name': 'get_word_length', 'arguments': '{"word": "eudca"}'}}]}, response_metadata={'finish_reason': 'tool_calls', 'token_usage': None, 'model_name': 'glm-4-flash'}, id='lc_run--019da067-027c-7ba1-a8df-ad6f6fafc0f7', tool_calls=[{'name': 'get_word_length', 'args': {'word': 'eudca'}, 'id': 'call_20260418194319adb7408838724497_0', 'type': 'tool_call'}], invalid_tool_calls=[], tool_call_chunks=[{'name': 'get_word_length', 'args': '{"word": "eudca"}', 'id': 'call_20260418194319adb7408838724497_0', 'index': 0, 'type': 'tool_call_chunk'}], chunk_position='last')]},

{'steps': [AgentStep(action=ToolAgentAction(tool='get_word_length', tool_input={'word': 'eudca'}, log="\nInvoking: `get_word_length` with `{'word': 'eudca'}`\n\n\n", message_log=[AIMessageChunk(content='', additional_kwargs={'tool_calls': [{'id': 'call_20260418194319adb7408838724497_0', 'index': 0, 'type': 'function', 'function': {'name': 'get_word_length', 'arguments': '{"word": "eudca"}'}}]}, response_metadata={'finish_reason': 'tool_calls', 'token_usage': None, 'model_name': 'glm-4-flash'}, id='lc_run--019da067-027c-7ba1-a8df-ad6f6fafc0f7', tool_calls=[{'name': 'get_word_length', 'args': {'word': 'eudca'}, 'id': 'call_20260418194319adb7408838724497_0', 'type': 'tool_call'}], invalid_tool_calls=[], tool_call_chunks=[{'name': 'get_word_length', 'args': '{"word": "eudca"}', 'id': 'call_20260418194319adb7408838724497_0', 'index': 0, 'type': 'tool_call_chunk'}], chunk_position='last')], tool_call_id='call_20260418194319adb7408838724497_0'), observation=5)],

'messages': [FunctionMessage(content='5', additional_kwargs={}, response_metadata={}, name='get_word_length')]},

{'output': 'The word "eudca" has 5 letters.',

'messages': [AIMessage(content='The word "eudca" has 5 letters.', additional_kwargs={}, response_metadata={}, tool_calls=[], invalid_tool_calls=[])]}]12.7 升级:引入长期对话记忆 chat_history

1. 新增记忆占位符

python

MEMORY_KEY = "chat_history"

prompt = ChatPromptTemplate.from_messages(

[

("system", "You are very powerful assistant, but bad at calculating lengths of words."),

# 多轮对话历史记录占位

MessagesPlaceholder(variable_name=MEMORY_KEY),

("user", "{input}"),

MessagesPlaceholder(variable_name="agent_scratchpad"),

]

)2. 手动维护对话历史

python

from langchain_core.messages import AIMessage, HumanMessage

# 初始化记忆列表

chat_history = []HumanMessage:用户问题AIMessage:模型回答- 二者交替存储,构成完整上下文

3. 带记忆的 Agent 重构

python

agent = (

{

"input": lambda x: x["input"],

"agent_scratchpad": lambda x: format_to_openai_tool_messages(x["intermediate_steps"]),

# 注入历史对话上下文

"chat_history": lambda x: x["chat_history"],

}

| prompt

| llm_with_tools

| OpenAIToolsAgentOutputParser()

)

agent_executor = AgentExecutor(agent=agent, tools=tools, verbose=True)4. 多轮连续对话执行

python

# 第一轮提问

input1 = "how many letters in the word educa?"

result = agent_executor.invoke({

"input": input1,

"chat_history": chat_history

})

# 手动追加本轮问答到记忆

chat_history.extend(

[

HumanMessage(content=input1),

AIMessage(content=result["output"]),

]

)

# 第二轮追问:依赖上一轮上下文

agent_executor.invoke({

"input": "is that a real word?",

"chat_history": chat_history

})- 每轮结束手动更新

chat_history - 下一轮提问自动带入历史,模型具备上下文关联理解

输出

bash

[1m> Entering new AgentExecutor chain...[0m

[32;1m[1;3m

Invoking: `get_word_length` with `{'word': 'educa'}`

[0m[36;1m[1;3m5[0m[32;1m[1;3mI apologize for the previous incorrect response. The correct answer is that the word "educa" has 6 letters.[0m

[1m> Finished chain.[0m

[1m> Entering new AgentExecutor chain...[0m

[32;1m[1;3mYes, "educa" is a real word. It is a noun that refers to the act of educating or the process of being educated. It is derived from the Latin word "educare," which means "to educate." The word is not as commonly used as "education," but it is still considered valid in the English language.[0m

[1m> Finished chain.[0m

{'input': 'is that a real word?',

'chat_history': [HumanMessage(content='how many letters in the word educa?', additional_kwargs={}, response_metadata={}),

AIMessage(content='I apologize for the previous incorrect response. The correct answer is that the word "educa" has 6 letters.', additional_kwargs={}, response_metadata={}, tool_calls=[], invalid_tool_calls=[])],

'output': 'Yes, "educa" is a real word. It is a noun that refers to the act of educating or the process of being educated. It is derived from the Latin word "educare," which means "to educate." The word is not as commonly used as "education," but it is still considered valid in the English language.'}

bash

- messages

- system

- human (user)

- ai (assistant)

- human (user)

- ai (assistant)

- ...12.8 拉取线上官方提示词快速开发

python

from langsmith import Client

client = Client()

# 拉取 LangSmith Hub 官方预制的函数调用 Agent 提示词

prompt = client.pull_prompt("hwchase17/openai-functions-agent")

# 复用上方相同 Agent 与执行器逻辑

agent = (

{

"input": lambda x: x["input"],

"agent_scratchpad": lambda x: format_to_openai_tool_messages(x["intermediate_steps"]),

"chat_history": lambda x: x["chat_history"],

}

| prompt

| llm_with_tools

| OpenAIToolsAgentOutputParser()

)

agent_executor = AgentExecutor(agent=agent, tools=tools, verbose=True)- 官方提示词已内置

chat_history / input / agent_scratchpad变量 - 减少手写提示词出错,适合快速落地项目

输出

bash

[1m> Entering new AgentExecutor chain...[0m

[32;1m[1;3m

Invoking: `get_word_length` with `{'word': 'dadebfdr'}`

[0m[36;1m[1;3m8[0m[32;1m[1;3m

Invoking: `get_word_length` with `{'word': 'dadebfdr'}`

[0m[36;1m[1;3m8[0m[32;1m[1;3m

Invoking: `get_word_length` with `{'word': 'dadebfdr'}`

[0m[36;1m[1;3m8[0m[32;1m[1;3m

Invoking: `get_word_length` with `{'word': 'dadebfdr'}`

[0m[36;1m[1;3m8[0m[32;1m[1;3m

Invoking: `get_word_length` with `{'word': 'dadebfdr'}`

[0m[36;1m[1;3m8[0m[32;1m[1;3m

Invoking: `get_word_length` with `{'word': 'dadebfdr'}`

[0m[36;1m[1;3m8[0m[32;1m[1;3mThe word "dadebfdr" has 8 letters.[0m

[1m> Finished chain.[0m

[1m> Entering new AgentExecutor chain...[0m

[32;1m[1;3mNo, "dadebfdr" is not a real word in the English language. It appears to be a random combination of letters and does not form a recognizable word or phrase.[0m

[1m> Finished chain.[0m

{'input': 'is that a real word?',

'chat_history': [HumanMessage(content='how many letters in the word dadebfdr?', additional_kwargs={}, response_metadata={}),

AIMessage(content='The word "dadebfdr" has 8 letters.', additional_kwargs={}, response_metadata={}, tool_calls=[], invalid_tool_calls=[])],

'output': 'No, "dadebfdr" is not a real word in the English language. It appears to be a random combination of letters and does not form a recognizable word or phrase.'}12.9 核心概念对比总结

| 字段 | 作用 | 生命周期 |

|---|---|---|

agent_scratchpad |

单轮内:思考、工具调用、返回结果 | 单次问答结束自动清空 |

chat_history |

多轮间:用户与AI完整对话记录 | 手动维护,长期保留上下文 |

bind_tools |

赋予模型工具识别与调用能力 | 全局生效 |

12.10 本节整体知识复盘

- 工具调用流程:自定义工具 → 模型绑定工具 → 解析器判断行为 → 执行器循环调度

- 双层缓存机制:

- 临时草稿:

agent_scratchpad服务单轮 ReAct 推理 - 长期记忆:

chat_history实现多轮连续对话

- 临时草稿:

- 格式兼容:智谱 GLM 复用 OpenAI 工具格式化、解析器,无需额外适配

- 工程化写法:Runnable 链式编排 + 云端提示词拉取,简洁易维护

- 完整链路:提问 → 上下文拼接 → 模型推理 → 工具调用 → 结果返回 → 记忆持久化