I. Base environment plan

- OS: CentOS 7.9

- Topology: 1 master, 1 worker

- master1: 192.168.63.149

- node1: 192.168.63.150

- Container runtime: containerd 1.6.24

- Kubernetes version: 1.28.2

- Network plugin: Calico 3.26.1

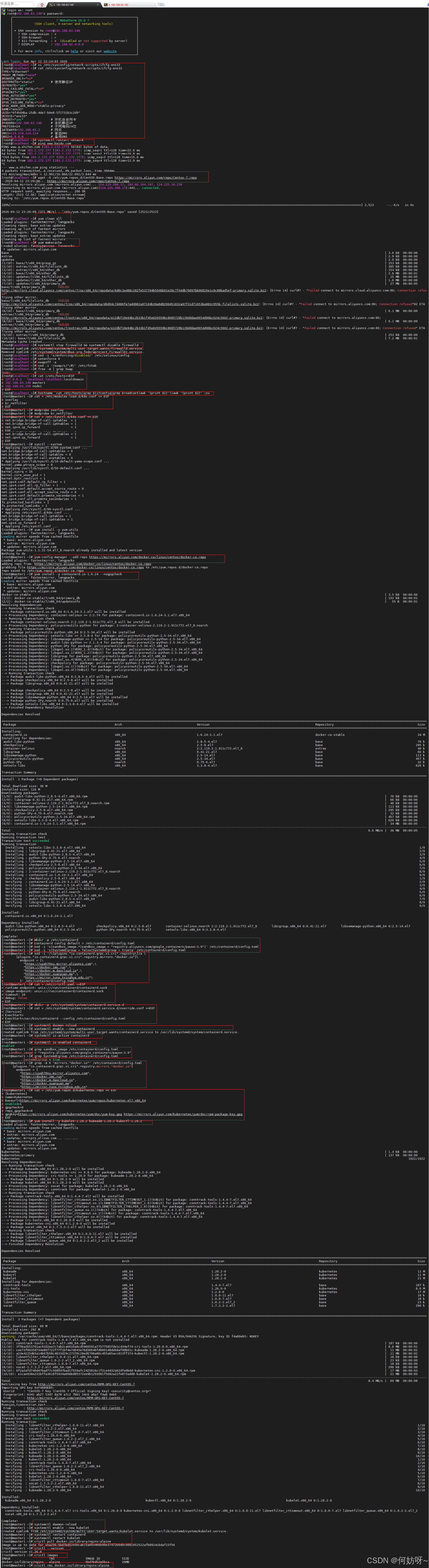

II. Kubernetes 1.28.2 cluster setup (run on both Master and Node)

# Edit the NIC configuration file to set a static IP

vi /etc/sysconfig/network-scripts/ifcfg-ens33

# Check that the NIC configuration is correct

cat /etc/sysconfig/network-scripts/ifcfg-ens33

...............................................

TYPE="Ethernet"

PROXY_METHOD="none"

BROWSER_ONLY="no"

BOOTPROTO="static" # use a static IP

DEFROUTE="yes"

IPV4_FAILURE_FATAL="no"

IPV6INIT="yes"

IPV6_AUTOCONF="yes"

IPV6_DEFROUTE="yes"

IPV6_FAILURE_FATAL="no"

IPV6_ADDR_GEN_MODE="stable-privacy"

NAME="ens33"

UUID="6f45d4ba-25db-4de7-b0e8-5f2731b3c2d9"

DEVICE="ens33"

ONBOOT="yes" # bring the NIC up at boot

IPADDR0=192.168.63.149 # static IP of this host (use 192.168.63.150 on node1)

PREFIX0=24 # 24-bit netmask

GATEWAY0=192.168.63.2 # gateway

DNS1=114.114.114.114 # primary DNS

DNS2=8.8.8.8 # secondary DNS

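# Restart the network service to apply the IP configuration

systemctl restart network

# Test connectivity (replies mean the IP, gateway, and DNS settings all work)

ping -c 4 www.baidu.com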

# Download the Aliyun CentOS 7 base repo file to replace the default repo

wget -O /etc/yum.repos.d/CentOS-Base.repo https://mirrors.aliyun.com/repo/Centos-7.repo

# Clear the existing yum cache

yum clean all

# Build a fresh yum cache

yum makecache

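# Stop the firewall and disable it at boot

systemctl stop firewalld && systemctl disable firewalld

# Disable SELinux permanently (edits only the SELINUX= line of the config file; takes effect after a reboot)

sed -i 's/^SELINUX=enforcing/SELINUX=disabled/' /etc/selinux/config

# Switch SELinux to permissive mode for the current boot (takes effect immediately)

setenforce 0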

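# Turn swap off for the current boot

swapoff -a

# Turn swap off permanently (comment out the swap entries in fstab that are not already commented)

sed -i '/^[^#].*swap/s/^/#/' /etc/fstab

# Verify that swap is off (the Swap line should show 0 for total, used, and free)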

free -m | grep Swap
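
Since `free` always prints a `Swap:` line, an exit-status check can be easier to script. The following is a minimal sketch, not part of the original procedure; the function names `swap_total_kb` and `swap_is_off` are hypothetical, and it assumes the standard `SwapTotal:` field of `/proc/meminfo`.

```shell
# swap_total_kb [FILE]: print the SwapTotal value (in kB) from a
# meminfo-style file; defaults to /proc/meminfo.
swap_total_kb() {
    awk '/^SwapTotal:/ {print $2}' "${1:-/proc/meminfo}"
}

# swap_is_off [FILE]: succeed only when SwapTotal is exactly 0.
swap_is_off() {
    [ "$(swap_total_kb "$1")" = "0" ]
}
```

Usage on a node: `swap_is_off && echo "swap is disabled"`.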

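# Write the hosts mappings; keep them identical on master and node

cat >/etc/hosts<<EOF
127.0.0.1 localhost localhost.localdomain
192.168.63.149 master1
192.168.63.150 node1
EOF

# Set this host's name automatically from its IP (no manual input; hostnamectl makes the name survive a reboot)

hostnamectl set-hostname "$(grep -wF "$(hostname -I | awk '{print $1}')" /etc/hosts | awk '{print $2}')"; su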
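
The one-liner above derives the hostname by matching the machine's IP against /etc/hosts. As a rough sketch of that lookup on its own (the helper name `host_for_ip` is hypothetical, and it compares the first column exactly, so `192.168.63.14` does not match `192.168.63.149`):

```shell
# host_for_ip IP [HOSTS_FILE]: print the first name mapped to IP in a
# hosts-style file; prints nothing when the IP is not listed.
host_for_ip() {
    awk -v ip="$1" '$1 == ip {print $2; exit}' "${2:-/etc/hosts}"
}
```

For example, `host_for_ip 192.168.63.150` should print `node1` once the hosts file above is in place.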

# Register the kernel modules K8s needs so they load at every boot

cat > /etc/modules-load.d/k8s.conf << EOF
overlay
br_netfilter
EOF

# Load the modules by hand so they take effect immediately

modprobe overlay

modprobe br_netfilter

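# Configure the kernel network parameters (bridged traffic through iptables, IP forwarding)

cat > /etc/sysctl.d/k8s.conf << EOF
net.bridge.bridge-nf-call-iptables = 1
net.bridge.bridge-nf-call-ip6tables = 1
net.ipv4.ip_forward = 1
EOF

# Apply the kernel parameters now (the file under /etc/sysctl.d also applies them at every boot)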

sysctl --system
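
To double-check the file written above, something like the following could be used. It is only a sketch: `conf_value` and `k8s_sysctl_ok` are hypothetical helper names, and the check reads the configuration file rather than the live kernel values.

```shell
# conf_value KEY FILE: print the value assigned to KEY in a
# sysctl-style "key = value" file (whitespace around "=" is ignored).
conf_value() {
    awk -F= -v key="$1" '
        { k = $1; gsub(/[ \t]/, "", k) }
        k == key { v = $2; gsub(/[ \t]/, "", v); print v; exit }
    ' "$2"
}

# k8s_sysctl_ok FILE: succeed only if all three settings are set to 1.
k8s_sysctl_ok() {
    for key in net.bridge.bridge-nf-call-iptables \
               net.bridge.bridge-nf-call-ip6tables \
               net.ipv4.ip_forward; do
        [ "$(conf_value "$key" "$1")" = "1" ] || return 1
    done
}
```

Usage: `k8s_sysctl_ok /etc/sysctl.d/k8s.conf && echo "sysctl file looks right"`. The live values can be read with `sysctl -n net.ipv4.ip_forward`.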

# Install the yum utilities

yum install -y yum-utils

# Add the Aliyun containerd/docker repository

yum-config-manager --add-repo https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

# Install the pinned containerd version

yum install -y containerd.io-1.6.24 --nogpgcheck

# Create the containerd configuration directory

mkdir -p /etc/containerd

# Generate the default configuration file

containerd config default > /etc/containerd/config.toml

# Point the pause image at the Aliyun registry (avoids failed pulls from registries hosted abroad)

sed -i 's|sandbox_image.*|sandbox_image = "registry.aliyuncs.com/google_containers/pause:3.9"|' /etc/containerd/config.toml

# Enable the systemd cgroup driver (it must match the kubelet's cgroup driver, which kubeadm sets to systemd by default)

sed -i 's|SystemdCgroup = false|SystemdCgroup = true|g' /etc/containerd/config.toml

# Configure registry mirrors for containerd (faster image pulls inside China)

sed -i '/\[plugins."io.containerd.grpc.v1.cri".registry\]/a \
[plugins."io.containerd.grpc.v1.cri".registry.mirrors."docker.io"]\
endpoint = [\
"https://uyah70su.mirror.aliyuncs.com",\
"https://docker.1ms.run",\
"https://docker.m.daocloud.io",\
"https://docker.xuanyuan.me",\
"https://mirror.tuna.tsinghua.edu.cn"\
]' /etc/containerd/config.toml

# Configure crictl (the command-line tool for managing containerd through the CRI)

cat > /etc/crictl.yaml <<EOF
runtime-endpoint: unix:///run/containerd/containerd.sock
image-endpoint: unix:///run/containerd/containerd.sock
timeout: 10
debug: false
EOF

# Create a systemd drop-in for containerd (starts it with the configuration file explicitly specified)

mkdir -p /etc/systemd/system/containerd.service.d

cat > /etc/systemd/system/containerd.service.d/override.conf <<EOF
[Service]
ExecStart=
ExecStart=/usr/bin/containerd --config /etc/containerd/config.toml
EOF

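# Reload the systemd unit configuration

systemctl daemon-reload

# Enable containerd at boot and start it now

systemctl enable --now containerd

# Check that containerd is running (prints active on success)

systemctl is-active containerd

# Check that it starts at boot (prints enabled on success)

systemctl is-enabled containerd

# Verify that the pause image was changed

grep sandbox_image /etc/containerd/config.toml

# Verify that the systemd cgroup driver is enabled

grep SystemdCgroup /etc/containerd/config.toml

# Verify the registry mirror configuration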

grep -A 6 'mirrors."docker.io"' /etc/containerd/config.toml
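
The grep checks above are read by eye. A scriptable variant might look like the sketch below; the helper name `containerd_cfg_ok` is hypothetical, and it only tests the two edits made by the earlier sed commands (the cgroup driver and the pause image), not the mirror list.

```shell
# containerd_cfg_ok FILE IMAGE: succeed only if FILE enables the
# systemd cgroup driver and sets sandbox_image to exactly IMAGE.
containerd_cfg_ok() {
    grep -Eq '^[[:space:]]*SystemdCgroup[[:space:]]*=[[:space:]]*true' "$1" &&
    grep -Fq "sandbox_image = \"$2\"" "$1"
}
```

Usage: `containerd_cfg_ok /etc/containerd/config.toml registry.aliyuncs.com/google_containers/pause:3.9 && echo "containerd config OK"`.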

# Add the Aliyun K8s yum repository

cat > /etc/yum.repos.d/kubernetes.repo << EOF
[kubernetes]
name=Kubernetes
baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64
enabled=1
gpgcheck=0
repo_gpgcheck=0
gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg
EOF

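# Install the three K8s components at the pinned version

yum install -y kubelet-1.28.2 kubeadm-1.28.2 kubectl-1.28.2

# Reload the systemd unit configuration

systemctl daemon-reload

# Enable kubelet at boot and start it now

systemctl enable --now kubelet

# Restart containerd so it runs with the final configuration

systemctl restart containerd

# Restart kubelet so all settings take effect

systemctl restart kubelet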

# Test pulling an nginx image

crictl pull docker.io/library/nginx:alpine

# Check the crictl version

crictl --version

# List the locally downloaded images

crictl images

# Remove the test image (frees up space)

crictl rmi docker.io/library/nginx:alpine

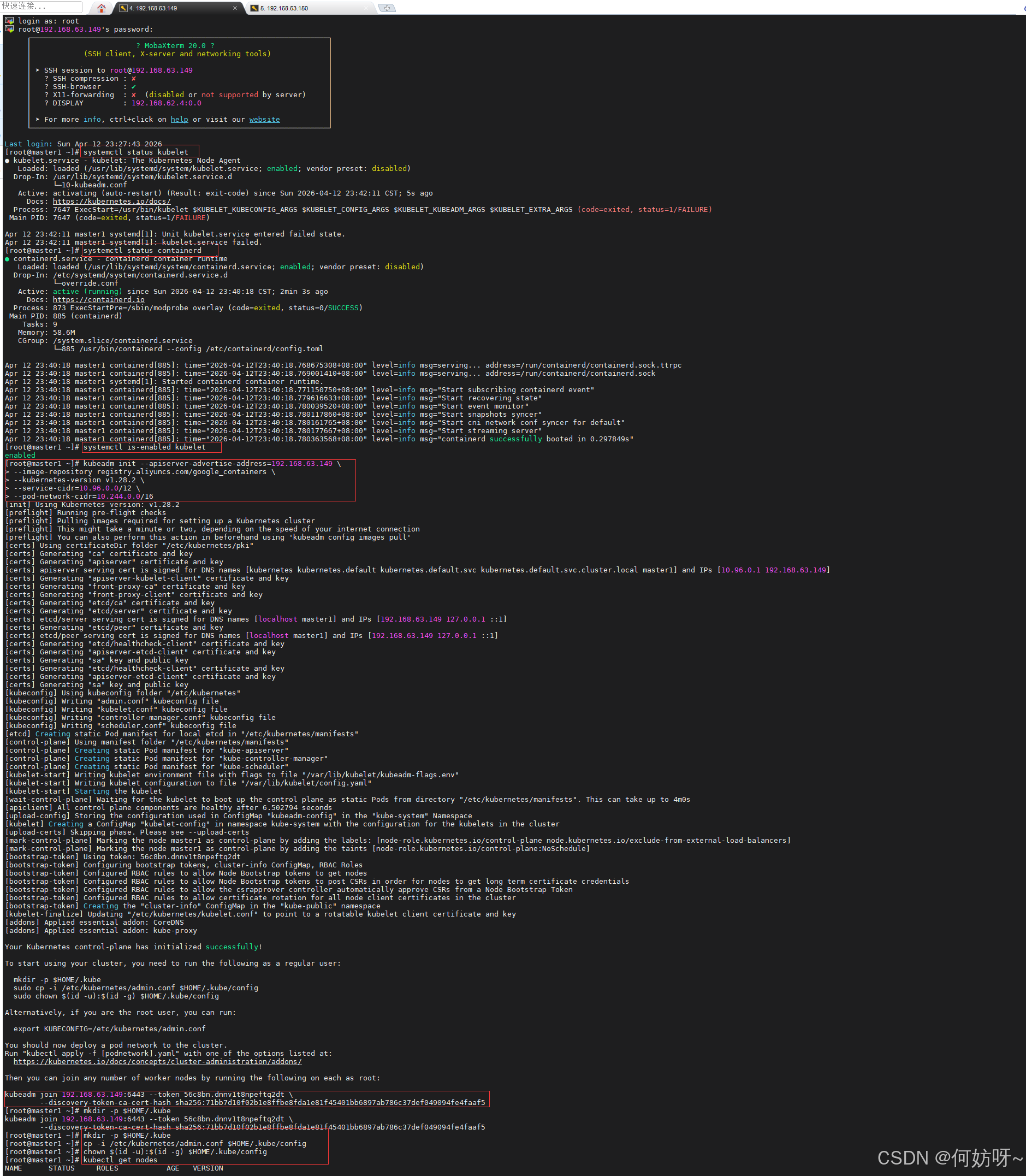

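1. Initialize the k8s cluster

# Check the kubelet status; before kubeadm init (or kubeadm join) it restarts every few seconds while it waits for its configuration, which is expected, and it shows active (running) afterwards

systemctl status kubelet

# Check the containerd status

systemctl status containerd

# Check that kubelet starts at boot; enabled means it is set up correctly

systemctl is-enabled kubelet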

# Initialize the K8s cluster (core command; run it on the Master and copy it in full)

kubeadm init --apiserver-advertise-address=192.168.63.149 \
--image-repository registry.aliyuncs.com/google_containers \
--kubernetes-version v1.28.2 \
--service-cidr=10.96.0.0/12 \
--pod-network-cidr=10.244.0.0/16

# On success it prints a node join command like the one below; copy and save it, the Node needs it

# kubeadm join 192.168.63.149:6443 --token 56c8bn.dnnv1t8npeftq2dt \
# --discovery-token-ca-cert-hash sha256:71bb7d10f02b1e8ffbe8fda1e81f45401bb6897ab786c37def049094fe4faaf5

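# Create the kubectl configuration directory

mkdir -p $HOME/.kube

# Copy the administrator kubeconfig

cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

# Make the current user the owner of the file

chown $(id -u):$(id -g) $HOME/.kube/config

# Check the node status (the node is NotReady at this point because no network plugin is installed yet)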

kubectl get nodes

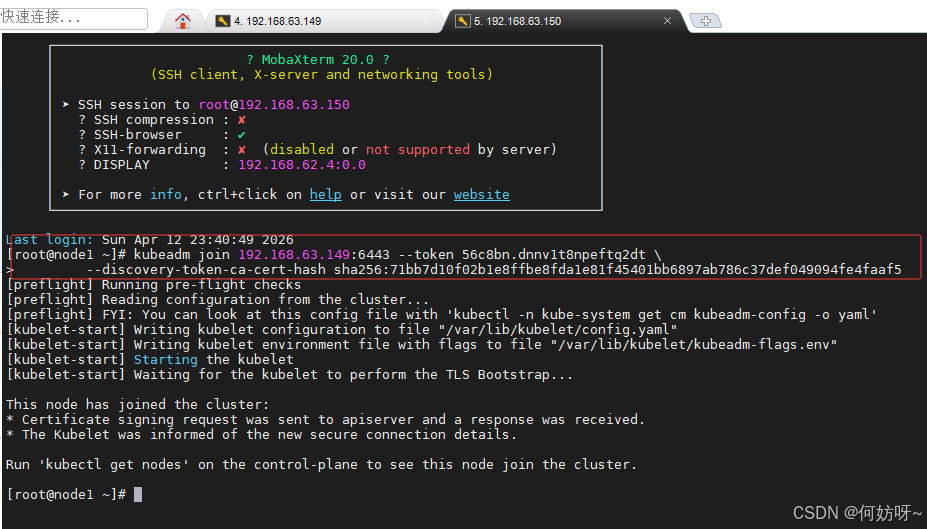

2. Join the k8s cluster

# Join 192.168.63.150 (the Node) to the K8s cluster

# Paste the kubeadm join command that the Master printed in the previous step and run it on the Node

# Example (replace the token and hash with your own):

kubeadm join 192.168.63.149:6443 --token 0fwxe9.2baovv9tdixdqbi9 \
--discovery-token-ca-cert-hash sha256:fc259ad77e45a87678b52f9833ed324d938bd7e093bb0b5868013ba7e056ce35
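
Bootstrap tokens created by `kubeadm init` expire after 24 hours by default, and a truncated copy of the token is a common reason for a failed join. The sketch below checks only the token's shape (6 characters, a dot, 16 characters, all lowercase letters or digits); the helper name `valid_bootstrap_token` is hypothetical and it cannot tell whether the token is still valid on the cluster.

```shell
# valid_bootstrap_token TOKEN: succeed only if TOKEN has the
# bootstrap-token shape [a-z0-9]{6}.[a-z0-9]{16}.
valid_bootstrap_token() {
    printf '%s\n' "$1" | grep -Eq '^[a-z0-9]{6}\.[a-z0-9]{16}$'
}
```

If the saved command was lost or the token has expired, running `kubeadm token create --print-join-command` on the Master prints a fresh, complete join command, and `kubeadm token list` shows the tokens that are still valid.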

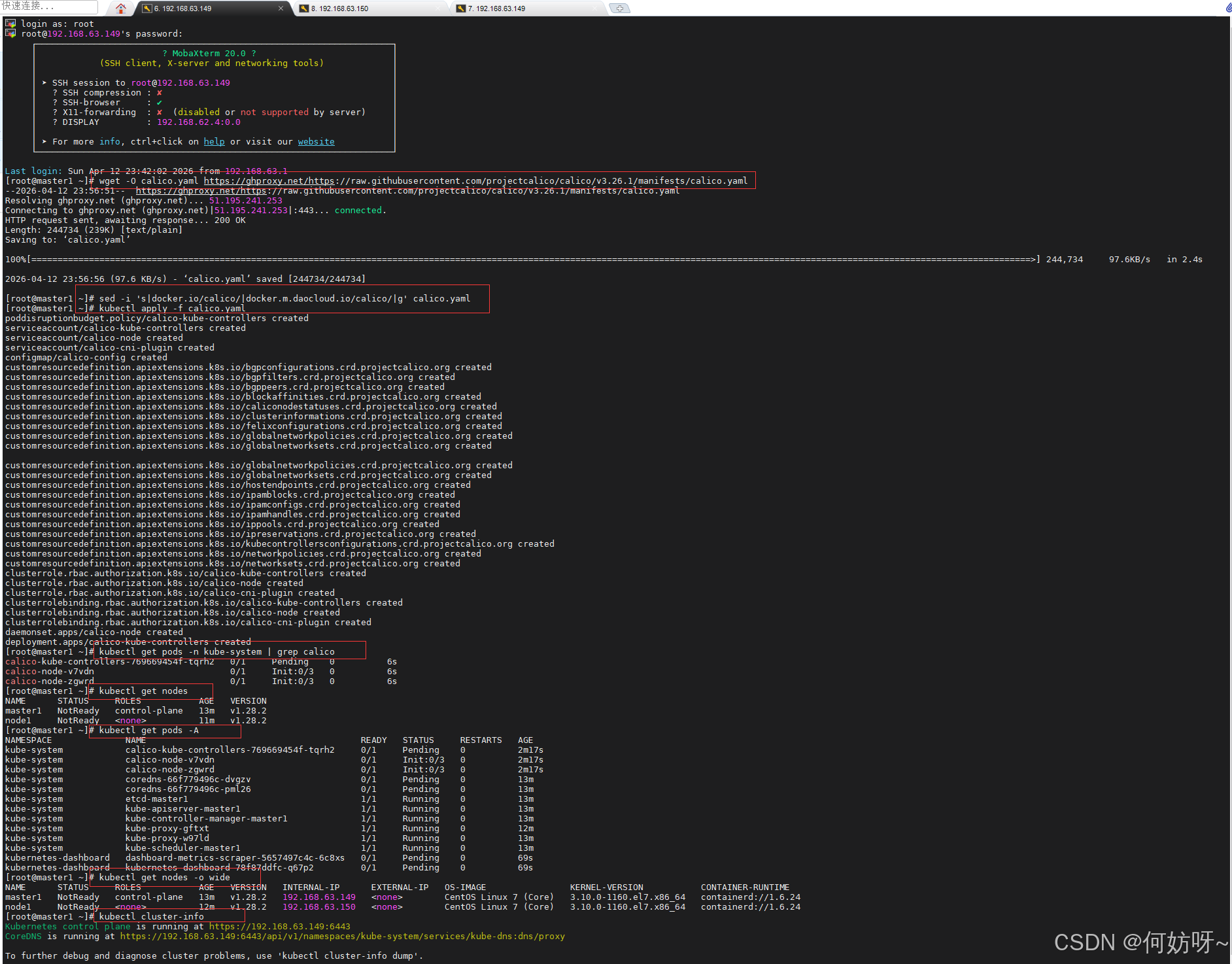

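III. Install the Calico network plugin

# On 192.168.63.149 (the Master), install the Calico network plugin (required before the nodes can become Ready)

# Download the Calico YAML manifest (through the ghproxy accelerator)

wget -O calico.yaml https://ghproxy.net/https://raw.githubusercontent.com/projectcalico/calico/v3.26.1/manifests/calico.yaml

# Point the image references at a domestic mirror to avoid pull failures

sed -i 's|docker.io/calico/|docker.m.daocloud.io/calico/|g' calico.yaml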

# Deploy the Calico network plugin

kubectl apply -f calico.yaml

# Check the status of the Calico Pods

kubectl get pods -n kube-system | grep calico

# Check the node status; after 1 to 3 minutes the nodes change from NotReady to Ready

kubectl get nodes
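
Rather than re-running the command by hand, the wait can be scripted. The filter below is a small sketch (the function name `not_ready_nodes` is hypothetical); it treats any STATUS other than exactly `Ready`, including `Ready,SchedulingDisabled`, as not ready.

```shell
# not_ready_nodes: read `kubectl get nodes --no-headers` output on stdin
# and print the name of every node whose STATUS column is not "Ready".
not_ready_nodes() {
    awk '$2 != "Ready" {print $1}'
}
```

Usage: `kubectl get nodes --no-headers | not_ready_nodes` prints nothing once every node is Ready. Alternatively, `kubectl wait --for=condition=Ready nodes --all --timeout=300s` blocks until all nodes report Ready or the timeout expires.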

# Check the status of all Pods

kubectl get pods -A

# Show detailed node information

kubectl get nodes -o wide

# Show the K8s cluster information

kubectl cluster-info

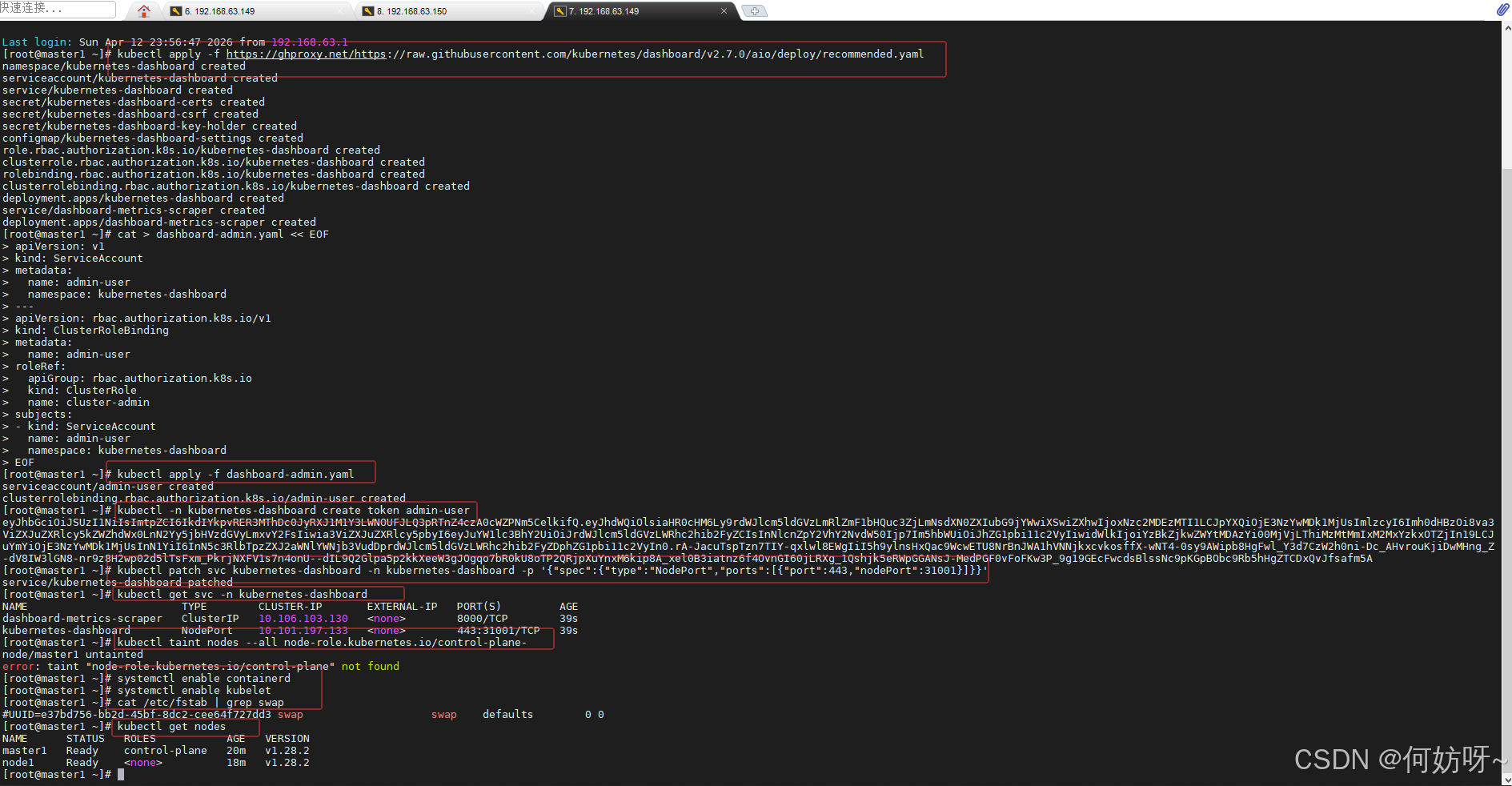

IV. Deploy the Dashboard

# On 192.168.63.149 (the Master), deploy the K8s Dashboard

# Apply the official Dashboard YAML

kubectl apply -f https://ghproxy.net/https://raw.githubusercontent.com/kubernetes/dashboard/v2.7.0/aio/deploy/recommended.yaml

# Create an administrator account (bound to cluster-admin, the highest privilege in the cluster)

cat > dashboard-admin.yaml << EOF
apiVersion: v1
kind: ServiceAccount
metadata:
  name: admin-user
  namespace: kubernetes-dashboard
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: admin-user
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: cluster-admin
subjects:
- kind: ServiceAccount
  name: admin-user
  namespace: kubernetes-dashboard
EOF

# Apply the administrator account configuration

kubectl apply -f dashboard-admin.yaml

# Get the login token (copy the long string; it is used to log in to the Dashboard)

kubectl -n kubernetes-dashboard create token admin-user
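
Tokens issued by `kubectl create token` are short-lived (about one hour by default, and the API server may cap the lifetime), so a token copied earlier can stop working, for example after the reboot test later on. A possible wrapper that requests a longer lifetime through the `--duration` flag is sketched below; the function name `dashboard_token` is hypothetical.

```shell
# dashboard_token [DURATION]: request a token for the admin-user
# ServiceAccount, valid for DURATION (for example 24h); defaults to 1h.
dashboard_token() {
    kubectl -n kubernetes-dashboard create token admin-user --duration="${1:-1h}"
}
```

Usage: `dashboard_token 24h` prints a token that should stay valid for a day, provided the API server accepts that duration.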

# Switch the Dashboard Service to NodePort so it is reachable from outside the cluster, with the port fixed at 31001

kubectl patch svc kubernetes-dashboard -n kubernetes-dashboard -p '{"spec":{"type":"NodePort","ports":[{"port":443,"nodePort":31001}]}}'

# Check the Dashboard Service and its port

kubectl get svc -n kubernetes-dashboard
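
To confirm the patch took effect without reading the table, the node port can be queried directly with a JSONPath expression. The sketch below builds the access URL from it; the function name `dashboard_url` is hypothetical, and it assumes the Service exposes a single port, as in the official manifest used here.

```shell
# dashboard_url HOST: print the HTTPS URL of the Dashboard on HOST,
# using the nodePort currently assigned to the Service.
dashboard_url() {
    port=$(kubectl get svc kubernetes-dashboard -n kubernetes-dashboard \
        -o jsonpath='{.spec.ports[0].nodePort}') || return 1
    printf 'https://%s:%s\n' "$1" "$port"
}
```

Usage: `dashboard_url 192.168.63.149`.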

# Remove the control-plane taint so Pods can be scheduled on the Master (optional; for test environments)

kubectl taint nodes --all node-role.kubernetes.io/control-plane-

#检查集群健康

# 确保核心服务开机自启

systemctl enable containerd

systemctl enable kubelet

# 确认交换分区已永久关闭

cat /etc/fstab | grep swap

#实时查看所有命名空间 Pod(自动刷新)

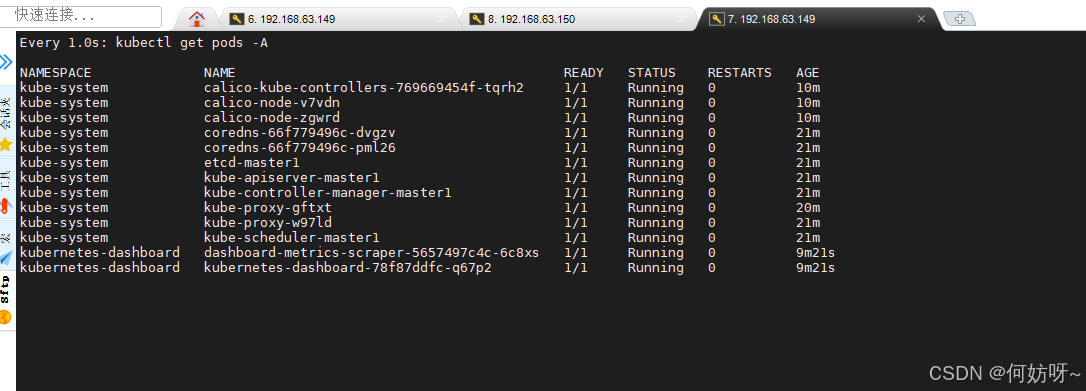

watch -n 1 kubectl get pods -A

# 最终查看节点状态,全部Ready代表集群部署成功

kubectl get nodes

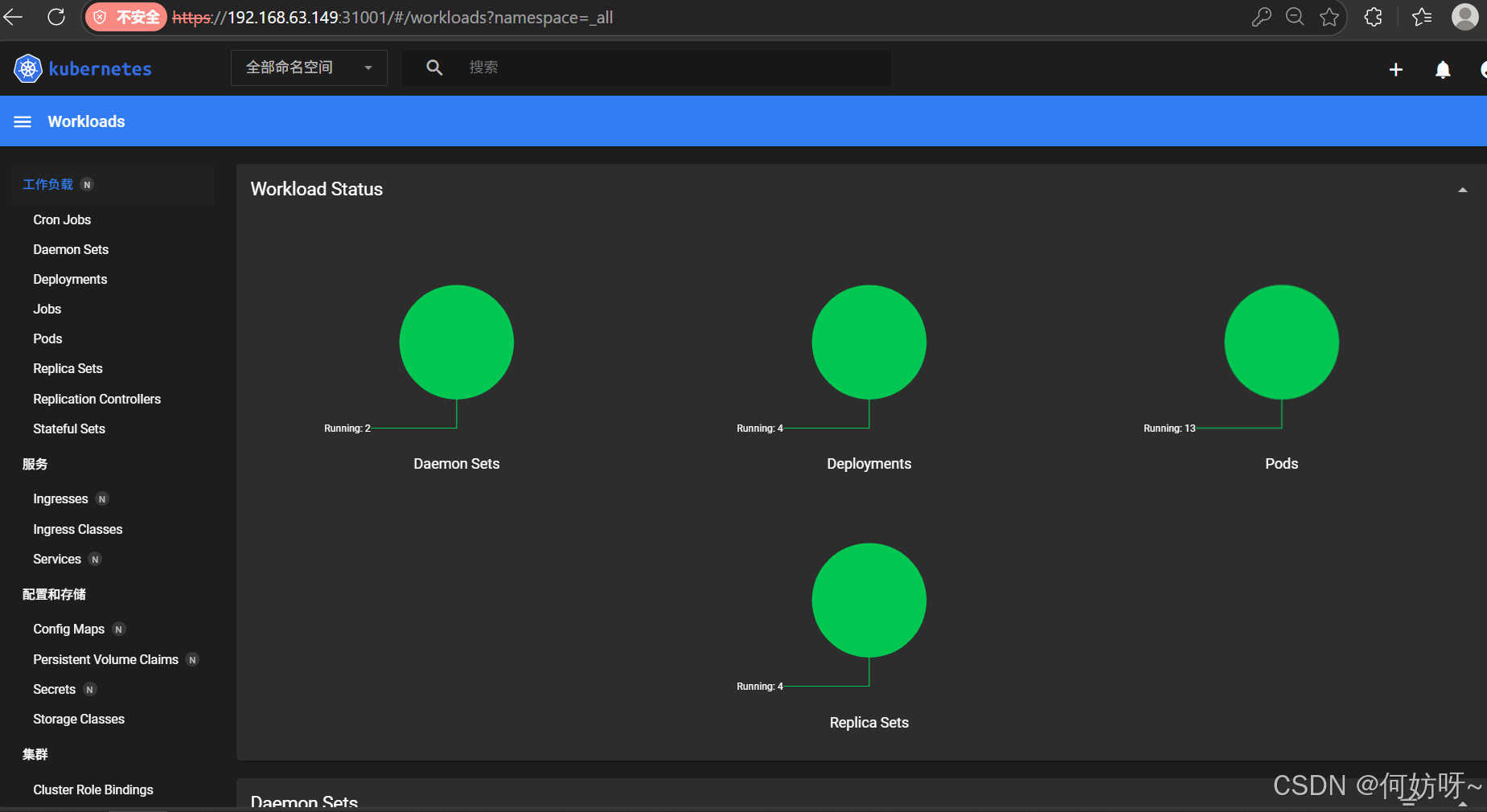

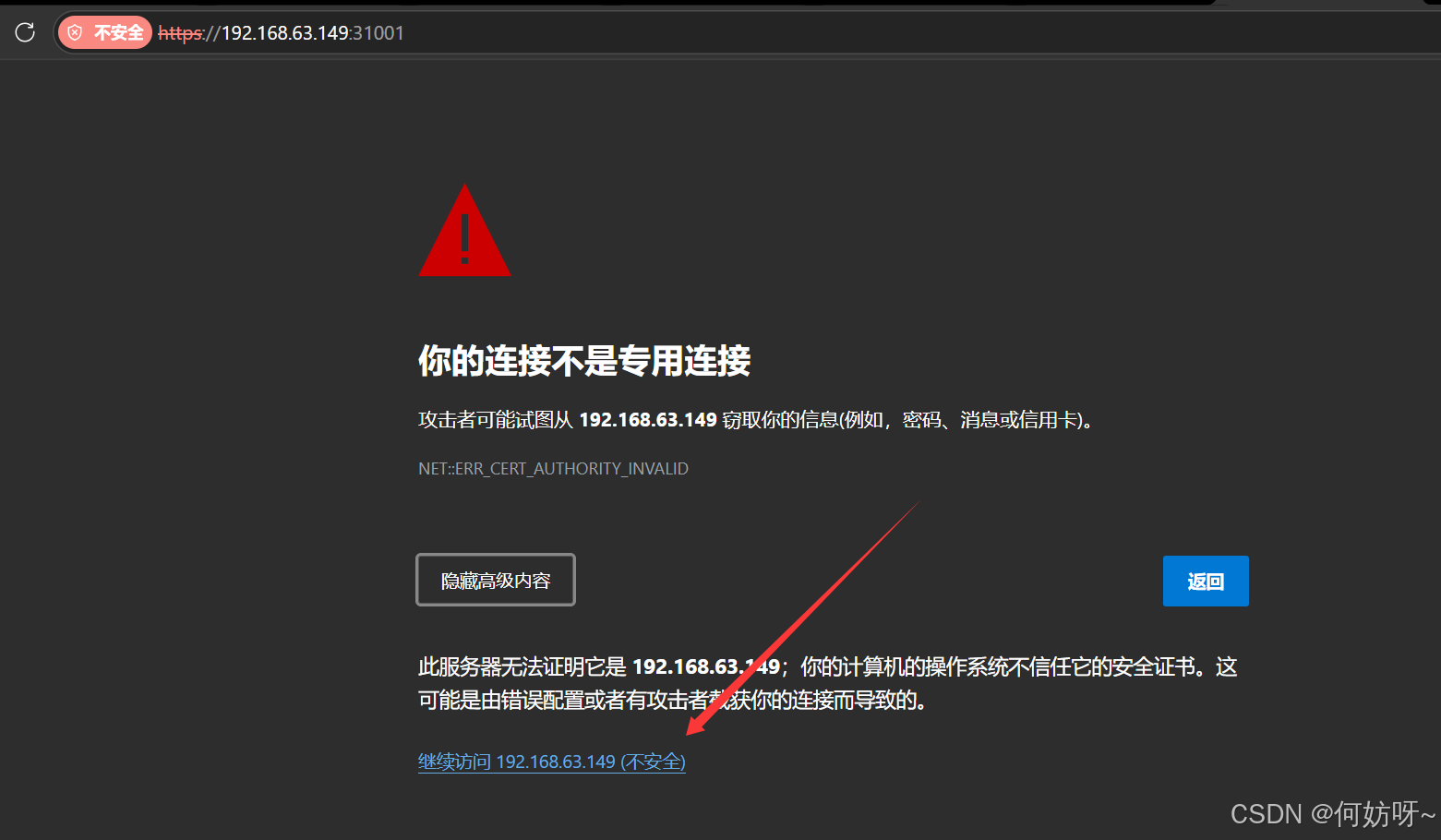

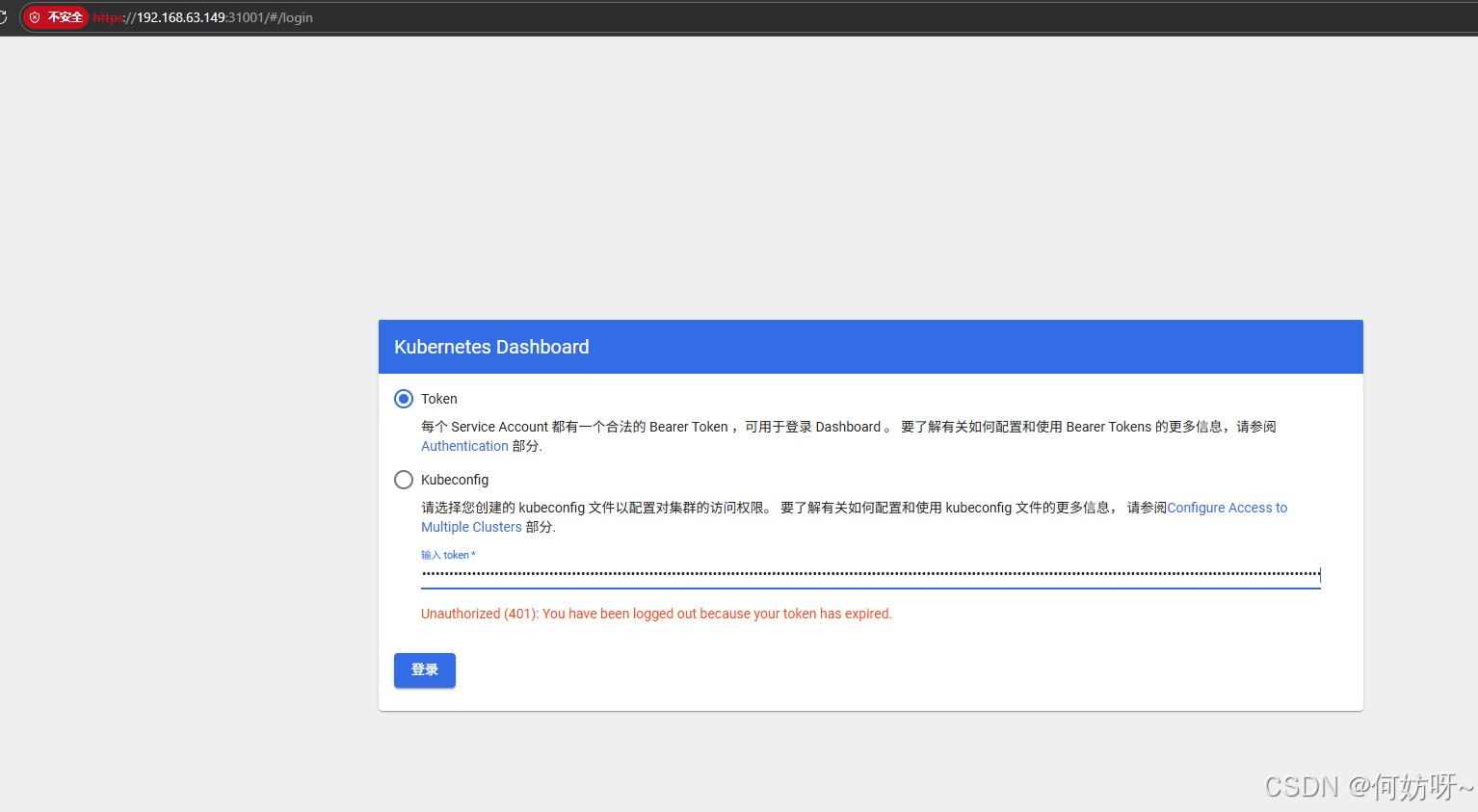

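V. Verify the deployment

# Access URL (HTTPS is required; open it directly in Chrome or Edge and accept the warning for the self-signed certificate)

https://192.168.63.149:31001

Enter the Token to log in.

# Reboot the server to verify that the cluster recovers automatically at boot

reboot

Deployment succeeded.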