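I. Server Environment and Initialization

A single-master k8s cluster requires every server to have a static IP address that will not change.

The master server needs at least 4 GB of memory.

This walkthrough uses one master and two nodes.

1. Flush the default iptables rules and disable the firewall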

iptables -t nat -F

iptables -t filter -F

systemctl disable --now firewalld

2. Disable SELinux

setenforce 0

sed -i 's/SELINUX=enforcing/SELINUX=disabled/' /etc/selinux/config
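The sed command above only changes the file when the current setting is enforcing. A minimal sketch of a slightly more general variant follows. The function name set_selinux_disabled is hypothetical, and it takes the config path as an argument so it can be tried on a copy of the file first.

```shell
#!/usr/bin/env bash
# Hypothetical helper: set SELINUX=disabled in an SELinux config file,
# whether the current value is enforcing or permissive.
set_selinux_disabled() {
    local cfg="$1"
    local tmp
    tmp="$(mktemp)"
    # Only the SELINUX= line is rewritten; SELINUXTYPE= and comments are left alone.
    sed -E 's/^SELINUX=(enforcing|permissive)[[:space:]]*$/SELINUX=disabled/' "$cfg" > "$tmp"
    cat "$tmp" > "$cfg"
    rm -f "$tmp"
}

# Example (as root): set_selinux_disabled /etc/selinux/config
```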

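3. Disable swap on every server (by default the kubelet will not run with swap enabled, because swapping makes pod memory usage and performance unpredictable; the k8s installer also checks whether swap is on and by default refuses to install if it is)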

swapoff -a

sed -i 's/.*swap.*/#&/' /etc/fstab
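The sed expression above comments out every line that contains the string swap, including lines that are already commented. As a sketch of a narrower approach, the function below (disable_swap_entries is a hypothetical name) only comments out active entries whose filesystem type field is swap. It takes the file path as an argument so it can be tested on a copy of /etc/fstab.

```shell
#!/usr/bin/env bash
# Hypothetical helper: comment out active swap entries in an fstab-style file.
disable_swap_entries() {
    local fstab="$1"
    local tmp
    tmp="$(mktemp)"
    # Match lines that do not start with '#' and whose third
    # whitespace-separated field (the filesystem type) is exactly "swap".
    sed -E 's/^([^#[:space:]][^[:space:]]*[[:space:]]+[^[:space:]]+[[:space:]]+swap[[:space:]].*)$/#\1/' \
        "$fstab" > "$tmp"
    cat "$tmp" > "$fstab"
    rm -f "$tmp"
}

# Example (as root): swapoff -a && disable_swap_entries /etc/fstab
```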

4. Set the hostname on each server (run each command on its own machine)

hostnamectl set-hostname k8s-master

hostnamectl set-hostname k8s-node1

hostnamectl set-hostname k8s-node2

5. Write the hosts file

cat <<EOF > /etc/hosts

127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4

::1 localhost localhost.localdomain localhost6 localhost6.localdomain6

192.168.11.160 k8s-master

192.168.11.136 k8s-node1

192.168.11.137 k8s-node2

EOF
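The heredoc above overwrites /etc/hosts completely. If the file already holds entries worth keeping, an append-if-missing approach may be safer. The sketch below assumes a hypothetical helper named add_host_entry, which takes the file path as an argument so it can be tried on a copy.

```shell
#!/usr/bin/env bash
# Hypothetical helper: append "IP NAME" to a hosts file only if NAME is not
# already listed as a hostname on a non-comment line.
add_host_entry() {
    local hosts_file="$1" ip="$2" name="$3"
    if awk -v n="$name" '
        $1 !~ /^#/ { for (i = 2; i <= NF; i++) if ($i == n) found = 1 }
        END { exit found ? 0 : 1 }
    ' "$hosts_file"; then
        return 0    # entry already present, nothing to do
    fi
    printf '%s %s\n' "$ip" "$name" >> "$hosts_file"
}

# Example (as root), using the addresses from this walkthrough:
#   add_host_entry /etc/hosts 192.168.11.160 k8s-master
#   add_host_entry /etc/hosts 192.168.11.136 k8s-node1
#   add_host_entry /etc/hosts 192.168.11.137 k8s-node2
```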

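6. Set kernel parameters (enable IP forwarding and let bridged IPv4 and IPv6 traffic pass through iptables, then apply the settings; modprobe br_netfilter is a temporary command whose effect is lost on reboot)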

cat <<EOF >> /etc/sysctl.conf

net.ipv4.ip_forward=1

net.bridge.bridge-nf-call-ip6tables = 1

net.bridge.bridge-nf-call-iptables = 1

EOF

modprobe br_netfilter

sysctl net.bridge.bridge-nf-call-ip6tables=1

sysctl net.bridge.bridge-nf-call-iptables=1

sysctl -p

After running the commands, open the file with vim /etc/sysctl.conf

and delete the line net.ipv4.ip_forward=0 if it is present

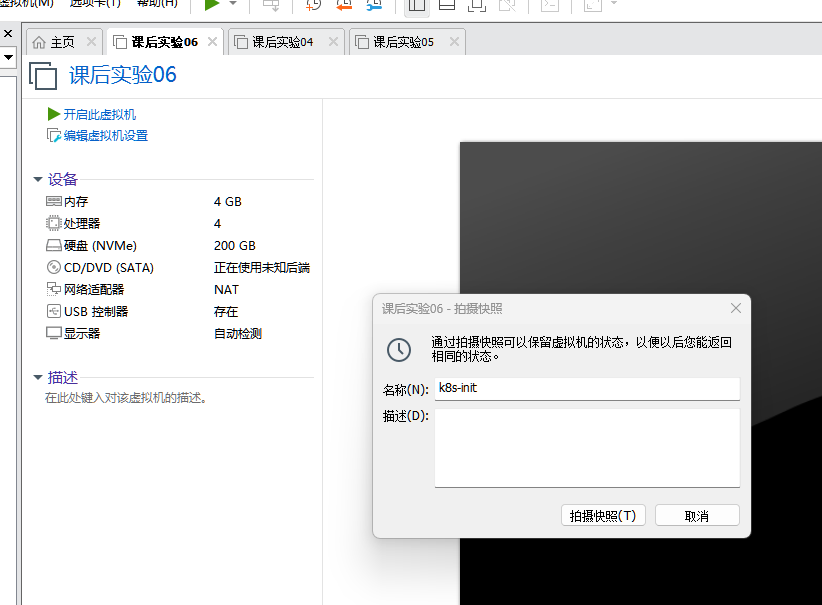
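Because modprobe br_netfilter does not survive a reboot, one common way to make the setup persistent is to list the module under /etc/modules-load.d (read by systemd at boot) and keep the sysctl keys in their own file under /etc/sysctl.d (loaded by sysctl --system). The following is a sketch under those assumptions. The function name and the k8s.conf file names are hypothetical, and the optional argument is a root prefix for dry runs.

```shell
#!/usr/bin/env bash
# Hypothetical helper: persist the kernel module and sysctl settings used above.
# With no argument it writes to the real /etc; pass a directory to do a dry run.
write_k8s_kernel_config() {
    local root="${1:-}"
    mkdir -p "$root/etc/modules-load.d" "$root/etc/sysctl.d"
    # Load br_netfilter automatically at boot.
    printf 'br_netfilter\n' > "$root/etc/modules-load.d/k8s.conf"
    # Same three keys as in the step above.
    cat > "$root/etc/sysctl.d/k8s.conf" <<'EOF'
net.ipv4.ip_forward = 1
net.bridge.bridge-nf-call-ip6tables = 1
net.bridge.bridge-nf-call-iptables = 1
EOF
}

# Example (as root): write_k8s_kernel_config && modprobe br_netfilter && sysctl --system
```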

7. After completing the steps above, shut down the servers

init 0

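Take a snapshot. The steps above are the prerequisites for deploying k8s, and the snapshot provides a restore point if a server fails later.

Images and files will need to be transferred between the servers later, so generate an SSH key pair and copy the public key to the other nodes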

ssh-keygen

ssh-copy-id k8s-node1

ssh-copy-id k8s-node2

Install the required tool: yum install -y lrzsz

II. Install the Docker Environment

1. Configure the Aliyun repository on every server

cat <<'EOF' > /etc/yum.repos.d/docker-ce.repo
[docker-ce-stable]
name=Docker CE Stable - $basearch
baseurl=https://mirrors.aliyun.com/docker-ce/linux/centos/9/x86_64/stable/
enabled=1
gpgcheck=1
gpgkey=https://mirrors.aliyun.com/docker-ce/linux/centos/gpg
EOF

2. Install Docker on every server

yum install -y docker-ce-28.5.2

3. Configure registry mirrors on every server

mkdir -p /etc/docker

cat <<EOF > /etc/docker/daemon.json

{

"registry-mirrors": [

"https://0vmzj3q6.mirror.aliyuncs.com",

"https://docker.m.daocloud.io",

"https://mirror.baidubce.com",

"https://dockerhub.timeweb.cloud",

"https://vlgh0kqj.mirror.aliyuncs.com"

]

}

EOF

systemctl daemon-reload

systemctl enable --now docker

4. Install cri-dockerd on every server

Download: https://github.com/Mirantis/cri-dockerd/releases

yum localinstall cri-dockerd-0.3.8-3.el8.x86_64.rpm -y

Edit the cri-docker unit file to set the pause (pod infrastructure) image; without it k8s cannot run any container

vim /usr/lib/systemd/system/cri-docker.service

ExecStart=/usr/bin/cri-dockerd --container-runtime-endpoint fd:// --pod-infra-container-image=registry.aliyuncs.com/google_containers/pause:3.9
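Editing the unit file under /usr/lib/systemd/system works, but a package update may overwrite it. An alternative is a systemd drop-in under /etc/systemd/system, sketched below. The function name and the drop-in file name 10-pause-image.conf are hypothetical, and the optional argument is a root prefix for dry runs.

```shell
#!/usr/bin/env bash
# Hypothetical helper: write a systemd drop-in that overrides ExecStart for
# cri-docker.service instead of editing the packaged unit file.
write_cri_docker_dropin() {
    local root="${1:-}"
    local dir="$root/etc/systemd/system/cri-docker.service.d"
    mkdir -p "$dir"
    cat > "$dir/10-pause-image.conf" <<'EOF'
[Service]
# An empty ExecStart= clears the packaged command before the new one is set.
ExecStart=
ExecStart=/usr/bin/cri-dockerd --container-runtime-endpoint fd:// --pod-infra-container-image=registry.aliyuncs.com/google_containers/pause:3.9
EOF
}

# Example (as root): write_cri_docker_dropin && systemctl daemon-reload
```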

5. Start cri-dockerd on every server

systemctl daemon-reload

systemctl enable --now cri-docker

--------------------------------- The components k8s needs to run are now installed ---------------------------------

III. Install kubeadm and kubectl

1. Configure the yum repository on every server

cat <<EOF | tee /etc/yum.repos.d/kubernetes.repo

[kubernetes]

name=Kubernetes

baseurl=https://mirrors.aliyun.com/kubernetes-new/core/stable/v1.28/rpm/

enabled=1

gpgcheck=1

gpgkey=https://mirrors.aliyun.com/kubernetes-new/core/stable/v1.28/rpm/repodata/repomd.xml.key

EOF

2. Install kubelet, kubeadm and kubectl on every server

yum install -y kubelet kubeadm kubectl

3. Enable kubelet to start at boot on every server

systemctl enable kubelet && systemctl start kubelet

4. Enable command completion for kubeadm and kubectl (not needed on openEuler)

yum install -y bash-completion

source <(kubeadm completion bash)

source <(kubectl completion bash)

echo -e "source <(kubeadm completion bash)\nsource <(kubectl completion bash)" >> /root/.bashrc

source /root/.bashrc

IV. Deploy the Master Node

1. Run the following command on k8s-master (--apiserver-advertise-address specifies which address runs the apiserver)

kubeadm init --apiserver-advertise-address=192.168.11.160 --image-repository=registry.aliyuncs.com/google_containers --kubernetes-version=v1.28.15 --pod-network-cidr=10.244.0.0/16 --service-cidr=10.96.0.0/12 --cri-socket=unix:///var/run/cri-dockerd.sock

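When the command finishes, the end of its output shows the kubeadm join command, including the token and CA certificate hash that the worker nodes need.

2. Create the .kube directory in the home directory

mkdir -p ~/.kube

Copy the admin kubeconfig file into it

cp /etc/kubernetes/admin.conf ~/.kube/config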
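The two commands above can be wrapped in a small function. The sketch below uses a hypothetical name, install_kubeconfig, and takes the source file and home directory as optional arguments so it can be tried without touching the real paths. It also restricts the file mode, since admin.conf carries cluster-admin credentials.

```shell
#!/usr/bin/env bash
# Hypothetical helper: install an admin kubeconfig for the current user.
# Arguments default to the paths used in this walkthrough.
install_kubeconfig() {
    local src="${1:-/etc/kubernetes/admin.conf}"
    local home_dir="${2:-$HOME}"
    mkdir -p "$home_dir/.kube"
    cp "$src" "$home_dir/.kube/config"
    # The file holds cluster-admin credentials, so keep it private.
    chmod 600 "$home_dir/.kube/config"
}

# Example (on k8s-master, as root): install_kubeconfig
```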

V. Deploy the Worker Nodes

Run the following on both k8s-node1 and k8s-node2 (the token and hash differ for every master, so take them from your own kubeadm init output)

kubeadm join 192.168.11.160:6443 --token viv0by.3c0q3zyy4yxlim1o \
    --discovery-token-ca-cert-hash sha256:e297e12ed5e0f3d35bad72efeabbea75879853d35bfd4e5dab723c78fb0f97d8 \
    --cri-socket=unix:///var/run/cri-dockerd.sock
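If the original join output has been lost, running kubeadm token create --print-join-command on the master prints a fresh join command, to which the --cri-socket option still has to be appended. The sketch below shows how the pieces fit together. build_join_command is a hypothetical helper that only assembles and prints the command line; it does not run kubeadm.

```shell
#!/usr/bin/env bash
# Hypothetical helper: assemble a kubeadm join command line from its parts.
build_join_command() {
    local endpoint="$1" token="$2" ca_hash="$3"
    local socket="${4:-unix:///var/run/cri-dockerd.sock}"
    # Bootstrap tokens have the form <6 chars>.<16 chars>, lowercase alphanumeric.
    if ! printf '%s' "$token" | grep -Eq '^[a-z0-9]{6}\.[a-z0-9]{16}$'; then
        echo "invalid bootstrap token format: $token" >&2
        return 1
    fi
    printf 'kubeadm join %s --token %s --discovery-token-ca-cert-hash %s --cri-socket=%s\n' \
        "$endpoint" "$token" "$ca_hash" "$socket"
}

# Example:
#   build_join_command 192.168.11.160:6443 <token> sha256:<hash>
```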

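VI. Deploy the Network Plugin

The Calico images currently cannot be downloaded from within China. Create the Calico resources on the master first, check which images are required, then download them on a server with external network access. The procedure is as follows:

After copying the image archives onto the server, run: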

docker load -i calico.tar

docker load -i calico-apiserver.tar

Copy the two manifest files to the master server

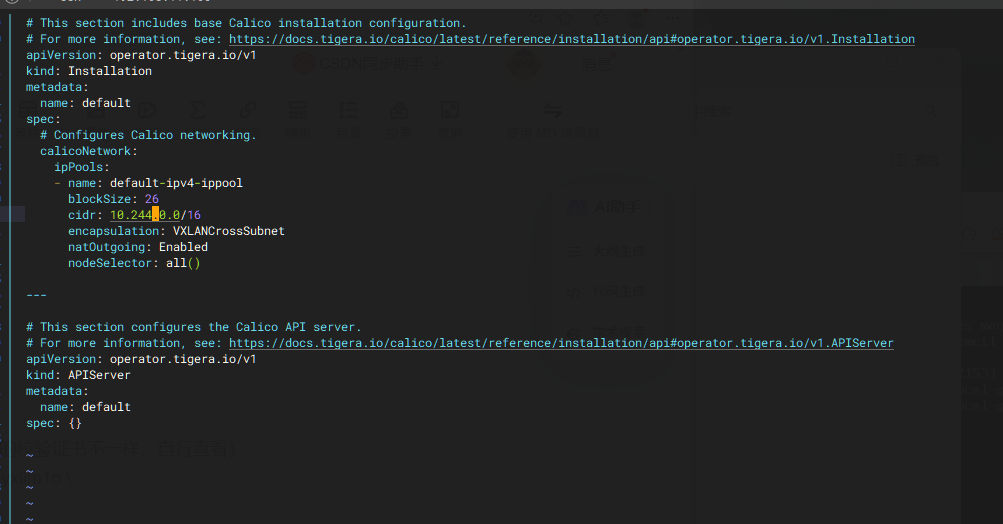

Edit the file: vim custom-resources.yaml

Change the cidr value in the file to the pod network range (10.244.0.0/16, as passed to --pod-network-cidr)
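The cidr edit can also be scripted instead of done by hand in vim. The following is a sketch only: set_pod_cidr is a hypothetical helper, and it assumes the manifest stores the pool range on a line of the form cidr: <value>, which should be confirmed against the actual custom-resources.yaml before use.

```shell
#!/usr/bin/env bash
# Hypothetical helper: rewrite every "cidr: <value>" line in a manifest.
set_pod_cidr() {
    local manifest="$1" new_cidr="$2"
    local tmp
    tmp="$(mktemp)"
    # '#' is used as the sed delimiter because the CIDR itself contains '/'.
    # The optional "- " covers the case where cidr is the first key of a list item.
    sed -E "s#^([[:space:]]*(-[[:space:]]+)?cidr:[[:space:]]*).*\$#\1${new_cidr}#" \
        "$manifest" > "$tmp"
    cat "$tmp" > "$manifest"
    rm -f "$tmp"
}

# Example: set_pod_cidr custom-resources.yaml 10.244.0.0/16
```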

First run kubectl create -f tigera-operator.yaml

Then run kubectl create -f custom-resources.yaml

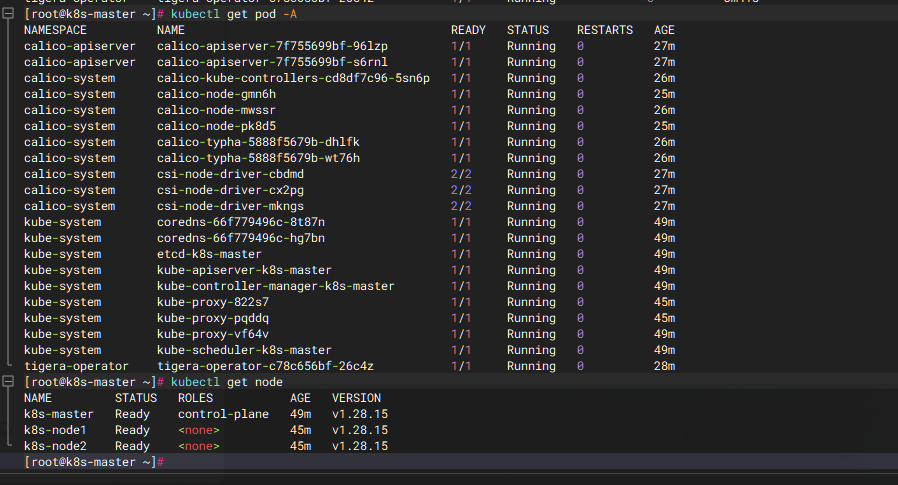

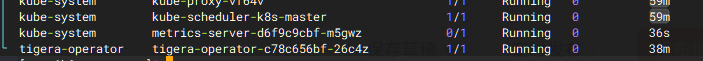

Wait until all Calico components have started (check with kubectl get pod -A)

Once every pod is Running and Ready, the k8s cluster is ready to use

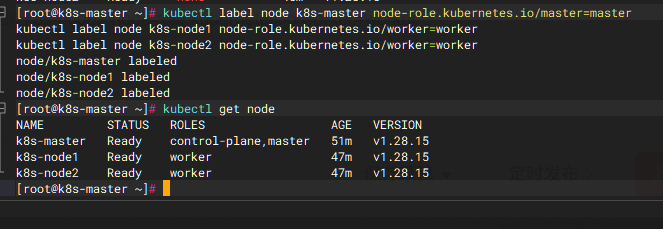
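Scanning a long pod list by eye is error-prone, so a small filter can help. The sketch below assumes the default column order of kubectl get pod -A --no-headers (NAMESPACE, NAME, READY, STATUS, ...). list_unready_pods is a hypothetical helper that reads that output on stdin and prints only the pods that are not fully ready.

```shell
#!/usr/bin/env bash
# Hypothetical helper: read `kubectl get pod -A --no-headers` output on stdin
# and print the pods that are not fully ready. Exit status is 0 when none are.
list_unready_pods() {
    awk '
        {
            split($3, r, "/")    # READY column, e.g. "1/1"
            ok = ($4 == "Running" && r[1] == r[2]) || $4 == "Completed"
            if (!ok) { print $1 "/" $2 " " $3 " " $4; bad = 1 }
        }
        END { exit bad ? 1 : 0 }
    '
}

# Example: kubectl get pod -A --no-headers | list_unready_pods
```

An empty result with exit status 0 means every pod is either Running with all containers ready or Completed.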

A node shows NotReady when no network plugin has been installed. The ROLES column shows "none"; the role names can be set with the following commands:

kubectl label node k8s-master node-role.kubernetes.io/master=master

kubectl label node k8s-node1 node-role.kubernetes.io/worker=worker

kubectl label node k8s-node2 node-role.kubernetes.io/worker=worker

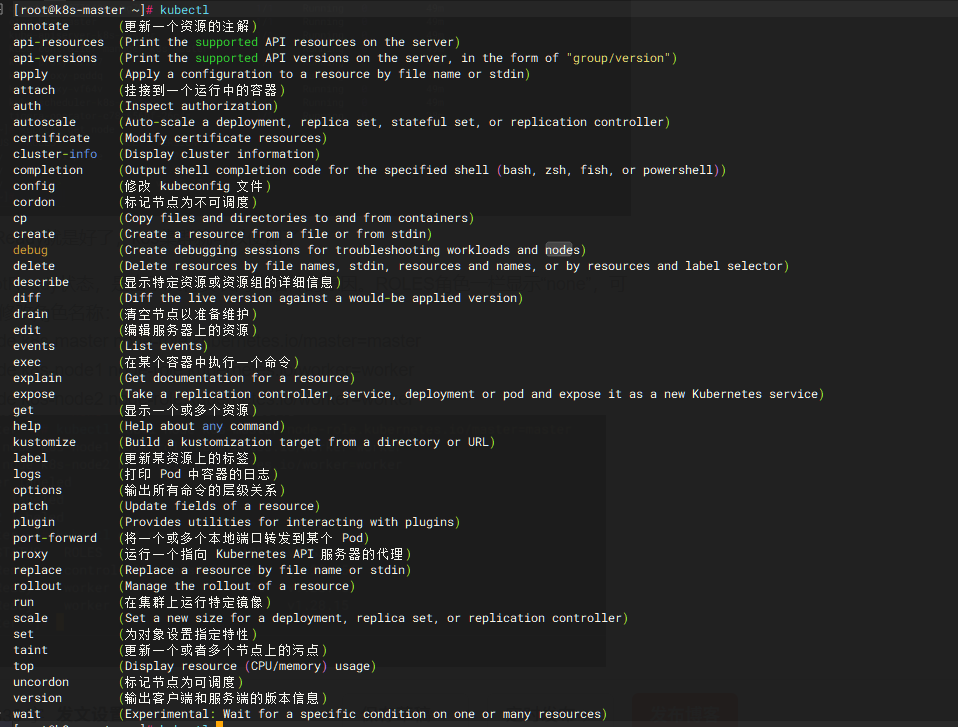

k8s commands

VII. Deploy Metrics Server

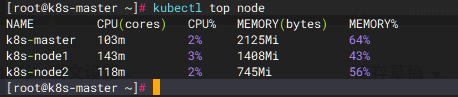

Collecting system resource metrics requires Metrics-server, which gathers CPU and memory usage for nodes and pods.

Copy the front-proxy-ca.crt file from k8s-master to all worker nodes

scp /etc/kubernetes/pki/front-proxy-ca.crt k8s-node1:/etc/kubernetes/pki/

scp /etc/kubernetes/pki/front-proxy-ca.crt k8s-node2:/etc/kubernetes/pki/

vim metrics.yaml

Write the following content into the file:

apiVersion: v1
kind: ServiceAccount
metadata:
  labels:
    k8s-app: metrics-server
  name: metrics-server
  namespace: kube-system
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  labels:
    k8s-app: metrics-server
    rbac.authorization.k8s.io/aggregate-to-admin: "true"
    rbac.authorization.k8s.io/aggregate-to-edit: "true"
    rbac.authorization.k8s.io/aggregate-to-view: "true"
  name: system:aggregated-metrics-reader
rules:
- apiGroups:
  - metrics.k8s.io
  resources:
  - pods
  - nodes
  verbs:
  - get
  - list
  - watch
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  labels:
    k8s-app: metrics-server
  name: system:metrics-server
rules:
- apiGroups:
  - ""
  resources:
  - nodes/metrics
  verbs:
  - get
- apiGroups:
  - ""
  resources:
  - pods
  - nodes
  verbs:
  - get
  - list
  - watch
---
apiVersion: rbac.authorization.k8s.io/v1
kind: RoleBinding
metadata:
  labels:
    k8s-app: metrics-server
  name: metrics-server-auth-reader
  namespace: kube-system
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: Role
  name: extension-apiserver-authentication-reader
subjects:
- kind: ServiceAccount
  name: metrics-server
  namespace: kube-system
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  labels:
    k8s-app: metrics-server
  name: metrics-server:system:auth-delegator
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: system:auth-delegator
subjects:
- kind: ServiceAccount
  name: metrics-server
  namespace: kube-system
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  labels:
    k8s-app: metrics-server
  name: system:metrics-server
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: system:metrics-server
subjects:
- kind: ServiceAccount
  name: metrics-server
  namespace: kube-system
---
apiVersion: v1
kind: Service
metadata:
  labels:
    k8s-app: metrics-server
  name: metrics-server
  namespace: kube-system
spec:
  ports:
  - name: https
    port: 443
    protocol: TCP
    targetPort: https
  selector:
    k8s-app: metrics-server
---
apiVersion: apps/v1
kind: Deployment
metadata:
  labels:
    k8s-app: metrics-server
  name: metrics-server
  namespace: kube-system
spec:
  selector:
    matchLabels:
      k8s-app: metrics-server
  strategy:
    rollingUpdate:
      maxUnavailable: 0
  template:
    metadata:
      labels:
        k8s-app: metrics-server
    spec:
      containers:
      - args:
        - --cert-dir=/tmp
        - --secure-port=10250
        - --kubelet-preferred-address-types=InternalIP,ExternalIP,Hostname
        - --kubelet-use-node-status-port
        - --metric-resolution=15s
        - --kubelet-insecure-tls    # added: skip verification of the kubelet serving certificate
        image: registry.aliyuncs.com/google_containers/metrics-server:v0.7.2    # changed to a mirror reachable from China
        imagePullPolicy: IfNotPresent
        livenessProbe:
          failureThreshold: 3
          tcpSocket:        # probe type changed to tcpSocket
            port: 10250     # probe port changed
          periodSeconds: 10
        name: metrics-server
        ports:
        - containerPort: 10250
          name: https
          protocol: TCP
        readinessProbe:
          failureThreshold: 3
          tcpSocket:        # probe type changed to tcpSocket
            port: 10250     # probe port changed
          initialDelaySeconds: 20
          periodSeconds: 10
        resources:
          requests:
            cpu: 100m
            memory: 200Mi
        securityContext:
          allowPrivilegeEscalation: false
          capabilities:
            drop:
            - ALL
          readOnlyRootFilesystem: true
          runAsNonRoot: true
          runAsUser: 1000
          seccompProfile:
            type: RuntimeDefault
        volumeMounts:
        - mountPath: /tmp
          name: tmp-dir
      nodeSelector:
        kubernetes.io/os: linux
      priorityClassName: system-cluster-critical
      serviceAccountName: metrics-server
      volumes:
      - emptyDir: {}
        name: tmp-dir
---
apiVersion: apiregistration.k8s.io/v1
kind: APIService
metadata:
  labels:
    k8s-app: metrics-server
  name: v1beta1.metrics.k8s.io
spec:
  group: metrics.k8s.io
  groupPriorityMinimum: 100
  insecureSkipTLSVerify: true
  service:
    name: metrics-server
    namespace: kube-system
  version: v1beta1
  versionPriority: 100

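When the file is complete, submit it with kubectl create -f metrics.yaml

Wait for metrics-server to start; check with kubectl get pod -A

View the resource usage of each node with kubectl top node

VIII. Deploy the Dashboard

Download the Dashboard manifest on the node server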