
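Full code download: Reference NumPy Implementations of Common Neural Network Layers - Convolution Layer

Prerequisite layers

- Reference NumPy Implementations of Common Neural Network Layers (1): Loss Layers

- Reference NumPy Implementations of Common Neural Network Layers (2): Linear Layers

- Reference NumPy Implementations of Common Neural Network Layers (3): Activation Layers

- Reference NumPy Implementations of Common Neural Network Layers (4): Optimizers

- Reference NumPy Implementations of Common Neural Network Layers (5): Other Layers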

python

from typing import List, Dict, Tuple, Any, Iterator, Optional

import cv2

import numpy as np

import matplotlib.pyplot as plt

Using OpenCV

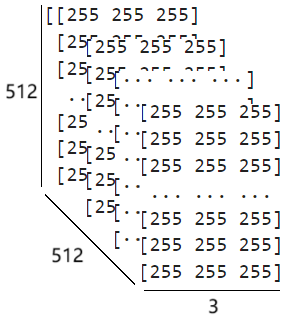

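When `imread` is called with the `cv2.IMREAD_COLOR` flag, the image is read in color and returned as a NumPy array of shape (height, width, channels), where height and width are the image size in pixels and channels is the number of color channels (3 for a color image). Conceptually, the array can be viewed as three stacked 2-D planes, one per color channel, although in memory the three channel values of each pixel sit next to each other. The channels follow OpenCV's BGR order: index 0 is blue (B), index 1 is green (G), and index 2 is red (R). Each element is the intensity of one channel at one pixel. For 8-bit images the values range from 0 to 255, and a larger value means a brighter contribution from that channel, while a value near 0 means that channel is dark.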

python

# Read the image (OpenCV returns the channels in BGR order)
img = cv2.imread("chara.jpg", cv2.IMREAD_COLOR)
img = cv2.resize(img, (512, 512))
print(img.shape)
print(img)

(512, 512, 3)

[[[255 255 255]

[255 255 255]

[255 255 255]

...

[255 255 255]

[255 255 255]

[255 255 255]]

[[255 255 255]

[255 255 255]

[255 255 255]

...

[255 255 255]

[255 255 255]

[255 255 255]]

[[255 255 255]

[255 255 255]

[255 255 255]

...

[255 255 255]

[255 255 255]

[255 255 255]]

...

[[254 251 253]

[244 241 243]

[212 209 211]

...

[237 237 237]

[252 252 252]

[255 255 255]]

[[255 253 255]

[254 251 253]

[238 235 237]

...

[234 234 234]

[250 250 250]

[255 255 255]]

[[255 253 255]

[255 252 254]

[248 245 247]

...

[231 231 231]

[249 249 249]

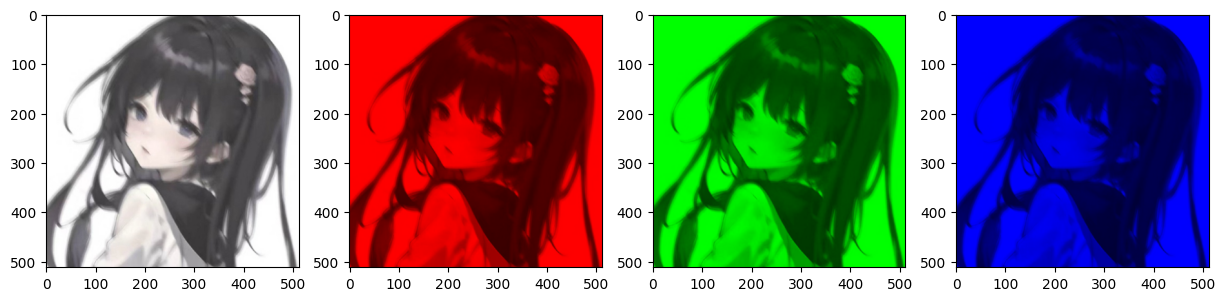

[255 255 255]]]由于读取得到的图像数据中,最后一维对应颜色通道,且通道排列顺序为BGR,因此在显示图像前需将其转换为RGB顺序。

python

# BGR -> RGB: reverse the channel axis
img = img[:, :, ::-1]

fig, axes = plt.subplots(1, 4, figsize=(15, 15))

# Show all three channels together
axes[0].imshow(img)

# Zero the G and B channels to show only the R channel
img_channel_R = img.copy()
img_channel_R[:, :, 1] = 0
img_channel_R[:, :, 2] = 0
axes[1].imshow(img_channel_R)

# Zero the R and B channels to show only the G channel
img_channel_G = img.copy()
img_channel_G[:, :, 0] = 0
img_channel_G[:, :, 2] = 0
axes[2].imshow(img_channel_G)

# Zero the R and G channels to show only the B channel
img_channel_B = img.copy()
img_channel_B[:, :, 0] = 0
img_channel_B[:, :, 1] = 0
axes[3].imshow(img_channel_B)

plt.show()
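The channel reversal used above can be checked on a tiny synthetic array, without loading an image file. The following is a minimal sketch; the two-pixel array `bgr` is made-up test data, not part of the original post.

```python
import numpy as np

# A tiny 1x2 "image" in OpenCV's BGR order (hypothetical test data):
# pixel 0 is pure blue, pixel 1 is pure red.
bgr = np.array([[[255, 0, 0], [0, 0, 255]]], dtype=np.uint8)

# Reversing the last axis swaps the B and R channels: BGR -> RGB.
rgb = bgr[:, :, ::-1]

# The reversed array is a view with a negative stride, not a copy.
# np.ascontiguousarray makes an independent, contiguous copy when one is needed.
rgb_copy = np.ascontiguousarray(rgb)

print(rgb[0, 0])   # pure blue, now written in RGB order
print(rgb[0, 1])   # pure red, now written in RGB order
print(np.shares_memory(bgr, rgb), np.shares_memory(bgr, rgb_copy))
```

Some libraries expect contiguous arrays, which is one reason to take the explicit copy after reversing the channels.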

To separate the three color channels, move the channel axis (the last axis) to the front and then index the array to pick out each channel.

python

# Move the channel axis to the front: (H, W, C) -> (C, H, W)
img_channels = np.transpose(img, (2, 0, 1))
print(img_channels.shape)
print(img_channels[0].shape)
print(img_channels[1].shape)
print(img_channels[2].shape)

(3, 512, 512)
(512, 512)
(512, 512)
(512, 512)

Implementing convolution with the im2col algorithm

im2col

Suppose we are given an input matrix $\mathbf{X}$ of shape $H \times W = 4 \times 5$ and a convolution kernel $\mathbf{K}$ of shape $H_k \times W_k = 3 \times 3$:

$$
\mathbf{X}_{(4 \times 5)} = \begin{bmatrix} 1&2&3&4&5\\ 6&7&8&9&10\\ 11&12&13&14&15\\ 16&17&18&19&20 \end{bmatrix}_{(H \times W)}, \quad \mathbf{K}_{(3 \times 3)} = \begin{bmatrix} 1&2&3\\ 4&5&6\\ 7&8&9 \end{bmatrix}_{(H_k \times W_k)}
$$

To keep the stride and padding parameters simple, both are scalars, so the same value applies along the height and the width. With $\text{stride} = 1$ and $\text{padding} = 0$, the shape of the convolution result follows from:

$$
\begin{aligned} H_{out} &= \left\lfloor \frac{H + 2 \times \text{padding} - H_{k}}{\text{stride}} \right\rfloor + 1 \\ W_{out} &= \left\lfloor \frac{W + 2 \times \text{padding} - W_{k}}{\text{stride}} \right\rfloor + 1 \end{aligned}
$$

In this example, the result has the shape:

$$
\left\{ \begin{array}{l} H_{out} = \left\lfloor \frac{4 - 3}{1} \right\rfloor + 1 = 2\\ W_{out} = \left\lfloor \frac{5 - 3}{1} \right\rfloor + 1 = 3 \end{array} \right.
$$

$$
\mathbf{X}_{(4 \times 5)} * \mathbf{K}_{(3 \times 3)} = \mathbf{Y}_{(2 \times 3)}
$$
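The output-shape formula can be written as a small helper. This is a sketch; the function name `conv_output_size` is chosen here for illustration and does not appear in the original code.

```python
def conv_output_size(size: int, kernel: int, stride: int = 1, padding: int = 0) -> int:
    """Output length along one axis: floor((size + 2*padding - kernel) / stride) + 1."""
    return (size + 2 * padding - kernel) // stride + 1

# The 4x5 input and 3x3 kernel from the text, stride 1, no padding
h_out = conv_output_size(4, 3)
w_out = conv_output_size(5, 3)
print(h_out, w_out)  # 2 3

# With padding=1 a 3x3 kernel at stride 1 keeps the input size
print(conv_output_size(4, 3, padding=1), conv_output_size(5, 3, padding=1))  # 4 5
```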

In the im2col algorithm, we first list every sliding window of the input matrix $\mathbf{X}$, in the order in which the convolution visits them:

$$
\mathbf{X}_{(4 \times 5)} = \begin{bmatrix} 1&2&3&4&5\\ 6&7&8&9&10\\ 11&12&13&14&15\\ 16&17&18&19&20 \end{bmatrix}_{(H \times W)} \Rightarrow \mathop{\mathbf{X}_{\mathbf{win}}}\limits^{(2 \times 3 \times 3 \times 3)} = \begin{bmatrix} \begin{bmatrix} 1&2&3\\ 6&7&8\\ 11&12&13 \end{bmatrix} & \begin{bmatrix} 2&3&4\\ 7&8&9\\ 12&13&14 \end{bmatrix} & \begin{bmatrix} 3&4&5\\ 8&9&10\\ 13&14&15 \end{bmatrix}\\ \begin{bmatrix} 6&7&8\\ 11&12&13\\ 16&17&18 \end{bmatrix} & \begin{bmatrix} 7&8&9\\ 12&13&14\\ 17&18&19 \end{bmatrix} & \begin{bmatrix} 8&9&10\\ 13&14&15\\ 18&19&20 \end{bmatrix} \end{bmatrix}_{(H_{out} \times W_{out} \times H_k \times W_k)}
$$

Next, flatten the first two axes together and the last two axes together to obtain the col matrix, and flatten the kernel into a column vector:

$$
\mathbf{X}_{\mathbf{col}} = \begin{bmatrix} 1&2&3&6&7&8&11&12&13\\ 2&3&4&7&8&9&12&13&14\\ 3&4&5&8&9&10&13&14&15\\ 6&7&8&11&12&13&16&17&18\\ 7&8&9&12&13&14&17&18&19\\ 8&9&10&13&14&15&18&19&20 \end{bmatrix}_{(H_{out}W_{out} \times H_kW_k)}, \quad \mathbf{K}_{\mathbf{flat}} = \begin{bmatrix} 1\\ 2\\ 3\\ 4\\ 5\\ 6\\ 7\\ 8\\ 9 \end{bmatrix}_{(H_kW_k \times 1)}
$$

Multiplying the col matrix by the flattened kernel performs the whole convolution in a single matrix product and yields the flattened result:

$$
\mathop{\mathbf{X}_{\mathbf{col}}}\limits^{(H_{out}W_{out} \times H_kW_k)} \cdot \mathop{\mathbf{K}_{\mathbf{flat}}}\limits^{(H_kW_k \times 1)} = \mathop{\mathbf{Y}_{\mathbf{flat}}}\limits^{(H_{out}W_{out} \times 1)}
$$

Finally, reshape $\mathbf{Y}_{\mathbf{flat}}$ back to the output shape:

$$
\mathop{\mathbf{Y}_{\mathbf{flat}}}\limits^{(H_{out}W_{out} \times 1)} \Rightarrow \mathbf{Y}_{(H_{out} \times W_{out})}
$$

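The code below builds the im2col matrix. Its core is NumPy's `np.lib.stride_tricks.as_strided`, which creates the sliding-window view directly from the strides of the array. The output shape of the convolution has to be computed beforehand and passed in: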

python

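# Helper that builds the sliding-window view of a 2-D array.
# stride is now an explicit argument (default 1) instead of being read from a global variable.
def generate_slide_windows(x: np.ndarray, H_out: int, W_out: int, H_k: int, W_k: int, stride: int = 1):
    # Shape of the resulting window array
    shape = (H_out, W_out, H_k, W_k)
    # Byte steps: one window down, one window right, one element down, one element right
    strides = (stride * x.strides[0],
               stride * x.strides[1],
               x.strides[0],
               x.strides[1])
    # as_strided performs no bounds checking, so shape and strides must fit inside x
    return np.lib.stride_tricks.as_strided(x, shape=shape, strides=strides)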
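As a cross-check on the stride-based helper, the same windows can be produced with `np.lib.stride_tricks.sliding_window_view` (available in NumPy 1.20 and later), which computes the window grid itself and returns a read-only view. The sketch below reproduces the worked example with padding 0 and stride 1 and compares the result with an explicit loop; it is an alternative formulation, not the code used in the rest of this post.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view

x = np.arange(1, 21).reshape(4, 5)      # the 4x5 input from the text
k = np.arange(1, 10).reshape(3, 3)      # the 3x3 kernel from the text

# All 3x3 windows at stride 1, shape (2, 3, 3, 3) = (H_out, W_out, H_k, W_k).
# A larger stride s could be obtained by subsampling the grid with [::s, ::s].
x_win = sliding_window_view(x, k.shape)

# Flatten to the col matrix and multiply by the flattened kernel
H_out, W_out = x_win.shape[:2]
x_col = x_win.reshape(H_out * W_out, -1)                 # (6, 9)
y = (x_col @ k.reshape(-1)).reshape(H_out, W_out)        # (2, 3)

# Reference result from explicit loops over the output positions
y_ref = np.array([[np.sum(x[i:i + 3, j:j + 3] * k) for j in range(W_out)]
                  for i in range(H_out)])

print(y)
assert np.array_equal(y, y_ref)
```

The printed 2×3 result equals the inner part of the padded 4×5 result shown in the run that follows.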

python

# Input matrix
x = np.array([
    [1, 2, 3, 4, 5],
    [6, 7, 8, 9, 10],
    [11, 12, 13, 14, 15],
    [16, 17, 18, 19, 20],
])

# Convolution kernel
k = np.array([
    [1, 2, 3],
    [4, 5, 6],
    [7, 8, 9],
])

# Input shape
H, W = x.shape
# Kernel shape
H_k, W_k = k.shape
# Stride
stride = 1
# Padding (1 here, unlike the worked example above, so the output keeps the 4x5 input size)
padding = 1

# Output shape of the convolution
H_out = (H + 2*padding - H_k) // stride + 1
W_out = (W + 2*padding - W_k) // stride + 1

# Zero-pad the borders of the input
x_pad = np.pad(x, (
    (padding, padding),
    (padding, padding)
), mode='constant')

# Shape of the padded input
H_pad, W_pad = x_pad.shape

# Build the sliding windows
x_win = generate_slide_windows(x_pad, H_out, W_out, H_k, W_k)
# Flatten the windows to get the col matrix
x_col = x_win.reshape(H_out*W_out, H_k*W_k)
# Flatten the kernel into a column vector
k_flat = k.reshape(-1, 1)
# Multiply the col matrix by the flattened kernel to get the flattened output
y_flat = x_col @ k_flat
# Reshape to the output shape
y = y_flat.reshape(H_out, W_out)

print('Input shape:', (H, W))
print('Kernel shape:', (H_k, W_k))
print('Output shape:', (H_out, W_out))
print('----------------------------')
print('Padded input:\n', x_pad)
print('----------------------------')
print('col matrix:\n', x_col, x_col.shape)
print('----------------------------')
print('Flattened kernel:\n', k_flat, k_flat.shape)
print('----------------------------')
print('Product:\n', y_flat, y_flat.shape)
print('----------------------------')
print('Reshaped to the output shape:\n', y, y.shape)

Input shape: (4, 5)
Kernel shape: (3, 3)
Output shape: (4, 5)
----------------------------
Padded input:
 [[ 0  0  0  0  0  0  0]
 [ 0  1  2  3  4  5  0]
 [ 0  6  7  8  9 10  0]
 [ 0 11 12 13 14 15  0]
 [ 0 16 17 18 19 20  0]
 [ 0  0  0  0  0  0  0]]
----------------------------
col matrix:
 [[ 0  0  0  0  1  2  0  6  7]
 [ 0  0  0  1  2  3  6  7  8]
 [ 0  0  0  2  3  4  7  8  9]
 [ 0  0  0  3  4  5  8  9 10]
 [ 0  0  0  4  5  0  9 10  0]
 [ 0  1  2  0  6  7  0 11 12]
 [ 1  2  3  6  7  8 11 12 13]
 [ 2  3  4  7  8  9 12 13 14]
 [ 3  4  5  8  9 10 13 14 15]
 [ 4  5  0  9 10  0 14 15  0]
 [ 0  6  7  0 11 12  0 16 17]
 [ 6  7  8 11 12 13 16 17 18]
 [ 7  8  9 12 13 14 17 18 19]
 [ 8  9 10 13 14 15 18 19 20]
 [ 9 10  0 14 15  0 19 20  0]
 [ 0 11 12  0 16 17  0  0  0]
 [11 12 13 16 17 18  0  0  0]
 [12 13 14 17 18 19  0  0  0]
 [13 14 15 18 19 20  0  0  0]
 [14 15  0 19 20  0  0  0  0]] (20, 9)
----------------------------
Flattened kernel:
 [[1]
 [2]
 [3]
 [4]
 [5]
 [6]
 [7]
 [8]
 [9]] (9, 1)
----------------------------
Product:
 [[128]
 [202]
 [241]
 [280]
 [184]
 [276]
 [411]
 [456]
 [501]
 [318]
 [441]
 [636]
 [681]
 [726]
 [453]
 [240]
 [331]
 [352]
 [373]
 [220]] (20, 1)
----------------------------
Reshaped to the output shape:
 [[128 202 241 280 184]
 [276 411 456 501 318]
 [441 636 681 726 453]
 [240 331 352 373 220]] (4, 5)

col2im

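col2im is the reverse mapping: it writes every row of the col matrix back to the window position it was taken from, with the goal of getting from the col matrix back to the input matrix. Neighbouring windows overlap, so one input element usually belongs to several windows; a true scatter-add therefore returns each element multiplied by the number of windows that cover it, which is the accumulation the backward pass of a convolution needs. The stride-based implementation used in this post relies on in-place writes through an overlapping `as_strided` view, which the NumPy documentation describes as unpredictable, so its result should not be relied on in general.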
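As a cross-check for the stride-based code that follows, here is a loop-based sketch of col2im that accumulates deterministically. The function name `col2im_accumulate` and its signature are illustrative assumptions, not part of the original post. Dividing the accumulated canvas by the per-element window count recovers the input exactly.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view

def col2im_accumulate(cols, padded_shape, kernel_size, stride=1):
    """Scatter-add the rows of a (H_out*W_out, H_k*W_k) col matrix onto a zero canvas."""
    H_pad, W_pad = padded_shape
    H_k, W_k = kernel_size
    H_out = (H_pad - H_k) // stride + 1
    W_out = (W_pad - W_k) // stride + 1
    wins = cols.reshape(H_out, W_out, H_k, W_k)
    canvas = np.zeros(padded_shape, dtype=float)
    # One vectorised add per kernel offset: for a fixed offset the target
    # positions are all distinct, so no write is lost or duplicated.
    for i in range(H_k):
        for j in range(W_k):
            canvas[i:i + stride * H_out:stride, j:j + stride * W_out:stride] += wins[:, :, i, j]
    return canvas

x = np.arange(1, 21, dtype=float).reshape(4, 5)
cols = sliding_window_view(x, (3, 3)).reshape(6, 9)   # col matrix, no padding

acc = col2im_accumulate(cols, x.shape, (3, 3))
# Number of windows covering each element: scatter-add a col matrix of ones
count = col2im_accumulate(np.ones_like(cols), x.shape, (3, 3))

print(count)
assert np.allclose(acc / count, x)   # dividing by the coverage recovers x
```

In a backward pass the division is not applied, because the gradient of an input element is the sum of the gradients from every window that contains it.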

python

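# Reshape the col matrix back into windows
x_col_windows = x_col.reshape(H_out, W_out, H_k, W_k)

# Allocate a zero canvas with the shape of the padded input, then build a window view
# on it with exactly the same shape and sliding rule that was used for x_pad
x_pad_rec = np.zeros((H_pad, W_pad))
x_pad_rec_win = generate_slide_windows(x_pad_rec, H_out, W_out, H_k, W_k)

# Add the restored windows into the view. x_pad_rec_win shares memory with x_pad_rec,
# so the values land on the canvas at the positions the windows came from.
# Caution: the windows overlap in memory, and NumPy documents in-place writes
# through such views as unpredictable.
x_pad_rec_win += x_col_windows
print('Restored input matrix:\n', x_pad_rec)

# Strip the padding (padding is a scalar here, and slicing with -0 would return an empty array)
if padding > 0:
    x_rec = x_pad_rec[padding:-padding, padding:-padding]
else:
    x_rec = x_pad_rec
print('After removing the padding:\n', x_rec)

Restored input matrix:
 [[ 0.  0.  0.  0.  0.  0.  0.]
 [ 0.  1.  2.  3.  4.  5.  0.]
 [ 0.  6.  7.  8.  9. 10.  0.]
 [ 0. 11. 12. 13. 14. 15.  0.]
 [ 0. 16. 17. 18. 19. 20.  0.]
 [ 0.  0.  0.  0.  0.  0.  0.]]
After removing the padding:
 [[ 1.  2.  3.  4.  5.]
 [ 6.  7.  8.  9. 10.]
 [11. 12. 13. 14. 15.]
 [16. 17. 18. 19. 20.]]

Batched data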

Now suppose the input data looks like this:

$$
N \times \left( \begin{bmatrix} r_1^{(1)} & r_1^{(2)} & \cdots & r_1^{(W)} \\ r_2^{(1)} & r_2^{(2)} & \cdots & r_2^{(W)} \\ \cdots & \cdots & \cdots & \cdots \\ r_H^{(1)} & r_H^{(2)} & \cdots & r_H^{(W)} \end{bmatrix}_{(H \times W)}, \begin{bmatrix} g_1^{(1)} & g_1^{(2)} & \cdots & g_1^{(W)} \\ g_2^{(1)} & g_2^{(2)} & \cdots & g_2^{(W)} \\ \cdots & \cdots & \cdots & \cdots \\ g_H^{(1)} & g_H^{(2)} & \cdots & g_H^{(W)} \end{bmatrix}_{(H \times W)}, \begin{bmatrix} b_1^{(1)} & b_1^{(2)} & \cdots & b_1^{(W)} \\ b_2^{(1)} & b_2^{(2)} & \cdots & b_2^{(W)} \\ \cdots & \cdots & \cdots & \cdots \\ b_H^{(1)} & b_H^{(2)} & \cdots & b_H^{(W)} \end{bmatrix}_{(H \times W)}, \cdots, C \right)
$$

$$
\text{Shape} = (N, C, H, W)
$$

That is, the input holds $N$ images, each with $C$ independent channels of size $H \times W$, all stored in a single array $\mathbf{X}_{(N, C, H, W)}$. The convolution of $\mathbf{X}_{(N, C, H, W)}$ with a kernel only slides over the last two axes, the image height and width. The batched im2col implementation is:

python

# Helper that builds the sliding-window view of a batched (N, C, H, W) array.
# stride is passed in explicitly so that it always matches the stride used for H_out and W_out.
def generate_slide_windows(x: np.ndarray, N: int, C: int, H_out: int, W_out: int, H_k: int, W_k: int, stride: int = 1):
    # Shape of the resulting window array
    shape = (N, C, H_out, W_out, H_k, W_k)
    strides = (
        x.strides[0],
        x.strides[1],
        stride * x.strides[2],
        stride * x.strides[3],
        x.strides[2],
        x.strides[3])
    return np.lib.stride_tricks.as_strided(x, shape=shape, strides=strides)

python

def im2col(x, kernel_size: Tuple = (3, 3), stride: int = 1, padding: int = 0):
    # Pad only the height and width axes, not the batch and channel axes
    x_pad = np.pad(x, (
        (0, 0),
        (0, 0),
        (padding, padding),
        (padding, padding)
    ), mode='constant')
    # Shape of the padded input
    N, C, H_pad, W_pad = x_pad.shape
    # Kernel shape
    H_k, W_k = kernel_size
    # Output shape (computed from the padded size, so padding does not appear again)
    H_out = (H_pad - H_k) // stride + 1
    W_out = (W_pad - W_k) // stride + 1
    # Build the sliding windows with the same stride
    x_win = generate_slide_windows(x_pad, N, C, H_out, W_out, H_k, W_k, stride)
    # Of the last four axes, flatten the first two together and the last two together
    x_col = x_win.reshape(N, C, H_out * W_out, H_k * W_k)
    # Also return the output shape of the convolution
    out_shape = (H_out, W_out)
    return x_col, out_shape

Test

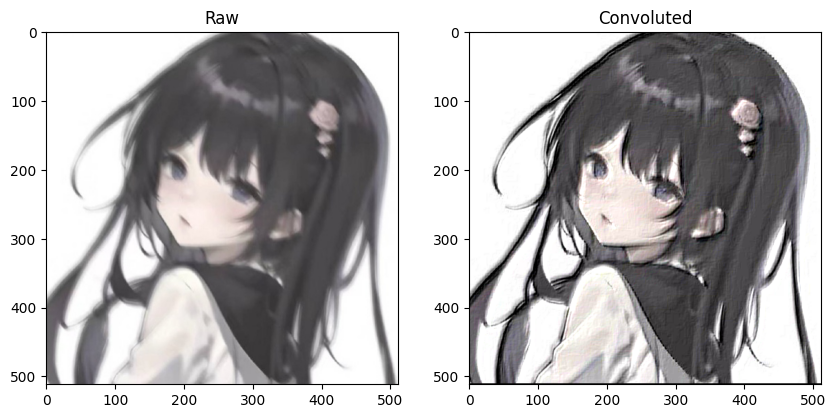

python

# Read a local image
img = cv2.imread("chara.jpg", cv2.IMREAD_COLOR)
# Resize the image and convert BGR -> RGB
img = cv2.resize(img, (512, 512))[:, :, ::-1]
# Move the channel axis to the front
img_channels = np.transpose(img, (2, 0, 1))
# Add a batch axis
x = np.array([img_channels])
print(f'Data shape: {x.shape}, i.e. {x.shape[0]} image(s), each with {x.shape[1]} channels of size {x.shape[2]}*{x.shape[3]}')

Data shape: (1, 3, 512, 512), i.e. 1 image(s), each with 3 channels of size 512*512

python

# Use an emboss filter as the convolution kernel
k = np.array([
    [-2, -1, 0],
    [-1,  1, 1],
    [ 0,  1, 2]
])

# Build the col matrix
x_col, out_shape = im2col(x, kernel_size=k.shape, stride=1, padding=1)
print('col matrix shape:', x_col.shape)

# Multiply all three channels of the col matrix by the flattened kernel at once
conv = x_col @ k.reshape(-1, 1)
print('Product shape:', conv.shape)

# Reshape to the output shape of the convolution
conv = conv.reshape(conv.shape[0], conv.shape[1], *out_shape)
print('Convolution result shape:', conv.shape)

# Clip the result to the range 0-255
conv = np.clip(conv, 0, 255)

# Show the images
fig, axs = plt.subplots(1, 2, figsize=(10, 5))
axs[0].set_title('Raw')
axs[0].imshow(img)
axs[1].set_title('Convolved')
axs[1].imshow(conv[0].transpose(1, 2, 0))
plt.show()

col matrix shape: (1, 3, 262144, 9)
Product shape: (1, 3, 262144, 1)
Convolution result shape: (1, 3, 512, 512)
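The batched im2col result can be validated on a small random input instead of an image file. The sketch below rebuilds the col matrix with `sliding_window_view` (NumPy 1.20+) and compares the matrix-product convolution against explicit loops, using a stride of 2 so that the stride handling is exercised as well. The helper name `im2col_batched` and the toy sizes are assumptions made for this check.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view

def im2col_batched(x, kernel_size, stride=1, padding=0):
    """(N, C, H, W) -> (N, C, H_out*W_out, H_k*W_k), plus the output shape."""
    x_pad = np.pad(x, ((0, 0), (0, 0), (padding, padding), (padding, padding)))
    # Windows over the last two axes, then keep every stride-th window
    wins = sliding_window_view(x_pad, kernel_size, axis=(2, 3))[:, :, ::stride, ::stride]
    N, C, H_out, W_out = wins.shape[:4]
    return wins.reshape(N, C, H_out * W_out, -1), (H_out, W_out)

rng = np.random.default_rng(0)
x = rng.integers(0, 256, size=(2, 3, 6, 7))            # two 3-channel 6x7 "images"
k = np.array([[-2, -1, 0], [-1, 1, 1], [0, 1, 2]])     # emboss kernel from the text
stride, padding = 2, 1

x_col, (H_out, W_out) = im2col_batched(x, k.shape, stride, padding)
y = (x_col @ k.reshape(-1)).reshape(2, 3, H_out, W_out)

# Reference: slide the kernel explicitly over the padded input
x_pad = np.pad(x, ((0, 0), (0, 0), (padding, padding), (padding, padding)))
y_ref = np.zeros_like(y)
for i in range(H_out):
    for j in range(W_out):
        patch = x_pad[:, :, i * stride:i * stride + 3, j * stride:j * stride + 3]
        y_ref[:, :, i, j] = (patch * k).sum(axis=(2, 3))

print(y.shape)
assert np.array_equal(y, y_ref)
```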

Convolution layer data structures

In a convolution layer, the input data has the following shape:

$$
N \times \left( \begin{bmatrix} r_1^{(1)} & r_1^{(2)} & \cdots & r_1^{(W)} \\ r_2^{(1)} & r_2^{(2)} & \cdots & r_2^{(W)} \\ \cdots & \cdots & \cdots & \cdots \\ r_H^{(1)} & r_H^{(2)} & \cdots & r_H^{(W)} \end{bmatrix}_{(H \times W)}, \begin{bmatrix} g_1^{(1)} & g_1^{(2)} & \cdots & g_1^{(W)} \\ g_2^{(1)} & g_2^{(2)} & \cdots & g_2^{(W)} \\ \cdots & \cdots & \cdots & \cdots \\ g_H^{(1)} & g_H^{(2)} & \cdots & g_H^{(W)} \end{bmatrix}_{(H \times W)}, \begin{bmatrix} b_1^{(1)} & b_1^{(2)} & \cdots & b_1^{(W)} \\ b_2^{(1)} & b_2^{(2)} & \cdots & b_2^{(W)} \\ \cdots & \cdots & \cdots & \cdots \\ b_H^{(1)} & b_H^{(2)} & \cdots & b_H^{(W)} \end{bmatrix}_{(H \times W)}, \cdots, C \right)
$$

$$
\text{ImgShape} = (N, C, H, W)
$$

The kernels are treated as weight parameters, and there are $C_{out} \times C_{in}$ of them. The $C_{in}$ axis matches the channel axis $C$ of the input: in the forward pass, each input channel is convolved with its own kernel. All of these per-channel results have the same shape, so they are summed into one accumulated result. Repeating this $C_{out}$ times, each time with a different set of $C_{in}$ kernels, gives $C_{out}$ accumulated results and maps the input from $C_{in}$ channels to $C_{out}$ channels. The forward pass therefore produces an output of shape $N \times C_{out} \times H_{out} \times W_{out}$.

$$
C_{out} \times \left( \begin{bmatrix} r_1^{(1)} & r_1^{(2)} & \cdots & r_1^{(W_k)} \\ r_2^{(1)} & r_2^{(2)} & \cdots & r_2^{(W_k)} \\ \cdots & \cdots & \cdots & \cdots \\ r_{H_k}^{(1)} & r_{H_k}^{(2)} & \cdots & r_{H_k}^{(W_k)} \end{bmatrix}_{(H_k \times W_k)}, \begin{bmatrix} g_1^{(1)} & g_1^{(2)} & \cdots & g_1^{(W_k)} \\ g_2^{(1)} & g_2^{(2)} & \cdots & g_2^{(W_k)} \\ \cdots & \cdots & \cdots & \cdots \\ g_{H_k}^{(1)} & g_{H_k}^{(2)} & \cdots & g_{H_k}^{(W_k)} \end{bmatrix}_{(H_k \times W_k)}, \begin{bmatrix} b_1^{(1)} & b_1^{(2)} & \cdots & b_1^{(W_k)} \\ b_2^{(1)} & b_2^{(2)} & \cdots & b_2^{(W_k)} \\ \cdots & \cdots & \cdots & \cdots \\ b_{H_k}^{(1)} & b_{H_k}^{(2)} & \cdots & b_{H_k}^{(W_k)} \end{bmatrix}_{(H_k \times W_k)}, \cdots, C_{in} \right)
$$

$$
\text{KernelShape} = (C_{out}, C_{in}, H_k, W_k)
$$

In addition, every accumulated result, that is every output channel, has one bias term added to it:

$$
\begin{bmatrix} b_1^{(1)} & b_1^{(2)} & \cdots & b_1^{(C_{out})} \end{bmatrix}
$$

$$
\text{BiasShape} = (C_{out}, )
$$

The output of the forward pass has the following shape:

$$
N \times \left( \begin{bmatrix} r_1^{(1)} & r_1^{(2)} & \cdots & r_1^{(W_{out})} \\ r_2^{(1)} & r_2^{(2)} & \cdots & r_2^{(W_{out})} \\ \cdots & \cdots & \cdots & \cdots \\ r_{H_{out}}^{(1)} & r_{H_{out}}^{(2)} & \cdots & r_{H_{out}}^{(W_{out})} \end{bmatrix}_{(H_{out} \times W_{out})}, \begin{bmatrix} g_1^{(1)} & g_1^{(2)} & \cdots & g_1^{(W_{out})} \\ g_2^{(1)} & g_2^{(2)} & \cdots & g_2^{(W_{out})} \\ \cdots & \cdots & \cdots & \cdots \\ g_{H_{out}}^{(1)} & g_{H_{out}}^{(2)} & \cdots & g_{H_{out}}^{(W_{out})} \end{bmatrix}_{(H_{out} \times W_{out})}, \begin{bmatrix} b_1^{(1)} & b_1^{(2)} & \cdots & b_1^{(W_{out})} \\ b_2^{(1)} & b_2^{(2)} & \cdots & b_2^{(W_{out})} \\ \cdots & \cdots & \cdots & \cdots \\ b_{H_{out}}^{(1)} & b_{H_{out}}^{(2)} & \cdots & b_{H_{out}}^{(W_{out})} \end{bmatrix}_{(H_{out} \times W_{out})}, \cdots, C_{out} \right)
$$

$$
\text{OutputShape} = (N, C_{out}, H_{out}, W_{out})
$$

The whole computation is a tensor contraction along chosen axes and is implemented mainly with NumPy's `tensordot`. Contracting the channel axis and the flattened-kernel axis leaves the axes $(N, H_{out}W_{out}, C_{out})$, so the bias is broadcast over the last axis and the result is then transposed to $(N, C_{out}, H_{out}W_{out})$:

$$
\mathbf{Y}_{(N \times H_{out}W_{out} \times C_{out})} = \mathrm{np.tensordot}\left( \mathop{\mathbf{X}_{\mathbf{col}}}\limits^{(N \times C_{in} \times H_{out}W_{out} \times H_kW_k)}, \mathop{\mathbf{K}_{\mathbf{flat}}}\limits^{(C_{out} \times C_{in} \times H_kW_k)}, \mathrm{axes} = \left[ [1, -1], [1, -1] \right] \right) + \mathbf{b}_{(1 \times C_{out})}
$$
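The axis bookkeeping of this contraction is easy to get wrong, so here is a minimal sketch on toy sizes. It checks the shape that `np.tensordot` returns and compares the result with the same contraction written with `np.einsum`. All sizes and variable names are illustrative assumptions.

```python
import numpy as np

N, C_in, C_out, P, K = 2, 3, 4, 6, 9   # P = H_out*W_out, K = H_k*W_k (toy sizes)
rng = np.random.default_rng(0)
x_col = rng.standard_normal((N, C_in, P, K))
w_flat = rng.standard_normal((C_out, C_in, K))
b = rng.standard_normal(C_out)

# Contract K (last axis) and C_in (axis 1) of both operands.
# The remaining axes are (N, P) from x_col followed by (C_out,) from w_flat.
y = np.tensordot(x_col, w_flat, axes=([-1, 1], [-1, 1])) + b   # (N, P, C_out)
y = y.transpose(0, 2, 1)                                       # (N, C_out, P)

# The same contraction written with einsum, bias added per output channel
y_ref = np.einsum('ncpk,ock->nop', x_col, w_flat) + b[None, :, None]

print(y.shape)
assert np.allclose(y, y_ref)
```

Adding the bias before the transpose works because broadcasting aligns `b` with the last axis, which is the output-channel axis at that point.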

The detailed rule of the forward pass is shown in the following animation:

The code below implements it:

python

# Read a local image
img = cv2.imread("chara.jpg", cv2.IMREAD_COLOR)
# Resize the image and convert BGR -> RGB
img = cv2.resize(img, (512, 512))[:, :, ::-1]
# Move the channel axis to the front
img_channels = np.transpose(img, (2, 0, 1))
# Add a batch axis
x = np.array([img_channels])

python

# Six different 3x3 filters
k_emboss = np.array([
    [-2, -1, 0],
    [-1,  1, 1],
    [ 0,  1, 2]
])
k_sharpen = np.array([
    [ 0, -1,  0],
    [-1,  5, -1],
    [ 0, -1,  0]
])
k_outline = np.array([
    [-1, -1, -1],
    [-1,  8, -1],
    [-1, -1, -1]
])
k_bottom_sobel = np.array([
    [-1, -2, -1],
    [ 0,  0,  0],
    [ 1,  2,  1]
])
k_left_sobel = np.array([
    [ 1, 0, -1],
    [ 2, 0, -2],
    [ 1, 0, -1]
])
k_right_sobel = np.array([
    [-1, 0, 1],
    [-2, 0, 2],
    [-1, 0, 1]
])

# The first three produce output channel 1: in the weight convolution below,
# their per-channel results are added together into the first output channel
channel_1 = np.stack((k_emboss, k_sharpen, k_outline))
# The last three produce output channel 2
channel_2 = np.stack((k_bottom_sobel, k_left_sobel, k_right_sobel))
# Combine them into the weight tensor
W = np.stack((channel_1, channel_2))
# The four axes are: output channels, input channels, kernel height, kernel width
C_out, C_in, H_k, W_k = W.shape
# Initialise the bias
b = np.zeros(C_out)

print(f'Data shape: {x.shape}: {x.shape[0]} image(s), each with {x.shape[1]} channels of size {x.shape[2]}×{x.shape[3]}')
print(f'Kernel shape: {W.shape}: the last two axes hold {W.shape[-2]}×{W.shape[-1]} kernels; the second axis holds {W.shape[1]} such kernels, one per input channel; the first axis gives {W.shape[0]} output channels.')

Data shape: (1, 3, 512, 512): 1 image(s), each with 3 channels of size 512×512
Kernel shape: (2, 3, 3, 3): the last two axes hold 3×3 kernels; the second axis holds 3 such kernels, one per input channel; the first axis gives 2 output channels.

python

# 生成col矩阵

x_col, shape_out = im2col(x, kernel_size=(W_k, H_k), stride=1, padding=1)

# 将权重矩阵的后两个维度展平,即展平每个卷积核

W_flat = W.reshape(C_out, C_in, -1)

print('col矩阵形状:',x_col.shape)

print('展平后的卷积核形状:', W_flat.shape)

# 将col矩阵的最后一维与展平后卷积核的最后一维点乘,表示卷积操作

# 将col矩阵的第二维与展平后卷积核的第二维点乘,表示3个通道的卷积结果求和

# 最后为每个通道加上一个偏置项

# (1, 3, 262144, 25)

# dot (6, 3, 25)

# = (1, 6, 262144)

out = np.tensordot(

x_col,

W_flat,

axes=([-1,1],[-1,1])

) + b

out = out.transpose(0,2,1)

print('点积结果形状:', out.shape)

out = out.reshape(out.shape[0], out.shape[1], *shape_out)

print('还原为图像形状:', out.shape)

# 将结果限制在0-255之间

out = np.clip(out.astype(int), 0, 255)

out_c1 = out[0][0]

out_c1 = np.stack((out_c1, out_c1, out_c1))

out_c2 = out[0][1]

out_c2 = np.stack((out_c2, out_c2, out_c2))

# 显示图像

fig, axs = plt.subplots(1, 2, figsize=(10, 5))

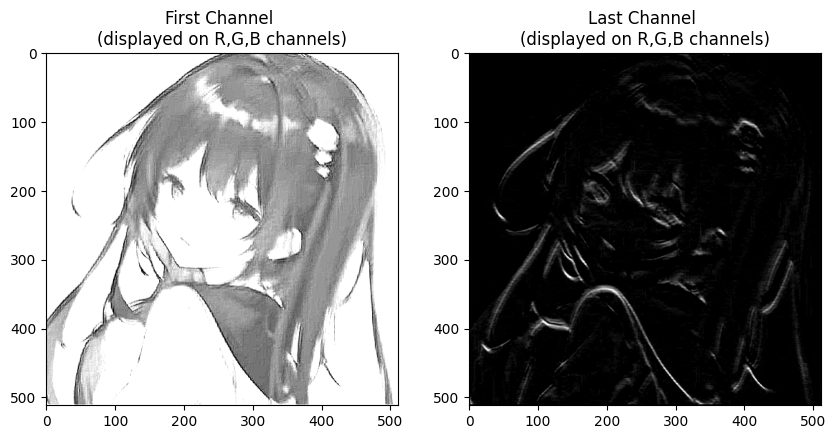

axs[0].set_title('First Channel \n(displayed on R,G,B channels)')

axs[0].imshow(out_c1.transpose(1, 2, 0))

axs[1].set_title('Last Channel \n(displayed on R,G,B channels)')

axs[1].imshow(out_c2.transpose(1, 2, 0))

plt.show()col矩阵形状: (1, 3, 262144, 9)

展平后的卷积核形状: (2, 3, 9)

点积结果形状: (1, 2, 262144)

还原为图像形状: (1, 2, 512, 512)

Convolutional neural network

Convolution layer

python

class Conv2D(Module):
    def __init__(self, in_channels: int, out_channels: int, kernel_size: Tuple, stride: int = 1, padding: int = 0):
        super().__init__()
        # Save the hyperparameters
        self.in_channels = in_channels
        self.out_channels = out_channels
        self.kernel_size = kernel_size
        self.stride = stride
        self.padding = padding
        # Initialise the kernels and the bias vector
        self.W = Parameter(np.random.randn(out_channels, in_channels, *self.kernel_size), requires_grad=True)
        self.b = Parameter(np.zeros(out_channels), requires_grad=True)
        # Shape data in the format (N, C, H, W, H_pad, W_pad, H_out, W_out, H_k, W_k)
        self.cache = None
        self.x_col = None

    # Zero-pad the height and width axes
    def _pad(self, x: np.ndarray):
        # (N, self.in_channels, H_pad, W_pad)
        return np.pad(x, (
            (0, 0),
            (0, 0),
            (self.padding, self.padding),
            (self.padding, self.padding)
        ), mode='constant')

    # Build the sliding-window view
    def _generate_slide_windows(self, x: np.ndarray):
        N, C, H, W, H_pad, W_pad, H_out, W_out, H_k, W_k = self.cache
        # Shape of the resulting window array
        shape = (N, C, H_out, W_out, H_k, W_k)
        strides = (
            x.strides[0],
            x.strides[1],
            self.stride * x.strides[2],
            self.stride * x.strides[3],
            x.strides[2],
            x.strides[3])
        # (N, C, H_out, W_out, H_k, W_k)
        return np.lib.stride_tricks.as_strided(x, shape=shape, strides=strides)

    def _im2col(self, x: np.ndarray):
        N, C, H, W, H_pad, W_pad, H_out, W_out, H_k, W_k = self.cache
        x_win = self._generate_slide_windows(x)
        # (N, C, H_out, W_out, H_k, W_k) -> (N, C, H_out*W_out, H_k*W_k)
        x_col = x_win.reshape(N, C, H_out*W_out, H_k*W_k)
        return x_col

    def _col2im(self, grad: np.ndarray):
        N, C, H, W, H_pad, W_pad, H_out, W_out, H_k, W_k = self.cache
        # (N, C, H_pad, W_pad)
        ret = np.zeros((N, C, H_pad, W_pad))
        # (N, C, H_out, W_out, H_k, W_k), a view that shares memory with ret
        ret_col = self._generate_slide_windows(ret)
        # Reshape grad rather than ret_col, so that ret_col keeps referring to ret.
        # The in-place add is meant to accumulate the gradients of overlapping windows;
        # NumPy documents writes through overlapping as_strided views as unpredictable.
        ret_col += grad.reshape(N, C, H_out, W_out, H_k, W_k)
        # Strip the padding
        if self.padding != 0:
            # (N, C, H, W)
            return ret[:, :, self.padding:-self.padding, self.padding:-self.padding]
        # (N, C, H, W)
        return ret

    def forward(self, x):
        N, C, H, W = x.shape
        H_k, W_k = self.kernel_size
        # Pad
        x_pad = self._pad(x)
        # Output shape of the convolution
        H_out = (H + 2*self.padding - H_k) // self.stride + 1
        W_out = (W + 2*self.padding - W_k) // self.stride + 1
        # Shape of the padded data
        _, _, H_pad, W_pad = x_pad.shape
        # Cache the shape data
        self.cache = (N, C, H, W, H_pad, W_pad, H_out, W_out, *self.kernel_size)
        # Build the col matrix: (N, C, H_out*W_out, H_k*W_k)
        x_col = self._im2col(x_pad)
        self.x_col = x_col
        # (C_out, C, H_k, W_k) -> (C_out, C, H_k*W_k)
        W_flat = self.W.data.reshape(self.out_channels, C, -1)
        # tensordot keeps the free axes in order: (N, H_out*W_out, C_out)
        ret = np.tensordot(
            x_col,   # (N, C, H_out*W_out, H_k*W_k)
            W_flat,  # (C_out, C, H_k*W_k)
            axes=([-1, 1], [-1, 1])
        )
        # Add the bias per output channel (backward accumulates its gradient),
        # then move the channel axis: (N, H_out*W_out, C_out) -> (N, C_out, H_out*W_out)
        ret = (ret + self.b.data).transpose(0, 2, 1)
        # (N, C_out, H_out*W_out) -> (N, C_out, H_out, W_out)
        return ret.reshape(N, self.out_channels, H_out, W_out)

    def backward(self, grad):
        N, C, H, W, H_pad, W_pad, H_out, W_out, H_k, W_k = self.cache
        # Gradient of the kernels: (C_out, C, H_k, W_k)
        self.W.grad += np.tensordot(
            grad.reshape(N, self.out_channels, H_out*W_out),        # (N, C_out, H_out, W_out) -> (N, C_out, H_out*W_out)
            self.x_col.reshape(N, C, H_out*W_out, H_k, W_k),        # (N, C, H_out*W_out, H_k*W_k) -> (N, C, H_out*W_out, H_k, W_k)
            axes=([0, 2], [0, 2])
        )
        # Gradient of the bias: (N, C_out, H_out, W_out) -> (C_out,)
        self.b.grad += np.sum(grad, axis=(0, 2, 3))
        # Gradient with respect to the col matrix: (N, H_out, W_out, C, H_k*W_k)
        grad_col = np.tensordot(
            grad,                                                   # (N, C_out, H_out, W_out)
            self.W.data.reshape(self.out_channels, C, H_k*W_k),     # (C_out, C, H_k, W_k) -> (C_out, C, H_k*W_k)
            axes=([1], [0])
        )
        # (N, H_out, W_out, C, H_k*W_k) -> (N, C, H_out, W_out, H_k*W_k)
        grad_col = np.transpose(grad_col, (0, 3, 1, 2, 4))
        # (N, C, H_out, W_out, H_k*W_k) -> (N, C, H_out*W_out, H_k*W_k)
        grad_col = grad_col.reshape(N, C, H_out*W_out, H_k*W_k)
        # (N, C, H, W)
        return self._col2im(grad_col)

    def __call__(self, x):
        return self.forward(x)

    def __repr__(self):
        return self.__class__.__name__ + f'(in_channels={self.in_channels}, out_channels={self.out_channels}, kernel_size={self.kernel_size}, stride={self.stride}, padding={self.padding})'

Flatten layer

python

class Flatten(Module):
    def __init__(self):
        super().__init__()
        # Shape of the input data
        self.shape = None

    def forward(self, x):
        self.shape = x.shape
        # Flatten every axis except the first (batch) axis
        return x.reshape(x.shape[0], -1)

    def backward(self, grad):
        # Restore the gradient to the original input shape
        return grad.reshape(self.shape)

    def __call__(self, x):
        return self.forward(x)

Test

python

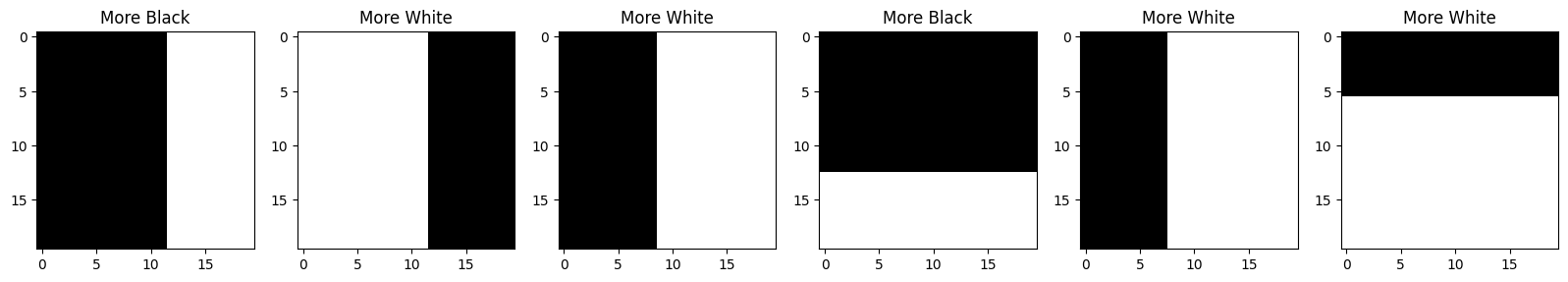

# 定义一个生成黑白分割图像的函数,用于检验卷积层的效果

def generate_bw_image(img_shape : Tuple = (20, 20)):

H, W = img_shape

# 初始图像为全黑色图片

im = np.zeros((3, 20, 20))

# 将图像随机区域改为白色

h = np.random.randint(1, H + 1)

# 四种分割情况

case = np.random.randint(0, 4)

if(case == 0):

im[:, :h, :] = np.ones((3, h, 20))

elif(case == 1):

im[:, -h:, :] = np.ones((3, h, 20))

elif(case == 2):

im[:, :, :h] = np.ones((3, 20, h))

elif(case == 3):

im[:, :, -h:] = np.ones((3, 20, h))

# 如果白色部分较多则标签为1

h += 1

tag = (h >= (H - h))

return im, tag

N = 100

# 生成100张图片数据

data = [generate_bw_image((20, 20)) for i in range(N)]

x = np.array([im for (im, _) in data])

y = np.array([tag for (_, tag) in data]).reshape(-1, 1)

print('x形状:', x.shape)

print('y形状:', y.shape)

num = 6

fig, axs = plt.subplots(1, 6, figsize=(20, 3))

for i in range(num):

axs[i].imshow(x[i].transpose(1, 2, 0))

axs[i].set_title('More White' if y[i] else 'More Black')x形状: (100, 3, 20, 20)

y形状: (100, 1)

python

# A convolution layer followed by linear layers, used for a toy binary image classification task
model = Sequential(
    Conv2D(3, 3, kernel_size=(3, 3), stride=1, padding=1),
    BatchNorm2d(3),
    Flatten(),
    Linear(1200, 100),
    ReLU(),
    Linear(100, 1),
    Sigmoid()
)

# Mean squared error loss
loss_fn = MSELoss()
# Optimizer
optimizer = Adam(params=model.parameters(), lr=1e-4)

python

# Train the model
for epoch in range(100):
    # Forward pass
    y_pred = model.forward(x)
    # Loss and its gradient
    loss, grad = loss_fn(y_pred, y)
    # Backward pass
    model.backward(grad)
    # Update the parameters
    optimizer.step()
    # Reset the gradients
    optimizer.zero_grad()
    # Print the loss every 20 epochs
    if epoch % 20 == 0:
        print(loss)
print('Accuracy:', ((y_pred >= 0.5) == y).mean())

0.3440579680229362
0.07697754731566137
0.0049227621261795414
0.0019373767223245285
0.0012633904212997274
Accuracy: 1.0

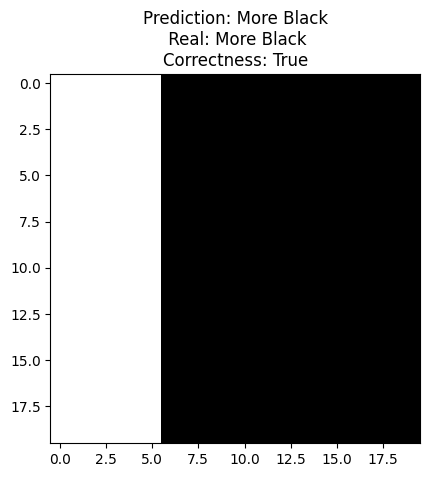

python

# Generate a new image
im, tag = generate_bw_image((20, 20))
# Predict its class
tag_pred = model(np.array([im]))
# Compare the predicted class with the true class
prediction = 'More White' if (tag_pred >= 0.5) else 'More Black'
real = 'More White' if (tag >= 0.5) else 'More Black'
# Plot
plt.imshow(im.transpose(1, 2, 0))
plt.title(f'Prediction: {prediction}\n Real: {real}\nCorrectness: {prediction == real}')
plt.show()
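Beyond a training run, the backward formulas of a convolution layer can be checked numerically. The sketch below is a standalone functional version, because `Module` and `Parameter` come from the earlier posts in this series and are not defined here. The names `conv_forward`, `conv_backward` and `numeric_grad` are illustrative assumptions, and the input gradient uses an explicit scatter-add loop in place of the `as_strided` write. It compares the analytic gradients with central finite differences.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view

def conv_forward(x, w, b, stride=1, padding=0):
    """x: (N, C, H, W), w: (O, C, Hk, Wk), b: (O,) -> (y, x_col)."""
    pad = ((0, 0), (0, 0), (padding, padding), (padding, padding))
    wins = sliding_window_view(np.pad(x, pad), w.shape[2:], axis=(2, 3))
    wins = wins[:, :, ::stride, ::stride]              # (N, C, H_out, W_out, Hk, Wk)
    N, C, H_out, W_out = wins.shape[:4]
    x_col = wins.reshape(N, C, H_out * W_out, -1)      # (N, C, P, K)
    w_flat = w.reshape(w.shape[0], C, -1)              # (O, C, K)
    y = np.tensordot(x_col, w_flat, axes=([-1, 1], [-1, 1])) + b   # (N, P, O)
    return y.transpose(0, 2, 1).reshape(N, -1, H_out, W_out), x_col

def conv_backward(grad, x_shape, x_col, w, stride=1, padding=0):
    """Gradients of sum(y * grad) with respect to x, w and b."""
    N, C, H, W = x_shape
    O, _, Hk, Wk = w.shape
    H_out, W_out = grad.shape[2:]
    g = grad.reshape(N, O, H_out * W_out)
    dw = np.tensordot(g, x_col, axes=([0, 2], [0, 2])).reshape(w.shape)
    db = grad.sum(axis=(0, 2, 3))
    dcol = np.einsum('nop,ock->ncpk', g, w.reshape(O, C, -1))
    dcol = dcol.reshape(N, C, H_out, W_out, Hk, Wk)
    dx_pad = np.zeros((N, C, H + 2 * padding, W + 2 * padding))
    for i in range(Hk):                                # explicit scatter-add
        for j in range(Wk):
            dx_pad[:, :, i:i + stride * H_out:stride,
                         j:j + stride * W_out:stride] += dcol[..., i, j]
    return dx_pad[:, :, padding:padding + H, padding:padding + W], dw, db

def numeric_grad(f, a, eps=1e-6):
    """Central finite differences of the scalar function f with respect to array a."""
    g = np.zeros_like(a)
    for idx in np.ndindex(a.shape):
        old = a[idx]
        a[idx] = old + eps
        f_plus = f()
        a[idx] = old - eps
        f_minus = f()
        a[idx] = old
        g[idx] = (f_plus - f_minus) / (2 * eps)
    return g

rng = np.random.default_rng(0)
x = rng.standard_normal((2, 2, 5, 6))
w = rng.standard_normal((3, 2, 3, 3))
b = rng.standard_normal(3)
stride, padding = 2, 1

y, x_col = conv_forward(x, w, b, stride, padding)
r = rng.standard_normal(y.shape)                       # upstream gradient
loss = lambda: np.sum(conv_forward(x, w, b, stride, padding)[0] * r)

dx, dw, db = conv_backward(r, x.shape, x_col, w, stride, padding)
for name, analytic, param in [('dx', dx, x), ('dw', dw, w), ('db', db, b)]:
    err = np.max(np.abs(analytic - numeric_grad(loss, param)))
    print(name, 'max abs error:', err)
    assert err < 1e-6
```

Because the test loss is linear in each of `x`, `w` and `b`, central differences are exact up to rounding, so a tight tolerance is reasonable here.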

Pooling layers

Average pooling layer

Implementation

python

class AvgPool2d(Module):

def __init__(self, kernel_size : Tuple, stride : int=1, padding : int=0):

super().__init__()

# 保存参数

self.kernel_size = kernel_size

self.stride = stride

self.padding = padding

# 储存形状数据

# (N, C, H, W, H_pad, W_pad, H_out, W_out, H_k, W_k)

self.cache = None

# 填充函数

def _pad(self, x : np.ndarray):

# 平均池化层使用0进行填充

# (N, C, H_pad, W_pad)

return np.pad(x, (

(0,0),

(0,0),

(self.padding, self.padding),

(self.padding, self.padding)

), mode='constant')

# 生成滑动窗口

def _generate_slide_windows(self, x : np.ndarray):

# 获取形状数据

N, C, H, W, H_pad, W_pad, H_out, W_out, H_k, W_k = self.cache

shape = (N, C, H_out, W_out, H_k, W_k) # 最终产生的滑动窗口矩阵的形状

strides = (

x.strides[0],

x.strides[1],

self.stride * x.strides[2],

self.stride * x.strides[3],

x.strides[2],

x.strides[3])

# (N, C, H_out, W_out, H_k, W_k)

return np.lib.stride_tricks.as_strided(x, shape=shape, strides=strides)

def _im2col(self, x : np.ndarray):

# 获取形状数据

N, C, H, W, H_pad, W_pad, H_out, W_out, H_k, W_k = self.cache

# 生成滑动窗口

x_win = self._generate_slide_windows(x)

# 展平后四个维度中的前两个和后两个

# (N, C, H_out, W_out, H_k, W_k) -> (N, C, H_out*W_out, H_k*W_k)

x_col = x_win.reshape(N, C, H_out*W_out, H_k*W_k)

return x_col

def _col2im(self, grad : np.ndarray):

# 获取形状数据

N, C, H, W, H_pad, W_pad, H_out, W_out, H_k, W_k = self.cache

# (N, C, H_pad, W_pad)

ret = np.zeros((N, C, H_pad, W_pad))

# (N, C, H_out, W_out, H_k, W_k)

ret_col = self._generate_slide_windows(ret)

# 改变grad形状以匹配梯度累计,不要操作ret_col以防止地址引用失效

ret_col += grad.reshape(N, C, H_out, W_out, H_k, W_k)

# 去除填充部分

if not (self.padding == 0):

# (N, C, H, W)

return ret[:, :, self.padding:-self.padding, self.padding:-self.padding]

# (N, C, H, W)

return ret

    def forward(self, x):
        # Unpack the input shape
        N, C, H, W = x.shape
        H_k, W_k = self.kernel_size
        # Pad the input
        x_pad = self._pad(x)
        # Output spatial size
        H_out = (H + 2*self.padding - H_k) // self.stride + 1
        W_out = (W + 2*self.padding - W_k) // self.stride + 1
        # Shape after padding
        _, _, H_pad, W_pad = x_pad.shape
        # Cache the shape data
        self.cache = (N, C, H, W, H_pad, W_pad, H_out, W_out, *self.kernel_size)
        # Build the col matrix
        # (N, C, H_out*W_out, H_k*W_k)
        x_col = self._im2col(x_pad)
        # Mean of each row, i.e. the mean over each pooling window
        # (N, C, H_out*W_out)
        x_col_mean = np.mean(x_col, axis=-1)
        # Reshape to the output shape
        # (N, C, H_out, W_out)
        return x_col_mean.reshape(N, C, H_out, W_out)

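    def backward(self, grad):
        # Unpack the cached shape data
        N, C, H, W, H_pad, W_pad, H_out, W_out, H_k, W_k = self.cache
        # Add a trailing axis so the gradient broadcasts across each window
        # (N, C, H_out, W_out) -> (N, C, H_out*W_out, 1)
        grad = grad.reshape(N, C, H_out*W_out, 1)
        # Zero array with the shape of the col-matrix gradient
        # (N, C, H_out*W_out, H_k*W_k)
        out_grad = np.zeros((N, C, H_out*W_out, H_k*W_k))
        # Broadcast, then share each output gradient equally among the H_k*W_k window elements
        # (N, C, H_out*W_out, H_k*W_k)
        grad = (grad + out_grad) / (H_k*W_k)
        return self._col2im(grad)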

    def __call__(self, x):
        return self.forward(x)

    def __repr__(self):
        return self.__class__.__name__ + f'(kernel_size={self.kernel_size}, stride={self.stride}, padding={self.padding})'

Test
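Before the end-to-end training test, the backward pass can be checked in isolation. The `_col2im` method above accumulates gradients by adding in place into an `as_strided` view, and NumPy's documentation cautions that vectorized writes to views with self-overlapping memory can be unpredictable, which is the situation whenever `stride` is smaller than the kernel size. The following is a minimal sketch of an alternative that accumulates one kernel offset at a time with ordinary slicing, followed by a finite-difference gradient check. The names `avg_pool_forward` and `avg_pool_backward` are hypothetical standalone helpers, not part of the class above.

```python
import numpy as np

def avg_pool_forward(x, kernel_size, stride=1, padding=0):
    """Average pooling over (N, C, H, W); padded zeros count toward the mean."""
    H_k, W_k = kernel_size
    x_pad = np.pad(x, ((0, 0), (0, 0), (padding, padding), (padding, padding)))
    H_out = (x.shape[2] + 2 * padding - H_k) // stride + 1
    W_out = (x.shape[3] + 2 * padding - W_k) // stride + 1
    out = np.zeros(x.shape[:2] + (H_out, W_out))
    # Sum the contribution of each kernel offset (i, j) using plain slicing
    for i in range(H_k):
        for j in range(W_k):
            out += x_pad[:, :, i:i + stride * H_out:stride, j:j + stride * W_out:stride]
    return out / (H_k * W_k)

def avg_pool_backward(grad, x_shape, kernel_size, stride=1, padding=0):
    """Gradient of avg_pool_forward with respect to its input."""
    N, C, H, W = x_shape
    H_k, W_k = kernel_size
    _, _, H_out, W_out = grad.shape
    dx_pad = np.zeros((N, C, H + 2 * padding, W + 2 * padding))
    share = grad / (H_k * W_k)
    for i in range(H_k):
        for j in range(W_k):
            # Each slice has no internal overlap, so += accumulates reliably
            dx_pad[:, :, i:i + stride * H_out:stride, j:j + stride * W_out:stride] += share
    # Strip the padding
    return dx_pad[:, :, padding:padding + H, padding:padding + W]

rng = np.random.default_rng(0)
x = rng.normal(size=(2, 3, 5, 5))
kernel_size, stride, padding = (2, 2), 1, 1   # stride < kernel: overlapping windows
out = avg_pool_forward(x, kernel_size, stride, padding)
upstream = rng.normal(size=out.shape)
analytic = avg_pool_backward(upstream, x.shape, kernel_size, stride, padding)

# Central finite differences of sum(out * upstream) with respect to each input element
numeric = np.zeros_like(x)
eps = 1e-6
for idx in np.ndindex(*x.shape):
    x_plus, x_minus = x.copy(), x.copy()
    x_plus[idx] += eps
    x_minus[idx] -= eps
    f_plus = (avg_pool_forward(x_plus, kernel_size, stride, padding) * upstream).sum()
    f_minus = (avg_pool_forward(x_minus, kernel_size, stride, padding) * upstream).sum()
    numeric[idx] = (f_plus - f_minus) / (2 * eps)

max_abs_error = np.abs(analytic - numeric).max()
print(max_abs_error)
```

Looping over kernel offsets costs only `H_k * W_k` vectorized additions, which is small for typical pooling kernels, and it avoids any dependence on how NumPy handles writes to overlapping memory.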

python

# Simulate a binary image-classification task with
# convolution + batch normalization + average pooling + linear layers
model = Sequential(
    Conv2D(3, 1, kernel_size=(3, 3), stride=1, padding=1),
    BatchNorm2d(1),
    AvgPool2d(kernel_size=(2, 2), stride=2, padding=1),
    ReLU(),
    Conv2D(1, 3, kernel_size=(3, 3), stride=1, padding=1),
    BatchNorm2d(3),
    AvgPool2d(kernel_size=(2, 2), stride=1, padding=1),
    Flatten(),
    Linear(432, 100),
    ReLU(),
    Linear(100, 1),
    Sigmoid()
)

# Mean squared error loss
loss_fn = MSELoss()

# Optimizer
optimizer = Adam(params=model.parameters(), lr=1e-4)
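The input size of `Linear(432, 100)` follows from the output-size formula used in `forward`, `(H + 2*padding - H_k) // stride + 1`, applied layer by layer. The shape of `x` is not shown in this section, so the sketch below assumes 20×20 input images purely for illustration (21×21 would give the same result), and the helper `out_size` is hypothetical. Under that assumption the flattened feature count is 3 × 12 × 12 = 432.

```python
def out_size(size, kernel, stride, padding):
    """Spatial output size of a convolution or pooling layer."""
    return (size + 2 * padding - kernel) // stride + 1

size = 20  # assumed input height/width (not stated in the original)
size = out_size(size, kernel=3, stride=1, padding=1)  # Conv2D    -> 20
size = out_size(size, kernel=2, stride=2, padding=1)  # AvgPool2d -> 11
size = out_size(size, kernel=3, stride=1, padding=1)  # Conv2D    -> 11
size = out_size(size, kernel=2, stride=1, padding=1)  # AvgPool2d -> 12

channels = 3  # output channels of the second Conv2D
flat_features = channels * size * size
print(flat_features)  # 432
```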

python

# Train the model
for epoch in range(100):
    # Forward pass
    y_pred = model.forward(x)
    # Compute the loss and its gradient
    loss, grad = loss_fn(y_pred, y)
    # Backward pass
    model.backward(grad)
    # Update the parameters
    optimizer.step()
    # Reset the gradients
    optimizer.zero_grad()
    # Print the loss every 20 epochs
    if epoch % 20 == 0:
        print(loss)
print('Current accuracy:', ((y_pred >= 0.5) == y).mean())

0.23448127366079674
0.07891417716087412
0.042494507580926905
0.02864539474340755
0.021375144130226745
Current accuracy: 1.0

Max Pooling Layer
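Max pooling keeps the largest value in each window. One detail matters for padding: if the input can be negative, zero padding would let a padded cell win the maximum, which is why the implementation below pads with `-inf`. The following small sketch shows the difference on a made-up 2×2 input. It uses `sliding_window_view` (available in NumPy 1.20 and later) rather than the `as_strided` call used in the class, and the helper name `max_pool_2d` is hypothetical.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view

def max_pool_2d(x, kernel, stride, padding, pad_value):
    """Max pooling of a single 2-D float array with a chosen padding value."""
    x_pad = np.pad(x, padding, mode='constant', constant_values=pad_value)
    # All (kernel, kernel) windows, then keep every stride-th window in each direction
    windows = sliding_window_view(x_pad, (kernel, kernel))[::stride, ::stride]
    return windows.max(axis=(-2, -1))

x = np.array([[-1.0, -2.0],
              [-3.0, -4.0]])

with_zero = max_pool_2d(x, kernel=2, stride=2, padding=1, pad_value=0.0)
with_neg_inf = max_pool_2d(x, kernel=2, stride=2, padding=1, pad_value=-np.inf)

print(with_zero)      # every window contains a padded 0, which wins the max
print(with_neg_inf)   # the maxima of the real entries: same values as x here
```

Note that `-inf` padding requires a floating-point input, since infinity cannot be stored in an integer array.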

Implementation

python

class MaxPool2d(Module):
    def __init__(self, kernel_size : Tuple, stride : int=1, padding : int=0):
        super().__init__()
        # Store the parameters
        self.kernel_size = kernel_size
        self.stride = stride
        self.padding = padding
        # Cached shape data:
        # (N, C, H, W, H_pad, W_pad, H_out, W_out, H_k, W_k)
        self.cache = None
        # Local gradient of the layer (one-hot mask of the max in each window)
        # (N, C, H_out*W_out, H_k*W_k)
        self.dx_col = None

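    # Padding helper
    def _pad(self, x : np.ndarray):
        # Pad with -inf so that padded cells can never win the max
        # (x must be a floating-point array, since -inf has no integer representation)
        # (N, C, H_pad, W_pad)
        return np.pad(x, (
            (0, 0),
            (0, 0),
            (self.padding, self.padding),
            (self.padding, self.padding)
        ), mode='constant', constant_values=-np.inf)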

    # Build the sliding windows
    def _generate_slide_windows(self, x : np.ndarray):
        # Unpack the cached shape data
        N, C, H, W, H_pad, W_pad, H_out, W_out, H_k, W_k = self.cache
        # Shape of the resulting sliding-window view
        shape = (N, C, H_out, W_out, H_k, W_k)
        strides = (
            x.strides[0],
            x.strides[1],
            self.stride * x.strides[2],
            self.stride * x.strides[3],
            x.strides[2],
            x.strides[3])
        # (N, C, H_out, W_out, H_k, W_k)
        return np.lib.stride_tricks.as_strided(x, shape=shape, strides=strides)

    def _im2col(self, x : np.ndarray):
        # Unpack the cached shape data
        N, C, H, W, H_pad, W_pad, H_out, W_out, H_k, W_k = self.cache
        # Build the sliding windows
        x_win = self._generate_slide_windows(x)
        # Flatten the last four dimensions pairwise: the first two together and the last two together
        # (N, C, H_out, W_out, H_k, W_k) -> (N, C, H_out*W_out, H_k*W_k)
        x_col = x_win.reshape(N, C, H_out*W_out, H_k*W_k)
        return x_col

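    def _col2im(self, grad : np.ndarray):
        # Unpack the cached shape data
        N, C, H, W, H_pad, W_pad, H_out, W_out, H_k, W_k = self.cache
        # (N, C, H_pad, W_pad)
        ret = np.zeros((N, C, H_pad, W_pad))
        # (N, C, H_out, W_out, H_k, W_k)
        ret_col = self._generate_slide_windows(ret)
        # Reshape grad, not ret_col, for the accumulation: reshaping ret_col
        # would produce a copy that no longer refers to ret's memory.
        # Caution: when windows overlap (stride smaller than the kernel), NumPy does not
        # guarantee the result of vectorized writes into an as_strided view.
        ret_col += grad.reshape(N, C, H_out, W_out, H_k, W_k)
        # Strip the padding
        if self.padding != 0:
            # (N, C, H, W)
            return ret[:, :, self.padding:-self.padding, self.padding:-self.padding]
        # (N, C, H, W)
        return ret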

    def forward(self, x):
        # Unpack the input shape
        N, C, H, W = x.shape
        H_k, W_k = self.kernel_size
        # Pad the input
        x_pad = self._pad(x)
        # Output spatial size
        H_out = (H + 2*self.padding - H_k) // self.stride + 1
        W_out = (W + 2*self.padding - W_k) // self.stride + 1
        # Shape after padding
        _, _, H_pad, W_pad = x_pad.shape
        # Cache the shape data
        self.cache = (N, C, H, W, H_pad, W_pad, H_out, W_out, *self.kernel_size)
        # Build the col matrix
        # (N, C, H_out*W_out, H_k*W_k)
        x_col = self._im2col(x_pad)
        # Max of each row, i.e. the max over each pooling window
        # (N, C, H_out*W_out)
        x_col_max = np.max(x_col, axis=-1)
        # Local gradient: a one-hot row marking the argmax of each window
        # (N, C, H_out*W_out, H_k*W_k)
        self.dx_col = np.eye(H_k*W_k)[np.argmax(x_col, axis=-1)]
        # Reshape to the output shape
        # (N, C, H_out, W_out)
        return x_col_max.reshape(N, C, H_out, W_out)

    def backward(self, grad):
        # Unpack the cached shape data
        N, C, H, W, H_pad, W_pad, H_out, W_out, H_k, W_k = self.cache
        # Add a trailing axis so the gradient broadcasts across each window
        # (N, C, H_out, W_out) -> (N, C, H_out*W_out, 1)
        grad = grad.reshape(N, C, H_out*W_out, 1)
        # Route each output gradient to the position of the window's max
        # (N, C, H_out*W_out, H_k*W_k)
        grad = grad * self.dx_col
        return self._col2im(grad)

    def __call__(self, x):
        return self.forward(x)

    def __repr__(self):
        return self.__class__.__name__ + f'(kernel_size={self.kernel_size}, stride={self.stride}, padding={self.padding})'

Test
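Before the training run, it helps to look at the mask that `backward` relies on. `forward` builds `self.dx_col` as `np.eye(H_k*W_k)[np.argmax(x_col, axis=-1)]`: indexing the identity matrix with the argmax indices yields one one-hot row per window, so the upstream gradient is routed only to the position that produced the maximum. A small sketch with made-up values follows. Note that `np.argmax` returns the first index on ties, so only one of several equal maxima receives the gradient.

```python
import numpy as np

# Two flattened 2x2 windows: (N=1, C=1, H_out*W_out=2, H_k*W_k=4)
x_col = np.array([[[[0.1, 0.9, 0.3, 0.2],
                    [0.5, 0.5, 0.1, 0.0]]]])   # the second window has a tie

idx = np.argmax(x_col, axis=-1)        # (1, 1, 2): index of the max in each window
mask = np.eye(x_col.shape[-1])[idx]    # (1, 1, 2, 4): one-hot rows

grad_out = np.array([[[2.0, 3.0]]])    # upstream gradient, one value per window
grad_col = grad_out[..., None] * mask  # route each value to its argmax position
print(grad_col[0, 0])
# [[0. 2. 0. 0.]
#  [3. 0. 0. 0.]]
```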

python

# Simulate a binary image-classification task with
# convolution + batch normalization + max pooling + linear layers
model = Sequential(
    Conv2D(3, 1, kernel_size=(3, 3), stride=1, padding=1),
    BatchNorm2d(1),
    MaxPool2d(kernel_size=(2, 2), stride=2, padding=1),
    ReLU(),
    Conv2D(1, 3, kernel_size=(3, 3), stride=1, padding=1),
    BatchNorm2d(3),
    MaxPool2d(kernel_size=(2, 2), stride=1, padding=1),
    Flatten(),
    Linear(432, 100),
    ReLU(),
    Linear(100, 1),
    Sigmoid()
)

# Mean squared error loss
loss_fn = MSELoss()

# Optimizer
optimizer = Adam(params=model.parameters(), lr=1e-4)

python

# Train the model
for epoch in range(100):
    # Forward pass
    y_pred = model.forward(x)
    # Compute the loss and its gradient
    loss, grad = loss_fn(y_pred, y)
    # Backward pass
    model.backward(grad)
    # Update the parameters
    optimizer.step()
    # Reset the gradients
    optimizer.zero_grad()
    # Print the loss every 20 epochs
    if epoch % 20 == 0:
        print(loss)
print('Current accuracy:', ((y_pred >= 0.5) == y).mean())

0.3266376591441796
0.081577964205126
0.03259214618452535
0.020044786350449657
0.01540252224822
Current accuracy: 0.99
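The accuracy expression `((y_pred >= 0.5) == y).mean()` compares element by element, so it relies on `y_pred` and `y` having the same shape. The shapes of `x` and `y` are not shown in this section. The sketch below uses made-up arrays to illustrate what would happen if `y_pred` were `(N, 1)` while `y` were `(N,)`: broadcasting silently produces an `(N, N)` comparison and a misleading accuracy.

```python
import numpy as np

y_pred = np.array([[0.9], [0.2], [0.7], [0.4]])   # (4, 1), e.g. sigmoid outputs
y_flat = np.array([1, 0, 1, 0])                   # (4,), hypothetical labels

# Mismatched shapes broadcast to (4, 4): every prediction against every label
wrong = ((y_pred >= 0.5) == y_flat)
# Matching shapes compare each prediction with its own label
right = ((y_pred >= 0.5) == y_flat.reshape(-1, 1))

print(wrong.shape, wrong.mean())   # (4, 4) 0.5
print(right.shape, right.mean())   # (4, 1) 1.0
```

Asserting `y_pred.shape == y.shape` before computing the metric is a cheap way to catch this.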