Spring-AI

LangChain4j vs Spring AI

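| Dimension | Spring AI | LangChain4j |
|---|---|---|
| Stack coupling | Strongly tied to the Spring ecosystem | No framework dependency; usable standalone |
| Typical use case | Quickly wiring a single model into a Spring Boot application | Multi-model (dynamically selected model) platforms |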

Official site:

https://spring.io/projects/spring-ai#learn

Prerequisites

- Alibaba Cloud Bailian is currently the recommended provider: https://bailian.console.aliyun.com/

- JDK 17

- Spring Boot 3

Implementation

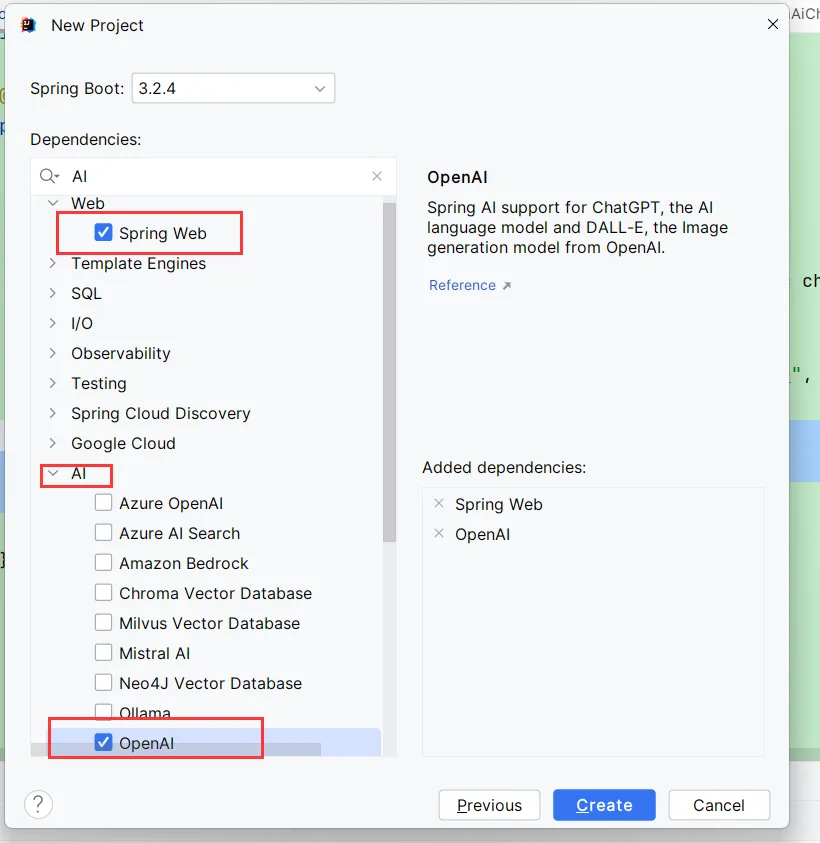

First create a Spring Boot project and add the Spring Web and OpenAI dependencies.

The generated pom.xml then contains the following dependencies:

xml

<dependencyManagement>

<dependencies>

<dependency>

<groupId>org.springframework.ai</groupId>

<artifactId>spring-ai-bom</artifactId>

<version>1.0.0-SNAPSHOT</version>

<type>pom</type>

<scope>import</scope>

</dependency>

</dependencies>

</dependencyManagement>

<dependencies>

<dependency>

<groupId>org.springframework.boot</groupId>

<artifactId>spring-boot-starter-web</artifactId>

</dependency>

<dependency>

<groupId>org.springframework.ai</groupId>

<artifactId>spring-ai-openai-spring-boot-starter</artifactId>

</dependency>

<dependency>

<groupId>org.springframework.boot</groupId>

<artifactId>spring-boot-starter-test</artifactId>

<scope>test</scope>

</dependency>

<dependency>

<groupId>org.projectlombok</groupId>

<artifactId>lombok</artifactId>

</dependency>

</dependencies>

Next, add the following settings to application.properties. I am using qwen3, Qwen's open-source model.

properties

#api密钥

spring.ai.openai.api-key=${ALI_API_KEY}

#中转接口地址

spring.ai.openai.base-url=https://dashscope.aliyuncs.com/compatible-mode

#文本模型名称

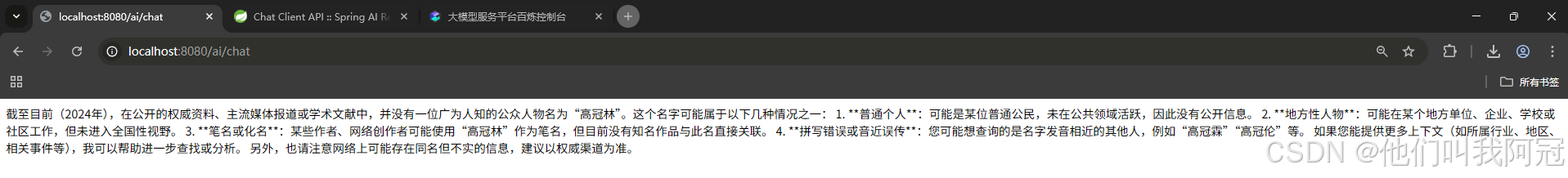

spring.ai.openai.chat.options.model=qwen3-next-80b-a3b-instruct然后我们在项目包下创建一个子包controller,创建一个MyController.class文件,开启我们和AI的第一段对话

java

package com.arguan.ai.springaiarguan.controller;

import org.springframework.ai.chat.client.ChatClient;

import org.springframework.web.bind.annotation.*;

@RestController

@RequestMapping("/ai")

public class MyController {

private final ChatClient chatClient;

public MyController(ChatClient.Builder chatClientBuilder) {

this.chatClient = chatClientBuilder.build();

}

@GetMapping("/chat")

String generation(@RequestParam(value = "message", defaultValue = "高冠林是谁") String message) {

// prompt(): start building the prompt

return this.chatClient.prompt()

// user(): the user's input

.user(message)

// call(): send the request to the model

.call()

// content(): the text of the model's reply

.content();

}

}

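Role presets

When we build a project with Spring AI, the AI usually plays one domain-specific role in it, such as a medical assistant or a legal expert. To make its answers targeted and professional, we preset a role for the model. The official documentation provides a way to do this: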

java

@Configuration

class Config {

@Bean

ChatClient chatClient(ChatClient.Builder builder) {

return builder.defaultSystem("You are a friendly chat bot that answers question in the voice of a {voice}")

.build();

}

}

Applied to this project:

java

package com.arguan.ai.springaiarguan.config;

import org.springframework.ai.chat.client.ChatClient;

import org.springframework.context.annotation.Bean;

import org.springframework.context.annotation.Configuration;

@Configuration

public class MyConfig {

@Bean

ChatClient chatClient(ChatClient.Builder builder) {

return builder.defaultSystem("你现在不是千问模型了,你是一名资深的医疗专家,你所在的医院有一名非常著名的医生叫阿冠")

.build();

}

}

The chat client is now a bean, so the controller no longer has to build it in its constructor; it can simply be injected.

java

package com.arguan.ai.springaiarguan.controller;

import org.springframework.ai.chat.client.ChatClient;

import org.springframework.beans.factory.annotation.Autowired;

import org.springframework.web.bind.annotation.*;

@RestController

@RequestMapping("/ai")

public class MyController {

@Autowired

private ChatClient chatClient;

@GetMapping("/chat")

String generation(@RequestParam(value = "message", defaultValue = "高冠林是谁") String message) {

// prompt(): start building the prompt

return this.chatClient.prompt()

// user(): the user's input

.user(message)

// call(): send the request to the model

.call()

// content(): the text of the model's reply

.content();

}

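}

This is the approach to use whenever the AI needs to be given a specific role.

Streaming responses

The chat endpoint above returns the whole answer at once: the page shows nothing until the model has finished. The chat products we use day to day, however, display the answer token by token. That is a streaming response, and the official documentation provides a way to do it as well: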

java

Flux<String> output = chatClient.prompt()

.user("Tell me a joke")

.stream()

.content();

Applied to the project:

java

@GetMapping("/stream")

Flux<String> stream(@RequestParam(value = "message", defaultValue = "阿冠是谁") String message) {

Flux<String> output = chatClient.prompt()

.user(message)

.stream()

.content();

return output;

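}

If we run this now, the Chinese text shows up garbled in the browser, because the response's Content-Type does not declare a charset. The fix is:

java

// declare the content type and charset through the produces attribute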

@GetMapping(value = "/stream", produces = "text/html;charset=UTF-8")

Flux<String> stream(@RequestParam(value = "message", defaultValue = "阿冠是谁") String message) {

Flux<String> output = chatClient.prompt()

.user(message)

.stream()

.content();

return output;

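}

The ChatModel component

Differences from ChatClient

| ChatClient | ChatModel |
|---|---|
| A generic chat component for LLMs; does not expose model-specific features | Can use the specific features of a particular model |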

java

@Autowired

private ChatModel chatModel;

@GetMapping("/chat/model")

String chatModel(@RequestParam(value = "message", defaultValue = "阿冠是谁") String message) {

ChatResponse response = chatModel.call(

new Prompt(

new UserMessage(message),// = message

OpenAiChatOptions.builder()

.temperature(0.4)

.build()

));

return response.getResult().getOutput().getText();

}

Reading the source shows that several ChatClient methods wrap ChatModel calls, turning the creation of a conversation into a fluent chain that is more convenient to use.
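To make that layering concrete, here is a minimal plain-Java sketch of a fluent wrapper around a lower-level call. It is not Spring AI's implementation: `SimpleModel`, `FluentClient` and `ECHO` are hypothetical names invented for the illustration, and messages are reduced to plain strings.

```java
import java.util.ArrayList;
import java.util.List;

final class FluentClientDemo {

    // Hypothetical low-level component: receives the full message list, returns the reply text.
    interface SimpleModel {
        String call(List<String> messages);
    }

    // Hypothetical fluent wrapper: collects messages step by step, then delegates to the model.
    static final class FluentClient {
        private final SimpleModel model;
        private final List<String> messages = new ArrayList<>();

        FluentClient(SimpleModel model) {
            this.model = model;
        }

        FluentClient system(String text) {
            messages.add("system: " + text);
            return this; // returning this is what makes chaining possible
        }

        FluentClient user(String text) {
            messages.add("user: " + text);
            return this;
        }

        String call() {
            return model.call(List.copyOf(messages));
        }
    }

    // Stand-in model that simply echoes what it was given.
    static final SimpleModel ECHO = messages -> String.join(" | ", messages);

    static String ask(String system, String user) {
        return new FluentClient(ECHO).system(system).user(user).call();
    }
}
```

The caller only sees the chain `system(...).user(...).call()`; assembling the message list and invoking the model stay hidden inside the wrapper, which is the convenience the paragraph above describes.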

Text-to-image

Code from the official documentation:

java

ImageResponse response = openaiImageModel.call(

new ImagePrompt("A light cream colored mini golden doodle",

OpenAiImageOptions.builder()

.quality("hd")

.N(4)

.height(1024)

.width(1024).build())

);

Applied to the project:

java

@Autowired

private OpenAiImageModel openaiImageModel;

@GetMapping("/img")

String generateImg(@RequestParam(value = "message", defaultValue = "画个猫") String message) {

ImageResponse response = openaiImageModel.call(

new ImagePrompt(

message,

OpenAiImageOptions.builder()

// image quality

.quality("hd")

// number of images

.N(4)

// image height

.height(1024)

// image width

.width(1024).build())

);

return response.getResult().getOutput().getUrl();

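}

Because I am using Alibaba Bailian models and the OpenAI-compatible endpoint does not support image generation, I did not get this working.

Text-to-speech

Code from the official documentation: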

java

OpenAiAudioSpeechOptions speechOptions = OpenAiAudioSpeechOptions.builder()

.model("gpt-4o-mini-tts")

.voice(OpenAiAudioApi.SpeechRequest.Voice.ALLOY)

.responseFormat(OpenAiAudioApi.SpeechRequest.AudioResponseFormat.MP3)

.speed(1.0)

.build();

TextToSpeechPrompt speechPrompt = new TextToSpeechPrompt("Hello, this is a text-to-speech example.", speechOptions);

TextToSpeechResponse response = openAiAudioSpeechModel.call(speechPrompt);

Applied to the project:

java

@Autowired

private OpenAiAudioSpeechModel openAiAudioSpeechModel;

@GetMapping("/audio")

String generateAudio() {

// text-to-speech options

OpenAiAudioSpeechOptions speechOptions = OpenAiAudioSpeechOptions.builder()

.model("gpt-4o-mini-tts")

.voice(OpenAiAudioApi.SpeechRequest.Voice.ALLOY)

.responseFormat(OpenAiAudioApi.SpeechRequest.AudioResponseFormat.MP3)

.speed(1.0)

.build();

TextToSpeechPrompt speechPrompt = new TextToSpeechPrompt("Hello, this is a text-to-speech example.", speechOptions);

TextToSpeechResponse response = openAiAudioSpeechModel.call(speechPrompt);

byte[] audioData = response.getResult().getOutput();

writeByteArrayToMP3(audioData, System.getProperty("user.dir"));

return "ok";

}

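// filePath is a directory; File(parent, child) adds the separator that plain string concatenation would omit

public static void writeByteArrayToMP3(byte[] byteArray, String filePath) {

try (FileOutputStream fos = new FileOutputStream(new File(filePath, "arguan.mp3"))) {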

fos.write(byteArray);

} catch (IOException e) {

e.printStackTrace();

}

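}

Again, because I am using Alibaba Bailian models and the relay endpoint does not support text-to-speech, I did not get this working.

Speech-to-text (transcription)

Code from the official documentation: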

java

var openAiAudioApi = new OpenAiAudioApi(System.getenv("OPENAI_API_KEY"));

var openAiAudioTranscriptionModel = new OpenAiAudioTranscriptionModel(this.openAiAudioApi);

var transcriptionOptions = OpenAiAudioTranscriptionOptions.builder()

.responseFormat(TranscriptResponseFormat.TEXT)

.temperature(0f)

.build();

var audioFile = new FileSystemResource("/path/to/your/resource/speech/jfk.flac");

AudioTranscriptionPrompt transcriptionRequest = new AudioTranscriptionPrompt(this.audioFile, this.transcriptionOptions);

AudioTranscriptionResponse response = openAiTranscriptionModel.call(this.transcriptionRequest);

Applied to the project:

java

@Autowired

private OpenAiAudioTranscriptionModel openAiTranscriptionModel;

@GetMapping("/audio2")

String generateAudio2() {

// optional settings for the transcription

var transcriptionOptions = OpenAiAudioTranscriptionOptions.builder()

// response format

.responseFormat(OpenAiAudioApi.TranscriptResponseFormat.TEXT)

// stay faithful to the audio; no creativity needed

.temperature(0f)

.build();

var audioFile = new ClassPathResource("audio.mp3");

AudioTranscriptionPrompt transcriptionRequest = new AudioTranscriptionPrompt(audioFile, transcriptionOptions);

AudioTranscriptionResponse response = openAiTranscriptionModel.call(transcriptionRequest);

return response.getResult().getOutput();

}

Multimodality

Applied to the project:

java

@GetMapping("mutil")

public String mutilModel(@RequestParam(value = "message", defaultValue = "你从这个图片中看到了什么") String message) throws IOException {

// raw bytes of the image

byte[] imageData = new ClassPathResource("/test.png").getContentAsByteArray();

// user message carrying both the text and the image

var userMessage = new UserMessage(

message, // content

List.of(new Media(MimeTypeUtils.IMAGE_PNG, imageData)));// media

ChatResponse response = chatModel.call(new Prompt(userMessage,

OpenAiChatOptions.builder()

.model(OpenAiApi.ChatModel.GPT_5_CHAT_LATEST.getValue())

.build()));

return response.getResult().getOutput().getText();

}

Function Call (Tool Calling)

Code from the official documentation:

java

import java.time.LocalDateTime;

import org.springframework.ai.tool.annotation.Tool;

import org.springframework.context.i18n.LocaleContextHolder;

class DateTimeTools {

@Tool(description = "Get the current date and time in the user's timezone")

String getCurrentDateTime() {

return LocalDateTime.now().atZone(LocaleContextHolder.getTimeZone().toZoneId()).toString();

}

}

Applied to the project

The tool class:

java

package com.arguan.ai.springaiarguan.config;

import org.springframework.ai.tool.annotation.Tool;

public class LocationNameTools {

@Tool(description = "某个地方有多少个叫什么名字的人")

public String getName() {

return "十个";

}

}

The controller:

java

package com.arguan.ai.springaiarguan.controller;

import com.arguan.ai.springaiarguan.config.LocationNameTools;

import com.arguan.ai.springaiarguan.config.MyConfig;

import org.springframework.ai.chat.client.ChatClient;

import org.springframework.ai.chat.model.ChatModel;

import org.springframework.ai.chat.prompt.Prompt;

import org.springframework.beans.factory.annotation.Autowired;

import org.springframework.web.bind.annotation.GetMapping;

import org.springframework.web.bind.annotation.RequestMapping;

import org.springframework.web.bind.annotation.RequestParam;

import org.springframework.web.bind.annotation.RestController;

@RestController

@RequestMapping("/tool")

public class FunctionCallController {

@Autowired

ChatModel chatModel;

@GetMapping("/call")

public String call(@RequestParam(value = "message", defaultValue = "哈尔滨有多少个叫阿冠的人") String message) {

String response = ChatClient.create(chatModel)

.prompt(message)

.tools(new LocationNameTools())

.call()

.content();

return response;

}

}

SpringAI-1.0

First create a parent project named spring-new-ai-arguan, then create a module named quick-start inside it, and edit both pom files.

Parent pom

xml

<?xml version="1.0" encoding="UTF-8"?>

<project xmlns="http://maven.apache.org/POM/4.0.0" xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"

xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 https://maven.apache.org/xsd/maven-4.0.0.xsd">

<modelVersion>4.0.0</modelVersion>

<parent>

<groupId>org.springframework.boot</groupId>

<artifactId>spring-boot-starter-parent</artifactId>

<version>3.5.13</version>

<relativePath/> <!-- lookup parent from repository -->

</parent>

<groupId>com.arguan.ai</groupId>

<artifactId>string-new-ai-arguan</artifactId>

<version>0.0.1-SNAPSHOT</version>

<!-- packaging must be set to pom for a parent project, otherwise Maven reports an error -->

<packaging>pom</packaging>

<name>string-new-ai-arguan</name>

<description>string-new-ai-arguan</description>

<modules>

<module>quick-start</module>

</modules>

<properties>

<java.version>17</java.version>

<spring-ai.version>1.1.4</spring-ai.version>

</properties>

<dependencies>

<dependency>

<groupId>org.springframework.boot</groupId>

<artifactId>spring-boot-starter-web</artifactId>

</dependency>

<dependency>

<groupId>org.springframework.ai</groupId>

<artifactId>spring-ai-starter-model-openai</artifactId>

</dependency>

<dependency>

<groupId>org.springframework.boot</groupId>

<artifactId>spring-boot-starter-test</artifactId>

<scope>test</scope>

</dependency>

</dependencies>

<dependencyManagement>

<dependencies>

<!-- Spring AI BOM -->

<dependency>

<groupId>org.springframework.ai</groupId>

<artifactId>spring-ai-bom</artifactId>

<version>${spring-ai.version}</version>

<type>pom</type>

<scope>import</scope>

</dependency>

</dependencies>

</dependencyManagement>

<build>

<plugins>

<plugin>

<groupId>org.springframework.boot</groupId>

<artifactId>spring-boot-maven-plugin</artifactId>

</plugin>

</plugins>

</build>

</project>

Child module pom

xml

<?xml version="1.0" encoding="UTF-8"?>

<project xmlns="http://maven.apache.org/POM/4.0.0" xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"

xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 https://maven.apache.org/xsd/maven-4.0.0.xsd">

<modelVersion>4.0.0</modelVersion>

<parent>

<!-- the parent is now our own parent project -->

<groupId>com.arguan.ai</groupId>

<artifactId>string-new-ai-arguan</artifactId>

<version>0.0.1-SNAPSHOT</version>

<!-- relativePath points to ../pom.xml so Maven resolves the parent from the parent directory -->

<relativePath>../pom.xml</relativePath>

</parent>

<groupId>com.arguan.ai</groupId>

<artifactId>quick-start</artifactId>

<version>0.0.1-SNAPSHOT</version>

<name>quick-start</name>

<description>quick-start</description>

<properties>

<java.version>17</java.version>

</properties>

<dependencies>

<!-- DeepSeek model starter -->

<dependency>

<groupId>org.springframework.ai</groupId>

<artifactId>spring-ai-starter-model-deepseek</artifactId>

</dependency>

<!-- the parent pom already declares the Spring AI and web dependencies, so they are not repeated here -->

</dependencies>

<build>

<plugins>

<plugin>

<groupId>org.springframework.boot</groupId>

<artifactId>spring-boot-maven-plugin</artifactId>

</plugin>

</plugins>

</build>

</project>

Next, configure DeepSeek in the child module's configuration file.

properties

spring.application.name=quick-start

#DeepSeek API Key

spring.ai.deepseek.api-key=${DEEP_SEEK_API_KEY}

#deepseek对话模型

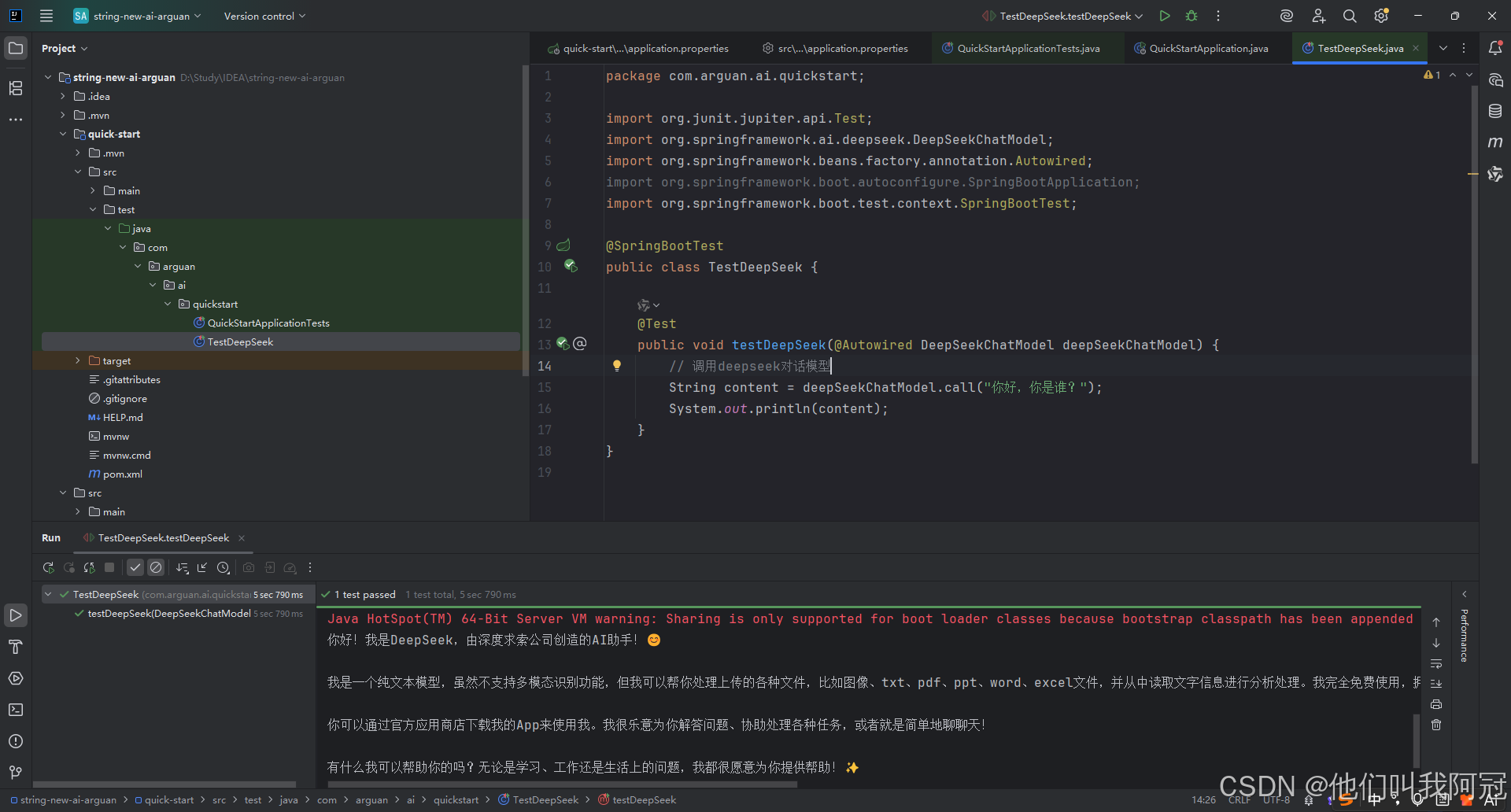

spring.ai.openai.chat.options.model=deepseek-chathat配置完成后,我们去test文件夹下新建一个测试类TestDeepSeek,去测试deepseek的模型

java

package com.arguan.ai.quickstart;

import org.junit.jupiter.api.Test;

import org.springframework.ai.deepseek.DeepSeekChatModel;

import org.springframework.beans.factory.annotation.Autowired;

import org.springframework.boot.autoconfigure.SpringBootApplication;

import org.springframework.boot.test.context.SpringBootTest;

@SpringBootTest

public class TestDeepSeek {

@Test

public void testDeepSeek(@Autowired DeepSeekChatModel deepSeekChatModel) {

// call the DeepSeek chat model

String content = deepSeekChatModel.call("你好,你是谁?");

System.out.println(content);

}

}

The result:

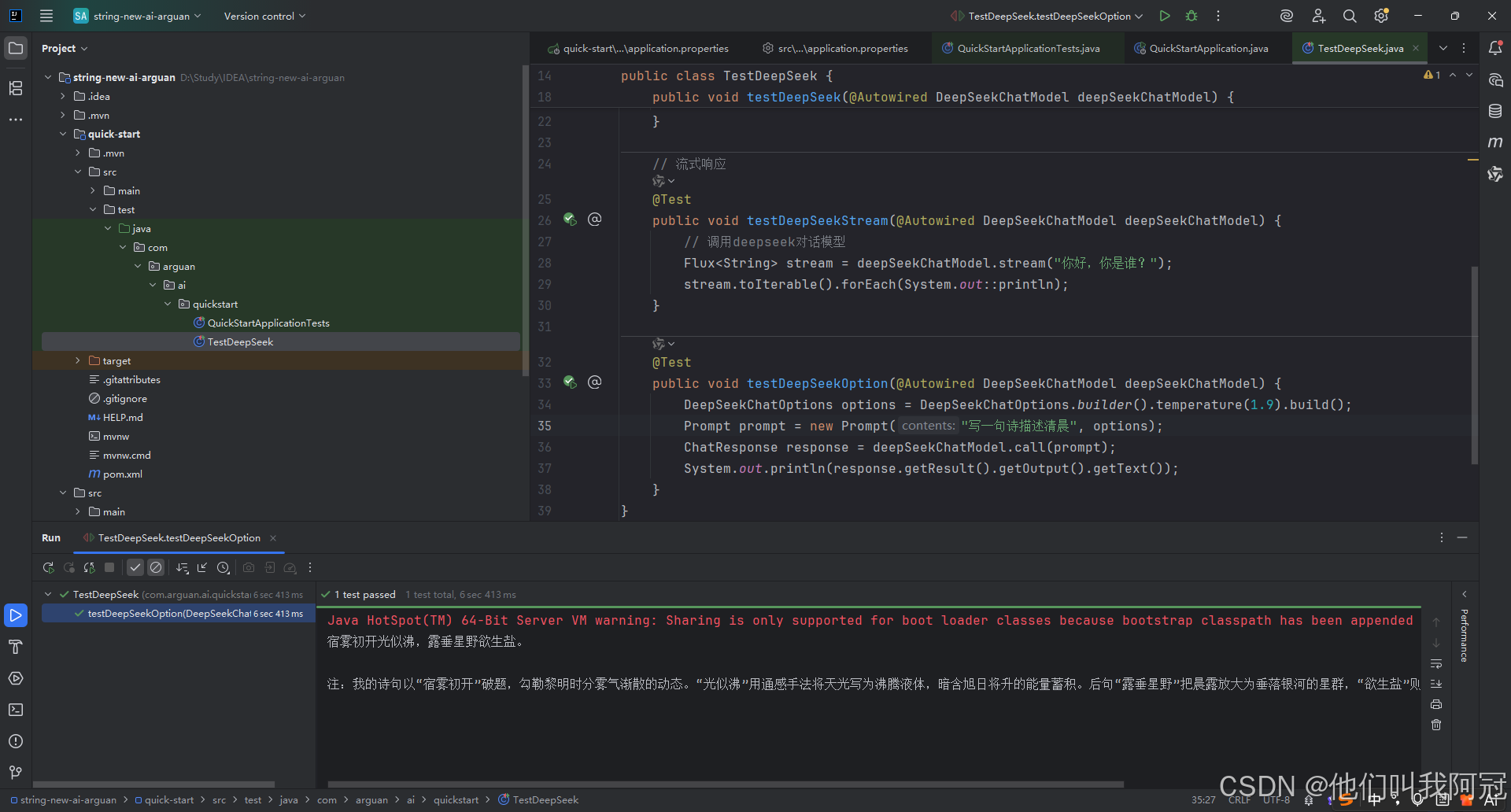

Streaming responses

java

// streaming response

@Test

public void testDeepSeekStream(@Autowired DeepSeekChatModel deepSeekChatModel) {

// call the DeepSeek chat model

Flux<String> stream = deepSeekChatModel.stream("你好,你是谁?");

stream.toIterable().forEach(System.out::println);

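}

Options

- temperature: a floating-point value from 0 to 2

  - Higher values make the output more random and creative.

  - Lower values make it more conservative and deterministic.

  - You can also tell the model in the prompt to stay objective, conservative and fact-based. That is like asking it verbally, whereas temperature sets the model's "personality".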
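How temperature changes the output can be made concrete with the usual softmax-with-temperature formulation. The sketch below is plain Java and makes no claim about how any particular provider implements sampling; `TemperatureDemo` and `softmax` are illustrative names, and the logits in the usage note are made-up numbers.

```java
final class TemperatureDemo {

    // Turns raw scores (logits) into probabilities after dividing them by the temperature.
    // This sketch rejects 0; real APIs may treat 0 as "always pick the top token".
    static double[] softmax(double[] logits, double temperature) {
        if (temperature <= 0) {
            throw new IllegalArgumentException("temperature must be > 0");
        }
        // Subtract the maximum scaled logit for numerical stability.
        double max = Double.NEGATIVE_INFINITY;
        for (double logit : logits) {
            max = Math.max(max, logit / temperature);
        }
        double sum = 0;
        double[] probabilities = new double[logits.length];
        for (int i = 0; i < logits.length; i++) {
            probabilities[i] = Math.exp(logits[i] / temperature - max);
            sum += probabilities[i];
        }
        for (int i = 0; i < probabilities.length; i++) {
            probabilities[i] /= sum;
        }
        return probabilities;
    }
}
```

With logits {2.0, 1.0, 0.0}, a temperature of 0.2 puts almost all of the probability on the first token, while 1.9 spreads it much more evenly across the three, which is why high values read as more "creative" and low values as more conservative.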

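maxTokens

- maxTokens caps the number of tokens the model may generate (roughly, an upper bound on output length). The default tends to be low.

- For concise replies, scores, lists or short summaries, a small value (for example 10 to 50) is recommended.

- It keeps long conversations from producing irrelevant content or running up token costs.

- If generated content is frequently cut off, raise maxTokens.

stop

- Cuts the output off at content you do not want: generation ends as soon as one of the listed sequences appears.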
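The effect of a stop sequence can be pictured with a small client-side simulation. In practice the provider stops generating on its side; the sketch below only mimics the visible result, and `StopSequenceDemo` and `applyStop` are hypothetical names.

```java
import java.util.List;

final class StopSequenceDemo {

    // Cuts the text at the earliest occurrence of any stop sequence.
    // The stop sequence itself is not part of the result.
    static String applyStop(String generated, List<String> stops) {
        int cut = generated.length();
        for (String stop : stops) {
            if (stop.isEmpty()) {
                continue; // an empty stop sequence would match everywhere, so skip it
            }
            int index = generated.indexOf(stop);
            if (index >= 0 && index < cut) {
                cut = index;
            }
        }
        return generated.substring(0, cut);
    }
}
```

For a line of verse with a comma in the middle and a comma as the stop sequence, only the first half survives. Combined with a small maxTokens the output can be even shorter, because whichever limit is reached first ends the generation.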

java

@Test

public void testDeepSeekOption(@Autowired DeepSeekChatModel deepSeekChatModel) {

DeepSeekChatOptions options = DeepSeekChatOptions.builder()

.maxTokens(5)

.stop(Arrays.asList(","))

.temperature(1.9)

.build();

Prompt prompt = new Prompt("写一句诗描述清晨", options);

ChatResponse response = deepSeekChatModel.call(prompt);

System.out.println(response.getResult().getOutput().getText());

}

Alibaba Bailian models

Add the following to the parent project (the BOM dependency goes inside dependencyManagement):

xml

<properties>

<java.version>17</java.version>

<spring-ai.version>1.1.4</spring-ai.version>

<spring-ai-alibaba.version>1.0.0.2</spring-ai-alibaba.version>

</properties>

<dependency>

<groupId>com.alibaba.cloud.ai</groupId>

<artifactId>spring-ai-alibaba-bom</artifactId>

<version>${spring-ai-alibaba.version}</version>

<type>pom</type>

<scope>import</scope>

</dependency>

Add the following dependency to the child module:

xml

<!-- Bailian (DashScope) model starter -->

<dependency>

<groupId>com.alibaba.cloud.ai</groupId>

<artifactId>spring-ai-alibaba-starter-dashscope</artifactId>

</dependency>

Then set the model information in the configuration file:

properties

# Alibaba Bailian

spring.ai.dashscope.api-key=${ALI_API_KEY}

spring.ai.dashscope.chat.options.model=qwen-plus

That completes the Bailian model configuration. Next, create a test class TestAli to chat with the Bailian model.

java

package com.arguan.ai.quickstart;

import com.alibaba.cloud.ai.dashscope.chat.DashScopeChatModel;

import com.alibaba.cloud.ai.dashscope.image.DashScopeImageModel;

import com.alibaba.cloud.ai.dashscope.image.DashScopeImageOptions;

import org.junit.jupiter.api.Test;

import org.springframework.ai.image.ImagePrompt;

import org.springframework.ai.image.ImageResponse;

import org.springframework.beans.factory.annotation.Autowired;

import org.springframework.boot.test.context.SpringBootTest;

@SpringBootTest

public class TestAli {

@Test

public void testQwen(@Autowired DashScopeChatModel chatModel) {

String content = chatModel.call("你好,你是谁");

System.out.println(content);

}

}

Text-to-image

java

@Test

public void text2Img(@Autowired DashScopeImageModel imageModel) {

DashScopeImageOptions imageOptions = DashScopeImageOptions.builder()

.withModel("wanx2.1-t2i-turbo")

.build();

ImageResponse imageResponse = imageModel.call(

new ImagePrompt(

"程序员阿冠",

imageOptions));

String imageUrl = imageResponse.getResult().getOutput().getUrl();

// image URL

System.out.println(imageUrl);

// image as Base64

// imageResponse.getResult().getOutput().getB64Json();

/*

Stream the image back as a file response:

InputStream in = url.openStream();

response.setHeader("Content-Type", MediaType.IMAGE_PNG_VALUE);

response.getOutputStream().write(in.readAllBytes());

response.getOutputStream().flush();*/

}

Text-to-speech

java

@Test

public void testText2Audio(@Autowired DashScopeSpeechSynthesisModel speechSynthesisModel) throws IOException {

DashScopeSpeechSynthesisOptions options = DashScopeSpeechSynthesisOptions.builder()

.voice("longyingtian") // voice

//.speed() // speaking rate

.model("cosyvoice-v2") // model

//.responseFormat(DashScopeSpeechSynthesisApi.ResponseFormat.MP3)

.build();

SpeechSynthesisResponse response = speechSynthesisModel.call(

new SpeechSynthesisPrompt("大家好, 我是程序员阿冠。", options)

);

File file = new File(System.getProperty("user.dir") + "/output.mp3");

try (FileOutputStream fos = new FileOutputStream(file)) {

ByteBuffer byteBuffer = response.getResult().getOutput().getAudio();

fos.write(byteBuffer.array());

} // the method already declares IOException, so no catch-and-rethrow is needed

}

Speech-to-text (transcription)

java

private static final String AUDIO_RESOURCES_URL = "https://dashscope.oss-cn-beijing.aliyuncs.com/samples/audio/paraformer/hello_world_female2.wav";

@Test

public void testAudio2Text(@Autowired DashScopeAudioTranscriptionModel transcriptionModel) throws MalformedURLException {

DashScopeAudioTranscriptionOptions transcriptionOptions = DashScopeAudioTranscriptionOptions.builder()

//.withModel() // model

.build();

AudioTranscriptionPrompt prompt = new AudioTranscriptionPrompt(new UrlResource(AUDIO_RESOURCES_URL), transcriptionOptions);

AudioTranscriptionResponse response = transcriptionModel.call(prompt);

System.out.println(response.getResult().getOutput());

}

Multimodality

java

@Test

public void testMultimodal(@Autowired DashScopeChatModel dashScopeChatModel

) throws MalformedURLException {

// image input: a JPEG loaded from the classpath

var imageFile = new ClassPathResource("/files/blue.jpg");

Media media = new Media(MimeTypeUtils.IMAGE_JPEG, imageFile);

DashScopeChatOptions options = DashScopeChatOptions.builder()

.withMultiModel(true)

.withModel("qwen-vl-max-latest").build();

Prompt prompt = Prompt.builder().chatOptions(options)

.messages(UserMessage.builder().media(media)

.text("识别图片").build())

.build();

ChatResponse response = dashScopeChatModel.call(prompt);

System.out.println(response.getResult().getOutput().getText());

}

Video generation

First add the dependency:

xml

<dependency>

<groupId>com.alibaba</groupId>

<artifactId>dashscope-sdk-java</artifactId>

<!-- check for the latest version at https://mvnrepository.com/artifact/com.alibaba/dashscope-sdk-java -->

<version>2.22.15</version>

</dependency>

Then add the following method:

java

@Test

public void text2Video() throws ApiException, NoApiKeyException, InputRequiredException {

VideoSynthesis vs = new VideoSynthesis();

VideoSynthesisParam param =

VideoSynthesisParam.builder()

.model("wanx2.1-t2v-turbo")

.prompt("一只小猫在月光下奔跑")

.size("1280*720")

.apiKey(System.getenv("ALI_API_KEY"))

.build();

System.out.println("please wait...");

VideoSynthesisResult result = vs.call(param);

System.out.println(result.getOutput().getVideoUrl());

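}

Ollama

First download a local model through Ollama. My GPU has little video memory, so I chose qwen3:0.6b: run ollama run qwen3:0.6b in a cmd window. Once the download finishes, switch to IDEA to configure Ollama, starting with the dependency in the child module: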

xml

<!-- ollama-->

<dependency>

<groupId>org.springframework.ai</groupId>

<artifactId>spring-ai-starter-model-ollama</artifactId>

</dependency>

Then add the configuration:

properties

# Ollama

spring.ai.ollama.chat.model=qwen3:0.6b

With the configuration in place, create a test class TestOllama.

java

package com.arguan.ai.quickstart;

import org.junit.jupiter.api.Test;

import org.springframework.ai.ollama.OllamaChatModel;

import org.springframework.beans.factory.annotation.Autowired;

import org.springframework.boot.test.context.SpringBootTest;

@SpringBootTest

public class TestOllama {

@Test

public void testOllama(@Autowired OllamaChatModel chatModel) {

String content = chatModel.call("你是谁");

System.out.println(content);

}

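}

Disabling thinking

- Append the "/no_think" instruction to the end of the prompt.

- Alternatively, after starting the local model in a terminal, enter /set nothink to turn thinking mode off.

The second approach does not switch thinking off for the local model when it is called from IDEA, though. I am on Spring AI 1.0, and the think option on OllamaChatOptions, which can disable thinking mode, was only added after 1.0, so using the latest Spring AI version is recommended.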

Streaming output

java

@Test

public void testStream(@Autowired OllamaChatModel chatModel) {

Flux<String> stream = chatModel.stream("你是谁/no_think");

// block and print each chunk as it arrives

stream.toIterable().forEach(System.out::println);

}

Multimodality

This requires a multimodal model installed in Ollama; I installed gemma3.

java

@Test

public void testMultimodality(@Autowired OllamaChatModel ollamaChatModel) {

var imageResource = new ClassPathResource("/files/blue.jpg");

OllamaOptions ollamaOptions = OllamaOptions.builder()

.model("gemma3")

.build();

Media media = new Media(MimeTypeUtils.IMAGE_JPEG, imageResource); // blue.jpg is a JPEG, so declare it as such

ChatResponse response = ollamaChatModel.call(

new Prompt(

UserMessage.builder().media(media)

.text("识别图片").build(),

ollamaOptions

)

);

System.out.println(response.getResult().getOutput().getText());

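}

ChatClient

- ChatClient works with many different LLMs, which makes it both easy to use and general-purpose.

First create another child module named chat-client that depends only on Alibaba Bailian, then create a TestChatClient test class.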

java

package com.arguan.ai.chatclient;

import org.junit.jupiter.api.Test;

import org.springframework.ai.chat.client.ChatClient;

import org.springframework.beans.factory.annotation.Autowired;

import org.springframework.boot.test.context.SpringBootTest;

import reactor.core.publisher.Flux;

@SpringBootTest

public class TestChatClient {

// blocking output

@Test

public void testChatClient(@Autowired ChatClient.Builder chatClientBuilder) {

ChatClient chatClient = chatClientBuilder.build();

String content = chatClient.prompt()

.user("你好")

.call()

.content();

System.out.println(content);

}

// streaming output

@Test

public void testStreamChatClient(@Autowired ChatClient.Builder chatClientBuilder) {

ChatClient chatClient = chatClientBuilder.build();

Flux<String> content = chatClient.prompt()

.user("你好")

.stream()

.content();

content.toIterable().forEach(System.out::println);

}

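}

Hands-on: a multi-platform, multi-model chat service with dynamic configuration

Keep using the chat-client module and add the DeepSeek and Ollama model dependencies, then create a controller class MorePlatformAndModelController.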

java

package com.arguan.ai.chatclient;

import com.alibaba.cloud.ai.dashscope.chat.DashScopeChatModel;

import com.arguan.ai.chatclient.pojo.MorePlatformAndModelOptions;

import org.springframework.ai.chat.client.ChatClient;

import org.springframework.ai.chat.model.ChatModel;

import org.springframework.ai.chat.prompt.ChatOptions;

import org.springframework.ai.deepseek.DeepSeekChatModel;

import org.springframework.ai.ollama.OllamaChatModel;

import org.springframework.ui.Model;

import org.springframework.web.bind.annotation.RequestMapping;

import org.springframework.web.bind.annotation.RestController;

import reactor.core.publisher.Flux;

import java.util.HashMap;

@RestController

public class MorePlatformAndModelController {

// map from platform name to the corresponding ChatModel instance

HashMap<String, ChatModel> platforms = new HashMap<>();

public MorePlatformAndModelController(

OllamaChatModel ollamaChatModel,

DeepSeekChatModel deepSeekChatModel,

DashScopeChatModel dashScopeChatModel

) {

// register the model instances

platforms.put("ollama", ollamaChatModel);

platforms.put("deepseek", deepSeekChatModel);

platforms.put("dashscope", dashScopeChatModel);

}

@RequestMapping("/chat")

public Flux<String> chat(String message, MorePlatformAndModelOptions options) {

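// look up the model for the requested platform

String platform = options.getPlatform();

ChatModel chatModel = platforms.get(platform);

// create a builder for a client bound to that model

ChatClient.Builder builder = ChatClient.builder(chatModel);

// build the client with the requested default options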

ChatClient chatClient = builder.defaultOptions(

ChatOptions.builder()

.temperature(options.getTemperature())

.model(options.getModel())

.build())

.build();

Flux<String> content = chatClient.prompt()

.user(message)

.stream()

.content();

return content;

}

}

MorePlatformAndModelOptions

java

package com.arguan.ai.chatclient.pojo;

import lombok.Data;

@Data

public class MorePlatformAndModelOptions {

private String platform;

private String model;

private Double temperature;

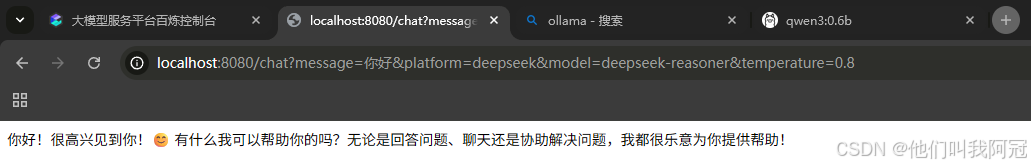

}我们启动SpringBoot,在网址中输入http://localhost:8080/chat?message=你好\&platform=deepseek\&model=deepseek-reasoner\&temperature=0.8,得到如下回复:

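Prompts

Because this module now includes three model dependencies, auto-injecting ChatClient.Builder fails: Spring cannot tell which model to use. I therefore inject one specific model and create the builder from it.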

java

package com.arguan.ai.chatclient;

import com.alibaba.cloud.ai.dashscope.chat.DashScopeChatModel;

import org.junit.jupiter.api.Test;

import org.springframework.ai.chat.client.ChatClient;

import org.springframework.ai.chat.prompt.ChatOptions;

import org.springframework.beans.factory.annotation.Autowired;

import org.springframework.boot.test.context.SpringBootTest;

@SpringBootTest

public class TestPrompt {

@Test

public void testSystemPrompt(@Autowired DashScopeChatModel chatModel) {

// set the system prompt for this client

// a role preset usually covers: who you are, what you can do, what to watch out for, and how to go about it

ChatClient.Builder builder = ChatClient.builder(chatModel);

ChatClient chatClient = builder.defaultSystem("""

角色说明:

你是一名高级Java开发程序设计师

""").build();

System.out.println(chatClient.prompt()

// .system() would set a system prompt for this request only

.user("你好").call().content());

}

}

Prompt templates

Sometimes the content of the system prompt cannot be hard-coded. To pass parameters in, do the following:

java

package com.arguan.ai.chatclient;

import com.alibaba.cloud.ai.dashscope.chat.DashScopeChatModel;

import org.junit.jupiter.api.Test;

import org.springframework.ai.chat.client.ChatClient;

import org.springframework.ai.chat.prompt.ChatOptions;

import org.springframework.beans.factory.annotation.Autowired;

import org.springframework.boot.test.context.SpringBootTest;

@SpringBootTest

public class TestPrompt {

@Test

public void testSystemPrompt(@Autowired DashScopeChatModel chatModel) {

// set the system prompt for this client

// a role preset usually covers: who you are, what you can do, what to watch out for, and how to go about it

ChatClient.Builder builder = ChatClient.builder(chatModel);

ChatClient chatClient = builder.defaultSystem("""

角色说明:

你是一名高级Java开发程序设计师

姓名:{name} 年龄:{age} 性别:{sex}

""").build();

System.out.println(chatClient.prompt()

// .system() on the request: here it supplies the values for the placeholders in the default system prompt

.system(p -> p.param("name", "张三")

.param("age", 18).

param("sex", "男"))

.user("你好")

.call()

.content());

}

}

This lets us fill in the missing parts of the system prompt dynamically at request time.
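What the placeholder substitution amounts to can be sketched in a few lines of plain Java. This is a simplified stand-in, not Spring AI's template engine: `TemplateDemo` and `render` are hypothetical names, and only simple `{name}`-style placeholders are handled.

```java
import java.util.Map;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

final class TemplateDemo {

    // Matches placeholders such as {name} or {age}.
    private static final Pattern PLACEHOLDER = Pattern.compile("\\{(\\w+)\\}");

    // Replaces every placeholder with the matching parameter value.
    static String render(String template, Map<String, Object> params) {
        Matcher matcher = PLACEHOLDER.matcher(template);
        StringBuilder result = new StringBuilder();
        while (matcher.find()) {
            String key = matcher.group(1);
            if (!params.containsKey(key)) {
                throw new IllegalArgumentException("missing parameter: " + key);
            }
            // quoteReplacement keeps characters like $ in the value from being interpreted
            matcher.appendReplacement(result, Matcher.quoteReplacement(String.valueOf(params.get(key))));
        }
        matcher.appendTail(result);
        return result.toString();
    }
}
```

Failing loudly on a missing parameter is a deliberate choice in this sketch: a system prompt that still contains a literal placeholder would otherwise reach the model unnoticed.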

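Pseudo system prompts

Some developers do not set a system prompt at all; they put everything in the user prompt and pass the actual message in as a parameter. It looks like this: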

java

@Test

public void testSystemPrompt1(@Autowired DashScopeChatModel chatModel) {

// no system prompt is set; the role description goes into the user prompt instead

ChatClient.Builder builder = ChatClient.builder(chatModel);

ChatClient chatClient = builder.build();

System.out.println(chatClient.prompt()

// the role text and the {question} parameter both live in the user message

.user(u -> u.text("""

角色说明:

你是一名高级Java开发程序设计师

问题:{question}

""").param("question", "如何使用redis解决高并发"))

.call()

.content());

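}

Prompt-writing tips

Basic techniques

- Summarization: condense a large amount of text into a short summary that captures the key points and main ideas.

- Question answering: answer the user's question from the text provided.

- Text classification: systematically sort text into predefined categories or groups.

- Conversation: create interactive dialogue.

- Code generation: produce code snippets that meet the user's specific requirements.

Advanced techniques

- Be explicit: avoid emotional wording and describe the task clearly. Treat the model like a primary-school pupil: the clearer the instructions, the more precisely they are carried out.

- Use clear formatting: Markdown works well.

Logging conversations with an Advisor

First set the log level for the advisor package:

properties

logging.level.org.springframework.ai.chat.client.advisor=DEBUG

Create the test class TestAdvisor: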

java

package com.arguan.ai.chatclient;

import com.alibaba.cloud.ai.dashscope.chat.DashScopeChatModel;

import org.junit.jupiter.api.Test;

import org.springframework.ai.chat.client.ChatClient;

import org.springframework.ai.chat.client.advisor.SimpleLoggerAdvisor;

import org.springframework.beans.factory.annotation.Autowired;

import org.springframework.boot.test.context.SpringBootTest;

@SpringBootTest

public class TestAdvisor {

@Test

public void testAdvisor(@Autowired DashScopeChatModel chatModel) {

ChatClient.Builder builder = ChatClient.builder(chatModel);

ChatClient chatClient = builder

.defaultAdvisors(new SimpleLoggerAdvisor())

.build();

String content = chatClient.prompt()

.user("你好")

// .advisors()

.call()

.content();

System.out.println(content);

}

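}

After running the test, the console prints the request and response log entries alongside the AI's reply.

Blocking sensitive words with an Advisor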

java

@Test

public void testAdvisor(@Autowired DashScopeChatModel chatModel) {

ChatClient.Builder builder = ChatClient.builder(chatModel);

ChatClient chatClient = builder

.defaultAdvisors(new SimpleLoggerAdvisor(),

new SafeGuardAdvisor(List.of("阿冠")))

.build();

String content = chatClient.prompt()

.user("阿冠帅不帅")

// .advisors()

.call()

.content();

System.out.println(content);

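}

A custom advisor for re-reading (Re2)

- The core idea of re-reading is to make the LLM look at the input question a second time.

The custom advisor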

java

package com.arguan.ai.chatclient.util;

import org.springframework.ai.chat.client.ChatClientRequest;

import org.springframework.ai.chat.client.ChatClientResponse;

import org.springframework.ai.chat.client.advisor.api.Advisor;

import org.springframework.ai.chat.client.advisor.api.AdvisorChain;

import org.springframework.ai.chat.client.advisor.api.BaseAdvisor;

import org.springframework.ai.chat.prompt.Prompt;

import org.springframework.ai.chat.prompt.PromptTemplate;

import java.util.Map;

public class ReReadingAdvisor implements BaseAdvisor {

private static final String DEFAULT_USER_TEXT_ADVISE = """

{re2_input_query}

Read the question again:{re2_input_query}

""";

@Override

public ChatClientRequest before(ChatClientRequest chatClientRequest, AdvisorChain advisorChain) {

// before the request is sent: repeat the question inside the prompt

String contents = chatClientRequest.prompt().getContents();

String re2InputQuery = PromptTemplate.builder().template(DEFAULT_USER_TEXT_ADVISE).build()

.render(Map.of("re2_input_query", contents));

ChatClientRequest clientRequest = chatClientRequest.mutate()

.prompt(Prompt.builder().content(re2InputQuery).build()).build();

return clientRequest;

}

@Override

public ChatClientResponse after(ChatClientResponse chatClientResponse, AdvisorChain advisorChain) {

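return chatClientResponse; // pass the response through unchanged; returning null would discard the model's reply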

}

// advisor order (priority)

@Override

public int getOrder() {

return 0;

}

}

Test method

java

// re-reading advisor

@Test

public void testReReadingAdvisor(@Autowired DashScopeChatModel chatModel) {

ChatClient.Builder builder = ChatClient.builder(chatModel);

ChatClient chatClient = builder

.defaultAdvisors(new SimpleLoggerAdvisor(),

new ReReadingAdvisor())

.build();

String content = chatClient.prompt()

.user("阿冠帅不帅")

// .advisors()

.call()

.content();

System.out.println(content);

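}

Chat memory

- LLMs are stateless: they do not retain information from earlier interactions.

First add the dependency that auto-configures a ChatMemory bean; otherwise you have to build one yourself.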

xml

<!-- auto-configuration for ChatMemory -->

<dependency>

<groupId>org.springframework.ai</groupId>

<artifactId>spring-ai-autoconfigure-model-chat-memory</artifactId>

</dependency>

Test class

java

package com.arguan.ai.chatclient;

import com.alibaba.cloud.ai.dashscope.chat.DashScopeChatModel;

import org.junit.jupiter.api.Test;

import org.springframework.ai.chat.memory.ChatMemory;

import org.springframework.ai.chat.memory.MessageWindowChatMemory;

import org.springframework.ai.chat.messages.UserMessage;

import org.springframework.ai.chat.model.ChatResponse;

import org.springframework.ai.chat.prompt.Prompt;

import org.springframework.beans.factory.annotation.Autowired;

import org.springframework.boot.test.context.SpringBootTest;

@SpringBootTest

public class TestMemory {

@Test

public void testMemory(@Autowired DashScopeChatModel chatModel) {

ChatMemory chatMemory = MessageWindowChatMemory.builder().build();

String memoryId = "ag01"; // unique ID of the current conversation

// First turn

UserMessage userMessage1 = new UserMessage("Hello, I am Arguan");

chatMemory.add(memoryId, userMessage1);

ChatResponse response1 = chatModel.call(new Prompt(chatMemory.get(memoryId)));

chatMemory.add(memoryId, response1.getResult().getOutput());

// Second turn

UserMessage userMessage2 = new UserMessage("Who am I?");

chatMemory.add(memoryId, userMessage2);

ChatResponse response2 = chatModel.call(new Prompt(chatMemory.get(memoryId)));

chatMemory.add(memoryId, response2.getResult().getOutput());

System.out.println(response2.getResult().getOutput().getText());

}

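}

Limiting the number of stored chat messages

- The chat history is sent to the model on every call, which consumes tokens.

- Models have a token limit; sending too much history makes the request exceed it.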
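The effect of capping the history can be sketched in plain Java. This is a minimal illustration and not the Spring AI implementation: `SlidingWindowMemory` and its methods are hypothetical names, and the real `MessageWindowChatMemory` may treat some message types (for example system messages) differently.

```java
import java.util.ArrayDeque;
import java.util.ArrayList;
import java.util.Deque;
import java.util.List;

// Hypothetical stand-in for a message-window memory; not a Spring AI class.
class SlidingWindowMemory {
    private final int maxMessages;
    private final Deque<String> messages = new ArrayDeque<>();

    SlidingWindowMemory(int maxMessages) {
        if (maxMessages <= 0) {
            throw new IllegalArgumentException("maxMessages must be positive");
        }
        this.maxMessages = maxMessages;
    }

    // Append a message and evict the oldest ones once the window is full.
    void add(String message) {
        messages.addLast(message);
        while (messages.size() > maxMessages) {
            messages.removeFirst();
        }
    }

    // Snapshot of what would be sent to the model on the next call.
    List<String> get() {
        return new ArrayList<>(messages);
    }

    public static void main(String[] args) {
        SlidingWindowMemory memory = new SlidingWindowMemory(3);
        for (int i = 1; i <= 5; i++) {
            memory.add("message " + i);
        }
        System.out.println(memory.get());
    }
}
```

With a window of 3, only the three most recent messages survive, so the prompt size stays bounded no matter how long the conversation runs.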

java

// Declared as a nested class of this test so that it does not affect other test classes

@TestConfiguration

static class TestConfig {

@Bean

public ChatMemory chatMemory(ChatMemoryRepository chatMemoryRepository) {

return MessageWindowChatMemory

.builder()

// Maximum number of messages kept in the window

.maxMessages(10)

.chatMemoryRepository(chatMemoryRepository)

.build();

}

}

Isolating memory per user

java

// Per-user memory isolation

@Test

public void testChatOptions(@Autowired DashScopeChatModel chatModel,

@Autowired ChatMemory chatMemory) {

ChatClient.Builder builder = ChatClient.builder(chatModel);

ChatClient chatClient = builder

.defaultAdvisors(

PromptChatMemoryAdvisor.builder(chatMemory).build()

)

.build();

// Conversation of the first user

String content = chatClient.prompt()

.user("My name is Arguan")

.advisors(advisorSpec -> advisorSpec.param(ChatMemory.CONVERSATION_ID, "1"))

.call()

.content();

System.out.println(content);

System.out.println("------------------");

content = chatClient.prompt()

.user("What is my name?")

.advisors(advisorSpec -> advisorSpec.param(ChatMemory.CONVERSATION_ID, "1"))

.call()

.content();

System.out.println(content);

System.out.println("------------------");

// Conversation of the second user

content = chatClient.prompt()

.user("What is my name?")

.advisors(advisorSpec -> advisorSpec.param(ChatMemory.CONVERSATION_ID, "2"))

.call()

.content();

System.out.println(content);

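}

Storing chat memory in a database

By default the conversation is kept in JVM memory, which causes two problems:

- The stored history keeps growing and can eventually exhaust JVM memory.

- Everything is lost when the application restarts.

To make the memory durable, store it in an external system. First add the dependencies: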

xml

<!-- JDBC chat memory repository -->

<dependency>

<groupId>org.springframework.ai</groupId>

<artifactId>spring-ai-starter-model-chat-memory-repository-jdbc</artifactId>

</dependency>

<!-- JDBC -->

<dependency>

<groupId>org.springframework.boot</groupId>

<artifactId>spring-boot-starter-jdbc</artifactId>

</dependency>

<!-- MySQL driver -->

<dependency>

<groupId>com.mysql</groupId>

<artifactId>mysql-connector-j</artifactId>

<scope>runtime</scope>

</dependency>

Then add the configuration

properties

spring.ai.chat.memory.repository.jdbc.initialize-schema=always

# You need to provide this SQL file yourself

spring.ai.chat.memory.repository.jdbc.schema=classpath:/schema-mysql.sql

Test class

java

package com.arguan.ai.chatclient;

import com.alibaba.cloud.ai.dashscope.chat.DashScopeChatModel;

import org.junit.jupiter.api.Test;

import org.springframework.ai.chat.client.ChatClient;

import org.springframework.ai.chat.client.advisor.PromptChatMemoryAdvisor;

import org.springframework.ai.chat.memory.ChatMemory;

import org.springframework.ai.chat.memory.MessageWindowChatMemory;

import org.springframework.ai.chat.memory.repository.jdbc.JdbcChatMemoryRepository;

import org.springframework.beans.factory.annotation.Autowired;

import org.springframework.boot.test.context.SpringBootTest;

import org.springframework.boot.test.context.TestConfiguration;

import org.springframework.context.annotation.Bean;

@SpringBootTest

public class TestJDBCAdvisor {

@TestConfiguration

static class ChatMemoryConfig {

@Bean

ChatMemory chatMemory(JdbcChatMemoryRepository chatMemoryRepository) {

return MessageWindowChatMemory

.builder()

.maxMessages(10)

.chatMemoryRepository(chatMemoryRepository)

.build();

}

}

@Test

public void testChatOptions(@Autowired DashScopeChatModel chatModel,

@Autowired ChatMemory chatMemory) {

ChatClient.Builder builder = ChatClient.builder(chatModel);

ChatClient chatClient = builder

.defaultAdvisors(

PromptChatMemoryAdvisor.builder(chatMemory).build()

)

.build();

// Conversation of the first user

String content = chatClient.prompt()

.system("""

Do not use emoji

""")

.user("My name is Arguan")

.advisors(advisorSpec -> advisorSpec.param(ChatMemory.CONVERSATION_ID, "1"))

.call()

.content();

System.out.println(content);

System.out.println("------------------");

content = chatClient.prompt()

.system("""

Do not use emoji

""")

.user("What is my name?")

.advisors(advisorSpec -> advisorSpec.param(ChatMemory.CONVERSATION_ID, "1"))

.call()

.content();

System.out.println(content);

System.out.println("------------------");

// Conversation of the second user

content = chatClient.prompt()

.system("""

Do not use emoji

""")

.user("What is my name?")

.advisors(advisorSpec -> advisorSpec.param(ChatMemory.CONVERSATION_ID, "2"))

.call()

.content();

System.out.println(content);

}

}

Storing chat memory in Redis

First add the required dependencies

xml

<properties>

<jedis.version>5.2.0</jedis.version>

</properties>

<!-- Redis chat memory repository -->

<dependency>

<groupId>com.alibaba.cloud.ai</groupId>

<artifactId>spring-ai-alibaba-starter-memory-redis</artifactId>

</dependency>

<!-- Redis client -->

<dependency>

<groupId>redis.clients</groupId>

<artifactId>jedis</artifactId>

<version>${jedis.version}</version>

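</dependency>

Next, add the configuration. The keys must match the placeholders that the test configuration reads with @Value.

properties

# redis

spring.ai.memory.redis.host=localhost

spring.ai.memory.redis.port=6379

spring.ai.memory.redis.timeout=5000

spring.ai.memory.redis.password=

Using it in the project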

java

package com.arguan.ai.chatclient.memory;

import com.alibaba.cloud.ai.dashscope.chat.DashScopeChatModel;

import com.alibaba.cloud.ai.memory.redis.RedisChatMemoryRepository;

import org.junit.jupiter.api.Test;

import org.springframework.ai.chat.client.ChatClient;

import org.springframework.ai.chat.client.advisor.PromptChatMemoryAdvisor;

import org.springframework.ai.chat.memory.ChatMemory;

import org.springframework.ai.chat.memory.MessageWindowChatMemory;


import org.springframework.beans.factory.annotation.Autowired;

import org.springframework.beans.factory.annotation.Value;

import org.springframework.boot.test.context.SpringBootTest;

import org.springframework.boot.test.context.TestConfiguration;

import org.springframework.context.annotation.Bean;

import org.springframework.context.annotation.Configuration;

@SpringBootTest

public class TestRedisMemory {

@TestConfiguration

static class Config {

@Value("${spring.ai.memory.redis.host}")

private String redisHost;

@Value("${spring.ai.memory.redis.port}")

private int redisPort;

@Value("${spring.ai.memory.redis.password}")

private String redisPassword;

@Value("${spring.ai.memory.redis.timeout}")

private int redisTimeout;

@Bean

public RedisChatMemoryRepository redisChatMemoryRepository() {

return RedisChatMemoryRepository.builder()

.host(redisHost)

.port(redisPort)

// Leave the next line commented out if Redis has no password

// .password(redisPassword)

.timeout(redisTimeout)

.build();

}

@Bean

ChatMemory chatMemory(RedisChatMemoryRepository chatMemoryRepository) {

return MessageWindowChatMemory

.builder()

.maxMessages(10)

.chatMemoryRepository(chatMemoryRepository)

.build();

}

}

@Test

public void testChatOptions(@Autowired DashScopeChatModel chatModel,

@Autowired ChatMemory chatMemory) {

ChatClient.Builder builder = ChatClient.builder(chatModel);

ChatClient chatClient = builder

.defaultAdvisors(

PromptChatMemoryAdvisor.builder(chatMemory).build()

)

.build();

// Conversation of the first user

String content = chatClient.prompt()

.system("""

Do not use emoji

""")

.user("My name is Arguan")

.advisors(advisorSpec -> advisorSpec.param(ChatMemory.CONVERSATION_ID, "1"))

.call()

.content();

System.out.println(content);

System.out.println("------------------");

content = chatClient.prompt()

.system("""

Do not use emoji

""")

.user("What is my name?")

.advisors(advisorSpec -> advisorSpec.param(ChatMemory.CONVERSATION_ID, "1"))

.call()

.content();

System.out.println(content);

System.out.println("------------------");

// Conversation of the second user

content = chatClient.prompt()

.system("""

Do not use emoji

""")

.user("What is my name?")

.advisors(advisorSpec -> advisorSpec.param(ChatMemory.CONVERSATION_ID, "2"))

.call()

.content();

System.out.println(content);

}

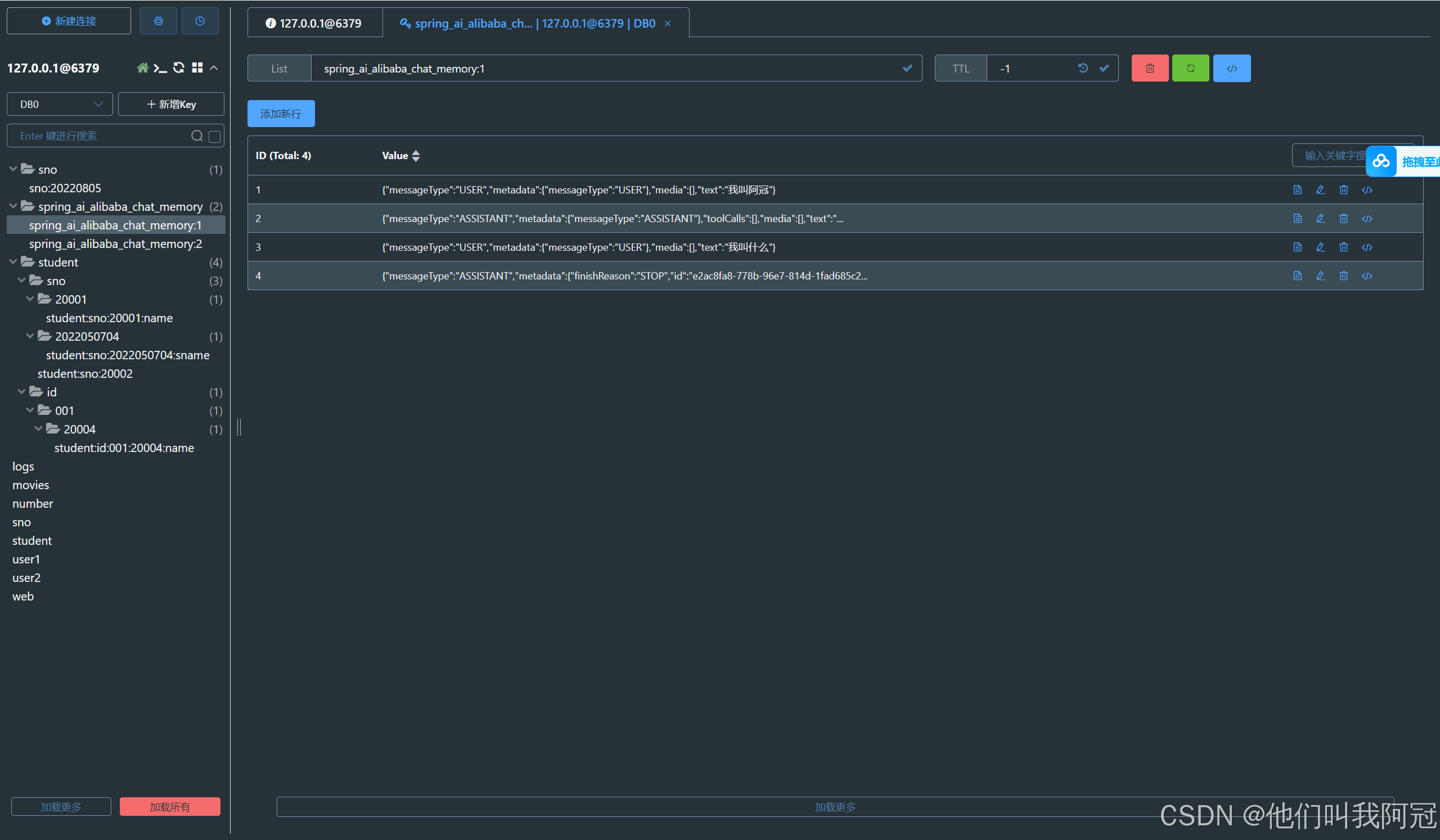

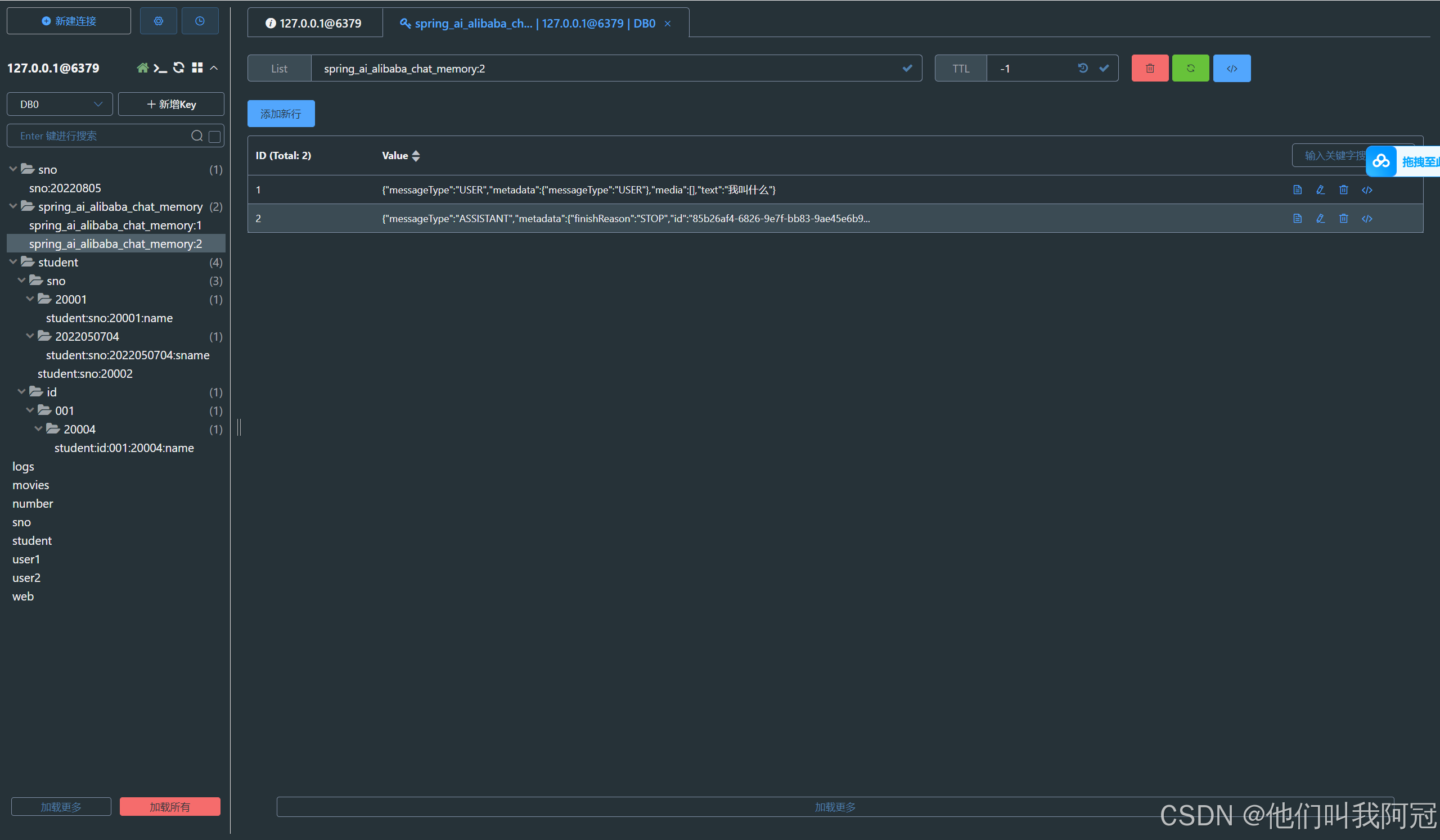

}启动之后,我们去redis的可视化界面中查看是否记忆存储到了redis当中

我们发现,存储成功了,并根据memoryid进行了对话隔离
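Isolation by conversation ID comes down to keying each history by its ID. Below is a minimal in-memory sketch under that assumption; `ConversationStore` is a hypothetical name, and a persistent repository would presumably replace the map with keys in the external store.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

// Hypothetical per-conversation store; illustrates isolation only.
class ConversationStore {
    private final Map<String, List<String>> byConversation = new HashMap<>();

    // Append a message to the history of one conversation.
    void add(String conversationId, String message) {
        byConversation.computeIfAbsent(conversationId, id -> new ArrayList<>()).add(message);
    }

    // Read-only copy of one conversation's history; empty if the ID is unknown.
    List<String> get(String conversationId) {
        return List.copyOf(byConversation.getOrDefault(conversationId, List.of()));
    }

    public static void main(String[] args) {
        ConversationStore store = new ConversationStore();
        store.add("1", "My name is Arguan");
        System.out.println("conversation 1: " + store.get("1"));
        System.out.println("conversation 2: " + store.get("2"));
    }
}
```

Conversation "2" sees nothing written under "1", which is why the second user in the tests above cannot be told their name.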

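Multi-tier memory architecture

Pain points

- More memory makes the assistant smarter, but more memory also pushes the prompt toward the token limit.

- As the chat history grows, it will eventually exceed the token limit.

Imitating human memory

- Short-term memory: the most recent turns kept in the context window, stored immediately after each turn (ChatMemory).

- Mid-term memory: relevant past conversations retrieved through RAG (after each turn, the conversation is asynchronously converted to vectors and written to a vector database).

- Long-term memory: distilled summaries of key information.

  - Option 1: scheduled batch processing

    - A scheduled job summarizes and distills the accumulated conversations.

    - It extracts key information, user preferences, important facts, and so on.

    - Batching lowers compute cost and suits large-scale processing.

  - Option 2: real-time processing of key points

    - Key information is extracted and stored as soon as it is recognized in the conversation.

    - For example, when the user states a preference, provides personal information, or sets a persistent instruction.

    - A "write trigger" mechanism updates long-term memory automatically under specific conditions.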
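The three tiers can be sketched in plain Java. Everything here is an assumption made for illustration: `TieredMemory` is a hypothetical class, keyword overlap stands in for vector similarity search, and the "write trigger" is a simple regular expression that fires when the user states their name in English.

```java
import java.util.ArrayDeque;
import java.util.ArrayList;
import java.util.Comparator;
import java.util.Deque;
import java.util.HashSet;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Locale;
import java.util.Map;
import java.util.Set;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

// Hypothetical three-tier memory; all names are illustrative.
class TieredMemory {
    // Write trigger: fires when the user states their name (assumed phrasing).
    private static final Pattern NAME_TRIGGER =
            Pattern.compile("my name is (\\w+)", Pattern.CASE_INSENSITIVE);

    private final int windowSize;
    private final Deque<String> shortTerm = new ArrayDeque<>();          // recent turns
    private final List<String> midTerm = new ArrayList<>();              // full archive, searched on demand
    private final Map<String, String> longTerm = new LinkedHashMap<>();  // distilled facts

    TieredMemory(int windowSize) {
        this.windowSize = windowSize;
    }

    void record(String userMessage) {
        shortTerm.addLast(userMessage);
        if (shortTerm.size() > windowSize) {
            shortTerm.removeFirst();
        }
        midTerm.add(userMessage);
        Matcher matcher = NAME_TRIGGER.matcher(userMessage);
        if (matcher.find()) {
            longTerm.put("name", matcher.group(1));
        }
    }

    List<String> recent() {
        return new ArrayList<>(shortTerm);
    }

    Map<String, String> facts() {
        return new LinkedHashMap<>(longTerm);
    }

    // Keyword overlap stands in for a vector similarity search; topK must be >= 0.
    List<String> recall(String query, int topK) {
        Set<String> queryWords = words(query);
        List<String> hits = new ArrayList<>();
        for (String message : midTerm) {
            if (overlap(queryWords, words(message)) > 0) {
                hits.add(message);
            }
        }
        hits.sort(Comparator.comparingInt(
                (String message) -> overlap(queryWords, words(message))).reversed());
        return new ArrayList<>(hits.subList(0, Math.min(topK, hits.size())));
    }

    private static Set<String> words(String text) {
        Set<String> result = new HashSet<>();
        for (String word : text.toLowerCase(Locale.ROOT).split("\\W+")) {
            if (!word.isEmpty()) {
                result.add(word);
            }
        }
        return result;
    }

    private static int overlap(Set<String> a, Set<String> b) {
        int count = 0;
        for (String word : a) {
            if (b.contains(word)) {
                count++;
            }
        }
        return count;
    }
}
```

A prompt would then be assembled from `facts()`, the results of `recall(...)` for the current question, and `recent()`, so that only the short-term tier grows with every turn.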

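Structured output

Basic types:

Take Boolean as an example: in an agent it can classify the user's input into one of two branches, each of which runs different logic.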

java

package com.arguan.ai.chatclient;

import com.alibaba.cloud.ai.dashscope.chat.DashScopeChatModel;

import org.junit.jupiter.api.BeforeEach;

import org.junit.jupiter.api.Test;

import org.springframework.ai.chat.client.ChatClient;

import org.springframework.beans.factory.annotation.Autowired;

import org.springframework.boot.test.context.SpringBootTest;

@SpringBootTest

public class TestStructureOut {

ChatClient chatClient;

@BeforeEach

public void init(@Autowired DashScopeChatModel chatModel) {

chatClient = ChatClient.builder(chatModel).build();

}

@Test

public void testBoolOut() {

Boolean isComplain = chatClient

.prompt()

.system("""

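Decide whether the user is expressing a complaint.

Answer only with true or false and output nothing else.

""")

.user("Your delivery service is pretty good!")

.call()

.entity(Boolean.class);

// Branch on the result

if (Boolean.TRUE.equals(isComplain)) {

System.out.println("Complaint detected: transferring to a human agent");

} else {

System.out.println("Not a complaint: routing to the customer-service bot");

// TODO: continue with the bot flow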

}

}

Structured output can also extract the key fields from shipping details entered by the user (POJO type)

java

public record Address(

String name, // recipient name

String phone, // mobile number

String province, // province

String city, // city

String district, // district

String detail // street address

) {}

@Test

public void testEntityOut() {

Address address = chatClient.prompt()

.system("""

Extract the shipping details from the text below

""")

.user("Recipient: Xue Anqi, phone: 15561756197, address: No. 2468 Puyuan Road, Harbin, Heilongjiang Province")

.call()

.entity(Address.class);

System.out.println(address);

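}

How it works

Under the hood, Spring AI uses the BeanOutputConverter to add format instructions to the prompt, telling the model what to extract and in which format to return it, and then deserializes the reply into the Java POJO type.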
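The two halves of that mechanism (instructions added to the prompt, then deserialization of the reply) can be sketched without any library. This is a simplified stand-in and not the `BeanOutputConverter` itself: `FlatJsonConverter` is a hypothetical class that only understands flat JSON objects with string values and no escaped quotes.

```java
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

// Hypothetical converter for flat JSON objects with string values only.
class FlatJsonConverter {
    // Matches "key": "value" pairs; escaped quotes are not supported.
    private static final Pattern FIELD =
            Pattern.compile("\"(\\w+)\"\\s*:\\s*\"([^\"]*)\"");

    // Step 1: text appended to the prompt so the model knows the expected format.
    static String formatInstructions(List<String> fields) {
        return "Reply with a single JSON object and nothing else. "
                + "Use exactly these string fields: " + String.join(", ", fields) + ".";
    }

    // Step 2: turn the model reply into a field map, failing loudly on missing fields.
    static Map<String, String> convert(String reply, List<String> fields) {
        Map<String, String> found = new LinkedHashMap<>();
        Matcher matcher = FIELD.matcher(reply);
        while (matcher.find()) {
            found.put(matcher.group(1), matcher.group(2));
        }
        Map<String, String> result = new LinkedHashMap<>();
        for (String field : fields) {
            if (!found.containsKey(field)) {
                throw new IllegalArgumentException("Missing field: " + field);
            }
            result.put(field, found.get(field));
        }
        return result;
    }

    public static void main(String[] args) {
        List<String> fields = List.of("name", "phone", "city");
        System.out.println(formatInstructions(fields));
        String reply = "{\"name\": \"Xue Anqi\", \"phone\": \"15561756197\", \"city\": \"Harbin\"}";
        System.out.println(convert(reply, fields));
    }
}
```

Failing on a missing field is a deliberate choice in this sketch: a reply that ignores the format instructions is surfaced immediately instead of producing a half-filled object.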

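Ticket assistant in practice

- "I want to cancel my ticket" → extract the task type → check whether a name and booking number are present → call the cancellation method

- "Hello" → hand over to the smart customer-service assistant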
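The routing flow above can be sketched as a pure function. The key names `name` and `bookingNumber` and the returned action labels are assumptions made for this example; the real keys depend on what the model puts into the extracted map.

```java
import java.util.Map;

// Hypothetical router; action labels and key names are illustrative.
class TicketRouter {
    enum JobType { CANCEL, QUERY, OTHER }

    // Decide the next action from the task type and the extracted key information.
    static String route(JobType jobType, Map<String, String> keyInfos) {
        if (jobType == null) {
            return "SMART_ASSISTANT";
        }
        switch (jobType) {
            case CANCEL:
                return has(keyInfos, "name") && has(keyInfos, "bookingNumber")
                        ? "CALL_CANCEL"
                        : "ASK_FOR_NAME_AND_BOOKING_NUMBER";
            case QUERY:
                return has(keyInfos, "bookingNumber")
                        ? "CALL_QUERY"
                        : "ASK_FOR_BOOKING_NUMBER";
            default:
                return "SMART_ASSISTANT";
        }
    }

    // True only when the key is present with a non-blank value.
    private static boolean has(Map<String, String> keyInfos, String key) {
        if (keyInfos == null) {
            return false;
        }
        String value = keyInfos.get(key);
        return value != null && !value.trim().isEmpty();
    }

    public static void main(String[] args) {
        System.out.println(route(JobType.CANCEL, Map.of("name", "Li Si")));
        System.out.println(route(JobType.CANCEL, Map.of("name", "Li Si", "bookingNumber", "789")));
        System.out.println(route(JobType.OTHER, Map.of()));
    }
}
```

Checking for each required key, instead of only checking whether the map is empty, avoids calling the cancellation method when just one of the two values was extracted.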

pom.xml

xml

<?xml version="1.0" encoding="UTF-8"?>

<project xmlns="http://maven.apache.org/POM/4.0.0" xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"

xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 https://maven.apache.org/xsd/maven-4.0.0.xsd">

<modelVersion>4.0.0</modelVersion>

<parent>

<groupId>com.arguan.ai</groupId>

<artifactId>string-new-ai-arguan</artifactId>

<version>0.0.1-SNAPSHOT</version>

<relativePath>../pom.xml</relativePath>

</parent>

<artifactId>more-model-structured-agent</artifactId>

<name>more-model-structured-agent</name>

<description>more-model-structured-agent</description>

<properties>

<java.version>17</java.version>

</properties>

<dependencies>

<dependency>

<groupId>com.alibaba.cloud.ai</groupId>

<artifactId>spring-ai-alibaba-starter-dashscope</artifactId>

</dependency>

<dependency>

<groupId>org.springframework.ai</groupId>

<artifactId>spring-ai-autoconfigure-model-chat-memory</artifactId>

</dependency>

</dependencies>

<build>

<plugins>

<plugin>

<groupId>org.apache.maven.plugins</groupId>

<artifactId>maven-compiler-plugin</artifactId>

<configuration>

<source>17</source>

<target>17</target>

</configuration>

</plugin>

<plugin>

<groupId>org.springframework.boot</groupId>

<artifactId>spring-boot-maven-plugin</artifactId>

<configuration>

<mainClass>com.arguan.ai.moremodelstructuredagent.Application</mainClass>

<skip>true</skip>

</configuration>

</plugin>

</plugins>

</build>

</project>

Configuration file

properties

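spring.ai.dashscope.api-key=${ALI_API_KEY}

AI configuration class. It defines two chat agents: one that plans ticket tasks such as cancellations, and one that acts as the smart customer-service assistant.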

java

package com.arguan.ai.moremodelstructuredagent;

import com.alibaba.cloud.ai.autoconfigure.dashscope.DashScopeChatProperties;

import com.alibaba.cloud.ai.dashscope.chat.DashScopeChatModel;

import com.alibaba.cloud.ai.dashscope.chat.DashScopeChatOptions;

import org.springframework.ai.chat.client.ChatClient;

import org.springframework.ai.chat.client.advisor.MessageChatMemoryAdvisor;

import org.springframework.ai.chat.memory.ChatMemory;

import org.springframework.ai.chat.memory.MessageWindowChatMemory;

import org.springframework.ai.chat.prompt.DefaultChatOptions;

import org.springframework.context.annotation.Bean;

import org.springframework.context.annotation.Configuration;

@Configuration

public class AiConfig {

@Bean

public ChatClient planningChatClient(DashScopeChatModel chatModel,

DashScopeChatProperties options,

ChatMemory chatMemory) {

DashScopeChatOptions defaultChatOptions = DashScopeChatOptions.fromOptions(options.getOptions());

defaultChatOptions.setTemperature(0.4);

return ChatClient.builder(chatModel)

.defaultSystem("""

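# Ticket assistant task-splitting rules

## 1 Requirements

### 1.1 Identify the task from the user's message

## 2 Tasks

### 2.1 JobType: cancel ticket (CANCEL). The user must provide a name and booking number, or they are extracted from the conversation;

### 2.2 JobType: look up ticket (QUERY). The user must provide a booking number, or it is extracted from the conversation;

### 2.3 JobType: other (OTHER)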

""")

.defaultAdvisors(

MessageChatMemoryAdvisor.builder(chatMemory).build()

)

.defaultOptions(defaultChatOptions)

.build();

}

// Smart customer-service assistant

@Bean

public ChatClient botChatClient(DashScopeChatModel chatModel,

DashScopeChatProperties options,

ChatMemory chatMemory) {

DashScopeChatOptions dashScopeChatOptions = DashScopeChatOptions.fromOptions(options.getOptions());

dashScopeChatOptions.setTemperature(1.2);

return ChatClient.builder(chatModel)

.defaultSystem("""

You are the smart customer-service agent of Arguan Airlines. Please serve users in a friendly tone.

""")

.defaultAdvisors(

MessageChatMemoryAdvisor.builder(chatMemory).build()

)

.defaultOptions(dashScopeChatOptions)

.build();

}

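}

Define the AiJob class as the structured-output model. JobType enumerates the task types, and the Job record is the structured data shape; Spring AI's entity feature returns it as a Java object.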

java

package com.arguan.ai.moremodelstructuredagent;

import java.util.Map;

public class AiJob {

// Structured data shape

record Job(

JobType jobType, Map<String, String> keyInfos

) {}

// Task type enum

public enum JobType {

CANCEL,

QUERY,

OTHER,

}

}

Controller

java

package com.arguan.ai.moremodelstructuredagent;

import org.springframework.ai.chat.client.ChatClient;

import org.springframework.beans.factory.annotation.Autowired;

import org.springframework.web.bind.annotation.GetMapping;

import org.springframework.web.bind.annotation.RequestParam;

import org.springframework.web.bind.annotation.RestController;

import reactor.core.publisher.Flux;

import reactor.core.publisher.Sinks;

@RestController

public class MultiModelsController {

@Autowired

ChatClient planningChatClient;

@Autowired

ChatClient botChatClient;

@GetMapping(value = "/stream", produces = "text/stream;charset=UTF-8")

Flux<String> stream(@RequestParam String message) {

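// Create a sink that can receive multiple messages

Sinks.Many<String> sink = Sinks.many().unicast().onBackpressureBuffer();

// Push the first message

sink.tryEmitNext("Planning the task...<br/>");

new Thread(() -> {

AiJob.Job job = planningChatClient.prompt().user(message)

.call().entity(AiJob.Job.class);

System.out.println(job);

// Guard against a reply that could not be parsed into a Job

if (job == null || job.jobType() == null) {

sink.tryEmitNext("Failed to parse the task");

sink.tryEmitComplete();

return;

}

boolean noKeyInfos = job.keyInfos() == null || job.keyInfos().isEmpty();

switch (job.jobType()) {

case CANCEL -> {

if (noKeyInfos) {

sink.tryEmitNext("Please enter your name and booking number:");

} else {

// TODO: run the business logic, e.g. ticketService.cancel

sink.tryEmitNext("Ticket cancelled successfully!");

}

// Complete the stream so the HTTP response can finish

sink.tryEmitComplete();

}

case QUERY -> {

if (noKeyInfos) {

sink.tryEmitNext("Please enter your booking number:");

} else {

// TODO: run the business logic, e.g. ticketService.query

sink.tryEmitNext("Booking details: xxxxxx");

}

sink.tryEmitComplete();

}

case OTHER -> {

Flux<String> content = botChatClient.prompt().user(message).stream().content();

// Forward every streamed chunk, and propagate errors and completion to the sink

content.subscribe(sink::tryEmitNext, sink::tryEmitError, sink::tryEmitComplete);

}

}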

}).start();

return sink.asFlux();

}

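}

Function-Call

- Information retrieval: fetch information from external sources (databases, web services, file systems, web search engines, and so on).

- Taking action: automatically perform tasks that would otherwise need human intervention or explicit programming.

The ToolService class exposes the tools. It receives the arguments chosen by the model and calls the business method that does the actual work.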

java

package com.arguan.ai.moremodelstructuredagent;

import org.springframework.ai.tool.annotation.Tool;

import org.springframework.ai.tool.annotation.ToolParam;

import org.springframework.beans.factory.annotation.Autowired;

import org.springframework.stereotype.Service;

@Service

public class ToolService {

@Autowired

private TicketService ticketService;

// @Tool tells the model which tools we provide

@Tool(description = "Cancel a ticket")

public String cancel(

// @ToolParam describes this parameter to the model

@ToolParam(description = "Booking number; may be purely numeric") String ticketNumber,

@ToolParam(description = "Passenger name") String name) {

ticketService.cancel(ticketNumber, name);

return "Ticket cancelled successfully!";

}

}

ToolsController, a controller for trying out Function-Call

java

package com.arguan.ai.moremodelstructuredagent;

import org.springframework.ai.chat.client.ChatClient;

import org.springframework.web.bind.annotation.RequestMapping;

import org.springframework.web.bind.annotation.RequestParam;

import org.springframework.web.bind.annotation.RestController;

@RestController

public class ToolsController {

ChatClient chatClient;

public ToolsController(ChatClient.Builder chatClientBuilder,

ToolService toolService) {

this.chatClient = chatClientBuilder

.defaultTools(toolService) // tells the model which tools are available and which parameters they take

.build();

}

@RequestMapping("/tool")

public String tool(@RequestParam(value = "message", defaultValue = "Tell me a joke") String message) {

return chatClient.prompt().user(message).call().content();

}

}

TicketService, the ticket business class

java

package com.arguan.ai.moremodelstructuredagent;

import org.springframework.stereotype.Service;

@Service

public class TicketService {

public void cancel(String ticketNumber, String name) {

// TODO: ticket cancellation business logic

System.out.println("Cancelled booking " + ticketNumber + " for " + name);

}

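}

How tools work

- The user enters: "Please cancel my order, the order number is 789 and my name is Li Si"

- ChatClient sends the request plus the tool descriptions to the LLM

- The LLM recognizes the intent and returns: call cancel(ticketNumber="789", name="Li Si")

- Spring AI automatically executes ToolService.cancel("789", "Li Si")

- TicketService performs the actual cancellation

- The result "Ticket cancelled successfully!" is returned to the LLM

- The LLM writes a friendly reply: "Order 789 for passenger Li Si has been cancelled."

- The user receives the final reply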
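The round trip above can be sketched as a small loop with a scripted model in place of a real LLM. All names here (`ToolLoopSketch`, `Reply`, `Model`) are hypothetical and only illustrate the control flow; Spring AI's actual tool-calling classes are not shown.

```java
import java.util.Map;
import java.util.function.Function;

// Hypothetical tool-calling loop driven by a scripted model.
class ToolLoopSketch {
    // A model reply is either a tool request or a final answer.
    static final class Reply {
        final String toolName;            // null for a final answer
        final Map<String, String> args;
        final String text;                // null for a tool request

        private Reply(String toolName, Map<String, String> args, String text) {
            this.toolName = toolName;
            this.args = args;
            this.text = text;
        }

        static Reply toolCall(String toolName, Map<String, String> args) {
            return new Reply(toolName, args, null);
        }

        static Reply finalAnswer(String text) {
            return new Reply(null, Map.of(), text);
        }

        boolean isToolCall() {
            return toolName != null;
        }
    }

    // Stand-in for the LLM: sees the user message and the last tool result, if any.
    interface Model {
        Reply next(String userMessage, String lastToolResult);
    }

    static String chat(Model model,
                       Map<String, Function<Map<String, String>, String>> tools,
                       String userMessage) {
        Reply reply = model.next(userMessage, null);
        for (int round = 0; reply.isToolCall(); round++) {
            if (round >= 5) {
                throw new IllegalStateException("Too many tool rounds");
            }
            Function<Map<String, String>, String> tool = tools.get(reply.toolName);
            if (tool == null) {
                throw new IllegalArgumentException("Unknown tool: " + reply.toolName);
            }
            // Execute the tool and hand its result back to the model.
            String result = tool.apply(reply.args);
            reply = model.next(userMessage, result);
        }
        return reply.text;
    }

    public static void main(String[] args) {
        Model scripted = (message, toolResult) -> toolResult == null
                ? Reply.toolCall("cancel", Map.of("ticketNumber", "789", "name", "Li Si"))
                : Reply.finalAnswer("Done: " + toolResult);
        Map<String, Function<Map<String, String>, String>> tools = Map.of(
                "cancel", a -> "cancelled " + a.get("ticketNumber") + " for " + a.get("name"));
        System.out.println(chat(scripted, tools, "Cancel order 789, my name is Li Si"));
    }
}
```

The round limit is a design choice for the sketch: it keeps a model that keeps requesting tools from looping forever.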

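When the model cannot infer tool arguments

- A temperature that is too low can leave the model too little freedom and make it overly cautious; raising it helps to some extent.

- Writing more precise descriptions also helps (recommended).

When the model hallucinates arguments to force a tool call

- Tighten parameter descriptions and validation.

- Add validation and fallback protection in the backend code.

- Set restrictions in the system prompt.

- Add explicit human confirmation.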
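Backend validation and human confirmation can be combined in a small guard that runs before the real tool method. The booking-number format (3 to 12 digits) and the class name `CancelGuard` are assumptions for this sketch; substitute the rules of the actual business.

```java
import java.util.regex.Pattern;

// Hypothetical guard that runs before the cancellation tool does any work.
class CancelGuard {
    // Assumed format: 3 to 12 digits. Adjust to the real booking-number rules.
    private static final Pattern BOOKING_NUMBER = Pattern.compile("\\d{3,12}");

    static String check(String ticketNumber, String name, boolean userConfirmed) {
        if (ticketNumber == null || !BOOKING_NUMBER.matcher(ticketNumber).matches()) {
            return "REJECT: booking number is missing or malformed";
        }
        if (name == null || name.trim().isEmpty()) {
            return "REJECT: passenger name is missing";
        }
        if (!userConfirmed) {
            // Ask the user before doing anything irreversible.
            return "CONFIRM: cancel booking " + ticketNumber + " for " + name + "?";
        }
        return "OK";
    }

    public static void main(String[] args) {
        System.out.println(check("unknown", "Li Si", false));
        System.out.println(check("789", "Li Si", false));
        System.out.println(check("789", "Li Si", true));
    }
}
```

Returning the rejection text to the model, instead of throwing, gives it a chance to ask the user for the missing value rather than inventing one.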

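Other notes on tools

- Exposed tool names, method names, and parameter names should be readable and business-oriented.

- Avoid garbled names and abbreviations.

- Tool methods are a poor fit for very long-running operations, which hurt the user experience and pile up threads.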
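One way to keep a slow tool from blocking the conversation is to bound its run time and return a fallback message. The sketch below uses only `java.util.concurrent`; `TimeBoundTool` is a hypothetical helper, and note that cancelling a `CompletableFuture` does not interrupt the underlying task, which keeps running in the background.

```java
import java.util.concurrent.CompletableFuture;
import java.util.concurrent.ExecutionException;
import java.util.concurrent.TimeUnit;
import java.util.concurrent.TimeoutException;
import java.util.function.Supplier;

// Hypothetical helper that bounds how long a tool call may take.
class TimeBoundTool {
    static String callWithTimeout(Supplier<String> tool, long timeoutMillis, String fallback) {
        CompletableFuture<String> future = CompletableFuture.supplyAsync(tool);
        try {
            return future.get(timeoutMillis, TimeUnit.MILLISECONDS);
        } catch (TimeoutException e) {
            // Stop waiting; the task itself is not interrupted by this call.
            future.cancel(true);
            return fallback;
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            return fallback;
        } catch (ExecutionException e) {
            // The tool threw an exception; report the fallback instead.
            return fallback;
        }
    }

    public static void main(String[] args) {
        System.out.println(callWithTimeout(() -> "done", 1000, "still working, please check back later"));
    }
}
```

For work that really is long-running, a common alternative is to have the tool only enqueue the job and return a tracking ID.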

Tool permission control

- Spring Security can be used to restrict access

Dependency

xml

<dependency>

<groupId>org.springframework.boot</groupId>

<artifactId>spring-boot-starter-security</artifactId>

</dependency>

Configuration class

java

package com.arguan.ai.moremodelstructuredagent.config;

import org.springframework.context.annotation.Bean;

import org.springframework.context.annotation.Configuration;

import org.springframework.security.config.Customizer;

import org.springframework.security.config.annotation.method.configuration.EnableMethodSecurity;

import org.springframework.security.config.annotation.web.builders.HttpSecurity;

import org.springframework.security.config.annotation.web.configurers.FormLoginConfigurer;

import org.springframework.security.core.userdetails.User;

import org.springframework.security.core.userdetails.UserDetails;

import org.springframework.security.core.userdetails.UserDetailsService;

import org.springframework.security.crypto.bcrypt.BCryptPasswordEncoder;

import org.springframework.security.crypto.password.PasswordEncoder;

import org.springframework.security.provisioning.InMemoryUserDetailsManager;

import org.springframework.security.web.SecurityFilterChain;

@Configuration

@EnableMethodSecurity

public class SecurityConfig {

/**

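* Configures and returns an in-memory user details service.

* Creates two test users: a regular user (user1) and an administrator (admin).

*

* @return UserDetailsService the in-memory service holding the preconfigured users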

*/

@Bean

public UserDetailsService userDetailsService() {

UserDetails user = User.withUsername("user1").password(passwordEncoder().encode("pass1")).roles("USER").build();

UserDetails admin = User.withUsername("admin").password(passwordEncoder().encode("pass2")).roles("ADMIN").build();

return new InMemoryUserDetailsManager(user, admin);

}

/**

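* Configures the security filter chain: access rules and authentication for HTTP requests.

* The /tool endpoint is publicly accessible; every other request requires authentication.

* Enables the default form login.

*

* @param http the HttpSecurity object used to configure web security

* @return SecurityFilterChain the configured security filter chain

* @throws Exception if configuration fails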

*/

@Bean

public SecurityFilterChain filterChain(HttpSecurity http) throws Exception {

http

.authorizeHttpRequests(authz -> authz

.requestMatchers("/tool").permitAll()

.anyRequest().authenticated()

)

.with(new FormLoginConfigurer<>(), Customizer.withDefaults());

return http.build();

}

/**

* Password encoder bean that hashes user passwords with BCrypt.

* BCrypt generates a salt automatically, so the same password yields different hashes.

*

* @return PasswordEncoder the BCrypt password encoder

*/

@Bean

public PasswordEncoder passwordEncoder() {

return new BCryptPasswordEncoder();

}

}

Ticket cancellation method

java

package com.arguan.ai.moremodelstructuredagent;

import org.springframework.ai.tool.annotation.Tool;

import org.springframework.ai.tool.annotation.ToolParam;

import org.springframework.beans.factory.annotation.Autowired;

import org.springframework.security.access.prepost.PreAuthorize;

import org.springframework.security.core.context.SecurityContextHolder;

import org.springframework.stereotype.Service;

@Service

public class ToolService {

@Autowired

private TicketService ticketService;

/**

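* Ticket cancellation tool, called by the AI to cancel a ticket.

* Requires the ADMIN role.

*

* @param ticketNumber booking number; may be purely numeric

* @param name passenger name

* @return String a success message that includes the operator's username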

*/

@Tool(description = "Cancel a ticket")

@PreAuthorize("hasRole('ADMIN')")

public String cancel(

@ToolParam(description = "Booking number; may be purely numeric") String ticketNumber,

@ToolParam(description = "Passenger name") String name) {

String username = SecurityContextHolder.getContext().getAuthentication().getName();

ticketService.cancel(ticketNumber, name);

return username + ": ticket cancelled successfully!";

}

}

- Store tools together with the permission resources, then set the tools dynamically

- Look up all tools that belong to the current user's roles
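The role-based lookup can be sketched without any framework. `RoleToolRegistry` is a hypothetical in-memory stand-in for the database table that maps roles to tool names; the returned names would then be turned into tool callbacks for the request.

```java
import java.util.ArrayList;
import java.util.Collection;
import java.util.HashMap;
import java.util.List;
import java.util.Map;
import java.util.Set;
import java.util.TreeSet;

// Hypothetical in-memory mapping from roles to the tools they may use.
class RoleToolRegistry {
    private final Map<String, Set<String>> toolsByRole = new HashMap<>();

    void grant(String role, String toolName) {
        toolsByRole.computeIfAbsent(role, r -> new TreeSet<>()).add(toolName);
    }

    // Union of the tools granted to any of the user's roles, sorted by name.
    List<String> toolsFor(Collection<String> roles) {
        Set<String> allowed = new TreeSet<>();
        for (String role : roles) {
            allowed.addAll(toolsByRole.getOrDefault(role, Set.of()));
        }
        return new ArrayList<>(allowed);
    }

    public static void main(String[] args) {
        RoleToolRegistry registry = new RoleToolRegistry();
        registry.grant("ADMIN", "cancel");
        registry.grant("ADMIN", "query");
        registry.grant("USER", "query");
        System.out.println("USER  -> " + registry.toolsFor(List.of("USER")));
        System.out.println("ADMIN -> " + registry.toolsFor(List.of("ADMIN")));
    }
}
```

Because a tool that is never offered to the model cannot be selected, this filter complements the method-level check instead of replacing it.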

java

/**

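* Simulates loading tools from the database according to the current user's roles.

* Builds the tool callback via reflection so that tools can be registered dynamically.

*

* @param toolService the tool service instance

* @return List<ToolCallback> the list of tool callbacks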

*/

public List<ToolCallback> getToolCallList(ToolService toolService) {

// TODO: code that reads the tools from the database

// Use a single tool as an example

Method method = ReflectionUtils.findMethod(ToolService.class, "cancel", String.class, String.class);

ToolDefinition build = ToolDefinition.builder()

.name("cancel")

.description("Cancel a ticket")

.inputSchema("""

{

"type": "object",

"properties": {

"ticketNumber": {

"type": "string",

"description": "Booking number; may be purely numeric"

},

"name": {

"type": "string",

"description": "Real name of the passenger"

}

},

"required": ["ticketNumber", "name"]

}

""")

.build();

ToolCallback toolCallback = MethodToolCallback.builder()

.toolDefinition(

build

)

.toolMethod(method)

.toolObject(toolService)

.build();

return List.of(toolCallback);


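}

The impact of too many tools

- Token limit: every tool description is sent along with the request.

- Choice overload: the model finds it harder to pick the right tool.

A vector database is a database built for similarity search.

Implementation:

- Store all tools in the vector database

- On each turn, retrieve the tools most similar to the current conversation (RAG)

- Set the tools dynamically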
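The retrieval step can be sketched with cosine similarity over word counts. This is a stand-in under stated assumptions: a real setup would use embeddings and a vector database, and `ToolRetriever` with its tool names and descriptions is hypothetical.

```java
import java.util.ArrayList;
import java.util.Comparator;
import java.util.HashMap;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Locale;
import java.util.Map;

// Hypothetical retriever: bag-of-words cosine similarity instead of real embeddings.
class ToolRetriever {
    private final Map<String, String> descriptions = new LinkedHashMap<>();

    void register(String toolName, String description) {
        descriptions.put(toolName, description);
    }

    // Names of the k tools whose descriptions are most similar to the query.
    List<String> topK(String query, int k) {
        Map<String, Integer> queryVector = vectorize(query);
        List<String> candidates = new ArrayList<>();
        for (String name : descriptions.keySet()) {
            if (cosine(queryVector, vectorize(descriptions.get(name))) > 0) {
                candidates.add(name);
            }
        }
        candidates.sort(Comparator.comparingDouble(
                (String name) -> cosine(queryVector, vectorize(descriptions.get(name)))).reversed());
        return new ArrayList<>(candidates.subList(0, Math.min(k, candidates.size())));
    }

    static Map<String, Integer> vectorize(String text) {
        Map<String, Integer> counts = new HashMap<>();
        for (String word : text.toLowerCase(Locale.ROOT).split("\\W+")) {
            if (!word.isEmpty()) {
                counts.merge(word, 1, Integer::sum);
            }
        }
        return counts;
    }

    static double cosine(Map<String, Integer> a, Map<String, Integer> b) {
        double dot = 0;
        for (Map.Entry<String, Integer> entry : a.entrySet()) {
            dot += entry.getValue() * b.getOrDefault(entry.getKey(), 0);
        }
        double norm = Math.sqrt(sumOfSquares(a)) * Math.sqrt(sumOfSquares(b));
        return norm == 0 ? 0 : dot / norm;
    }

    private static double sumOfSquares(Map<String, Integer> vector) {
        double sum = 0;
        for (int value : vector.values()) {
            sum += (double) value * value;
        }
        return sum;
    }
}
```

Only the returned tool names would be attached to the request, which keeps both the token cost and the number of choices small even when many tools are registered.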