Deploying a Highly Available K8s Cluster with kubeadm and Docker

Because the environment is limited, only a single-master K8s cluster could actually be deployed here.

1. Deploying a highly available Kubernetes (K8s) cluster with kubeadm and Docker

Environment

| IP | Hostname | Role |
|---|---|---|
| 10.0.0.134 | master1.wang.org | K8s master node 1 (master and etcd) |
| 10.0.0.135 | node1.wang.org | K8s worker node 1 |
| 10.0.0.134 | kubeapi.wang.org | K8s API server access endpoint 1, provides high availability and load balancing |

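1.1 Initial environment preparation

Operating system and Kubernetes component versions

The examples below use the following operating system, container engine, and Kubernetes versions:

- OS: Ubuntu 24.04 LTS
- CRI: Docker 28.2.2, Cgroup Driver: systemd
- Kubernetes: 1.35.0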

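Hostnames and name resolution

Set a distinct hostname on every host, then add the IP-to-hostname mappings:

cat >> /etc/hosts <<EOF

10.0.0.134 master1 master1.wang.org

10.0.0.135 node1 node1.wang.org

10.0.0.134 kubeapi.wang.org

EOF

On the Windows host that runs VMware, add the same entries to the hosts file:

#c:\windows\system32\drivers\etc\hosts

10.0.0.134 master1 master1.wang.org

10.0.0.135 node1 node1.wang.org

10.0.0.134 kubeapi.wang.org

Host time synchronization

Synchronize the time on the master and all nodes. If you use the provided virtual machines, this step can be skipped.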

[root@master1 ~]#timedatectl set-timezone Asia/Shanghai

[root@master1 ~]#apt update

[root@master1 ~]#apt install chrony -y

[root@master1 ~]#vim /etc/chrony/chrony.conf

#Add the following line

pool ntp.aliyun.com iburst maxsources 2

[root@master1 ~]#systemctl enable chrony

[root@master1 ~]#systemctl restart chrony

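[root@master1 ~]#chronyc sources

Disable SELinux

On RHEL/CentOS/Rocky systems, disable SELinux:

~# setenforce 0

#Edit /etc/selinux/config directly: /etc/sysconfig/selinux is a symlink to it, and sed -i would replace the symlink instead of changing the real file

~# sed -i 's#^\(SELINUX=\).*#\1disabled#' /etc/selinux/config

Disable the firewall

Run on the master and all nodes.

RHEL/CentOS/Rocky:

~# systemctl disable --now firewalld

Ubuntu:

#If ufw is installed

~# ufw disable

#Confirm that it is disabled

~# ufw status

#If firewalld is installed

~# systemctl disable --now firewalld

Disable swap

Run on the master and all nodes.

~# swapoff -a

~# sed -i '/swap/s/^/#/' /etc/fstab

Kernel tuning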

Run on the master and all nodes.

#Load the kernel modules at boot

cat <<EOF | tee /etc/modules-load.d/k8s.conf

overlay

br_netfilter

EOF

#Load the kernel modules immediately

modprobe overlay

modprobe br_netfilter

#Verify that the modules are loaded

lsmod |grep -E 'overlay|br_netfilter'

br_netfilter 32768 0

bridge 421888 1 br_netfilter

overlay 212992 0

#Set the required sysctl parameters; they persist across reboots

cat <<EOF | sudo tee /etc/sysctl.d/k8s.conf

net.bridge.bridge-nf-call-iptables = 1

net.bridge.bridge-nf-call-ip6tables = 1

net.ipv4.ip_forward = 1

EOF

#Apply the sysctl parameters without rebooting

sysctl --system

1.2 High-availability reverse proxy

HAProxy (or nginx) together with keepalived can provide high availability and load balancing for the kube-apiserver.

Setting up keepalived

Deploy and configure keepalived on the two hosts ha1 and ha2 as follows:

[root@ha1 ~]#apt update && apt -y install keepalived

#keepalived configuration

[root@ha1 ~]#cp /usr/share/doc/keepalived/samples/keepalived.conf.vrrp /etc/keepalived/keepalived.conf

[root@ha1 ~]#vim /etc/keepalived/keepalived.conf

#Configuration on the first node

[root@ha1 ~]#cat /etc/keepalived/keepalived.conf

global_defs {

router_id ha1.wang.org #router_id; use ha2.wang.org on ha2

}

vrrp_script check_haproxy {

script "killall -0 haproxy"

interval 1

weight -30

fall 3

rise 2

timeout 2

}

vrrp_instance VI_1 {

state MASTER #BACKUP on ha2

interface eth0

garp_master_delay 10

smtp_alert

virtual_router_id 66 #virtual router ID; must be identical on ha1 and ha2

priority 100 #80 on ha2

advert_int 1

authentication {

auth_type PASS

auth_pass 123456 #authentication password; must be identical on ha1 and ha2

}

virtual_ipaddress {

10.0.0.100/24 dev eth0 label eth0:1 #the VIP; must be identical on ha1 and ha2

}

track_script {

check_haproxy

}

}

#Configuration on the second node

[root@ha2 ~]#cat /etc/keepalived/keepalived.conf

global_defs {

router_id ha2.wang.org #router_id; ha1.wang.org on ha1

}

vrrp_instance VI_1 {

state BACKUP #MASTER on ha1

interface eth0

garp_master_delay 10

smtp_alert

virtual_router_id 66 #virtual router ID; must be identical on ha1 and ha2

priority 80 #100 on ha1

advert_int 1

authentication {

auth_type PASS

auth_pass 123456 #authentication password; must be identical on ha1 and ha2

}

virtual_ipaddress {

10.0.0.100/24 dev eth0 label eth0:1 #the VIP; must be identical on ha1 and ha2

}

}
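The failover behaviour implied by these two configurations comes down to simple arithmetic, which the sketch below works through. It assumes the usual keepalived rule for tracked scripts: a negative weight is subtracted from the priority while the script fails, and a positive weight is added while it succeeds. The function name effective_priority is made up for illustration and is not part of keepalived.

```shell
# effective_priority BASE WEIGHT STATE
# BASE   : the "priority" value from vrrp_instance
# WEIGHT : the "weight" value from vrrp_script
# STATE  : "ok" or "fail", the current result of the tracked script
# (Hypothetical helper, only models the priority arithmetic.)
effective_priority() {
    base=$1
    weight=$2
    state=$3
    if [ "$weight" -lt 0 ] && [ "$state" = "fail" ]; then
        echo $(( base + weight ))   # negative weight: lowered while the check fails
    elif [ "$weight" -gt 0 ] && [ "$state" = "ok" ]; then
        echo $(( base + weight ))   # positive weight: raised while the check passes
    else
        echo "$base"
    fi
}

# ha1: priority 100, weight -30, haproxy process gone
echo "ha1 with haproxy down: $(effective_priority 100 -30 fail)"
# ha1: same settings, haproxy running
echo "ha1 with haproxy up:   $(effective_priority 100 -30 ok)"
```

With haproxy down, ha1 drops to 70, which is below the priority 80 configured on ha2, so ha2 can take over the VIP. The ha2 configuration shown above has no track_script block, so its priority stays at 80.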

[root@ha1 ~]#hostname -I

10.0.0.107

[root@ha1 ~]#systemctl start keepalived.service

#Verify that the keepalived service works (the VIP 10.0.0.100 is now bound)

[root@ha1 ~]#hostname -I

10.0.0.107 10.0.0.100

[root@ha1 ~]#ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group

default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc fq_codel state UP

group default qlen 1000

link/ether 00:0c:29:40:27:06 brd ff:ff:ff:ff:ff:ff

inet 10.0.0.107/24 brd 10.0.0.255 scope global eth0

valid_lft forever preferred_lft forever

inet 10.0.0.100/24 scope global secondary eth0:1

valid_lft forever preferred_lft forever

inet6 fe80::20c:29ff:fe40:2706/64 scope link

valid_lft forever preferred_lft forever

[root@ha1 ~]#systemctl status keepalived.service

● keepalived.service - Keepalive Daemon (LVS and VRRP)

Loaded: loaded (/lib/systemd/system/keepalived.service; enabled; vendor

preset: enabled)

Active: active (running) since Tue 2020-04-21 11:04:56 CST; 28s ago

Process: 27859 ExecStart=/usr/sbin/keepalived $DAEMON_ARGS (code=exited,

status=0/SUCCESS)

Main PID: 27860 (keepalived)

Setting up HAProxy

HAProxy provides layer-4 reverse proxying and load balancing for the Kubernetes API server.

#Run the following on both ha1 and ha2

[root@ha1 ~]#cat >> /etc/sysctl.conf <<EOF

net.ipv4.ip_nonlocal_bind = 1

EOF

[root@ha1 ~]#sysctl -p

#Install and configure haproxy

[root@ha1 ~]#apt -y install haproxy

[root@ha1 ~]#vim /etc/haproxy/haproxy.cfg

errorfile 503 /etc/haproxy/errors/503.http

errorfile 504 /etc/haproxy/errors/504.http

########## Add the following ##########

listen stats

mode http

bind 0.0.0.0:8888

stats enable

log global

stats uri /status

stats auth admin:123456

listen kubernetes-api-6443

bind 10.0.0.100:6443

mode tcp

server master1 10.0.0.101:6443 check inter 3s fall 3 rise 3

#Keep master2 and master3 disabled for now; enable them after Kubernetes is installed

#server master2 10.0.0.102:6443 check inter 3s fall 3 rise 3

#server master3 10.0.0.103:6443 check inter 3s fall 3 rise 3

[root@ha1 ~]#systemctl restart haproxy

[root@ha1 ~]#systemctl status haproxy

● haproxy.service - HAProxy Load Balancer

Loaded: loaded (/lib/systemd/system/haproxy.service; enabled; vendor preset:

enabled)

Active: active (running) since Tue 2020-04-21 20:23:22 CST; 58min ago

Docs: man:haproxy(1)

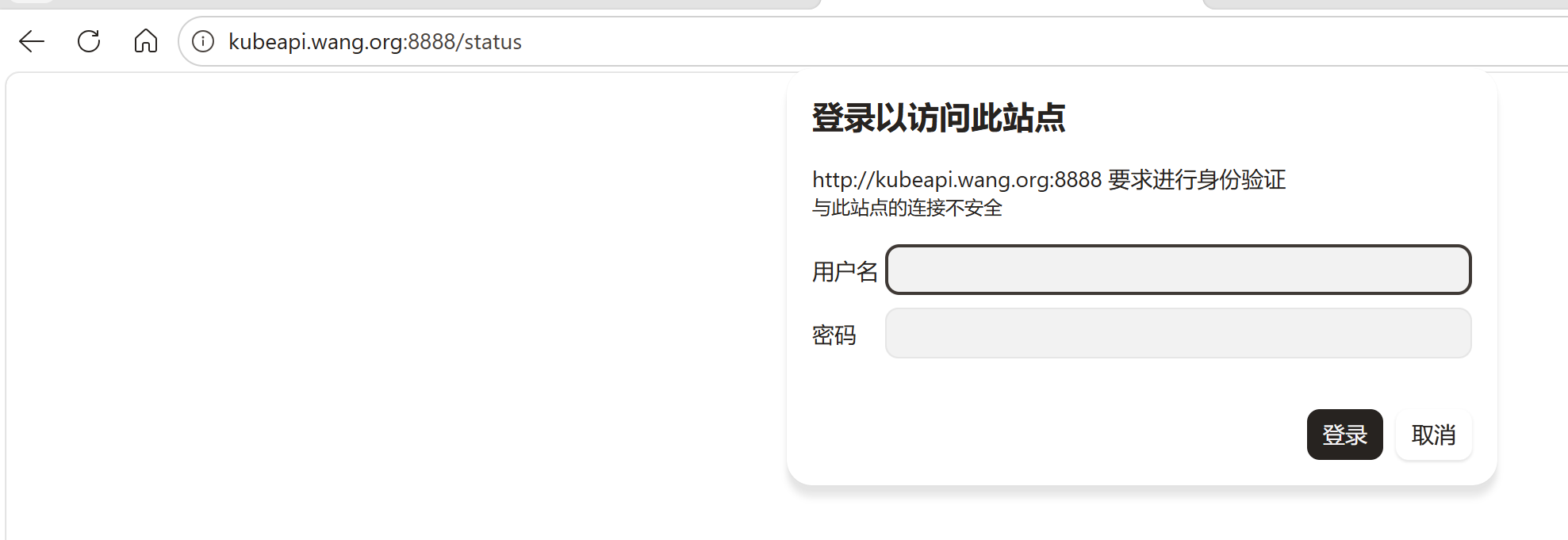

file:/usr/share/doc/haproxy/configuration.txt.gz浏览器访问: http://ha1.wang.org:8888/status ,可以看到下面界面,

用户名:admin

密码:123456
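The same stats page can also be read from a script. HAProxy serves the statistics as CSV when ";csv" is appended to the stats URI, and the sketch below extracts the status column for each backend server. The function name server_status is hypothetical; the column is looked up by its header name rather than by a fixed position.

```shell
# server_status: read HAProxy stats CSV on stdin and print
# "<proxy>/<server> <status>" for every real server row.
# (Hypothetical helper; FRONTEND and BACKEND summary rows are skipped.)
server_status() {
    awk -F, '
        NR == 1 {
            sub(/^# /, "", $1)                 # header line starts with "# "
            for (i = 1; i <= NF; i++)
                if ($i == "status") col = i    # locate the status column by name
            next
        }
        col && NF >= col && $2 != "FRONTEND" && $2 != "BACKEND" {
            print $1 "/" $2, $col
        }
    '
}

# Usage (needs network access to ha1, so it is left commented out):
#   curl -s -u admin:123456 'http://ha1.wang.org:8888/status;csv' | server_status
```

Once master1 is up, its row under kubernetes-api-6443 should report UP.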

1.3 Install and configure Docker on all master and node hosts

Install Docker

Example: install the distribution's packaged Docker on all masters and workers

[root@master1 ~]#apt update && apt -y install docker.io

[root@master1 ~]#docker version

Client:

Version: 28.2.2

API version: 1.50

Go version: go1.23.1

Git commit: 28.2.2-0ubuntu1~24.04.1

Built: Wed Sep 10 14:16:39 2025

OS/Arch: linux/amd64

Context: default

Server:

Engine:

Version: 28.2.2

API version: 1.50 (minimum version 1.24)

Go version: go1.23.1

Git commit: 28.2.2-0ubuntu1~24.04.1

Built: Wed Sep 10 14:16:39 2025

OS/Arch: linux/amd64

Experimental: false

containerd:

Version: 1.7.28

GitCommit:

runc:

Version: 1.3.3-0ubuntu1~24.04.3

GitCommit:

docker-init:

Version: 0.19.0

GitCommit

#Docker Hub cannot be reached from mainland China, so configure registry mirrors for Docker

[root@master1 ~]#cat > /etc/docker/daemon.json <<EOF

{

"registry-mirrors": [

"https://docker.m.daocloud.io",

"https://docker.1panel.live",

"https://docker.1ms.run",

"https://docker.xuanyuan.me"

],

"insecure-registries": ["harbor.wang.org"]

}

EOF

[root@master1 ~]#systemctl restart docker

Install cri-dockerd on all hosts (Kubernetes v1.24 and later)

On every host, download the generic Linux binary and create the service and socket unit files.

#Download the generic Linux build

[root@ubuntu2404 ~]#VERSION=0.3.23 #version to install

[root@ubuntu2404 ~]#wget https://github.com/Mirantis/cri-dockerd/releases/download/v${VERSION}/cri-dockerd-${VERSION}.amd64.tgz

[root@ubuntu2404 ~]#tar tf cri-dockerd-${VERSION}.amd64.tgz

cri-dockerd/

cri-dockerd/cri-dockerd

[root@ubuntu2404 ~]#tar xf cri-dockerd-${VERSION}.amd64.tgz

[root@ubuntu2404 ~]#mv cri-dockerd/cri-dockerd /usr/bin/

#Prepare the service and socket unit files

Copy the service and socket files to /lib/systemd/system/

#https://github.com/Mirantis/cri-dockerd/tree/master/packaging/systemd

[root@ubuntu2404 ~]#wget -O /lib/systemd/system/cri-docker.service https://raw.githubusercontent.com/Mirantis/cri-dockerd/refs/heads/master/packaging/systemd/cri-docker.service

[root@ubuntu2404 ~]#wget -O /lib/systemd/system/cri-docker.socket https://raw.githubusercontent.com/Mirantis/cri-dockerd/refs/heads/master/packaging/systemd/cri-docker.socket

[root@ubuntu2404 ~]#systemctl daemon-reload && systemctl enable --now cri-docker.service && systemctl enable --now cri-docker.socket

Configure cri-dockerd on all hosts (Kubernetes v1.24 and later)

The images hosted on k8s.gcr.io cannot be pulled from mainland China, which prevents pods from starting, so cri-dockerd has to be pointed at a domestic image mirror.

vim /lib/systemd/system/cri-docker.service

#Change the ExecStart line as follows

#For recent Kubernetes versions v1.35.0 and v1.34.1

ExecStart=/usr/bin/cri-dockerd --container-runtime-endpoint fd:// --pod-infra-container-image=registry.aliyuncs.com/google_containers/pause:3.10.1

#Sync to all nodes

[root@master1 ~]#for i in {102..106};do scp /lib/systemd/system/cri-docker.service 10.0.0.$i:/lib/systemd/system/cri-docker.service; ssh 10.0.0.$i "systemctl daemon-reload && systemctl enable cri-docker.service cri-docker.socket && systemctl restart cri-docker.service";done

1.4 Install kubeadm and related packages on all master and node hosts

Reference for installing from the Alibaba Cloud mirror in China:

https://developer.aliyun.com/mirror/kubernetes

Debian / Ubuntu

apt-get update && apt-get install -y apt-transport-https

curl -fsSL https://mirrors.aliyun.com/kubernetes-new/core/stable/v1.28/deb/Release.key |
gpg --dearmor -o /etc/apt/keyrings/kubernetes-apt-keyring.gpg

echo "deb [signed-by=/etc/apt/keyrings/kubernetes-apt-keyring.gpg] https://mirrors.aliyun.com/kubernetes-new/core/stable/v1.28/deb/ /" |
tee /etc/apt/sources.list.d/kubernetes.list

apt-get update

apt-get install -y kubelet kubeadm kubectl

Example: run the following on all master and node hosts to install the K8s packages

[root@master1 ~]#apt-get update && apt-get install -y apt-transport-https

[root@master1 ~]#export K8S_VERSION=v1.35

[root@master1 ~]#curl -fsSL https://mirrors.aliyun.com/kubernetes-new/core/stable/${K8S_VERSION}/deb/Release.key |

gpg --dearmor -o /etc/apt/keyrings/kubernetes-apt-keyring.gpg

[root@master1 ~]#echo "deb [signed-by=/etc/apt/keyrings/kubernetes-apt-keyring.gpg] https://mirrors.aliyun.com/kubernetes-new/core/stable/${K8S_VERSION}/deb/ /" | tee /etc/apt/sources.list.d/kubernetes.list

[root@master1 ~]#apt-get update

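#Show the available versions

[root@master1 ~]# apt-cache madison kubeadm

kubeadm | 1.35.0-1.1 | https://mirrors.aliyun.com/kubernetes-new/core/stable/v1.35/deb Packages

#Show the latest versions of kubeadm, kubectl and kubelet

[root@master1 ~]#apt list kubeadm kubectl kubelet

#Install kubeadm, kubelet and kubectl on all master and node hosts

#Note: because of package dependencies, installing kubeadm alone also pulls in the latest kubelet and kubectl, so name all three packages explicitly. By default the newest version in the repository is installed; to pin a version, append it to each package name, e.g. kubeadm=1.35.0-1.1

[root@master1 ~]#apt -y install kubeadm kubelet kubectl

#The kubelet service cannot start yet because its configuration file does not exist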

[root@master1 ~]#systemctl status kubelet

● kubelet.service - kubelet: The Kubernetes Node Agent

Loaded: loaded (/lib/systemd/system/kubelet.service; enabled; vendor preset:

enabled)

Drop-In: /etc/systemd/system/kubelet.service.d

└─10-kubeadm.conf

Active: activating (auto-restart) (Result: exit-code) since Tue 2020-04-21

22:45:14 CST; 1s ago

Docs: https://kubernetes.io/docs/home/

1.5 Enable kubeadm command completion

[root@master1 ~]#echo 'source <(kubeadm completion bash)' >> .bashrc

[root@master1 ~]#exit

#Verify after logging in again

[root@master1 ~]#kubeadm <2TAB>

alpha completion config init join reset token

upgrade version

1.6 Run kubeadm init on the first master node

Run kubeadm init on any one of the three master hosts; the cluster only needs to be initialized once.

#Initialize with the default network settings

[root@master1 ~]#K8S_RELEASE_VERSION=1.35.0 #version to install

[root@master1 ~]#kubeadm init \
--kubernetes-version=v${K8S_RELEASE_VERSION} \
--control-plane-endpoint=kubeapi.wang.org \
--pod-network-cidr=10.244.0.0/16 \
--service-cidr=10.96.0.0/12 \
--token-ttl=0 \
--image-repository=registry.aliyuncs.com/google_containers \
--upload-certs \
--cri-socket=unix:///run/cri-dockerd.sock

1.7 Authorize kubectl on the first master node

Follow the instructions printed after the first host initializes successfully.

#Copy the Kubernetes administrator's credentials file to the home directory of the target user (here the current user, root) to create the kubeconfig file

[root@master1 ~]#mkdir -p $HOME/.kube

[root@master1 ~]#sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

1.8 Add the other master nodes to the cluster

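Using the information printed at the end of cluster initialization, add the master2 and master3 nodes.

Run the following on every other master node.

#Take the join command from the kubeadm init output, including the --certificate-key parameter, and run it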

kubeadm join kubeapi.wang.org:6443 --token d0ycw9.gmqbo6fsow7maeww \

--discovery-token-ca-cert-hash sha256:99d2059310bbbfce67719efc50e42e5a54b255d55af3d6f127aaf087966defec \

--control-plane --certificate-key bbe9096e3aeb07b4406312418a9198beab9aa43b81439d31c3edbc11aea8f9c8 --cri-socket=unix:///run/cri-dockerd.sock

#As instructed in the output, create the kubectl credentials file

[root@master2 ~]#mkdir -p $HOME/.kube

[root@master2 ~]#sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

[root@master2 ~]#sudo chown $(id -u):$(id -g) $HOME/.kube/config

[root@master1 ~]#kubectl get nodes

NAME STATUS ROLES AGE VERSION

master1.wang.org NotReady control-plane 20m v1.35.0

master2.wang.org NotReady control-plane 14m v1.35.0

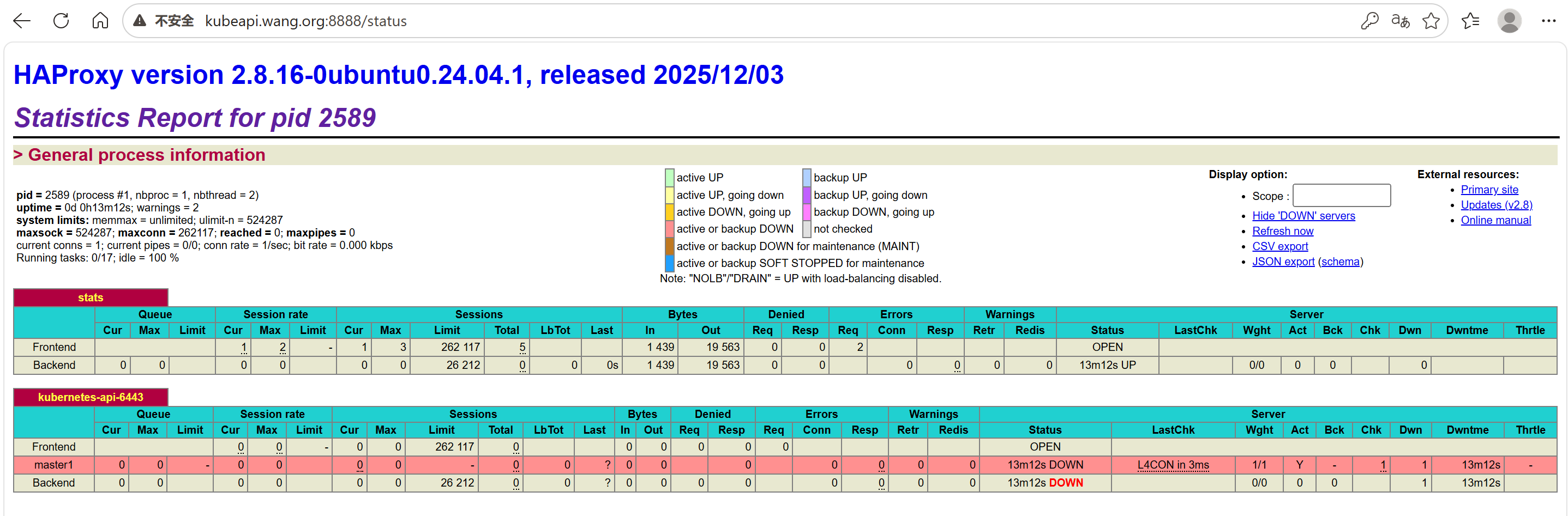

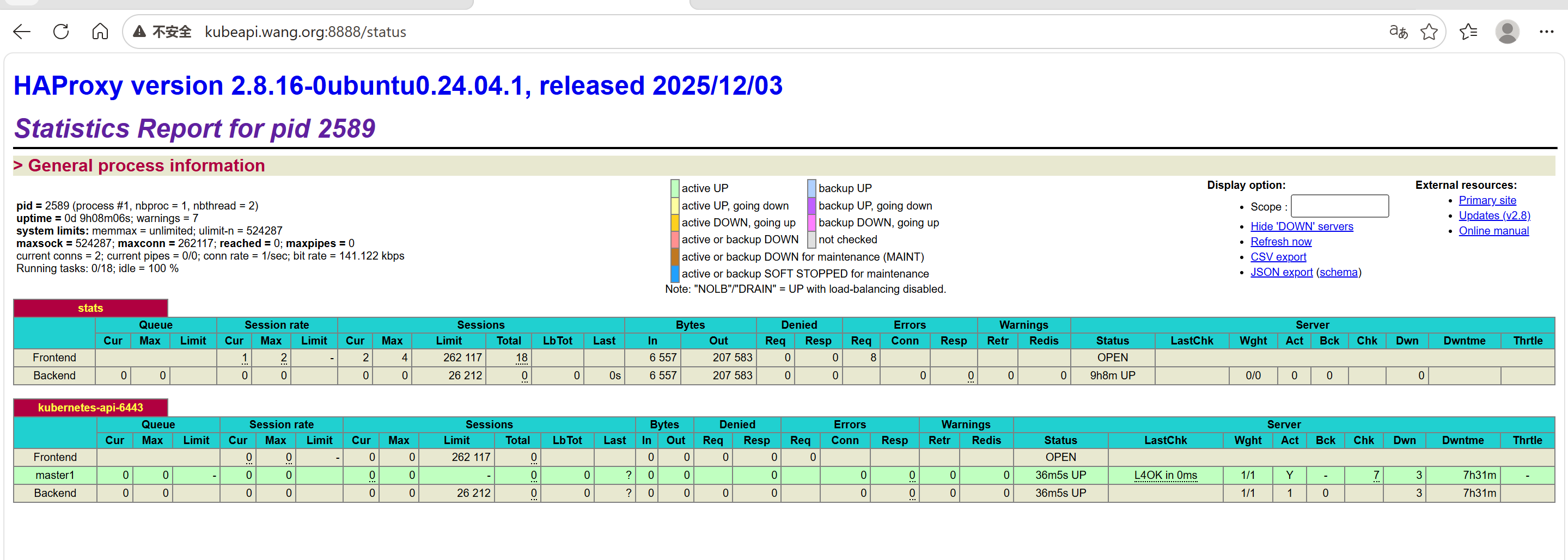

master3.wang.org NotReady control-plane 12m v1.35.01.9 修改haproxy的配置

由于我只部署了一台就不用修改配置,如果部署多台就要修改配置

[root@ha1 ~]#vi /etc/haproxy/haproxy.cfg

listen stats

mode http

bind 0.0.0.0:8888

stats enable

log global

stats uri /status

stats auth admin:123456

listen kubernetes-api-6443

bind 10.0.0.100:6443

mode tcp

server master1 10.0.0.101:6443 check inter 3s fall 3 rise 3

server master2 10.0.0.102:6443 check inter 3s fall 3 rise 3 #enable these two lines

server master3 10.0.0.103:6443 check inter 3s fall 3 rise 3

[root@ha1 ~]#systemctl reload haproxy

On the HAProxy stats page, all masters should now appear online.
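Before reloading, it can help to confirm which server lines are really active and that the file still parses. The sketch below is one way to do that: list_active_servers and reload_haproxy_checked are hypothetical helper names, while haproxy -c -f is HAProxy's own configuration check mode.

```shell
# list_active_servers: read a haproxy.cfg on stdin and print "name address"
# for every server line that is not commented out.
# (Hypothetical helper for illustration.)
list_active_servers() {
    awk '$1 == "server" { print $2, $3 }'
}

# reload_haproxy_checked [CFG]: reload only if the configuration validates.
# Defined here but not called; run it on ha1/ha2 as root.
reload_haproxy_checked() {
    cfg=${1:-/etc/haproxy/haproxy.cfg}
    haproxy -c -f "$cfg" && systemctl reload haproxy
}

# Usage on the proxy hosts:
#   list_active_servers < /etc/haproxy/haproxy.cfg
#   reload_haproxy_checked
```

After the change in this section, the listing should contain master1, master2 and master3; a line that still starts with "#server" is not printed.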

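1.10 Join every worker host to the cluster

Run the join command printed by kubeadm init on each worker node to add it to the cluster.

The worker must be able to resolve the API endpoint name, so first add the master address to /etc/hosts:

echo "10.0.0.134 kubeapi.wang.org" >> /etc/hosts

#Note: from v1.24 on, the --cri-socket option is required to use Docker

[root@node1 ~]#kubeadm join kubeapi.wang.org:6443 --token d0ycw9.gmqbo6fsow7maeww \
--discovery-token-ca-cert-hash sha256:99d2059310bbbfce67719efc50e42e5a54b255d55af3d6f127aaf087966defec --cri-socket=unix:///run/cri-dockerd.sock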

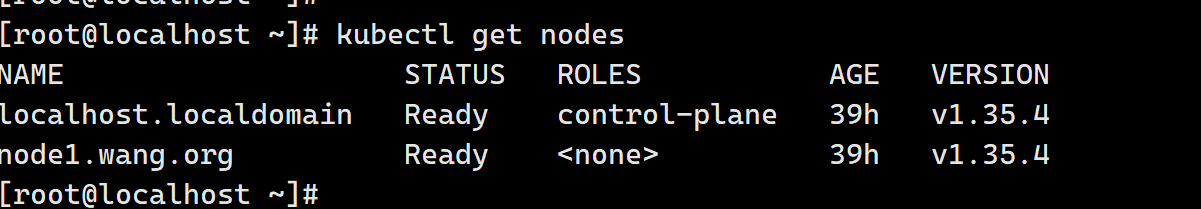
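Join commands are usually copied by hand from the kubeadm init output, so a quick format check can catch a truncated token or hash before kubeadm join is run. The sketch below assumes the standard kubeadm formats: a bootstrap token of 6 characters, a dot, then 16 characters (lowercase letters and digits), and a CA hash of "sha256:" followed by 64 hex digits. The function names are made up for illustration.

```shell
# valid_token TOKEN: succeed if TOKEN looks like a kubeadm bootstrap token.
# (Hypothetical helper; checks the format only, not whether the token exists.)
valid_token() {
    printf '%s\n' "$1" | grep -Eq '^[a-z0-9]{6}\.[a-z0-9]{16}$'
}

# valid_ca_hash HASH: succeed if HASH looks like a discovery CA cert hash.
valid_ca_hash() {
    printf '%s\n' "$1" | grep -Eq '^sha256:[0-9a-f]{64}$'
}

# Example with the values used in this section
if valid_token "d0ycw9.gmqbo6fsow7maeww" &&
   valid_ca_hash "sha256:99d2059310bbbfce67719efc50e42e5a54b255d55af3d6f127aaf087966defec"
then
    echo "join parameters look well-formed"
fi
```

If the original output is no longer available, running kubeadm token create --print-join-command on a master prints a fresh worker join command; the --cri-socket option still has to be appended by hand.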

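#On the master, verify that the worker nodes have joined the cluster

#After a few minutes the nodes are listed; they stay NotReady until the network plugin is installed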

[root@master1 ~]#kubectl get nodes

NAME STATUS ROLES AGE VERSION

master1.wang.org NotReady control-plane 20m v1.35.0

master2.wang.org NotReady control-plane 14m v1.35.0

master3.wang.org NotReady control-plane 12m v1.35.0

node1.wang.org NotReady <none> 10m v1.35.0

node2.wang.org NotReady <none> 10m v1.35.0

node3.wang.org NotReady <none> 10m v1.35.0

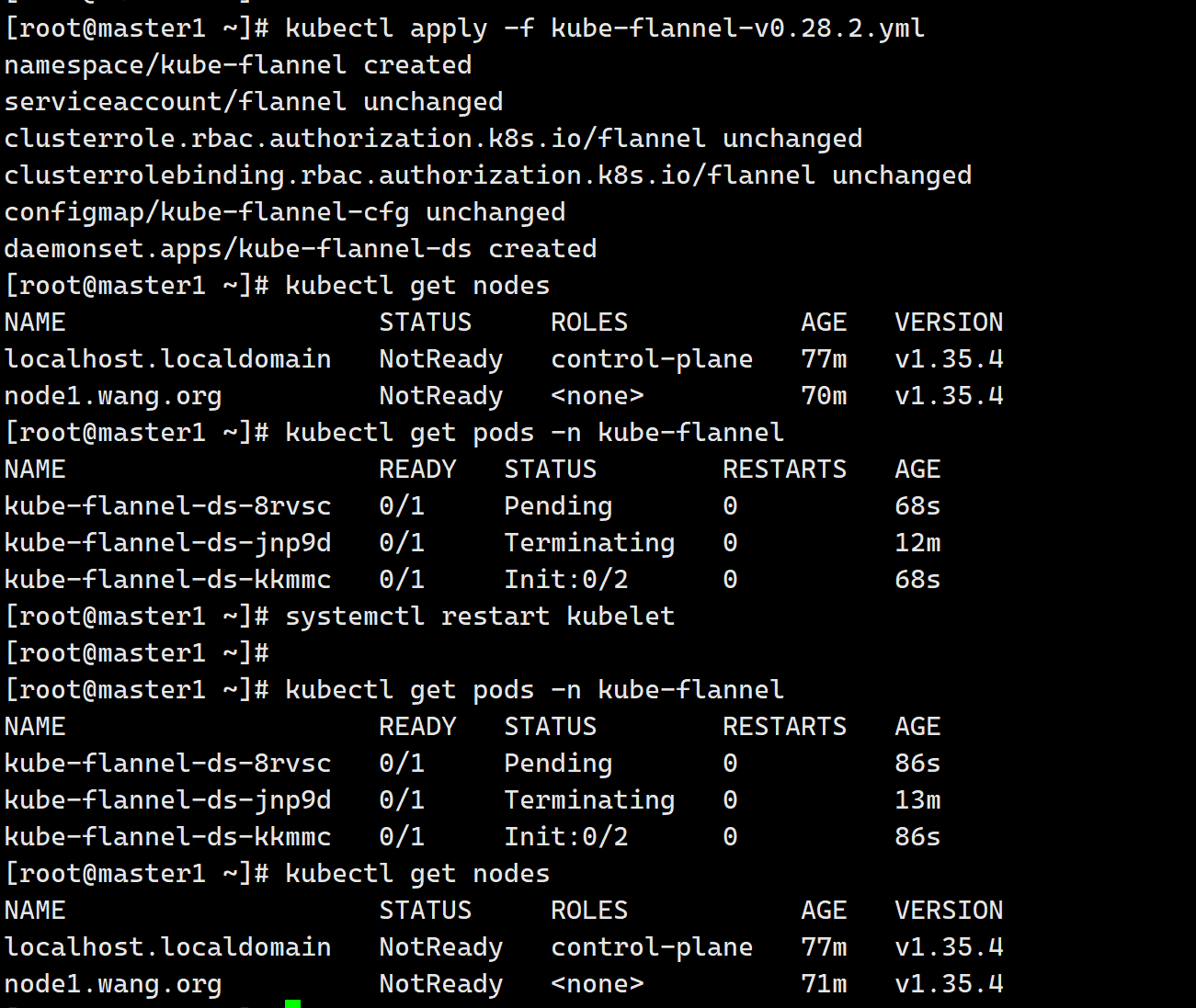

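1.11 Configure the network plugin on the first master node

Pod networking in Kubernetes is implemented by third-party plugins. There are dozens of them; well-known ones include flannel, calico, canal and kube-router, and a simple, easy-to-use choice is the flannel project, originally from CoreOS. The command below deploys flannel onto the Kubernetes cluster.

#The nodes show NotReady because no network plugin is installed yet

Upload the kube-flannel-v0.28.2.yml file to master1, then apply it: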
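Before applying the manifest, it may be worth checking that its pod network matches the --pod-network-cidr passed to kubeadm init (10.244.0.0/16 in section 1.6). The sketch below assumes the manifest carries the upstream-style net-conf.json with a "Network" key; flannel_network is a hypothetical helper name.

```shell
# flannel_network: read a kube-flannel manifest on stdin and print the
# value of the first "Network" key found (the pod CIDR in net-conf.json).
# (Hypothetical helper; assumes the key and value sit on one line.)
flannel_network() {
    sed -n 's/.*"Network"[[:space:]]*:[[:space:]]*"\([^"]*\)".*/\1/p' | head -n 1
}

# Usage on master1, next to the uploaded file:
#   [ "$(flannel_network < kube-flannel-v0.28.2.yml)" = "10.244.0.0/16" ] \
#       || echo "pod CIDR in the manifest differs from kubeadm init"
```

If the two values differ, edit the manifest (or re-run kubeadm init with a matching CIDR) before applying it.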

[root@master1 ~]# kubectl apply -f kube-flannel-v0.28.2.yml

namespace/kube-flannel created

serviceaccount/flannel created

clusterrole.rbac.authorization.k8s.io/flannel created

clusterrolebinding.rbac.authorization.k8s.io/flannel created

configmap/kube-flannel-cfg created

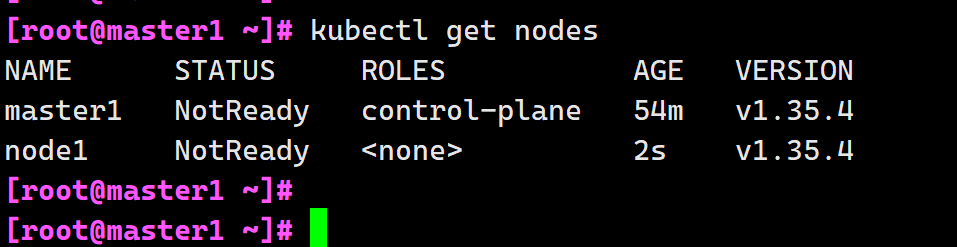

daemonset.apps/kube-flannel-ds created按照网络插件后查看是NotReady状态

[root@master1 ~]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

master1 NotReady control-plane 61m v1.35.4

node1 NotReady <none> 6m34s v1.35.4查看插件是否运行

[root@master1 ~]# kubectl get pods -n kube-flannel

NAME READY STATUS RESTARTS AGE

kube-flannel-ds-fptk2 1/1 Running 0 2m8s

kube-flannel-ds-vds9l 1/1 Running 0 2m8s

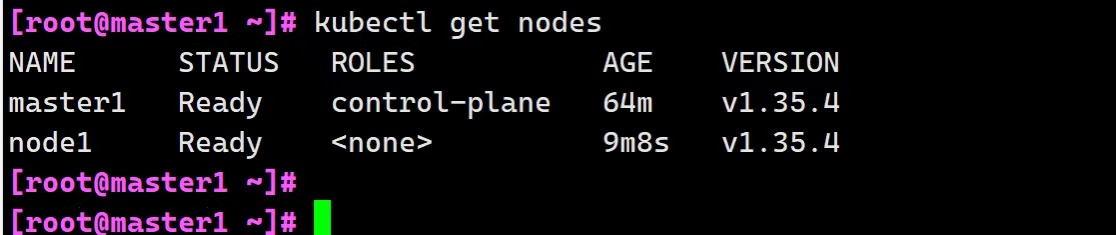

Restart kubelet:

[root@master1 ~]# systemctl restart kubelet

Check again:

[root@master1 ~]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

master1 Ready control-plane 64m v1.35.4

node1 Ready <none> 9m8s v1.35.4

The status now shows Ready, so the nodes have joined successfully.
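The same check can be scripted instead of read by eye. The sketch below filters the tabular output of kubectl get nodes and prints every node whose STATUS column is not exactly Ready; not_ready_nodes is a hypothetical helper name, and a cordoned node (Ready,SchedulingDisabled) would also be reported.

```shell
# not_ready_nodes: read "kubectl get nodes" output on stdin and print the
# names of nodes whose STATUS is not exactly "Ready".
# (Hypothetical helper; the header row is skipped.)
not_ready_nodes() {
    awk 'NR > 1 && $2 != "Ready" { print $1 }'
}

# Usage on master1:
#   kubectl get nodes | not_ready_nodes
# An empty result means every node is Ready.
```

kubectl also has a built-in way to block until this condition holds: kubectl wait --for=condition=Ready node --all --timeout=300s.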

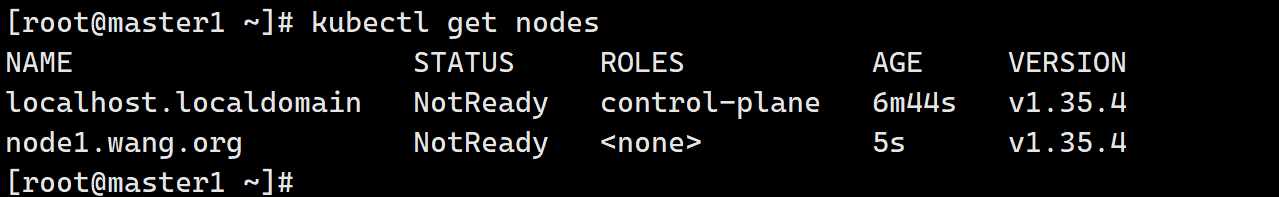

基于rocky系统安装的截图,步骤和前面差不多,有些地方做些修改。

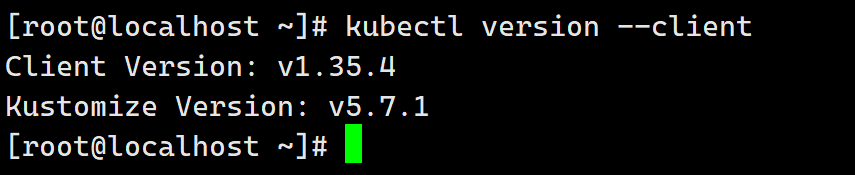

kubectl查看版本

查看集群状态,提示没有安装网络插件导致的

安装插件

等待一会时间后自己回变成Ready状态(由于master节点没有修改主机名,后排操作出现了问题,所有环境准备要提前准备好)