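Creating an MCP Server over HTTP Streaming

- 8. Creating an MCP Server over HTTP Streaming

- 1. Communication flow between the HTTP streaming MCP server and the client

- 2. Development workflow for the HTTP streaming MCP server

- 3. Starting and testing the HTTP streaming MCP server

- 4. Connecting a custom MCP client to the HTTP streaming MCP server

- 5. Using the custom client with the streaming HTTP MCP server

8. Creating an MCP Server over HTTP Streaming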

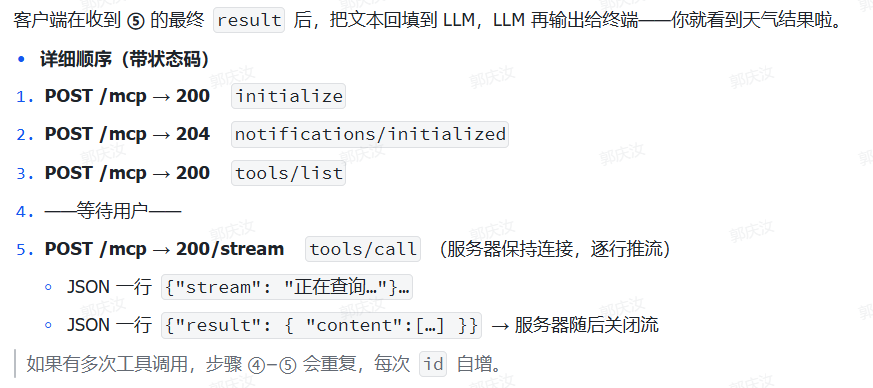

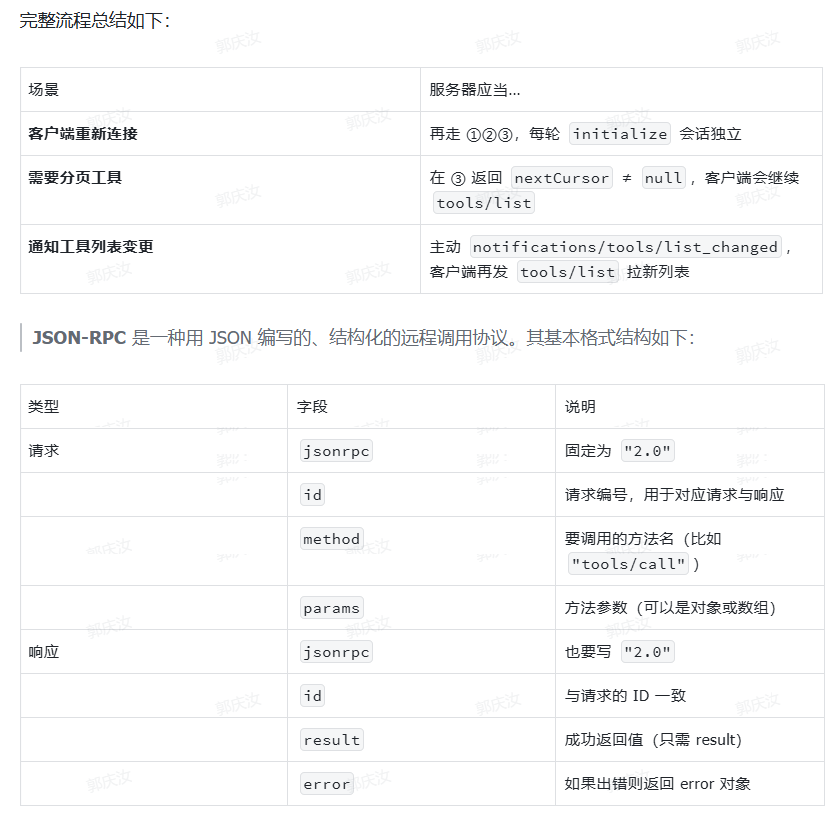

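1. Communication flow between the HTTP streaming MCP server and the client

- At startup: a three-step handshake (no user input involved)

- When the user asks the first question (and the model decides it needs a tool)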

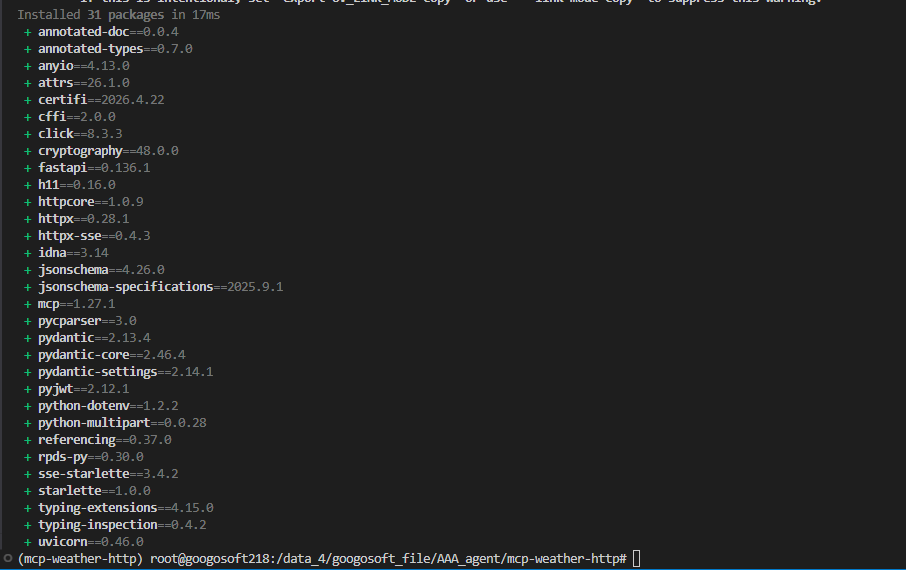
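As a rough illustration of the flow above, the sketch below builds the JSON-RPC payloads a client would send. It assumes the three handshake steps are initialize, notifications/initialized, and tools/list (the sequence exercised with curl later in this tutorial). The helper names `handshake_messages` and `tool_call_message` and the `clientInfo` values are made up for illustration.

```python
import json
from typing import Any

PROTOCOL_VERSION = "2024-11-05"  # same version string the tutorial's server uses


def handshake_messages() -> list[dict[str, Any]]:
    """Return the three startup messages, in the order they are sent."""
    return [
        {"jsonrpc": "2.0", "id": 1, "method": "initialize",
         "params": {"protocolVersion": PROTOCOL_VERSION, "capabilities": {},
                    "clientInfo": {"name": "demo-client", "version": "0.1"}}},
        # A notification carries no "id", so no JSON-RPC response is expected
        {"jsonrpc": "2.0", "method": "notifications/initialized"},
        {"jsonrpc": "2.0", "id": 2, "method": "tools/list", "params": {}},
    ]


def tool_call_message(req_id: int, name: str, arguments: dict[str, Any]) -> dict[str, Any]:
    """Build the tools/call request sent once the model decides to use a tool."""
    return {"jsonrpc": "2.0", "id": req_id, "method": "tools/call",
            "params": {"name": name, "arguments": arguments}}


if __name__ == "__main__":
    call = tool_call_message(3, "get_weather", {"city": "Hangzhou"})
    for msg in handshake_messages() + [call]:
        print(json.dumps(msg, ensure_ascii=False))
```

Only the tools/call message depends on user input; the first three are identical on every startup, which is why the handshake needs no interaction.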

2. Development workflow for the HTTP streaming MCP server

- Create the project files:

bash

cd /root/autodl-tmp/MCP/MCP-sse-test
uv init mcp-weather-http
cd mcp-weather-http
# Create the virtual environment
uv venv
# Activate the virtual environment
source .venv/bin/activate
uv add mcp httpx fastapi

bash

mkdir -p ./src/mcp_weather_http
cd ./src/mcp_weather_http

Then create server.py and write the following code into it:

python

"""weather_http_server_v8.py -- MCP Streamable HTTP (Cherry‑Studio verified)
==========================================================================
• initialize → protocolVersion + capabilities(streaming + tools.listChanged)
  + friendly instructions
• notifications/initialized → ignored (204)
• tools/list → single-page tool registry (get_weather)
• tools/call → execute get_weather, stream JSON (content[])
• GET → initialize-style response for client probes (no SSE stream implemented)
"""
from __future__ import annotations

import argparse
import asyncio
import json
from typing import Any, AsyncIterator

import httpx
from fastapi import FastAPI, Request, Response, status
from fastapi.responses import StreamingResponse

# ---------------------------------------------------------------------------
# Server constants
# ---------------------------------------------------------------------------
SERVER_NAME = "WeatherServer"
SERVER_VERSION = "1.0.0"
PROTOCOL_VERSION = "2024-11-05"  # Cherry Studio current

# ---------------------------------------------------------------------------
# Weather helpers
# ---------------------------------------------------------------------------
OPENWEATHER_URL = "https://api.openweathermap.org/data/2.5/weather"
API_KEY: str | None = None
USER_AGENT = "weather-app/1.0"

async def fetch_weather(city: str) -> dict[str, Any]:
    """Query OpenWeather for a city; return the JSON body or an {"error": ...} dict."""
    if not API_KEY:
        return {"error": "API_KEY 未设置,请提供有效的 OpenWeather API Key。"}
    params = {"q": city, "appid": API_KEY, "units": "metric", "lang": "zh_cn"}
    headers = {"User-Agent": USER_AGENT}
    async with httpx.AsyncClient(timeout=30.0) as client:
        try:
            r = await client.get(OPENWEATHER_URL, params=params, headers=headers)
            r.raise_for_status()
            return r.json()
        except httpx.HTTPStatusError as exc:
            return {"error": f"HTTP 错误: {exc.response.status_code}"}
        except Exception as exc:  # noqa: BLE001
            return {"error": f"请求失败: {exc}"}


def format_weather(data: dict[str, Any]) -> str:
    """Turn the raw OpenWeather JSON into a short readable report."""
    if "error" in data:
        return data["error"]
    city = data.get("name", "未知")
    country = data.get("sys", {}).get("country", "未知")
    temp = data.get("main", {}).get("temp", "N/A")
    humidity = data.get("main", {}).get("humidity", "N/A")
    wind = data.get("wind", {}).get("speed", "N/A")
    desc = data.get("weather", [{}])[0].get("description", "未知")
    return (
        f"🌍 {city}, {country}\n"
        f"🌡 温度: {temp}°C\n"
        f"💧 湿度: {humidity}%\n"
        f"🌬 风速: {wind} m/s\n"
        f"🌤 天气: {desc}"
    )

async def stream_weather(city: str, req_id: int | str) -> AsyncIterator[bytes]:
    # progress chunk
    yield json.dumps({"jsonrpc": "2.0", "id": req_id, "stream": f"查询 {city} 天气中..."}).encode() + b"\n"
    await asyncio.sleep(0.3)
    data = await fetch_weather(city)
    if "error" in data:
        yield json.dumps({"jsonrpc": "2.0", "id": req_id, "error": {"code": -32000, "message": data["error"]}}).encode() + b"\n"
        return
    # final chunk: the tool result
    yield json.dumps({
        "jsonrpc": "2.0", "id": req_id,
        "result": {
            "content": [
                {"type": "text", "text": format_weather(data)}
            ],
            "isError": False
        }
    }).encode() + b"\n"

# ---------------------------------------------------------------------------
# FastAPI app
# ---------------------------------------------------------------------------
app = FastAPI(title="WeatherServer HTTP-Stream v8")

TOOLS_REGISTRY = {
    "tools": [
        {
            "name": "get_weather",
            "description": "用于进行天气信息查询的函数,输入城市英文名称,即可获得当前城市天气信息。",
            "inputSchema": {
                "type": "object",
                "properties": {
                    "city": {
                        "type": "string",
                        "description": "City name, e.g. 'Hangzhou'"
                    }
                },
                "required": ["city"]
            }
        }
    ],
    "nextCursor": None
}

@app.get("/mcp")
async def mcp_initialize_via_get():
    # A GET request is answered with the same payload as the initialize method
    return {
        "jsonrpc": "2.0",
        "id": 0,
        "result": {
            "protocolVersion": PROTOCOL_VERSION,
            "capabilities": {
                "streaming": True,
                "tools": {"listChanged": True}
            },
            "serverInfo": {
                "name": SERVER_NAME,
                "version": SERVER_VERSION
            },
            "instructions": "Use the get_weather tool to fetch weather by city name."
        }
    }

@app.post("/mcp")
async def mcp_endpoint(request: Request):
    try:
        body = await request.json()
        # ✅ Log the client's request body
        print("💡 收到请求:", json.dumps(body, ensure_ascii=False, indent=2))
    except Exception:
        return {"jsonrpc": "2.0", "id": None, "error": {"code": -32700, "message": "Parse error"}}

    req_id = body.get("id", 1)
    method = body.get("method")
    # ✅ Log the method being handled
    print(f"🔧 方法: {method}")

    # 0) Ignore initialized notification (no response required)
    if method == "notifications/initialized":
        return Response(status_code=status.HTTP_204_NO_CONTENT)

    # 1) Activation probe (no method)
    if method is None:
        return {"jsonrpc": "2.0", "id": req_id, "result": {"status": "MCP server online."}}

    # 2) initialize
    if method == "initialize":
        return {
            "jsonrpc": "2.0", "id": req_id,
            "result": {
                "protocolVersion": PROTOCOL_VERSION,
                "capabilities": {
                    "streaming": True,
                    "tools": {"listChanged": True}
                },
                "serverInfo": {"name": SERVER_NAME, "version": SERVER_VERSION},
                "instructions": "Use the get_weather tool to fetch weather by city name."
            }
        }

    # 3) tools/list
    if method == "tools/list":
        print(json.dumps(TOOLS_REGISTRY, indent=2, ensure_ascii=False))
        return {"jsonrpc": "2.0", "id": req_id, "result": TOOLS_REGISTRY}

    # 4) tools/call
    if method == "tools/call":
        params = body.get("params", {})
        tool_name = params.get("name")
        args = params.get("arguments", {})
        if tool_name != "get_weather":
            return {"jsonrpc": "2.0", "id": req_id, "error": {"code": -32602, "message": "Unknown tool"}}
        city = args.get("city")
        if not city:
            return {"jsonrpc": "2.0", "id": req_id, "error": {"code": -32602, "message": "Missing city"}}
        return StreamingResponse(stream_weather(city, req_id), media_type="application/json")

    # 5) unknown method
    return {"jsonrpc": "2.0", "id": req_id, "error": {"code": -32601, "message": "Method not found"}}

# ---------------------------------------------------------------------------
# Runner
# ---------------------------------------------------------------------------
def main() -> None:
    parser = argparse.ArgumentParser(description="Weather MCP HTTP-Stream v8")
    parser.add_argument("--api_key", required=True)
    parser.add_argument("--host", default="127.0.0.1")
    parser.add_argument("--port", type=int, default=8000)
    args = parser.parse_args()

    global API_KEY
    API_KEY = args.api_key

    import uvicorn
    uvicorn.run(app, host=args.host, port=args.port, log_level="info")


if __name__ == "__main__":
    main()

The code is explained below, starting with its overall structure.

text

📦 weather_http_server_v8.py
├── Constants (protocol version + OpenWeather configuration)
├── Helpers (fetch_weather, format_weather, stream_weather)
├── FastAPI routes
│   ├── GET /mcp → optional support
│   └── POST /mcp → handles all JSON-RPC calls
└── main() → starts the uvicorn server

1️⃣ Header (metadata + module imports)

python

"""weather_http_server_v8.py -- MCP Streamable HTTP (Cherry‑Studio verified)
• initialize → declares the streaming + tools.listChanged capabilities
• tools/list → exposes the get_weather tool
• tools/call → streams JSON data after the call
• GET → 405 or a simple informational response (supports Cherry Studio probing)
"""

✔ States concisely that this service implements the subset of the MCP HTTP protocol that Cherry Studio supports.

2️⃣ Constants

python

SERVER_NAME = "WeatherServer"
SERVER_VERSION = "1.0.0"
PROTOCOL_VERSION = "2024-11-05"  # MCP protocol version
OPENWEATHER_URL = "https://api.openweathermap.org/data/2.5/weather"
API_KEY: str | None = None  # injected at startup
USER_AGENT = "weather-app/1.0"

✔ Defines the version numbers, the weather API URL, and the global variables up front.

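3️⃣ Weather handling logic

python

async def fetch_weather(city: str) -> dict[str, Any]:

- Requests weather data from the OpenWeather API

- Turns failures (for example a wrong key or a timeout) into an {"error": ...} dict instead of raising

python

def format_weather(data: dict[str, Any]) -> str:

- Formats the raw JSON into readable text 🌤️

python

async def stream_weather(city: str, req_id: int | str) -> AsyncIterator[bytes]:

- An async generator that streams the weather result as line-delimited JSON, one JSON-RPC object per line. This is the framing used throughout this tutorial; the MCP specification itself streams over text/event-stream.

Sample output:

json

{"jsonrpc": "2.0", "id": 1, "stream": "查询 Hangzhou 天气中..."}
{"jsonrpc": "2.0", "id": 1, "result": { "content": [{"type":"text","text":"🌡 温度: 22°C"}] }}

4️⃣ MCP tool registry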

python

TOOLS_REGISTRY = {
    "tools": [
        {
            "name": "get_weather",
            "description": "...",
            "inputSchema": {
                "type": "object",
                "properties": {
                    "city": {
                        "type": "string",
                        "description": "City name, e.g. 'Hangzhou'"
                    }
                },
                "required": ["city"]
            }
        }
    ],
    "nextCursor": None
}

✔ Follows the tools/list response format described in the MCP 2025-03-26 specification.

5️⃣ Core routing logic (FastAPI)

✔ /mcp GET

python

@app.get("/mcp")

- Cherry Studio sends a GET request when it probes the server

- Here we answer it with an initialize-style response

✔ /mcp POST

python

@app.post("/mcp")

async def mcp_endpoint(request: Request):
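The POST handler is essentially a dispatch on the JSON-RPC `method` field. The sketch below mirrors that branching without FastAPI so the order of the checks is easy to follow. `route` is a hypothetical helper, and it returns a short label rather than building the real response.

```python
from typing import Any


def route(body: dict[str, Any]) -> str:
    """Return which branch of the POST handler a JSON-RPC body would take."""
    method = body.get("method")
    if method == "notifications/initialized":
        return "204 no content"            # notification: nothing to send back
    if method is None:
        return "probe"                     # activation probe without a method
    if method == "initialize":
        return "initialize result"
    if method == "tools/list":
        return "tool registry"
    if method == "tools/call":
        params = body.get("params", {})
        if params.get("name") != "get_weather":
            return "error -32602 unknown tool"
        if not params.get("arguments", {}).get("city"):
            return "error -32602 missing city"
        return "streaming response"        # only this branch streams
    return "error -32601 method not found"


if __name__ == "__main__":
    print(route({"method": "tools/call",
                 "params": {"name": "get_weather", "arguments": {"city": "Hangzhou"}}}))
```

Every branch except a valid tools/call returns a single JSON object; argument validation happens before the stream is opened, so errors never arrive mid-stream for these two cases.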

6️⃣ main() startup logic

python

def main():
    parser.add_argument("--api_key", required=True)
    uvicorn.run(app, host=..., port=...)

✔ Takes the OpenWeather API key from the command line and starts the service.

bash

uv run ./server.py --api_key YOUR_API_KEY

3. Starting and testing the HTTP streaming MCP server

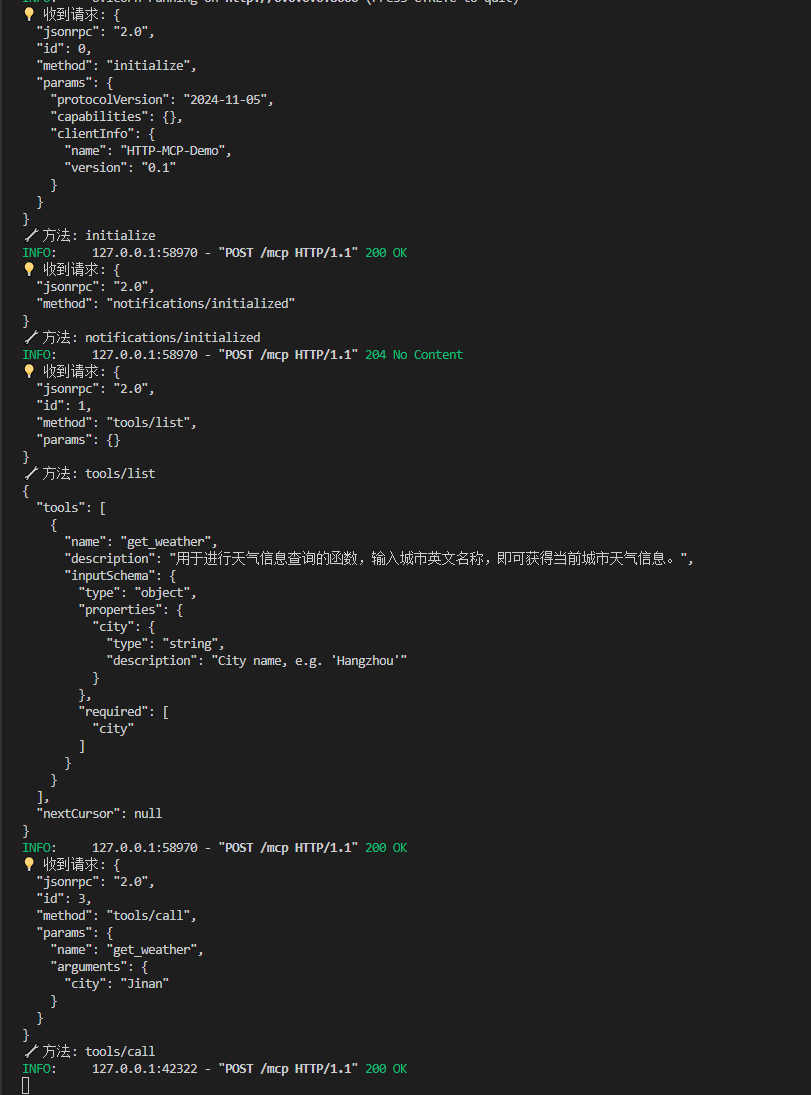

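With server.py in place, we can start the service and test it. Note that, at the time of writing, Inspector does not support testing streaming HTTP MCP servers, so we have to build a test procedure ourselves based on our understanding of the HTTP streaming protocol.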

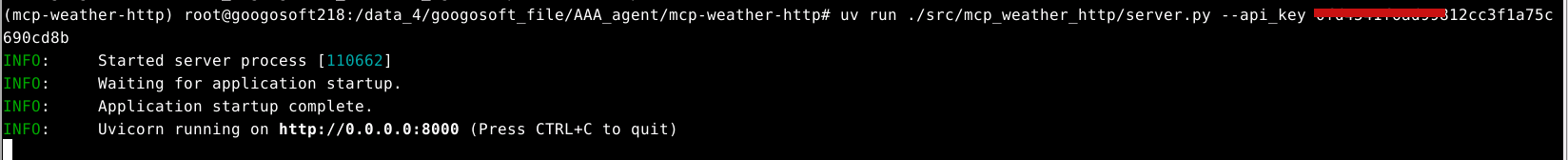

- Start the HTTP streaming server

bash

# Return to the project root
# cd /root/autodl-tmp/MCP/MCP-sse-test/mcp-weather-http
uv run ./src/mcp_weather_http/server.py --api_key YOUR_API_KEY

Next, we use four curl commands to simulate the standard exchange between an MCP client and the server.

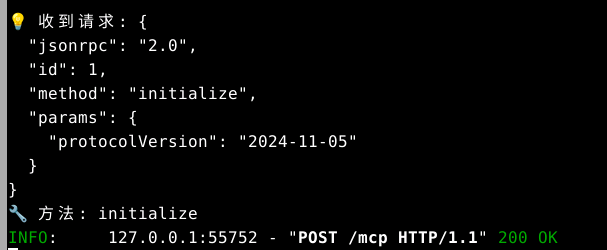

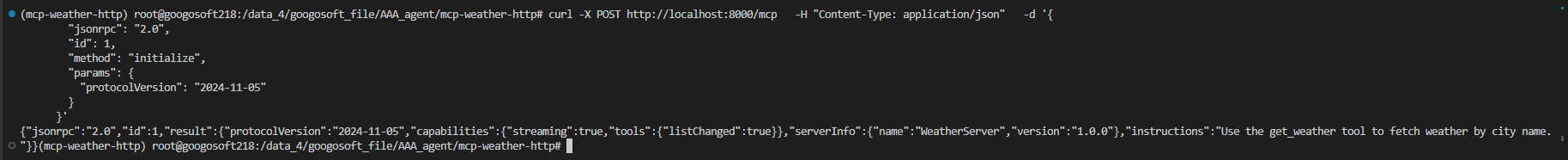

- ① initialize request (capability negotiation)

bash

curl -X POST http://localhost:8000/mcp \
  -H "Content-Type: application/json" \
  -d '{
    "jsonrpc": "2.0",
    "id": 1,
    "method": "initialize",
    "params": {
      "protocolVersion": "2024-11-05"
    }
  }'

Server side:

Client side:

Expected response: the protocol version and capabilities supported by the server:

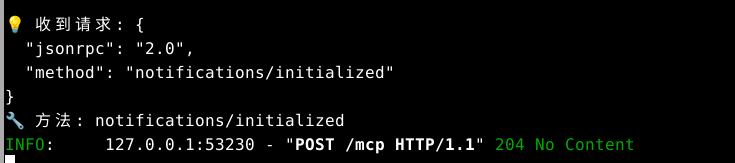

- ② notifications/initialized notification (confirms the client is ready)

bash

curl -X POST http://localhost:8000/mcp \
  -H "Content-Type: application/json" \
  -d '{
    "jsonrpc": "2.0",
    "method": "notifications/initialized"
  }'

Server side:

📥 Expected response: 204 No Content (because this is a notification):

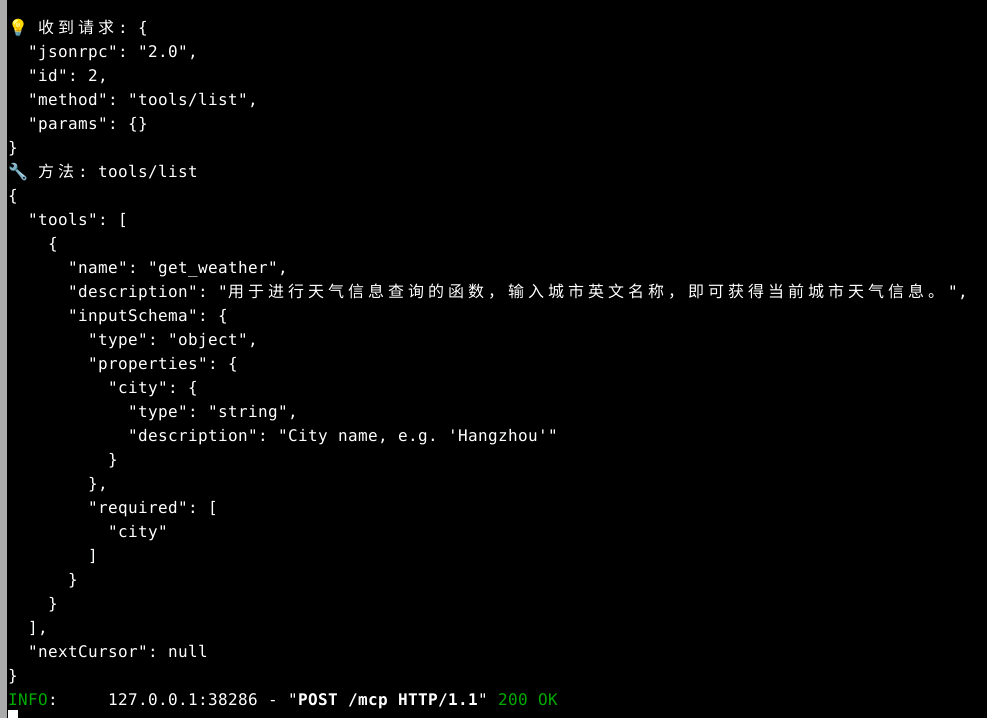

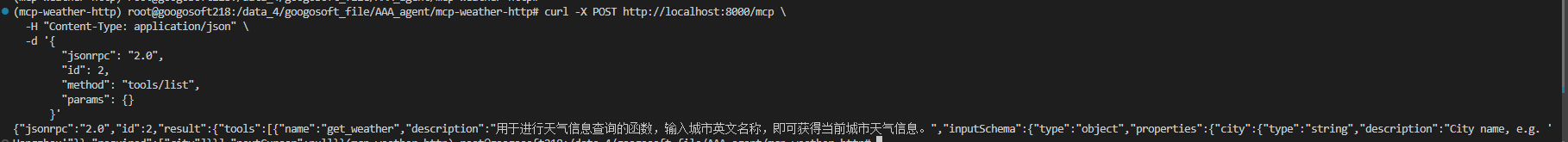
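Whether a message needs a reply follows from JSON-RPC 2.0: a request object without an `id` member is a notification, and the receiver must not answer it. A minimal check, with a hypothetical helper name:

```python
from typing import Any


def expects_response(message: dict[str, Any]) -> bool:
    """A JSON-RPC 2.0 message expects a reply only if it carries an "id"."""
    return "id" in message


if __name__ == "__main__":
    print(expects_response({"jsonrpc": "2.0", "method": "notifications/initialized"}))
    print(expects_response({"jsonrpc": "2.0", "id": 2, "method": "tools/list"}))
```

This is why step ② omits `id` while steps ①, ③, and ④ each carry one.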

- ③ tools/list request (fetch the tool registry)

bash

curl -X POST http://localhost:8000/mcp \
  -H "Content-Type: application/json" \
  -d '{
    "jsonrpc": "2.0",
    "id": 2,
    "method": "tools/list",
    "params": {}
  }'

📥 Expected response: the tool list, containing the definition and input schema of the get_weather tool:

Server side:

Client side:

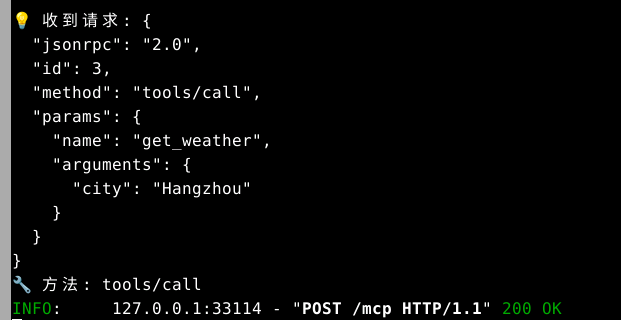

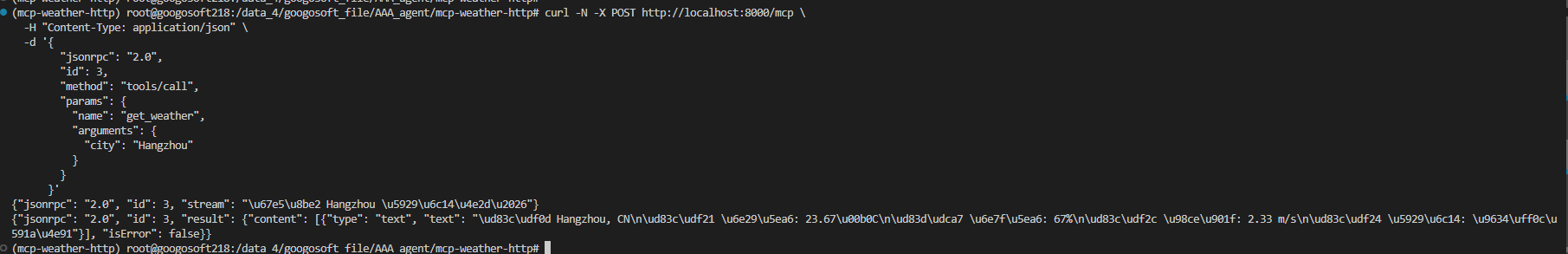

- ④ tools/call request (invoke the actual tool, streamed response)

bash

curl -N -X POST http://localhost:8000/mcp \
  -H "Content-Type: application/json" \
  -d '{
    "jsonrpc": "2.0",
    "id": 3,
    "method": "tools/call",
    "params": {
      "name": "get_weather",
      "arguments": {
        "city": "Hangzhou"
      }
    }
  }'

Server side:

Client side:

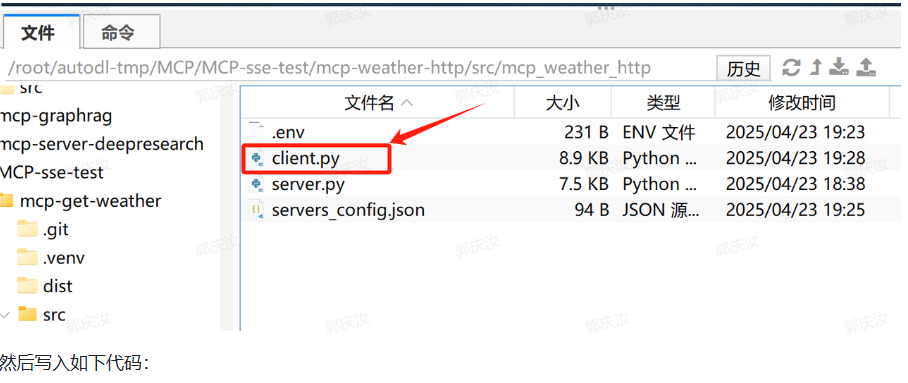
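The tools/call response arrives as one JSON object per line: first a progress chunk with a `stream` field, then a `result` (or `error`) chunk. A minimal sketch of consuming such a stream is shown below. It works on an iterable of already-decoded lines so it runs without a server; `collect_stream` is a hypothetical helper name, and the sample lines are modelled on the server code in this tutorial.

```python
import json
from typing import Iterable


def collect_stream(lines: Iterable[str]) -> tuple[list[str], str]:
    """Split a line-delimited JSON stream into progress notes and final text."""
    progress: list[str] = []
    texts: list[str] = []
    for line in lines:
        if not line.strip():
            continue                       # skip blank lines between chunks
        chunk = json.loads(line)
        if "stream" in chunk:
            progress.append(chunk["stream"])
        elif "error" in chunk:
            raise RuntimeError(chunk["error"]["message"])
        elif "result" in chunk:
            texts += [c["text"] for c in chunk["result"]["content"] if c["type"] == "text"]
    return progress, "\n".join(texts)


sample = [
    '{"jsonrpc": "2.0", "id": 3, "stream": "查询 Hangzhou 天气中..."}',
    '{"jsonrpc": "2.0", "id": 3, "result": {"content": [{"type": "text", "text": "🌡 温度: 22°C"}], "isError": false}}',
]
progress, text = collect_stream(sample)
print(progress, text)
```

With curl, the `-N` flag disables output buffering, so the progress line appears before the result instead of both being printed at once.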

4、自定义MCP客户端Client接入HTTP流式传输MCP服务器

截止目前,主流的MCP客户端都无法很好的完成HTTP流式MCP服务器的接入,因此这里为大家介绍如何从零编写MCP客户端,并按照标准流程接入HTTP流式MCP服务器。

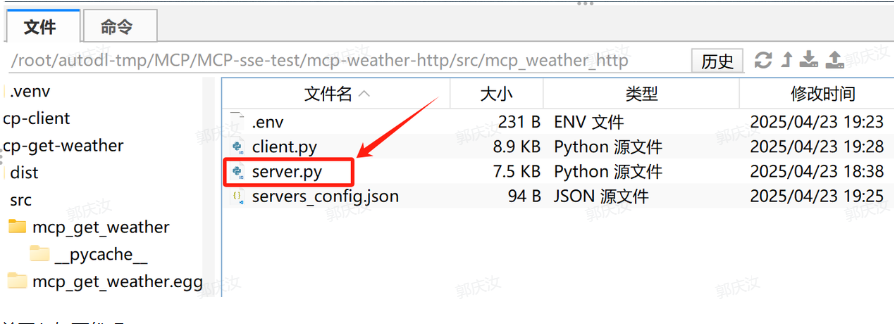

- 编写client.py脚本

python

# 回到代码文件夹

cd /root/autodl-tmp/MCP/MCP-sse-test/mcp-weather-http/src/mcp_weather_http创建client.py:

然后写入如下代码:

python

"""mcp_http_client.py -- Async MCP client (Streamable HTTP)
===========================================================
• Drops the stdio transport entirely in favour of Streamable HTTP (POST)
• Supports initialize → notifications/initialized → tools/list → tools/call
• Parses line‑delimited JSON streams (application/json)
• Keeps the interactive chat_loop, compatible with OpenAI Function Calling
"""
from __future__ import annotations

import asyncio
import json
import logging
import os
from contextlib import AsyncExitStack
from typing import Any, Dict, List, Optional

import httpx
from dotenv import load_dotenv
from openai import OpenAI

################################################################################
# Shared configuration loading
################################################################################
class Configuration:
    def __init__(self) -> None:
        load_dotenv()
        self.api_key = os.getenv("LLM_API_KEY")
        self.base_url = os.getenv("BASE_URL")
        self.model = os.getenv("MODEL", "gpt-4o")
        if not self.api_key:
            raise ValueError("❌ 未找到 LLM_API_KEY,请在 .env 文件中配置")

    @staticmethod
    def load_config(path: str) -> Dict[str, Any]:
        with open(path, "r", encoding="utf-8") as f:
            return json.load(f)

################################################################################
# HTTP‑based MCP Server wrapper
################################################################################
class HTTPMCPServer:
    """Talks to a single MCP Streamable HTTP server."""

    def __init__(self, name: str, endpoint: str) -> None:
        self.name = name
        self.endpoint = endpoint.rstrip("/")  # e.g. http://localhost:8000/mcp
        self.session: Optional[httpx.AsyncClient] = None
        self.protocol_version: str = "2024-11-05"

    async def initialize(self) -> None:
        self.session = httpx.AsyncClient(timeout=httpx.Timeout(30.0))
        # 1) initialize
        init_req = {
            "jsonrpc": "2.0",
            "id": 0,
            "method": "initialize",
            "params": {
                "protocolVersion": self.protocol_version,
                "capabilities": {},
                "clientInfo": {"name": "HTTP-MCP-Demo", "version": "0.1"},
            },
        }
        r = await self._post_json(init_req)
        if "error" in r:
            raise RuntimeError(f"Initialize error: {r['error']}")
        # 2) send initialized notification (no response expected)
        await self._post_json({"jsonrpc": "2.0", "method": "notifications/initialized"})

    async def list_tools(self) -> List[Dict[str, Any]]:
        req = {"jsonrpc": "2.0", "id": 1, "method": "tools/list", "params": {}}
        res = await self._post_json(req)
        return res["result"]["tools"]

    async def call_tool_stream(self, tool_name: str, arguments: Dict[str, Any]) -> str:
        """Call a tool and join the streamed result into one text."""
        req = {
            "jsonrpc": "2.0",
            "id": 3,
            "method": "tools/call",
            "params": {"name": tool_name, "arguments": arguments},
        }
        assert self.session is not None
        async with self.session.stream(
            "POST", self.endpoint, json=req, headers={"Accept": "application/json"}
        ) as resp:
            if resp.status_code != 200:
                raise RuntimeError(f"HTTP {resp.status_code}")
            collected_text: List[str] = []
            async for line in resp.aiter_lines():
                if not line:
                    continue
                chunk = json.loads(line)
                if "stream" in chunk:
                    continue  # intermediate progress chunk
                if "error" in chunk:
                    raise RuntimeError(chunk["error"]["message"])
                if "result" in chunk:
                    # The text lives in the result.content[] items of type "text"
                    for item in chunk["result"]["content"]:
                        if item["type"] == "text":
                            collected_text.append(item["text"])
            return "\n".join(collected_text)

    async def _post_json(self, payload: Dict[str, Any]) -> Dict[str, Any]:
        assert self.session is not None
        r = await self.session.post(self.endpoint, json=payload, headers={"Accept": "application/json"})
        if r.status_code == 204 or not r.content:
            return {}  # ← notifications have no response body
        r.raise_for_status()
        return r.json()

    async def close(self) -> None:
        if self.session:
            await self.session.aclose()
            self.session = None

################################################################################
# LLM wrapper (OpenAI Function‑Calling)
################################################################################
class LLMClient:
    def __init__(self, api_key: str, base_url: Optional[str], model: str) -> None:
        self.client = OpenAI(api_key=api_key, base_url=base_url)
        self.model = model

    def chat(self, messages: List[Dict[str, Any]], tools: Optional[List[Dict[str, Any]]]):
        return self.client.chat.completions.create(model=self.model, messages=messages, tools=tools)

################################################################################
# Multi-server MCP + LLM Function Calling
################################################################################
class MultiHTTPMCPClient:
    def __init__(self, servers_conf: Dict[str, Any], api_key: str, base_url: Optional[str], model: str) -> None:
        self.servers: Dict[str, HTTPMCPServer] = {
            name: HTTPMCPServer(name, cfg["endpoint"]) for name, cfg in servers_conf.items()
        }
        self.llm = LLMClient(api_key, base_url, model)
        self.all_tools: List[Dict[str, Any]] = []  # tools array in OpenAI function-calling format

    async def start(self):
        for srv in self.servers.values():
            await srv.initialize()
            tools = await srv.list_tools()
            for t in tools:
                # Prefix with the server name to tell servers apart
                full_name = f"{srv.name}_{t['name']}"
                self.all_tools.append({
                    "type": "function",
                    "function": {
                        "name": full_name,
                        "description": t["description"],
                        "parameters": t["inputSchema"],
                    },
                })
        logging.info("已连接服务器并汇总工具:%s", [t["function"]["name"] for t in self.all_tools])

    async def call_local_tool(self, full_name: str, args: Dict[str, Any]) -> str:
        srv_name, tool_name = full_name.split("_", 1)
        srv = self.servers[srv_name]
        # Accept either "city" or "location" as the argument name
        city = args.get("city") or args.get("location")
        if not city:
            raise ValueError("Missing city/location")
        return await srv.call_tool_stream(tool_name, {"city": city})

    async def chat_loop(self):
        print("🤖 HTTP MCP + Function Calling 客户端已启动,输入 quit 退出")
        messages: List[Dict[str, Any]] = []
        while True:
            user = input("你: ").strip()
            if user.lower() == "quit":
                break
            messages.append({"role": "user", "content": user})
            # 1st LLM call
            resp = self.llm.chat(messages, self.all_tools)
            choice = resp.choices[0]
            if choice.finish_reason == "tool_calls":
                messages.append(choice.message.model_dump())
                # Answer every tool call the model requested, not only the first,
                # so each tool_call_id gets a matching "tool" message
                for tc in choice.message.tool_calls:
                    tool_name = tc.function.name
                    tool_args = json.loads(tc.function.arguments)
                    print(f"[调用工具] {tool_name} → {tool_args}")
                    tool_resp = await self.call_local_tool(tool_name, tool_args)
                    messages.append({"role": "tool", "content": tool_resp, "tool_call_id": tc.id})
                # 2nd LLM call: let the model phrase the final answer
                resp2 = self.llm.chat(messages, self.all_tools)
                print("AI:", resp2.choices[0].message.content)
                messages.append(resp2.choices[0].message.model_dump())
            else:
                print("AI:", choice.message.content)
                messages.append(choice.message.model_dump())

    async def close(self):
        for s in self.servers.values():
            await s.close()

################################################################################

# main entry

################################################################################

async def main():

logging.basicConfig(level=logging.INFO, format="%(asctime)s - %(levelname)s - %(message)s")

conf = Configuration()

servers_conf = conf.load_config("./src/mcp_weather_http/servers_config.json").get("mcpServers", {})

client = MultiHTTPMCPClient(servers_conf, conf.api_key, conf.base_url, conf.model)

try:

await client.start()

await client.chat_loop()

finally:

await client.close()

if __name__ == "__main__":

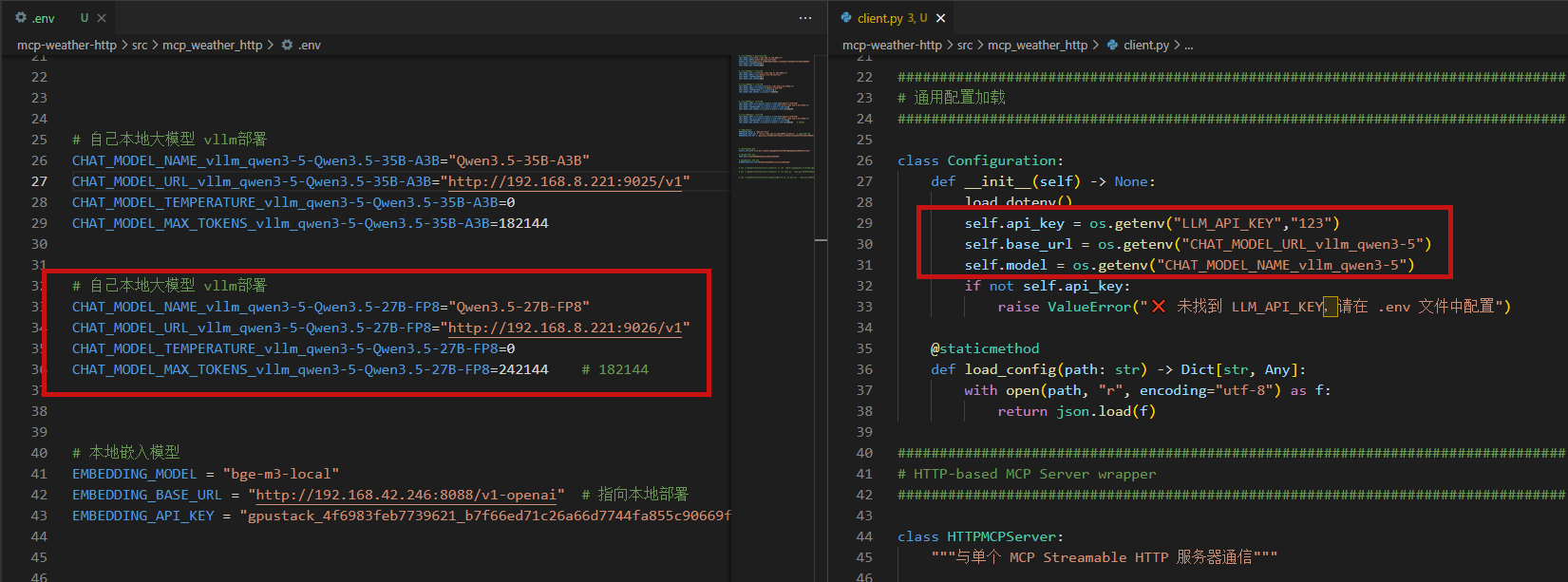

asyncio.run(main())- 创建.env和servers_config.json文件

然后在src文件夹内创建.env文件,并写入如下内容:

python

# 自己本地大模型 vllm部署

CHAT_MODEL_NAME_vllm_qwen3-5-Qwen3.5-27B-FP8="Qwen3.5-27B-FP8"

CHAT_MODEL_URL_vllm_qwen3-5-Qwen3.5-27B-FP8="http://192.168.8.221:9026/v1"

CHAT_MODEL_TEMPERATURE_vllm_qwen3-5-Qwen3.5-27B-FP8=0

CHAT_MODEL_MAX_TOKENS_vllm_qwen3-5-Qwen3.5-27B-FP8=242144 # 182144然后创建servers_config.json,用于记录HTTP流式传输服务器地址,例如:

python

{

"mcpServers": {

"weather": {

"endpoint": "http://127.0.0.1:8000/mcp"

}

}

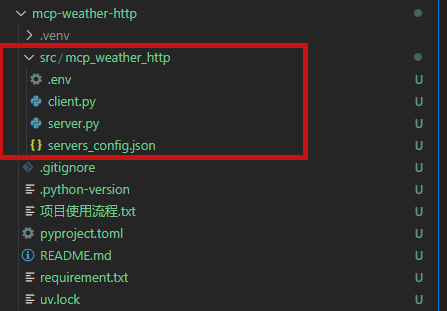

}文件结构:

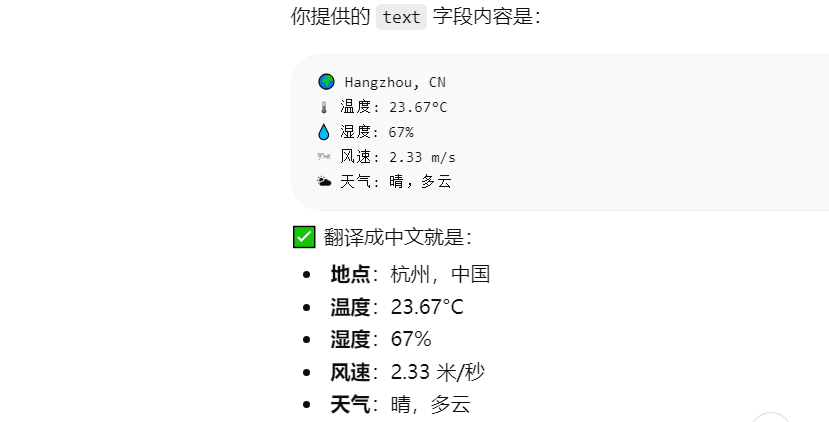

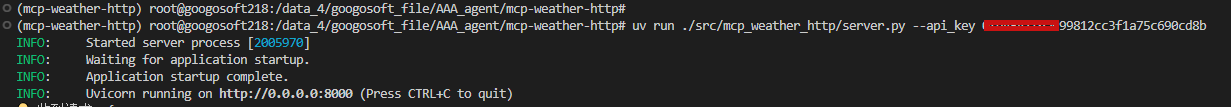

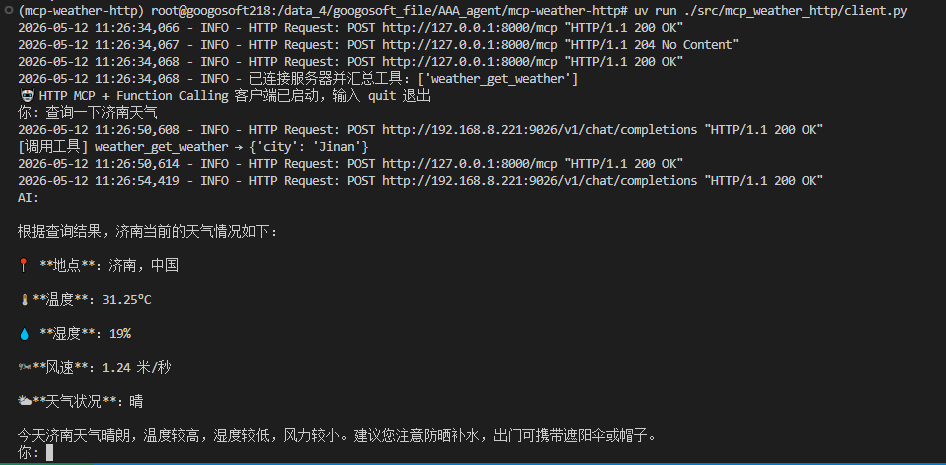
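The key under mcpServers ("weather" here) is more than a label: client.py prefixes every tool name with it before handing the tools to the LLM, and later splits the name on the first underscore to find the server again. The sketch below isolates that round trip; `to_openai_tool` and `split_tool_name` are hypothetical helper names that restate what `start()` and `call_local_tool()` do.

```python
from typing import Any


def to_openai_tool(server: str, tool: dict[str, Any]) -> dict[str, Any]:
    """Wrap an MCP tool entry as an OpenAI function-calling tool."""
    return {
        "type": "function",
        "function": {
            "name": f"{server}_{tool['name']}",   # prefix keeps names unique across servers
            "description": tool["description"],
            "parameters": tool["inputSchema"],
        },
    }


def split_tool_name(full_name: str) -> tuple[str, str]:
    """Recover (server, tool) by splitting on the first underscore."""
    server, tool = full_name.split("_", 1)
    return server, tool


mcp_tool = {
    "name": "get_weather",
    "description": "Look up the current weather for a city.",
    "inputSchema": {"type": "object",
                    "properties": {"city": {"type": "string"}},
                    "required": ["city"]},
}
wrapped = to_openai_tool("weather", mcp_tool)
print(wrapped["function"]["name"], split_tool_name(wrapped["function"]["name"]))
```

Because the split happens at the first underscore, a server key that itself contains one (for example "my_weather") would be parsed as server "my", so under this scheme the keys in servers_config.json should avoid underscores.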

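5. Using the custom client with the streaming HTTP MCP server

- Start the streaming HTTP MCP server

From the project root:

Start the server

bash

uv run ./src/mcp_weather_http/server.py --api_key YOUR_API_KEY

Start the client

bash

# Return to the project root
# cd /root/autodl-tmp/MCP/MCP-sse-test/mcp-weather-http
uv run ./src/mcp_weather_http/client.py

- Observe the behaviour on the HTTP streaming server side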