This article describes how to deploy the open-source speech recognition model sherpa-onnx-paraformer-zh-2024-03-09/model.int8.onnx on an Android phone. The steps are as follows.

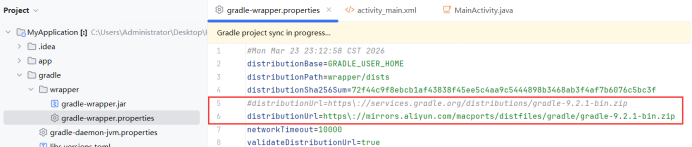

step1: Create an Android project, choose Java as the language, and switch to mirror sources.

Modify gradle/wrapper/gradle-wrapper.properties:

properties

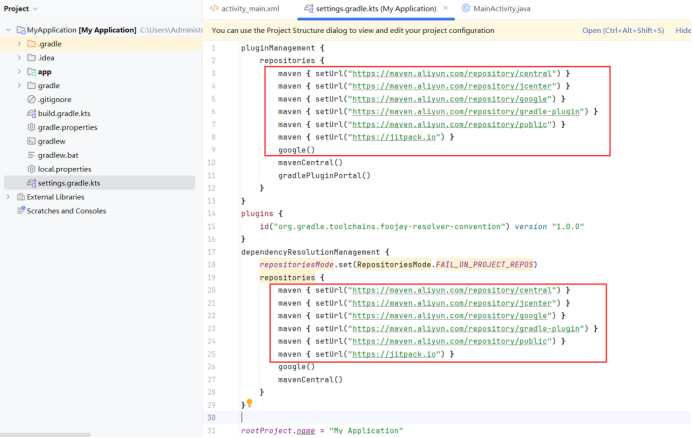

distributionUrl=https\://mirrors.aliyun.com/macports/distfiles/gradle/gradle-9.2.1-bin.zip

Modify settings.gradle.kts:

kotlin

maven { setUrl("https://maven.aliyun.com/repository/central") }

maven { setUrl("https://maven.aliyun.com/repository/jcenter") }

maven { setUrl("https://maven.aliyun.com/repository/google") }

maven { setUrl("https://maven.aliyun.com/repository/gradle-plugin") }

maven { setUrl("https://maven.aliyun.com/repository/public") }

maven { setUrl("https://jitpack.io") }

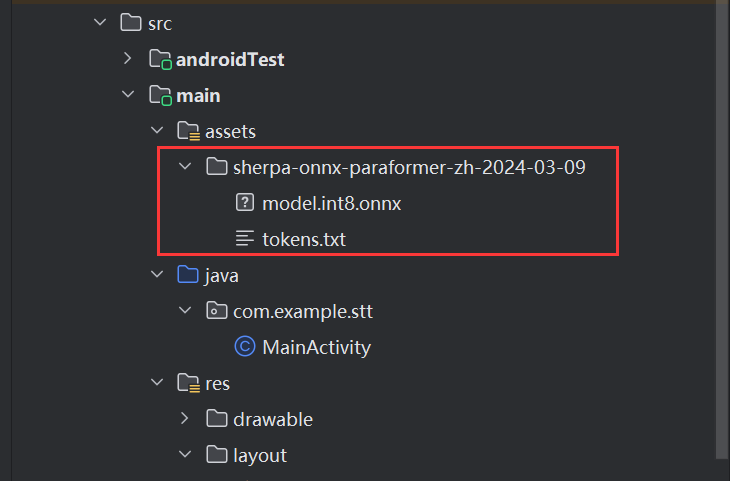

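google()

step2: Download the sherpa-onnx-paraformer-zh-2024-03-09 model and place model.int8.onnx and tokens.txt in the project's assets directory, under app/src/main/assets/sherpa-onnx-paraformer-zh-2024-03-09/.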
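Before copying the download into assets, it can help to confirm that both files are actually there. The following is a minimal sketch in plain Java, meant to run on the development machine rather than on the phone. The class name `ModelDirCheck` and the method `missingFiles` are hypothetical names made up for illustration. The two file names and the default path are the ones MainActivity expects in step 4.

```java
import java.io.IOException;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.Paths;
import java.util.ArrayList;
import java.util.List;

// Hypothetical helper, not part of the app: lists required model files
// that are absent (or empty) in a given directory.
final class ModelDirCheck {
    // File names taken from the MODEL_FILE and TOKENS_FILE constants in MainActivity.
    static final String[] REQUIRED_FILES = {"model.int8.onnx", "tokens.txt"};

    static List<String> missingFiles(Path modelDir) {
        List<String> missing = new ArrayList<>();
        for (String name : REQUIRED_FILES) {
            Path file = modelDir.resolve(name);
            try {
                // Treat a zero-byte file as missing: it usually means a failed download.
                if (!Files.isRegularFile(file) || Files.size(file) == 0) {
                    missing.add(name);
                }
            } catch (IOException e) {
                missing.add(name);
            }
        }
        return missing;
    }

    public static void main(String[] args) {
        // Default path assumes the command is run from the project root.
        String dir = args.length > 0
                ? args[0]
                : "app/src/main/assets/sherpa-onnx-paraformer-zh-2024-03-09";
        List<String> missing = missingFiles(Paths.get(dir));
        if (missing.isEmpty()) {
            System.out.println("Model directory looks complete: " + dir);
        } else {
            System.out.println("Missing in " + dir + ": " + missing);
        }
    }
}
```

If either file is reported missing, the app will show its "模型缺失" status at startup, so this check saves one build-and-install round trip.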

step3: Write the layout file activity_main.xml

XML

<?xml version="1.0" encoding="utf-8"?>

<androidx.constraintlayout.widget.ConstraintLayout xmlns:android="http://schemas.android.com/apk/res/android"

xmlns:app="http://schemas.android.com/apk/res-auto"

xmlns:tools="http://schemas.android.com/tools"

android:id="@+id/main"

android:layout_width="match_parent"

android:layout_height="match_parent"

tools:context=".MainActivity">

<androidx.core.widget.NestedScrollView

android:id="@+id/contentScroll"

android:layout_width="0dp"

android:layout_height="0dp"

android:clipToPadding="false"

android:fillViewport="true"

android:paddingStart="20dp"

android:paddingEnd="20dp"

app:layout_constraintBottom_toBottomOf="parent"

app:layout_constraintEnd_toEndOf="parent"

app:layout_constraintStart_toStartOf="parent"

app:layout_constraintTop_toTopOf="parent">

<LinearLayout

android:layout_width="match_parent"

android:layout_height="wrap_content"

android:gravity="center_horizontal"

android:orientation="vertical"

android:paddingTop="28dp"

android:paddingBottom="28dp">

<LinearLayout

android:layout_width="match_parent"

android:layout_height="wrap_content"

android:gravity="center_vertical"

android:orientation="horizontal">

<LinearLayout

android:layout_width="0dp"

android:layout_height="wrap_content"

android:layout_weight="1"

android:orientation="vertical">

<TextView

android:id="@+id/titleText"

android:layout_width="match_parent"

android:layout_height="wrap_content"

android:text="@string/main_title"

android:textSize="28sp"

android:textStyle="bold" />

<TextView

android:id="@+id/subtitleText"

android:layout_width="match_parent"

android:layout_height="wrap_content"

android:layout_marginTop="4dp"

android:text="@string/main_subtitle"

android:textSize="14sp" />

</LinearLayout>

<TextView

android:id="@+id/statusChip"

android:layout_width="wrap_content"

android:layout_height="32dp"

android:gravity="center"

android:minWidth="76dp"

android:paddingStart="14dp"

android:paddingEnd="14dp"

android:text="加载中"

android:textSize="13sp"

android:textStyle="bold" />

</LinearLayout>

<LinearLayout

android:id="@+id/modelPanel"

android:layout_width="match_parent"

android:layout_height="wrap_content"

android:layout_marginTop="22dp"

android:gravity="center_vertical"

android:orientation="horizontal"

android:padding="14dp">

<ImageView

android:layout_width="32dp"

android:layout_height="32dp"

android:contentDescription="@null"/>

<LinearLayout

android:layout_width="0dp"

android:layout_height="wrap_content"

android:layout_marginStart="12dp"

android:layout_weight="1"

android:orientation="vertical">

<TextView

android:layout_width="match_parent"

android:layout_height="wrap_content"

android:text="@string/model_label"

android:textSize="12sp" />

<TextView

android:id="@+id/modelNameText"

android:layout_width="match_parent"

android:layout_height="wrap_content"

android:layout_marginTop="2dp"

android:ellipsize="end"

android:maxLines="1"

android:text="sherpa-onnx-paraformer-zh-2024-03-09"

android:textSize="15sp"

android:textStyle="bold" />

</LinearLayout>

</LinearLayout>

<com.google.android.material.textfield.TextInputLayout

android:id="@+id/resultInputLayout"

android:layout_width="match_parent"

android:layout_height="wrap_content"

android:layout_marginTop="18dp"

android:hint="@string/result_hint"

app:boxBackgroundMode="outline"

app:boxCornerRadiusBottomEnd="8dp"

app:boxCornerRadiusBottomStart="8dp"

app:boxCornerRadiusTopEnd="8dp"

app:boxCornerRadiusTopStart="8dp">

<com.google.android.material.textfield.TextInputEditText

android:id="@+id/resultEditText"

android:layout_width="match_parent"

android:layout_height="220dp"

android:gravity="top|start"

android:inputType="textMultiLine|textCapSentences"

android:minLines="8"

android:padding="16dp"

android:scrollbars="vertical"

android:textSize="17sp" />

</com.google.android.material.textfield.TextInputLayout>

<TextView

android:id="@+id/statusMessage"

android:layout_width="match_parent"

android:layout_height="wrap_content"

android:layout_marginTop="12dp"

android:text="正在准备离线模型"

android:textSize="14sp" />

<LinearLayout

android:id="@+id/controlRow"

android:layout_width="match_parent"

android:layout_height="wrap_content"

android:layout_marginTop="22dp"

android:gravity="center_vertical"

android:orientation="horizontal">

<com.google.android.material.button.MaterialButton

android:id="@+id/recordButton"

android:layout_width="0dp"

android:layout_height="58dp"

android:layout_weight="1"

android:enabled="false"

android:textAllCaps="false"

android:textSize="17sp"

android:textStyle="bold"

app:cornerRadius="29dp"

app:iconGravity="textStart"

app:iconPadding="10dp"

app:iconTint="@color/white" />

<com.google.android.material.button.MaterialButton

android:id="@+id/clearButton"

android:layout_width="108dp"

android:layout_height="58dp"

android:layout_marginStart="12dp"

android:textAllCaps="false"

android:text="@string/clear_text"

app:cornerRadius="29dp"

app:iconGravity="textStart"

app:iconPadding="8dp"

app:strokeWidth="1dp" />

</LinearLayout>

<LinearLayout

android:layout_width="match_parent"

android:layout_height="wrap_content"

android:layout_marginTop="14dp"

android:gravity="center_vertical"

android:orientation="horizontal">

<ImageView

android:layout_width="22dp"

android:layout_height="22dp"

android:contentDescription="@null"/>

<TextView

android:id="@+id/durationText"

android:layout_width="wrap_content"

android:layout_height="wrap_content"

android:layout_marginStart="8dp"

android:text="00:00.0"

android:textSize="14sp"

android:textStyle="bold" />

</LinearLayout>

</LinearLayout>

</androidx.core.widget.NestedScrollView>

</androidx.constraintlayout.widget.ConstraintLayout>

Write the res/values/strings.xml file:

XML

<resources>

<string name="app_name">离线语音输入</string>

<string name="main_title">离线语音输入</string>

<string name="main_subtitle">Sherpa-ONNX + Paraformer-zh-large-int8</string>

<string name="result_hint">识别结果会显示在这里,也可以继续手动编辑</string>

<string name="record_idle">按住说话</string>

<string name="record_active">松开识别</string>

<string name="record_busy">识别中...</string>

<string name="clear_text">清空</string>

<string name="model_label">离线模型</string>

<string name="hold_hint">按住按钮开始录音,松开后自动识别并写入文本框</string>

</resources>

step4: Write the Java program MainActivity.java
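Before the full listing, one pattern in it may be easier to follow in isolation: `copyAssetFile` writes each asset to a `.tmp` file and only then renames it to the final name, so an interrupted copy never leaves a half-written model in place. The sketch below shows the same idea in plain Java that runs off-device. It is an illustration under assumptions, not the app's code: the class name `TempThenRenameCopy` is hypothetical, and it uses `java.nio.file.Files.move` where the listing uses `File.renameTo`.

```java
import java.io.ByteArrayInputStream;
import java.io.IOException;
import java.io.InputStream;
import java.io.OutputStream;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.StandardCopyOption;

// Hypothetical stand-alone version of the write-to-temp-then-rename idea.
final class TempThenRenameCopy {

    static void copy(InputStream input, Path target) throws IOException {
        // Write next to the target so the final move stays on one filesystem.
        Path temp = target.resolveSibling(target.getFileName() + ".tmp");
        try (OutputStream output = Files.newOutputStream(temp)) {
            byte[] buffer = new byte[64 * 1024];
            int read;
            while ((read = input.read(buffer)) != -1) {
                output.write(buffer, 0, read);
            }
        }
        // Only a fully written file ever appears under the final name.
        Files.move(temp, target, StandardCopyOption.REPLACE_EXISTING);
    }

    public static void main(String[] args) throws IOException {
        Path dir = Files.createTempDirectory("model-copy");
        Path target = dir.resolve("tokens.txt");
        copy(new ByteArrayInputStream("demo tokens".getBytes()), target);
        System.out.println("Copied " + Files.size(target) + " bytes to " + target);
    }
}
```

The listing adds a `.ready` marker file on top of this, so that later launches can skip the copy once both files have been written.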

java

package com.example.stt;

import android.Manifest;

import android.annotation.SuppressLint;

import android.content.pm.PackageManager;

import android.content.res.ColorStateList;

import android.media.AudioFormat;

import android.media.AudioRecord;

import android.media.MediaRecorder;

import android.os.Bundle;

import android.os.Handler;

import android.os.Looper;

import android.os.SystemClock;

import android.text.Editable;

import android.util.Log;

import android.view.MotionEvent;

import android.widget.TextView;

import android.widget.Toast;

import androidx.activity.EdgeToEdge;

import androidx.appcompat.app.AppCompatActivity;

import androidx.core.app.ActivityCompat;

import androidx.core.content.ContextCompat;

import androidx.core.graphics.Insets;

import androidx.core.view.ViewCompat;

import androidx.core.view.WindowInsetsCompat;

import com.google.android.material.button.MaterialButton;

import com.google.android.material.textfield.TextInputEditText;

import com.k2fsa.sherpa.onnx.OfflineModelConfig;

import com.k2fsa.sherpa.onnx.OfflineParaformerModelConfig;

import com.k2fsa.sherpa.onnx.OfflineRecognizer;

import com.k2fsa.sherpa.onnx.OfflineRecognizerConfig;

import com.k2fsa.sherpa.onnx.OfflineStream;

import java.io.File;

import java.io.FileOutputStream;

import java.io.IOException;

import java.io.InputStream;

import java.io.OutputStream;

import java.util.ArrayList;

import java.util.List;

import java.util.Locale;

import java.util.concurrent.ExecutorService;

import java.util.concurrent.Executors;

public class MainActivity extends AppCompatActivity {

private static final String TAG = "STT_demo";

private static final int REQUEST_RECORD_AUDIO_PERMISSION = 200;

private static final int SAMPLE_RATE = 16000;

private static final int CHANNEL_CONFIG = AudioFormat.CHANNEL_IN_MONO;

private static final int AUDIO_FORMAT = AudioFormat.ENCODING_PCM_16BIT;

private static final long MAX_RECORD_MILLIS = 60_000L;

private static final String MODEL_DIR = "sherpa-onnx-paraformer-zh-2024-03-09";

private static final String MODEL_FILE = "model.int8.onnx";

private static final String TOKENS_FILE = "tokens.txt";

private enum StatusStyle {

LOADING,

READY,

RECORDING,

ERROR

}

private final Handler mainHandler = new Handler(Looper.getMainLooper());

private final ExecutorService modelExecutor = Executors.newSingleThreadExecutor();

private final ExecutorService recognitionExecutor = Executors.newSingleThreadExecutor();

private final Object samplesLock = new Object();

private final List<float[]> sampleChunks = new ArrayList<>();

private TextView statusChip;

private TextView statusMessage;

private TextView durationText;

private TextInputEditText resultEditText;

private MaterialButton recordButton;

private MaterialButton clearButton;

private OfflineRecognizer recognizer;

private AudioRecord audioRecord;

private Thread recordingThread;

private int totalSamples = 0;

private long recordingStartedAtMillis = 0L;

private volatile boolean hasModelAssets = false;

private volatile boolean isModelReady = false;

private volatile boolean isModelLoading = false;

private volatile boolean isRecording = false;

private volatile boolean isRecognizing = false;

private volatile boolean isDestroyed = false;

private final Runnable durationTicker = new Runnable() {

@Override

public void run() {

if (!isRecording) {

return;

}

long elapsed = SystemClock.elapsedRealtime() - recordingStartedAtMillis;

durationText.setText(formatDuration(elapsed));

if (elapsed >= MAX_RECORD_MILLIS) {

stopRecordingAndRecognize();

return;

}

mainHandler.postDelayed(this, 100L);

}

};

@Override

protected void onCreate(Bundle savedInstanceState) {

super.onCreate(savedInstanceState);

EdgeToEdge.enable(this);

setContentView(R.layout.activity_main);

ViewCompat.setOnApplyWindowInsetsListener(findViewById(R.id.main), (v, insets) -> {

Insets systemBars = insets.getInsets(WindowInsetsCompat.Type.systemBars());

v.setPadding(systemBars.left, systemBars.top, systemBars.right, systemBars.bottom);

return insets;

});

bindViews();

wireControls();

requestAudioPermissionIfNeeded();

checkModelAssets();

}

private void bindViews() {

statusChip = findViewById(R.id.statusChip);

statusMessage = findViewById(R.id.statusMessage);

durationText = findViewById(R.id.durationText);

resultEditText = findViewById(R.id.resultEditText);

recordButton = findViewById(R.id.recordButton);

clearButton = findViewById(R.id.clearButton);

setStatus(StatusStyle.LOADING, "检查中", "正在检查离线模型文件");

updateRecordButtonAvailability();

}

@SuppressLint("ClickableViewAccessibility")

private void wireControls() {

clearButton.setOnClickListener(v -> {

resultEditText.setText("");

durationText.setText(formatDuration(0));

});

recordButton.setOnClickListener(v -> {

// Touch events handle press and release; this keeps accessibility click routing valid.

});

recordButton.setOnTouchListener((v, event) -> {

switch (event.getActionMasked()) {

case MotionEvent.ACTION_DOWN:

if (canStartRecording()) {

startRecording();

}

return true;

case MotionEvent.ACTION_UP:

v.performClick();

if (isRecording) {

stopRecordingAndRecognize();

}

return true;

case MotionEvent.ACTION_CANCEL:

if (isRecording) {

stopRecordingAndRecognize();

}

return true;

default:

return true;

}

});

}

private void requestAudioPermissionIfNeeded() {

if (!hasRecordPermission()) {

ActivityCompat.requestPermissions(

this,

new String[]{Manifest.permission.RECORD_AUDIO},

REQUEST_RECORD_AUDIO_PERMISSION

);

}

}

@Override

public void onRequestPermissionsResult(int requestCode, String[] permissions, int[] grantResults) {

super.onRequestPermissionsResult(requestCode, permissions, grantResults);

if (requestCode != REQUEST_RECORD_AUDIO_PERMISSION) {

return;

}

boolean granted = grantResults.length > 0 && grantResults[0] == PackageManager.PERMISSION_GRANTED;

if (!granted) {

setStatus(StatusStyle.ERROR, "无权限", "需要麦克风权限才能录音");

} else if (isModelReady) {

setStatus(StatusStyle.READY, "已就绪", "按住按钮开始录音");

} else if (hasModelAssets) {

setStatus(StatusStyle.READY, "待准备", "首次使用会加载离线模型");

}

updateRecordButtonAvailability();

}

private boolean hasRecordPermission() {

return ContextCompat.checkSelfPermission(this, Manifest.permission.RECORD_AUDIO)

== PackageManager.PERMISSION_GRANTED;

}

private void checkModelAssets() {

try {

ensureModelAssets();

hasModelAssets = true;

if (hasRecordPermission()) {

setStatus(StatusStyle.READY, "待准备", "首次使用会加载离线模型");

} else {

setStatus(StatusStyle.ERROR, "无权限", "需要麦克风权限才能录音");

}

} catch (IOException e) {

hasModelAssets = false;

setStatus(StatusStyle.ERROR, "模型缺失", e.getMessage());

}

updateRecordButtonAvailability();

}

private void prepareRecognizerAsync() {

if (isModelLoading || isModelReady) {

return;

}

isModelLoading = true;

setStatus(StatusStyle.LOADING, "加载中", "正在准备离线模型,首次加载会比较久");

updateRecordButtonAvailability();

modelExecutor.execute(() -> {

try {

Log.i(TAG, "Start initializing Sherpa-ONNX offline recognizer");

OfflineRecognizer preparedRecognizer = createRecognizer();

if (isDestroyed) {

preparedRecognizer.release();

return;

}

recognizer = preparedRecognizer;

isModelReady = true;

isModelLoading = false;

Log.i(TAG, "Finished initializing Sherpa-ONNX offline recognizer");

runOnUiThread(() -> {

setStatus(StatusStyle.READY, "已就绪", "模型已加载,请按住按钮开始录音");

updateRecordButtonAvailability();

});

} catch (Throwable e) {

Log.e(TAG, "Failed to initialize Sherpa-ONNX", e);

isModelReady = false;

isModelLoading = false;

runOnUiThread(() -> {

setStatus(StatusStyle.ERROR, "加载失败", e.getMessage());

updateRecordButtonAvailability();

});

}

});

}

private OfflineRecognizer createRecognizer() throws IOException {

File modelDir = ensureModelFiles();

String modelPath = new File(modelDir, MODEL_FILE).getAbsolutePath();

String tokensPath = new File(modelDir, TOKENS_FILE).getAbsolutePath();

OfflineParaformerModelConfig paraformer = new OfflineParaformerModelConfig();

paraformer.setModel(modelPath);

OfflineModelConfig modelConfig = new OfflineModelConfig();

modelConfig.setParaformer(paraformer);

modelConfig.setTokens(tokensPath);

modelConfig.setNumThreads(Math.max(1, Math.min(2, Runtime.getRuntime().availableProcessors())));

modelConfig.setDebug(false);

OfflineRecognizerConfig config = new OfflineRecognizerConfig();

config.setModelConfig(modelConfig);

config.setDecodingMethod("greedy_search");

return new OfflineRecognizer(null, config);

}

private void ensureModelAssets() throws IOException {

String[] assets = getAssets().list(MODEL_DIR);

boolean hasModel = false;

boolean hasTokens = false;

if (assets != null) {

for (String asset : assets) {

if (MODEL_FILE.equals(asset)) {

hasModel = true;

} else if (TOKENS_FILE.equals(asset)) {

hasTokens = true;

}

}

}

if (!hasModel || !hasTokens) {

throw new IOException("请将 " + MODEL_FILE + " 和 " + TOKENS_FILE

+ " 放入 app/src/main/assets/" + MODEL_DIR);

}

}

private File ensureModelFiles() throws IOException {

ensureModelAssets();

File modelDir = new File(getFilesDir(), MODEL_DIR);

File marker = new File(modelDir, ".ready");

File modelFile = new File(modelDir, MODEL_FILE);

File tokensFile = new File(modelDir, TOKENS_FILE);

if (marker.exists() && modelFile.exists() && modelFile.length() > 0

&& tokensFile.exists() && tokensFile.length() > 0) {

return modelDir;

}

if (!modelDir.exists() && !modelDir.mkdirs()) {

throw new IOException("无法创建模型目录: " + modelDir.getAbsolutePath());

}

Log.i(TAG, "Copy model assets to " + modelDir.getAbsolutePath());

copyAssetFile(MODEL_DIR + "/" + MODEL_FILE, modelFile);

copyAssetFile(MODEL_DIR + "/" + TOKENS_FILE, tokensFile);

if (!marker.exists() && !marker.createNewFile()) {

throw new IOException("无法写入模型完成标记");

}

return modelDir;

}

private void copyAssetFile(String assetPath, File outputFile) throws IOException {

File tempFile = new File(outputFile.getAbsolutePath() + ".tmp");

try (InputStream input = getAssets().open(assetPath);

OutputStream output = new FileOutputStream(tempFile)) {

byte[] buffer = new byte[1024 * 1024];

int read;

while ((read = input.read(buffer)) != -1) {

output.write(buffer, 0, read);

}

}

if (outputFile.exists() && !outputFile.delete()) {

throw new IOException("无法替换旧模型文件: " + outputFile.getAbsolutePath());

}

if (!tempFile.renameTo(outputFile)) {

throw new IOException("无法写入模型文件: " + outputFile.getAbsolutePath());

}

}

private boolean canStartRecording() {

if (isRecording || isRecognizing) {

return false;

}

if (isModelLoading) {

return false;

}

if (!hasRecordPermission()) {

requestAudioPermissionIfNeeded();

return false;

}

if (!hasModelAssets) {

setStatus(StatusStyle.ERROR, "模型缺失", "请检查 assets 中的模型文件");

return false;

}

if (!isModelReady || recognizer == null) {

Toast.makeText(this, "正在加载模型,完成后再按住说话", Toast.LENGTH_SHORT).show();

prepareRecognizerAsync();

return false;

}

return true;

}

@SuppressLint("MissingPermission")

private void startRecording() {

try {

int minBufferSize = AudioRecord.getMinBufferSize(SAMPLE_RATE, CHANNEL_CONFIG, AUDIO_FORMAT);

if (minBufferSize <= 0) {

throw new IllegalStateException("无法初始化麦克风缓冲区");

}

AudioRecord recorder = new AudioRecord(

MediaRecorder.AudioSource.VOICE_RECOGNITION,

SAMPLE_RATE,

CHANNEL_CONFIG,

AUDIO_FORMAT,

minBufferSize * 2

);

if (recorder.getState() != AudioRecord.STATE_INITIALIZED) {

recorder.release();

throw new IllegalStateException("麦克风初始化失败");

}

synchronized (samplesLock) {

sampleChunks.clear();

totalSamples = 0;

}

audioRecord = recorder;

isRecording = true;

recordingStartedAtMillis = SystemClock.elapsedRealtime();

recorder.startRecording();

recordingThread = new Thread(() -> readMicrophone(recorder), "stt-recorder");

recordingThread.start();

setRecordingUi();

mainHandler.post(durationTicker);

} catch (Exception e) {

Log.e(TAG, "Failed to start recording", e);

isRecording = false;

setStatus(StatusStyle.ERROR, "录音失败", e.getMessage());

updateRecordButtonAvailability();

}

}

private void readMicrophone(AudioRecord recorder) {

int bufferSize = Math.max(1024, SAMPLE_RATE / 10);

short[] buffer = new short[bufferSize];

try {

while (isRecording) {

int read = recorder.read(buffer, 0, buffer.length);

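if (read < 0) {

// A negative value is an AudioRecord error code; stop instead of spinning in a busy loop.

Log.e(TAG, "AudioRecord.read failed with code " + read);

break;

}

if (read == 0) {

continue;

}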

float[] samples = new float[read];

for (int i = 0; i < read; i++) {

samples[i] = buffer[i] / 32768.0f;

}

synchronized (samplesLock) {

sampleChunks.add(samples);

totalSamples += read;

}

}

} catch (Exception e) {

Log.e(TAG, "Microphone read failed", e);

} finally {

try {

recorder.stop();

} catch (Exception ignored) {

// Recorder may already be stopped by the release action.

}

recorder.release();

if (audioRecord == recorder) {

audioRecord = null;

}

}

}

private void stopRecordingAndRecognize() {

if (!isRecording) {

return;

}

isRecording = false;

mainHandler.removeCallbacks(durationTicker);

AudioRecord recorder = audioRecord;

if (recorder != null) {

try {

recorder.stop();

} catch (Exception ignored) {

// The recording thread owns final release.

}

}

setRecognizingUi();

Thread threadToJoin = recordingThread;

recognitionExecutor.execute(() -> {

joinRecordingThread(threadToJoin);

float[] samples = drainSamples();

if (samples.length < SAMPLE_RATE / 4) {

runOnUiThread(() -> {

isRecognizing = false;

setStatus(StatusStyle.READY, "已就绪", "录音太短,请再试一次");

updateRecordButtonAvailability();

});

return;

}

recognizeSamples(samples);

});

}

private void joinRecordingThread(Thread thread) {

if (thread == null) {

return;

}

try {

thread.join(1200L);

} catch (InterruptedException e) {

Thread.currentThread().interrupt();

}

}

private float[] drainSamples() {

synchronized (samplesLock) {

float[] merged = new float[totalSamples];

int offset = 0;

for (float[] chunk : sampleChunks) {

System.arraycopy(chunk, 0, merged, offset, chunk.length);

offset += chunk.length;

}

sampleChunks.clear();

totalSamples = 0;

return merged;

}

}

private void recognizeSamples(float[] samples) {

OfflineStream stream = null;

try {

OfflineRecognizer currentRecognizer = recognizer;

if (currentRecognizer == null) {

throw new IllegalStateException("识别器尚未初始化");

}

stream = currentRecognizer.createStream();

stream.acceptWaveform(samples, SAMPLE_RATE);

currentRecognizer.decode(stream);

String text = currentRecognizer.getResult(stream).getText().trim();

runOnUiThread(() -> {

isRecognizing = false;

if (text.isEmpty()) {

setStatus(StatusStyle.READY, "已就绪", "未识别到有效语音");

} else {

insertRecognizedText(text);

setStatus(StatusStyle.READY, "已写入", "识别完成");

}

updateRecordButtonAvailability();

});

} catch (Exception e) {

Log.e(TAG, "Recognition failed", e);

runOnUiThread(() -> {

isRecognizing = false;

setStatus(StatusStyle.ERROR, "识别失败", e.getMessage());

updateRecordButtonAvailability();

});

} finally {

if (stream != null) {

stream.release();

}

}

}

private void insertRecognizedText(String text) {

Editable editable = resultEditText.getText();

if (editable == null) {

resultEditText.setText(text);

resultEditText.setSelection(text.length());

return;

}

int selectionStart = resultEditText.getSelectionStart();

int selectionEnd = resultEditText.getSelectionEnd();

if (selectionStart < 0 || selectionEnd < 0) {

editable.append(text);

resultEditText.setSelection(editable.length());

return;

}

int start = Math.min(selectionStart, selectionEnd);

int end = Math.max(selectionStart, selectionEnd);

editable.replace(start, end, text);

resultEditText.setSelection(start + text.length());

}

private void setRecordingUi() {

recordButton.setEnabled(true);

recordButton.setText(R.string.record_active);

setStatus(StatusStyle.RECORDING, "录音中", "正在收音");

}

private void setRecognizingUi() {

isRecognizing = true;

recordButton.setEnabled(false);

recordButton.setText(R.string.record_busy);

setStatus(StatusStyle.LOADING, "识别中", "正在离线识别");

}

private void updateRecordButtonAvailability() {

boolean enabled = hasModelAssets && hasRecordPermission()

&& !isRecording && !isRecognizing && !isModelLoading;

recordButton.setEnabled(enabled);

if (isModelLoading) {

recordButton.setText("加载模型中");

} else if (!isModelReady) {

recordButton.setText("加载模型");

} else {

recordButton.setText(R.string.record_idle);

}

}

private void setStatus(StatusStyle style, String chipText, String message) {

statusChip.setText(chipText == null ? "" : chipText);

statusMessage.setText(message == null ? "" : message);

switch (style) {

case READY:

break;

case RECORDING:

break;

case ERROR:

break;

case LOADING:

default:

break;

}

}

private ColorStateList colorStateList(int colorRes) {

return ColorStateList.valueOf(color(colorRes));

}

private int color(int colorRes) {

return ContextCompat.getColor(this, colorRes);

}

private String formatDuration(long millis) {

long totalTenths = millis / 100L;

long minutes = totalTenths / 600L;

long seconds = (totalTenths / 10L) % 60L;

long tenths = totalTenths % 10L;

return String.format(Locale.getDefault(), "%02d:%02d.%d", minutes, seconds, tenths);

}

@Override

protected void onDestroy() {

isDestroyed = true;

isRecording = false;

mainHandler.removeCallbacks(durationTicker);

AudioRecord recorder = audioRecord;

if (recorder != null) {

try {

recorder.stop();

} catch (Exception ignored) {

// The recording thread owns final release.

}

}

if (recognizer != null) {

recognizer.release();

recognizer = null;

}

modelExecutor.shutdownNow();

recognitionExecutor.shutdownNow();

super.onDestroy();

}

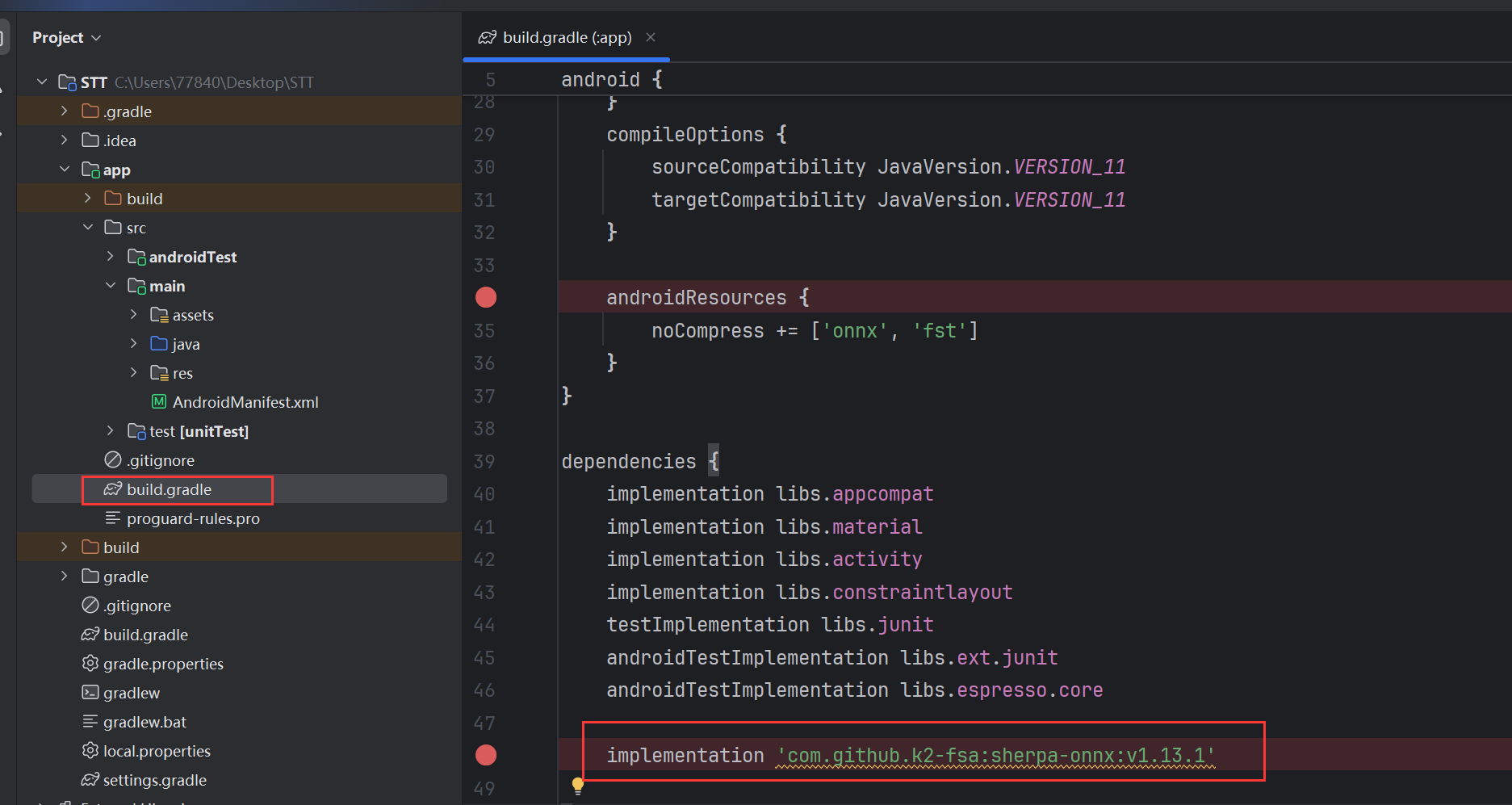

}step5: 编辑app/build.gradle文件添加依赖

groovy

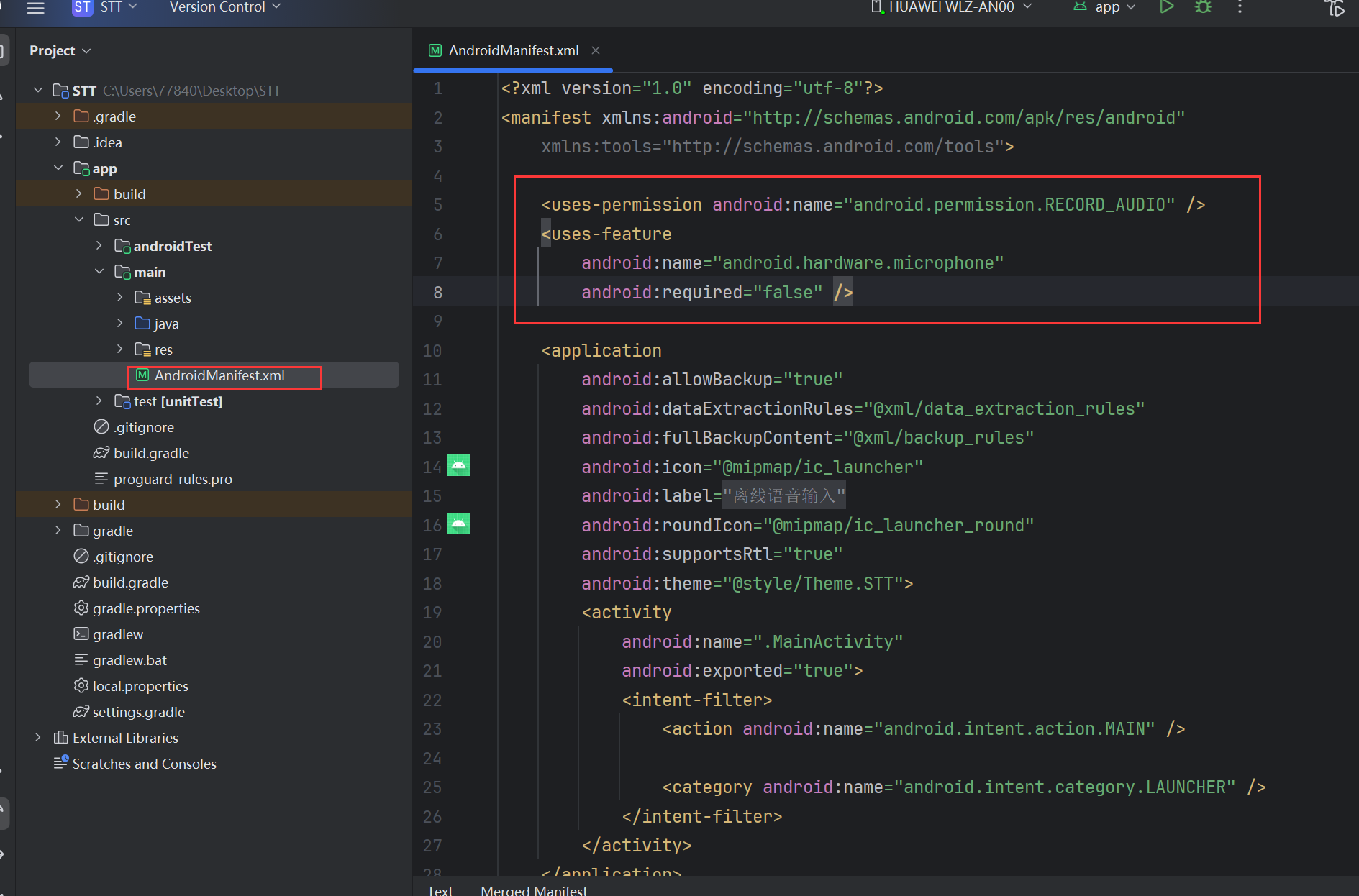

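implementation 'com.github.k2-fsa:sherpa-onnx:v1.13.1'

If the module uses the Kotlin DSL (app/build.gradle.kts), the same dependency is written as implementation("com.github.k2-fsa:sherpa-onnx:v1.13.1").

step6: Edit AndroidManifest.xml to add the permission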

XML

<uses-permission android:name="android.permission.RECORD_AUDIO" />

<uses-feature

android:name="android.hardware.microphone"

android:required="false" />

step7: Test
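Part of the testing can be done without a device. The sketch below repeats, in plain Java, the two pieces of arithmetic that feed the recognizer: the 16-bit PCM to float conversion from `readMicrophone` and the chunk merging from `drainSamples`. The class name `PcmSketch` and its method names are hypothetical, chosen only for this illustration. The divisor 32768 is the one used in the listing.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical off-device mirror of the sample handling in MainActivity.
final class PcmSketch {

    // Scale the first `count` 16-bit samples into floats in [-1.0, 1.0).
    static float[] toFloat(short[] pcm, int count) {
        float[] out = new float[count];
        for (int i = 0; i < count; i++) {
            out[i] = pcm[i] / 32768.0f;
        }
        return out;
    }

    // Concatenate recorded chunks into one array, in recording order.
    static float[] merge(List<float[]> chunks) {
        int total = 0;
        for (float[] chunk : chunks) {
            total += chunk.length;
        }
        float[] merged = new float[total];
        int offset = 0;
        for (float[] chunk : chunks) {
            System.arraycopy(chunk, 0, merged, offset, chunk.length);
            offset += chunk.length;
        }
        return merged;
    }

    public static void main(String[] args) {
        List<float[]> chunks = new ArrayList<>();
        chunks.add(toFloat(new short[]{0, 16384}, 2));
        chunks.add(toFloat(new short[]{-32768, 32767}, 2));
        float[] merged = merge(chunks);
        System.out.println("Merged " + merged.length + " samples");
    }
}
```

On the device itself, the main things to check are that the microphone permission prompt appears, that the first press loads the model, and that holding and releasing the button writes text into the box.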