Lab: Deploying Ceph with Rook on K8S 1.28.2

Original article: Lab: Deploying Ceph with Rook on K8S 1.28.2 | 严千屹 (Yan Qianyi) blog

Rook supports Kubernetes v1.22 or later.

Rook version: 1.12.8

K8S version: 1.28.2

The Ceph release deployed here is Quincy (v17.2.6).

| Hostname | IP (NAT) | OS | New disk | Disk | Memory |
|---------|----------------|-----------|------|------|----|
| master1 | 192.168.48.101 | CentOS 7.9 | 100G | 100G | 4G |
| master2 | 192.168.48.102 | CentOS 7.9 | | 100G | 4G |
| master3 | 192.168.48.103 | CentOS 7.9 | | 100G | 4G |
| node01 | 192.168.48.104 | CentOS 7.9 | 100G | 100G | 6G |
| node02 | 192.168.48.105 | CentOS 7.9 | 100G | 100G | 6G |

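This lab uses five machines. A three-node Ceph cluster should normally run on three worker nodes, but to keep testing simple it is deployed here on master1, node01, and node02, so each of these three machines needs one additional physical disk.

Note: before you start, check whether the taint on the master node has been removed.

All of the following operations are performed on the master node.

Preparation

Clone the repository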


git clone --single-branch --branch v1.12.8 https://github.com/rook/rook.git

cd rook/deploy/examples

Check the required images

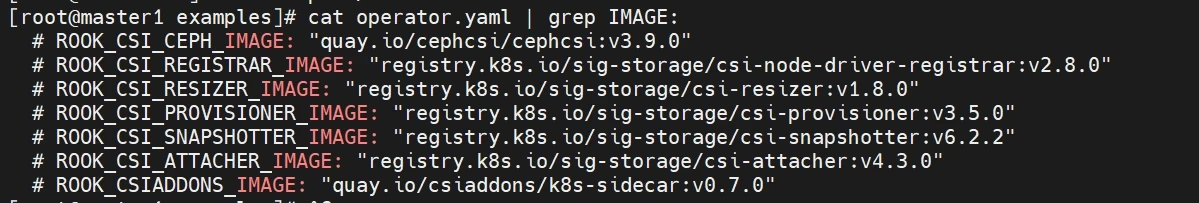

[root@master1 examples]# cat operator.yaml | grep IMAGE:

# ROOK_CSI_CEPH_IMAGE: "quay.io/cephcsi/cephcsi:v3.9.0"

# ROOK_CSI_REGISTRAR_IMAGE: "registry.k8s.io/sig-storage/csi-node-driver-registrar:v2.8.0"

# ROOK_CSI_RESIZER_IMAGE: "registry.k8s.io/sig-storage/csi-resizer:v1.8.0"

# ROOK_CSI_PROVISIONER_IMAGE: "registry.k8s.io/sig-storage/csi-provisioner:v3.5.0"

# ROOK_CSI_SNAPSHOTTER_IMAGE: "registry.k8s.io/sig-storage/csi-snapshotter:v6.2.2"

# ROOK_CSI_ATTACHER_IMAGE: "registry.k8s.io/sig-storage/csi-attacher:v4.3.0"

# ROOK_CSIADDONS_IMAGE: "quay.io/csiaddons/k8s-sidecar:v0.7.0"

[root@master1 examples]# cat operator.yaml | grep image:

image: rook/ceph:v1.12.8

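Almost all of these images are hosted on registries outside China, so here they are replaced with copies in an image repository built with Aliyun + GitHub (the commands below point at my own privately built images).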
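The per-image sed commands in this section all follow one pattern: uncomment the variable, keep the final name:tag, and swap the registry prefix. As a rough sketch, assuming a sed that supports -E, the same rewrite could be expressed once. The function name rewrite_csi_images and the registry registry.example.com/myns are placeholders for illustration, not part of Rook or of the original article.

```shell
# Sketch: rewrite commented-out ROOK_CSI*_IMAGE entries to a mirror registry.
# Reads operator.yaml-style text on stdin and writes the result to stdout.
# $1 is the mirror prefix (hypothetical value; it must not contain '@' or '&').
rewrite_csi_images() {
  mirror=$1
  # Group 1: indentation, group 2: variable name, group 3: final "name:tag".
  sed -E 's@^([[:space:]]*)# (ROOK_CSI[A-Z_]*_IMAGE): "[^"]*/([^/"]+)"@\1\2: "'"$mirror"'/\3"@'
}
```

A possible use would be `rewrite_csi_images registry.example.com/myns < operator.yaml > operator.mirror.yaml`, followed by a diff against the original before applying anything. Lines that do not match the pattern pass through unchanged.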


sed -i 's/# ROOK_CSI_CEPH_IMAGE: "quay.io\/cephcsi\/cephcsi:v3.9.0"/ROOK_CSI_CEPH_IMAGE: "registry.cn-hangzhou.aliyuncs.com\/qianyios\/cephcsi:v3.9.0"/g' operator.yaml

sed -i 's/# ROOK_CSI_REGISTRAR_IMAGE: "registry.k8s.io\/sig-storage\/csi-node-driver-registrar:v2.8.0"/ROOK_CSI_REGISTRAR_IMAGE: "registry.cn-hangzhou.aliyuncs.com\/qianyios\/csi-node-driver-registrar:v2.8.0"/g' operator.yaml

sed -i 's/# ROOK_CSI_RESIZER_IMAGE: "registry.k8s.io\/sig-storage\/csi-resizer:v1.8.0"/ROOK_CSI_RESIZER_IMAGE: "registry.cn-hangzhou.aliyuncs.com\/qianyios\/csi-resizer:v1.8.0"/g' operator.yaml

sed -i 's/# ROOK_CSI_PROVISIONER_IMAGE: "registry.k8s.io\/sig-storage\/csi-provisioner:v3.5.0"/ROOK_CSI_PROVISIONER_IMAGE: "registry.cn-hangzhou.aliyuncs.com\/qianyios\/csi-provisioner:v3.5.0"/g' operator.yaml

sed -i 's/# ROOK_CSI_SNAPSHOTTER_IMAGE: "registry.k8s.io\/sig-storage\/csi-snapshotter:v6.2.2"/ROOK_CSI_SNAPSHOTTER_IMAGE: "registry.cn-hangzhou.aliyuncs.com\/qianyios\/csi-snapshotter:v6.2.2"/g' operator.yaml

sed -i 's/# ROOK_CSI_ATTACHER_IMAGE: "registry.k8s.io\/sig-storage\/csi-attacher:v4.3.0"/ROOK_CSI_ATTACHER_IMAGE: "registry.cn-hangzhou.aliyuncs.com\/qianyios\/csi-attacher:v4.3.0"/g' operator.yaml

sed -i 's/# ROOK_CSIADDONS_IMAGE: "quay.io\/csiaddons\/k8s-sidecar:v0.7.0"/ROOK_CSIADDONS_IMAGE: "registry.cn-hangzhou.aliyuncs.com\/qianyios\/k8s-sidecar:v0.7.0"/g' operator.yaml

sed -i 's/image: rook\/ceph:v1.12.8/image: registry.cn-hangzhou.aliyuncs.com\/qianyios\/ceph:v1.12.8/g' operator.yaml

Enable automatic disk discovery (useful for later expansion)

sed -i 's/ROOK_ENABLE_DISCOVERY_DAEMON: "false"/ROOK_ENABLE_DISCOVERY_DAEMON: "true"/' /root/rook/deploy/examples/operator.yaml

Pulling the images in advance is recommended

docker pull registry.cn-hangzhou.aliyuncs.com/qianyios/cephcsi:v3.9.0

docker pull registry.cn-hangzhou.aliyuncs.com/qianyios/csi-node-driver-registrar:v2.8.0

docker pull registry.cn-hangzhou.aliyuncs.com/qianyios/csi-resizer:v1.8.0

docker pull registry.cn-hangzhou.aliyuncs.com/qianyios/csi-provisioner:v3.5.0

docker pull registry.cn-hangzhou.aliyuncs.com/qianyios/csi-snapshotter:v6.2.2

docker pull registry.cn-hangzhou.aliyuncs.com/qianyios/csi-attacher:v4.3.0

docker pull registry.cn-hangzhou.aliyuncs.com/qianyios/k8s-sidecar:v0.7.0

docker pull registry.cn-hangzhou.aliyuncs.com/qianyios/ceph:v1.12.8

Install the Rook + Ceph cluster

Start the deployment

- Create the CRDs, common resources, and operator

kubectl create -f crds.yaml -f common.yaml -f operator.yaml

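- Create the cluster (Ceph)

Edit the configuration: wait for the operator and discover containers to start, then configure the OSD nodes.

First check your own disks (lsblk) and adjust the configuration file below to match your environment.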

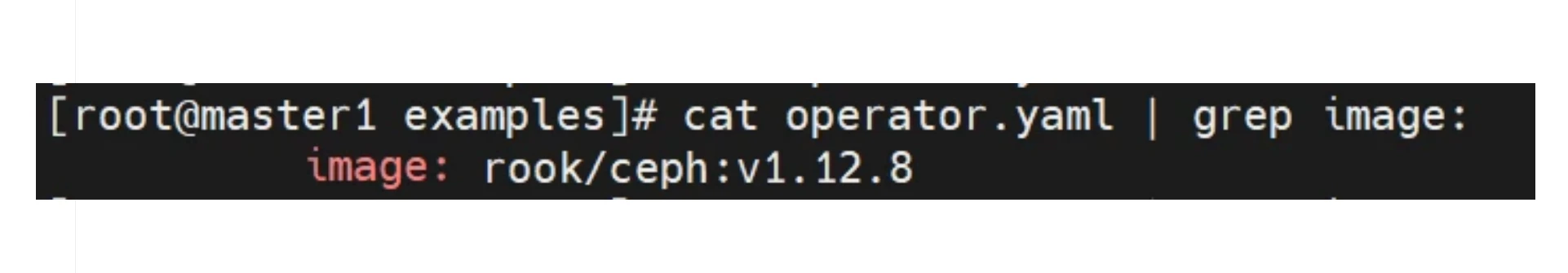

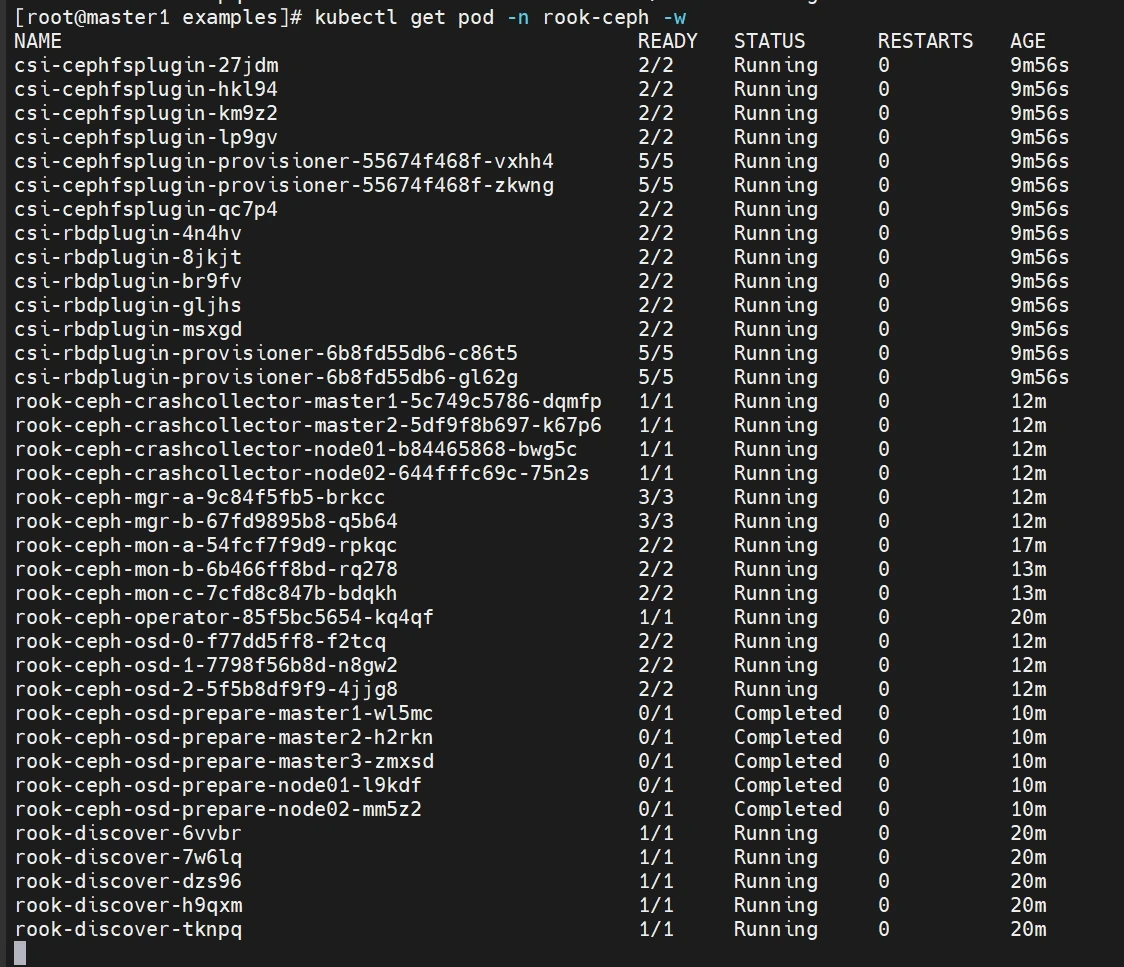

# Switch to a mirror image hosted in China

sed -i 's#image: quay.io/ceph/ceph:v17.2.6#image: registry.cn-hangzhou.aliyuncs.com/qianyios/ceph:v17.2.6#' cluster.yaml

vim cluster.yaml

-------------------------------------

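# Change the image
    image: registry.cn-hangzhou.aliyuncs.com/qianyios/ceph:v17.2.6
# Set both to false so that not every disk on every node is used as an OSD
# Enable deviceFilter
# Set config as needed
# Disks that are not raw (empty) are skipped automatically
  storage: # cluster level storage configuration and selection
    useAllNodes: false
    useAllDevices: false
    deviceFilter:
    config:
    nodes:
      - name: "master1"
        deviceFilter: "sda"
      - name: "node01"
        deviceFilter: "sda"
      - name: "node02"
        deviceFilter: "^sd." # automatically matches raw disks whose names start with sd

The three nodes here are the three machines mentioned at the beginning; adjust them to your own environment. Note that each name must be the node name in the K8S cluster, which you can check with kubectl get nodes.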
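Since deviceFilter is a regular expression over device names, it can help to preview which names a pattern would select before editing cluster.yaml. The sketch below uses grep -E as an approximation; Rook evaluates the filter with its own regular-expression engine, so treat the result as a hint only. The function name preview_device_filter is hypothetical.

```shell
# Sketch: preview which device names a deviceFilter-style pattern would match.
# Reads device names (one per line) on stdin and prints the matching ones.
# grep -E only approximates how Rook evaluates the pattern (assumption).
preview_device_filter() {
  grep -E -- "$1"
}
```

On a node, the input could come from `lsblk -dn -o NAME | preview_device_filter '^sd.'`. Note that an unanchored pattern such as `sda` matches any name containing that string, which is one reason to prefer anchored patterns on hosts with many disks.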

Deploy the cluster

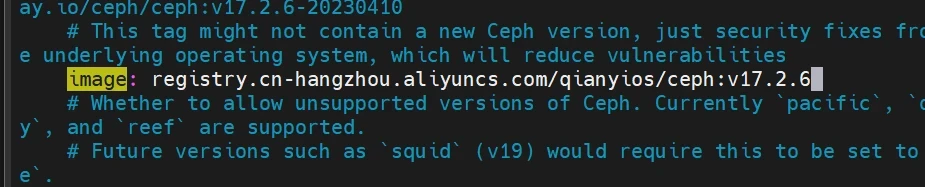

kubectl create -f cluster.yaml

Check the status

- Watch pod creation progress in real time

kubectl get pod -n rook-ceph -w

- Watch cluster creation progress in real time

kubectl get cephcluster -n rook-ceph rook-ceph -w

- Show a detailed description

kubectl describe cephcluster -n rook-ceph rook-ceph
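Watching with -w works interactively, but a scripted check can be handy as well. The following is a minimal sketch that reads the text output of kubectl get pod and lists pods that are not ready yet. It assumes the default column order (NAME, READY, STATUS) and treats Completed pods as finished; the function name pods_not_ready is hypothetical.

```shell
# Sketch: list pods that are not ready yet; prints nothing when all are ready.
# Input on stdin: output of `kubectl get pod -n rook-ceph --no-headers`.
pods_not_ready() {
  awk '{
    if ($3 == "Completed") next            # finished one-shot pods are fine
    split($2, r, "/")                      # READY column, e.g. "1/2"
    if ($3 != "Running" || r[1] != r[2]) print $1
  }'
}
```

For example, `kubectl get pod -n rook-ceph --no-headers | pods_not_ready` could be run in a loop until it prints nothing.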

Install the Ceph client tools (toolbox)

- Enter the working directory

cd /root/rook/deploy/examples/

- Check the required image

[root@master1 examples]# cat toolbox.yaml | grep image:

image: quay.io/ceph/ceph:v17.2.6

- Switch to a mirror image hosted in China

sed -i 's#image: quay.io/ceph/ceph:v17.2.6#image: registry.cn-hangzhou.aliyuncs.com/qianyios/ceph:v17.2.6#' toolbox.yaml

- Create the toolbox

kubectl create -f toolbox.yaml -n rook-ceph

- Check the pod

kubectl get pod -n rook-ceph -l app=rook-ceph-tools

- Enter the pod

kubectl -n rook-ceph exec -it deploy/rook-ceph-tools -- bash

- Check the cluster status

ceph status

- Check the OSD status

ceph osd status

- Show cluster space usage

ceph df

Expose the dashboard

cat > rook-dashboard.yaml << EOF
---
apiVersion: v1
kind: Service
metadata:
  labels:
    app: rook-ceph-mgr
    ceph_daemon_id: a
    rook_cluster: rook-ceph
  name: rook-ceph-mgr-dashboard-np
  namespace: rook-ceph
spec:
  ports:
    - name: http-dashboard
      port: 8443
      protocol: TCP
      targetPort: 8443
      nodePort: 30700
  selector:
    app: rook-ceph-mgr
    ceph_daemon_id: a
  sessionAffinity: None
  type: NodePort
EOF

kubectl apply -f rook-dashboard.yaml

View the dashboard password

kubectl -n rook-ceph get secret rook-ceph-dashboard-password -o jsonpath="{['data']['password']}" | base64 --decode && echo

Qmu/!$ZvfQTAd-aCuHF+

Access the dashboard

https://192.168.48.200:30700

Log in as user admin with the password shown above.

If the warnings below appear, resolve them as described; otherwise skip this part.

Clearing HEALTH_WARN warnings

- Warning details

- AUTH_INSECURE_GLOBAL_ID_RECLAIM_ALLOWED: mons are allowing insecure global_id reclaim

- MON_DISK_LOW: mons a,b,c are low on available space

Official guidance: https://docs.ceph.com/en/latest/rados/operations/health-checks/

- AUTH_INSECURE_GLOBAL_ID_RECLAIM_ALLOWED

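Method 1:

- Enter the toolbox

kubectl -n rook-ceph exec -it deploy/rook-ceph-tools -- bash

ceph config set mon auth_allow_insecure_global_id_reclaim false

Method 2:

kubectl get configmap rook-config-override -n rook-ceph -o yaml

kubectl edit configmap rook-config-override -n rook-ceph -o yaml

  config: |
    [global]
    mon clock drift allowed = 1

Note: mon clock drift allowed sets the clock skew tolerated between monitors, so it relates to clock-skew warnings. To clear the global_id warning through this ConfigMap, the setting that corresponds to method 1 is auth_allow_insecure_global_id_reclaim = false.

# Delete the mon pods so that they restart with the new configuration

kubectl -n rook-ceph delete pod $(kubectl -n rook-ceph get pods -o custom-columns=NAME:.metadata.name --no-headers| grep mon)

# Output similar to the following is shown

pod "rook-ceph-mon-a-557d88c-6ksmg" deleted

pod "rook-ceph-mon-b-748dcc9b89-j8l24" deleted

pod "rook-ceph-mon-c-5d47c664-p855m" deleted

# Finally, check the cluster health

ceph -s

- MON_DISK_LOW: usage of the root partition is too high; free up space to clear the warning.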
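When several warnings are active at once, it can be convenient to pull just the check codes out of the health output and look each one up in the official health-checks page. The sketch below assumes that ceph health detail prints one line per check in the form "[WRN] CODE: message" (or "[ERR] ..."); that format and the function name health_check_codes are assumptions, so verify them against your own output.

```shell
# Sketch: extract health check codes from `ceph health detail`-style text.
# Assumes lines of the form "[WRN] CODE: message" or "[ERR] CODE: message".
health_check_codes() {
  sed -n -E 's/^\[(WRN|ERR)\] ([A-Z_]+):.*/\2/p'
}
```

Inside the toolbox this could be used as `ceph health detail | health_check_codes`.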

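Using Ceph storage

Three storage types

| Storage type | Characteristics | Use cases | Typical devices |
|--------------|----------------------------------------------------|-----------|---------|
| Block storage (RBD) | Fast; no shared access [ReadWriteOnce] | Virtual machine disks | Hard disks, RAID |
| File storage (CephFS) | Slower (requests pass through the operating system before being turned into block operations); supports shared access [ReadWriteMany] | File sharing | FTP, NFS |
| Object storage (Object) | Combines the read/write performance of block storage with the sharing ability of file storage; not directly accessible by the operating system, only through an application-level API | Image storage, video storage | OSS |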

Block storage

Create the CephBlockPool and StorageClass

- File path:

/root/rook/deploy/examples/csi/rbd/storageclass.yaml - both the CephBlockPool and the StorageClass are defined in storageclass.yaml

- Brief walkthrough of the configuration file:

cd /root/rook/deploy/examples/csi/rbd

[root@master1 rbd]# grep -vE '^\s*(#|$)' storageclass.yaml

apiVersion: ceph.rook.io/v1
kind: CephBlockPool
metadata:
  name: replicapool
  namespace: rook-ceph # namespace:cluster
spec:
  failureDomain: host # host-level failure domain
  replicated:
    size: 3 # three replicas by default
    requireSafeReplicaSize: true
---
apiVersion: storage.k8s.io/v1
kind: StorageClass # a StorageClass needs no namespace
metadata:
  name: rook-ceph-block
provisioner: rook-ceph.rbd.csi.ceph.com # storage driver
parameters:
  clusterID: rook-ceph # namespace:cluster
  pool: replicapool # refers to the CephBlockPool above
  imageFormat: "2"
  imageFeatures: layering
  csi.storage.k8s.io/provisioner-secret-name: rook-csi-rbd-provisioner
  csi.storage.k8s.io/provisioner-secret-namespace: rook-ceph # namespace:cluster
  csi.storage.k8s.io/controller-expand-secret-name: rook-csi-rbd-provisioner
  csi.storage.k8s.io/controller-expand-secret-namespace: rook-ceph # namespace:cluster
  csi.storage.k8s.io/node-stage-secret-name: rook-csi-rbd-node
  csi.storage.k8s.io/node-stage-secret-namespace: rook-ceph # namespace:cluster
  csi.storage.k8s.io/fstype: ext4
allowVolumeExpansion: true # whether volume expansion is allowed
reclaimPolicy: Delete # PV reclaim policy
[root@master1 rbd]#

Create the CephBlockPool and StorageClass

kubectl create -f storageclass.yaml

Verify

- List the StorageClasses

kubectl get sc

- List the CephBlockPools (they can also be viewed in the dashboard)

kubectl get cephblockpools -n rook-ceph
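Once the StorageClass exists, every PersistentVolumeClaim that uses it differs only in a handful of fields: name, StorageClass, access mode, and size. As a small sketch, those fields could be templated with a shell function; render_pvc and the claim name demo-pvc are made up for illustration.

```shell
# Sketch: print a PVC manifest for a given StorageClass.
# Arguments: claim name, StorageClass name, access mode, requested size.
render_pvc() {
  cat <<EOF
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: $1
spec:
  storageClassName: "$2"
  accessModes:
    - $3
  resources:
    requests:
      storage: $4
EOF
}
```

For instance, `render_pvc demo-pvc rook-ceph-block ReadWriteOnce 1Gi | kubectl apply -f -` would request a 1Gi RBD-backed volume; inspecting the printed manifest first is a cheap way to catch typos.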

Block storage usage examples

- Single-replica Deployment + PersistentVolumeClaim

cat > nginx-deploy-rbd.yaml << "EOF"
apiVersion: apps/v1
kind: Deployment
metadata:
  labels:
    app: nginx-deploy-rbd
  name: nginx-deploy-rbd
spec:
  replicas: 1
  selector:
    matchLabels:
      app: nginx-deploy-rbd
  template:
    metadata:
      labels:
        app: nginx-deploy-rbd
    spec:
      containers:
        - image: registry.cn-hangzhou.aliyuncs.com/qianyios/nginx:latest
          name: nginx
          volumeMounts:
            - name: data
              mountPath: /usr/share/nginx/html
      volumes:
        - name: data
          persistentVolumeClaim:
            claimName: nginx-rbd-pvc
---
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: nginx-rbd-pvc
spec:
  storageClassName: "rook-ceph-block" # this is where the StorageClass created earlier is referenced
  accessModes:
    - ReadWriteOnce
  resources:
    requests:
      storage: 5Gi
EOF

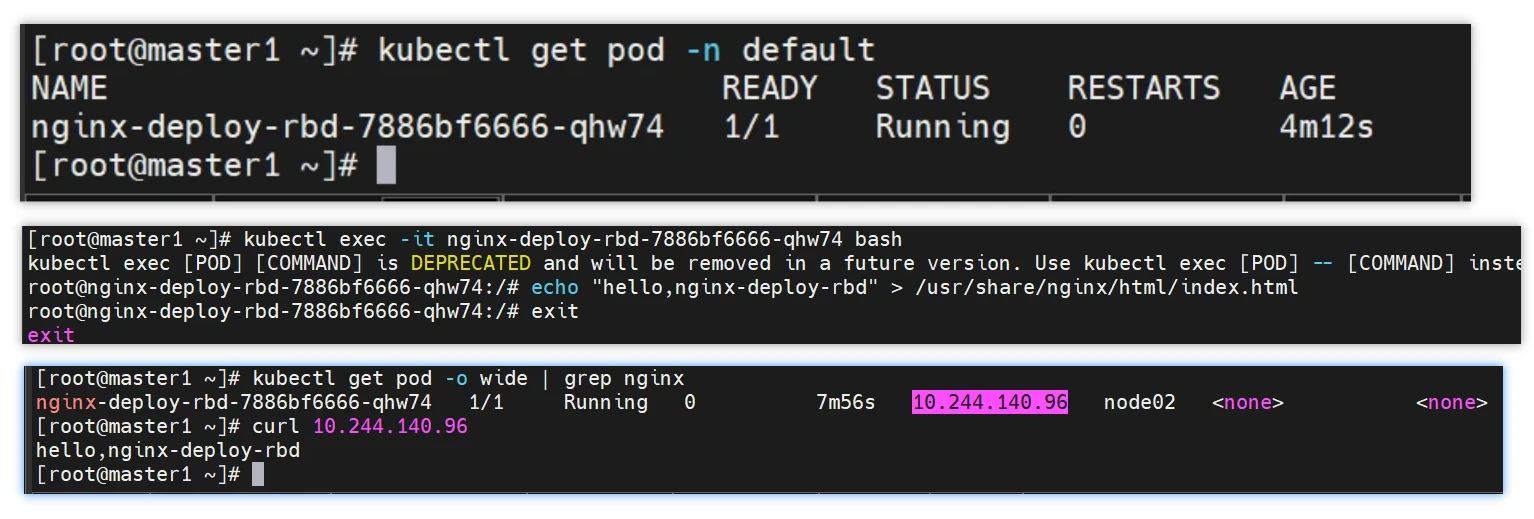

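kubectl create -f nginx-deploy-rbd.yaml

# Use your own pod name here (see kubectl get pod); the suffix differs per deployment

kubectl exec -it nginx-deploy-rbd-7886bf6666-qhw74 -- bash

echo "hello,nginx-deploy-rbd" > /usr/share/nginx/html/index.html

exit

kubectl get pod -o wide | grep nginx

# Delete the resources once testing is done

kubectl delete -f nginx-deploy-rbd.yaml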

- Multi-replica StatefulSet + volumeClaimTemplates

cat > nginx-ss-rbd.yaml << "EOF"
apiVersion: apps/v1
kind: StatefulSet
metadata:
  name: nginx-ss-rbd
spec:
  selector:
    matchLabels:
      app: nginx-ss-rbd
  serviceName: "nginx"
  replicas: 3
  template:
    metadata:
      labels:
        app: nginx-ss-rbd
    spec:
      containers:
        - name: nginx
          image: registry.cn-hangzhou.aliyuncs.com/qianyios/nginx:latest
          ports:
            - containerPort: 80
              name: web
          volumeMounts:
            - name: www
              mountPath: /usr/share/nginx/html
  volumeClaimTemplates:
    - metadata:
        name: www
      spec:
        accessModes: [ "ReadWriteOnce" ]
        storageClassName: "rook-ceph-block" # this is where the StorageClass created earlier is referenced
        resources:
          requests:
            storage: 2Gi
EOF

Deploy

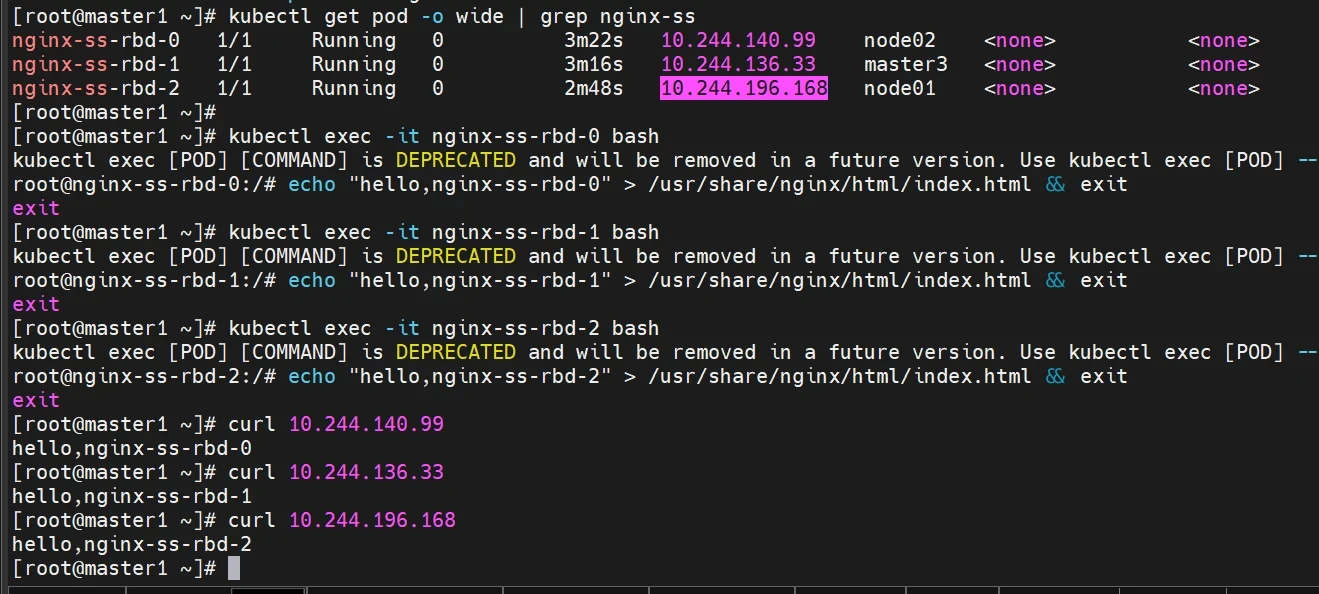

kubectl create -f nginx-ss-rbd.yaml

kubectl get pod -o wide | grep nginx-ss

kubectl exec -it nginx-ss-rbd-0 -- bash

echo "hello,nginx-ss-rbd-0" > /usr/share/nginx/html/index.html && exit

kubectl exec -it nginx-ss-rbd-1 -- bash

echo "hello,nginx-ss-rbd-1" > /usr/share/nginx/html/index.html && exit

kubectl exec -it nginx-ss-rbd-2 -- bash

echo "hello,nginx-ss-rbd-2" > /usr/share/nginx/html/index.html && exit

# Delete the resources once testing is done

kubectl delete -f nginx-ss-rbd.yaml

The PVCs created from volumeClaimTemplates are not removed together with the StatefulSet, so they may need to be deleted by hand:

[root@master1 ~]# kubectl get pvc

NAME STATUS VOLUME CAPACITY ACCESS MODES STORAGECLASS AGE

www-nginx-ss-rbd-0 Bound pvc-4a75f201-eec0-47fa-990c-353c52fe14f4 2Gi RWO rook-ceph-block 6m27s

www-nginx-ss-rbd-1 Bound pvc-d5f7e29f-79e4-4d1e-bcbb-65ece15a8172 2Gi RWO rook-ceph-block 6m21s

www-nginx-ss-rbd-2 Bound pvc-8cce06e9-dfe4-429d-ae44-878f8e4665e0 2Gi RWO rook-ceph-block 5m53s

[root@master1 ~]# kubectl delete pvc www-nginx-ss-rbd-0

persistentvolumeclaim "www-nginx-ss-rbd-0" deleted

[root@master1 ~]# kubectl delete pvc www-nginx-ss-rbd-1

persistentvolumeclaim "www-nginx-ss-rbd-1" deleted

[root@master1 ~]# kubectl delete pvc www-nginx-ss-rbd-2

persistentvolumeclaim "www-nginx-ss-rbd-2" deleted
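The PVC names in the listing above follow the StatefulSet convention `<volumeClaimTemplate name>-<StatefulSet name>-<ordinal>`, so they can be generated instead of typed one by one. A minimal sketch follows; the function name sts_pvc_names is hypothetical.

```shell
# Sketch: print the PVC names a StatefulSet creates from one volumeClaimTemplate.
# Arguments: template name, StatefulSet name, replica count.
sts_pvc_names() {
  template=$1
  sts=$2
  replicas=$3
  i=0
  while [ "$i" -lt "$replicas" ]; do
    echo "${template}-${sts}-${i}"
    i=$((i + 1))
  done
}
```

With the example from this section, `sts_pvc_names www nginx-ss-rbd 3 | xargs kubectl delete pvc` would remove the three claims in one step. Because the reclaim policy is Delete, the backing volumes are removed as well, so it is worth checking the printed names first.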

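Shared file storage

Deploy the MDS service

Creating a CephFS file system requires the MDS service to be deployed first; it handles the file system's metadata.

- File path:

/root/rook/deploy/examples/filesystem.yaml

Walkthrough of the configuration file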

cd /root/rook/deploy/examples

[root@master1 examples]# grep -vE '^\s*(#|$)' filesystem.yaml

apiVersion: ceph.rook.io/v1
kind: CephFilesystem
metadata:
  name: myfs
  namespace: rook-ceph # namespace:cluster
spec:
  metadataPool:
    replicated:
      size: 3 # number of metadata replicas
      requireSafeReplicaSize: true
    parameters:
      compression_mode:
        none
  dataPools:
    - name: replicated
      failureDomain: host
      replicated:
        size: 3 # number of data replicas
        requireSafeReplicaSize: true
      parameters:
        compression_mode:
          none
  preserveFilesystemOnDelete: true
  metadataServer:
    activeCount: 1 # number of active MDS instances; default 1, the author suggests 3 for production
    activeStandby: true
...... (rest omitted)

kubectl create -f filesystem.yaml

kubectl get pod -n rook-ceph | grep mds

- Enter the toolbox pod

kubectl -n rook-ceph exec -it deploy/rook-ceph-tools -- bash

- Check the cluster status

ceph status

Configure the storage (StorageClass)

Configuration file: /root/rook/deploy/examples/csi/cephfs/storageclass.yaml

cd /root/rook/deploy/examples/csi/cephfs

kubectl apply -f storageclass.yaml

Shared file storage usage example

cat > nginx-deploy-cephfs.yaml << "EOF"
apiVersion: apps/v1
kind: Deployment
metadata:
  labels:
    app: nginx-deploy-cephfs
  name: nginx-deploy-cephfs
spec:
  replicas: 3
  selector:
    matchLabels:
      app: nginx-deploy-cephfs
  template:
    metadata:
      labels:
        app: nginx-deploy-cephfs
    spec:
      containers:
        - image: registry.cn-hangzhou.aliyuncs.com/qianyios/nginx:latest
          name: nginx
          volumeMounts:
            - name: data
              mountPath: /usr/share/nginx/html
      volumes:
        - name: data
          persistentVolumeClaim:
            claimName: nginx-cephfs-pvc
---
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: nginx-cephfs-pvc
spec:
  storageClassName: "rook-cephfs"
  accessModes:
    - ReadWriteMany
  resources:
    requests:
      storage: 1Gi
EOF

kubectl apply -f nginx-deploy-cephfs.yaml

kubectl get pod -o wide | grep cephfs

# Use one of your own pod names here; the suffix differs per deployment

kubectl exec -it nginx-deploy-cephfs-6dc8797866-4s564 -- bash

echo "hello cephfs" > /usr/share/nginx/html/index.html && exit

# Delete the resources once testing is done

kubectl delete -f nginx-deploy-cephfs.yaml

Running ceph commands directly on a K8S node

# Install the EPEL repository

yum install epel-release -y

# Install the Ceph repository

yum install https://mirrors.aliyun.com/ceph/rpm-octopus/el7/noarch/ceph-release-1-1.el7.noarch.rpm -y

yum list ceph-common --showduplicates |sort -r

# Install the Ceph client

yum install ceph-common -y

Sync the Ceph authentication files

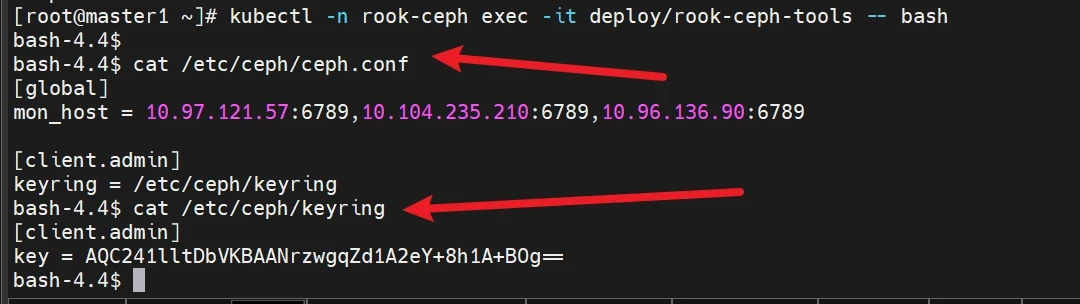

kubectl -n rook-ceph exec -it deploy/rook-ceph-tools -- bash

[root@master1 ~]# kubectl -n rook-ceph exec -it deploy/rook-ceph-tools -- bash

bash-4.4$ cat /etc/ceph/ceph.conf

[global]

mon_host = 10.97.121.57:6789,10.104.235.210:6789,10.96.136.90:6789

[client.admin]

keyring = /etc/ceph/keyring

bash-4.4$ cat /etc/ceph/keyring

[client.admin]

key = AQC241lltDbVKBAANrzwgqZd1A2eY+8h1A+BOg==

bash-4.4$

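Take note of these two files; copy their contents, then type exit to leave the pod.

Create the same two files directly on master1 (master1 here is simply the machine from which I want to run the ceph client).

cat > /etc/ceph/ceph.conf << "EOF"
[global]
mon_host = 10.97.121.57:6789,10.104.235.210:6789,10.96.136.90:6789

[client.admin]
keyring = /etc/ceph/keyring
EOF

cat > /etc/ceph/keyring << "EOF"
[client.admin]
key = AQC241lltDbVKBAANrzwgqZd1A2eY+8h1A+BOg==
EOF

Once both files are in place, ceph commands can be run directly on this machine.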
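The two files only depend on three values: the target directory, the mon_host list, and the admin key. As a sketch, they could be written by a small function so the values are passed in rather than pasted into heredocs. The function name write_ceph_client_files is hypothetical, and the values in the usage note are placeholders; restricting the keyring to mode 600 is an extra precaution that the original steps do not include.

```shell
# Sketch: write ceph.conf and keyring for the ceph CLI into a directory.
# Arguments: target directory, comma-separated mon addresses, admin key.
write_ceph_client_files() {
  dir=$1
  mon_host=$2
  key=$3
  mkdir -p "$dir"
  cat > "$dir/ceph.conf" <<EOF
[global]
mon_host = $mon_host

[client.admin]
keyring = $dir/keyring
EOF
  printf '[client.admin]\nkey = %s\n' "$key" > "$dir/keyring"
  # The keyring holds a secret, so limit it to the owner (added precaution).
  chmod 600 "$dir/keyring"
}
```

A call might look like `write_ceph_client_files /etc/ceph "<mon1>:6789,<mon2>:6789,<mon3>:6789" "<admin key>"`, with the real values taken from the toolbox pod as shown earlier.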

Delete the PVCs, the StorageClass, and the backing storage resources

- Delete PVCs and PVs as needed

kubectl get pvc -n [namespace] --no-headers | awk '{print $1};' | xargs kubectl delete pvc -n [namespace]

kubectl get pv | grep Released | awk '{print $1};' | xargs kubectl delete pv

- Delete the block pool and the StorageClass

kubectl delete -n rook-ceph cephblockpool replicapool

kubectl delete storageclass rook-ceph-block
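The PV cleanup above selects rows with grep Released, which matches that word anywhere on the line. A slightly stricter sketch checks the STATUS column itself. It assumes the default kubectl get pv column order, in which STATUS is the fifth field of each data row; the function name released_pvs is hypothetical.

```shell
# Sketch: print the names of PVs whose STATUS column is exactly "Released".
# Input on stdin: output of `kubectl get pv --no-headers` (default columns).
released_pvs() {
  awk '$5 == "Released" { print $1 }'
}
```

It could be combined as `kubectl get pv --no-headers | released_pvs | xargs -r kubectl delete pv`, where -r (a GNU xargs option) skips the delete when nothing is Released.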

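Special note: all articles on the Qianyi blog are notes I compiled carefully from classroom learning and self-study.

Mistakes are inevitable.

If you spot one, please tell me in the comments or send me a private message.

Thank you all very much for your support!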