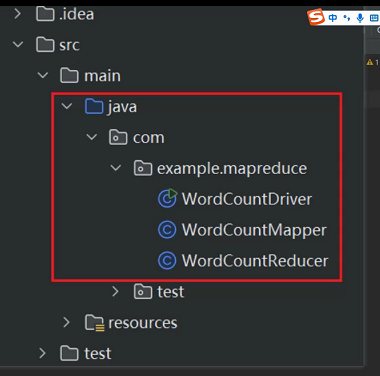

Create three classes: `WordCountDriver`, `WordCountMapper`, and `WordCountReducer`.

package com.example.mapreduce;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.io.LongWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Job;

import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;

import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

import java.io.IOException;

public class WordCountDriver {

public static void main(String[] args) throws IOException, ClassNotFoundException, InterruptedException {

// Set the user name used to access HDFS

System.setProperty("HADOOP_USER_NAME", "root");

// 1. Get the Job object

Configuration conf = new Configuration();

conf.set("fs.defaultFS", "hdfs://hadoop100:8020");

Job job = Job.getInstance(conf);

// 2. Associate the jar that contains this Driver class

job.setJarByClass(WordCountDriver.class);

// 3. Associate the Mapper and Reducer classes

job.setMapperClass(WordCountMapper.class);

job.setReducerClass(WordCountReducer.class);

// 4. Set the key/value types of the map output

job.setMapOutputKeyClass(Text.class);

job.setMapOutputValueClass(LongWritable.class);

// 5. Set the key/value types of the final (reduce) output

job.setOutputKeyClass(Text.class);

job.setOutputValueClass(LongWritable.class);

// 6. Set the input data path and the output result path

//FileInputFormat.setInputPaths(job, new Path("E\\cinput"));

//FileOutputFormat.setOutputPath(job, new Path("E\\output10"));

FileInputFormat.setInputPaths(job, new Path("/cinput"));

FileOutputFormat.setOutputPath(job, new Path("/output10"));

// 7. Submit the job and exit with 0 on success, 1 on failure

System.exit(job.waitForCompletion(true) ? 0 : 1);

}

}

package com.example.mapreduce;

import org.apache.hadoop.io.LongWritable;

import org.apache.hadoop.mapreduce.Mapper;

import org.apache.hadoop.io.Text;

import java.io.IOException;

// 1. Extend Hadoop's Mapper class

// 2. Override the map method

public class WordCountMapper extends Mapper<LongWritable, Text, Text, LongWritable> {

@Override

protected void map(LongWritable key, Text value, Context context) throws IOException, InterruptedException {

// Split each line of text on spaces to get an array of words

String[] words = value.toString().split(" ");

// For each word, emit the word as the key with a value of 1

for (String word : words) {

context.write(new Text(word), new LongWritable(1));

}

}

}
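The splitting step in `map` can be tried outside Hadoop. The sketch below is a minimal plain-Java illustration, not part of the job itself: the class `MapSketch` and both method names are hypothetical. It shows that `split(" ")` keeps an empty token when a line contains two consecutive spaces, so the Mapper above would emit an empty key for it. Splitting on runs of whitespace is one possible alternative.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

// Hypothetical stand-alone class; it does not depend on Hadoop.
class MapSketch {

    // Mirrors the Mapper above: split on a single space and keep every token.
    static List<String> splitOnSingleSpace(String line) {
        return new ArrayList<>(Arrays.asList(line.split(" ")));
    }

    // Alternative: split on runs of whitespace and drop empty tokens.
    static List<String> splitOnWhitespace(String line) {
        List<String> words = new ArrayList<>();
        for (String token : line.trim().split("\\s+")) {
            if (!token.isEmpty()) {
                words.add(token);
            }
        }
        return words;
    }

    public static void main(String[] args) {
        String line = "hello  hadoop hello"; // note the double space
        System.out.println(splitOnSingleSpace(line)); // [hello, , hadoop, hello]
        System.out.println(splitOnWhitespace(line));  // [hello, hadoop, hello]
    }
}
```

Whether empty tokens matter depends on the input data. If the input is known to be separated by exactly one space, the original `split(" ")` is sufficient.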

package com.example.mapreduce;

import org.apache.hadoop.io.LongWritable;

import org.apache.hadoop.mapreduce.Reducer;

import org.apache.hadoop.io.Text;

import java.io.IOException;

// Extend Hadoop's Reducer class

// Override the reduce method

public class WordCountReducer extends Reducer<Text, LongWritable, Text, LongWritable> {

@Override

protected void reduce(Text key, Iterable<LongWritable> values, Context context) throws IOException, InterruptedException {

// Sum all the values for this key

long sum = 0;

for (LongWritable value : values) {

sum += value.get();

}

// Write out the result

context.write(key, new LongWritable(sum));

}

}
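To see what the three classes compute together, the whole flow can be imitated in memory. This is only a rough sketch under simplifying assumptions: the class `WordCountSketch`, the method `countWords`, and the sample lines are hypothetical, and a `TreeMap` stands in for the shuffle phase that groups values by key and sorts the keys before they reach the Reducer.

```java
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

// Hypothetical stand-alone class; it simulates the job in memory and does not use Hadoop.
class WordCountSketch {

    static Map<String, Long> countWords(List<String> lines) {
        // TreeMap keeps keys sorted, similar to how reducer input arrives sorted by key.
        Map<String, Long> counts = new TreeMap<>();
        for (String line : lines) {
            // "Map" step: split the line on single spaces, as WordCountMapper does.
            for (String word : line.split(" ")) {
                // "Shuffle and reduce" step: add the emitted 1 to the running sum for this key.
                counts.merge(word, 1L, Long::sum);
            }
        }
        return counts;
    }

    public static void main(String[] args) {
        List<String> lines = List.of("hello hadoop", "hello mapreduce");
        // Prints one "word<TAB>count" line per key.
        countWords(lines).forEach((word, sum) -> System.out.println(word + "\t" + sum));
    }
}
```

For the two sample lines, `hello` is counted twice while `hadoop` and `mapreduce` are each counted once.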