```python
import torch
from torch import nn
from d2l import torch as d2l

batch_size = 256
train_iter, test_iter = d2l.load_data_fashion_mnist(batch_size)
```

3.7.1 Initializing Model Parameters
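Fashion-MNIST images are 28×28 single-channel images, so each minibatch from `train_iter` has shape (256, 1, 28, 28). `nn.Flatten()` turns every image into a 784-dimensional vector before the 784-to-10 linear layer, and `init_weights` redraws only the linear layer's weights from a normal distribution with standard deviation 0.01. The following minimal sketch checks these shapes and the initialization. It assumes a random tensor as a stand-in for a real minibatch, so it runs without downloading the dataset.

```python
import torch
from torch import nn

# Random stand-in for one Fashion-MNIST minibatch: (batch, channels, height, width)
X = torch.randn(256, 1, 28, 28)

net = nn.Sequential(nn.Flatten(), nn.Linear(784, 10))

def init_weights(m):
    # Only nn.Linear modules are touched; the bias keeps PyTorch's default init
    if type(m) == nn.Linear:
        nn.init.normal_(m.weight, std=0.01)

net.apply(init_weights)  # calls init_weights on every submodule (and on net itself)

flat = net[0](X)         # nn.Flatten keeps dim 0 and merges the rest: 1 * 28 * 28 = 784
logits = net(X)          # one unnormalized score (logit) per class

print(flat.shape)        # torch.Size([256, 784])
print(logits.shape)      # torch.Size([256, 10])
print(net[1].weight.std().item())  # close to 0.01; exact value varies with the random draw
```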

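```python
# PyTorch does not reshape inputs implicitly, so nn.Flatten turns each
# (1, 28, 28) image into a 784-vector before the linear layer
net = nn.Sequential(nn.Flatten(), nn.Linear(784, 10))

def init_weights(m):
    # Draw the linear layer's weights from N(0, 0.01^2)
    if type(m) == nn.Linear:
        nn.init.normal_(m.weight, std=0.01)

net.apply(init_weights);  # trailing ';' suppresses the notebook's echo of the returned module
```

3.7.2 Softmax Implementation Revisited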
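`nn.CrossEntropyLoss` takes unnormalized logits rather than softmax outputs, and the reason is numerical stability. Computing $\exp(o_j)$ directly overflows for large logits. The combined log-softmax form $\log \hat{y}_j = (o_j - \max_k o_k) - \log \sum_k \exp(o_k - \max_k o_k)$ never exponentiates a positive number. The sketch below compares three computations: a naive softmax-then-log, a hand-written stable version, and the built-in loss. `stable_cross_entropy` is a hypothetical helper, and the logits are made-up values chosen to trigger overflow.

```python
import torch
from torch import nn

def stable_cross_entropy(logits, y):
    """Hypothetical helper: per-example cross-entropy computed from raw logits."""
    # Shift each row by its max so the largest exponent is exp(0) = 1
    shifted = logits - logits.max(dim=1, keepdim=True).values
    log_probs = shifted - torch.log(torch.exp(shifted).sum(dim=1, keepdim=True))
    # Pick out -log(probability of the true class) for every example
    return -log_probs[torch.arange(len(y)), y]

# Made-up logits: the first row is large enough to overflow exp() in float32
logits = torch.tensor([[1000.0, 0.0, -1000.0],
                       [1.0, 2.0, 3.0]])
y = torch.tensor([0, 2])

# Naive approach: softmax first, then log
exp_o = torch.exp(logits)                        # exp(1000) -> inf
naive_probs = exp_o / exp_o.sum(dim=1, keepdim=True)
naive = -torch.log(naive_probs[torch.arange(len(y)), y])

stable = stable_cross_entropy(logits, y)
builtin = nn.CrossEntropyLoss(reduction='none')(logits, y)

print(naive)    # first entry is nan (inf / inf)
print(stable)
print(builtin)
```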

```python
# Takes raw logits; reduction='none' returns one loss per example
loss = nn.CrossEntropyLoss(reduction='none')
```

3.7.3 Optimization Algorithm
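With only `lr` set (no momentum, no weight decay), `torch.optim.SGD` updates each parameter as $w \leftarrow w - \eta\,\partial \ell / \partial w$, where $\ell$ is the scalar that `.backward()` was called on. Because the loss uses `reduction='none'`, the per-example losses have to be averaged into that scalar first. The sketch below performs one optimizer step and one hand-written update on identical copies of a tiny linear layer, then compares the results. The layer sizes and random data are arbitrary assumptions.

```python
import copy
import torch
from torch import nn

torch.manual_seed(0)
lr = 0.1
net = nn.Linear(4, 3)
manual = copy.deepcopy(net)  # identical copy that will be updated by hand

X = torch.randn(8, 4)
y = torch.randint(0, 3, (8,))
loss_fn = nn.CrossEntropyLoss(reduction='none')

# Built-in optimizer step
trainer = torch.optim.SGD(net.parameters(), lr=lr)
trainer.zero_grad()
loss_fn(net(X), y).mean().backward()   # average the per-example losses first
trainer.step()

# The same update written out: w <- w - lr * grad
loss_fn(manual(X), y).mean().backward()
with torch.no_grad():
    for p in manual.parameters():
        p -= lr * p.grad

for p_opt, p_man in zip(net.parameters(), manual.parameters()):
    print(torch.allclose(p_opt, p_man))  # True for both weight and bias
```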

```python
# Minibatch stochastic gradient descent with a learning rate of 0.1
trainer = torch.optim.SGD(net.parameters(), lr=0.1)
```

3.7.4 Training

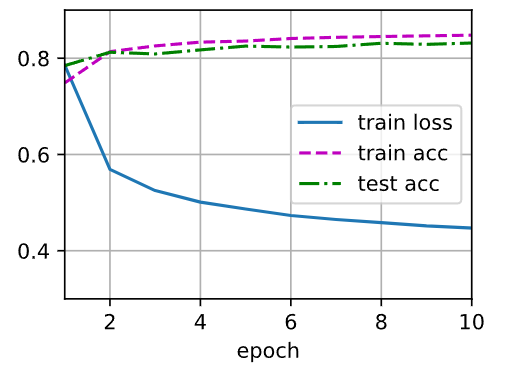

```python
num_epochs = 10
d2l.train_ch3(net, train_iter, test_iter, loss, num_epochs, trainer)
```

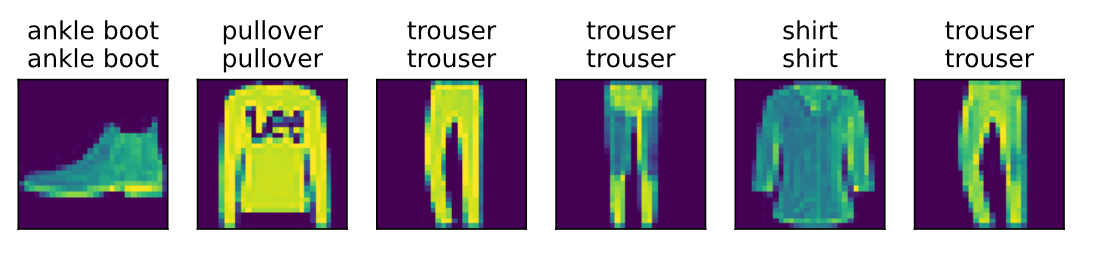
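Besides plotting training loss and accuracy, `d2l.train_ch3` runs a standard epoch loop. For each minibatch it computes per-example losses, averages them, backpropagates, and steps the optimizer. After each epoch it measures test accuracy. The following is a minimal, self-contained sketch of such a loop, not d2l's actual source. It assumes a synthetic, linearly labelled 10-class dataset with 784 features in place of Fashion-MNIST, so it runs without a download. `run_epoch` and `accuracy_on` are hypothetical names.

```python
import torch
from torch import nn
from torch.utils.data import DataLoader, TensorDataset

torch.manual_seed(0)

# Synthetic stand-in for Fashion-MNIST: 784 features, labels from a random linear map
W_true = torch.randn(784, 10)
def make_data(n):
    X = torch.randn(n, 784)
    return X, (X @ W_true).argmax(dim=1)

train_iter = DataLoader(TensorDataset(*make_data(4096)), batch_size=256, shuffle=True)
test_iter = DataLoader(TensorDataset(*make_data(512)), batch_size=256)

net = nn.Sequential(nn.Flatten(), nn.Linear(784, 10))
loss = nn.CrossEntropyLoss(reduction='none')
trainer = torch.optim.SGD(net.parameters(), lr=0.1)

def run_epoch(net, data_iter, loss, trainer):
    """One pass over the training data; returns the mean training loss."""
    net.train()
    total, count = 0.0, 0
    for X, y in data_iter:
        l = loss(net(X), y)        # per-example losses (reduction='none')
        trainer.zero_grad()
        l.mean().backward()        # average before backpropagating
        trainer.step()
        total += l.sum().item()
        count += y.numel()
    return total / count

def accuracy_on(net, data_iter):
    """Fraction of examples whose argmax prediction matches the label."""
    net.eval()
    correct, count = 0, 0
    with torch.no_grad():
        for X, y in data_iter:
            correct += (net(X).argmax(dim=1) == y).sum().item()
            count += y.numel()
    return correct / count

num_epochs = 10
history = []
for epoch in range(num_epochs):
    train_loss = run_epoch(net, train_iter, loss, trainer)
    test_acc = accuracy_on(net, test_iter)
    history.append((train_loss, test_acc))
    print(f'epoch {epoch + 1}: train loss {train_loss:.3f}, test acc {test_acc:.3f}')
```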

3.7.5 Prediction

```python
batch_size = 256  # minibatch size for the data iterators
train_iter, test_iter = d2l.load_data_fashion_mnist(batch_size)

def predict_ch3(net, test_iter, n=6):
    """Predict labels (defined in Chapter 3)."""
    for X, y in test_iter:  # take the first minibatch of test data
        break
    trues = d2l.get_fashion_mnist_labels(y)  # true labels as text
    preds = d2l.get_fashion_mnist_labels(d2l.argmax(net(X), axis=1))  # predicted labels as text
    titles = [true + '\n' + pred for true, pred in zip(trues, preds)]  # true label above predicted label
    d2l.show_images(d2l.reshape(X[0:n], (n, 28, 28)), 1, n, titles=titles[0:n])  # plot the first n images

predict_ch3(net, test_iter)
```
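`predict_ch3` displays only the argmax class for each image. A small extension, hypothetical and not part of d2l, also reports how confident the model is by applying softmax to the logits and returning the probability of the predicted class. The sketch assumes the ten Fashion-MNIST class names in label order 0 to 9, matching those used by d2l's label helper. The logits are hand-made illustrative values, not model output.

```python
import torch

# Fashion-MNIST class names in label order (0-9)
TEXT_LABELS = ['t-shirt', 'trouser', 'pullover', 'dress', 'coat',
               'sandal', 'shirt', 'sneaker', 'bag', 'ankle boot']

def predict_with_confidence(logits):
    """Hypothetical helper: map each row of logits to (class name, probability)."""
    probs = torch.softmax(logits, dim=1)   # normalize each row into a distribution
    conf, idx = probs.max(dim=1)           # highest probability and its class index
    return [(TEXT_LABELS[i], round(c, 3)) for i, c in zip(idx.tolist(), conf.tolist())]

# Hand-made logits for two examples (illustrative values, not model output)
logits = torch.zeros(2, 10)
logits[0, 9] = 3.0   # strongly favors 'ankle boot'
logits[1, 1] = 1.0   # mildly favors 'trouser'

result = predict_with_confidence(logits)
print(result)  # [('ankle boot', 0.691), ('trouser', 0.232)]
```

A low maximum probability, as in the second example, marks predictions that may be worth inspecting alongside the images shown by `predict_ch3`.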