k8s cluster deployment

k8s environment overview

| Hostname | IP | Role |
|---|---|---|
| harbor.rin.org | 172.25.254.200 | Harbor registry |
| k8s-master.rin.org | 172.25.254.100 | master, k8s control-plane node |
| k8s-node1.rin.org | 172.25.254.10 | worker, k8s worker node |
| k8s-node2.rin.org | 172.25.254.20 | worker, k8s worker node |

配置harbor仓库

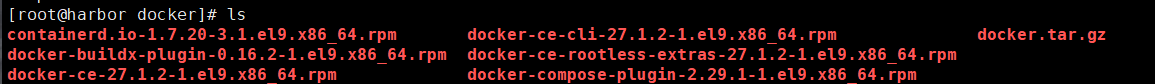

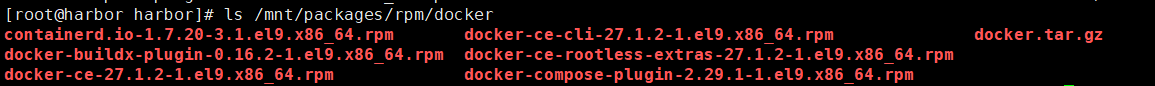

安装docker:

bash

dnf install *.rpm -y --allowerasing编辑docker文件配置:

bash

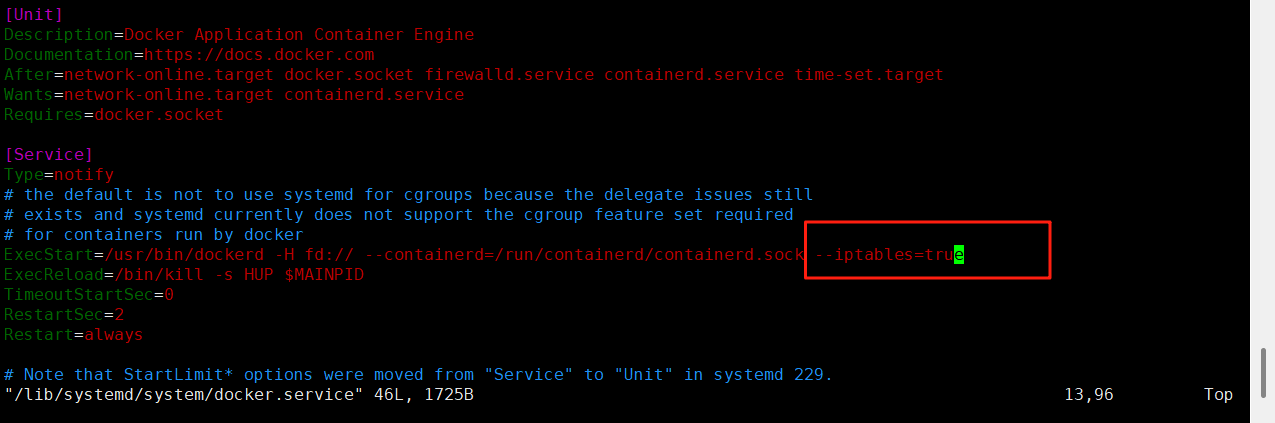

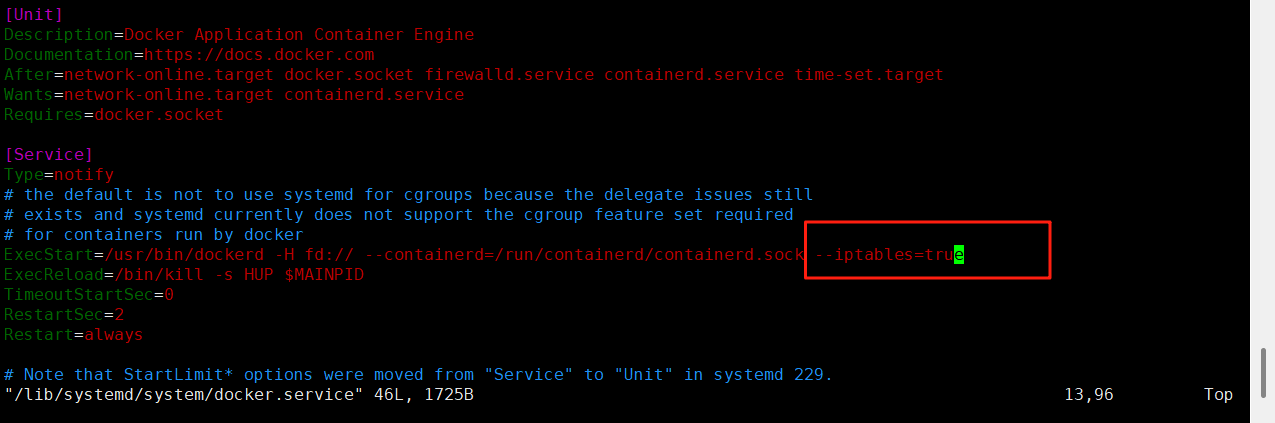

vim /lib/systemd/system/docker.service添加参数:

bash

--iptables=true
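The flag is normally appended to the `ExecStart=` line of the unit file. The source does not show the exact line, so the sketch below assumes the stock `ExecStart=/usr/bin/dockerd ...` entry. `add_iptables_flag` is a hypothetical helper that filters unit-file text from stdin, so it can be tried on a sample before touching the real file.

```shell
#!/usr/bin/env bash
# Hypothetical helper: append --iptables=true to the ExecStart= line of a
# docker.service file read from stdin, unless the flag is already present.
add_iptables_flag() {
  sed -E '/^ExecStart=/{/--iptables=true/!s/$/ --iptables=true/;}'
}

# Try it on a sample line (assumed stock ExecStart; check your own unit file).
sample='ExecStart=/usr/bin/dockerd -H fd:// --containerd=/run/containerd/containerd.sock'
printf '%s\n' "$sample" | add_iptables_flag
```

After changing the real unit file, run `systemctl daemon-reload` so that systemd picks up the edit before Docker is started.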

关闭防火墙:

bash

systemctl stop firewalld.service启动docker:

bash

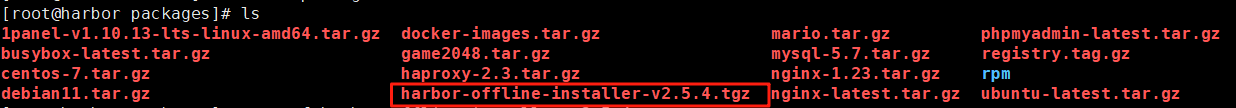

systemctl start docker解压harbor包

bash

tar xzf harbor-offline-installer-v2.5.4.tgz 创建证书存放目录:

bash

mkdir -p /data/certs生成证书:

bash

openssl req -newkey rsa:4096 \

-nodes -sha256 -keyout /data/certs/rin.key \

-addext "subjectAltName = DNS:www.rin.com" \

-x509 -days 365 -out /data/certs/rin.crtCountry Name (2 letter code) XX:CN

State or Province Name (full name) \[\]:guangxi

Locality Name (eg, city) Default City:nanning

Organization Name (eg, company) Default Company Ltd:k8s

Organizational Unit Name (eg, section) \[\]:harbor

Common Name (eg, your name or your server's hostname) \[\]:www.rin.com

Email Address \[\]:rin@rin.com
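The interactive prompts can be avoided by passing the same answers through the `-subj` option of `openssl req`. The sketch below is one way to do that, not the method used in the original walkthrough; `cert_subject` is a hypothetical helper, and the command is only printed so the example can be run safely on any machine.

```shell
#!/usr/bin/env bash
# Hypothetical helper: build an openssl -subj string from the prompt answers
# (country, state, city, organization, unit, common name, email).
cert_subject() {
  printf '/C=%s/ST=%s/L=%s/O=%s/OU=%s/CN=%s/emailAddress=%s' "$@"
}

subj="$(cert_subject CN guangxi nanning k8s harbor www.rin.com rin@rin.com)"

# Print the non-interactive command instead of running it; drop the leading
# "echo" to actually generate the key and certificate.
echo openssl req -newkey rsa:4096 -nodes -sha256 \
  -keyout /data/certs/rin.key \
  -addext "subjectAltName = DNS:www.rin.com" \
  -x509 -days 365 -subj "$subj" \
  -out /data/certs/rin.crt
```

Keeping the subject in one variable makes it easier to regenerate the certificate with identical fields when it expires after 365 days.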

编辑harbor仓库配置文件:

bash

cp harbor.yml.tmpl harbor.yml

vim harbor.yml

bash

# The IP address or hostname to access admin UI and registry service.

# DO NOT use localhost or 127.0.0.1, because Harbor needs to be accessed by external clients.

hostname: www.rin.com

# https related config

https:

# https port for harbor, default is 443

port: 443

# The path of cert and key files for nginx

certificate: /data/certs/rin.crt

private_key: /data/certs/rin.key

# The initial password of Harbor admin

# It only works in first time to install harbor

# Remember Change the admin password from UI after launching Harbor.

harbor_admin_password: rin

# The default data volume

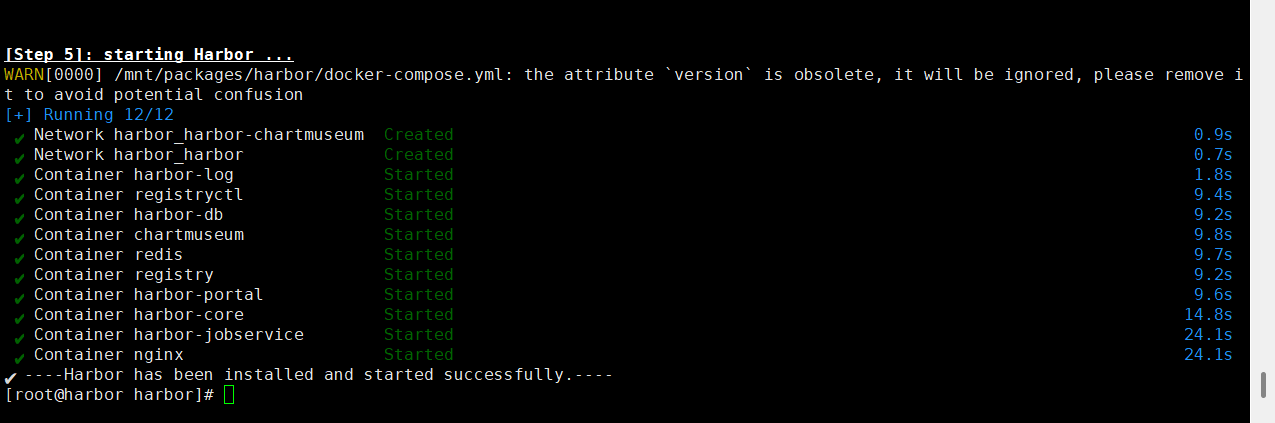

data_volume: /data执行安装脚本:

bash

./install.sh --with-chartmuseum

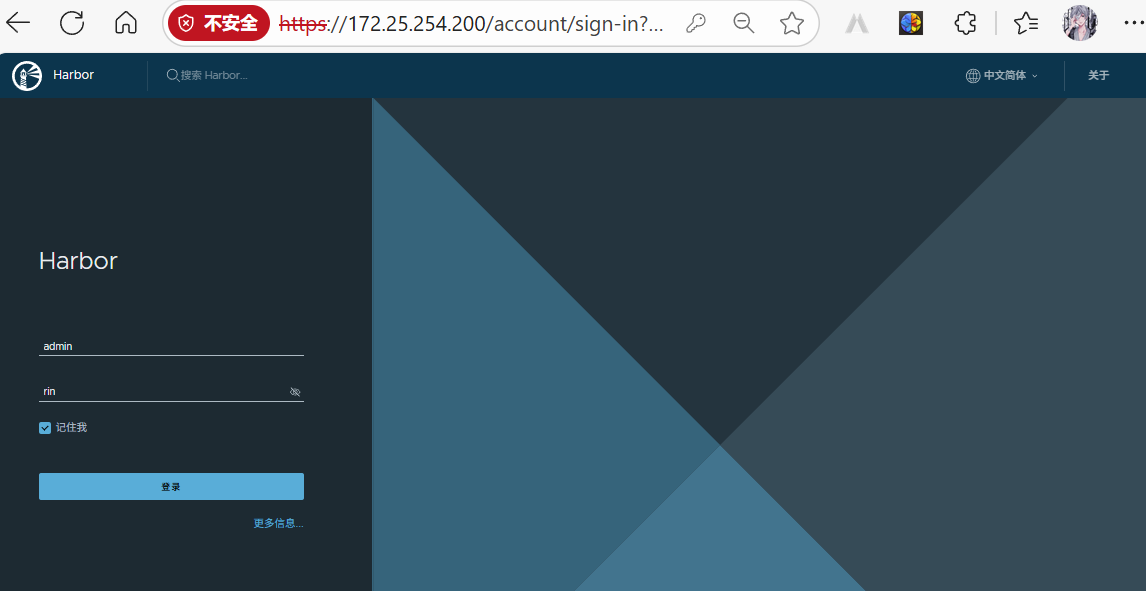

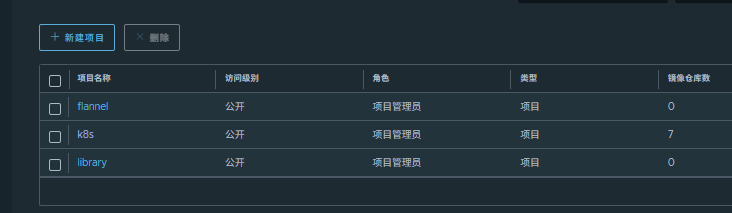

Access the Harbor registry from a web browser:

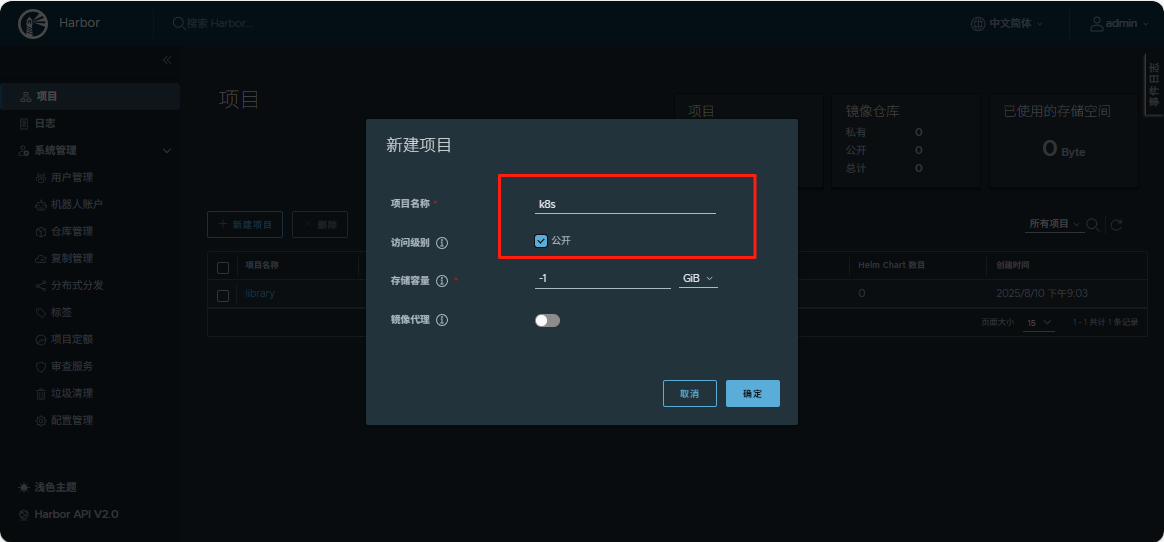

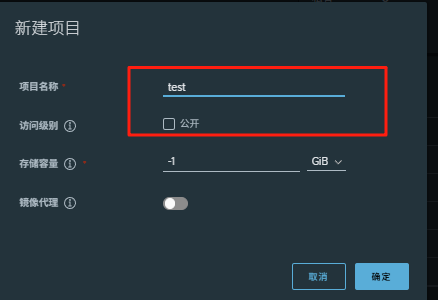

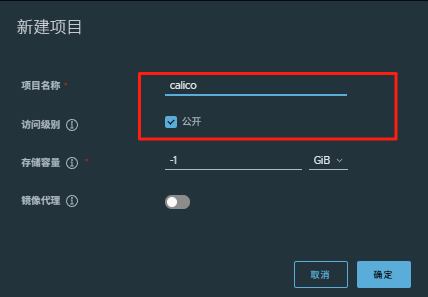

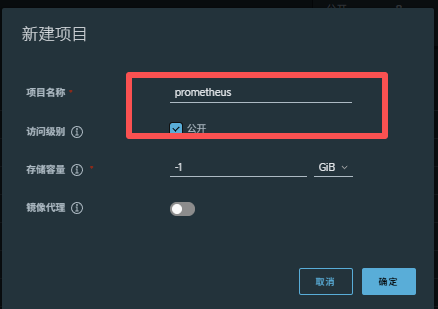

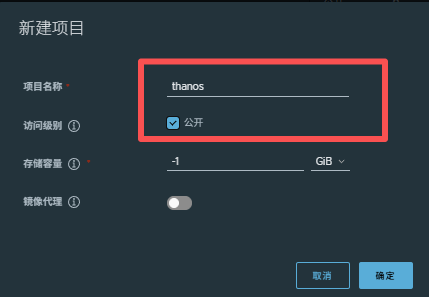

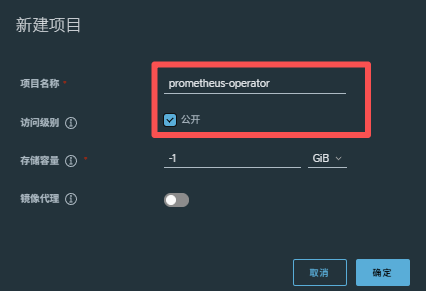

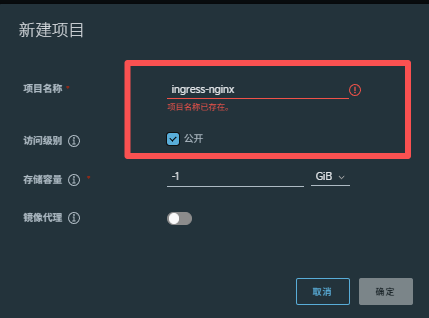

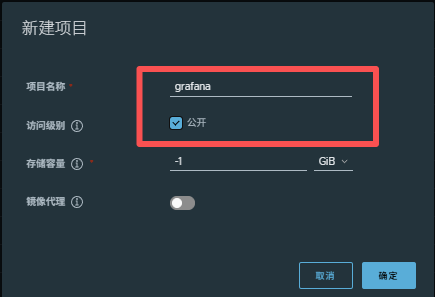

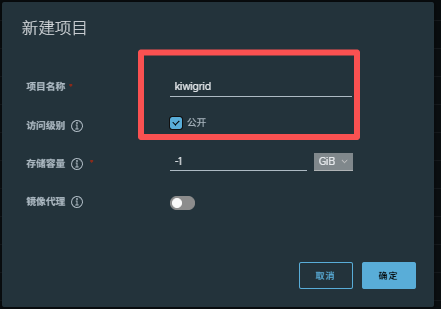

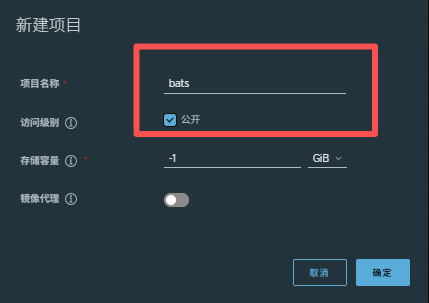

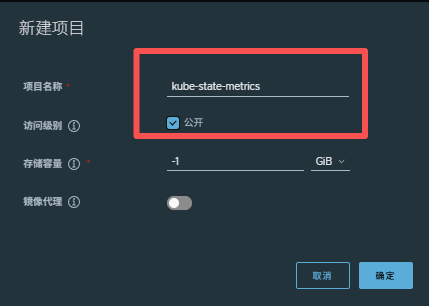

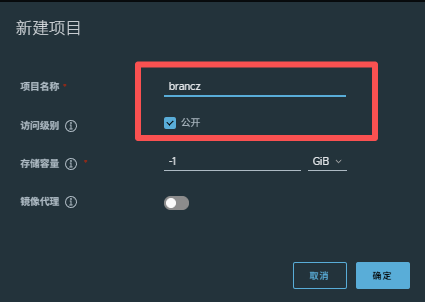

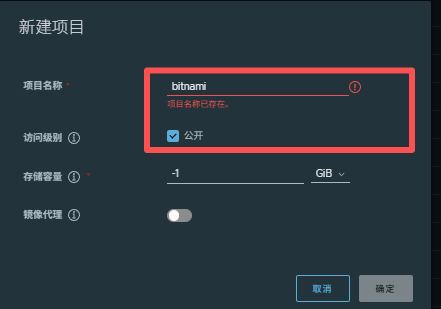

Create a new project in Harbor:

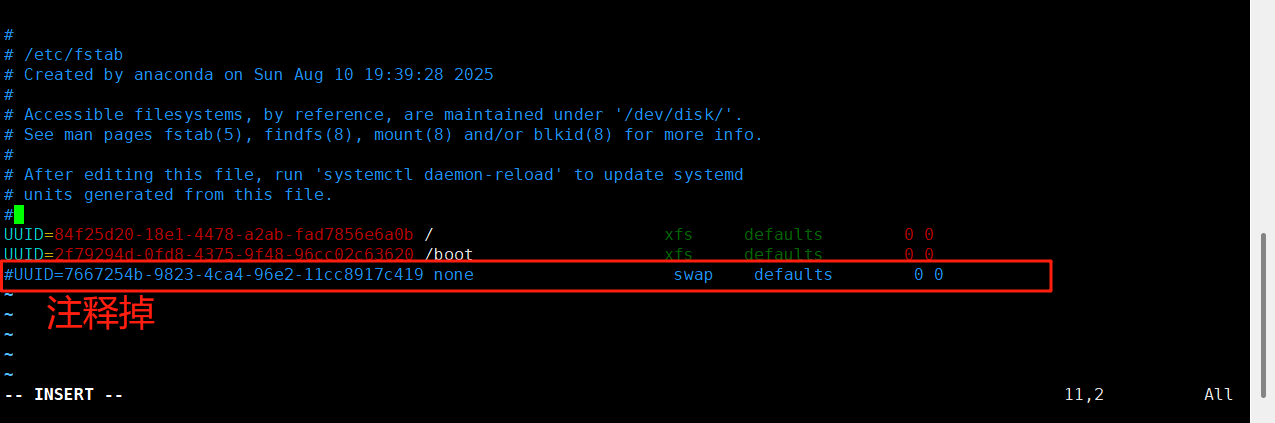

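Disable swap on all nodes

Apply the following on the master and on every worker node:

```bash
systemctl mask swap.target
```

Edit /etc/fstab and comment out the swap entry:

```bash
vim /etc/fstab
```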
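The manual edit can also be scripted. The following is a minimal sketch, assuming the swap entry is an ordinary fstab line whose filesystem type field is `swap`; `comment_swap` is a hypothetical helper that works on stdin, so it can be checked against sample text first.

```shell
#!/usr/bin/env bash
# Hypothetical helper: comment out active swap entries in fstab text on stdin.
# Lines that are already commented are left unchanged.
comment_swap() {
  sed -E '/^[^#].*[[:space:]]swap[[:space:]]/s/^/#/'
}

# Sample fstab content (device names are made up for illustration).
printf '%s\n' \
  '/dev/mapper/rl-root / xfs defaults 0 0' \
  '/dev/mapper/rl-swap none swap defaults 0 0' | comment_swap
```

To apply the same expression to the real file, use `sed -E -i.bak` with `/etc/fstab` as the target, which keeps a backup copy. `swapoff -a` turns swap off immediately without waiting for a reboot.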

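Reload the systemd configuration:

```bash
systemctl daemon-reload
```

Reboot, then check again. Empty output means swap is disabled:

```bash
swapon -s
```

Configure the remaining nodes (master and workers)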

关闭防火墙:

bash

systemctl stop firewalld.service在200上将安装包传到各主机:

bash

for i in 10 20 100;

do scp -r /mnt/packages/rpm/docker root@172.25.254.$i:/mnt/;

done安装docker,对于冲突插件允许系统进行卸载:

bash

dnf install *.rpm -y --allowerasing编辑docker文件配置:

bash

vim /lib/systemd/system/docker.service添加参数:

bash

--iptables=true

配置完后,进行批量覆盖:

bash

for i in 10 20 100;

do scp -r /lib/systemd/system/docker.service root@172.25.254.$i:/lib/systemd/system/docker.service;

done配置全证书:

在前面配置完证书的harbor节点进行证书分发:

bash

for i in 10 20 100;

do ssh root@172.25.254.$i "mkdir -p /etc/docker/certs.d/www.rin.com";

scp /data/certs/rin.crt root@172.25.254.$i:/etc/docker/certs.d/www.rin.com/ca.crt;

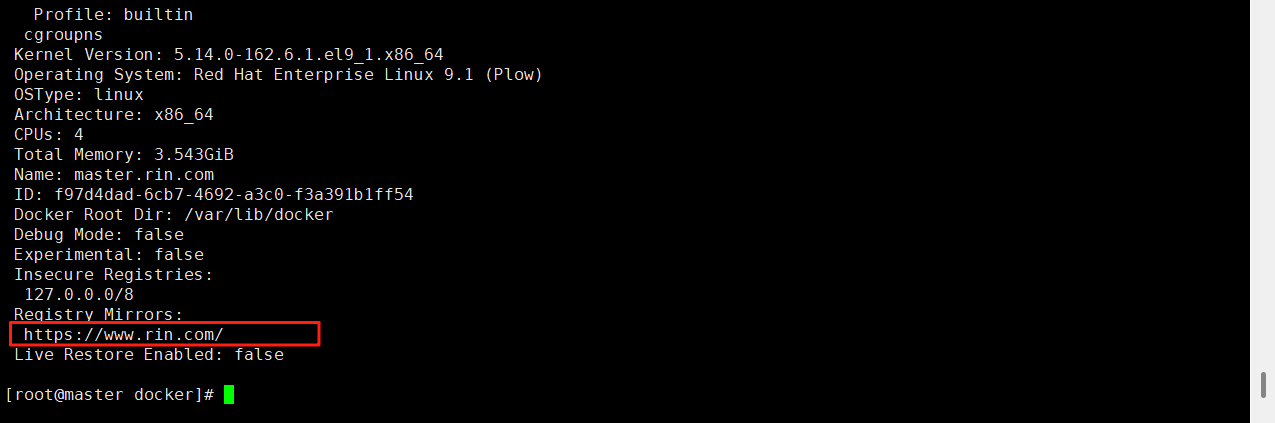

done设置docker默认库

bash

vim /etc/docker/daemon.json

bash

{

"registry-mirrors": ["https://www.rin.com"]

}将配置好的默认库文件覆盖传送到个节点:

bash

for i in 10 20 100;

do scp -r /etc/docker/daemon.json root@172.25.254.$i:/etc/docker/daemon.json;

done启动各节点的docker:

bash

for i in 10 20 100;

do ssh root@172.25.254.$i "systemctl start docker";

done查看各节点的默认库路径是否配置成功:

bash

docker info
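The check can be run on all three nodes from the Harbor host instead of logging in to each one. This is a sketch under the assumption that root SSH access works as in the loops in this guide; `on_nodes` and the `DRY_RUN` switch are hypothetical names introduced for illustration.

```shell
#!/usr/bin/env bash
# Node suffixes used throughout this guide (172.25.254.10, .20 and .100).
NODES="10 20 100"

# Hypothetical helper: run a command on every node over ssh.
# With DRY_RUN=1 it only prints what would be executed.
on_nodes() {
  local i
  for i in $NODES; do
    ${DRY_RUN:+echo} ssh "root@172.25.254.$i" "$*"
  done
}

# Preview the check without contacting any host.
DRY_RUN=1 on_nodes "docker info | grep -A1 'Registry Mirrors'"
```

Calling `on_nodes` without `DRY_RUN` set executes the command for real. The same helper fits the other per-node steps, such as starting Docker.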

配置解析:

bash

vim /etc/hosts

bash

127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4

::1 localhost localhost.localdomain localhost6 localhost6.localdomain6

172.25.254.100 master.rin.com

172.25.254.200 harbor.rin.com

172.25.254.10 node1.rin.com

172.25.254.20 node2.rin.com将解析分发覆盖各节点:

bash

for i in 10 20 100;

do scp -r /etc/hosts root@172.25.254.$i:/etc/hosts;

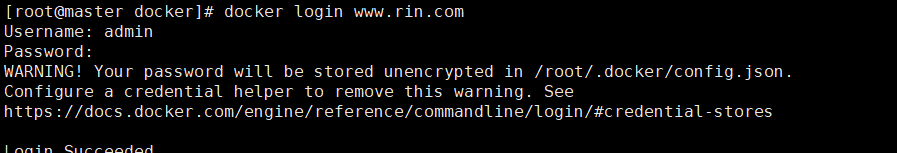

done登录harbor仓库:

bash

docker login www.rin.com

安装K8S部署工具

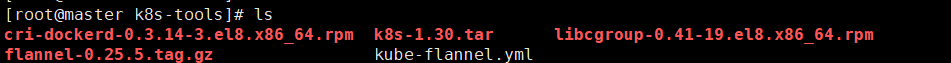

在所节点安装cri-docker

将tools目录传到各节点:(master、node)

bash

for i in 10 20 100;

do scp -r /mnt/k8s-tools root@172.25.254.$i:/mnt;

done安装里面的两个rpm包:

bash

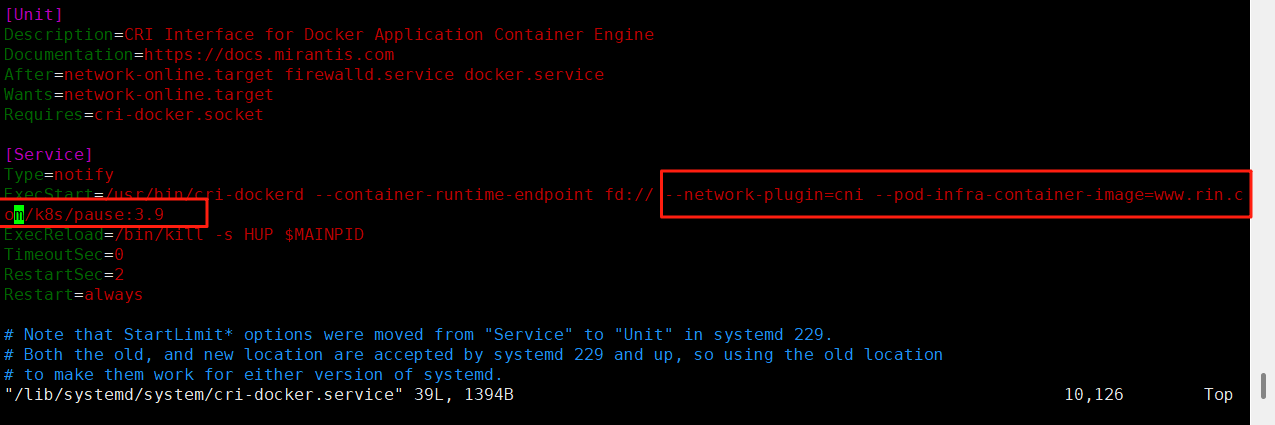

dnf install *.rpm -y编辑cri配置文件:

bash

vim /lib/systemd/system/cri-docker.service

bash

--network-plugin=cni --pod-infra-container-image=www.rin.com/k8s/pause:3.9

将配置好的文件,分发覆盖给个节点:

bash

for i in 10 20 100;

do scp -r /lib/systemd/system/cri-docker.service

root@172.25.254.$i:/lib/systemd/system/cri-docker.service;

done启动cri插件:

bash

systemctl start docker

bash

systemctl enable --now cri-docker.service

bash

systemctl start cri-docker.socket

systemctl start cri-docker

systemctl status cri-docker解压k8s-1.30.tar.gz包:

Install the dependency:

```bash
dnf install libnetfilter_conntrack -y
```

Next, extract the archive and install all of the rpm packages it contains:

```bash
[root@master k8s-1.30]# ls
k8s-1.30.tar.gz
[root@master k8s-1.30]# tar -xzf k8s-1.30.tar.gz
[root@master k8s-1.30]# ls
conntrack-tools-1.4.7-2.el9.x86_64.rpm kubernetes-cni-1.4.0-150500.1.1.x86_64.rpm
cri-dockerd-0.3.14-3.el8.x86_64.rpm libcgroup-0.41-19.el8.x86_64.rpm
cri-tools-1.30.1-150500.1.1.x86_64.rpm libnetfilter_conntrack-1.0.9-1.el9.x86_64.rpm
k8s-1.30.tar.gz libnetfilter_cthelper-1.0.0-22.el9.x86_64.rpm
kubeadm-1.30.0-150500.1.1.x86_64.rpm libnetfilter_cttimeout-1.0.0-19.el9.x86_64.rpm
kubectl-1.30.0-150500.1.1.x86_64.rpm libnetfilter_queue-1.0.5-1.el9.x86_64.rpm
kubelet-1.30.0-150500.1.1.x86_64.rpm socat-1.7.4.1-5.el9.x86_64.rpm
```

Copy the package directory to the other nodes:

```bash
for i in 10 20 100; do
  scp -r /mnt/k8s-tools root@172.25.254.$i:/mnt/
done
```

Install all rpm packages in the directory:

```bash
dnf install *.rpm -y
```

Enable kubectl command completion

```bash
dnf install bash-completion -y
echo "source <(kubectl completion bash)" >> ~/.bashrc
source ~/.bashrc
```

Load the images:

```bash
docker load -i k8s_docker_images-1.30.tar
```

Tag the images:

```bash
docker images | awk '/google/{ print $1":"$2}' \
  | awk -F "/" '{system("docker tag "$0" www.rin.com/k8s/"$3)}'
```

Push the images:

```bash
docker images | awk '/k8s/{system("docker push "$1":"$2)}'
```

Cluster initialization

Start the kubelet service:

```bash
systemctl enable --now kubelet.service
```

Initialize the cluster:

```bash
kubeadm init \
  --pod-network-cidr=10.244.0.0/16 \
  --image-repository www.rin.com/k8s \
  --kubernetes-version v1.30.0 \
  --cri-socket=unix:///var/run/cri-dockerd.sock
```

On success the output looks like this:

cpp

[root@master mnt]# kubeadm init --pod-network-cidr=10.244.0.0/16 --image-repository www.rin.com/k8s --kubernetes-version v1.30.0 --cri-socket=unix:///var/run/cri-dockerd.sock

[init] Using Kubernetes version: v1.30.0

[preflight] Running pre-flight checks

[preflight] Pulling images required for setting up a Kubernetes cluster

[preflight] This might take a minute or two, depending on the speed of your internet connection

[preflight] You can also perform this action in beforehand using 'kubeadm config images pull'

[certs] Using certificateDir folder "/etc/kubernetes/pki"

[certs] Generating "ca" certificate and key

[certs] Generating "apiserver" certificate and key

[certs] apiserver serving cert is signed for DNS names [kubernetes kubernetes.default kubernetes.default.svc kubernetes.default.svc.cluster.local master.rin.com] and IPs [10.96.0.1 172.25.254.100]

[certs] Generating "apiserver-kubelet-client" certificate and key

[certs] Generating "front-proxy-ca" certificate and key

[certs] Generating "front-proxy-client" certificate and key

[certs] Generating "etcd/ca" certificate and key

[certs] Generating "etcd/server" certificate and key

[certs] etcd/server serving cert is signed for DNS names [localhost master.rin.com] and IPs [172.25.254.100 127.0.0.1 ::1]

[certs] Generating "etcd/peer" certificate and key

[certs] etcd/peer serving cert is signed for DNS names [localhost master.rin.com] and IPs [172.25.254.100 127.0.0.1 ::1]

[certs] Generating "etcd/healthcheck-client" certificate and key

[certs] Generating "apiserver-etcd-client" certificate and key

[certs] Generating "sa" key and public key

[kubeconfig] Using kubeconfig folder "/etc/kubernetes"

[kubeconfig] Writing "admin.conf" kubeconfig file

[kubeconfig] Writing "super-admin.conf" kubeconfig file

[kubeconfig] Writing "kubelet.conf" kubeconfig file

[kubeconfig] Writing "controller-manager.conf" kubeconfig file

[kubeconfig] Writing "scheduler.conf" kubeconfig file

[etcd] Creating static Pod manifest for local etcd in "/etc/kubernetes/manifests"

[control-plane] Using manifest folder "/etc/kubernetes/manifests"

[control-plane] Creating static Pod manifest for "kube-apiserver"

[control-plane] Creating static Pod manifest for "kube-controller-manager"

[control-plane] Creating static Pod manifest for "kube-scheduler"

[kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"

[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"

[kubelet-start] Starting the kubelet

[wait-control-plane] Waiting for the kubelet to boot up the control plane as static Pods from directory "/etc/kubernetes/manifests"

[kubelet-check] Waiting for a healthy kubelet. This can take up to 4m0s

[kubelet-check] The kubelet is healthy after 1.50323907s

[api-check] Waiting for a healthy API server. This can take up to 4m0s

[api-check] The API server is healthy after 7.007327396s

[upload-config] Storing the configuration used in ConfigMap "kubeadm-config" in the "kube-system" Namespace

[kubelet] Creating a ConfigMap "kubelet-config" in namespace kube-system with the configuration for the kubelets in the cluster

[upload-certs] Skipping phase. Please see --upload-certs

[mark-control-plane] Marking the node master.rin.com as control-plane by adding the labels: [node-role.kubernetes.io/control-plane node.kubernetes.io/exclude-from-external-load-balancers]

[mark-control-plane] Marking the node master.rin.com as control-plane by adding the taints [node-role.kubernetes.io/control-plane:NoSchedule]

[bootstrap-token] Using token: a4l2fc.ahmfiubi738p3iup

[bootstrap-token] Configuring bootstrap tokens, cluster-info ConfigMap, RBAC Roles

[bootstrap-token] Configured RBAC rules to allow Node Bootstrap tokens to get nodes

[bootstrap-token] Configured RBAC rules to allow Node Bootstrap tokens to post CSRs in order for nodes to get long term certificate credentials

[bootstrap-token] Configured RBAC rules to allow the csrapprover controller automatically approve CSRs from a Node Bootstrap Token

[bootstrap-token] Configured RBAC rules to allow certificate rotation for all node client certificates in the cluster

[bootstrap-token] Creating the "cluster-info" ConfigMap in the "kube-public" namespace

[kubelet-finalize] Updating "/etc/kubernetes/kubelet.conf" to point to a rotatable kubelet client certificate and key

[addons] Applied essential addon: CoreDNS

[addons] Applied essential addon: kube-proxy

Your Kubernetes control-plane has initialized successfully!

To start using your cluster, you need to run the following as a regular user:

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

Alternatively, if you are the root user, you can run:

export KUBECONFIG=/etc/kubernetes/admin.conf

You should now deploy a pod network to the cluster.

Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:

https://kubernetes.io/docs/concepts/cluster-administration/addons/

Then you can join any number of worker nodes by running the following on each as root:

kubeadm join 172.25.254.100:6443 --token a4l2fc.ahmfiubi738p3iup \

--discovery-token-ca-cert-hash sha256:b99fd92564901bbe4c29b376a8616224ebc05279bf4212f775aaa985592cf169 #指定集群配置文件变量

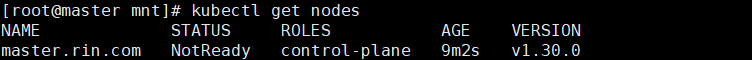

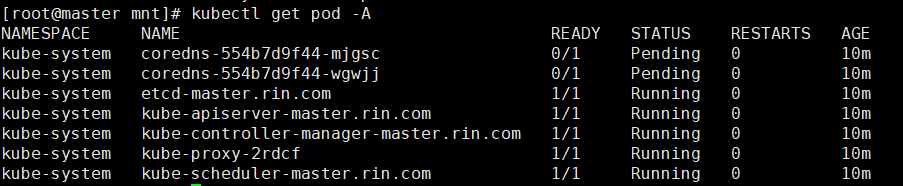

bash

echo "export KUBECONFIG=/etc/kubernetes/admin.conf" >> ~/.bash_profile

bash

export KUBECONFIG=/etc/kubernetes/admin.conf

echo $KUBECONFIG

kubectl get nodes

bash

kubectl get pod -A

若初始化失败,清楚残余数据重新进行:

bash

[root@master mnt]# rm -rf /etc/cni/net.d

[root@master mnt]# rm -rf /var/lib/etcd/*

[root@master mnt]# systemctl restart docker cri-docker

[root@master mnt]# kubeadm reset -f --cri-socket=unix:///var/run/cri-dockerd.sock

[root@master mnt]# lsof -i :6443 | grep -v "PID" | awk '{print $2}' | xargs -r kill -9

[root@master mnt]# lsof -i :10259 | grep -v "PID" | awk '{print $2}' | xargs -r kill -9

[root@master mnt]# lsof -i :10257 | grep -v "PID" | awk '{print $2}' | xargs -r kill -9

[root@master mnt]# lsof -i :10250 | grep -v "PID" | awk '{print $2}' | xargs -r kill -9

[root@master mnt]# lsof -i :2379 | grep -v "PID" | awk '{print $2}' | xargs -r kill -9

[root@master mnt]# lsof -i :2380 | grep -v "PID" | awk '{print $2}' | xargs -r kill -9

[root@master mnt]# rm -rf /etc/kubernetes/manifests/*

[root@master mnt]# rm -rf /etc/kubernetes/pki/*

[root@master mnt]# rm -rf /var/lib/etcd/*

[root@master mnt]# rm -rf /var/lib/kubelet/*

[root@master mnt]# rm -rf /var/lib/kube-proxy/*

[root@master mnt]# rm -rf /var/run/kubernetes/*

[root@master mnt]# systemctl restart kubelet

[root@master mnt]# systemctl restart docker cri-docker重新初始化:

bash

kubeadm init

--pod-network-cidr=10.244.0.0/16

--image-repository www.rin.com/k8s

--kubernetes-version v1.30.0

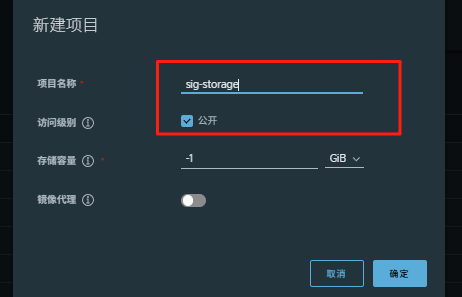

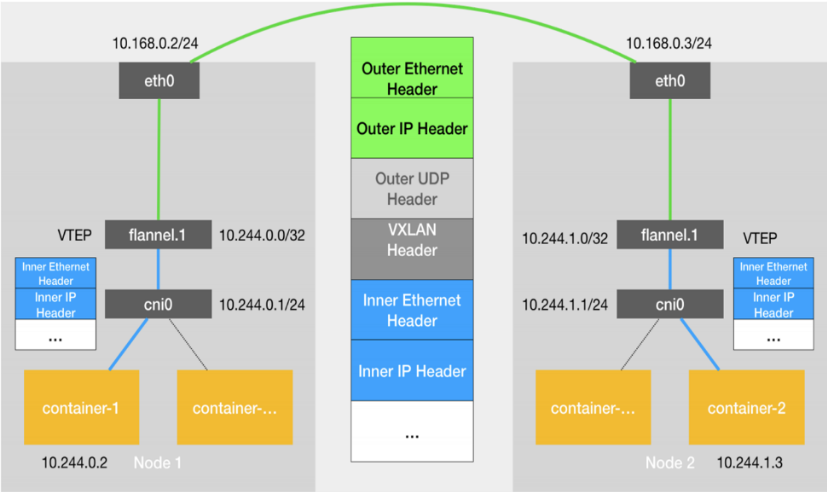

--cri-socket=unix:///var/run/cri-dockerd.sock安装flannel网络插件

#下载flannel的yaml部署文件

bash

wget https://github.com/flannel-io/flannel/releases/latest/download/kube-flannel.yml挂载镜像:

bash

docker load -i flannel-0.25.5.tag.gz

cpp

[root@master k8s-tools]# ls

cri-dockerd-0.3.14-3.el8.x86_64.rpm k8s k8s_docker_images-1.30.tar libcgroup-0.41-19.el8.x86_64.rpm

flannel-0.25.5.tag.gz k8s-1.30 kube-flannel.yml

[root@master k8s-tools]# docker load -i flannel-0.25.5.tag.gz

ef7a14b43c43: Loading layer [==================================================>] 8.079MB/8.079MB

1d9375ff0a15: Loading layer [==================================================>] 9.222MB/9.222MB

4af63c5dc42d: Loading layer [==================================================>] 16.61MB/16.61MB

2b1d26302574: Loading layer [==================================================>] 1.544MB/1.544MB

d3dd49a2e686: Loading layer [==================================================>] 42.11MB/42.11MB

7278dc615b95: Loading layer [==================================================>] 5.632kB/5.632kB

c09744fc6e92: Loading layer [==================================================>] 6.144kB/6.144kB

0a2b46a5555f: Loading layer [==================================================>] 1.923MB/1.923MB

5f70bf18a086: Loading layer [==================================================>] 1.024kB/1.024kB

601effcb7aab: Loading layer [==================================================>] 1.928MB/1.928MB

Loaded image: flannel/flannel:v0.25.5

21692b7dc30c: Loading layer [==================================================>] 2.634MB/2.634MB

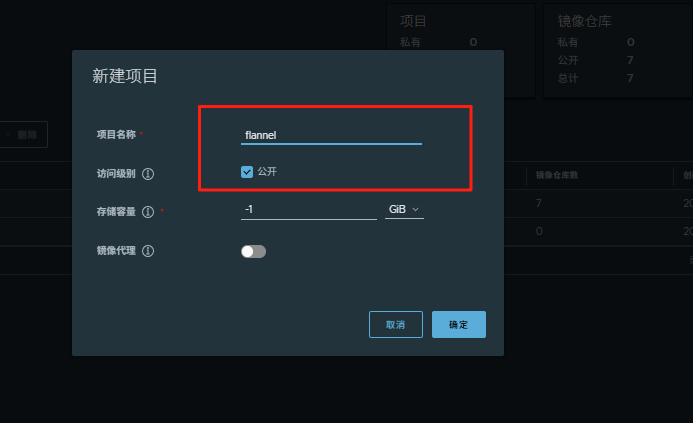

Loaded image: flannel/flannel-cni-plugin:v1.5.1-flannel1创建项目仓库:

Push the images to the registry:

```bash
docker tag flannel/flannel:v0.25.5 www.rin.com/flannel/flannel:v0.25.5
docker push www.rin.com/flannel/flannel:v0.25.5
docker tag flannel/flannel-cni-plugin:v1.5.1-flannel1 www.rin.com/flannel/flannel-cni-plugin:v1.5.1-flannel1
docker push www.rin.com/flannel/flannel-cni-plugin:v1.5.1-flannel1
```

The process looks like this:

```bash
[root@master k8s-tools]# docker tag flannel/flannel:v0.25.5 www.rin.com/flannel/flannel:v0.25.5
[root@master k8s-tools]# docker push www.rin.com/flannel/flannel:v0.25.5
The push refers to repository [www.rin.com/flannel/flannel]
601effcb7aab: Pushed
5f70bf18a086: Pushed
0a2b46a5555f: Pushed
c09744fc6e92: Pushed
7278dc615b95: Pushed
d3dd49a2e686: Pushed
2b1d26302574: Pushed
4af63c5dc42d: Pushed
1d9375ff0a15: Pushed
ef7a14b43c43: Pushed
v0.25.5: digest: sha256:89be0f0c323da5f3b9804301c384678b2bf5b1aca27e74813bfe0b5f5005caa7 size: 2414
[root@master k8s-tools]# docker tag flannel/flannel-cni-plugin:v1.5.1-flannel1 www.rin.com/flannel/flannel-cni-plugin:v1.5.1-flannel1
[root@master k8s-tools]# docker push www.rin.com/flannel/flannel-cni-plugin:v1.5.1-flannel1
The push refers to repository [www.rin.com/flannel/flannel-cni-plugin]
21692b7dc30c: Pushed
ef7a14b43c43: Mounted from flannel/flannel
v1.5.1-flannel1: digest: sha256:c026a9ad3956cb1f98fe453f26860b1c3a7200969269ad47a9b5996f25ab0a18 size: 738
```

Edit kube-flannel.yml to change where the images are pulled from:

```bash
vim kube-flannel.yml
```

The following lines need to be changed:

```bash
[root@master k8s-tools]# grep -n image kube-flannel.yml
146: image: www.rin.com/flannel/flannel:v0.25.5
173: image: www.rin.com/flannel/flannel-cni-plugin:v1.5.1-flannel1
184: image: www.rin.com/flannel/flannel:v0.25.5
```

Install the flannel network plugin:

```bash
kubectl apply -f kube-flannel.yml
```

```bash
[root@master k8s-tools]# kubectl apply -f kube-flannel.yml
namespace/kube-flannel created
serviceaccount/flannel created
clusterrole.rbac.authorization.k8s.io/flannel created
clusterrolebinding.rbac.authorization.k8s.io/flannel created
configmap/kube-flannel-cfg created
daemonset.apps/kube-flannel-ds created
```

Adding nodes to the cluster:

On every worker node:

1. Confirm that everything below is in place
2. Swap is disabled
3. The following are installed:
   - kubelet-1.30.0
   - kubeadm-1.30.0
   - kubectl-1.30.0
   - docker-ce
   - cri-dockerd
4. The cri-dockerd unit file has these flags added:
   - --network-plugin=cni
   - --pod-infra-container-image=www.rin.com/k8s/pause:3.9
5. These services are started:
   - kubelet.service
   - cri-docker.service

Once all of the above is confirmed, the node can join the cluster:

```bash
kubeadm join 172.25.254.100:6443 --token a4l2fc.ahmfiubi738p3iup --discovery-token-ca-cert-hash sha256:b99fd92564901bbe4c29b376a8616224ebc05279bf4212f775aaa985592cf169 --cri-socket=unix:///var/run/cri-dockerd.sock
```

Check that the cluster is running:

```bash
kubectl get nodes
```

```bash
[root@master k8s-tools]# kubectl get nodes
NAME STATUS ROLES AGE VERSION
master.rin.com Ready control-plane 41m v1.30.0
node1.rin.com Ready <none> 3m7s v1.30.0
node2.rin.com Ready <none> 2m27s v1.30.0
```
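A freshly joined node usually reports `NotReady` until the network plugin pod is running on it, so it helps to filter the listing for nodes that are not ready yet. The sketch below parses `kubectl get nodes` output from stdin; `not_ready_nodes` is a hypothetical helper, and the `NotReady` row in the sample is invented to show a failing case.

```shell
#!/usr/bin/env bash
# Hypothetical helper: read `kubectl get nodes` output on stdin and print the
# names of nodes whose STATUS column is not exactly "Ready".
not_ready_nodes() {
  awk 'NR > 1 && $2 != "Ready" { print $1 }'
}

# Sample input: the NotReady status on the last row is made up.
printf '%s\n' \
  'NAME STATUS ROLES AGE VERSION' \
  'master.rin.com Ready control-plane 41m v1.30.0' \
  'node1.rin.com Ready <none> 3m7s v1.30.0' \
  'node2.rin.com NotReady <none> 2m27s v1.30.0' | not_ready_nodes
```

On the master the helper would be used as `kubectl get nodes | not_ready_nodes`. Empty output means every node is ready.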

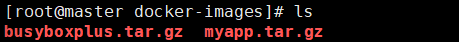

Load the images:

```bash
docker load -i busyboxplus.tar.gz
docker load -i myapp.tar.gz
```

Tag the images:

```bash
docker tag timinglee/myapp:v1 www.rin.com/library/myapp:v1
docker tag timinglee/myapp:v2 www.rin.com/library/myapp:v2
docker tag busyboxplus:latest www.rin.com/library/busyboxplus:latest
```

Log in to the registry:

```bash
docker login www.rin.com
```

Push the images to the registry:

```bash
docker push www.rin.com/library/busyboxplus:latest
docker push www.rin.com/library/myapp:v1
docker push www.rin.com/library/myapp:v2
```

Updating the application version

Create pods with a controller

The following command creates a Deployment named test1 that manages 2 Pod replicas based on the myapp:v1 image.

```bash
kubectl create deployment test1 --image myapp:v1 --replicas 2
```

Expose the port:

```bash
kubectl expose deployment test1 --port 80 --target-port 80
```

```bash
[root@master docker-images]# kubectl get service
NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE
kubernetes ClusterIP 10.96.0.1 <none> 443/TCP 5h12m
test1 ClusterIP 10.102.5.196 <none> 80/TCP 13s
```

Access the service:

```bash
[root@master docker-images]# curl 10.102.5.196
Hello MyApp | Version: v1 | <a href="hostname.html">Pod Name</a>
```

View the revision history:

```bash
[root@master docker-images]# kubectl rollout history deployment test1
deployment.apps/test1
REVISION CHANGE-CAUSE
1 <none>
```

Update the image version of the controller:

```bash
kubectl set image deployment/test1 myapp=myapp:v2
```

View the revision history again:

```bash
[root@master docker-images]# kubectl rollout history deployment test1
deployment.apps/test1
REVISION CHANGE-CAUSE
1 <none>
2 <none>
```

Test the served content:

```bash
[root@master docker-images]# curl 10.102.5.196
Hello MyApp | Version: v2 | <a href="hostname.html">Pod Name</a>
```

Roll back the version:

```bash
[root@master docker-images]# kubectl rollout undo deployment test1 --to-revision 1
deployment.apps/test1 rolled back
[root@master docker-images]# curl 10.102.5.196
Hello MyApp | Version: v1 | <a href="hostname.html">Pod Name</a>
```

Deploying applications with YAML files

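Deploying applications from YAML files has the following advantages.

Declarative configuration:

- Clear expression of the desired state: the deployment requirements (replica count, container configuration, network settings, and so on) are described declaratively. This makes the configuration easy to understand and maintain, and the expected state of the application easy to inspect.
- Repeatability and version control: configuration files can be placed under version control, which keeps deployments consistent across environments. It is easy to roll back to an earlier version or to reuse the same configuration in another environment.
- Team collaboration: the files are easy to share, so team members can review and modify the configuration together, which improves the reliability and stability of deployments.

Flexibility and extensibility:

- Rich configuration options: YAML files can describe Kubernetes resources such as Deployment, Service, ConfigMap, and Secret in detail, and can be customized closely to the needs of the application.
- Composition and extension: the configuration of several resources can be combined in one or more YAML files to build complex deployment architectures. New resources can be added and existing ones modified as requirements change.

Tool integration:

- CI/CD integration: YAML configuration files can be used by continuous integration and continuous deployment (CI/CD) tools to automate deployments. For example, a commit can trigger a pipeline that uses the files to deploy the application to different environments.
- Command-line support: the Kubernetes command-line tool kubectl works well with YAML configuration files and can apply, update, and delete configuration directly. Other tools can validate and analyze the files to check their correctness and security.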

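Resource manifest parameters

| Parameter | Type | Description |
|---|---|---|
| apiVersion | String | Version of the Kubernetes API, v1 for most core resources; the available versions can be listed with `kubectl api-versions` |
| kind | String | Resource type defined by the YAML file, for example Pod |
| metadata | Object | Metadata object; the key is always written as metadata |
| metadata.name | String | Name of the object, chosen by the author, for example the name of the Pod |
| metadata.namespace | String | Namespace of the object, chosen by the author |
| spec | Object | Detailed definition of the object; the key is always written as spec |
| spec.containers\[\] | list | Container list of the spec object |
| spec.containers\[\].name | String | Name of the container |
| spec.containers\[\].image | String | Name of the image to use |
| spec.containers\[\].imagePullPolicy | String | Image pull policy, one of three values: (1) Always: try to pull the image every time (2) IfNotPresent: use the local image if one exists (3) Never: use only the local image |
| spec.containers\[\].command\[\] | list | Command to run when the container starts; if not set, the command defined when the image was built is used |
| spec.containers\[\].args\[\] | list | Arguments for the container command; several can be given |
| spec.containers\[\].workingDir | String | Working directory of the container |
| spec.containers\[\].volumeMounts\[\] | list | Volume mounts inside the container |
| spec.containers\[\].volumeMounts\[\].name | String | Name of the volume that the container mounts |
| spec.containers\[\].volumeMounts\[\].mountPath | String | Path at which the volume is mounted in the container |
| spec.containers\[\].volumeMounts\[\].readOnly | Boolean | Read/write mode of the mount path, true or false; the default is read-write |
| spec.containers\[\].ports\[\] | list | List of ports the container uses |
| spec.containers\[\].ports\[\].name | String | Name of the port |
| spec.containers\[\].ports\[\].containerPort | Integer | Port number the container listens on |
| spec.containers\[\].ports\[\].hostPort | Integer | Port number to listen on on the host running the container. Once hostPort is set, a second replica of the same container cannot start on that host because the host port would conflict |
| spec.containers\[\].ports\[\].protocol | String | Port protocol: TCP, UDP, or SCTP; the default is TCP |
| spec.containers\[\].env\[\] | list | Environment variables to set before the container runs |
| spec.containers\[\].env\[\].name | String | Name of the environment variable |
| spec.containers\[\].env\[\].value | String | Value of the environment variable |
| spec.containers\[\].resources | Object | Resource limits and resource requests (the container resource settings start here) |
| spec.containers\[\].resources.limits | Object | Upper bound on the resources the container may use at run time |
| spec.containers\[\].resources.limits.cpu | String | CPU limit, in cores; 1 = 1000m |
| spec.containers\[\].resources.limits.memory | String | Memory limit, in MiB or GiB |
| spec.containers\[\].resources.requests | Object | Resources requested when the container is scheduled and started |
| spec.containers\[\].resources.requests.cpu | String | CPU request, in cores; the amount available to the container when it starts |
| spec.containers\[\].resources.requests.memory | String | Memory request, in MiB or GiB; the amount available to the container when it starts |
| spec.restartPolicy | String | Restart policy of the Pod; the default is Always. (1) Always: once the Pod stops, kubelet restarts it no matter how the container terminated (2) OnFailure: kubelet restarts the container only if it exits with a non-zero code; a container that ends normally (exit code 0) is not restarted (3) Never: after the Pod stops, kubelet reports the exit code to the master and does not restart it |
| spec.nodeSelector | Object | Label filter for selecting nodes, given as key:value |
| spec.imagePullSecrets | Object | Name of the secret used when pulling images, given as name:secretkey |
| spec.hostNetwork | Boolean | Whether to use host network mode; the default is false. true means the Pod uses the network of the host instead of the docker bridge, and a second replica cannot be started on the same host |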

Example 6: resource limits
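The original example for this heading is not included in the text. As a stand-in, the sketch below prints a Pod manifest that uses the `resources.requests` and `resources.limits` fields from the parameter table. `render_limited_pod`, the Pod name, and all numeric values are assumptions chosen for illustration.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print a Pod manifest with resource requests and limits.
# Arguments: pod name, image, CPU limit, memory limit.
render_limited_pod() {
  cat <<EOF
apiVersion: v1
kind: Pod
metadata:
  name: $1
spec:
  containers:
  - name: $1
    image: $2
    resources:
      requests:
        cpu: 250m
        memory: 64Mi
      limits:
        cpu: $3
        memory: $4
EOF
}

# Values below are illustrative, not taken from the original example.
render_limited_pod limitpod myapp:v1 500m 128Mi
```

The output can be piped into `kubectl apply -f -` on the master. Requests must not exceed the limits, otherwise the API server rejects the Pod.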

Init container example:

```bash
[root@master ~]# cat pod.yml
apiVersion: v1
kind: Pod
metadata:
  labels:
    name: initpod
  name: initpod
spec:
  containers:
  - image: myapp:v1
    name: myapp
  initContainers:
  - name: init-myservice
    image: busybox
    command: ["sh","-c","until test -e /testfile;do echo wating for myservice; sleep 2;done"]
[root@master ~]# kubectl apply -f pod.yml
pod/initpod unchanged
[root@master ~]# kubectl get pods
NAME READY STATUS RESTARTS AGE
initpod 0/1 Init:0/1 0 12m
test1-78bbb8f59d-bt5q2 1/1 Running 0 54m
test1-78bbb8f59d-k79wj 1/1 Running 0 54m
[root@master ~]# kubectl logs pods/initpod init-myservice
wating for myservice
wating for myservice
wating for myservice
(the same line repeats every 2 seconds until /testfile exists)
[root@master ~]# kubectl exec pods/initpod -c init-myservice -- /bin/sh -c "touch /testfile"
[root@master ~]# kubectl get pods
NAME READY STATUS RESTARTS AGE
initpod 1/1 Running 0 12m
test1-78bbb8f59d-bt5q2 1/1 Running 0 54m
test1-78bbb8f59d-k79wj 1/1 Running 0 54m
```

Controllers

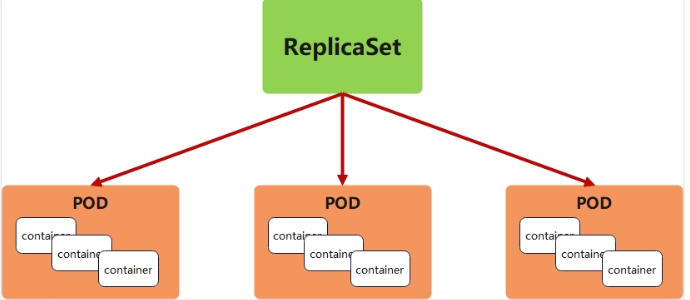

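ReplicaSet controller

What a ReplicaSet does

- ReplicaSet is the next generation of the Replication Controller, and the official recommendation is to use ReplicaSet
- The only difference between ReplicaSet and Replication Controller is selector support: ReplicaSet supports the newer set-based selector requirements
- A ReplicaSet ensures that the specified number of Pod replicas is running at any time
- ReplicaSets can be used on their own, but today they are mainly used by Deployments as the mechanism for coordinating Pod creation, deletion, and updates

ReplicaSet parameters

| Parameter | Type | Description |
|---|---|---|
| spec | Object | Detailed definition of the object; the key is always written as spec |
| spec.replicas | integer | Number of pods to maintain |
| spec.selector | Object | Label query over pods; the pods it matches are counted against the replica number |
| spec.selector.matchLabels | string | Names and values of the labels the selector matches, given as key:value |
| spec.template | Object | Description of the pods to create, such as labels and container information |
| spec.template.metadata | Object | Pod attributes |
| spec.template.metadata.labels | string | Pod labels |
| spec.template.spec | Object | Detailed definition of the pod |
| spec.template.spec.containers | list | Container list of the spec object |
| spec.template.spec.containers.name | string | Name of the container |
| spec.template.spec.containers.image | string | Image of the container |

ReplicaSet example: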

cpp

#生成yml文件

[root@master ~]# kubectl create deployment replicaset --image myapp:v1 --dry-run=client -o yaml > replicaset.yml

#编辑配置文件

[root@master ~]# cat replicaset.yml

apiVersion: apps/v1

kind: ReplicaSet

metadata:

name: replicaset

spec:

replicas: 2

selector:

matchLabels:

app: myapp

template:

metadata:

labels:

app: myapp

spec:

containers:

- image: myapp:v1

name: myapp

#创建了一个名为 replicaset 的 ReplicaSet 资源

[root@master ~]# kubectl apply -f replicaset.yml

replicaset.apps/replicaset created

#查看pod列表

[root@master ~]# kubectl get pods --show-labels

NAME READY STATUS RESTARTS AGE LABELS

replicaset-gq8q7 1/1 Running 0 7s app=myapp

replicaset-zpln9 1/1 Running 0 7s app=myapp

#将其中一个 replicaset的标签名字覆盖为rin

[root@master ~]# kubectl label pod replicaset-gq8q7 app=rin --overwrite

pod/replicaset-gq8q7 labeled

#查看pod列表,检测到名字不同,会自动生成一个新的 replicaset,标签为app=myapp

[root@master ~]# kubectl get pods --show-labels

NAME READY STATUS RESTARTS AGE LABELS

replicaset-gq8q7 1/1 Running 0 73s app=rin

replicaset-zdhwr 1/1 Running 0 1s app=myapp

replicaset-zpln9 1/1 Running 0 73s app=myapp

#将刚刚改为rin的标签名字更改回来

[root@master ~]# kubectl label pod replicaset-gq8q7 app=myapp --overwrite

pod/replicaset-gq8q7 labeled

#发现会自动将多的pod删除

[root@master ~]# kubectl get pods --show-labels

NAME READY STATUS RESTARTS AGE LABELS

replicaset-gq8q7 1/1 Running 0 109s app=myapp

replicaset-zpln9 1/1 Running 0 109s app=myapp

#随机一个pod删除

[root@master ~]# kubectl delete pods replicaset-gq8q7

pod "replicaset-gq8q7" deleted

#系统会自动补充pod

[root@master ~]# kubectl get pods --show-labels

NAME READY STATUS RESTARTS AGE LABELS

replicaset-bwcqc 1/1 Running 0 6s app=myapp

replicaset-zpln9 1/1 Running 0 2m30s app=myapp

#回收资源

[root@master ~]# kubectl delete -f replicaset.yml

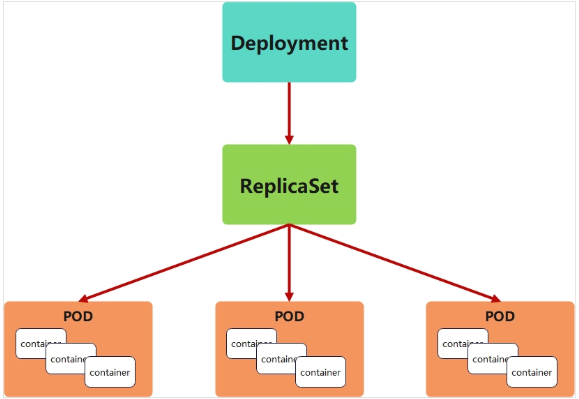

replicaset.apps "replicaset" deleteddeployment 控制器

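What a Deployment does

- To handle service orchestration better, Kubernetes introduced the Deployment controller in version 1.2.
- A Deployment does not manage pods directly; it manages them indirectly through ReplicaSets
- The Deployment manages the ReplicaSet, and the ReplicaSet manages the Pods
- A Deployment provides a declarative way to define Pods and ReplicaSets
- Within a Deployment, each ReplicaSet corresponds to one version

Typical use cases:

- Creating Pods and ReplicaSets
- Rolling updates and rollbacks
- Scaling up and down
- Pausing and resuming

Deployment example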

cpp

[root@master ~]# kubectl create deployment deployment --image myapp:v1 --dry-run=client -o yaml > deployment.yml

[root@master ~]# cat deployment.yml

apiVersion: apps/v1

kind: Deployment

metadata:

name: deployment

spec:

replicas: 4

selector:

matchLabels:

app: myapp

template:

metadata:

labels:

app: myapp

spec:

containers:

- image: myapp:v1

name: myapp

[root@master ~]# kubectl apply -f deployment.yml

deployment.apps/deployment created

[root@master ~]# kubectl get pods --show-labels

NAME READY STATUS RESTARTS AGE LABELS

deployment-5d886954d4-f5r2c 1/1 Running 0 5s app=myapp,pod-template-hash=5d886954d4

deployment-5d886954d4-gnjh4 1/1 Running 0 5s app=myapp,pod-template-hash=5d886954d4

deployment-5d886954d4-lxfdv 1/1 Running 0 5s app=myapp,pod-template-hash=5d886954d4

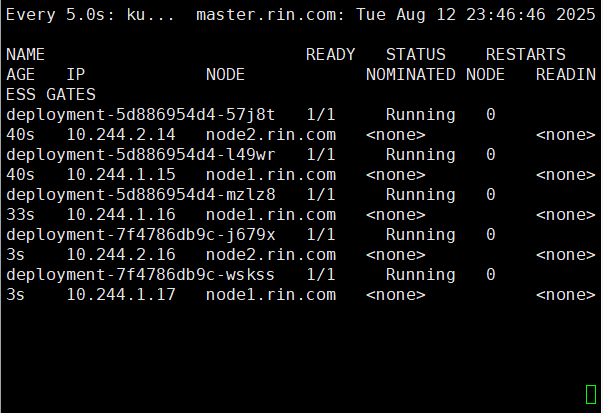

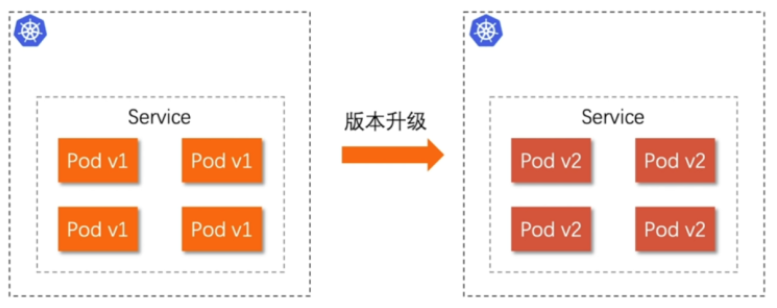

deployment-5d886954d4-vbngd 1/1 Running 0 5s app=myapp,pod-template-hash=5d886954d4版本迭代

cpp

[root@master ~]# kubectl get pods -o wide

NAME READY STATUS RESTARTS AGE ES

deployment-5d886954d4-f5r2c 1/1 Running 0 102s

deployment-5d886954d4-gnjh4 1/1 Running 0 102s

deployment-5d886954d4-lxfdv 1/1 Running 0 102s

deployment-5d886954d4-vbngd 1/1 Running 0 102s

#pod运行容器版本为v1

[root@master ~]# curl 10.244.1.8

Hello MyApp | Version: v1 | <a href="hostname.html">Pod Name</a

[root@master ~]# kubectl describe deployments.apps deployment

Name: deployment

Namespace: default

CreationTimestamp: Tue, 12 Aug 2025 23:35:58 -0400

Labels: <none>

Annotations: deployment.kubernetes.io/revision: 1

Selector: app=myapp

Replicas: 4 desired | 4 updated | 4 total | 4 ava

StrategyType: RollingUpdate

MinReadySeconds: 0

RollingUpdateStrategy: 25% max unavailable, 25% max surge

Pod Template:

Labels: app=myapp

Containers:

myapp:

Image: myapp:v1

Port: <none>

Host Port: <none>

Environment: <none>

Mounts: <none>

Volumes: <none>

Node-Selectors: <none>

Tolerations: <none>

Conditions:

Type Status Reason

---- ------ ------

Available True MinimumReplicasAvailable

Progressing True NewReplicaSetAvailable

OldReplicaSets: <none>

NewReplicaSet: deployment-5d886954d4 (4/4 replicas created)

Events:

Type Reason Age From Mess

---- ------ ---- ---- ----

Normal ScalingReplicaSet 2m44s deployment-controller Scal

#更新容器运行版本

[root@master ~]# vim deployment.yml

[root@master ~]# cat deployment.yml

apiVersion: apps/v1

kind: Deployment

metadata:

name: deployment

spec:

minReadySeconds: 5 #最小就绪时间5秒

replicas: 4

selector:

matchLabels:

app: myapp

template:

metadata:

labels:

app: myapp

spec:

containers:

- image: myapp:v2

name: myapp

[root@master ~]# kubectl apply -f deployment.yml #更新过程

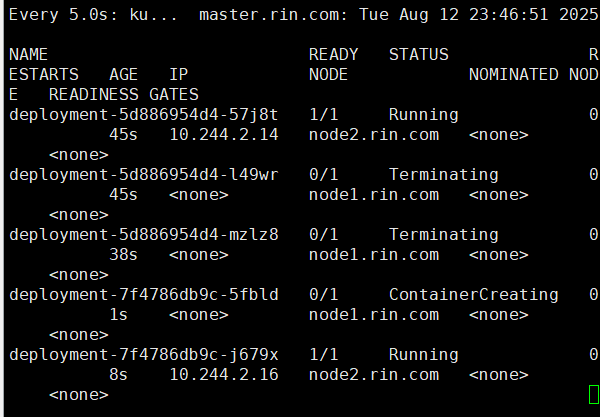

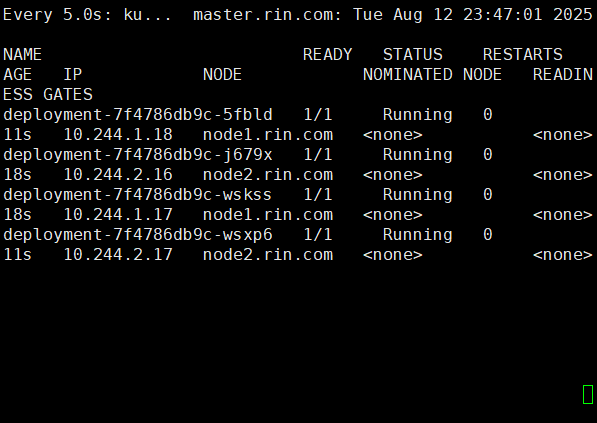

bash

watch -n5 kubectl get pods -o wide

cpp

[root@master ~]# curl 10.244.1.18

Hello MyApp | Version: v2 | <a href="hostname.html">Pod Name</a>版本回滚

```bash
[root@master ~]# cat deployment.yml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: deployment
spec:
  replicas: 4
  selector:
    matchLabels:
      app: myapp
  template:
    metadata:
      labels:
        app: myapp
    spec:
      containers:
      - image: myapp:v1    # roll back to the previous version
        name: myapp
[root@master ~]# kubectl apply -f deployment.yml
# Verify the rollback
[root@master ~]# curl 10.244.1.8
Hello MyApp | Version: v1 | <a href="hostname.html">Pod Name</a>
```

Rolling update strategy

```bash
[root@master ~]# cat deployment.yml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: deployment
spec:
  minReadySeconds: 5
  replicas: 4
  strategy:
    rollingUpdate:
      maxSurge: 1
      maxUnavailable: 0
  selector:
    matchLabels:
      app: myapp
  template:
    metadata:
      labels:
        app: myapp
    spec:
      containers:
      - image: myapp:v1
        name: myapp
[root@master ~]# kubectl apply -f deployment.yml
```

Pause and resume

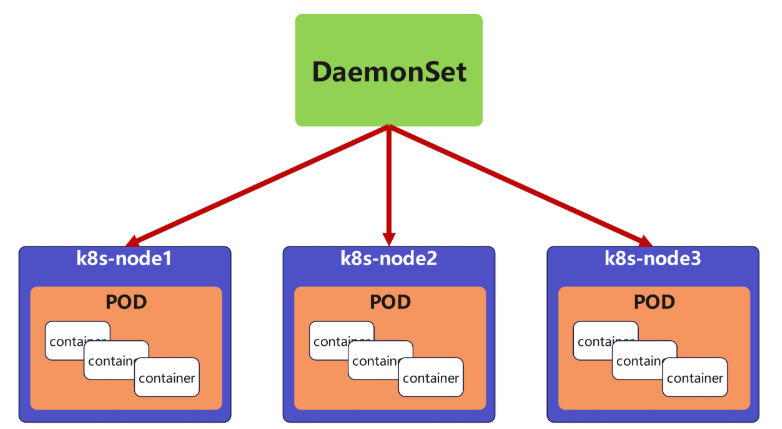
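Pausing a rollout lets several changes be queued and then applied in a single update once the rollout is resumed. The commands are only shown in the screenshot, so the sketch below spells out one plausible sequence with `kubectl rollout pause` and `kubectl rollout resume`. The `kc` wrapper, the `paused_update` function, and the `DRY_RUN` switch are hypothetical names added so the sequence can be previewed without a cluster.

```shell
#!/usr/bin/env bash
# Hypothetical wrapper: with DRY_RUN=1 print the kubectl command instead of
# running it, so the sequence can be reviewed without a cluster.
kc() {
  ${DRY_RUN:+echo} kubectl "$@"
}

# Pause the rollout, change the image, then resume and wait for completion.
paused_update() {
  local deploy="$1" container="$2" image="$3"
  kc rollout pause "deployment/$deploy"      # changes are queued from here on
  kc set image "deployment/$deploy" "$container=$image"
  kc rollout resume "deployment/$deploy"     # queued changes roll out now
  kc rollout status "deployment/$deploy"
}

# Preview for the Deployment named "deployment" used in this section.
DRY_RUN=1 paused_update deployment myapp myapp:v2
```

While the rollout is paused, `kubectl get pods` keeps showing the old pods, because no new ReplicaSet is created until the resume step.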

daemonset控制器

daemonset功能

DaemonSet 确保全部(或者某些)节点上运行一个 Pod 的副本。当有节点加入集群时, 也会为他们新增一个 Pod ,当有节点从集群移除时,这些 Pod 也会被回收。删除 DaemonSet 将会删除它创建的所有 Pod

DaemonSet 的典型用法:

-

在每个节点上运行集群存储 DaemonSet,例如 glusterd、ceph。

-

在每个节点上运行日志收集 DaemonSet,例如 fluentd、logstash。

-

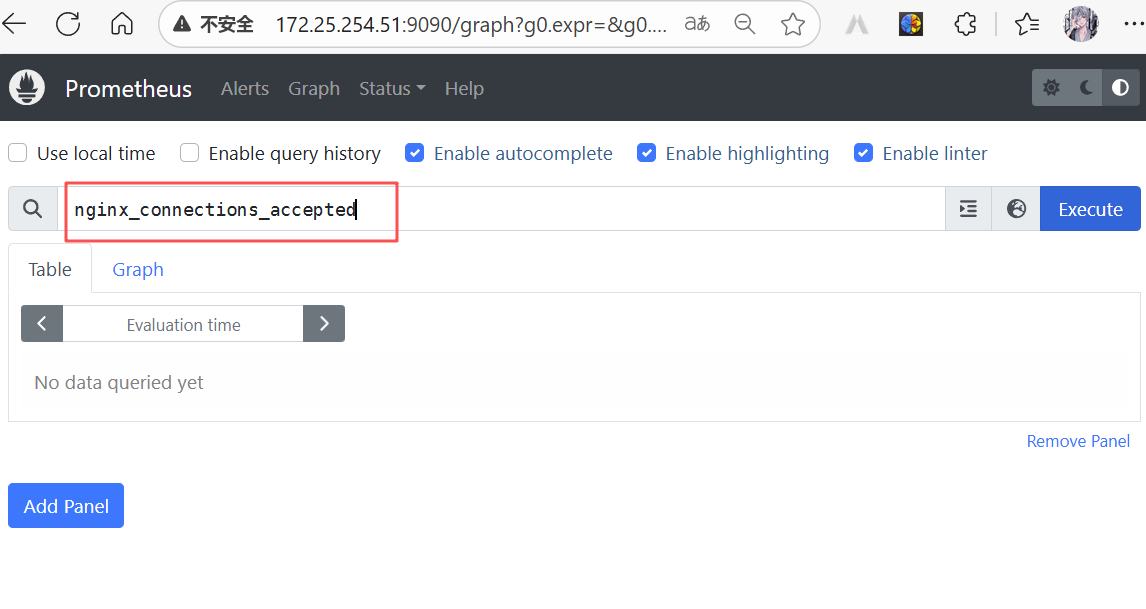

在每个节点上运行监控 DaemonSet,例如 Prometheus Node Exporter、zabbix agent等

-

一个简单的用法是在所有的节点上都启动一个 DaemonSet,将被作为每种类型的 daemon 使用

-

一个稍微复杂的用法是单独对每种 daemon 类型使用多个 DaemonSet,但具有不同的标志, 并且对不同硬件类型具有不同的内存、CPU 要求
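The "one Pod per node" behaviour described above can be pictured as a small reconcile step. This is a simplified model written for illustration only, not the actual controller code; the function name and the node names in the usage lines are hypothetical.

```python
def reconcile_daemonset(nodes, pods_by_node):
    """Return (nodes that need a Pod, nodes whose Pod should be removed).

    nodes:        names of the nodes currently in the cluster
    pods_by_node: mapping of node name -> name of the daemon Pod running there
    """
    eligible = set(nodes)
    running = set(pods_by_node)
    to_create = sorted(eligible - running)   # new nodes without a daemon Pod
    to_delete = sorted(running - eligible)   # Pods left on nodes that are gone
    return to_create, to_delete


# A new node joined and an old node left the cluster.
create, delete = reconcile_daemonset(
    ["k8s-node1", "k8s-node2", "k8s-node3"],
    {"k8s-node1": "daemon-a", "k8s-old": "daemon-b"},
)
print(create, delete)
```

A real DaemonSet also honours node selectors, taints and tolerations, which this sketch leaves out.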

daemonset 示例

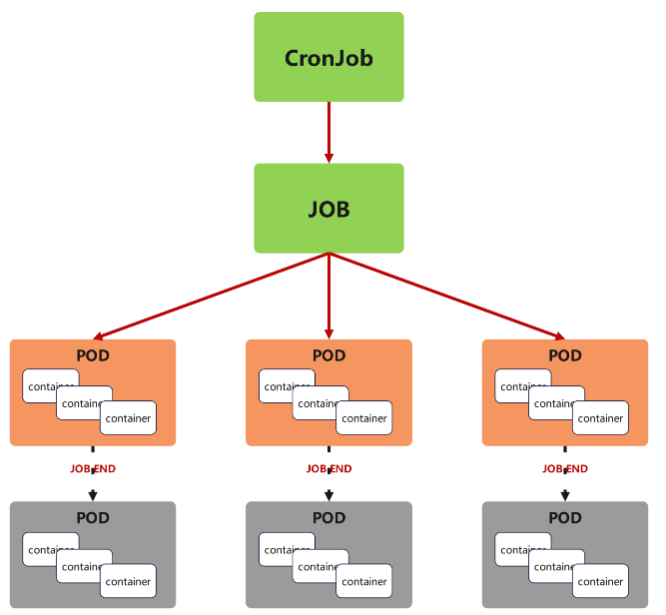

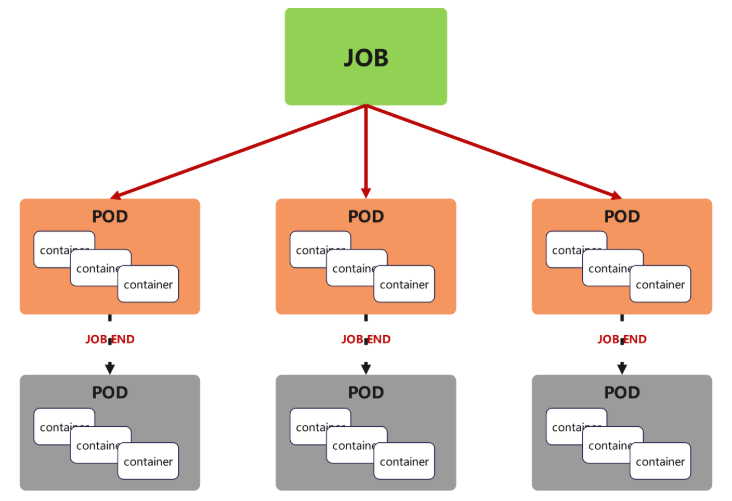

job 控制器

job控制器功能

Job,主要用于负责批量处理(一次要处理指定数量任务)短暂的一次性(每个任务仅运行一次就结束)任务

Job特点如下:

-

当Job创建的pod执行成功结束时,Job将记录成功结束的pod数量

-

当成功结束的pod达到指定的数量时,Job将完成执行

job 控制器示例

cpp

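[root@master ~]# cat job.yml

apiVersion: batch/v1
kind: Job
metadata:
  name: pi
spec:
  completions: 6      # total number of Pods that must finish successfully
  parallelism: 2      # number of Pods running at the same time
  template:
    spec:
      containers:
      - name: pi
        image: perl:5.34.0
        command: ["perl", "-Mbignum=bpi", "-wle", "print bpi(2000)"]
      restartPolicy: Never
  backoffLimit: 4     # number of retries before the Job is marked as failed

[root@master ~]# kubectl apply -f job.yml

job.batch/pi created

# With parallelism 2, the Job creates the 6 Pods step by step;

# each Pod exits after computing π, and once all have finished the Job status becomes Complete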

[root@master ~]# kubectl get pods

NAME READY STATUS RESTARTS AGE

deployment-5d886954d4-4tdr8 1/1 Running 0 36m

deployment-5d886954d4-5ds78 1/1 Running 0 36m

deployment-5d886954d4-hxmh8 1/1 Running 0 36m

deployment-5d886954d4-jhfkq 1/1 Running 0 36m

pi-rmnhh 0/1 ContainerCreating 0 15s

pi-tdpcq 0/1 ContainerCreating 0 15s

[root@master ~]# kubectl get pods

NAME READY STATUS RESTARTS AGE

deployment-5d886954d4-4tdr8 1/1 Running 0 38m

deployment-5d886954d4-5ds78 1/1 Running 0 38m

deployment-5d886954d4-hxmh8 1/1 Running 0 37m

deployment-5d886954d4-jhfkq 1/1 Running 0 38m

pi-rmnhh 1/1 Running 0 93s

pi-tdpcq 1/1 Running 0 93s

[root@master ~]# kubectl get pods

NAME READY STATUS RESTARTS AGE

deployment-5d886954d4-4tdr8 1/1 Running 0 40m

deployment-5d886954d4-5ds78 1/1 Running 0 40m

deployment-5d886954d4-hxmh8 1/1 Running 0 39m

deployment-5d886954d4-jhfkq 1/1 Running 0 40m

pi-jf6jt 0/1 Completed 0 110s

pi-jqmwr 0/1 Completed 0 2m

pi-llvsk 0/1 Completed 0 116s

pi-pfswl 0/1 Completed 0 106s

pi-rmnhh 0/1 Completed 0 3m37s

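pi-tdpcq 0/1 Completed 0 3m37s

NOTE

About the restart policy setting:

With OnFailure, when a Pod fails the container is restarted in place instead of a new Pod being created, and the failed count does not change.

With Never, when a Pod fails the Job creates a new Pod; the failed Pod is kept and is not restarted, and the failed count increases by 1.

Always is not a valid restart policy for a Job: it would mean restarting forever, so the task would be run over and over again. A Job's Pod template only accepts Never or OnFailure.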
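To see how completions and parallelism interact in the pi Job above, here is a minimal sketch. It assumes every Pod in a batch succeeds and that a batch finishes together, which is a simplification of how the Job controller really schedules Pods; the function name is illustrative.

```python
def job_waves(completions, parallelism):
    """Return how many Pods run in each successive batch."""
    waves = []
    remaining = completions
    while remaining > 0:
        batch = min(parallelism, remaining)  # never start more Pods than are still needed
        waves.append(batch)
        remaining -= batch
    return waves


# completions: 6, parallelism: 2 -> three batches of two Pods
print(job_waves(6, 2))
# An uneven case: the last batch is smaller
print(job_waves(5, 2))
```

This matches the transcript above, where two pi Pods are created first and six Completed Pods exist at the end.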

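CronJob controller

CronJob controller functionality

- A CronJob creates Jobs on a time-based schedule.

- The CronJob controller manages Job resources, and through them manages Pods.

- A CronJob controls when and how often it runs in a way similar to periodic scheduled tasks (cron) on a Linux system.

- A CronJob can run a Job (repeatedly) at specific points in time.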
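In the example that follows, the Jobs created by the CronJob have names such as hello-29251145. In recent Kubernetes versions this numeric suffix is the scheduled time expressed in minutes since the Unix epoch. The sketch below (the function name is illustrative) decodes it, and the result lines up with the timestamps printed in the Pod logs of the example.

```python
from datetime import datetime, timezone


def scheduled_time_from_job_name(job_name):
    """Decode the scheduled time from a CronJob-created Job name.

    Assumes the name has the form <cronjob-name>-<minutes since the Unix epoch>.
    """
    suffix = job_name.rsplit("-", 1)[1]
    return datetime.fromtimestamp(int(suffix) * 60, tz=timezone.utc)


for name in ("hello-29251145", "hello-29251147"):
    print(name, scheduled_time_from_job_name(name).isoformat())
```

The decoded times fall on whole minutes, as expected for the schedule "* * * * *"; the log timestamps are a second or two later because the container needs time to start.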

CronJob controller example

cpp

# Run a task once a minute that prints the current time and a greeting

[root@master ~]# cat cronjob.yml

apiVersion: batch/v1
kind: CronJob
metadata:
  name: hello
spec:
  schedule: "* * * * *"
  jobTemplate:
    spec:
      template:
        spec:
          containers:
          - name: hello
            image: busybox
            imagePullPolicy: IfNotPresent
            command:
            - /bin/sh
            - -c
            - date; echo Hello from the Kubernetes cluster
          restartPolicy: OnFailure

[root@master ~]# kubectl apply -f cronjob.yml

cronjob.batch/hello created

[root@master ~]# kubectl get jobs --watch

NAME STATUS COMPLETIONS DURATION AGE

hello-29251145 Complete 1/1 5s 77s

hello-29251146 Complete 1/1 4s 17s

hello-29251147 Running 0/1 0s

hello-29251147 Running 0/1 0s 0s

hello-29251147 Running 0/1 4s 4s

hello-29251147 Complete 1/1 4s 4s

hello-29251148 Running 0/1 0s

hello-29251148 Running 0/1 0s 0s

hello-29251148 Running 0/1 4s 4s

hello-29251148 Complete 1/1 4s 4s

hello-29251145 Complete 1/1 5s 3m4s

# View the log output

[root@master ~]# kubectl logs hello-2925114

hello-29251145-6nl57 hello-29251147-4lp5b

hello-29251146-7rjkq

[root@master ~]# kubectl logs hello-29251145-6nl57

Wed Aug 13 07:05:02 UTC 2025

Hello from the Kubernetes cluster

[root@master ~]# kubectl logs hello-29251147-4lp5b

Wed Aug 13 07:07:01 UTC 2025

Hello from the Kubernetes cluster

# Clean up resources

[root@master ~]# kubectl delete -f cronjob.yml

cronjob.batch "hello" deleted

[root@master ~]# kubectl get cronjobs

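No resources found in default namespace.

Microservices

Service types

| Service type | Description |
|---|---|
| ClusterIP | Default. Kubernetes automatically assigns the Service a virtual IP that is reachable only from inside the cluster |
| NodePort | Exposes the Service externally on a port of each Node; a request to any NodeIP:nodePort is routed to the ClusterIP |
| LoadBalancer | Builds on NodePort: a cloud provider creates an external load balancer that forwards requests to NodeIP:NodePort. Out of the box this mode only works on cloud platforms |
| ExternalName | Forwards the Service to a given domain name through a DNS CNAME record (set with spec.externalName) |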

cpp

[root@master ~]# cat rin.yaml

apiVersion: apps/v1
kind: Deployment
metadata:
  labels:
    app: rin
  name: rin
spec:
  replicas: 2
  selector:
    matchLabels:
      app: rin
  template:
    metadata:
      creationTimestamp: null
      labels:
        app: rin
    spec:
      containers:
      - image: myapp:v1
        name: myapp
---
apiVersion: v1
kind: Service
metadata:
  labels:
    app: rin
  name: rin
spec:
  ports:
  - port: 80
    protocol: TCP
    targetPort: 80
  selector:
    app: rin

[root@master ~]# kubectl apply -f rin.yaml

deployment.apps/rin created

# Services are scheduled through iptables by default

[root@master ~]# kubectl get services -o wide

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE SELECTOR

kubernetes ClusterIP 10.96.0.1 <none> 443/TCP 2d6h <none>

rin ClusterIP 10.102.246.215 <none> 80/TCP 3m14s app=rin

# The corresponding rules are visible in the firewall

[root@master ~]# iptables -t nat -L | grep 80

DNAT tcp -- anywhere anywhere /* default/rin */ tcp to:10.244.2.30:80

IPVS mode

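- A Service is implemented by the kube-proxy component together with iptables.

- When kube-proxy handles Services through iptables, it has to install a large number of iptables rules on the host. If the host runs many Pods, constantly refreshing those rules consumes a lot of CPU.

- Services in IPVS mode let a Kubernetes cluster support a much larger number of Pods.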
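In IPVS mode kube-proxy uses the rr (round-robin) scheduler by default, which is also what the ipvsadm output later in this section shows. As a toy illustration only, with backend addresses taken from that output, round-robin selection looks like this:

```python
from itertools import cycle


def round_robin(backends):
    """Return an endless iterator that hands out backends in turn."""
    return cycle(backends)


backends = ["10.244.1.25:80", "10.244.2.30:80"]
picker = round_robin(backends)
first_four = [next(picker) for _ in range(4)]
print(first_four)
```

The real scheduler lives in the kernel; this only models the order in which the Pod endpoints are chosen.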

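IPVS mode configuration

1. Install ipvsadm on all nodes:

bash

for i in 100 10 20; do
    ssh root@172.25.254.$i 'yum install ipvsadm -y'
done

2. Modify the kube-proxy configuration on the master node:

cpp

[root@master ~]# kubectl -n kube-system edit cm kube-proxy

metricsBindAddress: ""
mode: "ipvs"    # make kube-proxy use IPVS mode
nftables:

3. Restart the kube-proxy Pods. A running Pod keeps the configuration it started with, so changing the ConfigMap has no effect on Pods that are already running; they have to be recreated: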

cpp

[root@master ~]# kubectl -n kube-system get pods | awk '/kube-proxy/{system("kubectl -n kube-system delete pods "$1)}'

pod "kube-proxy-2rdcf" deleted

pod "kube-proxy-f9rxr" deleted

pod "kube-proxy-j5l5s" deleted

[root@master ~]# ipvsadm -Ln

IP Virtual Server version 1.2.1 (size=4096)

Prot LocalAddress:Port Scheduler Flags

-> RemoteAddress:Port Forward Weight ActiveConn InActConn

TCP 10.96.0.1:443 rr

-> 172.25.254.100:6443 Masq 1 0 0

TCP 10.96.0.10:53 rr

-> 10.244.0.2:53 Masq 1 0 0

-> 10.244.0.3:53 Masq 1 0 0

TCP 10.96.0.10:9153 rr

-> 10.244.0.2:9153 Masq 1 0 0

-> 10.244.0.3:9153 Masq 1 0 0

TCP 10.102.246.215:80 rr

-> 10.244.1.25:80 Masq 1 0 0

-> 10.244.2.30:80 Masq 1 0 0

UDP 10.96.0.10:53 rr

-> 10.244.0.2:53 Masq 1 0 0

-> 10.244.0.3:53 Masq 1 0 0

# After switching to IPVS mode, kube-proxy adds a virtual interface, kube-ipvs0, on the host

# and assigns every Service IP to it

[root@master ~]# ip a | tail

inet6 fe80::a496:efff:fe3a:17e8/64 scope link

valid_lft forever preferred_lft forever

8: kube-ipvs0: <BROADCAST,NOARP> mtu 1500 qdisc noop state DOWN group default

link/ether 3e:c2:c3:f0:71:f8 brd ff:ff:ff:ff:ff:ff

inet 10.102.246.215/32 scope global kube-ipvs0

valid_lft forever preferred_lft forever

inet 10.96.0.10/32 scope global kube-ipvs0

valid_lft forever preferred_lft forever

inet 10.96.0.1/32 scope global kube-ipvs0

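valid_lft forever preferred_lft forever

Service types in detail

ClusterIP

Features:

ClusterIP mode is reachable only from inside the cluster, and provides health checking and automatic discovery for the Pods in the cluster.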
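The automatic discovery mentioned above relies on cluster DNS: each Service gets a predictable name. A small sketch of how that name is put together, assuming the default cluster domain cluster.local (the helper name is illustrative):

```python
def service_fqdn(name, namespace="default", cluster_domain="cluster.local"):
    """Build the DNS name under which a Service is published inside the cluster."""
    return f"{name}.{namespace}.svc.{cluster_domain}"


print(service_fqdn("rin"))
print(service_fqdn("kube-dns", namespace="kube-system"))
```

The first name is the one queried with dig in the example below.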

Example

cpp

[root@master ~]# cat myapp.yml

apiVersion: v1
kind: Service
metadata:
  labels:
    app: rin
  name: rin
spec:
  ports:
  - port: 80
    protocol: TCP
    targetPort: 80
  selector:
    app: rin          # must match the Pod labels of the rin Deployment
  type: ClusterIP

# Look up the Service IP of CoreDNS

[root@master ~]# kubectl get svc kube-dns -n kube-system

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

kube-dns ClusterIP 10.96.0.10 <none> 53/UDP,53/TCP,9153/TCP 2d7h

# Once the Service exists, the cluster DNS resolves its name

[root@master ~]# dig rin.default.svc.cluster.local @10.96.0.10

; <<>> DiG 9.16.23-RH <<>> rin.default.svc.cluster.local @10.96.0.10

;; global options: +cmd

;; Got answer:

;; WARNING: .local is reserved for Multicast DNS

;; You are currently testing what happens when an mDNS query is leaked to DNS

;; ->>HEADER<<- opcode: QUERY, status: NOERROR, id: 46369

;; flags: qr aa rd; QUERY: 1, ANSWER: 1, AUTHORITY: 0, ADDITIONAL: 1

;; WARNING: recursion requested but not available

;; OPT PSEUDOSECTION:

; EDNS: version: 0, flags:; udp: 4096

; COOKIE: fc2abb09975647ac (echoed)

;; QUESTION SECTION:

;rin.default.svc.cluster.local. IN A

;; ANSWER SECTION:

rin.default.svc.cluster.local. 30 IN A 10.102.246.215

;; Query time: 15 msec

;; SERVER: 10.96.0.10#53(10.96.0.10)

;; WHEN: Wed Aug 13 04:55:04 EDT 2025

;; MSG SIZE rcvd: 115ClusterIP中的特殊模式headless

nodeport

loadbalancer

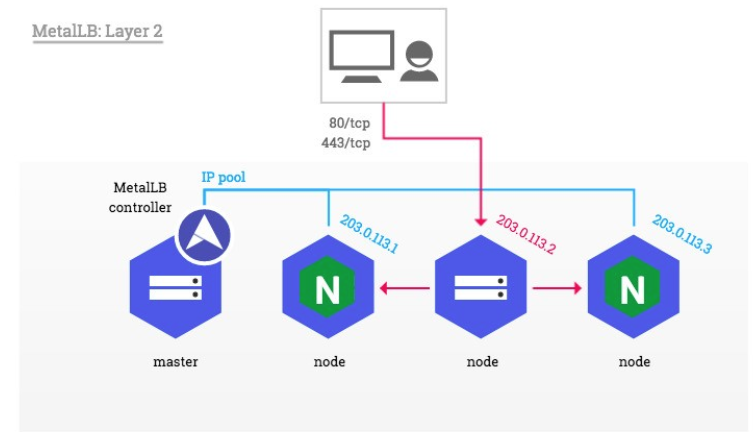

metalLB

官网:Installation :: MetalLB, bare metal load-balancer for Kubernetes

metalLB功能:为LoadBalancer分配vip
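MetalLB takes these VIPs from a configured address pool. Assuming a range written as start-end, like the 172.25.254.50-172.25.254.99 pool configured later in this section, the following sketch (the helper name is illustrative, not part of MetalLB) shows which addresses such a pool contains:

```python
import ipaddress


def expand_pool(range_spec):
    """Expand a 'start-end' IPv4 range into the list of addresses it covers."""
    start_text, end_text = range_spec.split("-")
    start = ipaddress.IPv4Address(start_text.strip())
    end = ipaddress.IPv4Address(end_text.strip())
    if end < start:
        raise ValueError("range end is before range start")
    return [ipaddress.IPv4Address(value) for value in range(int(start), int(end) + 1)]


pool = expand_pool("172.25.254.50-172.25.254.99")
print(len(pool), pool[0], pool[-1])
```

The pool holds 50 addresses, so up to 50 LoadBalancer Services can receive an external IP from it.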

Deployment steps:

1. Set IPVS mode

cpp

[root@master metallb]# kubectl edit cm -n kube-system kube-proxy

strictARP: true

mode: "ipvs"

[root@master metallb]# kubectl -n kube-system get pods | awk '/kube-proxy/{system("kubectl -n kube-system delete pods "$1)}'

pod "kube-proxy-2wsl5" deleted

pod "kube-proxy-hbv2n" deleted

pod "kube-proxy-spgcm" deleted

2. Download the deployment manifest

bash

wget https://raw.githubusercontent.com/metallb/metallb/v0.14.8/config/manifests/metallb-native.yaml

3. Change the image addresses in the manifest so that they match the Harbor repository path

cpp

[root@k8s-master ~]# vim metallb-native.yaml

image: metallb/controller:v0.14.8

image: metallb/speaker:v0.14.8

Load the images:

cpp

[root@master metallb]# docker load -i metalLB.tag.gz

f144bb4c7c7f: Loading layer [==================================================>] 327.7kB/327.7kB

49626df344c9: Loading layer [==================================================>] 40.96kB/40.96kB

945d17be9a3e: Loading layer [==================================================>] 2.396MB/2.396MB

4d049f83d9cf: Loading layer [==================================================>] 1.536kB/1.536kB

af5aa97ebe6c: Loading layer [==================================================>] 2.56kB/2.56kB

ac805962e479: Loading layer [==================================================>] 2.56kB/2.56kB

bbb6cacb8c82: Loading layer [==================================================>] 2.56kB/2.56kB

2a92d6ac9e4f: Loading layer [==================================================>] 1.536kB/1.536kB

1a73b54f556b: Loading layer [==================================================>] 10.24kB/10.24kB

f4aee9e53c42: Loading layer [==================================================>] 3.072kB/3.072kB

b336e209998f: Loading layer [==================================================>] 238.6kB/238.6kB

371134a463a4: Loading layer [==================================================>] 61.38MB/61.38MB

6e64357636e3: Loading layer [==================================================>] 13.31kB/13.31kB

Loaded image: quay.io/metallb/controller:v0.14.8

0b8392a2e3be: Loading layer [==================================================>] 2.137MB/2.137MB

3d5a6e3a17d1: Loading layer [==================================================>] 65.46MB/65.46MB

8311c2bd52ed: Loading layer [==================================================>] 49.76MB/49.76MB

4f4d43efeed6: Loading layer [==================================================>] 3.584kB/3.584kB

881ed6f5069a: Loading layer [==================================================>] 13.31kB/13.31kB

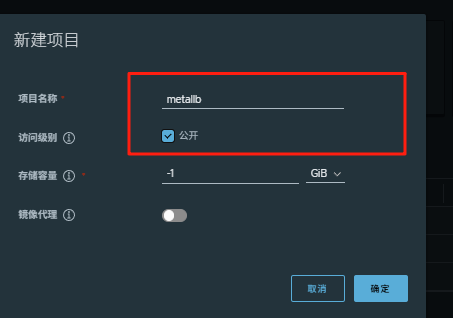

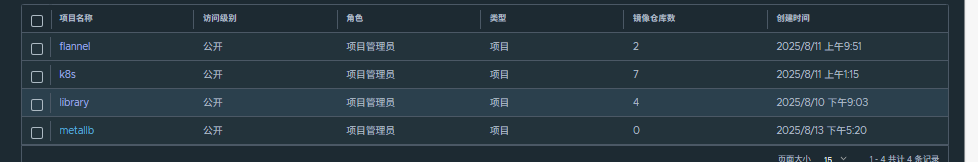

Loaded image: quay.io/metallb/speaker:v0.14.8在项目仓库创建metallb目录:

上传镜像到harbor:

cpp

[root@master metallb]# docker tag quay.io/metallb/speaker:v0.14.8 www.rin.com/metallb/speaker:v0.14.8

[root@master metallb]# docker tag quay.io/metallb/controller:v0.14.8 www.rin.com/metallb/controller:v0.14.8

[root@master metallb]# docker push www.rin.com/metallb/speaker:v0.14.8

[root@master metallb]# docker push www.rin.com/metallb/controller:v0.14.8

Deploy the service

bash

kubectl apply -f metallb-native.yaml

kubectl -n metallb-system get pods

Process:

cpp

[root@master metallb]# kubectl apply -f metallb-native.yaml

namespace/metallb-system created

customresourcedefinition.apiextensions.k8s.io/bfdprofiles.metallb.io created

customresourcedefinition.apiextensions.k8s.io/bgpadvertisements.metallb.io created

customresourcedefinition.apiextensions.k8s.io/bgppeers.metallb.io created

customresourcedefinition.apiextensions.k8s.io/communities.metallb.io created

customresourcedefinition.apiextensions.k8s.io/ipaddresspools.metallb.io created

customresourcedefinition.apiextensions.k8s.io/l2advertisements.metallb.io created

customresourcedefinition.apiextensions.k8s.io/servicel2statuses.metallb.io created

serviceaccount/controller created

serviceaccount/speaker created

role.rbac.authorization.k8s.io/controller created

role.rbac.authorization.k8s.io/pod-lister created

clusterrole.rbac.authorization.k8s.io/metallb-system:controller created

clusterrole.rbac.authorization.k8s.io/metallb-system:speaker created

rolebinding.rbac.authorization.k8s.io/controller created

rolebinding.rbac.authorization.k8s.io/pod-lister created

clusterrolebinding.rbac.authorization.k8s.io/metallb-system:controller created

clusterrolebinding.rbac.authorization.k8s.io/metallb-system:speaker created

configmap/metallb-excludel2 created

secret/metallb-webhook-cert created

service/metallb-webhook-service created

deployment.apps/controller created

daemonset.apps/speaker created

validatingwebhookconfiguration.admissionregistration.k8s.io/metallb-webhook-configuration created

[root@master metallb]# kubectl -n metallb-system get pods

NAME READY STATUS RESTARTS AGE

controller-65957f77c8-gjzvj 0/1 Running 0 6s

speaker-4dnxg 0/1 ContainerCreating 0 6s

speaker-8cctg 0/1 ContainerCreating 0 6s

speaker-jldqf 0/1 ContainerCreating 0 6s

[root@master metallb]# kubectl -n metallb-system get pods

NAME READY STATUS RESTARTS AGE

controller-65957f77c8-gjzvj 1/1 Running 0 22s

speaker-4dnxg 0/1 Running 0 22s

speaker-8cctg 0/1 Running 0 22s

speaker-jldqf 0/1 Running 0 22s配置分配地址段

cpp

[root@master metallb]# vim configmap.yml

[root@master metallb]# cat configmap.yml

apiVersion: metallb.io/v1beta1

kind: IPAddressPool

metadata:

name: first-pool #地址池名称

namespace: metallb-system

spec:

addresses:

- 172.25.254.50-172.25.254.99 #修改为自己本地地址段

--- #两个不同的kind中间必须加分割

apiVersion: metallb.io/v1beta1

kind: L2Advertisement

metadata:

name: example

namespace: metallb-system

spec:

ipAddressPools:

- first-pool #使用地址池,调用前面的配置

# 修改 rin Service 类型为 LoadBalancer

kubectl patch svc rin -p '{"spec":{"type":"LoadBalancer"}}'

# 修改 rin Service 类型为 LoadBalancer

kubectl patch svc rin -p '{"spec":{"type":"LoadBalancer"}}'

#查看IP分配情况:

[root@master metallb]# kubectl get svc rin

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

rin LoadBalancer 10.102.246.215 172.25.254.50 80:32709/TCP 69m

#通过分配地址从集群外访问服务

[root@master metallb]# curl 172.25.254.50

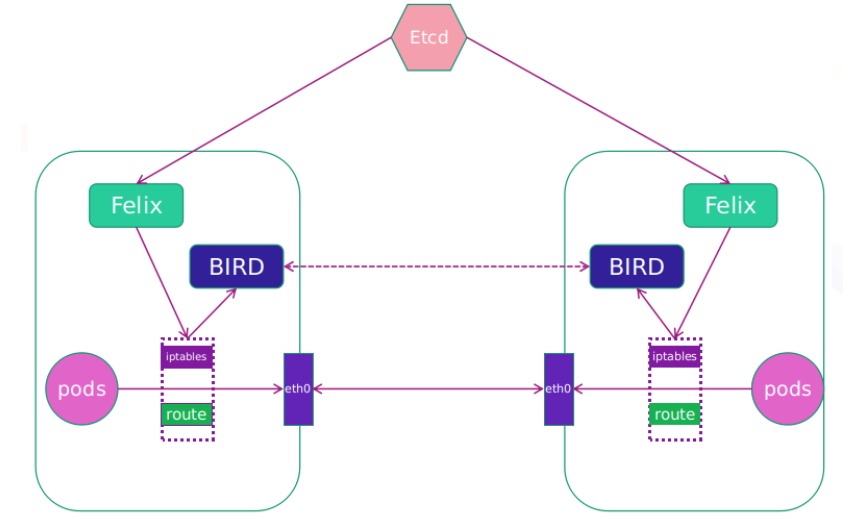

Hello MyApp | Version: v1 | <a href="hostname.html">Pod Name</a>部署ingress

下载部署文件

bash

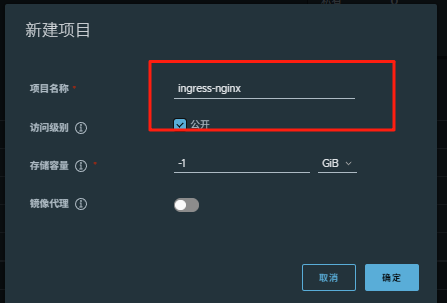

wget https://raw.githubusercontent.com/kubernetes/ingress-nginx/controller-v1.11.2/deploy/static/provider/baremetal/deploy.yaml创建项目目录:

上传ingress所需镜像到harbor

bash

docker tag registry.k8s.io/ingress-nginx/kube-webhook-certgen:v1.6.1 www.rin.com/ingress-nginx/kube-webhook-certgen:v1.6.1

docker tag registry.k8s.io/ingress-nginx/controller:v1.13.1 www.rin.com/ingress-nginx/controller:v1.13.1

docker push www.rin.com/ingress-nginx/kube-webhook-certgen:v1.6.1

docker push www.rin.com/ingress-nginx/controller:v1.13.1

Install ingress

cpp

[root@master ingress-1.13.1]# vim deploy.yaml

image: ingress-nginx/controller:v1.13.1

image: ingress-nginx/kube-webhook-certgen:v1.6.1

image: ingress-nginx/kube-webhook-certgen:v1.6.1

bash

kubectl apply -f deploy.yaml

cpp

[root@master ingress-1.13.1]# kubectl -n ingress-nginx get pods

NAME READY STATUS RESTARTS AGE

ingress-nginx-controller-85bc7f665d-n9s4s 1/1 Running 0 4m29s

[root@master ingress-1.13.1]# kubectl -n ingress-nginx get svc

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

ingress-nginx-controller NodePort 10.107.49.212 <none> 80:30405/TCP,443:31020/TCP 5m1s

ingress-nginx-controller-admission ClusterIP 10.96.147.88 <none> 443/TCP 5m1s

# Change the Service type to LoadBalancer

cpp

[root@master ingress-1.13.1]# kubectl -n ingress-nginx edit svc ingress-nginx-controller

type: LoadBalancer

[root@master ingress-1.13.1]# kubectl -n ingress-nginx get services

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

ingress-nginx-controller LoadBalancer 10.107.49.212 <pending> 80:30405/TCP,443:31020/TCP 6m32s

ingress-nginx-controller-admission ClusterIP 10.96.147.88 <none> 443/TCP 6m32s

Test ingress

# Generate the YAML file

cpp

kubectl create ingress webcluster --rule '*/=rin-svc:80' --dry-run=client -o yaml > rin-ingress.yml

[root@master ingress-1.13.1]# cat rin-ingress.yml

apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  creationTimestamp: null
  name: webcluster
spec:
  ingressClassName: nginx
  rules:
  - http:
      paths:
      - backend:
          service:
            name: rin-svc
            port:
              number: 80
        path: /
        pathType: Prefix
status:
  loadBalancer: {}

# Create the Ingress resource

cpp

[root@master ingress-1.13.1]# kubectl apply -f rin-ingress.yml

ingress.networking.k8s.io/webcluster created

[root@master ingress-1.13.1]# kubectl get ingress

NAME CLASS HOSTS ADDRESS PORTS AGE

webcluster nginx * 172.25.254.10 80 68s

Path-based access

1. Create the myapp Deployments and Services used for testing

cpp

[root@master ingress-1.13.1]# cat myapp-v1.yaml

apiVersion: apps/v1
kind: Deployment
metadata:
  labels:
    app: myapp-v1
  name: myapp-v1
spec:
  replicas: 1
  selector:
    matchLabels:
      app: myapp-v1
  strategy: {}
  template:
    metadata:
      labels:
        app: myapp-v1
    spec:
      containers:
      - image: myapp:v1
        name: myapp
---
apiVersion: v1
kind: Service
metadata:
  labels:
    app: myapp-v1
  name: myapp-v1
spec:
  ports:
  - port: 80
    protocol: TCP
    targetPort: 80
  selector:
    app: myapp-v1

[root@master ingress-1.13.1]# cat myapp-v2.yaml

apiVersion: apps/v1
kind: Deployment
metadata:
  labels:
    app: myapp-v2
  name: myapp-v2
spec:
  replicas: 1
  selector:
    matchLabels:
      app: myapp-v2
  strategy: {}
  template:
    metadata:
      labels:
        app: myapp-v2
    spec:
      containers:
      - image: myapp:v2
        name: myapp
---
apiVersion: v1
kind: Service
metadata:
  labels:
    app: myapp-v2
  name: myapp-v2
spec:
  ports:
  - port: 80
    protocol: TCP
    targetPort: 80
  selector:
    app: myapp-v2

cpp

[root@master ingress-1.13.1]# kubectl get services

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

kubernetes ClusterIP 10.96.0.1 <none> 443/TCP 4h46m

myapp-v1 ClusterIP 10.107.211.82 <none> 80/TCP 3m28s

myapp-v2 ClusterIP 10.96.65.27 <none> 80/TCP 97s

2. Create the Ingress YAML

cpp

[root@master ingress-1.13.1]# cat ingress1.yml

apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  annotations:
    nginx.ingress.kubernetes.io/rewrite-target: /
  name: ingress1
spec:
  ingressClassName: nginx
  rules:
  - host: www.rin.com
    http:
      paths:
      - backend:
          service:
            name: myapp-v1
            port:
              number: 80
        path: /v1
        pathType: Prefix
      - backend:
          service:
            name: myapp-v2
            port:
              number: 80
        path: /v2
        pathType: Prefix

Apply:

bash

kubectl apply -f ingress1.yml

Test:

Add a local hosts entry:

bash

echo 172.25.254.50 www.rin.com >> /etc/hosts

cpp

[root@master ingress-1.13.1]# curl www.rin.com/v1

Hello MyApp | Version: v1 | <a href="hostname.html">Pod Name</a>

[root@master ingress-1.13.1]# curl www.rin.com/v2

Hello MyApp | Version: v2 | <a href="hostname.html">Pod Name</a>
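The two requests above are routed by path prefix. The sketch below models the Ingress API definition of pathType: Prefix, which compares paths element by element, with the longest matching rule winning. It is an illustration with made-up function names, and ingress-nginx may match differently once rewrite or regex annotations are involved.

```python
def prefix_match(rule_path, request_path):
    """True if request_path falls under rule_path, compared per path element."""
    rule_parts = [part for part in rule_path.split("/") if part]
    request_parts = [part for part in request_path.split("/") if part]
    return request_parts[:len(rule_parts)] == rule_parts


def route(request_path, rules):
    """Return the backend of the longest matching rule, or None if nothing matches."""
    matches = [(path, backend) for path, backend in rules if prefix_match(path, request_path)]
    if not matches:
        return None
    return max(matches, key=lambda match: len(match[0]))[1]


rules = [("/v1", "myapp-v1"), ("/v2", "myapp-v2")]
for path in ("/v1", "/v2/aaa", "/v10"):
    print(path, "->", route(path, rules))
```

Note that /v10 does not match /v1 under these semantics, because v10 and v1 are different path elements.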

cpp

# Effect of nginx.ingress.kubernetes.io/rewrite-target: / : the matched request path is rewritten to / before it reaches the backend

[root@master ingress-1.13.1]# curl www.rin.com/v2/aaa

Hello MyApp | Version: v2 | <a href="hostname.html">Pod Name</a>

Host-based access

cpp

# Add local hosts entries

[root@master ingress-1.13.1]# cat /etc/hosts

127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4

::1 localhost localhost.localdomain localhost6 localhost6.localdomain6

172.25.254.100 master.rin.com

172.25.254.200 harbor.rin.com www.rin.com

172.25.254.10 node1.rin.com

172.25.254.20 node2.rin.com

172.25.254.50 www.rin.com myapp1.rin.com myapp2.rin.com

# Create the host-based YAML file

[root@master ingress-1.13.1]# cat ingress2.yml

apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  annotations:
    nginx.ingress.kubernetes.io/rewrite-target: /
  name: ingress2
spec:
  ingressClassName: nginx
  rules:
  - host: myapp1.rin.com
    http:
      paths:
      - backend:
          service:
            name: myapp-v1
            port:
              number: 80
        path: /
        pathType: Prefix
  - host: myapp2.rin.com
    http:
      paths:
      - backend:
          service:
            name: myapp-v2
            port:
              number: 80
        path: /
        pathType: Prefix

# Create the Ingress from the file

kubectl apply -f ingress2.yml

[root@master ingress-1.13.1]# kubectl describe ingress ingress2

Name: ingress2

Labels: <none>

Namespace: default

Address: 172.25.254.10

Ingress Class: nginx

Default backend: <default>

Rules:

Host Path Backends

---- ---- --------

myapp1.rin.com

/ myapp-v1:80 (10.244.1.5:80)

myapp2.rin.com

/ myapp-v2:80 (10.244.2.8:80)

Annotations: nginx.ingress.kubernetes.io/rewrite-target: /

Events:

Type Reason Age From Message

---- ------ ---- ---- -------

Normal Sync 2m6s (x3 over 5m36s) nginx-ingress-controller Scheduled for sync

# Test:

[root@master ingress-1.13.1]# curl myapp1.rin.com

Hello MyApp | Version: v1 | <a href="hostname.html">Pod Name</a>

[root@master ingress-1.13.1]# curl myapp2.rin.com

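Hello MyApp | Version: v2 | <a href="hostname.html">Pod Name</a>

Set up TLS encryption

# Create the certificate

cpp

openssl req -newkey rsa:2048 -nodes -keyout tls.key -x509 -days 365 -subj "/CN=nginxsvc/O=nginxsvc" -out tls.crt

# Create a Secret resource to hold the certificate

A Secret is normally used to store sensitive data in Kubernetes; it is not an encryption mechanism in itself.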
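To make the point concrete: values in the data field of a Secret manifest are only base64-encoded, and base64 can be reversed by anyone who can read the object. A minimal demonstration, using a made-up sample value:

```python
import base64


def encode_secret_value(plaintext):
    """Encode a value the way it appears in a Secret manifest's data field."""
    return base64.b64encode(plaintext.encode("utf-8")).decode("ascii")


def decode_secret_value(encoded):
    """Recover the original value; no key or password is needed."""
    return base64.b64decode(encoded).decode("utf-8")


sample = "rin:123"  # hypothetical sample value
encoded = encode_secret_value(sample)
print(encoded)
print(decode_secret_value(encoded))
```

Protecting the content therefore depends on access control to the Secret object, not on the encoding.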

cpp

kubectl create secret tls web-tls-secret --key tls.key --cert tls.crt

secret/web-tls-secret created

[root@master ingress-1.13.1]# kubectl get secrets

NAME TYPE DATA AGE

web-tls-secret kubernetes.io/tls 2 9s

# Create ingress3.yml, an Ingress with TLS

cpp

[root@master ingress-1.13.1]# cat ingress3.yml

apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  annotations:
    nginx.ingress.kubernetes.io/rewrite-target: /
  name: ingress3
spec:
  tls:
  - hosts:
    - myapp-tls.rin.com
    secretName: web-tls-secret
  ingressClassName: nginx
  rules:
  - host: myapp-tls.rin.com
    http:
      paths:
      - backend:
          service:
            name: myapp-v1
            port:
              number: 80
        path: /
        pathType: Prefix

[root@master ingress-1.13.1]# cat /etc/hosts

127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4

::1 localhost localhost.localdomain localhost6 localhost6.localdomain6

172.25.254.100 master.rin.com

172.25.254.200 harbor.rin.com www.rin.com

172.25.254.10 node1.rin.com

172.25.254.20 node2.rin.com

172.25.254.50 www.rin.com myapp1.rin.com myapp2.rin.com myapp-tls.rin.com

Test:

cpp

[root@master ingress-1.13.1]# curl -k https://myapp-tls.rin.com

Hello MyApp | Version: v1 | <a href="hostname.html">Pod Name</a>

[root@master ingress-1.13.1]# curl https://myapp-tls.rin.com

curl: (60) SSL certificate problem: self-signed certificate

More details here: https://curl.se/docs/sslcerts.html

curl failed to verify the legitimacy of the server and therefore could not

establish a secure connection to it. To learn more about this situation and

how to fix it, please visit the web page mentioned above.

[root@master ingress-1.13.1]# curl -k https://myapp-tls.rin.com

Hello MyApp | Version: v1 | <a href="hostname.html">Pod Name</a>

Set up basic authentication

cpp

# Create the auth file

[root@master ingress-1.13.1]# dnf install httpd-tools -y

[root@master ingress-1.13.1]# htpasswd -cm auth rin

New password:

Re-type new password:

Adding password for user rin

[root@master ingress-1.13.1]# cat auth

rin:$apr1$4qWC8T1y$fXRb/aB5m33pLGt5x2JRr0

# Create the Secret that holds the credentials

[root@master ingress-1.13.1]# kubectl create secret generic auth-web --from-file auth

secret/auth-web created

[root@master ingress-1.13.1]# kubectl describe secrets auth-web

Name: auth-web

Namespace: default

Labels: <none>

Annotations: <none>

Type: Opaque

Data

====

auth: 42 bytes

# Create ingress4.yml, an Ingress with user authentication

[root@master ingress-1.13.1]# vim ingress4.yml

[root@master ingress-1.13.1]# cat ingress4.yml

apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  annotations:
    nginx.ingress.kubernetes.io/auth-type: basic
    nginx.ingress.kubernetes.io/auth-secret: auth-web
    nginx.ingress.kubernetes.io/auth-realm: "Please input username and password"
  name: ingress4
spec:
  tls:
  - hosts:
    - myapp-tls.rin.com
    secretName: web-tls-secret
  ingressClassName: nginx
  rules:
  - host: myapp-tls.rin.com
    http:
      paths:
      - backend:
          service:
            name: myapp-v1
            port:
              number: 80
        path: /
        pathType: Prefix

# Create ingress4

[root@master ingress-1.13.1]# kubectl apply -f ingress4.yml

ingress.networking.k8s.io/ingress4 created

[root@master ingress-1.13.1]# kubectl describe ingress ingress4

Name: ingress4

Labels: <none>

Namespace: default

Address:

Ingress Class: nginx

Default backend: <default>

TLS:

web-tls-secret terminates myapp-tls.rin.com

Rules:

Host Path Backends

---- ---- --------

myapp-tls.rin.com

/ myapp-v1:80 (10.244.1.5:80)

Annotations: nginx.ingress.kubernetes.io/auth-realm: Please input username and password

nginx.ingress.kubernetes.io/auth-secret: auth-web

nginx.ingress.kubernetes.io/auth-type: basic

Events:

Type Reason Age From Message

---- ------ ---- ---- -------

Normal Sync 11s nginx-ingress-controller Scheduled for sync

# Test:

[root@master ingress-1.13.1]# curl -k https://myapp-tls.rin.com

<html>

<head><title>401 Authorization Required</title></head>

<body>

<center><h1>401 Authorization Required</h1></center>

<hr><center>nginx</center>

</body>

</html>

[root@master ingress-1.13.1]# curl -k https://myapp-tls.rin.com -urin:123

Hello MyApp | Version: v1 | <a href="hostname.html">Pod Name</a>

Rewrite and redirect

cpp

# Make hostname.html the default page for requests to /

[root@master ingress-1.13.1]# vim ingress5.yml

[root@master ingress-1.13.1]# cat ingress5.yml

apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  annotations:
    nginx.ingress.kubernetes.io/app-root: /hostname.html
    nginx.ingress.kubernetes.io/auth-type: basic
    nginx.ingress.kubernetes.io/auth-secret: auth-web
    nginx.ingress.kubernetes.io/auth-realm: "Please input username and password"
  name: ingress5
spec:
  tls:
  - hosts:
    - myapp-tls.rin.com
    secretName: web-tls-secret
  ingressClassName: nginx
  rules:
  - host: myapp-tls.rin.com
    http:
      paths:
      - backend:
          service:
            name: myapp-v1
            port:
              number: 80
        path: /
        pathType: Prefix

[root@master ingress-1.13.1]# kubectl apply -f ingress5.yml

ingress.networking.k8s.io/ingress5 created

[root@master ingress-1.13.1]# kubectl describe ingress ingress5

Name: ingress5

Labels: <none>

Namespace: default

Address:

Ingress Class: nginx

Default backend: <default>

TLS:

web-tls-secret terminates myapp-tls.rin.com

Rules:

Host Path Backends

---- ---- --------

myapp-tls.rin.com

/ myapp-v1:80 (10.244.1.5:80)

Annotations: nginx.ingress.kubernetes.io/app-root: /hostname.html

nginx.ingress.kubernetes.io/auth-realm: Please input username and password

nginx.ingress.kubernetes.io/auth-secret: auth-web

nginx.ingress.kubernetes.io/auth-type: basic

Events:

Type Reason Age From Message

---- ------ ---- ---- -------

Normal Sync 9s nginx-ingress-controller Scheduled for sync

# Test:

[root@master ingress-1.13.1]# curl -Lk https://myapp-tls.rin.com -urin:123

myapp-v1-7479d6c54d-4dkgr

[root@master ingress-1.13.1]# curl -Lk https://myapp-tls.rin.com/hostname.html -urin:123

myapp-v1-7479d6c54d-4dkgr

[root@master ingress-1.13.1]# curl -Lk https://myapp-tls.rin.com/rin/hostname.html -urin:123

<html>

<head><title>404 Not Found</title></head>

<body bgcolor="white">

<center><h1>404 Not Found</h1></center>

<hr><center>nginx/1.12.2</center>

</body>

</html>

# Fix the path problem by rewriting with a regular expression

[root@master ingress-1.13.1]# vim ingress5.yml

[root@master ingress-1.13.1]# cat ingress5.yml

apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  annotations:
    nginx.ingress.kubernetes.io/rewrite-target: /$2    # forward only the second capture group
    nginx.ingress.kubernetes.io/use-regex: "true"      # treat path as a regular expression
    nginx.ingress.kubernetes.io/auth-type: basic
    nginx.ingress.kubernetes.io/auth-secret: auth-web
    nginx.ingress.kubernetes.io/auth-realm: "Please input username and password"
  name: ingress5
spec:
  tls:
  - hosts:
    - myapp-tls.rin.com
    secretName: web-tls-secret
  ingressClassName: nginx
  rules:
  - host: myapp-tls.rin.com
    http:
      paths:
      - backend:
          service:
            name: myapp-v1
            port:
              number: 80
        path: /(.*)/(.*)
        pathType: ImplementationSpecific

# Reload the configuration:

[root@master ingress-1.13.1]# kubectl apply -f ingress5.yml

ingress.networking.k8s.io/ingress5 configured

Test:

[root@master ingress-1.13.1]# curl -Lk https://myapp-tls.rin.com/rin/hostname.html -urin:123

myapp-v1-7479d6c54d-4dkgr

[root@master ingress-1.13.1]# curl -Lk https://myapp-tls.rin.com/rin/hostname.html/inin/ -urin:123

Hello MyApp | Version: v1 | <a href="hostname.html">Pod Name</a>
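The two results above follow from how the regular expression /(.*)/(.*) captures the request path, with rewrite-target /$2 forwarding only the second group. As an approximation in Python (nginx's regex engine is PCRE, but greedy matching behaves the same way for this pattern; the function name is illustrative):

```python
import re


def rewrite(path, pattern=r"/(.*)/(.*)", target=r"/\2"):
    """Apply the rewrite to a request path; paths that do not match are returned unchanged."""
    match = re.match(pattern, path)
    if match is None:
        return path
    return match.expand(target)


for path in ("/rin/hostname.html", "/rin/hostname.html/inin/"):
    print(path, "->", rewrite(path))
```

In the second request the first group greedily takes everything up to the last slash, the second group is empty, and the backend receives /, which is why the index page is returned instead of the Pod name.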

[root@master ingress-1.13.1]# Canary金丝雀发布

金丝雀发布:

金丝雀发布(Canary Release)也称为灰度发布,是一种软件发布策略。

主要目的是在将新版本的软件全面推广到生产环境之前,先在一小部分用户或服务器上进行测试和验证,以降低因新版本引入重大问题而对整个系统造成的影响。

是一种Pod的发布方式。金丝雀发布采取先添加、再删除的方式,保证Pod的总量不低于期望值。并且在更新部分Pod后,暂停更新,当确认新Pod版本运行正常后再进行其他版本的Pod的更新。

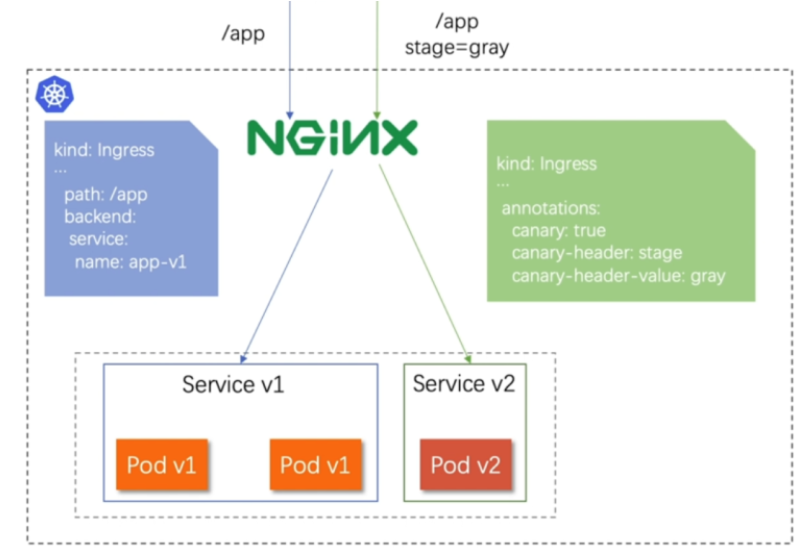

Canary发布方式

header > ccokie > weiht

其中header和weiht中的最多

基于header(http包头)灰度

-

通过Annotaion扩展

-

创建灰度ingress,配置灰度头部key以及value

-

灰度流量验证完毕后,切换正式ingress到新版本

-

之前我们在做升级时可以通过控制器做滚动更新,默认25%利用header可以使升级更为平滑,通过key 和vule 测试新的业务体系是否有问题。
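The header rule can be sketched as a simple decision function. This is an illustration of the documented behaviour of canary-by-header together with canary-by-header-value, not ingress-nginx code; the defaults mirror the header key version, the value 2 and the two Services used in the example that follows.

```python
def pick_backend(headers, header_name="version", header_value="2",
                 stable="myapp-v1", canary="myapp-v2"):
    """Route to the canary only when the request header carries the expected value."""
    # HTTP header names are case-insensitive, so normalise them first.
    normalized = {name.lower(): value for name, value in headers.items()}
    if normalized.get(header_name.lower()) == header_value:
        return canary
    return stable


print(pick_backend({}))                  # no header: stable version
print(pick_backend({"version": "2"}))    # matching header: canary version
print(pick_backend({"version": "1"}))    # other value: stable version
```

Only clients that deliberately send the header reach the new version, which makes this method well suited to internal testing before any real traffic is shifted.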

cpp

# Create the Ingress for version 1

[root@master ingress-1.13.1]# cat ingress7.yml

apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  annotations:
  name: myapp-v1-ingress
spec:
  ingressClassName: nginx
  rules:
  - host: myapp.rin.com
    http:
      paths:
      - backend:
          service:
            name: myapp-v1
            port:
              number: 80
        path: /
        pathType: Prefix

[root@master ingress-1.13.1]# kubectl describe ingress myapp-v1-ingress

Name: myapp-v1-ingress

Labels: <none>

Namespace: default

Address:

Ingress Class: nginx

Default backend: <default>

Rules:

Host Path Backends

---- ---- --------

myapp.rin.com

/ myapp-v1:80 (10.244.1.5:80)

Annotations: <none>

Events:

Type Reason Age From Message

---- ------ ---- ---- -------

Normal Sync 5s nginx-ingress-controller Scheduled for sync

# Create the header-based canary Ingress

[root@master ingress-1.13.1]# cat ingress8.yml

apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  annotations:
    nginx.ingress.kubernetes.io/canary: "true"
    nginx.ingress.kubernetes.io/canary-by-header: "version"
    nginx.ingress.kubernetes.io/canary-by-header-value: "2"
  name: myapp-v2-ingress
spec:
  ingressClassName: nginx
  rules:
  - host: myapp.rin.com
    http:
      paths:
      - backend:
          service:
            name: myapp-v2
            port:
              number: 80
        path: /
        pathType: Prefix

[root@master ingress-1.13.1]# kubectl apply -f ingress8.yml

ingress.networking.k8s.io/myapp-v2-ingress unchanged

[root@master ingress-1.13.1]# kubectl describe ingress myapp-v2-ingress

Name: myapp-v2-ingress

Labels: <none>

Namespace: default

Address: 172.25.254.10

Ingress Class: nginx

Default backend: <default>

Rules:

Host Path Backends

---- ---- --------

myapp.rin.com

/ myapp-v2:80 (10.244.2.8:80)

Annotations: nginx.ingress.kubernetes.io/canary: true

nginx.ingress.kubernetes.io/canary-by-header: version

nginx.ingress.kubernetes.io/canary-by-header-value: 2

Events:

Type Reason Age From Message

---- ------ ---- ---- -------

Normal Sync 9m25s (x2 over 9m47s) nginx-ingress-controller Scheduled for sync

测试:

[root@master ingress-1.13.1]# curl myapp.rin.com

Hello MyApp | Version: v1 | <a href="hostname.html">Pod Name</a>

[root@master ingress-1.13.1]# curl -H "version: 2" myapp.rin.com

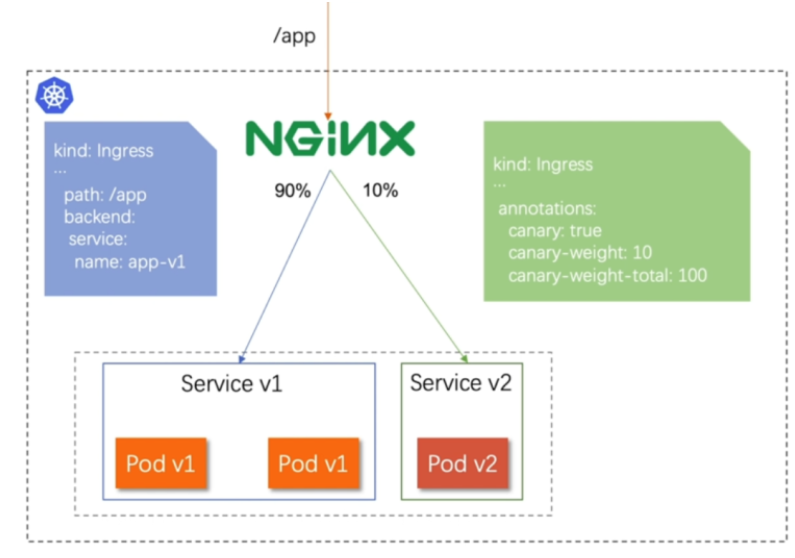

Hello MyApp | Version: v2 | <a href="hostname.html">Pod Name</a>基于权重的灰度发布

-

通过Annotaion拓展

-

创建灰度ingress,配置灰度权重以及总权重

-

灰度流量验证完毕后,切换正式ingress到新版本
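With a canary weight of 10 out of a total of 100, roughly 10% of requests should reach the new version. The simulation below is only a sketch of that idea (each request is assigned independently at random; the function name and the seed are arbitrary choices), and it also shows why a small sample such as 100 requests can deviate noticeably from the configured share.

```python
import random


def simulate_canary(requests, weight=10, weight_total=100, seed=0):
    """Count how many simulated requests go to the stable (v1) and canary (v2) version."""
    rng = random.Random(seed)
    counts = {"v1": 0, "v2": 0}
    for _ in range(requests):
        # A request goes to the canary with probability weight / weight_total.
        if rng.randrange(weight_total) < weight:
            counts["v2"] += 1
        else:
            counts["v1"] += 1
    return counts


print(simulate_canary(100))      # small sample: can be well off 10%
print(simulate_canary(10000))    # large sample: close to 10%
```

Raising the weight step by step, and finally switching the main Ingress, moves all traffic to the new version.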

cpp

#基于权重的灰度发布

[root@master ingress-1.13.1]# cat ingress8.yml

apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  annotations:
    nginx.ingress.kubernetes.io/canary: "true"
    nginx.ingress.kubernetes.io/canary-weight: "10"
    nginx.ingress.kubernetes.io/canary-weight-total: "100"
  name: myapp-v2-ingress
spec:
  ingressClassName: nginx
  rules:
  - host: myapp.rin.com
    http:
      paths:
      - backend:
          service:
            name: myapp-v2
            port:
              number: 80
        path: /
        pathType: Prefix

[root@master ingress-1.13.1]# cat ingress7.yml

apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  annotations:
  name: myapp-v1-ingress
spec:
  ingressClassName: nginx
  rules:
  - host: myapp.rin.com
    http:
      paths:
      - backend:
          service:
            name: myapp-v1
            port:
              number: 80
        path: /
        pathType: Prefix

# Apply the updated configuration

[root@master ingress-1.13.1]# kubectl apply -f ingress8.yml

ingress.networking.k8s.io/myapp-v2-ingress created

# Write the test script

[root@master ingress-1.13.1]# cat check_ingress.sh

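#!/bin/bash
# Send 100 requests and count how many are answered by v1 and by v2.
v1=0
v2=0
for (( i=0; i<100; i++ ))
do
    # grep -c prints 1 when the response contains "v1", otherwise 0
    response=$(curl -s myapp.rin.com | grep -c v1)
    v1=$((v1 + response))
    v2=$((v2 + 1 - response))
done
echo "v1:$v1, v2:$v2"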

# Test:

[root@master ingress-1.13.1]# sh check_ingress.sh

v1:83, v2:17

ConfigMap

What a ConfigMap does

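- A ConfigMap stores configuration data as key-value pairs.
- The ConfigMap resource provides a way to inject configuration data into Pods.
- It decouples configuration files from images, which keeps images portable and reusable.
- Because ConfigMaps are stored in etcd, the data in a single ConfigMap cannot exceed 1 MiB.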

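ConfigMap use cases

- Populating the values of environment variables
- Setting command-line arguments inside a container
- Populating configuration files in a volume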

Ways to create a ConfigMap

Create from literal values

Create a ConfigMap named rin-config and set two key-value pairs directly with the --from-literal flag: fname=rin and name=rin.

cpp

[root@master ~]# kubectl create cm rin-config --from-literal fname=rin --from-literal name=rin

configmap/rin-config created

[root@master ~]# kubectl describe cm rin-config

Name: rin-config

Namespace: default

Labels: <none>

Annotations: <none>

Data #key-value pairs are listed below

====

name:

----

rin

fname:

----

rin

BinaryData

====

Events: <none>

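Create from a file

Create a ConfigMap named rin2-config that contains the contents of the /etc/resolv.conf file.

- --from-file /etc/resolv.conf reads the specified file to create the ConfigMap
- The ConfigMap gets a key with the same name as the source file (resolv.conf), and its value is the file's contents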
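The key-naming rule can be sketched in a few lines of shell. `--from-file` also accepts a `key=path` form that sets the key explicitly. The helper name `cm_key` below is hypothetical: it only mimics how the key is derived, does not call kubectl, and assumes the path itself contains no `=` character.

```bash
#!/bin/bash
# cm_key SOURCE
# Prints the ConfigMap key that a --from-file argument would produce.
# SOURCE is either "path" (key = file name) or "key=path" (explicit key).
cm_key() {
    local source=$1
    if [[ $source == *=* ]]; then
        echo "${source%%=*}"        # text before the first "="
    else
        basename "$source"          # file name without its directory
    fi
}

cm_key /etc/resolv.conf        # prints resolv.conf
cm_key dns=/etc/resolv.conf    # prints dns
```

With an explicit key, the create command would take the form `kubectl create cm rin2-config --from-file dns=/etc/resolv.conf`.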

cpp

[root@master ~]# cat /etc/resolv.conf

# Generated by NetworkManager

search rin.com

nameserver 114.114.114.114

[root@master ~]# kubectl create cm rin2-config --from-file /etc/resolv.conf

configmap/rin2-config created

[root@master ~]# kubectl describe cm rin2-config

Name: rin2-config

Namespace: default

Labels: <none>

Annotations: <none>

Data

====

resolv.conf:

----

# Generated by NetworkManager

search rin.com

nameserver 114.114.114.114

BinaryData

====

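Events: <none>

Create from a directory

Create a ConfigMap named rin3-config from the files in the rinconfig/ directory. Every file in the directory (fstab and rc.local) is added to the ConfigMap: each file name becomes a key, and the file's contents become the corresponding value.

- --from-file rinconfig/ reads all files in the specified directory to create the ConfigMap
- The resulting ConfigMap contains two keys, fstab and rc.local, each holding the contents of the original file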
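To preview which keys a directory would contribute, the following sketch lists its regular files, which is what kubectl packages; subdirectories and other non-regular entries are ignored. The function name `cm_keys_for_dir` and the temporary demo directory are assumptions made for this example, and the simple glob does not cover hidden files.

```bash
#!/bin/bash
# cm_keys_for_dir DIR
# Prints one key per regular file directly inside DIR.
cm_keys_for_dir() {
    local dir=$1 entry
    for entry in "$dir"/*; do
        # skip subdirectories, symlinks and other non-regular entries
        [[ -f $entry && ! -L $entry ]] || continue
        basename "$entry"
    done
}

# Demo on a throwaway directory that mirrors rinconfig/
demo_dir=$(mktemp -d)
touch "$demo_dir/fstab" "$demo_dir/rc.local"
mkdir "$demo_dir/subdir"       # ignored: not a regular file
cm_keys_for_dir "$demo_dir"
rm -rf "$demo_dir"
```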

cpp

[root@master ~]# mkdir rinconfig

[root@master ~]# cp /etc/fstab /etc/rc.local rinconfig/

[root@master ~]# kubectl create cm rin3-config --from-file rinconfig/

configmap/rin3-config created

[root@master ~]# kubectl describe cm rin3-config

Name: rin3-config

Namespace: default

Labels: <none>

Annotations: <none>

Data

====

fstab:

----

#

# /etc/fstab

# Created by anaconda on Sun Aug 10 19:39:28 2025

#

# Accessible filesystems, by reference, are maintained under '/dev/disk/'.

# See man pages fstab(5), findfs(8), mount(8) and/or blkid(8) for more info.

#

# After editing this file, run 'systemctl daemon-reload' to update systemd

# units generated from this file.

#

UUID=84f25d20-18e1-4478-a2ab-fad7856e6a0b / xfs defaults 0 0

UUID=2f79294d-0fd8-4375-9f48-96cc02c63620 /boot xfs defaults 0 0

#UUID=7667254b-9823-4ca4-96e2-11cc8917c419 none swap defaults 0 0

rc.local:

----

#!/bin/bash

# THIS FILE IS ADDED FOR COMPATIBILITY PURPOSES

#

# It is highly advisable to create own systemd services or udev rules

# to run scripts during boot instead of using this file.

#

# In contrast to previous versions due to parallel execution during boot

# this script will NOT be run after all other services.

#

# Please note that you must run 'chmod +x /etc/rc.d/rc.local' to ensure

# that this script will be executed during boot.

touch /var/lock/subsys/local

mount /dev/sr0 /var/repo

BinaryData

====

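Events: <none>

Create from a YAML file

Generate a ConfigMap named rin4-config without submitting it to the Kubernetes cluster (--dry-run=client), writing the manifest as YAML to rin-config.yaml instead. The file is then applied with kubectl apply.

- --from-literal: specifies key-value pairs directly (db_host=172.25.254.100 and db_port=3306)
- --dry-run=client: simulates the operation on the client only, without creating the resource
- -o yaml > rin-config.yaml: writes the result to a file in YAML format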

cpp

[root@master ~]# kubectl create cm rin4-config --from-literal db_host=172.25.254.100 --from-literal db_port=3306 --dry-run=client -o yaml > rin-config.yaml

[root@master ~]# cat rin-config.yaml

apiVersion: v1
data:
  db_host: 172.25.254.100
  db_port: "3306"
kind: ConfigMap
metadata:
  creationTimestamp: null
  name: rin4-config

[root@master ~]# kubectl apply -f rin-config.yaml

configmap/rin4-config created

[root@master ~]# kubectl describe cm rin4-config

Name: rin4-config

Namespace: default

Labels: <none>

Annotations: <none>

Data

====

db_host:

----

172.25.254.100

db_port:

----

3306

BinaryData

====

Events: <none>
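Note that db_port appears as "3306" in rin-config.yaml: ConfigMap values must be strings, so number-like values are quoted. The sketch below renders a similar manifest by hand to make that visible. `render_configmap` is a hypothetical helper, not a kubectl feature, and it assumes simple values without quotes or newlines.

```bash
#!/bin/bash
# render_configmap NAME KEY=VALUE...
# Prints a minimal ConfigMap manifest with every value quoted as a string.
render_configmap() {
    local name=$1 pair
    shift
    echo "apiVersion: v1"
    echo "kind: ConfigMap"
    echo "metadata:"
    echo "  name: $name"
    echo "data:"
    for pair in "$@"; do
        # split at the first "=": key on the left, value on the right
        echo "  ${pair%%=*}: \"${pair#*=}\""
    done
}

render_configmap rin4-config db_host=172.25.254.100 db_port=3306
```

The output could be piped to `kubectl apply -f -`, although generating the manifest with --dry-run=client -o yaml remains the safer route for values that need escaping.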

cpp

[root@master packages]# ls

busybox-latest.tar.gz centos-7.tar.gz ingress-1.13.1 mysql-8.0.tar packages perl-5.34.tar.gz phpmyadmin-latest.tar.gz

[root@master packages]# docker load -i mysql-8.0.tar

1355aaece24a: Loading layer [==================================================>] 116.9MB/116.9MB

0df7536aeacd: Loading layer [==================================================>] 11.26kB/11.26kB

890407b3e3c8: Loading layer [==================================================>] 2.359MB/2.359MB

dbbfcab5c162: Loading layer [==================================================>] 16.94MB/16.94MB

e1fb8ece750b: Loading layer [==================================================>] 6.656kB/6.656kB

9b2614373417: Loading layer [==================================================>] 3.072kB/3.072kB

8650ab4a9279: Loading layer [==================================================>] 147.4MB/147.4MB

8da4a3ff29de: Loading layer [==================================================>] 3.072kB/3.072kB